Multimodal Analytics of Cybersecurity Crisis Preparation Exercises: What Predicts Success?

Instructional alignment, the match between intended cognition and enacted activity, is central to effective instruction but hard to operationalize at scale. We examine alignment in cybersecurity simulations using multimodal traces from 23 teams (76 s…

Authors: Conrad Borchers, Valdemar Švábenský, S

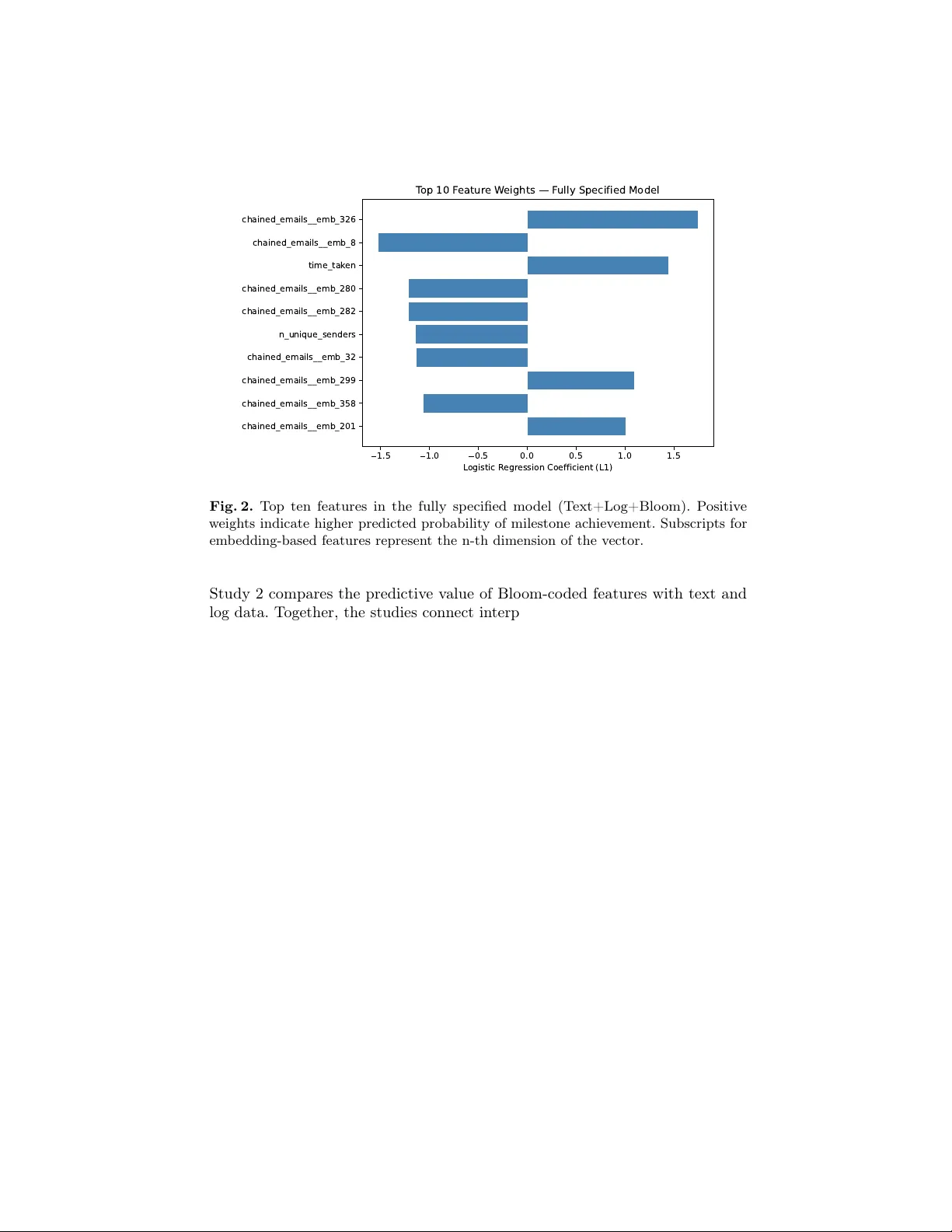

Conrad Borchers¹ [0000-0003-3437-8979], Valdemar Švábenský² [0000-0001-8546-280X], Sandesh K. Kafle² [0009-0002-4275-1171], Kevin K. Tang¹ [0009-0009-4544-7467], and Jan Vykopal² [0000-0002-3425-0951]

¹ Carnegie Mellon University, cborcher@cs.cmu.edu, kktang@andrew.cmu.edu
² Masaryk University, Faculty of Informatics, valdemar@mail.muni.cz, {xkafle,vykopal}@fi.muni.cz

Abstract. Instructional alignment, the match between intended cognition and enacted activity, is central to effective instruction but hard to operationalize at scale. We examine alignment in cybersecurity simulations using multimodal traces from 23 teams (76 students) across five exercise sessions. Study 1 codes objectives and team emails with Bloom’s taxonomy and models the completion of key exercise tasks with generalized linear mixed models. Alignment, defined as the discrepancy between required and enacted Bloom levels, predicts success, whereas the Bloom category alone does not predict success once discrepancy is considered. Study 2 compares predictive feature families using grouped cross-validation and $\ell_1$-regularized logistic regression. Text embeddings and log features outperform Bloom-only models (AUC ≈ 0.74 and 0.71 vs. 0.55), and their combination performs best (Test AUC ≈ 0.80), with Bloom frequencies adding little. Overall, the work offers a measure of alignment for simulations and shows that multimodal traces best forecast performance, while alignment provides interpretable diagnostic insight.

This preprint has not undergone peer review or any post-submission improvements or corrections. This paper has been accepted for publication in Proceedings of the 27th International Conference on Artificial Intelligence in Education (AIED), Seoul, South Korea. © 2026.
Please cite this article as follows: C. Borchers, V. Švábenský, S. K. Kafle, K. K. Tang, J. Vykopal: Multimodal Analytics of Cybersecurity Crisis Preparation Exercises: What Predicts Success? In Proceedings of the 27th International Conference on Artificial Intelligence in Education (AIED), 2026. Seoul, South Korea.

Keywords: Bloom, simulation-based learning, tabletop exercises, prediction

1 Introduction

Instructional alignment, the coherence among learning objectives, activities, and assessment, is foundational to effective learning design [8, 30]. Bloom’s taxonomy and later revisions [9, 2] provide a shared language for articulating cognitive demands, ranging from remembering and understanding to analyzing, evaluating, and creating. Despite their ubiquity in instructional design, such frameworks are rarely used to measure alignment in practice, particularly at scale, where learners’ enacted activities may diverge from intended objectives [35]. The present study contributes empirical evidence that alignment between collaborative learner actions and instructional objectives, inferred from multimodal learning analytics, predicts problem-solving performance. For the AIED community, this demonstrates how theory-driven cognitive frameworks can be operationalized to support performance prediction and complex collaborative learning environments.

This challenge is especially pronounced in simulation-based learning (SBL), where open-ended, team-based tasks generate rich but noisy multimodal traces that are difficult to relate to targeted cognitive processes [15, 48]. Cybersecurity tabletop exercises (TTXs; see Section 2.3) exemplify this problem: teams must coordinate under time pressure, interpret evolving information, and communicate with diverse stakeholders, producing behaviors that are pedagogically valuable yet methodologically hard to code and assess [43, 39].
Although past work has modeled such traces to support instructional intervention [45], it remains unclear whether Bloom-aligned discrepancies between objectives and enacted behavior predict performance, or which trace types are most informative.

We address these questions using cybersecurity TTXs conducted on the open-source INJECT Exercise Platform [39], analyzing platform logs and in-exercise email communications from 23 teams (76 students) across five sessions. RQ1 examines whether alignment, operationalized as the Bloom-level discrepancy between milestone objectives (i.e., the successful completion of key tasks in the exercise) and the highest Bloom level evidenced in team communications, predicts team performance, while RQ2 compares the predictive utility of Bloom-coded categories, linguistic features from emails and logs, and engineered behavioral log features, individually and in combination. Study 1 codes objectives and communications using Bloom’s taxonomy and applies generalized linear mixed models [7] to test the contribution of discrepancy beyond raw Bloom levels, and Study 2 evaluates predictive models using Bloom-only features, text embeddings, behavioral aggregates, and their combinations with grouped cross-validation and $\ell_1$-regularized logistic regression.

We contribute a data-driven measure of instructional alignment in SBL and show that Bloom-level discrepancy between objectives and team communication predicts performance in cybersecurity tabletop exercises. Linguistic and behavioral traces were more predictive than Bloom-frequency features, while discrepancy remains valuable for instructional diagnosis.

2 Background

2.1 Bloom’s Taxonomy for the Prediction of Learning Performance

Bloom’s Taxonomy is an established framework for characterizing learning objectives across cognitive levels, including in computing education [9, 2].
AIED research has operationalized this framework by inferring cognitive levels from observable learner behaviors, such as clickstream activity, assignment artifacts, and rubric-based assessments, to predict performance and cognitive progression [27, 26, 6]. Despite their success, these approaches rely primarily on unimodal digital traces, which limits their ability to capture learning processes distributed across multiple forms of interaction.

Recent AI-oriented reinterpretations, including the proposed “AIEd Bloom’s taxonomy,” replace cognitive levels with process-focused stages such as Collect and Adapt [20]. While motivated by modernization, this shift weakens Bloom’s theoretical grounding by obscuring distinctions between lower- and higher-order thinking and offering limited support for differentiating surface engagement from critical reasoning.

Both traditional and AI-driven adaptations largely overlook the application of Bloom’s framework to multimodal learning contexts, including collaborative communication artifacts, which require explicit coding schemes and ground-truth labeling [26]. We address these gaps by combining multimodal evidence from team communication transcripts and interaction logs with predictive modeling to operationalize Bloom’s Taxonomy in a rich learning setting. To address validity concerns in mapping trace data to cognition, we interpret email and log features as indicators of collaborative processes such as coordination and shared problem framing, which have been shown to provide observable evidence of learning in collaborative learning settings [32].
2.2 Cybersecurity Education in the AIED Context

Cybersecurity is a key component of contemporary computing education, integrating technical systems with human, informational, and organizational concepts to protect operations against adversarial threats [36, 21]. Alongside rapidly expanding areas such as artificial intelligence, cybersecurity has gained increasing prominence, and mastery of cybersecurity competencies is now widely viewed as core preparation for graduates in computer science and related fields [36, 31].

Despite its importance, cybersecurity remains marginal in AIED and learning analytics research. A systematic review found only 35 relevant studies among more than 3,000 publications, most using primarily shallow descriptive measures [41]. Furthermore, most past papers on the topic focused on privacy or infrastructure rather than cybersecurity learning itself [4, 17].

Existing research on cybersecurity education within AIED and learning analytics focused on privacy awareness, informal learning environments, program-level analyses, and online courses [18, 14, 24, 5, 38]. None of these studies examined students in dedicated higher-education cybersecurity degree programs. Although cybersecurity education has gained visibility in computing education research [40], it remains rare within AIED. This study addresses that gap by presenting learning analytics evidence from a higher education program centered on cybersecurity.

2.3 Tabletop Exercises (TTXs)

Tabletop exercises (TTXs) are a form of experiential learning in which small groups collaboratively work through complex, time-constrained scenarios in a shared instructional setting [43, 3]. Instructors issue common prompts, while teams develop responses independently and submit outcomes orally or through digital systems of varying sophistication.
This structure supports coordinated engagement without requiring fully synchronized group activity.

TTXs help learners practice responses to realistic, high-stakes situations that are difficult to reproduce in classrooms, including disaster response, public health emergencies, healthcare crises, and cybersecurity, which is the focus of the present study [23]. More broadly, TTXs belong to the tradition of simulation-based pedagogy, which is well established in AIED and learning analytics research [48]. Compared to technical exercises in cyber ranges – another popular approach for realistic cybersecurity simulations [42] – TTXs are much more lightweight and are also relevant for less technically oriented roles (e.g., IT management).

3 The INJECT Exercise Platform

Tabletop exercises were traditionally conducted offline using printed materials, which imposed substantial preparation demands on instructors and required labor-intensive manual assessment. In response, digital tools have emerged to support TTX-based instruction, as documented in a recent survey [43]. Our study uses the open-source INJECT Exercise Platform (IXP) [39], a browser-based system supporting the full exercise lifecycle while reducing instructor workload. IXP provides scenario-critical information, is freely available and actively maintained, and has been refined over time through sustained feedback from instructors.

3.1 Educational Goals and Learning Objectives

While TTXs apply across many instructional domains (Section 2.3), we focus on cybersecurity. Here, TTXs enable learners to practice responses to cyber crises that disrupt IT operations, such as data breaches. Teams collaboratively address technical incidents alongside communication and coordination challenges, developing competencies in conditions that mirror professional practice and support workforce readiness in a rapidly evolving field [36, 31].
The exercises model the work of a Computer Security Incident Response Team (CSIRT) in medium- to large-scale organizations. Participants engage in open-ended scenarios that require prioritizing and resolving multiple, simultaneous incidents under time constraints of roughly 90 minutes, reflecting the pace and uncertainty of real incident response. Learning outcomes align with the Incident Response role in the NICE Cybersecurity Workforce Framework [28]. In addition to technical skills, the activities emphasize professional dispositions [36, 31], including teamwork, decision-making under pressure, and effective communication within the CSIRT and with external stakeholders. Specific tasks that the teams address include investigating connections from suspicious IP addresses to an organization’s infrastructure, advising non-expert users affected by cyber attacks, and writing up a report documenting the findings.

3.2 How Students Learn Using the INJECT Exercise Platform

Tabletop exercises unfold through injects, predefined messages released during the activity to introduce new information and advance the scenario [39]. An inject may, for example, inform students of a breach in their simulated organization. In traditional TTXs, instructors typically deliver such messages verbally or on paper. The INJECT Exercise Platform instead automates inject delivery, presenting injects directly within each team’s web interface, as shown in Figure 1.

Fig. 1. IXP from the student perspective, displaying recent injects.

Upon receiving an inject, teams decide how to respond and enact their decisions through tools provided by the platform (Figure 1). These tools abstract real-world applications, allowing teams to carry out actions that resemble professional practice and receive immediate feedback.
Professional communication is also central to TTXs, and in IXP, this is modeled through emails from simulated stakeholders that pose questions or requests requiring deliberation. Teams must compose appropriate replies under time pressure as the incident unfolds. Responses to these emails require both lower- and higher-order cognitive processes, such as confirming information or applying procedures (Table 1). Email is the only tool for communicating with simulated in-exercise actors. Spoken communication between team members is also relevant but outside the scope of our analysis.

Every team action, including tool use and email communication, is logged with full metadata and microsecond-resolution timestamps in JSONL format. These logs support the identification of milestones that mark key events in the TTX and allow instructors to monitor progress and, if desired, assign grades.

4 Methods

4.1 Participant Sample

By including participants from multiple nations and contexts, we aim to improve the external validity of our findings [19]. In the Czech Republic, four sequential tabletop exercises (TTX0–3) were run as a capstone in a semester-long cybersecurity incident response course at a public university, involving 36 computing majors per exercise, organized into 13 skill-balanced teams (10 teams of three and three teams of two). The exercises were spaced out across several weeks. In Estonia, a fifth exercise (TTX4) included 11 teams at a cross-border cybersecurity event: five university teams, five vocational-school teams, and one educator team. In late 2024, 24 teams with 81 learners participated. One team declined research consent, leaving 23 teams and 76 learners for analysis. The sample is comparable to or larger than prior studies of collaborative cybersecurity work [46].
All teams in our sample completed up to 18 milestones in a fixed order (though multiple milestones can be worked on simultaneously), limiting task difficulty confounds. Finally, 68 (20%) milestone completions included no email. Such milestones mostly included simple tasks such as blocking an IP address, which did not require communication with the simulated exercise stakeholders.

4.2 Coding of Emails

Annotating emails is substantially more complex than annotating exercise objectives, which are few and brief, and therefore requires dedicated qualitative coding. We developed a codebook to classify security and incident-response emails by the highest cognitive demand imposed on the recipient. We ensured that the scheme was sufficiently grounded in Bloom’s taxonomy by initially starting codebook development based on ACM CCECC’s Bloom’s taxonomy for computing [2]. The codebook defines six categories spanning simple acknowledgments to higher-order demands involving analysis, evaluation, or creation; Table 1 presents the categories, definitions, and representative examples.

Table 1. Overview of the Codebook categories.

| Category | Definition | Example |
|---|---|---|
| Remembering | Pure acknowledgement or status updates with no action required. | “Thanks for the info.” |
| Understanding | Provide trivial self-facts or confirm information already known. | “What is your email?” |
| Applying | Execute known steps or fetch specified resources. | “Send 48h access logs for nice-project.uni.ex.” |
| Analyzing | Investigate without prescribed steps; decide what data or methods to use. | “What is going on? Please provide more information.” |
| Evaluating | Make judgments under uncertainty (policy, impact, authenticity). | “Should we inform NCISA about this incident?” |
| Creating | Produce new artifacts such as summaries, advisories, or drafts. | “Please draft the public statement about the breach.” |

Three independent coders applied the codebook to individual emails from the INJECT Exercise Platform using a three-stage process of independent coding, discussion-based reconciliation, and final consolidation. When text reflected multiple Bloom levels, coders assigned the highest level; when no emails were present, no code was assigned. Inter-rater reliability was moderate to substantial. Pairwise Cohen’s κ ranged from 0.555 to 0.678 across coder pairs, and Fleiss’ kappa for three raters on complete cases was 0.636. Reliability varied by category, with higher agreement for Applying and Creating, moderate agreement for Analyzing, Remembering, and Evaluating, and the lowest agreement for Understanding. Given the inherent ambiguity of cognitive constructs, κ values around 0.6 are commonly considered acceptable in related research [25, 22].

We provide anonymized analysis and preprocessing code via a public Git repository.³ The study data are also open source [1].

5 Study 1: Explaining Performance via Bloom (RQ1)

Study 1 tested whether (a) Bloom-coded cognitive demand in team emails predicted milestone success and whether (b) misalignment between required and observed Bloom levels predicted outcomes.

5.1 Method

For each team and milestone, we coded the highest Bloom level evident in the related email exchange. Milestone completion was a binary outcome (1 for completion, 0 for non-completion). We computed a discrepancy score as the distance between the Bloom level required and that observed, coded as 0 for exact matches, 1 for adjacent levels, and 2 otherwise. Missing data were coded as 2, since no response is conceptually similar to an inadequate response.

We fit a sequence of generalized linear mixed models predicting the log-odds of milestone achievement.
All models included a random intercept for each team to account for repeated observations. The baseline model included the observed Bloom level as a fixed effect. The second model added the discrepancy score to capture misalignment between required and enacted cognitive levels. The final model further included the interaction between Bloom level and discrepancy to test whether the effect of misalignment depended on the absolute cognitive level at which teams were communicating. Models were compared sequentially using likelihood-ratio tests, retaining the best-fitting specification.

Fixed effects are reported as odds ratios with 95% confidence intervals, which quantify multiplicative changes in the odds of success in logistic models. An odds ratio above one indicates increased odds, while values below one indicate reduced odds, for a one-unit increase or category presence. Odds ratios describe relative changes rather than absolute probabilities.

5.2 Results

Adding discrepancy significantly improved fit over a Bloom-only baseline, $\chi^2(1) = 7.18$, $p = .007$, whereas the Bloom-discrepancy interaction did not, $\chi^2(2) = 0.45$, $p = .800$. In the selected additive model, greater discrepancy reduced the odds of success (OR = 0.65, 95% CI [0.48, 0.90], $p = .008$), and Bloom category was no longer significant ($p$’s $> .077$). Between-team variability was substantial (ICC = .20), but fixed effects explained little variance (marginal $R^2 = .04$; conditional $R^2 = .23$), indicating limited explanatory power of Bloom alignment. A complementary $\chi^2$ test across discrepancy levels (0–2) showed a significant but weak association with milestone achievement, $\chi^2(2, N = 340) = 6.41$, $p = .041$, Cramér’s $V = .14$, suggesting that smaller discrepancies were associated with higher success rates but with modest practical impact.

³ https://github.com/conradborchers/bloom-ttx/
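As a minimal sketch of the discrepancy score defined in Section 5.1, the Python snippet below maps a required and an observed Bloom level to 0, 1, or 2. It assumes that the levels are ordered as in Table 1 and that "distance" means the absolute difference in rank, capped at 2. The names `BLOOM_LEVELS`, `discrepancy`, and `scale_odds` are illustrative and are not taken from the authors' repository. The second helper illustrates how an odds ratio acts on odds rather than on probabilities.

```python
# Bloom levels ordered from lowest to highest cognitive demand (as in Table 1).
# Assumption: "adjacent" means a rank difference of exactly one.
BLOOM_LEVELS = [
    "Remembering", "Understanding", "Applying",
    "Analyzing", "Evaluating", "Creating",
]
RANK = {name: i for i, name in enumerate(BLOOM_LEVELS)}


def discrepancy(required, observed):
    """Return 0 for an exact match, 1 for adjacent levels, 2 otherwise.

    `observed` is None when no email was exchanged for the milestone;
    following Section 5.1, missing data are scored as 2.
    """
    if observed is None:
        return 2
    gap = abs(RANK[required] - RANK[observed])
    return min(gap, 2)


def scale_odds(baseline_probability, odds_ratio, units=1):
    """Apply an odds ratio `units` times to a baseline probability.

    The baseline probability is a hypothetical input for illustration;
    the paper reports odds ratios, not baseline probabilities.
    """
    odds = baseline_probability / (1.0 - baseline_probability)
    odds *= odds_ratio ** units
    return odds / (1.0 + odds)
```

For example, under a hypothetical baseline success probability of 0.50 (odds of 1), a one-unit increase in discrepancy with the reported OR = 0.65 gives odds of 0.65, that is, a probability of 0.65 / 1.65 ≈ 0.39. The same odds ratio would imply a different absolute change at a different baseline, which is why odds ratios are read as relative effects.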
6 Study 2: What Predicts Performance? (RQ2)

The second study examined which feature families best predict milestone achievement. Moving beyond Study 1’s theoretical focus, we compared models using Bloom-coded email categories, linguistic features from team communication, and log-based behavioral indicators, alone and in combination, to assess whether Bloom coding adds predictive value beyond automated text and log traces.

6.1 Method

In the second study, we fused system logs and email with Bloom-coded indicators into text sequences and numeric features. All timestamps were converted to UTC and aligned by teamID. For each milestone, we aggregated logs and emails since the previous milestone or session start. Emails were concatenated with a delimiter token (<|CHAIN|>). We also computed counts of logs and emails, action-type frequencies, average log and email lengths, elapsed time since the prior milestone, email response times, number of unique senders, and Bloom code frequencies. Together, these features constituted the milestone-level indicators. We grouped the resulting predictors into three groups:

– Text features: embeddings of chained_logs and chained_emails generated using the all-MiniLM-L6-v2 model from SentenceTransformers.
– Log features: numeric aggregates derived from system interactions, excluding Bloom-coded variables.
– Bloom features: counts of Bloom taxonomy codes (e.g., remembering, applying) extracted from coded emails within objectives.

Evaluation design. To assess predictive value, we trained $\ell_1$-regularized logistic regression models using different feature combinations, with milestone achievement as the binary outcome. Data were split into 80% training and 20% test sets, stratified by outcome and grouped by teamID, with 5-fold GroupKFold cross-validation on the training set. Inputs were standardized.
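Two steps from Section 6.1 can be sketched with the Python standard library alone: aggregating one team's emails and logs in the window since the previous milestone, and assigning cross-validation folds by team. This is an illustration under stated assumptions, not the authors' implementation. The dictionary keys `ts`, `sender`, and `text`, the helper names `aggregate_window` and `group_folds`, the features `n_emails` and `n_logs`, and the half-open window convention are hypothetical; `chained_emails`, `n_unique_senders`, and `time_taken` mirror feature names that appear in Figure 2. The round-robin fold assignment is a simplified stand-in for a group-aware splitter such as scikit-learn's `GroupKFold`; it only demonstrates the property that matters here, namely that all milestones of one team land in the same fold.

```python
from datetime import datetime, timezone

CHAIN = "<|CHAIN|>"  # delimiter token named in Section 6.1


def aggregate_window(emails, logs, start, end):
    """Summarize one team's activity in the half-open window (start, end].

    `emails` is a list of dicts with hypothetical keys "ts" (timezone-aware
    datetime), "sender", and "text"; `logs` is a list of dicts with "ts".
    `start` is the previous milestone (or session start), `end` the current one.
    """
    win_emails = [e for e in emails if start < e["ts"] <= end]
    win_logs = [entry for entry in logs if start < entry["ts"] <= end]
    return {
        "chained_emails": CHAIN.join(e["text"] for e in win_emails),
        "n_emails": len(win_emails),
        "n_logs": len(win_logs),
        "n_unique_senders": len({e["sender"] for e in win_emails}),
        "time_taken": (end - start).total_seconds(),
    }


def group_folds(team_ids, n_folds=5):
    """Assign every row of a team to the same fold (round-robin over teams).

    Keeping a team inside one fold prevents its milestones from appearing
    in both the training and the validation portion of a split.
    """
    teams = sorted(set(team_ids))
    fold_of_team = {team: i % n_folds for i, team in enumerate(teams)}
    return [fold_of_team[team] for team in team_ids]
```

A call such as `group_folds(["t1", "t1", "t2", "t3", "t2"], n_folds=2)` keeps both `t1` rows together and both `t2` rows together. A plain row-wise split would not guarantee this, and a model could then score well simply by recognizing a team's writing style rather than by learning patterns that transfer to unseen teams.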
$\ell_1$ regularization mitigates overfitting and enforces feature selection (by shrinking irrelevant coefficients to 0 and effectively dropping them out of the model). Performance was evaluated using AUC with 95% confidence intervals, computed across folds and via stratified bootstrap on the test set.

Interpretability. Interpretation relied on coefficients from the $\ell_1$-regularized logistic regression. With standardized features, the coefficient sign and magnitude directly reflect the direction and strength of the association with milestone achievement. Accordingly, post-hoc explainers designed for complex, non-linear architectures (e.g., SHAP/LIME) were unnecessary, reducing overfitting risk and avoiding known inconsistencies in these interpretability methods [34]. We report the ten largest absolute weights, spanning text embeddings and engineered indicators.

6.2 Results

Table 2 reports predictive performance across feature sets. Bloom-coded features alone performed at near-chance levels, whereas text and log features were substantially more predictive. Combining text and log features yielded the strongest results (test AUC = 0.80), indicating a complementary signal, with Bloom features adding little incremental value beyond embeddings.

Table 2. Cross-validated and test set performance for different feature sets.

| Model | CV AUC | CV 95% CI | Test AUC | Test 95% CI |
|---|---|---|---|---|
| Bloom only | 0.551 | [0.468, 0.634] | 0.541 | [0.469, 0.615] |
| Text only | 0.740 | [0.720, 0.760] | 0.727 | [0.666, 0.786] |
| Log only | 0.714 | [0.667, 0.761] | 0.727 | [0.669, 0.783] |
| Text + Bloom | 0.740 | [0.720, 0.760] | 0.727 | [0.666, 0.786] |
| Log + Bloom | 0.714 | [0.667, 0.761] | 0.727 | [0.669, 0.783] |
| Text + Log | 0.790 | [0.760, 0.821] | 0.804 | [0.747, 0.856] |
| All (Text + Log + Bloom) | 0.790 | [0.760, 0.821] | 0.804 | [0.747, 0.856] |

Figure 2 displays the ten strongest predictors from the full model.
Most derive from chained_emails embeddings, reinforcing the predictive value of email communication, with additional contributions from temporal and structural features. The coefficient sign indicates the direction of association with milestone achievement.

[Figure 2: bar chart titled “Top 10 Feature Weights, Fully Specified Model”; x-axis: Logistic Regression Coefficient (L1), from −1.5 to 1.5; features shown: chained_emails__emb_326, chained_emails__emb_8, time_taken, chained_emails__emb_280, chained_emails__emb_282, n_unique_senders, chained_emails__emb_32, chained_emails__emb_299, chained_emails__emb_358, chained_emails__emb_201]

Fig. 2. Top ten features in the fully specified model (Text+Log+Bloom). Positive weights indicate higher predicted probability of milestone achievement. Subscripts for embedding-based features represent the n-th dimension of the vector.

7 General Discussion

Instructional alignment has long been treated as a central principle of learning design [8, 30], yet it is rarely operationalized in authentic, data-rich contexts such as SBL. Most prior work examines alignment through curriculum artifacts or rubric-based analyses [6], or reviews scaffolding in simulations without measuring alignment as it occurs during activity [16].

This article addresses this gap by integrating theory-driven coding with predictive modeling of multimodal team data. Study 1 frames alignment as a discrepancy between instructional objectives and enacted communication, while Study 2 compares the predictive value of Bloom-coded features with text and log data. Together, the studies connect interpretable, theory-based measures of alignment with scalable analytics that support accurate prediction.

7.1 Study 1: Alignment and Milestone Success

From a constructive alignment perspective, Study 1 results explain why discrepancy, rather than nominal Bloom levels, predicts success.
Biggs’ account of alignment emphasizes that learning improves when activities and assessment elicit the same cognitive work as the intended objectives [8]. Our finding that misaligned teams underperform extends this principle to simulation-based contexts using trace data. Prior meta-analyses of scenario-based learning similarly show that scaffolds improve outcomes by aligning what learners actually do with the targeted cognitive demands, not by merely increasing task difficulty [16, 15]. In tabletop exercises, where reasoning is enacted primarily through time-pressured communication [43, 39], a Bloom gap likely reflects coordination without the intended analytic, evaluative, or generative thinking, consistent with work distinguishing surface participation from deeper cognition [44].

For TTX design, these results suggest prioritizing scaffolds that help teams maintain reasoning at the intended cognitive level rather than adding more complex injects. Because TTXs generate rich communication traces, they enable real-time alignment diagnosis by combining transcription, automated classification, and predictive modeling. Comparable methods have captured self-regulated learning processes as they unfold [13]. Applied to TTXs, such approaches could enable timely prompts or feedback when misalignment emerges, turning Bloom discrepancy from a post-hoc indicator into a mechanism for just-in-time support in high-pressure learning environments.

7.2 Study 2: Predictive Value of Feature Families

Study 2 frames Bloom codes as interpretable diagnostics while showing that linguistic and interaction traces are more predictive of performance.
This mirrors prior discourse-analytic and learning analytics work demonstrating that models grounded in communication structure, language use, and temporal participation outperform coarse categorical labels, and that combining multiple trace types yields complementary value [33, 11, 47]. Our results hence support combining fine-grained behavioral and linguistic signals for forecasting performance.

The limited contribution of Bloom frequencies beyond embeddings could indicate limited value for our prediction task. Alternatively, it could indicate that their information is already captured in the semantic structure of communication traces. This aligns with evidence that large language models achieve strong classification performance while reducing interpretability [37]. Practically, this suggests a division of labor: Bloom coding supports explanation and design, whereas embeddings and engineered features support real-time risk detection. Similar complementarities between rich interaction logs and textual analysis have been reported in large-scale cyber and simulation-based exercises [29, 33].

Across both studies, Bloom discrepancy helps explain why teams succeed or struggle, while multimodal features indicate who is likely to do so and when. For AIED, this suggests a pipeline in which predictive models flag at-risk moments using text and log traces, and alignment analytics provide cognitively meaningful rationales for feedback and redesign. Analytics related to this feedback could be directly shown to students to enhance learning or to instructors to improve instruction accompanying the simulation-based training or curricular redesign. This synthesis responds to calls for analytics that are simultaneously accurate and pedagogically actionable [45], and it links platform-level telemetry with discourse-level evidence of thinking in TTXs and related simulations.
Future work should extend these diagnostics beyond Bloom categories toward network-based representations, such as epistemic network analytics, to support more nuanced and instructionally useful insights [12].

7.3 Limitations and Future Work

Despite diverse data spanning two countries, our findings are bounded by the specific cybersecurity platform and TTXs, each lasting approximately 1.5 hours. Broader validity will require replication across domains and longer SBL formats, ideally incorporating milestone-level difficulty controls and alternative operationalizations that model reasoning trajectories rather than peak Bloom levels. Our results are also correlational in nature, calling for quasi-experimental studies potentially matching groups with different Bloom discrepancies by prior knowledge, ability, or similarly adjusting for milestone difficulty.

We prioritized interpretable linear models, but more complex architectures could improve predictive performance, and so could more complex measures of Bloom discrepancy in larger samples, such as penalizing larger discrepancies more strongly instead of treating all Bloom taxonomy gaps as equally spaced. Future work should also examine hybrid approaches that pair high-performing multimodal models with explanations instructors can readily use. We also acknowledge that more qualitative analysis will be needed to make embedding-based features consumable for students and teachers (e.g., by sampling prototypical examples corresponding to changes in salient embedding dimensions [10]). Finally, correlating milestone performance with assessments of learning (e.g., via external pre-post tests) as well as studying fine-grained process measures of remote collaboration (e.g., email revisions) are subject to future work.
8 Conclusion

We operationalize constructive alignment as a trace-based measure for simulation learning and show how it complements multimodal prediction by integrating linguistic communication (email/text embeddings) and behavioral interaction logs. In cybersecurity tabletop exercises, alignment, measured as Bloom-level discrepancy between intended objectives and enacted communication, predicted milestone success, whereas nominal Bloom level did not. At the same time, linguistic and interaction traces outperformed Bloom frequencies in prediction, separating explanation from detection: alignment explains why teams succeed or fail, while multimodal features indicate who is at risk and when.

This supports an actionable AI-based pipeline: text and log models flag impending milestone failures, and alignment diagnostics guide targeted, cognitively grounded support such as prompts to analyze or justify. Although evaluated in cybersecurity TTXs with human-coded labels, the approach generalizes to other domains, semi-automated coding, and embedded interventions triggered by misalignment. By combining predictive accuracy with explanatory clarity, this work advances AIED toward timely, pedagogically meaningful guidance.

Acknowledgments. This research was supported by the Open Calls for Security Research 2023–2029 (OPSEC) program granted by the Ministry of the Interior of the Czech Republic under No. VK01030007 – Intelligent Tools for Planning, Conducting, and Evaluating Tabletop Exercises.

References

1. Study dataset. https://doi.org/10.5281/zenodo.19249686
2. ACM Committee (CCECC): Bloom's for computing: Enhancing bloom's revised taxonomy with verbs for computing disciplines (2023), https://ccecc.acm.org/assessment/blooms-for-computing
3. Angafor, G., Yevseyeva, I., Maglaras, L.: Malaware: A tabletop exercise for malware security awareness education and incident response training. Internet of Things and Cyber-Physical Systems 4, 280–292 (2024)
4. Asatryan, H., Tousside, B., Mohr, J., Neugebauer, M., Bijl, H., Spiegelberg, P., Frohn-Schauf, C., Frochte, J.: Exploring student expectations and confidence in learning analytics. In: Proceedings of the 14th Learning Analytics and Knowledge Conference. pp. 892–898. LAK '24, ACM, New York, NY, USA (2024)
5. Asif, R., Merceron, A., Pathan, M.K.: Investigating performance of students: a longitudinal study. In: Proceedings of the Fifth International Conference on Learning Analytics And Knowledge. pp. 108–112. LAK '15, Association for Computing Machinery, New York, NY, USA (2015). https://doi.org/10.1145/2723576.2723579
6. Ayyanathan, N.: Learning analytics model and bloom's taxonomy based evaluation framework for the post graduate students' project assessment – a blended project based learning management system with rubric referenced predictors. Shanlax International Journal of Education 10(3), 48–60 (Jun 2022)
7. Bates, D., Mächler, M., Bolker, B., Walker, S.: Fitting linear mixed-effects models using lme4. Journal of statistical software 67, 1–48 (2015)
8. Biggs, J.: Enhancing teaching through constructive alignment. Higher education 32(3), 347–364 (1996)
9. Bloom, B.S., Engelhart, M.D., Furst, E.J., Hill, W.H., Krathwohl, D.R., et al.: Taxonomy of educational objectives: The classification of educational goals. Handbook 1: Cognitive domain. Longman New York (1956)
10. Borchers, C., Patel, M., Lee, S.M., Botelho, A.F.: Disentangling learning from judgment: Representation learning for open response analytics. arXiv preprint arXiv:2512.23941 (2025)
11. Borchers, C., Tian, X., Boyer, K.E., Israel, M.: Combining log data and collaborative dialogue features to predict project quality in middle school ai education. arXiv preprint arXiv:2506.11326 (2025)
12. Borchers, C., Wang, Y., Karumbaiah, S., Ashiq, M., Shaffer, D.W., Aleven, V.: Revealing networks: Understanding effective teacher practices in ai-supported classrooms using transmodal ordered network analysis. In: Proceedings of the 14th Learning Analytics and Knowledge Conference. pp. 371–381 (2024)
13. Borchers, C., Zhang, J., Fleischer, H., Schanze, S., Aleven, V., Baker, R., et al.: Large language models generalize srl prediction to new languages within but not between domains. Journal of Educational Data Mining 17(2), 24–54 (2025)
14. Brennan, R., Perouli, D.: Generating and evaluating collective concept maps. In: LAK22: 12th International Learning Analytics and Knowledge Conference. pp. 570–576. LAK22, Association for Computing Machinery, New York, NY, USA (2022). https://doi.org/10.1145/3506860.3506918
15. Chernikova, O., Heitzmann, N., Stadler, M., Holzberger, D., Seidel, T., Fischer, F.: Simulation-based learning in higher education: A meta-analysis. Review of educational research 90(4), 499–541 (2020)
16. Chernikova, O., Sommerhoff, D., Stadler, M., Holzberger, D., Nickl, M., Seidel, T., Kasneci, E., Küchemann, S., Kuhn, J., Fischer, F., Heitzmann, N.: Personalization through adaptivity or adaptability? a meta-analysis on simulation-based learning in higher education. Educational Research Review 46, 100662 (2025)
17. Drachsler, H., Greller, W.: Privacy and analytics: it's a delicate issue a checklist for trusted learning analytics. In: Proceedings of the Sixth International Conference on Learning Analytics & Knowledge. pp. 89–98. LAK '16, Association for Computing Machinery, New York, NY, USA (2016). https://doi.org/10.1145/2883851.2883893
18. Franco, A., Holzer, A.: Fostering privacy literacy among high school students by leveraging social media interaction and learning traces in the classroom. In: LAK23: 13th International Learning Analytics and Knowledge Conference. pp. 538–544. LAK2023, Association for Computing Machinery, New York, NY, USA (2023)
19. Guzdial, M., du Boulay, B.: The history of computing education research. In: The Cambridge Handbook of Computing Education Research, chap. 1, pp. 11–39. Cambridge University Press, UK (2019)
20. Hmoud, M., Ali, S.: Aied bloom's taxonomy: A proposed model for enhancing educational efficiency and effectiveness in the artificial intelligence era. The International Journal of Technologies in Learning 31, 111–128 (01 2024)
21. Joint Task Force on Cybersecurity Education: Cybersecurity curricular guideline (2017), http://cybered.acm.org
22. Karpen, S.C., Welch, A.C.: Assessing the inter-rater reliability and accuracy of pharmacy faculty's bloom's taxonomy classifications. Currents in Pharmacy Teaching and Learning 8(6), 885–888 (2016)
23. Kävrestad, J., Johansson, S., Bergström, E.: Using tabletop exercises to raise cybersecurity awareness of decision-makers. In: Oliva, G., Panzieri, S., Hämmerli, B., Pascucci, F., Faramondi, L. (eds.) Critical Information Infrastructures Security. pp. 231–248. Springer Nature Switzerland, Cham (2025)
24. Kitto, K., Sarathy, N., Gromov, A., Liu, M., Musial, K., Buckingham Shum, S.: Towards skills-based curriculum analytics: can we automate the recognition of prior learning? In: Proceedings of the Tenth International Conference on Learning Analytics & Knowledge. pp. 171–180. LAK '20, Association for Computing Machinery, New York, NY, USA (2020). https://doi.org/10.1145/3375462.3375526
25. Levin, N., Baker, R., Nasiar, N., Hutt, S., et al.: Evaluating gaming detector model robustness over time. In: Proceedings of the 15th international conference on educational data mining, international educational data mining society (2022)
26. Li, Y., Rakovic, M., Poh, B.X., Gasevic, D., Chen, G.: Automatic classification of learning objectives based on bloom's taxonomy. In: Proceedings of the 15th International Conference on Educational Data Mining. p. 530 (2022)
27. Muhamad Sori, Z., Wan Mustapha, W.H.: Bloom's taxonomy for effective teaching and learning. SSRN Electronic Journal p. 25 (Jan 2025)
28. Nat. Initiative for Cybersecurity Careers and Studies (NICCS): Incident response (2020), https://niccs.cisa.gov/tools/nice-framework/work-role/incident-response
29. Pfaller, T., Skopik, F., Reuter, L., Leitner, M.: Data collection in cyber exercises through monitoring points: Observing, steering, and scoring (2025)
30. Porter, A.C.: Measuring the content of instruction: Uses in research and practice. Educational researcher 31(7), 3–14 (2002)
31. Raj, R., Sabin, M., Impagliazzo, J., Bowers, D., Daniels, M., Hermans, F., Kiesler, N., Kumar, A.N., MacKellar, B., McCauley, R., Nabi, S.W., Oudshoorn, M.: Professional competencies in computing education: Pedagogies and assessment. In: Working Group Reports on Innovation and Technology in Computer Science Education. pp. 133–161. ITiCSE-WGR, ACM, New York, NY, USA (2022)
32. Roschelle, J., Teasley, S.D.: The construction of shared knowledge in collaborative problem solving. In: Computer supported collaborative learning. pp. 69–97. Springer (1995)
33. Suraworachet, W., Seon, J., Cukurova, M.: Predicting challenge moments from students' discourse: A comparison of gpt-4 to two traditional natural language processing approaches. In: Proceedings of the 14th Learning Analytics and Knowledge conference. pp. 473–485 (2024)
34. Swamy, V., Radmehr, B., Krco, N., Marras, M., Käser, T.: Evaluating the explainers: Black-box explainable machine learning for student success prediction in moocs. In: Proceedings of the 15th International Conference on Educational Data Mining. p. 98 (2022)
35. Tello, A.B., Wu, Y.T., Perry, T., Yu-Pei, X.: A novel yardstick of learning time spent in a programming language by unpacking bloom's taxonomy. In: Science and Information Conference. pp. 785–794. Springer (2020)
36. The Joint Task Force on Computer Science Curricula: Computing Curricula 2023. ACM, New York, NY, USA (2024). https://doi.org/10.1145/3664191
37. Vajjala, S., Shimangaud, S.: Text classification in the llm era–where do we stand? arXiv preprint arXiv:2502.11830 (2025)
38. Vogelsang, T., Ruppertz, L.: On the validity of peer grading and a cloud teaching assistant system. In: Proceedings of the Fifth International Conference on Learning Analytics And Knowledge. pp. 41–50. LAK '15, Association for Computing Machinery, New York, NY, USA (2015). https://doi.org/10.1145/2723576.2723633
39. Švábenský, V., Vykopal, J., Horák, M., Hofbauer, M., Čeleda, P.: From Paper to Platform: Evolution of a Novel Learning Environment for Tabletop Exercises. In: Innovation and Technology in Computer Science Education. pp. 213–219. ACM, New York, NY, USA (2024). https://doi.org/10.1145/3649217.3653639
40. Švábenský, V., Vykopal, J., Čeleda, P.: What Are Cybersecurity Education Papers About? A Systematic Literature Review of SIGCSE and ITiCSE Conferences. In: Proceedings of the 51st ACM Technical Symposium on Computer Science Education. pp. 2–8. SIGCSE '20, Association for Computing Machinery, New York, NY, USA (02 2020). https://doi.org/10.1145/3328778.3366816
41. Švábenský, V., Vykopal, J., Čeleda, P., Kraus, L.: Applications of Educational Data Mining and Learning Analytics on Data From Cybersecurity Training. Education and Information Technologies 27, 12179–12212 (2022)
42. Vykopal, J., Čeleda, P., Seda, P., Švábenský, V., Tovarňák, D.: Scalable Learning Environments for Teaching Cybersecurity Hands-on. In: Proceedings of the 51st IEEE Frontiers in Education Conference. pp. 1–9. FIE '21, IEEE, New York, NY, USA (10 2021). https://doi.org/10.1109/FIE49875.2021.9637180
43. Vykopal, J., Čeleda, P., Švábenský, V., Hofbauer, M., Horák, M.: Research and Practice of Delivering Tabletop Exercises. In: 29th Conference on Innovation and Technology in Computer Science Education. pp. 220–226. ITiCSE '24, ACM, New York, NY, USA (2024). https://doi.org/10.1145/3649217.3653642
44. Wine, M., Hoffman, A.M.: Reinforcing webb's depth of knowledge: Laterally extending dok by acknowledging proficiency's impact on cognitive demand. In: 2023 AERA Annual Meeting: Interrogating Consequential Education Research in Pursuit of Truth. pp. 13–16 (2023)
45. Wise, A.F.: Designing pedagogical interventions to support student use of learning analytics. In: Proceedings of the fourth international conference on learning analytics and knowledge. pp. 203–211 (2014)
46. Won, M., Carrington, L.R., Espinoza, D.M., Ali, M.H., Dasgupta, D.: A cybersecurity summer camp for high school students using autonomous r/c cars. In: Tech. Symposium on Comp. Sci. Educ. pp. 1435–1441. SIGCSE, ACM, New York, NY, USA (2024). https://doi.org/10.1145/3626252.3630758
47. Wong, K., Wu, B., Bulathwela, S., Cukurova, M.: Rethinking the potential of multimodality in collaborative problem solving diagnosis with large language models. In: International Conference on Artificial Intelligence in Education. pp. 18–32. Springer (2025)
48. Yan, L., Martinez-Maldonado, R., Zhao, L., Dix, S., Jaggard, H., Wotherspoon, R., Li, X., Gašević, D.: The role of indoor positioning analytics in assessment of simulation-based learning. British Journal of Educational Technology 54(1), 267–292 (2023). https://doi.org/10.1111/bjet.13262
