"What Did It Actually Do?": Understanding Risk Awareness and Traceability for Computer-Use Agents

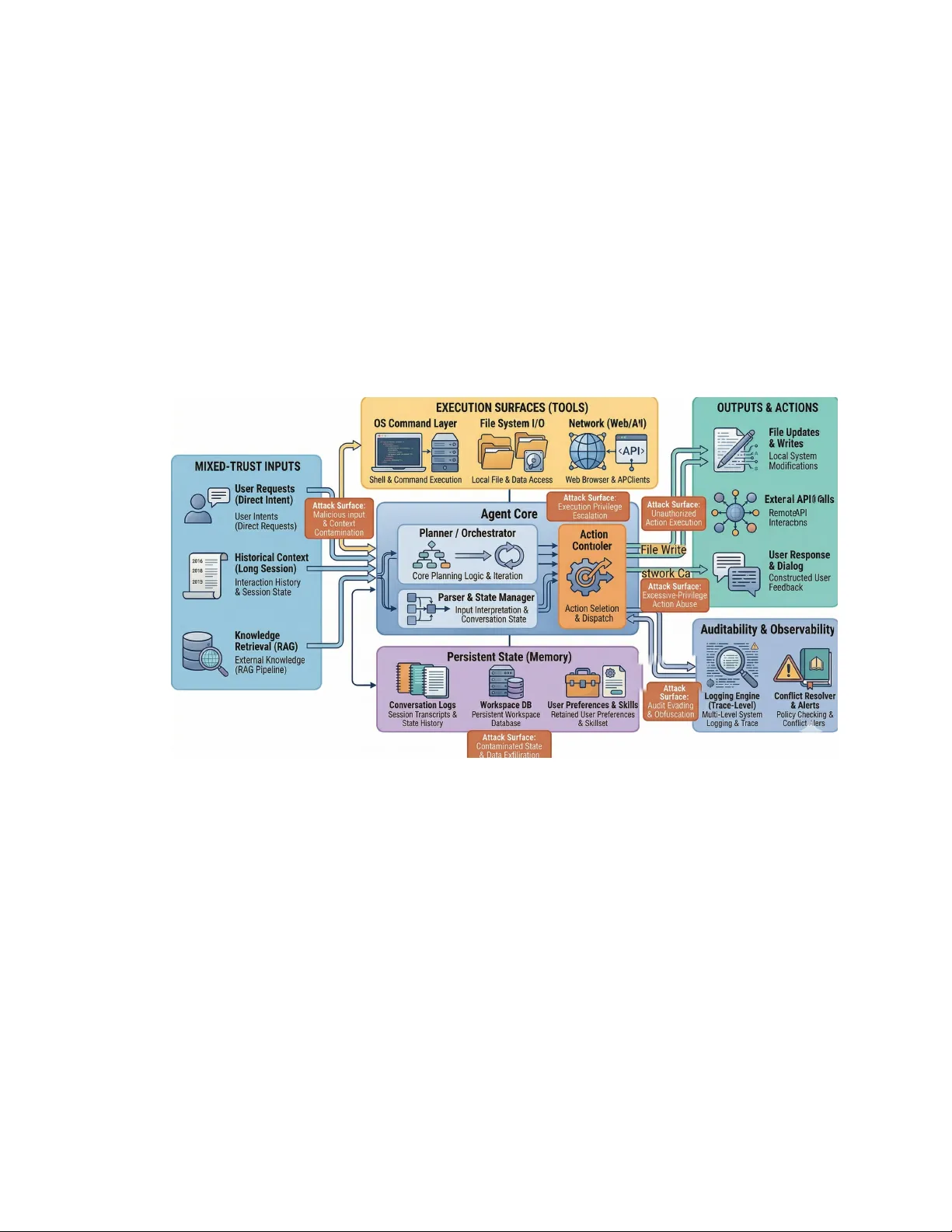

Personalized computer-use agents are rapidly moving from expert communities into mainstream use. Unlike conventional chatbots, these systems can install skills, invoke tools, access private resources, and modify local environments on users' behalf. Y…

Authors: Zifan Peng