Next-Token Prediction and Regret Minimization

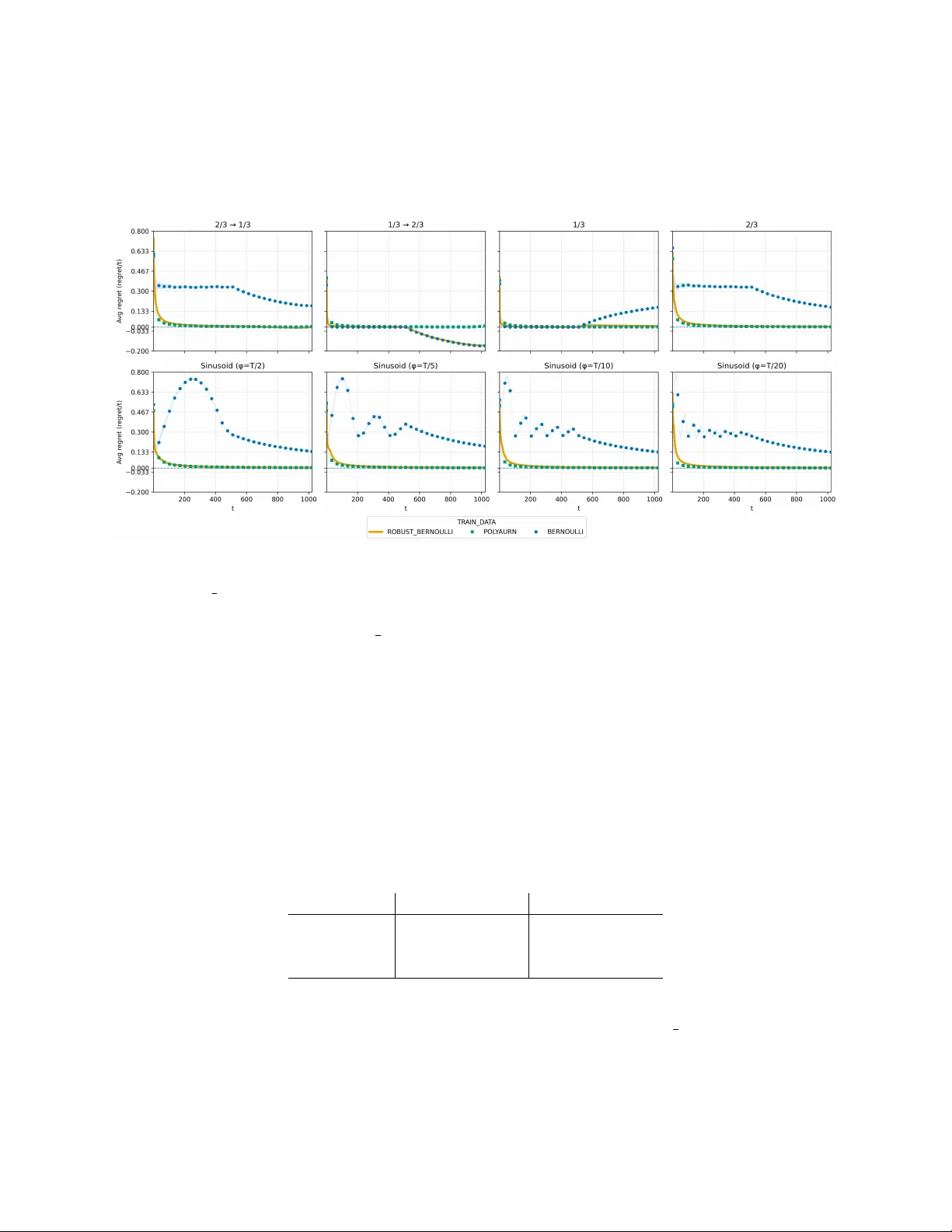

We consider the question of how to employ next-token prediction algorithms in adversarial online decision-making environments. Specifically, if we train a next-token prediction model on a distribution $\mathcal{D}$ over sequences of opponent actions,…

Authors: Mehryar Mohri, Clayton Sanford, Jon Schneider