On the Convergence of Proximal Algorithms for Weakly-convex Min-max Optimization

We study alternating first-order algorithms with no inner loops for solving nonconvex-strongly-concave min-max problems. We show the convergence of the alternating gradient descent–ascent algorithm by proposing a substantially simplified proo…

Authors: Guido Tapia-Riera, Camille Castera, Nicolas Papadakis

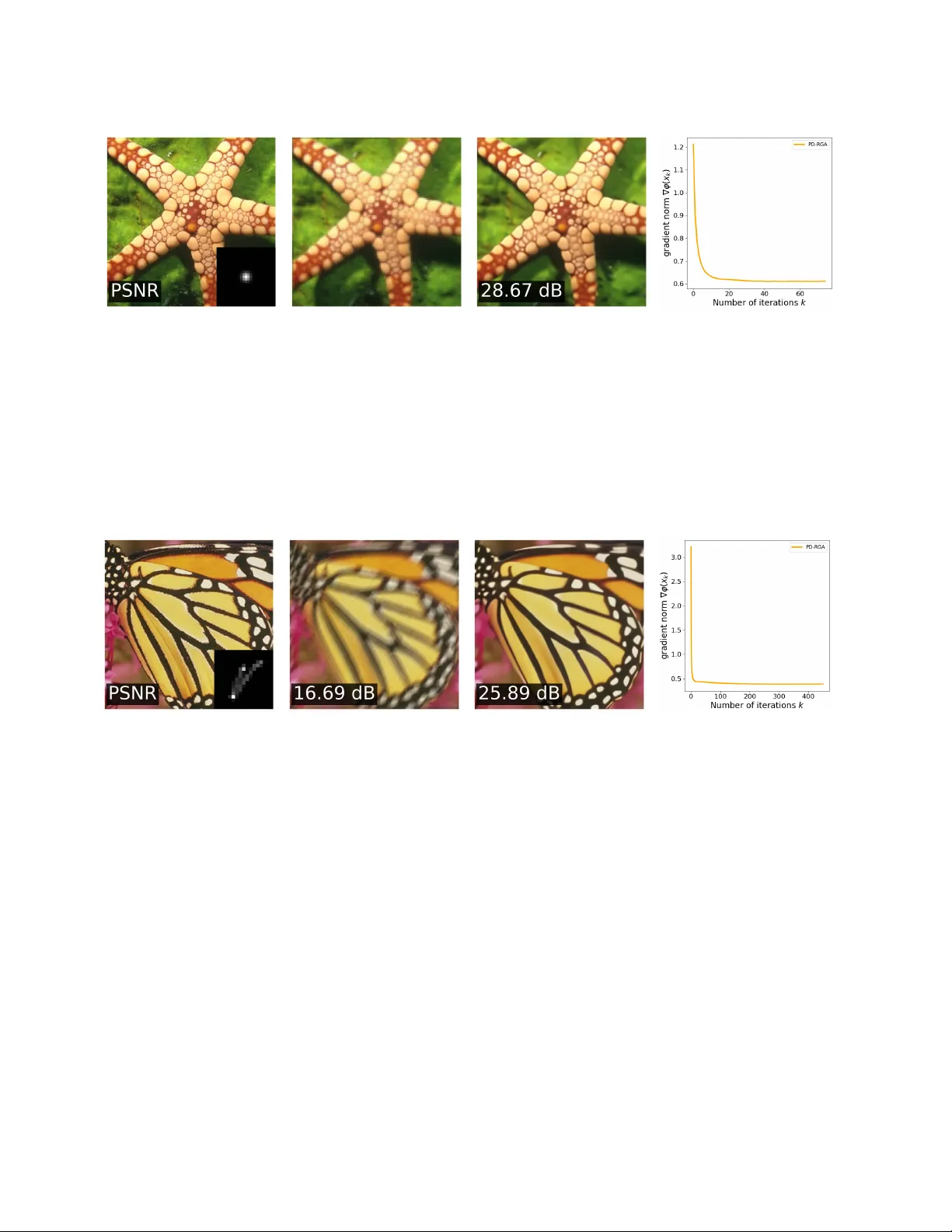

Univ. Bordeaux, CNRS, INRIA, Bordeaux INP, IMB, UMR 5251, F-33400 Talence, France

ABSTRACT

We study alternating first-order algorithms with no inner loops for solving nonconvex–strongly-concave min-max problems. We show the convergence of the alternating gradient descent–ascent algorithm by proposing a substantially simplified proof compared to previous ones. This simplified proof allows us to enlarge the set of admissible step-sizes. Building on this general reformulation, we also prove the convergence of a doubly proximal algorithm in the weakly convex–strongly concave setting. Finally, we show how this new result opens the way to new applications of min-max optimization algorithms for solving regularized imaging inverse problems with neural networks in a plug-and-play manner.

1 Introduction

A challenge in min-max optimization is to design algorithms that remain applicable in the absence of convexity and/or concavity of the objective functions. This challenge arises in tasks such as training generative adversarial networks (GANs) [13], adversarial learning [20], robust training of neural networks [21], and distributed signal processing [10, 22] (see [26] for further details on applications). We consider optimization problems of the form
$$\min_{x \in \mathbb{R}^d} \max_{y \in \mathbb{R}^n} \big\{ \Phi(x, y) - h(y) \big\}, \tag{1.1}$$
where $\Phi : \mathbb{R}^d \times \mathbb{R}^n \to \mathbb{R}$, called the coupling, is differentiable with Lipschitz-continuous gradient. We assume that $\Phi$ is concave with respect to $y$ and ρ-weakly convex in $x$ for some $\rho > 0$. This means that, for all $y \in \mathbb{R}^n$, $\Phi(\cdot, y)$ is possibly nonconvex but $\Phi(\cdot, y) + \frac{\rho}{2}\|\cdot\|^2$ is convex (see Definition 2). The function $h : \mathbb{R}^n \to \mathbb{R} \cup \{+\infty\}$, referred to as the regularizer, is proper, convex and lower semicontinuous.
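The following NumPy sketch makes the weak-convexity convention concrete. Here ρ-weak convexity means that the function plus $\frac{\rho}{2}\|\cdot\|^2$ is convex, as in Definition 2. The sketch tests this on a grid for the scalar function $g$ of the toy experiment in Section 5.1. The helper names are hypothetical. A grid test of discrete second differences is only a numerical heuristic, not a proof.

```python
import numpy as np


def g(x):
    """Piecewise function of the toy experiment (Section 5.1); g'' = -2 inside [-0.5, 0.5], +2 outside."""
    x = np.asarray(x, dtype=float)
    return np.where(np.abs(x) <= 0.5, 0.5 - x**2, (np.abs(x) - 1.0) ** 2)


def min_second_difference(f, grid):
    """Smallest discrete second derivative of f on a uniform grid."""
    step = grid[1] - grid[0]
    vals = f(grid)
    d2 = (vals[2:] - 2.0 * vals[1:-1] + vals[:-2]) / step**2
    return float(d2.min())


def looks_weakly_convex(f, rho, grid, tol=1e-6):
    """Grid heuristic for rho-weak convexity: is f + (rho/2) * x**2 convex on the grid?"""
    def shifted(x):
        return f(x) + 0.5 * rho * x**2
    return min_second_difference(shifted, grid) >= -tol


grid = np.linspace(-2.0, 2.0, 4001)
print(looks_weakly_convex(g, 2.0, grid))  # True: g + x**2 has second derivative 0 or 4
print(looks_weakly_convex(g, 1.0, grid))  # False: g + x**2 / 2 has second derivative -1 near 0
```

With the convention $\Phi(\cdot, y) + \rho\|\cdot\|^2$ (without the factor 1/2), the same $g$ would already pass the test with ρ = 1. The factor 1/2 is what makes "ρ = 2" the right constant for this example.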
∗ Corresponding author (Guido Tapia-Riera): guido-samuel.tapia-riera@math.u-bordeaux.fr

This paper studies the following two alternating algorithms for tackling (1.1): for all $k \ge 0$,

Gradient Descent–Regularized Gradient Ascent (GD-RGA)
$$\begin{cases} x_{k+1} = x_k - \eta_x \nabla_x \Phi(x_k, y_k), \\ y_{k+1} = \operatorname{prox}_{\eta_y h}\big(y_k + \eta_y \nabla_y \Phi(x_{k+1}, y_k)\big), \end{cases}$$

Proximal Descent–Regularized Gradient Ascent (PD-RGA)
$$\begin{cases} x_{k+1} = \operatorname{prox}_{\eta_x \Phi(\cdot, y_k)}(x_k), \\ y_{k+1} = \operatorname{prox}_{\eta_y h}\big(y_k + \eta_y \nabla_y \Phi(x_{k+1}, y_k)\big), \end{cases}$$

where $\eta_x, \eta_y > 0$ are step-sizes, prox is the proximal operator (see Definition 5), and $\nabla_x$, $\nabla_y$ denote the partial derivatives (or gradients) w.r.t. $x$ and $y$, respectively. The main advantage of GD-RGA and PD-RGA is that they do not require solving the inner problem (the max in (1.1)) exactly at each iteration. As such, they avoid the computational cost of bi-level optimization approaches that rely on inner loops [30, 2, 9].

It is well known that, when the coupling $\Phi$ is bilinear, the iterates of simultaneous first-order methods applied to (1.1) may diverge, whereas those of the alternating scheme remain bounded. Here, simultaneous means that both variables are updated from the previous iterate. In recent years, this behavior, together with more favorable convergence properties, has led practitioners to prefer alternating methods over their simultaneous counterparts [1, 11, 31]. GD-RGA relies on explicit gradient descent–ascent updates of the coupling $\Phi$ and has been studied previously [3, 17]. In contrast, proximal (or implicit) updates of $\Phi$, as in PD-RGA, have received significantly less attention. Our interest in this second approach is motivated by recent advances where neural networks are designed and trained to act as firmly non-expansive operators [24] or as proximal operators of weakly convex functions [15]. Algorithms for solving (1.1) have been thoroughly studied when $\Phi$ is convex–concave [5, 12, 14, 8].
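The bilinear phenomenon can be observed on the simplest coupling $\Phi(x, y) = xy$ with $h \equiv 0$, for which GD-RGA reduces to alternating gradient descent–ascent. This bilinear coupling is not strongly concave and lies outside the assumptions of this paper. The step-size, starting point and iteration count in this minimal sketch are arbitrary choices. The simultaneous iterates grow in norm by a factor $\sqrt{1 + \eta^2}$ per step. For $\eta < 2$, the alternating iterates conserve the positive-definite quadratic form $x^2 + y^2 - \eta xy$ and therefore stay bounded.

```python
import math


def simultaneous_gda(x, y, eta, n_iter):
    """Simultaneous GDA on Phi(x, y) = x * y: both updates read the previous iterate."""
    for _ in range(n_iter):
        x, y = x - eta * y, y + eta * x
    return x, y


def alternating_gda(x, y, eta, n_iter):
    """Alternating GDA on Phi(x, y) = x * y: the y-update reads the new x (GD-RGA with h = 0)."""
    for _ in range(n_iter):
        x = x - eta * y
        y = y + eta * x
    return x, y


eta, n_iter = 0.1, 1000
sim_norm = math.hypot(*simultaneous_gda(1.0, 1.0, eta, n_iter))
alt_norm = math.hypot(*alternating_gda(1.0, 1.0, eta, n_iter))
# Simultaneous: the norm is multiplied by sqrt(1 + eta**2) at every step.
# Alternating: x**2 + y**2 - eta*x*y is conserved, so the iterates stay on an ellipse.
print(f"simultaneous: {sim_norm:.1f}, alternating: {alt_norm:.3f}")
```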
In the convex–concave setting, under appropriate constraint qualifications [28, 7], algorithms may converge to so-called saddle points $(x^*, y^*)$, i.e., points satisfying
$$\Phi(x^*, y) - h(y) \le \Phi(x^*, y^*) - h(y^*) \le \Phi(x, y^*) - h(y^*) \quad \text{for all } (x, y) \in \mathbb{R}^d \times \mathbb{R}^n.$$
However, this characterization of solutions fails in the nonconvex–strongly concave setting, where only the first inequality can be guaranteed. In prior work on the nonconvex setting [3, 6, 17], problem (1.1) is reformulated as
$$\min_{x \in \mathbb{R}^d} \varphi(x), \quad \text{where} \quad \varphi(x) := \max_{y \in \mathbb{R}^n} \Phi(x, y) - h(y), \tag{1.2}$$
and the notion of solution is relaxed to merely finding approximations of critical points of $\varphi$. Our work considers the case where $\varphi$ in (1.2) is nonconvex but differentiable. This requires either $h$ to be strongly convex (i.e., $-h$ strongly concave) or $\Phi$ to be strongly concave w.r.t. its second argument (see Proposition 2.3). In this setting, we aim to find ε-stationary points, defined next.

Definition 1 (ε-stationary points). Let $\varepsilon \ge 0$. We say that $x$ is an ε-stationary point of $\varphi$ if $\|\nabla\varphi(x)\| \le \varepsilon$. If $\varepsilon = 0$, then $x$ is a stationary point.

1.1 Main contributions

For GD-RGA, we revisit the proof of convergence to ε-stationary points of (1.2). By getting rid of approximations in the key parts of the analysis (see Lemma 3.5), we obtain a larger range of admissible step-sizes for GD-RGA (see Theorem 3.2). We also provide a significantly shorter proof than that of prior work [3, 17].

We prove the convergence of PD-RGA (Theorem 4.1) with the same complexity as GD-RGA. To the best of our knowledge, this is the first proof of convergence for this algorithm in the weakly convex setting.

Finally, we use PD-RGA to tackle imaging inverse problems in a plug-and-play fashion. There, the proximal operator $\operatorname{prox}_{\eta_x \Phi(\cdot, y_k)}$ in PD-RGA is implemented by a neural network.
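The two iterations above can be written generically in terms of user-supplied oracles. The following NumPy sketch does this; the callable names `grad_x`, `grad_y`, `prox_h` and `prox_coupling_x` are hypothetical, not taken from the paper. The assumed test problem is $\Phi(x, y) = -\frac14\|x\|^2 + \langle x, y\rangle$ with $h = \frac12\|\cdot\|^2$. Its constants are:
- ρ = 1/2;
- µ = 1, carried by $-h$;
- $L_{xy} = L_{yx} = 1$;
- $L_{yy} = 1$, chosen as in Remark 3.1, so $\kappa_y = 1$.

For this problem, $\varphi(x) = \frac14\|x\|^2$ and $y^*(x) = x$. With $\eta_y = 1$, the choice $\eta_x = 0.25$ satisfies both the bound $\eta_x < 1/2$ of Theorem 3.2 and the bound $\eta_x < 1/(2 + \sqrt{2}) \approx 0.29$ of Theorem 4.1.

```python
import numpy as np


def gd_rga(x, y, grad_x, grad_y, prox_h, eta_x, eta_y, n_iter):
    """GD-RGA: explicit descent step in x, then a proximal ascent step in y using x_{k+1}."""
    for _ in range(n_iter):
        x = x - eta_x * grad_x(x, y)
        y = prox_h(y + eta_y * grad_y(x, y), eta_y)
    return x, y


def pd_rga(x, y, prox_coupling_x, grad_y, prox_h, eta_x, eta_y, n_iter):
    """PD-RGA: implicit (proximal) step on Phi(., y_k) in x, then the same y-step as GD-RGA."""
    for _ in range(n_iter):
        x = prox_coupling_x(x, y, eta_x)
        y = prox_h(y + eta_y * grad_y(x, y), eta_y)
    return x, y


# Assumed test problem: Phi(x, y) = -0.25*||x||^2 + <x, y>, h(y) = 0.5*||y||^2,
# so that phi(x) = 0.25*||x||^2 and y*(x) = x.
grad_x = lambda x, y: -0.5 * x + y
grad_y = lambda x, y: x
prox_h = lambda v, t: v / (1.0 + t)                              # prox of t * 0.5*||.||^2
prox_coupling_x = lambda x, y, t: (x - t * y) / (1.0 - 0.5 * t)  # prox of t * Phi(., y), needs t < 2

rng = np.random.default_rng(0)
x0, y0 = rng.standard_normal(3), rng.standard_normal(3)
for name, (x, y) in {
    "GD-RGA": gd_rga(x0, y0, grad_x, grad_y, prox_h, 0.25, 1.0, 200),
    "PD-RGA": pd_rga(x0, y0, prox_coupling_x, grad_y, prox_h, 0.25, 1.0, 200),
}.items():
    # ||grad phi(x)|| = ||x|| / 2 and ||y - y*(x)|| = ||y - x||
    print(name, np.linalg.norm(0.5 * x), np.linalg.norm(y - x))
```

The only difference between the two functions is the x-update. Passing the prox of $\eta_x \Phi(\cdot, y)$ as a callable is what allows a learned operator to be plugged in, as in the plug-and-play application.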
1.2 Related Work

Lower bounds have been derived for first-order algorithms in the nonconvex–strongly-concave smooth setting [32, 16]. They state that a dependence of order $\varepsilon^{-2}$ in the number of iterations required to reach an ε-stationary point is unavoidable, and our methods attain this rate. Stochastic first-order methods for solving (1.1) have also been studied. These mainly exploit the geometric structure of the objective function, in particular the Polyak–Łojasiewicz condition [18], and variance reduction techniques [25]. Nonsmooth first-order methods arise in two situations. The first is when, instead of strong concavity, one assumes only concavity, or even nonconcavity, in the variable $y$ of the coupling $\Phi$ or of the regularizer $-h$. The second is when nonsmoothness is assumed in the coupling [19, 26, 17].

Closely-related work on GD-RGA. GD-RGA can be seen as a generalization of the smooth version of the algorithm proposed in [17]. There, convergence to ε-stationary points is established under two assumptions: $\Phi(x, \cdot)$ is strongly concave for all $x$, and $h$ is the indicator function of a nonempty, convex, and bounded set, so that $\operatorname{prox}_h$ is the projection onto this set. We relax the strong concavity assumption on $\Phi(x, \cdot)$ by allowing it to be carried by $-h$ instead. In addition, our analysis allows for general $h$ and corresponding proximal operators $\operatorname{prox}_h$. In particular, when $\Phi(x, \cdot)$ is strongly concave, we can drop the regularization by taking $h \equiv 0$, which is not possible in [17]. Finally, we provide a larger range of admissible step-sizes than in [17]. GD-RGA is also closely connected to Algorithm 2.1 in [3], where the authors include an additional convex regularizer $f$ w.r.t. the variable $x$, used via its proximal operator $\operatorname{prox}_f$. The results therein apply to the setting we consider for GD-RGA when the strong concavity originates from $-h$.
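The y-step of GD-RGA and PD-RGA only needs $\operatorname{prox}_{\eta_y h}$. The NumPy sketch below illustrates the choices of regularizer discussed above; the box $[lo, hi]^n$ and all function names are illustrative assumptions. It implements three choices:
- the indicator of a box, a nonempty, convex and bounded set whose prox is the Euclidean projection, as in the setting of [17];
- $h = \frac{\mu}{2}\|\cdot\|^2$, for which $-h$ carries the strong concavity;
- $h \equiv 0$.

```python
import numpy as np


def prox_box_indicator(v, tau, lo=-1.0, hi=1.0):
    """prox of tau * indicator of the box [lo, hi]^n: the Euclidean projection (tau plays no role)."""
    return np.clip(v, lo, hi)


def prox_squared_norm(v, tau, mu=1.0):
    """prox of tau * (mu/2)*||.||^2; with this h, -h is mu-strongly concave."""
    return v / (1.0 + tau * mu)


def prox_zero(v, tau):
    """prox of h = 0 is the identity (possible when Phi(x, .) is itself strongly concave)."""
    return v


def y_step(y, grad_y_val, eta_y, prox_h):
    """The y-update shared by GD-RGA and PD-RGA, given grad_y Phi(x_{k+1}, y_k)."""
    return prox_h(y + eta_y * grad_y_val, eta_y)


y = np.array([0.5, -0.8, 0.9])
grad = np.array([2.0, 0.1, -4.0])
for prox in (prox_box_indicator, prox_squared_norm, prox_zero):
    print(prox.__name__, y_step(y, grad, 0.5, prox))
```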
The key difference with the analysis of [3] concerns the case where $\Phi$ is strongly concave w.r.t. $y$. There, [3] relies on properties of the proximal operator $\operatorname{prox}_h$ associated with $h$, whereas we depart from this approach and use the ascent lemma (see Lemma 2.1). This separation allows us to relax the conditions imposed on the step-sizes. We note that both aforementioned works assume that the coupling $\Phi$ has a jointly Lipschitz continuous gradient, i.e., there exists $L_\Phi > 0$ such that
$$\|\nabla \Phi(x, y) - \nabla \Phi(x', y')\| \le L_\Phi \|(x, y) - (x', y')\| \quad \forall x, x' \in \mathbb{R}^d,\ y, y' \in \mathbb{R}^n.$$
We relax this assumption by requiring only block-wise Lipschitz continuity of the gradient (see Assumptions 1, 2, and 3). Through tighter inequalities, this yields a refined range of step-sizes. Similarly to [3], the work [6] introduces a regularizer $f$ w.r.t. the $x$-variable. Rather than focusing on ε-stationary points, they establish that any accumulation point of the generated sequence is a critical point of $\varphi + f$. This result requires assumptions that go beyond the scope of our work, in particular the semialgebraicity of the objective function. Since we share this block-wise Lipschitz continuity assumption on the gradient of $\Phi$, the mapping $\varphi$ exhibits properties similar to those studied in [3]. Furthermore, when $f \equiv 0$, their algorithm reduces to GD-RGA; however, the corresponding convergence analyses differ significantly.

Related work for PD-RGA. Although PD-RGA is less studied, [4] considers a similar algorithm that additionally features an inertial step on the variable $y$. However, the theoretical analysis in [4] covers the convex (and nonsmooth) setting and is only valid for nonzero momentum parameters. In comparison, we do not consider inertial steps, and we allow the coupling $\Phi$ to be weakly convex w.r.t. $x$, which requires a significantly different analysis.

Organization.
The paper is organized as follows. Section 2 provides tools from convex and nonconvex optimization, including regularity properties of the mapping $\varphi$ defined in (1.2). Convergence of GD-RGA and PD-RGA to ε-stationary points is studied in Sections 3 and 4, respectively. Finally, Section 5 presents numerical experiments, including Plug-and-Play image restoration.

2 Preliminaries

In this section, we provide the necessary background on:
- weakly and strongly convex functions;
- smooth (differentiable) functions;
- proximal operators associated with weakly convex functions;
- regularity properties of the mapping $\varphi$ defined in (1.2).

These concepts are essential tools for the convergence analysis of GD-RGA and PD-RGA towards an ε-stationary point.

Notation. We denote by $\|\cdot\|$ the $\ell_2$-norm, by $\mathrm{Id}$ the identity operator, by $\mathcal{O}$ the big O notation, by $\mathbb{N}_0 = \mathbb{N} \cup \{0\}$, and by $\|\cdot\|_S$ the spectral norm of a matrix.

2.1 Tools for min-max optimization

Definition 2 (Strong/weak convexity). Let $g : \mathbb{R}^d \to \mathbb{R} \cup \{+\infty\}$ and $\alpha > 0$. Then $g$ is α-strongly convex if $g(x) - \frac{\alpha}{2}\|x\|^2$ is convex, and α-weakly convex if $g(x) + \frac{\alpha}{2}\|x\|^2$ is convex. We say that a function $f$ is strongly concave if $-f$ is strongly convex.

Definition 3 (Subdifferential). Let $g : \mathbb{R}^d \to \mathbb{R} \cup \{+\infty\}$ and $x, z \in \mathbb{R}^d$. If $g(x') \ge g(x) + \langle z, x' - x \rangle$ for all $x' \in \mathbb{R}^d$, we say that $z$ is a subgradient of $g$ at $x$. The set of all subgradients of $g$ at $x$, denoted by $\partial g(x)$, is called the subdifferential of $g$ at $x$.

Definition 4 (L-smoothness). We say that a differentiable function $g$ is L-smooth if $\nabla g$ is L-Lipschitz continuous, that is, there exists $L \ge 0$ such that $\|\nabla g(x) - \nabla g(x')\| \le L\|x - x'\|$ for all $x, x' \in \mathbb{R}^d$.

We recall an important property of L-smooth functions, called the descent lemma; see e.g. [23, Lem. 1.2.3, p. 25].

Lemma 2.1 (Descent/Ascent lemma).
For an L-smooth function $g : \mathbb{R}^d \to \mathbb{R}$, we have
$$|g(x') - g(x) - \langle \nabla g(x), x' - x \rangle| \le \frac{L}{2}\|x' - x\|^2 \quad \forall x, x' \in \mathbb{R}^d.$$

We now define the proximal operator, which is at the heart of GD-RGA and PD-RGA.

Definition 5 (Proximal operator). Let $g : \mathbb{R}^d \to \mathbb{R} \cup \{+\infty\}$ be ρ-weakly convex, proper and lower semicontinuous (l.s.c.), and let $\tau > 0$. For all $x \in \mathbb{R}^d$, the proximal operator of $g$ at $x$ with step-size $\tau$ is defined as
$$\operatorname{prox}_{\tau g}(x) \in \operatorname*{argmin}_{z \in \mathbb{R}^d}\ g(z) + \frac{1}{2\tau}\|z - x\|^2. \tag{2.1}$$
The set $\operatorname{prox}_{\tau g}(x)$ is nonempty and single-valued when $\tau\rho < 1$ [27, Def. 2.2]. Convex functions are 0-weakly convex, so their proximal operator is always single-valued.

2.2 Ingredients for the convergence analysis

In this subsection, we present important properties of the mapping $\varphi$. To this end, we make the following assumptions.

Assumption 1.
(i) There exists $L_{yx} > 0$ such that for all $y \in \mathbb{R}^n$ and all $x_1, x_2 \in \mathbb{R}^d$, $\|\nabla_y \Phi(x_1, y) - \nabla_y \Phi(x_2, y)\| \le L_{yx}\|x_1 - x_2\|$.
(ii) For all $x \in \mathbb{R}^d$, $\Phi(x, \cdot)$ is concave. Furthermore, there exists $\mu > 0$ such that either $\Phi(x, \cdot)$ is µ-strongly concave for all $x \in \mathbb{R}^d$, or $-h$ is µ-strongly concave.
(iii) The regularizer $h$ is proper, l.s.c., and convex.

Thanks to Assumption 1, we can establish the well-posedness of $\varphi$ using [6, Lem. 1, p. 146]:

Lemma 2.2 (Lipschitz continuity of the solution mapping). Under Assumption 1, the solution map
$$\mathbb{R}^d \ni x \mapsto y^*(x) = \operatorname*{argmax}_{y \in \mathbb{R}^n}\ \Phi(x, y) - h(y) \in \mathbb{R}^n$$
is single-valued and $L_{yx}/\mu$-Lipschitz.

Note that, by definition of $y^*$, for all $x \in \mathbb{R}^d$ we have $\varphi(x) = \Phi(x, y^*(x)) - h(y^*(x)) = \max_{y \in \mathbb{R}^n}\{\Phi(x, y) - h(y)\}$. We now establish that the mapping $\varphi$ is continuously differentiable ($C^1$). To this end, we make the next assumption.

Assumption 2.
(i) There exist $L_{xx}, L_{xy} > 0$ such that for all $x, x_1, x_2 \in \mathbb{R}^d$ and $y, y_1, y_2 \in \mathbb{R}^n$:
  (a) $\|\nabla_x \Phi(x_1, y) - \nabla_x \Phi(x_2, y)\| \le L_{xx}\|x_1 - x_2\|$,
  (b) $\|\nabla_x \Phi(x, y_1) - \nabla_x \Phi(x, y_2)\| \le L_{xy}\|y_1 - y_2\|$.
(ii) For all $y \in \mathbb{R}^n$, $\Phi(\cdot, y)$ is ρ-weakly convex.
(iii) The function $\varphi$ defined in (1.2) is lower bounded, i.e., $\inf_{x \in \mathbb{R}^d} \varphi(x) > -\infty$.

Proposition 2.3 (Smoothness of φ). Under Assumptions 1 and 2, $\varphi$ is ρ-weakly convex and of class $C^1$, with gradient $\nabla\varphi(x) = \nabla_x \Phi(x, y^*(x))$. Furthermore, its gradient is $L_\varphi$-Lipschitz continuous with $L_\varphi := L_{xx} + L_{xy}L_{yx}/\mu$.

Proof. The regularity part ($C^1$) of this result follows from [6, Prop. 1, p. 146], while the Lipschitz continuity of $\nabla\varphi$ is given by [6, Lem. 2, p. 147]. The ρ-weak convexity follows directly from Assumption 2-(ii): the function $\varphi + \frac{\rho}{2}\|\cdot\|^2 = \sup_{y}\{\Phi(\cdot, y) + \frac{\rho}{2}\|\cdot\|^2 - h(y)\}$ is a pointwise supremum of convex functions, hence convex.

To end this section, let us introduce a sufficient condition for a sequence $(w_k)_{k \ge 0}$ to reach an ε-stationary point (Definition 1) of the mapping $\varphi$ defined in (1.2).

Proposition 2.4. Let Assumption 2-(iii) hold true and let $(w_k)_{k \ge 0} \subset \mathbb{R}^d$. Assume that there exist $C_1, C_2 \in \mathbb{R}$, independent of $N$, such that for every $N \ge 1$
$$\varphi(w_N) \le \varphi(w_0) - C_1 \sum_{k=0}^{N-1} \|\nabla\varphi(w_k)\|^2 + C_2. \tag{2.2}$$
If $C_1, C_2 > 0$, then for any $\varepsilon > 0$ there exists $k = \mathcal{O}(\varepsilon^{-2})$ such that $\|\nabla\varphi(w_k)\| < \varepsilon$.

Proof. By Assumption 2-(iii), we have $\varphi(w_0) - \varphi(w_N) \le \varphi(w_0) - \inf_w \varphi(w) < +\infty$. Hence there exists $C_3 > 0$ such that $\varphi(w_0) - \inf_w \varphi(w) < C_3$. Using this fact and (2.2), we deduce that
$$\min_{0 \le k \le N-1} \|\nabla\varphi(w_k)\|^2 \le \frac{1}{N}\sum_{k=0}^{N-1}\|\nabla\varphi(w_k)\|^2 < \frac{C}{N}, \quad \text{where } C = (C_3 + C_2)/C_1 > 0.$$
Thus, for any $N$ there exists $0 \le k < N$ such that $\|\nabla\varphi(w_k)\| < \sqrt{C/N}$. Given $\varepsilon > 0$, we choose $N = \lceil C/\varepsilon^2 \rceil$. Then $k < N = \mathcal{O}(\varepsilon^{-2})$ and $\|\nabla\varphi(w_k)\| < \varepsilon$.
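The gradient formula and the Lipschitz constant of Proposition 2.3 can be sanity-checked numerically. The sketch below uses an assumed scalar example, $\Phi(x, y) = -\frac12 x^2 + 2xy - y^2$ with $h \equiv 0$. Its constants are ρ = 1, µ = 2, $L_{xx} = 1$ and $L_{xy} = L_{yx} = 2$, so $L_\varphi = 3$, and $\varphi(x) = \frac12 x^2$ is bounded below. The sketch proceeds in three steps:
1. approximate $y^*(x)$ by gradient ascent;
2. compare $\nabla_x\Phi(x, y^*(x))$ with a central finite difference of $\varphi$;
3. check that the observed Lipschitz constant of $\nabla\varphi$ (here 1) does not exceed $L_\varphi$.

This illustrates the statement on one instance only.

```python
import numpy as np

# Assumed scalar example: Phi(x, y) = -0.5*x**2 + 2*x*y - y**2 and h = 0.
# Constants: rho = 1, mu = 2, L_xx = 1, L_xy = L_yx = 2, hence L_phi = 1 + 2*2/2 = 3.


def coupling(x, y):
    return -0.5 * x**2 + 2.0 * x * y - y**2


def grad_x_coupling(x, y):
    return -x + 2.0 * y


def grad_y_coupling(x, y):
    return 2.0 * x - 2.0 * y


def y_star(x, step=0.25, n_iter=200):
    """Approximate argmax_y Phi(x, y) by gradient ascent (Phi(x, .) is 2-strongly concave)."""
    y = 0.0
    for _ in range(n_iter):
        y = y + step * grad_y_coupling(x, y)
    return y


def phi(x):
    return coupling(x, y_star(x))


def grad_phi(x):
    """Gradient formula of Proposition 2.3: grad phi(x) = grad_x Phi(x, y*(x))."""
    return grad_x_coupling(x, y_star(x))


fd_step = 1e-5
for x in (-1.3, 0.2, 2.0):
    finite_diff = (phi(x + fd_step) - phi(x - fd_step)) / (2.0 * fd_step)
    print(x, finite_diff, grad_phi(x))

L_phi = 1.0 + 2.0 * 2.0 / 2.0
grid = np.linspace(-3.0, 3.0, 601)
grads = np.array([grad_phi(x) for x in grid])
observed = np.max(np.abs(np.diff(grads)) / np.diff(grid))
print(f"observed Lipschitz constant {observed:.3f} <= L_phi = {L_phi}")
```

The gap between the observed constant and $L_\varphi$ shows that $L_\varphi$ is an upper bound that need not be tight.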
3 Convergence analysis for GD-RGA

We recall that GD-RGA is defined for all $k \ge 0$ by
$$x_{k+1} = x_k - \eta_x \nabla_x \Phi(x_k, y_k), \tag{3.1}$$
$$y_{k+1} = \operatorname{prox}_{\eta_y h}\big(y_k + \eta_y \nabla_y \Phi(x_{k+1}, y_k)\big). \tag{3.2}$$
In this section, we prove the convergence of GD-RGA towards an ε-stationary point (Definition 1) of the mapping $\varphi$ defined in (1.2). To this end, we make the following assumption.

Assumption 3.
(i) There exists $L_{yy} > 0$ such that for all $x \in \mathbb{R}^d$ and all $y_1, y_2 \in \mathbb{R}^n$, $\|\nabla_y \Phi(x, y_1) - \nabla_y \Phi(x, y_2)\| \le L_{yy}\|y_1 - y_2\|$.
(ii) The condition number satisfies $\kappa_y := L_{yy}/\mu \ge 1$.

Remark 3.1. When the strong concavity originates from the coupling $\Phi$, we have $\kappa_y \ge 1$, since a µ-strongly concave function with $L_{yy}$-Lipschitz gradient satisfies $\mu \le L_{yy}$. In contrast, when it originates from the regularizer $-h$, this is no longer necessarily true. However, it is always possible to choose $L_{yy}$ sufficiently large to ensure that $\kappa_y \ge 1$.

Let us now state the main result of this section.

Theorem 3.2. Let Assumptions 1, 2, and 3 hold true. For any initial point $(x_0, y_0) \in \mathbb{R}^d \times \mathbb{R}^n$, consider the sequence $(x_k, y_k)_{k \ge 0}$ generated by GD-RGA with step-sizes
$$0 < \eta_y \le \frac{1}{L_{yy}} \quad \text{and} \quad 0 < \eta_x < \frac{\eta_y L_{yy}\,\mu}{2\kappa_y L_{xy} L_{yx}}. \tag{3.3}$$
Then, for every $\varepsilon > 0$, there exists $k = \mathcal{O}(\varepsilon^{-2})$ such that $\|\nabla\varphi(x_k)\| < \varepsilon$.

From now on and throughout this work, the step-size $\eta_y$ is parameterized as $\eta_y = \tau/L_{yy}$ for some $\tau \in (0, 1]$. Consequently, the sequence $(x_k, y_k)_{k \ge 1}$ generated by GD-RGA depends on τ. However, without loss of generality, we simply write $(x_k, y_k)_{k \ge 1}$ to avoid explicitly including τ in the notation.

To prove Theorem 3.2, we first provide key results that form the basis of our analysis of GD-RGA. We recall that $\varphi(x) = \max_{y \in \mathbb{R}^n}(\Phi(x, y) - h(y))$ and that, under Assumption 1, we write $y^*(x) = \operatorname{argmax}_{y \in \mathbb{R}^n}(\Phi(x, y) - h(y))$.

Lemma 3.3. Let Assumptions 1 and 2 hold true and let $(x_k, y_k)_{k \ge 1}$ be a sequence generated by GD-RGA.
Then, for any $\eta_x > 0$ and $N \ge 1$, we have
$$\varphi(x_N) \le \varphi(x_0) - \frac{\eta_x}{2}(1 - 2L_\varphi\eta_x)\sum_{k=0}^{N-1}\|\nabla\varphi(x_k)\|^2 + \frac{\eta_x}{2}(1 + 2L_\varphi\eta_x)L_{xy}^2\sum_{k=0}^{N-1}\|y^*(x_k) - y_k\|^2. \tag{3.4}$$
The proof of this result is presented in Appendix A.1. One of the main difficulties in the convergence analysis of both algorithms is to control the gap $\|y^*(x_k) - y_k\|^2$ (for all $k \in \mathbb{N}$). This is the gap between the theoretical maximizer $y^*(x_k)$ of the inner problem in (1.1) at iteration $k$ and the current iterate¹ $y_k$ given by (3.2).

Notation. To ease the computations, throughout the paper we denote $y^*(x_k)$ by $y^*_k$ and $\|y^*_k - y_k\|^2$ by $\delta_k$, and we set $\beta = \mu/(L_{xy}L_{yx})$.

3.1 Control of δ_k

The aim of this section is to derive a bound on $\delta_k$. The key difference between our convergence analysis and those in [17, 3] lies in the construction of this control. Our approach yields a less restrictive bound and allows us to explicitly characterize an admissible range for the step-size $\eta_x$, a step not carried out in the aforementioned works.

Lemma 3.4 (General bound for δ_k). Let Assumptions 1 and 3 hold true and let $(y_k)_{k \ge 1}$ be a sequence generated by (3.2). Then, for every $k \in \mathbb{N}_0$, we have
$$\delta_{k+1} \le \Big(1 - \frac{\tau}{2\kappa_y}\Big)\delta_k + \frac{\kappa_y}{\tau}\|y^*_{k+1} - y^*_k\|^2 \quad \forall\, 0 < \tau \le 1. \tag{3.5}$$
We now refine this bound by using the update rule of $x_k$ in GD-RGA.

Lemma 3.5 (Bound of δ_k for GD-RGA). Let Assumptions 1, 2 and 3 hold true and let $(x_k, y_k)_{k \ge 1}$ be a sequence generated by GD-RGA. Then, for every $k \in \mathbb{N}_0$, we have
$$\delta_k \le \gamma^k\delta_0 + \Big(\gamma - 1 + \frac{\tau}{2\kappa_y}\Big)\frac{1}{L_{xy}^2}\sum_{j=0}^{k-1}\gamma^{k-1-j}\|\nabla\varphi(x_j)\|^2 \quad \forall\, 0 < \tau \le 1,$$
where $\gamma = 1 - \frac{\tau}{2\kappa_y} + \frac{2\kappa_y\eta_x^2}{\tau\beta^2}$. Furthermore, if $\eta_x < \tau\beta/(2\kappa_y)$, then $|\gamma| < 1$.

Proof. We exploit the $L_{yx}/\mu$-Lipschitz continuity of $y^*$ with respect to $x$ (see Lemma 2.2) and the x-update (3.1) of GD-RGA. With these, relation (3.5) becomes
$$\delta_{k+1} \le \Big(1 - \frac{\tau}{2\kappa_y}\Big)\delta_k + \frac{\kappa_y L_{yx}^2\eta_x^2}{\tau\mu^2}\|\nabla_x\Phi(x_k, y_k)\|^2.$$
We write $\|\nabla_x\Phi(x_k, y_k)\|^2 = \|\nabla_x\Phi(x_k, y_k) - \nabla\varphi(x_k) + \nabla\varphi(x_k)\|^2$ and apply the inequality $\|a + b\|^2 \le 2\|a\|^2 + 2\|b\|^2$. This gives
$$\delta_{k+1} \le \Big(1 - \frac{\tau}{2\kappa_y}\Big)\delta_k + \frac{2\kappa_y L_{yx}^2\eta_x^2}{\tau\mu^2}\|\nabla\varphi(x_k) - \nabla_x\Phi(x_k, y_k)\|^2 + \frac{2\kappa_y L_{yx}^2\eta_x^2}{\tau\mu^2}\|\nabla\varphi(x_k)\|^2. \tag{3.6}$$
By Proposition 2.3 and Assumption 2 (i)-(b), $\|\nabla\varphi(x_k) - \nabla_x\Phi(x_k, y_k)\|^2 \le L_{xy}^2\|y^*_k - y_k\|^2 = L_{xy}^2\delta_k$, and (3.6) becomes
$$\delta_{k+1} \le \Big(1 - \frac{\tau}{2\kappa_y} + \frac{2\kappa_y L_{yx}^2 L_{xy}^2\eta_x^2}{\tau\mu^2}\Big)\delta_k + \frac{2\kappa_y L_{yx}^2\eta_x^2}{\tau\mu^2}\|\nabla\varphi(x_k)\|^2.$$
Using $\beta = \mu/(L_{xy}L_{yx})$, the last inequality reads
$$\delta_{k+1} \le \Big(1 - \frac{\tau}{2\kappa_y} + \frac{2\kappa_y\eta_x^2}{\tau\beta^2}\Big)\delta_k + \frac{2\kappa_y\eta_x^2}{\tau\beta^2 L_{xy}^2}\|\nabla\varphi(x_k)\|^2. \tag{3.7}$$
Denote $\gamma = 1 - \frac{\tau}{2\kappa_y} + \frac{2\kappa_y\eta_x^2}{\tau\beta^2}$ and note that $\frac{2\kappa_y\eta_x^2}{\tau\beta^2} = \gamma - 1 + \frac{\tau}{2\kappa_y}$. Induction on (3.7) then yields the result. It remains to show that $|\gamma| < 1$. The hypothesis $\eta_x < \tau\beta/(2\kappa_y)$ gives $\frac{2\kappa_y\eta_x^2}{\tau\beta^2} < \frac{\tau}{2\kappa_y}$, hence $\gamma < 1$. By construction, $\gamma \ge 1 - \frac{\tau}{2\kappa_y} \ge \frac12$, since $\tau \in (0, 1]$ and $\kappa_y \ge 1$.

¹ Since the proximal step used to update the variable $y$ is the same in both GD-RGA and PD-RGA, Lemma 3.4 will be reused in the analysis of PD-RGA.

3.2 Proof of Theorem 3.2

Thanks to Lemma 3.5, we can now bound (3.4), which constitutes the core of the proof of Theorem 3.2.

Proof of Theorem 3.2. Plugging Lemma 3.5 into Lemma 3.3, we get
$$\begin{aligned}\varphi(x_N) \le\ & \varphi(x_0) - \frac{\eta_x}{2}(1 - 2L_\varphi\eta_x)\sum_{k=0}^{N-1}\|\nabla\varphi(x_k)\|^2 + \frac{\eta_x}{2}(1 + 2L_\varphi\eta_x)L_{xy}^2\delta_0\sum_{k=0}^{N-1}\gamma^k \\ & + \frac{\eta_x}{2}(1 + 2L_\varphi\eta_x)\Big(\gamma - 1 + \frac{\tau}{2\kappa_y}\Big)\sum_{k=0}^{N-1}\sum_{j=0}^{k-1}\gamma^{k-1-j}\|\nabla\varphi(x_j)\|^2.\end{aligned} \tag{3.8}$$
Since $\eta_x < \tau\beta/(2\kappa_y)$, we have $0 < \gamma < 1$. Hence $\sum_{k=0}^{N-1}\gamma^k < \sum_{k=0}^{+\infty}\gamma^k = \frac{1}{1 - \gamma}$ and
$$\sum_{k=0}^{N-1}\sum_{j=0}^{k-1}\gamma^{k-1-j}\|\nabla\varphi(x_j)\|^2 = \sum_{j=0}^{N-2}\Big(\sum_{m=0}^{N-2-j}\gamma^m\Big)\|\nabla\varphi(x_j)\|^2 \le \frac{1}{1 - \gamma}\sum_{k=0}^{N-1}\|\nabla\varphi(x_k)\|^2,$$
where we exchange the order of summation and reindex via $m = k - 1 - j$.
Then (3.8) becomes
$$\varphi(x_N) \le \varphi(x_0) - \frac{\eta_x(1 - 2L_\varphi\eta_x)}{2}\sum_{k=0}^{N-1}\|\nabla\varphi(x_k)\|^2 + \frac{\eta_x(1 + 2L_\varphi\eta_x)L_{xy}^2\delta_0}{2(1 - \gamma)} + \frac{\eta_x(1 + 2L_\varphi\eta_x)}{2}\Big(\gamma - 1 + \frac{\tau}{2\kappa_y}\Big)\frac{1}{1 - \gamma}\sum_{k=0}^{N-1}\|\nabla\varphi(x_k)\|^2. \tag{3.9}$$
Noting that $\big(\gamma - 1 + \frac{\tau}{2\kappa_y}\big)\frac{1}{1 - \gamma} = -1 + \frac{\tau}{2\kappa_y(1 - \gamma)}$ and rearranging the terms in (3.9), we get
$$\varphi(x_N) \le \varphi(x_0) - \frac{\eta_x}{2}\Big(2 + \frac{(1 + 2L_\varphi\eta_x)\tau}{2\kappa_y(1 - \gamma)}\Big)\sum_{k=0}^{N-1}\|\nabla\varphi(x_k)\|^2 + \frac{\eta_x(1 + 2L_\varphi\eta_x)L_{xy}^2\delta_0}{2(1 - \gamma)}. \tag{3.10}$$
Recalling that $1 - \gamma > 0$, the final result is deduced by applying Proposition 2.4.

3.3 Step-size discussion

Here we compare the step-size constraints of Theorem 3.2 with those proposed in the related literature.

Under the jointly Lipschitz framework. We recall that, if the coupling $\Phi$ has a jointly Lipschitz continuous gradient, then there exists $L_\Phi > 0$ such that $\|\nabla\Phi(x, y) - \nabla\Phi(x', y')\| \le L_\Phi\|(x, y) - (x', y')\|$ for all $x, x' \in \mathbb{R}^d$ and $y, y' \in \mathbb{R}^n$. This is the hypothesis made in [17, 3]. This property allows us to take $L_{yy} = L_{xy} = L_{yx} = L_\Phi$, so that our constraint (3.3) on $\eta_x$ simplifies to $0 < \eta_x < \eta_y/(2\kappa_y^2)$. Table 1 summarizes the admissible step-sizes under the jointly Lipschitz hypothesis. We observe that $16(\kappa_y + 1)^2 > 3(\kappa_y + 1)^2 > 2\kappa_y^2$, which shows that Theorem 3.2 allows larger step-sizes than [17, 3].

Table 1: Admissible step-sizes under the jointly Lipschitz setting for GD-RGA.

| | [17] | [3] | Ours |
|---|---|---|---|
| $\eta_y \le$ | $1/L_\Phi$ | $1/L_\Phi$ | $1/L_\Phi$ |
| $\eta_x \le$ | $\eta_y/(16(\kappa_y + 1)^2)$ | $\eta_y/(3(\kappa_y + 1)^2)$ | $\eta_y/(2\kappa_y^2)$ |

Under the block-wise Lipschitz framework. We share this hypothesis with [6], and Table 2 presents the respective step-sizes. To carry out this comparison, we assume² that $L_{xy} = L_{yx}$. In this setting, recall that $L_\varphi = L_{xx} + L_{xy}L_{yx}/\mu$. For $\eta_y = 1/L_{yy}$, both bounds on $\eta_x$ in Table 2 have numerator µ. The denominator of the bound of [6] exceeds ours by $\mu L_{xy}^2 + \mu L_{xx} + L_{xy}^2 + 4\kappa_y^2 L_{yx}^2 > 0$. We deduce that our algorithm allows for larger step-sizes than in [6].
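Since Table 1 reduces to closed-form expressions, the comparison can be reproduced in a few lines. The function names and the numerical values of $L_\Phi$ and µ in this sketch are illustrative assumptions. The sketch evaluates the $\eta_x$ bound of (3.3) with $L_{yy} = L_{xy} = L_{yx} = L_\Phi$ and checks that it coincides with $\eta_y/(2\kappa_y^2)$. It then prints the three bounds of Table 1 for $\eta_y = 1/L_\Phi$ and several condition numbers.

```python
def eta_x_bound_theorem_3_2(eta_y, L_yy, L_xy, L_yx, mu):
    """Right-hand side of the eta_x constraint (3.3) of Theorem 3.2."""
    kappa_y = L_yy / mu
    return eta_y * L_yy * mu / (2.0 * kappa_y * L_xy * L_yx)


def table1_bounds(kappa_y, eta_y):
    """eta_x bounds of Table 1 (jointly Lipschitz gradient, L_yy = L_xy = L_yx = L_Phi)."""
    return {
        "[17]": eta_y / (16.0 * (kappa_y + 1.0) ** 2),
        "[3]": eta_y / (3.0 * (kappa_y + 1.0) ** 2),
        "ours": eta_y / (2.0 * kappa_y**2),
    }


L_Phi = 4.0
for mu in (4.0, 1.0, 0.1):  # kappa_y = L_Phi / mu = 1, 4, 40
    kappa_y, eta_y = L_Phi / mu, 1.0 / L_Phi
    bounds = table1_bounds(kappa_y, eta_y)
    general = eta_x_bound_theorem_3_2(eta_y, L_Phi, L_Phi, L_Phi, mu)
    print(f"kappa_y={kappa_y:g}", {k: f"{v:.2e}" for k, v in bounds.items()}, f"(3.3): {general:.2e}")
```

The ratio between our bound and that of [3] is $3(\kappa_y + 1)^2/(2\kappa_y^2)$. It equals 6 for $\kappa_y = 1$ and tends to 3/2 as $\kappa_y$ grows.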
When $L_{xy} \ne L_{yx}$, however, it is not possible to determine in all cases which bound on $\eta_x$ is tighter without additional assumptions.

Table 2: Admissible step-sizes under the block-wise Lipschitz setting for GD-RGA.

| | [6] | Ours |
|---|---|---|
| $\eta_y \le$ | $1/L_{yy}$ | $1/L_{yy}$ |
| $\eta_x \le$ | $\mu/\big(\mu(L_{xy}^2 + L_\varphi) + 2\kappa_y(2\kappa_y + 1)L_{yx}^2\big)$ | $\eta_y L_{yy}\mu/(2\kappa_y L_{xy}L_{yx})$ |

² If $\Phi$ is of class $C^2$, then by Clairaut's theorem its mixed second derivatives are transposes of each other, so the best constants $L_{xy}$ and $L_{yx}$ coincide and this assumption is satisfied.

4 Convergence analysis for PD-RGA

We recall that PD-RGA is defined for all $k \ge 0$ by
$$x_{k+1} = \operatorname{prox}_{\eta_x\Phi(\cdot, y_k)}(x_k), \tag{4.1}$$
$$y_{k+1} = \operatorname{prox}_{\eta_y h}\big(y_k + \eta_y\nabla_y\Phi(x_{k+1}, y_k)\big). \tag{4.2}$$
Note that the y-update in PD-RGA is the same as in GD-RGA. The two algorithms differ in the x-update. GD-RGA performs an explicit gradient step (3.1), whereas PD-RGA performs a proximal step (4.1) on the ρ-weakly convex coupling $\Phi$. Hence, to have a well-posed proximal step (see Definition 5), we make the following assumption.

Assumption 4. The step-size $\eta_x$ satisfies $\eta_x\rho < 1$. Hence, the proximal step (4.1) is single-valued.

Let us now state the main result of this section.

Theorem 4.1. Let Assumptions 1, 2, 3, and 4 hold true. For any initial point $(x_0, y_0) \in \mathbb{R}^d \times \mathbb{R}^n$, consider the sequence $(x_k, y_k)_{k \ge 0}$ generated by PD-RGA with step-sizes
$$0 < \eta_y \le \frac{1}{L_{yy}} \quad \text{and} \quad 0 < \eta_x < \min\left\{\frac{\eta_y L_{yy}\,\mu}{\sqrt{2}\,L_{xy}L_{yx}(\sqrt{2}\kappa_y + \eta_y L_{yy})},\ \frac{1}{\rho}\right\}.$$
Then, for every $\varepsilon > 0$, there exists $k = \mathcal{O}(\varepsilon^{-2})$ such that $\|\nabla\varphi(x_k)\| < \varepsilon$.

Recall that we parameterize the step-size $\eta_y$ as $\eta_y = \tau/L_{yy}$ for some $\tau \in (0, 1]$. For simplicity, we write $(x_k, y_k)_{k \ge 1}$ for the sequences generated by PD-RGA, without explicitly including τ in the notation. To prove Theorem 4.1, we first provide key results that form the basis of the convergence analysis.

Notation.
We recall that the mapping $\varphi$ is defined as $\varphi(x) = \max_{y \in \mathbb{R}^n}(\Phi(x, y) - h(y))$ and that, under strong concavity (Assumption 1), $y^*(x) = \operatorname{argmax}_{y \in \mathbb{R}^n}(\Phi(x, y) - h(y))$. We also recall the notation $y^*_k = y^*(x_k)$, $\delta_k = \|y^*(x_k) - y_k\|^2$ and $\beta = \mu/(L_{xy}L_{yx})$.

Lemma 4.2. Let Assumptions 1 and 2 hold true and let $(x_k, y_k)_{k \ge 1}$ be a sequence generated by PD-RGA. Then, for any $\eta_x > 0$, we have
$$\varphi(x_N) \le \varphi(x_0) - \frac{\eta_x}{2}(1 - 2\rho\eta_x)\sum_{k=0}^{N-1}\|\nabla\varphi(x_{k+1})\|^2 + \frac{\eta_x}{2}(1 + 2\rho\eta_x)L_{xy}^2\sum_{k=0}^{N-1}\|y^*(x_{k+1}) - y_k\|^2. \tag{4.3}$$
The proof of this result is presented in Appendix B. Note that the right-hand side of (4.3) contains $\|y^*(x_{k+1}) - y_k\|^2$, whereas for GD-RGA the corresponding term in (3.4) is $\delta_k = \|y^*(x_k) - y_k\|^2$. This subtle distinction complicates the analysis. The quantity $\delta_k$ explicitly measures the gap between the current iterate $y_k$ and the inner problem's maximizer $y^*(x_k)$ at $x_k$, but the term in (4.3) admits no such interpretation. This discrepancy arises because the proximal step can be seen as an "implicit" step, which introduces an index shift and alters the relationship between the terms.

4.1 Control of $\|y^*_{k+1} - y_k\|^2$

The following result shows that the term $\|y^*_{k+1} - y_k\|^2$ can be controlled under an additional constraint on the step-size $\eta_x$.

Lemma 4.3. Let Assumptions 1, 2, and 4 hold true and let $(x_k, y_k)_{k \ge 1}$ be a sequence generated by PD-RGA. Then, for all $\theta > 0$ and $\eta_x^2 < \beta^2/(2(1 + 1/\theta))$, we have
$$\|y^*_{k+1} - y_k\|^2 \le \frac{(1 + \theta)\beta^2}{\beta^2 - 2\eta_x^2(1 + 1/\theta)}\delta_k + \frac{2\eta_x^2(1 + 1/\theta)}{L_{xy}^2(\beta^2 - 2\eta_x^2(1 + 1/\theta))}\|\nabla\varphi(x_{k+1})\|^2.$$

Proof. Applying the triangle and Young inequalities to $\|y^*_{k+1} - y_k\|^2$, we get for any $\theta > 0$
$$\|y^*_{k+1} - y_k\|^2 \le \|y^*_{k+1} - y^*_k\|^2 + 2\|y^*_{k+1} - y^*_k\|\,\|y^*_k - y_k\| + \|y^*_k - y_k\|^2 \le \Big(1 + \frac{1}{\theta}\Big)\|y^*_{k+1} - y^*_k\|^2 + (1 + \theta)\|y^*_k - y_k\|^2. \tag{4.4}$$
We now use the $L_{yx}/\mu$-Lipschitz continuity of $y^*$ w.r.t. $x$ (Lemma 2.2) and the first-order optimality condition of the proximal step (4.1), namely $x_{k+1} - x_k = -\eta_x\nabla_x\Phi(x_{k+1}, y_k)$. With these, relation (4.4) becomes
$$\|y^*_{k+1} - y_k\|^2 \le \Big(1 + \frac{1}{\theta}\Big)\frac{L_{yx}^2\eta_x^2}{\mu^2}\|\nabla_x\Phi(x_{k+1}, y_k)\|^2 + (1 + \theta)\delta_k. \tag{4.5}$$
Applying the inequality $\|a + b\|^2 \le 2\|a\|^2 + 2\|b\|^2$, Proposition 2.3 and Assumption 2 (i)-(b), we get
$$\|\nabla_x\Phi(x_{k+1}, y_k)\|^2 \le 2\|\nabla\varphi(x_{k+1}) - \nabla_x\Phi(x_{k+1}, y_k)\|^2 + 2\|\nabla\varphi(x_{k+1})\|^2 \le 2L_{xy}^2\|y^*_{k+1} - y_k\|^2 + 2\|\nabla\varphi(x_{k+1})\|^2.$$
Plugging this last inequality into (4.5) and reorganizing the terms, we get
$$\Big(1 - \frac{2L_{yx}^2L_{xy}^2\eta_x^2}{\mu^2}\Big(1 + \frac{1}{\theta}\Big)\Big)\|y^*_{k+1} - y_k\|^2 \le \frac{2L_{yx}^2\eta_x^2}{\mu^2}\Big(1 + \frac{1}{\theta}\Big)\|\nabla\varphi(x_{k+1})\|^2 + (1 + \theta)\delta_k.$$
Using $\beta = \mu/(L_{xy}L_{yx})$ yields
$$\Big(1 - \frac{2\eta_x^2}{\beta^2}\Big(1 + \frac{1}{\theta}\Big)\Big)\|y^*_{k+1} - y_k\|^2 \le \frac{2\eta_x^2}{\beta^2L_{xy}^2}\Big(1 + \frac{1}{\theta}\Big)\|\nabla\varphi(x_{k+1})\|^2 + (1 + \theta)\delta_k.$$
We finally take $\eta_x^2 < \beta^2/(2(1 + 1/\theta))$. This implies $1 - \frac{2\eta_x^2}{\beta^2}(1 + \frac{1}{\theta}) > 0$ in the above inequality, and dividing by this factor recovers the statement of the lemma.

Recall that, since the variable $y$ is updated in the same way in GD-RGA and PD-RGA, we can apply Lemma 3.4 to PD-RGA and obtain
$$\delta_{k+1} \le \Big(1 - \frac{\tau}{2\kappa_y}\Big)\delta_k + \frac{\kappa_y}{\tau}\|y^*_{k+1} - y^*_k\|^2 \quad \forall\, 0 < \tau \le 1. \tag{4.6}$$
We now refine (4.6) specifically for PD-RGA.

Lemma 4.4 (Bound of δ_k for PD-RGA). Let Assumptions 1, 2, 3, and 4 hold true and let $(x_k, y_k)_{k \ge 1}$ be the sequence generated by PD-RGA. Then, for every $k \in \mathbb{N}_0$, we have
$$\delta_k \le \gamma^k\delta_0 + \Big(\gamma - 1 + \frac{\tau}{2\kappa_y}\Big)\frac{1}{(1 + \theta)L_{xy}^2}\sum_{j=0}^{k-1}\gamma^{k-1-j}\|\nabla\varphi(x_{j+1})\|^2 \quad \forall\, 0 < \tau \le 1,$$
where $\gamma = 1 - \frac{\tau}{2\kappa_y} + \frac{2\kappa_y\eta_x^2(1 + \theta)}{\tau(\beta^2 - 2\eta_x^2(1 + 1/\theta))}$. Furthermore, if $\eta_x < \tau\beta/\sqrt{4\kappa_y^2(1 + \theta) + 2\tau^2(1 + 1/\theta)}$, then $|\gamma| < 1$.

Proof.
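We insert into (4.6) the Lipschitz property of $y^*$ (Lemma 2.2) and the first-order optimality condition of the proximal operator (4.1), i.e., $x_{k+1} - x_k = -\eta_x\nabla_x\Phi(x_{k+1}, y_k)$. This gives
$$\delta_{k+1} \le \Big(1 - \frac{\tau}{2\kappa_y}\Big)\delta_k + \frac{\kappa_y L_{yx}^2\eta_x^2}{\tau\mu^2}\|\nabla_x\Phi(x_{k+1}, y_k)\|^2. \tag{4.7}$$
Now, by Young's inequality, we have
$$\|\nabla_x\Phi(x_{k+1}, y_k)\|^2 \le 2\|\nabla_x\Phi(x_{k+1}, y_k) - \nabla\varphi(x_{k+1})\|^2 + 2\|\nabla\varphi(x_{k+1})\|^2. \tag{4.8}$$
Plugging (4.8) into (4.7), we get
$$\delta_{k+1} \le \Big(1 - \frac{\tau}{2\kappa_y}\Big)\delta_k + \frac{2\kappa_y L_{yx}^2\eta_x^2}{\tau\mu^2}\|\nabla\varphi(x_{k+1})\|^2 + \frac{2\kappa_y L_{yx}^2\eta_x^2}{\tau\mu^2}\|\nabla_x\Phi(x_{k+1}, y_k) - \nabla\varphi(x_{k+1})\|^2.$$
By Proposition 2.3 and Assumption 2 (i)-(b), we deduce that $\|\nabla\varphi(x_{k+1}) - \nabla_x\Phi(x_{k+1}, y_k)\|^2 \le L_{xy}^2\|y^*_{k+1} - y_k\|^2$. Then, we obtain
$$\delta_{k+1} \le \Big(1 - \frac{\tau}{2\kappa_y}\Big)\delta_k + \frac{2\kappa_y L_{yx}^2\eta_x^2}{\tau\mu^2}\|\nabla\varphi(x_{k+1})\|^2 + \frac{2\kappa_y L_{yx}^2L_{xy}^2\eta_x^2}{\tau\mu^2}\|y^*_{k+1} - y_k\|^2.$$
Using $\beta = \mu/(L_{xy}L_{yx})$, the previous inequality becomes
$$\delta_{k+1} \le \Big(1 - \frac{\tau}{2\kappa_y}\Big)\delta_k + \frac{2\kappa_y\eta_x^2}{\tau\beta^2L_{xy}^2}\|\nabla\varphi(x_{k+1})\|^2 + \frac{2\kappa_y\eta_x^2}{\tau\beta^2}\|y^*_{k+1} - y_k\|^2. \tag{4.9}$$
Plugging Lemma 4.3 into (4.9), we deduce
$$\delta_{k+1} \le \Big(1 - \frac{\tau}{2\kappa_y} + \frac{2\kappa_y\eta_x^2(1 + \theta)}{\tau(\beta^2 - 2\eta_x^2(1 + 1/\theta))}\Big)\delta_k + \frac{2\kappa_y\eta_x^2}{\tau L_{xy}^2(\beta^2 - 2\eta_x^2(1 + 1/\theta))}\|\nabla\varphi(x_{k+1})\|^2. \tag{4.10}$$
Denoting $\gamma = 1 - \frac{\tau}{2\kappa_y} + \frac{2\kappa_y\eta_x^2(1 + \theta)}{\tau(\beta^2 - 2\eta_x^2(1 + 1/\theta))}$, induction on (4.10) yields
$$\delta_k \le \gamma^k\delta_0 + \Big(\gamma - 1 + \frac{\tau}{2\kappa_y}\Big)\frac{1}{(1 + \theta)L_{xy}^2}\sum_{j=0}^{k-1}\gamma^{k-1-j}\|\nabla\varphi(x_{j+1})\|^2.$$
We finally observe that $\gamma < 1$ for $\eta_x < \tau\beta/\sqrt{4\kappa_y^2(1 + \theta) + 2\tau^2(1 + 1/\theta)}$. Under this constraint, (4.11) below gives $\beta^2 - 2\eta_x^2(1 + 1/\theta) > 0$, so the last term of γ is positive. Hence, by construction, $\gamma \ge 1 - \frac{\tau}{2\kappa_y} \ge \frac12$, since $0 < \tau \le 1 \le \kappa_y$.

Note that the constraint on $\eta_x$ in Lemma 4.4 implies the one of Lemma 4.3, since
$$\eta_x^2 < \frac{\tau^2\beta^2}{2(2\kappa_y^2(1 + \theta) + \tau^2(1 + 1/\theta))} = \frac{\beta^2}{4\kappa_y^2(1 + \theta)/\tau^2 + 2(1 + 1/\theta)} < \frac{\beta^2}{2(1 + 1/\theta)}. \tag{4.11}$$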
To allow for the largest admissible values of $\eta_x$, we maximize the first bound in (4.11) over θ. Multiplying its numerator and denominator by θ, this bound equals $\frac{\tau^2\beta^2}{2}\cdot\frac{\theta}{2\kappa_y^2\theta^2 + (2\kappa_y^2 + \tau^2)\theta + \tau^2}$. Over $\theta > 0$, the mapping $\theta \mapsto \theta/(a\theta^2 + b\theta + c)$ is maximized at $\theta = \sqrt{c/a}$, which here gives $\theta = \tau/(\sqrt{2}\kappa_y)$. The maximal value is $1/(\sqrt{2}\kappa_y + \tau)^2$. With this value, we obtain $\eta_x < \tau\beta/(\sqrt{2}(\sqrt{2}\kappa_y + \tau))$.

Corollary 4.5. Under Assumptions 1–4, for $\eta_x < \tau\beta/(\sqrt{2}(\sqrt{2}\kappa_y + \tau))$ we have
$$\begin{aligned}\|y^*_{k+1} - y_k\|^2 \le\ & \frac{(\sqrt{2}\kappa_y + \tau)\beta^2\tau\,\delta_0}{\sqrt{2}\kappa_y(\beta^2\tau - 2(\sqrt{2}\kappa_y + \tau)\eta_x^2)}\gamma^k + \frac{2(\sqrt{2}\kappa_y + \tau)\eta_x^2}{L_{xy}^2(\beta^2\tau - 2(\sqrt{2}\kappa_y + \tau)\eta_x^2)}\|\nabla\varphi(x_{k+1})\|^2 \\ & + \Big(\gamma - 1 + \frac{\tau}{2\kappa_y}\Big)\frac{\beta^2\tau}{L_{xy}^2(\beta^2\tau - 2\eta_x^2(\sqrt{2}\kappa_y + \tau))}\sum_{j=0}^{k-1}\gamma^{k-1-j}\|\nabla\varphi(x_{j+1})\|^2,\end{aligned} \tag{4.12}$$
where $\gamma = 1 - \frac{\tau}{2\kappa_y} + \frac{\sqrt{2}(\sqrt{2}\kappa_y + \tau)\eta_x^2}{\beta^2\tau - 2(\sqrt{2}\kappa_y + \tau)\eta_x^2}$ with $|\gamma| < 1$.

The proof of this result simply consists in combining Lemma 4.4 with Lemma 4.3 for $\theta = \tau/(\sqrt{2}\kappa_y)$.

4.2 Proof of Theorem 4.1

Thanks to Corollary 4.5, we can now bound (4.3) and prove Theorem 4.1. Before doing so, combine the constraint on $\eta_x$ in Corollary 4.5 with the one given in Assumption 4. This gives $\eta_x < \min\{\tau\beta/(\sqrt{2}(\sqrt{2}\kappa_y + \tau)),\ 1/\rho\}$. This set of conditions on the step-size cannot be further reduced with our analysis. It is indeed possible to construct one-dimensional examples of the form $-ax^2 + bxy - cy^2$, $a, b, c > 0$, such that either the first or the second condition is the smallest one.

Proof of Theorem 4.1. The strategy consists in refining Lemma 4.2 in order to apply Proposition 2.4. Combining Corollary 4.5 with Lemma 4.2, we have
$$\begin{aligned}\varphi(x_N) \le\ & \varphi(x_0) - \frac{\eta_x}{2}(1 - 2\rho\eta_x)\sum_{k=0}^{N-1}\|\nabla\varphi(x_{k+1})\|^2 + \frac{\eta_x(1 + 2\rho\eta_x)(\sqrt{2}\kappa_y + \tau)\beta^2\tau L_{xy}^2\delta_0}{2\sqrt{2}\kappa_y(\beta^2\tau - 2(\sqrt{2}\kappa_y + \tau)\eta_x^2)}\sum_{k=0}^{N-1}\gamma^k \\ & + \frac{\eta_x(1 + 2\rho\eta_x)}{2}\Big(\gamma - 1 + \frac{\tau}{2\kappa_y}\Big)\frac{\beta^2\tau}{\beta^2\tau - 2(\sqrt{2}\kappa_y + \tau)\eta_x^2}\sum_{k=0}^{N-1}\sum_{j=0}^{k-1}\gamma^{k-1-j}\|\nabla\varphi(x_{j+1})\|^2 \\ & + \frac{\eta_x(1 + 2\rho\eta_x)}{2}\,\frac{2(\sqrt{2}\kappa_y + \tau)\eta_x^2}{\beta^2\tau - 2(\sqrt{2}\kappa_y + \tau)\eta_x^2}\sum_{k=0}^{N-1}\|\nabla\varphi(x_{k+1})\|^2.\end{aligned} \tag{4.13}$$
(4.13) Since | γ | < 1 , we hav e P N − 1 k =0 γ k < P + ∞ k =0 γ k = 1 1 − γ and N − 1 X k =0 k − 1 X j =0 γ k − 1 − j ∥∇ φ ( x j +1 ) ∥ 2 = N − 2 X j =0 N − 2 − j X m =0 γ m ! ∥∇ φ ( x j +1 ) ∥ 2 ≤ 1 1 − γ N − 1 X k =0 ∥∇ φ ( x k +1 ) ∥ 2 , where we exchange the order of summation and reinde x via m = k − 1 − j . Then ( 4.13 ) becomes φ ( x N ) ≤ φ ( x 0 ) − η x 2 (1 − 2 ρη x ) N − 1 X k =0 ∥∇ φ ( x k +1 ) ∥ 2 + η x 2 (1 + 2 ρη x )( √ 2 κ y + τ ) τ β 2 L 2 xy δ 0 √ 2 κ y ( τ β 2 − 2( √ 2 κ y + τ ) η 2 x )(1 − γ ) + η x 2 (1 + 2 ρη x ) β 2 τ − 2 η 2 x ( √ 2 κ y + τ ) γ − 1 + τ 2 κ y β 2 τ 1 − γ + 2( √ 2 κ y + τ ) η 2 x N − 1 X k =0 ∥∇ φ ( x k +1 ) ∥ 2 . (4.14) Since γ − 1 + τ 2 κ y 1 1 − γ = − 1 + τ 2 κ y (1 − γ ) , it follo ws that (1 + 2 ρη x ) β 2 τ − 2 η 2 x ( √ 2 κ y + τ ) γ − 1 + τ 2 κ y β 2 τ 1 − γ + 2( √ 2 κ y + τ ) η 2 x = = − (1 + 2 ρη x ) 1 + τ 2 β 2 2 κ y (1 − γ )( β 2 τ − 2( √ 2 κ y + τ ) η 2 x ) . (4.15) Combining relations ( 4.15 ) and ( 4.14 ) we get φ ( x N ) ≤ φ ( x 0 ) − η x 2 2 + τ 2 β 2 (1 + 2 ρη x ) 2 κ y (1 − γ )( β 2 τ − 2 η 2 x ( √ 2 κ y + τ )) N − 1 X k =0 ∥∇ φ ( x k +1 ) ∥ 2 + η x 2 (1 + 2 ρη x )( √ 2 κ y + τ ) τ β 2 L 2 xy δ 0 √ 2 κ y ( τ β 2 − 2( √ 2 κ y + τ ) η 2 x )(1 − γ ) . (4.16) 13 Since | γ | < 1 and β 2 τ − 2 η 2 x ( √ 2 κ y + τ ) > 0 , we hav e that 1 (1 − γ )( β 2 τ − 2 η 2 x ( √ 2 κ y + τ )) > 0 and 1 ( τ β 2 − 2( √ 2 κ y + τ ) η 2 x )(1 − γ ) > 0 . Therefore, the result is deduced by applying Proposition 2.4 . 5 Experiments In this section, we apply our algorithms to two noncon vex–strongly conca ve examples. 5.1 T oy experiment Inspired by [ 3 ], we consider the problem min x ∈ R max y ∈ R g ( x ) + xy − 0 . 5 y 2 , where g ( x ) = ( 0 . 5 − x 2 if | x | ≤ 0 . 5 , ( | x | − 1) 2 otherwise . (5.1) Note that g is C 1 and ρ = 2 -weakly con ve x. W e define Φ( x, y ) = g ( x ) + xy − 0 . 5 y 2 so that Φ( x, . ) is µ = 1 -strongly concav e w .r .t. y . 
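The step-size analysis above and the toy problem (5.1) can be sanity-checked with a short script. The sketch below is illustrative and is not the authors' code: it assumes $h = 0$, uses the closed-form proximal map of $\eta_x g$ from [27, Exam. 9.1] recalled in this section, and all helper names (`prox_g`, `eta_bound_theta`, `step_size_bound`, `pd_rga`, ...) are hypothetical. It (i) evaluates the bound $\tau\beta\sqrt{f(\theta)/2}$ implied by (4.11), with $f$ the mapping maximized there, on a grid of $\theta$ and compares it with the closed form at $\theta = \tau/(\sqrt{2}\kappa_y)$; (ii) checks the proximal formula against a brute-force grid minimization; and (iii) runs PD-RGA from $(x_0, y_0) = (-5, 5)$.

```python
import math

# Toy problem (5.1): Phi(x, y) = g(x) + x*y - 0.5*y**2, with h = 0 (assumed here).
def g(x):
    return 0.5 - x * x if abs(x) <= 0.5 else (abs(x) - 1.0) ** 2


def grad_phi(x):
    """phi(x) = max_y Phi(x, y) = g(x) + x**2 / 2 since y*(x) = x; returns phi'(x)."""
    dg = -2.0 * x if abs(x) <= 0.5 else 2.0 * (abs(x) - 1.0) * math.copysign(1.0, x)
    return dg + x


def prox_g(z, eta):
    """Closed-form prox of eta*g, valid for 0 < eta < 1/2 = 1/rho."""
    if abs(z) <= 0.5 - eta:
        return z / (1.0 - 2.0 * eta)
    if z >= 0.5 - eta:
        return (z + 2.0 * eta) / (1.0 + 2.0 * eta)
    return (z - 2.0 * eta) / (1.0 + 2.0 * eta)


def prox_g_grid(z, eta, lo=-10.0, hi=10.0, n=40001):
    """Brute-force argmin of g(u) + (u - z)**2 / (2*eta) over a uniform grid (for checking)."""
    grid = (lo + (hi - lo) * i / (n - 1) for i in range(n))
    return min(grid, key=lambda u: g(u) + (u - z) ** 2 / (2.0 * eta))


def eta_bound_theta(theta, tau, beta, kappa_y):
    """Bound on eta_x implied by (4.11) for a given theta > 0."""
    f = theta / (2 * kappa_y**2 * theta**2 + (2 * kappa_y**2 + tau**2) * theta + tau**2)
    return tau * beta * math.sqrt(f / 2.0)


def step_size_bound(tau, beta, kappa_y, rho):
    """min{tau*beta / (sqrt(2) (sqrt(2) kappa_y + tau)), 1/rho}, as in Section 4.2."""
    return min(tau * beta / (math.sqrt(2.0) * (math.sqrt(2.0) * kappa_y + tau)), 1.0 / rho)


def pd_rga(x, y, eta_x, eta_y, iters=200):
    """PD-RGA on (5.1): proximal step in x, then a gradient ascent step in y."""
    for _ in range(iters):
        x = prox_g(x - eta_x * y, eta_x)  # prox of eta_x * Phi(., y_k), evaluated at x_k
        y = y + eta_y * (x - y)           # grad_y Phi(x_{k+1}, y_k) = x_{k+1} - y_k
    return x, y


# Toy constants: L_xy = L_yx = L_yy = mu = 1, so kappa_y = beta = 1; eta_y = 1 gives tau = 1.
tau, beta, kappa_y, rho = 1.0, 1.0, 1.0, 2.0
theta_star = tau / (math.sqrt(2.0) * kappa_y)
best_on_grid = max(eta_bound_theta(0.01 * i, tau, beta, kappa_y) for i in range(1, 1000))
bound = step_size_bound(tau, beta, kappa_y, rho)
x_final, y_final = pd_rga(-5.0, 5.0, eta_x=0.29, eta_y=1.0)
print(f"bound = {bound:.4f}, x_final = {x_final:.6f}")
```

With these toy constants the bound equals $1/(2+\sqrt{2}) \approx 0.2929$, so $\eta_x = 0.29$ is admissible, and the iterates approach $x^* = -2/3$ with $y_k \to y^*(x^*) = x^*$, consistent with Figure 1. The grid search over $\theta$ never exceeds the value at $\theta = \tau/(\sqrt{2}\kappa_y)$, as expected from the maximization argument.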
Note that $\mathrm{prox}_{\eta_x\Phi(\cdot, y_k)}(x_k) = \mathrm{prox}_{\eta_x g}(x_k - \eta_x y_k)$, so, following the closed-form expression of $\mathrm{prox}_{\eta_x g}$ established in [27, Exam. 9.1, p. 21], we have

$$\mathrm{prox}_{\eta_x g}(x_k - \eta_x y_k) = \begin{cases} (x_k - \eta_x y_k)/(1 - 2\eta_x) & \text{if } |x_k - \eta_x y_k| \le 0.5 - \eta_x,\\ (x_k - \eta_x y_k + 2\eta_x)/(1 + 2\eta_x) & \text{if } x_k - \eta_x y_k \ge 0.5 - \eta_x,\\ (x_k - \eta_x y_k - 2\eta_x)/(1 + 2\eta_x) & \text{otherwise.}\end{cases}$$

By the coupling structure, the Lipschitz constants in Assumptions 1–3 are $L_{xy} = L_{yx} = L_{yy} = 1$; hence $\kappa_y = 1$. We refer to the simultaneous³ version of GD-RGA as the Parallel Proximal Gradient Descent–Ascent (PPGA), which is studied in [6]. Since $y^*(x) = x$, the mapping $\varphi(x) = g(x) + x^2/2$ has two minimizers, $x^* = \pm 2/3$; its remaining critical point, $x = 0$, is a local maximizer. Following the theoretical step-size bounds, we set $\eta_y = 1$ for all methods, while we take $\eta_x = 0.29$ for GD-RGA and PD-RGA and $\eta_x = 0.06$ for PPGA (for PD-RGA, $\tau = \beta = 1$ gives $\tau\beta/(\sqrt{2}(\sqrt{2}\kappa_y + \tau)) = 1/(2+\sqrt{2}) \approx 0.293 < 1/\rho = 0.5$). As shown in Figure 1, GD-RGA attains the smallest value of $|\nabla\varphi|$, which means that it reaches an $\varepsilon$-stationary point (here a critical point) before PD-RGA and PPGA, which require more iterations. All methods converge to the same critical point.

5.2 Imaging application: Plug-and-Play (PnP) in a nutshell

We now provide an application of PD-RGA to image restoration problems formulated as $\min_{x\in\mathbb{R}^d} \lambda f(x) + g(x)$, where $f$ is a data-fidelity term, $g$ a weakly convex regularizer, and $\lambda > 0$ a parameter that balances data fidelity and regularization. Here we consider $f(x) = \frac{1}{2\sigma^2}\|Ax - b\|^2$, which corresponds to the fidelity to a degraded observation $b = Ax^* + n$ obtained through a linear operator $A : \mathbb{R}^d \to \mathbb{R}^n$ and an additive Gaussian noise $n$ with standard deviation $\sigma$. Then, via dualization (using $\frac{\lambda}{2\sigma^2}\|z\|^2 = \max_{y}\ \langle z, y\rangle - \frac{\sigma^2}{2\lambda}\|y\|^2$, attained at $y = \frac{\lambda}{\sigma^2}z$), the problem $\min_{x\in\mathbb{R}^d} \frac{\lambda}{2\sigma^2}\|Ax - b\|^2 + g(x)$ can be written equivalently as the following min-max problem:

$$\min_{x\in\mathbb{R}^d}\max_{y\in\mathbb{R}^n}\ \langle Ax - b, y\rangle - \frac{\sigma^2}{2\lambda}\|y\|^2 + g(x). \tag{5.2}$$

³ That is, GD-RGA with the update $y_{k+1} = \mathrm{prox}_{\eta_y h}\big(y_k + \eta_y\nabla_y\Phi(x_k, y_k)\big)$ for $y$.
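The dualization behind (5.2) rests on the identity $\frac{\lambda}{2\sigma^2}\|z\|^2 = \max_y\ \langle z, y\rangle - \frac{\sigma^2}{2\lambda}\|y\|^2$, whose maximizer is $y = \frac{\lambda}{\sigma^2}z$. The following minimal sketch checks it numerically for one arbitrary residual $z = Ax - b$; vectors are plain Python lists and the helper names are hypothetical. The inner objective evaluated at the claimed maximizer matches the primal fidelity term, and random perturbations of $y$ never do better.

```python
import random


def fidelity(z, lam, sigma):
    """Primal term lambda / (2 sigma^2) * ||z||^2 for a residual z = Ax - b."""
    return lam / (2.0 * sigma**2) * sum(t * t for t in z)


def inner_objective(z, y, lam, sigma):
    """<z, y> - sigma^2 / (2 lambda) * ||y||^2, the y-dependent part of (5.2)."""
    return sum(zi * yi for zi, yi in zip(z, y)) - sigma**2 / (2.0 * lam) * sum(t * t for t in y)


def maximizer(z, lam, sigma):
    """Closed-form maximizer y = (lambda / sigma^2) z of the concave inner objective."""
    return [lam / sigma**2 * t for t in z]


lam, sigma = 0.00109, 0.03
z = [0.02, -0.05, 0.01]  # an arbitrary residual Ax - b, chosen for illustration
y_hat = maximizer(z, lam, sigma)
rng = random.Random(0)
perturbed = [
    inner_objective(z, [v + rng.uniform(-0.5, 0.5) for v in y_hat], lam, sigma)
    for _ in range(100)
]
print(fidelity(z, lam, sigma), inner_objective(z, y_hat, lam, sigma), max(perturbed))
```

The weight in front of $\|Ax - b\|^2$ is $\lambda/(2\sigma^2)$, i.e., exactly $\lambda f$ with $f(x) = \frac{1}{2\sigma^2}\|Ax - b\|^2$; the gap between the primal value and a perturbed dual value is $\frac{\sigma^2}{2\lambda}\|\Delta y\|^2$.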
[Figure 1: left panel "Evolution of $|\nabla\varphi(x_k)|$" (log scale) versus the number of iterations; right panel "Trajectory of the iterates $(x_k, y_k)$" in the $(x, y)$-plane, from the initial point to the final point; legend: GD-RGA, PD-RGA, PPGA.]
Figure 1: With the initial point $(x_0, y_0) = (-5, 5)$, all algorithms converge to $x^* = -2/3$.

Considering the coupling $\Phi(x, y) = \langle Ax - b, y\rangle - \frac{\sigma^2}{2\lambda}\|y\|^2 + g(x)$, PD-RGA reads

$$\begin{cases} x_{k+1} = \mathrm{prox}_{\eta_x g}\big(x_k - \eta_x A^T y_k\big),\\ y_{k+1} = y_k + \eta_y\big(Ax_{k+1} - b - \frac{\sigma^2}{\lambda}y_k\big),\end{cases}$$

which can be seen as a nonconvex primal-dual method [5]. Next, we consider the Plug-and-Play framework of [15], in which the regularization $g$ is replaced by a function $\phi_\sigma$ parameterized by a neural network. In practice, the function $\phi_\sigma$ is $\frac{L}{1-L}$-smooth and $\frac{L}{L+1}$-weakly convex with $L < 1/2$, and it can be evaluated together with its proximal mapping. More precisely, we use a neural network $D_\sigma$ that satisfies $D_\sigma = \mathrm{prox}_{\phi_\sigma}$ and is pretrained to denoise images degraded with Gaussian noise [24, 15]. Note that we are restricted to the step-size $\eta_x = 1$, as we only have access to $\mathrm{prox}_{\phi_\sigma}$ through the denoiser of [15]. By the coupling structure of $\Phi$, the block-wise Lipschitz constants of Assumptions 1–3 are $L_{xx} = \frac{L}{1-L}$, $L_{xy} = L_{yx} = \|A\|_S$ and $L_{yy} = \mu = \sigma^2/\lambda$; hence $\kappa_y = L_{yy}/\mu = 1$. Note that the $y$-step-size for PD-RGA becomes $0 < \eta_y \le \lambda/\sigma^2$ (since $\tau = \eta_y L_{yy} \le 1$). Since $L < 1/2$, it follows that $\rho = \frac{L}{L+1} < 1/3$, i.e., $1/\rho > 3$. Hence we have to guarantee

$$\eta_x = 1 < \min\Big\{\frac{\sigma^2}{\lambda(2+\sqrt{2})\|A\|_S},\ 3\Big\},$$

which, similarly to [15], restricts the value of the regularization parameter to $\lambda \le \sigma^2/((2+\sqrt{2})\|A\|_S)$.

We now consider two image restoration problems, where $D_\sigma$ is the GSDRUNet denoiser [15] with pretrained weights⁴. Test images are taken from the Set3C dataset available in the DeepInverse library [29].
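To make the parameter rule and the PD-RGA iteration above concrete, here is a minimal sketch under loudly stated assumptions; it is not the GSDRUNet pipeline of [15]. The operator $A$ is assumed diagonal and stored as a list of entries (so $\|A\|_S$ is the largest absolute entry), and the learned denoiser $D_\sigma$ is replaced by a hypothetical stand-in, the proximal map of $\frac{c}{2}\|x\|^2$, i.e., $z \mapsto z/(1+c)$, so that the limit can be compared with a closed form. The helpers `lambda_max` and `eta_y_max` (hypothetical names) implement $\lambda \le \sigma^2/((2+\sqrt{2})\|A\|_S)$ and $\eta_y \le \lambda/\sigma^2$ exactly as stated in the text.

```python
import math


def lambda_max(sigma, norm_A):
    """Largest lambda allowed by eta_x = 1 < sigma^2 / (lambda (2 + sqrt 2) ||A||_S)."""
    return sigma**2 / ((2.0 + math.sqrt(2.0)) * norm_A)


def eta_y_max(lam, sigma):
    """y-step-size bound eta_y <= lambda / sigma^2, i.e. tau = eta_y * L_yy <= 1."""
    return lam / sigma**2


def pnp_pd_rga(a, b, denoiser, lam, sigma, eta_y, iters=500):
    """PD-RGA with eta_x = 1 for a diagonal operator A = diag(a); illustrative only."""
    n = len(a)
    x, y = [0.0] * n, [0.0] * n
    mu = sigma**2 / lam  # L_yy = mu = sigma^2 / lambda
    for _ in range(iters):
        # x-step: x_{k+1} = D(x_k - A^T y_k), with D standing in for prox_{phi_sigma}
        x = denoiser([x[i] - a[i] * y[i] for i in range(n)])
        # y-step: y_{k+1} = y_k + eta_y (A x_{k+1} - b - (sigma^2 / lambda) y_k)
        y = [y[i] + eta_y * (a[i] * x[i] - b[i] - mu * y[i]) for i in range(n)]
    return x, y


C_REG = 0.5  # hypothetical weight of the stand-in regularizer (C_REG / 2) * ||x||^2


def shrink(z):
    """Stand-in 'denoiser': the prox of (C_REG / 2) * ||x||^2, i.e. z / (1 + C_REG)."""
    return [t / (1.0 + C_REG) for t in z]


a, b, sigma = [0.24, 0.1, 0.2], [1.0, -0.5, 0.3], 0.03
lam = 0.9 * lambda_max(sigma, max(abs(t) for t in a))  # ||diag(a)||_S = max |a_i|
x_hat, _ = pnp_pd_rga(a, b, shrink, lam, sigma, eta_y_max(lam, sigma))

# Closed-form minimizer of lambda/(2 sigma^2) ||Ax - b||^2 + (C_REG/2) ||x||^2 for diagonal A.
w = lam / sigma**2
x_ref = [w * ai * bi / (C_REG + w * ai * ai) for ai, bi in zip(a, b)]
print(x_hat, x_ref)
```

With $\sigma = 0.03$, `lambda_max` returns about 0.0010983 for $\|A\|_S = 0.24$ and about 0.0002636 for $\|A\|_S = 1$, and `eta_y_max(0.00109, 0.03)` is about 1.211, in line with the values $\lambda = 0.00109$, $\eta_y = 1.21$ and $\lambda = 0.00026$ used in the experiments. A real PnP run would replace `shrink` by the learned denoiser and the diagonal `a` by a general operator together with its adjoint.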
The observations $b$ are then generated using the Downsampling and BlurFFT operators implemented in DeepInverse.

Super-resolution. In single image super-resolution, a low-resolution image $b \in \mathbb{R}^n$ is observed from the unknown high-resolution one $x \in \mathbb{R}^d$ via $b = SHx$ plus Gaussian noise of standard deviation $\sigma$, where $H \in \mathbb{R}^{d\times d}$ is the convolution with an anti-aliasing kernel and $S \in \mathbb{R}^{n\times d}$ is the standard $s$-fold downsampling, with $d = s^2 \times n$ [15, Sect. 5.2.2]. Here $\|A\|_S = 0.24$ and we consider $\sigma = 0.03$, so we set $\lambda = 0.00109$ and $\eta_y = 1.21$, which are (up to rounding) the largest admissible values. As shown in Figure 2, the method provides high-quality restoration, with PSNR values on par with state-of-the-art Plug-and-Play methods [15, Sect. 5.2.2].

⁴ https://github.com/samuro95/Prox-PnP

[Figure 2 panels: Ground truth; Observation; Reconstruction; Evolution of $\|\nabla\varphi(x_k)\|$.]
Figure 2: Super-resolution of a butterfly image from the CBSD68 dataset, downscaled with the indicated blur kernel and scale $s = 2$.

Deblurring. For image deblurring, the operator $A$ is a convolution with circular boundary conditions. Specifically, $A = F\Lambda F^*$, where $F$ is the unitary matrix of the discrete Fourier transform ($F^*$ being its inverse) and $\Lambda$ a diagonal matrix, so that $b = F\Lambda F^* x$ plus Gaussian noise of standard deviation $\sigma$ [15, Sect. 5.1.1]. Here $\|A\|_S = 1$ and we consider $\sigma = 0.03$, so we set $\lambda = 0.00026$ and $\eta_y = 0.28$. Figure 3 illustrates that the image reconstructed by PD-RGA improves the PSNR by nearly 10 dB, with results similar to those reported in [15, Sect. 5.2.2].

[Figure 3 panels: Ground truth; Observation; Reconstructed; Evolution of $\|\nabla\varphi(x_k)\|$.]
Figure 3: Deblurring of a butterfly image from the CBSD68 dataset, blurred with the indicated kernel.

6 Conclusions and perspectives

In this work, we studied gradient algorithms for solving nonconvex–strongly concave min-max problems. We presented a direct convergence analysis for the gradient descent–ascent algorithm, improving upon state-of-the-art step-size rules.
Leveraging our general proof strategy, we proposed the first convergence analysis for PD-RGA in a weakly convex–strongly concave setting. Through numerical examples, we demonstrated that PD-RGA allows one to tackle regularized inverse problems where neural networks act as proximal operators. From an application standpoint, PD-RGA opens the possibility of extending the applications considered in [4], such as multi-kernel support vector machines and classification with minimax group fairness, to nonconvex scenarios. Future work will focus on extending our proof strategy to nonsmooth couplings, non-Euclidean settings (for example, using Bregman proximal operators [6]), and stochastic variants of our algorithms, as well as on relaxing the strong concavity assumption.

Acknowledgement. Experiments related to imaging restoration presented in this paper were carried out using the PlaFRIM experimental testbed, supported by INRIA, CNRS (LABRI and IMB), Université de Bordeaux, Bordeaux INP and Conseil Régional d'Aquitaine (see https://www.plafrim.fr).

A Proofs for the analysis of convergence of GD-RGA

A.1 Proof of Lemma 3.3

Proof. By the descent Lemma 2.1, we get

$$\varphi(x_{k+1}) \le \varphi(x_k) + \langle\nabla\varphi(x_k), x_{k+1} - x_k\rangle + \frac{L_\varphi}{2}\|x_{k+1} - x_k\|^2.$$

By (3.1), we have $x_{k+1} - x_k = -\eta_x\nabla_x\Phi(x_k, y_k)$, which implies

$$\varphi(x_{k+1}) \le \varphi(x_k) - \eta_x\langle\nabla\varphi(x_k), \nabla_x\Phi(x_k, y_k)\rangle + \frac{L_\varphi\eta_x^2}{2}\|\nabla_x\Phi(x_k, y_k)\|^2.$$

Adding and subtracting $\nabla\varphi(x_k)$ in the second argument of the last inner product yields

$$\varphi(x_{k+1}) \le \varphi(x_k) - \eta_x\|\nabla\varphi(x_k)\|^2 + \eta_x\langle\nabla\varphi(x_k), \nabla\varphi(x_k) - \nabla_x\Phi(x_k, y_k)\rangle + \frac{L_\varphi\eta_x^2}{2}\|\nabla_x\Phi(x_k, y_k)\|^2. \tag{A.1}$$

We now bound the last two terms in the right-hand side of (A.1). Using Young's inequality, we first get

$$\langle\nabla\varphi(x_k), \nabla\varphi(x_k) - \nabla_x\Phi(x_k, y_k)\rangle \le \frac{1}{2}\big(\|\nabla\varphi(x_k) - \nabla_x\Phi(x_k, y_k)\|^2 + \|\nabla\varphi(x_k)\|^2\big). \tag{A.2}$$

Applying the Cauchy–Schwarz inequality, we have

$$\|\nabla_x\Phi(x_k, y_k)\|^2 \le 2\big(\|\nabla\varphi(x_k) - \nabla_x\Phi(x_k, y_k)\|^2 + \|\nabla\varphi(x_k)\|^2\big). \tag{A.3}$$

Then, by Proposition 2.3, we obtain

$$\|\nabla\varphi(x_k) - \nabla_x\Phi(x_k, y_k)\|^2 = \|\nabla_x\Phi(x_k, y^*_k) - \nabla_x\Phi(x_k, y_k)\|^2 \le L_{xy}^2\|y^*(x_k) - y_k\|^2. \tag{A.4}$$

Plugging (A.2), (A.3) and (A.4) into (A.1) yields

$$\varphi(x_{k+1}) \le \varphi(x_k) - \frac{\eta_x}{2}(1 - 2L_\varphi\eta_x)\|\nabla\varphi(x_k)\|^2 + \frac{\eta_x}{2}(1 + 2L_\varphi\eta_x)L_{xy}^2\|y^*(x_k) - y_k\|^2.$$

Summing over $k = 0, \dots, N-1$, we deduce the result.

A.2 Proof of Lemma 3.4

Proof. We distinguish two cases based on the source of the $\mu$-strong concavity: whether it arises from $\Phi$ or from $-h$.

Case 1: We assume that $\Phi(x, \cdot)$ is $\mu$-strongly concave in its second component for all $x \in \mathbb{R}^d$. We recall that $\Phi(x_{k+1}, \cdot)$ is concave and $L_{yy}$-smooth (Assumption 3), so that in this case we necessarily have $\mu \le L_{yy}$ and $\kappa_y = L_{yy}/\mu \ge 1$. From the ascent Lemma 2.1, we first have

$$\Phi(x_{k+1}, y_{k+1}) - h(y_{k+1}) \ge \Phi(x_{k+1}, y_k) + \langle\nabla_y\Phi(x_{k+1}, y_k), y_{k+1} - y_k\rangle - \frac{L_{yy}}{2}\|y_{k+1} - y_k\|^2 - h(y_{k+1}). \tag{A.5}$$

Next, by the optimality condition of the proximal operator in (3.2), there exists $s_{k+1} \in \partial h(y_{k+1})$ such that $0 = s_{k+1} + \eta_y^{-1}\big(y_{k+1} - y_k - \eta_y\nabla_y\Phi(x_{k+1}, y_k)\big)$. Then, by definition of the subdifferential, we have $h(y^*_{k+1}) \ge h(y_{k+1}) + \langle s_{k+1}, y^*_{k+1} - y_{k+1}\rangle$. Plugging this relation into (A.5), we obtain

$$\begin{aligned}
\Phi(x_{k+1}, y_{k+1}) - h(y_{k+1}) \ge{}& \Phi(x_{k+1}, y_k) + \langle\nabla_y\Phi(x_{k+1}, y_k), y_{k+1} - y_k\rangle - \frac{L_{yy}}{2}\|y_{k+1} - y_k\|^2 - h(y^*_{k+1}) \\
&- \frac{1}{\eta_y}\langle y_{k+1} - y_k, y^*_{k+1} - y_{k+1}\rangle + \langle\nabla_y\Phi(x_{k+1}, y_k), y^*_{k+1} - y_{k+1}\rangle.
\end{aligned} \tag{A.6}$$

Using strong concavity again,

$$\Phi(x_{k+1}, y^*_{k+1}) \le \Phi(x_{k+1}, y_k) + \langle\nabla_y\Phi(x_{k+1}, y_k), y^*_{k+1} - y_k\rangle - \frac{\mu}{2}\|y^*_{k+1} - y_k\|^2,$$

from which we bound (A.6) from below and obtain

$$\Phi(x_{k+1}, y_{k+1}) - h(y_{k+1}) \ge \Phi(x_{k+1}, y^*_{k+1}) - h(y^*_{k+1}) - \frac{L_{yy}}{2}\|y_{k+1} - y_k\|^2 + \frac{\mu}{2}\|y^*_{k+1} - y_k\|^2 - \frac{1}{\eta_y}\langle y_{k+1} - y_k, y^*_{k+1} - y_{k+1}\rangle.$$

As $\langle y_{k+1} - y_k, y^*_{k+1} - y_{k+1}\rangle = \frac{1}{2}\big(\|y_k - y^*_{k+1}\|^2 - \|y_{k+1} - y^*_{k+1}\|^2 - \|y_k - y_{k+1}\|^2\big)$, we get

$$\Phi(x_{k+1}, y_{k+1}) - h(y_{k+1}) \ge \Phi(x_{k+1}, y^*_{k+1}) - h(y^*_{k+1}) - \frac{1}{2}\Big(L_{yy} - \frac{1}{\eta_y}\Big)\|y_{k+1} - y_k\|^2 - \frac{1}{2}\Big(\frac{1}{\eta_y} - \mu\Big)\|y^*_{k+1} - y_k\|^2 + \frac{1}{2\eta_y}\|y_{k+1} - y^*_{k+1}\|^2.$$

Multiplying by $2\eta_y$ and rearranging the inequality, we obtain

$$\begin{aligned}
\|y_{k+1} - y^*_{k+1}\|^2 \le{}& (\eta_y L_{yy} - 1)\|y_{k+1} - y_k\|^2 + (1 - \eta_y\mu)\|y^*_{k+1} - y_k\|^2 \\
&+ 2\eta_y\big(\Phi(x_{k+1}, y_{k+1}) - h(y_{k+1}) - \Phi(x_{k+1}, y^*_{k+1}) + h(y^*_{k+1})\big).
\end{aligned} \tag{A.7}$$

Recalling that $\eta_y L_{yy} = \tau \in (0, 1]$ and $\kappa_y \ge 1$, we have $\eta_y\mu = \tau/\kappa_y \le 1$ and $\eta_y L_{yy} - 1 \le 0$, so (A.7) becomes

$$\|y_{k+1} - y^*_{k+1}\|^2 \le \Big(1 - \frac{\tau}{\kappa_y}\Big)\|y^*_{k+1} - y_k\|^2 + \frac{2\tau}{L_{yy}}\big(\Phi(x_{k+1}, y_{k+1}) - h(y_{k+1}) - \Phi(x_{k+1}, y^*_{k+1}) + h(y^*_{k+1})\big). \tag{A.8}$$

The $\mu$-strong concavity of the coupling $\Phi$ w.r.t. the $y$-variable implies that

$$\Phi(x_{k+1}, y_{k+1}) \le \Phi(x_{k+1}, y^*_{k+1}) + \langle\nabla_y\Phi(x_{k+1}, y^*_{k+1}), y_{k+1} - y^*_{k+1}\rangle - \frac{\mu}{2}\|y_{k+1} - y^*_{k+1}\|^2. \tag{A.9}$$

From Lemma 2.2, we get $0 \in \nabla_y\Phi(x_{k+1}, y^*_{k+1}) - \partial h(y^*_{k+1})$. We thus have $\nabla_y\Phi(x_{k+1}, y^*_{k+1}) \in \partial h(y^*_{k+1})$, so that

$$-h(y_{k+1}) \le -h(y^*_{k+1}) - \langle\nabla_y\Phi(x_{k+1}, y^*_{k+1}), y_{k+1} - y^*_{k+1}\rangle. \tag{A.10}$$

Summing (A.9) and (A.10) then gives

$$\Phi(x_{k+1}, y_{k+1}) - h(y_{k+1}) \le \Phi(x_{k+1}, y^*_{k+1}) - h(y^*_{k+1}) - \frac{\mu}{2}\|y^*_{k+1} - y_{k+1}\|^2.$$

Plugging the last inequality into (A.8), we get

$$\|y_{k+1} - y^*_{k+1}\|^2 \le \Big(1 - \frac{\tau\mu}{L_{yy}}\Big)\|y^*_{k+1} - y_k\|^2 - \frac{\tau\mu}{L_{yy}}\|y^*_{k+1} - y_{k+1}\|^2,$$

that is,

$$\Big(1 + \frac{\tau\mu}{L_{yy}}\Big)\|y_{k+1} - y^*_{k+1}\|^2 \le \Big(1 - \frac{\tau\mu}{L_{yy}}\Big)\|y^*_{k+1} - y_k\|^2.$$
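The constants appearing in this proof can be sanity-checked numerically. The following grid check (a sanity check, not a proof; helper names are hypothetical) verifies, for several pairs $0 < \tau \le 1 \le \kappa_y$, that the Case 1 factor $(\kappa_y - \tau)/(\kappa_y + \tau)$ obtained from the last inequality is dominated by $(\kappa_y/(\kappa_y + \tau))^2$, and that the choice of $\theta^*$ made at the end of this proof is positive and yields the two identities used there.

```python
import math


def case1_factor(kappa, tau):
    """(1 - tau/kappa) / (1 + tau/kappa) = (kappa - tau) / (kappa + tau), from Case 1."""
    return (kappa - tau) / (kappa + tau)


def case2_factor(kappa, tau):
    """(kappa / (kappa + tau))**2, the factor appearing in (A.11) and (A.14)."""
    return (kappa / (kappa + tau)) ** 2


def theta_star(kappa, tau):
    """theta* = (2 kappa - tau)(kappa + tau)^2 / (2 kappa^3) - 1."""
    return (2 * kappa - tau) * (kappa + tau) ** 2 / (2 * kappa**3) - 1


def constants_ok(kappa, tau):
    q, t = case2_factor(kappa, tau), theta_star(kappa, tau)
    second = q * (1 + 1 / t)
    closed_form = kappa**2 * (2 * kappa - tau) / (tau * (3 * kappa**2 - tau**2))
    return (
        case1_factor(kappa, tau) <= q                                        # second inequality in (A.11)
        and t > 0                                                            # theta* is admissible
        and math.isclose(q * (1 + t), 1 - tau / (2 * kappa), rel_tol=1e-12)  # coefficient of delta_k
        and math.isclose(second, closed_form, rel_tol=1e-9)                  # closed form of the 2nd factor
        and second <= kappa / tau                                            # final bound kappa_y / tau
    )


# Grid of 0 < tau <= 1 <= kappa_y.
results = [constants_ok(k, t) for k in (1.0, 1.5, 2.0, 10.0, 100.0) for t in (0.01, 0.1, 0.5, 1.0)]
print(all(results))
```

The gap between the two contraction factors is $\tau^2/(\kappa_y + \tau)^2 > 0$, which is why the grid check passes even for large $\kappa_y$ and small $\tau$.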
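Recalling that $\kappa_y = L_{yy}/\mu$, we obtain

$$\delta_{k+1} = \|y_{k+1} - y^*_{k+1}\|^2 \le \frac{\kappa_y - \tau}{\kappa_y + \tau}\|y^*_{k+1} - y_k\|^2 \le \Big(\frac{\kappa_y}{\kappa_y + \tau}\Big)^2\|y^*_{k+1} - y_k\|^2. \tag{A.11}$$

Case 2: We now assume that $h$ is strongly convex. Our strategy in this case is to exploit the fact that the operator $\mathrm{prox}_h$ is a contraction. Also note that, for $z \in \mathbb{R}^d$, $\mathrm{Id} + \eta_y\nabla_y\Phi(z, \cdot)$ is non-expansive (since $\Phi(z, \cdot)$ is concave and $L_{yy}$-smooth and $\eta_y \le 1/L_{yy}$), i.e.,

$$\big\|(\mathrm{Id} + \eta_y\nabla_y\Phi(z, \cdot))(u) - (\mathrm{Id} + \eta_y\nabla_y\Phi(z, \cdot))(v)\big\|^2 \le \|u - v\|^2 \quad\text{for all } u, v \in \mathbb{R}^n. \tag{A.12}$$

By [27, Lem. 5.5, p. 13], $\mathrm{prox}_{\eta_y h}$ is $1/(1 + \mu\eta_y)$-Lipschitz, and the theoretical maximizer $y^*_{k+1}$ is a fixed point of (3.2). Hence

$$\begin{aligned}
\|y^*_{k+1} - y_{k+1}\|^2 &= \big\|\mathrm{prox}_{\eta_y h}\big(y^*_{k+1} + \eta_y\nabla_y\Phi(x_{k+1}, y^*_{k+1})\big) - \mathrm{prox}_{\eta_y h}\big(y_k + \eta_y\nabla_y\Phi(x_{k+1}, y_k)\big)\big\|^2 \\
&\le \Big(\frac{1}{1 + \mu\eta_y}\Big)^2\big\|y^*_{k+1} - y_k + \eta_y\big(\nabla_y\Phi(x_{k+1}, y^*_{k+1}) - \nabla_y\Phi(x_{k+1}, y_k)\big)\big\|^2 \\
&\overset{\text{(A.12)}}{\le} \Big(\frac{1}{1 + \mu\eta_y}\Big)^2\|y^*_{k+1} - y_k\|^2.
\end{aligned} \tag{A.13}$$

Since $\delta_{k+1} = \|y^*_{k+1} - y_{k+1}\|^2$, $\eta_y = \tau/L_{yy}$ with $0 < \tau \le 1$, and $\kappa_y = L_{yy}/\mu$, (A.13) becomes

$$\delta_{k+1} \le \Big(\frac{\kappa_y}{\kappa_y + \tau}\Big)^2\|y^*_{k+1} - y_k\|^2. \tag{A.14}$$

Conclusion: Both cases yield the same control of $\delta_{k+1}$ (relations (A.11) and (A.14)), so it does not matter whether the strong concavity originates from $\Phi$ or from $-h$. Now, using the triangle and Young inequalities in (A.14), we deduce that, for any $\theta > 0$,

$$\begin{aligned}
\delta_{k+1} &\le \Big(\frac{\kappa_y}{\kappa_y + \tau}\Big)^2\big(\|y^*_k - y_k\|^2 + 2\|y^*_k - y_k\|\,\|y^*_{k+1} - y^*_k\| + \|y^*_{k+1} - y^*_k\|^2\big) \\
&\le \Big(\frac{\kappa_y}{\kappa_y + \tau}\Big)^2(1 + \theta)\|y^*_k - y_k\|^2 + \Big(\frac{\kappa_y}{\kappa_y + \tau}\Big)^2\Big(1 + \frac{1}{\theta}\Big)\|y^*_{k+1} - y^*_k\|^2.
\end{aligned}$$

Choosing $\theta^* = \frac{(2\kappa_y - \tau)(\kappa_y + \tau)^2}{2\kappa_y^3} - 1$, which is strictly positive for $0 < \tau \le 1 \le \kappa_y$, we obtain

$$\delta_{k+1} \le \Big(1 - \frac{\tau}{2\kappa_y}\Big)\delta_k + \frac{\kappa_y}{\tau}\|y^*_{k+1} - y^*_k\|^2,$$

where we used the facts that $\big(\frac{\kappa_y}{\kappa_y + \tau}\big)^2(1 + \theta^*) = 1 - \frac{\tau}{2\kappa_y}$ and $\big(\frac{\kappa_y}{\kappa_y + \tau}\big)^2\big(1 + \frac{1}{\theta^*}\big) = \frac{\kappa_y^2(2\kappa_y - \tau)}{\tau(3\kappa_y^2 - \tau^2)} \le \frac{\kappa_y}{\tau}$ for $0 < \tau \le 1 \le \kappa_y$.

B Proof of Lemma 4.2 for the analysis of PD-RGA

Proof.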
Since $\varphi$ is $\rho$-weakly convex (Assumption 2(iii)), we have

$$\varphi(x_{k+1}) \le \varphi(x_k) + \langle\nabla\varphi(x_{k+1}), x_{k+1} - x_k\rangle + \frac{\rho}{2}\|x_k - x_{k+1}\|^2.$$

From the first-order optimality condition of (4.1), we have $x_{k+1} - x_k = -\eta_x\nabla_x\Phi(x_{k+1}, y_k)$. Then

$$\varphi(x_{k+1}) \le \varphi(x_k) - \eta_x\langle\nabla\varphi(x_{k+1}), \nabla_x\Phi(x_{k+1}, y_k)\rangle + \frac{\rho\eta_x^2}{2}\|\nabla_x\Phi(x_{k+1}, y_k)\|^2.$$

Adding and subtracting $\nabla\varphi(x_{k+1})$ in the second argument of the last inner product, we get

$$\varphi(x_{k+1}) \le \varphi(x_k) - \eta_x\|\nabla\varphi(x_{k+1})\|^2 + \eta_x\langle\nabla\varphi(x_{k+1}), \nabla\varphi(x_{k+1}) - \nabla_x\Phi(x_{k+1}, y_k)\rangle + \frac{\rho\eta_x^2}{2}\|\nabla_x\Phi(x_{k+1}, y_k)\|^2. \tag{B.1}$$

Applying Young's inequality, we obtain

$$\langle\nabla\varphi(x_{k+1}), \nabla\varphi(x_{k+1}) - \nabla_x\Phi(x_{k+1}, y_k)\rangle \le \frac{1}{2}\|\nabla\varphi(x_{k+1})\|^2 + \frac{1}{2}\|\nabla\varphi(x_{k+1}) - \nabla_x\Phi(x_{k+1}, y_k)\|^2.$$

By the Cauchy–Schwarz inequality, we get

$$\|\nabla_x\Phi(x_{k+1}, y_k)\|^2 \le 2\|\nabla\varphi(x_{k+1})\|^2 + 2\|\nabla_x\Phi(x_{k+1}, y_k) - \nabla\varphi(x_{k+1})\|^2.$$

Using Proposition 2.3, we have

$$\|\nabla\varphi(x_{k+1}) - \nabla_x\Phi(x_{k+1}, y_k)\|^2 = \|\nabla_x\Phi(x_{k+1}, y^*(x_{k+1})) - \nabla_x\Phi(x_{k+1}, y_k)\|^2 \le L_{xy}^2\|y^*(x_{k+1}) - y_k\|^2.$$

Plugging the last three inequalities into (B.1) leads to

$$\varphi(x_{k+1}) \le \varphi(x_k) - \frac{\eta_x}{2}(1 - 2\rho\eta_x)\|\nabla\varphi(x_{k+1})\|^2 + \frac{\eta_x}{2}(1 + 2\rho\eta_x)L_{xy}^2\|y^*(x_{k+1}) - y_k\|^2.$$

References

[1] James P. Bailey, Gauthier Gidel, and Georgios Piliouras. Finite regret and cycles with fixed step-size via alternating gradient descent-ascent. In Jacob Abernethy and Shivani Agarwal, editors, Proceedings of Thirty Third Conference on Learning Theory, volume 125 of Proceedings of Machine Learning Research, pages 391–407. PMLR, 2020.
[2] J. F. Bard. Practical Bilevel Optimization: Algorithms and Applications. Nonconvex Optimization and Its Applications. Springer US, 1998. ISBN 9780792354581.
[3] Radu Ioan Boț and Axel Böhm. Alternating proximal-gradient steps for (stochastic) nonconvex-concave minimax problems. SIAM Journal on Optimization, 33(3):1884–1913, 2023.
[4] Radu Ioan Boț, Ernö Robert Csetnek, and Michael Sedlmayer. An accelerated minimax algorithm for convex-concave saddle point problems with nonsmooth coupling function. Computational Optimization and Applications, 86(3), 2022.
[5] Antonin Chambolle and Thomas Pock. A first-order primal-dual algorithm for convex problems with applications to imaging. Journal of Mathematical Imaging and Vision, 40(1):120–145, 2011.
[6] Eyal Cohen and Marc Teboulle. Alternating and parallel proximal gradient methods for nonsmooth, nonconvex minimax: A unified convergence analysis. Mathematics of Operations Research, 50(1):141–168, 2025.
[7] Rafael Correa, Abderrahim Hantoute, and Marco A. López. Fundamentals of Convex Analysis and Optimization: A Supremum Function Approach. Springer, 2023.
[8] Constantinos Daskalakis, Stratis Skoulakis, and Manolis Zampetakis. The complexity of constrained min-max optimization. In Proceedings of the 53rd Annual ACM SIGACT Symposium on Theory of Computing, New York, NY, USA, 2021. Association for Computing Machinery. ISBN 9781450380539.
[9] S. Dempe. Foundations of Bilevel Programming. Nonconvex Optimization and Its Applications. Springer US, 2005. ISBN 9780306480454.
[10] Georgios B. Giannakis, Qing Ling, Gonzalo Mateos, Ioannis D. Schizas, and Hao Zhu. Decentralized learning for wireless communications and networking. In Splitting Methods in Communication, Imaging, Science, and Engineering, pages 461–497. Springer International Publishing, 2016.
[11] Gauthier Gidel, Reyhane Askari Hemmat, Mohammad Pezeshki, Rémi Le Priol, Gabriel Huang, Simon Lacoste-Julien, and Ioannis Mitliagkas. Negative momentum for improved game dynamics. In Kamalika Chaudhuri and Masashi Sugiyama, editors, Proceedings of the Twenty-Second International Conference on Artificial Intelligence and Statistics, volume 89 of Proceedings of Machine Learning Research, pages 1802–1811. PMLR, 2019.
[12] Noah Golowich, Sarath Pattathil, Constantinos Daskalakis, and Asuman Ozdaglar. Last iterate is slower than averaged iterate in smooth convex-concave saddle point problems. In Jacob Abernethy and Shivani Agarwal, editors, Proceedings of Thirty Third Conference on Learning Theory, volume 125 of Proceedings of Machine Learning Research, pages 1758–1784. PMLR, 2020.
[13] Ian Goodfellow, Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron Courville, and Yoshua Bengio. Generative adversarial networks. Communications of the ACM, 63(11):139–144, 2020.
[14] Erfan Yazdandoost Hamedani and Necdet Serhat Aybat. A primal-dual algorithm with line search for general convex-concave saddle point problems. SIAM Journal on Optimization, 31(2):1299–1329, 2021.
[15] Samuel Hurault, Arthur Leclaire, and Nicolas Papadakis. Proximal denoiser for convergent plug-and-play optimization with nonconvex regularization. In International Conference on Machine Learning, pages 9483–9505, 2022.
[16] Haochuan Li, Yi Tian, Jingzhao Zhang, and Ali Jadbabaie. Complexity lower bounds for nonconvex-strongly-concave min-max optimization. In M. Ranzato, A. Beygelzimer, Y. Dauphin, P. S. Liang, and J. Wortman Vaughan, editors, Advances in Neural Information Processing Systems, volume 34, pages 1792–1804. Curran Associates, Inc., 2021.
[17] Tianyi Lin, Chi Jin, and Michael I. Jordan. Two-timescale gradient descent ascent algorithms for nonconvex minimax optimization. Journal of Machine Learning Research, 26(11):1–45, 2025.
[18] Mingrui Liu, Xiaoxuan Zhang, Zaiyi Chen, Xiaoyu Wang, and Tianbao Yang. Fast stochastic AUC maximization with O(1/n)-convergence rate. In Jennifer Dy and Andreas Krause, editors, Proceedings of the 35th International Conference on Machine Learning, volume 80 of Proceedings of Machine Learning Research, pages 3189–3197. PMLR, 10–15 Jul 2018.
[19] Mingrui Liu, Hassan Rafique, Qihang Lin, and Tianbao Yang. First-order convergence theory for weakly-convex-weakly-concave min-max problems. Journal of Machine Learning Research, 22(169):1–34, 2021.
[20] Sijia Liu, Songtao Lu, Xiangyi Chen, Yao Feng, Kaidi Xu, Abdullah Al-Dujaili, Mingyi Hong, and Una-May O'Reilly. Min-max optimization without gradients: Convergence and applications to black-box evasion and poisoning attacks. In International Conference on Machine Learning, volume 119, pages 6282–6293, 2020.
[21] Aleksander Madry, Aleksandar Makelov, Ludwig Schmidt, Dimitris Tsipras, and Adrian Vladu. Towards deep learning models resistant to adversarial attacks. In International Conference on Learning Representations, 2018.
[22] Angelia Nedić, Alex Olshevsky, and Michael G. Rabbat. Network topology and communication-computation tradeoffs in decentralized optimization. Proceedings of the IEEE, 106(5):953–976, 2018.
[23] Yurii Nesterov. Lectures on Convex Optimization, volume 137 of Springer Optimization and Its Applications. Springer, 2nd edition, 2018.
[24] Jean-Christophe Pesquet, Audrey Repetti, Matthieu Terris, and Yves Wiaux. Learning maximally monotone operators for image recovery. SIAM Journal on Imaging Sciences, 14(3):1206–1237, 2021.
[25] Hassan Rafique, Mingrui Liu, Qihang Lin, and Tianbao Yang. Weakly-convex–concave min–max optimization: provable algorithms and applications in machine learning. Optimization Methods and Software, 37(3):1087–1121, 2022.
[26] Meisam Razaviyayn, Tianjian Huang, Songtao Lu, Maher Nouiehed, Maziar Sanjabi, and Mingyi Hong. Nonconvex min-max optimization: Applications, challenges, and recent theoretical advances. IEEE Signal Processing Magazine, 37(5):55–66, 2020.
[27] Marien Renaud, Arthur Leclaire, and Nicolas Papadakis. On the Moreau envelope properties of weakly convex functions. arXiv preprint arXiv:2509.13960, 2025.
[28] R. Tyrrell Rockafellar. Convex Analysis. Princeton Mathematical Series. Princeton University Press, Princeton, N.J., 1970.
[29] Julián Tachella, Matthieu Terris, Samuel Hurault, Andrew Wang, Leo Davy, Jérémy Scanvic, Victor Sechaud, Romain Vo, Thomas Moreau, Thomas Davies, Dongdong Chen, Nils Laurent, Brayan Monroy, Jonathan Dong, Zhiyuan Hu, Minh-Hai Nguyen, Florian Sarron, Pierre Weiss, Paul Escande, Mathurin Massias, Thibaut Modrzyk, Brett Levac, Tobías I. Liaudat, Maxime Song, Johannes Hertrich, Sebastian Neumayer, and Georg Schramm. DeepInverse: A Python package for solving imaging inverse problems with deep learning. Journal of Open Source Software, 10(115):8923, 2025.
[30] Wenhan Xian, Feihu Huang, and Heng Huang. Escaping saddle point efficiently in minimax and bilevel optimizations. In International Joint Conference on Artificial Intelligence, pages 6659–6668, 2025.
[31] Zi Xu, Huiling Zhang, Yang Xu, and Guanghui Lan. A unified single-loop alternating gradient projection algorithm for nonconvex–concave and convex–nonconcave minimax problems. Math. Program., 201(1–2):635–706, 2023. ISSN 0025-5610.
[32] Siqi Zhang, Junchi Yang, Cristóbal Guzmán, Negar Kiyavash, and Niao He. The complexity of nonconvex-strongly-concave minimax optimization. In Cassio de Campos and Marloes H. Maathuis, editors, Proceedings of the Thirty-Seventh Conference on Uncertainty in Artificial Intelligence, volume 161 of Proceedings of Machine Learning Research, pages 482–492. PMLR, 2021.
