$R_{dm}$: Re-conceptualizing Distribution Matching as a Reward for Diffusion Distillation

Diffusion models achieve state-of-the-art generative performance but are fundamentally bottlenecked by their slow iterative sampling process. While diffusion distillation techniques enable high-fidelity few-step generation, traditional objectives oft…

Authors: Linqian Fan, Peiqin Sun, Tiancheng Wen

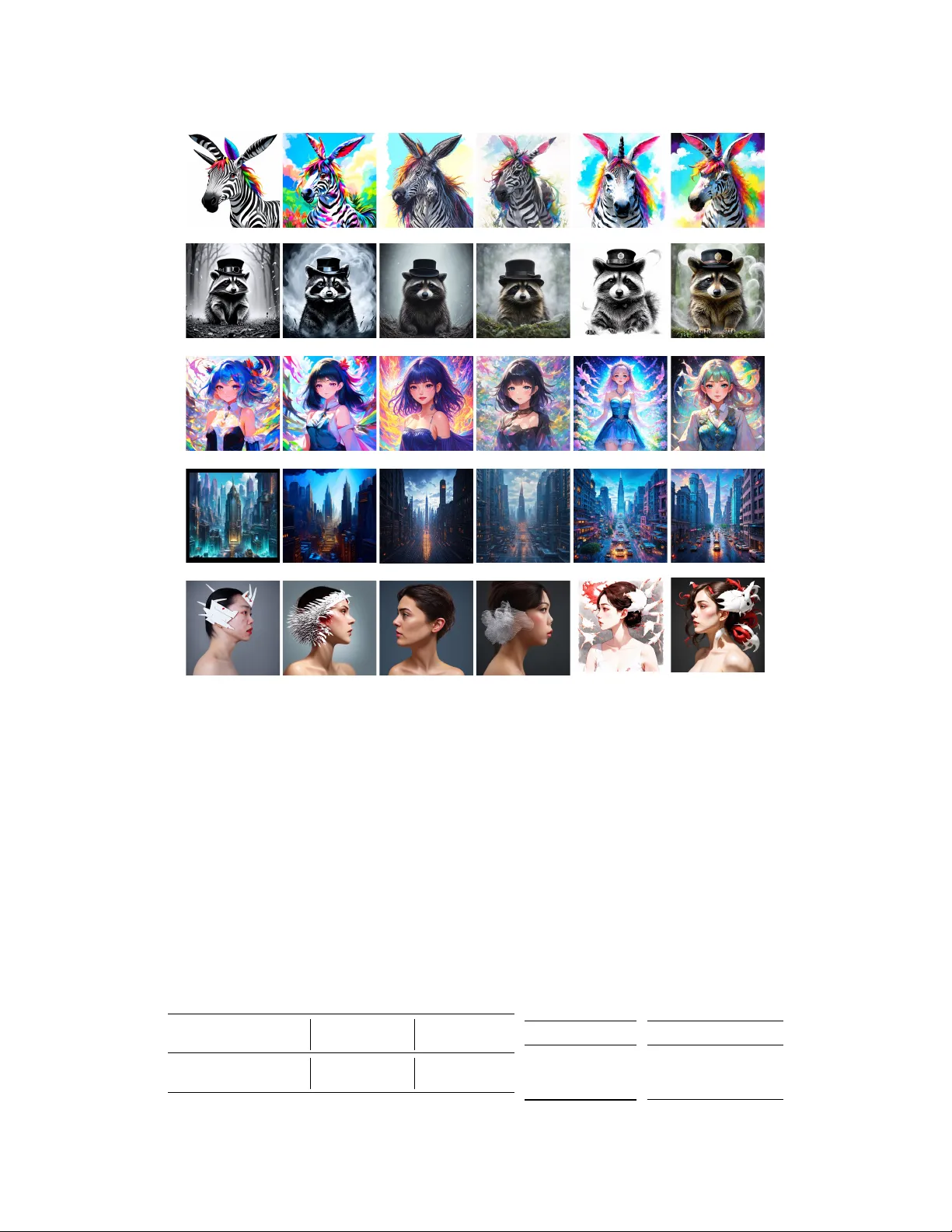

Linqian Fan$^{1,2}$, Peiqin Sun$^{1*}$, Tiancheng Wen$^{1}$, Shun Lu$^{1}$, Chengru Song$^{1}$

$^{1}$Kling Team, Kuaishou Technology. $^{2}$Tsinghua University.

$^{*}$Correspondence to speiqin@gmail.com. Preprint.

Figure 1: (Top) Samples from 4-step vanilla DMD and our GNDM. (Bottom) Samples from 4-step DMDR and our GNDMR. Our models achieve better perceptual fidelity with fewer artifacts and better details.

Abstract

Diffusion models achieve state-of-the-art generative performance but are fundamentally bottlenecked by their slow, iterative sampling process. While diffusion distillation techniques enable high-fidelity, few-step generation, traditional objectives often restrict the student's performance by anchoring it solely to the teacher. Recent approaches have attempted to break this ceiling by integrating Reinforcement Learning (RL), typically through a simple summation of distillation and RL objectives. In this work, we propose a novel paradigm by re-conceptualizing distribution matching as a reward, denoted as $R_{dm}$. This unified perspective bridges the algorithmic gap between Distribution Matching Distillation (DMD) and RL, providing several primary benefits: (1) Enhanced Optimization Stability: We introduce Group Normalized Distribution Matching (GNDM), which adapts standard RL group normalization to stabilize $R_{dm}$ estimation. By leveraging group-mean statistics, GNDM establishes a more robust and effective optimization direction. (2) Seamless Reward Integration: Our reward-centric formulation inherently supports adaptive weighting mechanisms, allowing for the fluid combination of DMD with external reward models. (3) Improved Sampling Efficiency: By aligning with RL principles, the framework readily incorporates Importance Sampling (IS), leading to a significant boost in sampling efficiency.
Extensive experiments demonstrate that GNDM outperforms vanilla DMD, reducing the FID by 1.87. Furthermore, our multi-reward variant, GNDMR, surpasses existing baselines by striking an optimal balance between aesthetic quality and fidelity, achieving a peak HPS of 30.37 and a low FID-SD of 12.21. Ultimately, $R_{dm}$ provides a flexible, stable, and efficient framework for real-time, high-fidelity synthesis. Code will be released soon.

1 Introduction

Diffusion models[1–5] have established a new state of the art in generative modeling, but they are fundamentally limited by their iterative sampling process, which incurs significant computational overhead. To achieve high-fidelity, real-time synthesis, researchers have explored various distillation strategies[6–12]. Among these, Distribution Matching Distillation (DMD)[11, 12] has been widely adopted due to its exceptional ability to enable high-fidelity generation in just one or a few steps. However, traditional distillation objectives inherently bottleneck the performance of student models, as the optimization target is derived exclusively from a pretrained teacher[13, 14].

To break this performance ceiling, recent studies[15, 16, 13] have integrated Reinforcement Learning (RL) with diffusion distillation. These approaches, however, typically rely on a naive linear combination of RL objectives and distillation losses. Departing from these paradigms, we adopt a fundamentally different perspective: re-conceptualizing distribution matching as a reward. This formulation allows the distillation objective to be seamlessly integrated and jointly optimized with task-specific rewards within a unified reward framework, as illustrated in Figure 2.

Building upon this conceptual shift, we formally define distribution matching as $R_{dm}$. This transition reveals a critical challenge: as image noise increases, the variance of $R_{dm}$ amplifies significantly.
This escalating variance destabilizes the estimated optimization direction and leads to inefficient training, a phenomenon we analyze in Section 4.2. To mitigate this, we draw inspiration from established RL practices and introduce Group Normalization (GN) to provide a stabilized, superior optimization gradient, resulting in Group Normalized Distribution Matching (GNDM).

Moreover, framing DMD strictly as a reward maximization problem unlocks several critical advantages when integrating with other rewards. First, $R_{dm}$ naturally informs the design of an effective adaptive weighting function to balance multiple objectives. Second, it seamlessly incorporates Importance Sampling (IS), which significantly improves sampling efficiency during training. Most importantly, this paradigm shift effectively constructs an algorithmic bridge between diffusion distillation and the broader RL ecosystem. We can now leverage established RL techniques[17–20] to further refine the distillation process under a single, unified mathematical umbrella.

Our contributions are summarized as follows:

• We propose $R_{dm}$, which natively incorporates powerful RL techniques into the diffusion distillation pipeline. This unification resolves the optimization conflicts inherent in prior joint-training methods and enables more intuitive control over training dynamics.

• We introduce Group Normalized Distribution Matching (GNDM) to provide high-fidelity directional guidance and propose a unified reward framework (GNDMR) to holistically optimize the distillation process.

• We conduct extensive experiments demonstrating that GNDM achieves superior distillation performance over vanilla DMD. Furthermore, our unified GNDMR framework surpasses existing baselines, yielding a strong balance between visual aesthetics and distillation efficiency.
Figure 2: Our unified reward framework GNDMR (Group Normalized Distribution Matching with other Rewards). After re-conceptualizing distribution matching as a reward, $R_{dm}$ and the other rewards are optimized with GRPO simultaneously.

2 Related Work

Distribution Matching Distillation. Distribution Matching Distillation (DMD)[11] is a foundational work that applies score-based distillation to large-scale diffusion models. Many follow-up works have emerged to enhance its stability, theoretical grounding, and generation quality. DMD2[12] integrates a GAN loss to eliminate the reliance on costly paired regression data. Flash-DMD[21] designs a timestep-aware strategy and incorporates pixel-GAN to achieve faster convergence and stable distillation. While many works rely on a GAN loss to achieve better performance, TDM[22] combines trajectory distillation and distribution matching for better alignment and eliminates the GAN. Decoupled DMD[23] mathematically decomposes the DMD objective, revealing that Classifier-Free Guidance (CFG) augmentation acts as the primary generative engine while distribution matching serves as a regularizer, enabling optimized decoupled noise schedules. DMDR[13] combines DMD with RL, utilizing the distribution matching loss as a regularization mechanism to safely allow the student generator to explore and ultimately outperform the teacher.

Reinforcement Learning for Diffusion Models. Reinforcement learning (RL) has been widely adopted to align diffusion models with human preferences. Various algorithms have been developed for this purpose: ReFL[24] achieves strong performance but inherently relies on differentiable reward models, whereas Direct Preference Optimization (DPO)[25] optimizes the policy using pairwise data.
Alternatively, Denoising Diffusion Policy Optimization (DDPO)[26] requires only a scalar reward, a process that Group Relative Policy Optimization (GRPO)[18] further simplifies by eliminating the critic model via group normalization. Applying RL to distilled diffusion models is typically treated as an independent post-training phase: Pairwise Sample Optimization (PSO)[27] first adapted policy optimization for distilled models, and Hyper-SD[28] incorporated human feedback to further boost accelerated generation. Breaking away from decoupled training, DMDR[13] introduced the first framework to simultaneously optimize both DMD and RL objectives. Our proposed method is also built upon the DMDR$^{*}$ framework.

$^{*}$We discuss only the GRPO-based variant of DMDR.

3 Preliminaries

3.1 Distribution Matching Distillation

The goal of Distribution Matching Distillation (DMD)[11] is to distill a multi-step diffusion model (teacher) into a high-fidelity, few-step generator (student) $G_\theta$. The primary objective of DMD is the Distribution Matching Loss (DML), which minimizes the reverse-KL divergence between the teacher's distribution $p_{\text{real}}$ and the student's distribution $p_{\text{fake}}$. The gradient of DML is:

$$\nabla_\theta \mathcal{L}_{\text{DMD}} = -\mathbb{E}_{\varepsilon, t'}\left[ \left( s_{\text{real}}(x_{t'}) - s_{\text{fake}}(x_{t'}) \right) \nabla_\theta G_\theta(\varepsilon) \right],$$ (1)

where $\varepsilon \sim \mathcal{N}(0, I)$, $t' \sim \mathcal{U}(T_{\min}, T_{\max})$, and $x_{t'}$ is the diffused sample obtained by injecting noise into $x_0 = G_\theta(\varepsilon)$ at diffused timestep $t'$. $s_{\text{real}}$ and $s_{\text{fake}}$ are score functions given by the score estimators $\mu_{\text{real}}$ and $\mu_{\text{fake}}$. During training, $\mu_{\text{fake}}$ with parameters $\psi$ is initialized from $\mu_{\text{real}}$ and updated to track the distribution of $G_\theta$ through the denoising diffusion objective:

$$\mathcal{L}_{\text{denoise}} = \left\| \mu^{\psi}_{\text{fake}}(x_{t'}, t') - x_0 \right\|_2^2.$$ (2)

In few-step generation training, $G_\theta(\varepsilon)$ is replaced by backward simulation, as introduced in DMD2[12].
We follow SDE-based inference: starting from standard Gaussian noise, the sampler iteratively performs denoising $\hat{x}_{0|t} = G_\theta(x_t)$ and noising $x_{t-1} = \alpha_{t-1}\hat{x}_{0|t} + \sigma_{t-1}\varepsilon$. Thus, we have:

$$p_\theta(x_{t-1} \mid x_t) = \mathcal{N}\left( \alpha_{t-1} G_\theta(x_t),\ \sigma_{t-1}^2 I \right),$$ (3)

and we can extend the gradient of one-step DML in Equation (1) to multi-step DML:

$$\nabla_\theta \mathcal{L}_{\text{DMD}} = -\mathbb{E}_{t', x_t \sim G_\theta}\left[ \left( s_{\text{real}}(x_{t'}) - s_{\text{fake}}(x_{t'}) \right) \nabla_\theta G_\theta(x_t) \right].$$ (4)

3.2 Denoising Diffusion Policy Optimization

Following Denoising Diffusion Policy Optimization (DDPO)[26], we map the denoising process to the following multi-step Markov decision process (MDP):

$$
\begin{aligned}
s_t &\triangleq (t, x_t, c), & \pi(a_t \mid s_t) &= p_\theta(x_{t-1} \mid x_t, c), & P(s_{t+1} \mid s_t, a_t) &\triangleq (\delta_c, \delta_{t-1}, \delta_{x_{t-1}}), \\
a_t &\triangleq x_{t-1}, & \rho_0(s_0) &\triangleq (c, \delta_T, \mathcal{N}(0, I)), & R(s_t, a_t) &\triangleq \begin{cases} r(x_0, c), & t = 0, \\ 0, & \text{otherwise}, \end{cases}
\end{aligned}
$$

where $\delta_x$ is the Dirac delta distribution and $T$ denotes the length of the sampling trajectories. After collecting denoising trajectories $\{x_T, x_{T-1}, \ldots, x_0\}$ and likelihoods $\log p_\theta$, we can use the policy gradient estimator of REINFORCE[29, 30] to update the parameters $\theta$ via gradient ascent:

$$\nabla_\theta \mathcal{J}_{\text{DDPO}} = \mathbb{E}\left[ r(x_0) \sum_{t=1}^{T} \nabla_\theta \log p_\theta(x_{t-1} \mid x_t) \right].$$ (5)

Because this gradient is on-policy, optimization is confined to one step per sampling round. To overcome this limitation, we utilize an importance sampling estimator[31], which enables multi-step optimization by reweighting gradients from trajectories produced by $\theta_{\text{old}}$:

$$\nabla_\theta \mathcal{J}_{\text{DDPO}_{\text{IS}}} = \mathbb{E}\left[ r(x_0) \sum_{t=1}^{T} \frac{p_\theta(x_{t-1} \mid x_t)}{p_{\theta_{\text{old}}}(x_{t-1} \mid x_t)} \nabla_\theta \log p_\theta(x_{t-1} \mid x_t) \right].$$ (6)

Here, the expectation is taken with respect to the denoising sequences generated under the previous parameters $\theta_{\text{old}}$. Note that, for readability, we omit the condition $c$ in all formulas.
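As an illustration of Equations (3) and (6), the following minimal sketch evaluates the Gaussian transition log-density and the resulting importance ratio in plain Python. It is not the authors' implementation: the function names, the list-of-floats representation of latents, and the numeric values are assumptions chosen for readability, and the denoiser outputs are passed in as precomputed values rather than produced by a network.

```python
import math
import random


def sample_transition(x0_hat, alpha_prev, sigma_prev, rng):
    """Draw x_{t-1} = alpha_{t-1} * x0_hat + sigma_{t-1} * eps, with eps ~ N(0, I)."""
    return [alpha_prev * x0 + sigma_prev * rng.gauss(0.0, 1.0) for x0 in x0_hat]


def transition_log_prob(x_prev, x0_hat, alpha_prev, sigma_prev):
    """Log-density of x_prev under N(alpha_{t-1} * x0_hat, sigma_{t-1}^2 I), as in Eq. (3)."""
    log_norm = -math.log(sigma_prev) - 0.5 * math.log(2.0 * math.pi)
    return sum(
        log_norm - 0.5 * ((xp - alpha_prev * x0) / sigma_prev) ** 2
        for xp, x0 in zip(x_prev, x0_hat)
    )


def importance_ratio(x_prev, x0_hat_new, x0_hat_old, alpha_prev, sigma_prev):
    """Ratio p_theta(x_{t-1} | x_t) / p_theta_old(x_{t-1} | x_t), the weight in Eq. (6)."""
    log_new = transition_log_prob(x_prev, x0_hat_new, alpha_prev, sigma_prev)
    log_old = transition_log_prob(x_prev, x0_hat_old, alpha_prev, sigma_prev)
    return math.exp(log_new - log_old)


rng = random.Random(0)
x0_old = [0.2, -0.1]    # hypothetical old-policy prediction G_theta_old(x_t)
x0_new = [0.25, -0.05]  # hypothetical prediction after one update, G_theta(x_t)
x_prev = sample_transition(x0_old, alpha_prev=0.9, sigma_prev=0.5, rng=rng)

ratio_same = importance_ratio(x_prev, x0_old, x0_old, 0.9, 0.5)  # identical policies
ratio_new = importance_ratio(x_prev, x0_new, x0_old, 0.9, 0.5)   # off-policy weight
print(ratio_same, ratio_new)
```

When the current and old parameters coincide the ratio is exactly one, so Equation (6) reduces to Equation (5); the ratio departs from one only as the generator moves away from the parameters that produced the trajectory.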
Equation (5) is similar in form to Equation (4), which inspires us to regard the term $(s_{\text{real}} - s_{\text{fake}})$ as a reward.

4 Methodology

4.1 $R_{dm}$: Distribution Matching as a Reward

The key to establishing the connection between Equation (4) and Equation (5) is uncovering the relationship between $\nabla_\theta G_\theta(x_t)$ and $\nabla_\theta \log p_\theta(x_{t-1} \mid x_t)$, which are intrinsically linked through Equation (3). By taking the log-derivative of Equation (3), we obtain the following identity:

$$\nabla_\theta \log p_\theta(x_{t-1} \mid x_t) = \frac{x_{t-1} - \mu_\theta(x_t)}{\sigma_{t-1}^2} \cdot \nabla_\theta \mu_\theta(x_t),$$ (7)

where $\mu_\theta(x_t) = \alpha_{t-1} G_\theta(x_t)$. Consequently, if we set

$$R_{dm}(x_t, x_{t-1}, t') = \frac{s_{\text{real}}(x_{t'}) - s_{\text{fake}}(x_{t'})}{x_{t-1} - \mu_\theta(x_t)} \cdot \frac{\sigma_{t-1}^2}{\alpha_{t-1}},$$ (8)

the relationship between $\nabla_\theta G_\theta(x_t)$ and $\nabla_\theta \log p_\theta(x_{t-1} \mid x_t)$ can be formulated as:

$$R_{dm}(x_t, x_{t-1}, t')\, \nabla_\theta \log p_\theta(x_{t-1} \mid x_t) = \left( s_{\text{real}}(x_{t'}) - s_{\text{fake}}(x_{t'}) \right) \nabla_\theta G_\theta(x_t).$$

We define the policy gradient objective for the distillation-based policy as:

$$\nabla_\theta \mathcal{J}_{\text{DDPO}_{\text{DM}}} = \mathbb{E}_{t', x_t \sim G_\theta}\left[ R_{dm}(x_t, x_{t-1}, t')\, \nabla_\theta \log p_\theta(x_{t-1} \mid x_t) \right].$$ (9)

Compared to Equation (5), we use only one sample from the trajectory instead of all samples, ensuring consistency with the traditional DML in Equation (4). Finally, $\nabla_\theta \mathcal{J}_{\text{DDPO}_{\text{DM}}} = -\nabla_\theta \mathcal{L}_{\text{DMD}}$ holds exactly.

4.2 Revisiting the Distribution Matching Reward

Next, we further explore the meaning of the newly defined distribution matching reward $R_{dm}$. By substituting $x_{t-1} - \mu_\theta(x_t) = \sigma_{t-1}\varepsilon_x$ (where $\varepsilon_x$ is the standard Gaussian noise sampled to obtain $x_{t-1}$), we can rewrite Equation (8) as

$$R_{dm}(t, t') = R_s(t') \cdot \frac{\sigma_{t-1}}{\alpha_{t-1}\,\varepsilon_x},$$ (10)

where $R_s(t') := s_{\text{real}}(x_{t'}) - s_{\text{fake}}(x_{t'})$.

Figure 3: Larger diffused timesteps, higher score variance.
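The identity linking Equations (7), (8), and (10) can be checked numerically in a one-dimensional toy setting. The sketch below assumes a hypothetical linear generator $G_\theta(x) = \theta x$ (so that $\nabla_\theta G_\theta(x_t) = x_t$) and arbitrary scalar values for the score difference and the schedule; none of these choices come from the paper, they only make the algebra easy to verify.

```python
import math


def log_p(theta, x_t, x_prev, alpha, sigma):
    """log N(x_prev; alpha * G_theta(x_t), sigma^2) for the toy generator G_theta(x) = theta * x."""
    mu = alpha * theta * x_t
    return -0.5 * ((x_prev - mu) / sigma) ** 2 - math.log(sigma * math.sqrt(2.0 * math.pi))


def grad_log_p(theta, x_t, x_prev, alpha, sigma):
    """Eq. (7): (x_prev - mu) / sigma^2 * d(mu)/d(theta), where d(mu)/d(theta) = alpha * x_t."""
    mu = alpha * theta * x_t
    return (x_prev - mu) / sigma ** 2 * alpha * x_t


def r_dm(score_diff, theta, x_t, x_prev, alpha, sigma):
    """Eq. (8): (s_real - s_fake) / (x_prev - mu) * sigma^2 / alpha."""
    mu = alpha * theta * x_t
    return score_diff / (x_prev - mu) * sigma ** 2 / alpha


# Hypothetical scalar values, chosen only for illustration.
theta, x_t, alpha, sigma = 0.7, 1.3, 0.8, 0.5
eps_x = 0.4                                    # noise used to draw x_{t-1}
x_prev = alpha * theta * x_t + sigma * eps_x   # x_{t-1} = mu_theta(x_t) + sigma * eps_x
score_diff = -0.25                             # stands in for s_real - s_fake

# Left- and right-hand sides of the identity stated after Eq. (8).
lhs = r_dm(score_diff, theta, x_t, x_prev, alpha, sigma) * grad_log_p(theta, x_t, x_prev, alpha, sigma)
rhs = score_diff * x_t

# Finite-difference check of Eq. (7).
h = 1e-5
fd_grad = (log_p(theta + h, x_t, x_prev, alpha, sigma) - log_p(theta - h, x_t, x_prev, alpha, sigma)) / (2 * h)

# Closed form of Eq. (10).
eq10 = score_diff * sigma / (alpha * eps_x)
print(lhs, rhs, fd_grad, eq10)
```

The division by $x_{t-1} - \mu_\theta(x_t) = \sigma_{t-1}\varepsilon_x$ is what makes the raw reward sensitive to small noise draws, which is visible here if `eps_x` is moved close to zero.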
The vector $R_s(t')$ functions as the critical guidance term that drives the generation of $G_\theta$ toward the manifold of $p_{\text{real}}$ and away from $p_{\text{fake}}$. However, $R_s(t')$ fluctuates across diffused samples $x_{t'}$ at timesteps $t'$. Specifically, as $t'$ increases, the diffused sample $x_{t'}$ becomes increasingly blurred, so the directional information provided by $R_s(t')$ becomes more ambiguous, as shown in Figure 3. Although recent works leverage the sample weighting mechanisms introduced by DMD[11] to enhance stability and fidelity, the variance of $R_s(t')$ is higher at larger values of $t'$, which can mislead the optimization trajectory and make effective model distillation harder. Further analysis is provided in Section 5.3.

Beyond the optimization challenges posed by the high variance at larger timesteps, the fundamental objective of the distribution matching reward $R_{dm}$ diverges significantly from standard RL paradigms. Unlike conventional reward metrics such as HPS[32] or CLIP Score[33], where unbounded maximization often exacerbates reward hacking, $R_{dm}$ essentially acts as a divergence measure that is optimized to approach zero. This stabilization dynamic is conceptually analogous to PREF-GRPO[34], where the win rate converges to 0.5. This shift from absolute maximization to distribution alignment introduces a critical advantage: $R_{dm}$ provides an inherent safeguard against over-optimization, making it a well-defined reward for regularization.

4.3 Group Normalized Distribution Matching

After re-conceptualizing distribution matching as a reward, we aim to address the inaccurate estimation issue in the calculation of $R_s(t')$. Because Group Normalization (GN) on $R_{dm}$ allows for a more stable estimation of the reward direction through its mean-subtraction mechanism, we naturally extend $R_{dm}$ to a GRPO setting. Recall the MDP formulation defined in Section 3.2.
The generator $G_\theta$ samples a group of $G$ individual images $\{x_0^i\}_{i=1}^{G}$ and the corresponding trajectories $\{(x_T^i, x_{T-1}^i, \ldots, x_0^i)\}_{i=1}^{G}$. The advantage of the $i$-th sample at trajectory step $t$ and diffused timestep $t'$ is

$$A^{i,t'}_{dm,t} = \frac{R_{dm}(x_t^i, x_{t-1}^i, t') - \operatorname{mean}\left( \{ R_{dm}(x_t^i, x_{t-1}^i, t') \}_{i=1}^{G} \right)}{\operatorname{std}\left( \{ R_{dm}(x_t^i, x_{t-1}^i, t') \}_{i=1}^{G} \right)}.$$ (11)

The policy is updated by maximizing the Group Normalized Distribution Matching (GNDM) objective:

$$\mathcal{J}_{\text{GNDM}}(\theta) = \mathbb{E}_{t, t', \{x^i\}_{i=1}^{G} \sim G_{\theta_{\text{old}}}}\left[ f(r, A_{dm}, \theta, \eta) \right],$$ (12)

where

$$f(r, A_{dm}, \theta, \eta) = \frac{1}{G} \sum_{i=1}^{G} \min\left( r_t^i(\theta)\, A^{i,t'}_{dm,t},\ \operatorname{clip}\left( r_t^i(\theta), 1 - \eta, 1 + \eta \right) A^{i,t'}_{dm,t} \right),$$ (13)

$$r_t^i(\theta) = \frac{p_\theta(x_{t-1}^i \mid x_t^i)}{p_{\theta_{\text{old}}}(x_{t-1}^i \mid x_t^i)}.$$

Within each group, the generator timestep $t$ and the diffused timestep $t'$ are each shared by all samples. This maximizes component diversity while ensuring consistency within the group. We verify its effectiveness in the ablation study (Section 5.3). Furthermore, the importance sampling ratio in Equation (12) increases sampling efficiency while maintaining performance, because the generator can be updated multiple times per sampling round, as discussed in Section 5.2.

4.4 GNDM with Other Rewards

To further improve the generative quality and alleviate mode seeking, following DMDR[13], we introduce other rewards $R_o$ in addition to $R_{dm}$. As in Equation (11), we apply GN to $R_o$:

$$A^{i}_{o,t} = \frac{R_o(x_0^i) - \operatorname{mean}\left( \{ R_o(x_0^i) \}_{i=1}^{G} \right)}{\operatorname{std}\left( \{ R_o(x_0^i) \}_{i=1}^{G} \right)}.$$ (14)

Unlike $A^{i,t'}_{dm,t}$, which is calculated as a dense reward on the latent trajectory, $A^{i}_{o,t}$ is calculated as a sparse reward on the final image.
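Equations (11), (13), and (14) can be sketched in a few lines of plain Python. This is a simplified illustration rather than the paper's implementation: rewards are treated as one scalar per sample (whereas $R_{dm}$ is pixel-wise in the paper), the population standard deviation is used, and the small `eps` in the denominator is an added assumption to avoid division by zero.

```python
import statistics


def group_normalize(rewards, eps=1e-8):
    """Group-normalized advantages in the spirit of Eq. (11)/(14): (r - mean) / std over one group.

    `eps` is an assumption added for numerical safety; it is not part of the paper's formula.
    """
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]


def clipped_objective(ratios, advantages, eta):
    """Clipped surrogate of Eq. (13): mean over the group of min(r * A, clip(r, 1 - eta, 1 + eta) * A)."""
    total = 0.0
    for ratio, adv in zip(ratios, advantages):
        clipped_ratio = min(max(ratio, 1.0 - eta), 1.0 + eta)
        total += min(ratio * adv, clipped_ratio * adv)
    return total / len(ratios)


# Hypothetical rewards for a group of four samples sharing the same t and t'.
group_rewards = [1.0, 2.0, 3.0, 4.0]
advantages = group_normalize(group_rewards)

# On-policy case: every importance ratio equals 1, so clipping has no effect.
on_policy_value = clipped_objective([1.0] * 4, advantages, eta=0.2)

# Clipping caps the gain from a large ratio when the advantage is positive,
# but leaves the penalty untouched when the advantage is negative.
capped_gain = clipped_objective([2.0], [1.0], eta=0.2)
uncapped_penalty = clipped_objective([2.0], [-1.0], eta=0.2)
print(advantages, on_policy_value, capped_gain, uncapped_penalty)
```

Because the group mean is subtracted, the advantages sum to approximately zero within each group, which is the mean-subtraction mechanism that Section 4.3 relies on for a more stable reward direction.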
The total advantage of the $i$-th sample $A^{i}_{sum,t}$ at generation time $t$ can be defined as the combination of the DM-derived advantage $A^{i,t'}_{dm,t}$ and $K$ auxiliary advantages $A^{i}_{o_j,t}$:

$$A^{i}_{sum,t} = A^{i,t'}_{dm,t} + \sum_{j=1}^{K} w_j A^{i}_{o_j,t},$$ (15)

where $w_j$ is the weighting function for the $j$-th auxiliary reward. The final GRPO objective with multiple rewards is:

$$\mathcal{J}_{\text{GNDMR}}(\theta) = \mathbb{E}_{t, t', \{x^i\}_{i=1}^{G} \sim G_{\theta_{\text{old}}}}\left[ f(r, A_{sum}, \theta, \eta) \right].$$ (16)

4.5 Adaptive Weight Design in Practical Implementation

In practice, the term $x_{t-1} - \mu_\theta(x_t)$ introduces significant stochasticity and potential numerical instability, as $x_{t-1}$ is obtained by adding Gaussian noise to $\mu_\theta(x_t)$. To address this, we define a stabilized reward term $R_{dm}$ by applying a sign-based normalization to the denominator to guarantee positive correlation. We also adopt the weighting function proposed by DMD[11], as we found it improves distillation efficiency. The final distribution matching reward is defined as

$$R_{dm}(x_t, x_{t-1}, t') = \frac{s_{\text{real}}(x_{t'}) - s_{\text{fake}}(x_{t'})}{\operatorname{sign}\left( x_{t-1} - \mu_\theta(x_t) \right)} \cdot \frac{CS}{\left\| \mu_{\text{real}}(x_{t'}, t') - \hat{x}_{0|t} \right\|_1},$$ (17)

where $\operatorname{sign}(x) = 1$ if $x > 0$ and $-1$ otherwise, and $C$ and $S$ are the numbers of channels and spatial locations, respectively. After applying GN to $R_{dm}$, we multiply $A_{dm}$ by the scalar $w_{dm}$ to maintain the original amplitude:

$$w_{dm,t} = \frac{1}{\left| x_{t-1} - \mu_\theta(x_t) \right| + \epsilon} \cdot \frac{\sigma_{t-1}^2}{\alpha_{t-1}}.$$ (18)

This formulation ensures a stable optimization signal by reducing the variance inherent in the raw sampling residuals. When combining $R_{dm}$ with other rewards, we found that multiplying them by an adaptive weight $\beta_{dm,t}$ derived from $w_{dm,t}$ keeps the amplitudes of the two terms consistent and effectively improves the target score without collapse:

$$\beta_{dm,t} = \frac{\left\| w_{dm,t} \right\|_1}{CS}.$$
(19)

As $w_{dm,t}$ is pixel-wise, we keep it consistent with the dimensions of the other rewards by averaging it over the channel and spatial dimensions of each sample. As discussed in Section 5.3, $\beta_{dm,t}$ matters for both DMDR and GNDMR. Finally, we rewrite Equation (15) with the designed weights as

$$A^{i}_{sum,t} = w_{dm,t}\, A^{i,t'}_{dm,t} + \beta_{dm,t} \sum_{j} w_j A^{i}_{o_j,t}.$$ (20)

Algorithm 1 outlines the final training procedure. Additional details are provided in the supplementary materials.

Algorithm 1: GNDMR training procedure

    Input:  Pretrained real diffusion model μ_real, number of prompts N,
            number of generated samples per prompt M (group size),
            number of inference timesteps T.
    Output: Distilled generator G_θ.

    // Initialize generator and fake score estimator from the pretrained model
    G_θ ← copyWeights(μ_real), μ_fake ← copyWeights(μ_real)
    while train do
        // Sample trajectories
        Sample a batch of prompts Q = {q_1, q_2, ..., q_N}
        for each q ∈ Q do
            Sample a group of M trajectories {(x^i_T, x^i_{T-1}, ..., x^i_0)}, i = 1..M
        // Update fake score estimation model
        Sample generated timesteps t and diffused timesteps t'
        x_{t'} = forwardDiffusion(stopgrad(G_θ(x_t)), t')
        L_denoise = denoisingLoss(μ_fake(x_{t'}, t'), stopgrad(G_θ(x_t)))   // Equation (2)
        μ_fake = update(μ_fake, L_denoise)
        // Compute rewards and advantages
        for each q ∈ Q do
            Sample generated timesteps t and diffused timesteps t'
            for i = 1 to M do
                R^i_dm = R_dm(x^i_t, x^i_{t-1}, t'), R^i_o = RM(x^i_0)      // Equation (17)
            A^{i,t'}_{dm,t} = GN(R^i_dm), A^i_{o,t} = GN(R^i_o)             // Equation (11), Equation (14)
            A^i_{sum,t} = weightedAdd(A^{i,t'}_{dm,t}, A^i_{o,t})           // Equation (20)
        // Update generator
        for train generator loop do
            Use the same generated timesteps t as when computing rewards
            J_GNDMR(θ) = GRPOLoss(A_sum, r_t(θ))                            // Equation (16)
            G_θ = update(G_θ, J_GNDMR(θ))

5 Experiments

Experiment Setting.
The distillation is conducted on LAION-AeS-6.5+[35], using only its prompts. We use DFN-CLIP[36] and HPSv2.1[32] as reward models by default, where HPS represents aesthetic quality and CLIP captures image–text alignment, jointly guiding DMD toward more diverse and semantically aligned modes. Meanwhile, to reduce sampling overhead, we propose a variant termed GNDMR-IS, which updates the generator twice per sampling round using the importance sampling estimator. The trained distilled models are all flow-based[37, 38] and support stochastic sampling at inference[39]. We also adopt a cold-start strategy for faster and more stable convergence[13]; more experimental details can be found in the supplementary materials.

Evaluation Metrics. To comprehensively evaluate our approach, we adopt a diverse set of metrics. Fine-grained image-text semantic alignment is measured via the Human Preference Score (HPS) v2.1[32], while PickScore (PS)[40] gauges overall aesthetic quality and perceptual appeal. To capture broader nuances of human judgment such as object accuracy, spatial relations, and attribute binding, we incorporate the recently proposed Multi-dimensional Preference Score (MPS)[41]. Beyond perceptual assessments, CLIP Score (CS)[36] and FID[42] serve to quantitatively verify distillation effectiveness. We also compute FID-SD[43] by comparing the outputs of all baselines against images generated by the original pre-trained diffusion models.

Table 1: Comparison against state-of-the-art methods. * denotes our reproduced results. Img-Free indicates whether training avoids external image data. Cost refers to the product of sample size and sampling iterations. The best results are marked in red, second-best in orange.

| Method | NFE | Res. | Img-Free | HPS ↑ | PS ↑ | MPS ↑ | CS ↑ | FID ↓ | FID-SD ↓ | Cost ↓ |
|---|---|---|---|---|---|---|---|---|---|---|
| Stable Diffusion 3 Medium Comparison | | | | | | | | | | |
| Base Model (CFG=7) | 50 | 1024 | - | 29.00 | 22.72 | 12.10 | 38.86 | 24.48 | - | - |
| Flow-GRPO[18]* (CFG=7) | 50 | 1024 | - | 30.35 | 22.90 | 12.56 | 38.52 | 26.63 | 12.39 | - |
| Hyper-SD[28] (CFG=5) | 8 | 1024 | ✗ | 27.20 | 21.90 | 11.22 | 37.76 | 26.94 | 10.09 | - |
| LCM[39] | 4 | 1024 | ✗ | 27.76 | 22.31 | 11.61 | 36.97 | 27.71 | 15.90 | - |
| DMD2[12]* | 4 | 1024 | ✗ | 26.64 | 22.36 | 11.37 | 38.00 | 27.28 | 16.51 | - |
| Flash-SD3[46] | 4 | 1024 | ✗ | 27.47 | 22.65 | 11.98 | 38.07 | 26.01 | 12.21 | - |
| DMDR[13]* | 4 | 1024 | ✓ | 29.50 | 22.77 | 11.98 | 38.10 | 29.10 | 14.11 | - |
| GNDMR-IS | 4 | 1024 | ✓ | 30.00 | 22.89 | 12.48 | 38.15 | 28.84 | 12.47 | 128*4k |
| GNDMR | 4 | 1024 | ✓ | 30.37 | 22.88 | 12.53 | 38.20 | 28.02 | 12.21 | 128*8k |
| Stable Diffusion 3.5 Medium Comparison | | | | | | | | | | |
| Base Model (CFG=3.5) | 50 | 512 | - | 27.78 | 22.59 | 11.91 | 38.46 | 20.69 | - | - |
| Flow-GRPO[18]* (CFG=3.5) | 50 | 512 | - | 31.81 | 23.24 | 12.93 | 39.21 | 29.27 | 9.39 | - |
| DMD2[12]* | 4 | 512 | ✗ | 30.44 | 22.92 | 12.73 | 38.59 | 26.64 | 14.63 | - |
| DMDR[13]* | 4 | 512 | ✓ | 30.83 | 23.07 | 12.80 | 38.22 | 26.05 | 16.73 | - |
| GNDMR-IS | 4 | 512 | ✓ | 30.88 | 22.94 | 12.86 | 38.39 | 25.60 | 16.68 | 128*3k |
| GNDMR | 4 | 512 | ✓ | 31.25 | 23.15 | 12.93 | 38.59 | 24.44 | 13.93 | 128*6k |

5.1 Comparison with State-Of-The-Art (SOTA)

We validate the text-to-image generation performance in both aesthetics alignment and distillation efficiency on 10K prompts from COCO2014[44], following the 30K split of Karpathy. We compare our 4-step generative models GNDMR and its variant GNDMR-IS (which updates the generator twice per sampling round via importance sampling) against SD3-Medium[1] and SD3.5-Medium[45], as well as other open-source SOTA distillation models. We reproduced DMD2[12], as it serves as the foundational distribution-based model in this domain. Additionally, we implemented DMDR (w/ GRPO)[13], a pioneering method within the same category.
To further evaluate our model's performance against RL techniques directly applied to the teacher model, we applied Flow-GRPO[18] to the base model, positioning this as the ceiling of RL performance in this context.

Quantitative Comparison. As shown in Table 1, our quantitative analysis highlights the superiority of GNDMR across three key dimensions. Regarding Aesthetics Alignment, on both SD3 and SD3.5, GNDMR consistently outperforms existing 4-step models. It surpasses the teacher and achieves aesthetic performance comparable to the Flow-GRPO-optimized model, underscoring the efficacy of our alignment formulation. Furthermore, it enables Highly Efficient, Data-Free Distillation. Operating without external image datasets, GNDMR achieves a strictly lower FID than the data-free DMDR baseline on both SD3 and SD3.5. For SD3, its remarkably low FID-SD indicates accurate teacher distribution matching without the aesthetic degradation seen in Hyper-SD, striking a superior balance between visual quality and distribution fidelity. Finally, our framework delivers Reduced Training Cost: the GNDMR-IS variant halves training expenses while maintaining highly competitive alignment, demonstrating the accelerated convergence and resource efficiency of our paradigm.

Qualitative Comparison. As shown in Figure 4, GNDMR consistently achieves superior aesthetic quality, exhibiting richer details, enhanced color vibrancy, and stronger prompt alignment across diverse styles. Furthermore, our method significantly mitigates the visual artifacts prevalent in DMDR outputs. Our GNDMR demonstrates an optimal balance between high aesthetic appeal and clean image synthesis.

5.2 Importance Sampling Correction

One significant advantage of treating distribution matching as a reward is the ability to leverage the Importance Sampling (IS) estimator[31].
This enables multi-step updates for the student model from a single sampling iteration, effectively addressing the high sample demand typically associated with Reinforcement Learning (RL). Our experiments, conducted on SD3-Medium (512 × 512), employ HPSv2.1 as an auxiliary reward and report HPSv2.1 on HPDv2 test prompts[32]. As illustrated in Figure 5a, while increasing the batch size (e.g., from 16 × 8 to 32 × 16) enhances reward optimization per training step, it substantially raises the sampling cost. By implementing 5 training iterations per sample with a clip range of $\eta = 0.5$, our 32 × 16 (w/ IS) configuration achieves a convergence rate and final performance comparable to the standard 32 × 16 setup, while requiring significantly fewer total samples, even fewer than the baseline 16 × 8 configuration (see Figure 5b).

Figure 4: Qualitative Results. Our GNDMR has better aesthetics than other models and fewer artifacts than DMDR. Columns: Base Model, Hyper-SD, LCM, Flash-SD3, DMDR, GNDMR. Prompts: "The image is of a raccoon wearing a Peaky Blinders hat, surrounded by swirling mist and rendered with fine detail."; "A colorful digital painting with a front view and anime-inspired vibes featuring a magical composition."; "An intricate and elegant art deco-inspired metropolis with retrofuturistic elements in a cyberpunk style."; "A woman's face in profile, with white carapace plates extruding from the skin and red kintsurugi."; "A hybrid creature concept painting of a zebra-striped unicorn with bunny ears and a colorful mane."

Table 2: Ablation on Group Normalization (GN) on $R_{dm}$. We first distill the model alone for 500 iterations, then continue training with different rewards.

| Method | only $R_{dm}$: FID ↓ | +HPS: FID ↓ | +HPS: HPS ↑ | +PS: FID ↓ | +PS: PS ↑ |
|---|---|---|---|---|---|
| GNDMR | 23.07 | 24.47 | 30.22 | 22.32 | 22.76 |
| w/o GN | 24.94 | 25.40 | 30.39 | 24.61 | 22.71 |

Table 3: Ablation on sampling strategy (left) and timestep intervals of group normalization (right).

| $t'$ | $t$ | FID ↓ |
|---|---|---|
| | | 24.94 |
| ✓ | | 24.34 |
| ✓ | ✓ | 23.07 |

| Interval | FID ↓ |
|---|---|
| [0, 300] | 23.71 |
| [300, 600] | 23.46 |
| [600, 1000] | 23.43 |

Figure 5: Importance Sampling (IS) improves sampling efficiency. Panels: (a) Equal Updates Comparison (HPS vs. training steps) and (b) Equal Sampling Budget Comparison (HPS vs. total samples), each with curves for 16x8, 16x16, 32x16, and 32x16 (w/ IS). (a) With the same number of training steps, larger batch sizes lead to better reward optimization, but (b) they also require more samples. By introducing IS, the 32×16 (w/ IS) setting achieves comparable performance under a reduced sampling budget.

5.3 Ablation Study

We explore the effectiveness of $R_{dm}$ from multiple perspectives through the ablation studies below. By default, we use SD3-Medium at 512 × 512 and first perform 500 iterations of vanilla DMD for fast training and observation; the results are reported on COCO30K.

Effect of group normalization on $R_{dm}$. The primary advantage of our GNDMR lies in applying Group Normalization (GN) to $R_{dm}$. To investigate this effect, we conduct the experiments shown in Table 2. During the first 500 distillation training iterations, GNDMR results in a lower FID, providing a better initialization for subsequent optimization. In the follow-up training, where HPS and PS are separately introduced as additional rewards, GNDMR continues to achieve lower FID while maintaining comparable performance on the target rewards.

Sampling strategy of generation timestep $t$ and diffused timestep $t'$. Since Group Normalization (GN) is applied to $R_{dm}$, the same $t'$ is shared within each group, as $t'$ determines the noise level of the diffused samples. We further examine whether sharing the same $t$ within a group affects performance. The first row in Table 3 (left) corresponds to the vanilla DMD baseline.
Sharing both $t'$ and $t$ within a group yields the best performance, consistent with Equation (10), since $R_{dm}$ depends on both $t'$ and $t$.

Effect of diffused timestep intervals of group normalization on $R_{dm}$. As shown in Table 3 (right), larger timestep intervals correspond to higher noise levels, which increase the variance of $R_{dm}$ estimation, as shown in Figure 3. Applying GN to regions with larger interval values effectively stabilizes the estimation and leads to lower FID.

Effect of $\beta_{dm,t}$. As illustrated in Figure 6, it is challenging to consistently improve the target reward through a simple static weighting $w$. This difficulty arises because the distribution matching term incorporates a dynamic coefficient $w_{dm,t}$ that evolves during training. Without scaling the reward by the matching coefficient $\beta_{dm,t}$, it is nearly impossible to maintain a proper balance between the two terms using a fixed weight $w$, often leading to unstable optimization or suboptimal reward gains. By contrast, applying $\beta_{dm,t}$ to the reward term ensures a synchronized weighting scheme, allowing the reward to improve steadily without collapse.

6 Conclusion

In this work, we present a novel framework for improving diffusion distillation by re-conceptualizing distribution matching as a reward. Our approach bridges the gap between diffusion distillation and RL, resulting in more efficient and stable training. Through extensive experiments, we demonstrate that GNDM outperforms vanilla DMD, achieving a notable reduction in FID scores. Furthermore, the GNDMR framework, which integrates additional rewards, achieves an optimal balance between aesthetic quality and fidelity.
Our method offers a flexible, efficient, and stable framework for real-time, high-fidelity image synthesis, while also providing a novel direction for the application of the latest RL techniques in diffusion model distillation, paving the way for future advancements in the field.

[Figure 6 plots: HPS versus training steps for w=1, w=10, w=100, and w=10 (w/ beta). (a) Effect of $\beta_{dm,t}$ on DMDR. (b) Effect of $\beta_{dm,t}$ on GNDMR.]

Figure 6: Ablation study on the effect of $\beta_{dm,t}$ and the weighting factor $w$. "w/ beta" denotes the configuration using $\beta_{dm,t}$ as defined in Equation (19), whereas other variants set $\beta_{dm,t} = 1$. The HPS is evaluated on the HPDv2 test prompts. The results demonstrate that incorporating $\beta_{dm,t}$ leads to more stable and superior reward optimization compared to static weighting under both the DMDR and GNDMR settings.

References

[1] Patrick Esser, Sumith Kulal, Andreas Blattmann, Rahim Entezari, Jonas Müller, Harry Saini, Yam Levi, Dominik Lorenz, Axel Sauer, Frederic Boesel, et al. Scaling rectified flow transformers for high-resolution image synthesis. In Forty-first international conference on machine learning, 2024.
[2] William Peebles and Saining Xie. Scalable diffusion models with transformers. In Proceedings of the IEEE/CVF international conference on computer vision, pages 4195–4205, 2023.
[3] Robin Rombach, Andreas Blattmann, Dominik Lorenz, Patrick Esser, and Björn Ommer. High-resolution image synthesis with latent diffusion models. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 10684–10695, 2022.
[4] Jonathan Ho, Ajay Jain, and Pieter Abbeel. Denoising diffusion probabilistic models. Advances in neural information processing systems, 33:6840–6851, 2020.
[5] Yang Song, Jascha Sohl-Dickstein, Diederik P Kingma, Abhishek Kumar, Stefano Ermon, and Ben Poole. Score-based generative modeling through stochastic differential equations. arXiv preprint arXiv:2011.13456, 2020.
[6] Chenlin Meng, Robin Rombach, Ruiqi Gao, Diederik Kingma, Stefano Ermon, Jonathan Ho, and Tim Salimans. On distillation of guided diffusion models. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 14297–14306, 2023.
[7] Tim Salimans and Jonathan Ho. Progressive distillation for fast sampling of diffusion models. arXiv preprint arXiv:2202.00512, 2022.
[8] Yang Song and Prafulla Dhariwal. Improved techniques for training consistency models. In The Twelfth International Conference on Learning Representations, 2024. URL https://openreview.net/forum?id=WNzy9bRDvG.
[9] Weijian Luo, Zemin Huang, Zhengyang Geng, J Zico Kolter, and Guo-jun Qi. One-step diffusion distillation through score implicit matching. Advances in Neural Information Processing Systems, 37:115377–115408, 2024.
[10] Mingyuan Zhou, Zhendong Wang, Huangjie Zheng, and Hai Huang. Guided score identity distillation for data-free one-step text-to-image generation. In The Thirteenth International Conference on Learning Representations, 2025.
[11] Tianwei Yin, Michaël Gharbi, Richard Zhang, Eli Shechtman, Fredo Durand, William T Freeman, and Taesung Park. One-step diffusion with distribution matching distillation. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 6613–6623, 2024.
[12] Tianwei Yin, Michaël Gharbi, Taesung Park, Richard Zhang, Eli Shechtman, Fredo Durand, and Bill Freeman. Improved distribution matching distillation for fast image synthesis. Advances in neural information processing systems, 37:47455–47487, 2024.
[13] Dengyang Jiang, Dongyang Liu, Zanyi Wang, Qilong Wu, Liuzhuozheng Li, Hengzhuang Li, Xin Jin, David Liu, Zhen Li, Bo Zhang, et al. Distribution matching distillation meets reinforcement learning. arXiv preprint arXiv:2511.13649, 2025.
[14] Yanzuo Lu, Yuxi Ren, Xin Xia, Shanchuan Lin, Xing Wang, Xuefeng Xiao, Andy J Ma, Xiaohua Xie, and Jian-Huang Lai. Adversarial distribution matching for diffusion distillation towards efficient image and video synthesis. In Proceedings of the IEEE/CVF International Conference on Computer Vision, pages 16818–16829, 2025.
[15] Zhiwei Jia, Yuesong Nan, Huixi Zhao, and Gengdai Liu. Reward fine-tuning two-step diffusion models via learning differentiable latent-space surrogate reward. In Proceedings of the Computer Vision and Pattern Recognition Conference, pages 12912–12922, 2025.
[16] Weijian Luo. Diff-instruct++: Training one-step text-to-image generator model to align with human preferences. arXiv preprint arXiv:2410.18881, 2024.
[17] John Schulman, Filip Wolski, Prafulla Dhariwal, Alec Radford, and Oleg Klimov. Proximal policy optimization algorithms. arXiv preprint, 2017.
[18] Jie Liu, Gongye Liu, Jiajun Liang, Yangguang Li, Jiaheng Liu, Xintao Wang, Pengfei Wan, Di Zhang, and Wanli Ouyang. Flow-grpo: Training flow matching models via online rl. arXiv preprint arXiv:2505.05470, 2025.
[19] Jiajun Fan, Tong Wei, Chaoran Cheng, Yuxin Chen, and Ge Liu. Adaptive divergence regularized policy optimization for fine-tuning generative models. In The Thirty-ninth Annual Conference on Neural Information Processing Systems, 2025. URL https://openreview.net/forum?id=aXO0xg0ttW.
[20] Shih-Yang Liu, Xin Dong, Ximing Lu, Shizhe Diao, Peter Belcak, Mingjie Liu, Min-Hung Chen, Hongxu Yin, Yu-Chiang Frank Wang, Kwang-Ting Cheng, Yejin Choi, Jan Kautz, and Pavlo Molchanov. Gdpo: Group reward-decoupled normalization policy optimization for multi-reward rl optimization, 2026.
[21] Guanjie Chen, Shirui Huang, Kai Liu, Jianchen Zhu, Xiaoye Qu, Peng Chen, Yu Cheng, and Yifu Sun. Flash-dmd: Towards high-fidelity few-step image generation with efficient distillation and joint reinforcement learning. arXiv preprint arXiv:2511.20549, 2025.
[22] Yihong Luo, Tianyang Hu, Jiacheng Sun, Yujun Cai, and Jing Tang. Learning few-step diffusion models by trajectory distribution matching. In Proceedings of the IEEE/CVF International Conference on Computer Vision, pages 17719–17728, 2025.
[23] Dongyang Liu, Peng Gao, David Liu, Ruoyi Du, Zhen Li, Qilong Wu, Xin Jin, Sihan Cao, Shifeng Zhang, Steven HOI, and Hongsheng Li. Decoupled DMD: CFG augmentation as the spear, distribution matching as the shield. In The Fourteenth International Conference on Learning Representations, 2026. URL https://openreview.net/forum?id=jBztvOiCKE.
[24] Jiazheng Xu, Xiao Liu, Yuchen Wu, Yuxuan Tong, Qinkai Li, Ming Ding, Jie Tang, and Yuxiao Dong. Imagereward: Learning and evaluating human preferences for text-to-image generation. Advances in Neural Information Processing Systems, 36:15903–15935, 2023.
[25] Bram Wallace, Meihua Dang, Rafael Rafailov, Linqi Zhou, Aaron Lou, Senthil Purushwalkam, Stefano Ermon, Caiming Xiong, Shafiq Joty, and Nikhil Naik. Diffusion model alignment using direct preference optimization. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 8228–8238, 2024.
[26] Kevin Black, Michael Janner, Yilun Du, Ilya Kostrikov, and Sergey Levine. Training diffusion models with reinforcement learning. arXiv preprint arXiv:2305.13301, 2023.
[27] Zichen Miao, Zhengyuan Yang, Kevin Lin, Ze Wang, Zicheng Liu, Lijuan Wang, and Qiang Qiu. Tuning timestep-distilled diffusion model using pairwise sample optimization. In The Thirteenth International Conference on Learning Representations, 2025. URL https://openreview.net/forum?id=fXnE4gB64o.
[28] Yuxi Ren, Xin Xia, Yanzuo Lu, Jiacheng Zhang, Jie Wu, Pan Xie, Xing Wang, and Xuefeng Xiao. Hyper-sd: Trajectory segmented consistency model for efficient image synthesis. Advances in neural information processing systems, 37:117340–117362, 2024.
[29] Ronald J Williams. Simple statistical gradient-following algorithms for connectionist reinforcement learning. Machine learning, 8(3):229–256, 1992.
[30] Shakir Mohamed, Mihaela Rosca, Michael Figurnov, and Andriy Mnih. Monte carlo gradient estimation in machine learning. Journal of Machine Learning Research, 21(132):1–62, 2020.
[31] Sham Kakade and John Langford. Approximately optimal approximate reinforcement learning. In Proceedings of the nineteenth international conference on machine learning, pages 267–274, 2002.
[32] Xiaoshi Wu, Yiming Hao, Keqiang Sun, Yixiong Chen, Feng Zhu, Rui Zhao, and Hongsheng Li. Human preference score v2: A solid benchmark for evaluating human preferences of text-to-image synthesis. arXiv preprint, 2023.
[33] Jack Hessel, Ari Holtzman, Maxwell Forbes, Ronan Le Bras, and Yejin Choi. Clipscore: A reference-free evaluation metric for image captioning. In Proceedings of the 2021 conference on empirical methods in natural language processing, pages 7514–7528, 2021.
[34] Yibin Wang, Zhimin Li, Yuhang Zang, Yujie Zhou, Jiazi Bu, Chunyu Wang, Qinglin Lu, Cheng Jin, and Jiaqi Wang. Pref-grpo: Pairwise preference reward-based grpo for stable text-to-image reinforcement learning. arXiv preprint arXiv:2508.20751, 2025.
[35] Christoph Schuhmann, Romain Beaumont, Richard Vencu, Cade Gordon, Ross Wightman, Mehdi Cherti, Theo Coombes, Aarush Katta, Clayton Mullis, Mitchell Wortsman, et al. Laion-5b: An open large-scale dataset for training next generation image-text models. Advances in neural information processing systems, 35:25278–25294, 2022.
[36] Alex Fang, Albin Madappally Jose, Amit Jain, Ludwig Schmidt, Alexander Toshev, and Vaishaal Shankar. Data filtering networks. arXiv preprint, 2023.
[37] Yaron Lipman, Ricky TQ Chen, Heli Ben-Hamu, Maximilian Nickel, and Matt Le. Flow matching for generative modeling. arXiv preprint, 2022.
[38] Xingchao Liu, Chengyue Gong, and Qiang Liu. Flow straight and fast: Learning to generate and transfer data with rectified flow. arXiv preprint, 2022.
[39] Simian Luo, Yiqin Tan, Longbo Huang, Jian Li, and Hang Zhao. Latent consistency models: Synthesizing high-resolution images with few-step inference. arXiv preprint arXiv:2310.04378, 2023.
[40] Yuval Kirstain, Adam Polyak, Uriel Singer, Shahbuland Matiana, Joe Penna, and Omer Levy. Pick-a-pic: An open dataset of user preferences for text-to-image generation. Advances in neural information processing systems, 36:36652–36663, 2023.
[41] Sixian Zhang, Bohan Wang, Junqiang Wu, Yan Li, Tingting Gao, Di Zhang, and Zhongyuan Wang. Learning multi-dimensional human preference for text-to-image generation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 8018–8027, 2024.
[42] Martin Heusel, Hubert Ramsauer, Thomas Unterthiner, Bernhard Nessler, and Sepp Hochreiter. Gans trained by a two time-scale update rule converge to a local nash equilibrium. Advances in neural information processing systems, 30, 2017.
[43] Fu-Yun Wang, Zhaoyang Huang, Alexander Bergman, Dazhong Shen, Peng Gao, Michael Lingelbach, Keqiang Sun, Weikang Bian, Guanglu Song, Yu Liu, et al. Phased consistency models. Advances in neural information processing systems, 37:83951–84009, 2024.
[44] Tsung-Yi Lin, Michael Maire, Serge Belongie, James Hays, Pietro Perona, Deva Ramanan, Piotr Dollár, and C Lawrence Zitnick. Microsoft coco: Common objects in context. In European conference on computer vision, pages 740–755. Springer, 2014.
[45] Stability AI. Sd3.5.
https://github.com/Stability-AI/sd3.5, 2024.
[46] Clement Chadebec, Onur Tasar, Eyal Benaroche, and Benjamin Aubin. Flash diffusion: Accelerating any conditional diffusion model for few steps image generation. In Proceedings of the AAAI Conference on Artificial Intelligence, volume 39, pages 15686–15695, 2025.
[47] Zeyue Xue, Jie Wu, Yu Gao, Fangyuan Kong, Lingting Zhu, Mengzhao Chen, Zhiheng Liu, Wei Liu, Qiushan Guo, Weilin Huang, et al. Dancegrpo: Unleashing grpo on visual generation. arXiv preprint arXiv:2505.07818, 2025.
[48] Junzhe Li, Yutao Cui, Tao Huang, Yinping Ma, Chun Fan, Miles Yang, and Zhao Zhong. Mixgrpo: Unlocking flow-based grpo efficiency with mixed ode-sde. arXiv preprint arXiv:2507.21802, 2025.

A Implementation Details

A.1 Experiment Details

We optimize both the generator and the fake score network using the AdamW optimizer. By default, the momentum parameters $\beta_1$ and $\beta_2$ are set to 0.9 and 0.999, respectively. The fake score network is updated once per generator update. All experiments are conducted on 8 NVIDIA H800 GPUs.

SD3-Medium. We adopt a constant learning rate of $1 \times 10^{-6}$ for both the generator and the fake score network. Gradient norm clipping is applied with a threshold of 1.0. The models are trained at a resolution of 1024 × 1024 with a total batch size of 128, using a group size of 8 and 16 groups. Classifier-Free Guidance (CFG) is set to 7.0. To accelerate convergence, we initially train for 500 iterations with the Human Preference Score (HPS) weight $w_{hps} = 5$ and the CLIP Score weight $w_{cs} = 5$. Subsequently, we continue training for an additional 7.5k iterations, increasing both weights to 10 ($w_{hps} = 10$, $w_{cs} = 10$).

SD3.5-Medium. We adopt a constant learning rate of $1 \times 10^{-6}$ for both the generator and the fake score network, with gradient norm clipping set to 1.0. Training is performed at a resolution of 512 × 512 with a batch size of 128, a group size of 8, and 16 groups.
The CFG is set to 3.5. The training process is completed within 8k iterations using $w_{hps} = 10$ and $w_{cs} = 10$.

A.2 Training Algorithm Details

For a comprehensive understanding, Algorithm 2 details the specific implementation for constructing the weighted advantage aggregation used during our training process.

Algorithm 2: weightedAdd

    # x_curr, x_next, x_0: current, next and final sample from trajectory
    # alpha_next, sigma_next: noise level at diffusion process
    # G: trained few-step generator
    # timestep: diffused timestep added in forward_diffusion
    noise = randn_like(x_curr)
    pred_x0 = G(x_curr)
    mu_next = alpha_next * pred_x0
    x_next = mu_next + sigma_next * noise

    # diffused x
    noise = randn_like(x_curr)
    noisy_x = forward_diffusion(pred_x0, noise, timestep)

    # denoise using real and fake denoiser
    fake_x0 = mu_fake(noisy_x, timestep)
    real_x0 = mu_real(noisy_x, timestep)

    # calculate Rdm and weighting_factor introduced by DMD
    dm_factor = abs(pred_x0 - real_x0).mean(dim=[1, 2, 3], keepdim=True)
    R_dm = (real_x0 - fake_x0) / sign(x_next - mu_next) / dm_factor
    # the small epsilon in the denominator guards against division by zero
    w_dm = 1 / (abs(x_next - mu_next) + 1e-7) * (sigma_next ** 2 / alpha_next)

    # calculate the K other rewards, one per reward model
    R_o = [reward_models[j](x_0) for j in range(K)]
    # sample-wise balancing coefficient: mean of w_dm over non-batch dimensions
    beta_dm = w_dm.mean(dim=[1, 2, 3], keepdim=True)

    # calculate advantages and sum them up
    A_dm = GroupNorm(R_dm)
    A_o = [GroupNorm(R_o[j]) for j in range(K)]
    A_sum = w_dm * A_dm
    for j in range(K):
        A_sum += beta_dm * w[j] * A_o[j]

Table 4: Comparison of different noise initialization strategies.

Strategy    FID ↓
Random      24.68
Shared      23.07

B Additional Experiments

In this section, we provide further ablation studies to validate the design choices within our framework. By default, all experiments in this section are conducted using SD3-Medium at a resolution of 512 × 512. For rapid evaluation, we consider only the HPS reward and train GNDM for 500 iterations before executing the full GNDMR process.
B.1 Effect of Noise Initialization

When applying GNDM, the noise initialization strategy plays a critical role. Existing approaches diverge on this front: while DanceGRPO [47] and MixGRPO [48] use shared initial noise across all candidates within a group, Flow-GRPO [18] employs independent random initialization. Our empirical results, summarized in Table 4, show that shared noise initialization yields better distillation performance, as evidenced by a lower FID score.

B.2 Design of the Adaptive Weight $\beta_{dm,t}$

The coefficient $\beta_{dm,t}$ is designed to calibrate the influence of the distillation weight $w_{dm,t}$. A key technical challenge arises from a dimensionality mismatch: $w_{dm,t}$ is a pixel-wise quantity, whereas reinforcement learning rewards are typically sample-wise. To evaluate its necessity and the optimal granularity, we compare three configurations:

1. A baseline setting with no balancing coefficient (i.e., $\beta_{dm,t} = 1$).
2. A pixel-wise application, where $\beta_{dm,t}$ is directly set to $w_{dm,t}$.
3. A sample-wise variant, where $\beta_{dm,t}$ is computed by taking the mean of $w_{dm,t}$ over all dimensions except the batch dimension.

Experimental results in Figure 7 demonstrate that the sample-wise formulation of $\beta_{dm,t}$ is significantly more effective at improving the HPS than both the pixel-wise and baseline configurations, as it provides a much more stable reward signal across the generated samples.

B.3 Sensitivity Analysis of the Reward Weight $w_{hps}$

To further analyze the sensitivity of our adaptive weight, we vary the reward weight for $R_{hps}$, denoted as $w_{hps}$, across the set {1, 10, 15, 20}. As shown in Figure 9, enlarging $w_{hps}$ improves the overall HPS performance. Furthermore, without integrating $\beta_{dm,t}$, the model struggles to improve the target HPS consistently, underscoring the need for our adaptive weight $\beta_{dm,t}$.
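The interaction between the reward weight $w_{hps}$ and the three $\beta_{dm,t}$ configurations discussed in B.2 and B.3 can be sketched in a few lines. The snippet below is a simplified, pure-Python illustration rather than the training code: each sample's pixel-wise $w_{dm,t}$ is flattened to a list, `group_normalize` assumes GRPO-style standardization with the population standard deviation, and the function names, the `eps` value, and the toy numbers are all hypothetical.

```python
from statistics import fmean, pstdev


def group_normalize(rewards, eps=1e-6):
    """Standardize scalar rewards within one group (assumed GRPO-style)."""
    mean = fmean(rewards)
    std = pstdev(rewards)  # population std; an assumption of this sketch
    return [(r - mean) / (std + eps) for r in rewards]


def beta_dm(w_dm_sample, mode):
    """Balancing coefficient for one sample.

    w_dm_sample is a flat list of pixel-wise distillation weights.
    The result has one coefficient per pixel so the modes are comparable.
    """
    if mode == "baseline":  # no balancing: beta = 1 everywhere
        return [1.0] * len(w_dm_sample)
    if mode == "pixel":  # beta is set directly to w_dm
        return list(w_dm_sample)
    if mode == "sample":  # mean of w_dm over all non-batch dimensions
        return [fmean(w_dm_sample)] * len(w_dm_sample)
    raise ValueError(f"unknown mode: {mode}")


def reward_term(w_dm_group, hps_scores, w_hps, mode="sample"):
    """Per-pixel contribution beta * w_hps * A_hps for each sample in a group."""
    advantages = group_normalize(hps_scores)
    return [
        [b * w_hps * a for b in beta_dm(w_dm, mode)]
        for w_dm, a in zip(w_dm_group, advantages)
    ]


# Toy group of two samples, each with four "pixels" (hypothetical values).
w_dm_group = [[0.1, 0.3, 0.2, 0.4], [1.0, 3.0, 2.0, 2.0]]
hps_scores = [31.0, 32.0]
term = reward_term(w_dm_group, hps_scores, w_hps=10.0, mode="sample")
```

In the sample-wise mode every pixel of a sample receives the same scaled advantage, and its magnitude tracks that sample's average distillation weight. The baseline mode ignores that weight entirely, which is one way to read why a fixed $w_{hps}$ alone struggles to stay balanced against a drifting $w_{dm,t}$.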
B.4 Sensitivity of Clip Range $\eta$ in Importance Sampling

The clip range $\eta$ is a pivotal hyperparameter for importance sampling correction. While a larger $\eta$ permits more aggressive policy updates, it often introduces training instability. Conversely, an excessively low $\eta$ restricts learning progress and may fail to correct for distribution shifts from the behavior policy. As shown in Figure 8, unlike standard GRPO-based fine-tuning, which typically uses a highly conservative clip range (e.g., $1 \times 10^{-5}$), our distillation framework benefits from a larger $\eta$ to enable rapid, efficient convergence.

[Figure 7 plot: HPS versus training steps for the sample-wise, pixel-wise, and baseline settings.]

Figure 7: Design of the adaptive weight.

[Figure 8 plot: HPS versus training steps for clip=0.05, clip=0.5, clip=2.5, and clip=5.]

Figure 8: Effect of the clip range.

[Figure 9 plots: HPS versus training steps for w=1, w=10, w=15, and w=20. (a) Sensitivity analysis of $w$ with $\beta_{dm,t}$. (b) Sensitivity analysis of $w$ without $\beta_{dm,t}$.]

Figure 9: Sensitivity analysis of the reward weight $w$.

C Additional Qualitative Results

Figure 10 presents additional qualitative comparisons between our proposed GNDMR and several state-of-the-art distillation baselines. Across various complex prompts, GNDMR consistently yields more aesthetically pleasing results with enhanced textural detail and structural integrity.

[Figure 10 image grid. Columns: Base Model, Hyper-SD, LCM, Flash-SD3, DMDR, GNDMR. Row prompts follow, one per line.]
Two pilots in uniform stand in front of an airplane on a blue background.
ufo sightings, with ufo spacecraft and red lanterned light
a snap back new york yankees cap
A cat holding a sign that says '4 steps'
A close up of an old elderly man with green eyes looking straight at the camera
A raccoon trapped inside a glass jar full of colorful candies, the background is steamy with vivid colors

Figure 10: Qualitative results. Text prompts are selected from DMDR [13] (top three rows) and Flash-SD [46] (bottom three rows).
