GeoHCC: Local Geometry-Aware Hierarchical Context Compression for 3D Gaussian Splatting

Although 3D Gaussian Splatting (3DGS) enables high-fidelity real-time rendering, its prohibitive storage overhead severely hinders practical deployment. Recent anchor-based 3DGS compression schemes reduce redundancy through context modeling, yet over…

Authors: Xuan Deng, Xiandong Meng

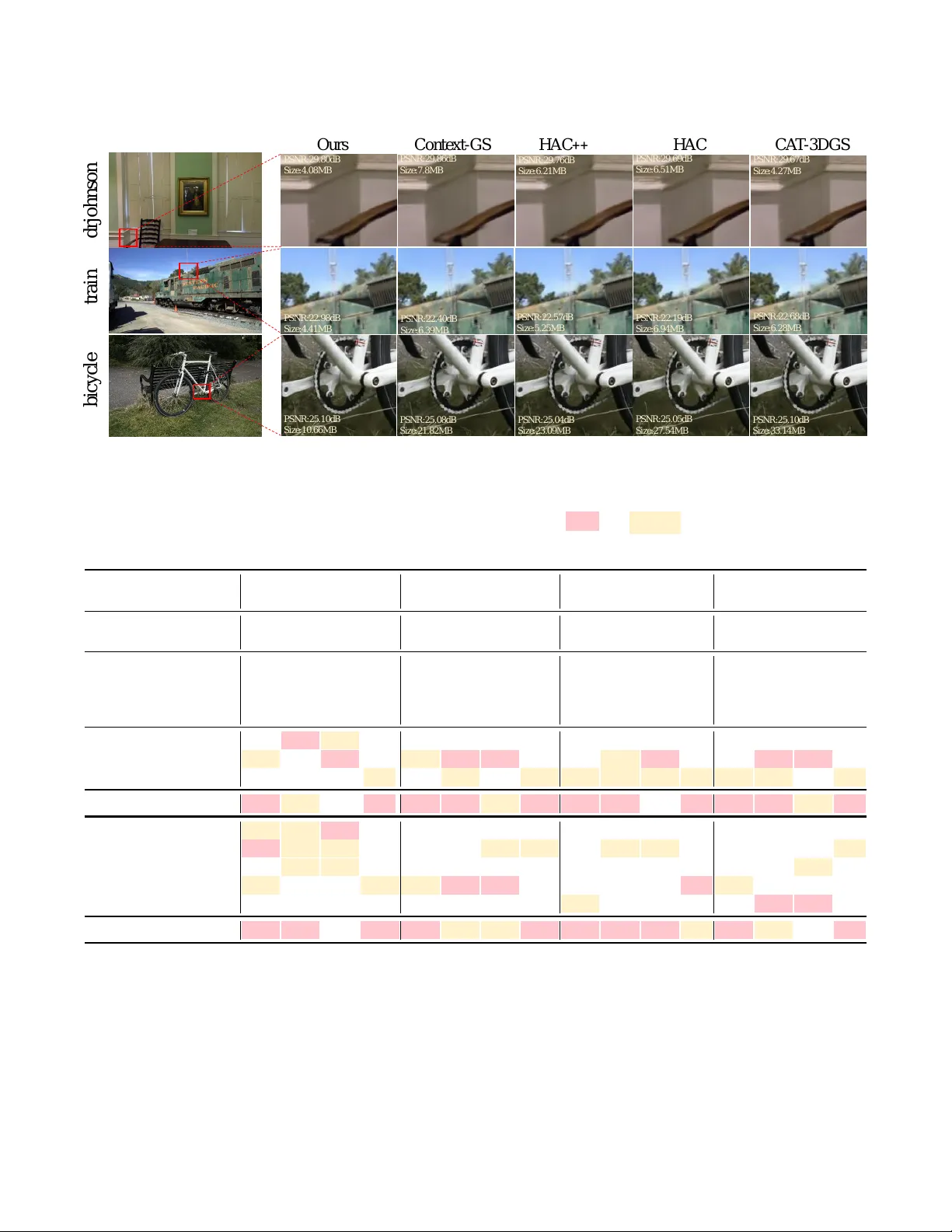

Unpublished working draft. Not for distribution.

Xuan Deng, Harbin Institute of Technology, Peng Cheng Laboratory, Shenzhen, China, dengxuan168168@gmail.com
Xiandong Meng, Peng Cheng Laboratory, Shenzhen, China, mengxd@pcl.ac.cn
Hengyu Man, Harbin Institute of Technology, Harbin, China, manhengyu@hotmail.com
Qiang Zhu, Peng Cheng Laboratory, Shenzhen, China, zhuqiang@std.uestc.edu.cn
Tiange Zhang, Peng Cheng Laboratory, Shenzhen, China, zhangtg.@pcl.ac.cn
Debin Zhao, Harbin Institute of Technology, Harbin, China, dbzhao@hit.edu.cn
Xiaopeng Fan, Harbin Institute of Technology, Peng Cheng Laboratory, Harbin Institute of Technology Suzhou Research Institute, Harbin, China, fxp@hit.edu.cn

Abstract
Although 3D Gaussian Splatting (3DGS) enables high-fidelity real-time rendering, its prohibitive storage overhead severely hinders practical deployment. Recent anchor-based 3DGS compression schemes reduce redundancy through context modeling, yet overlook explicit geometric dependencies, leading to structural degradation and suboptimal rate-distortion performance. In this paper, we propose GeoHCC, a geometry-aware 3DGS compression framework that incorporates inter-anchor geometric correlations into anchor pruning and entropy coding for compact representation. We first introduce Neighborhood-Aware Anchor Pruning (NAAP), which evaluates anchor importance via weighted neighborhood feature aggregation and merges redundant anchors into salient neighbors, yielding a compact yet geometry-consistent anchor set. Building upon this optimized structure, we further develop a hierarchical entropy coding scheme, in which coarse-to-fine priors are exploited through a lightweight Geometry-Guided Convolution (GG-Conv) operator to enable spatially adaptive context modeling and rate-distortion optimization.
Extensive experiments demonstrate that GeoHCC effectively resolves the structure preservation bottleneck, maintaining superior geometric integrity and rendering fidelity over state-of-the-art anchor-based approaches.

CCS Concepts
• Computer vision; • Theory of computation; • Data compression.

ACM Reference Format:
Xuan Deng, Xiandong Meng, Hengyu Man, Qiang Zhu, Tiange Zhang, Debin Zhao, and Xiaopeng Fan. 2018. GeoHCC: Local Geometry-Aware Hierarchical Context Compression for 3D Gaussian Splatting. In Proceedings of Make sure to enter the correct conference title from your rights confirmation email (Conference acronym ’XX). ACM, New York, NY, USA, 10 pages. https://doi.org/XXXXXXX.XXXXXXX

[Figure 1 plot: PSNR (dB) versus rendering FPS for Ours, HAC++, Context-GS, CAT-3DGS, and HAC.]
Figure 1: Trade-off between rendering FPS and PSNR for different methods. Marker size denotes storage cost; smaller markers correspond to lower storage.

1 Introduction
3D Gaussian Splatting (3DGS) [18] has emerged as a powerful explicit 3D representation that models scenes with a set of anisotropic Gaussians with learnable attributes, including position, covariance, opacity, and spherical harmonics, enabling efficient optimization and high-quality novel-view synthesis. Its optimized rasterization supports fast convergence and real-time rendering, offering a strong alternative to implicit neural representations. However, high-quality reconstruction typically requires millions of primitives, leading to considerable storage and memory overhead. This redundancy hinders practical deployment, especially on resource-constrained platforms, and has motivated extensive research on compact 3DGS representations and compression.

Early 3DGS compression methods [10, 11, 13, 19, 21, 30, 38, 58] mainly reduce storage through primitive pruning or vector quantization.
While effective, they focus on compressing the attribute values of each individual Gaussian and largely ignore the inherent geometric correlation among Gaussians.

2026-03-31 02:07. Page 1 of 1–10. Conference acronym ’XX, June 03–05, 2018, Woodstock, NY. Trovato et al.

Although such dependencies have been widely exploited in image and video compression [7, 15, 17, 22–24, 31–33, 44, 50], modeling them within 3DGS remains non-trivial because Gaussian primitives are irregularly distributed in 3D space. Locality-aware-GS [45] attempts to incorporate local geometric relations directly over massive unstructured Gaussian primitives, but the cost of neighborhood correlation search becomes prohibitive at large scale. Conversely, anchor-based representations, e.g., Scaffold-GS [29], alleviate this issue by hierarchically clustering primitives around anchors and thus provide a more compact and structured representation. Nevertheless, Scaffold-GS treats anchors as isolated entities, leaving the local geometric correlations among neighboring anchors underexplored.

To further alleviate spatial redundancy, recent advances have incorporated more structured priors into anchor-based compression frameworks. Inspired by NeRF feature grids [14, 34, 36], HAC [4] and HAC++ [5] leverage hash grids for context modeling. Context-GS [47] takes a different approach by organizing anchors hierarchically to capture dependencies across representation levels, thus improving entropy modeling. Despite these advances, a fundamental limitation persists: current methods largely overlook the intrinsic geometric structures among anchors. Grid-based context models (e.g., HAC) rely on coarse extrinsic discretization and often fail to capture fine-grained geometric relationships in irregular anchor clouds.
Hierarchical methods such as Context-GS [47], despite their stronger representational capacity, typically construct multi-level context structures on top of heuristic pruning strategies, such as opacity-based anchor removal. By directly discarding low-importance anchors, these methods may disrupt local geometric continuity and weaken the structural foundation required for reliable coarse-to-fine context prediction. Because finer levels can no longer inherit sufficiently stable geometric priors from the base layers, the result is suboptimal structure preservation and entropy estimation.

In this work, we argue that geometry-aware context modeling is crucial to unlocking the full compression potential of 3DGS. Rather than serving merely as attribute carriers, anchors are treated as nodes in a geometric graph whose local neighborhoods exhibit strong geometric correlations. Based on this insight, we propose GeoHCC, a geometry-aware 3DGS compression framework that incorporates inter-anchor geometric correlations into anchor pruning and entropy coding for compact representation. We first introduce Neighborhood-Aware Anchor Pruning (NAAP), which evaluates anchor importance via graph aggregation and merges redundant anchors into salient neighbors. Building on the preserved local structure, we further design a hierarchical entropy coding scheme with geometry-guided indexed context. To implement this efficiently, we design a lightweight Geometry-Guided Convolution (GG-Conv) operator that aggregates contextual information from local geometric features, enabling the entropy model to learn spatially adaptive and geometry-consistent priors for more efficient compression. As shown in Figure 1, experiments on BungeeNeRF [52] show that GeoHCC achieves a more favorable FPS–PSNR trade-off than existing methods while requiring lower storage cost.
Our main contributions are summarized as follows:
• We propose GeoHCC, a local geometry-aware hierarchical context compression framework for 3D Gaussian Splatting that reformulates anchor compression via geometry-induced graphs for consistent pruning and efficient hierarchical entropy modeling.
• To preserve the local geometric perception capability of anchors, we propose Neighborhood-Aware Anchor Pruning (NAAP), a graph-based adaptive sparsification strategy. By evaluating geometric importance and merging redundant anchors into their salient neighbors, NAAP preserves the inherent geometric correlation among anchors, producing a compact yet geometry-consistent representation with minimal fidelity loss.
• To exploit local geometric correlation in sparsified Gaussians, we propose hierarchical geometry-guided context modeling via a lightweight GG-Conv. By capturing spatially adaptive priors over irregular neighborhoods, our method achieves accurate probability estimation and state-of-the-art compression with high rendering quality.

2 Related Work
2.1 Graph-based 3D data modeling
Graph Signal Processing (GSP) [39, 53] provides a framework for analyzing signals on irregular domains by modeling data as nodes in a graph. In 3D vision, it has been widely applied to point cloud analysis (e.g., DGCNN [48] and PointNet++ [41, 42]), where local graphs encode geometric relations for feature aggregation. Similar principles have also been applied to point cloud compression, where exploiting inter-point correlations improves coding efficiency [8, 9, 12]. In this paper, we propose GeoHCC, which reformulates anchor-based 3DGS as an irregular geometric graph to jointly optimize anchor pruning and entropy coding via local geometry guidance, achieving significant bitrate reduction while preserving high-fidelity structural details.
2.2 Compact 3DGS Representation
3D Gaussian Splatting (3DGS) [18] achieves high-fidelity and efficient radiance field rendering, yet its explicit and unstructured Gaussian representation is less regular than the grid-based representations of NeRFs [2, 34, 51], leading to prohibitive storage overhead that necessitates advanced compression techniques.

Existing methods primarily tackle the storage challenge of 3D Gaussian Splatting by either reducing the number of primitives or learning compact attribute representations, without explicit rate-distortion optimization. Pruning-based approaches [1, 6, 10, 21, 27, 37, 40, 43] eliminate less contributive Gaussians using heuristic criteria, such as learnable masks, gradient magnitudes, or view-dependent significance. Complementary attribute compression techniques include spherical harmonics coefficient pruning [35] and vector quantization [13, 21, 46]. Beyond these primitive-level techniques, a representative anchor-based approach is Scaffold-GS [29], which uses anchor points to hierarchically distribute local 3D Gaussians and predicts their attributes on the fly, conditioned on viewing direction and distance within the view frustum. Inspired by the anchor-based representation of Scaffold-GS [29], a series of recent works has focused on rate-distortion (RD) optimized compression, improving entropy modeling by exploiting spatial or structural priors. These anchor-based methods have achieved impressive storage reduction while maintaining high rendering quality. For instance, HAC [4] and HAC++ [5] employ hash-grid-based spatial organization to derive compact context representations.
Context-GS [47] and CompGS [28] further exploit hierarchical structure and anchor-primitive dependencies to improve entropy coding. Meanwhile, CAT-3DGS [54] adopts channel-wise autoregressive models to capture intra-attribute correlations, and Liu et al. [26] enhance density estimation through a Mixture-of-Priors formulation.

While these methods achieve impressive rate-distortion performance in 3D Gaussian Splatting compression, we argue that the core of effective compression lies in the entropy model. A well-designed entropy model can leverage local geometric correlations among anchors, enabling efficient coding and reducing storage requirements. However, current anchor-based 3DGS compression methods still leave room for improvement in entropy model design, particularly in exploiting local geometric priors. Drawing inspiration from geometry-aware modeling in point cloud compression and graph neural networks, we propose a local geometry-aware entropy model. This model represents the unstructured anchor cloud as an irregular geometric graph and uses a lightweight Geometry-Guided Convolution (GG-Conv) to adaptively aggregate geometry-aware contextual priors from neighboring anchors.

3 Methodology
3.1 Preliminaries
3D Gaussian Splatting (3DGS). 3DGS [18] uses a collection of anisotropic 3D neural Gaussians to represent the scene, so that it can be efficiently rendered with a tile-based rasterization technique. Starting from a set of Structure-from-Motion (SfM) points, each Gaussian is defined as

$$G(\mathbf{p}) = \exp\!\left(-\tfrac{1}{2}(\mathbf{p}-\boldsymbol{\mu})^{\top}\boldsymbol{\Sigma}^{-1}(\mathbf{p}-\boldsymbol{\mu})\right), \tag{1}$$

where $\mathbf{p}$ denotes coordinates in the 3D scene, and $\boldsymbol{\mu}$ and $\boldsymbol{\Sigma}$ are the mean position and covariance matrix of the Gaussian, respectively. To ensure that $\boldsymbol{\Sigma}$ is positive semi-definite, it is parameterized as $\boldsymbol{\Sigma} = \mathbf{R}\mathbf{S}\mathbf{S}^{\top}\mathbf{R}^{\top}$, where $\mathbf{R}$ and $\mathbf{S}$ denote the rotation and scaling matrices, respectively.
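As a concrete illustration of Eq. (1) and the factorization $\boldsymbol{\Sigma} = \mathbf{R}\mathbf{S}\mathbf{S}^{\top}\mathbf{R}^{\top}$, the NumPy sketch below builds a covariance from a rotation and per-axis scales and evaluates the unnormalized Gaussian. It assumes, as common 3DGS implementations do, that the rotation is stored as a unit quaternion (w, x, y, z); the function names are hypothetical and are not taken from the GeoHCC code.

```python
import numpy as np

def quat_to_rotation(q):
    """Unit quaternion (w, x, y, z) -> 3x3 rotation matrix R (assumed storage format)."""
    q = np.asarray(q, dtype=float)
    w, x, y, z = q / np.linalg.norm(q)          # normalize so R is orthonormal
    return np.array([
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ])

def covariance(q, scale):
    """Sigma = R S S^T R^T with S = diag(scale); PSD by construction."""
    R = quat_to_rotation(q)
    S = np.diag(np.asarray(scale, dtype=float))
    return R @ S @ S.T @ R.T

def gaussian(p, mu, sigma):
    """Unnormalized G(p) = exp(-0.5 (p - mu)^T Sigma^{-1} (p - mu)), Eq. (1)."""
    d = np.asarray(p, dtype=float) - np.asarray(mu, dtype=float)
    return float(np.exp(-0.5 * d @ np.linalg.solve(sigma, d)))
```

Because $\mathbf{S}$ is diagonal and $\mathbf{R}$ is orthonormal, $\boldsymbol{\Sigma}$ stays positive semi-definite for any parameter values, which is why the factors, rather than $\boldsymbol{\Sigma}$ itself, are optimized.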
Furthermore, each neural Gaussian has an opacity $\alpha \in \mathbb{R}^1$ and a view-dependent color $\mathbf{c} \in \mathbb{R}^3$, modeled via spherical harmonics [56]. All attributes of the neural Gaussians, i.e., $\{\boldsymbol{\mu}, \mathbf{R}, \mathbf{S}, \alpha, \mathbf{c}\}$, are learnable and optimized by minimizing the reconstruction loss of images rendered through tile-based rasterization.

3.2 Overview
Building upon the compact anchor-based representation of Scaffold-GS [29], we propose GeoHCC, a local geometry-aware hierarchical context compression framework for 3DGS, as illustrated in Figure 2. We define each anchor as a tuple $\mathbf{a} = \{\mathbf{p}, \mathbf{f}, \mathbf{s}, \mathbf{o}\}$, comprising position $\mathbf{p} \in \mathbb{R}^3$, feature $\mathbf{f} \in \mathbb{R}^{C}$, scaling factor $\mathbf{s} \in \mathbb{R}^3$, and offsets $\mathbf{o} \in \mathbb{R}^{K \times 3}$. The pipeline begins with anchor densification inherited from Scaffold-GS, which spawns additional anchors based on view-space gradients to capture fine-grained scene details. To mitigate anchor redundancy and enhance the compression efficiency of 3DGS, we introduce the Neighborhood-Aware Anchor Pruning (NAAP) module (Sec. 3.3). Instead of relying on simple heuristic thresholding (e.g., fixed opacity values), NAAP constructs a local geometric graph to evaluate the importance of anchors in a neighborhood-aware manner and adaptively merges the attributes of redundant anchors into their salient neighbors, producing a compact yet locally geometry-consistent representation. Based on the pruned anchor set, we further organize the anchors into a multi-level hierarchy for efficient entropy coding. Specifically, we develop a hierarchical entropy coding scheme with geometry-guided context (Sec. 3.4). To enhance context modeling capability, we employ a lightweight Geometry-Guided Convolution (GG-Conv) that aggregates local geometric priors from the irregular neighborhood graph as coarse-grained information to guide finer-level entropy estimation.
Finally, the decoded anchors generate 3D Gaussians via learnable offsets, achieving high-fidelity rasterization with extremely low storage overhead.

3.3 Neighborhood-Aware Anchor Pruning
Conventional 3DGS pruning strategies typically rely on point-wise attribute thresholds (e.g., opacity) to remove less important Gaussian anchors. However, such independent evaluation ignores local geometric distributions and may inadvertently eliminate structurally essential anchors, especially in low-density regions. To address this limitation, we propose Neighborhood-Aware Anchor Pruning (NAAP), which evaluates anchor importance within its local geometric neighborhood and preserves structural continuity by adaptively merging redundant information. As illustrated in Figure 3, NAAP consists of four steps:

Step 1 & 2: Graph Construction and Importance Evaluation. We first construct a local geometric graph $\mathcal{G} = (\mathcal{A}, \mathcal{E})$ to capture the local geometric correlations among anchors, where $\mathcal{A} = \{\mathbf{a}_1, \dots, \mathbf{a}_N\}$ is the set of anchors and $\mathcal{E}$ denotes the edges connecting neighboring anchors. Each anchor $\mathbf{a}_i$ has a position $\mathbf{p}_i$ and an accumulated average opacity $\bar{\alpha}_i$, obtained by accumulating the opacity values of its associated neural Gaussians over $N$ training iterations. We connect each anchor to its $K$ nearest neighbors within a radius $r$, forming the local neighborhood $\mathcal{N}(i)$, which provides a lightweight representation of local geometric structure for importance estimation.

To distinguish structurally essential anchors from noisy or redundant ones, we define a neighborhood-weighted importance score. Specifically, for each anchor $\mathbf{a}_i$, we first compute a distance-based weight $w_{ij} = (\lVert \mathbf{p}_i - \mathbf{p}_j \rVert_2 + \epsilon)^{-1}$ between $\mathbf{a}_i$ and each neighbor $\mathbf{a}_j \in \mathcal{N}(i)$, which assigns larger weights to closer neighbors.
Then a smoothed neighborhood opacity $\Phi_i$ is calculated to aggregate local context:

$$\Phi_i = \frac{\bar{\alpha}_i + \sum_{j \in \mathcal{N}(i)} w_{ij}\,\bar{\alpha}_j}{1 + \sum_{j \in \mathcal{N}(i)} w_{ij}}. \tag{2}$$

The final importance score $\xi_i$ is formulated as a linear interpolation between the anchor-specific opacity and its neighborhood-aware observation:

$$\xi_i = (1 - \lambda)\,\bar{\alpha}_i + \lambda\,\Phi_i, \tag{3}$$

[Figure 2 diagram, "Framework of GeoHCC": COLMAP Init → Anchor Densification & Neighborhood-Aware Anchor Pruning → Level Divide (Anchor-based Gaussian Level 1 and Level 2) → Hierarchical Geometry-Guided Context Modeling (Local Geometry-Guided Context, MLPs, Entropy Model with (μ, σ); attribute feature F, scaling S, offset O; position (x, y, z)) → Anchor Bitstream → Decode and Rendering (MLPs map distance and direction to positions, colors, opacities, scalings, and rotations; rendered RGB image).]
Figure 2: Overview of the proposed 3DGS compression framework. Starting with COLMAP initialization, anchors first undergo densification to capture fine-grained scene details. To optimize the resulting structure, we introduce Neighborhood-Aware Anchor Pruning (NAAP), which constructs a local graph to evaluate geometric importance. Unlike simple removal, NAAP adaptively merges the attributes of redundant anchors into their salient neighbors, producing a compact yet locally geometry-consistent representation. Subsequently, the preserved anchors are organized by the Hierarchical Geometry-Guided Context Modeling module, where we employ a Local Geometry-Guided Context to guide entropy coding. Finally, the decoded attributes (e.g., colors, opacities, scalings, rotations) generate 3D Gaussians via learnable offsets for high-fidelity rasterization.
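The importance score of Eqs. (2)–(3) can be sketched in NumPy as follows. This is an illustrative brute-force version under stated assumptions: neighbors are the K nearest anchors that also lie within radius r (as described in Step 1 & 2, with K = 8 taken from Sec. 4.1), the norm in $w_{ij}$ is Euclidean, and the radius, eps, and lam values are placeholders rather than settings reported for GeoHCC. A real implementation over millions of anchors would use a spatial index instead of an O(N²) distance matrix; `neighborhood_importance` is a hypothetical name.

```python
import numpy as np

def neighborhood_importance(pos, opacity, lam, k=8, radius=np.inf, eps=1e-6):
    """Eqs. (2)-(3): smoothed neighborhood opacity Phi_i and score xi_i.

    pos:     (N, 3) anchor positions p_i
    opacity: (N,)   accumulated average opacities alpha_bar_i
    lam:     balancing factor in [0, 1]; radius/eps defaults are placeholders
    """
    n = len(pos)
    # Brute-force pairwise distances (sketch only: O(N^2) memory).
    dist = np.linalg.norm(pos[:, None, :] - pos[None, :, :], axis=-1)
    np.fill_diagonal(dist, np.inf)              # an anchor is not its own neighbor
    phi = np.empty(n)
    for i in range(n):
        nbrs = np.argsort(dist[i])[:k]          # K nearest candidates ...
        nbrs = nbrs[dist[i, nbrs] <= radius]    # ... restricted to radius r
        w = 1.0 / (dist[i, nbrs] + eps)         # w_ij = (||p_i - p_j|| + eps)^-1
        phi[i] = (opacity[i] + np.sum(w * opacity[nbrs])) / (1.0 + np.sum(w))
    xi = (1.0 - lam) * opacity + lam * phi      # Eq. (3)
    return phi, xi
```

With an empty neighborhood the formula reduces to $\Phi_i = \bar{\alpha}_i$, so an isolated anchor is scored on its own opacity, while anchors inside a cluster are pulled toward the weighted local average.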
[Figure 3 diagram, "Neighborhood-Aware Anchor Pruning": an anchor-based graph with anchor attributes (position $p_i$, opacity $\bar{\alpha}_i$, scaling $s_i$, offset $o_i$) feeds an Importance Score Calculation ($\xi_i$); a Local Neighbor Preserver either removes low-score anchors or merges them through a Weighted Attribute Transformer, producing pruned anchors with fused attributes (opacity $\phi_i$, scaling $S_i$, offset $O_i$). Legend: retained critical low-opacity anchor; x marks a pruned anchor.]
Figure 3: The Neighborhood-Aware Anchor Pruning (NAAP) pipeline starts by constructing an anchor-based geometric graph, with nodes colored by average opacity (darker = higher). Importance scores ($\xi_i$) are computed via neighborhood aggregation. Low-score anchors are identified as redundant and associated with their nearest salient neighbor (Local Neighbor Preserver). Instead of direct removal, a Weighted Attribute Transformer merges attributes from pruned anchors (opacity $\bar{\alpha}_i$, scaling $s_i$, offset $o_i$) into the nearest retained anchor, yielding fused attributes ($\phi_i$, $O_i$, $S_i$, etc.) that preserve geometric and appearance information in a compact structure.

In Eq. (3), $\lambda \in [0, 1]$ is a balancing factor. Given a threshold $\tau$, we identify redundant anchors using the pruning mask:

$$\mathcal{M}_{\text{prune}} = \{\mathbf{a}_i \mid \xi_i < \tau\}. \tag{4}$$

This design allows anchors residing in structurally significant clusters to be preserved even if their individual opacity is relatively low.

Step 3 & 4: Neighbor Association and Attribute Merging. Unlike previous pruning strategies that directly discard the anchors in $\mathcal{M}_{\text{prune}}$, we consolidate their information into the surviving geometry via a dedicated Weighted Attribute Transformer. For each redundant anchor $\mathbf{a}_r \in \mathcal{M}_{\text{prune}}$, we identify its nearest salient neighbor $\mathbf{a}_k \in \mathcal{A} \setminus \mathcal{M}_{\text{prune}}$ as the target for information transfer.
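Steps 3 and 4 can be sketched as below: threshold the scores with Eq. (4), attach every pruned anchor to its nearest surviving anchor, and fold its attributes in with the merge rule $\Theta_k = (1-\gamma)\theta_k + \gamma\theta_r$ that the text states as Eq. (5). Two details are assumptions not fixed by the description: when several pruned anchors share one salient neighbor, the update is applied once per pruned anchor in index order, and the same $\gamma$ is used for every attribute array passed in. `prune_and_merge` is a hypothetical name.

```python
import numpy as np

def prune_and_merge(pos, attrs, xi, tau, gamma):
    """Eqs. (4)-(5): drop anchors with xi_i < tau and fold their attributes
    into the nearest surviving (salient) anchor.

    pos:   (N, 3) anchor positions
    attrs: dict name -> (N, ...) array, e.g. offsets, scaling, opacity
    """
    pruned = xi < tau                                 # M_prune, Eq. (4)
    keep = np.flatnonzero(~pruned)
    if keep.size == 0:
        raise ValueError("threshold tau removes every anchor")
    merged = {name: a.copy() for name, a in attrs.items()}
    for r in np.flatnonzero(pruned):
        d = np.linalg.norm(pos[keep] - pos[r], axis=1)
        k = keep[np.argmin(d)]                        # nearest salient neighbor a_k
        for name in merged:
            # Theta_k = (1 - gamma) * theta_k + gamma * theta_r, Eq. (5);
            # assumption: repeated targets are updated sequentially.
            merged[name][k] = (1 - gamma) * merged[name][k] + gamma * attrs[name][r]
    return pos[keep], {name: a[keep] for name, a in merged.items()}
```

For example, with opacities (1.0, 0.0, 0.5), scores (0.9, 0.1, 0.8), tau = 0.3 and gamma = 0.2, the middle anchor is pruned and the nearer survivor's opacity becomes 0.8 · 1.0 + 0.2 · 0.0 = 0.8.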
Let lowercase $\theta \in \{\mathbf{o}, \mathbf{s}, \alpha\}$ and uppercase $\Theta \in \{\mathbf{O}, \mathbf{S}, \phi\}$ denote the raw and fused attributes (offsets, scaling, and opacity), respectively. The transformation is governed by

$$\Theta_k = (1 - \gamma)\,\theta_k + \gamma\,\theta_r, \tag{5}$$

where $\gamma$ controls the contribution of the pruned anchor. This integration condenses the geometric and appearance attributes of removed regions into their nearest neighbors ($\theta \to \Theta$), so that scene information is preserved rather than lost. Finally, eliminating the anchors in $\mathcal{M}_{\text{prune}}$ yields a compact, locally geometry-consistent representation. As shown in Fig. 3, conventional threshold-based pruning would discard anchors whose low opacity is induced by optimization noise (highlighted by the red circle). In contrast, NAAP recognizes such individually non-salient nodes within high-density clusters, which often play a crucial role in modeling key scene details during rendering, and retains them.

3.4 Hierarchical Geometry-Guided Context Modeling
While hierarchical context models [25, 28, 47] have shown promising performance for anchor-based 3DGS compression, they often suffer from structural inefficiencies. Specifically, existing approaches typically rely on heuristic neighbor pooling or rigid spatial partitioning, which may fail to preserve precise geometric correspondence across hierarchy levels. As a result, the retrieved context is not always well aligned with the query anchors, leading to suboptimal entropy estimation. To overcome these limitations, we
propose a streamlined two-level autoregressive model that explicitly enforces geometric alignment between coarse and fine anchors. As shown in Figure 4, by strictly aligning the coarse geometry (Level 1) with fine attributes (Level 2), our method achieves precise context retrieval and refinement via a three-step process.

[Figure 4 diagram, "Hierarchical Geometry-Guided Context Modeling": Level 1 attributes are retrieved for Level 2 centroid anchors; Ball KNN neighborhoods feed GG-Conv, where normalized offsets (Norm) index a learnable kernel through look-up and interpolation (Interp), an MLP produces per-neighbor embeddings, and the looked-up weights and embeddings are multiplied and aggregated.]
Figure 4: This module exploits cross-level correlations by mapping Level 1 attributes to Level 2 query anchors via a deterministic mapping $\mathcal{M}$ to obtain the preliminary attributes $\mathcal{A}^{(2)}_{pre}$. Then, a $k$-NN graph is constructed over $\mathcal{A}^{(2)}_{pre}$ to form the graph-based geometric prior $\mathcal{G}^{(2)}_{geo}$. Within the GG-Conv (dashed box): (1) Geometry Branch: relative spatial offsets $\Delta\mathbf{p}_{ij}$ between the query anchor and its neighbors are used to query a learnable 3D kernel (weight look-up table) via trilinear interpolation, generating dynamic geometric weights $\mathbf{w}_{ij}$ that explicitly encode the local spatial layout. (2) Feature Branch: this branch extracts semantic-geometric embeddings $\mathbf{e}_{ij}$ by transforming the concatenated residual features $\Delta\mathbf{f}^{(1)}_{ij}$ and relative offsets $\Delta\mathbf{p}_{ij}$ through an MLP $\phi$. Finally, the dynamic weights modulate these embeddings to produce the refined geometry-aware feature $\mathbf{h}_i$, which provides a structured foundation for deriving the final local geometry-guided context $\mathbf{a}_i^{(ctx)}$ for Level 2 attribute entropy coding.

1) Hierarchical Partitioning.
To leverage multi-scale spatial correlations, we organize the anchor set $\mathcal{V}$ into a two-level hierarchy ($L = 2$) following Context-GS [47]. We first derive a coarse representation $\mathcal{A}^{(1)}$ by quantizing anchor positions with a scaled voxel size $\epsilon_1 = s \cdot \epsilon_0$ and keeping the lowest-index anchor in each occupied coarse voxel:

$$\mathcal{A}^{(1)} = \left\{\mathbf{a}_j \in \mathcal{V} \;\middle|\; j = \min\{\, i : \lfloor \mathbf{p}_i / \epsilon_1 \rfloor = \lfloor \mathbf{p}_j / \epsilon_1 \rfloor \,\}\right\}, \tag{6}$$

where $\epsilon_0$ is the initial fine-grained voxel size and $\epsilon_1$ is the coarse-grained resolution controlled by the scaling factor $s$. The fine level is then defined as $\mathcal{A}^{(2)} = \mathcal{V} \setminus \mathcal{A}^{(1)}$, so the two levels are disjoint. This hierarchy allows $\mathcal{A}^{(1)}$ to serve as a coarse spatial prior for the entropy coding of $\mathcal{A}^{(2)}$.

2) Inter-Level Context Retrieval. To fully exploit the information provided by the coarse representation, we propose an inter-level retrieval strategy that transfers coarse-level attributes (e.g., feature $\mathbf{f}$, scaling $\mathbf{s}$, and offsets $\mathbf{o}$) to the fine-level query anchors, establishing a preliminary feature representation for subsequent geometric context refinement. This strategy uses the voxel grid as a spatial bridge to align attributes across levels. Specifically, based on the voxel partitioning rule in Eq. (6), we establish a deterministic mapping $\mathcal{M}$ that associates each fine-level query anchor $\mathbf{a}^{(2)}_j \in \mathcal{A}^{(2)}$ with its coarse-level parent $\mathbf{a}^{(1)}_i$ in the same voxel, yielding a preliminary context set $\mathcal{A}^{(2)}_{pre}$:

$$\mathcal{A}^{(2)}_{pre} = \left\{\left(\mathbf{p}^{(2)}_j, \mathbf{f}^{(1)}_i, \mathbf{s}^{(1)}_i, \mathbf{o}^{(1)}_i\right) \;\middle|\; \mathbf{a}^{(2)}_j \in \mathcal{A}^{(2)},\; i = \mathcal{M}(\mathbf{a}^{(2)}_j)\right\}, \tag{7}$$

where $\mathbf{p}^{(2)}_j$ is the fine-level position and the tuple $(\mathbf{f}^{(1)}_i, \mathbf{s}^{(1)}_i, \mathbf{o}^{(1)}_i)$ denotes the decoded attributes inherited from the $i$-th coarse-level anchor in $\mathcal{A}^{(1)}$. To explicitly capture the geometric relations among neighboring contexts and model local structural dependencies, we construct a Ball k-nearest neighbor (Ball k-NN) graph [42] over $\mathcal{A}^{(2)}_{pre}$ based on the spatial positions of the fine-level anchors.
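The partition of Eq. (6), the parent mapping $\mathcal{M}$ of Eq. (7), and the Ball k-NN neighborhoods can be sketched with NumPy as below. This rests on assumptions: Eq. (6) is read as keeping the lowest-index anchor of each occupied coarse voxel, the attributes are plain arrays, and `split_levels`, `preliminary_context`, and `ball_knn` are hypothetical brute-force helpers, not the Context-GS or GeoHCC implementation.

```python
import numpy as np

def split_levels(pos, eps0, s):
    """Eq. (6) and the mapping M of Eq. (7).

    Returns (level1, level2, parent): index arrays for A^(1) and A^(2), and
    parent[t] = index of the Level-1 anchor sharing a voxel with level2[t].
    """
    eps1 = s * eps0                                    # coarse voxel size
    keys = np.floor(pos / eps1).astype(np.int64)       # coarse voxel of each anchor
    # return_index yields the first (lowest-index) anchor of every occupied voxel
    _, first, inverse = np.unique(keys, axis=0, return_index=True,
                                  return_inverse=True)
    inverse = inverse.reshape(-1)                      # shape varies across NumPy versions
    is_l1 = np.zeros(len(pos), dtype=bool)
    is_l1[first] = True
    level1 = np.flatnonzero(is_l1)
    level2 = np.flatnonzero(~is_l1)                    # A^(2) = V \ A^(1)
    parent = first[inverse[level2]]                    # M: same-voxel Level-1 anchor
    return level1, level2, parent

def preliminary_context(pos, feat, scale, offset, level2, parent):
    """A^(2)_pre: fine-level positions paired with inherited coarse attributes."""
    return pos[level2], feat[parent], scale[parent], offset[parent]

def ball_knn(pos, k, radius):
    """Up to k nearest neighbors of each point that lie within `radius`."""
    dist = np.linalg.norm(pos[:, None, :] - pos[None, :, :], axis=-1)
    np.fill_diagonal(dist, np.inf)                     # exclude self-loops
    order = np.argsort(dist, axis=1)[:, :k]
    return [row[dist[i, row] <= radius] for i, row in enumerate(order)]
```

The radius cap is what distinguishes a Ball k-NN query from plain k-NN: a sparse anchor may end up with fewer than k neighbors instead of borrowing context from distant, unrelated geometry.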
This graph prior, denoted as $\mathcal{G}^{(2)}_{geo} = (\mathcal{A}^{(2)}_{pre}, \mathcal{E})$, where $\mathcal{E}$ represents the edges connecting spatially neighboring anchors, encapsulates both the inherited coarse-level preliminary contexts and the fine-level local geometric correlations. It provides a structured foundation for the subsequent geometry-guided feature refinement in GG-Conv.

3) Geometry-Guided Convolution (GG-Conv). For the graph-based anchor prior $\mathcal{G}^{(2)}_{geo}$ with its $k$-NN graph structure, a straightforward approach is to process the retrieved features directly with a Multi-Layer Perceptron (MLP), as adopted in Context-GS [47]. However, while $\mathcal{G}^{(2)}_{geo}$ provides coarse graph-structured contextual priors, simply concatenating them cannot adequately capture the fine-grained geometric correlations in irregular and unstructured anchor distributions. To address this, we propose GG-Conv, which performs adaptive feature refinement over the local neighborhood. As illustrated in Fig. 4, GG-Conv refines the preliminary features of each query anchor $\mathbf{a}^{(2)}_{i(pre)}$ by aggregating context priors from its $k$-NN neighbors $\mathbf{a}^{(2)}_{j(pre)}$ through two cooperative branches.

Geometry Branch: To capture local geometric correlations, we compute normalized relative offsets $\Delta\hat{\mathbf{p}}_{ij} = \mathrm{Norm}(\mathbf{p}_j - \mathbf{p}_i)$ to query a learnable 3D kernel $\mathcal{T} \in \mathbb{R}^{D \times D \times D \times C}$. Specifically, $\Delta\hat{\mathbf{p}}_{ij}$ is treated as a continuous query coordinate within the grid space of $\mathcal{T}$, from which a dynamic kernel $\mathbf{w}_{ij}$ is retrieved via trilinear interpolation:

$$\mathbf{w}_{ij} = \text{Tri-Interp}\left(\text{Look-up}(\mathcal{T}, \Delta\hat{\mathbf{p}}_{ij})\right). \tag{8}$$

Here $\text{Tri-Interp}(\cdot)$ interpolates over the eight nearest grid points of $\mathcal{T}$, so the kernel can respond to fine-grained geometric variations below the discrete grid resolution. This mechanism yields spatially continuous weights for effective geometry-aware feature aggregation.
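To make the two branches concrete, the sketch below implements trilinear look-up into a D×D×D×C kernel grid (Eq. (8)) and the per-anchor aggregation of Eqs. (9)–(10) that Fig. 4 describes. Assumptions, also flagged in the comments: Norm(·) is taken to be division by the largest absolute offset component in the neighborhood, so queries fall in [−1, 1]³; the grid maps −1 and 1 to its first and last vertices; and `make_mlp` is a hypothetical random two-layer MLP standing in for the learned one. None of these names come from the GeoHCC code.

```python
import numpy as np

def trilinear_lookup(T, q):
    """Eq. (8): sample kernel grid T of shape (D, D, D, C) at q in [-1, 1]^3."""
    D = T.shape[0]
    g = (np.clip(q, -1.0, 1.0) + 1.0) * 0.5 * (D - 1)   # continuous grid coords
    i0 = np.minimum(np.floor(g).astype(int), D - 2)      # lower corner (needs D >= 2)
    t = g - i0                                           # fractional offsets in [0, 1]
    out = np.zeros(T.shape[-1])
    for dx in (0, 1):                                    # blend the 8 surrounding vertices
        for dy in (0, 1):
            for dz in (0, 1):
                weight = ((t[0] if dx else 1 - t[0])
                          * (t[1] if dy else 1 - t[1])
                          * (t[2] if dz else 1 - t[2]))
                out += weight * T[i0[0] + dx, i0[1] + dy, i0[2] + dz]
    return out

def make_mlp(c_in, c_out, hidden=16, seed=0):
    """Hypothetical stand-in for the learned MLP of Eq. (9): one ReLU hidden layer."""
    rng = np.random.default_rng(seed)
    w1 = rng.normal(size=(c_in, hidden)) / np.sqrt(c_in)
    w2 = rng.normal(size=(hidden, c_out)) / np.sqrt(hidden)
    return lambda x: np.maximum(x @ w1, 0.0) @ w2

def gg_conv(i, nbrs, pos, feat, T, mlp):
    """Eqs. (8)-(10) for query anchor i with neighbor indices nbrs."""
    ctx = np.zeros(T.shape[-1])
    if len(nbrs) == 0:
        return ctx
    dp = pos[nbrs] - pos[i]                               # relative offsets
    scale = np.max(np.abs(dp)) + 1e-9                     # assumed Norm(): max-abs scaling
    for j, d in zip(nbrs, dp):
        w = trilinear_lookup(T, d / scale)                # geometry branch, Eq. (8)
        e = mlp(np.concatenate([feat[j] - feat[i], d]))   # feature branch, Eq. (9)
        ctx += w * e                                      # Eq. (10): sum of w_ij ⊙ e_ij
    return ctx
```

Because the weights are interpolated rather than snapped to the nearest cell, a small change in a neighbor's relative position changes its weight smoothly, which is the property the text attributes to trilinear interpolation.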
Feature Branch: Concurrently, the feature branch extracts semantic-geometric correlations. Specifically, we first concatenate the residual features $\Delta\mathbf{f}_{ij} = \mathbf{f}_j - \mathbf{f}_i$ with the relative spatial offsets $\Delta\mathbf{p}_{ij}$. The concatenated feature is then transformed via an MLP to extract refined semantic embeddings that are implicitly aware of the local geometry:

$$\mathbf{e}_{ij} = \mathrm{MLP}\left([\Delta\mathbf{f}_{ij} \,\Vert\, \Delta\mathbf{p}_{ij}]\right), \tag{9}$$

where $[\cdot \,\Vert\, \cdot]$ denotes concatenation. Finally, the dynamic geometric weights from the geometry branch explicitly modulate these embeddings to produce the geometry-guided context $\mathbf{f}_i^{(ctx)}$:

$$\mathbf{f}_i^{(ctx)} = \sum_{j \in \mathcal{N}(i)} \mathbf{w}_{ij} \odot \mathbf{e}_{ij}, \tag{10}$$

where $\odot$ denotes the element-wise product.

The Local Geometry-Guided Context $\mathbf{a}_i^{(ctx)}$ is formed by concatenating the refined geometry-aware feature $\mathbf{f}_i^{(ctx)}$ with the inherited attributes ($\mathbf{p}_i$, $\mathbf{s}_i$, $\mathbf{o}_i$). By integrating local neighborhood correlations with global geometric priors, $\mathbf{a}_i^{(ctx)}$ provides a comprehensive representation of the anchor's state. It is then projected via an MLP to predict distribution parameters:

$$\mu, \sigma, \Delta_{\text{adj}} = \mathrm{MLP}\left(\mathbf{a}_i^{(ctx)}\right), \tag{11}$$

where $\mu$ and $\sigma$ characterize the attribute distribution, and $\Delta_{\text{adj}}$ adaptively adjusts the quantization step based on local structural complexity. By operating on graph-structured priors, GG-Conv enables spatially adaptive bitrate allocation, spending more bits on complex geometric regions while maintaining high compression efficiency in smoother areas.

3.5 Bitstream Composition
The final bitstream is composed of three parts, $R = R_{geo} + R_{attr} + R_{model}$, where $R_{geo}$, $R_{attr}$, and $R_{model}$ denote the bitrates associated with anchor geometry, hierarchical anchor attributes, and model parameters, respectively. Specifically, $R_{geo}$ covers the explicit anchor coordinates, which are losslessly compressed after quantization.
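The attribute rate can be illustrated with the Gaussian entropy model that Eq. (11) parameterizes: a value quantized with bin width Δ costs −log₂ of the probability mass that N(μ, σ²) assigns to its bin, and these costs summed over attributes approximate the attribute bitrate. The sketch uses only the Python standard library. The function names are hypothetical, the quantization step is passed in directly (how Δ_adj rescales it is not modeled here), and `rd_loss` only mirrors the form of the rate-distortion objective in Eq. (12); it is not the paper's training code.

```python
import math

def gaussian_cdf(x, mu, sigma):
    """CDF of N(mu, sigma^2) evaluated at x."""
    return 0.5 * (1.0 + math.erf((x - mu) / (sigma * math.sqrt(2.0))))

def estimated_bits(x, mu, sigma, step):
    """Bits for x after uniform quantization with bin width `step`
    under a Gaussian model: -log2 P(quantized bin)."""
    q = step * round(x / step)                       # reconstruction level of x's bin
    p = (gaussian_cdf(q + step / 2, mu, sigma)
         - gaussian_cdf(q - step / 2, mu, sigma))
    return -math.log2(max(p, 1e-9))                  # floor avoids log2(0)

def rd_loss(distortion, bits, lam):
    """Form of Eq. (12): distortion + lam * rate (rate = total estimated bits)."""
    return distortion + lam * sum(bits)
```

The same mechanism explains the adaptive allocation claimed above: a value far from the predicted μ, or a small σ paired with a fine step, lands in a low-probability bin and costs more bits.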
$R_{attr}$ comprises the hierarchical attributes (features, scalings, offsets) of both $\mathcal{A}^{(1)}$ and $\mathcal{A}^{(2)}$, encoded via the proposed hierarchical geometry-guided context modeling. $R_{model}$ stores the quantized weights of the shared MLPs and GG-Conv modules as a lightweight model header.

3.6 Optimization
The proposed GeoHCC for 3DGS compression is optimized under a rate-distortion objective:

$$\mathcal{L} = \mathcal{L}_{\text{render}} + \lambda\,\mathcal{L}_{\text{anchor}}, \tag{12}$$

where $\mathcal{L}_{\text{render}}$ denotes the rendering loss inherited from Scaffold-GS [29] and serves as the distortion term, while $\mathcal{L}_{\text{anchor}}$ denotes the estimated entropy-coded bitrate of the anchor attributes, including positions, opacities, and feature descriptors, following HAC++ [5] and Context-GS [47], and serves as the rate term. The hyperparameter $\lambda > 0$ controls the trade-off between reconstruction fidelity ($\mathcal{L}_{\text{render}}$) and compression efficiency ($\mathcal{L}_{\text{anchor}}$).

4 Experiments
4.1 Implementation Details
We implement GeoHCC in the PyTorch framework, building upon the official Scaffold-GS codebase [29]. To ensure convergence of the geometry-aware components, models are trained for 35,000 iterations. We adopt a minimal two-level hierarchy ($L = 2$) and set the voxelization scaling factor $s$ consistent with Context-GS [47]. For the Ball k-NN graphs used for local geometry-aware perception, the neighbor count is fixed at $K = 8$ for both NAAP and the formation of $\mathcal{G}^{(2)}_{geo}$, while the GG-Conv volumetric kernel size is set to $k = 5$. Unless otherwise specified, we follow the default Scaffold-GS configuration, using an anchor feature dimension of 50 and a compressed latent dimension of 12.

4.2 Experiment Details
Datasets. Our method is evaluated on four standard real-world benchmarks: Mip-NeRF360 [3] (all 9 scenes), BungeeNeRF [52], DeepBlending [16], and Tanks&Temples [20].

Baseline Methods.
We compare our method with a wide range of state-of-the-art 3DGS compression approaches, which fall into two main streams. The first focuses on compact representations via parameter pruning or vector quantization, including Scaffold-GS [29], Compressed3D [37], and CompGS [28]. The second stream, more closely related to our work, improves entropy coding by exploiting contextual priors. Examples include HAC [4] and HAC++ [5], which adopt hash grids for spatial compactness, and Context-GS [47], which models anchor-level context as hyperpriors. More recently, Liu et al. [26] introduced a Mixture-of-Experts (MoE) module for robust feature learning, while CAT-3DGS [55] applies channel-wise autoregressive modeling for attribute compression. We benchmark against these methods to validate the effectiveness of our local geometry-aware hierarchical context modeling.

Metrics. We evaluate compression performance in terms of storage size, measured in megabytes (MB). To assess the visual quality of images rendered from the compressed 3DGS data, we use three standard metrics: Peak Signal-to-Noise Ratio (PSNR), Structural Similarity Index (SSIM) [49], and Learned Perceptual Image Patch Similarity (LPIPS) [57].

4.3 Experiment Results

Quantitative Analysis. As shown in Table 1, GeoHCC achieves significant improvements over the Scaffold-GS [29] backbone. In the low-rate setting, it reduces storage by over 21× on average across the four datasets while maintaining competitive or even superior rendering fidelity in terms of PSNR and SSIM. Compared with state-of-the-art methods, including HAC [4], HAC++ [5], Context-GS [47], CAT-3DGS [54], and Liu et al. [26], GeoHCC consistently achieves better rate-distortion performance, especially at low bitrates. Notably, in the high-rate setting GeoHCC even surpasses the uncompressed Scaffold-GS in both PSNR and SSIM on all four datasets. Furthermore, the rate-distortion curves in Fig. 6 confirm that GeoHCC provides a superior performance envelope, achieving higher rendering quality at equivalent bitrates across a wide range of compression ratios.

Figure 5: Qualitative results of the proposed method compared to existing compression methods (scenes: drjohnson, train, bicycle; methods: Ours, Context-GS, HAC++, HAC, CAT-3DGS; each panel is annotated with PSNR and size).

Table 1: Comparison against existing compression approaches. Our method is evaluated under two settings, a high-fidelity mode and a high-compression mode, showcasing its versatility. Cells colored red and yellow highlight the top-performing and second-best results, respectively, for both the low-bitrate and high-bitrate regimes. All sizes are reported in MB.
| Method | Mip-NeRF360 [3] PSNR↑ | SSIM↑ | LPIPS↓ | Size↓ | BungeeNeRF [52] PSNR↑ | SSIM↑ | LPIPS↓ | Size↓ | DeepBlending [16] PSNR↑ | SSIM↑ | LPIPS↓ | Size↓ | Tanks&Temples [20] PSNR↑ | SSIM↑ | LPIPS↓ | Size↓ |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 3DGS [2] | 27.49 | 0.813 | 0.222 | 744.7 | 24.87 | 0.841 | 0.205 | 1616 | 29.42 | 0.899 | 0.247 | 663.9 | 23.69 | 0.844 | 0.178 | 431.0 |
| Scaffold-GS [29] | 27.50 | 0.806 | 0.252 | 253.9 | 26.62 | 0.865 | 0.241 | 183.0 | 30.21 | 0.906 | 0.254 | 66.00 | 23.96 | 0.853 | 0.177 | 86.50 |
| Compact3DGS [21] | 27.08 | 0.798 | 0.247 | 48.80 | 23.36 | 0.788 | 0.251 | 82.60 | 29.79 | 0.901 | 0.258 | 43.21 | 23.32 | 0.831 | 0.201 | 39.43 |
| Compressed3D [37] | 26.98 | 0.801 | 0.238 | 28.80 | 24.13 | 0.802 | 0.245 | 55.79 | 29.38 | 0.898 | 0.253 | 25.30 | 23.32 | 0.832 | 0.194 | 17.28 |
| Morgenstern et al. [35] | 26.01 | 0.772 | 0.259 | 23.90 | 22.43 | 0.708 | 0.339 | 48.25 | 28.92 | 0.891 | 0.276 | 8.40 | 22.78 | 0.817 | 0.211 | 13.05 |
| CompGS [28] | 27.26 | 0.803 | 0.239 | 16.50 | - | - | - | - | 29.69 | 0.901 | 0.279 | 8.77 | 23.70 | 0.837 | 0.208 | 9.60 |
| HAC (low-rate) [4] | 27.53 | 0.807 | 0.238 | 15.26 | 26.48 | 0.845 | 0.25 | 18.49 | 29.98 | 0.902 | 0.269 | 4.35 | 24.04 | 0.846 | 0.187 | 8.10 |
| Context-GS (low-rate) [47] | 27.62 | 0.778 | 0.237 | 12.68 | 26.90 | 0.866 | 0.222 | 14.00 | 30.11 | 0.907 | 0.265 | 3.43 | 24.20 | 0.852 | 0.184 | 7.05 |
| HAC++ (low-rate) [5] | 27.6 | 0.803 | 0.253 | 8.34 | 26.78 | 0.858 | 0.235 | 11.75 | 30.16 | 0.907 | 0.266 | 2.91 | 24.22 | 0.849 | 0.190 | 5.18 |
| Ours (low-rate) | 27.64 | 0.805 | 0.247 | 8.23 | 26.93 | 0.866 | 0.231 | 11.59 | 30.25 | 0.909 | 0.267 | 2.83 | 24.32 | 0.852 | 0.187 | 4.92 |
| HAC (high-rate) [4] | 27.77 | 0.811 | 0.230 | 21.84 | 27.08 | 0.872 | 0.209 | 29.72 | 30.34 | 0.906 | 0.258 | 6.35 | 24.40 | 0.853 | 0.177 | 11.24 |
| HAC++ (high-rate) [5] | 27.82 | 0.811 | 0.231 | 18.48 | 27.17 | 0.879 | 0.196 | 20.82 | 30.34 | 0.911 | 0.254 | 5.287 | 24.32 | 0.854 | 0.178 | 8.63 |
| Context-GS (high-rate) [47] | 27.75 | 0.811 | 0.231 | 18.41 | 27.15 | 0.875 | 0.205 | 21.80 | 30.39 | 0.909 | 0.258 | 6.60 | 24.29 | 0.855 | 0.176 | 11.80 |
| CAT-3DGS [54] | 27.77 | 0.809 | 0.241 | 12.35 | 27.35 | 0.886 | 0.183 | 26.59 | 30.29 | 0.909 | 0.269 | 3.56 | 24.41 | 0.853 | 0.189 | 6.93 |
| Liu et al. [26] | 27.68 | 0.808 | 0.234 | 15.64 | 27.26 | 0.875 | 0.207 | 20.83 | 30.45 | 0.912 | 0.250 | 5.65 | 24.21 | 0.861 | 0.163 | 8.98 |
| Ours (high-rate) | 27.82 | 0.812 | 0.2351 | 12.24 | 27.46 | 0.883 | 0.196 | 19.48 | 30.49 | 0.912 | 0.250 | 5.63 | 24.43 | 0.856 | 0.177 | 8.49 |

Qualitative Analysis. As shown in Figure 5, GeoHCC produces sharper structural details and visibly fewer artifacts than both the baseline and the competing methods. We attribute this improvement to our local geometry-aware constraints, which use local geometric context as a strong regularizer to suppress floaters while enhancing fine local texture details. Thanks to the geometry-guided context modeling via GG-Conv, our method preserves high-frequency textures even at low bitrates, where other approaches often suffer from blurring or "popping" artifacts.

Figure 6: Rate-distortion (RD) performance curves (PSNR in dB versus size in MB) on the BungeeNeRF, Tanks&Temples, and Mip-NeRF360 datasets, comparing GeoHCC (Ours) with advanced compression techniques (HAC, HAC++, Context-GS, CAT-3DGS). GeoHCC demonstrates robust coding efficiency, maintaining the upper envelope across a wide range of bitrates.
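The "over 21×" average storage reduction quoted in the quantitative analysis can be checked against the Table 1 sizes. The averaging method is not stated. The sketch below assumes two plausible readings: the arithmetic mean of per-dataset ratios against Scaffold-GS, and the ratio of summed sizes. Under both readings the figure holds for the low-rate setting (roughly 21.9× and 21.4×) but not for the high-rate setting (roughly 13×). The variable and function names are illustrative only.

```python
# Storage sizes in MB from Table 1, in dataset order:
# Mip-NeRF360, BungeeNeRF, DeepBlending, Tanks&Temples.
SCAFFOLD_GS = [253.9, 183.0, 66.00, 86.50]
OURS_LOW_RATE = [8.23, 11.59, 2.83, 4.92]
OURS_HIGH_RATE = [12.24, 19.48, 5.63, 8.49]

def mean_of_ratios(reference, compressed):
    """Arithmetic mean of per-dataset compression ratios reference / compressed."""
    ratios = [r / c for r, c in zip(reference, compressed)]
    return sum(ratios) / len(ratios)

def ratio_of_sums(reference, compressed):
    """Total reference size divided by total compressed size."""
    return sum(reference) / sum(compressed)

for name, sizes in [("low-rate", OURS_LOW_RATE), ("high-rate", OURS_HIGH_RATE)]:
    print(f"{name}: mean of ratios {mean_of_ratios(SCAFFOLD_GS, sizes):.1f}x, "
          f"ratio of sums {ratio_of_sums(SCAFFOLD_GS, sizes):.1f}x")
```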
Table 2: Storage breakdown (Positions, Features, MLP, Others) and rendering FPS on the "Bicycle" scene for Context-GS (vanilla EM, i.e., entropy modeling) and our GG-Conv-based hierarchical geometry-guided context EM. "Others" includes auxiliary structures and metadata (e.g., scalings, offsets, masks).

| Method | Positions (MB)↓ | Features (MB)↓ | MLP (MB)↓ | Others (MB)↓ | Total (MB)↓ | PSNR↑ | SSIM↑ | LPIPS↓ | FPS |
|---|---|---|---|---|---|---|---|---|---|
| Vanilla EM [47] | 5.24 | 8.27 | 0.3162 | 11.24 | 25.07 | 25.07 | 0.7391 | 0.2683 | 135 |
| GG-Conv-based EM (two-level) | 4.69 | 4.51 | 0.2381 | 6.31 | 15.75 | 25.16 | 0.7415 | 0.2704 | 170 |
| GG-Conv-based EM (three-level) | 4.66 | 6.82 | 0.3162 | 7.54 | 19.34 | 25.10 | 0.7416 | 0.2703 | 160 |

Table 3: Quantitative ablation study on the Deep Blending dataset. (1) Ours: the full version of our compact neural 3DGS compression framework with both NAAP and GG-Conv. (2) Ours w/o GG-Conv: our method without the GG-Conv component. (3) Ours w/o NAAP: our method without the NAAP component. (4) Ours w/o NAAP & GG-Conv: our method without both proposed components.

| Method | PSNR↑ | SSIM↑ | LPIPS↓ | Size (MB)↓ |
|---|---|---|---|---|
| Ours w/o NAAP & GG-Conv | 30.10 | 0.9062 | 0.2659 | 3.76 |
| Ours w/o GG-Conv | 30.13 | 0.9087 | 0.2656 | 3.72 |
| Ours w/o NAAP | 30.20 | 0.9095 | 0.2648 | 3.58 |
| Ours | 30.31 | 0.9106 | 0.2636 | 3.47 |

4.4 Ablation Study and Analysis

Effectiveness of Different Components. As shown in Table 3, we conduct an ablation study on the Deep Blending dataset to evaluate the contribution of each component of GeoHCC. Removing NAAP ("Ours w/o NAAP") lowers PSNR by 0.11 dB and SSIM by 0.001 and increases storage by 0.11 MB relative to the full model. Removing GG-Conv ("Ours w/o GG-Conv") lowers PSNR by 0.18 dB and SSIM by 0.002 and adds 0.25 MB. When both components are removed ("Ours w/o NAAP & GG-Conv"), performance degrades further: by 0.21 dB in PSNR, 0.004 in SSIM, and 0.29 MB in storage relative to the full model.
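The deltas above, and the synergy claim in the discussion of these results, can be recomputed from Table 3. The sketch below makes two assumptions. It treats "Ours w/o GG-Conv" as NAAP alone and "Ours w/o NAAP" as GG-Conv alone. It also measures each variant's gain over the variant that has neither module. The interaction term is the full model's gain minus the sum of the single-module gains. It is positive for PSNR (about 0.08 dB) and for storage saving (about 0.07 MB). For SSIM it is negative (0.0044 versus 0.0025 + 0.0033). The dictionary keys and function names are illustrative.

```python
# (PSNR dB, SSIM, size MB) on Deep Blending, copied from Table 3.
TABLE3 = {
    "neither": (30.10, 0.9062, 3.76),  # Ours w/o NAAP & GG-Conv
    "naap":    (30.13, 0.9087, 3.72),  # Ours w/o GG-Conv -> NAAP only
    "ggconv":  (30.20, 0.9095, 3.58),  # Ours w/o NAAP    -> GG-Conv only
    "both":    (30.31, 0.9106, 3.47),  # Ours (full model)
}

def gain(variant):
    """Improvement over the 'neither' variant: (+PSNR, +SSIM, MB saved)."""
    psnr, ssim, size = TABLE3[variant]
    p0, s0, z0 = TABLE3["neither"]
    return (psnr - p0, ssim - s0, z0 - size)

def interaction():
    """Full-model gain minus the sum of single-module gains, per metric.

    A positive entry means the two modules reinforce each other on that metric.
    """
    full, a, b = gain("both"), gain("naap"), gain("ggconv")
    return tuple(round(f - (x + y), 4) for f, x, y in zip(full, a, b))

for v in ("naap", "ggconv", "both"):
    print(v, [round(g, 4) for g in gain(v)])
print("interaction (PSNR, SSIM, MB):", interaction())
```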
These results demonstrate that both NAAP and GG-Conv are essential for high rendering quality under strong compression constraints. Notably, measured against the variant without either module, the combined gain of NAAP and GG-Conv exceeds the sum of their individual gains in both PSNR and storage. NAAP alone adds 0.03 dB and saves 0.04 MB, and GG-Conv alone adds 0.10 dB and saves 0.18 MB. Together they add 0.21 dB and save 0.29 MB, more than the sums of 0.13 dB and 0.22 MB. This reveals strong synergy between the two modules. It suggests that NAAP's preservation of local geometric structure among anchors yields a more structured representation. That representation makes the subsequent hierarchical entropy coding more effective and allows GG-Conv to achieve better compression efficiency and rendering quality under tight bit constraints.

Table 4: Storage comparison between vanilla pruning (Context-GS [47]) and our GeoHCC method.

| Method | Positions (MB) | Features (MB) |
|---|---|---|
| Vanilla Pruning | 0.7531 | 1.1108 |
| NAAP (Ours) | 0.7689 (+2.1%) | 1.0847 (−2.4%) |

Effectiveness of NAAP. As shown in Table 4 (Deep Blending dataset), compared with vanilla pruning [47], NAAP increases position storage by only 2.1% while reducing feature storage by 2.4%. This suggests that NAAP selectively retains a small number of critical anchors to preserve local geometric structures. These anchors yield more coherent local geometry in the Gaussian field, which in turn creates stronger spatial correlations among features. End-to-end optimization then exploits this structure to remove redundancy more effectively, enabling more efficient entropy coding of the features. In absolute terms, the 0.016 MB increase in position storage is more than offset by the 0.026 MB reduction in feature storage, for a net saving of about 0.01 MB. This indicates that geometry-aware anchor preservation is an efficient way to improve the structured representation and overall compression of Gaussian splatting.

Effectiveness of Hierarchical Geometry-Guided Context Modeling. We evaluate the proposed hierarchical geometry-guided context modeling module against the vanilla entropy model [47] within the same NAAP framework. As shown in Table 2, our two-level GG-Conv-based EM reduces the total storage cost from 25.07 MB to 15.75 MB (a 37.2% reduction) compared with the baseline, while improving PSNR from 25.07 dB to 25.16 dB. Most notably, the storage for anchor features is nearly halved (from 8.27 MB to 4.51 MB), and the "Others" category drops from 11.24 MB to 6.31 MB, demonstrating superior redundancy elimination. We also find that deeper hierarchies do not necessarily improve efficiency. Although the three-level scheme slightly reduces position storage (4.66 MB), it increases the feature cost to 6.82 MB and the total storage to 19.34 MB, and it renders more slowly (160 vs. 170 FPS). We attribute this to the extra computational overhead and the more complex cross-level dependencies, which bring diminishing returns in fidelity (25.10 dB vs. 25.16 dB PSNR). We therefore adopt the two-level design. The efficiency of our model is driven by the GG-Conv operator, which leverages local geometric correlations to dynamically weight anchor attributes and thereby produces geometry-aware context. This yields more informative conditional priors that better exploit inter-anchor dependencies, reducing attribute redundancy in both the features and the metadata.

5 Conclusion

To enhance 3DGS compression performance, we propose a local geometry-aware framework that consistently exploits geometric relationships within anchor neighborhoods. By introducing Neighborhood-Aware Anchor Pruning (NAAP), we obtain a sparse yet locally geometry-consistent hierarchy through adaptive neighbor merging. Building on this structure, our hierarchical geometry-guided context modeling scheme, powered by the lightweight GG-Conv operator, leverages geometry-guided coarse-to-fine priors to effectively reduce inter-anchor redundancy.
Extensive experiments demonstrate that our method achieves state-of-the-art rate-distortion performance, delivering superior rendering quality at substantially reduced storage costs.

References

[1] Muhammad Salman Ali, Maryam Qamar, Sung-Ho Bae, and Enzo Tartaglione. 2024. Trimming the fat: Efficient compression of 3d gaussian splats through pruning. arXiv preprint arXiv:2406.18214 (2024).
[2] Milena T Bagdasarian, Paul Knoll, Florian Barthel, Anna Hilsmann, Peter Eisert, and Wieland Morgenstern. [n. d.]. 3dgs.zip: a survey on 3D gaussian splatting compression methods (2024). URL https://arxiv.org/abs/2407.09510 ([n. d.]).
[3] Jonathan T Barron, Ben Mildenhall, Dor Verbin, Pratul P Srinivasan, and Peter Hedman. 2022. Mip-nerf 360: Unbounded anti-aliased neural radiance fields. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. 5470–5479.
[4] Yihang Chen, Qianyi Wu, Weiyao Lin, Mehrtash Harandi, and Jianfei Cai. 2024. Hac: Hash-grid assisted context for 3d gaussian splatting compression. In European Conference on Computer Vision. Springer, 422–438.
[5] Yihang Chen, Qianyi Wu, Weiyao Lin, Mehrtash Harandi, and Jianfei Cai. 2025. Hac++: Towards 100x compression of 3d gaussian splatting. arXiv preprint arXiv:2501.12255 (2025).
[6] Kai Cheng, Xiaoxiao Long, Kaizhi Yang, Yao Yao, Wei Yin, Yuexin Ma, Wenping Wang, and Xuejin Chen. 2024. Gaussianpro: 3d gaussian splatting with progressive propagation. In Forty-first International Conference on Machine Learning.
[7] Zhengxue Cheng, Heming Sun, Masaru Takeuchi, and Jiro Katto. 2020. Learned image compression with discretized gaussian mixture likelihoods and attention modules. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. 7939–7948.
[8] Xuan Deng, Xingtao Wang, Xiandong Meng, Debin Zhao, and Xiaopeng Fan. 2025. PVINet: Point-Voxel Interlaced Network for Point Cloud Compression. IEEE Signal Processing Letters 33 (2025), 61–65.
[9] Tingyu Fan, Linyao Gao, Yiling Xu, Zhu Li, and Dong Wang. 2022. D-dpcc: Deep dynamic point cloud compression via 3d motion prediction. arXiv preprint arXiv:2205.01135 (2022).
[10] Zhiwen Fan, Kevin Wang, Kairun Wen, Zehao Zhu, Dejia Xu, Zhangyang Wang, et al. 2024. Lightgaussian: Unbounded 3d gaussian compression with 15x reduction and 200+ fps. Advances in neural information processing systems 37 (2024), 140138–140158.
[11] Guangchi Fang and Bing Wang. 2024. Mini-splatting: Representing scenes with a constrained number of gaussians. In European conference on computer vision. Springer, 165–181.
[12] Wei Gao, Wenxu Gao, Xingming Mu, Changhao Peng, and Ge Li. 2026. Overview and comparison of avs point cloud compression standard. arXiv preprint arXiv:2602.08613 (2026).
[13] Sharath Girish, Kamal Gupta, and Abhinav Shrivastava. 2024. Eagles: Efficient accelerated 3d gaussians with lightweight encodings. In European Conference on Computer Vision. Springer, 54–71.
[14] Zhiyang Guo, Wengang Zhou, Min Wang, Li Li, and Houqiang Li. 2025. HandNeRF++: Modeling Animatable Interacting Hands With Neural Radiance Fields. IEEE Transactions on Pattern Analysis and Machine Intelligence (2025).
[15] Dailan He, Yaoyan Zheng, Baocheng Sun, Yan Wang, and Hongwei Qin. 2021. Checkerboard context model for efficient learned image compression. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 14771–14780.
[16] Peter Hedman, Julien Philip, True Price, Jan-Michael Frahm, George Drettakis, and Gabriel Brostow. 2018. Deep blending for free-viewpoint image-based rendering. ACM Transactions on Graphics (ToG) 37, 6 (2018), 1–15.
[17] Zhaoyang Jia, Bin Li, Jiahao Li, Wenxuan Xie, Linfeng Qi, Houqiang Li, and Yan Lu. 2025. Towards practical real-time neural video compression. In Proceedings of the Computer Vision and Pattern Recognition Conference. 12543–12552.
[18] Bernhard Kerbl, Georgios Kopanas, Thomas Leimkühler, and George Drettakis. 2023. 3D Gaussian splatting for real-time radiance field rendering. ACM Trans. Graph. 42, 4 (2023), 139–1.
[19] Sieun Kim, Kyungjin Lee, and Youngki Lee. 2024. Color-cued efficient densification method for 3d gaussian splatting. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 775–783.
[20] Arno Knapitsch, Jaesik Park, Qian-Yi Zhou, and Vladlen Koltun. 2017. Tanks and temples: Benchmarking large-scale scene reconstruction. ACM Transactions on Graphics (ToG) 36, 4 (2017), 1–13.
[21] Joo Chan Lee, Daniel Rho, Xiangyu Sun, Jong Hwan Ko, and Eunbyung Park. 2024. Compact 3d gaussian representation for radiance field. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 21719–21728.
[22] Jiahao Li, Bin Li, and Yan Lu. 2023. Neural video compression with diverse contexts. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition. 22616–22626.
[23] Jiahao Li, Bin Li, and Yan Lu. 2024. Neural video compression with feature modulation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 26099–26108.
[24] Han Liu, Hengyu Man, Xingtao Wang, Wenrui Li, and Debin Zhao. 2026. MRT: Learning Compact Representations with Mixed RWKV-Transformer for Extreme Image Compression. In Proceedings of the AAAI Conference on Artificial Intelligence, Vol. 40. 10430–10438.
[25] Lei Liu, Zhenghao Chen, Wei Jiang, Wei Wang, and Dong Xu. 2024. Hemgs: A hybrid entropy model for 3d gaussian splatting data compression. arXiv preprint arXiv:2411.18473 (2024).
[26] Lei Liu, Zhenghao Chen, and Dong Xu. 2025. 3D Gaussian Splatting Data Compression with Mixture of Priors. In Proceedings of the 33rd ACM International Conference on Multimedia. 8341–8350.
[27] Rong Liu, Rui Xu, Yue Hu, Meida Chen, and Andrew Feng. 2024. Atomgs: Atomizing gaussian splatting for high-fidelity radiance field. arXiv preprint arXiv:2405.12369 (2024).
[28] Xiangrui Liu, Xinju Wu, Pingping Zhang, Shiqi Wang, Zhu Li, and Sam Kwong. 2024. Compgs: Efficient 3d scene representation via compressed gaussian splatting. In Proceedings of the 32nd ACM International Conference on Multimedia. 2936–2944.
[29] Tao Lu, Mulin Yu, Linning Xu, Yuanbo Xiangli, Limin Wang, Dahua Lin, and Bo Dai. 2024. Scaffold-gs: Structured 3d gaussians for view-adaptive rendering. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 20654–20664.
[30] Saswat Subhajyoti Mallick, Rahul Goel, Bernhard Kerbl, Markus Steinberger, Francisco Vicente Carrasco, and Fernando De La Torre. 2024. Taming 3dgs: High-quality radiance fields with limited resources. In SIGGRAPH Asia 2024 Conference Papers. 1–11.
[31] Hengyu Man, Xiaopeng Fan, Riyu Lu, Chang Yu, and Debin Zhao. 2024. MetaIP: Meta-network-based intra prediction with customized parameters for video coding. IEEE Transactions on Circuits and Systems for Video Technology 34, 10 (2024), 9591–9605.
[32] Hengyu Man, Xiaopeng Fan, Ruiqin Xiong, and Debin Zhao. 2023. Tree-Structured Data Clustering-Driven Neural Network for Intra Prediction in Video Coding. IEEE Transactions on Image Processing 32 (2023), 3493–3506.
[33] Hengyu Man, Hao Wang, Riyu Lu, Zhaolin Wan, Xiaopeng Fan, and Debin Zhao. 2025. Content-Aware Dynamic In-Loop Filter With Adjustable Complexity for VVC Intra Coding. IEEE Transactions on Circuits and Systems for Video Technology 35, 6 (2025), 6114–6128.
[34] Ben Mildenhall, Pratul P Srinivasan, Matthew Tancik, Jonathan T Barron, Ravi Ramamoorthi, and Ren Ng. 2021. Nerf: Representing scenes as neural radiance fields for view synthesis. Commun. ACM 65, 1 (2021), 99–106.
[35] Wieland Morgenstern, Florian Barthel, Anna Hilsmann, and Peter Eisert. 2024. Compact 3d scene representation via self-organizing gaussian grids. In European Conference on Computer Vision. Springer, 18–34.
[36] Thomas Müller, Alex Evans, Christoph Schied, and Alexander Keller. 2022. Instant neural graphics primitives with a multiresolution hash encoding. ACM transactions on graphics (TOG) 41, 4 (2022), 1–15.
[37] K Navaneet, Kossar Pourahmadi Meibodi, Soroush Abbasi Koohpayegani, and Hamed Pirsiavash. 2023. Compact3d: Compressing gaussian splat radiance field models with vector quantization. arXiv preprint arXiv:2311.18159 2, 3 (2023).
[38] Simon Niedermayr, Josef Stumpfegger, and Rüdiger Westermann. 2024. Compressed 3d gaussian splatting for accelerated novel view synthesis. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 10349–10358.
[39] Antonio Ortega, Pascal Frossard, Jelena Kovačević, José MF Moura, and Pierre Vandergheynst. 2018. Graph signal processing: Overview, challenges, and applications. Proc. IEEE 106, 5 (2018), 808–828.
[40] Panagiotis Papantonakis, Georgios Kopanas, Bernhard Kerbl, Alexandre Lanvin, and George Drettakis. 2024. Reducing the memory footprint of 3d gaussian splatting. Proceedings of the ACM on Computer Graphics and Interactive Techniques 7, 1 (2024), 1–17.
[41] Charles R Qi, Hao Su, Kaichun Mo, and Leonidas J Guibas. 2017. Pointnet: Deep learning on point sets for 3d classification and segmentation. In Proceedings of the IEEE conference on computer vision and pattern recognition. 652–660.
[42] Charles Ruizhongtai Qi, Li Yi, Hao Su, and Leonidas J Guibas. 2017. Pointnet++: Deep hierarchical feature learning on point sets in a metric space. Advances in neural information processing systems 30 (2017).
[43] Kerui Ren, Lihan Jiang, Tao Lu, Mulin Yu, Linning Xu, Zhangkai Ni, and Bo Dai. 2024. Octree-gs: Towards consistent real-time rendering with lod-structured 3d gaussians. arXiv preprint arXiv:2403.17898 (2024).
[44] Xihua Sheng, Jiahao Li, Bin Li, Li Li, Dong Liu, and Yan Lu. 2022. Temporal context mining for learned video compression. IEEE Transactions on Multimedia 25 (2022), 7311–7322.
[45] Seungjoo Shin, Jaesik Park, and Sunghyun Cho. 2025. Locality-aware gaussian compression for fast and high-quality rendering. arXiv preprint (2025).
[46] Henan Wang, Hanxin Zhu, Tianyu He, Runsen Feng, Jiajun Deng, Jiang Bian, and Zhibo Chen. 2024. End-to-end rate-distortion optimized 3d gaussian representation. In European Conference on Computer Vision. Springer, 76–92.
[47] Yufei Wang, Zhihao Li, Lanqing Guo, Wenhan Yang, Alex Kot, and Bihan Wen. 2024. Contextgs: Compact 3d gaussian splatting with anchor level context model. Advances in neural information processing systems 37 (2024), 51532–51551.
[48] Yue Wang, Yongbin Sun, Ziwei Liu, Sanjay E Sarma, Michael M Bronstein, and Justin M Solomon. 2019. Dynamic graph cnn for learning on point clouds. ACM Transactions on Graphics (tog) 38, 5 (2019), 1–12.
[49] Zhou Wang, A.C. Bovik, H.R. Sheikh, and E.P. Simoncelli. 2004. Image quality assessment: from error visibility to structural similarity. IEEE Transactions on Image Processing 13, 4 (2004), 600–612. doi:10.1109/TIP.2003.819861
[50] Zhitao Wang, Hengyu Man, Wenrui Li, Xingtao Wang, Xiaopeng Fan, and Debin Zhao. 2026. T-GVC: Trajectory-Guided Generative Video Coding at Ultra-Low Bitrates. In Proceedings of the AAAI Conference on Artificial Intelligence, Vol. 40. 7141–7149.
[51] Tong Wu, Yu-Jie Yuan, Ling-Xiao Zhang, Jie Yang, Yan-Pei Cao, Ling-Qi Yan, and Lin Gao. 2024. Recent advances in 3d gaussian splatting. Computational Visual Media 10, 4 (2024), 613–642.
[52] Yuanbo Xiangli, Linning Xu, Xingang Pan, Nanxuan Zhao, Anyi Rao, Christian Theobalt, Bo Dai, and Dahua Lin. 2022. Bungeenerf: Progressive neural radiance field for extreme multi-scale scene rendering. In European conference on computer vision. Springer, 106–122.
[53] Runyi Yang, Zhenxin Zhu, Zhou Jiang, Baijun Ye, Xiaoxue Chen, Yifei Zhang, Yuantao Chen, Jian Zhao, and Hao Zhao. 2024. Spectrally pruned gaussian fields with neural compensation. arXiv preprint arXiv:2405.00676 (2024).
[54] Yu-Ting Zhan, Cheng-Yuan Ho, Hebi Yang, Yi-Hsin Chen, Jui Chiu Chiang, Yu-Lun Liu, and Wen-Hsiao Peng. 2025. CAT-3DGS: A context-adaptive triplane approach to rate-distortion-optimized 3DGS compression. arXiv preprint arXiv:2503.00357 (2025).
[55] Yu-Ting Zhan, Cheng-Yuan Ho, Hebi Yang, Yi-Hsin Chen, Jui Chiu Chiang, Yu-Lun Liu, and Wen-Hsiao Peng. 2025. CAT-3DGS: A context-adaptive triplane approach to rate-distortion-optimized 3DGS compression. In Proceedings of the Thirteenth International Conference on Learning Representations (ICLR).
[56] Qiang Zhang, Seung-Hwan Baek, Szymon Rusinkiewicz, and Felix Heide. 2022. Differentiable point-based radiance fields for efficient view synthesis. In SIGGRAPH Asia 2022 Conference Papers. 1–12.
[57] Richard Zhang, Phillip Isola, Alexei A Efros, Eli Shechtman, and Oliver Wang. 2018. The unreasonable effectiveness of deep features as a perceptual metric. In Proceedings of the IEEE conference on computer vision and pattern recognition. 586–595.
[58] Wenkang Zhang, Yan Zhao, Qiang Wang, Zhixin Xu, Li Song, and Zhengxue Cheng. 2026. D-fcgs: Feedforward compression of dynamic gaussian splatting for free-viewpoint videos. In Proceedings of the AAAI Conference on Artificial Intelligence, Vol. 40. 16361–16369.
