Fairness Scheduling for Coded Caching in Multi-AP Wireless Local Area Networks

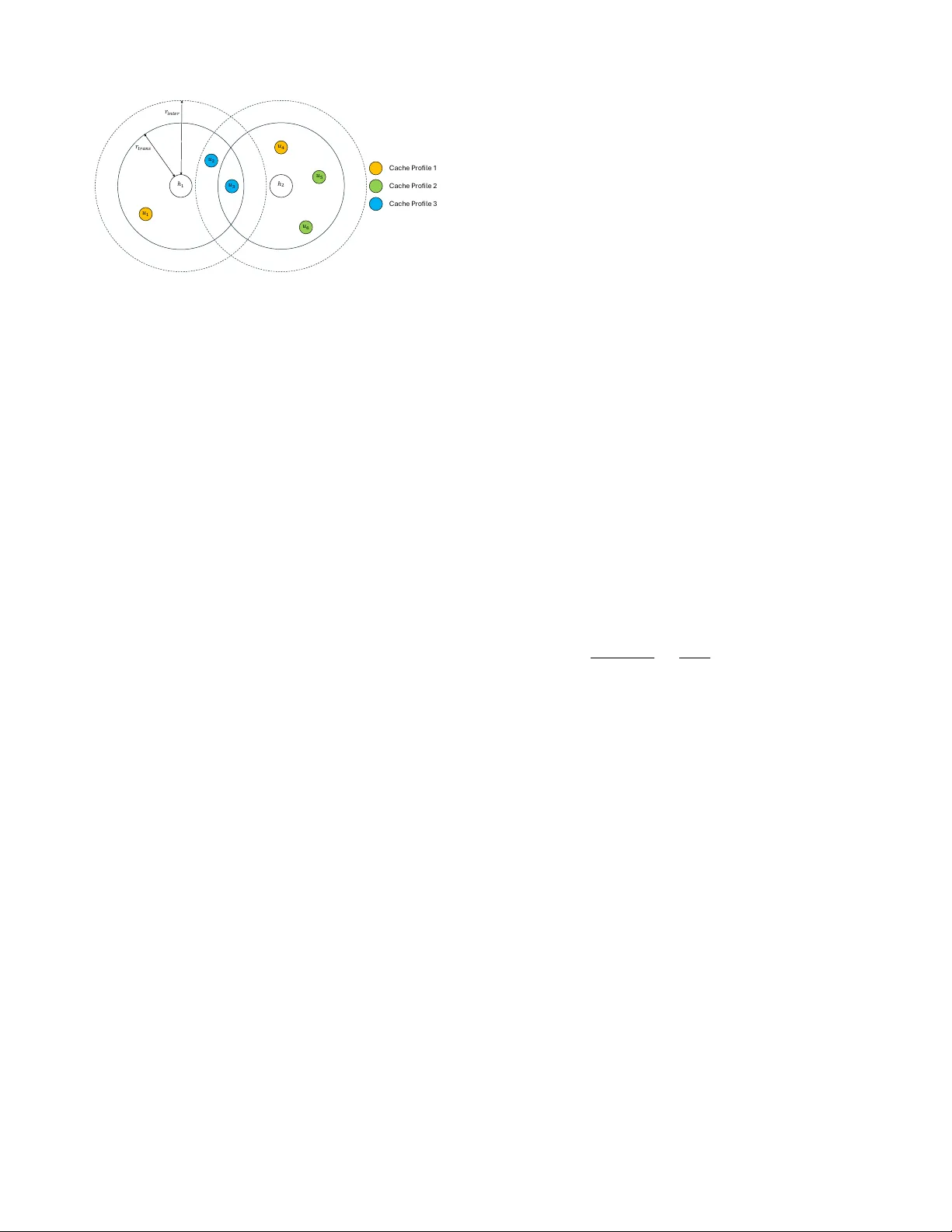

Coded caching (CC) exploits the cumulative cache memory at user devices, together with coding, to transform unicast traffic into multicast transmissions. While information-theoretic results show significant gains over uncoded caching for various network topologies, …

Authors: Kagan Akcay, MohammadJavad Salehi, Giuseppe Caire