FI-KAN: Fractal Interpolation Kolmogorov-Arnold Networks

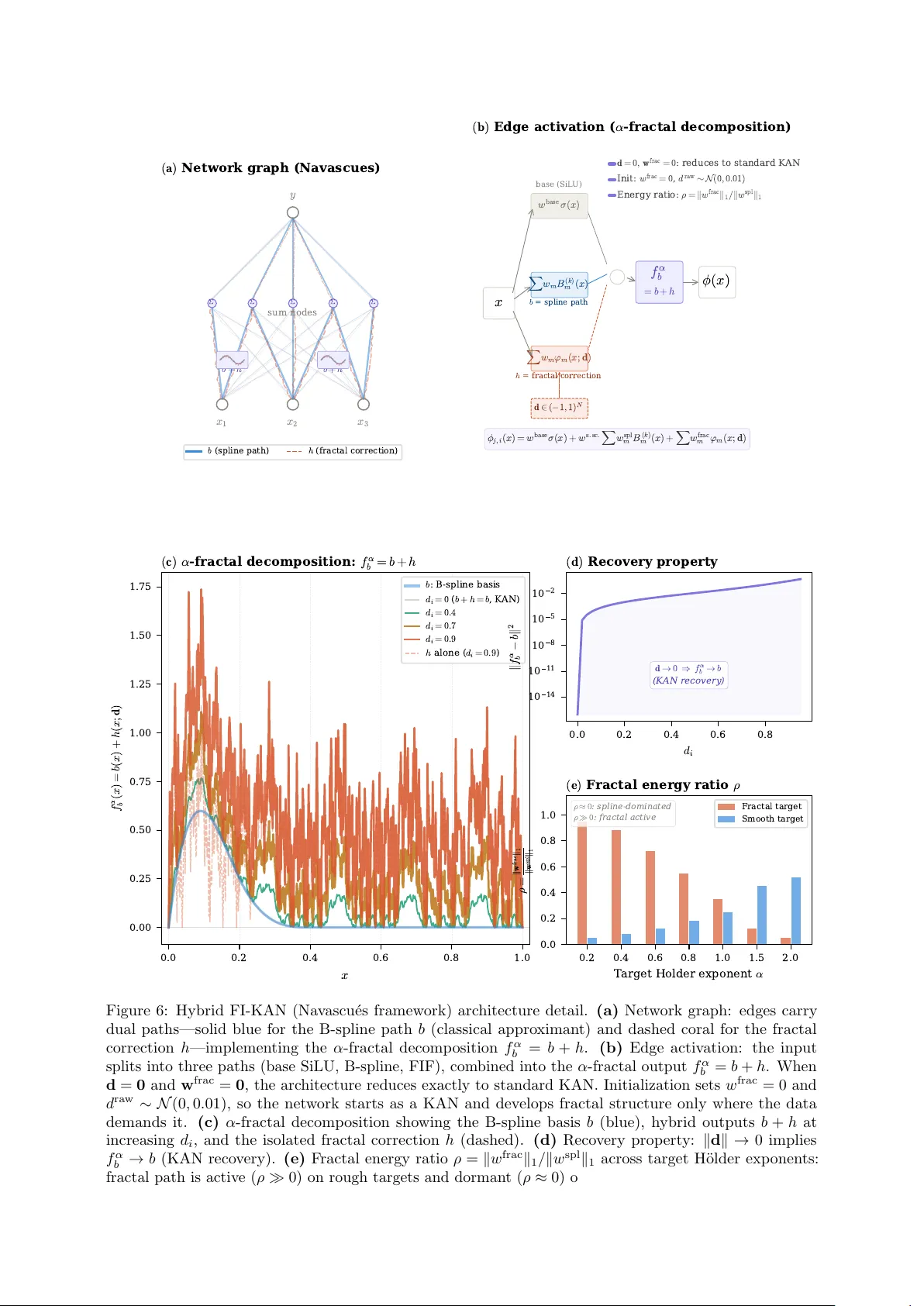

Kolmogorov-Arnold Networks (KAN) employ B-spline bases on a fixed grid, providing no intrinsic multi-scale decomposition for non-smooth function approximation. We introduce Fractal Interpolation KAN (FI-KAN), which incorporates learnable fractal inte…
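The abstract is cut off before it describes how FI-KAN builds its bases, so the sketch below does not reproduce the paper's method. As background only, it evaluates a classical affine fractal interpolation function (Barnsley's construction) on a grid by iterating the Read-Bajraktarevic operator. The vertical scaling factors `d` (each with |d_i| < 1) control how rough the curve is between the data points, and setting every d_i to 0 recovers ordinary piecewise-linear interpolation. The function name `fractal_interpolation` and its parameters (`num_points`, `iterations`) are hypothetical and chosen for illustration only.

```python
import numpy as np


def fractal_interpolation(xs, ys, d, num_points=1025, iterations=40):
    """Approximate a classical affine fractal interpolation function (FIF).

    Illustrative sketch only (hypothetical helper, not FI-KAN's implementation).
    xs, ys: N+1 interpolation points, xs strictly increasing.
    d: N vertical scaling factors, one per subinterval, each with |d_i| < 1.
    Returns a uniform grid on [xs[0], xs[-1]] and the FIF values on it.
    """
    xs = np.asarray(xs, dtype=float)
    ys = np.asarray(ys, dtype=float)
    d = np.asarray(d, dtype=float)
    assert len(d) == len(xs) - 1 and np.all(np.abs(d) < 1)

    x0, xN = xs[0], xs[-1]
    y0, yN = ys[0], ys[-1]
    span = xN - x0
    # Coefficients of the IFS maps w_i(x, y) = (a_i x + e_i, c_i x + d_i y + f_i),
    # chosen so that w_i sends the endpoints of the whole curve to points i-1 and i.
    a = (xs[1:] - xs[:-1]) / span
    e = (xN * xs[:-1] - x0 * xs[1:]) / span
    c = (ys[1:] - ys[:-1] - d * (yN - y0)) / span
    f = (xN * ys[:-1] - x0 * ys[1:] - d * (xN * y0 - x0 * yN)) / span

    grid = np.linspace(x0, xN, num_points)
    # Index i of the subinterval [x_i, x_{i+1}] that contains each grid point.
    idx = np.clip(np.searchsorted(xs, grid, side="right") - 1, 0, len(d) - 1)
    # Pull each grid point back to [x0, xN] through the inverse map L_i^{-1}.
    u = (grid - e[idx]) / a[idx]

    # Start from the piecewise-linear interpolant and apply the contraction
    # (T g)(x) = c_i u + d_i g(u) + f_i, which converges at rate max|d_i|.
    values = np.interp(grid, xs, ys)
    for _ in range(iterations):
        values = c[idx] * u + d[idx] * np.interp(u, grid, values) + f[idx]
    return grid, values
```

For example, `fractal_interpolation([0.0, 0.5, 1.0], [0.0, 1.0, 0.0], [0.4, -0.4])` still passes through the three data points but produces a self-affine, non-smooth curve between them, whereas `d = [0.0, 0.0]` returns the plain "hat" interpolant.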

Authors: Gnankan Landry Regis N'guessan