Categorical Perception in Large Language Model Hidden States: Structural Warping at Digit-Count Boundaries

Categorical perception (CP) -- enhanced discriminability at category boundaries -- is among the most studied phenomena in perceptual psychology. This paper reports that analogous geometric warping occurs in the hidden-state representations of large l…

Authors: Jon-Paul Cacioli

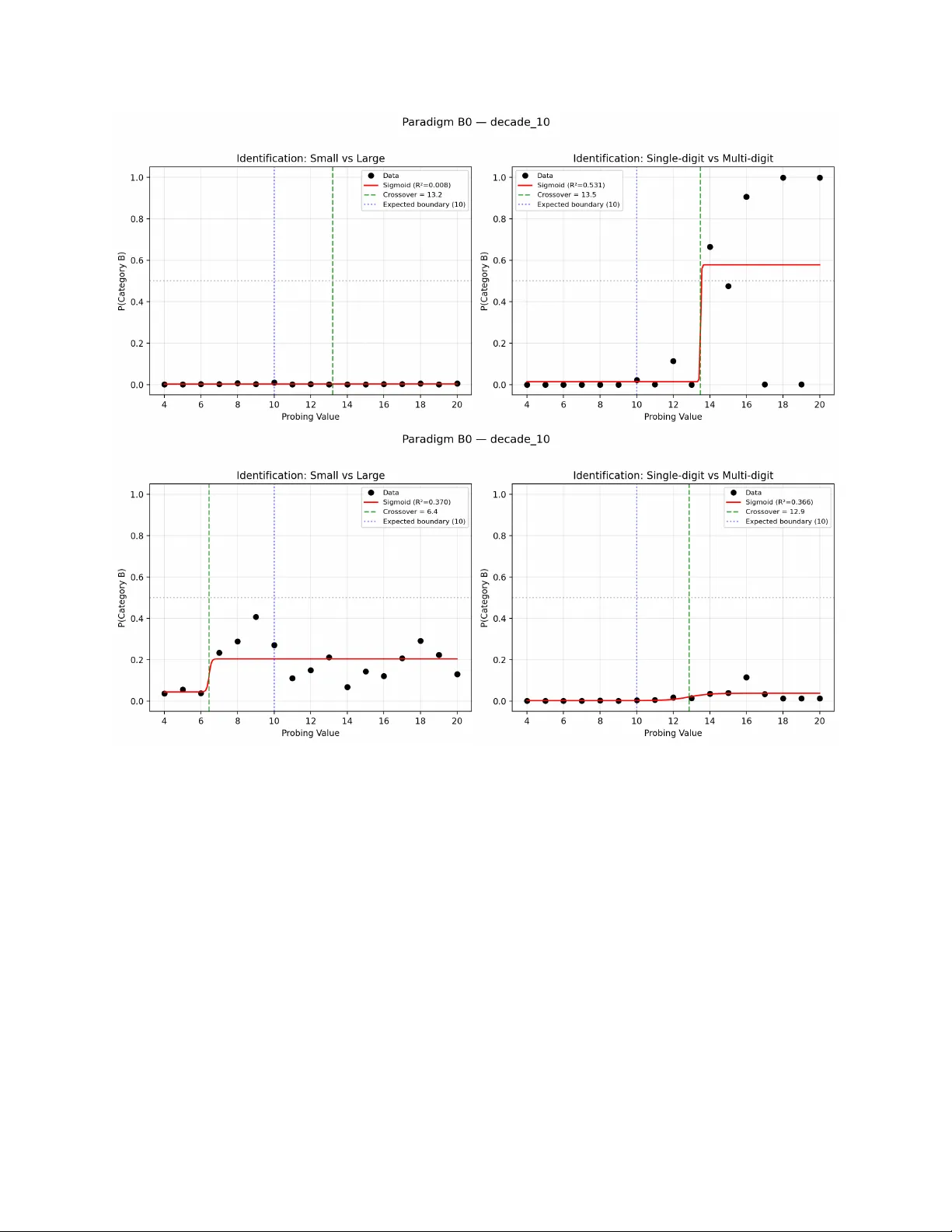

Independent Researcher, Melbourne, Australia
Classical Minds, Modern Machines

Abstract

Categorical perception (CP), enhanced discriminability at category boundaries, is among the most studied phenomena in perceptual psychology. This paper reports that analogous geometric warping occurs in the hidden-state representations of large language models (LLMs) processing Arabic numerals. Using representational similarity analysis across six models from five architecture families, the study finds that a CP-additive model (log-distance plus a boundary boost) fits the representational geometry better than a purely continuous model at 100% of primary layers in every model tested. The effect is specific to structurally defined boundaries (digit-count transitions at 10 and 100), absent at non-boundary control positions, and absent in the temperature domain, where linguistic categories (hot/cold) lack a tokenisation discontinuity. Two qualitatively distinct signatures emerge: “classic CP” (Gemma, Qwen), where models both categorise explicitly and show geometric warping, and “structural CP” (Llama, Mistral, Phi), where geometry warps at the boundary but models cannot report the category distinction. This dissociation is stable across boundaries and is a property of the architecture, not the stimulus. Structural input-format discontinuities are sufficient to produce categorical perception geometry in LLMs, independently of explicit semantic category knowledge.

Keywords: categorical perception, large language models, representational similarity analysis, numerical cognition, hidden states, tokenisation

1. Introduction

Categorical perception (CP) is the observation that stimuli varying continuously along a physical dimension are perceived as falling into discrete categories, with enhanced discriminability at category boundaries. It is one of the most extensively studied phenomena in perceptual psychology. First demonstrated for speech sounds by Liberman, Harris, Hoffman, and Griffith (1957), CP has since been documented across domains including colour (Winawer et al., 2007), facial expressions (Etcoff & Magee, 1992), and musical pitch (Burns & Ward, 1978). The canonical CP signature comprises three components: (a) a sharp identification function at the category boundary, (b) a peak in discrimination performance at the boundary that exceeds what the identification function alone would predict, and (c) representational warping, that is, increased perceptual distance between stimuli that straddle the boundary relative to equidistant within-category pairs (Harnad, 1987; Goldstone & Hendrickson, 2010).

The theoretical significance of CP lies in what it reveals about the relationship between continuous sensory input and categorical mental representations. Two classes of explanation dominate the literature. Under acquired CP accounts, experience with categorical distinctions warps the underlying perceptual space, such that the warping is a consequence of category learning (Goldstone, 1994). Under structural accounts, the warping reflects properties of the representational format itself: discontinuities in the input that impose categorical structure regardless of explicit category knowledge (McMurray, 2022). Distinguishing these accounts requires access to the representational geometry, which is difficult in biological systems but straightforward in artificial neural networks.

Large language models (LLMs) provide an ideal test case.
Their hidden-state representations are directly observable, their training data are characterisable, and their architectural properties (tokenisation, layer depth, attention structure) create well-defined representational discontinuities that can be manipulated experimentally. Arabic numerals are a particularly clean domain: the transition from single-digit to double-digit numbers (9 → 10) involves a simultaneous change in character count, token count (for most tokenisers), and lexical form, a structural discontinuity analogous to the voice onset time boundary in speech CP studies.

Recent work has established that LLMs encode numerical magnitude in their hidden states following a logarithmic compression consistent with Weber’s Law (Cacioli, 2026a). This geometry is present at all layers but is causally implicated only at early layers, a dissociation between representational structure and functional relevance that parallels findings in neuroscience (Zhu et al., 2025). Separately, Park, Yun, Lee, and Shin (2024, 2025) demonstrated that LLMs represent categorical concepts as polytope structures in hidden-state space, and Bonnasse-Gahot and Nadal (2022) showed theoretically that CP-like geometry (warping of the Fisher information metric at category boundaries) emerges in deep layers of trained neural networks regardless of output behaviour.

What has not been tested is whether the full CP signature (identification, discrimination, and representational warping evaluated jointly) appears in LLM hidden states for a stimulus domain with a well-defined structural boundary. The present study fills this gap, applying formal psychophysical methodology (representational similarity analysis, signal detection theory, counterbalanced forced-choice psychophysics) to the hidden states of six LLMs processing Arabic numerals.

1.1 The Present Study

We test categorical perception at digit-count boundaries (10 and 100) in six models from five architecture families. The design comprises five paradigms: Paradigm A (representational geometry via RSA), Paradigm B0 (identification), Paradigm B (discrimination), Paradigm C (precision gradient), and Paradigm E (causal intervention). Temperature serves as a cross-domain control: a continuous physical dimension with a linguistic category boundary (hot/cold) but no tokenisation discontinuity. A nonce-token remapping control tests whether the CP effect requires linguistic surface form or can be induced by ordinal information alone.

Eight hypotheses were pre-registered on the Open Science Framework (osf.io/qrxf3) prior to data collection, along with twelve exploratory analyses. The primary claim rests on representational geometry (Paradigm A), following the precedent of Park et al. (2024) and Bonnasse-Gahot and Nadal (2022), who made geometric CP claims without requiring behavioural convergence. The identification task (Paradigm B0) serves as a secondary analysis documenting the relationship between explicit categorisation and geometric structure. This extends the standard CP protocol by adding a representational criterion, justified by the unique advantage of LLM research: direct access to the representational geometry, which in human studies must be inferred indirectly from the relationship between identification and discrimination (McMurray, 2022).

2. Method

2.1 Models

Six models from five architecture families were tested, selected to maximise architectural diversity within the constraint of fitting in 16 GB VRAM (AMD RX 7900 GRE):

| Model | Parameters | Family | Role |
|---|---|---|---|
| Llama-3-8B-Instruct | 8.0B | Meta | Primary |
| Mistral-7B-Instruct-v0.3 | 7.2B | Mistral AI | Primary |
| Gemma-2-9B-IT | 9.2B | Google | Primary |
| Qwen2.5-7B-Instruct | 7.6B | Alibaba | Primary |
| Phi-3.5-mini-instruct | 3.8B | Microsoft | Primary (scale probe) |
| Llama-3-8B-Base | 8.0B | Meta | Exploratory (instruction-tuning control) |

All models were loaded in FP16 (BF16 for Gemma) with output_hidden_states=True. Phi-3.5-mini required a community fork (Lexius/Phi-3.5-mini-instruct) with manual DynamicCache compatibility patches for Transformers 5.0.0.

2.2 Stimuli

2.2.1 Numerical Domain (Primary)

Decade-10 condition. Seventeen probing values (4–20) spanning the single-digit/double-digit boundary at 10. The boundary is defined structurally by the digit-count transition, not empirically from identification performance (see §1.1 and v0.5 revision rationale). Four of the six models’ tokenisers exhibit a token-count discontinuity at this boundary: Gemma and Qwen encode single-digit numbers as one token and double-digit numbers as two tokens; Mistral and Phi show the same pattern offset by a leading-space token (2→3 tokens). Llama-3 (both instruct and base) is the exception: its BPE vocabulary includes merged tokens for common multi-digit numbers, so both single- and double-digit numbers are encoded as single tokens (e.g., “9”→[24], “10”→[605]). That Llama nonetheless shows strong CP geometry despite having no token-count discontinuity indicates that character-count and lexical-form changes, not token count per se, are sufficient to produce the boundary effect (see Supplementary Table S2 for exact token IDs across all models).
The 100-boundary involves an analogous transition (two-digit to three-digit character strings) and a token-count increase for Gemma, Qwen, Mistral, and Phi, producing the 3.9–12.7× effect-size amplification reported in §3.1.1.

Control-15 condition. Nine probing values (11–19) centred on 15, a non-boundary position in the same numerical range. If CP-like warping appears equally at 15 as at 10, the effect is general representational inhomogeneity, not categorical perception (H0 falsification control).

Decade-100 condition. Thirteen probing values (70–130) spanning the double-digit/triple-digit boundary at 100, with a matched control at 150 (Control-150: values 130–170).

Eight carrier sentences embedded each probing value in natural language contexts (e.g., “Approximately {N} cases were observed”). Following Rogers and Davis (2009), sentences were split: indices 0–3 for identification (Paradigm B0) and indices 4–7 for RSA centroids (Paradigm A), preventing repetition artefacts from contaminating the geometry.

2.2.2 Temperature Domain (Secondary)

Eighteen probing values (−20 to 100°C) spanning the hot/cold linguistic boundary (~22°C), with a control condition (35–51°C, no boundary). Temperature has a linguistic category distinction (hot/cold) but no tokenisation discontinuity: “21” and “23” tokenise identically. The temperature domain tests whether linguistic category knowledge alone, without structural input-format discontinuity, is sufficient to induce representational CP.

2.2.3 Nonce-Token Remapping Control (E10)

Seventeen nonce tokens (“glorp”, “blicket”, “tazmo”, “fenwick”, …) mapped to ordinal positions 1–17 (corresponding to values 4–20). Two conditions: nonce_no_order (nonce tokens embedded in carrier sentences with no ordering information) and nonce_ordered (a preamble establishing the complete ordering preceded each sentence).
Theoretical RDMs used ordinal position as the continuous baseline ($d_{ij} = |\mathrm{rank}_i - \mathrm{rank}_j|$), not log-magnitude. This control tests whether CP requires the linguistic/tokenisation structure of real numbers or can be induced by ordinal information alone.

2.3 Paradigm A: Representational Geometry (RSA)

For each probing value, hidden states were extracted at all layers (embedding + transformer layers) from four RSA carrier sentences (indices 4–7). Centroids were computed by averaging across sentences, yielding one representation per value per layer per model. Pairwise cosine distances between centroids formed the empirical representational dissimilarity matrix (RDM) at each layer. Euclidean distance was computed as a co-primary metric.

Five theoretical RDMs were compared:

1. Continuous (Weber/Log): $d_{ij} = |\log(x_i) - \log(x_j)|$
2. CP-Additive: $d_{ij} = |\log(x_i) - \log(x_j)| + \beta \cdot \mathbb{1}[\text{different category}]$ ($\beta = 1.0$ template)
3. CP-Multiplicative: $d_{ij} = |\log(x_i) - \log(x_j)| \cdot (1 + \beta \cdot \mathbb{1}[\text{different category}])$
4. Categorical: $d_{ij} = 0$ if same category, $1$ if different
5. Linear: $d_{ij} = |x_i - x_j|$

RSA was performed via Spearman rank correlation between empirical and theoretical RDMs, with significance assessed by Mantel permutation tests (10,000 permutations per layer). Layerwise multiple comparisons were corrected using the Benjamini-Hochberg false discovery rate (FDR) at α = .05. The pre-registered model comparison hierarchy was: (1) Primary: Continuous vs CP-Additive; (2) Mechanism: CP-Additive vs CP-Multiplicative.

2.4 Paradigm B0: Identification

Three identification framings were used per model: “small/large”, “single-digit/multi-digit”, and “one digit/two digits”. Each framing used a chat-templated forced-choice format with counterbalanced A/B option order (following Weber Appendix A methodology; Cacioli, 2026a). P(category_b) was computed as the average across both orders to correct for position bias.

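The Paradigm A model comparison of Section 2.3 can be sketched in a few lines. This is a minimal illustration, not the study's pipeline: the "empirical" RDM is a synthetic stand-in built from log-distance plus an assumed boundary boost of 0.8 and small noise, the permutation count is reduced for speed, and all function names are hypothetical.

```python
import math
import random

def log_rdm(values):
    """Continuous (Weber/log) template: d_ij = |log(x_i) - log(x_j)|."""
    return [[abs(math.log(a) - math.log(b)) for b in values] for a in values]

def cp_additive_rdm(values, boundary, beta=1.0):
    """CP-Additive template: log distance plus a fixed boost for cross-boundary pairs."""
    return [[abs(math.log(a) - math.log(b)) + beta * ((a < boundary) != (b < boundary))
             for b in values] for a in values]

def upper_triangle(rdm):
    n = len(rdm)
    return [rdm[i][j] for i in range(n) for j in range(i + 1, n)]

def ranks(xs):
    """Average ranks, so tied values share the mean of their positions."""
    order = sorted(range(len(xs)), key=lambda k: xs[k])
    out = [0.0] * len(xs)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and xs[order[j + 1]] == xs[order[i]]:
            j += 1
        for k in range(i, j + 1):
            out[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return out

def pearson(x, y):
    mx, my = sum(x) / len(x), sum(y) / len(y)
    num = sum((a - mx) * (b - my) for a, b in zip(x, y))
    den = math.sqrt(sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y))
    return num / den

def spearman_rdm(emp, theo):
    """Spearman correlation between the upper triangles of two RDMs."""
    return pearson(ranks(upper_triangle(emp)), ranks(upper_triangle(theo)))

def mantel_p(emp, theo, n_perm=1000, seed=42):
    """One-sided Mantel test: jointly permute rows and columns of the empirical RDM."""
    rng = random.Random(seed)
    observed = spearman_rdm(emp, theo)
    n, hits = len(emp), 0
    for _ in range(n_perm):
        p = list(range(n))
        rng.shuffle(p)
        permuted = [[emp[p[i]][p[j]] for j in range(n)] for i in range(n)]
        if spearman_rdm(permuted, theo) >= observed:
            hits += 1
    return (hits + 1) / (n_perm + 1)

values = list(range(4, 21))            # decade-10 probing values
noise = random.Random(0)
n = len(values)
empirical = [[0.0] * n for _ in range(n)]   # synthetic stand-in, NOT real hidden-state data
for i in range(n):
    for j in range(i + 1, n):
        d = abs(math.log(values[i]) - math.log(values[j]))
        d += 0.8 * ((values[i] < 10) != (values[j] < 10)) + noise.uniform(0, 0.02)
        empirical[i][j] = empirical[j][i] = d

rho_cont = spearman_rdm(empirical, log_rdm(values))
rho_cp = spearman_rdm(empirical, cp_additive_rdm(values, boundary=10))
print(f"continuous rho = {rho_cont:.3f}, CP-additive rho = {rho_cp:.3f}")
```

Because the synthetic matrix is constructed with a boundary boost, the CP-Additive template necessarily fits it better; the sketch only shows the mechanics of the comparison, with a real layer's centroid distances taking the place of `empirical`.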
Sigmoid functions were fit to each identification curve to estimate crossover point, slope, and R².

2.5 Paradigm B: Behavioural Discrimination

Forced-choice discrimination: “Which is larger: A) x₁ or B) x₂?” for 900 trials (3 position categories [cross-boundary, within-below, within-above] × 6 log-distance levels [0.10–0.60] × 50 pairs per cell, with ±10% jitter; collapsed to a 2 × 6 factorial [cross-boundary vs within-category × 6 distances] for analysis). Both option orders were tested (counterbalanced) with logit extraction for A/B tokens. The primary dependent variable was |Δlogit| (decision confidence), used as a reaction-time analogue following the rationale that models process all tokens in a single forward pass and confidence magnitude is the computational analogue of processing ease (Cacioli, 2026b).

2.6 Paradigm C: Precision Gradient

Local representational precision was defined as $1/\lVert h(n+1) - h(n) \rVert$ for adjacent probing values at each layer, following Cacioli (2026a). CP predicts a distance spike (precision dip) at the category boundary.

2.7 Paradigm E: Causal Intervention (E5)

A ridge-regression probe was trained on Paradigm A centroids predicting binary category membership (< 10 vs ≥ 10), defining the “category direction” at each layer. Direction validity was confirmed via PCA: PC1 correlation with category membership (mean = .83 at primary layers) and probe-PC1 cosine similarity. Activation patching was performed via PyTorch forward hooks: at each target layer, hidden states were modified in-place by adding $\alpha \cdot \lVert w \rVert \cdot v_{\text{cat}}$, where $v_{\text{cat}}$ is the unit category direction, $\lVert w \rVert$ is the probe weight norm, and $\alpha \in \{0.25, 0.50, 0.75, 1.00\}$. Ten random-direction controls (same norm) established the specificity baseline. The dependent variable was Δ(|Δlogit|), the change in discrimination confidence under patching.

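The patch construction in Section 2.7 amounts to simple vector arithmetic. The sketch below uses plain Python lists instead of PyTorch tensors and forward hooks, and the helper names are hypothetical; it shows only how a category-direction patch and a norm-matched random control could be built, assuming the probe weight vector is already available.

```python
import math
import random

def norm(v):
    return math.sqrt(sum(x * x for x in v))

def unit(v):
    n = norm(v)
    return [x / n for x in v]

def patch_vector(probe_w, alpha):
    """alpha * ||w|| * v_cat, where v_cat is the unit vector along the probe weights."""
    v_cat = unit(probe_w)
    scale = alpha * norm(probe_w)
    return [scale * x for x in v_cat]

def random_control(probe_w, alpha, rng):
    """Random Gaussian direction rescaled to the same norm as the category patch."""
    direction = unit([rng.gauss(0.0, 1.0) for _ in probe_w])
    scale = alpha * norm(probe_w)
    return [scale * x for x in direction]

def apply_patch(hidden, delta):
    """Add the patch to one hidden-state vector (returns a new list)."""
    return [h + d for h, d in zip(hidden, delta)]

rng = random.Random(42)
w = [3.0, 4.0]                                  # toy probe weights, ||w|| = 5
for alpha in (0.25, 0.50, 0.75, 1.00):
    cat = patch_vector(w, alpha)
    ctrl = random_control(w, alpha, rng)
    print(alpha, round(norm(cat), 6), round(norm(ctrl), 6))
```

Matching the control's norm to the category patch means any difference in Δ(|Δlogit|) between the two reflects direction rather than perturbation size.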
2.8 H4: Boundary Contribution to Representational Distance

Hierarchical regression at each primary layer tested whether boundary-crossing adds unique variance beyond log-distance. Step 1: regress empirical pairwise distances on $|\log(x_i) - \log(x_j)|$. Step 2: add a boundary-crossing indicator (1 if the pair straddles the boundary, 0 otherwise). ΔR² and the F-test for the addition of the boundary predictor are reported.

2.9 Pre-Registration and Statistical Analysis

All hypotheses and analysis pipelines were pre-registered on OSF (osf.io/qrxf3) prior to data collection. Confirmatory tests used Bonferroni correction within hypothesis families (α = .05). Effect sizes (Cohen’s d, R², Spearman ρ) are reported for all comparisons. Bootstrap 95% confidence intervals (10,000 resamples, seed = 42) are reported where applicable. All code, stimuli, and analysis scripts are available at https://anonymous.4open.science/r/weber-B02C (directory m3_pilot/). Seed = 42 throughout.

Pre-registered exclusion rules: If discrimination accuracy is at ceiling (≥ 99%) or chance (≤ 51%), d′ is uninformative and hypotheses contingent on d′ (H2, H2b, H3, H5) are declared not evaluable. If no identification framing produces a crossover within the probing range, the McMurray (2022) predicted-vs-observed test (H2b) is not evaluable.

3. Results

Results are organised by paradigm, following the analysis hierarchy specified in the pre-registration. Section 3.1 presents the primary confirmatory analysis (representational geometry). Sections 3.2–3.4 present secondary analyses (identification, discrimination, and precision gradient). Sections 3.5–3.7 present the three control conditions (non-boundary control, temperature domain, and nonce-token remapping). Section 3.8 presents the causal intervention. Section 3.9 summarises hypothesis outcomes.

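The two-step regression described in Section 2.8 can be illustrated as follows. This is a sketch under stated assumptions: the pairwise "distances" are synthetic (log-distance plus an assumed 0.5 boundary effect and Gaussian noise), ordinary least squares is solved by hand to avoid dependencies, and the function names are hypothetical.

```python
import math
import random

def solve(a, b):
    """Solve a small linear system by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for c in range(n):
        piv = max(range(c, n), key=lambda r: abs(m[r][c]))
        m[c], m[piv] = m[piv], m[c]
        for r in range(n):
            if r != c:
                f = m[r][c] / m[c][c]
                m[r] = [x - f * y for x, y in zip(m[r], m[c])]
    return [m[i][n] / m[i][i] for i in range(n)]

def r_squared(columns, y):
    """R^2 of an OLS fit of y on the given predictor columns plus an intercept."""
    X = [[1.0] + [col[i] for col in columns] for i in range(len(y))]
    k = len(X[0])
    xtx = [[sum(row[a] * row[b] for row in X) for b in range(k)] for a in range(k)]
    xty = [sum(row[a] * yi for row, yi in zip(X, y)) for a in range(k)]
    beta = solve(xtx, xty)
    pred = [sum(b * x for b, x in zip(beta, row)) for row in X]
    mean_y = sum(y) / len(y)
    ss_res = sum((yi - p) ** 2 for yi, p in zip(y, pred))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return 1.0 - ss_res / ss_tot

def hierarchical_step(log_dist, crosses, y):
    """Step 1: log-distance only. Step 2: add the boundary-crossing indicator."""
    r2_step1 = r_squared([log_dist], y)
    r2_step2 = r_squared([log_dist, crosses], y)
    delta = r2_step2 - r2_step1
    df_resid = len(y) - 3                      # intercept + two predictors
    f_stat = delta / ((1.0 - r2_step2) / df_resid)
    return r2_step1, r2_step2, delta, f_stat

values = list(range(4, 21))
rng = random.Random(42)
log_dist, crosses, y = [], [], []
for i, a in enumerate(values):
    for b in values[i + 1:]:
        ld = abs(math.log(a) - math.log(b))
        cross = float((a < 10) != (b < 10))
        log_dist.append(ld)
        crosses.append(cross)
        y.append(ld + 0.5 * cross + rng.gauss(0.0, 0.05))   # synthetic distances

r2_1, r2_2, delta_r2, f_stat = hierarchical_step(log_dist, crosses, y)
print(f"R2 step1 = {r2_1:.3f}, step2 = {r2_2:.3f}, dR2 = {delta_r2:.3f}, F = {f_stat:.1f}")
```

With one added predictor, the F statistic has 1 and n − 3 degrees of freedom, where n is the number of value pairs entering the regression.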
3.1 Representational Geometry: CP-Additive Dominates at All Layers (H1)

The primary confirmatory test compared five theoretical RDMs against empirical representational dissimilarity matrices at each layer of each model. Table 1 summarises the decade-10 condition (single-digit/double-digit boundary at 10).

Table 1. Paradigm A results: decade-10 boundary. CP-Additive vs Continuous comparison across six models. “CP > Cont” reports the number of primary layers where the CP-Additive model achieved higher Spearman ρ than the Continuous model. “Mean Δρ” is the mean difference across primary layers. All Mantel permutation tests (10,000 permutations) were significant at p < .001 at all primary layers after Benjamini-Hochberg FDR correction at α = .05.

| Model | Primary Layers | CP > Cont | Mean Δρ | Max ρ (CP-Add) | Max ρ (Cont) |
|---|---|---|---|---|---|
| Llama-3-8B-Instruct | 17/17 | 17/17 | +0.023 | 0.940 | 0.921 |
| Mistral-7B-Instruct | 17/17 | 17/17 | +0.060 | 0.929 | 0.889 |
| Gemma-2-9B-IT | 23/23 | 23/23 | +0.063 | 0.890 | 0.834 |
| Qwen2.5-7B-Instruct | 15/15 | 15/15 | +0.035 | 0.832 | 0.788 |
| Phi-3.5-mini | 17/17 | 17/17 | +0.087 | 0.698 | 0.600 |
| Llama-3-8B-Base | 17/17 | 17/17 | +0.032 | 0.956 | 0.927 |

CP-Additive achieved higher Spearman correlation with the empirical RDM than the Continuous model at 100% of primary layers for all six models (H1 supported). The pre-registered success criterion (≥ 50% of primary layers for ≥ 3/5 instruct models) was exceeded by the maximum possible margin. Figure 1 shows the empirical RDM for Llama-3-8B-Instruct at layer 16, illustrating the block structure at the 9/10 boundary.

The CP-Additive advantage is not a trivial consequence of adding a free parameter: the boundary boost was fixed at 1.0 (not fit to data), the effect was absent at non-boundary control positions (§3.5.1), absent in the temperature domain (§3.5.2), and the hierarchical regression (§3.1.2) confirmed that boundary-crossing contributes 5–27% unique variance beyond log-distance. This is a non-trivial geometric feature, not an artefact of model complexity.

The effect was robust to distance metric: Euclidean distance produced the same pattern (CP-Additive > Continuous at 100% of primary layers, all models; Supplementary Table S1). Euclidean max ρ for the CP-Additive model ranged from 0.702 (Phi) to 0.955 (Base).

Figure 1: Empirical representational dissimilarity matrix (RDM) for Llama-3-8B-Instruct at layer 16 (decade-10 condition, cosine distance). Each cell shows the pairwise cosine distance between hidden state centroids for two probing values. The block structure at the 9/10 boundary is visible as an abrupt increase in cross-boundary distances.

The pre-registered mechanism test compared CP-Additive (constant boundary boost) with CP-Multiplicative (proportional scaling). CP-Additive provided superior fit at a majority of primary layers in five of six models: Mistral (17/17), Gemma (22/23), Qwen (13/15), Phi (17/17), and Base (12/17). Llama-Instruct was split (8/17). This indicates that the boundary effect operates as a constant additive displacement in representational space rather than a proportional scaling of existing distances, consistent with the Fisher information warping predicted by Bonnasse-Gahot and Nadal (2022).

3.1.1 Replication at the 100-Boundary

The decade-100 condition (double-digit/triple-digit boundary at 100, probing values 70–130) replicated the decade-10 pattern (Table 1b). CP-Additive exceeded Continuous at 100% of primary layers for all six models, with substantially larger effect sizes.

Table 1b. Paradigm A results: decade-100 boundary and control-150. Same format as Table 1. E4 ratio = decade-100 mean CP advantage / decade-10 mean CP advantage.

| Model | CP > Cont (100) | Mean Δρ (100) | Max ρ (CP-Add) | Max ρ (Cont) | Ctrl-150 Δρ | E4 Ratio |
|---|---|---|---|---|---|---|
| Llama-3-8B-Instruct | 17/17 | +0.319 | 0.676 | 0.442 | +0.017 | 12.7× |
| Mistral-7B-Instruct | 17/17 | +0.268 | 0.323 | 0.136 | +0.042 | 3.9× |
| Gemma-2-9B-IT | 23/23 | +0.279 | 0.394 | 0.260 | +0.016 | 4.0× |
| Qwen2.5-7B-Instruct | 15/15 | +0.162 | 0.404 | 0.241 | +0.012 | 4.6× |
| Phi-3.5-mini | 17/17 | +0.476 | 0.580 | 0.177 | +0.012 | 4.6× |
| Llama-3-8B-Base | 17/17 | +0.305 | 0.619 | 0.338 | +0.016 | 8.8× |

The 100-boundary effect was 3.9–12.7× larger than the decade-10 effect across models (E4). The matched control at 150 (probing values 130–170) showed negligible CP advantage (Δρ = +0.012 to +0.042), far smaller than the decade-100 effects and smaller than each model’s decade-10 effect. The scaling of effect size with boundary magnitude is interpretable: the 100-boundary involves a transition from two-digit to three-digit numbers, which changes character count for all models and token count for Gemma, Qwen, Mistral, and Phi (§2.2.1), producing a larger structural discontinuity than the 10-boundary.

3.1.2 Hierarchical Regression (H4)

At each primary layer, pairwise representational distances were regressed on log-distance (Step 1), then a boundary-crossing indicator was added (Step 2). The boundary predictor added significant unique variance at 100% of primary layers for all six models (all F-test p < .001). Table 2 summarises the hierarchical regression results.

Table 2. H4 hierarchical regression: unique variance contributed by boundary-crossing beyond log-distance.

| Model | Sig layers | Mean ΔR² | Max ΔR² |
|---|---|---|---|
| Llama-3-8B-Instruct | 17/17 | 0.050 | 0.084 |
| Mistral-7B-Instruct | 17/17 | 0.145 | 0.200 |
| Gemma-2-9B-IT | 23/23 | 0.174 | 0.231 |
| Qwen2.5-7B-Instruct | 15/15 | 0.079 | 0.115 |
| Phi-3.5-mini | 17/17 | 0.267 | 0.331 |
| Llama-3-8B-Base | 17/17 | 0.067 | 0.113 |

Boundary-crossing explains 5–27% of variance in representational distance beyond what log-magnitude compression accounts for.
The proportionally largest ΔR² appears in Phi (0.267), which has the weakest overall geometry (max ρ = 0.747). The boundary effect is proportionally dominant in the smaller model even though its absolute geometry is attenuated.

3.2 Identification: Classic CP vs Structural CP (H8)

Three identification framings (“small/large”, “single-digit/multi-digit”, “one digit/two digits”) were administered to each instruct model using counterbalanced A/B forced-choice. Table 3 summarises identification outcomes using the digit_count framing, which produced the best signal across models.

Table 3. Paradigm B0 results: identification at the decade-10 boundary. “Boundary at 10?” indicates whether the identification function crossed 0.50 within ±1 step of the structural boundary.

| Model | Boundary at 10? | Pattern | CP Type |
|---|---|---|---|
| Llama-3-8B-Instruct | No | Gradient, never crosses 0.50 | Structural CP |
| Mistral-7B-Instruct | No | Extreme position bias; 0.50 after counterbalancing | Structural CP |
| Gemma-2-9B-IT | Yes | Sharp step at exactly 10 (slope = 6.3) | Classic CP |
| Qwen2.5-7B-Instruct | Yes | Step at 11–12 (shifted 1–2 steps from structural boundary) | Classic CP |
| Phi-3.5-mini | No | Flat at 0.50; no category signal | Structural CP |
| Llama-3-8B-Base | N/A | No chat template; raw-logit identification uninformative | Structural CP |

Two qualitatively distinct patterns emerged (Figure 3). Gemma and Qwen showed classic CP: both geometric warping and explicit categorisation at the boundary, with sharp sigmoid identification functions. Llama, Mistral, Phi, and the base model showed structural CP: geometric warping without explicit identification, in which the category boundary is imposed by representational format rather than explicit category knowledge.

Figure 2: Identification functions at the decade-10 boundary for two representative models. Top: Gemma-2-9B-IT shows a sharp step function crossing 0.50 at the structural boundary under the single-digit/multi-digit framing (classic CP).
Bottom: Llama-3-8B-Instruct shows a flat identification function that never crosses 0.50 under either framing (structural CP). Both models show comparable CP geometry (Table 1) despite the identification dissociation.

This dissociation was stable across boundaries: at the 100-boundary, the same models that failed to identify the 10-boundary also failed to identify the 100-boundary, and vice versa. The dissociation is therefore a property of the architecture, not the stimulus.

Cross-model correlation confirmed the dissociation: identification slope did not predict geometric CP strength (Spearman ρ = 0.14, p = .79, n = 6; E9). Models with the sharpest identification boundaries (Gemma: slope = 6.34) did not show stronger geometric CP than models with no identification at all (Phi: slope = 0.0, yet largest ΔR² = 0.331 in the hierarchical regression). This confirms that geometric CP and explicit identification are dissociated, consistent with H8.

Prompt robustness analysis (E6) revealed that only Qwen produced consistent crossover locations across all three framings (within 0.51 steps). Gemma and Phi showed framing-dependent boundaries (>2 steps disagreement), and Llama, Mistral, and the base model produced no crossover under any framing.

3.3 Behavioural Discrimination (H2)

3.3.1 Accuracy

All four capable instruct models (Llama, Mistral, Gemma, Qwen) achieved 99.9% accuracy on the forced-choice “Which is larger?” discrimination task. Phi-3.5-mini and Llama-3-8B-Base showed chance-level accuracy after counterbalancing (pure position bias). All instruct models except Phi exhibited strong first-option (A) bias prior to counterbalancing; Phi showed second-option (B) bias, an architecture-dependent effect that extends the position bias documented in Paradigm B0 (E12).
Per the pre-registered exclusion rule, d′ was uninformative and H2 (accuracy-based), H2b (McMurray strict test), H3 (meta-d′), and H5 (M-ratio) were declared not evaluable.

3.3.2 Confidence (RT Analogue)

Although accuracy was at ceiling, the magnitude of the decision signal, |Δlogit| between the chosen and unchosen option, varied systematically with boundary position (cf. Cacioli, 2026b, for the use of |Δlogit| as a confidence measure in LLM psychophysics). This is an operational proxy for the processing-ease signal measured by reaction time in human CP studies, not a behavioural equivalent; its validity rests on the assumption that larger representational distance at the decision layer produces larger logit separation, which is confirmed by the Paradigm A geometry. Cross-boundary pairs produced significantly higher confidence than within-category pairs at matched log-distances (Table 4).

Table 4. Paradigm B results: confidence-based discrimination. ΔConf = mean |Δlogit| for cross-boundary minus within-category pairs. Cohen’s d and Mann-Whitney U test for the confidence difference. “Sig levels” reports the number of log-distance bins (of 6) where the confidence difference reached significance at p < .05.

| Model | Conf(cross) | Conf(within) | ΔConf | Cohen’s d | p | Sig levels |
|---|---|---|---|---|---|---|
| Llama-3-8B-Instruct | 8.39 | 7.77 | +0.62 | 0.45 | <.001 | 4/6 |
| Mistral-7B-Instruct | 10.59 | 10.16 | +0.43 | 0.29 | <.001 | 3/6 |
| Gemma-2-9B-IT | 8.63 | 8.44 | +0.18 | 0.47 | <.001 | 4/6 |
| Qwen2.5-7B-Instruct | 20.94 | 20.70 | +0.23 | 0.23 | .001 | 2/6 |
| Phi-3.5-mini | 0.005 | 0.005 | +0.0003 | 0.09 | .38 | 1/6 |
| Llama-3-8B-Base | 0.075 | 0.061 | +0.015 | 0.55 | <.001 | 4/6 |

The confidence effect was significant for five of six models (all except Phi), with small-to-medium effect sizes (Cohen’s d = 0.23–0.55). This demonstrates that the geometric warping documented in Paradigm A propagates to behavioural output: the additive boundary boost in representational distance translates to larger evidence magnitude at the decision stage.

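The two statistics reported in Table 4 can be computed as in the sketch below. The exact variants used in the study are not stated in this section, so this assumes a pooled-standard-deviation Cohen's d and a Mann-Whitney U with a normal-approximation two-sided p-value without tie correction; the input numbers are toy values, not study data.

```python
import math

def cohens_d(a, b):
    """Cohen's d with pooled standard deviation (assumed variant)."""
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    va = sum((x - ma) ** 2 for x in a) / (len(a) - 1)
    vb = sum((x - mb) ** 2 for x in b) / (len(b) - 1)
    pooled = math.sqrt(((len(a) - 1) * va + (len(b) - 1) * vb) / (len(a) + len(b) - 2))
    return (ma - mb) / pooled

def mann_whitney_u(a, b):
    """U statistic for sample a (ties count 0.5) and a normal-approximation two-sided p."""
    u = sum(1.0 if x > y else 0.5 if x == y else 0.0 for x in a for y in b)
    n1, n2 = len(a), len(b)
    mu = n1 * n2 / 2
    sigma = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)   # no tie correction
    z = (u - mu) / sigma
    p = math.erfc(abs(z) / math.sqrt(2))              # two-sided tail probability
    return u, p

# Toy |delta-logit| values for cross-boundary and within-category pairs (illustrative only).
cross = [3.0, 4.0, 5.0]
within = [1.0, 2.0, 3.0]
d = cohens_d(cross, within)
u, p = mann_whitney_u(cross, within)
print(d, u, round(p, 3))
```

In practice each list would hold the counterbalanced |Δlogit| values for one model at matched log-distances, one entry per stimulus pair.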
The distance-controlled pattern was interpretable: the confidence difference was significant at larger log-distances (0.30–0.60) but not at the smallest distances (0.10–0.20), where pairs are close together regardless of boundary position.

Llama-3-8B-Base showed the largest effect size (d = 0.55) despite pure position bias at the accuracy level: the magnitude of its position-biased logit varied with boundary position, consistent with the Paradigm A finding that base and instruct models share categorical geometric structure.

Phi-3.5-mini showed no confidence effect (d = 0.09, p = .38), despite exhibiting geometric CP in Paradigm A (CP-Additive > Continuous at 17/17 primary layers). This dissociation between geometry and behavioural propagation further supports the geometry-function distinction: the additive boundary boost is present in the representational space of the smaller model but is insufficient, given Phi’s attenuated overall geometry (max ρ = 0.698) and near-zero confidence magnitudes (|Δlogit| ≈ 0.005), to propagate to the output layer as a measurable confidence difference.

3.4 Precision Gradient (Paradigm C)

Adjacent-value representational distance, the inverse of local precision $1/\lVert h(n+1) - h(n) \rVert$, showed a boundary-specific spike at 9→10 for all six models. Precision ratios (boundary distance / mean non-boundary distance) ranged from 1.42 (Phi) to 2.29 (Mistral) at the decade-10 boundary, compared with ratios near 1.0 (0.92–1.07) at the matched control position 15. This local expansion of representational distance at the boundary corresponds directly to the Fisher information peak predicted by Bonnasse-Gahot and Nadal (2022): in their framework, optimal categorisation warps the metric tensor of the representational space such that Fisher information is maximal at category boundaries.

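The precision ratio reported for Paradigm C can be sketched as follows. The hidden states here are toy two-dimensional vectors (log-magnitude plus an assumed 0.5 offset for values of 10 or more), Euclidean distance stands in for whatever norm the study used, and the function names are hypothetical.

```python
import math

def adjacent_distances(states):
    """Euclidean distance between hidden states of consecutive probing values."""
    return [math.dist(a, b) for a, b in zip(states, states[1:])]

def precision_ratio(values, states, position):
    """Distance of the step into `position` divided by the mean of all other steps."""
    dists = adjacent_distances(states)
    idx = values.index(position) - 1      # step from the previous value to `position`
    others = [d for k, d in enumerate(dists) if k != idx]
    return dists[idx] / (sum(others) / len(others))

def toy_states(values):
    # Toy stand-in for layer centroids: log-magnitude axis plus a digit-count offset.
    return [[math.log(v), 0.5 if v >= 10 else 0.0] for v in values]

decade_values = list(range(4, 21))        # decade-10 condition
control_values = list(range(11, 20))      # control-15 condition

boundary_ratio = precision_ratio(decade_values, toy_states(decade_values), 10)
control_ratio = precision_ratio(control_values, toy_states(control_values), 15)
print(f"boundary ratio = {boundary_ratio:.2f}, control ratio = {control_ratio:.2f}")
```

A ratio well above 1 at the boundary alongside a ratio near 1 at the control position is the pattern the section describes; on real data, `toy_states` would be replaced by the per-layer centroids.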
The precision gradient measured here is an empirical proxy for this metric warping: it quantifies the local expansion of representational space relative to the input space at the boundary, as their theory predicts.

3.5 Control Conditions

Both geometric and behavioural CP measures used in this study are analytically invariant to the sampling temperature parameter: hidden states are computed deterministically from fixed inputs (temperature affects only the softmax distribution over next-token probabilities), and |Δlogit| is defined on raw logits, which are unaffected by temperature scaling (E2).

3.5.1 Non-Boundary Control (H0)

At the control position (15), the Continuous model consistently outperformed CP-Additive (negative CP advantage for all six models). The warping is specific to structurally defined boundaries and absent at arbitrary non-boundary positions within the same numerical range (H0 not falsified). At control position 150, CP advantages were negligible (+0.012 to +0.042; see Table 1b), far smaller than the decade-100 effects (+0.162 to +0.476) and comparable in magnitude to the temperature domain negatives, confirming that the large 100-boundary effects are boundary-specific.

3.5.2 Temperature Domain (H6)

The temperature domain (−20 to 100°C, hot/cold linguistic boundary at ~22°C) showed negative CP advantage for all six models (Table 5b). The Continuous model outperformed CP-Additive at the hot/cold boundary, and the temperature control condition (boundary at 43°C, no linguistic distinction) showed equally negative advantages.

Table 5b. Temperature domain RSA results (H6). CP advantage is the mean Δρ (CP-Additive − Continuous) across primary layers. Negative values indicate Continuous fits better.

| Model | Hot/cold CP wins | Hot/cold CP advantage | Temp ctrl CP advantage |
|---|---|---|---|
| Llama-3-8B-Instruct | 0/17 | −0.062 | −0.062 |
| Mistral-7B-Instruct | 1/17 | −0.038 | −0.061 |
| Gemma-2-9B-IT | 1/23 | −0.027 | −0.054 |
| Qwen2.5-7B-Instruct | 4/15 | −0.009 | −0.063 |
| Phi-3.5-mini | 0/17 | −0.039 | −0.055 |
| Llama-3-8B-Base | 0/17 | −0.069 | −0.065 |

Despite having a linguistic category distinction, temperature lacks a tokenisation discontinuity ("21" and "23" tokenise identically) and therefore does not produce representational warping. This is a key positive null: it demonstrates that LLM categorical perception requires structural input-format discontinuity and is not induced by linguistic category knowledge alone (H6 not supported).

3.5.3 Nonce-Token Remapping Control (E10)

The nonce-token experiment tested whether categorical geometry can be induced by ordinal information alone, without the linguistic surface form of real numbers. Seventeen nonce tokens ("glorp", "blicket", "tazmo", …) were mapped to ordinal positions 1–17 in two conditions: no ordering information (nonce_no_order) and an explicit preamble establishing the ordering (nonce_ordered). Table 5 summarises E10 results.

Table 5. E10 nonce-token remapping control: CP-Additive advantage across conditions and models.

| Model | nonce_no_order | nonce_ordered | decade_10 |
|---|---|---|---|
| Llama-3-8B-Instruct | −0.010 | +0.012 | +0.023 |
| Mistral-7B-Instruct | −0.002 | +0.005 | +0.060 |
| Gemma-2-9B-IT | +0.004 | +0.004 | +0.063 |
| Qwen2.5-7B-Instruct | −0.010 | +0.017 | +0.035 |
| Phi-3.5-mini | −0.023 | +0.007 | +0.087 |
| Llama-3-8B-Base | −0.008 | +0.004 | +0.032 |

nonce_no_order: Clean null. No significant geometry and no CP at the arbitrary boundary position. Mean CP advantage was negative or negligible for all models (−0.023 to +0.004). The control works as designed.

nonce_ordered: Ordinal geometry emerged strongly, with a small but consistent CP advantage at the boundary (mean +0.004 to +0.017 across models). However, this CP effect was 3–10× smaller than the decade-10 effect and 10–100× smaller than the decade-100 effect.
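The CP advantage values reported in Tables 5 and 5b are differences in fit between a CP-Additive model (log-distance plus a boundary boost) and a Continuous model (log-distance only). The sketch below shows one way such a comparison could be computed for a single layer. It is an illustration under stated assumptions: the function names are hypothetical, the boost is a fixed constant, and Pearson correlation over number pairs stands in for whatever fit statistic the study used.

```python
import numpy as np

def pair_features(numbers, boundary):
    """Log-distance and boundary-crossing indicator for every number pair."""
    n = np.asarray(numbers, dtype=float)
    i, j = np.triu_indices(len(n), k=1)
    log_dist = np.abs(np.log(n[i]) - np.log(n[j]))
    # 1.0 when the two numbers fall on opposite sides of the boundary
    crosses = ((n[i] < boundary) != (n[j] < boundary)).astype(float)
    return log_dist, crosses

def cp_advantage(neural_dist, numbers, boundary, boost=0.5):
    """Fit of the CP-Additive model minus fit of the Continuous model.

    neural_dist: pairwise hidden-state distances in the same upper-triangle
    order as pair_features. boost is an assumed fixed constant.
    """
    log_dist, crosses = pair_features(numbers, boundary)
    fit_continuous = np.corrcoef(log_dist, neural_dist)[0, 1]
    fit_additive = np.corrcoef(log_dist + boost * crosses, neural_dist)[0, 1]
    return float(fit_additive - fit_continuous)

numbers = np.arange(4, 16)                    # spans the 9 -> 10 boundary
log_dist, crosses = pair_features(numbers, boundary=10)

warped = log_dist + 0.5 * crosses             # toy geometry with a boundary boost
adv_warped = cp_advantage(warped, numbers, boundary=10)

smooth = log_dist                             # toy geometry with no boundary effect
adv_smooth = cp_advantage(smooth, numbers, boundary=10)
```

In this toy setting the advantage is positive when the distances contain an additive boundary term and negative when they follow log-distance alone, which matches the sign convention of the tables.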
E10 establishes a three-level hierarchy (Figure 5). Without ordering information, nonce tokens produce no geometry and no CP. With ordering information, models build ordinal representations from context alone, with a weak CP-like effect at the boundary position. With real numbers, the tokenisation and digit-count discontinuity amplifies the boundary effect by an order of magnitude. The linguistic surface form is the amplifier, not the sole cause.

Figure 3: E10 nonce-token remapping control: three-level hierarchy. Mean CP advantage for nonce tokens without ordering information (grey), nonce tokens with explicit ordering (blue), and real Arabic numerals at the decade-10 boundary (red). Real numbers produce 3–10× larger CP effects than ordered nonce tokens, and nonce tokens without ordering produce no CP.

A notable case is Phi-3.5-mini, which achieved near-perfect ordinal geometry in the nonce_ordered condition (maximum RSA fit = 0.97 for the Continuous model), yet its CP advantage was only +0.007. This dissociation between geometry strength and CP magnitude demonstrates that strong ordinal geometry does not automatically produce categorical warping; the warping requires a structural discontinuity.

3.6 Instruction-Tuning Control (H7)

Llama-3-8B-Base showed nearly identical CP geometry to Llama-3-8B-Instruct (CP advantage +0.032 vs +0.023; precision ratio 1.71 vs 1.57). The base model achieved the highest maximum RSA fit in the study (CP-Additive = 0.955), confirming that categorical geometric structure is present in pretrained representations and is not introduced by instruction tuning (H7 supported). Where base and instruct models diverge is in identification and discrimination behaviour: the base model cannot categorise or compare numbers in forced-choice tasks (no chat template, pure position bias at the accuracy level), yet its representational geometry contains the same categorical signature.
This is a dissociation between representational structure and behavioural competence.

3.7 Layerwise Profile (E1) and Local Manifold Analysis (E7, E8)

CP geometry (the CP-Additive advantage over Continuous) emerged at primary layers and was absent at early and late layers for all models (E1; Figure 2). The boundary position induced a local manifold rotation: the first principal component of the local neighbourhood rotated 81.6–89.6° at the decade-10 boundary relative to non-boundary positions (E7; range across models: Mistral 81.6°, Gemma 82.3°, Qwen 86.5°, Llama-Base 88.8°, Llama-Instruct 89.1°, Phi 89.6°). Phase-reset analysis confirmed that representational similarity trajectories showed a significant discontinuity at the boundary (Mann-Whitney p < .01, all models; mean ratio of boundary-to-non-boundary distance: 1.51–2.37; E8).

The relationship between CP strength and global compression quality varied across models: larger models (Gemma, Mistral, Llama-Base) showed anticorrelation at the decade-100 boundary (correlation = −0.30 to −0.79), suggesting that CP locally disrupts the continuous magnitude geometry, while Phi showed strong positive correlation (+0.88 to +0.99), consistent with the boundary effect being the dominant geometric feature in the smaller model (E11).

Figure 4: Layerwise CP-Additive advantage (CP-Additive fit minus Continuous fit) across all layers for six models at the decade-10 boundary. The shaded region indicates the approximate primary layer range. All models show a positive advantage at primary layers. Llama-3-8B-Base (dashed) shows comparable CP geometry to its instruct counterpart.

3.8 Causal Intervention (E5)

Activation patching tested whether the categorical geometry identified in Paradigm A is causally implicated in discrimination behaviour. This analysis was performed on Llama-3-8B-Instruct as a proof-of-concept; full cross-architecture replication is left for future work.
A ridge-regression probe trained on RSA centroids (probe accuracy = 1.00 at all primary layers; PC1–category correlation: mean = .83) defined the "category direction" at each layer. Patching along this direction at graded dose levels (0.25, 0.50, 0.75, 1.00) produced large, specific, dose-dependent changes in discrimination confidence at early layers but negligible effects at mid-to-late layers where CP geometry is strongest (Table 6; Figure 4).

Table 6. E5 causal intervention results (Llama-3-8B-Instruct). |Δconf| = absolute change in discrimination confidence under category-direction patching. Specificity = ratio of category-direction effect to mean random-direction effect (10 random controls).

| Layer | Depth | Cat \|Δconf\| | Rand \|Δconf\| | Specificity (×) | Dose-response |
|---|---|---|---|---|---|
| 5 | Early | 0.621 | 0.009 | 70.1 | Monotonic |
| 8 | Early-primary | 0.242 | 0.006 | 43.6 | Monotonic |
| 16 | Mid-primary | 0.018 | 0.002 | 10.8 | Monotonic |
| 23 | Near peak RSA | 0.058 | 0.002 | 34.3 | Monotonic |
| 27 | Late | 0.010 | 0.001 | 12.1 | Non-monotonic |

Figure 5: Causal intervention via activation patching (E5, Llama-3-8B-Instruct). (A) Dose-response: absolute change in discrimination confidence (|Δconf|) as a function of patching dose for five layers. Early layers (5, 8) show strong, monotonic, dose-dependent effects; mid-to-late layers (16, 23, 27) are flat near the random direction band. (B) Specificity (category direction effect divided by random direction effect) at a fixed dose across layers.

The absolute effect of category-direction patching dropped by approximately 60× from layer 5 (|Δconf| = 0.621) to layer 16 (|Δconf| = 0.018). The category direction at early layers, where geometry is still forming, produced large, specific, dose-dependent changes in discrimination confidence. At mid-to-late layers where CP geometry peaks, patching produced negligible effects despite remaining direction-specific (specificity 10–34×).
This pattern replicates exactly the dissociation found in the Weber study (Cacioli, 2026a) for magnitude representations: early layers are causally implicated, late peak-RSA layers are causally inert.

A sign reversal across layers was observed: layer 5 patching increased confidence (+0.621), while layer 8 patching decreased it (−0.242). Both effects were monotonic and highly specific, indicating that the category direction has opposite functional roles at different depths.

This suggests a programme-level principle: representational structure and functional relevance dissociate across depth. The layer with the most interpretable geometry, the one a researcher would be most tempted to analyse, is not the layer performing the computational work. This holds for both magnitude (Cacioli, 2026a) and category (present study).

3.9 Hypothesis Summary

Table 7 summarises outcomes for all pre-registered hypotheses.

Table 7. Pre-registered hypothesis outcomes.

| Hypothesis | Outcome | Key Evidence |
|---|---|---|
| H0 (Falsification) | Not falsified | Control positions (15, 150) show no CP |
| H1 (Representational warping) | Supported | CP-Add > Cont at 100% of primary layers, all 6 models |
| H2 (Behavioural d′) | Not evaluable | Ceiling accuracy (pre-registered exclusion) |
| H2b (McMurray strict test) | Not evaluable | No clean identification boundary in most models |
| H3 (Meta-d′) | Not evaluable | Ceiling accuracy (pre-registered exclusion) |
| H4 (Boundary contribution) | Supported | ΔR² = 0.05–0.27 at 100% of layers, all models |
| H5 (M-ratio) | Not evaluable | Ceiling accuracy (pre-registered exclusion) |
| H6 (Cross-domain) | Not supported | Temperature shows no CP (positive null) |
| H7 (Instruction-tuning) | Supported | Base ≈ Instruct for geometry; diverge for behaviour |
| H8 (ID-geometry dissociation) | Supported | Gemma/Qwen = classic CP; Llama/Mistral/Phi = structural CP |

Four hypotheses were supported (H1, H4, H7, H8). One hypothesis yielded an informative negative (H6).
Four hypotheses were not evaluable due to pre-registered exclusion criteria (ceiling accuracy in the discrimination task). All twelve pre-registered exploratory analyses were completed.

4. Discussion

The present study applied formal psychophysical methodology (representational similarity analysis, signal detection theory, counterbalanced forced-choice psychophysics, and causal intervention) to the hidden states of six large language models processing Arabic numerals. The results establish that categorical perception, one of the most extensively studied phenomena in perceptual psychology, has a structural analogue in artificial neural networks. This section considers what the findings mean for theories of categorical perception, what they reveal about the relationship between representational structure and behavioural competence, and what methodological implications they carry for the study of both biological and artificial cognition.

4.1 Categorical Perception Without Category Knowledge

The central finding is that all six models show categorical warping at digit-count boundaries (CP-Additive outperforms Continuous at 100% of primary layers, with the effect scaling by 4–13× at the larger boundary), yet only two of six models can explicitly identify the category distinction when asked. This dissociation between representational structure and explicit categorisation (H8) is the most theoretically consequential result.

In the human CP literature, the relationship between identification and discrimination has been treated as definitional. McMurray (2022) argued forcefully that many putative demonstrations of CP fail his strict test: observed discrimination must exceed what is predicted from the identification function alone. The logic of this test presupposes that the only evidence for categorical structure is behavioural, because in human studies the representational geometry is not directly observable.
LLMs extend this framework. Direct access to the geometry reveals that categorical warping is present in models that cannot report the category. The four "structural CP" models (Llama-Instruct, Mistral, Phi, Llama-Base) exhibit the full geometric signature (additive boundary boost, precision gradient spike, manifold rotation of 82–90° at the boundary, phase-reset discontinuity) without any ability to perform the identification task. This is not a failure of the CP paradigm; it is a dissociation that McMurray's framework, designed for systems where representations are opaque, was not positioned to detect.

The implication for cognitive science is that the standard two-task criterion (identification + discrimination) is sufficient but not necessary for establishing CP. When representational geometry is directly accessible, geometric warping at the boundary is itself evidence for categorical structure, regardless of whether the system can report the category. This aligns with the theoretical positions of Bonnasse-Gahot and Nadal (2022), who showed that Fisher information warping at category boundaries emerges in deep layers of trained neural networks regardless of output behaviour, and Park et al. (2024), who demonstrated categorical polytope structure without requiring identification tasks. The present study provides the first empirical demonstration that this representational-geometric form of CP appears alongside, and dissociates from, the classical behavioural form within the same set of systems.

4.2 Structural vs Acquired Categorical Perception

The distinction between structural and acquired CP (between warping imposed by properties of the representational format and warping that emerges from category learning) has been difficult to adjudicate in biological systems because the two sources of warping are typically confounded.
Humans who learn colour categories also have retinal cone tuning curves; humans who learn phoneme categories also have cochlear filter banks. Input structure and learned categorisation covary. LLMs offer a cleaner separation. Three converging results establish that the CP documented here is primarily structural rather than acquired.

First, the temperature domain control (H6). Temperature has a linguistic category boundary (hot/cold) but no tokenisation discontinuity: "21°C" and "23°C" tokenise identically. If CP were driven primarily by learned semantic categories, temperature should show at least some warping. It does not. CP advantage was negative for all six models in the temperature domain (−0.009 to −0.069), compared with positive advantages of +0.023 to +0.087 for numbers. This is consistent with the structural account, though it should be noted that "hot/cold" is a relatively fuzzy, context-dependent boundary; a sharper linguistic category (e.g., grammatical number) would provide a stronger test.

Second, the nonce-token remapping control (E10). Nonce tokens mapped to ordinal positions with an explicit ordering preamble produced a weak CP effect (CP advantage = +0.004 to +0.017), but this was 3–10× smaller than the decade-10 effect and 10–100× smaller than the decade-100 effect. Ordinal context alone can induce a trace of boundary warping, likely reflecting the model's ability to learn weak categorical structure from in-context ordinal information, but the tokenisation and digit-count discontinuity amplifies this effect by an order of magnitude. The Phi dissociation is particularly informative: near-perfect ordinal geometry (maximum RSA fit = 0.97) with negligible CP advantage (+0.007), demonstrating that ordered representations do not automatically produce categorical warping. Strong ordinal geometry is not sufficient; the structural discontinuity in the input format is required to produce the large effects documented for real numbers.
Third, the instruction-tuning control (H7). Llama-3-8B-Base and Llama-3-8B-Instruct show nearly identical CP geometry (CP advantage = +0.032 vs +0.023; the base model actually achieves the highest maximum RSA fit in the study). The categorical structure is present in pretrained representations before any instruction tuning: it is a property of how the model encodes the distributional statistics of digit strings, not a product of explicit category instruction.

Together, these three controls converge on the same conclusion: structural properties of the input (tokenisation boundaries, digit-count transitions, character-length changes) are sufficient to produce categorical perception geometry in LLMs, and the effect does not require learned semantic category knowledge. This does not mean that all CP is structural, nor that semantic categories could never produce CP under different conditions. The classic/structural CP dissociation (H8) suggests that instruction tuning can add an explicit categorisation capacity on top of the structural geometry, producing the full CP signature in some architectures (Gemma, Qwen) but not others. The structural warping, however, is universal.

This result has implications beyond LLMs. It provides a proof of concept that categorical perception can arise from representational format constraints without category learning, a possibility that has been theorised (McMurray, 2022; Massaro, 1987) but never demonstrated in a system where the geometry, the training data, and the input format are all simultaneously observable.

4.3 The Geometry-Function Dissociation

The causal intervention (E5) revealed a dissociation between representational structure and functional relevance that replicates across both the magnitude (Cacioli, 2026a) and category domains.
At early layers (5 and 8), patching along the category direction produced large, specific, dose-dependent changes in discrimination confidence (|Δconf| up to 0.621, specificity 44–70× above random controls). At mid-to-late layers where CP geometry peaks (layers 16–27), patching produced negligible effects (|Δconf| = 0.01–0.06) despite remaining direction-specific.

This pattern is problematic for the common interpretability practice of identifying the layer with the strongest probe accuracy or the highest RSA fit and declaring it the "representation layer" for a given concept. The layer with the most interpretable geometry is not the layer performing the computation. This is not a null result (the geometry is real, and it is causally grounded at early layers), but it means that the computational work happens where the geometry is still forming, not where it has crystallised into its most legible form.

The sign reversal at layers 5 and 8 (positive and negative effects on confidence, respectively) further suggests that the category direction plays functionally different roles at different depths. This is consistent with recent work on superposition and polysemanticity in neural network representations (Elhage et al., 2022): the same geometric feature may support different computations at different layers, and a single "category direction" extracted by a linear probe may conflate functionally distinct subspaces.

For the CP literature, this finding complicates the use of representational similarity analysis as a standalone measure of categorical structure. RSA can identify where CP geometry is present, but not where it matters. Causal methods are required to establish functional relevance, a lesson that applies equally to neuroimaging studies of human categorical perception, where the distinction between representational presence and causal relevance is often elided.
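The two quantities discussed in this section, the dose-response of patching and its specificity relative to random directions, can be illustrated with a toy linear model. The sketch below is an assumption-laden illustration rather than the study's procedure: the patching rule (adding a scaled unit vector), the linear confidence readout, and all names are hypothetical.

```python
import numpy as np

def patch_along(hidden, direction, dose, shift=1.0):
    """Move a hidden state along a unit direction by dose * shift (assumed rule)."""
    unit = direction / np.linalg.norm(direction)
    return hidden + dose * shift * unit

def confidence(hidden, readout):
    """Toy linear stand-in for the model's discrimination confidence."""
    return float(hidden @ readout)

def patch_effect(hidden, readout, direction, dose):
    """Absolute change in confidence caused by patching along a direction."""
    patched = patch_along(hidden, direction, dose)
    return abs(confidence(patched, readout) - confidence(hidden, readout))

def specificity(hidden, readout, category_dir, dose, n_random=10, seed=0):
    """Category-direction effect divided by the mean random-direction effect."""
    rng = np.random.default_rng(seed)
    cat = patch_effect(hidden, readout, category_dir, dose)
    rand = [
        patch_effect(hidden, readout, rng.standard_normal(hidden.shape[0]), dose)
        for _ in range(n_random)
    ]
    return cat / float(np.mean(rand))

rng = np.random.default_rng(1)
dim = 64
hidden = rng.standard_normal(dim)
readout = rng.standard_normal(dim)
readout /= np.linalg.norm(readout)
category_dir = readout.copy()   # toy case: the direction is fully read out

doses = [0.25, 0.50, 0.75, 1.00]
effects = [patch_effect(hidden, readout, category_dir, d) for d in doses]
spec = specificity(hidden, readout, category_dir, dose=1.0)
```

In this toy case the effect grows monotonically with dose and the specificity exceeds 1 because random directions overlap only weakly with the readout. A layer where the category direction is present but not read out downstream would show small effects at every dose, which is the pattern reported for mid-to-late layers.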
4.4 Additive vs Multiplicative Warping

The pre-registered mechanism test established that the boundary effect is additive rather than multiplicative: a constant displacement in representational distance at the boundary, not a proportional scaling of existing distances. Five of six models favoured the additive model; only Llama-Instruct was split.

This finding connects to the theoretical framework of Bonnasse-Gahot and Nadal (2022), who derived that optimal categorisation produces a local spike in Fisher information at the category boundary, a phenomenon that manifests geometrically as a local additive increase in representational distance. The additive nature of the effect is important because it means the boundary operates as an independent structural feature layered on top of the continuous magnitude geometry, rather than modulating that geometry. This is consistent with the hierarchical regression results (H4), where boundary-crossing explained 5–27% of unique variance beyond log-distance: the boundary adds information to the geometry rather than distorting the information already present.

4.5 Implications for Theories of Categorical Perception

The present study sits at the intersection of classical psychophysics, computational neuroscience, and AI interpretability. Three sets of implications follow.

From cognitive science to AI: The psychophysical toolkit (identification functions, forced-choice discrimination with SDT, representational similarity analysis, precision gradients) provides a rigorous framework for characterising how neural networks represent categorical structure. This framework goes beyond the binary "does the model know X?" question typical of NLP evaluation and instead asks how knowledge is geometrically encoded, how it varies across layers, and whether representational structure is causally linked to behaviour.
The nonce-token control (E10) illustrates the value of psychophysical design: by manipulating the relationship between ordinal information and linguistic surface form, it isolates the specific contribution of tokenisation structure to categorical geometry, a precision that standard NLP probing methods do not achieve.

From AI to cognitive science: LLMs provide a system where the acquired-vs-structural distinction can be cleanly tested because the representational format, the training data, and the resulting geometry are all observable. The finding that structural input properties (tokenisation, digit count) produce categorical warping without explicit category learning offers an existence proof for the structural account of CP that has been debated for decades in the human literature (Massaro, 1987; McMurray, 2022). It does not resolve the debate for biological systems, but it establishes that the structural mechanism is computationally viable: format-driven warping can produce the full geometric CP signature in a learning system.

The present work complements a growing body of research applying cognitive science constructs to LLM representations. Park et al. (2024, 2025) demonstrated that LLMs represent categorical concepts as polytope structures, establishing that categorical geometry exists in hidden states but without testing the psychophysical signature (identification, discrimination, boundary-specific warping) that defines CP. Shani, Marjieh, and Griffiths (2025) showed that LLM representations compress along typicality gradients in a manner consistent with human categorisation, but focused on within-category structure rather than the between-category boundary effects that are diagnostic of CP.
The Weber study (Cacioli, 2026a) established that numerical magnitude follows logarithmic compression consistent with Weber's Law, providing the continuous baseline geometry on which the present categorical effects are superimposed. The present study integrates these strands: it tests CP specifically (the boundary phenomenon) using the formal psychophysical methodology (RSA with theoretical model comparison, SDT-based discrimination, identification functions, causal intervention) that the prior work did not employ. The result is that LLM categorical structure is not merely present as a geometric fact but operates through a specific mechanism (additive boundary warping driven by input-format discontinuity) that can be dissociated from explicit category knowledge, quantified at each layer, and causally tested.

Bidirectional: The geometry-function dissociation (E5) identifies a methodological hazard shared by cognitive science and AI interpretability research: the layer (or brain region, or time window) with the most legible representational structure may not be the layer performing the relevant computation. This is not a novel insight in neuroscience (the distinction between neural coding and neural computation has been drawn before; de Wit et al., 2016), but the present study provides an unusually clean demonstration because the entire computational pipeline is observable and causally manipulable.

4.6 Limitations

Several limitations should be noted. First, the causal intervention was performed on one model only (Llama-3-8B-Instruct) as a proof-of-concept; although the geometry-function dissociation replicates the pattern found in the Weber study for magnitude representations, the generality of the causal finding across architectures remains to be tested.
Second, the numerical domain is a particularly clean case for structural CP because digit-count boundaries produce a simultaneous change in tokenisation, character count, and lexical form. Whether structural CP extends to domains with subtler input-format discontinuities (e.g., morphological boundaries in inflected languages) is an open question. Third, the pre-registered discrimination hypotheses (H2, H3, H5) were not evaluable due to ceiling accuracy: Arabic numeral comparison is trivially easy for capable LLMs. A harder discrimination task (e.g., cross-format comparison: "Which is larger, nine or 12?") would likely produce non-ceiling accuracy and enable the full SDT analysis. Fourth, the control-150 condition showed small but non-zero CP advantages (+0.012 to +0.042), suggesting possible leakage of the 100-boundary effect into the control range. These values were far smaller than the decade-100 effects (+0.162 to +0.476) and comparable in magnitude to statistical noise in the temperature domain, but the control is not as clean a null as the control-15 condition for the decade-10 boundary. Fifth, the temperature domain tested only one non-structural category boundary, and "hot/cold" is a relatively fuzzy, gradient boundary; additional domains with sharper linguistic categories (e.g., grammatical number, animacy) would strengthen the cross-domain generalisation of the structural account. Sixth, the study tested models in the 3.8–9.2B parameter range; whether the same patterns hold at substantially larger scales (70B+) is unknown.
Seventh, while the present study infers structural input-format discontinuities as the primary mechanism, the Supplementary Table S2 analysis reveals that the relationship between tokenisation and CP is not straightforward: Llama-3's BPE vocabulary merges multi-digit numbers into single tokens, eliminating the token-count discontinuity that is present in Gemma, Qwen, Mistral, and Phi, yet Llama shows comparable CP geometry. This suggests that character-count and lexical-form changes (which are common to all models) are sufficient, and that token-count changes amplify but are not necessary for the effect. A same-domain, different-tokenisation control (e.g., re-tokenising identical stimuli with alternative BPE vocabularies) would provide a stronger test and is an important direction for future work.

4.7 Conclusion

Structural input-format discontinuities (tokenisation boundaries, digit-count transitions, and character-length changes) are sufficient to produce the full geometric signature of categorical perception in LLM hidden states, independently of explicit semantic category knowledge. The geometric warping at category boundaries is universal across architectures, present in pretrained representations before instruction tuning, absent in domains that lack structural input discontinuities, and causally grounded at early layers where representations are still forming rather than at later layers where the geometry reaches its peak legibility.

The dissociation between geometric CP (universal) and explicit identification (architecture-dependent) demonstrates that representational structure can outrun behavioural competence. For AI interpretability, this cautions against equating the most legible representation with the most functionally relevant one.
F or theories of categorical p erception more broadly , it provides a computational existence pro of that CP geometry can arise from the format of the input represen tation, not only from category learning, in a system where b oth the format and the learning are fully observ able. A c kno wledgments This researc h was conducted as part of the Classical Minds, Mo dern Machines indep enden t researc h programme. No external funding was receiv ed. Use of Generativ e AI: Claude (Anthropic) w as used as a researc h assistan t for co de generation and gure pro duction. The author takes full responsibility for the accuracy of all conten t. LLMs are the ob jects of ev aluation in this study , not components of the experimental metho d. Data A v ailabilit y All co de, stim uli, pre-registration, and analysis scripts are av ailable at h ttps://anonymous.4open.science/r/weber- B02C (directory m3_pilot/ ). Raw hidden-state extractions are a v ailable up on request. Pre- registration: https://osf.io/qrxf3/o v erview?view_only=4afe7fb d28764087a65a4222578ad625. References Cacioli (2026a). W eb er’s Law in large language mo del hidden states. Under review. Cacioli (2026b). Do LLMs kno w what they kno w? Signal detection theory meets metacognition. Under review. Bonnasse-Gahot, L., & Nadal, J.-P . (2022). Categorical p erception: A groundw ork for deep learning. Neur al Computation , 34 (2), 437–475. 22 Burns, E. M., & W ard, W. D. (1978). Categorical p erception — phenomenon or epiphenomenon: Evidence from exp eriments in the perception of melo dic m usical in terv als. Journal of the A c oustic al So ciety of A meric a , 63 (2), 456–468. de Wit, L., Alexander, D., Ekroll, V., & W agemans, J. (2016). Is neuroimaging measuring infor- mation in the brain? Psychonomic Bul letin & R eview , 23 (5), 1415–1428. 
Elhage, N., Nanda, N., Olsson, C., Henighan, T., Joseph, N., Mann, B., Askell, A., Bai, Y., Chen, A., Conerly, T., DasSarma, N., Drain, D., Ganguli, D., Hatfield-Dodds, Z., Hernandez, D., Jones, A., Kernion, J., Lovitt, L., Ndousse, K., … Olah, C. (2022). Toy models of superposition. arXiv:2209.10652.

Etcoff, N. L., & Magee, J. J. (1992). Categorical perception of facial expressions. Cognition, 44(3), 227–240.

Goldstone, R. L. (1994). Influences of categorization on perceptual discrimination. Journal of Experimental Psychology: General, 123(2), 178–200.

Goldstone, R. L., & Hendrickson, A. T. (2010). Categorical perception. Wiley Interdisciplinary Reviews: Cognitive Science, 1(1), 69–78.

Harnad, S. (Ed.). (1987). Categorical perception: The groundwork of cognition. Cambridge University Press.

Liberman, A. M., Harris, K. S., Hoffman, H. S., & Griffith, B. C. (1957). The discrimination of speech sounds within and across phoneme boundaries. Journal of Experimental Psychology, 54(5), 358–368.

Massaro, D. W. (1987). Categorical partition: A fuzzy-logical model of categorization behavior. In S. Harnad (Ed.), Categorical perception: The groundwork of cognition (pp. 254–283). Cambridge University Press.

McMurray, B. (2022). The myth of categorical perception. Journal of the Acoustical Society of America, 152(6), 3819–3842.

Park, K., Yun, S., Lee, J., & Shin, J. (2024). The geometry of categorical and hierarchical concepts in large language models.

Park, K., Yun, S., Lee, J., & Shin, J. (2025). The linear representation of categorical and hierarchical concepts in LLMs. In Proceedings of the International Conference on Learning Representations (ICLR 2025) (Oral).

Rogers, J. C., & Davis, M. H. (2009). Categorical perception of speech without stimulus repetition. In Proceedings of Interspeech 2009 (pp. 376–379).

Shani, C., Marjieh, R., & Griffiths, T. L. (2025). Compression in LLMs mirrors human typicality gradients.

Winawer, J., Witthoft, N., Frank, M. C., Wu, L., Wade, A. R., & Boroditsky, L. (2007). Russian blues reveal effects of language on color discrimination. Proceedings of the National Academy of Sciences, 104(19), 7780–7785.

Zhu, F., Dai, D., & Sui, Z. (2025). Language models encode the value of numbers linearly. In Proceedings of the International Conference on Computational Linguistics (COLING 2025) (pp. 693–709).

Supplementary Materials

Supplementary Table S2: Tokeniser Encoding of Boundary-Relevant Numbers

Token counts for numbers spanning the decade-10 (9->10) and decade-100 (99->100) boundaries. Three tokenisation patterns emerge: (i) Llama-3 (instruct and base) uses merged BPE tokens for multi-digit numbers, producing no token-count discontinuity at either boundary; (ii) Gemma and Qwen use per-digit tokenisation with single-digit numbers as one token, yielding a 1->2 token jump at the decade-10 boundary and 2->3 at decade-100; (iii) Mistral and Phi prepend a leading-space token and tokenise per-digit, yielding 2->3 and 3->4 token jumps respectively.
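As a minimal sketch, the token-count jumps summarised in Supplementary Table S2 can be recomputed from the reported counts alone. The Python below hard-codes the counts listed in the table rather than calling the tokenisers themselves; the names TOKEN_COUNTS, token_jump, and boundary_jumps are illustrative and do not come from the paper's code release.

```python
# Token counts per number, copied from Supplementary Table S2.
# Assumption: these are the reported values; nothing here queries a real tokeniser.
TOKEN_COUNTS = {
    "Llama-3": {9: 1, 10: 1, 99: 1, 100: 1},
    "Mistral": {9: 2, 10: 3, 99: 3, 100: 4},
    "Gemma": {9: 1, 10: 2, 99: 2, 100: 3},
    "Qwen": {9: 1, 10: 2, 99: 2, 100: 3},
    "Phi": {9: 2, 10: 3, 99: 3, 100: 4},
}


def token_jump(counts, below, above):
    """Change in token count when crossing a boundary from `below` to `above`."""
    return counts[above] - counts[below]


def boundary_jumps(table=TOKEN_COUNTS):
    """Token-count jump at the decade-10 and decade-100 boundaries for each model."""
    return {
        model: {
            "decade-10": token_jump(counts, 9, 10),
            "decade-100": token_jump(counts, 99, 100),
        }
        for model, counts in table.items()
    }


if __name__ == "__main__":
    for model, jumps in boundary_jumps().items():
        print(f"{model}: decade-10 {jumps['decade-10']:+d}, "
              f"decade-100 {jumps['decade-100']:+d}")
```

Under these reported counts, only Llama-3 shows a zero jump at both boundaries, which is the case the accompanying note singles out.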
Decade-10 boundary:

| Number | Digits | Llama-3 | Mistral | Gemma | Qwen | Phi |
|---|---|---|---|---|---|---|
| 9 | 1 | 1 tok | 2 tok | 1 tok | 1 tok | 2 tok |
| 10 | 2 | 1 tok | 3 tok | 2 tok | 2 tok | 3 tok |
| Δ tokens | | 0 | +1 | +1 | +1 | +1 |

Decade-100 boundary:

| Number | Digits | Llama-3 | Mistral | Gemma | Qwen | Phi |
|---|---|---|---|---|---|---|
| 99 | 2 | 1 tok | 3 tok | 2 tok | 2 tok | 3 tok |
| 100 | 3 | 1 tok | 4 tok | 3 tok | 3 tok | 4 tok |
| Δ tokens | | 0 | +1 | +1 | +1 | +1 |

Full token IDs (decade-10 range):

| Number | Llama-3 (Instruct/Base) | Gemma-2-9B | Qwen2.5-7B | Mistral-7B | Phi-3.5-mini |
|---|---|---|---|---|---|
| 4 | [19] (1 tok) | [235310] (1 tok) | [19] (1 tok) | [_, 4] (2 tok) | [_, 4] (2 tok) |
| 5 | [20] (1 tok) | [235308] (1 tok) | [20] (1 tok) | [_, 5] (2 tok) | [_, 5] (2 tok) |
| 6 | [21] (1 tok) | [235318] (1 tok) | [21] (1 tok) | [_, 6] (2 tok) | [_, 6] (2 tok) |
| 7 | [22] (1 tok) | [235324] (1 tok) | [22] (1 tok) | [_, 7] (2 tok) | [_, 7] (2 tok) |
| 8 | [23] (1 tok) | [235321] (1 tok) | [23] (1 tok) | [_, 8] (2 tok) | [_, 8] (2 tok) |
| 9 | [24] (1 tok) | [235315] (1 tok) | [24] (1 tok) | [_, 9] (2 tok) | [_, 9] (2 tok) |
| 10 | [605] (1 tok) | [1, 0] (2 tok) | [1, 0] (2 tok) | [_, 1, 0] (3 tok) | [_, 1, 0] (3 tok) |
| 11 | [806] (1 tok) | [1, 1] (2 tok) | [1, 1] (2 tok) | [_, 1, 1] (3 tok) | [_, 1, 1] (3 tok) |
| 12 | [717] (1 tok) | [1, 2] (2 tok) | [1, 2] (2 tok) | [_, 1, 2] (3 tok) | [_, 1, 2] (3 tok) |
| 15 | [868] (1 tok) | [1, 5] (2 tok) | [1, 5] (2 tok) | [_, 1, 5] (3 tok) | [_, 1, 5] (3 tok) |
| 20 | [508] (1 tok) | [2, 0] (2 tok) | [2, 0] (2 tok) | [_, 2, 0] (3 tok) | [_, 2, 0] (3 tok) |

Note: Llama-3 (both Instruct and Base) shares the same tokeniser; token IDs are identical. The _ symbol denotes a leading-space token prepended by Mistral and Phi tokenisers. Despite the absence of a token-count discontinuity in Llama-3, all models show comparable CP geometry at the decade-10 boundary (Table 1), indicating that character-count and lexical-form changes are sufficient to produce the effect.

Draft generated [date]. Correspondence: Jon-Paul Cacioli, synthium@hotmail.com.
