DiffAttn: Diffusion-Based Drivers' Visual Attention Prediction with LLM-Enhanced Semantic Reasoning

Drivers' visual attention provides critical cues for anticipating latent hazards and directly shapes decision-making and control maneuvers, where its absence can compromise traffic safety. To emulate drivers' perception patterns and advance visual at…

Authors: Weimin Liu, Qingkun Li, Jiyuan Qiu

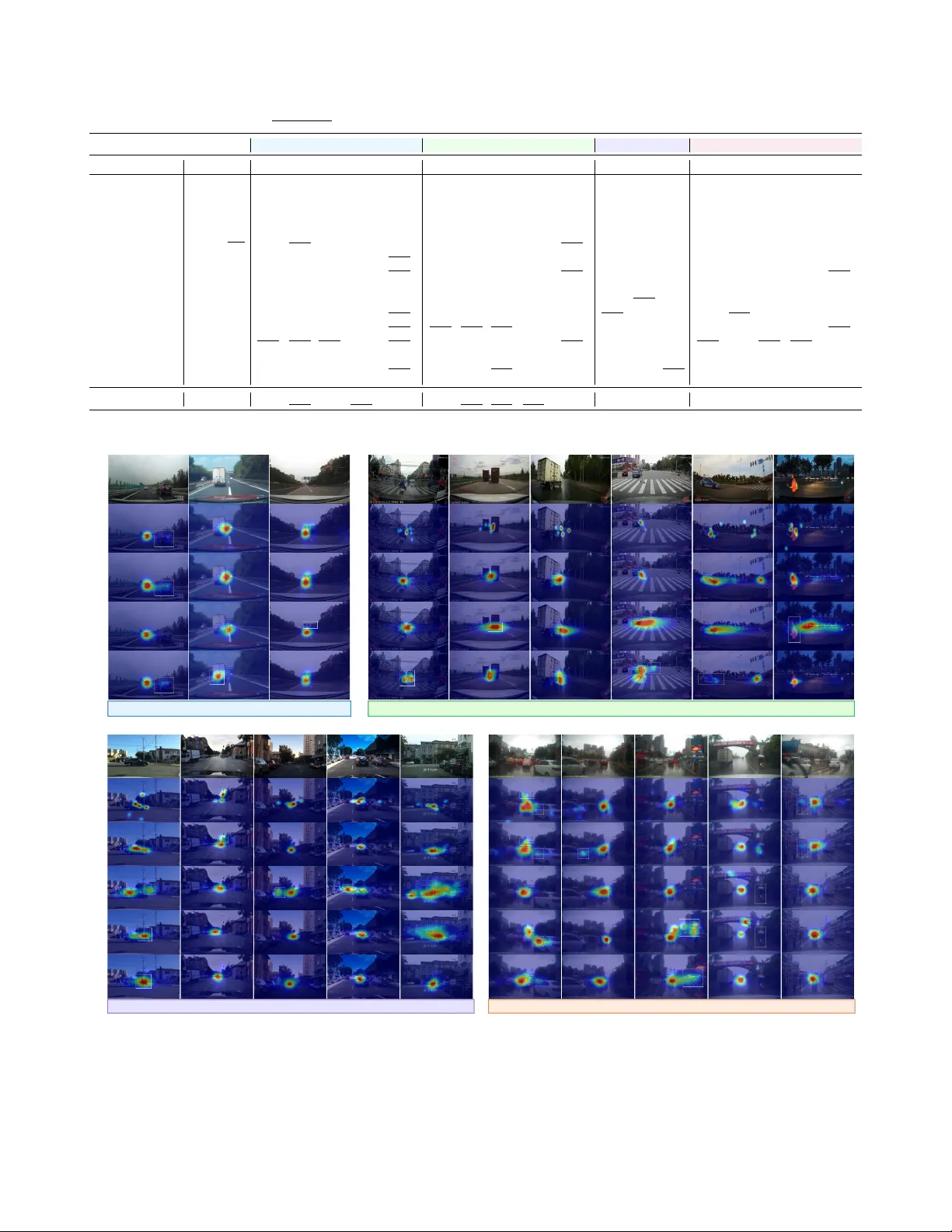

Weimin Liu¹, Qingkun Li²*, Jiyuan Qiu³, Wenjun Wang¹*, Joshua H. Meng⁴

Abstract: Drivers' visual attention provides critical cues for anticipating latent hazards and directly shapes decision-making and control maneuvers, where its absence can compromise traffic safety. To emulate drivers' perception patterns and advance visual attention prediction for intelligent vehicles, we propose DiffAttn, a diffusion-based framework that formulates this task as a conditional diffusion-denoising process, enabling more accurate modeling of drivers' attention. To capture both local and global scene features, we adopt Swin Transformer as encoder and design a decoder that combines a Feature Fusion Pyramid for cross-layer interaction with dense, multi-scale conditional diffusion to jointly enhance denoising learning and model fine-grained local and global scene contexts. Additionally, a large language model (LLM) layer is incorporated to enhance top-down semantic reasoning and improve sensitivity to safety-critical cues. Extensive experiments on four public datasets demonstrate that DiffAttn achieves state-of-the-art (SoTA) performance, surpassing most video-based, top-down-feature-driven, and LLM-enhanced baselines. Our framework further supports interpretable driver-centric scene understanding and has the potential to improve in-cabin human-machine interaction, risk perception, and drivers' state measurement in intelligent vehicles.

I. INTRODUCTION

As autonomous driving systems and intelligent vehicular algorithms have advanced significantly in recent years, drivers now have more opportunities to engage in non-driving-related tasks (NDRTs) during prolonged and monotonous autonomous driving, where their gazes are no longer required to be continuously fixed on the road [1].
Under such conditions, accurate measurement and assessment of drivers' visual attention distribution become critically important for ensuring the safety and reliability of autonomous vehicles, especially in scenarios requiring human-machine cooperation or take-over [2]. Reliable attention measurement not only provides quantitative indicators of drivers' cognitive states, but also serves as a fundamental component for driver monitoring systems, risk evaluation, and adaptive human-vehicle interfaces in automated driving [3].

* Corresponding authors: Wenjun Wang and Qingkun Li.
¹ Weimin Liu is with School of Vehicle and Mobility, Tsinghua University, Beijing, China. lwm23@mails.tsinghua.edu.cn
² Qingkun Li is with Beijing Key Laboratory of Human-Computer Interaction, Institute of Software, Chinese Academy of Sciences, Beijing, China. qingkun.li.thu@gmail.com
³ Jiyuan Qiu is with Remote Sensing and Earth Observation Laboratory, University of Copenhagen, Copenhagen K, Denmark. jiqi@ign.ku.dk
¹ Wenjun Wang is with School of Vehicle and Mobility, Tsinghua University, Beijing, China. wangxiaowenjun@tsinghua.edu.cn
⁴ Joshua H. Meng is with California PATH, University of California, Berkeley, CA, USA. hdmeng@berkeley.edu

[Figure 1. Panels: image, condition, random saliency, refined saliency; the diffusion direction is $q(\mathbf{S}_\tau \mid \mathbf{S}_{\tau-1})$ and the denoising direction is $p_\theta(\mathbf{S}_{\tau-1} \mid \mathbf{S}_\tau, \mathbf{c})$, conditioned through the LLM-enhanced saliency model.]
Fig. 1: Overview of the proposed LLM-enhanced, conditional diffusion-based drivers' visual attention modeling method DiffAttn.
Recent advancements in vision-based models for intelligent vehicles, such as object detection [4] and semantic segmentation [5], have shown progress in individual tasks, yet they struggle to identify crucial visual cues and understand the scene risks involved in the traffic environment as experienced drivers do in an emergency [6]. In comparison to machine intelligence, humans are capable of quickly detecting the most relevant stimuli and locating potential hazards in complex situations through visual attention [7]. In situations where dynamic driving task (DDT) execution relies heavily on vision for scene perception and understanding, drivers' visual attention is essential for perceiving the traffic environment and interacting with traffic participants, since it provides crucial cues for their intended control maneuvers and accident avoidance capabilities. Overlooking latent hazards can threaten traffic safety and potentially result in accidents and casualties [8]. For traffic safety, predicting drivers' visual attention by mimicking human drivers' visual behavior and attention mechanisms could be greatly beneficial in supporting autonomous driving, assessing drivers' states, and delivering hazard warnings.

Drivers' visual attention can be categorized into bottom-up and top-down mechanisms. Bottom-up control is data-driven and guided by salient objects or areas in the driving scene that stand out against the background due to image-based conspicuities. These elements, such as billboards, advertisements, or vehicles driving in the opposite lane, attract or even distract drivers' attention and are less safety-critical [9].
Top-down attention, however, is task-driven and goal-oriented: factors including experience, knowledge, memory, and expectations prompt and guide drivers to focus on objects or events relevant to the driving task and to allocate fewer attentional resources to irrelevant stimuli. For instance, drivers mostly fixate on vanishing points to get a broader view of the road ahead [10]. In complex dynamic driving environments, both bottom-up and top-down factors continuously evolve and compete for drivers' visual attentional resources. Therefore, both types of factors should be considered when modeling drivers' visual attention during DDT execution.

In this work, we propose a diffusion-based framework to model human-like visual attention patterns without additionally depending on top-down features such as temporal information, segmentation maps, or optical flows. The main contributions of this study are summarized as follows.

(1) We propose DiffAttn, a diffusion-based framework that formulates drivers' visual attention prediction as a conditional denoising process, which naturally aligns with the Gaussian-like spatial distribution of human gazes, providing both theoretical coherence and superior performance.

(2) To capture local and global contexts in traffic scenes, we adopt Swin Transformer as encoder and a decoder that combines channel-attentive feature fusion with dense multi-scale conditioning and prediction, enabling effective denoising with fine-grained details and holistic scene awareness.

(3) To enhance top-down feature representations without explicitly introducing top-down modalities, we incorporate an LLM layer into the saliency decoder of the network, enabling the model to better reason about semantic context across scales and strengthen its sensitivity to safety-critical cues.
(4) Extensive experiments on four public datasets demonstrate SoTA performance of our method, surpassing video-based approaches, top-down-feature-driven methods, and even some LLM-enhanced baselines. Beyond benchmark results, our framework also promotes interpretable driver-centric scene understanding and has the potential to support safer human-machine interaction in intelligent driving systems.

A. Related Works

CNN-based approaches. In the studies of Ji et al. [11] and Deng et al. [12], the network models were designed in a U-Net pattern, where cascaded CNN layers in the encoder extract image features and the decoder gradually upsamples the feature maps and makes the final predictions. To bring more temporal dependencies and top-down features into the network, Xia et al. [13] adopted ConvLSTM as a temporal processing module to predict drivers' attention in critical situations from video clips. In this way, information about previous fixation locations can flow along the sequence, which benefits sequential attention inference. Fang et al. [14] used segmentation labels as top-down cues to facilitate task-specific attention allocation in their network SCAFNet, reasoning about semantic-induced scene variation in drivers' visual attention predictions. However, these improvements in model performance came at the cost of a lightweight architecture and of computation, as the networks comprise more complicated modules for bridging relationships among cues.

Transformer-based approaches. The Transformer was first used in natural language processing. To leverage the Transformer's power in long-range dependency modeling, Vision Transformer (ViT) [15] divides an RGB image into patches, flattens them, and treats the image as sequential data for Transformer input. Zhao et al. [16] proposed Gate-DAP and explored a network connection gating mechanism for driver attention prediction, which boosts prediction performance by learning the importance of input top-down features such as segmentation maps and optical flows. Although Gate-DAP shows accurate prediction performance on the DADA-2000 and BDD-A datasets, the ViT-based backbone design might hinder network performance in modeling drivers' foveal vision, which constitutes the local information of visual attention.

Most existing studies on drivers' visual attention prediction use fully CNN- or Transformer-based networks that directly map images to attention maps. Although effective, these approaches treat attention prediction as a deterministic regression task, overlooking the probabilistic and spatially distributed nature of human gaze. Diffusion-based methods instead frame attention prediction as a generative process, gradually refining a noisy map into a realistic attention distribution.

II. METHOD

A. Task Definition and Motivation

Drivers' visual attention prediction seeks to forecast the intensity and distribution of visual attention on salient areas within the driving scene. This is achieved by predicting a saliency map $\hat{\mathbf{S}} \in \mathbb{R}^{1 \times H \times W}$, which indicates the probability of fixation occurrence. This formulation is motivated by the observation that when humans allocate visual attention to a scene, foveal vision provides the highest resolution of fine-grained local visual information and allows for acuity and contrast sensitivity around fixations [17]. Meanwhile, peripheral vision provides non-detailed, coarse-grained, texture-like yet long-range visual information [17]. Consequently, a saliency map is normally adopted to depict the perceptual spatial interactions of both local and non-local information, as well as the intertwined foveal and peripheral vision.
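As a rough illustration of how a saliency map can encode fixation probability, the sketch below smooths a handful of fixation points with an isotropic Gaussian and min-max normalizes the result. It is only a minimal NumPy sketch: the function name `fixation_to_saliency`, the `(x, y)` pixel convention, and the value of `sigma` are assumptions made for illustration, not settings taken from the paper.

```python
import numpy as np

def fixation_to_saliency(fixations, height, width, sigma=10.0):
    """Place an isotropic Gaussian on every (x, y) fixation and min-max normalise.

    Summing one Gaussian per fixation equals convolving a binary fixation map
    with the same kernel. The 1 / (2 * pi * sigma**2) factor is omitted because
    the final min-max normalisation cancels any constant scale.
    """
    ys, xs = np.mgrid[0:height, 0:width]  # row (y) and column (x) index grids
    saliency = np.zeros((height, width), dtype=np.float64)
    for fx, fy in fixations:
        saliency += np.exp(-((xs - fx) ** 2 + (ys - fy) ** 2) / (2.0 * sigma ** 2))
    lo, hi = saliency.min(), saliency.max()
    if hi > lo:  # guard against an empty fixation list (all-zero map)
        saliency = (saliency - lo) / (hi - lo)
    return saliency

# Two hypothetical fixations on a small 96 x 160 frame.
sal = fixation_to_saliency([(40, 30), (120, 60)], height=96, width=160, sigma=8.0)
print(sal.shape)
```

A larger `sigma` spreads each fixation over a wider neighborhood, which loosely corresponds to giving more weight to the parafoveal and peripheral surround.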
To mimic drivers' visual attention allocation pattern, the groundtruth saliency map $\mathbf{S}$ is generated by applying Gaussian filtering to a binary fixation map $\mathbf{F}$, where $\mathbf{F}(x_0, y_0) = 1$ when $(x_0, y_0)$ has a valid fixation from the driver. The groundtruth saliency map is given as,

$$\mathbf{S}(x, y) = (\mathbf{F} * \mathbf{G})(x, y) = \sum_{i=1}^{W} \sum_{j=1}^{H} \mathbf{F}(i, j) \cdot \mathbf{G}(x - i, y - j), \tag{1}$$

$$\mathbf{G}(x, y) = \frac{1}{2 \pi \sigma_x \sigma_y} \exp\left(-\frac{1}{2}\left(\frac{x^2}{\sigma_x^2} + \frac{y^2}{\sigma_y^2}\right)\right), \tag{2}$$

where $\sigma_x$ and $\sigma_y$ denote the standard deviations of the Gaussian kernel $\mathbf{G}$ along the $x$- and $y$-axes, representing the parafoveal and peripheral area around fixations. Typically, $\sigma_x = \sigma_y$. The final groundtruth saliency map is generated with min-max normalization, indicating the probability of visual attention falling within the vicinity of fixations.

In this work, we propose DiffAttn, where the network takes only a color image $\mathbf{I} \in \mathbb{R}^{3 \times H \times W}$ as input and outputs saliency maps $\hat{\mathbf{S}}_s \in \mathbb{R}^{1 \times \frac{H}{2^s} \times \frac{W}{2^s}}$ at four scales $s \in \mathcal{S} = \{0, 1, 2, 3\}$, which constitute the training losses together with the groundtruth. During model inference, only the saliency map at input resolution, $\hat{\mathbf{S}}_0$, is used for evaluation. An overview of the framework of our proposed network is shown in Fig. 2.

[Figure 2. Saliency encoder: patch partition, linear embedding, and four Swin Transformer stages (W-MSA/SW-MSA blocks with LN and MLP, patch merging) at 1/4, 1/8, 1/16, and 1/32 resolution. Saliency decoder: Feature Fusion Pyramid (FFP) built from channel attention (CA) and cross-layer attention (CLA) cells, an LLM block at the deepest level, and multi-scale dense-connected conditional diffusion with one noise predictor per scale s = 0, 1, 2, 3 producing refined saliency maps.]
Fig. 2: DiffAttn architecture overview. For the saliency encoder, we adopt SwinT-Base [19] pretrained on ImageNet. The decoder is designed with an LLM-enhanced feature fusion pyramid (FFP), which bridges the encoder outputs, and a multi-scale dense-connected conditional diffusion module, where feature maps produced by the FFP are densely connected and serve as conditioning signals for noise learning in the diffusion process. The noise predictors generate saliency maps at multiple scales, which are all supervised with groundtruth saliency maps. The saliency map generated at s = 0 is used during testing.

B. Preliminaries of Diffusion Model

Diffusion models have demonstrated remarkable generative capabilities across a variety of tasks. The Denoising Diffusion Probabilistic Model (DDPM) [18] introduced a framework that leverages Markovian processes in both the forward and reverse stages. Typically, diffusion models are categorized into unconditional and conditional types. Unconditional models aim to directly approximate the data distribution, whereas conditional models focus on generating data under specific conditions.

In DDPM, a sample $\mathbf{x}_0$ from the target data distribution is transformed into a noisy sample $\mathbf{x}_T$ through a sequence of conditional probabilities $q(\mathbf{x}_\tau \mid \mathbf{x}_0)$ as,

$$q(\mathbf{x}_\tau \mid \mathbf{x}_0) := \mathcal{N}\left(\mathbf{x}_\tau; \sqrt{\bar{\alpha}_\tau}\, \mathbf{x}_0, (1 - \bar{\alpha}_\tau) \mathbf{I}\right), \tag{3}$$

$$\bar{\alpha}_\tau := \prod_{t=0}^{\tau} \alpha_t = \prod_{t=0}^{\tau} (1 - \beta_t), \tag{4}$$

$$\mathbf{x}_\tau = \sqrt{\bar{\alpha}_\tau}\, \mathbf{x}_0 + \sqrt{1 - \bar{\alpha}_\tau}\, \boldsymbol{\epsilon}, \quad \boldsymbol{\epsilon} \sim \mathcal{N}(\mathbf{0}, \mathbf{I}), \tag{5}$$

where $q$ represents the forward noising process, $\beta_t$ denotes the noise variance schedule as defined in DDPM, and $\mathcal{N}$ denotes a Gaussian distribution. Formally, this process iteratively adds Gaussian noise to $\mathbf{x}_0$, generating a series of latent noisy samples $\mathbf{x}_\tau$ for $\tau \in \{0, 1, \ldots, T\}$.
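The closed-form forward step of Eq. (5) can be sketched in a few lines of NumPy. This is a minimal illustration rather than the authors' implementation: the linear $\beta$ schedule and its endpoints (`beta_start`, `beta_end`) are assumptions borrowed from common DDPM practice, and the helper names `make_alpha_bar` and `q_sample` are hypothetical.

```python
import numpy as np

def make_alpha_bar(num_steps, beta_start=1e-4, beta_end=2e-2):
    """Cumulative product of (1 - beta_t), as in Eq. (4).

    The linear schedule and its endpoints are illustrative assumptions.
    """
    betas = np.linspace(beta_start, beta_end, num_steps)
    return np.cumprod(1.0 - betas)

def q_sample(x0, tau, alpha_bar, rng):
    """Draw x_tau ~ q(x_tau | x_0) in one shot, as in Eq. (5)."""
    eps = rng.standard_normal(x0.shape)
    x_tau = np.sqrt(alpha_bar[tau]) * x0 + np.sqrt(1.0 - alpha_bar[tau]) * eps
    return x_tau, eps  # eps is the regression target of a noise predictor

rng = np.random.default_rng(0)
alpha_bar = make_alpha_bar(300)        # 300 steps, a toy choice for this sketch
x0 = rng.random((1, 48, 80))           # stand-in for a clean map
x_early, _ = q_sample(x0, 0, alpha_bar, rng)    # almost identical to x0
x_late, _ = q_sample(x0, 299, alpha_bar, rng)   # dominated by noise
```

Because $\bar{\alpha}_\tau$ shrinks monotonically, early steps keep most of the signal while late steps are mostly noise, which is what lets any step be sampled directly without iterating through the chain.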
In the denoising process, a noise predictor $\boldsymbol{\epsilon}_\theta$ learns to reverse the diffusion process and iteratively recover $\mathbf{x}_0$ under the guidance of visual conditions $\mathbf{c}$ as,

$$p_\theta(\mathbf{x}_{\tau-1} \mid \mathbf{x}_\tau, \mathbf{c}) := \mathcal{N}\left(\mathbf{x}_{\tau-1}; \boldsymbol{\mu}_\theta(\mathbf{x}_\tau, \tau, \mathbf{c}), \sigma_\tau^2 \mathbf{I}\right), \tag{6}$$

$$\boldsymbol{\mu}_\theta(\mathbf{x}_\tau, \tau, \mathbf{c}) = \frac{1}{\sqrt{\alpha_\tau}} \left(\mathbf{x}_\tau - \frac{\beta_\tau}{\sqrt{1 - \bar{\alpha}_\tau}}\, \boldsymbol{\epsilon}_\theta(\mathbf{x}_\tau, \tau, \mathbf{c})\right), \tag{7}$$

where $\sigma_\tau^2$ denotes the transition variance.

C. Saliency Encoder

The encoder plays an essential role in extracting feature representations from the model inputs. While CNNs have been widely used in prior works such as [12], their local receptive fields limit long-range contextual modeling. Given that drivers' attention involves both local visual cues and global contextual information, we adopt Swin Transformer [19] as backbone, as its hierarchical shifted-window attention design allows it to effectively model both local details and global context. The configuration of the Swin Transformer block is shown in Fig. 2. The hierarchical outputs of the Swin Transformer-based saliency encoder are $\mathbf{X}_0 \in \mathbb{R}^{C_e \times \frac{H}{4} \times \frac{W}{4}}$, $\mathbf{X}_1 \in \mathbb{R}^{2C_e \times \frac{H}{8} \times \frac{W}{8}}$, $\mathbf{X}_2 \in \mathbb{R}^{4C_e \times \frac{H}{16} \times \frac{W}{16}}$, and $\mathbf{X}_3 \in \mathbb{R}^{8C_e \times \frac{H}{32} \times \frac{W}{32}}$.

D. Saliency Decoder

The DiffAttn decoder takes multi-scale features from the encoder and hierarchically upsamples these skip-connected feature maps from deep to shallow layers to form saliency predictions. As shown in Fig. 2, the DiffAttn saliency decoder consists of a Feature Fusion Pyramid (FFP) module with LLM-based semantic enhancement along the skip-connection path, and multi-scale dense-connected conditional diffusion.

Feature fusion pyramid. We integrate a feature fusion pyramid (FFP) between the encoder and decoder to enhance multi-scale feature aggregation, preserve spatial details, aid the recovery of information lost during downsampling, and strengthen the complementary representation of features across levels. As illustrated in Fig. 2, the FFP employs a channel attention (CA) mechanism to refine feature representations and facilitate cross-layer interactions. The output of the saliency encoder at each scale, $\{\mathbf{X}_{i0}\}_{i=0}^{3}$, is first processed by a channel attention module, followed by a series of cross-layer attention (CLA) modules. CLA is designed to enhance encoder features and facilitate hierarchical information flow within the FFP. It is formulated as channel attention on the concatenated lower- and higher-level features,

$$\mathbf{X}_{i,j+1} = \mathrm{CA}\left[\mathbf{X}_{i,j},\; u\left(\mathrm{ELU}\left(\kappa(\mathbf{X}_{i+1,j})\right)\right)\right], \tag{8}$$

where $\mathbf{X}_{i,j+1}$ denotes the intermediate output of each CLA cell and $\mathbf{X}_{i,j}$ represents the lower-level feature. The higher-level feature $\mathbf{X}_{i+1,j}$ is first processed by a convolution operation $\kappa$ with ELU activation, and then upsampled by $u$. The FFP produces fused feature maps $\mathbf{X}_{03}$, $\mathbf{X}_{12}$, and $\mathbf{X}_{21}$ at resolutions $(\frac{H}{4}, \frac{W}{4})$, $(\frac{H}{8}, \frac{W}{8})$, and $(\frac{H}{16}, \frac{W}{16})$, respectively.

LLM-based semantic enhancement. LLMs provide strong capabilities for capturing high-level semantics, reasoning about contextual relationships, and transferring knowledge from large-scale training. For drivers' visual attention prediction, these capabilities are particularly valuable because gaze behavior is influenced not only by bottom-up visual saliency but also by top-down semantic understanding. Recent work such as SalM² [20] leverages the CLIP [21] model to enrich semantic representations of driving scenes. In this work, we adopt the strategy proposed in LLM4Seg [22] to further leverage the capability of LLMs in enhancing visual semantic understanding, thereby improving the modeling of drivers' visual behavior, particularly top-down attention, which remains a challenge that most existing studies have not explicitly addressed. To this end, we introduce an additional LLM layer $\mathcal{F}_{\mathrm{LLM}}$ along the skip-connection path from the saliency encoder to the decoder at the deepest level.
Concretely, the feature map $\mathbf{X}_{30}$ is first reshaped and flattened into $(8C_e, \frac{H \times W}{32^2})$, linearly projected by $\phi$, passed through the LLM layer $\mathcal{F}_{\mathrm{LLM}}$, subsequently linearly projected by $\psi$, and finally reshaped back to $(8C_e, \frac{H}{32}, \frac{W}{32})$. This process can be expressed as,

$$\mathbf{y}_{30} = \phi\left(\mathrm{Reshape\&Flatten}(\mathbf{X}_{30})\right), \quad \mathbf{X}_{30} \leftarrow \mathrm{Reshape}\left(\psi\left(\mathcal{F}_{\mathrm{LLM}}(\mathbf{y}_{30})\right)\right). \tag{9}$$

[Figure 3. Blocks between the saliency encoder and saliency decoder: reshape and flatten, positional embedding, linear projection, LLM enhancement (LLaMA 3.2-1B), linear projection, reshape.]
Fig. 3: Network architecture of LLM-based semantic enhancement.

In this work, LLM enhancement is applied only at the deepest scale level, both to reduce computational cost and to ensure that its benefits can be propagated to higher levels through feature fusion and dense cross-scale connections, as discussed in the following subsection.

Multi-scale dense-connected conditional groundtruth diffusion. In this work, we adopt the groundtruth driver attention map $\mathbf{S}_g$ as the target data distribution. The generation of a noisy sample $\mathbf{S}_\tau$ can be written as,

$$q(\mathbf{S}_\tau \mid \mathbf{S}_g) := \mathcal{N}\left(\mathbf{S}_\tau; \sqrt{\bar{\alpha}_\tau}\, \mathbf{S}_g, (1 - \bar{\alpha}_\tau) \mathbf{I}\right). \tag{10}$$

The subsequent noise modeling process is realized through a U-Net network. As shown in Fig. 4, the network takes as input the summation of the visual condition $\mathbf{c}$, the current time embedding, and the noisy sample at time step $\tau$, and outputs the denoised sample corresponding to time step $\tau - 1$. We further adopt a multi-scale prediction strategy, which has proven effective in many vision tasks such as semantic segmentation and depth estimation; each scale has an individual noise predictor, denoted as $\boldsymbol{\epsilon}_\theta^s$. Leveraging predictions at multiple resolutions allows the model to account for scale variations: finer scales emphasize small, localized cues, while coarser scales highlight the centers of larger salient regions.
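The reshape, project, transform, project, reshape path of Eq. (9) can be sketched as follows. This is a toy NumPy illustration under stated assumptions: `llm_enhance` is a hypothetical helper, the projections are plain matrices, each spatial location is treated as one token (the transposed layout is an assumption), and `np.tanh` merely stands in for the frozen LLM layer, which is not reproduced here.

```python
import numpy as np

def llm_enhance(feat, proj_in, proj_out, llm_layer):
    """Sketch of Eq. (9): (C, h, w) -> tokens -> LLM width -> back to (C, h, w).

    feat:      feature map of shape (C, h, w)
    proj_in:   matrix of shape (C, D), playing the role of phi
    proj_out:  matrix of shape (D, C), playing the role of psi
    llm_layer: callable mapping (h*w, D) -> (h*w, D); a stand-in for F_LLM
    """
    c, h, w = feat.shape
    tokens = feat.reshape(c, h * w).T   # one token per spatial location: (h*w, C)
    y = tokens @ proj_in                # project to the layer width: (h*w, D)
    y = llm_layer(y)                    # sequence-to-sequence transform
    out = y @ proj_out                  # project back to C channels: (h*w, C)
    return out.T.reshape(c, h, w)       # restore the spatial layout

rng = np.random.default_rng(0)
c, h, w, d = 64, 6, 10, 128             # toy sizes, not the paper's dimensions
feat = rng.standard_normal((c, h, w))
proj_in = rng.standard_normal((c, d)) / np.sqrt(c)
proj_out = rng.standard_normal((d, c)) / np.sqrt(d)
enhanced = llm_enhance(feat, proj_in, proj_out, llm_layer=np.tanh)
print(enhanced.shape)  # (64, 6, 10)
```

Since the output has the same shape as the input, the enhanced map can replace the deepest encoder feature without touching the rest of the decoder.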
The visual condition at each output scale $s$ is calculated by hierarchically upsampling and concatenating the FFP feature outputs from lower levels for feature representation enhancement, and by adding the refined saliency output from the lower level. For example, the visual conditions at the bottom ($s = 3$) and top ($s = 0$) levels are computed as,

$$\mathbf{F}_3 = \mathrm{upsample}_{\times 2}(\mathbf{X}_{30}), \tag{11}$$

$$\mathbf{c}_3 = \mathrm{ELU}\left(f_3(\mathbf{F}_3)\right), \tag{12}$$

$$\mathbf{F}_0 = \left[u_{\times 2}(\mathbf{X}_{03});\; u_{\times 3}(\mathbf{X}_{12});\; u_{\times 4}(\mathbf{X}_{21});\; u_{\times 5}(\mathbf{X}_{30})\right], \tag{13}$$

$$\mathbf{c}_0 = \mathrm{ELU}\left(f_0(\mathbf{F}_0) + u\left(g_0(\hat{\mathbf{S}}_{s+1})\right)\right), \tag{14}$$

where $\mathbf{c}_s$ and $(f_s, g_s)$ denote the visual condition and the 2D convolution operations at scale $s$, respectively.

[Figure 4. Diffusion $q(\mathbf{S}_\tau \mid \mathbf{S}_{\tau-1})$ and denoising $p_\theta(\mathbf{S}_{\tau-1} \mid \mathbf{S}_\tau, \mathbf{c})$; the U-Net noise predictor receives the sum of the noisy sample, the time embedding of $\tau$, and the visual condition $\mathbf{c}$ built from $\mathbf{F}_s$ (conv) and the lower-level refined saliency (conv and upsample).]
Fig. 4: Network architecture of multi-scale conditional diffusion.

The final driver attention maps are generated by applying a sigmoid activation to the denoised outputs at each scale.

E. Loss Function

The overall loss function, averaged over all scales, is composed of binary cross-entropy (BCE) loss, KL-divergence (KLD) loss, and diffusion denoising (DD) loss,

$$\mathcal{L} = \frac{1}{|\mathcal{S}|} \sum_{s \in \mathcal{S}} \left(\lambda_1 \mathcal{L}^s_{\mathrm{BCE}} + \lambda_2 \mathcal{L}^s_{\mathrm{KLD}} + \lambda_3 \mathcal{L}^s_{\mathrm{DD}}\right), \tag{15}$$

where $\lambda_1$, $\lambda_2$, and $\lambda_3$ denote the loss weights. The direct output of the saliency map at each scale is upsampled to input resolution for the calculation of each respective loss component. The diffusion denoising loss supervises the latent saliency at each time step after refinement by reversing the diffusion process. It can be written as,

$$\mathcal{L}^s_{\mathrm{DD}} = \left\| \boldsymbol{\epsilon}^s - \boldsymbol{\epsilon}^s_\theta(\mathbf{S}^s_\tau, \tau, \mathbf{c}^s) \right\|^2. \tag{16}$$

III. EXPERIMENTS

A. Datasets

TrafficGaze [12] contains 16 video clips with resolution (1280, 720), recorded on urban roads in China. Each image has 28 gaze providers for fixation collection in the lab. 10, 2, and 4 videos are used for training, validation, and testing, respectively.

DADA-2000 [14]. The Driver Attention and Accident Dataset contains 2000 recorded accident videos on crowded city roads from various locations worldwide, sourced from websites. The video clips have a resolution of (1584, 660), and each frame is annotated by five gaze providers.

BDD-A [23]. The Berkeley DeepDrive Attention dataset contains 926, 203, and 306 video clips for training, validation, and testing of visual attention prediction. Images have a resolution of (1280, 720), and each frame has 4 providers for gaze collection in the lab. The driving videos include braking events and were recorded in busy urban areas in the US.

DrFixD-rainy [24] contains 16 traffic driving videos in rainy conditions, where image frames have a resolution of (1280, 720). Each frame has 30 in-lab gaze providers. 10, 2, and 4 videos are assigned for training, validation, and testing, respectively.

B. Implementation Details

We implement the proposed method DiffAttn in PyTorch and train it on a single NVIDIA RTX 3090 GPU. The model is optimized using AdamW with a batch size of 18, a learning rate of $1 \times 10^{-5}$, and a weight decay of 0.001. All input RGB images are resized to (192, 320), with random color jittering and horizontal flipping applied for data augmentation. The loss function employs weighting coefficients of $\lambda_1 = 1$ and $\lambda_3 = 0.001$ for all datasets, while $\lambda_2$ is set to {1, 0.2, 0.1, 1} for TrafficGaze, DADA-2000, BDD-A, and DrFixD-rainy, respectively. The number of diffusion steps $T_i$ is set to 300 on all scales for all datasets. The numbers of denoising steps $T_e$ are set to {12, 16, 15, 12} on all scales for TrafficGaze, DADA-2000, BDD-A, and DrFixD-rainy, respectively. For LLM enhancement, we employ the frozen 15th layer of LLaMA3.2-1B [25] as the LLM layer $\mathcal{F}_{\mathrm{LLM}}$. The linear projections within the LLM enhancement module are set to $(C_e, 2048)$ and $(2048, C_e)$, respectively.
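To make the weighting in Eq. (15) concrete, the sketch below combines the three loss terms per scale using the $\lambda$ values listed above. It is a NumPy illustration under assumptions, not the training code: the KLD term uses a form that is common in saliency work, the DD term uses a mean rather than a sum of squared errors, and the names `bce_loss`, `kld_loss`, `dd_loss`, `total_loss`, and `LAMBDA_KLD` are hypothetical.

```python
import numpy as np

EPS = 1e-7  # numerical guard, an illustrative choice

# lambda_2 per dataset as listed in the text; lambda_1 = 1 and lambda_3 = 0.001.
LAMBDA_KLD = {"TrafficGaze": 1.0, "DADA-2000": 0.2, "BDD-A": 0.1, "DrFixD-rainy": 1.0}

def bce_loss(pred, target):
    """Pixel-wise binary cross-entropy between two maps with values in [0, 1]."""
    pred = np.clip(pred, EPS, 1.0 - EPS)
    return float(np.mean(-(target * np.log(pred) + (1.0 - target) * np.log(1.0 - pred))))

def kld_loss(pred, target):
    """KL divergence after normalising both maps to sum to one (assumed form)."""
    p = pred / (pred.sum() + EPS)
    q = target / (target.sum() + EPS)
    return float(np.sum(q * np.log(q / (p + EPS) + EPS)))

def dd_loss(eps_true, eps_pred):
    """Mean-squared noise error; Eq. (16) up to a normalisation constant."""
    return float(np.mean((eps_true - eps_pred) ** 2))

def total_loss(per_scale, lam_bce=1.0, lam_kld=1.0, lam_dd=0.001):
    """Average the weighted sum over scales, as in Eq. (15).

    per_scale: list of (pred, target, eps_true, eps_pred) tuples, one per scale,
    with pred already upsampled to the resolution of target.
    """
    terms = [
        lam_bce * bce_loss(p, t) + lam_kld * kld_loss(p, t) + lam_dd * dd_loss(e, e_hat)
        for (p, t, e, e_hat) in per_scale
    ]
    return sum(terms) / len(terms)

rng = np.random.default_rng(0)
target = rng.random((12, 20))
noise = rng.standard_normal((12, 20))
scales = [(rng.random((12, 20)), target, noise, noise + 0.1) for _ in range(4)]
loss = total_loss(scales, lam_kld=LAMBDA_KLD["BDD-A"])
```

With $\lambda_3 = 0.001$ the denoising term contributes little to the total in absolute value, so the BCE and KLD terms dominate its magnitude in this toy setup.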
The ev aluation employs location-based metrics (A UC-J, NSS) and distribution-based metrics (KLD, SIM, CC) to assess the dri vers’ visual attention prediction. C. Experiment Results The proposed DiffAttn outperforms 15 baseline methods across all four benchmarks, as summarized in T able I and visualized in Fig. 5 . Qualitati ve comparisons in Fig. 5 demonstrate DiffAttn’ s superiority in capturing both bottom- up salient regions and top-down semantic features. In nor- mal dri ving scenarios, DiffAttn e xhibits enhanced top-do wn reasoning capabilities. For instance, in Fig. 5 (a)(b), while baseline models like AD A restrict attention to the forward view , DiffAttn additionally attends to safety-critical elements such as overtaking vehicles and traffic signs. In complex and hazardous situations, DiffAttn shows precision in localizing latent risks. For instance, in accident-prone scenarios shown in Fig. 5 (d)(g)(h), DiffAttn reliably detects high-risk agents like crossing bicyclists and pedestrians, while simultaneously maintaining aw areness of the intended path and contextual cues. By contrast, SCAFNet and Gate-D AP either miss critical hazards or produce attention artifacts. At intersec- tions and under adverse conditions, DiffAttn closely aligns with human driv ers’ attention patterns. As shown in Fig. 5 (k)(n)(q), DiffAttn jointly focuses on traffic lights, STOP signs, and roadway geometry , whereas competing models often neglect these top-down semantic features or scatter attention to irrelev ant background elements. These results highlight DiffAttn’ s ability to balance immediate local haz- ards with higher-lev el dri ving semantics, producing attention maps with richer spatial details and stronger consistenc y with human gaze patterns. D. 
Ablation Studies This subsection presents the results of ablation studies on our experimental setting, where most experiments were conducted on two challenging datasets, D AD A-2000 and BDD-A, to validate both the ef fectiv eness and the underlying rationale of our proposed algorithm. Ablation study on LLM-based semantic enhancement. T able II reports results on the BDD-A and DAD A-2000 datasets when the LLM layer is remov ed or replaced with the 28 th layer of DeepSeek-R1-Distill-Qwen-1.5B [36], follow- ing LLM4Seg [22]. Removing the LLM layer consistently degrades performance, indicating that it provides essential semantic cues for accurate attention prediction while intro- ducing only a small parameter overhead. In addition, loading pretrained weights from LLaMA or DeepSeek consistently improv es performance, and using a frozen layer outperforms a trainable one. This suggests that the pretrained LLM’ s ability to model long-range dependencies and capture rich global semantic representations that cannot be reliably fine- tuned from the limited, task-specific saliency data. Further- more, despite from text to visual modality , LLaMA achiev es slightly better results than DeepSeek v ariant, likely due to the rich, abstract semantic representations it encodes from large-scale text corpora, which provide high-level guidance for attention allocation in visual scenes. In Fig. 6 , we present a qualitati ve comparison of salienc y prediction results with dif ferent LLM options. Specifically , in Fig. 6 (a), when the ego-vehicle is steering left and entering the main road, the proposed LLM-enhanced variant allocates attention not only to the distant point along the main road, which is primarily focused on by the non-enhanced variant or the CLIP-enhanced SalM 2 method, but also to the vehicles parked on the opposite roadside. Meanwhile, in Fig. 
6 (b), the LLM-enhanced variant attends strongly to the driving trajectory of the vehicle crossing ahead, while also noticing a road sign at the intersection to av oid potential conflicts or violations of traffic rules. These examples indicate that our proposed LLM-enhanced method could better compre- hend traffic scenes and allocate more top-down attention to ev ent-driven features that are critical for driving safety . In addition, as reported in [20], SalM 2 model requires square- shaped inputs due to constraints of CLIP model, whereas our T ABLE I: T est performance on four datasets (“I” indicates single image input. “V” implies video sequence. “S” indicates single modal of RGB image. “M” indicates multiple modals of input such as semantic segmentation. “#” indicates number of computation parameters. Results best in bold , second best underlined. “-” implies metrics not reported. “N/A ” indicates no implementation due to data requirement.) Dataset T rafficGaze D ADA-2000 BDD-A DrFixD-rainy Method Input #. 
Metric columns are grouped by dataset, left to right: TrafficGaze (KLD, CC, SIM, NSS, AUC-J), DADA-2000 (KLD, CC, SIM, NSS, AUC-J), BDD-A (KLD, CC, SIM), and DrFixD-rainy (KLD, CC, SIM, NSS, AUC-J).

| Method | Input | #Params ↓ | KLD ↓ | CC ↑ | SIM ↑ | NSS ↑ | AUC-J ↑ | KLD ↓ | CC ↑ | SIM ↑ | NSS ↑ | AUC-J ↑ | KLD ↓ | CC ↑ | SIM ↑ | KLD ↓ | CC ↑ | SIM ↑ | NSS ↑ | AUC-J ↑ |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| MLNet [26] | I+S | 15M | 0.87 | 0.87 | 0.45 | 5.69 | 0.90 | 11.78 | 0.04 | 0.07 | 0.30 | 0.59 | 1.20 | 0.61 | 0.43 | 3.69 | 0.79 | 0.63 | 3.90 | 0.93 |
| ACLNet [27] | V+S | - | 0.27 | 0.91 | 0.77 | 5.73 | 0.95 | 1.95 | 0.46 | 0.30 | 3.23 | 0.93 | 1.12 | 0.61 | 0.46 | - | - | - | - | - |
| CPFE [28] | I+S | - | 0.26 | 0.89 | 0.77 | 4.33 | 0.94 | 2.18 | 0.33 | 0.21 | 2.43 | 0.91 | 1.65 | 0.42 | 0.30 | - | - | - | - | - |
| TASED-Net [29] | V+S | 21M | 1.43 | 0.94 | 0.79 | 5.73 | 0.97 | 1.78 | 0.46 | 0.31 | 3.20 | 0.92 | 1.24 | 0.55 | 0.42 | 0.85 | 0.84 | 0.59 | 4.21 | 0.95 |
| CDNN [12] | I+S | <1M | 0.29 | 0.95 | 0.78 | 5.83 | 0.97 | 1.83 | 0.43 | 0.31 | 2.93 | 0.94 | 1.14 | 0.62 | 0.45 | 0.52 | 0.82 | 0.63 | 4.11 | 0.95 |
| SCAFNet [14] | V+M | 55M | 0.66 | 0.94 | 0.77 | 6.10 | 0.98 | 2.19 | 0.50 | 0.37 | 3.34 | 0.92 | 1.48 | 0.56 | 0.40 | 1.87 | 0.84 | 0.67 | 4.17 | 0.94 |
| DrFixD-rainy [30] | V+S | 40M | 0.28 | 0.94 | 0.78 | 6.01 | 0.98 | 1.78 | 0.45 | 0.29 | 3.00 | 0.94 | 1.09 | 0.64 | 0.47 | 0.47 | 0.85 | 0.67 | 4.19 | 0.96 |
| FBLNet [31] | I+S | 87M | 0.46 | 0.90 | 0.69 | 6.50 | 0.97 | 1.92 | 0.50 | 0.33 | 4.13 | 0.95 | 1.40 | 0.64 | 0.47 | 0.50 | 0.85 | 0.69 | 4.29 | 0.95 |
| SCOUT+ [32] | V+M | 5M | 0.39 | 0.91 | 0.72 | 5.35 | 0.97 | N/A | N/A | N/A | N/A | N/A | 1.04 | 0.63 | 0.48 | 0.50 | 0.84 | 0.65 | 3.95 | 0.95 |
| SalM² [20] | I+S | <0.1M | 0.28 | 0.94 | 0.78 | 5.90 | 0.98 | 1.71 | 0.37 | 0.31 | 2.10 | 0.92 | 1.08 | 0.64 | 0.47 | 0.47 | 0.86 | 0.68 | 4.31 | 0.95 |
| MTSF [33] | V+S | 194M | 0.26 | 0.96 | 0.82 | 5.98 | 0.98 | 1.61 | 0.51 | 0.36 | 3.44 | 0.93 | 1.61 | 0.51 | 0.36 | 0.62 | 0.77 | 0.61 | 4.02 | 0.96 |
| DPSNN [11] | I+S | 8M | 0.25 | 0.95 | 0.80 | 6.07 | 0.98 | 1.84 | 0.43 | 0.30 | 2.89 | 0.94 | 1.52 | 0.53 | 0.43 | 0.41 | 0.85 | 0.71 | 4.39 | 0.97 |
| SalEMA [34] | V+S | 218M | 0.30 | 0.93 | 0.77 | 5.81 | 0.97 | 1.65 | 0.48 | 0.33 | 3.26 | 0.95 | 1.20 | 0.60 | 0.45 | 0.47 | 0.85 | 0.67 | 4.11 | 0.95 |
| Gate-DAP [16] | V+M | 106M | 0.33 | 0.92 | 0.77 | 5.92 | 0.98 | 1.65 | 0.52 | 0.36 | 3.14 | - | 1.12 | 0.61 | 0.49 | 0.48 | 0.85 | 0.69 | 4.21 | 0.97 |
| Fu et al. [35] | I+S | 41M | 0.26 | 0.93 | 0.73 | - | - | 2.30 | 0.47 | 0.34 | 3.21 | 0.91 | - | - | - | - | - | - | - | - |
| DiffAttn (Ours) | I+S | 92M | 0.24 | 0.95 | 0.82 | 6.11 | 0.99 | 1.58 | 0.51 | 0.36 | 3.49 | 0.95 | 1.09 | 0.64 | 0.50 | 0.40 | 0.88 | 0.73 | 4.59 | 0.97 |

[Figure 5: four panels (TrafficGaze, DADA-2000, BDD-A, DrFixD-rainy), each showing the color input, ground truth (GT), DiffAttn prediction, and baseline predictions.]

Fig. 5: Qualitative results on TrafficGaze: (a) Surrounding vehicle driving in right lane; (b) Changing to right lane with a truck ahead; (c) Straight driving with a traffic sign ahead. Qualitative results on DADA-2000: (d) Motorcycle crossing; (e) Two trucks ahead; (f) Nearby truck changing lane ahead; (g) Pedestrian crossing; (h) Turning right with collision risk involving a taxi; (i) Pedestrian running in front of ego-vehicle. Qualitative results on BDD-A: (j) Vehicle crossing ahead; (k) Straight driving with a traffic light ahead; (l) Driving past parked cars; (m) Lane change with a braking vehicle ahead; (n) Approaching a STOP line. Qualitative results on DrFixD-rainy: (o) Entering a main road with congested traffic; (p) Nearby left vehicle changing into the ego lane; (q) Driving through a green light; (r) Driving on a rural road with a bicyclist on the right; (s) Pedestrians standing at the roadside.

TABLE II: Ablation study on LLM-based semantic enhancement (“F” and “T” denote frozen and trainable parameters, respectively). Metric columns are grouped by dataset, left to right: BDD-A (KLD, CC, SIM), then DADA-2000 (KLD, CC, SIM, NSS, AUC-J).

| Method | #Params ↓ | KLD ↓ | CC ↑ | SIM ↑ | KLD ↓ | CC ↑ | SIM ↑ | NSS ↑ | AUC-J ↑ |
|---|---|---|---|---|---|---|---|---|---|
| w/o $\mathcal{F}_{\mathrm{LLM}}$ | 91M | 1.126 | 0.632 | 0.506 | 1.639 | 0.502 | 0.377 | 3.472 | 0.949 |
| LLaMA-F | 92M | 1.087 | 0.636 | 0.502 | 1.582 | 0.510 | 0.360 | 3.486 | 0.949 |
| LLaMA-T | 153M | 1.114 | 0.632 | 0.501 | 1.606 | 0.507 | 0.370 | 3.493 | 0.949 |
| DeepSeek-F | 92M | 1.097 | 0.634 | 0.497 | 1.660 | 0.502 | 0.381 | 3.474 | 0.949 |
| DeepSeek-T | 139M | 1.113 | 0.629 | 0.494 | 1.684 | 0.495 | 0.378 | 3.427 | 0.948 |

proposed LLM-enhancement method is considerably more flexible with respect to input resolution.

Fig. 6: Qualitative comparison of saliency prediction results: with LLM enhancement (second row), without LLM enhancement (third row), and CLIP-based method SalM² [20] (last row).

Ablation study on denoising process. To further investigate the refinement process of denoising, we visualize intermediate results in Fig. 7. As illustrated, our model converges rapidly within only a few steps, transitioning from random noise to dispersed but plausible clusters of attention, and ultimately forming a human-like attention distribution.

[Figure 7: examples (a) and (b), each showing intermediate predictions at $\tau = 2$, $\tau = 5$, $\tau = 10$, and $\tau = T_e$, followed by the ground truth.]

Fig. 7: Visualization of the denoising process ($\tau$: current step).

Meanwhile, to examine the effect of denoising steps more systematically, we conduct ablation experiments on the DADA-2000 and BDD-A datasets. Specifically, we fix the total diffusion steps $T_i$ to 300 for both datasets (same as default) and consider two strategies: (1) training the model with different denoising step settings, and (2) altering the number of denoising steps only during inference without retraining. The quantitative results reported in Table III indicate that directly reducing the number of denoising steps at inference leads to severe performance degradation: on DADA-2000, CC drops from 0.510 at the default setting to 0.016 at $\tau = 2$. In contrast, when the model is trained with reduced denoising steps, it maintains relatively strong performance (CC of 0.509 on DADA-2000 with $T_e = 2$).
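To make the two strategies above concrete, the sketch below shows one common way of choosing which of the $T_i = 300$ diffusion timesteps are visited when only a handful of denoising steps are run. The text does not specify the sampler or the timestep spacing used by DiffAttn, so the even spacing here is an assumption, and the helper names `denoising_timesteps` and `step_pairs` are hypothetical.

```python
def denoising_timesteps(total_steps: int, num_steps: int) -> list[int]:
    """Pick num_steps evenly spaced timesteps out of total_steps.

    Returned in descending order (noisiest first). Even spacing is an
    assumption for illustration, not the paper's documented schedule.
    """
    if not 1 <= num_steps <= total_steps:
        raise ValueError("num_steps must be between 1 and total_steps")
    last = total_steps - 1
    if num_steps == 1:
        return [last]
    return [
        round(last * (num_steps - 1 - i) / (num_steps - 1))
        for i in range(num_steps)
    ]


def step_pairs(timesteps: list[int]) -> list[tuple[int, int]]:
    """Pair each visited timestep with the next one.

    The final pair targets -1, a conventional marker for the clean sample.
    """
    return list(zip(timesteps, timesteps[1:] + [-1]))


if __name__ == "__main__":
    # Step counts taken from the ablation: 16 (DADA-2000 default), 10, 5, 2.
    for n in (16, 10, 5, 2):
        print(n, denoising_timesteps(300, n))
```

Under this assumed schedule, reducing the step count from 16 to 2 widens the jump between consecutive visited timesteps from roughly 20 to 299, which gives a sense of how different the inference-only setting in strategy (2) is from the schedule the model was trained with.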
Although configurations trained with fewer denoising steps do not surpass the default experimental setting, they still achieve competitive results compared with some baselines, even under a small number of denoising steps. Representative qualitative results are shown in Fig. 8, which further highlight that our model can already reach competitive performance with as few as 2 to 5 denoising steps. Nevertheless, for the main experiments we adopt 15 or 16 denoising steps (e.g., $T_e = 16$ on DADA-2000 and $T_e = 15$ on BDD-A), as this setting yields consistently superior results on SoTA benchmarks. Further exploration is required regarding model deployment, since increasing the number of denoising steps inevitably leads to higher GPU consumption and reduced inference speed. Even so, the current configuration runs at 8 FPS, which remains practically feasible.

TABLE III: Ablation study on denoising steps $T_e$. Metric columns are grouped by dataset, left to right: DADA-2000 ($T_e = 16$; KLD, CC, SIM, NSS, AUC-J), then BDD-A ($T_e = 15$; KLD, CC, SIM).

| Method | KLD ↓ | CC ↑ | SIM ↑ | NSS ↑ | AUC-J ↑ | KLD ↓ | CC ↑ | SIM ↑ |
|---|---|---|---|---|---|---|---|---|
| $\tau = T_e$ | 1.582 | 0.510 | 0.360 | 3.486 | 0.949 | 1.087 | 0.636 | 0.502 |
| (1) Train model with different denoising steps | | | | | | | | |
| $T_e = 10$ | 1.595 | 0.507 | 0.361 | 3.465 | 0.949 | 1.100 | 0.634 | 0.502 |
| $T_e = 5$ | 1.606 | 0.506 | 0.374 | 3.488 | 0.950 | 1.090 | 0.635 | 0.500 |
| $T_e = 2$ | 1.586 | 0.509 | 0.366 | 3.486 | 0.950 | 1.096 | 0.634 | 0.500 |
| (2) Change denoising steps without training model | | | | | | | | |
| $\tau = 10$ | 1.626 | 0.505 | 0.368 | 3.451 | 0.948 | 3.065 | 0.099 | 0.108 |
| $\tau = 5$ | 1.926 | 0.447 | 0.268 | 3.034 | 0.930 | 2.687 | 0.161 | 0.133 |
| $\tau = 2$ | 3.472 | 0.016 | 0.060 | 0.117 | 0.612 | 3.096 | 0.037 | 0.093 |

[Figure 8: examples (a) to (c), each showing predictions for $T_e = 2$, $T_e = 5$, $T_e = 10$, and the default $T_e$ (15 or 16).]

Fig. 8: Visualization of denoising results with different $T_e$.

IV. CONCLUSION

We propose DiffAttn, a diffusion-based framework for drivers’ visual attention prediction that formulates the task as a conditional diffusion-denoising process aligned with Gaussian-like human attention patterns.
A Swin Transformer encoder with a multi-scale conditional decoding design captures both local details and global scene context, while an LLM layer enhances top-down semantic representations for safety-critical cues. Experiments on four public datasets demonstrate the SoTA performance of our method, which surpasses multiple video-based, top-down-feature-driven, and LLM-enhanced methods. Our findings offer prospects for modeling drivers’ visual behavior and provide insights into applying visual attention prediction to intelligent human-machine interaction and drivers’ state measurement.

V. ACKNOWLEDGMENT

This work was jointly supported by the National Natural Science Foundation of China under Grant 52502518, CAS Major Project under Grant RCJJ-145-24-14, the Open Fund Project of State Key Laboratory of Intelligent Green Vehicle and Mobility under Grant KFY260407, and Tsinghua University-Toyota Joint Research Center for AI-Technology of Automated Vehicle under Grant TTAD-2025-05.

REFERENCES

[1] Q. Li, Z. Wang, W. Wang, and Q. Yuan, “Understanding driver preferences for secondary tasks in highly autonomous vehicles,” in International Conference on Man-Machine-Environment System Engineering. Springer, 2022, pp. 126–133.
[2] W. Liu, Q. Li, W. Wang, Z. Wang, C. Zeng, and B. Cheng, “Deep learning based take-over performance prediction and its application on intelligent vehicles,” IEEE Transactions on Intelligent Vehicles, 2024.
[3] W. Liu, Q. Li, Z. Wang, W. Wang, C. Zeng, and B. Cheng, “A literature review on additional semantic information conveyed from driving automation systems to drivers through advanced in-vehicle hmi just before, during, and right after takeover request,” International Journal of Human–Computer Interaction, vol. 39, no. 10, pp. 1995–2015, 2023.
[4] J. Qiu, C. Jiang, and H. Wang, “Etformer: An efficient transformer based on multimodal hybrid fusion and representation learning for rgb-dt salient object detection,” IEEE Signal Processing Letters, 2024.
[5] J. Qiu, C. Jiang, P. Zhang, and H. Wang, “Evsmap: An efficient volumetric-semantic mapping approach for embedded systems,” in 2024 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). IEEE, 2024, pp. 9839–9846.
[6] S. Baee, E. Pakdamanian, I. Kim, L. Feng, V. Ordonez, and L. Barnes, “Medirl: Predicting the visual attention of drivers via maximum entropy deep inverse reinforcement learning,” in Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021, pp. 13178–13188.
[7] E. Pakdamanian, S. Sheng, S. Baee, S. Heo, S. Kraus, and L. Feng, “Deeptake: Prediction of driver takeover behavior using multimodal data,” in Proceedings of the 2021 CHI Conference on Human Factors in Computing Systems, 2021, pp. 1–14.
[8] Q. Li, Y. Su, W. Wang, Z. Wang, J. He, G. Li, C. Zeng, and B. Cheng, “Latent hazard notification for highly automated driving: Expected safety benefits and driver behavioral adaptation,” IEEE Transactions on Intelligent Transportation Systems, 2023.
[9] L. Itti and C. Koch, “A saliency-based search mechanism for overt and covert shifts of visual attention,” Vision Research, vol. 40, no. 10-12, pp. 1489–1506, 2000.
[10] I. Kotseruba and J. K. Tsotsos, “Attention for vision-based assistive and automated driving: a review of algorithms and datasets,” IEEE Transactions on Intelligent Transportation Systems, 2022.
[11] S. Ji, T. Deng, F. Yan, and P. Du, “A driving position-sensitive neural network for driver fixation prediction,” in 2022 41st Chinese Control Conference (CCC). IEEE, 2022, pp. 6660–6665.
[12] T. Deng, H. Yan, L. Qin, T. Ngo, and B. Manjunath, “How do drivers allocate their potential attention? driving fixation prediction via convolutional neural networks,” IEEE Transactions on Intelligent Transportation Systems, vol. 21, no. 5, pp. 2146–2154, 2019.
[13] Y. Xia, D. Zhang, J. Kim, K. Nakayama, K. Zipser, and D. Whitney, “Predicting driver attention in critical situations,” in Computer Vision–ACCV 2018: 14th Asian Conference on Computer Vision, Perth, Australia, December 2–6, 2018, Revised Selected Papers, Part V 14. Springer, 2019, pp. 658–674.
[14] J. Fang, D. Yan, J. Qiao, J. Xue, and H. Yu, “Dada: Driver attention prediction in driving accident scenarios,” IEEE Transactions on Intelligent Transportation Systems, vol. 23, no. 6, pp. 4959–4971, 2021.
[15] A. Dosovitskiy, L. Beyer, A. Kolesnikov, D. Weissenborn, X. Zhai, T. Unterthiner, M. Dehghani, M. Minderer, G. Heigold, S. Gelly et al., “An image is worth 16x16 words: Transformers for image recognition at scale,” arXiv preprint, 2020.
[16] T. Zhao, X. Bai, J. Fang, and J. Xue, “Gated driver attention predictor,” in 2023 IEEE 26th International Conference on Intelligent Transportation Systems (ITSC). IEEE, 2023, pp. 270–276.
[17] E. E. Stewart, M. Valsecchi, and A. C. Schütz, “A review of interactions between peripheral and foveal vision,” Journal of Vision, vol. 20, no. 12, pp. 2–2, 2020.
[18] Y. Song and S. Ermon, “Improved techniques for training score-based generative models,” Advances in Neural Information Processing Systems, vol. 33, pp. 12438–12448, 2020.
[19] Z. Liu, Y. Lin, Y. Cao, H. Hu, Y. Wei, Z. Zhang, S. Lin, and B. Guo, “Swin transformer: Hierarchical vision transformer using shifted windows,” in Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021, pp. 10012–10022.
[20] C. Zhao, W. Mu, X. Zhou, W. Liu, F. Yan, and T. Deng, “Salm²: An extremely lightweight saliency mamba model for real-time cognitive awareness of driver attention,” in Proceedings of the AAAI Conference on Artificial Intelligence, vol. 39, no. 2, 2025, pp. 1647–1655.
[21] A. Radford, J. W. Kim, C. Hallacy, A. Ramesh, G. Goh, S. Agarwal, G. Sastry, A. Askell, P. Mishkin, J. Clark et al., “Learning transferable visual models from natural language supervision,” in International Conference on Machine Learning. PMLR, 2021, pp. 8748–8763.
[22] F. Tang, W. Ma, Z. He, X. Tao, Z. Jiang, and S. K. Zhou, “Pre-trained llm is a semantic-aware and generalizable segmentation booster,” arXiv preprint arXiv:2506.18034, 2025.
[23] Y. Xia, D. Zhang, J. Kim, K. Nakayama, K. Zipser, and D. Whitney, “Predicting driver attention in critical situations,” in Computer Vision–ACCV 2018: 14th Asian Conference on Computer Vision, Perth, Australia, December 2–6, 2018, Revised Selected Papers, Part V 14. Springer, 2019, pp. 658–674.
[24] H. Tian, T. Deng, and H. Yan, “Driving as well as on a sunny day? predicting driver’s fixation in rainy weather conditions via a dual-branch visual model,” IEEE/CAA Journal of Automatica Sinica, vol. 9, no. 7, pp. 1335–1338, 2022.
[25] A. Dubey, A. Jauhri, A. Pandey, A. Kadian, A. Al-Dahle, A. Letman, A. Mathur, A. Schelten, A. Yang, A. Fan et al., “The llama 3 herd of models,” arXiv e-prints, pp. arXiv–2407, 2024.
[26] M. Cornia, L. Baraldi, G. Serra, and R. Cucchiara, “A deep multi-level network for saliency prediction,” in 2016 23rd International Conference on Pattern Recognition (ICPR). IEEE, 2016, pp. 3488–3493.
[27] W. Wang, J. Shen, F. Guo, M.-M. Cheng, and A. Borji, “Revisiting video saliency: A large-scale benchmark and a new model,” in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018, pp. 4894–4903.
[28] T. Zhao and X. Wu, “Pyramid feature attention network for saliency detection,” in Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2019, pp. 3085–3094.
[29] K. Min and J. J. Corso, “Tased-net: Temporally-aggregating spatial encoder-decoder network for video saliency detection,” in Proceedings of the IEEE/CVF International Conference on Computer Vision, 2019, pp. 2394–2403.
[30] T. Deng, L. Jiang, Y. Shi, J. Wu, Z. Wu, S. Yan, X. Zhang, and H. Yan, “Driving visual saliency prediction of dynamic night scenes via a spatio-temporal dual-encoder network,” IEEE Transactions on Intelligent Transportation Systems, vol. 25, no. 3, pp. 2413–2423, 2023.
[31] Y. Chen, Z. Nan, and T. Xiang, “Fblnet: Feedback loop network for driver attention prediction,” in Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023, pp. 13371–13380.
[32] I. Kotseruba and J. K. Tsotsos, “Scout+: Towards practical task-driven drivers’ gaze prediction,” in 2024 IEEE Intelligent Vehicles Symposium (IV). IEEE, 2024, pp. 1927–1932.
[33] L. Jin, B. Ji, B. Guo, H. Wang, Z. Han, and X. Liu, “Mtsf: Multi-scale temporal–spatial fusion network for driver attention prediction,” IEEE Transactions on Intelligent Transportation Systems, 2024.
[34] P. Linardos, E. Mohedano, J. J. Nieto, N. E. O’Connor, X. Giro-i Nieto, and K. McGuinness, “Simple vs complex temporal recurrences for video saliency prediction,” arXiv preprint, 2019.
[35] R. Fu, T. Huang, M. Li, Q. Sun, and Y. Chen, “A multimodal deep neural network for prediction of the driver’s focus of attention based on anthropomorphic attention mechanism and prior knowledge,” Expert Systems with Applications, vol. 214, p. 119157, 2023.
[36] D. Guo, D. Yang, H. Zhang, J. Song, R. Zhang, R. Xu, Q. Zhu, S. Ma, P. Wang, X. Bi et al., “Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning,” arXiv preprint arXiv:2501.12948, 2025.
