Cost-Matching Model Predictive Control for Efficient Reinforcement Learning in Humanoid Locomotion

In this paper, we propose a cost-matching approach for optimal humanoid locomotion within a Model Predictive Control (MPC)-based Reinforcement Learning (RL) framework. A parameterized MPC formulation with centroidal dynamics is trained to approximate…

Authors: Wenqi Cai, Kyriakos G. Vamvoudakis, Sébastien Gros, Anthony Tzes

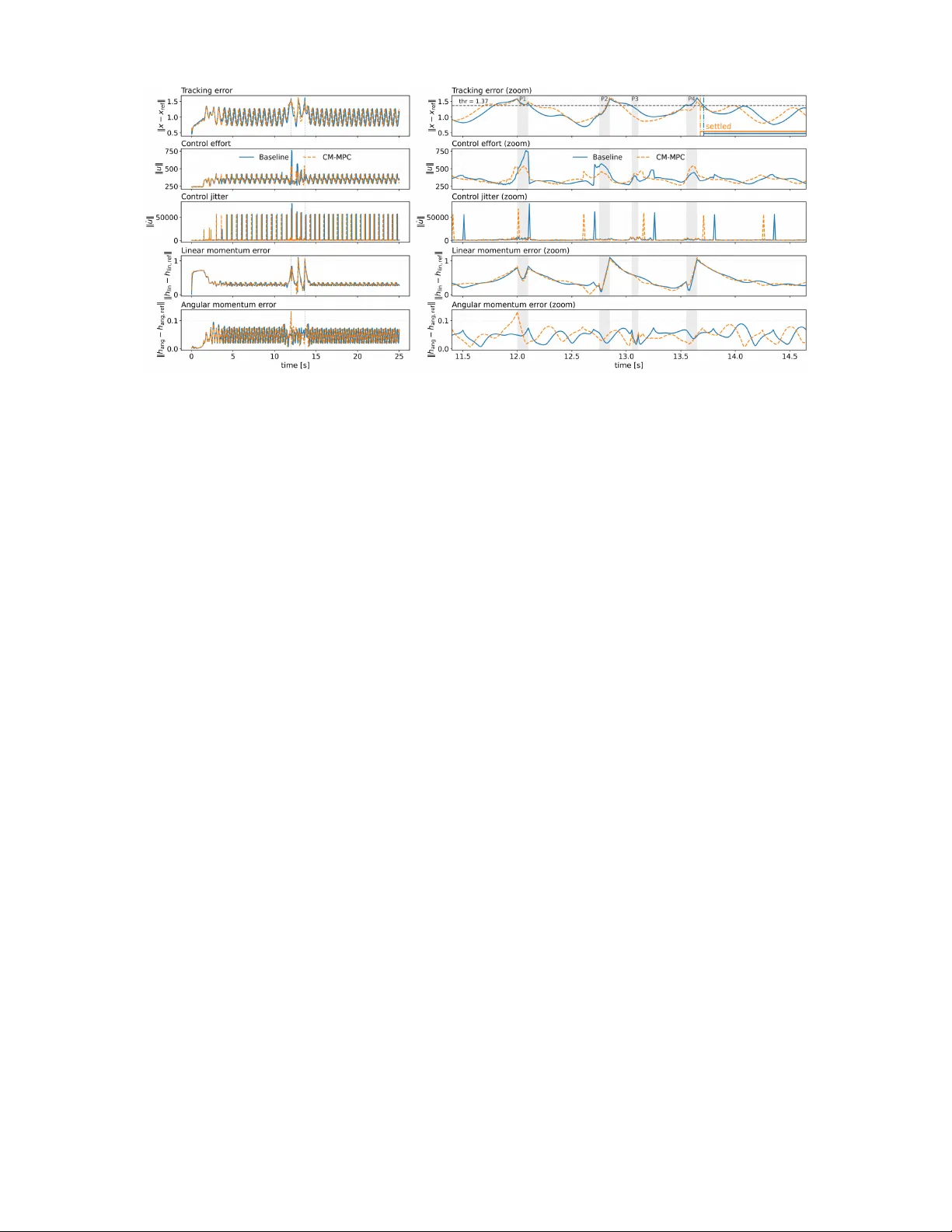

Wenqi Cai¹, Kyriakos G. Vamvoudakis², Sébastien Gros³, and Anthony Tzes¹

Abstract: In this paper, we propose a cost-matching approach for optimal humanoid locomotion within a Model Predictive Control (MPC)-based Reinforcement Learning (RL) framework. A parameterized MPC formulation with centroidal dynamics is trained to approximate the action-value function obtained from high-fidelity closed-loop data. Specifically, the MPC cost-to-go is evaluated along recorded state–action trajectories, and the parameters are updated to minimize the discrepancy between MPC-predicted values and measured returns. This formulation enables efficient gradient-based learning while avoiding the computational burden of repeatedly solving the MPC problem during training. The proposed method is validated in simulation using a commercial humanoid platform. Results demonstrate improved locomotion performance and robustness to model mismatch and external disturbances compared with manually tuned baselines.

I. INTRODUCTION

Humanoid robotics has advanced rapidly in recent years. Humanoid locomotion poses particular challenges due to intermittent contacts, high degrees of freedom, and strict safety constraints, while also requiring robustness to disturbances and modeling errors [1].

Model Predictive Control (MPC) has emerged as a dominant paradigm for humanoid locomotion because it provides a systematic mechanism to encode task objectives and enforce constraints through online optimization. To satisfy real-time requirements, many MPC pipelines rely on simplified or reduced-order models that enable lightweight computation and high update rates [2]. However, such simplified models may neglect important effects present during high-fidelity execution (e.g., contact realization, inertial coupling, and actuator or tracking-stack abstractions).
Consequently, robust performance often relies heavily on expert tuning of cost weights and terminal penalties [3]. Alternatively, richer formulations that tighten kinematic consistency or incorporate more detailed dynamics [4] can improve fidelity but typically increase computational complexity and complicate real-time deployment [5]. In practice, this trade-off is often addressed using predictive–reactive hierarchies, where an MPC planner generates reference trajectories that are executed by a higher-rate tracking module [6]. While effective for real-time operation, this architectural separation can amplify systematic planning–execution mismatches and make performance sensitive to cost design and tuning, particularly when operating with short horizons [7].

¹ Wenqi Cai and Anthony Tzes are with the Electrical Engineering Program, New York University Abu Dhabi (NYUAD), 129188, UAE. Email: wenqi.cai@nyu.edu; anthony.tzes@nyu.edu
² Kyriakos G. Vamvoudakis is with the School of Aerospace Engineering, Georgia Institute of Technology, Atlanta, GA 30332, United States. Email: kyriakos@gatech.edu
³ Sébastien Gros is with the Department of Engineering Cybernetics, Norwegian University of Science and Technology (NTNU), Trondheim, Norway. Email: sebastien.gros@ntnu.no
This work was supported in part by: a) NSF under grant Nos. SLES-2415479, CPS-2227185, b) NASA ULI under grant No. 80NSSC25M7104, and c) the NYUAD Center for AI and Robotics, funded by Tamkeen under the NYUAD Research Institute Award CG010.

Reinforcement Learning (RL) has demonstrated strong capability in learning robust locomotion behaviors directly from data with efficient runtime execution [8]. However, humanoid locomotion represents a challenging learning regime.
Training from scratch is often sample-inefficient and sensitive to reward design and curriculum choices, and successful deployments typically rely on large-scale simulation pipelines with additional mechanisms to mitigate sim-to-real discrepancies (e.g., domain randomization [9] and system identification [10]). Furthermore, purely learned policies do not naturally enforce hard safety and contact constraints [11]. These limitations have motivated control–learning formulations that connect RL with adaptive optimal control concepts and provide explicit convergence or stability analyses under disturbances [12], [13]. In this direction, MPC–learning hybrids aim to retain constrained optimization for deployment while leveraging learning to improve performance under model mismatch and uncertainty [14], [15].

In this context, this work builds upon our prior MPC-based Reinforcement Learning (MPC-RL) framework, initiated in [16] and further developed in subsequent works (e.g., [17], [18], [19]), which treats a parameterized MPC as a structured value/action-value function approximator or policy approximator. Within this framework, the predictive model, cost function, and constraints of the MPC are parameterized, and closed-loop behavior is improved by adjusting these parameters using RL signals. This approach allows learning to operate within the MPC structure, thereby preserving constraint handling and interpretability [20]. However, a key limitation arises in complex, time-critical humanoid locomotion stacks: standard gradient-based MPC-RL methods typically require repeatedly solving the MPC optimization within the learning loop, making training prohibitively expensive when the MPC itself is already operating near real-time computational limits.

Motivated by this challenge, we propose Cost-Matching MPC (CM-MPC), an efficient learning procedure that preserves the MPC-RL perspective while avoiding solve-in-the-loop training.
The central idea is to learn the MPC parameters $\theta$ by minimizing the discrepancy between an MPC surrogate cost-to-go $Q^{\mathrm{MPC}}_{\theta}$ and a measured long-horizon return $Q^{\mathrm{meas}}$ computed from closed-loop trajectories. Rather than differentiating through repeated MPC solves, $Q^{\mathrm{MPC}}_{\theta}$ is evaluated by rolling out the parameterized predictive model along recorded action segments and accumulating parameterized stage costs, together with differentiable penalties for state-constraint violations. This formulation enables efficient gradient-based learning while maintaining deployment as a standard constrained receding-horizon MPC controller.

Contributions. The contributions of this work are twofold. First, we introduce a cost-matching learning framework that extends our prior MPC-RL formulation while substantially reducing the computational burden during training by eliminating the need for repeated MPC solves in the learning loop. The proposed framework is generic and can be applied to systems formulated as Optimal Control Problems (OCPs). Second, we demonstrate the approach on humanoid locomotion by developing a constrained OCP formulation based on centroidal dynamics and validating the learning procedure in high-fidelity simulation. An open-source implementation of the framework is also provided at https://github.com/RISC-NYUAD/humanoid_mpc_cost_matching.

II. PROBLEM FORMULATION

Consider humanoid locomotion control within a hierarchical control architecture [21]. In this architecture, an upper-level nonlinear MPC planner operates at a lower frequency and generates reference commands, while a high-frequency Proportional Derivative (PD)-type tracking controller maps the MPC commands to joint torques (from $u$ to $\tilde{u}$), as shown in Fig. 1.

Fig. 1: Cost-Matching MPC-RL framework for humanoids.

In this work, we focus on the learning-based parameterization of the MPC layer, while the low-level tracking controller is treated as a fixed module.
Fig. 1 provides an overview of the proposed CM-MPC framework for humanoids, and the remaining components are introduced in the subsequent sections.

A. Centroidal Dynamics

A complete centroidal dynamics model is adopted in this work, building on the OCS2 centroidal formulation [22], [21]. Let $\mathcal{I}$ denote the inertial (world) frame and $\mathcal{B}$ the base frame attached to the robot trunk. The generalized coordinates of the robot are defined as $q = [p_b^\top, \theta_b^\top, q_j^\top]^\top \in \mathbb{R}^{6+n_j}$, where $p_b \in \mathbb{R}^3$ denotes the base position in $\mathcal{I}$, $\theta_b \in \mathbb{R}^3$ represents the base orientation (ZYX Euler angles), and $q_j \in \mathbb{R}^{n_j}$ denotes the joint angles.

The state $x := [h_{\mathrm{lin}}^\top, h_{\mathrm{ang}}^\top, q^\top]^\top \in \mathbb{R}^{12+n_j}$ concatenates the centroidal momentum $h$ and the configuration $q$, where $h_{\mathrm{lin}}, h_{\mathrm{ang}} \in \mathbb{R}^3$ denote the linear and angular momenta expressed in $\mathcal{I}$. The control input $u \in \mathbb{R}^{12+n_j}$ consists of the contact wrenches for the left ($L$) and right ($R$) feet together with the joint velocities,

$$u := [w_{c,L}^\top, w_{c,R}^\top, v_j^\top]^\top,$$

where $w_{c,i} = [f_{c,i}^\top, m_{c,i}^\top]^\top \in \mathbb{R}^6$ represents the contact force and moment exerted at the $i$-th foot ($i \in \{L, R\}$), and $v_j \in \mathbb{R}^{n_j}$ denotes the commanded joint velocity. The system dynamics $\dot{x} = f_{\mathrm{dyn}}(x, u)$ are

$$\dot{h}_{\mathrm{lin}} = \sum_{i \in \{L,R\}} f_{c,i} + M g, \tag{1a}$$

$$\dot{h}_{\mathrm{ang}} = \sum_{i \in \{L,R\}} \big( r_{c,i}(q) \times f_{c,i} + m_{c,i} \big), \tag{1b}$$

$$\dot{q} = \Xi(q) \, [v_b^\top, \omega_b^\top, v_j^\top]^\top, \tag{1c}$$

where $M$ denotes the total mass and $g$ is the gravitational acceleration. The vector $r_{c,i}$ denotes the moment arm from the Center of Mass (CoM) to the contact point of foot $i$, expressed in the inertial frame $\mathcal{I}$. The matrix $\Xi(q)$ maps generalized velocities to the configuration derivative $\dot{q}$. These transformation-related quantities can be efficiently computed using rigid-body algorithms provided by the Pinocchio library [23].
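To make the momentum equations (1a) and (1b) concrete, the following is a minimal sketch in plain Python that sums the contact forces, the gravity term, and the contact moments about the CoM. It is not the OCS2 or Pinocchio implementation; the function names, the 30 kg mass, and the foot positions are illustrative assumptions.

```python
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]


def add(a: Vec3, b: Vec3) -> Vec3:
    """Component-wise sum of two 3-vectors."""
    return (a[0] + b[0], a[1] + b[1], a[2] + b[2])


def cross(a: Vec3, b: Vec3) -> Vec3:
    """Cross product a x b."""
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def momentum_rates(
    forces: Sequence[Vec3],
    moments: Sequence[Vec3],
    arms: Sequence[Vec3],
    mass: float,
    gravity: Vec3 = (0.0, 0.0, -9.81),
) -> Tuple[Vec3, Vec3]:
    """Return (h_lin_dot, h_ang_dot) following (1a) and (1b).

    forces[i], moments[i]: contact force and moment of foot i (world frame).
    arms[i]: vector from the CoM to the contact point of foot i (world frame).
    """
    h_lin_dot: Vec3 = (mass * gravity[0], mass * gravity[1], mass * gravity[2])
    h_ang_dot: Vec3 = (0.0, 0.0, 0.0)
    for f, m, r in zip(forces, moments, arms):
        h_lin_dot = add(h_lin_dot, f)                    # (1a): sum of forces + M g
        h_ang_dot = add(h_ang_dot, add(cross(r, f), m))  # (1b): r x f + m
    return h_lin_dot, h_ang_dot


# Illustrative static stance: each foot carries half of the weight and the
# feet are placed symmetrically about the CoM (all numbers are hypothetical).
MASS = 30.0
half_weight: Vec3 = (0.0, 0.0, 0.5 * MASS * 9.81)
zero: Vec3 = (0.0, 0.0, 0.0)
h_lin_dot, h_ang_dot = momentum_rates(
    forces=[half_weight, half_weight],
    moments=[zero, zero],
    arms=[(0.0, 0.1, -0.6), (0.0, -0.1, -0.6)],
    mass=MASS,
)
```

In this symmetric stance both momentum rates vanish up to floating-point rounding, which is the expected equilibrium of (1a) and (1b).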
The base velocity variables $(v_b, \omega_b)$ are determined by the centroidal momentum $h$ and the joint velocities $v_j$ through

$$h = A(q) \begin{bmatrix} v_b \\ \omega_b \\ v_j \end{bmatrix} = A_b(q) \begin{bmatrix} v_b \\ \omega_b \end{bmatrix} + A_j(q)\, v_j,$$

where $A(q)$ is the Centroidal Momentum Matrix (CMM) [24], which captures the inertial coupling between base and limb motions. The matrices $A_b$ and $A_j$ denote the corresponding block partitions of the CMM. The base velocity can therefore be obtained as

$$\begin{bmatrix} v_b \\ \omega_b \end{bmatrix} = A_b(q)^{-1} \big( h - A_j(q)\, v_j \big),$$

ensuring that the kinematic evolution remains consistent with the centroidal momentum dynamics.

B. Constraints

The feasible state–input set is defined through equality and inequality constraints. To distinguish phase-dependent constraints, let $\mathcal{P}_{\mathrm{st}}$ and $\mathcal{P}_{\mathrm{sw}}$ denote the sets of stance and swing phases, respectively.

1) Inequality Constraints:

• Joint Limits. The joint configurations are restricted by mechanical limits: $q_j^{\min} \le q_j \le q_j^{\max}$.
• Foot Collision. To prevent self-collision, the minimum distance between the left and right feet, computed using Signed Distance Fields (SDF) $\Phi_{\mathrm{sdf}}$, must maintain a safety margin $d_{\mathrm{safe}}$: $\Phi_{\mathrm{sdf}}(q) \ge d_{\mathrm{safe}}$.
• Friction Cone. To avoid foot slippage, the contact force for any active foot must lie within the friction cone: $\sqrt{(f^{x}_{c,i})^2 + (f^{y}_{c,i})^2} \le \mu f^{z}_{c,i}, \ \forall i \in \mathcal{P}_{\mathrm{st}}$.
• Center of Pressure (CoP). To maintain contact stability with foot dimensions $(d_x, d_y)$, the CoP must remain within the support region: $|m^{x}_{c,i}| \le d_y f^{z}_{c,i}, \ |m^{y}_{c,i}| \le d_x f^{z}_{c,i}, \ \forall i \in \mathcal{P}_{\mathrm{st}}$.

2) Equality Constraints:

• Zero Velocity. During stance, the foot maintains rigid ground contact and the linear velocity of the foot contact frame is constrained to zero: $v_{c,i} = J_{c,i}(q)\, \dot{q} = 0, \ \forall i \in \mathcal{P}_{\mathrm{st}}$, where $J_{c,i}(q)$ denotes the contact Jacobian.
• Zero Wrench. Feet in the swing phase are contact-free, and their contact wrench must vanish: $w_{c,i} = 0, \ \forall i \in \mathcal{P}_{\mathrm{sw}}$.
• Normal Velocity Tracking. The normal ($z$-axis) velocity of the swing foot is constrained to follow a reference profile $v^{z}_{\mathrm{ref}}$ to ensure accurate landing: $v^{z}_{c,i} = v^{z}_{\mathrm{ref}}(t), \ \forall i \in \mathcal{P}_{\mathrm{sw}}$.

C. Optimal Control Objective

Humanoid locomotion is formulated as a constrained OCP over a finite prediction horizon $N$. Given the centroidal dynamics and feasibility constraints, the planner seeks an optimal control sequence that minimizes

$$J_{\mathrm{MPC}} := T(x_N) + \sum_{i=0}^{N-1} L(x_i, u_i), \tag{2}$$

where $L(\cdot)$ and $T(\cdot)$ denote the stage and terminal costs, respectively. The stage cost is composed of

$$L = L_{\mathrm{trac}} + L_{\mathrm{base}} + L_{\mathrm{com}} + L_{\mathrm{swin}} + L_{\mathrm{torq}}, \tag{3}$$

capturing reference tracking, dynamic stability, and physical plausibility. Specifically:

• We enforce tracking of the reference state trajectory $x^{\mathrm{ref}}$ while regularizing the control effort: $L_{\mathrm{trac}} = \| x - x^{\mathrm{ref}} \|^2_{Q} + \| u - u^{\mathrm{ref}} \|^2_{R}$.
• A task-space kinematic penalty is imposed on a base-attached frame to regularize upper-body motion: $L_{\mathrm{base}} = \| e_{\mathrm{base}}(x, u) \|^2_{Q_{\mathrm{base}}}$, where $e_{\mathrm{base}} \in \mathbb{R}^{18}$ collects the task-space tracking errors. This term mitigates upper-body oscillations and promotes an upright posture during locomotion.
• To improve balance robustness, the horizontal CoM position is regularized toward the midpoint of the active foot contacts: $L_{\mathrm{com}} = \| p^{xy}_{\mathrm{com}}(q) - p^{xy}_{\mathrm{mid}}(q) \|^2_{Q_{\mathrm{com}}}$, where $p^{xy}_{\mathrm{com}}$ denotes the CoM position projected onto the horizontal plane, and $p^{xy}_{\mathrm{mid}} := \tfrac{1}{2} \big( p^{xy}_{c,L}(q) + p^{xy}_{c,R}(q) \big)$ denotes the midpoint between the left and right foot contacts.
• To regulate foot clearance and landing orientation, deviations of the swing-foot pose and twist from their references are penalized: $L_{\mathrm{swin}} = \sum_{i \in \mathcal{P}_{\mathrm{sw}}} \| e_{\mathrm{sw},i}(x, u) \|^2_{Q_{\mathrm{sw}}}$, where $e_{\mathrm{sw},i} \in \mathbb{R}^{18}$ denotes the task-space tracking error of the swing-foot frame relative to its reference trajectory.
• To discourage contact force distributions that generate large joint torques, the contact wrench is regularized through the induced generalized torques: $L_{\mathrm{torq}} = \sum_{i \in \mathcal{P}_{\mathrm{st}}} \| J_{c,i}(q)^\top w_{c,i} \|^2_{Q_{\mathrm{torq}}}$, where $J_{c,i}(q)$ denotes the contact Jacobian.

The terminal cost is defined as a quadratic penalty on the terminal state,

$$T(x_N) = \| x_N - x^{\mathrm{ref}}_N \|^2_{Q_f}. \tag{4}$$

The above OCP provides a structured mechanism for generating feasible locomotion behaviors. Nevertheless, model mismatch and unmodeled effects can lead to suboptimal MPC policies and degraded closed-loop performance. To address this issue, the next section introduces a data-driven adaptation of the MPC parameterization using rollout data collected during execution.

III. COST-MATCHING MPC-BASED REINFORCEMENT LEARNING

We present the cost-matching method using the general Markov decision process (MDP) notation in order to emphasize that the proposed approach applies to any problem that can be formulated as a constrained OCP and for which real-world data is available.

A. Parameterized MPC as a Q Function

Consider a discounted infinite-horizon MDP with state $s \in \mathcal{S}$, action $a \in \mathcal{A}$, stage cost $L(s, a)$, and discount factor $\gamma \in (0, 1]$. The objective is to minimize the expected discounted cumulative cost. To obtain a structured and differentiable approximation of the optimal policy and its associated action-value function, we employ a finite-horizon parameterized MPC scheme as a function approximator. The parameter vector $\theta \in \mathbb{R}^p$ jointly parameterizes the predictive model and cost functions of the MPC, enabling data-driven adaptation in the presence of model mismatch and uncertainties.

a) Parameterized MPC scheme: Given a measured state $s$, the horizon-$N$ parameterized MPC problem is

$$\min_{x, u} \ T_{\theta}(x_N) + \sum_{i=0}^{N-1} L_{\theta}(x_i, u_i), \tag{5a}$$

$$\text{s.t.} \ \ \forall i = 0, \dots, N-1: \quad x_{i+1} = f_{\theta}(x_i, u_i), \quad g(u_i) \le 0, \tag{5b}$$

$$h(x_i, u_i) \le 0, \quad c(x_i, u_i) = 0, \tag{5c}$$

$$h^{f}(x_N) \le 0, \quad c^{f}(x_N) = 0, \tag{5d}$$

$$x_0 = s. \tag{5e}$$

The predictive model $f_{\theta}$, stage cost $L_{\theta}$, and terminal cost $T_{\theta}$ are parameterized and adapted through learning.

b) MPC-induced action-value function: To enable learning without repeatedly solving the optimization problem, we define the MPC-induced action-value along given actions recorded from the real system. For a length-$N$ action segment $a_{0:N-1} := \{a_0, \dots, a_{N-1}\}$, the predicted rollout is

$$x_0 = s, \qquad x_{i+1} = f_{\theta}(x_i, a_i), \quad i = 0, \dots, N-1. \tag{6}$$

Note that even when the recorded actions are feasible on the real system, the predicted trajectory generated by (6) may violate state-related constraints due to model mismatch. Consequently, constraint-violation penalties are incorporated directly into the rollout-based value evaluation. Define the per-stage and terminal violation residuals

$$r_i := \begin{bmatrix} [h_{\theta}(x_i, a_i)]_{+} \\ c_{\theta}(x_i, a_i) \end{bmatrix}, \qquad r_N := \begin{bmatrix} [h^{f}_{\theta}(x_N)]_{+} \\ c^{f}_{\theta}(x_N) \end{bmatrix}, \tag{7}$$

where $[\cdot]_{+}$ denotes the element-wise operator $[y]_{+} = \max(y, 0)$. Let the weights $W, W_f \succ 0$, and define

$$\phi(r_i) := \| r_i \|^2_{W}, \qquad \phi_f(r_N) := \| r_N \|^2_{W_f}. \tag{8}$$

The MPC-induced action-value function is then defined as

$$Q^{\mathrm{MPC}}_{\theta}(s, a_{0:N-1}) := T_{\theta}(x_N) + \phi_f(r_N) + \sum_{i=0}^{N-1} \big( L_{\theta}(x_i, a_i) + \phi(r_i) \big), \tag{9}$$

where $\{x_i\}$ and $\{r_i\}$ are generated by (6) and (7). Importantly, (9) can be evaluated through forward rollout and cost accumulation, and is differentiable with respect to $\theta$, without requiring the solution of (5) during training.

B. Action-Value Evaluation via Data Rollouts

Consider a dataset of trajectories collected from the real environment, $\mathcal{D} = \{(s_k, a_k, \ell_k)\}_{k=0}^{M-1}$, where $M$ (with $M \gg N$) denotes the trajectory length and $\ell_k = L(s_k, a_k)$ is the observed stage cost. The trajectories need not be optimal.
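The rollout-based evaluation of $Q^{\mathrm{MPC}}_{\theta}$ in (6)–(9) can be sketched for a scalar system as follows. This is a toy illustration under stated assumptions, not the paper's implementation: the state is a single number, only one inequality constraint is penalized (equality residuals are omitted), the weights $W$ and $W_f$ reduce to scalars, and all function names and numerical values are hypothetical.

```python
from typing import Callable, Sequence


def positive_part(y: float) -> float:
    """Element-wise operator [y]_+ = max(y, 0) from (7), scalar case."""
    return max(y, 0.0)


def q_mpc(
    s: float,
    actions: Sequence[float],
    f: Callable[[float, float], float],
    stage_cost: Callable[[float, float], float],
    terminal_cost: Callable[[float], float],
    h: Callable[[float, float], float],
    h_f: Callable[[float], float],
    w: float = 1.0,
    w_f: float = 1.0,
) -> float:
    """Evaluate (9) by forward rollout (6) along a recorded action segment.

    No optimization problem is solved: the recorded actions are replayed
    through the model f, and violations of h <= 0 are penalized as in (7)-(8).
    """
    x = s
    total = 0.0
    for a in actions:
        r = positive_part(h(x, a))             # stage violation residual
        total += stage_cost(x, a) + w * r * r  # L(x_i, a_i) + phi(r_i)
        x = f(x, a)                            # x_{i+1} = f(x_i, a_i)
    r_n = positive_part(h_f(x))                # terminal violation residual
    return total + terminal_cost(x) + w_f * r_n * r_n


# Hypothetical example: integrator model, quadratic costs, bound x <= 1.
value = q_mpc(
    s=0.5,
    actions=[0.5, 0.5],
    f=lambda x, a: x + a,
    stage_cost=lambda x, a: x * x + a * a,
    terminal_cost=lambda x: x * x,
    h=lambda x, a: x - 1.0,
    h_f=lambda x: x - 1.0,
    w=10.0,
    w_f=10.0,
)
print(value)  # 6.5
```

Here the predicted terminal state is 1.5, so the bound is violated by 0.5 and the terminal penalty contributes 2.5 to the total of 6.5. The rollout stays well defined even though the replayed actions drive the model outside the constraint set, which is the reason for using soft penalties at training time.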
For each time index $k$, we define the measured discounted return-to-go along the recorded trajectory as

$$Q^{\mathrm{meas}}(s_k, a_k) := \sum_{i=0}^{M-k-1} \gamma^{i} L(s_{k+i}, a_{k+i}). \tag{10}$$

This quantity represents the long-term cost accumulated by the system under the executed actions. In the proposed framework, $Q^{\mathrm{meas}}$ serves as the learning target, while $Q^{\mathrm{MPC}}_{\theta}$ provides a finite-horizon, model-based approximation evaluated along the same recorded action segment. The discrepancy between these quantities primarily reflects a systematic mismatch between the real system and the parameterized predictive model.

Given an index $k$, we compute $Q^{\mathrm{MPC}}_{\theta}$ by initializing (6) at $x_0 = s_k$ and rolling out the parameterized model over the recorded action segment $(a_k, \dots, a_{k+N-1})$. This estimate is compared with $Q^{\mathrm{meas}}$, which accumulates the discounted costs along the measured trajectory from $k$ to $M-1$. From an RL perspective, $Q^{\mathrm{MPC}}_{\theta}$ and $Q^{\mathrm{meas}}$ represent two evaluations of the action-value at the same anchor state $s_k$ for the executed action segment: a finite-horizon model-based surrogate and the measured rollout return. While typically $M \gg N$, the discount factor $\gamma$ in $Q^{\mathrm{meas}}$ and the terminal shaping $T_{\theta}(x_N)$ in $Q^{\mathrm{MPC}}_{\theta}$ compensate for the horizon mismatch. Discounting attenuates far-future costs, while the terminal term summarizes the residual cost-to-go beyond the prediction horizon. This observation highlights the importance of terminal cost design in short-horizon MPC and motivates learning its relative weight to align the surrogate with long-term performance.

C. Cost-Matching Objective and Gradient

The key idea behind the learning objective is to adjust $\theta$ such that the MPC-induced value $Q^{\mathrm{MPC}}_{\theta}$ evaluated along recorded action segments matches the long-term return $Q^{\mathrm{meas}}$ observed on the real system. Given the dataset $\mathcal{D}$ and the measured returns (10), the cost-matching objective is

$$\min_{\theta} \ \mathcal{L}(\theta) := \mathbb{E}_{\mathcal{D}} \Big[ \big( Q^{\mathrm{MPC}}_{\theta} - Q^{\mathrm{meas}} \big)^2 \Big]. \tag{11}$$

The expectation in (11) is approximated using empirical averages over mini-batches. For a fixed recorded action segment, $Q^{\mathrm{MPC}}_{\theta}$ is obtained via the forward rollout (6) and accumulation of the cost and penalty terms in (9). Therefore, $\nabla_{\theta} Q^{\mathrm{MPC}}_{\theta}$ can be computed by differentiating through the rollout recursion and the corresponding cost and penalty evaluations. The loss gradient is

$$\nabla_{\theta} \mathcal{L}(\theta) = \mathbb{E}_{\mathcal{D}} \Big[ 2 \big( Q^{\mathrm{MPC}}_{\theta} - Q^{\mathrm{meas}} \big) \nabla_{\theta} Q^{\mathrm{MPC}}_{\theta} \Big]. \tag{12}$$

Since training does not require solving (5), gradient evaluation reduces to differentiating a length-$N$ rollout and scales linearly with the horizon length $N$. The parameters are updated using a first-order optimization method,

$$\theta \leftarrow \theta - \alpha \nabla_{\theta} \mathcal{L}(\theta), \tag{13}$$

with step size $\alpha > 0$. After convergence, the learned parameters $\theta^{\star}$ define a structured MPC controller deployed online by solving (5) in a receding-horizon fashion.

D. Convergence of Cost Matching

The cost-matching update (13) is a stochastic optimization of (11). The following theorem establishes convergence to a first-order stationary point under standard smoothness and bounded-variance assumptions.

Assumption 1. For each sample $\xi = (s, a_{0:N-1}, Q^{\mathrm{meas}})$, define $q_{\xi}(\theta) := Q^{\mathrm{MPC}}_{\theta}(s, a_{0:N-1})$. Assume that $q_{\xi}(\theta)$ is continuously differentiable in $\theta$, and that there exist constants $B_Q, G_Q, L_Q > 0$ such that, for all samples $\xi$ and all $\theta, \theta'$,

$$| q_{\xi}(\theta) - Q^{\mathrm{meas}} | \le B_Q, \qquad \| \nabla q_{\xi}(\theta) \| \le G_Q, \qquad \| \nabla q_{\xi}(\theta) - \nabla q_{\xi}(\theta') \| \le L_Q \| \theta - \theta' \|.$$

Moreover, the mini-batch gradient estimator $\widehat{\nabla} \mathcal{L}(\theta)$ used in (13) is unbiased and has bounded variance, i.e.,

$$\mathbb{E} \big[ \widehat{\nabla} \mathcal{L}(\theta) \mid \theta \big] = \nabla \mathcal{L}(\theta), \qquad \mathbb{E} \big[ \| \widehat{\nabla} \mathcal{L}(\theta) - \nabla \mathcal{L}(\theta) \|^2 \mid \theta \big] \le \sigma^2. \qquad \square$$

Theorem 1 (Convergence of cost matching). Suppose that Assumption 1 holds and the loss $\mathcal{L}$ in (11) has Lipschitz-continuous gradient with constant $L_{\mathcal{L}} = 2 (G_Q^2 + B_Q L_Q)$. Let the parameter sequence $\{\theta_j\}$ be generated by $\theta_{j+1} = \theta_j - \alpha \widehat{\nabla} \mathcal{L}(\theta_j)$, with a constant step size $0 < \alpha \le 1 / L_{\mathcal{L}}$. Then, for any $K \ge 1$,

$$\frac{1}{K} \sum_{j=0}^{K-1} \mathbb{E} \big[ \| \nabla \mathcal{L}(\theta_j) \|^2 \big] \le \frac{2 \big( \mathcal{L}(\theta_0) - \mathcal{L}_{\inf} \big)}{\alpha K} + \alpha L_{\mathcal{L}} \sigma^2,$$

where $\mathcal{L}_{\inf} := \inf_{\theta} \mathcal{L}(\theta)$. In the full-batch case ($\sigma = 0$), $\mathcal{L}(\theta_j)$ is monotonically nonincreasing and $\nabla \mathcal{L}(\theta_j) \to 0$ as $j \to \infty$.

Proof. For a single sample $\xi$, the gradient of the squared matching error is

$$\nabla \big( q_{\xi}(\theta) - Q^{\mathrm{meas}} \big)^2 = 2 \big( q_{\xi}(\theta) - Q^{\mathrm{meas}} \big) \nabla q_{\xi}(\theta).$$

By Assumption 1, this gradient is Lipschitz with constant $2 (G_Q^2 + B_Q L_Q)$, hence $\nabla \mathcal{L}$ is also Lipschitz with the same constant. Using the standard descent lemma [25] for smooth objectives, we have

$$\mathcal{L}(\theta_{j+1}) \le \mathcal{L}(\theta_j) - \alpha \nabla \mathcal{L}(\theta_j)^\top \widehat{\nabla} \mathcal{L}(\theta_j) + \frac{L_{\mathcal{L}} \alpha^2}{2} \| \widehat{\nabla} \mathcal{L}(\theta_j) \|^2.$$

Taking the conditional expectation, and using unbiasedness together with the bounded-variance assumption in the form $\mathbb{E} [ \| \widehat{\nabla} \mathcal{L}(\theta_j) \|^2 \mid \theta_j ] \le \| \nabla \mathcal{L}(\theta_j) \|^2 + \sigma^2$, yields

$$\mathbb{E} [ \mathcal{L}(\theta_{j+1}) ] \le \mathbb{E} [ \mathcal{L}(\theta_j) ] - \frac{\alpha}{2} \mathbb{E} \big[ \| \nabla \mathcal{L}(\theta_j) \|^2 \big] + \frac{L_{\mathcal{L}} \alpha^2}{2} \sigma^2,$$

where the factor $1/2$ follows from $\alpha \le 1 / L_{\mathcal{L}}$, since then $1 - L_{\mathcal{L}} \alpha / 2 \ge 1/2$. Summing this inequality from $j = 0$ to $K - 1$ and dividing by $\alpha K / 2$ gives the stated bound. The full-batch case follows by setting $\sigma = 0$. ■

Theorem 1 shows that the proposed cost-matching update converges to a first-order stationary set of (11) under Assumption 1, which is compatible with our setting since training is performed over a bounded rollout buffer.

E. Constraints and Feasibility

The treatment of constraints differs between the learning and deployment phases. During training, the MPC-induced action-value function (9) incorporates state-related constraints through differentiable penalty terms based on the violation measures defined in (8). This soft-penalty formulation allows constraint violations to be evaluated along data-driven rollouts without requiring the predicted trajectory to remain feasible, which is essential when the parameterized model does not perfectly capture the true system dynamics.

After training, the learned parameters $\theta^{\star}$ define a structured MPC controller that is deployed by solving the constrained optimization problem (5) in a receding-horizon manner.
In this phase, input and state constraints are enforced as hard constraints, thereby ensuring feasibility and safety in closed-loop operation. Because the learning process shapes the MPC model and cost to match the long-term performance of the real system, the resulting controller benefits from both data-driven adaptation and the constraint-handling guarantees inherent to MPC. This separation (soft constraint handling during learning and hard constraint enforcement during online MPC execution) enables efficient gradient-based training while preserving the safety and feasibility guarantees of MPC at runtime.

IV. INSTANTIATION ON HUMANOID LOCOMOTION

This section instantiates the general cost-matching framework introduced in Section III for the humanoid locomotion OCP described in Section II. Rollouts are collected from real-world execution, and the MPC-induced value is evaluated by replaying the logged MPC commands in the parameterized predictive model initialized at the measured state. We next describe the model and cost parameterizations adopted in this locomotion setting.

A. Parameterized Model and Objective

The predictive model $f_{\theta}$ used in (6) is obtained by discretizing the centroidal dynamics (1). To account for systematic mismatch between the predictive model and the real system, we parameterize the centroidal momentum propagation using learnable gains:

$$\dot{h}_{\mathrm{lin}} = \theta_{hl} \odot \Big( \sum_{i \in \{L,R\}} f_{c,i} + M g \Big), \tag{14a}$$

$$\dot{h}_{\mathrm{ang}} = \theta_{ha} \odot \Big( \sum_{i \in \{L,R\}} \big( r_{c,i}(q) \times f_{c,i} + m_{c,i} \big) \Big), \tag{14b}$$

where $\theta_{hl} \in \mathbb{R}^{3}_{>0}$ and $\theta_{ha} \in \mathbb{R}^{3}_{>0}$ are included in $\theta$. This parameterization preserves the centroidal dynamics structure while absorbing systematic discrepancies caused by imperfect contact wrench realization, unmodeled compliance, and abstraction effects introduced by the low-level tracking controller.
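To illustrate how a learnable momentum gain of the kind used in (14) can be fitted by cost matching, the following toy sketch applies the measured return (10), the loss (11), its gradient (12), and the update (13) to a scalar system. Everything here is an assumption made for illustration: the scalar model $h_{i+1} = h_i + \theta a_i$, the stand-in "true" gain of 0.8, the state-only quadratic cost, $\gamma = 1$, and logged segments of length $N + 1$ chosen so that an exact match exists. The gradient is obtained by forward sensitivity propagation through the rollout, not by automatic differentiation.

```python
from typing import List, Sequence, Tuple


def simulate(s: float, actions: Sequence[float], gain: float) -> List[float]:
    """Scalar momentum-like rollout h_{i+1} = h_i + gain * a_i."""
    states = [s]
    for a in actions:
        states.append(states[-1] + gain * a)
    return states


def measured_return(costs: Sequence[float], gamma: float = 1.0) -> float:
    """Discounted return-to-go as in (10), computed from logged stage costs."""
    return sum((gamma ** i) * c for i, c in enumerate(costs))


def q_mpc_and_grad(s: float, actions: Sequence[float], theta: float) -> Tuple[float, float]:
    """Surrogate value (9) with cost x^2 and its derivative with respect to theta.

    dx tracks the sensitivity d x_i / d theta along the rollout recursion.
    """
    x, dx = s, 0.0
    q, dq = 0.0, 0.0
    for a in actions:
        q += x * x
        dq += 2.0 * x * dx
        x, dx = x + theta * a, dx + a   # model step and its sensitivity
    q += x * x                          # terminal cost T(x_N) = x_N^2
    dq += 2.0 * x * dx
    return q, dq


def train(samples, theta0: float, alpha: float, steps: int) -> float:
    """Full-batch gradient descent (13) on the cost-matching loss (11)."""
    theta = theta0
    for _ in range(steps):
        grad = 0.0
        for s, actions, q_meas in samples:
            q, dq = q_mpc_and_grad(s, actions, theta)
            grad += 2.0 * (q - q_meas) * dq          # per-sample term of (12)
        theta -= alpha * grad / len(samples)
    return theta


# Stand-in for logged closed-loop data: the "real" system realizes only 80%
# of the commanded input (hypothetical value).
TRUE_GAIN = 0.8
segments = [(0.0, [1.0, 1.0, 1.0]), (1.0, [0.5, -0.5, 1.0])]
samples = []
for s, actions in segments:
    states = simulate(s, actions, TRUE_GAIN)
    samples.append((s, actions, measured_return([x * x for x in states])))

theta_star = train(samples, theta0=1.0, alpha=5e-4, steps=200)
```

Starting from the nominal gain 1.0, the learned gain approaches 0.8 in this constructed example. In the setting of the paper the match is generally not exact, because $M \gg N$, $\gamma < 1$, and the terminal weight is learned alongside the model gains.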
The parameterized stage cost retains the decomposition in (3) as $L_{\theta} = L_{\mathrm{trac}}(\theta) + L_{\mathrm{base}}(\theta) + L_{\mathrm{com}}(\theta) + L_{\mathrm{swin}}(\theta) + L_{\mathrm{torq}}(\theta)$, and the terminal cost is defined as $T_{\theta}(x_N) = \| x_N - x^{\mathrm{ref}}_N \|^2_{Q_f(\theta)}$. Quadratic weights are parameterized through diagonal scalings as $Q_{\theta} = \mathrm{diag}(\theta_q)$, $R_{\theta} = \mathrm{diag}(\theta_r)$, $Q_{f,\theta} = \mathrm{diag}(\theta_{q_f})$, where $\theta_q, \theta_r, \theta_{q_f} \in \mathbb{R}^{12+n_j}_{\ge 0}$ are learnable vectors. The weight matrices $Q_{\mathrm{base}}(\theta)$, $Q_{\mathrm{com}}(\theta)$, $Q_{\mathrm{sw}}(\theta)$, and $Q_{\mathrm{torq}}(\theta)$ are parameterized analogously.

B. Cost-Matching Training Loop

Training is performed on-policy using the parameterized MPC controller. At iteration $j$, the parameter vector $\theta_j$ defines a closed-loop locomotion policy obtained by solving (5) in a receding-horizon manner. Trajectories are collected by executing the resulting commands through the fixed low-level tracking module within a high-fidelity simulator. From the recorded trajectories, and for each sampled index $k$, we compute the measured return $Q^{\mathrm{meas}}_k$ (data length $M$) and the rollout-based surrogate $Q^{\mathrm{MPC}}_{\theta_j,k}$ (data length $N$). The parameters are then updated by minimizing the squared discrepancy between these quantities, as summarized in Algorithm 1.

Algorithm 1: On-policy Cost-Matching Training for Humanoid Locomotion.
1: Input: initial parameters $\theta_0$, discount $\gamma$, step size $\alpha$
2: for $j = 0, 1, 2, \dots$ do
3:   Data collection. Run the closed-loop controller induced by (5) with $\theta_j$ for $M$ steps through the full execution stack, and log $\mathcal{D}_j = \{(s_k, a_k, \ell_k)\}_{k=0}^{M-1}$ with $a_k := u_k$ and $\ell_k := L(s_k, a_k)$.
4:   Sample a mini-batch of indices $\mathcal{B} \subset \{0, \dots, M-1\}$.
5:   for each $k \in \mathcal{B}$ do
6:     Measured return. Compute $Q^{\mathrm{meas}}_k = \sum_{i=0}^{M-k-1} \gamma^{i} L(s_{k+i}, a_{k+i})$ via (10).
7:     MPC-side rollout. Set $x_0 := s_k$ and propagate for $i = 0, \dots, N-1$: $x_{i+1} = f_{\theta_j}(x_i, a_{k+i})$.
8:     Surrogate value. Evaluate $Q^{\mathrm{MPC}}_{\theta_j,k} := Q^{\mathrm{MPC}}_{\theta_j}(s_k, a_{k:k+N-1})$ via (9).
9:   end for
10:   Form $\mathcal{L}(\theta_j) = \frac{1}{|\mathcal{B}|} \sum_{k \in \mathcal{B}} \big( Q^{\mathrm{MPC}}_{\theta_j,k} - Q^{\mathrm{meas}}_k \big)^2$.
11:   Update $\theta_{j+1} \leftarrow \theta_j - \alpha \nabla_{\theta} \mathcal{L}(\theta_j)$.
12: end for

C. Implications for Locomotion Control

For bipedal locomotion, this instantiation yields several practical benefits.

(i) Mitigating short-horizon bias. By matching $Q^{\mathrm{MPC}}_{\theta}$ to long-horizon returns, the learned stage and terminal shaping terms effectively act as a tail-cost approximation, mitigating the short-horizon bias inherent in real-time humanoid MPC.

(ii) Compensating model mismatch. The learned parameters adapt both the prediction model and the objective to systematic discrepancies between the planning model and high-fidelity execution, including modeling inaccuracies and abstraction effects introduced by the tracking controller.

(iii) Robustness to disturbances. When trajectories are collected under external disturbances, the measured returns reflect the recovery behaviors of the system. Consequently, the learned MPC objective is implicitly tuned toward policies that improve disturbance rejection under the same constrained MPC structure.

(iv) Policy improvement. Although the update minimizes a value discrepancy rather than directly maximizing return, improving the fidelity of the MPC internal cost-to-go makes the receding-horizon minimizer of the surrogate objective more consistent with the true long-term performance, thereby yielding improved closed-loop policies.

(v) Training efficiency. Each update differentiates through a length-$N$ rollout without repeatedly solving the MPC optimization problem within the learning loop, resulting in a computational cost that scales linearly with $N$.

V. SIMULATION STUDIES AND ANALYSIS

This section evaluates the proposed cost-matching MPC (CM-MPC) on a commercial humanoid platform (Unitree G1) using the locomotion stack described in Section II.
In the following experiments, we compare two controllers operating under identical MPC horizon length, constraints, and tracking stack: (i) a manually tuned baseline using the initial parameters $\theta_0$, and (ii) the learned CM-MPC controller using the converged parameters $\theta^{\star}$.

A. Cost-Matching Diagnostics

The learning objective is to reduce the discrepancy between the MPC-induced surrogate cost-to-go $Q^{\mathrm{MPC}}_{\theta}$ and the measured long-horizon return $Q^{\mathrm{meas}}$ collected from closed-loop executions. Figure 2 reports the validation mean squared error (MSE) of this value mismatch across training rounds. The mismatch decreases rapidly during the early training iterations and gradually converges as learning progresses.

The block-wise evolution of $\theta$ provides insights into the mechanisms underlying this improvement. In particular, the terminal cost weights ($Q_f$) increase significantly throughout training, effectively acting as an implicit tail-cost approximation that compensates for the inherent myopia of the finite-horizon MPC formulation. Concurrently, torque-regularization parameters increase, which suppresses impulsive control actions, while swing-foot tracking weights decrease slightly. This reshaping of the cost landscape prevents overly strict kinematic tracking and instead allows the solver greater flexibility to prioritize whole-body balance and compliant behavior under uncertainties.

Fig. 2: Closed-loop validation of the value mismatch MSE of $Q^{\mathrm{MPC}}_{\theta} - Q^{\mathrm{meas}}$ across training rounds (shaded region: 10–90% across seeds). At each training round, 50 trajectories are collected (600 steps per trajectory), and the cost-matching objective is optimized using 2000 gradient updates with discount factor $\gamma = 0.985$. The inset summarizes the normalized block-wise evolution of $\theta$, illustrating how learning reshapes the MPC objective.

Figure 3 provides complementary diagnostics at the sample level.
The figure shows the density of $Q^{\mathrm{MPC}}_{\theta}$ versus $Q^{\mathrm{meas}}$ before training and after convergence. After learning, the root-mean-square error (RMSE) between the two values decreases and the residual distribution tightens, indicating improved consistency between the MPC surrogate value and the measured return. Together, these diagnostics confirm that the learned parameters significantly improve the fidelity of the MPC internal cost-to-go approximation.

Fig. 3: Value-matching diagnostics on a fixed evaluation set: density plot of $Q^{\mathrm{MPC}}_{\theta}$ versus $Q^{\mathrm{meas}}$. (a) Round 0 ($\theta_0$). (b) Converged ($\theta^{\star}$).

B. Robustness Evaluation under Disturbances

To assess whether the improved surrogate value representation translates into better closed-loop behavior, we evaluate both the baseline MPC and the learned CM-MPC under a scripted disturbance benchmark applied to the Unitree G1 humanoid. As illustrated in Figure 4, the disturbance profile consists of three lateral force pulses $f_{\mathrm{ext},y} = 18$ N with duration 0.10 s applied at $t = \{12.00, 12.75, 13.55\}$ s (pulses P1, P2, and P4), and one yaw torque pulse $m_{\mathrm{ext},z} = 8$ N·m with duration 0.06 s at $t = 13.05$ s (pulse P3). This mixed force–torque sequence excites both linear and angular momentum channels without modifying the nominal reference trajectories. Consequently, differences in post-disturbance recovery can be attributed to the objective and model reshaping induced by the learned parameters $\theta$.

Fig. 4: Simulation snapshots of the humanoid during locomotion under a time-scheduled disturbance benchmark consisting of lateral force pulses $f_{\mathrm{ext},y}$ and a yaw-torque pulse $m_{\mathrm{ext},z}$.

Figure 5 shows a representative rollout comparing the baseline controller and CM-MPC. From top to bottom, the plots report the tracking error $\| x - x^{\mathrm{ref}} \|$, control effort $\| u \|$, control jitter $\| \dot{u} \|$ (discrete-time input difference), linear momentum error $\| h_{\mathrm{lin}} - h_{\mathrm{lin,ref}} \|$, and angular momentum error $\| h_{\mathrm{ang}} - h_{\mathrm{ang,ref}} \|$.
Compared with the baseline controller, CM-MPC settles earlier to the pre-disturbance error band and produces smoother corrective actions, as evidenced by reduced control effort $\lVert u \rVert$ and lower control jitter $\lVert \dot{u} \rVert$ during the recovery phase. At the same time, the momentum tracking errors do not consistently decrease: $\lVert h_{\mathrm{lin}} - h_{\mathrm{lin,ref}} \rVert$ remains broadly comparable, and $\lVert h_{\mathrm{ang}} - h_{\mathrm{ang,ref}} \rVert$ may increase during parts of the transient. This behavior reflects a deliberate trade-off induced by the learned parameters $\theta^\star$. By relaxing strict tracking penalties (e.g., reduced swing-foot tracking weights) while increasing actuation regularization (larger $R$/torque-related weights), CM-MPC allocates its limited control authority under constraints toward stabilizing whole-body motion and rejecting disturbances, rather than enforcing exact momentum reference tracking at every instant.

C. Comprehensive Statistical Analysis

To validate the observed improvements statistically, Table I summarizes results over six disturbance trials (mean ± std). The learned CM-MPC improves nominal tracking while reducing high-percentile control effort, indicating that the learned parameters $\theta$ produce a better trade-off between tracking accuracy and control smoothness under the same constrained MPC structure. More importantly, the post-push recovery metrics show consistent improvements. The Post-Push RMS decreases, while the Post-Push Peak and IAE (Integral Absolute Error) are reduced by 4.80% and 6.82%, respectively, indicating smaller overshoot and less accumulated deviation following the disturbance. The Settling Time improves by 31.25% under the same threshold-and-hold criterion, reflecting faster return to the pre-disturbance performance band. Momentum metrics remain largely comparable, with a minor trade-off in linear momentum error (−0.99%).
This observation is consistent with the qualitative analysis in Figure 5, where the learned controller prioritizes faster stabilization and smoother actuation during transient recovery rather than enforcing strict reference momentum tracking.

TABLE I: Comprehensive Evaluation of Metrics (Mean ± Std) over Six Trials.

| Metric | Baseline | CM-MPC | Impr. (%) |
|---|---|---|---|
| Overall Tracking | | | |
| RMS Error ($\lVert e \rVert_2$) | 1.022 ± 0.012 | 1.009 ± 0.016 | +1.29 |
| Peak Error ($\lVert e \rVert_\infty$) | 1.620 ± 0.021 | 1.597 ± 0.045 | +1.41 |
| Control Effort & Smoothness | | | |
| Max Effort ($\lVert u \rVert_{99\%}$) | 472.7 ± 4.3 | 447.8 ± 7.1 | +5.26 |
| Max Jitter ($\lVert \dot{u} \rVert_{99\%}$) [$\times 10^4$] | 5.66 ± 0.00 | 5.60 ± 0.03 | +1.10 |
| Momentum Analysis | | | |
| Lin. Mom. ($\lVert h_{\mathrm{lin}} \rVert_2$) | 0.468 ± 0.011 | 0.467 ± 0.011 | +0.35 |
| Ang. Mom. ($\lVert h_{\mathrm{ang}} \rVert_2$) | 0.048 ± 0.001 | 0.048 ± 0.001 | +1.18 |
| Lin. Mom. Err. ($\lVert h_{\mathrm{lin}} - h^{\mathrm{ref}}_{\mathrm{lin}} \rVert_2$) | 0.372 ± 0.010 | 0.376 ± 0.010 | -0.99 |
| Ang. Mom. Err. ($\lVert h_{\mathrm{ang}} - h^{\mathrm{ref}}_{\mathrm{ang}} \rVert_2$) | 0.048 ± 0.001 | 0.048 ± 0.001 | +1.18 |
| Post-Push Recovery | | | |
| Post-Push RMS ($\lVert e_{\mathrm{post}} \rVert_2$) | 1.026 ± 0.010 | 1.014 ± 0.015 | +1.17 |
| Post-Push Peak ($\lVert e_{\mathrm{post}} \rVert_\infty$) | 1.539 ± 0.030 | 1.465 ± 0.020 | +4.80 |
| Integral Abs. Err. (IAE) | 0.547 ± 0.033 | 0.510 ± 0.030 | +6.82 |
| Settling Time ($t_s$) | 0.053 ± 0.010 | 0.037 ± 0.010 | +31.25 |

Post-push recovery metrics. Let $e_{\mathrm{post}}(t) \triangleq \lVert x(t) - x_{\mathrm{ref}}(t) \rVert$, evaluated over the post-push window $t \in [t_{\mathrm{push\,end}},\, t_{\mathrm{push\,end}} + T_{\mathrm{post}}]$. Define Post-Push RMS $= \lVert e_{\mathrm{post}} \rVert_2$, Post-Push Peak $= \lVert e_{\mathrm{post}} \rVert_\infty$, and IAE $= \int \max(e_{\mathrm{post}} - \bar{e}_{\mathrm{pre}},\, 0)\, dt$, where $\bar{e}_{\mathrm{pre}}$ is the pre-push baseline. Settling Time $t_s$ is the first time after $t_{\mathrm{push\,end}}$ that $e_{\mathrm{post}}(t)$ remains within a pre-push threshold for a fixed hold duration.

Overall, the simulation results demonstrate that the cost-matching procedure effectively minimizes the discrepancy between $Q^{\mathrm{MPC}}_\theta$ and $Q^{\mathrm{meas}}$ throughout training.
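The post-push recovery metrics defined in the table note can be computed from a sampled error signal along the following lines. This is a minimal sketch under stated assumptions: uniform sampling with step `dt`, RMS taken as the root of the mean squared error over the window, rectangle-rule integration for the IAE, and settling time reported relative to the end of the push as the start of the first run of samples that stays within the threshold for `hold_steps` samples. The threshold and hold length are hypothetical parameters, since their values are not given in the text.

```python
import math


def post_push_metrics(e_post, dt, e_pre_mean, threshold, hold_steps):
    """Recovery metrics from samples of e_post(t) = ||x(t) - x_ref(t)||.

    e_post     : error samples over the post-push window
    dt         : sampling period [s] (uniform sampling assumed)
    e_pre_mean : pre-push baseline error level
    threshold  : settle threshold derived from pre-push statistics
    hold_steps : consecutive in-band samples required to count as settled
    """
    rms = math.sqrt(sum(e * e for e in e_post) / len(e_post))
    peak = max(e_post)
    # IAE counts only the excess above the pre-push baseline.
    iae = sum(max(e - e_pre_mean, 0.0) for e in e_post) * dt

    settling_time = None  # stays None if the signal never settles
    run = 0
    for k, e in enumerate(e_post):
        run = run + 1 if e <= threshold else 0
        if run >= hold_steps:
            settling_time = (k - hold_steps + 1) * dt
            break

    return {"rms": rms, "peak": peak, "iae": iae,
            "settling_time": settling_time}
```

Returning `None` when the hold condition is never met keeps an unsettled trial distinguishable from one that settles immediately.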
The optimized parameters $\theta^\star$ lead to improved disturbance rejection characterized by faster recovery, reduced control effort, and smoother actuation, while simultaneously compensating for modeling discrepancies in the simplified centroidal dynamics through data-driven adaptation.

Fig. 5: Representative closed-loop response under the disturbance benchmark. Left: full-horizon signals. Right: zoom around the push interval. The gray bands indicate push windows. The dashed horizontal line indicates the settle threshold computed from pre-disturbance statistics, and the “settled” marker indicates the first time the error remains below this threshold.

VI. CONCLUSION

This paper presents a cost-matching framework for learning the parameters of a constrained centroidal MPC controller for humanoid locomotion. By matching the MPC surrogate cost-to-go to measured long-horizon returns from closed-loop rollouts, the method avoids repeated MPC solves in the learning loop. Simulations show improved value consistency and enhanced robustness to model mismatch and external disturbances, suggesting that cost matching is a practical and computationally efficient way to shape long-horizon locomotion behavior while retaining standard constrained MPC deployment.

REFERENCES

[1] W. Li, G. Yang, J. Wu, C. Pan, L. Sheng, and Q. Zhang, “A comprehensive review on humanoid robots: perspectives from academia and industry,” Engineering Information Technology & Electronic Engineering, vol. 27, no. 2, p. 250105, 2026.
[2] Y.-M. Chen, J. Hu, and M. Posa, “Beyond inverted pendulums: Task-optimal simple models of legged locomotion,” IEEE Transactions on Robotics, vol. 40, pp. 2582–2601, 2024.
[3] S. Katayama, M. Murooka, and Y. Tazaki, “Model predictive control of legged and humanoid robots: models and algorithms,” Advanced Robotics, vol. 37, no. 5, pp. 298–315, 2023.
[4] M. Y. Galliker, N. Csomay-Shanklin, R. Grandia, A. J. Taylor, F. Farshidian, M.
Hutter, and A. D. Ames, “Planar bipedal locomotion with nonlinear model predictive control: Online gait generation using whole-body dynamics,” in 2022 IEEE-RAS 21st International Conference on Humanoid Robots (Humanoids), 2022, pp. 622–629.
[5] Z. Gu, J. Li, W. Shen, W. Yu, Z. Xie, S. McCrory, X. Cheng, A. Shamsah, R. Griffin, C. K. Liu et al., “Humanoid locomotion and manipulation: Current progress and challenges in control, planning, and learning,” arXiv preprint, 2025.
[6] K. Zhang, Y. Yin, J. Wu, L. Jiang, and Y.-a. Yao, “Gravity-compensated model predictive control and whole-body optimization for humanoid locomotion,” Journal of Mechanisms and Robotics, vol. 18, no. 3, p. 031007, 2026.
[7] J. Carpentier and P.-B. Wieber, “Recent progress in legged robots locomotion control,” Current Robotics Reports, vol. 2, no. 3, pp. 231–238, 2021.
[8] J. Peters, S. Vijayakumar, and S. Schaal, “Reinforcement learning for humanoid robotics,” in Proceedings of the third IEEE-RAS international conference on humanoid robots, 2003, pp. 1–20.
[9] T. Haarnoja, B. Moran, G. Lever, S. H. Huang, D. Tirumala, J. Humplik, M. Wulfmeier, S. Tunyasuvunakool, N. Y. Siegel, R. Hafner et al., “Learning agile soccer skills for a bipedal robot with deep reinforcement learning,” Science Robotics, vol. 9, no. 89, p. eadi8022, 2024.
[10] Y. Li, J. Li, W. Fu, and Y. Wu, “Learning agile bipedal motions on a quadrupedal robot,” in 2024 IEEE International Conference on Robotics and Automation (ICRA), 2024, pp. 9735–9742.
[11] H. Zhang, L. He, and D. Wang, “Deep reinforcement learning for real-world quadrupedal locomotion: a comprehensive review,” Intelligence & Robotics, vol. 2, no. 3, pp. 275–297, 2022.
[12] A. S. Chen and K. G. Vamvoudakis, “Robot learning optimal control via an adaptive critic reservoir,” in 2025 IEEE 64th Conference on Decision and Control (CDC), 2025, pp. 3842–3847.
[13] W. Gao, Y. Wang, and K. G.
Vamvoudakis, “Output-feedback learning-based adaptive optimal control of nonlinear systems,” Automatica, vol. 187, p. 112875, 2026.
[14] Z. Zhang, X. Chang, H. Ma, H. An, and L. Lang, “Model predictive control of quadruped robot based on reinforcement learning,” Applied Sciences, vol. 13, no. 1, p. 154, 2022.
[15] K. G. Vamvoudakis, Y. Wan, F. L. Lewis, and D. Cansever, Handbook of reinforcement learning and control. Springer, 2021.
[16] S. Gros and M. Zanon, “Data-driven economic NMPC using reinforcement learning,” IEEE Transactions on Automatic Control, vol. 65, no. 2, pp. 636–648, 2019.
[17] M. Zanon and S. Gros, “Safe reinforcement learning using robust MPC,” IEEE Transactions on Automatic Control, vol. 66, no. 8, pp. 3638–3652, 2020.
[18] W. Cai, A. B. Kordabad, and S. Gros, “Energy management in residential microgrid using model predictive control-based reinforcement learning and shapley value,” Engineering Applications of Artificial Intelligence, vol. 119, p. 105793, 2023.
[19] W. Cai, A. B. Kordabad, H. N. Esfahani, A. M. Lekkas, and S. Gros, “MPC-based reinforcement learning for a simplified freight mission of autonomous surface vehicles,” in 2021 60th IEEE Conference on Decision and Control (CDC), 2021, pp. 2990–2995.
[20] W. Cai, S. Sawant, D. Reinhardt, S. Rastegarpour, and S. Gros, “A learning-based model predictive control strategy for home energy management systems,” IEEE Access, vol. 11, pp. 145264–145280, 2023.
[21] J.-P. Sleiman, F. Farshidian, M. V. Minniti, and M. Hutter, “A unified MPC framework for whole-body dynamic locomotion and manipulation,” IEEE Robotics and Automation Letters, vol. 6, no. 3, pp. 4688–4695, 2021.
[22] F. Farshidian et al., “OCS2: An open source library for optimal control of switched systems,” [Online]. Available: https://github.com/leggedrobotics/ocs2.
[23] J. Carpentier, G. Saurel, G. Buondonno, J. Mirabel, F. Lamiraux, O. Stasse, and N.
Mansard, “The Pinocchio C++ library – A fast and flexible implementation of rigid body dynamics algorithms and their analytical derivatives,” in IEEE International Symposium on System Integrations (SII), 2019.
[24] D. E. Orin, A. Goswami, and S.-H. Lee, “Centroidal dynamics of a humanoid robot,” Autonomous robots, vol. 35, no. 2, pp. 161–176, 2013.
[25] Y. Nesterov, Introductory lectures on convex optimization: A basic course. Springer Science & Business Media, 2013, vol. 87.
