EpiPersona: Persona Projection and Episode Coupling for Pluralistic Preference Modeling

Pluralistic alignment is essential for adapting large language models (LLMs) to the diverse preferences of individuals and minority groups. However, existing approaches often mix stable personal traits with episode-specific factors, limiting their ab…

Authors: Yujie Zhang, Weikang Yuan, Zhuoren Jiang

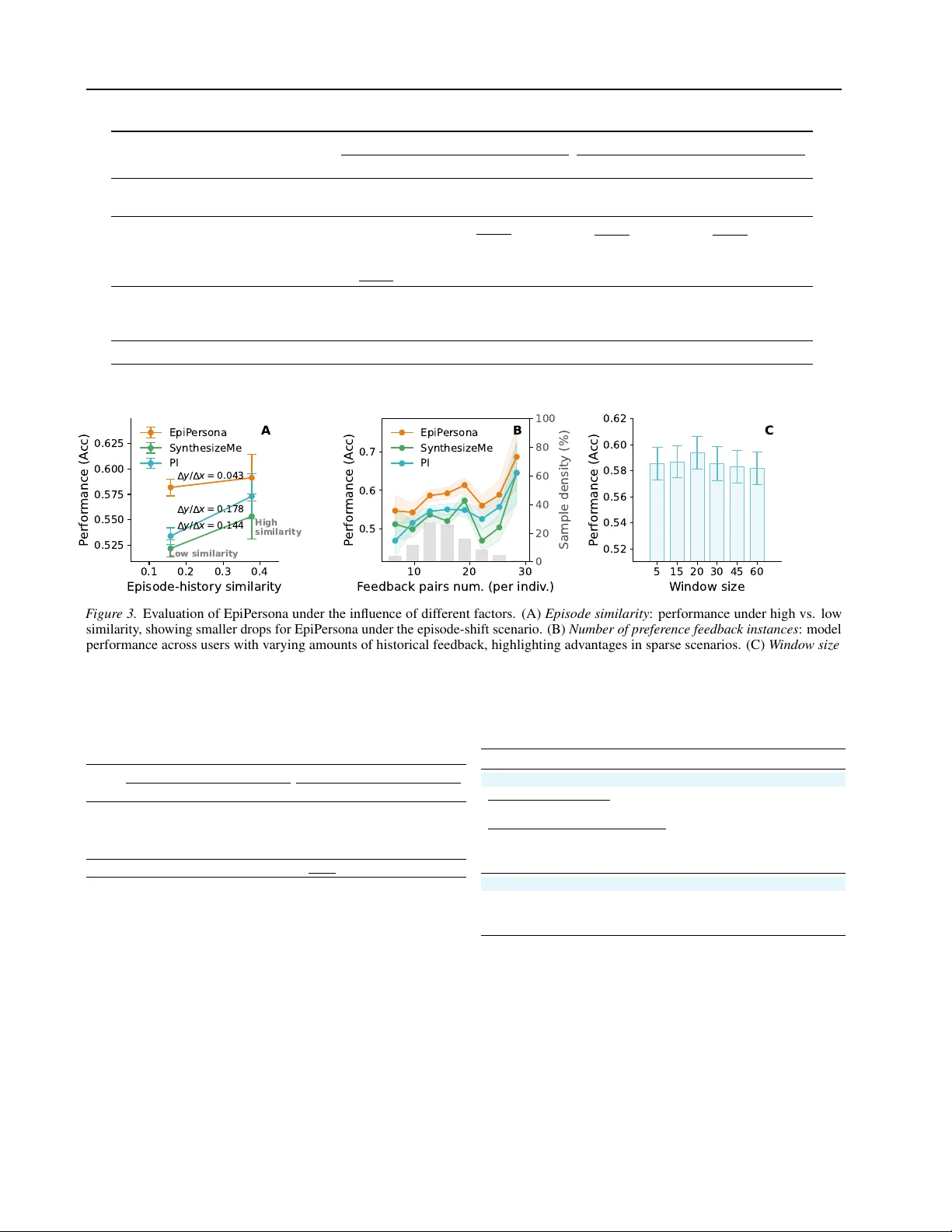

EpiP ersona: P ersona Projection and Episode Coupling f or Pluralistic Pre ference Modeling Y ujie Zhang 1 W eikang Y uan 1 Zhuoren Jiang 1 Pengwei Y an 1 Abstract Pluralistic alignment is essential for adapting large language models (LLMs) to the diverse preferences of individuals and minority groups. Howe ver , existing approaches often mix stable personal traits with episode-specific factors, lim- iting their ability to generalize across episodes. T o address this challenge, we introduce EpiPer - sona , a framew ork for explicit persona–episode coupling . EpiPersona first projects noisy pref- erence feedback into a lo w-dimensional persona space, where similar personas are aggregated into shared discrete codes. This process separates en- during personal characteristics from situational signals without relying on predefined preference dimensions. The inferred persona representation is then coupled with the current episode, enabling episode-aware preference prediction. Extensi ve experiments show that EpiPersona consistently outperforms the baselines. It achie ves notable per - formance gains in hard episodic-shift scenarios, while remaining ef fecti ve with sparse preference data. 1. Introduction Aligning artificial intelligence with human preferences has been a crucial point of both theoretical and applied research for AI systems ( Kirk et al. , 2024a ; Zhang et al. , 2024 ; Zhu et al. , 2025 ; Guan et al. , 2025 ; Xu et al. , 2025 ). Howe ver , most existing approaches treat human preferences as uni- form, ov erlooking the heterogeneity of human preferences ( Li et al. , 2025 ; Oh et al. , 2025 ). There is an increasing need for systems that can meet the wide range of social and moral v alues found in real societies ( Zheng et al. , 2023 ; Kirk et al. , 2024b ; Chen et al. , 2025 ). Addressing the preferences of individuals and minority groups is therefore essential, making pluralistic alignment ( Poddar et al. , 2024 ; Chen 1 Zhejiang Uni versity . Correspondence to: Zhuoren Jiang < jiangzhuoren@zju.edu.cn > . Pr eprint. Mar ch 31, 2026. et al. , 2025 ; Ryan et al. , 2025 ) a vital step to ward b uilding human-centered AI systems. As large language models increasingly demonstrate the po- tential for mentalistic capabilities ( Xie et al. , 2024 ; Cross et al. , 2025 ; Riemer et al. , 2025 ), there is a gro wing demand for them to understand and adapt to individuals’ preferences ( Hagendorff , 2024 ; Sorensen et al. , 2025 ). Existing studies hav e sought the pairwise comparison approach (e.g., ‘Which answer do you prefer?’) to identify personalized preferences due to its user -friendliness ( Zheng et al. , 2023 ; Kirk et al. , 2024b ; Ryan et al. , 2025 ; Balepur et al. , 2025 ; Li et al. , 2025 ; Poddar et al. , 2024 ). In terms of preference modeling, ap- proaches can be categorized into non-parametric modeling and parametric modeling. Non-parametric modeling lev er- ages historical retriev al ( Zollo et al. , 2024 ) or text-based preference summarization ( Balepur et al. , 2025 ; Ryan et al. , 2025 ) to infer the personalized preference. While intuiti ve, this approach is less effecti ve at higher-le vel abstractions and ef ficient compression as preferences are particularly im- plicit and complex ( Jang et al. , 2023 ; Balepur et al. , 2025 ). P arametric modeling compresses long-term interaction his- tories into a semantic space, such as predefined preference dimensions ( Oh et al. , 2025 ; Li et al. , 2025 ) (e.g., harm- lessness, helpfulness, and humor) or context compression ( Zhao et al. , 2023b ; Poddar et al. , 2024 ) for downstream tasks such as pluralistic re ward learning ( Bradle y & T erry , 1952 ). Howe ver , the unique challenges remain unsolved. T wo interconnected challenges are highlighted: F irst , existing methods mix stable personal traits with tempo- rary episodic signals in the representation, failing to separate stable traits from dynamic episodic influences. As a result, static traits are not disentangled, limiting cross-episode gen- eralization. Second , pre v ailing methods rely on predefined dimensions to model preferences. Human preference is bet- ter understood as a latent semantic space to be disco vered ( Poddar et al. , 2024 ), while predefined dimensions constrain its richness and introduce priori biases. T o address these challenges, we propose persona projec- tion , which disentangles stable traits (i.e., the persona) from episode-specific preference feedback. It is motiv ated by the insight that indi viduals’ preferences are dri ven by their la- tent and stable personas. For example (see Figure 1 ), a user 1 A in a work decision scenario and user B in an online shop- ping scenario may both prefer concise and direct responses; their feedback thus reflects the same ef ficiency-driven per - sona. Formally , our method projects individuals’ preference feedback from the high-dimensional token space X ⊂ R D into a lo w-dimensional persona space Z p ⊂ R d ( d ≪ D ), enabling feedback x ∈ X with similar latent personas to be aggregated into shared persona codes z p ∈ Z p . In other words, we learn a mapping x 7→ z p . This mapping function con verts the originally redundant and noisy feedback into a combinatorial persona code, enabling robust generalization across individuals and episodes. Building on persona projection, we introduce EpiPersona, a method for PERSON A – EPI sode coupling. It constructs persona codes automatically without relying on predefined dimensions by introducing parameterized abductive reason- ing and VQ-based mapping ( V an Den Oord et al. , 2017 ). After disentangling personas, we further couple them with the current episode, capturing both stable persona and situa- tional factors, enabling robust prediction of cross-episode preferences. T o thoroughly assess EpiPersona’ s ability to capture indi- vidual pluralistic preferences, we conducted experiments across tasks, including LLM-as-a-judge and pluralistic re- ward learning. In the unseen-user setting, our method con- sistently outperforms the baselines, achie ving a notable im- prov ement in challenging episodic-shift scenarios. A closer look at EpiPersona rev eals that: a) In challenging episodic- shift scenarios, EpiPersona sho ws more rob ust generaliza- tion; its performance degrades by only 0.043, compared to 0.177 and 0.144 drops for SO T A baselines. b) Even when user preference data is limited, EpiPersona can accurately infer preferences from minimal information, highlighting its adaptability . 2. Related work Pluralistic alignment adapts the preferences of div erse groups, especially minority populations, thereby maximiz- ing the collectiv e benefits ( Zollo et al. , 2024 ) and promoting fairness across heterogeneous user communities ( Kirk et al. , 2024b ; a ). Studies show that even high-performing mod- els struggle to satisfy preferences across dif ferent regions, cultures, and topics ( Kirk et al. , 2024b ). These challenges underscore the diversity and complexity of human prefer- ences. Due to the sparseness of indi vidual preference data ( Oh et al. , 2025 ; Li et al. , 2025 ), existing approaches use human feedback in the form of pairwise preference compar- isons ( Zheng et al. , 2023 ; Poddar et al. , 2024 ; Ryan et al. , 2025 ; Balepur et al. , 2025 ; Li et al. , 2025 ; Kirk et al. , 2024b ). Prior studies employ the following methods to model plu- ralistic preferences: Non-parametric modeling. Pr efer ence summarization (text-based). Preference dimensions are often defined as such attributes as helpfulness, honesty , and harmlessness ( Askell et al. , 2021 ). Howe ver , simplified dimensions may not be able to capture the diversity of human preferences ( Kirk et al. , 2024a ). Studies ( Ryan et al. , 2025 ; Balepur et al. , 2025 ) utilize preference attribution methods to ex- tract individual preferences. Among them, SynthesizeMe ( Ryan et al. , 2025 ) uses bootstrap reasoning on limited user interaction data and verifies inferred preferences on the val- idation set to synthesize the personas. PI ( Balepur et al. , 2025 ) le verages abducti ve reasoning on preference data to infer users’ underlying needs and interests, and then gener - ates outputs tailored to these inferred characteristics. They can be adaptiv e to in-context learning, such as LLM-as-a- judge optimizing ( Gu et al. , 2024 ) and personal LLM ( Ryan et al. , 2025 ; Li et al. , 2025 ; Balepur et al. , 2025 ; Du et al. , 2025 ). Retrieval-augmented generation (RAG). Personal- LLM ( Zollo et al. , 2024 ) combines context learning with historical information retriev al to produce the personalized responses. In contrast, EpiPersona employs the parameter- ized modeling approach to globally and abstractly represent personalized preferences. Parametric modeling. Parametrized preferences hav e been used for personalized rew ard model training ( Zhao et al. , 2023b ; Poddar et al. , 2024 ) and personal LLM fine-tuning ( Huang et al. , 2024 ). GPO ( Zhao et al. , 2023b ) adds a fe w- shot preference module on top of the base LLM and learns to predict group preference scores from historical user data. VPL ( Poddar et al. , 2024 ) compresses historical user pref- erence data into a parameterized latent distribution using the V AE module. Pr edefined prefer ence dimensions. AM- PLe ( Oh et al. , 2025 ) proposed a multi-dimensional rew ard function. It employs a posterior update method to model user preferences in a multi-dimensional manner and pre- dict preference scores. Additionally , existing research ( Li et al. , 2025 ) constructs a comprehensiv e preference space from users’ behavioral and descriptive information, such as psychological models of fundamental needs. In con- trast, EpiPersona disentangles stable personas from episode- specific preference feedback and does not require predefined preference dimensions. 3. Problem Setting Let U denote a population of indi viduals and E the space of episodes. Each individual u ∈ U may participate in multiple episodes e ∈ E , where each episode is associated with a query q e . For a gi ven episode e , the system presents a set of candidate responses { τ ( i ) e } K i =1 . W e assume that preferences are generated by an underlying, unobserved rew ard function: R u ( τ e ) → R , (1) 2 E p i so d e 1: I need to de c i de toda y w he the r to a do pt a ne w surgi c a l tool . G i v e m e a qui c k rec om m en da ti on . E p i so d e 1: I w an t to b u y a d raw i n g s o ftw are th at I c an s tar t u s i n g q u i ckl y. A n y re c o mmen d ati o n s ? R espo n se 1: G o ahe ad i f … ; o th e rwis e , d e l ay an d ru n a s mall tr i al fir s t. R e spo n se 2: T h e re are many o p ti o n s . Y o u c o u l d c o mp are th e fo l l o w i n g 10 s o ftw are … R e sp o n se 1: Ch o o s e X o r Y , s i n c e th e y’ re u s e r - fri e n d l y, fu l l y fe atu r e d ... R e spo n se 2: T h i s to o l h as man y featu re s . … S tu d y e ac h mo d u l e b e fo re d e c i d i n g A B Preferenc e feedb a c k s pa c e D X d p × N A × N B U p d a te d b y x x Pe rs o na s pac e L a t en t p er s o n a La tent Pe rs o na mo de l ing u Z ( 6 ) p z ( 6 ) ( 4 ) ( 1 ) ( , , , ) ... p p p z z z ( 5 ) ( 6 ) ( 7 ) ( , , , ) ... p p p z z z P er s o n a co d e EpiPers o n a - B E piP e rs o na - A Pers o n a - epis o de c o upl in g Perso n a p ro j ec t io n u Z : u Z : u Z u Z e Enc D ec E p iso d e - sp ecifi c Pre fere n ce Re w a rd sc o re e : ep iso d e : res p o n s e e L a t en t p er s o n a F igure 1. W e propose EpiPersona , which first maps the preference feedback space X into a persona space Z p . Based on an individual’ s historical preference information, we model the individual’ s latent persona Z u . W e further introduce two variants of EpiPersona that couple persona Z u with episode context e for preference prediction: EpiPersona-A , which is tailored to inferring indi viduals’ episode-specific preferences in a plug-and-play manner , and EpiP ersona-B , which is designed for pluralistic rew ard learning. which reflects the utility assigned to response τ e by individ- ual u under episode e . Observed feedback. The reward function R u is not di- rectly observ able. Instead, for each indi vidual u and episode e , we observe noisy pairwise preference feedback of the form: x = ( τ ( i ) e , τ ( j ) e , y e ) , (2) where y e ∈ { 0 , 1 } . y e = 1 indicates τ ( i ) e is preferred ov er τ ( j ) e in episode e . W e denote the collection of observations for individual u as: X u = { ( τ ( i ) e , τ ( j ) e , y e ) | e ∈ E u } . (3) Latent persona assumption. W e define the episode- related response τ e and a latent persona Z u of individual u . W e assume that the underlying rew ard function can be modeled as: R u ( τ e ) ˆ = R ( Z u , τ e ) ∈ R , (4) where R ( · , · ) is an estimated re ward function. The latent per - sona Z u is unobserved b ut can be inferred from observ able features X u : Z u ∼ p ( Z u | X u ) . (5) 4. Method W e present an overvie w of EpiPersona in Figure 1 . First, we disentangle indi viduals’ stable persona traits from their pref- erence feedback via persona projection, mapping feedback with similar personas into a shared latent space (Section 4.1 ). Subsequently , the model couples the inferred latent persona with the specific episodes to enable contextualized prefer- ence prediction (Section 4.2 ). The EpiPersona achiev es persona disentanglement through parameterized abducti ve reasoning (Section 4.3 ) and vector-quantized mapping (Sec- tion 4.4 ), automatically constructing a structured persona space without requiring predefined preference dimensions. 4.1. Persona pr ojection Formulation and assumptions. W e assume that each observed preference feedback x ∈ X u from individual u is jointly determined by the episode-specific responses τ e and a latent, stable persona Z u . Formally , the preference formation process can be expressed as x ∼ p ( · | Z u , τ ( i ) e , τ ( j ) e ) . (6) Latent persona modeling. Specifically , we model an in- dividual’ s persona Z u as a latent variable that potentially follows a multimodal distrib ution, reflecting that a single in- dividual may e xhibit multiple persona patterns ( Chen et al. , 2025 ). Concretely , we represent the latent persona of indi- vidual u as a set of persona codes: Z u = { z p | z p ∈ Z p } (7) where each z p corresponds to a stable persona code. Cru- cially , each persona code z p lies in a shar ed persona space Z p , which is common to all individuals. T o further represent the persona code z p , we le verage the indi vidual’ s feedback x ∈ X u . Specifically , we map feedback instance x into the persona code z p ∈ Z p via a persona projection function g : g : x 7→ z p , z p ∈ Z p ⊂ R d , (8) 3 where d ≪ D and D is the dimension of the original feed- back x ∈ R D . It learns to automatically construct the persona space. In this way , Z u = { g ( x ) | x ∈ X u } ⊆ Z p captures the sta- ble yet div erse persona traits of an individual, while ground- ing them in the shared persona space Z p . This shared space Z p is also jointly updated from feedback across all individ- uals, enabling it to retain collectiv e uni versality . W e realize the mapping g through parameterized abductive r easoning (Section 4.3 ) and VQ-based mapping (Section 4.4 ). The ov erall persona projection mapping function is illustrated in Figure 2 . Objective of persona pr ojection. In summary , the objec- tiv e of persona projection is to map episode-specific feed- back x into a low-dimensional persona code z p ∈ Z u . This process aim to disentangle stable persona from episodic variability . 4.2. Persona-episode coupling Based on persona projection, we couple the user persona Z u with episode-related context to model an indi vidual’ s prefer- ences. This process captures how an individual’ s persona may be acti v ated in an episode. W e propose two modeling variants tailored to dif ferent downstream scenarios: EpiPersona-A: This method generates a natural language description of the indi vidual preference under the episode e . Note that the input only includes the latent persona Z u (based on historical preference) and episode e . It allows the generated Pref e to be used in a plug-and-play manner , such as judging the individuals’ potential choices. Formally , the process is defined as: Pref e = g ψ ( Z u , e ) (9) where g ψ is a transformer-based language model trained with an NTP ( Radford et al. , 2018 ) objective. The output follows a JSON format containing ke ys such as persona , value , identification , intent , style , etc. Detailed comparison of EpiPersona-A with related methods is in Appendix A.6 . EpiPersona-B: The reward is modeled via a Bradley-T erry preference comparison ( Bradley & T erry , 1952 ) based on latent persona Z u : R θ ( Z u , τ e ) = W ⊤ ϕ h θ ( Z u , τ e ) (10) where h θ is an encoder producing episode-persona coupled representation, ϕ is an optional nonlinear transformation, W maps the representation to a scalar reward. More detailed model architecture is sho wn as Figure 5 in the Appendix. For the inference algorithm, see the Alg. 1 and 2 in the Appendix. 4.3. Parameterized abductiv e reasoning T o realize the persona projection, we approach this using ab- ductive r easoning ( Peirce , 1934 ; Balepur et al. , 2025 ; Ryan et al. , 2025 ), which seeks the plausible persona for observed data. Building on this idea, we introduce parameterized ab- ductiv e reasoning, which estimates an individual’ s persona by comparing choices. The estimated persona r is repre- sented as a learnable v ector and does not require the LLM to generate a human-readable reasoning trace. Formally , λ ( P , τ ( i ) e , τ ( j ) e , y e ) 7→ r , (11) where P denotes the prompt that elicits the model to infer the persona underlying the user’ s choice, λ is the LLM- based encoder . The estimated persona r captures the poten- tial rationale behind why the indi vidual prefers one response ov er another . At this stage, r is still instance-le vel and not shared across users. … … C o m p r e s s e d W i n d o w s VQ - b a s e d m a p p i n g … i n jec t P a r a m et e r i z ed a b d u c t i v e r ea so n i n g Pe r s o n a s p ac e d p Pe r s o n a c o d e ( ) ( ) { 1 , , } 2 a r g m in wm m P p wz − ‖ ‖ u Z L a t en t p er s o n a ( ) ( ) ( , , , ) ij e e e y F igure 2. Overview of the persona projection mapping. 4.4. VQ-based mapping Building on parameterized abductiv e reasoning, we further map the estimated persona r into a shared persona space using a VQ-based mapping, thereby capturing a shared insight. The procedure is as follo ws: V ariable persona compr essed window . Let the estimated persona r ∈ R d be injected into compressed windows as an embedding. The resulting window representation is then projected into a shared representation space via f θ ( · ) , yield- ing w ( w ) = f θ ( r ) . Each window w thus encapsulates a latent estimated persona deri ved from observed indi vidual feedback. Both the windo w length and the embedded vec- tors within the window are fle xibly configurable. Persona codebook. W e then map these windo ws onto a set of learnable persona code z p , without assuming the predefined dimensions. Where P denotes the codebook size. The persona space Z p is defined as: Z p = { z (1) p , z (2) p , . . . , z ( P ) p } , z p ∈ R d (12) 4 Nearest-neighbor quantization. Let the windows of u be defined as W ∈ R n × d , where n denotes the number of windows for user u . w ( w ) is quantized by selecting the nearest persona code from Z p : m ∗ ( w ) = arg min m ∈{ 1 ,...,P } ∥ w ( w ) − z ( m ) p ∥ 2 . (13) The corresponding persona code is then defined as: Z u = z ( m ∗ ( w ) ) p n w =1 . (14) Commitment loss. T o ensure the encoder output remains close to the assigned code, we utilize the commitment loss: L commit = 1 n n X w =1 ∥ w ( w ) − sg ( z ( w ) p ) ∥ 2 , (15) where sg [ · ] denotes the stop-gradient operator . Exponential moving average update. W e adopt an ex- ponential moving a verage scheme to stabilize codebook learning. For each persona code z ( m ) p , we maintain an EMA count N m and an EMA embedding E m . At each update step, we first accumulate statistics ov er the current batch: N m ← γ N m + (1 − γ ) n X w =1 I h m ∗ ( w ) = m i , (16) E m ← γ E m + (1 − γ ) n X w =1 I h m ∗ ( w ) = m i w ( w ) , (17) where γ ∈ (0 , 1) is the EMA decay rate and I [ · ] denotes the indicator function. The persona code is then updated as: z ( m ) p ← E m N m . (18) 5. Experiment 5.1. Experiment setting Baselines. W e compare EpiPersona against two cate- gories of methods: a) Non-parametric preference modeling. These methods model preferences using natural language and can be adapted to do wnstream LLMs in a plug-and-play manner: SynthesizeME ( Ryan et al. , 2025 ) synthesizes personas through LLM-based bootstrap reasoning and v al- idation; PersonalLLM ( Zollo et al. , 2024 ) retriev es simi- lar interactions as in-conte xt examples to adapt to indi vid- ual preferences; PI ( Balepur et al. , 2025 ) infers personas from preference feedback and incorporates them into con- text for alignment. b) Parametric preference modeling. GPO ( Zhao et al. , 2023b ) uses a transformer to predict pref- erences conditioned on context samples; VPL ( Poddar et al. , 2024 ) employs a V AE to model individual preferences as latent distributions; P AL ( Chen et al. , 2025 ) lev erages ideal points in a latent preference space with mixture modeling for pluralistic alignment; Bradley-T erry (BT) ( Bradle y & T erry , 1952 ) learns rew ard scores from pairwise compar- isons without personalization. Detailed descriptions of all baselines are provided in Appendix A.4 . T asks W e ev aluate on two tasks that assess different as- pects of personalized preference modeling: a) LLM-as-a- judge. Follo wing existing work ( Ryan et al. , 2025 ), this task ev aluates the ability to generate natural language de- scriptions of indi vidual preferences. Giv en an indi vidual’ s historical pairwise preference data and the current episode, the model generates the individual’ s preference in natu- ral language, which is then used by an LLM judge to pre- dict which of two candidate responses the individual w ould prefer . This task is designed for EpiPersona-A and non- parametric baselines. T o ensure robustness, we permute the order of candidate responses, ef fectiv ely doubling the testset size. b) Pluralistic reward learning. This task ev al- uates the ability to predict fine-grained preference scores. Giv en an individual’ s historical pairwise preference data, the current episode and a response candidate, the model pre- dicts the utility score for that response. Follo wing standard practice ( Zhao et al. , 2023a ; Chen et al. , 2025 ), we ev aluate on pairwise comparisons and report accuracy . This task is designed for EpiPersona-B and parametric baselines. See Appendix A.1 for the complete task formulation. Datasets Prism ( Kirk et al. , 2024b ) is a values & contro- versy guided human feedback dataset that links the sociode- mographics and stated preferences of 1,500 participants from 75 countries. The dataset includes pairwise compari- son feedback, where participants e valuate multiple model re- sponses in the same con versational context, enabling person- alized and context-a ware model alignment analysis. Arena ( Zheng et al. , 2023 ) is a large-scale crowdsourced bench- mark where indi viduals interact with two anon ymous LLMs simultaneously and choose their preferred response without knowing the models’ identities. By capturing individual preference signals in real con versational settings, the dataset enables ev aluation of how well models adapt to di verse individual e xpectations. Dataset setting. Notably , the dataset contains no overlapping individuals between the train and test sets. Moreover , the historical and current samples do not share ov erlapping con v ersations. This setup ensures we ev aluate on unseen individuals with unseen con versations, which aligns with real-world scenarios. The distribution and characteristics of the dataset are shown in Appendix A.3 . 5 Implementation setup Fine-tuning is performed using the LoRA method applied to the main linear layers. The VQ codebook is initialized using K-means, and the commit loss weight is set to 0.2. λ is consistent with the finetuned backbone model used. Additional settings for EpiPersona- A: For do wnstream evaluati on (LLM-as-a-Judge), LLMs including GPT -OSS-120B and Llama-3.3-70B-Instruct are employed. The backbone model used is Llama-3.1-8B. Ad- ditional settings f or EpiPersona-B: The backbone model used is Llama-3.1-8B and Llama-3.2-3B. Additional imple- mentation details are provided in Appendix A.5 . 5.2. Results W e ev aluate EpiPersona from tw o aspects: 1) Performance val idation of EpiP ersona-A and EpiPersona-B , and 2) Con- tribution of each component to preference modeling. EpiPersona-A impr oves LLMs’ ability to judge person- alized preferences. As shown in T able 1 , we compare EpiPersona-A with non-parametric preference modeling approaches. Compared to the RAG-based method P ersonal- LLM , our approach achiev es performance gains, improving by approximately 3% on the Prism dataset and approxi- mately 3 . 6% on the Arena dataset. W e attribute this im- prov ement to two main limitations of RA G-based methods. F irst , retrie ving and concatenating historical interactions sig- nificantly increases the input length, which poses challenges for effecti ve context utilization by large language models. Second , it tends to focus on localized or recent individual information, potentially o verlooking indi viduals’ global and relativ ely stable preferences. In contrast, state-of-the-art methods such as SynthesizeMe and PI generate global individual personas based on histori- cal interactions. While ef fecti ve in capturing static individ- ual traits, these approaches are less capable of adapting to episode-dependent preference v ariations. Our method bal- ances contextualized preferences and static personas by pre- dicting episode-specific preferences conditioned on a latent persona. Moreover , parametric individual history modeling enables effecti ve preference compression and abstraction, improving generalization and rob ustness. EpiPersona-B impr oves perf ormance on pluralistic re- ward learning. As shown in T able 2 , we compare EpiPersona-B (ours) with parametric preference modeling methods on pluralistic re ward learning. EpiPersona-B con- sistently achie ves higher or comparable performance than other baselines, demonstrating the effecti veness of disen- tangling the persona from episode-related information via persona projection. Specifically , while VPL and P AL model latent individual information, their performance are sensi- tivity to context and model size. In contrast, EpiPersona-B shows rob ust preference prediction, achieving the highest scores on Prism with both Llama-3.2-3B and Llama-3.1-8B, and leading performance on Arena for Llama-3.1-8B. Ablation of EpiP ersona. T o v alidate the contrib ution of each component in EpiPersona, we conduct ablation stud- ies on the LLM-as-a-judge task across both datasets using EpiPersona-A. T able 3 presents the results comparing two main ablation variants. Ablation v ariant 1: T ext-based pr eference modeling (w/o modeling preference on latent persona) includes global per- sona pr ediction , which summarizes the te xt-based persona from the historical information, and episode-specific pr efer- ence pr ediction , which couples the text-based persona with the episode to predict the episode-specific preference (see prompt in Appendix A.10 .4). Ablation variant 2: Parametric prefer ence modeling represents indi vidual preferences with a latent persona Z u . T o assess the contribution of specific components, we con- ducted ablation studies by remo ving the VQ-based mapping ( w/o VQ-based mapping ) and parametric abducti ve reason- ing ( w/o param. abductive reasoning ) modules. Component-wise analysis. For ablation variant 1 (T ext- based preference modeling) , using the stable global per- sona achiev es moderate performance. Adding episode- specific preference prediction with different LLMs brings minor improv ements, suggesting that te xt-based approaches alone have limited ability to capture episode-lev el pref- erences For instances, despite using different LLMs (e.g. Llama-3.1-8B and GPT -OSS-120B) as generators and fine- tuning the models to adapt from stable personas to dynamic preferences (with distilled Llama-3.1-8B), it still fails to achiev e superior performance. For ablation variant 2 (Parametric pr eference modeling) , representing indi vidual preferences with a latent persona Z u boosts performance. Ablation studies show that remo ving the VQ-based mapping or the parametric abductiv e reason- ing module reduces accuracy , confirming that each com- ponent contributes to ov erall performance. Our full model achiev es the highest scores, indicating that parametric mod- eling with explicit latent personas enhances robustness com- pared to text-based approaches. 5.3. Analysis W e analyze episode generalization from three perspectiv es: (1) episode similarity , (2) the number of individual pref- erence feedback instances , i.e. , reflecting the data distri- bution, and (3) window size , i.e . , the number of observable preference feedback instances, treated as a hyperparameter . Analysis of episode similarity effects. Episode similar - ity refers to the similarity between an individual’ s current 6 T able 1. Comparison on LLM-as-a-judge task. V alues are percentages with standard deviations (95% CI). P R I S M A R E N A G P T - O S S - 1 2 0 B L L A M A - 3 . 3 - 7 0 B G P T - O S S - 1 2 0 B L L A M A - 3 . 3 - 7 0 B R A N D O M 5 0 % 5 0 % 5 0 % 5 0 % L L M A S A J U D G E ( B A S E M O D E L ) 5 6 . 3 0 ± 1 . 2 6 % 55 . 9 0 ± 1 . 2 2 % 6 4 . 3 6 ± 3 . 9 1 % 6 4 . 1 8 ± 3 . 9 1 % S Y N T H E S I Z E M E ( G P T- O S S - 1 2 0 B ) 5 7 . 2 4 ± 1 . 2 7 % 5 7 . 6 7 ± 1 . 2 7 % 6 4 . 8 9 ± 4 . 1 1 % 6 4 . 3 9 ± 3 . 9 3 % S Y N T H E S I Z E M E ( L L A M A - 3 . 1 - 8 B ) 5 7 . 2 7 ± 1 . 2 8 % 5 6 . 8 6 ± 1 . 2 6 % 6 3 . 8 3 ± 4 . 2 6 % 6 4 . 1 7 ± 3 . 8 5 % P I ( G P T - O S S - 1 2 0 B ) 5 4 . 0 0 ± 1 . 3 7 % 5 7 . 2 2 ± 1 . 2 8 % 6 1 . 7 2 ± 4 . 7 8 % 6 3 . 2 8 ± 3 . 9 8 % P I ( L L A M A - 3 . 1 - 8 B ) 5 7 . 4 8 ± 1 . 2 8 % 5 6 . 5 8 ± 1 . 3 6 % 6 3 . 0 6 ± 4 . 1 3 % 6 3 . 9 7 ± 3 . 9 7 % P E R S O N A L L L M ( D E M O N U M = 1 ) 5 4 . 3 9 ± 1 . 2 6 % 5 5 . 8 1 ± 1 . 2 4 % 5 9 . 7 1 ± 4 . 1 0 % 5 9 . 3 3 ± 4 . 0 9 % P E R S O N A L L L M ( D E M O N U M = 3 ) 5 6 . 2 9 ± 1 . 3 8 % 5 6 . 4 2 ± 1 . 2 5 % 6 2 . 0 1 ± 4 . 1 3 % 6 3 . 1 3 ± 3 . 8 9 % P E R S O N A L L L M ( D E M O N U M = 5 ) 5 6 . 4 6 ± 1 . 3 4 % 5 6 . 2 1 ± 1 . 2 4 % 6 2 . 4 3 ± 4 . 9 7 % 6 4 . 1 3 ± 3 . 9 2 % E P I P E R S O N A - A 5 9 . 3 8 ± 1 . 2 5 % 5 9 . 1 5 ± 1 . 3 0 % 6 6 . 0 7 ± 3 . 9 8 % 6 5 . 0 1 ± 3 . 9 0 % 0.1 0.2 0.3 0.4 Episode-history similarity 0.525 0.550 0.575 0.600 0.625 P erfor mance (A cc) y / x = 0 . 0 4 3 y / x = 0 . 1 4 4 y / x = 0 . 1 7 8 Low similarity High similarity A EpiP ersona SynthesizeMe PI 10 20 30 F eedback pairs num. (per indiv .) 0.5 0.6 0.7 P erfor mance (A cc) B EpiP ersona SynthesizeMe PI 5 15 20 30 45 60 W indow size 0.52 0.54 0.56 0.58 0.60 0.62 P erfor mance (A cc) C 0 20 40 60 80 100 Sample density (%) F igur e 3. Evaluation of EpiPersona under the influence of different factors. (A) Episode similarity : performance under high vs. low similarity , showing smaller drops for EpiPersona under the episode-shift scenario. (B) Number of prefer ence feedback instances : model performance across users with varying amounts of historical feedback, highlighting advantages in sparse scenarios. (C) W indow size : effect of the number of observ able preference feedback instances on EpiPersona. T able 2. Comparison of the pluralistic reward model learning. V alues are percentages with standard de viations (95% CI). P R I S M A R E NA L L A M A - 3 . 2 - 3 B L L A M A - 3 . 1 - 8 B L L A M A - 3 . 2 - 3 B L L A M A - 3 . 1 - 8 B G P O 5 5 . 2 6 ± 1. 2 5 % 5 6 . 4 8 ± 1. 2 4 % 5 3 . 8 7 ± 4. 1 4 % 5 6 . 6 9 ± 4. 0 5 % V P L 5 8 . 2 6 ± 1. 7 5 % 5 8 . 2 3 ± 1. 7 5 % 5 6 . 6 9 ± 5. 8 1 % 5 4 . 9 3 ± 5. 7 1 % P A L 5 6 . 8 1 ± 1. 7 5 % 5 4 . 2 3 ± 1. 7 3 % 6 0 . 5 6 ± 5. 6 3 % 5 6 . 6 9 ± 5. 8 1 % B T 5 6 . 5 8 ± 1. 4 7 % 5 7 . 1 1 ± 1. 5 5 % 5 1 . 9 4 ± 5. 3 1 % 5 4 . 0 6 ± 5. 4 2 % O U R S 5 9 . 6 0 ± 1 .7 6 % 5 8 . 5 4 ± 1. 7 6 % 59 . 5 7 ± 5. 6 7 % 57 . 4 5 ± 5. 1 2 % episode and their historical preference episodes. Lo w sim- ilarity indicates a large episodic gap and shift (i.e., an episode-shift scenario ), implying that historical episodes are less informativ e to the current sample. Episode sim- ilarity is computed using Qwen3-Embedding . As shown in Figure 3 (A), the results reveal two interesting findings. F inding 1 : EpiPersona, SynthesizeMe , and PI all perform better under high episode similarity , suggesting that similar contexts help models more accurately infer indi vidual pref- erences. This also indicates that samples in the episode-shift scenario are relativ ely hard. F inding 2 : Under lo w episode T able 3. Ablation study on EpiPersona. P R I S M A R E N A A B L A T I O N V A R I A N T 1 : T E X T - B A S E D P R E F E R E N C E M O D E L I N G Global persona pr ediction O N LY S TA B L E P E R S O NA G P T - O S S - 1 2 0 B 5 7 . 5 4 ± 1 . 1 5 % 6 4 . 0 5 ± 4 . 1 3 % Episode-specific pr efer ence pr ediction W I T H D I S T I L L E D L L A M A - 3 . 1 - 8 B 5 6 . 9 8 ± 1 . 3 8 % 6 2 . 5 2 ± 4 . 0 8 % W I T H G P T - O S S - 1 2 0 B 5 6 . 8 2 ± 1. 6 3 % 6 3 . 0 4 ± 4. 1 5 % W I T H L L A M A - 3 . 1 - 8 B 5 5 . 9 6 ± 1. 2 6 % 6 1 . 2 7 ± 4. 0 0 % A B L A T I O N V A R I A N T 2 : P AR A M E T E R - BA S E D P R E F E R E N C E M O D E L I N G W / O V Q - BA S E D M A P P I N G 5 7 . 5 6 ± 1. 1 3 % 6 2 . 6 6 ± 4. 0 1 % W / O PAR A M . A B D U C T I V E R E A S O N I N G 5 7 . 9 9 ± 1. 1 3 % 6 4 . 3 6 ± 4. 0 1 % E P I P E R S O NA ( O U R S ) 5 9 . 3 8 ± 1. 2 5 % 6 6 . 0 7 ± 3. 9 8 % similarity , our method shows a smaller performance drop, demonstrating its ability to handle unseen episodes and im- prov e generalization during inference: our model drops only 0 . 043 , compared to 0 . 178 and 0 . 144 for the other methods. In the episode-shift scenario, our method outperforms the state-of-the-art by 6% . 7 Analysis of instance number . As sho wn in Figure 3 (B), across v arying ranges of user preference counts, our method maintains consistently competitiv e performance compared to the baselines. The benefits of EpiPersona are especially apparent when user preferences are sparse. These two analyses collecti vely validate EpiPersona’ s strong generalization capability: it maintains rob ust performance under both episodic distribution shift and data scarcity , out- performing SO T A baselines in both challenging settings. Analysis of window size (observable prefer ence feed- back). As shown in Figure 3 (C), we further examined the performance of EpiPersona with different visible win- dow sizes. The results show that e v en with relativ ely small window sizes, the model maintains consistent performance. These findings suggest that EpiPersona can ef fectively uti- lize relativ ely sparse historical information. In practical applications, where user history is often short or partially missing, the model’ s ability to perform reasonably well with a small windo w size supports its applicability in such scenarios. More results are presented in Appendix A.9 . 6. Conclusion W e introduced EpiPersona, a novel method for pluralistic alignment that jointly models stable personas and dynamic episodes. By projecting individual preference feedback into a low-dimensional, shared persona space via parameterized abductiv e reasoning and vector quantization, our method effecti vely disentangles enduring personal traits from sit- uational v ariations. Extensiv e experiments on real-world personalized datasets demonstrate that EpiPersona outper - forms existing parametric and non-parametric baselines in both LLM-as-judge and pluralistic rew ard learning tasks. The result sho ws that the proposed persona-episode cou- pling can adapt to the episode-shift and sparsity scenarios. Impact Statement This paper advances pluralistic alignment in large language models by disentangling stable user traits from situational context. Our goal is to help AI systems better serve div erse populations, particularly when user data is sparse or pref- erences shift across conte xts. Rob ust preference modeling can reduce “one-size-fits-all” alignment failures and bet- ter accommodate heterogeneous values and communication needs. The proposed method provides a modeling frame- work that may support personalization research and re ward learning. As with any personalization technology , certain consider- ations warrant attention: (1) Privacy: Persona represen- tations may unintentionally encode sensitiv e attributes or enable inference of priv ate information from behavioral traces. (2) Responsible deployment: Like other prefer- ence modeling techniques, our method should be deployed thoughtfully to av oid potential misuse in manipulati ve or surveillance conte xts. T o mitigate these risks, we use publicly av ailable resear ch benchmarks (Prism and Arena) that ha ve undergone peer revie w and ethics revie w with established priv acy protec- tions. W e strictly adhere to dataset usage agreements and do not collect new user data. W e emphasize that our method is intended for scientific resear ch to advance understand- ing of pluralistic alignment, and we will release it under a restricti ve license that encourages responsible use while prev enting misuse. W e belie ve this work contributes positi vely to building more inclusiv e AI systems. As personalized alignment meth- ods continue to develop, we encourage the community to advance complementary research on pri vacy-preserving per - sonalization techniques, fairness e valuation across demo- graphic groups and episodic contexts, and best practices for responsible deployment. References Askell, A., Bai, Y ., Chen, A., Drain, D., Ganguli, D., Henighan, T . J., Jones, A., Joseph, N., Mann, B., Das- sarma, N., Elhage, N., Hatfield-Dodds, Z., Hernan- dez, D., K ernion, J., Ndousse, K., Olsson, C., Amodei, D., Brown, T . B., Clark, J., McCandlish, S., Olah, C., and Kaplan, J. A general language assistant as a laboratory for alignment. ArXiv , abs/2112.00861, 2021. URL https://api.semanticscholar. org/CorpusID:244799619 . Balepur , N., Padmakumar , V ., Y ang, F ., Feng, S., Rudinger , R., and Boyd-Graber , J. L. Whose boat does it float? im- proving personalization in preference tuning via inferred user personas. In Che, W ., Nabende, J., Shutov a, E., and Pilehvar , M. T . (eds.), Pr oceedings of the 63rd Annual Meeting of the Association for Computational Linguis- tics (V olume 1: Long P apers) , pp. 3371–3393, V ienna, Austria, July 2025. Association for Computational Lin- guistics. ISBN 979-8-89176-251-0. doi: 10.18653/v1/ 2025.acl- long.168. URL https://aclanthology. org/2025.acl- long.168/ . Bradley , R. A. and T erry , M. E. Rank analysis of incom- plete block designs: I. the method of paired comparisons. Biometrika , 39(3/4):324–345, 1952. Chen, D., Chen, Y ., Rege, A., W ang, Z., and V inayak, R. K. P AL: Sample-efficient personalized reward mod- eling for pluralistic alignment. In The Thirteenth In- ternational Confer ence on Learning Repr esentations , 8 2025. URL https://openreview.net/forum? id=1kFDrYCuSu . Cross, L., Xiang, V ., Bhatia, A., Y amins, D. L., and Haber , N. Hypothetical minds: Scaf folding theory of mind for multi-agent tasks with large language models. In The Thirteenth International Confer ence on Learning Repr e- sentations , 2025. Du, B., Y e, Z., W u, Z., Monika, J., Zhu, S., Ai, Q., Zhou, Y ., and Liu, Y . V aluesim: Generating backstories to model in- di vidual v alue systems. arXiv pr eprint arXiv:2505.23827 , 2025. Gu, J., Jiang, X., Shi, Z., T an, H., Zhai, X., Xu, C., Li, W ., Shen, Y ., Ma, S., Liu, H., et al. A survey on llm-as-a- judge. arXiv pr eprint arXiv:2411.15594 , 2024. Guan, J., W u, J., Li, J.-N., Cheng, C., and W u, W . A surve y on personalized alignment–the missing piece for large language models in real-world applications. arXiv pr eprint arXiv:2503.17003 , 2025. Hagendorff, T . Mapping the ethics of generative ai: A comprehensiv e scoping revie w . Minds and Machines , 34 (4):39, 2024. Huang, Q., Liu, X., Ko, T ., W u, B., W ang, W ., Zhang, Y ., and T ang, L. Selectiv e prompting tuning for personal- ized conv ersations with LLMs. In Ku, L.-W ., Martins, A., and Srikumar , V . (eds.), F indings of the Association for Computational Linguistics: ACL 2024 , pp. 16212– 16226, Bangkok, Thailand, August 2024. Association for Computational Linguistics. doi: 10.18653/v1/2024. findings- acl.959. URL https://aclanthology. org/2024.findings- acl.959/ . Jang, J., Kim, S., Lin, B. Y ., W ang, Y ., Hessel, J., Zettle- moyer , L., Hajishirzi, H., Choi, Y ., and Ammanabrolu, P . Personalized soups: Personalized large language model alignment via post-hoc parameter merging. arXiv pr eprint arXiv:2310.11564 , 2023. Kirk, H. R., V idgen, B., R ¨ ottger , P ., and Hale, S. A. The benefits, risks and bounds of personalizing the alignment of large language models to individuals. Natur e Machine Intelligence , 6(4):383–392, 2024a. Kirk, H. R., Whitefield, A., Rottger , P ., Bean, A. M., Mar - gatina, K., Mosquera-Gomez, R., Ciro, J., Bartolo, M., W illiams, A., He, H., et al. The prism alignment dataset: What participatory , representativ e and indi vidualised hu- man feedback rev eals about the subjectiv e and multi- cultural alignment of lar ge language models. Advances in Neural Information Pr ocessing Systems , 37:105236– 105344, 2024b. Li, J.-N., Guan, J., W u, S., W u, W ., and Y an, R. From 1,000,000 users to ev ery user: Scaling up personal- ized preference for user -lev el alignment. arXiv pr eprint arXiv:2503.15463 , 2025. Oh, M., Lee, S., and Ok, J. Comparison-based active prefer - ence learning for multi-dimensional personalization. In Che, W ., Nabende, J., Shutova, E., and Pilehvar , M. T . (eds.), Pr oceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (V olume 1: Long P apers) , pp. 33145–33166, V ienna, Austria, July 2025. Association for Computational Linguistics. ISBN 979-8-89176-251-0. doi: 10.18653/v1/2025.acl- long. 1590. URL https://aclanthology.org/2025. acl- long.1590/ . Peirce, C. S. Collected papers of charles sanders peirce , volume 5. Harv ard Uni versity Press, 1934. Poddar , S., W an, Y ., Ivison, H., Gupta, A., and Jaques, N. Personalizing reinforcement learning from human feedback with variational preference learning. Advances in Neural Information Pr ocessing Systems , 37:52516– 52544, 2024. Radford, A., Narasimhan, K., Salimans, T ., Sutskev er , I., et al. Improving language understanding by generativ e pre-training. 2018. Riemer , M., Ashktorab, Z., Bouneffouf, D., Das, P ., Liu, M., W eisz, J. D., and Campbell, M. Position: Theory of mind benchmarks are broken for lar ge language models. In F orty-second International Confer ence on Machine Learning P osition P aper T rac k , 2025. Ryan, M. J., Shaikh, O., Bhagirath, A., Frees, D., Held, W ., and Y ang, D. SynthesizeMe! inducing persona-guided prompts for personalized rew ard models in LLMs. In Che, W ., Nabende, J., Shutova, E., and Pilehvar , M. T . (eds.), Pr oceedings of the 63rd Annual Meeting of the Association for Computational Linguistics (V olume 1: Long P apers) , pp. 8045–8078, V ienna, Austria, July 2025. Association for Computational Linguistics. ISBN 979-8-89176-251-0. doi: 10.18653/v1/2025.acl- long. 397. URL https://aclanthology.org/2025. acl- long.397/ . Sorensen, T ., Mishra, P ., Patel, R., T essler , M. H., Bakker , M. A., Ev ans, G., Gabriel, I., Goodman, N., and Rieser , V . V alue profiles for encoding human v ariation. In Pr o- ceedings of the 2025 Confer ence on Empirical Methods in Natural Languag e Pr ocessing , pp. 2047–2095, 2025. V an Den Oord, A., V inyals, O., et al. Neural discrete rep- resentation learning. Advances in neural information pr ocessing systems , 30, 2017. 9 Xie, C., Chen, C., Jia, F ., Y e, Z., Lai, S., Shu, K., Gu, J., Bibi, A., Hu, Z., Jurgens, D., et al. Can large language model agents simulate human trust behavior? Advances in neural information pr ocessing systems , 37:15674–15729, 2024. Xu, Y ., Zhang, J., Salemi, A., Hu, X., W ang, W ., Feng, F ., Zamani, H., He, X., and Chua, T .-S. Personalized generation in lar ge model era: A survey . arXiv pr eprint arXiv:2503.02614 , 2025. Zhang, Z., Rossi, R. A., Kveton, B., Shao, Y ., Y ang, D., Zamani, H., Dernoncourt, F ., Barrow , J., Y u, T ., Kim, S., et al. Personalization of lar ge language models: A survey . arXiv pr eprint arXiv:2411.00027 , 2024. Zhao, C., Zhao, H., He, M., Zhang, J., and Fan, J. Cross- domain recommendation via user interest alignment. In Pr oceedings of the ACM web confer ence 2023 , pp. 887– 896, 2023a. Zhao, S., Dang, J., and Grover , A. Group preference opti- mization: Fe w-shot alignment of large language models. In NeurIPS 2023 W orkshop on Instruction T uning and Instruction F ollowing , 2023b. Zheng, L., Chiang, W .-L., Sheng, Y ., Zhuang, S., W u, Z., Zhuang, Y ., Lin, Z., Li, Z., Li, D., Xing, E., et al. Judging llm-as-a-judge with mt-bench and chatbot arena. Ad- vances in neural information pr ocessing systems , 36: 46595–46623, 2023. Zhu, M., W eng, Y ., Y ang, L., and Zhang, Y . Personality alignment of large language models. In The Thirteenth International Confer ence on Learning Repr esentations , 2025. Zollo, T . P ., Siah, A. W . T ., Y e, N., Li, A., and Namkoong, H. Personalllm: T ailoring llms to individual preferences. In Pluralistic Alignment W orkshop at NeurIPS 2024 , 2024. 10 A. A ppendix A.1. T ask design T ask 1: LLM-as-a-Judge. W e note that Eq. 19 defines a general e valuation paradigm rather than a model-specific design. In this paradigm, an LLM is prompted to infer a natural-language description of an indi vidual user’ s preference based on historical interactions X u , optionally conditioned on the current episode e . This formulation is applicable to a broad class of non-parametric baselines that rely on in-conte xt prompting. Pref = G ( X u , e ) or Pref = G ( X u ) (19) The generated preference Pref is expressed in natural language and summarizes the individual’ s persona or preference. Subsequently , preference and a set of candidate responses { τ 1 , τ 2 } are provided to a large language model acting as a judge. The judge predicts which response the user is more likely to select: ˆ y e = arg max τ ∈{ τ 1 ,τ 2 } P ( y e = 1 | Pref , τ ) . (20) This task is mainly designed for EpiP ersona-A and non-parametric preference modeling, following the LLM-as-a-judge ev aluation paradigm. T ask 2: Pluralistic Reward Lear ning . Let u denote an individual user with latent persona Z u , and let e denote the current episode. The user’ s historical preference information is summarized as X u . Giv en a candidate response τ under episode e , the goal is to predict whether the user would select this response. W e model the individual-specific utility of a response via a re ward function R θ ( Z u , τ ) , (21) where Z u captures stable persona traits inferred from X u , and τ denotes the response content. Follo wing the Bradley-T erry formulation, the probability that the user selects τ under episode e is defined as P ( y e = 1 | Z u , τ ) = exp R θ ( Z u , τ ) 1 + exp R θ ( Z u , τ ) . (22) The training data consists of tuples ( X u , e, τ , y e ) , where y e ∈ { 0 , 1 } indicates whether the user selects the response. The model is trained by minimizing the negati ve log-likelihood ov er the observed labels. At inference time, R θ ( Z u , τ ) serves as a scalar utility score for ranking candidate responses. Model performance is e valuated using accurac y (Acc). This task is primarily designed for EpiPersona-B and parametric preference modeling. A.2. Contribution Our work makes three k ey contrib utions: 1. Disentangled persona modeling. W e propose persona pr ojection , which separates stable individual traits (latent personas) from episode-specific preference feedback, addressing the limitation of prior methods that mix stable traits with temporary episodic signals. 2. Flexible, combinatorial latent persona modeling. W e introduce a parameterized abductive r easoning and VQ-based mapping approach to automatically construct persona codes without relying on predefined dimensions, capturing latent semantics of human preferences. 3. Episode-coupled prefer ence prediction. W e dev elop EpiP ersona , which couples personas with situational episode information, enabling robust prediction of cross-episode preferences. Experiments show that EpiPersona generalizes well to unseen users and episodic-shift scenarios while remaining effecti ve with limited preference data. 11 20 40 V alue 0 25 50 75 100 Count 103 16 5 2 1 1 0 2 0 1 Ar ena - Hist. sample/user 10 20 30 V alue 0 50 100 150 200 Count 37 111 201 174 79 72 32 10 4 3 P rism - Hist. sample/user F igure 4. The distribution of the history samples per user . A.3. Dataset construction W e follow the existing study ( Ryan et al. , 2025 ) in adopting their dataset split (train, v alidation, and test sets) as well as the division of each individual’ s historical and current data to ensure more consistent and continuous experimental v alidation. These two datasets compile the high-quality and challenging indi vidual data to serve as a benchmark for personalized alignment. The distribution of historical sample numbers per individual is shown in Figure 4 . The statistics of user numbers and historical/current pairs for both datasets are summarized in T able 4 . T able 4. Statistics of user numbers and historical/current pairs for Arena and Prism datasets. A R E NA P R I S M U S E R N U M H I S T / C U R PA I R S U S E R N U M H I S T / C U R PA I R S T R A I N 2 3 2 0 2 / 1 0 3 2 8 0 4 1 9 2 / 2 1 7 7 V A L I DATI O N 1 9 1 5 5 / 8 0 6 5 9 7 3 / 5 1 4 T E S T 8 9 5 1 4 / 2 8 4 3 7 8 5 8 1 6 / 3 0 3 3 A.4. Baselines a) Non-parametric preference modeling. These methods model individuals’ preferences using natural language , and therefore can be directly adapted to downstream LLMs in a plug-and-play manner . SynthesizeME ( Ryan et al. , 2025 ): Integrate LLM-based bootstrap reasoning, v alidation-dri ven persona synthesis, e xample selection, and prompt optimization, forming a framew ork for indi vidual persona modeling. PersonalLLM ( Zollo et al. , 2024 ): Retrie ve similar interactions based on embeddings and adds a small number of indi vidual feedback samples as conte xtual examples, enabling the LLM to dynamically adapt to indi vidual preferences during generation and mitigating data sparsity without additional fine-tuning. PI ( Balepur et al. , 2025 ): PI abductiv ely infers individuals’ personas from their preferences over selected and rejected outputs, and then incorporates these personas into the context to assess whether they can effecti vely guide the model toward outputs that align with individuals’ lik ely choices. b) Parametric preference modeling. GPO ( Zhao et al. , 2023b ): (Group preference optimization) is a transformer -based few-shot preference model that di vides each training group’ s preference data into context and target samples, learning to predict target preferences conditioned on the context. VPL ( Poddar et al. , 2024 ): (V ariational Preference Learning) employs a V AE to model individual preferences as latent variables, inferring each indi vidual’ s hidden preference distribution from a small amount of feedback, and then conditioning the re ward model on this distribution to achie ve personalized alignment with div erse individual preferences. P AL ( Chen et al. , 2025 ): (Pluralistic Alignment Framework) The P AL framework lev erages the ideal point of indi viduals (which represents each individual’ s preference as a point in a latent preference space) combined with mixture modeling to learn a unified latent re ward representation from div erse human preferences, thereby achieving few-shot generalizable pluralistic alignment for heterogeneous indi vidual preferences. Bradley-T erry Reward Model ( Bradley & T erry , 1952 ): The Bradley-T erry Rew ard Model learns latent rew ard scores from pairwise comparisons, modeling the probability of one output being preferred over another without using personalized historical information. W e fine-tune the models on the training set to fit the distribution of the dataset. 12 A.5. Implementation details The maximum input length for forming abducti ve reasoning is 1600 tokens, while the current context and response hav e a maximum length of 1024 tokens (EpiPersona-B). Training parameters include a per-de vice batch size of 2, gradient accumulation steps of 2, learning rate of 5e-6, weight decay of 0.01, warmup steps of 100, and bf16 mixed-precision training enabled. T raining is conducted on eight NVIDIA H20 GPUs. LoRA is implemented with parameters set as r = 8 , lora alpha = 32 , lora dropout = 0 . 1 . EpiP ersona-A Distills Qwen-2.5-72B for personal, episode-specific reasoning, using a JSON dictionary format with fields such as persona , value , pr eferr ed style , and intent (see prompt in Appendix A.10 .1). W e set codebook size 16 for Arena and 64 for Prism. Due to the limited size of the Arena dataset, we first pre-trained the transformer-based model on the Prism dataset (for EpiPersona-A) to stabilize training. A.6. Comparison of prefer ence modeling methods acr oss core capabilities W e formalize the core capabilities of preference modeling as: human-observable and interpretable (Human-O/I), machine- understandable (Machine-U), compression capability (Compact-C), and global preference abstraction capability (Global-A). T ext-based summarization and RA G offer interpretability b ut provide weaker compression, learnability , and abstraction, which may undesirably focus on users’ isolated and fragmented information. P arameterized pr eference modeling excels in learnability and abstraction b ut is less observ able and plug-and-play . Predefined dimensions compress preferences into dense vectors, aiding learning and interpretability , yet rely heavily on manual design, limiting flexibility . Our approach proposes a learnable personalized preference attribution (EpiPersona-A) that capitalizes on the strengths of these four capabilities. Specifically , Machine-understandable: EpiPersona-A parameterizes users’ static personas employing Parameterized abducti ve reasoning and User prototype mapping; Compression capability: Parameterized static personas serve as a highly compressed representation of user preferences, pre venting loss of persona information via text summarization; Global pr efer ence abstraction capability: EpiPersona-A provides a macro-le vel, abstract characterization of user personalities, instead of isolated or fragmented local information; Human-observable and interpr etable: Dynamic preferences are predicted in textual form, making them plug-and-play compatible with an y LLM. T able 5. Comparing various preference modeling methods in terms of k ey attrib utes. Predef.: Predefined dimensions; T ext-based sum.: text-based preference summarization; P arametrized.: Parametrized modeling; EpiPersona-A: Our proposed method. M E T H O D H U M A N - O / I M AC H I N E - U C O M PAC T - C G L O B A L - A T E X T - B A S E D S U M . ! - - - P A R A M E T R I Z E D . - ! ! ! P R E D E F . ! ! ! - R AG ! - - - E P I P E R S O N A - A ! ! ! ! A.7. Figure of model details Figure 5 illustrates the detailed architecture of our model, including the parameterized abducti ve reasoning module and the VQ-based mapping mechanism. A.8. Inference algorithm W e summarize the inference procedure for EpiPersona. Giv en user preference feedback and an episode, the algorithms first encode feedback using parameterized abducti ve reasoning and con vert it to latent persona representations via VQ-based mapping. W e then generate either episode-specific preferences (EpiPersona-A) or re ward scores for candidate responses (EpiPersona-B) (see algorithm 1 and 2 ). A.9. Windo w size experiment T o inv estigate the impact of windo w size, we conduct experiments on EpiPersona with varying window sizes on arena dataset, as shown in Figure 6 . On the Arena dataset, the model maintains stable performance with window sizes between 15 and 60. These results suggest that it is robust across a range of windo w sizes. 13 … … D e c g u i d e E p i s o d e - s p e c i f i c p r e f e r e n c e C o m p r e s s e d Wi n d o w s VQ - b as e d m ap p i n g … i n je ct … … VQ - b a s e d m a p p i n g (B) E p i Pe r so n a - B (A ) E p i P e r so n a - A En c En c P aram et eri z ed a b d u ct i ve re a s o n i n g Pe r s o n a s p ac e d p Pe r s o n a c o d e ( ) ( ) { 1 , , } 2 a r g m in wm m P p wz − ‖ ‖ F igure 5. Model architecture details (parameterized abductive reasoning and VQ-based mapping). Algorithm 1 EpiPersona-A: Persona-episode coupled preference generation Input: observed feedback X u for user u , episode e Encode feedback: R u = { λ ( P , x ) | x ∈ X u } // param. abducti ve reasoning Inject representations into windows: W = window ( R u ) // inject R u to fixed-length windo ws Compute latent persona set via VQ mapping: Z u = g ( W ) Generate personalized preference: P r ef e = g ψ ( Z u , e ) Format P r ef e as JSON with keys: persona, value, identification, intent, style Output: P r ef e Algorithm 2 EpiPersona-B: Episode-aware persona-based re ward modeling Input: observed feedback X u for user u , episode e , candidate responses { τ ( i ) e } K i =1 Encode feedback with parameterized abductiv e reasoning: R u = { λ ( P , x ) | x ∈ X u } Inject representations into windows: W = window ( R u ) Compute latent persona set via VQ mapping: Z u = g ( W ) for each candidate response τ ( i ) e do Compute episode-persona representation: h = h θ ( Z u , τ ( i ) e , e ) Compute rew ard: R θ ( Z u , τ ( i ) e , e ) = W ⊤ ϕ ( h ) end for Output: { R θ ( Z u , τ ( i ) e , e ) } K i =1 20 40 60 W indow size 0.60 0.65 0.70 A cc 5 15 30 45 60 Ar ena 95% CI A cc F igure 6. Windo w size experiment on EpiPerona (Arena). 14 A.10. Prompt This section lists the prompts used for modeling user beliefs and preferences, including: 1. Prompt for fine-grained belief and preference extraction; 2. LLM-as-a-Judge Prompt for EpiPersona-A; 3. LLM-as-a-Judge Prompt with Retrie val Augmentation; 4. T ext-based Episode-specific Preference Prediction Prompt (used in ablation test) ; 5. Parameterized Abducti ve Reasoning Prompt ( P ); 6. EpiPersona-A Prompt for episode-specific context coupled with user representation Z u . 1. Prompt for fine-grained belief and preference extraction (guidance for EpiPersona-A) Given the dialogue context below and two possible responses| one selected by the user and one not: Dialogue context: {context} Chosen answer: {chosen} Rejected answer: {rejected} ---Task--- Analyze the user’s selection and extract explicitly defined preference elements. ---Definitions--- The extracted preferences should be divided into the following categories: 1. Stable preferences (long-term, consistent traits): persona: the user’s personality or character traits. value: the user’s values, opinions, or attitudes. identification: the user’s social identity, role, or profession if inferable. 2. Dynamic preferences (context-dependent and situational): style: the user’s preferred expression style, tone, or format. intent: the user’s goal or intention in choosing the response. ---Rules--- 1. If a preference element cannot be inferred, return None. 2. Do not guess or invent information beyond what can be reasonably inferred from the context. 3. The output must be strictly formatted as JSON and must not contain comments. ---Output Format--- { "stable_preferences": { "persona": "", "value": "", "identification": "" }, "dynamic_preferences": { "style": "