PReD: An LLM-based Foundation Multimodal Model for Electromagnetic Perception, Recognition, and Decision

Multimodal Large Language Models have demonstrated powerful cross-modal understanding and reasoning capabilities in general domains. However, in the electromagnetic (EM) domain, they still face challenges such as data scarcity and insufficient integr…

Authors: Zehua Han, Jing Xiao, Yiqi Duan

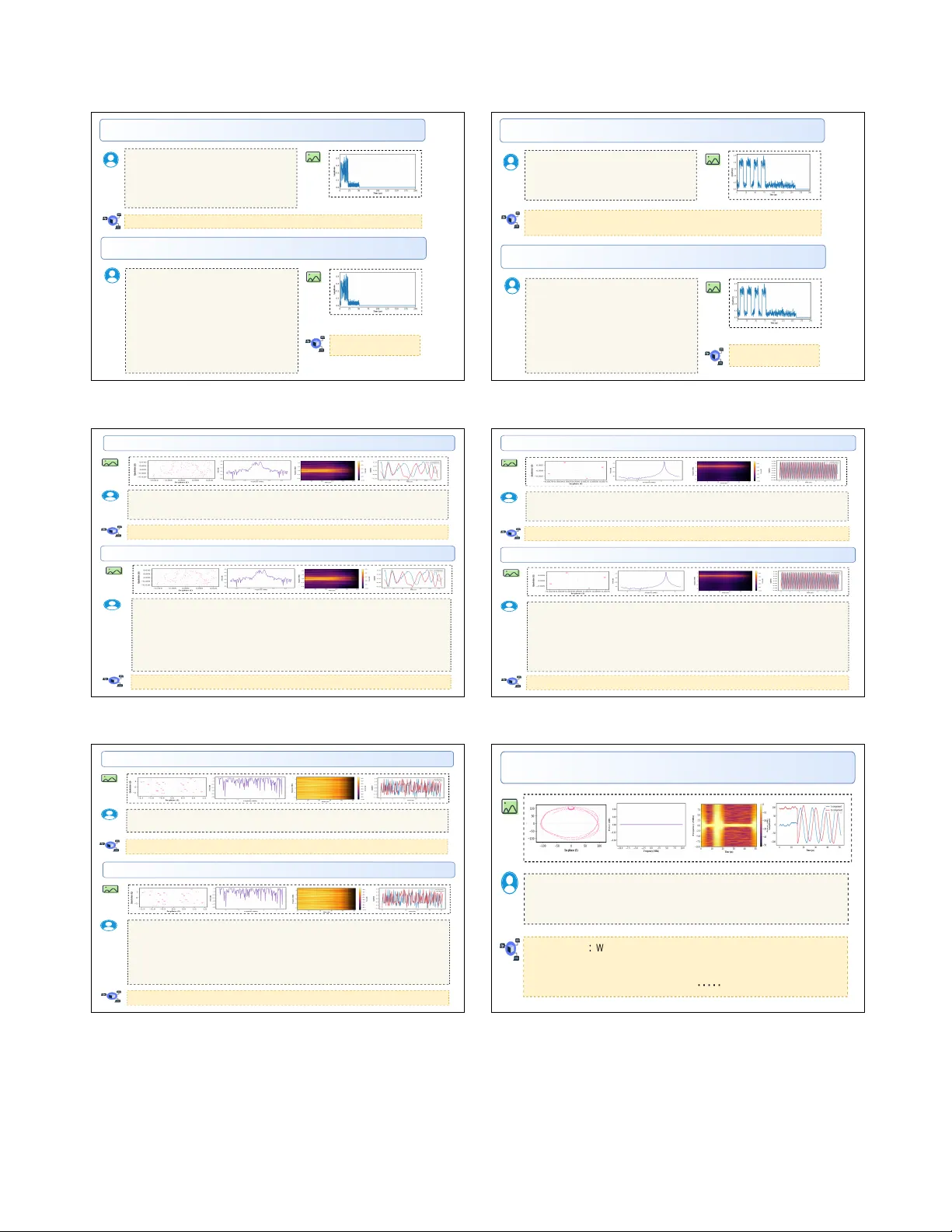

Zehua Han, Jing Xiao, Yiqi Duan, Mengyu Xiang, Yuheng Ji, Xiaolong Zheng, Chenghanyu Zhang, Zhendong She, Junyu Shen, Dingwei Tan, Shichu Sun, Zhou Cong, Mingxuan Liu, Fengxiang Wang, Jinping Sun, Yangang Sun

[Figure 1 panels: example instruction-response pairs. Anti-jamming: given Jamming_type Sweep, the response proposes burst communication in the time domain to avoid the scanning cycle of the interference. Modulation: GFSK. Protocol: AIR-GROUND-MTI. Emitter: USRP_X310_10. PRI (multiple choice): D. 42.0±1 µs. Signal segment: a signal is present from 17.2 to 22.3 microseconds.]
[Figure 1 labels: Perception, Recognition, Decision; Signal Segment Detection, Signal Parameter Estimation, Modulation Recognition, Protocol Recognition, Emitter Identification, Anti-Jamming Strategy Decision-Making.]

Figure 1. Overview of PReD and its capabilities, instantiated across six core electromagnetic (EM) tasks that span the three tiers of perception, recognition, and decision-making. The figure demonstrates how PReD processes multi-view signal visualizations to address a diverse range of EM instructions, from quantitative parameter estimation to open-ended anti-jamming strategy generation.

Abstract

Multimodal Large Language Models have demonstrated powerful cross-modal understanding and reasoning capabilities in general domains. However, in the electromagnetic (EM) domain, they still face challenges such as data scarcity and insufficient integration of domain knowledge. This paper proposes PReD, the first foundation model for the EM domain that covers the intelligent closed-loop of "perception, recognition, decision-making." We constructed a high-quality multitask EM dataset, PReD-1.3M, and an evaluation benchmark, PReD-Bench. The dataset encompasses multi-perspective representations such as raw time-domain waveforms, frequency-domain spectrograms, and constellation diagrams, covering typical features of communication and radar signals. It supports a range of core tasks, including signal detection, modulation recognition, parameter estimation, protocol recognition, radio frequency fingerprint recognition, and anti-jamming decision-making. PReD adopts a multi-stage training strategy that unifies multiple tasks for EM signals.
It achieves closed-loop optimization from end-to-end signal understanding to language-driven reasoning and decision-making, significantly enhancing EM domain expertise while maintaining general multimodal capabilities. Experimental results show that PReD achieves state-of-the-art performance on PReD-Bench constructed from both open-source and self-collected signal datasets. These results collectively validate the feasibility and potential of vision-aligned foundation models in advancing the understanding and reasoning of EM signals.

1. Introduction

Recent multimodal large language models (MLLMs), enabled by instruction tuning and cross-modal alignment, have achieved notable breakthroughs on visual question answering, retrieval, and reasoning tasks (e.g., LLaVA [1], BLIP-2 [2], GPT-4V [3], ImageBind [4]), driving a unified intelligence paradigm that uses natural language as the primary interface. However, these general-purpose large language models (LLMs) principally rely on world knowledge and language-reasoning capabilities derived from image, video, and audio modalities; they lack intrinsic representations of electromagnetic (EM) signal priors such as raw in-phase and quadrature (I/Q) waveforms, spectrogram structures, constellation geometry, micro-Doppler signatures, and cyclostationarity. This deficit limits their applicability in high-demand domains such as spectrum management, wireless security, and radar semantic understanding, where expert-level, auditable reasoning about EM signals is required. Meanwhile, EM-/wireless-oriented LLM efforts have emerged (e.g., WirelessLLM [5], RadioLLM [6], RFF-LLM [7]), but many of these concentrate on network planning [8, 9] or knowledge-level QA [10] and treat signal features as external prompts rather than forming a unified foundation for raw EM data.
Together, these observations indicate that an integrated “model–data–evaluation” framework tailored to the shapes and task ecology of EM data is essential.

The first challenge in the EM domain is constructing datasets suitable as inputs to large EM models. Public EM signal repositories are scarce or insufficient: many are generated from simulations or cover single communication scenarios, with limited diversity in modulation formats, protocol stacks, and interference types [11]. Representative datasets such as RadioML2016 [12], RadioML2018 [13], and HisarMod2019.1 [14] are widely used in research but are not directly fit for training and evaluating EM large models. Second, unified multi-task capability is lacking: tasks including signal detection, modulation recognition, parameter estimation, protocol classification, radio frequency (RF) fingerprinting, and anti-jamming strategy selection are typically modeled separately, and there is no widely adopted base that unifies these tasks in a shared multimodal EM feature space aligned with instruction-driven interfaces. Third, a clear expert gap exists when transferring general-purpose MLLMs to the EM domain: general-purpose LLMs often fail to internalize EM priors (e.g., cyclostationarity, carrier/symbol timing, inter-band coupling), yielding unstable perception and reasoning under low SNR, strong interference, or complex waveforms, and limiting interpretability.

Existing EM large-model research typically focuses on single-task or knowledge-centric intelligence. For example, WirelessLLM emphasizes knowledge alignment, rule understanding, and network optimization but rarely operates directly on raw I/Q waveforms or spectrograms [5].
RadioLLM integrates LLMs in cognitive radio via prompt and token reprogramming [6], yet it remains largely a “language-feature prompt” approach and does not deliver an end-to-end perceptual-to-decisional capability from signals to semantic actions. In classical RF machine learning, deep models have advanced modulation classification, time-frequency feature modeling, synchronization/registration, and protocol-sequence recognition, but these successes are typically confined to task-specific, small-scale models with limited data, and they lack the instruction alignment and standardized evaluation that would support generalizable, verifiable closed-loop perception, recognition, and decision-making [15–17].

We present a unified foundation model for EM perception, recognition, and decision-making, termed PReD. An overview of our entire framework is provided in Fig. 1. At the data layer, we construct multi-dimensional, feature-visualized signal corpora that cover raw I/Q waveforms, frequency-domain spectra, short-time Fourier transform (STFT) spectrograms, and constellation diagrams. Eight human annotators provide reasoning-style descriptions and consistency labels under a unified question-answer protocol, forming multi-granularity supervision for multiple task families, including signal segment detection (SSD), signal parameter estimation (SPE), modulation recognition (MR), protocol recognition (PR), emitter identification (EI), and anti-jamming strategy decision-making (AJSD). At the model layer, we design a visual input paradigm that jointly represents EM signals from complementary views across time, frequency, and constellation domains, and adopt a multi-stage training pipeline to support multi-task learning at scale.
At the learning paradigm level, we introduce unified multi-task instruction alignment that uses language as a cross-task interface, enabling an end-to-end loop from perception to recognition and to decision-making. This design retains the cross-modal expressiveness of general multimodal large language models while compensating for missing EM priors.

The main contributions are as follows:
• To the best of our knowledge, this work proposes and implements the first integrated foundation model for the EM domain that unifies perception, recognition, and decision-making.
• To support efficient training and comprehensive evaluation, we build a large-scale, cross-view EM dataset that covers time, frequency, time-frequency, and constellation perspectives with reasoning-style supervision.
• Extensive experiments indicate competitive performance across diverse EM tasks; datasets, models, and related code will be released to facilitate further research.

2. Related Work

Recent years have seen sustained advances in Large Language Models (LLMs) and multimodal variants (MLLMs) [18]. Via instruction tuning, cross-modal alignment, and tool augmentation, these systems advance general-purpose intelligence in visual question answering, retrieval, reasoning, and open-world understanding [19]. Representative image-text models include BLIP-2 [2], LLaVA [1], MiniGPT-4 [20], and Qwen-VL [21]; unified cross-sensor representations are explored by ImageBind [4]; long-context video-text and tool integration are addressed by Flamingo [22], Kosmos-2 [23], and GPT-4Vis [24]. Together, these works construct transferable interfaces among natural images, audio and speech, web corpora, and language patterns, enabling a language-centered multitask paradigm [25].

2.1. General-Purpose Multimodal Large Models

A prevailing design treats an LLM as the semantic hub coupled with vision and audio encoders. Cross-modal alignment is realized through projection heads or query routers, and instruction tuning with preference optimization harmonizes multitask behavior. This “backbone-alignment-instruction” pipeline has become a standard for reasoning over images, video, and speech [26].

Transfer to EM is nontrivial: (i) missing modality priors: cyclostationarity, carrier/symbol timing offsets, phase-envelope and constellation geometry, inter-band spectral coupling, and micro-Doppler are not captured by features from natural image or audio corpora [27, 28]; (ii) interface mismatch: beyond categorical recognition, EM requires regression and closed-loop control (e.g., SNR and timing-offset estimation, channel/jammer recovery, synchronization, anti-jamming search), whereas generic instruction spaces emphasize QA-style generation [29]; (iii) supervision/metric mismatch: datasets seldom provide executable physical ground truths or counterfactual controls, encouraging semantic plausibility over testable EM correctness.

2.2. Large-Model Directions in the EM Domain

Knowledge alignment and cognitive-radio-oriented work. WirelessLLM targets network planning, rule understanding, and knowledge-based QA for cognitive wireless decisions but seldom operates directly on raw I/Q waveforms or spectrograms [5]. RadioLLM introduces prompt and token reprogramming to embed LLMs in cognitive-radio workflows and improves programmability, yet it remains a language-external-feature paradigm without unified modeling of raw EM data [6]. RFF-LLM enhances device-level interpretability via language interfaces but is limited by single-task scope and controlled data [7].
Synthesis of limitations: existing approaches rarely cover, within one framework, signal detection, modulation/protocol recognition, emitter identification, parameter estimation, and anti-jamming decision-making under a unified instruction space; moreover, EM-derived features are often used as auxiliary prompts rather than a joint representation enforcing cross-view consistency among I/Q, spectrum, spectrograms, and constellations, which weakens robustness in low-SNR regimes. These gaps motivate EM-tailored foundation models that integrate data, modeling, and evaluation to close the loop across perception, recognition, and decision-making for auditable EM intelligence [30].

[Figure 2 panels: signal image generation from IQ data (Constellation, FFT Spectrum, STFT Spectrogram, I/Q Time Series, Time-domain Amplitude); instruction formulation for the six tasks (Signal Segment Detection, Signal Parameter Estimation, Modulation Recognition, Protocol Recognition, Emitter Identification, Anti-Jamming Strategy Decision-making) in MCQA, OpenQA*, and OpenQA# formats; dataset partitioning of the instruction set into PReD-1.3M and PReD-Bench.]

Figure 2. Overall pipeline for the construction of the PReD-1.3M training set and the PReD-Bench. We first generate five types of signal visualizations from the raw IQ signals. We then create corresponding OpenQA and MCQA pairs for each task type to form a complete instruction set. Finally, a portion of this set is sampled to obtain the PReD-Bench, which is kept strictly separate from the training data.

3. The PReD Dataset and Benchmark

In this section, we detail the construction of our large-scale EM instruction corpus, PReD-1.3M, and our hierarchical evaluation benchmark, PReD-Bench. Figure 2 illustrates the overall pipeline, which begins with signal visualization, proceeds to instruction formulation, and concludes with the partitioning of the instruction set into training and evaluation splits. We begin by describing the shared methodology for data formulation.

3.1. Signal Representation and Task Formulation

The EM instruction formulation defines six core tasks: SSD, SPE, MR, PR, EI, and AJSD. Each instruction is paired with visual signal representations such as waveform, FFT spectrum, STFT spectrogram, or constellation images. Multi-format question-answer templates (OpenQA and MCQA) are used to synthesize benchmark-ready supervision for MLLM training.

An EM signal is fundamentally a complex-valued time series $x[n] \in \mathbb{C}^N$.
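As a rough illustration of how a complex-valued series can be turned into the numeric arrays behind constellation, FFT, STFT, and waveform images, the following NumPy sketch may help. It is not the paper's rendering code: the function name `signal_views`, the Hann window, and the `n_fft` and `hop` values are all assumptions chosen for the example, and plotting is left out.

```python
import numpy as np


def signal_views(x, n_fft=64, hop=16):
    """Return the arrays behind four views of a complex I/Q series.

    n_fft and hop are illustrative choices, not values from the paper.
    Requires len(x) >= n_fft.
    """
    x = np.asarray(x, dtype=np.complex128)

    # Constellation: one (I, Q) point per sample.
    constellation = np.column_stack([x.real, x.imag])

    # FFT magnitude spectrum, shifted so zero frequency sits in the middle.
    fft_mag = np.abs(np.fft.fftshift(np.fft.fft(x)))

    # STFT magnitude: Hann-windowed frames, one FFT per frame.
    window = np.hanning(n_fft)
    starts = range(0, len(x) - n_fft + 1, hop)
    frames = np.stack([x[s:s + n_fft] * window for s in starts])
    stft_mag = np.abs(np.fft.fftshift(np.fft.fft(frames, axis=1), axes=1)).T

    # Waveform view: I and Q trajectories plus the amplitude envelope.
    waveform = {"i": x.real, "q": x.imag, "envelope": np.abs(x)}

    return {
        "constellation": constellation,
        "fft": fft_mag,
        "stft": stft_mag,  # shape: (frequency bins, time frames)
        "waveform": waveform,
    }


# Usage: a unit-amplitude complex tone at FFT bin 8 of a 256-sample record.
n = np.arange(256)
views = signal_views(np.exp(2j * np.pi * 8 * n / 256))
```

Each returned array would then be rendered to an image before being passed to a vision encoder; the rendering style (colormap, axes, resolution) is a separate design choice that this sketch does not address.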
We map each raw sample to four complementary visual views:
• I/Q Constellation: sampled scatter points $\{x[k]\}$ that reveal symbol constellations and modulation geometry;
• FFT Magnitude Spectrum: $S_{\mathrm{FFT}}(f) = |\mathrm{FFT}(x[n])|$, capturing bandwidth and frequency-domain structure;
• STFT Spectrogram: $S_{\mathrm{STFT}}(t, f)$, trading off time-frequency resolution to reveal pulsed or sweep patterns;
• Raw I/Q Waveform: real and imaginary component trajectories $x_I[n]$ and $x_Q[n]$, together with the amplitude envelope for parameter readout.

To support instruction-style training [31], we design two question formats and corresponding output tags. Each signal sample in MCQA format includes a built-in "Unable to answer" option to mitigate model overconfidence on ambiguous inputs.
• Multiple Choice Question-Answer Pairs: the user presents four images and five options (including Unable to answer); the assistant returns A [32].
• Open-ended Question-Answer Pairs: the user provides four images and instructs “to only output the result”; the assistant responds with a structured tag such as ....

[Figure 4 panels: multiple or single signal images pass through a Vision Encoder and an MLP Projector; the instruction passes through a Tokenizer; a Pre-trained LLM Decoder becomes the PReD LLM Decoder through multi-stage training; MCQA and OpenQA outputs are shown. Example: asked for the pw and pri of a signal, the assistant answers pw = 6.0 us, pri = 40.0 us.]

Figure 4. Overall pipeline of PReD.
(Bottom to top) Multi-view EM renderings are encoded and projected into the token space, where they are combined with tokenized instructions. A pre-trained LLM decoder is first aligned with visual features and then fine-tuned through a multi-stage curriculum to become the specialized PReD LLM Decoder. The model supports two output modes: open-ended generation (OpenQA) and structured multiple-choice question answering (MCQA).

| Category | Hyperparameter | Stage-1 (Visual-Language Alignment) | Stage-2 (General Single-Image Instruction Tuning) | Stage-3 (General Multi-Image Instruction Tuning) | Stage-4 (EM Domain Specialization) |
|---|---|---|---|---|---|
| Vision | Resolution | 384 | Max 384 × (2 × 2) | Max 384 × (6 × 6) | Max 384 × (6 × 6) |
| Vision | Tokens | 729 | Max 729 × 5 | Max 729 × 10 | Max 729 × 10 |
| Data | Dataset | LCS | General Single-Image | General Multi-Image* | PReD-1.3M* |
| Data | Samples | 558K | 858K | 832K | 1.8M |
| Model | Trainable Components | Projector | Full Model | Full Model | Full Model |
| Model | Tunable Parameters | 21.5M | 8.6B | 8.6B | 8.6B |
| Training | Per-Device Batch Size | 8 | 4 | 2 | 2 |
| Training | LR: $\psi_{\mathrm{ViT}}$ | N/A | $2 \times 10^{-6}$ | $2 \times 10^{-6}$ | $2 \times 10^{-6}$ |
| Training | LR: $\{\theta_{\mathrm{Proj}}, \phi_{\mathrm{LLM}}, \phi_{\mathrm{LoRA}}\}$ | $1 \times 10^{-3}$ | $1 \times 10^{-5}$ | $1 \times 10^{-5}$ | $1 \times 10^{-5}$ |
| Training | Epochs | 1 | 1 | 1 | 1 |

Table 2. Training schedule for PReD across four stages on 16 NVIDIA A100 GPUs (80GB each). A star * indicates datasets mixed with general visual corpora from earlier stages.

consistently outperforms all general-purpose baselines and remains strong in the decision-making stage that requires untagged, generative matching (OpenQA). The results reveal a clear capability boundary: while general-purpose models show rudimentary skill on simple perception tasks (for instance, GPT-5 achieves 61.0 accuracy on the SSD (MCQA) task), they completely fail at complex recognition and decision-making. This is most evident in the anti-jamming (AJSD) task, where all generalist models score 0.000 on BLEU4 and prove incapable of generating valid strategies.
In contrast, PReD excels (BLEU4 = 0.253). This confirms that generalist models are limited to shallow pattern matching due to a lack of domain priors, whereas PReD successfully forges the full perception-to-decision pipeline via its specialized architecture.

| Model | SSD MCQA | SSD OpenQA* | SPE MCQA | SPE OpenQA* | MR MCQA | PR MCQA | EI MCQA | AJSD (OpenQA#) BLEU4 | AJSD ROUGE | AJSD METEOR | AJSD CIDEr |
|---|---|---|---|---|---|---|---|---|---|---|---|
| qwen2.5-vl-7b† [38] | 28.7 | 0.2 | 20.5 | 0.1 | 24.2 | 14.0 | – | 0.000 | 0.079 | 0.115 | 0.128 |
| qwen3-vl-8b† [36] | 21.3 | 1.3 | 18.8 | 2.0 | 11.6 | 2.8 | – | 0.000 | 0.094 | 0.121 | 0.228 |
| qwen3-vl-235b-a22b† [36] | 27.7 | 0.5 | 30.4 | 2.0 | 20.2 | 8.6 | – | 0.000 | 0.090 | 0.070 | 0.151 |
| seed-1.6 vision [39] | 41.3 | 1.5 | 40.4 | 3.1 | 23.4 | 21.4 | – | 0.000 | 0.095 | 0.039 | 0.169 |
| claude sonnet-4 [40] | 28.7 | 1.4 | 48.5 | 2.8 | 25.2 | 21.6 | – | 0.000 | 0.103 | 0.078 | 0.154 |
| GPT-5 [41] | 61.0 | 7.2 | 42.9 | 13.3 | 12.0 | 19.2 | – | 0.000 | 0.017 | 0.028 | 0.055 |
| gemini-2.5 pro [42] | 25.3 | 6.2 | 56.3 | 12.0 | 29.0 | 24.0 | – | 0.000 | 0.081 | 0.036 | 0.129 |
| PReD (Ours) | 99.3 | 80.6 | 97.1 | 81.9 | 72.0 | 72.0 | 69.4 | 0.253 | 0.559 | 0.515 | 0.617 |

Table 3. Benchmark results for EM perception (SSD, SPE), recognition (MR, PR, EI), and decision-making (AJSD) tasks. A superscript † marks an Instruct (instruction-tuned) variant. OpenQA* indicates answers with a specific tag whose results are directly matched; OpenQA# indicates answers without a specific tag, where matching is performed using BLEU4, ROUGE, METEOR, and CIDEr. Since the EI task requires device-specific RF fingerprint learning, general MLLMs are not comparable and their results are omitted.

5.2. Evaluation on General Multimodal Benchmarks

An important question is whether PReD's domain-oriented optimization affects its general multimodal understanding capabilities. To investigate this, we conducted comprehensive evaluations on several widely used benchmarks (see Table 4). Our main comparisons include representative open-source models of similar scale, with LLaVA-Next-Interleave-7B serving as a key methodological reference.

| Model | MMStar | AI2D | HallusionBench | SEEDBench IMG | POPE | ScienceQA TEST | Average |
|---|---|---|---|---|---|---|---|
| GPT-4V [3] | 49.7 | 75.9 | 46.5 | 71.6 | 75.4 | 82.1 | 66.9 |
| PReD (Ours) | 50.2 | 74.3 | 54.5 | 73.7 | 70.4 | 69.2 | 65.4 |
| Qwen-VL-Max [43] | 49.5 | 75.7 | 41.2 | 72.7 | 71.9 | 80.0 | 65.2 |
| LLaVA-Next-Interleave-7B [44] | 44.5 | 73.8 | 34.8 | 72.0 | 85.5 | 73.2 | 64.0 |
| DeepSeek-VL-7B [45] | 40.5 | 65.3 | 34.5 | 70.1 | 85.6 | 80.9 | 62.8 |
| MiniCPM-V-2 [46] | 39.1 | 62.9 | 36.1 | 67.1 | 86.3 | 80.7 | 62.0 |
| ShareGPT4V-7B [47] | 35.7 | 58.0 | 28.6 | 69.3 | 86.6 | 69.5 | 58.0 |

Table 4. Results on general multimodal benchmarks.

The results show that PReD achieves an average score of 65.4, slightly surpassing its reference model LLaVA-Next-Interleave-7B (64.0) and on par with other strong baselines such as Qwen-VL-Max (65.2). This indicates that PReD maintains competitive general multimodal understanding while benefiting from its domain-oriented enhancements.

For a broader perspective, we also report results from the high-performance proprietary reference GPT-4V (1106-preview, Nov. 2023). PReD demonstrates comparable overall capability (65.4 vs. 66.9) and notably achieves stronger robustness on HallusionBench (54.5 vs. 46.5). This suggests that our proposed training paradigm may contribute to improved reliability under hallucination-prone scenarios. In summary, PReD illustrates the potential of building a foundation model that effectively integrates domain expertise with broad generalization ability.

6. Conclusions

We have presented PReD, the first foundation model to unify the full pipeline of perception, recognition, and decision-making in the EM domain.
Our core methodology involves a novel, large-scale EM corpus, PReD-1.3M, which represents signals via complementary visual views, coupled with a multi-stage curriculum to progressively instill domain expertise. Extensive experimental validations demonstrate that PReD significantly outperforms current state-of-the-art general-purpose MLLMs on PReD-Bench, a benchmark specifically tailored for evaluating EM intelligence. This substantiates PReD’s superior specialized proficiency within this particular scientific domain. Crucially, PReD achieves this specialized excellence while maintaining strong, competitive performance on standard multimodal benchmarks, demonstrating that domain specialization need not come at the cost of general vision-language capabilities. Our work not only charts a scalable path for EM intelligence but also validates the immense potential of adapting vision-aligned foundation models to other complex scientific domains.

Appendix

This appendix provides supplementary material that delves into the architectural details, training methodology, evaluation protocols, and extensive experimental analysis of the PReD model. We begin by detailing the four-stage curriculum training pipeline for PReD (Sec. A), covering data composition, progressive tuning strategies, and key hyperparameters. This is followed by a description of the automatic metrics used to assess free-form answers in our OpenQA# setting (Sec. B). Subsequently, we present a series of complementary experiments (Sec. C) designed to offer deeper insights, including a critical ablation study on the domain-specific expertise stage to demonstrate the prevention of catastrophic forgetting, a systematic analysis of input modality contributions, an out-of-domain generalization test, and a robustness analysis across varying Signal-to-Noise Ratios (SNR).
Finally, to provide a more intuitive understanding of our work, we include additional visual and qualitative analyses (Sec. D and beyond), featuring a hierarchical visualization of the PReD-Bench composition, a comparative radar plot of model performance, and representative case studies showcasing PReD’s capabilities across the full spectrum of EM tasks.

A. Details of Training Pipeline for PReD

PReD is built on Qwen-3 and a pre-trained SigLIP vision encoder, and is trained with a four-stage curriculum. Fig. 5 provides an overview of the data composition across stages, while Table 5 summarizes the corresponding hyperparameters and training schedule.

The curriculum begins with Stage 1 (Pretrain), a warm-up phase that exclusively trains the projector using a relatively high learning rate of $1 \times 10^{-3}$, while both the vision encoder (ViT) and the language model remain frozen. This stage utilizes the blip_laion_cc_sbu_558k dataset to establish initial vision-language alignment. As shown in Table 5, it operates with a base resolution of 384, a token budget of 729, and a flat patch merging strategy.

From Stage 2 onward, the full model is progressively unfrozen for end-to-end tuning. In Stage 2 (26.5%; 858,231 samples), we introduce data from LLaVA-UHD-v2-SFT-Data to consolidate single-image instruction-following capabilities. A decoupled learning-rate scheme is applied: the ViT branch is fine-tuned with $2 \times 10^{-6}$, while the projector and language-model parameters are updated with $1 \times 10^{-5}$. Correspondingly, the spatial coverage is expanded to Max 384 × (2 × 2) and the visual token budget is increased to Max 729 × 5. The patch merging strategy is switched to spatial_unpad in this stage to better handle the gridded visual inputs.

Figure 5. Overview of our four-stage curriculum and data composition. The outer ring shows the training stages; the middle ring indicates data regimes (LCS, general single/multi-image); the inner ring lists EM tasks (SSD, SPE, MR, PR, EI, AJSD) emphasized in Stage 4. Percentages denote the proportion of samples used per stage.

Stage 3 (15.1%; 489,974 samples) incorporates multi-image data from the M4-Instruct-Data dataset to enable multi-view modeling. To balance computational cost and efficiency, we filter out 3D and video samples from this dataset, resulting in a final subset of 489,974 samples that focuses on static multi-view inputs. To retain previously learned capabilities, the training set for this stage is mixed with 40% of the general single-image corpus from Stage 2. This stage marks a significant expansion of model capacity: the spatial coverage and token budget are further increased to their maximums of Max 384 × (6 × 6) and Max 729 × 10. To accommodate the longer context, the maximum sequence length is also doubled from 4,096 to 8,192.

Stage 4 (41.1%; 1,332,759 samples) focuses on fine-tuning the model for specialized EM tasks using our curated PReD-1.3M dataset. This final stage maintains the maximal spatial coverage and token capacity established in Stage 3. Furthermore, to prevent catastrophic forgetting of general abilities, the training corpus is augmented by mixing in 25% of the Stage 2 single-image corpus and 50% of the Stage 3 multi-image corpus.

Across all four stages, optimization uses AdamW with DeepSpeed-Zero3, a cosine learning-rate schedule, weight decay of 0, and a warmup ratio of 0.03.
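The replay percentages and stage shares quoted above can be cross-checked with simple arithmetic. The sketch below is only a consistency check under two assumptions: the Stage 1 corpus is taken as exactly 558,000 samples (the text gives "558K"), and each replay percentage is applied to the full sample count of the earlier stage. How the replayed samples are actually drawn is not described here.

```python
# Sample counts for the data newly introduced at each stage.
STAGE1 = 558_000      # assumption: "558K" taken as exactly 558,000
STAGE2 = 858_231      # general single-image corpus
STAGE3 = 489_974      # filtered multi-image corpus
STAGE4 = 1_332_759    # PReD-1.3M

# Replay mixtures described in the text:
# Stage 3 adds 40% of the Stage 2 corpus;
# Stage 4 adds 25% of the Stage 2 corpus and 50% of the Stage 3 corpus.
stage3_total = STAGE3 + round(0.40 * STAGE2)
stage4_total = STAGE4 + round(0.25 * STAGE2) + round(0.50 * STAGE3)

# Share of each stage's new data in the union of all four corpora (percent).
total_new = STAGE1 + STAGE2 + STAGE3 + STAGE4
shares = {
    "stage1": round(100 * STAGE1 / total_new, 1),
    "stage2": round(100 * STAGE2 / total_new, 1),
    "stage3": round(100 * STAGE3 / total_new, 1),
    "stage4": round(100 * STAGE4 / total_new, 1),
}

print(stage3_total, stage4_total, shares)
```

Under these assumptions the mixed Stage 3 and Stage 4 sets come to about 833K and 1.79M samples, close to the 832K and 1.8M listed in Table 2, and the Stage 2 to Stage 4 shares reproduce the 26.5%, 15.1%, and 41.1% figures, which leaves roughly 17.2% for Stage 1.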
The projector employs an mlp2x2_gelu head, and the selected vision layer is −2. Each stage is trained for one epoch with per-device batch sizes of {8, 4, 2, 2} on a 2 × 8 A100 GPU setup.

| Category | Hyperparameter | Stage-1 | Stage-2 | Stage-3 | Stage-4 |
|---|---|---|---|---|---|
| Vision | Resolution | 384 | Max 384 × (2 × 2) | Max 384 × (6 × 6) | Max 384 × (6 × 6) |
| Vision | Tokens | 729 | Max 729 × 5 | Max 729 × 10 | Max 729 × 10 |
| Model | Trainable Components | Projector | Full Model | Full Model | Full Model |
| Model | Tunable Parameters | 21.5M | 8.6B | 8.6B | 8.6B |
| Training | Per-device Batch Size | 8 | 4 | 2 | 2 |
| Training | Gradient Accumulation | 1 | 1 | 1 | 1 |
| Training | LR: $\psi_{\mathrm{ViT}}$ | N/A | $2 \times 10^{-6}$ | $2 \times 10^{-6}$ | $2 \times 10^{-6}$ |
| Training | LR: $\{\theta_{\mathrm{Proj}}, \phi_{\mathrm{LLM}}\}$ | $1 \times 10^{-3}$ | $1 \times 10^{-5}$ | $1 \times 10^{-5}$ | $1 \times 10^{-5}$ |
| Training | Epoch | 1 | 1 | 1 | 1 |
| Training | Optimizer | AdamW | AdamW | AdamW | AdamW |
| Training | Deepspeed | Zero3 | Zero3 | Zero3 | Zero3 |
| Training | Weight Decay | 0 | 0 | 0 | 0 |
| Training | Warmup Ratio | 0.03 | 0.03 | 0.03 | 0.03 |
| Training | LR Schedule | cosine | cosine | cosine | cosine |
| Training | Projector Type | mlp2x2_gelu | mlp2x2_gelu | mlp2x2_gelu | mlp2x2_gelu |
| Training | Vision Select Layer | −2 | −2 | −2 | −2 |
| Training | Patch Merge Type | flat | spatial_unpad | spatial_unpad | spatial_unpad |
| Training | Max Seq Length | 4,096 | 4,096 | 8,192 | 8,192 |
| Training | A100 GPUs | 2 × 8 | 2 × 8 | 2 × 8 | 2 × 8 |

Table 5. Training schedule across four curriculum stages.

Overall, this four-stage training pipeline starts from general visual perception (Stages 1–2), advances to multi-view processing (Stage 3), and culminates in domain-specific EM expertise (Stage 4). The combination of curriculum design, dataset mixing, and decoupled optimization balances stability and plasticity, leading to robust end-to-end EM perception, category-level recognition, and downstream decision making, while keeping the total compute and sequence lengths within the limits reported in Table 5.

B. Details of Evaluation Metrics

To evaluate free-form answers in the OpenQA# setting, we adopt four standard automatic metrics. BLEU-4 (BLEU4) measures local n-gram precision (1–4 grams) with a brevity penalty [48].
ROUGE is recall-oriented: ROUGE-N assesses coverage via n-gram recall, and ROUGE-L via an LCS-based F-score [49]. METEOR performs alignment-based matching, combines precision and recall with a fragmentation penalty, and allows stem and synonym matches to capture semantic paraphrases [50]. CIDEr employs TF–IDF-weighted n-gram representations and cosine similarity against multiple references to emphasize consensus with the reference set [51].

C. Complementary Experiments

C.1. Ablation Study on Domain-Specific EM Expertise Stage (Stage-4)

To investigate the impact of our curriculum learning strategy, we conduct an ablation study focusing on the data composition of the domain-specific EM expertise stage. We compare two models: 1) PReD⁻, which is fine-tuned in Stage 4 exclusively on our specialized PReD-1.3M dataset; and 2) PReD, which is fine-tuned on a mixture of the PReD-1.3M dataset and the general visual corpora from the first three stages. We evaluate both models on two fronts: their performance on specialized domain tasks and their general multimodal capabilities.

Performance on Domain-Specific EM Tasks. Our evaluation on specialized EM tasks uses two types of metrics. For the five perception and recognition tasks (SSD, SPE, MR, PR, EI), we report standard accuracy. For the text-generation task, AJSD, we calculate a composite score from four standard metrics: BLEU-4, ROUGE-L, METEOR, and CIDEr. To ensure a consistent scale for averaging across all tasks, this composite score is derived by averaging the four metrics and multiplying the result by 100. The detailed performance comparison is presented in Table 6. The hyper-specialized PReD⁻ model, trained without general corpora, achieves a slightly higher average score of 73.10% compared to our final PReD model's 71.85%. This indicates that while focusing solely on domain data yields a marginal performance gain on specialized tasks, it comes with a significant trade-off.

| Method | SSD | SPE | MR | PR | EI | AJSD | Average |
|---|---|---|---|---|---|---|---|
| PReD⁻ (w/o General Corpora) | 83.55 | 86.37 | 72.40 | 74.40 | 71.83 | 50.05 | 73.10 |
| PReD (Ours) | 83.40 | 85.70 | 72.00 | 72.00 | 69.43 | 48.59 | 71.85 |

Table 6. Performance comparison on the specialized EM benchmark. The variant fine-tuned exclusively on EM data (PReD⁻) achieves a marginal performance gain on these domain-specific tasks. As shown in Table 7, this slight advantage comes at the cost of a severe degradation in general multimodal capabilities.

Performance on General Multimodal Benchmarks. The nature of this trade-off becomes clear when evaluating general capabilities, as presented in Table 7. Here, the benefit of our data mixing strategy is dramatic. Our full model, PReD, achieves a strong average score of 65.37%. In stark contrast, the performance of PReD⁻ collapses to 35.92%. This significant gap highlights that fine-tuning exclusively on specialized data leads to severe catastrophic forgetting of general multimodal knowledge acquired in earlier stages.

| Method | MMStar | AI2D | HallusionBench | SEEDBench_IMG | POPE | ScienceQA_TEST | Average |
|---|---|---|---|---|---|---|---|
| PReD⁻ (w/o General Corpora) | 39.47 | 54.44 | 8.10 | 56.75 | 0.74 | 56.02 | 35.92 |
| PReD (Ours) | 50.20 | 74.32 | 54.47 | 73.67 | 70.37 | 69.16 | 65.37 |

Table 7. Ablation study on mixing general-domain data in the final training stage. We compare our full model (PReD) against a variant (PReD⁻) that was fine-tuned exclusively on the specialized EM dataset without the general visual corpora. Mixing general-domain data substantially mitigates catastrophic forgetting and boosts performance across general benchmarks.

In summary, these results demonstrate the critical role of mixing general-domain data in the final training stage.
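The averages reported in Table 6 can be checked with a short calculation. The sketch below assumes an unweighted mean over the six tasks and takes the composite AJSD score as the mean of the four text metrics times 100, as described above; the function names are illustrative, and treating the ROUGE column of Table 9 as ROUGE-L is an assumption.

```python
def composite_score(bleu4, rouge_l, meteor, cider):
    """Mean of the four text metrics, rescaled by 100 to match accuracy."""
    return 100.0 * (bleu4 + rouge_l + meteor + cider) / 4


def task_average(scores):
    """Unweighted mean over per-task scores."""
    return sum(scores) / len(scores)


# Per-task scores in Table 6 order: SSD, SPE, MR, PR, EI, AJSD.
pred_minus = [83.55, 86.37, 72.40, 74.40, 71.83, 50.05]
pred_full = [83.40, 85.70, 72.00, 72.00, 69.43, 48.59]

avg_minus = task_average(pred_minus)
avg_full = task_average(pred_full)
print(f"PReD-: {avg_minus:.2f}  PReD: {avg_full:.2f}  gap: {avg_minus - avg_full:.2f}")

# Four-modality row of Table 9 (BLEU4, ROUGE, METEOR, CIDEr).
ajsd = composite_score(0.253, 0.559, 0.515, 0.617)
print(f"AJSD composite from Table 9: {ajsd:.1f}")
```

Under these assumptions the script reproduces the reported averages (73.10 and 71.85, a gap of 1.25 points), and the composite computed from the rounded Table 9 metrics (48.6) agrees with the AJSD entry for PReD in Table 6 up to rounding.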
While a narrow focus on EM data yields a slight edge (+1.25 points) in the specialized average, it catastrophically degrades general abilities. Our strategy successfully mitigates this issue, creating a robust model that excels in its specific domain without sacrificing its foundational multimodal competence. This balance is crucial for developing a true EM foundation model.

C.2. Ablation Study on Input Modalities

To understand the contribution of each input modality, we conducted a comprehensive ablation study across two distinct tasks: modulation recognition (a classification task, Table 8) and anti-jamming decision-making (a generation task, Table 9). For all experiments, unselected modalities were replaced with zero-filled placeholders to maintain consistent input dimensionality. Our findings reveal consistent patterns across both tasks.

Finding 1: IQ as the Foundational Modality. Across both tasks, the IQ modality consistently proves to be the most informative when used alone. It achieves the highest single-modality accuracy in modulation recognition (50.8%) and leads on every metric in anti-jamming decision-making (0.363 average score). This is physically expected, as IQ data represents the lossless, raw complex-valued information of the signal, containing all amplitude and phase variations. It carries the strongest discriminative cues, forming a robust foundation for downstream tasks.

Finding 2: Complementarity vs. Redundancy. The combination of modalities highlights clear synergistic and redundant relationships. For instance, in modulation recognition, Constellation+IQ (64.2%) significantly outperforms either modality used alone (43.4% for Constellation and 50.8% for IQ).
This demonstrates that while IQ provides temporal details, the Constellation diagram explicitly visualizes the modulation state space (symbol grid), transforming statistical distributions into distinct geometric visual patterns that are easier for the vision encoder to capture. Conversely, FFT+STFT (37.8%) performs poorly, indicating substantial redundancy. Since STFT already encapsulates spectral information over time, the global spectral statistics provided by FFT offer limited additional entropy compared to the time-frequency view. This pattern holds in the decision-making task, where combinations including both IQ and other modalities like STFT or Constellation yield top results within their cardinality groups.

| Constellation | FFT | STFT | IQ | Accuracy |
|---|---|---|---|---|
| ✓ | × | × | × | 43.4 |
| × | ✓ | × | × | 35.0 |
| × | × | ✓ | × | 30.2 |
| × | × | × | ✓ | 50.8 |
| ✓ | ✓ | × | × | 54.8 |
| ✓ | × | ✓ | × | 58.4 |
| ✓ | × | × | ✓ | 64.2 |
| × | ✓ | ✓ | × | 37.8 |
| × | ✓ | × | ✓ | 55.6 |
| × | × | ✓ | ✓ | 57.0 |
| ✓ | ✓ | ✓ | × | 59.2 |
| ✓ | ✓ | × | ✓ | 68.8 |
| ✓ | × | ✓ | ✓ | 70.0 |
| × | ✓ | ✓ | ✓ | 61.0 |
| ✓ | ✓ | ✓ | ✓ | **72.0** |

Table 8. MR accuracy (%) for different modality combinations. The optimal result is highlighted in bold.

Finding 3: The Efficiency-Accuracy Sweet Spot. While using all four modalities consistently yields the best performance on both tasks (72.0% and 0.4860), a clear point of diminishing returns emerges. The three-modality combination of Constellation+STFT+IQ achieves 70.0% accuracy in recognition and an average score of 0.414 in decision-making. These results represent 97.2% and 85.1% of the full four-modality performance, respectively. This makes the Constellation+STFT+IQ combination a highly effective trade-off. It successfully covers the three critical physical dimensions of EM signals: temporal dynamics (IQ), spectral footprint (STFT), and modulation topology (Constellation), rendering the marginal gain from adding the final FFT modality relatively small.

In summary, our cross-task ablation provides clear guidance for modality selection.
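The ablation protocol of this subsection (all non-empty modality subsets, with unselected modalities replaced by zero-filled placeholders) can be sketched as follows. This is a minimal illustration rather than the experimental code: representing each image as a flat 8-bit buffer, the 384 × 384 × 3 size, and the names `modality_subsets` and `mask_inputs` are assumptions, and a real pipeline would more likely operate on image tensors.

```python
from itertools import combinations

MODALITIES = ("Constellation", "FFT", "STFT", "IQ")


def modality_subsets():
    """Yield every non-empty subset of the four modalities (15 in total)."""
    for k in range(1, len(MODALITIES) + 1):
        yield from combinations(MODALITIES, k)


def mask_inputs(images, selected):
    """Keep selected modalities; swap the others for same-size zero buffers."""
    return {
        name: buf if name in selected else bytes(len(buf))
        for name, buf in images.items()
    }


# Hypothetical stand-ins: each image is a flat 8-bit RGB buffer (384 x 384 x 3).
images = {name: bytes([i + 1]) * (384 * 384 * 3) for i, name in enumerate(MODALITIES)}

subsets = list(modality_subsets())
masked = mask_inputs(images, selected=("Constellation", "STFT", "IQ"))
```

Because every placeholder has the same size as the image it replaces, the model input keeps a fixed layout across all 15 configurations, which is what allows the rows of Tables 8 and 9 to be compared directly.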
IQ is indispensable as the information source. Constellation and STFT provide critical, complementary perspectives that explicitly visualize features hidden in the time domain. FFT, while beneficial, often overlaps with STFT and offers the least unique information. For resource-constrained applications, the Constellation+STFT+IQ trio is an optimal choice, while the full four-modality input should be used when maximizing performance is the primary goal.

Figure 6. Zero-shot modulation recognition accuracy of PReD on the OOD RadioML2016.04c dataset across SNR levels. The overall accuracy is 66.0%.

C.3. Out-of-Domain Generalization for Modulation Recognition

To further assess the generalization capabilities of PReD beyond its training distribution, we conducted a challenging out-of-domain (OOD) evaluation. While PReD was trained for modulation recognition using only the RadioML2016.10a dataset, we evaluated its zero-shot performance on the unseen RadioML2016.04c dataset, which features different channel conditions and signal characteristics. We constructed a multiple-choice question benchmark by sampling 10 signals for each modulation type at every available Signal-to-Noise Ratio (SNR), resulting in a total of 2,200 evaluation samples.

On this challenging OOD benchmark, PReD achieved a strong overall accuracy of 66.0% (1452/2200), demonstrating robust generalization to unseen signal environments without any fine-tuning. To gain deeper insights into the model's robustness to noise, we analyzed its performance across different SNR levels, as detailed in Figure 6. The results reveal a clear and physically expected trend: performance is modest in the low-SNR regime (< −10 dB), where signals are heavily corrupted by noise; it then improves dramatically in the mid-SNR range (−10 dB to 10 dB), and finally stabilizes at a high level in the high-SNR range (> 10 dB).
This behavior, which mirrors the performance curves of specialized recognition models, confirms that PReD has learned to extract meaningful physical features from the signals rather than merely overfitting to the source dataset. This validates its potential as a robust foundation model for electromagnetic signal processing.

| Input Modality | BLEU4 | ROUGE | METEOR | CIDEr | Average |
|---|---|---|---|---|---|
| Constellation | 0.069 | 0.329 | 0.227 | 0.405 | 0.258 |
| FFT | 0.056 | 0.305 | 0.194 | 0.381 | 0.234 |
| STFT | 0.094 | 0.374 | 0.267 | 0.447 | 0.2955 |
| IQ | 0.149 | 0.438 | 0.337 | 0.530 | 0.363 |
| Constellation+FFT | 0.070 | 0.331 | 0.227 | 0.409 | 0.259 |
| Constellation+STFT | 0.104 | 0.384 | 0.279 | 0.465 | 0.308 |
| Constellation+IQ | 0.168 | 0.464 | 0.359 | 0.549 | 0.385 |
| FFT+STFT | 0.095 | 0.373 | 0.267 | 0.449 | 0.296 |
| FFT+IQ | 0.153 | 0.441 | 0.341 | 0.534 | 0.367 |
| STFT+IQ | 0.185 | 0.488 | 0.381 | 0.567 | 0.405 |
| Constellation+FFT+STFT | 0.102 | 0.379 | 0.276 | 0.464 | 0.305 |
| Constellation+FFT+IQ | 0.168 | 0.463 | 0.357 | 0.548 | 0.384 |
| Constellation+STFT+IQ | 0.193 | 0.499 | 0.388 | 0.575 | 0.414 |
| FFT+STFT+IQ | 0.185 | 0.489 | 0.381 | 0.567 | 0.405 |
| Constellation+FFT+STFT+IQ | 0.253 | 0.559 | 0.515 | 0.617 | 0.486 |

Table 9. Ablation on input modalities for the anti-jamming strategy decision-making task.

C.4. Performance Analysis under Varying SNR

To provide a more granular view of PReD's robustness, we analyze the performance of the EM Recognition tasks under varying SNRs. Within this category, we present the results for MR and PR, as their underlying benchmarks contain explicit SNR labels suitable for this analysis. The EI task is excluded from this analysis because its dataset consists of real-world captured signals that lack ground-truth SNR annotations.

As illustrated in Figure 7, both tasks exhibit a remarkably consistent and physically expected trend: model accuracy degrades gracefully as the SNR decreases. This demonstrates that PReD has learned to leverage signal quality in a meaningful way, reinforcing its capability as a robust foundation model for the EM domain.
The performance drop is most pronounced in the negative SNR regime, where the signal power is weaker than the noise power, yet the model retains non-trivial recognition capabilities.

Figure 7. Performance comparison on MR and PR task across varying SNR levels. Both tasks show a similar graceful degradation, highlighting the model's consistent and robust behavior against noise.

D. Visual Analysis of PReD-Bench and Benchmark Results

This section offers a deeper, more intuitive understanding of our benchmark and results through both visual analysis and qualitative examples. First, we present two complementary visualizations: a hierarchical chart to break down the composition of PReD-Bench and a radar chart to summarize the comparative model performances discussed in the main text. Following this quantitative overview, we provide a series of representative qualitative examples to concretely illustrate PReD's capabilities across the full range of EM tasks.

D.1. Hierarchical Composition of PReD-Bench

Figure 8. Hierarchical composition of the PReD-Bench, showing the distribution across three core dimensions, six specific tasks, and two primary question types (OpenQA and MCQA).

Figure 8 offers a hierarchical visualization of the PReD-Bench's composition. The chart is structured into three concentric rings representing different levels of granularity:

• Innermost Ring (Dimensions): This ring shows the three core capabilities evaluated by the benchmark: Perception (59.1%), Recognition (17.2%), and Decision-Making (23.6%).
• Middle Ring (Tasks): This ring breaks down the dimensions into six specific tasks. It details the sample distribution among SPE (35.5%), SSD (23.6%), MR (5.9%), PR (5.9%), EI (5.4%), and AJSD (23.6%).
• Outermost Ring (Question Types): The final ring specifies the question format for each task.
It shows that Perception tasks (SPE, SSD) are designed with a mix of OpenQA and MCQA formats, while the majority of Recognition tasks (MR, PR, EI) are exclusively MCQA. The Decision-Making task (AJSD) is designed as a pure OpenQA generative task.

This layered structure illustrates the benchmark's comprehensive design, ensuring a balanced evaluation across diverse EM tasks and reasoning formats.

D.2. Comparative Performance Visualization on EM Tasks

The log-scale radar chart in Fig. 9 summarizes the cross-task average performance across five representative EM tasks. PReD consistently forms the outer envelope and yields the largest enclosed area, highlighting its dominant advantage throughout the full EM pipeline.

In contrast, general-purpose MLLMs exhibit a clear performance gap between foundational and advanced tasks. They maintain moderate capabilities on foundational tasks involving perception (SPE/SSD) and recognition (MR/PR). However, their performance collapses on the advanced, open-ended generative task of decision-making (AJSD). This is particularly evident for models like GPT-5, Gemini-2.5-Pro, and Claude-Sonnet-4, all of which show a severe contraction toward the AJSD axis.

This collective pattern aligns with the tabulated results and demonstrates the critical limitation of general-purpose MLLMs when transitioning from pattern recognition to applied, open-ended reasoning. This further validates the effectiveness of our domain-specific modeling and multi-stage curriculum in building true end-to-end intelligence.

Figure 9. Log-scale radar plot of averaged performance over five EM tasks. PReD demonstrates superior performance across all evaluated tasks.

D.3. Qualitative Examples on PReD-Bench Tasks

To provide a qualitative illustration of PReD's capabilities, we present representative examples for each of the six core tasks defined in the PReD-Bench.
These examples, shown in Figure 10, showcase the model's ability to handle a diverse range of inputs and generate accurate, context-aware responses across the full spectrum of EM intelligence: from foundational perception and recognition to complex decision-making. Note that for the Modulation Recognition, Protocol Recognition, and Emitter Identification tasks, open-ended answers can express the same meaning in different ways; we therefore standardized the evaluation by removing the open-ended response format for these tasks from our test set, ensuring consistent and objective assessment.

Each subfigure presents a user query, which may include single or multiple signal images, followed by the detailed, human-like response generated by PReD. These examples complement the quantitative results in the main paper by offering a direct view into the model's reasoning and generative abilities.

(a) Task 1: Signal Segment Detection (SSD)

OpenQA*
Query: Please analyze this time-domain amplitude plot. First, determine if a signal exists. You must answer yes or no and wrap the answer with and . If yes, specify the start time using ... and the end time using ... (in microseconds)
Response: yes. Time range: from 2.6 to 21.8 microseconds.

MCQA
Query: Please review the signal diagram and choose the option that best corresponds to the signal's time boundaries. Wrap your answer with and :
A. Signal present with start: 2.6±1, end: 21.8±1 microseconds
B. Signal present with start: 19.7±1, end: 30.8±1 microseconds
C. No signal is present in the plot
D. Signal present with start: 11.7±1, end: 32.7±1 microseconds
E. Unable to answer
Response: A

(b) Task 2: Signal Parameter Estimation (SPE)

OpenQA*
Query: Can the basic signal parameters be determined from this time-domain plot? List td, pw, pri, and np using , , , tags without any additional content.
Response: Signal parameters: Time delay 6.3 us, pulse width 10.7 us, repetition interval 20.0 us, pulse count 4.

MCQA
Query: Looking at this waveform, can you help me read the pulse repetition interval? Choose the correct option from the choices below. Only answer with ABCDE options, and wrap your answer in tags without explanations.
A. 20.1±1 us
B. 26.5±1 us
C. 11.2±1 us
D. 63.6±1 us
E. Unable to answer
Response: A

(c) Task 3: Modulation Recognition (MR)

OpenQA*
Query: \n\n\n Please determine the modulation type of the signal based on the following four images (IQ constellation diagram, FFT spectrum diagram, STFT time-frequency diagram, and IQ component diagram). Output only the final result, and place it within ... .
Response: QAM16

MCQA
Query: \n\n\n Determine the modulation type of the signal from the following four images (IQ constellation diagram, FFT spectrum diagram, STFT time-frequency diagram, and IQ component diagram). Which of the following is correct?
A. GFSK
B. CPFSK
C. QAM16
D. WBFM
E. unable to answer
Please answer only with the letter corresponding to the correct answer, in the format: ... .
Response: C

(d) Task 4: Protocol Recognition (PR)

OpenQA*
Query: \n\n\n Please observe the provided IQ constellation diagram, FFT spectrum diagram, STFT time-frequency diagram, and IQ component diagram. Based on these images, identify the protocol type used. Output only the protocol result in the format: ... .
Response: SHORT-RANGE

MCQA
Query: \n\n\n Determine the protocol type of the signal from the following four images (IQ constellation diagram, FFT spectrum diagram, STFT time-frequency diagram, and IQ component diagram). Which of the following is correct?
A. AIRBORNE-DETECTION
B. AM RADIO
C. SHORT-RANGE
D. GROUND MAPPING
E. Unable to answer
Please answer only with the letter corresponding to the correct answer, in the format: ... .
Response: C

(e) Task 5: Emitter Identification (EI)

OpenQA*
Query: \n\n\n These signal diagrams come from one emitter. Please identify which emitter it is. Output format: ... .
Response: USRP_X310_15

MCQA
Query: \n\n\n Based on the four signal images (IQ constellation, FFT spectrum, STFT spectrogram, and IQ components), which of the following emitters transmitted this signal?
A. USRP_X310_4
B. USRP_X310_15
C. USRP_X310_1
D. USRP_X310_14
E. Unable to answer
Please answer only with the letter corresponding to the correct answer, in the format: ... .
Response: B

(f) Task 6: Anti-Jamming Strategy Decision-making (AJSD)

OpenQA#
Query: \n\n\n\nAnalyze the signal plots provided. What countermeasures should be implemented to ensure reliable communication in the presence of this interference?
Response: Jamming_type is : Widecomb Interference Resistance Strategies: - Time Domain Interference Resistance Strategy: Implement randomized burst sending schemes to disrupt interference synchronization,

Figure 10. Qualitative examples of PReD's performance across the six core tasks of PReD-Bench. Each panel showcases a different capability, from perception (a, b), to recognition (c, d, e), and finally to complex decision-making (f).

References

[1] Haotian Liu, Chunyuan Li, Qingyang Wu, and Yong Jae Lee. Visual instruction tuning. volume 36, pages 34892–34916, 2023.
[2] Junnan Li, Dongxu Li, Silvio Savarese, and Steven Hoi. BLIP-2: Bootstrapping language-image pre-training with frozen image encoders and large language models. volume 202, pages 19730–19742, 2023.
[3] OpenAI. GPT-4V(ision) system card, 2023. System Card.
[4] Rohit Girdhar, Alaaeldin El-Nouby, Zhuang Liu, Mannat Singh, Kalyan Vasudev Alwala, Armand Joulin, and Ishan Misra. ImageBind: One embedding space to bind them all. In CVPR, pages 15180–15190, 2023.
[5] Jiawei Shao, Jingwen Tong, Qiong Wu, Wei Guo, Zijian Li, Zehong Lin, and Jun Zhang. WirelessLLM: Empowering large language models towards wireless intelligence. arXiv preprint arXiv:2405.17053, 2024.
[6] Shuai Chen, Yong Zu, Zhixi Feng, Shuyuan Yang, Mengchang Li, Yue Ma, Jun Liu, Qiukai Pan, Xinlei Zhang, and Changjun Sun. RadioLLM: Introducing large language model into cognitive radio via hybrid prompt and token reprogrammings. arXiv preprint arXiv:2501.17888, 2025.
[7] Haolin Zheng, Ning Gao, Donghong Cai, Shi Jin, and Michail Matthaiou. UAV individual identification via distilled RF fingerprints-based LLM in ISAC networks. IEEE Wireless Communications Letters, pages 1–1, 2025.
[8] Hongye Quan, Wanli Ni, Tong Zhang, Xiangyu Ye, Ziyi Xie, Shuai Wang, Yuanwei Liu, and Hui Song. Large language model agents for radio map generation and wireless network planning. IEEE Netw. Lett., 7(3):166–170, 2025.
[9] Chenxu Wang, Xumiao Zhang, Runwei Lu, Xianshang Lin, Xuan Zeng, Xinlei Zhang, Zhe An, Gongwei Wu, Jiaqi Gao, Chen Tian, Guihai Chen, Guyue Liu, Yuhong Liao, Tao Lin, Dennis Cai, and Ennan Zhai. Towards LLM-based failure localization in production-scale networks. In Proceedings of the ACM SIGCOMM 2025 Conference, SIGCOMM 2025, São Francisco Convent, Coimbra, Portugal, September 8-11, 2025, pages 496–511, 2025.
[10] Uma Maheswari Natarajan, Raghuram Bharadwaj Diddigi, and Jyotsna Bapat. RAG-inspired intent-based solution for intelligent autonomous networks. In 17th International Conference on COMmunication Systems and NETworks, COMSNETS 2025, Bengaluru, India, January 6-10, 2025, pages 413–421. IEEE, 2025.
[11] Ziheng Qin, Yuheng Ji, Renshuai Tao, Yuxuan Tian, Yuyang Liu, Yipu Wang, and Xiaolong Zheng. Scaling up AI-generated image detection via generator-aware prototypes. arXiv preprint arXiv:2512.12982, 2025.
[12] Timothy J. O'Shea, Johnathan Corgan, and T. Charles Clancy. Convolutional radio modulation recognition networks. pages 213–226, 2016.
[13] Timothy James O'Shea, Tamoghna Roy, and T. Charles Clancy. Over-the-air deep learning based radio signal classification. IEEE Journal of Selected Topics in Signal Processing, 12(1):168–179, 2018.
[14] Kürşat Tekbıyık, Ali Rıza Ekti, Ali Görçin, Güneş Karabulut Kurt, and Cihat Keçeci. Robust and fast automatic modulation classification with CNN under multipath fading channels. pages 1–6, 2020.
[15] Timothy J. O'Shea, Johnathan Corgan, and T. Charles Clancy. Unsupervised representation learning of structured radio communication signals. pages 1–5, 2016.
[16] Timothy J. O'Shea, Latha Pemula, Dhruv Batra, and T. Charles Clancy. Radio transformer networks: Attention models for learning to synchronize in wireless systems. pages 662–666, 2016.
[17] Timothy J. O'Shea, Seth Hitefield, and Johnathan Corgan. End-to-end radio traffic sequence recognition with recurrent neural networks. pages 277–281, 2016.
[18] Yuheng Ji, Huajie Tan, Jiayu Shi, Xiaoshuai Hao, Yuan Zhang, Hengyuan Zhang, Pengwei Wang, Mengdi Zhao, Yao Mu, Pengju An, et al. RoboBrain: A unified brain model for robotic manipulation from abstract to concrete. In Proceedings of the Computer Vision and Pattern Recognition Conference, pages 1724–1734, 2025.
[19] Huajie Tan, Yuheng Ji, Xiaoshuai Hao, Xiansheng Chen, Pengwei Wang, Zhongyuan Wang, and Shanghang Zhang. Reason-RFT: Reinforcement fine-tuning for visual reasoning of vision language models. In The Thirty-ninth Annual Conference on Neural Information Processing Systems, 2025.
[20] Deyao Zhu, Jun Chen, Xiaoqian Shen, Xiang Li, and Mohamed Elhoseiny. MiniGPT-4: Enhancing vision-language understanding with advanced large language models. arXiv preprint, 2023.
[21] Jinze Bai, Shuai Bai, Shusheng Yang, Shijie Wang, Sinan Tan, Peng Wang, Junyang Lin, Chang Zhou, and Jingren Zhou. Qwen-VL: A versatile vision-language model for understanding, localization, text reading, and beyond, 2023.
[22] Jean-Baptiste Alayrac, Jeff Donahue, Pauline Luc, Antoine Miech, Iain Barr, Yana Hasson, Karel Lenc, A. Mensch, Katie Millican, Malcolm Reynolds, Roman Ring, Eliza Rutherford, Serkan Cabi, Tengda Han, Zhitao Gong, Sina Samangooei, Marianne Monteiro, Jacob Menick, Sebastian Borgeaud, Andy Brock, Aida Nematzadeh, Sahand Sharifzadeh, Mikolaj Binkowski, Ricardo Barreira, Oriol Vinyals, Andrew Zisserman, and Karen Simonyan. Flamingo: A visual language model for few-shot learning. arXiv preprint, 2022.
[23] Zhiliang Peng, Wenhui Wang, Li Dong, Yaru Hao, Shaohan Huang, Shuming Ma, and Furu Wei. Kosmos-2: Grounding multimodal large language models to the world. arXiv preprint, 2023.
[24] Wenhao Wu, Huanjin Yao, Mengxi Zhang, Yuxin Song, Wanli Ouyang, and Jingdong Wang. GPT4Vis: What can GPT-4 do for zero-shot visual recognition? arXiv preprint arXiv:2311.15732, 2024.
[25] Yipu Wang, Yuheng Ji, Yuyang Liu, Enshen Zhou, Ziqiang Yang, Yuxuan Tian, Ziheng Qin, Yue Liu, Huajie Tan, Cheng Chi, et al. Towards cross-view point correspondence in vision-language models. arXiv preprint arXiv:2512.04686, 2025.
[26] Yuheng Ji, Yipu Wang, Yuyang Liu, Xiaoshuai Hao, Yue Liu, Yuting Zhao, Huaihai Lyu, and Xiaolong Zheng. Visualtrans: A benchmark for real-world visual transformation reasoning. arXiv preprint arXiv:2508.04043, 2025.
[27] Nada Abdel Khalek, Deemah H. Tashman, and Walaa Hamouda. Advances in machine learning-driven cognitive radio for wireless networks: A survey. IEEE Communications Surveys & Tutorials, 26(2):1201–1237, 2024.
[28] Hengtao He, Shi Jin, Chao-Kai Wen, Feifei Gao, Geoffrey Ye Li, and Zongben Xu. Model-driven deep learning for physical layer communications. IEEE Wireless Communications, 26(5):77–83, 2019.
[29] Yuheng Ji, Huajie Tan, Cheng Chi, Yijie Xu, Yuting Zhao, Enshen Zhou, Huaihai Lyu, Pengwei Wang, Zhongyuan Wang, Shanghang Zhang, et al. Mathsticks: A benchmark for visual symbolic compositional reasoning with matchstick puzzles. arXiv preprint arXiv:2510.00483, 2025.
[30] Yuheng Ji, Yue Liu, Zhicheng Zhang, Zhao Zhang, Yuting Zhao, Xiaoshuai Hao, Gang Zhou, Xingwei Zhang, and Xiaolong Zheng. Enhancing adversarial robustness of vision-language models through low-rank adaptation. In Proceedings of the 2025 International Conference on Multimedia Retrieval, pages 550–559, 2025.
[31] Jason Wei, Maarten Bosma, Vincent Y. Zhao, Kelvin Guu, Adams Wei Yu, Brian Lester, Nan Du, Andrew M. Dai, and Quoc V. Le. Finetuned language models are zero-shot learners. In The Tenth International Conference on Learning Representations, ICLR 2022, Virtual Event, April 25-29, 2022. OpenReview.net, 2022.
[32] Zixian Huang, Ao Wu, Jiaying Zhou, Yu Gu, Yue Zhao, and Gong Cheng. Clues before answers: Generation-enhanced multiple-choice QA. In Proceedings of the 2022 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, NAACL 2022, Seattle, WA, United States, July 10-15, 2022, pages 3272–3287. Association for Computational Linguistics, 2022.
[33] Todor Mihaylov, Peter Clark, Tushar Khot, and Ashish Sabharwal. Can a suit of armor conduct electricity? A new dataset for open book question answering. In Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing, Brussels, Belgium, October 31 - November 4, 2018, pages 2381–2391. Association for Computational Linguistics, 2018.
[34] Yuheng Ji, Yuyang Liu, Huajie Tan, Xuchuan Huang, Fanding Huang, Yijie Xu, Cheng Chi, Yuting Zhao, Huaihai Lyu, Peterson Co, et al. PRM-as-a-judge: A dense evaluation paradigm for fine-grained robotic auditing. arXiv preprint arXiv:2603.21669, 2026.
[35] Haotian Liu, Chunyuan Li, Qingyang Wu, and Yong Jae Lee. Visual instruction tuning. In Advances in Neural Information Processing Systems, volume 36, pages 34892–34916, 2023.
[36] Qwen Team. Qwen3 technical report, 2025.
[37] Alec Radford, Jong Wook Kim, Chris Hallacy, Aditya Ramesh, Gabriel Goh, Sandhini Agarwal, Girish Sastry, Amanda Askell, Pamela Mishkin, Jack Clark, Gretchen Krueger, and Ilya Sutskever. Learning transferable visual models from natural language supervision. In Proceedings of the 38th International Conference on Machine Learning, ICML 2021, 18-24 July 2021, Virtual Event, volume 139 of Proceedings of Machine Learning Research, pages 8748–8763. PMLR, 2021.
[38] Qwen Team. Qwen2.5-VL, January 2025.
[39] Seed Team. Seed1.5-VL technical report, 2025.
[40] Anthropic. Claude-3.5, November 2024. Accessed: 2024-11-02.
[41] OpenAI. GPT-5, 2025.
[42] G. Gemini team, 2025.
[43] Jinze Bai, Shuai Bai, Shusheng Yang, Shijie Wang, Sinan Tan, Peng Wang, Junyang Lin, Chang Zhou, and Jingren Zhou. Qwen-VL: A versatile vision-language model for understanding, localization, text reading, and beyond. arXiv preprint arXiv:2308.12966, 2023.
[44] Feng Li, Renrui Zhang, Hao Zhang, Yuanhan Zhang, Bo Li, Wei Li, Zejun Ma, and Chunyuan Li. LLaVA-NeXT-Interleave: Tackling multi-image, video, and 3D in large multimodal models, 2024.
[45] Haoyu Lu, Wen Liu, Bo Zhang, Bingxuan Wang, Kai Dong, Bo Liu, Jingxiang Sun, Tongzheng Ren, Zhuoshu Li, Yaofeng Sun, Chengqi Deng, Hanwei Xu, Zhenda Xie, and Chong Ruan. DeepSeek-VL: Towards real-world vision-language understanding, 2024.
[46] Yuan Yao, Tianyu Yu, Ao Zhang, Chongyi Wang, Junbo Cui, Hongji Zhu, Tianchi Cai, Haoyu Li, Weilin Zhao, Zhihui He, et al. MiniCPM-V: A GPT-4V level MLLM on your phone. arXiv preprint arXiv:2408.01800, 2024.
[47] Lin Chen, Jinsong Li, Xiaoyi Dong, Pan Zhang, Conghui He, Jiaqi Wang, Feng Zhao, and Dahua Lin. ShareGPT4V: Improving large multi-modal models with better captions, 2023.
[48] Kishore Papineni, Salim Roukos, Todd Ward, and Wei-Jing Zhu. Bleu: a method for automatic evaluation of machine translation. In Proceedings of the 40th Annual Meeting of the Association for Computational Linguistics, pages 311–318, Philadelphia, USA, 2002. Association for Computational Linguistics.
[49] Chin-Yew Lin. Rouge: A package for automatic evaluation of summaries. In Text Summarization Branches Out, pages 74–81, Barcelona, Spain, 2004. Association for Computational Linguistics.
[50] Satanjeev Banerjee and Alon Lavie. Meteor: An automatic metric for MT evaluation with improved correlation with human judgments. In Proceedings of the ACL Workshop on Intrinsic and Extrinsic Evaluation Measures for Machine Translation and/or Summarization, pages 65–72, Ann Arbor, USA, 2005. Association for Computational Linguistics.
[51] Ramakrishna Vedantam, C. Lawrence Zitnick, and Devi Parikh. CIDEr: Consensus-based image description evaluation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pages 4566–4575, Boston, USA, 2015. IEEE.
