Q-DIVER: Integrated Quantum Transfer Learning and Differentiable Quantum Architecture Search with EEG Data


Authors: Junghoon Justin Park, Yeonghyeon Park, Jiook Cha

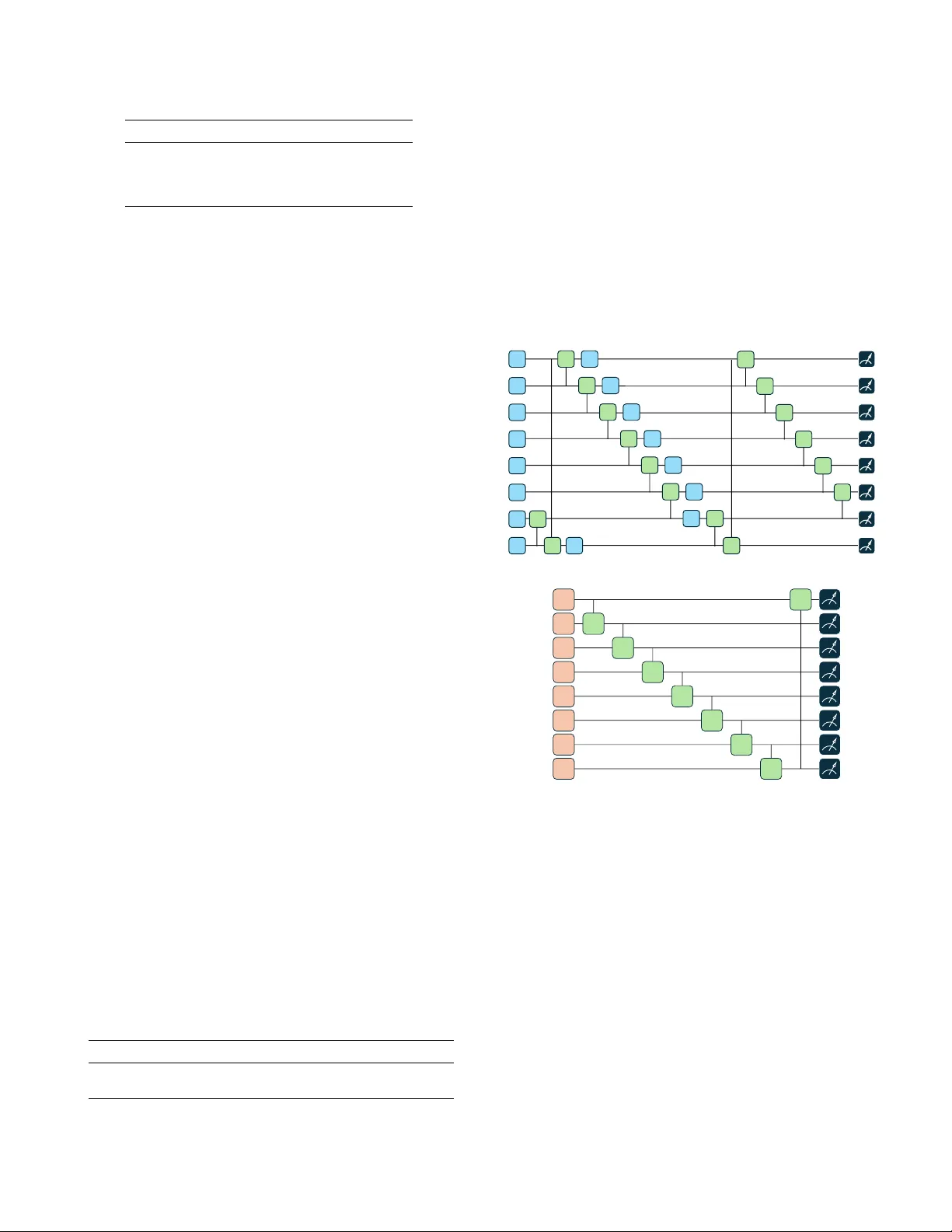

Junghoon Justin Park*, Seoul National University, Seoul, Korea (utopie9090@snu.ac.kr)
Yeonghyeon Park*, Seoul National University, Seoul, Korea (mandy1002@snu.ac.kr)
Jiook Cha, Seoul National University, Seoul, Korea (connectome@snu.ac.kr)
* These authors contributed equally.

Abstract: Integrating quantum circuits into deep learning pipelines remains challenging because circuit design is largely heuristic. We propose Q-DIVER, a hybrid framework that combines a large-scale pretrained EEG encoder (DIVER-1) with a differentiable quantum classifier. Unlike fixed-ansatz approaches, we employ Differentiable Quantum Architecture Search to autonomously discover task-optimal circuit topologies during end-to-end fine-tuning. On the PhysioNet Motor Imagery dataset, our quantum classifier achieves predictive performance comparable to that of classical multi-layer perceptrons (Test F1: 63.49%) while using approximately 50× fewer task-specific head parameters (2.10M vs. 105.02M). These results support quantum transfer learning as a parameter-efficient strategy for high-dimensional biological signal processing.

Index Terms: Quantum Machine Learning, Quantum Transfer Learning, Differentiable Quantum Architecture Search, EEG Classification, Quantum Time-series Transformer.

I. INTRODUCTION

Recent progress in quantum machine learning has demonstrated that quantum circuits can be integrated into classical deep learning pipelines in various ways. These integration-focused approaches have been instrumental in establishing the feasibility of classical–quantum hybrid models. However, they leave a more fundamental question open: at this stage, which functional components of a classical learning pipeline should quantum models meaningfully replace rather than merely augment?
From a practical standpoint, classical machine learning pipelines are already highly mature, while near-term quantum devices operate under significant constraints. As a result, replacing entire classical systems with quantum models, or introducing quantum components without a clearly defined functional role, is neither necessary nor well motivated. These considerations call for a more deliberate, structurally informed approach to integrating quantum models, in which their functional role within the classical pipeline is explicitly specified rather than implicitly assumed. In particular, they motivate a systematic exploration of where and how quantum components should be incorporated, rather than reliance on ad hoc circuit choices.

This perspective aligns with a long-standing view in classical machine learning that learning systems can be decomposed into representation learning and task-specific decision making [1], [2]. More recently, the emergence of foundation models has elevated representation reuse into a central design paradigm, explicitly treating pretrained representations as reusable assets across downstream tasks [3]. Technically, this paradigm is enabled by transfer learning, where large-scale pretraining decouples representation learning from task-specific decision making.

Among the many application domains that have adopted this paradigm, electrophysiological signal analysis has used large-scale self-supervised pretraining to learn transferable spatiotemporal representations [4], [5]. However, EEG signals are non-stationary and exhibit substantial inter- and intra-subject variability, leading to pronounced distribution shifts across subjects and sessions that challenge downstream generalization [6], [7].
Recent studies have suggested that downstream readout mechanisms can play an important role in EEG transfer learning, and that lightweight or generic classifiers may not fully exploit pretrained representations [8], [9]. Nevertheless, the readout stage is typically treated as part of a broader optimization pipeline rather than examined as a primary object of analysis. In this work, we investigate the downstream readout stage as a standalone modeling component and explore quantum models as alternative readout mechanisms acting on pretrained classical representations. Motivated by prior studies on quantum time-series transformers, we repurpose this architecture as a quantum readout module. To avoid attributing potential performance gains to arbitrary circuit choices, we adopt differentiable quantum architecture search (DiffQAS) as a systematic optimization framework, enabling controlled analysis of the structural role of the quantum readout.

II. BACKGROUND

A. Quantum Transfer Learning

Transfer learning considers settings in which the source and target domains and/or tasks differ [10]. The paradigm has been extended to hybrid classical–quantum architectures [11], where transfer is defined by the reuse of representations across stages, independent of whether the source or target models are classical or quantum. Among the possible settings, the classical–quantum configuration, in which a classical backbone provides representations to a quantum readout, has received particular attention in the Noisy Intermediate-Scale Quantum (NISQ) era because of its practicality [11].

Recent quantum transfer learning studies explore different ways of realizing transfer within this hybrid framework. Tseng et al. [12] formulate transfer learning directly at the level of the variational quantum circuit (VQC), modeling domain adaptation as a one-step algebraic estimation of parameter shifts under a fixed circuit and measurement.
In contrast, many hybrid approaches freeze a deep classical network pretrained on large-scale datasets (e.g., ImageNet) as a feature extractor and train a VQC as a task-specific classification head on the target domain [11], [13].

B. DIVER-1

Recent advances in electrophysiology foundation models show that large-scale pretraining on heterogeneous neural recordings yields representations that generalize across subjects, recording setups, and downstream tasks. DIVER-1 [14] exemplifies this through its scale, training strategy, and architectural design.

DIVER-1 is pretrained on a diverse corpus of EEG and iEEG data from over 17,700 subjects, spanning substantial inter-subject and cross-modality variability [14]. Its data-constrained scaling analysis shows that representation quality depends not only on parameter count but also on training duration and data diversity, with smaller models trained longer sometimes outperforming larger models trained briefly.

Prior work suggests that transfer gains in data-limited scientific domains may reflect overparameterization or training dynamics rather than genuine feature reuse [15]. The scaling behavior observed in DIVER-1 supports the view that transferable electrophysiological representations cannot be attributed to model size alone.

Architecturally, DIVER-1 incorporates permutation equivariance and support for heterogeneous sensor configurations to promote cross-subject and cross-setup robustness [14]. These choices reduce sensitivity to electrode ordering and recording systems, making DIVER-1 a suitable backbone for studying how downstream prediction heads leverage pretrained electrophysiological representations.

C. Quantum Time-series Transformer

To capture long-range temporal dependencies within the high-dimensional EEG embeddings while minimizing parameter overhead, we employ the Quantum Time-series Transformer (QTSTransformer) [16].
Unlike classical transformers, whose attention mechanism scales quadratically ($O(n^2)$) in the sequence length $n$, the QTSTransformer leverages quantum mechanical properties to process sequence data with polylogarithmic complexity ($O(\mathrm{polylog}(n))$). The model's architecture is defined by four operational stages.

1) Unitary Temporal Embedding: The first stage maps classical temporal data into the quantum Hilbert space. Let $X = \{x_0, \dots, x_{n-1}\}$ denote the sequence of latent feature vectors extracted by the classical backbone. Each feature vector $x_j$ at time step $j$ parameterizes its own VQC, which defines a unitary transformation $U_j(\theta_j)$. In this representation, temporal information is encoded directly into the sequence of quantum gate operations.

2) Time Sequence Mixing via LCU: To model temporal correlations, we employ the Linear Combination of Unitaries (LCU) primitive, which serves as a quantum-native attention mechanism. Instead of explicitly computing a pairwise attention matrix, the model prepares a control register in a superposition state defined by coefficients $\{a_j\}$. This control state directs the simultaneous application of the sequence unitaries $\{U_0, \dots, U_{n-1}\}$ onto a target register. The resulting operation implements the weighted superposition operator

$$M = \sum_{j=0}^{n-1} e^{i\gamma_j}\, |a_j|^2\, U_j, \tag{1}$$

where $e^{i\gamma_j}$ is the phase and $|a_j|^2$ the magnitude of the attention weight for the $j$-th time step. This allows the model to "attend" to multiple time steps simultaneously through quantum interference.

3) Non-linearity via QSVT: To introduce the non-linearity required for complex decision boundaries, we use the Quantum Singular Value Transformation (QSVT).
Whereas classical transformers rely on activation functions such as Softmax or GELU, the QTSTransformer applies a polynomial transformation $P_c$ to the singular values of the mixed operator $M$. The transformed operator takes the form

$$P_c(M) = c_d M^d + c_{d-1} M^{d-1} + \cdots + c_1 M + c_0 I, \tag{2}$$

where $\{c_0, \dots, c_d\}$ are trainable polynomial coefficients and $d$ is the degree of the polynomial. This transformation allows the model to capture higher-order interactions between time steps without collapsing the quantum state.

4) Readout and Classical Processing: In the final stage, the transformed quantum state is measured to extract classical features as Pauli expectation values. These values are then processed by a compact classical feed-forward network to produce the final downstream prediction (e.g., the motor imagery class).

This architecture serves as the decision head of our hybrid framework. By processing the pretrained features through this quantum-native attention mechanism, we aim to obtain an expressive readout with substantially fewer trainable parameters than a comparable classical multi-layer perceptron (MLP).

D. Differentiable Quantum Architecture Search

The performance of variational quantum circuits can be highly sensitive to architectural design and initialization, making it difficult to disentangle intrinsic quantum limitations from incidental circuit choices [17], [18]. To enable principled evaluation and task-specific adaptation, we adopt a differentiable quantum architecture search (DiffQAS) framework [19], which relaxes discrete circuit design into a continuous formulation that can be optimized end to end.

1) Search Space Construction: We construct a modular search space in which a quantum circuit $C$ consists of $L$ sequential units $S_1, \dots, S_L$. Each unit $S_l$ is selected from a predefined candidate library $\mathcal{B}_l$.
The total number of possible circuit configurations is

$$N = \prod_{l=1}^{L} |\mathcal{B}_l|. \tag{3}$$

The candidate library includes different entanglement patterns and parameterized single-qubit rotation gates (e.g., $R_x$, $R_y$, $R_z$), allowing exploration of varying expressivity and correlation structures.

2) Differentiable Optimization Framework: Rather than selecting a single discrete architecture, we assign a learnable structural weight $w_j$ to each candidate configuration $C_j$. Let $f_{C_j}(x; \theta_j)$ denote the output of candidate $C_j$ with parameters $\theta_j$. The effective model output is defined as the weighted ensemble

$$f_{\mathrm{ens}}(x) = \sum_{j=1}^{N} w_j\, f_{C_j}(x; \theta_j). \tag{4}$$

This continuous relaxation enables joint optimization of circuit parameters and architecture.

3) Training and Discretization: We jointly optimize the variational parameters $\Theta = \{\theta_1, \dots, \theta_N\}$ and structural weights $W = \{w_1, \dots, w_N\}$ by solving

$$\min_{\Theta,\, W} \; \mathcal{L}\big(f_{\mathrm{ens}}(x), y\big). \tag{5}$$

After convergence, the final discrete architecture is the candidate with the largest structural weight:

$$C_{\mathrm{final}} = C_{j^*}, \qquad j^* = \arg\max_j w_j. \tag{6}$$

In our implementation, DiffQAS is applied in a factorized manner: the Timestep Modeling and quantum feed-forward (QFF) blocks are optimized separately, and the selected candidates are then composed.

III. Q-DIVER

Q-DIVER is a hybrid framework that integrates classical and quantum components into a unified pipeline designed for data-efficient fine-tuning on downstream EEG tasks.

A. Q-DIVER Hybrid Architecture

The Q-DIVER pipeline connects high-dimensional classical representations to the quantum feature space. As illustrated in Fig. 1, the architecture operates as a two-stage transfer learning system.
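The front end of this two-stage pipeline is largely bookkeeping on array shapes. The NumPy sketch below, an illustration rather than the authors' code, traces one hypothetical batch from PhysioNet-MI's native 160 Hz through resampling to 500 Hz and non-overlapping 500-sample patching, reproducing the T = 2000 and N = 4 values shown in Fig. 1. Linear interpolation is an assumed stand-in for whatever resampler the DIVER-1 protocol actually uses, and the helpers `resample_linear` and `tokenize` are hypothetical names.

```python
import numpy as np

FS_RAW = 160      # PhysioNet-MI sampling rate (Hz)
FS_TARGET = 500   # DIVER-1 sampling rate (Hz)
TRIAL_SEC = 4.0   # motor imagery trial length (s)
PATCH = 500       # samples per patch (1 s at 500 Hz), as in Fig. 1


def resample_linear(x: np.ndarray, fs_in: int, fs_out: int) -> np.ndarray:
    """Resample the last axis of x by linear interpolation (assumed stand-in)."""
    n_in = x.shape[-1]
    n_out = int(round(n_in * fs_out / fs_in))
    t_in = np.arange(n_in) / fs_in
    t_out = np.arange(n_out) / fs_out
    flat = x.reshape(-1, n_in)
    out = np.stack([np.interp(t_out, t_in, row) for row in flat])
    return out.reshape(*x.shape[:-1], n_out)


def tokenize(x: np.ndarray, patch: int) -> np.ndarray:
    """Split (B, C, T) into non-overlapping patches of shape (B, C, N, patch)."""
    b, c, t = x.shape
    n = t // patch
    return x[..., : n * patch].reshape(b, c, n, patch)


rng = np.random.default_rng(0)
B, C = 2, 64                                                 # hypothetical batch, 64 channels
raw = rng.standard_normal((B, C, int(FS_RAW * TRIAL_SEC)))   # (2, 64, 640)
x = resample_linear(raw, FS_RAW, FS_TARGET)                  # (2, 64, 2000)
tokens = tokenize(x, PATCH)                                  # (2, 64, 4, 500)
print(raw.shape, x.shape, tokens.shape)
```

A polyphase or FFT-based resampler would be the more usual choice for EEG; the shape arithmetic (640 → 2000 samples → 4 patches) is the same either way.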
[Fig. 1 diagram. Blocks: Raw EEG Signal (PhysioNet-MI, fs = 160 Hz); Pretrained Foundation Model, comprising Resampling & Tokenization (resampled to 500 Hz, window = 4 s, T = 2000, N = 4 tokens, patch = 500) and the DIVER-1 Backbone (multi-scale feature extraction, patch embedding of 500 samples, 12-layer Transformer encoder); Reshape for Quantum Input (feature projection to rotation parameters); Quantum Head: QTSTransformer with Timestep Circuit (DiffQAS, 24 candidates; LCU timestep mixing, QSVT polynomial evolution) and QFF Circuit (DiffQAS, 24 candidates); Class Logits.]

Fig. 1: Overview of the Q-DIVER hybrid architecture. Raw EEG signals are processed by the pretrained DIVER-1 backbone and passed to a QTSTransformer-based quantum head. DiffQAS is applied to the Timestep and QFF circuit blocks (24 candidates each).

1) The Hybrid Pipeline: The data flow proceeds sequentially through the classical backbone and the quantum classification head:

1) Input Processing: The pipeline accepts raw EEG signals $X \in \mathbb{R}^{B \times C \times T}$, where $B$ is the batch size, $C$ the number of channels, and $T$ the number of time points.
2) Classical Backbone (DIVER-1): The pretrained DIVER-1 encoder processes the input $X$ to extract high-level spatiotemporal representations. Unlike standard transfer learning, where the backbone is often frozen, we perform end-to-end fine-tuning, allowing the backbone to adapt its latent space to the quantum head. The output is a latent representation $H_{\mathrm{class}} \in \mathbb{R}^{B \times N \times d}$, where $N$ is the sequence length (number of patches) and $d$ is the feature dimension.
3) Quantum Projection Interface: To encode the classical features into the quantum circuit, we employ a learnable projection layer with dropout regularization. For each time step $t$, the classical feature vector $x_t \in \mathbb{R}^{d_{\mathrm{feat}}}$ is mapped to quantum rotation parameters $\theta_t \in [0, 1]^{n_{\mathrm{params}}}$ via

$$\theta_t = \sigma\big(W \cdot \mathrm{Dropout}(x_t) + b\big), \tag{7}$$

where $\sigma(\cdot)$ is the sigmoid function, which keeps the parameters within the normalized range.
These parameters are then applied to the quantum gates as rotation angles, with the exponent scaled by $\pi/2$:

$$R_\alpha(\theta_{t,i}) = \exp\!\left(-i\,\frac{\pi\,\theta_{t,i}}{2}\,\sigma_\alpha\right), \tag{8}$$

where $\alpha \in \{X, Y, Z\}$ selects the Pauli rotation axis and $\sigma_\alpha$ is the corresponding Pauli matrix. Under the standard convention $R_\alpha(\phi) = \exp(-i\phi\sigma_\alpha/2)$, this is a rotation by angle $\pi\theta_{t,i} \in [0, \pi]$.
4) Quantum Classification Head: The projected features drive the QTSTransformer. The quantum head applies the circuit ansatz selected by DiffQAS and performs unitary evolution using LCU and QSVT. Finally, quantum measurement (expectation values of Pauli operators), followed by a small classical linear layer, yields the logits $Y_{\mathrm{out}} \in \mathbb{R}^{B \times K}$, where $K$ is the number of classes.

B. Parameter Breakdown and Efficiency

The QTSTransformer head introduces substantially fewer task-specific parameters than a classical dense MLP head applied to flattened spatiotemporal features. The DIVER-1 backbone contains 51.36M parameters. In the end-to-end fine-tuning setting, both the backbone and the classifier head are trainable.

TABLE I: Parameter breakdown comparison between DIVER+MLP and DIVER+QTSTransformer (PhysioNet-MI, 4-second trial setting, 8 qubits). Both models are trained end-to-end.

| Component | DIVER+MLP | DIVER+QTSTransformer |
|---|---|---|
| DIVER-1 Backbone | 51.36M | 51.36M |
| Feature Projection | n/a | 2.10M |
| Classifier | 105.02M | 143 |
| Total | 156.38M | 53.46M |

As shown in Table I, the DIVER+MLP configuration contains 156.38M trainable parameters, whereas DIVER+QTSTransformer contains 53.46M under end-to-end training. This corresponds to an overall parameter reduction of approximately 2.9× at the full-model level. The difference is far more pronounced for the task-specific classifier head alone: the classical MLP head introduces 105.02M parameters, while the QTSTransformer head requires only 2.10M, an approximately 50× reduction in head-specific trainable parameters.
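The headline ratios follow directly from the counts in Table I. The short Python check below recomputes them, together with the share of the 43 quantum-circuit parameters, from those reported figures; it is a sanity check on the arithmetic, not a parameter count extracted from the model itself. The constant names are illustrative.

```python
# Reported parameter counts (Table I); millions unless noted otherwise.
BACKBONE_M = 51.36       # DIVER-1 backbone, trainable in both configurations
MLP_HEAD_M = 105.02      # classical MLP head on flattened features
Q_HEAD_M = 2.10          # quantum head: projection + 143 circuit/linear params (rounded)
Q_CIRCUIT_PARAMS = 43    # trainable parameters inside the quantum circuit (absolute count)


def summarize() -> dict:
    """Recompute totals, reduction ratios, and the quantum-circuit share."""
    mlp_total = BACKBONE_M + MLP_HEAD_M
    q_total = BACKBONE_M + Q_HEAD_M
    return {
        "mlp_total_M": mlp_total,
        "q_total_M": q_total,
        "full_model_ratio": mlp_total / q_total,
        "head_ratio": MLP_HEAD_M / Q_HEAD_M,
        # Share of the quantum circuit, in percent.
        "circuit_pct_of_head": 100 * Q_CIRCUIT_PARAMS / (Q_HEAD_M * 1e6),
        "circuit_pct_of_total": 100 * Q_CIRCUIT_PARAMS / (q_total * 1e6),
    }


for name, value in summarize().items():
    print(f"{name:>22}: {value:.6g}")
```

Because Table I rounds the quantum head to 2.10M, ratios computed from unrounded counts would differ only in later digits.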
Importantly, in the quantum configuration, the majority of head parameters reside in the classical feature projection layer (2.10M), while the quantum circuit itself introduces only 43 trainable parameters. This corresponds to approximately 0.002% of the head parameters and about 0.00008% of the total model parameters; the quantum component thus contributes a negligible fraction of the overall parameter count.

IV. EXPERIMENT

A. Pretraining

The backbone encoder used in this study follows the DIVER-1 self-supervised pretraining procedure [14] and is pretrained on a large-scale corpus of EEG and iEEG recordings aggregated from multiple publicly available datasets and one self-collected dataset. Raw EEG and iEEG signals were standardized following the DIVER-1 preprocessing protocol: resampling to 500 Hz and minimal filtering with a 0.3–0.5 Hz high-pass filter and a 60 Hz notch filter, without low-pass filtering. Each training sample consisted of a 30-second continuous window tokenized into temporal patches of either 0.1 s or 1.0 s duration.

The pretraining data include intracranial EEG datasets such as AJILE12 [20] and the Penn Electrophysiology of Encoding and Retrieval Study (PEERS) [21], a self-collected iEEG dataset from epilepsy patients, and large-scale scalp EEG datasets including the Temple University Hospital EEG Corpus (TUEG) [22], the Healthy Brain Network EEG dataset (HBN-EEG) [23], and the Nationwide Children's Hospital Sleep DataBank (NCHSDB) [24]. These datasets span diverse recording modalities and subject populations, supporting robust representation learning across heterogeneous electrophysiological signals.

The pretraining objective operates on multiple signal representations. In addition to reconstructing the raw time-domain signal, masked patches are reconstructed in complementary domains such as spectral representations, and the corresponding errors are aggregated into a single loss.
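To make "aggregating errors across domains" concrete, the NumPy sketch below combines a time-domain and a spectral-domain reconstruction error computed over masked patches only. It is an assumption-laden illustration, not the DIVER-1 objective: mean squared error, rFFT magnitudes as the spectral representation, equal weights (`w_time`, `w_spec`), and the function name `multi_domain_masked_loss` are all hypothetical choices; [14] gives the actual formulation.

```python
import numpy as np


def multi_domain_masked_loss(pred, target, mask, w_time=1.0, w_spec=1.0):
    """Reconstruction loss over masked patches in two signal domains.

    pred, target: arrays of shape (num_patches, patch_len).
    mask: boolean array of shape (num_patches,), True where a patch was masked.
    The MSE / rFFT-magnitude choices and the weights are illustrative assumptions.
    """
    p, t = pred[mask], target[mask]
    if p.shape[0] == 0:  # nothing masked: no reconstruction target
        return 0.0
    # Time-domain error on the raw samples of the masked patches.
    time_err = np.mean((p - t) ** 2)
    # Spectral-domain error on per-patch magnitude spectra.
    spec_p = np.abs(np.fft.rfft(p, axis=-1))
    spec_t = np.abs(np.fft.rfft(t, axis=-1))
    spec_err = np.mean((spec_p - spec_t) ** 2)
    # Aggregate both domains into a single scalar loss.
    return float(w_time * time_err + w_spec * spec_err)


rng = np.random.default_rng(0)
target = rng.standard_normal((30, 100))  # 30 patches of 100 samples (hypothetical sizes)
mask = rng.random(30) < 0.5              # mask roughly half of the patches
pred = target + 0.1 * rng.standard_normal(target.shape)
print(multi_domain_masked_loss(pred, target, mask))
```

Restricting both terms to masked patches means the encoder is never rewarded for copying visible input; only the weighting between domains changes which signal properties dominate the gradient.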
To improve robustness to heterogeneous recording configurations, random subsampling was applied during pretraining: for each 30-second window, up to 32 channels and up to 30 temporal patches were selected. A 30-second window contains 30 patches at 1.0 s resolution but 300 at 0.1 s resolution, so the 30-patch cap is binding only for the 0.1 s configuration.

Unmasked patches are processed by a lightweight patch-wise convolutional neural network that extracts local temporal features and projects them into token representations compatible with the Transformer backbone. The resulting pretrained encoder is reused for downstream tasks with different prediction heads.

B. Downstream Task

We evaluate Q-DIVER on a publicly available EEG dataset not used during pretraining: the PhysioNet Motor Imagery (PhysioNet-MI) dataset [25]. PhysioNet-MI includes EEG recordings from 109 subjects acquired with 64 channels at 160 Hz. We evaluate the 4-class motor imagery classification task over left fist, right fist, both fists, and both feet.

C. QA/QC and Preprocessing

Quality assessment and minimal preprocessing followed the same protocol used in DIVER-1 pretraining, including amplitude normalization and clipping-based rejection.

TABLE II: Search Space for Differentiable Quantum Architecture Search

| Component | Options |
|---|---|
| Initialization | {Hadamard, None} |
| Entanglement | {Linear CNOT, Ring CNOT, CRX Forward, CRX Backward} |
| Variational Gates | {RX, RY, RZ} |

D. Experimental Setting

We evaluate the framework on the downstream EEG dataset (PhysioNet-MI) with end-to-end fine-tuning. Raw EEG recordings (originally sampled at 160 Hz for PhysioNet-MI) were re-referenced and minimally filtered using a 0.3–0.5 Hz high-pass filter and a 60 Hz notch filter. All signals were then resampled to 500 Hz for consistency with the DIVER-1 pretraining configuration. Each trial in the PhysioNet-MI dataset corresponds to a 4-second motor imagery interval.
We used these trial segments directly as input samples, without additional temporal windowing; at 500 Hz, each 4-second trial contains 2000 samples, which are tokenized into N = 4 patches of 500 samples (Fig. 1).

1) Hyperparameters and Configuration: The hybrid model is trained end-to-end with the cross-entropy loss. We use the AdamW optimizer to update both the classical backbone weights and the quantum circuit parameters. The fine-tuning hyperparameters are: batch size 32, learning rate $5 \times 10^{-5}$, weight decay $1 \times 10^{-2}$, and dropout 0.1 (applied to the classical projection layer).

2) DiffQAS Search Space: Instead of relying on stochastic Monte Carlo sampling [19], we employ a structured factorized search over the same candidate space. Each of the two circuit blocks (Timestep Modeling and QFF) is optimized independently as a 24-way softmax mixture, introducing 24 + 24 = 48 learnable structural weights while implicitly representing all 24 × 24 = 576 joint configurations. Given the moderate search size, all candidates within each block are evaluated deterministically at every step. After a short warmup with uniform mixing (weight 1/24 per candidate), the structural weights are optimized via softmax relaxation, and the final architecture is obtained by argmax selection in each block.

V. RESULTS

We present PhysioNet-MI results covering the model's classification performance, architectural convergence, and parameter efficiency.

A. Classification Performance

As shown in Table III, Q-DIVER achieved a test F1-score of 63.49%, exceeding the validation F1-score of 59.81%, which suggests that its performance holds up on the held-out test set.

TABLE III: Classification performance metrics for the Q-DIVER model on the PhysioNet-MI dataset

| Split | Accuracy | F1-Score | Kappa | Precision | Recall |
|---|---|---|---|---|---|
| Validation | 59.77% | 59.81% | 0.4636 | 59.89% | 59.77% |
| Test | 63.39% | 63.49% | 0.5118 | 63.91% | 63.38% |

B. Optimal Quantum Architecture Discovery

Q-DIVER searched the space of 576 candidate architecture combinations (24 candidates per block) and identified a task-optimal circuit configuration. The search converged on specific structural motifs that performed best for this EEG classification task.

• Timestep Circuit: The search selected a 2-layer architecture using a CRX Backward Ring entangling pattern and RZ variational gates (Fig. 2a).
• Quantum Feed-Forward (QFF) Circuit: The search selected a 1-layer architecture using a CRX Forward Ring entangling pattern and RY variational gates (Fig. 2b).

[Fig. 2 circuit diagrams on qubits q0–q7: (a) Timestep Circuit; (b) Quantum Feed Forward.]

Fig. 2: Optimal quantum circuit architectures obtained from DiffQAS.

Three distinct patterns emerged from the optimal architectures:

• Preference for Parametric Entanglement: Both optimal circuits selected CRX gates over static CNOT gates. This suggests that the additional learnable parameters in the entangling layers help capture the complex correlations in high-dimensional EEG data.
• No Initialization Layer: Neither the timestep nor the QFF circuit selected the Hadamard initialization layer. This suggests that, for this motor imagery task, starting from the computational basis state $|0\rangle^{\otimes n}$ and relying solely on variational rotations provides sufficient expressivity.
• Complementary Variational Gates: The pipeline uses complementary rotation axes, RZ (phase) for temporal modeling and RY (amplitude) for feature extraction, which increases the diversity of transformations within the quantum Hilbert space.

C. Architecture Weight Convergence

The differentiable search exhibited stable convergence. The architecture weights were initialized uniformly at $w = 1/24 \approx 0.0417$ during the 5-epoch warmup phase, after which the weights of the selected circuits increased gradually throughout training. By epoch 99, the weights of the selected Timestep and QFF circuits reached approximately 0.063 and 0.065, respectively, roughly 1.5 times the uniform value. This gradual divergence, rather than a sharp winner-takes-all collapse, suggests that the soft selection mechanism lets the model explore the search space before settling on a configuration.

D. Parameter Efficiency

For PhysioNet-MI with 4-second motor imagery trials (8 qubits), DIVER+QTSTransformer has 53.46M parameters (51.36M backbone + 2.10M head), whereas DIVER+MLP has 156.38M (51.36M + 105.02M). With end-to-end fine-tuning (unfrozen backbone), this yields an overall reduction of 2.9× at the full-model level.

For the task-specific readout head alone, the QTSTransformer uses 2.10M parameters versus 105.02M for the MLP head, an approximately 50× reduction. Most head parameters in the quantum configuration come from the classical feature projection (2.097M); the quantum circuit contributes 43 trainable parameters and the post-measurement linear classifier adds 100. This compact decision head suggests that the optimized quantum ansatz can provide an expressive readout under severe parameter constraints.

VI. CONCLUSION

In this paper, we introduced Q-DIVER, a hybrid framework that combines the DIVER-1 foundation model with the QTSTransformer to enable quantum transfer learning for high-dimensional EEG data. By extending DiffQAS, our approach autonomously discovered task-optimal circuit topologies, reducing reliance on heuristic ansatz design. Empirical results on the PhysioNet-MI dataset highlight three key contributions.
First, the quantum classifier achieved performance comparable to that of a classical MLP while using approximately 50× fewer task-specific head parameters (see Table I and Section V-D). This is consistent with the parameter savings arising primarily in the decision-making module and with the high effective dimension reported for quantum feature spaces [26]. Second, the architecture search converged on expressive parametric entangling gates (CRX) and forwent initialization layers, revealing task-specific inductive biases [19], [27]. Finally, test performance exceeded validation metrics (Test F1: 63.49%), consistent with theoretical results on favorable generalization bounds for constrained quantum models [28]–[30].

Q-DIVER offers a potential pathway for deploying compact, interpretability-friendly neuroimaging models in resource-constrained environments. Beyond empirical performance, our findings cautiously suggest that the structural constraints of quantum circuits may induce a task-relevant inductive bias, enabling effective decision boundaries under severe parameter limitations. Future work will extend this framework to other neurological domains and integrate quantum error mitigation to improve robustness on NISQ hardware.

ACKNOWLEDGMENT

Special thanks to the members of the SNU Connectome Lab, particularly Taeyang Lee, for their invaluable support in implementing hybrid quantum transfer learning with the DIVER-1 model. This work was supported by the National Research Foundation of Korea (NRF) grant funded by the Korea government (MSIT) (No. 2021R1C1C1006503, RS-2023-00266787, RS-2023-00265406, RS-2024-00421268, RS-2024-00342301, RS-2024-00435727, NRF-2021M3E5D2A01022515, and NRF-2021S1A3A2A02090597), and by the Creative-Pioneering Researchers Program through Seoul National University (No. 200-20240057, 200-20240135). Additional support was provided by the Institute of Information & Communications Technology Planning & Evaluation (IITP) grant funded by the Korea government (MSIT) [No. RS-2021-II211343, 2021-0-01343, Artificial Intelligence Graduate School Program, Seoul National University] and by the Global Research Support Program in the Digital Field (RS-2024-00421268). This work was also supported by the Artificial Intelligence Industrial Convergence Cluster Development Project funded by the Ministry of Science and ICT and Gwangju Metropolitan City, by the Korea Brain Research Institute (KBRI) basic research program (25-BR-05-01), by the Korea Health Industry Development Institute (KHIDI) and the Ministry of Health and Welfare, Republic of Korea (HR22C1605), and by the Korea Basic Science Institute (National Research Facilities and Equipment Center) grant funded by the Ministry of Education (RS-2024-00435727). We acknowledge the National Supercomputing Center for providing supercomputing resources and technical support (KSC-2023-CRE-0568). An award for computer time was provided by the U.S. Department of Energy's (DOE) ASCR Leadership Computing Challenge (ALCC). This research used resources of the National Energy Research Scientific Computing Center (NERSC), a DOE Office of Science User Facility, under ALCC award m4750-2024, and supporting resources at the Argonne and Oak Ridge Leadership Computing Facilities, U.S. DOE Office of Science user facilities at Argonne National Laboratory and Oak Ridge National Laboratory.

REFERENCES

[1] J. Yosinski, J. Clune, Y. Bengio, and H. Lipson, “How transferable are features in deep neural networks?,” Advances in Neural Information Processing Systems, vol. 27, 2014.
[2] Y. Bengio, A. Courville, and P. Vincent, “Representation learning: A review and new perspectives,” IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 35, no. 8, pp. 1798–1828, 2013.
[3] R. Bommasani, D. Hudson, E. Adeli, R. Altman, S. Arora, S. Arx, M. Bernstein, J. Bohg, A. Bosselut, E. Brunskill, E. Brynjolfsson, S. Buch, D. Card, R. Castellon, N. Chatterji, A. Chen, K. Creel, J. Davis, D. Demszky, and P. Liang, On the Opportunities and Risks of Foundation Models, 2021.
[4] G. Wang, W. Liu, Y. He, C. Xu, L. Ma, and H. Li, “EEGPT: Pretrained transformer for universal and reliable representation of EEG signals,” 2024.
[5] W. Jiang, L. Zhao, and B.-L. Lu, “Large brain model for learning generic representations with tremendous EEG data in BCI,” in The Twelfth International Conference on Learning Representations.
[6] A. Apicella, P. Arpaia, G. D’Errico, D. Marocco, G. Mastrati, N. Moccaldi, and R. Prevete, “Toward cross-subject and cross-session generalization in EEG-based emotion recognition: Systematic review, taxonomy, and methods,” Neurocomputing, vol. 604, p. 128354, 2024.
[7] J. Wang, S. Zhao, Z. Luo, Y. Zhou, H. Jiang, S. Li, T. Li, and G. Pan, “CBramod: A criss-cross brain foundation model for EEG decoding,” in The Thirteenth International Conference on Learning Representations, 2025.
[8] S. Liang, L. Li, W. Zu, W. Feng, and W. Hang, “Adaptive deep feature representation learning for cross-subject EEG decoding,” BMC Bioinformatics, vol. 25, no. 1, p. 393, 2024.
[9] J. Jeon, S. Jeong, Y. Shon, and H.-I. Suk, “Parameter-efficient transfer learning for EEG foundation models via task-relevant feature focusing,” 2025.
[10] S. J. Pan and Q. Yang, “A survey on transfer learning,” IEEE Transactions on Knowledge and Data Engineering, vol. 22, no. 10, pp. 1345–1359, 2009.
[11] A. Mari, T. R. Bromley, J. Izaac, M. Schuld, and N. Killoran, “Transfer learning in hybrid classical-quantum neural networks,” Quantum, vol. 4, p. 340, 2020.
[12] H.-H. Tseng, H.-Y. Lin, S. Y.-C. Chen, and S. Yoo, “Transfer learning analysis of variational quantum circuits,” in 2025 IEEE International Conference on Acoustics, Speech, and Signal Processing Workshops (ICASSPW), pp. 1–5, IEEE.
[13] A. Khatun and M. Usman, “Quantum transfer learning with adversarial robustness for classification of high-resolution image datasets,” Advanced Quantum Technologies, vol. 8, no. 1, p. 2400268, 2025.
[14] D. D. Han, Y. Gwon, A. L. Lee, T. Lee, S. J. Lee, J. Choi, S. Lee, J. Bang, S. Lee, and D. K. Park, “DIVER-1: Deep integration of vast electrophysiological recordings at scale,” arXiv preprint arXiv:2512.19097, 2025.
[15] M. Raghu, C. Zhang, J. Kleinberg, and S. Bengio, “Transfusion: Understanding transfer learning for medical imaging,” Advances in Neural Information Processing Systems, vol. 32, 2019.
[16] J. J. Park, J. Seo, S. Bae, S. Y.-C. Chen, H.-H. Tseng, J. Cha, and S. Yoo, “Resting-state fMRI analysis using quantum time-series transformer,” in 2025 IEEE International Conference on Quantum Computing and Engineering (QCE), vol. 1, pp. 2352–2363, IEEE, 2025.
[17] J. R. McClean, S. Boixo, V. N. Smelyanskiy, R. Babbush, and H. Neven, “Barren plateaus in quantum neural network training landscapes,” Nature Communications, vol. 9, no. 1, p. 4812, 2018.
[18] Z. Holmes, K. Sharma, M. Cerezo, and P. J. Coles, “Connecting ansatz expressibility to gradient magnitudes and barren plateaus,” PRX Quantum, vol. 3, p. 010313, Jan. 2022.
[19] S.-X. Zhang, C.-Y. Hsieh, S. Zhang, and H. Yao, “Differentiable quantum architecture search,” Quantum Science and Technology, vol. 7, p. 045023, Aug. 2022.
[20] S. M. Peterson, S. H. Singh, B. Dichter, M. Scheid, R. P. N. Rao, and B. W. Brunton, “AJILE12: Long-term naturalistic human intracranial neural recordings and pose,” Scientific Data, vol. 9, no. 1, p. 184, 2022.
[21] M. J. Kahana, J. H. Rudoler, L. J. Lohnas, K. Healey, A. Aka, A. Broitman, E. Crutchley, P. Crutchley, K. H. Alm, B. S. Katerman, N. E. Miller, J. R. Kuhn, Y. Li, N. M. Long, J. Miller, M. D. Paron, J. K. Pazdera, I. Pedisich, and C. T. Weidemann, “Penn Electrophysiology of Encoding and Retrieval Study (PEERS),” 2023.
[22] I. Obeid and J. Picone, “The Temple University Hospital EEG Data Corpus,” Frontiers in Neuroscience, vol. 10, p. 196, May 2016.
[23] S. Y. Shirazi, A. Franco, M. S. Hoffmann, N. B. Esper, D. Truong, A. Delorme, M. P. Milham, and S. Makeig, “HBN-EEG: The FAIR implementation of the Healthy Brain Network (HBN) electroencephalography dataset,” bioRxiv, p. 2024.10.03.615261, 2024.
[24] H. Lee, B. Li, S. DeForte, M. L. Splaingard, Y. Huang, Y. Chi, and S. L. Linwood, “A large collection of real-world pediatric sleep studies,” Scientific Data, vol. 9, no. 1, p. 421, 2022.
[25] A. L. Goldberger, L. A. N. Amaral, L. Glass, J. M. Hausdorff, P. C. Ivanov, R. G. Mark, J. E. Mietus, G. B. Moody, C.-K. Peng, and H. E. Stanley, “PhysioBank, PhysioToolkit, and PhysioNet: Components of a new research resource for complex physiologic signals,” Circulation, vol. 101, no. 23, pp. e215–e220, 2000, doi: 10.1161/01.CIR.101.23.e215.
[26] A. Abbas, D. Sutter, C. Zoufal, A. Lucchi, A. Figalli, and S. Woerner, “The power of quantum neural networks,” Nature Computational Science, vol. 1, no. 6, pp. 403–409, 2021.
[27] S. Y.-C. Chen and P. Tiwari, “Quantum long short-term memory with differentiable architecture search,” in 2025 IEEE International Conference on Quantum Artificial Intelligence (QAI), pp. 13–18, 2025.
[28] M. C. Caro, H.-Y. Huang, M. Cerezo, K. Sharma, A. Sornborger, L. Cincio, and P. J. Coles, “Generalization in quantum machine learning from few training data,” Nature Communications, vol. 13, no. 1, p. 4919, 2022.
[29] E. Gil-Fuster, J. Eisert, and C. Bravo-Prieto, “Understanding quantum machine learning also requires rethinking generalization,” Nature Communications, vol. 15, no. 1, p. 2277, 2024.
[30] T. Wu, A. Bentellis, A. Sakhnenko, and J. M. Lorenz, “Generalization bounds in hybrid quantum-classical machine learning models,” arXiv preprint, 2025.