Attention Frequency Modulation: Training-Free Spectral Modulation of Diffusion Cross-Attention

Cross-attention is the primary interface through which text conditions latent diffusion models, yet its step-wise multi-resolution dynamics remain under-characterized, limiting principled training-free control. We cast diffusion cross-attention as a …

Authors: Seunghun Oh, Unsang Park

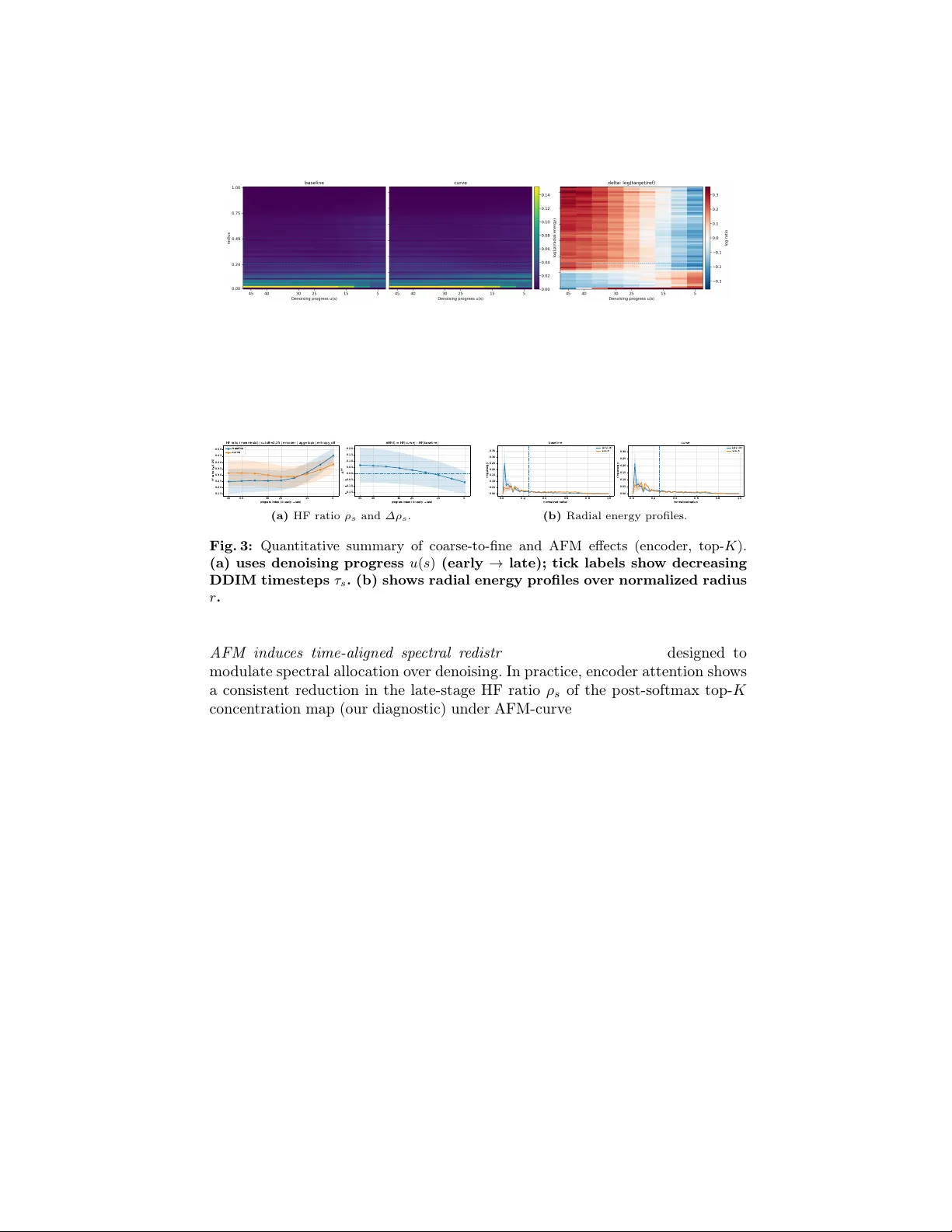

Affiliation: Sogang University, Seoul, Republic of Korea ({gnsgus190, uspark}@sogang.ac.kr)

Abstract. Cross-attention is the primary interface through which text conditions latent diffusion models, yet its step-wise multi-resolution dynamics remain under-characterized, limiting principled training-free control. We cast diffusion cross-attention as a spatiotemporal signal on the latent grid by summarizing token-softmax weights into token-agnostic concentration maps and tracking their radially binned Fourier power over denoising. Across prompts and seeds, encoder cross-attention exhibits a consistent coarse-to-fine spectral progression, yielding a stable time–frequency fingerprint of token competition. Building on this structure, we introduce Attention Frequency Modulation (AFM), a plug-and-play inference-time intervention that edits token-wise pre-softmax cross-attention logits in the Fourier domain: low- and high-frequency bands are reweighted with a progress-aligned schedule and can be adaptively gated by token-allocation entropy, before the token softmax. AFM provides a continuous handle to bias the spatial scale of token-competition patterns without retraining, prompt editing, or parameter updates. Experiments on Stable Diffusion show that AFM reliably redistributes attention spectra and produces substantial visual edits while largely preserving semantic alignment. Finally, we find that entropy mainly acts as an adaptive gain on the same frequency-based edit rather than an independent control axis.

Keywords: Diffusion Models · Cross-Attention · Training-Free Control

1 Introduction

Diffusion-based text-to-image models achieve state-of-the-art synthesis by progressively denoising text-conditioned latent representations [5, 18].
Despite their success, the inference-time internal dynamics that govern how global layout, fine detail, and sample-to-sample variability emerge over denoising steps remain under-explored [6, 10]. This gap is not only an interpretability issue but also a controllability bottleneck: widely used controls such as prompt engineering and guidance tuning often act as user-facing heuristics that entangle multiple factors, offering limited insight into what changes inside the model and when those changes occur [1, 3, 6].

A central yet under-explored component is cross-attention, which injects textual conditioning into the U-Net at multiple resolutions and at every denoising step [18, 24]. Because it is repeatedly applied along the diffusion trajectory, cross-attention is a natural carrier of stage-dependent signals (e.g., layout formation early vs. detail refinement late) [18, 24]. However, existing analyses typically focus on attention heatmaps at a few timesteps or on token relevance scores, leaving the time-resolved organization across spatial scales unclear and limiting principled, training-free control. We ask whether cross-attention exhibits a consistent multi-scale organization over denoising, and whether that structure can be used for controllable, inference-time edits.

Hypothesis and approach. We hypothesize that diffusion cross-attention exhibits a robust coarse-to-fine spectral progression over denoising, and that steering this progression in logit space provides a training-free knob that biases token competition toward coarser or finer spatial patterns, as measured by our attention-spectrum diagnostics. We do not claim to directly measure or guarantee image-level layout/detail disentanglement.
To test this hypothesis, we (i) extract a token-agnostic attention concentration map from cross-attention and summarize its spectrum over sampling progress, and (ii) intervene by frequency-selective, token-wise reweighting of pre-softmax cross-attention logits. Empirically, encoder cross-attention exhibits the most stable and monotonic spectral trajectory across prompts and seeds, so we use it as the primary locus for both analysis and intervention, while still reporting downstream effects in middle/decoder blocks.

A frequency-domain view. We operationalize cross-attention as a spatial signal on the latent grid by mapping each step's token distribution to a token-agnostic concentration statistic (top-K) and analyzing its radially binned Fourier power. This yields a compact time–frequency fingerprint that is stable across prompts and random seeds, revealing a consistent coarse-to-fine evolution inside the denoising trajectory.

Training-free control via AFM. Motivated by this fingerprint, we introduce Attention Frequency Modulation (AFM), a plug-and-play inference-time intervention that applies frequency-selective reweighting to token-wise pre-softmax cross-attention logits. AFM provides an interpretable control handle by reshaping token competition patterns in logit space over sampling progress, without retraining or architectural modification. We emphasize that AFM does not "inject" high-frequency detail directly. Instead, it perturbs token competition in logit space, which can steer the observed coarse-to-fine spectral progression measured on post-softmax attention summaries. Unless otherwise stated, we apply AFM to encoder cross-attention modules, where the spectral trajectory is most stable, and report downstream effects in middle/decoder blocks.

Contributions.

(i) Frequency characterization of diffusion cross-attention.
We characterize cross-attention using a token-agnostic concentration signal (top-K mean) and track its spectral evolution over denoising, providing a stable fingerprint of coarse-to-fine token competition across prompts and random seeds.

(ii) Training-Free Attention Frequency Modulation (AFM). We propose an inference-time method that directly intervenes on cross-attention logits in the frequency domain, applied token-wise before softmax. AFM-curve suppresses late-stage high-frequency fragmentation in encoder attention (as measured by the post-softmax top-K spectrum / $\rho_s$) while preserving alignment, indicating controllability over the internal progression.

(iii) Entropy as a secondary gating signal. We analyze attention entropy as a complementary statistic capturing concentration/dispersion. When paired with AFM, entropy gates the effective intervention strength and influences variability distributionally.

Fig. 1: Qualitative comparison on Stable Diffusion v1.5 under matched sampling settings (same prompt/seed). (a) Baseline, (b) SAG, (c) FreeU, (d) Ours (AFM).

Related work. Training-free diffusion control. Prior work modifies diffusion inference without retraining, including attention-based editing/excitation and feature-space interventions [1–3, 7, 12, 14, 21, 23]. Related controllable generation and parameter-efficient adaptation methods include ControlNet, T2I-Adapter, and LoRA [8, 15, 26]. We include SAG and FreeU as representative training-free baselines for direct comparison in our experiments. In contrast, we first characterize a stable frequency structure in cross-attention over timesteps and then derive a frequency-aligned, logit-space intervention.
We choose SAG and FreeU as representative training-free interventions that modify the sampling dynamics without additional training or external models, matching our plug-and-play setting. Other editing methods that rely on prompt-specific inversion or extra optimization steps are orthogonal and not directly comparable under our fixed-sampling paired protocol.

Frequency perspectives and mechanistic analyses. Frequency-domain tools and mechanistic analyses have been used to probe representation bias and diffusion dynamics [9, 13, 17, 22, 25].

Entropy and information measures. Entropy has long been used to quantify dispersion in probabilistic assignments [20]. We show that entropy mainly acts as a gating statistic that modulates the effective strength/variability of frequency-based interventions, rather than defining an orthogonal control axis.

2 Background

2.1 Diffusion models and cross-attention along the denoising trajectory

Diffusion models synthesize images via an iterative denoising process [5]. Latent diffusion models (LDMs) perform this process in a learned latent space for efficient high-resolution generation [18]. At denoising step $t$, given latent $x_t$ and text condition $c$, the reverse update is

$$x_{t-1} = f_\theta(x_t, t, c), \tag{1}$$

where $f_\theta$ is typically a U-Net with multi-resolution blocks: the downsampling path (encoder), the bottleneck (middle), and the upsampling path (decoder). Text conditioning is injected through cross-attention layers. With queries $Q$ from latent features and keys/values $K, V$ from text embeddings, a standard cross-attention operation is

$$\mathrm{Attn}(Q, K, V) = \mathrm{softmax}\!\left(\frac{QK^\top}{\sqrt{d}}\right) V. \tag{2}$$

Because cross-attention is applied at every denoising step and at multiple spatial resolutions, it naturally admits a stage-dependent view of generation dynamics.
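
As a minimal sketch of Eq. (2), assuming a single attention head and plain NumPy arrays, the snippet below computes the pre-softmax logits and the row-wise token softmax. The function names `softmax` and `cross_attention` and the toy sizes are illustrative assumptions, not part of the paper's implementation.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax along `axis`."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention(Q, K, V):
    """Single-head cross-attention following Eq. (2).

    Q: (HW, d) queries from latent features.
    K: (T, d) keys and V: (T, d_v) values from text embeddings.
    Returns the (HW, d_v) output and the (HW, T) attention weights.
    """
    d = Q.shape[-1]
    logits = Q @ K.T / np.sqrt(d)        # (HW, T) pre-softmax logits
    weights = softmax(logits, axis=-1)   # each row is a distribution over tokens
    return weights @ V, weights

# Toy sizes chosen only for illustration (hypothetical, not from the paper).
rng = np.random.default_rng(0)
H, W, T, d = 8, 8, 5, 16
Q = rng.standard_normal((H * W, d))
K = rng.standard_normal((T, d))
V = rng.standard_normal((T, d))
out, A = cross_attention(Q, K, V)
```

Each row of `A` sums to 1 over the token axis, which is why a plain sum over tokens carries no spatial information.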
In this work, we treat cross-attention as a temporally evolving internal conditioning signal, rather than a static alignment artifact. For clarity across schedulers, we later reparameterize the step index by a monotone denoising progress.

2.2 Cross-attention maps as spatial signals

For a layer/head at sampling iteration $s$, token-softmax cross-attention yields $A_s \in \mathbb{R}^{HW \times T}$ (spatial queries × tokens). Since each row sums to 1, naive token summation is uninformative. We therefore analyze token-agnostic concentration summaries (e.g., mean top-K probability) as spatial signals on the latent grid and study their spectral evolution over denoising. All definitions (top-K/top-1, entropy statistics) are provided in Sec. 3.1 and Sec. 3.3.

Entropy statistics (used for AFM gating). We use the normalized mean token entropy $\bar{H}^{\mathrm{tok}}_s$ (Eq. (17)) as an optional gate for AFM. Additional entropy diagnostics are deferred to the supplement.

3 Method

We present a frequency-domain framework to analyze and edit diffusion cross-attention at inference time. We first define a measurement pipeline that converts cross-attention into a stable spatial signal and quantifies its spectral evolution over denoising, and then introduce a training-free, plug-and-play intervention that edits token-wise pre-softmax cross-attention logits via frequency-selective reweighting.

Key design choices. Two choices are central. First, we treat cross-attention as a spatiotemporal signal evolving over denoising progress, which motivates a spectral (Fourier) view. Second, for controllability we operate on token-wise logits (pre-softmax), because token-softmax invariances can render per-query scalar biases broadcast across tokens a no-op (Sec. 3.3).

3.1 Cross-attention as a spatiotemporal signal

Cross-attention in latent diffusion U-Nets.
We consider latent diffusion models (LDMs) with a U-Net backbone and cross-attention conditioning [18]. At each denoising step, cross-attention maps latent/image features (queries) to text features (keys/values). Let a given U-Net block have latent spatial size $H \times W$ (flattened into $HW$ queries) and let the prompt contain $T$ tokens. For a single attention head, the pre-softmax logits are

$$L_s = \frac{Q_s K_s^\top}{\sqrt{d}} \in \mathbb{R}^{HW \times T}, \tag{3}$$

and the token-normalized attention weights are

$$A_s = \mathrm{softmax}(L_s) \in \mathbb{R}^{HW \times T}, \tag{4}$$

where softmax is applied row-wise over the token dimension. For multi-head attention, we apply the same definitions per head and either analyze heads individually or average derived statistics over heads.

Denoising progress (implementation index). We index the $S$ sampling iterations in the exact order executed by the sampler as $s \in \{0, \dots, S-1\}$ ($s = 0$: most noisy, $s = S-1$: most clean), and use the normalized progress $u(s) = s/(S-1)$ for all schedules and plots.

Scheduler timesteps (annotation only). Some samplers (e.g., DDIM) associate each iteration $s$ with a scheduler timestep $\tau_s$ (e.g., ddim_timesteps[s]), which is monotone decreasing in $s$. We occasionally use $\tau_s$ only for figure tick labels. All schedules, interventions, and analyses in this paper are indexed by the sampler step $s$ (or its normalized progress $u(s)$), i.e., the horizontal axis is always early → late in $s$.

Why an "attention map" is non-trivial under token-softmax. A cross-attention row $A_s(i, :)$ is a probability distribution over tokens at each spatial query $i$. Thus, naive token summation is constant: $\sum_{j=1}^{T} A_s(i, j) = 1$ for all $i$. This motivates constructing a spatial analysis signal that is (i) defined over $H \times W$, (ii) stable across prompts/seeds, and (iii) non-trivial under token normalization.

Token-agnostic spatial summary (top-1 / top-K).
We build a token-agnostic confidence/peakedness map capturing how concentrated the token distribution is at each location. For each spatial query $i$,

$$S^{\mathrm{top1}}_s(i) = \max_{j \in \{1, \dots, T\}} A_s(i, j), \tag{5}$$

$$S^{\mathrm{topK}}_s(i) = \frac{1}{K} \sum_{j \in \mathrm{TopK}(A_s(i, :);\, K)} A_s(i, j), \tag{6}$$

and we reshape $S_s(\cdot)$ back into $S_s \in \mathbb{R}^{H \times W}$.

Why top-K and top-1. top-1 can be sensitive to winner-takes-all fluctuations, producing noisier trajectories across seeds. top-K reduces variance by averaging among the most attended tokens, yielding smoother and more reproducible spectral statistics. We therefore use top-K in the main analysis and report top-1 as an ablation to show the coarse-to-fine trend is not an artifact of aggregation choice.

3.2 Frequency decomposition and coarse-to-fine metrics

2D Fourier transform and normalized power spectrum. Given $S_s \in \mathbb{R}^{H \times W}$, we compute the 2D Fourier transform $\hat{S}_s = \mathcal{F}(S_s)$. We define the normalized power spectrum

$$P_s(f_x, f_y) = \frac{|\hat{S}_s(f_x, f_y)|^2}{\sum_{f_x, f_y} |\hat{S}_s(f_x, f_y)|^2}, \qquad \sum_{f_x, f_y} P_s(f_x, f_y) = 1. \tag{7}$$

Radial coordinate and binning (time–frequency matrix). Using FFT-shifted coordinates, we define the normalized radius

$$r = \frac{\sqrt{f_x^2 + f_y^2}}{r_{\max}} \in [0, 1], \tag{8}$$

and bin $P_s$ into $B$ radial bins to obtain the radial energy profile

$$E_s(b) = \sum_{(f_x, f_y) \in \mathrm{bin}(b)} P_s(f_x, f_y), \qquad \sum_{b=1}^{B} E_s(b) = 1. \tag{9}$$

High-frequency ratio as a coarse-to-fine indicator. We summarize coarse-to-fine behavior via the high-frequency (HF) energy ratio using a cutoff radius $r_c$:

$$\rho_s = \sum_{b:\, r_b \ge r_c} E_s(b). \tag{10}$$

Since $E_s$ is normalized, $\rho_s$ measures the fraction of spectral energy in the HF band. A consistent increase of $\rho_s$ over $u(s)$ indicates a progressive shift toward more localized attention structure.

Cutoff choice and robustness.
The LF/HF split depends on $r_c$, but the trajectory shape can remain robust even when absolute values shift. We use a default $r_c$ for main plots and test robustness with cutoff sweeps (multiple $r_c$ values), verifying that coarse-to-fine trends and AFM-induced deltas persist. For intuition, with square grids $r_{\max} \approx \sqrt{0.5^2 + 0.5^2} \approx 0.71$ in cycles/pixel, so $r_c = 0.25$ corresponds to a radial frequency of about $0.25 \times 0.71 \approx 0.18$ cycles/pixel (roughly a 5–6 pixel wavelength).

Intervention diagnostics: deltas and log-ratios. To isolate how an inference-time edit changes spectral composition, we compute

$$\Delta\rho_s = \rho^{(\mathrm{target})}_s - \rho^{(\mathrm{ref})}_s, \tag{11}$$

and a frequency-resolved log-ratio

$$R_s(b) = \log \frac{E^{(\mathrm{target})}_s(b) + \epsilon}{E^{(\mathrm{ref})}_s(b) + \epsilon}, \tag{12}$$

with small $\epsilon$ for numerical stability. $R_s(b)$ provides a direct "what frequencies changed, and when" explanation.

3.3 Training-Free Attention Frequency Modulation (AFM)

We now describe AFM, a plug-and-play inference-time attention editing method that performs token-wise logit-space spectral reweighting during denoising.

Why we edit logits (pre-softmax) instead of attention weights. Post-softmax edits of $A_s$ can be attenuated by the row-wise token normalization. In particular, token-softmax is invariant to adding a per-query scalar bias broadcast across tokens:

$$\mathrm{softmax}\big(L_s(i, :) + b(i)\mathbf{1}\big) = \mathrm{softmax}\big(L_s(i, :)\big). \tag{13}$$

Therefore, a purely spatial additive bias shared across tokens cannot change token assignment. To induce a non-trivial change in $A_s$, the intervention must be token-dependent and applied before normalization, motivating token-wise edits on $L_s(:, j)$.

Token-wise spectral reweighting on logit maps. For each token $j \in \{1, \dots, T\}$, we reshape the logit column $L_s(:, j)$ into a spatial map $Z_{s,j} \in \mathbb{R}^{H \times W}$ and apply the FFT:

$$\hat{Z}_{s,j} = \mathcal{F}(Z_{s,j}). \tag{14}$$

Let $M_{\mathrm{LF}} = \mathbb{I}(r \le r_c)$ and $M_{\mathrm{HF}} = \mathbb{I}(r > r_c)$ with $M_{\mathrm{LF}} + M_{\mathrm{HF}} = 1$.
AFM defines the edited spectrum as

$$\hat{Z}'_{s,j} = \alpha^{\mathrm{LF}}_s\, \hat{Z}_{s,j} \odot M_{\mathrm{LF}} + \alpha^{\mathrm{HF}}_s\, \hat{Z}_{s,j} \odot M_{\mathrm{HF}}, \tag{15}$$

followed by $Z'_{s,j} = \mathcal{F}^{-1}(\hat{Z}'_{s,j})$ and flattening back to $L'_s(:, j)$. Finally, we compute the edited attention weights as $A'_s = \mathrm{softmax}(L'_s)$.

Hard vs. soft masks (ringing reduction). Hard binary masks can introduce mild spatial ringing due to sharp spectral boundaries. Optionally, one may replace $M_{\mathrm{LF}}, M_{\mathrm{HF}}$ with a smooth radial transition (e.g., a cosine ramp around $r_c$) while preserving interpretability. Our reported spectral statistics are robust as long as the effective LF/HF separation remains comparable.

Stability details: DC term and real-valuedness. Logit maps can contain token-specific global biases that affect overall token competitiveness. Optionally, we preserve the DC coefficient while applying band scaling to the remaining coefficients. Radially symmetric masks preserve conjugate symmetry, and the inverse FFT returns a real map up to numerical precision (we take the real part).

Scheduled (curve) scaling aligned with denoising progress (entropy off). We use a progress-dependent schedule

$$\alpha^{\mathrm{LF}}_s = 1 + \lambda\,(1 - u(s)), \qquad \alpha^{\mathrm{HF}}_s = 1 + \lambda\, u(s), \tag{16}$$

where $\lambda$ controls the overall edit strength.

Entropy gating (entropy on). We compute the mean normalized token entropy from the (unmodified) attention weights $A_s = \mathrm{softmax}(L_s)$:

$$\bar{H}^{\mathrm{tok}}_s = \frac{1}{HW \log T} \sum_{i=1}^{HW} \left( - \sum_{j=1}^{T} A_s(i, j) \log\big(A_s(i, j) + \epsilon\big) \right), \tag{17}$$

and gate the band scaling as

$$\alpha^{\mathrm{LF}}_s = 1 + \lambda\,(1 - u(s))\,\big(1 + \beta \bar{H}^{\mathrm{tok}}_s\big), \qquad \alpha^{\mathrm{HF}}_s = 1 + \lambda\, u(s)\,\big(1 + \gamma\,(1 - \bar{H}^{\mathrm{tok}}_s)\big). \tag{18}$$

When AFM is disabled ($\lambda = 0$), entropy gating is a strict no-op by construction.

Important: where the frequency is measured. Eqs.
(16)–(18) modulate the logit maps $L_s(:, j)$ in the Fourier domain before the token softmax, whereas $\rho_s$ is computed from the post-softmax concentration map $S^{\mathrm{topK}}_s$. Because token softmax is nonlinear and induces token competition, the effect of logit-space band scaling on $\rho_s$ is not monotonic; we therefore treat $\rho_s$ as a diagnostic of token-competition patterns, not a direct measure of image-frequency content.

Entropy as an auxiliary gating signal (not an independent edit). $\bar{H}^{\mathrm{tok}}_s$ (Eq. (17)) summarizes token dispersion: high entropy indicates diffuse token assignment, while low entropy indicates concentrated assignment. We use it only to gate band scaling (Eq. (18)), and it becomes a strict no-op when $\lambda = 0$.

Layer/block scope. AFM is compatible with any subset of U-Net cross-attention modules (attn2). In our main setting, we apply AFM to encoder cross-attention modules only, and leave self-attention (attn1; context=None) unchanged. We still log encoder/middle/decoder spectra to separate direct edits from downstream effects.

Algorithm 1: AFM: token-wise logit-space spectral reweighting

Input: Cross-attention logits $L_s \in \mathbb{R}^{HW \times T}$ at denoising progress $s$
Output: Edited logits $L'_s$

1. Compute progress $u(s) \in [0, 1]$; compute $\bar{H}^{\mathrm{tok}}_s$ using Eq. (17).
2. Set $(\alpha^{\mathrm{LF}}_s, \alpha^{\mathrm{HF}}_s)$ using Eq. (16) (entropy off) or Eq. (18) (entropy on).
3. For $j = 1$ to $T$:
   - Reshape $L_s(:, j)$ to $Z_{s,j} \in \mathbb{R}^{H \times W}$.
   - FFT: $\hat{Z}_{s,j} = \mathcal{F}(Z_{s,j})$.
   - Apply LF/HF reweighting with cutoff $r_c$ (Eq. (15)).
   - (Optional) preserve the DC coefficient.
   - iFFT: $Z'_{s,j} = \mathcal{F}^{-1}(\hat{Z}'_{s,j})$.
   - Flatten $Z'_{s,j}$ back into $L'_s(:, j)$.
4. Return $L'_s$.

4 Experiments

We evaluate Training-Free Attention Frequency Modulation (AFM) along three axes: (i) attention-level evidence that cross-attention exhibits a consistent coarse-to-fine spectral evolution and that AFM induces controlled spectral redistribution, (ii) image-level sensitivity under paired generation (LPIPS), and (iii) text–image alignment stability (CLIP cosine similarity). Unless otherwise stated, we use paired comparisons with matched prompts and random seeds to isolate the causal effect of AFM.

4.1 Setup

Models. Our main attention-spectrum analyses are conducted on Stable Diffusion v1.5 [18]. We additionally report image-level robustness (LPIPS/CLIP) on Stable Diffusion v1.4 under the same prompt/seed and sampling protocol.

Sampling (fixed across methods). For reproducibility and fair comparison, within each checkpoint all methods use the same scheduler, number of steps, CFG scale, resolution, and negative prompt. We generate 512 × 512 images using a DDIM scheduler with $S = 50$ denoising steps and guidance scale 7.5, and use paired sampling with fixed random seeds {2025, 2026, 2027, 2028} for every prompt. Only the inference-time intervention (Baseline / SAG / FreeU / AFM) differs.

Prompt sets. We use two prompt sources: (i) a COCO-2017 validation caption subset [11] ($N_{\mathrm{prompt}} = 50$), and (ii) a LAION caption subset (caption-like samples) [19] ($N_{\mathrm{prompt}} = 100$). We use $N_{\mathrm{seed}} = 4$ fixed seeds per prompt, yielding $N_{\mathrm{pair}} = N_{\mathrm{prompt}} \times N_{\mathrm{seed}}$ paired generations (COCO: 200; LAION: 400).

AFM configuration. Unless specified otherwise, AFM uses top-K aggregation with $K = 8$ for attention analysis and a radial cutoff $r_c = 0.25$ for LF/HF separation.
We apply logit-space spectral reweighting to encoder cross-attention modules only, and log spectra for encoder/middle/decoder to measure both direct and downstream effects on attention dynamics. We use the curve schedule in Eq. (16) with $\lambda = 0.2$. For entropy gating (when enabled), we use $(\beta, \gamma) = (20, 4)$ in Eq. (18).

Intervention scope vs. logging scope. Unless otherwise stated, AFM is applied only to encoder cross-attention modules (attn2 in the downsampling path / input_blocks). Middle and decoder cross-attention modules are not directly modified; we report their spectra only as downstream diagnostics induced by the altered denoising trajectory.

Compared settings. We evaluate: Baseline (AFM disabled), curve (AFM-curve; entropy gating off), and curve + entropy (AFM-curve; entropy gating on). We also include a negative control, Baseline + entropy, where entropy is computed/enabled but AFM strength is set to $\lambda = 0$; by construction, this should produce outputs identical to Baseline.

Attention logging and aggregation. We instrument cross-attention modules to record step-wise statistics. For frequency analysis, we report encoder cross-attention (6 layers averaged), which yields the most stable trajectories. We convert token-softmax attention weights into a token-agnostic spatial signal using top-K aggregation (Sec. 3.1), then compute frequency statistics per denoising step.

Step convention. All plots use denoising progress $u(s)$ (early → late); tick labels optionally show the corresponding scheduler timesteps $\tau_s$ (Sec. 3.1).

Metrics. Attention-level: time–frequency heatmaps (radially binned normalized FFT power over steps), HF energy ratio $\rho_s$ (Eq. (10)), and log-ratio heatmaps between settings. Image-level: LPIPS [27] on paired outputs. Alignment: CLIP cosine similarity distributions (ViT-B/32) [4, 16].
Additionally, we report band-wise LPIPS ($\mathrm{LPIPS}_{\mathrm{low}}$ / $\mathrm{LPIPS}_{\mathrm{high}}$) by decomposing outputs into Gaussian low-pass and residual high-pass components to quantify structure vs. detail changes.

4.2 Attention-level results

Baseline cross-attention exhibits a coarse-to-fine spectral evolution. We first establish a step-wise spectral signature of diffusion conditioning dynamics. Fig. 2 shows the time–frequency evolution of encoder cross-attention under Baseline. Energy concentrates near low radius early and progressively shifts outward as denoising proceeds, consistent with a coarse-to-fine transition in attention structure. We observe a consistent coarse-to-fine progression throughout denoising, supporting that the spectral dynamics are stable across runs.

Fig. 2: Time–frequency evolution of encoder cross-attention (top-K, mean). Left/middle: normalized radial energy distributions for Baseline and AFM-curve. Right: log energy ratio $\log(E_{\mathrm{curve}} / E_{\mathrm{baseline}})$, highlighting frequency bands amplified/suppressed by AFM over denoising progress. The x-axis is denoising progress $u(s)$ (early → late); tick labels show the corresponding DDIM scheduler timesteps $\tau_s$ (decreasing). The dashed line indicates the HF cutoff radius $r_c$ used in $\rho_s$.

[Figure 3 plots. (a) Left: HF ratio (r ≥ 0.25), mean ± std, for baseline and curve (cutoff = 0.25, encoder, top-K aggregation, entropy off). Right: ∆HF = HF[curve] − HF[baseline]. Both are plotted against the progress index (early → late), with tick labels 45, 40, 30, 25, 15, 5. (b) Ring energy versus normalized radius for baseline and curve, each at early = 45 and late = 5.]

Fig. 3: Quantitative summary of coarse-to-fine and AFM effects (encoder, top-K). (a) HF ratio $\rho_s$ and $\Delta\rho_s$ over denoising progress $u(s)$ (early → late); tick labels show decreasing DDIM timesteps $\tau_s$. (b) Radial energy profiles over normalized radius $r$.

AFM induces time-aligned spectral redistribution. AFM-curve is designed to modulate spectral allocation over denoising. In practice, encoder attention shows a consistent reduction in the late-stage HF ratio $\rho_s$ of the post-softmax top-K concentration map (our diagnostic) under AFM-curve, indicating suppression of high-frequency fragmentation relative to the natural coarse-to-fine progression. Note that this "HF suppression" refers to $\rho_s$ measured on the post-softmax top-K attention summary $S_s$, not the raw logit spectra being scaled by Eq. (16). Fig. 3 summarizes this effect using the HF ratio $\rho_s$ and $\Delta\rho_s$ (curve minus Baseline).

Late-stage summary. We report the HF ratio averaged over the last 20% of steps (10 steps for $S = 50$) and its paired difference $\Delta\rho_{\mathrm{late}}$ (curve minus Baseline).

Statistical significance of attention-spectrum changes. We evaluate the paired effect of AFM-curve on the encoder HF ratio $\rho_s$ (Eq. (10)) using matched prompt/seed generations. Bootstrap confidence intervals on the mean $\Delta\rho_{\mathrm{late}}$ exclude zero, and the direction is consistent across pairs. This indicates that AFM-curve suppresses late-stage high-frequency fragmentation in encoder cross-attention, even though it induces substantial perceptual changes at the image level (LPIPS) with largely overlapping CLIP cosine similarity distributions. We repeat the analysis with alternative spatial summaries (e.g., top-1 and varying top-K) and observe the same direction of $\Delta\rho_{\mathrm{late}}$, suggesting the trend is not a top-K artifact.

Table 1: Robustness of late-stage HF-ratio shift $\Delta\rho_{\mathrm{late}}$ across $r_c \in \{0.20, 0.25, 0.30\}$ and $N_{\mathrm{sub}} \in \{50, 100\}$ (top-K = 8).
$\Delta\rho_{\mathrm{late}}$ is averaged over the last 20% of denoising steps ($u \ge 0.8$). AFM is applied only to the encoder cross-attention; middle/decoder reflect downstream changes. We report the fraction of pairs with $\Delta\rho_{\mathrm{late}} < 0$ and the min–max range of the mean $\Delta\rho_{\mathrm{late}}$ across sweeps.

| Stage | Neg. pairs (%) | Mean $\Delta\rho_{\mathrm{late}}$ (min–max) |
|---|---|---|
| Encoder | 98–99 | [−0.063, −0.040] |
| Middle | 72–78 | [−0.0346, −0.0317] |
| Decoder | 56–64 | [−0.0161, −0.0092] |

Robustness across $r_c$ and sample size. To verify that the observed encoder HF-ratio shift is not an artifact of a particular metric cutoff in $\rho_s$ (Eq. (10)) or a small sample size, we sweep the HF cutoff used in the metric, $r_c \in \{0.20, 0.25, 0.30\}$, and the number of subsampled prompt–seed pairs, $N_{\mathrm{sub}} \in \{50, 100\}$ (top-K = 8). We define

$$\Delta\rho_{\mathrm{late}} = \frac{1}{|\mathcal{S}_{\mathrm{late}}|} \sum_{s \in \mathcal{S}_{\mathrm{late}}} \big(\rho^{\mathrm{curve}}_s - \rho^{\mathrm{baseline}}_s\big), \qquad \mathcal{S}_{\mathrm{late}} = \{ s : u(s) \ge 0.8 \}.$$

Across all sweeps, the encoder shows a highly consistent negative shift ($\Delta\rho_{\mathrm{late}} < 0$ for 98–99% of pairs), with mean $\Delta\rho_{\mathrm{late}}$ in the range [−0.063, −0.040] (Tab. 1). Middle/decoder show negative means but reduced sign consistency (middle: 72–78% negative; decoder: 56–64% negative), indicating a weaker and more variable downstream tendency.

Block-wise diagnostics across U-Net stages. All U-Net stages exhibit non-trivial spectral structure over denoising, but encoder cross-attention shows the most stable and monotonic coarse-to-fine trajectory across prompts and seeds. Accordingly, we apply AFM only to encoder cross-attention and treat middle/decoder cross-attention as downstream diagnostics. Despite modifying only the encoder, the mean late-stage HF-ratio shift is negative in middle and decoder, but with reduced sign consistency (Tab. 1), indicating weaker and more variable downstream effects.
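
The attention-level diagnostics used in this section can be sketched end to end, from token-softmax weights to the late-stage shift. This is a hedged illustration of Eqs. (6)–(10) and the late-stage average, not the authors' code. The function names, the bin count `B=16`, the use of bin centers for $r_b$, and keeping the DC term in the power spectrum are all assumptions made here for concreteness.

```python
import numpy as np

def topk_concentration(A, H, W, K=8):
    """Mean of the K largest token probabilities per query, Eq. (6)."""
    K = min(K, A.shape[1])
    top = np.sort(A, axis=1)[:, -K:]          # K largest entries of each row
    return top.mean(axis=1).reshape(H, W)

def radial_energy(S, B=16):
    """Radially binned, normalized FFT power of a spatial map, Eqs. (7)-(9)."""
    H, W = S.shape
    power = np.abs(np.fft.fftshift(np.fft.fft2(S))) ** 2
    power = power / power.sum()               # normalized power spectrum
    fy = np.fft.fftshift(np.fft.fftfreq(H))[:, None]
    fx = np.fft.fftshift(np.fft.fftfreq(W))[None, :]
    r = np.sqrt(fx ** 2 + fy ** 2) / np.sqrt(0.5 ** 2 + 0.5 ** 2)
    bins = np.minimum((r * B).astype(int), B - 1)
    E = np.bincount(bins.ravel(), weights=power.ravel(), minlength=B)
    centers = (np.arange(B) + 0.5) / B        # assumed definition of r_b
    return E, centers

def hf_ratio(S, r_c=0.25, B=16):
    """Fraction of spectral energy in bins with r_b >= r_c, Eq. (10)."""
    E, centers = radial_energy(S, B)
    return E[centers >= r_c].sum()

def delta_rho_late(rho_curve, rho_base, late_from=0.8):
    """Mean HF-ratio difference over steps with u(s) >= late_from."""
    rho_curve = np.asarray(rho_curve, dtype=float)
    rho_base = np.asarray(rho_base, dtype=float)
    S = len(rho_base)
    u = np.arange(S) / (S - 1)                # normalized progress u(s)
    late = u >= late_from
    return (rho_curve[late] - rho_base[late]).mean()

# Toy check with synthetic attention weights (illustrative only).
rng = np.random.default_rng(0)
H, W, T = 16, 16, 12
logits = rng.standard_normal((H * W, T))
A = np.exp(logits) / np.exp(logits).sum(axis=1, keepdims=True)
S_map = topk_concentration(A, H, W, K=8)
rho = hf_ratio(S_map, r_c=0.25)
```

With $S = 50$, the mask `u >= 0.8` selects the last 10 steps, matching the late-stage window described above.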
4.3 Image-level controllability and alignment

We compare AFM against SAG and FreeU as representative training-free inference-time baselines under identical prompts, seeds, and sampling settings [7, 21]. Prompts are sampled from COCO and LAION, and results are averaged over fixed seeds (2025–2028). All methods share the same scheduler, step count, CFG scale, and output resolution to isolate the effect of the intervention.

Baselines and our setting. For SAG we use sag_scale=1.0. For FreeU we use $(b_1, b_2, s_1, s_2) = (1.1, 1.2, 0.9, 0.2)$. For AFM-curve (ours) we apply logit-space frequency modulation to cross-attention with $r_c = 0.25$ and the timestep-scheduled curve in Eq. (16), unless stated otherwise. For the baselines, we use the default/recommended hyperparameters from the authors' official implementations, without additional tuning. We evaluate all methods on both SD v1.5 (main) and SD v1.4 (checkpoint robustness) for image-level metrics under the same prompt/seed and sampling protocol.

Table 2: Paired LPIPS ↑ (mean ± std) under matched prompt/seed sampling. Higher indicates larger perceptual deviation from Baseline. Results are reported for SD v1.5 (main) and SD v1.4 (checkpoint robustness).

| Comparison | SD v1.5 COCO | SD v1.5 LAION | SD v1.4 COCO | SD v1.4 LAION |
|---|---|---|---|---|
| Baseline vs. AFM-curve | 0.237 ± 0.138 | 0.249 ± 0.142 | 0.232 ± 0.132 | 0.258 ± 0.144 |
| Baseline vs. AFM-curve + entropy | 0.409 ± 0.131 | 0.419 ± 0.149 | 0.405 ± 0.134 | 0.417 ± 0.143 |
| Baseline vs. Baseline (entropy, $\lambda = 0$) | 0.000 ± 0.000 | 0.000 ± 0.000 | 0.000 ± 0.000 | 0.000 ± 0.000 |
| Baseline vs. FreeU | 0.300 ± 0.131 | 0.302 ± 0.158 | 0.296 ± 0.117 | 0.294 ± 0.112 |
| Baseline vs. SAG | 0.111 ± 0.084 | 0.125 ± 0.108 | 0.106 ± 0.055 | 0.121 ± 0.071 |

Table 3: Band-wise LPIPS decomposition (COCO; $N = 200$). We decompose each output image into $I_{\text{low}} = G_\sigma(I)$ (Gaussian blur; $\sigma = 4$ at $512 \times 512$) and $I_{\text{high}} = \mathrm{clip}_{[0,1]}(I - I_{\text{low}} + 0.5)$, and compute LPIPS on each band after resizing to $256 \times 256$. $\text{LPIPS}_{\text{low}}$ proxies structure/layout change and $\text{LPIPS}_{\text{high}}$ proxies detail/texture change. High/Low is the per-pair ratio $\text{LPIPS}_{\text{high}} / \text{LPIPS}_{\text{low}}$ averaged over pairs (undefined for the exact no-op). $P(\text{high} > \text{low})$ is the fraction of pairs where $\text{LPIPS}_{\text{high}}$ exceeds $\text{LPIPS}_{\text{low}}$.

| Setting | $\text{LPIPS}_{\text{low}}$ | $\text{LPIPS}_{\text{high}}$ | High/Low | $P(\text{high} > \text{low})$ |
|---|---|---|---|---|
| AFM-curve | 0.171 ± 0.115 | 0.188 ± 0.112 | 1.21 ± 0.28 | 78.0% |
| AFM-curve + entropy | 0.312 ± 0.116 | 0.329 ± 0.110 | 1.08 ± 0.15 | 72.5% |
| Entropy only ($\lambda = 0$) | 0.000 ± 0.000 | 0.000 ± 0.000 | – | – |

Paired perceptual deviation (LPIPS). We quantify image-level sensitivity using LPIPS on paired prompt/seed generations (Tab. 2). AFM induces substantial perceptual deviations relative to Baseline. As a negative control, enabling entropy while disabling AFM ($\lambda = 0$) yields identical outputs (LPIPS = 0), confirming that entropy gating on its own is a strict no-op and only modulates the effective strength when paired with AFM.

Quantifying controllability: structure vs. detail (band-wise LPIPS). To make the notion of "control" explicit at the image level without ground-truth layouts, we decompose each generated image $I$ into a low-frequency component $I_{\text{low}} = G_\sigma(I)$ (Gaussian blur) and a high-frequency residual $I_{\text{high}} = \mathrm{clip}_{[0,1]}(I - I_{\text{low}} + 0.5)$. We then compute LPIPS on each band between paired baseline and edited outputs. $\text{LPIPS}_{\text{low}}$ serves as a proxy for coarse structure/layout changes, while $\text{LPIPS}_{\text{high}}$ captures fine-detail/texture changes. As shown in Tab. 3, AFM tends to induce larger perceptual changes in the high-frequency residual than in the low-frequency component ($\text{LPIPS}_{\text{high}} > \text{LPIPS}_{\text{low}}$ for 78.0% of pairs with AFM-curve and 72.5% with entropy gating), suggesting that AFM steers generation changes toward fine-detail/texture variations more than coarse structure.
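The band split behind Tab. 3 can be illustrated with a small, dependency-free sketch. It assumes a single-channel image stored as nested lists with values in [0, 1], replicate padding at the borders, and a Gaussian kernel truncated at $3\sigma$; none of these details are stated in this section, and an RGB image would presumably be processed per channel. All helper names are hypothetical. LPIPS itself requires a pretrained network and is not computed here: `band_ratio_stats` only aggregates per-pair scores that are assumed to come from such a model.

```python
import math


def gaussian_kernel(sigma):
    """Normalized 1D Gaussian kernel, truncated at 3*sigma (assumption)."""
    radius = max(1, math.ceil(3 * sigma))
    weights = [math.exp(-(k * k) / (2 * sigma * sigma))
               for k in range(-radius, radius + 1)]
    total = sum(weights)
    return [w / total for w in weights]


def blur_1d(values, kernel):
    """Convolve one row or column, replicating the edge samples."""
    radius = len(kernel) // 2
    n = len(values)
    out = []
    for i in range(n):
        acc = 0.0
        for k, w in enumerate(kernel):
            j = min(max(i + k - radius, 0), n - 1)
            acc += w * values[j]
        out.append(acc)
    return out


def gaussian_blur(image, sigma):
    """Separable Gaussian blur: rows first, then columns."""
    kernel = gaussian_kernel(sigma)
    rows = [blur_1d(row, kernel) for row in image]
    cols = [blur_1d(list(col), kernel) for col in zip(*rows)]
    return [list(row) for row in zip(*cols)]


def split_bands(image, sigma=4.0):
    """Return (I_low, I_high) with I_high = clip_[0,1](I - I_low + 0.5)."""
    low = gaussian_blur(image, sigma)
    high = [[min(1.0, max(0.0, p - l + 0.5)) for p, l in zip(r_img, r_low)]
            for r_img, r_low in zip(image, low)]
    return low, high


def band_ratio_stats(lpips_low, lpips_high):
    """Mean per-pair High/Low ratio and P(high > low).

    Pairs with a zero low-band score have no defined ratio and are skipped;
    if no pair has a defined ratio (exact no-op), both outputs are None.
    """
    ratios = [h / l for l, h in zip(lpips_low, lpips_high) if l > 0]
    if not ratios:
        return None, None
    p_high = sum(h > l for l, h in zip(lpips_low, lpips_high)) / len(lpips_low)
    return sum(ratios) / len(ratios), p_high
```

Averaging per-pair ratios, as the Tab. 3 caption describes, generally differs from dividing the mean high-band score by the mean low-band score, which is consistent with the reported High/Low value of 1.21 exceeding 0.188 / 0.171.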
Table 4: CLIP cosine similarity ↑ (ViT-B/32; mean ± std) under matched prompt/seed sampling. Higher indicates better text–image alignment. Results are reported for SD v1.5 (main) and SD v1.4 (checkpoint robustness).

| Setting | SD v1.5 COCO | SD v1.5 LAION | SD v1.4 COCO | SD v1.4 LAION |
|---|---|---|---|---|
| Baseline | 0.318 ± 0.031 | 0.306 ± 0.038 | 0.320 ± 0.027 | 0.306 ± 0.039 |
| AFM-curve | 0.319 ± 0.030 | 0.306 ± 0.039 | 0.318 ± 0.029 | 0.304 ± 0.040 |
| AFM-curve + entropy | 0.316 ± 0.030 | 0.303 ± 0.040 | 0.317 ± 0.030 | 0.303 ± 0.038 |
| FreeU | 0.307 ± 0.031 | 0.310 ± 0.037 | 0.307 ± 0.029 | 0.310 ± 0.034 |
| SAG | 0.305 ± 0.032 | 0.307 ± 0.036 | 0.304 ± 0.029 | 0.308 ± 0.033 |

Entropy acts as gain control for frequency-based editing. Entropy gating amplifies the frequency-based edit: on SD v1.5 COCO, paired LPIPS against Baseline rises from 0.237 with AFM-curve to 0.409 with AFM-curve + entropy (Tab. 2). Enabling entropy with AFM disabled ($\lambda = 0$) is a strict no-op.

Text–image alignment (CLIP cosine similarity). We evaluate prompt alignment using CLIP cosine similarity distributions (Tab. 4). Distributions largely overlap across settings with small mean differences (on SD v1.5 COCO, 0.316–0.319 for the AFM variants vs. 0.318 for Baseline), suggesting that AFM redistributes how conditioning manifests rather than collapsing it. Comparisons against the FreeU and SAG baselines are also summarized in Tab. 4. The same qualitative trend is observed on SD v1.4, indicating checkpoint-level robustness.

5 Conclusion

We presented a frequency-domain view of diffusion cross-attention by interpreting attention-derived concentration maps as spatial signals on the latent grid, revealing a stable coarse-to-fine spectral progression over denoising. Building on this structure, we introduced Attention Frequency Modulation (AFM), a training-free inference-time intervention that edits token-wise pre-softmax cross-attention logits in the Fourier domain with a progress-aligned low/high-frequency schedule.
AFM provides an interpretable inference-time knob to bias how conditioning manifests across spatial scales in attention-derived diagnostics, which in turn tends to yield stronger changes in image high-frequency residuals under our proxy evaluation. We further find that attention entropy mainly acts as an adaptive gain for the same frequency-based edit and is a strict no-op when AFM strength is zero.

Limitations and potential negative impact. Our coarse-to-fine interpretation is based on spatial-frequency statistics of attention-derived top-$K$ concentration maps, which quantify token-competition structure on the latent grid. These signals are proxies and do not directly measure image Fourier content, object layout, or semantic detail. Accordingly, our image-level evaluation (band-wise LPIPS) should be interpreted as heuristic evidence rather than a ground-truth disentanglement metric.

References

1. Chefer, H., Alaluf, Y., Vinker, Y., Wolf, L., Cohen-Or, D.: Attend-and-excite: Attention-based semantic guidance for text-to-image diffusion models. arXiv preprint arXiv:2301.13826 (2023)
2. He, Q., Wang, J., Liu, Z., Yao, A.: Aid: Attention interpolation of text-to-image diffusion. In: Advances in Neural Information Processing Systems (2024)
3. Hertz, A., Mokady, R., Tenenbaum, J., Aberman, K., Pritch, Y., Cohen-Or, D.: Prompt-to-prompt image editing with cross attention control. arXiv preprint arXiv:2208.01626 (2022)
4. Hessel, J., Holtzman, A., Forbes, M., Le Bras, R., Choi, Y.: Clipscore: A reference-free evaluation metric for image captioning. arXiv preprint arXiv:2104.08718 (2021)
5. Ho, J., Jain, A., Abbeel, P.: Denoising diffusion probabilistic models. arXiv preprint arXiv:2006.11239 (2020)
6. Ho, J., Salimans, T.: Classifier-free diffusion guidance. arXiv preprint arXiv:2207.12598 (2022)
7. Hong, S., Lee, G., Jang, W., Kim, S.: Improving sample quality of diffusion models using self-attention guidance. arXiv preprint arXiv:2210.00939 (2022)
8. Hu, E.J., Shen, Y., Wallis, P., Allen-Zhu, Z., Li, Y., Wang, S., Wang, L., Chen, W.: Lora: Low-rank adaptation of large language models. arXiv preprint arXiv:2106.09685 (2021)
9. Jiang, Z., Bolya, D., Yu, Q., Wang, J., Minhas, M.R., Moorthy, K., Friedrich, C.M., Wang, R., Hoffman, J.: Dissecting and mitigating diffusion bias via mechanistic interpretability. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) (2025)
10. Karras, T., Aittala, M., Aila, T., Laine, S.: Elucidating the design space of diffusion-based generative models. arXiv preprint arXiv:2206.00364 (2022)
11. Lin, T.Y., Maire, M., Belongie, S., Bourdev, L., Girshick, R., Hays, J., Perona, P., Ramanan, D., Zitnick, C.L., Dollár, P.: Microsoft coco: Common objects in context. In: European Conference on Computer Vision (ECCV) (2014)
12. Lin, Y., Bansal, H., Zhao, J., Gu, S., Li, J., Meng, Y., Li, X., Yang, J., Ramanan, D.: Ctrl-x: Controlling structure and appearance for text-to-image generation without guidance. In: Advances in Neural Information Processing Systems (2024)
13. Liu, B., Wang, C., Huang, J., Jia, K.: Towards understanding cross and self-attention in stable diffusion for text-guided image editing. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) (2024)
14. Mokady, R., Hertz, A., Aberman, K., Pritch, Y., Cohen-Or, D.: Null-text inversion for editing real images using guided diffusion models. arXiv preprint arXiv:2211.09794 (2022)
15. Mou, C., Wang, X., Xie, L., Wu, Y., Zhang, J., Qi, Z., Shan, Y., Qie, X.: T2i-adapter: Learning adapters to dig out more controllable ability for text-to-image diffusion models. arXiv preprint arXiv:2302.08453 (2023)
16. Radford, A., et al.: Learning transferable visual models from natural language supervision. arXiv preprint arXiv:2103.00020 (2021)
17. Rahaman, N., Arpit, D., Baratin, A., Draxler, F., Lin, M., Hamprecht, F.A., Bengio, Y., Courville, A.: On the spectral bias of neural networks. In: Proceedings of the 36th International Conference on Machine Learning (ICML) (2019)
18. Rombach, R., Blattmann, A., Lorenz, D., Esser, P., Ommer, B.: High-resolution image synthesis with latent diffusion models. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) (2022)
19. Schuhmann, C., et al.: Laion-5b: An open large-scale dataset for training next generation image-text models. arXiv preprint arXiv:2210.08402 (2022)
20. Shannon, C.E.: A mathematical theory of communication. Bell System Technical Journal (1948)
21. Si, C., Huang, Z., Jiang, Y., Liu, Z.: Freeu: Free lunch in diffusion u-net. arXiv preprint arXiv:2309.11497 (2023)
22. Tancik, M., Srinivasan, P.P., Mildenhall, B., Fridovich-Keil, S., Raghavan, N., Singhal, U., Ramamoorthi, R., Barron, J.T., Ng, R.: Fourier features let networks learn high frequency functions in low dimensional domains. arXiv preprint arXiv:2006.10739 (2020)
23. Tang, R., Liu, L., Pandey, A., Jiang, Z., Yang, G., Kumar, K., Stenetorp, P., Lin, J., Türe, F.: What the daam: Interpreting stable diffusion using cross attention. arXiv preprint arXiv:2210.04885 (2022)
24. Vaswani, A., Shazeer, N., Parmar, N., Uszkoreit, J., Jones, L., Gomez, A.N., Kaiser, Ł., Polosukhin, I.: Attention is all you need. arXiv preprint arXiv:1706.03762 (2017)
25. Yi, Z., Xia, M., Li, R., Zhou, T., Lyu, S.: Towards understanding the working mechanism of text-to-image diffusion model. In: Advances in Neural Information Processing Systems (2024)
26. Zhang, L., Rao, A., Agrawala, M.: Adding conditional control to text-to-image diffusion models. arXiv preprint arXiv:2302.05543 (2023)
27. Zhang, R., Isola, P., Efros, A.A., Shechtman, E., Wang, O.: The unreasonable effectiveness of deep features as a perceptual metric. arXiv preprint (2018)
