Heddle: A Distributed Orchestration System for Agentic RL Rollout

Agentic Reinforcement Learning (RL) enables LLMs to solve complex tasks by alternating between a data-collection rollout phase and a policy training phase. During rollout, the agent generates trajectories, i.e., multi-step interactions between LLMs a…

Authors: Zili Zhang, Yinmin Zhong, Chengxu Yang

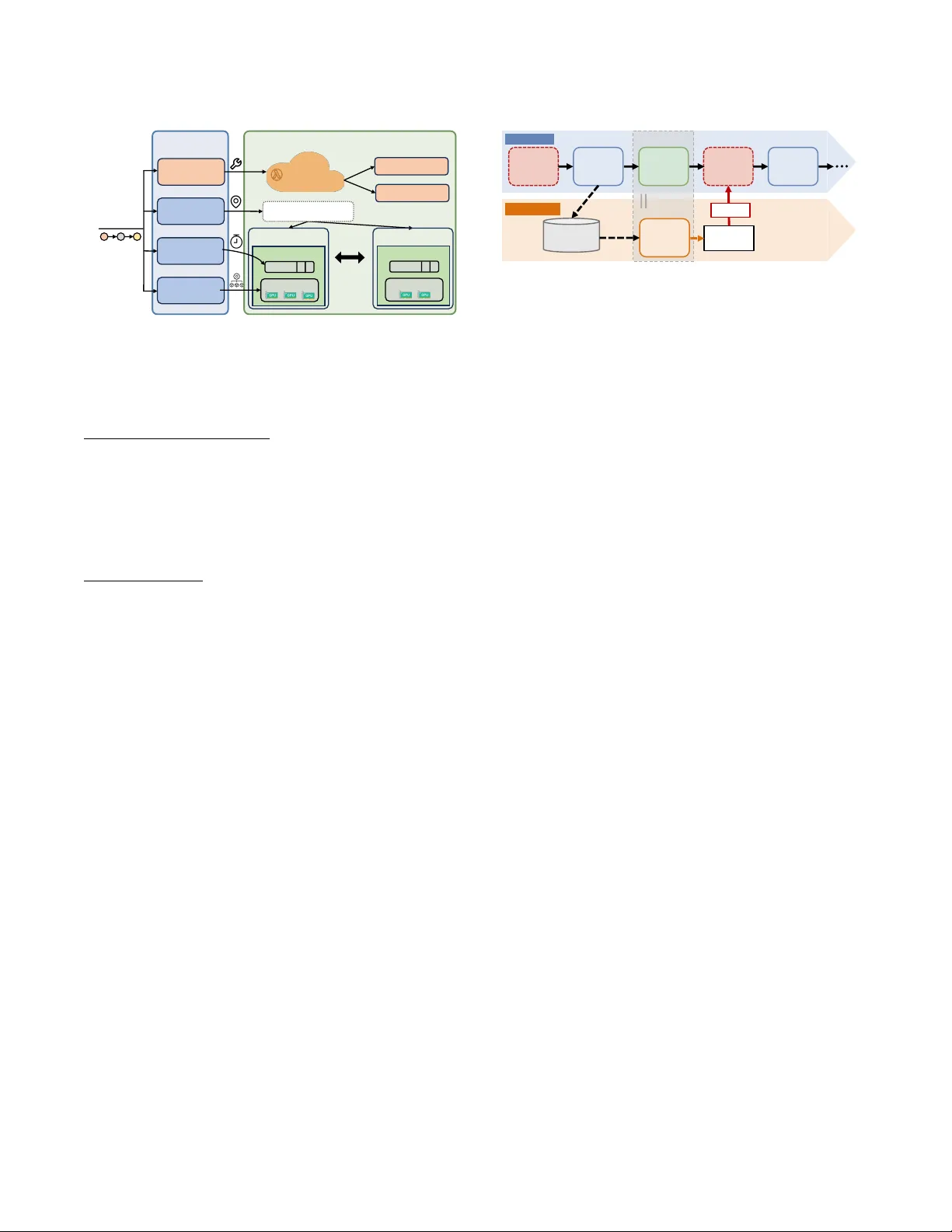

Heddle : A Distributed Orchestration System for Agentic RL Rollout Zili Zhang ∗ Yinmin Zhong ∗ Chengxu Y ang † Chao Jin ∗ Bingyang W u ∗ Xinming W ei ∗ Y uliang Liu † Xin Jin ∗ ∗ School of Computer Science , Peking University † Independent Researcher Abstract Agentic Reinforcement Learning (RL) enables LLMs to solve complex tasks by alternating between a data-collection roll- out phase and a p olicy training phase. During rollout, the agent generates trajectories, i.e., multi-step interactions be- tween LLMs and external tools. Y et, frequent to ol calls induce long-tailed trajector y generation that bottlenecks rollouts. This stems from step-centric designs that ignore trajectory context, triggering three system problems for long-tail trajec- tory generation: queueing delays, interference overhead, and inated per-token time. W e propose Heddle , a traje ctor y- centric system to optimize the when , wher e , and how of agen- tic rollout execution. Heddle integrates three core mecha- nisms: ❶ trajectory-level scheduling using runtime predic- tion and progressive priority to minimize cumulative queue- ing; ❷ trajectory-aware placement via presorted dynamic programming and opportunistic migration during idle tool- call intervals to minimize interference; and ❸ trajectory- adaptive resource manager that dynamically tunes model parallelism to accelerate the per-token time of long-tail tra- jectories while maintaining high throughput for short tra- jectories. Evaluations across diverse agentic RL workloads demonstrate that Heddle eectively neutralizes the long-tail bottleneck, achieving up to 2.5 × higher end-to-end rollout throughput compared to state-of-the-art baselines. 1 Introduction Agentic Reinforcement Learning (RL) [ 19 , 29 , 38 , 43 , 52 ] is characterized by an iterative cy cle of rollout (i.e., data collec- tion) and training (i.e., policy optimization). Moving beyond basic alignment [ 8 , 31 ] and static reasoning [ 14 , 54 ], agen- tic RL enables LLMs to solve complex tasks through itera- tive, multi-step rollouts with e xternal tool usage. This auton- omy allows LLM agents to navigate dynamic environments and continuously rene their strategies. This paradigm has achieved notable success in frontier industry products such as Claude Code [ 7 ], Deep Research [ 30 ], and OpenClaw [ 4 ]. Despite the promise of agentic RL, its training eciency is sever ely constrained by rollout phase . Unlike traditional LLM training that consumes static data, agentic RL relies on online interactions where agents generate interleaved reasoning contexts and real-time tool execution (i.e ., multi- step agentic trajectories), as shown in Figure 1. Empirical Short Tr a j e c t o r y Long - tail Tr a j e c t o r y LLM Gen. (Step 1) LLM Gen. (Step 1) To o l Exec. LLM Gen. (Final Step) To o l Exec. LLM Gen. (Step 2) To o l Exec. … LLM Gen. (Final Step) Resource Idle Rollout Starts Rollout Ends Figure 1: Illustration of the agentic trajectory and the straggler eect. analysis [ 17 , 33 ] identies rollout generation as the dominant bottleneck, consuming over 80% of the entire training time . This ineciency is primarily driven by the sever e straggler eect of rollout phase: a long-tail distribution of generated agentic trajectories wher e a small fraction of complex, multi- step interactions signicantly prolongs the rollout makespan. As shown in Figure 2, proling Qwen3 [ 48 ] coding agentic rollouts on the CodeForces [ 24 ] dataset rev eals that both the number of generated tokens and the tool execution latency are highly skewed. Consequently , as shown in Figure 1, the majority of computational resources remain idle waiting for these stragglers (i.e., long-tail trajectories) to complete, leading to severe r esource underutilization. T o rigorously deconstruct this rollout bottlene ck, we for- mulate the global rollout makespan as b eing dictated by the slowest trajectory in the batch T : 𝑇 = max 𝑖 ∈ T 𝑇 ( 𝑖 ) queue + 𝑁 ( 𝑖 ) tokens · 𝑇 · 𝛼 ( 𝑖 ) + 𝑇 ( 𝑖 ) tool (1) While token count 𝑁 tokens is algorithmically intrinsic and tool latency 𝑇 tool is addressed by elastic serverless infrastruc- ture [ 44 , 55 ], the optimization burden falls on GP U-centric factors. This formulation isolates three tractable components: queueing delay ( 𝑇 queue ), the contention-driven interference coecient ( 𝛼 ), and average base per-token time ( 𝑇 ). These map to three core orchestration decisions: when to sched- ule the long-tail trajectory (minimize its queueing), where to place the long-tail traje ctory (mitigate its compute and memory contention), and how to allocate resources for the long-tail trajectory (reduce its p er-token time). For scheduling, as shown in Figure 3, existing agentic RL frameworks [ 3 , 37 , 59 , 60 ] are fundamentally step-centric , treating agentic steps as isolated requests. This trajector y- agnostic design strips away critical metadata ( e.g., ID , step 1 0 10K 20K 30K Number of Output T okens 0 . 0 0 . 5 1 . 0 Density × 10 − 4 0 10 20 30 T ool Time (seconds) 0 . 0 0 . 2 0 . 4 Figure 2: Long-tailed distribution of coding agents. index, length) required for trajector y orchestration. Con- sequently , scheduling degenerates into a de facto round- robin policy where multi-step traje ctories repeatedly re- queue, inicting severe delays on long-tailed trajectories. For placement, e xisting frameworks typically adopt either cache- anity or least-load strategies. Static cache-anity triggers load imbalance due to lack of runtime migration, while least- load policies incur prohibitive recomputation ov erhead and exacerbate interference for long-tailed trajectories by redis- tributing trajectories per step. Finally , for resource allocation, rigid and homogeneous GP U allo cation fails to balance the high-throughput needs of short trajectories with the low- latency requirements of long-tailed trajectories. Ultimately , these isolated design choices compound to cause sever e re- source underutilization during agentic RL rollout. T o this end, we propose Heddle , a distributed framework that rear chite cts agentic RL r ollout. Mo ving beyond the step- centric design, Heddle adopts a traje ctor y-centric design to directly mitigate the execution stragglers. Specically , we introduce three synergistic techniques, e.g., trajector y- level scheduling, trajectory-aware placement, and trajectory- adaptive resour ce management, to optimize the when , where , and how of computation, respectively . First, trajectory-level scheduling dictates the scheduling ( when ) of execution through progr essive priority scheduling (§4.2). T o bypass the pitfalls of round-robin, Heddle assigns immediate execution precedence to long-tailed traje ctories. Since predicting trajector y length becomes more accurate as interactions unfold, Heddle eschews static prioritization. Instead, it employs a runtime predictor (§4.1) to continuously rene length estimates after each step, dynamically escalat- ing the priority of long-tailed trajectories. This progressive adjustment precisely accelerates true stragglers, eectively minimizing their cumulative queueing delay ( 𝑇 queue ). Second, trajector y-aware placement dictates the spatial distribution ( where ) of workloads via a two-phase strategy: presorted dynamic programming (§5.2) coupled with oppor- tunistic runtime migration (§5.3). During initial dispatch, pr e- sorted dynamic programming sorts trajectories by predicted length, computing an optimal placement that minimizes the interference coecient ( 𝛼 ) of long-tailed trajectories. Since prediction accuracy improves over time, Heddle incorpo- rates runtime traje ctory migration to rectify initial placement deviations by dynamically migrating trajectory contexts and To o l Execution Agentic Tr a j e c t or y 1 Agentic T rajectory 2 To o l Execution LLM Generation LLM Generation Rollout Worker 1 Rollout Worker 1 Environment 1 Environment 2 API Invocation API Invocation Figure 3: Existing agentic RL framework. prex caches. Crucially , Heddle masks this migration over- head by transmitting data asynchronously during tool-call intervals, keeping the critical execution path unblo cked. Ul- timately , this dual approach minimizes the interference of long-tailed trajectories while preserving high data lo cality . Third, trajectory-adaptive resource management optimizes the resource allocation problem ( how ) to match sp ecic tra- jectory characteristics. By breaking the rigid constraint of homogeneous worker congurations, Heddle assigns dis- tinct parallelism strategies to dierent traje ctories. It allo- cates low-latency , high-parallelism resources to accelerate the base per-token time ( 𝑇 ) of long-tail trajectories, while as- signing high-throughput, low-parallelism congurations to short trajectories. T o facilitate ecient online provisioning, we employ sort-initialized simulate d annealing (§6.2) that rapidly converges on a near-optimal parallelism mapping for each trajectory . This adaptive provisioning ensures that the execution of stragglers is accelerated without compromising the aggregate system throughput for short trajectories. In summary , we make the following contributions. • W e rigorously formulate the rollout makespan to decon- struct the system bottleneck. Our analysis isolates three critical performance bottlene cks for long-tail trajectories: queueing delay , interference overhead , and per-token time . • W e propose Heddle , a distributed agentic RL system with a trajectory-centric design. By integrating trajectory-level scheduling, traje ctory-aware placement, and trajector y- adaptive resource management, Heddle systematically optimizes when , where , and how computation occurs, ef- fectively neutralizing the long-tail bottleneck. • W e implement Heddle and evaluate it across diverse agen- tic RL workloads. The experimental results demonstrate that Heddle achieves up to 2.5 × higher throughput com- pared to state-of-the-art baselines. 2 Background and Motivation In this section, we introduce the backgr ound of agentic RL and conduct a comprehensive analysis of the rollout bottle- neck. Then, we summarize the systematic challenges, which motivate the design of Heddle . 2.1 Agentic RL Agentic Reinforcement Learning (agentic RL) [ 19 , 29 , 38 , 43 , 52 ] elevates LLMs into autonomous agents capable of dynamic environmental interaction. Departing from static 2 0 . 0 0 . 2 0 . 4 0 . 6 0 . 8 1 . 0 Normalized Agentic Trajectory Time 0 . 0 0 . 2 0 . 4 0 . 6 0 . 8 1 . 0 CDF Coding Search Math Figure 4: CDF of normalized agentic trajectory completion time. reasoning tasks [ 2 , 14 , 54 ], agentic RL emp owers LLMs to synthesize high-level plans and execute decisions within dy- namic environments. In this paradigm, during rollout, an LLM agent generates multi-step trajectories: at each step, it generates logical reasoning, invokes an external to ol, and observes the resulting state transition to calibrate its next action. This iterative loop continues until a terminal state is reached. In the training phase, the accumulated interaction trajectories are used to optimize the agent’s policy via algo- rithms like PPO [ 35 ] or GRPO [ 36 ], eectively grounding the LLM’s high-level planning in real-w orld execution. For instance, a coding agent [ 9 , 45 , 49 ] processing a high- level programming requirement generates a multi-step tra- jectory . In its initial step, the agent synthesizes a plan. Fol- lowing the plan, it then retrieves rele vant co debase context, generates candidate snippets, and triggers validation tests. It then integrates the resulting feedback into its context to iteratively debug errors across subsequent steps until all tests pass. As Figure 1 depicts, this looping process transforms a standard single-shot inference request into a long-running agentic trajectory characterized by the complex interleaving of LLM generation and external tool execution. Beyond traditional chatbots, agentic RL underpins production-grade systems like Claude Co de [ 7 ], De ep Re- search [ 30 ], and OpenClaw [ 4 ] that tackle intricate tasks via long-horizon tool interactions. As agentic RL integrates into LLM development pipelines, rollout eciency becomes a critical bottleneck. The ability to sustain high-throughput tra- jectory generation governs system eciency and dictates the nal model capabilities under realistic resource constraints. 2.2 System Characterization A standard RL training step comprises three phases: (1) roll- out (traje ctory generation), (2) inference (reward and r efer- ence computation), and (3) training (policy model update). As quantied in prior works [ 17 , 33 ], the rollout phase dom- inates the RL training pipeline, constituting a b ottleneck fundamentally rooted in a pronounced straggler eect . This straggler eect stems from the inherent long-tailed distributions of agentic trajectories. As Figure 2 illustrates for a representative coding agent task, both token generation and tool execution times are highly skewed. While most trajectories are computationally light and terminate quickly , 0 10 20 30 40 50 60 70 80 90 Prompt Index 0 5 10 15 Trajectory Index 0 10000 20000 Output Len. 𝝉 𝟐 " 𝝉 𝟏 " Figure 5: Trajectory length distribution across dierent prompts. a sparse subset demands extensive multi-step reasoning and prolonged tool interactions. In synchronous frameworks, these outliers become dominant stragglers, forcing cluster- wide idleness that disproportionately inates makespan and degrades RL training throughput. Ultimately , the rollout makespan is dictated by this crit- ical, longest trajector y . T o quantify this, Figure 4 proles trajectory completion times during agentic RL rollout using V erl [ 37 ] and SGLang [ 57 ], normalized against the maximum time to control for task variance. This proling reveals a severe latency tail, where the maximum completion time ex- ceeds the median by over 4 × . Because token counts ( 𝑁 tokens ) are algorithmically invariant and tool execution ( 𝑇 tool ) is ooaded to elastic ser verless infrastructure [ 5 , 6 , 21 , 55 ], our formulation isolates three GP U-centric targets per For- mula 1: queueing delay ( 𝑇 queue ), contention-induced interfer- ence overhead ( 𝑁 tokens · ( 𝛼 − 1 ) · 𝑇 ), and base per-token time ( 𝑁 tokens · 𝑇 ). Base per-token time is the contention-free time of average token generation at batch size one. 2.3 Challenges Based on the system characterization, we identify three key challenges in agentic RL rollout optimization: scheduling problem to minimize queueing delay , placement problem to minimize interference ov erhead, and resource allocation problem to minimize base per-token time. Scheduling. As shown in Figure 3, e xisting frameworks [ 3 , 37 ] decouple LLM generation from tool execution, treating steps as stateless r e quests. Consequently , sche duling defaults to round-robin [15] policy that ignores the agentic trajecto- ries, forcing long-tailed trajectories to accumulate excessive queueing delays acr oss multiple steps. While duration-based priority sche duling is a potential remedy , the high stochastic- ity of agentic rollouts renders static prediction ineective. As illustrated in Figure 5, identical pr ompts often yield highly divergent traje ctory lengths due to dynamic environment feedback. For instance, two trajectories ( 𝜏 1 and 𝜏 2 ) sharing a prompt might generate similar initial code; however , if 𝜏 2 fails the example test, it triggers multiple rectication steps, dras- tically extending its trajectory . This unpredictability makes minimizing queueing delay a fundamental challenge. Placement. Existing frameworks [ 3 , 37 ] rely on cache- anity or least-load placement, neither of which mitigates 3 1 32 64 96 128 160 Single W orker Batch Size 0 2 4 6 8 Normalized Max Time Coding Search Math Figure 6: Interference of long-tailed trajectories. the straggler eect. Cache-anity statically binds trajecto- ries to specic rollout workers to maximize prex cache hits. Howev er , unknown trajector y durations inevitably trig- ger severe load imbalance where some workers idle pre- maturely while others stall on long-tailed trajectories. Con- versely , least-load balancing redistributes trajectories p er- step, incurring prohibitive cache recomputation and exac- erbating the interference coecient ( 𝛼 ) of long-tailed tra- jectories. As shown in Figure 6, equalizing worker loads inadvertently co-locates long-tailed traje ctories with numer- ous short ones, forcing long-taile d traje ctories to execute at high batch sizes that inate per-token time through in- creased memor y and computation contention. Ultimately , existing placement methods fail to resolv e the straggler ef- fect be cause they lack a global, trajectory-aware persp ective of the agentic RL rollout. Resource Management. Existing frameworks [ 3 , 37 ] en- force rigid, homogeneous GP U congurations across all roll- out workers, ignoring the inherent heterogeneity of agentic trajectories. Spe cically , the large volume of short trajec- tories is throughput-bound and benets from low er mo del parallelism (MP) to minimize relative communication o ver- head. Conversely , long-taile d trajectories are latency-bound and require higher MP to reduce per-token time. As sho wn in Figure 7 (where 4 × 2 denotes four workers with two GP Us each), there is a latency-throughput trade-o: scaling data parallelism maximizes throughput but sacrices model par- allelism, severely inating per-token time. Consequently , as shown in Figure 11(a), each worker processes one agentic trajectory . Under homogene ous regimes, under-provisioned long-tail trajectories incur elevated p er-token time. This mismatch necessitates traje ctory-adaptive resource manage- ment that dynamically tunes MP degrees to align GP U re- sources with specic workload demands. 3 Heddle O verview W e propose Heddle , a distribute d system that mitigates agentic RL stragglers via a decoupled design (Figure 8). It separates global orchestration from local execution: a con- trol plane dictates the when , where , and how of traje ctory execution, while a data plane handles the underlying run- time. 8 × 1 4 × 2 2 × 4 1 × 8 0 5 10 15 Per- T oken Time (ms) Per- Token Time roughput 0 20000 40000 60000 80000 roughput (tokens/s) Figure 7: Performance under dierent resource allocation strategies. Control P lane. The control plane serves as the centralized brain of Heddle , maintaining a global view of cluster re- sources and agentic trajectory states. It is composed of three synergistic modules that collectively optimize the rollout. Trajectory-level Scheduler ( when ). The scheduler uses a trainable runtime predictor that fuses static prompt anal- ysis with dynamic runtime context to estimate trajector y length. Since prediction delity improv es monotonically as trajectory context accumulates, the sche duler adopts pro- gressive priority scheduling . By iteratively rening length estimates, it escalates the priority of long-taile d trajectories, granting them execution precedence to minimize cumulative queueing delay ( 𝑇 queue ). Trajectory-aware P lacement ( where ). This component maps agentic trajectories to rollout workers with a two-phase strat- egy . Initially , presorted dynamic programming spatially seg- regates long-tailed trajectories from short ones, minimizing the interference coecient ( 𝛼 ) of long-tailed traje ctories. At runtime, the engine monitors for load divergence caused by evolving pr ediction accuracy (e .g., initially misclassied long- tailed trajectories). Upon dete ction, it triggers opportunistic trajectory migration , instructing the data plane to migrate trajectories during non-blocking tool execution inter vals. Resource Manager ( how ). This component replaces homoge- neous provisioning with a trajectory-adaptive resource allo- cation plan tailored to the specic parallelism requirements of each agentic trajector y . It assigns high-degree model par- allelism (MP) to latency-sensitive long-tailed trajectories to accelerate per-token time ( 𝑇 ), while utilizing lower MP de- grees for throughput-oriented short trajectories. T o ol Manager . The to ol manager orchestrates tool invoca- tions via an elastic ser verless backend, eliminating clus- ter management overhead. By leveraging FaaS optimiza- tions [ 26 , 55 ], it eectively mitigates cold-start latencies and absorbs transient computational bursts during agentic roll- out. The pay-as-you-go billing model reduces the total cost of ownership compared to over-provisioned static resources. Data Plane. The data plane comprises a cluster of adaptive rollout workers that execute intensive agentic workloads. 4 Agentic Tra jec tor ie s Control Plane Data Plane Rollout W orker 1 Tra j e ct o r y Placement LLM Generation Queue GPU Resource Tra j e ct o r y R o ut e r Rollout W orker 2 Resource Manager Tra j e ct o r y Scheduler Queue GPU Resource LLM Generation To o l Manager Serverless Backend Environmen t 1 Environmen t 2 Agentic Traje cto ry Figure 8: Over view of Heddle . Upon receiving contr ol plane directives, these w orkers mate- rialize heterogeneous workers to orchestrate the interleaved execution of model reasoning and tool execution. Opportunistic State Migration. The data plane masks migra- tion overhead by exploiting the natural boundaries b etween model reasoning and tool execution. When a traje ctory trig- gers a tool call, the worker yields its GP U resources. Heddle leverages this idle interval to execute traje ctory migration , transferring the KV cache to a target worker designated by the control plane without pausing the critical e xecution path. Runtime T elemetr y . The data plane closes the feedback loop by streaming real-time execution metrics, such as current trajectory context, tool execution latency , and cache usage, back to the control plane. This telemetr y allows the runtime trajectory predictor to correct its estimates and enables the scheduler to rene priorities for subsequent steps. 4 Trajectory-level Scheduler T o mitigate the queuing delay of long-tail traje ctories in Formula 1, we propose trajector y-level scheduling , shifting agentic rollout fr om a step-centric to a traje ctory-centric par- adigm. As this appr oach relies on trajectory identication, we rst intr o duce progr essive trajector y prediction to dynami- cally identify potential long-tail trajectories at runtime. 4.1 Progressive trajectory Prediction Problem. Conventional prompt analysis [ 16 , 33 , 59 ] relies on static mechanisms that estimate agentic trajectory length a priori using historical data. However , these metho ds fail to capture the dynamic, multi-step nature of agentic rollouts. In RL algorithms like PPO [ 35 ] and GRPO [ 36 ], a single prompt spawns a group of agentic trajector y samples for advantage estimation. T o encourage exploration, high sampling temper- atures are often employed, inherently inducing high output variance. Furthermore , traje ctory length is also dictated by dynamic environmental feedback. For instance, in a coding agent, identical prompts can yield divergent trajectories: 𝜏 1 may pass example test cases in one step, while 𝜏 2 requires Pending Queue (Step 1) LLM Generation (Step 1) Pending Queue (Step 2) LLM Generation (Step 2) Accumulated Context Estimated Length PPS Main Path Async Path To o l Execution (Step 1) Tra j ec t or y Predictor Parallel Execution Figure 9: Progressive trajectory prediction. multiple rectication steps, signicantly extending 𝜏 2 ’s lifes- pan. Thus, as Figure 5 shows, trajectories within the same group exhibit signicant length divergence (i.e., intra-group variance). This runtime stochasticity renders static predic- tion ineective for precise agentic trajectory prediction. Methodology . W e propose progressive trajector y prediction to capitalize on the iterative nature of agentic interaction. As shown in Figure 9, the predictor monotonically renes length estimates as LLM generations and tool outputs enrich the agentic trajector y’s context. Notably , the initial step’s execution plan serves as a strong semantic indicator , anchor- ing the prediction with an accurate early-stage estimate. T o train the predictor , we harvest historical traje ctories and de- compose them into (context, remaining_length) tuples. W e le verage these data to ne-tune a lightweight pre-trained regression model (i.e., Qwen-0.6B). At runtime , Heddle in- vokes this model after each step to up date estimates and progressively r e duce uncertainty . W e address potential overhead concerns through ecient model design. First, training cost is trivial. The lightweight regression model requires only minutes to converge. Sec- ond, deployed as a microservice, the mo del incurs negligible inference latency due to its compact parameter size. Cru- cially , as shown in Figure 9, the prediction is performed asynchronously alongside tool execution. This parallelism eectively masks the inference cost, ensuring zero additional overhead on the agentic trajectory’s critical path. 4.2 Progressive Priority Scheduling Problem. As shown in Figure 3, existing agentic RL frame- works [ 3 , 37 ] are architected for stateless interactions, typ- ically decoupling LLM generation from tool execution. In this paradigm, the system views an agentic trajectory not as a continuous lifecycle, but as a fragmented sequence of independent requests. Consequently , scheduling defaults to a trajectory-agnostic round-robin policy , where the e xecu- tion quantum is rigidly limited to a single step regardless of the trajector y’s accumulated progress. Specically , every time a trajectory returns from a tool execution, it is treated as a de novo LLM generation request and relegated to the tail of the waiting queue. For a long-tail traje ctory requiring 𝑀 steps, this imposes a recurring queuing penalty across 𝑀 distinct scheduling rounds. By disproportionately penalizing 5 Algorithm 1 Progressive Priority Scheduling Require: Pending queue 𝑄 , active set 𝐴 , predictor P // Invoked when trajector y 𝜏 returns from tool execution 1: function Schedule ( 𝜏 ) 2: 𝜏 . pred_len ← P ( 𝜏 . context ) ⊲ progressive prediction 3: 𝜏 . priority ← 𝜏 . pred_len ⊲ longer ⇒ higher priority 4: Insert 𝜏 into 𝑄 5: Sort 𝑄 by descending priority // Preemptive execution 6: 𝑟 min ← arg min 𝑟 ∈ 𝐴 𝑟 . priority 7: if 𝑄 . top . priority > 𝑟 min . priority then 8: Evict 𝑟 min from 𝐴 ; persist K V cache 9: Move 𝑟 min to 𝑄 10: Promote 𝑄 . top to 𝐴 long-tail trajectories with compounding queuing delays, this approach prov es detrimental to the global rollout makespan. Methodology . T o optimize rollout makespan, we use pro- gressive priority scheduling (PPS), an adaptive approxima- tion of the longest-processing-time-rst (LPT) discipline [ 13 ]. LPT minimizes batch makespan by strictly prioritizing long- duration tasks, thereby mitigating the cluster-wide idleness caused by the trailing stragglers. PPS operationalizes this principle by dynamically mapping the progressive trajectory prediction to scheduling priorities. As execution unfolds and prediction delity improves, PPS r eorders the LLM inference requests in the pending queue for each rollout worker . This ensures that identied long-tail trajectories gain execution precedence over short trajectories. Preemptive Exe cution. T o rigorously enforce the LPT dis- cipline, we integrate preemptive execution into SGLang [ 57 ]. This mechanism extends prioritization beyond the waiting queue, enabling high-priority pending generation requests to interrupt active low-priority r equests. Specically , whenev er a pending r equest outranks the lowest-priority active request, the system preempts the active request with the low est pri- ority , relegating it to the waiting queue while persisting its prex cache. The high-priority request is immediately pro- moted to the newly vacated slot. This guarantees immediate execution for high-priority requests, signicantly minimiz- ing the queuing latency of long-tail trajectories. Algorithm 1 illustrates the detailed pseudocode. 5 Trajectory-aware P lacement T o mitigate the interference factor of long-tailed traje ctories in Formula 1, we propose traje ctory-aware placement . W e begin by formalizing the placement optimization problem. Subsequently , we introduce our solution: optimal presorted dynamic programming algorithm, augmented by runtime trajectory migration. 5.1 Problem formulation In agentic rollout, multiple LLM r ollout workers are deployed to execute concurrent trajectories through parallel batching mechanisms. However , this introduces inevitable interfer- ence for token computation due to GP U resour ce contention. W e assume that the average base per-token time ( batch size = 1 ) is a constant 𝑇 and let 𝐿 denote the trajector y length function. Given 𝑛 agentic trajectories { 𝜏 1 , . . . , 𝜏 𝑛 } and 𝑚 LLM rollout workers, we seek a partitioning strategy { 𝑔 1 , . . . , 𝑔 𝑚 } , where 𝑔 𝑖 contains a set of agentic trajectories { 𝜏 𝑖 1 , . . . , 𝜏 𝑖 𝑘 } and 𝑔 𝑖 is assigned to the 𝑖 -th worker . W e dene 𝐹 ( 𝑔 𝑖 ) as the interference factor for group 𝑔 𝑖 . T o minimize the global rollout makespan, we dene the optimization objective as: min { 𝑔 1 ,. . ., 𝑔 𝑚 } 𝑚 max 𝑖 = 1 𝐹 ( 𝑔 𝑖 ) × 𝑘 max 𝑗 = 1 𝐿 ( 𝜏 𝑖 𝑗 ) × 𝑇 (2) The goal is to minimize the completion time across 𝑚 groups, where the group execution time is the product of its interfer- ence factor and the duration of its longest trajectory . This optimization problem is fundamentally NP-har d. In heterogeneous settings (i.e., resource congurations vary across w orkers), the search space scales as 𝑂 ( 𝑚 𝑛 ) ; ev en in the homogeneous case, complexity is governed by the Stirling number of the se cond kind, 𝑆 ( 𝑛, 𝑚 ) [ 40 ]. This combinatorial explosion renders exact enumeration intractable. Further- more, the interference function 𝐹 lacks a close d-form ana- lytic expression, pr eventing the use of o-the-shelf solvers. These barriers preclude exact resolution during the agentic RL rollout, necessitating our specialized presorted dynamic programming algorithm. 5.2 Presorted D ynamic Programming W e ground our placement algorithm on some simplifying premises. First, w e treat trajectory lengths as known a priori . Since the initial prediction remains subject to estimation errors (§ 4.1), we address the prediction deviations via tra- jectory migration (§ 5.3). Second, we model the cluster as homogeneous workers with the same resource congura- tions. W e subsequently relax this constraint in § 6, extending our solution to heterogeneous worker congurations. Finally , we posit that the interference factor is a monotonically in- creasing function determined exclusively by the size of the agentic trajectory group, a property that holds empirically for standard workloads. Insight. W e derive a critical structural insight: Lemma 5.1. Given agentic trajectories in descending length order , there exists an optimal partitioning strategy where each group constitutes a contiguous subsequence of the sorted list. Proof. Consider an optimal partition with two (generaliz- able to 𝑚 ) groups 𝑔 1 and 𝑔 2 , where 𝜏 1 ∈ 𝑔 1 and 𝐿 ( 𝜏 1 ) ≥ 6 · · · ≥ 𝐿 ( 𝜏 𝑛 ) . Suppose the partition is non-contiguous. Then there exists 𝜏 𝑖 ∈ 𝑔 2 such that 𝐿 ( 𝜏 𝑖 ) > 𝐿 ( 𝜏 𝑚𝑖𝑛 ) for some 𝜏 𝑚𝑖𝑛 ∈ 𝑔 1 . W e swap 𝜏 𝑖 and 𝜏 𝑚𝑖𝑛 . Be cause group sizes are invariant, interference factors 𝐹 ( 𝑔 1 ) and 𝐹 ( 𝑔 2 ) remain un- changed according to the aforementioned premise. In 𝑔 1 , the makespan is still dictated by 𝜏 1 since 𝐿 ( 𝜏 1 ) ≥ 𝐿 ( 𝜏 𝑖 ) , so 𝑔 1 ’s completion time is unchanged. In 𝑔 2 , replacing 𝜏 𝑖 with the shorter 𝜏 𝑚𝑖𝑛 ensures the maximum trajectory length is non-increasing. Consequently , the global rollout makespan is non-increasing. Iterative swaps eventually yield a contiguous partition { 𝑔 ∗ 1 , 𝑔 ∗ 2 } satisfying Lemma 5.1 without increasing the rollout makespan of the optimal original partition. Leveraging this insight, we presort agentic traje ctories by descending length and enforce a contiguity constraint on partitioning, which guarantees that the optimal solution derived under this constraint is globally optimal. Dynamic Programming. Given the presorted order , our problem becomes analogous to the linear partition prob- lem [ 39 ]. The contiguous partitioning constraint r educes the search space from the Stirling number of the second kind, 𝑆 ( 𝑛, 𝑚 ) , to 𝑛 − 1 𝑚 − 1 . While signicantly smaller , this magnitude remains intractable for runtime enumeration. T o address this, we propose a dynamic programming algorithm that eciently resolves the optimal partition solution. State Initialization. W e dene 𝑑 𝑝 [ 𝑖 ] [ 𝑗 ] as the optimal makespan when partitioning the rst 𝑖 trajectories across 𝑗 workers. The initialization focuses on the single worker case ( 𝑗 = 1 ). For 𝑖 ∈ [ 1 , 𝑛 ] , we set 𝑑 𝑝 [ 𝑖 ] [ 1 ] = 𝐿 ( 𝜏 1 ) × 𝑇 × 𝐹 ( { 𝜏 1 , . . . , 𝜏 𝑖 }) , with the b oundary condition 𝑑 𝑝 [ 0 ] [ 0 ] = 0 . In this formulation, 𝐿 ( 𝜏 1 ) represents the maximum trajectory length, 𝐹 captures the interference factor , and 𝑇 denotes the base per-token time. State Transition. The state transition function is dened as: 𝑑 𝑝 [ 𝑖 ] [ 𝑗 ] = 𝑖 − 1 min 𝑘 = 1 max 𝑑 𝑝 [ 𝑘 ] [ 𝑗 − 1 ] , 𝐿 ( 𝜏 𝑘 + 1 ) × 𝑇 × 𝐹 ( { 𝜏 𝑘 + 1 , . . . , 𝜏 𝑖 }) (3) Formula 3 recursively identies the optimal partition index 𝑘 separating the 𝑗 -th group from the preceding 𝑗 − 1 groups. The variable 𝑘 iterates through feasible split positions (i.e ., 𝑘 ∈ [ 1 , 𝑖 − 1 ] ), assigning { 𝜏 1 , . . . , 𝜏 𝑘 } to previous workers and { 𝜏 𝑘 + 1 , . . . , 𝜏 𝑖 } to the current 𝑗 -th worker . The inner max operator models the parallel execution nature , i.e ., the global makespan is dictated by the maximum worker’s makespan. Crucially , due to descending order sorting, 𝐿 ( 𝜏 𝑘 + 1 ) repre- sents the dominant length in the current group. Finally , the outer min operator selects the 𝑘 that minimizes the global makespan, ensuring load balancing across workers. The combination of presorting and dynamic programming yields a globally optimal solution based on Lemma 5.1. Fig- ure 10 illustrates the trajector y of this algorithm. Its time Input & Presorting Input Agentic T rajectories (with Pred Length) Presorting Sorted Trajectories Lemma 5.1 Optimal groups: 𝑔 ! 𝑔 " 𝑔 # Contiguous 𝜏 ! 𝜏 " 𝜏 # 𝜏 $ … 𝑔 ! 𝑔 " 𝑔 % Sorted Trajectories 𝑑𝑝 [𝑖][ 𝑗] Worke rs 1 2 … 𝑛 1 2 … 𝑚 State Transition 𝑑𝑝 𝑖 𝑗 = 𝑚𝑖𝑛 !"# $%& max + ( 𝑑𝑝 𝑘 𝑗 − 1 , 𝐿 𝜏 !'& ∗ 𝑇 ∗ 𝐹(𝑔 ( )) Previous workers max time Longest agentic trajectory in the 𝑗 !" worker Interference Factor Profiler Simulator System Execution Tr aj e c t o ry Metadata ID, Placement, Length … Placement Strategy Trajectory Router (Rust- based) • Fast forward • Tra je ct o ry m ig r at io n Worke r 1 Worke r 2 Worke r m … Traj ecto ry Mig rati on 𝑔 ! : 𝜏 ! , 𝜏 " 𝑔 " : 𝜏 # , 𝜏 & … 𝑔 % : 𝜏 ' , 𝜏 '(! … 𝜏 ) Rollout Execution Minimized Rollout time … Tr aj e c t o ri e s Dynamic Programming Figure 10: Illustration of the placement algorithm. complexity is 𝑂 ( 𝑛 2 𝑚 ) , a drastic reduction from the combina- torial baseline 𝑂 ( 𝑛 − 1 𝑚 − 1 ) . However , for large-scale workloads, 𝑂 ( 𝑛 2 𝑚 ) remains computationally substantial. T o further mit- igate the overhead, we aggregate short trajectories below a dened threshold after sorting. This heuristic reduces the eective input size 𝑛 , signicantly accelerating execution with negligible impact on solution quality . Interference Factor . T o derive the interference factor 𝐹 ( 𝑔 𝑖 ) , we employ a proler-based simulation approach. W e rst construct a proler to characterize per-token time across a spectrum of batch sizes. These proles feed into a simulator that models the concurrent execution of trajectories in 𝑔 𝑖 , capturing batch-dependent interference and queuing delays. Agentic Trajectory Router . W e implement a lightweight, Rust-based router to dispatch LLM generation requests. This component maintains essential trajector y metadata, includ- ing placement assignments, predicted lengths, and presorted ranks. During initialization, the router synchronizes with the LLM rollout workers. Then, it ingests the partitioning strategy from the control plane and routes each trajectory to its designate d worker , strictly enforcing the placement decisions derived from presorted dynamic programming. 5.3 Trajectory Migration The presorted dynamic programming algorithm optimizes placement under the assumption of perfect prediction accu- racy . Howe ver , as noted in § 4.1, prompt-only estimates suer from inherent variance. Relying solely on this static assign- ment exacerbates the resource contention for unanticipated long-tailed trajectories. T o mitigate this, we emplo y a run- time mechanism that adapts placement base d on pr ogressive prediction updates. Trajectory Migration Strategy . W e employ trajectory mi- gration to dynamically correct load imbalances. When a trajectory’s predicted length is updated, the trajectory router determines its new rank in the sorte d order . T o avoid re- running the e xp ensive dynamic programming algorithm, we 7 scale the original partition sizes, dened by each group ’s size { 𝑠 1 , . . . , 𝑠 𝑚 } ( 𝑠 𝑖 = | 𝑔 𝑖 | ), proportionally to the number of remaining active trajectories, 𝑛 ∗ . Spe cically , the eective capacity of the 𝑖 -th group becomes 𝑠 𝑖 × 𝑛 ∗ 𝑛 . Using these scaled sizes, the router identies the appropriate worker for the trajectory’s new rank and executes a migration if the target worker diers from the current host. KV Cache Migration. T o mitigate the prell overhead for long-tailed trajectories, we implement a live KV cache migra- tion me chanism. Up on reassignment, the router identies the resident prex cache and initiates a direct transfer to the target worker via GP U-Direct RDMA, ensuring high- throughput and low-latency transmission. T o manage con- current migrations and prevent endp oint contention, we implement a trajectory-aware transmission scheduler . The router prioritizes migration requests in descending order of trajector y length. In each scheduling epo ch, it greed- ily sele cts the longest available trajectory’s migration re- quest, skipping any request that shares a source or desti- nation worker with selected or running migration requests. This transmission scheduler iteratively constructs batches of strictly parallel, non-conicting migration requests. By prioritizing long trajectories and enforcing endpoint exclu- sivity , this strategy maximizes bandwidth utilization while ensuring timely migration for critical long-tailed trajectories. 6 Trajectory-adaptive Resource Manager T o reduce the base p er-token time ( 𝑇 in Formula 1) of long- tailed trajectories while preserving the high throughput of short trajectories, we propose a trajectory-adaptive resour ce manager . W e formally dene the allocation problem and em- ploy a simulated annealing algorithm to eciently converge to a near-optimal solution. 6.1 Problem Formulation In Heddle ’s agentic rollout, we deploy multiple LLM work- ers with heterogeneous resource congurations and model parallelism strategies. As illustrated in Figure 11( b), our core insight is to provision workers with higher degrees of model parallelism for long-tailed trajectories (to minimize per-token time) while assigning lower degrees of model par- allelism to short trajectories (to maximize throughput). For- mally , we allocate a total GP U budget 𝑁 = Í 𝑚 𝑖 = 1 𝑁 𝑖 across 𝑚 workers with model parallelism (MP) degrees { 𝑁 1 , . . . , 𝑁 𝑚 } . Transitioning to heterogeneous workers complicates the original placement problem formulation (§ 5.1) by requiring the joint optimization of trajector y partitions { 𝑔 𝑖 } and MP al- locations { 𝑁 𝑖 } . T o maintain tractability , we decouple it into two manageable subproblems, i.e., mapping and resource allocation. solved via a two-stage heuristic: sort-initialized simulated annealing . Resource Idle Resource Idle MP=2 Tok e n Ge ne r at io n MP=4 (a) (b) MP=2 MP=2 MP=2 Time Reducti on Figure 11: Insight of heterogeneous resource allocation. 6.2 Sort-Initialized Simulated Annealing Mapping. The key insight for our mapping strategy is that the trajectory ordering established in § 5.2 serves as a str ong structural prior . Specically , the partitions { 𝑔 1 , . . . , 𝑔 𝑚 } are inherently sorted in descending order of their predicted lengths. Since our objective is to assign long-tailed trajecto- ries to workers with higher model parallelism (to minimize per-token time), we identically sort the worker resources { 𝑁 1 , . . . , 𝑁 𝑚 } in descending order of their model parallelism degrees. W e then deterministically assign the 𝑖 -th trajector y partition 𝑔 𝑖 to the 𝑖 -th worker possessing 𝑁 𝑖 GP Us. Resource Allocation. Optimizing the GP U allo cation { 𝑁 1 , . . . , 𝑁 𝑚 } is critical, as each 𝑁 𝑖 dictates the per-token time for partition 𝑔 𝑖 and the resulting global makespan (§ 5.1). A naive baseline would exhaustiv ely enumerate all valid al- locations, evaluate the completion time via the presorted dynamic programming (DP) algorithm for each, and select the global minimum. However , a single DP execution re- quires O ( 𝑚 · 𝑛 2 ) time, taking approximately 42 milliseconds for 𝑛 = 6400 and 𝑚 = 16 (§ 7.5). Given the total GP U budget 𝑁 = Í 𝑚 𝑖 = 1 𝑁 𝑖 , the number of valid allocations scales as 𝑁 − 1 𝑚 − 1 . Consequently , an exhaustive search across this combinatori- ally explosive space is computationally intractable. T o eciently navigate this space, we use a simulated an- nealing heuristic. W e initialize the search with a randomized, sorted allo cation: each capacity 𝑁 𝑖 is sampled from prede- ned model parallelism degrees, and the resulting array is sorted in descending order . W e set the initial annealing tem- perature to the starting state’s estimated completion time, computed by applying the sort-initialize d mapping and exe- cuting the presorted DP algorithm to determine trajectory placement. During each search iteration, we perturb the current allocation by randomly applying one of three state transitions: redistribution , split , or merge . While we uncon- ditionally accept a proposed allocation that reduces overall completion time, we also accept suboptimal states with a temperature-dependent probability to escape local optima. The search terminates when the temperature drops below a predened threshold 𝜖 , yielding the nal conguration for the agentic rollout. Algorithm 2 details this pseudocode. 8 Algorithm 2 Sort-Initialize d Simulated Annealing Require: T otal GPU budget 𝑁 , w orkers 𝑚 , MP degrees D , cooling rate 𝛼 , threshold 𝜖 Ensure: Optimal allo cation { 𝑁 ∗ 1 , . . . , 𝑁 ∗ 𝑚 } 1: Sample 𝑁 𝑖 ∼ D for 𝑖 ∈ [ 1 , 𝑚 ] s.t. Í 𝑖 𝑁 𝑖 = 𝑁 2: Sort { 𝑁 1 , . . . , 𝑁 𝑚 } in descending order ⊲ sort-initialized 3: 𝐶 ← PresortedDP ( { 𝑁 1 , . . . , 𝑁 𝑚 }) ⊲ initial makespan 4: 𝑇 ← 𝐶 ; 𝐶 ∗ ← 𝐶 ; { 𝑁 ∗ } ← { 𝑁 } 5: while 𝑇 > 𝜖 do 6: { 𝑁 ′ } ← Perturb ( { 𝑁 } ) ⊲ redistribute / split / merge 7: Sort { 𝑁 ′ 1 , . . . , 𝑁 ′ 𝑚 } in descending order 8: 𝐶 ′ ← PresortedDP ( { 𝑁 ′ 1 , . . . , 𝑁 ′ 𝑚 }) 9: Δ ← 𝐶 ′ − 𝐶 10: if Δ < 0 or rand ( ) < 𝑒 − Δ / 𝑇 then 11: { 𝑁 } ← { 𝑁 ′ } ; 𝐶 ← 𝐶 ′ 12: if 𝐶 < 𝐶 ∗ then 13: 𝐶 ∗ ← 𝐶 ; { 𝑁 ∗ } ← { 𝑁 } 14: 𝑇 ← 𝛼 · 𝑇 15: return { 𝑁 ∗ 1 , . . . , 𝑁 ∗ 𝑚 } By coupling sort-initialized mapping with simulated annealing-based allocation, w e cr eate a robust heterogeneous management heuristic. While the former aligns long-tailed trajectories with high MP workers, the latter navigates the combinatorial search space to optimize GP U provisioning. Jointly , these mechanisms mitigate long-trajector y computa- tion bottlenecks and preserve short-trajectory throughput, ultimately minimizing the global rollout makespan. 7 Evaluation W e implement Heddle based on V erl [ 37 ], SGLang [ 57 ], and Ray [ 28 ] with 15K lines of Rust, Python, and C++. In our eval- uation, we rst evaluate the overall performance of Heddle compared to state-of-the-art agentic RL systems, and then we conduct ablation studies to evaluate the eectiveness of each component of Heddle . In our ablation studies (§ 7.2-§ 7.4), we evaluate each component individually while keeping all other system components and experimental congurations identical to the overall performance evaluation. Finally , we analyze the overhead of Heddle ’s key components in § 7.5. T estb ed. W e conduct our experiments on a cluster of eight servers, totaling 64 GP Us. Each no de is equippe d with eight NVIDIA Hopper GP Us, 160 Intel Xeon CP U cores, and 1.8 TB of host memor y . Intra-no de GP U communication utilizes 900 GB/s N VLink, while inter-node networking is handled by 400 Gb/s N VIDIA Mellanox InniBand supp orting GP UDirect RDMA. Our system software stack includes Py T orch 2.8.0 and CUD A 12.2 (driver 535.161.08). For distributed orchestration and messaging, we use Ray 2.49.0 and ZMQ 4.3.5. Models. W e evaluate Heddle with instruction-tuned Qwen3 [ 48 ] mo dels (8B, 14B, and 32B) possessing founda- tional reasoning and tool-use capabilities. By b enchmarking across these three parameter scales, we evaluate Heddle ’s performance against baselines across a diverse spectrum of computational intensities and resource budgets. W orkloads. W e evaluate Heddle across three representa- tive agentic RL domains: coding, search, and math. For the coding agent [ 49 ], we utilize the CodeForces dataset [ 24 ], equipping the LLM with a sandb ox tool to execute code, run test suites, and check formatting. The search agent [ 19 ] op- erates on the HotpotQA dataset [ 50 ], utilizing a web search tool [ 27 ] for online information retrieval and multi-hop rea- soning. Lastly , the math agent [ 12 ] leverages the DAPO-Math dataset [ 1 ], employing calculator and solver tools to resolve mathematical problems. A cross all agentic rollouts, we en- force a maximum output length of 40K tokens and generate 16 samples per prompt using the GRPO [ 36 ] RL algorithm. W e congure LLM generation hyperparameters with a tem- perature of 1 . 0 and a top- 𝑝 of 0 . 9 , aligning with standard LLM post-training practices [31, 42]. Baselines. W e compar e Heddle against two state-of-the-art open-source agentic RL frameworks: • Slime [ 3 ] is an open-source RL training framework built on SGLang [ 57 ] that employs a customized router to dis- patch agentic trajectories. It achieves load balancing by dynamically routing individual LLM generation requests at each step to the least-loaded worker . • V erl [ 37 ] provides highly scalable agentic training abstrac- tions alongside a hierar chical, hybrid programming model. T o optimize prex cache utilization, it utilizes a static, cache-aware placement strategy , pinning each complete agentic trajectory sample to a de dicated worker . • V erl* extends V erl by integrating the SGLang-router [ 57 ]. It employs a hybrid placement strategy: if the load ske w (max/min) exceeds a threshold (e.g., 32), it uses least-load strategy; otherwise, it defaults to a cache-aware strategy . Notably , both baselines inherently rely on r ound-robin sched- uling and homogeneous resource allocation during agentic rollout. Given that Slime is strictly coupled to SGLang, we standardize our evaluation by conguring SGLang as the rollout backend for V erl as well to ensure a fair comparison. 7.1 Overall Performance W e rst benchmark Heddle against Slime and V erl. For the baseline systems, which rely on homogeneous resource allo- cation, we congure the mo del parallelism degrees of 1, 1, and 2 for the Q wen3-8B, 14B, and 32B variants, respectively , to ensure maximum thr oughput. W e x the baseline batch size at 100 per LLM rollout worker and apply the same global batch size to Heddle . In contrast to the baselines, Heddle 9 Qwen8B Qwen14B Qwen32B 0k 20k 40k 60k 80k Rollout roughput Qwen8B Qwen14B Qwen32B 0k 25k 50k 75k 100k Rollout roughput Qwen8B Qwen14B Qwen32B 0k 25k 50k 75k 100k Rollout roughput Slime V erl* V erl Heddle (a) Coding agent. (b) Search agent. (c) Math agent. Figure 12: Rollout throughput of agentic RL systems under dierent workloads and models. T ail 20% recall T ail 10% recall Pred len pearson 0 . 0 0 . 2 0 . 4 0 . 6 0 . 8 1 . 0 Score T ail 20% recall T ail 10% recall Pred len pearson 0 . 0 0 . 2 0 . 4 0 . 6 0 . 8 1 . 0 T ail 20% recall T ail 10% recall Pred len pearson 0 . 0 0 . 2 0 . 4 0 . 6 0 . 8 1 . 0 Model-Based History-Based Heddle -1 Heddle -2 (a) Coding agent. (b) Search agent. (c) Math agent. Figure 13: Precision of progressive trajectory prediction under dierent workloads and models. leverages the heter ogene ous resource congurations derived from our trajectory-adaptive manager (§ 6). Given our fo- cus on accelerating agentic RL r ollouts, we use end-to-end rollout throughput (tokens/s) as the performance metric. Figure 12 presents the end-to-end rollout throughput across various agentic RL tasks and mo del sizes. Heddle out- performs both baselines, achieving speedups of 1 . 4 × – 2 . 3 × over V erl, 1 . 1 × – 2 . 4 × over V erl* , and 1 . 2 × – 2 . 5 × over Slime. Notably , these performance gains amplify as model size in- creases. This is because larger models inherently suer from severe computation and memory contention, which height- ens the interference factor of the long-tail trajectories. This bottleneck signicantly degrades the baseline performance. Heddle , howev er , successfully mitigates this through its trajectory-aware placement. Comparing the baselines, Slime demonstrates superior eciency on coding and math agent tasks by utilizing a customized router to mitigate load imbal- ance across r ollout workers. Conversely , V erl outperforms Slime on the search agent due to the task’s shorter sequences and frequent interaction steps, which amplify prell over- head and prioritize pr ex cache locality . In such cache-heavy scenarios, V erl’s cache-aware placement proves mor e ee c- tive. V erl* generally demonstrates intermediate performance between V erl and Slime, representing a heuristic trade-o between cache anity and load balance. Heddle , howe ver , implements a superior trajector y-aware placement strategy that simultaneously maximizes prex cache hit rates and minimizes interference from long-tailed trajectories, outper- forming both baselines across all task types. 7.2 Eectiveness of traje ctory-level Sche duling In this subsection, we evaluate the scheduler from tw o p er- spectives: the precision of progressive trajectory prediction, and the eectiveness of the progressiv e priority scheduling. Prediction. T o assess the precision of the progressive tra- jectory prediction, we compare Heddle ’s predictor against two prompt-based baselines: model-based and history-based prediction. Model-based prediction [ 59 ] employs a light- weight deep learning model to infer prompt complexity , while history-based prediction [ 16 , 33 ] estimates it with sta- tistical heuristics from historical rollout data. In contrast, Heddle leverages both the initial prompt and the runtime- generated context to dynamically predict the complexity of an active agentic trajectory . For our evaluation metrics, we report the recall of long-tailed trajectories (ranging from 0 to 1 ) to measure the precision of relative trajectory length pre- dictions, alongside the Pearson correlation coecient ( − 1 to 1 ) to quantify the relationship between predicted and actual trajectory lengths. Figure 13 presents the pr e diction precision for the Q wen3- 14B model across three agentic tasks. Here , Heddle -1 and Heddle -2 denote the predictions following the rst and sec- ond agentic steps, respectively . Notably , Heddle consistently achieves a higher r ecall and Pearson correlation coecient than the baselines. Furthermore, Heddle -2 outperforms Hed- dle -1 since runtime-generate d context enables progr essively more accurate trajectory length predictions. 10 FCFS RR Aut ellix Heddle Coding 0 500 1000 Rollout Time (s) FCFS RR Aut ellix Heddle Search 0 100 200 FCFS RR Aut ellix Heddle Math 0 250 500 750 eueing Delay Other Time Figure 14: Performance of trajectory-level scheduler. Scheduling. T o evaluate Heddle ’s progressive priority scheduling, we compare it against FCFS, Round-Robin (RR) and A utellix [ 25 ]. While existing agentic RL frameworks de- fault to RR, Autellix employs a shortest-job-rst policy to prevent head-of-line blocking in online LLM agent serving. W e measure the rollout time and the queueing delay of the longest-tailed trajectory using Qwen3-14B. Be cause an agen- tic trajector y submits an LLM generation request at every agentic step, we dene the trajectory’s queueing delay as the sum of the queueing delays incurred across all its steps. As Figure 14 shows, Heddle reduces end-to-end rollout time by 1 . 1 × – 1 . 26 × relative to the baselines. This performance gain stems primarily from minimizing the queueing delays. These results validate the ee ctiveness of our progressive priority scheduling. 7.3 Eectiveness of traje ctory-aware Placement T o evaluate Heddle ’s traje ctory-aware placement, we com- pare it against two baseline placement strategies: • Least-load routes p er-step LLM generation requests to the least-loaded worker if load imbalance e xce eds a con- gurable thr eshold. Otherwise, it r outes the request to the worker with the longest cached prex. • Cache-aware routes per-step LLM generation requests to the worker with the maximum prex cache match, entirely disregarding potential load imbalances. W e evaluate the end-to-end rollout throughput using the Qwen3-14B model. As Figur e 15 illustrates, Heddle achieves a 1 . 2 × – 1 . 5 × higher throughput than both baselines. While the cache-aware method maximizes prex cache hits via static assignments, the highly ske wed output length of agen- tic traje ctories induces severe load imbalance with this method. The least-load method guarantees worker load bal- ance while preserving a degree of cache eciency . However , this method ignores the holistic agentic trajectory context, treating p er-step requests in isolation and incurring high interference for long-tailed trajectories. Heddle overcomes these limitations by le veraging a trajectory-aware placement strategy , i.e., combining presorted dynamic programming with runtime trajectory migration, to explicitly minimize the completion time of these critical long-tailed traje ctories, thereby reducing the overall r ollout makespan. Coding Search Math 0k 20k 40k 60k 80k Rollout roughput Least-Load Cache- A ware Heddle Figure 15: Performance of trajectory-aware placement. Coding Search Math 0 25 50 75 Rollout r oughput 0 50 100 150 0 2000 4000 6000 Active Trajectories Rollout End Fix-1 Fix-8 Heddle (a) Rollout throughput. (b) Active trajectories over time. Figure 16: Performance of trajectory-adaptive resource management. 7.4 Eectiveness of Resource Manager T o evaluate Heddle ’s traje ctory-adaptive resource manage- ment, we compare it against homogeneous resource allo- cation baselines, where identical compute r esources are as- signed to each rollout worker . W e evaluate mo del parallelism sizes of 1 (Fix-1) and 8 (Fix-8), representing throughput- optimized and latency-optimized congurations, respec- tively . W e measure the end-to-end rollout thr oughput with the Qwen3-14B model. Figure 16(a) demonstrates that Hed- dle achieves a 1 . 1 × – 1 . 3 × speedup over both static baselines. Figure 16( b) tracks the number of active trajectories over time for the search agent. While Fix-1 delivers peak initial throughput, its slow per-token time of long-tailed trajecto- ries ultimately bottlene cks the entire rollout. Conversely , Fix-8 minimizes per-token time for long-tailed trajectories at the tail end of e xecution, but its persistently low ov erall throughput throttles the rollout. Heddle resolves this per- formance trade-o by dynamically achie ving lo w-latency for long-tailed trajectories while sustaining high throughput for short trajectories, thereby outperforming both baselines. 7.5 System Overhead Data Plane. In this subse ction, we rst quantify the system overhead introduced by progressiv e trajectory prediction (§ 4.1) and runtime trajectory migration (§ 5.3). Prediction overhead represents the latency of trajectory-length esti- mation, while migration overhead accounts for the time re- quired to transfer a trajectory’s prex cache between work- ers. Notably , Heddle overlaps these two operations with standard tool execution. T able 1 details the average to ol 11 Models Coding Search Math Qwen3-8B T ool Exec. (s) 0.46 1.42 0.051 Pred. (s) 0.103 0.15 0.26 Migration (s) 0.26 0.12 0.24 Qwen3-14B T ool Exec. (s) 0.41 1.41 0.046 Pred. (s) 0.099 0.15 0.27 Migration (s) 0.27 0.15 0.25 Qwen3-32B T ool Exec. (s) 0.45 1.43 0.054 Pred. (s) 0.104 0.16 0.28 Migration (s) 0.35 0.27 0.33 T able 1: Prediction and migration overhead in Heddle . execution times alongside the prediction and migration over- heads across various workloads. As Heddle parallelizes these tasks, both overheads are eectively masked by tool exe- cution in most scenarios. Even when tool execution is ex- ceptionally brief, the exposed ov erhead remains negligible relative to the tens of seconds for per-step LLM generation. Control Plane. W e ne xt quantify the algorithmic ov erheads of placement (§ 5) and resource management (§ 6) executed by the Ray controller w orker . As T able 2 shows, placement overhead is negligible compared to the hundreds of seconds required for total r ollout. Furthermore, the resour ce manage- ment algorithm executes only periodically , amortizing its cost across multiple training steps and rendering its system impact inconsequential. 8 Discussion Asynchronous RL. Asynchronous RL [ 59 ] enhances RL throughput via partial rollout [ 41 ] but requires staleness thresholds to prevent gradient bias and preserve training convergence. While this maximum staleness requirement maintains training stability , it does not resolve the b ottleneck of long-tailed agentic traje ctories. Heddle can be seamlessly integrated with staleness-b ounded asynchronous RL, acceler- ating these stragglers without introducing additional p olicy divergence or policy staleness. PD Disaggregation. Prell-decode (PD) disaggregation [ 32 , 58 ] optimizes LLM serving by applying heterogeneous model parallelism (MP) across prell and decode stages. Howev er , it maintains homogeneous MP within each stage ’s worker pool. Heddle is orthogonal to this approach and can be seamlessly integrated to provide intra-stage heterogeneity . For instance, Heddle can dynamically assign higher mo del parallelism to long-tailed trajectories within the prell po ol, further accelerating agentic rollouts. Speculative Decoding. Spe culative decoding (SD) [17, 23] accelerates LLM decoding by using a small draft model to generate candidate tokens, which are then veried in paral- lel by the target model. How ever , SD degrades throughput at large batch sizes due to the computational overhead of Models Coding Search Math Qwen3-8B Placement (s) 0.036 0.037 0.036 Resource manager (s) 5.69 5.27 5.06 Qwen3-14B Placement (s) 0.038 0.037 0.036 Resource manager (s) 5.05 4.99 4.97 Qwen3-32B Placement (s) 0.037 0.038 0.036 Resource manager (s) 5.06 5.01 4.98 T able 2: Algorithm overheads in Heddle . the rejecte d tokens. SD is ill-suite d for prell-heavy agen- tic RL tasks where short generations across frequent steps make tool-output prell the dominant rollout bottleneck. But Heddle is still ee ctive by optimizing the prell phase . For decoding-heav y workloads, Heddle is orthogonal to SD and can be seamlessly integrated. For instance, Heddle can dynamically route long-tailed trajectories to high model par- allelism workers to reduce decoding time, while concurrently applying SD to further minimize the decoding time. 9 Related W ork LLM Training. LLM development bifurcates into pre- training and post-training phases with distinct computa- tional demands. Pre-training systems [ 11 , 18 , 56 ] optimize static throughput via multidimensional parallelism to maxi- mize FLOP utilization on massive corpora. In contrast, p ost- training paradigms introduce dynamic workloads that alter- nate b etween multi-step trajectory generation (inference) and policy optimization (training). T o support this, existing frameworks adopt either colocated or disaggregated architec- tures. Colocated systems [ 17 , 37 , 60 ] execute both generation and training on the same worker p ool. Disaggregated sys- tems [ 3 , 10 , 59 ] physically separate inference and training onto dedicated and even heterogeneous worker pools. Hed - dle is orthogonal to these existing RL frameworks and can be seamlessly integrated to provide ecient agentic trajectory rollout. LLM Inference. Extensive LLM inference research focuses on optimizing LLM serving and RL rollout eciency . In scheduling, frameworks [ 25 , 47 , 53 ] employ iterative schedul- ing and selective batching to mitigate head-of-line blocking. For memory management, many systems [ 20 , 22 , 34 , 46 , 51 ] leverage PagedAttention [ 22 ] to eliminate memory fragmen- tation and facilitate prex caching. Architecturally , Dist- Serve [ 58 ] and MegaScale-Infer [ 61 ] disaggregate compute resources to isolate interference b etween distinct compu- tation phases. Heddle is orthogonal to these foundational techniques and incorporates these step-level optimizations while introducing trajectory-level metadata to or chestrate ecient agentic rollout. 12 10 Conclusion W e present Heddle , a traje ctory-centric framework that optimizes the agentic RL rollout phase by mitigating the straggler eect caused by long-taile d, multi-step agentic tra- jectories. By decomposing the long-tail traje ctory time into queueing, interference, and base per-token time, Heddle em- ploys a synergistic orchestration of trajectory-centric sche d- uling, placement, and resour ce management. Our evaluation demonstrates that Heddle eectively neutralizes long-tail bottlenecks, achieving up to 2.5 × higher throughput than state-of-the-art baselines. References [1] 2024. D APO-Math-17k Dataset. https://huggingface . co/datasets/ BytedT singhua- SIA/DAPO- Math- 17k. [2] 2025. Seed-Thinking-v1.5: A dvancing Superb Reasoning Models with Reinforcement Learning. https://github . com/ByteDance- See d/Seed- Thinking- v1 . 5. [3] 2025. Slime: An LLM post-training framework for RL Scaling. https: //github . com/THUDM/slime. [4] 2026. OpenClaw — Personal AI Assistant. https://github . com/ openclaw/openclaw. [5] Alibaba Cloud. 2026. Function Compute . https: //www . alibabacloud . com/product/function- compute [6] Amazon W eb Services. 2014. A WS Lamb da . https://aws . amazon . com/ lambda/ [7] Anthropic. 2025. Claude 3.7 Sonnet and Claude Code . https:// www . anthropic . com/news/claude- 3- 7- sonnet Claude 3.7 Sonnet and Claude Code. [8] Paul F Christiano, Jan Leike, T om Brown, Miljan Martic, Shane Legg, and Dario Amodei. 2017. Deep reinforcement learning fr om human preferences. Advances in Neural Information Processing Systems (2017). [9] W einan Dai, Hanlin Wu, Qiying Y u, Huan-ang Gao, Jiahao Li, Chengquan Jiang, W eiqiang Lou, Yufan Song, Hongli Yu, Jiaze Chen, et al . 2026. CUDA Agent: Large-Scale Agentic RL for High-Performance CUDA Kernel Generation. arXiv preprint arXiv:2602.24286 (2026). [10] W ei Fu, Jiaxuan Gao, Xujie Shen, Chen Zhu, Zhiyu Mei, Chuyi He, Shusheng Xu, Guo W ei, Jun Mei, Jiashu W ang, et al . 2025. Areal: A large-scale asynchronous reinfor cement learning system for language reasoning. arXiv preprint arXiv:2505.24298 (2025). [11] Hao Ge, Junda Feng, Qi Huang, Fangcheng Fu, Xiaonan Nie, Lei Zuo, Haibin Lin, Bin Cui, and Xin Liu. 2025. ByteScale: Communication- Ecient Scaling of LLM Training with a 2048K Context Length on 16384 GP Us. In ACM SIGCOMM . [12] Zhibin Gou, Zhihong Shao, Y eyun Gong, Y elong Shen, Yujiu Y ang, Minlie Huang, Nan Duan, and W eizhu Chen. 2024. T oRA: A T ool- Integrated Reasoning Agent for Mathematical Problem Solving. In International Conference on Learning Representations (ICLR) . [13] Ronald L. Graham. 1969. Bounds on multiprocessing timing anomalies. SIAM journal on A pplied Mathematics (1969). [14] Daya Guo, Dejian Y ang, Haowei Zhang, Junxiao Song, Peiyi W ang, Qihao Zhu, Runxin Xu, Ruoyu Zhang, Shirong Ma, Xiao Bi, et al . 2025. Deepse ek-r1: Incentivizing reasoning capability in llms via reinforcement learning. arXiv preprint arXiv:2501.12948 (2025). [15] Mor Harchol-Balter . 2013. Performance modeling and design of com- puter systems: queueing theory in action . [16] Jingkai He, Tianjian Li, Erhu Feng, Dong Du, Qian Liu, T ao Liu, Y u- bin Xia, and Haib o Chen. 2025. History rhymes: Accelerating llm reinforcement learning with rhymerl. arXiv preprint (2025). [17] Qinghao Hu, Shang Y ang, Junxian Guo, Xiaozhe Yao , Y ujun Lin, Yuxian Gu, Han Cai, Chuang Gan, Ana Klimo vic, and Song Han. 2025. T aming the long-tail: Ecient reasoning rl training with adaptive drafter . arXiv preprint arXiv:2511.16665 (2025). [18] Ziheng Jiang, Haibin Lin, Yinmin Zhong, Qi Huang, Y angrui Chen, Zhi Zhang, Y anghua Peng, Xiang Li, Cong Xie, Shibiao Nong, et al . 2024. MegaScale: Scaling large language model training to more than 10,000 GP Us. In USENIX NSDI . [19] Bowen Jin, Hansi Zeng, Zhenrui Yue , Jinsung Y oon, Ser can Arik, Dong W ang, Hamed Zamani, and Jiaw ei Han. 2025. Search-r1: Training llms to reason and leverage search engines with reinfor cement learning. arXiv preprint arXiv:2503.09516 (2025). [20] Chao Jin, Zili Zhang, Xuanlin Jiang, Fangyue Liu, Xin Liu, Xuanzhe Liu, and Xin Jin. 2024. RA GCache: Ecient Knowledge Caching for Retrieval- Augmented Generation. arXiv preprint (2024). [21] Chao Jin, Zili Zhang, Xingyu Xiang, Songyun Zou, Gang Huang, Xu- anzhe Liu, and Xin Jin. 2023. Ditto: Ecient serverless analytics with elastic parallelism. In A CM SIGCOMM . [22] W oosuk Kw on, Zhuohan Li, Siyuan Zhuang, Ying Sheng, Lianmin Zheng, Cody Hao Yu, Joseph Gonzalez, Hao Zhang, and Ion Stoica. 2023. Ecient memory management for large language model serving with pagedattention. In ACM SOSP . [23] Y aniv Le viathan, Matan K alman, and Y ossi Matias. 2023. Fast inference from transformers via speculative decoding. In International Confer- ence on Machine Learning (ICML) . [24] Y ujia Li, David Choi, Junyoung Chung, Nate Kushman, Julian Schrit- twieser , Rémi Leblond, T om Eccles, James Keeling, Felix Gimeno, Agustin Dal Lago, et al . 2022. Competition-level code generation with alphacode. Science (2022). [25] Michael Luo, Xiaoxiang Shi, Colin Cai, Tianjun Zhang, Justin W ong, Yichuan W ang, Chi W ang, Y anping Huang, Zhifeng Chen, Joseph E Gonzalez, et al . 2025. Autellix: An ecient serving engine for llm agents as general programs. arXiv preprint arXiv:2502.13965 (2025). [26] Ashraf Mahgoub, Edgar do Barsallo Yi, Karthick Shankar , Sameh El- nikety , Somali Chaterji, and Saurabh Bagchi. 2022. ORION and the three rights: Sizing, bundling, and prewarming for serverless { DA Gs } . In USENIX OSDI . [27] Microsoft. 2026. Bing Search API . https://ww w . microsoft . com/en- us/bing/apis [28] Philipp Moritz, Robert Nishihara, Stephanie W ang, Alexey T umanov , Richard Liaw , Eric Liang, Melih Elib ol, Zongheng Y ang, William Paul, Michael I Jordan, et al . 2018. Ray: A distributed framework for emerg- ing { AI } applications. In USENIX OSDI . [29] Reiichiro Nakano, Jacob Hilton, Suchir Balaji, Je W u, Long Ouyang, Christina Kim, Christopher Hesse, Shantanu Jain, Vine et Kosaraju, William Saunders, et al . 2021. W ebgpt: Browser-assisted question- answering with human feedback. arXiv preprint (2021). [30] OpenAI. 2025. Introducing De ep Research . https://openai . com/index/ introducing- deep- research/ Introducing deep research. [31] Long Ouyang, Jerey Wu, Xu Jiang, Diogo Almeida, Carroll Wain- wright, Pamela Mishkin, Chong Zhang, Sandhini A garwal, Katarina Slama, Alex Ray , et al . 2022. Training language models to follow instructions with human feedback. Advances in Neural Information Processing Systems (2022). [32] Pratyush Patel, Esha Choukse, Chaojie Zhang, Aashaka Shah, Íñigo Goiri, Saeed Maleki, and Ricardo Bianchini. 2024. Splitwise: Ecient generative llm inference using phase splitting. In A CM/IEEE ISCA . 13 [33] Ruoyu Qin, W eiran He, W eixiao Huang, Y angkun Zhang, Yikai Zhao, Bo Pang, Xinran Xu, Yingdi Shan, Y ongwei Wu, and Mingxing Zhang. 2025. Se er: Online context learning for fast synchronous llm reinforce- ment learning. arXiv preprint arXiv:2511.14617 (2025). [34] Ruoyu Qin, Zheming Li, W eiran He, Jialei Cui, Heyi T ang, Feng Ren, T eng Ma, Shangming Cai, Yineng Zhang, Mingxing Zhang, et al . 2024. Mooncake: A kvcache-centric disaggregated architecture for llm serv- ing. ACM T ransactions on Storage (2024). [35] John Schulman, Filip W olski, Prafulla Dhariwal, Alec Radford, and Oleg Klimov . 2017. Proximal policy optimization algorithms. arXiv preprint arXiv:1707.06347 (2017). [36] Zhihong Shao , Peiyi W ang, Qihao Zhu, Runxin Xu, Junxiao Song, Xiao Bi, Haowei Zhang, Mingchuan Zhang, YK Li, Y ang Wu, et al . 2024. Deepseekmath: Pushing the limits of mathematical reasoning in open language models. arXiv preprint arXiv:2402.03300 (2024). [37] Guangming Sheng, Chi Zhang, Zilingfeng Y e, Xibin Wu, W ang Zhang, Ru Zhang, Y anghua Peng, Haibin Lin, and Chuan W u. 2025. Hybrid- ow: A exible and ecient rlhf framework. In Eur oSys . [38] Noah Shinn, Federico Cassano, Ashwin Gopinath, Karthik Narasimhan, and Shunyu Y ao. 2023. Reexion: Language agents with verbal rein- forcement learning. Advances in Neural Information Processing Systems (2023). [39] Steven S. Skiena. 2020. The Algorithm Design Manual . The denitive reference for the linear partition pr oblem and its dynamic program- ming solution. [40] Richard P Stanley . 2011. Enumerative combinatorics volume 1 second edition. Cambridge studies in advanced mathematics (2011). [41] Kimi T eam, Angang Du, Bofei Gao, Bowei Xing, Changjiu Jiang, Cheng Chen, Cheng Li, Chenjun Xiao, Chenzhuang Du, Chonghua Liao, et al . 2025. Kimi k1.5: Scaling reinforcement learning with llms. arXiv preprint arXiv:2501.12599 (2025). [42] Hugo T ouvron, Louis Martin, Kevin Stone, Peter Albert, Amjad Alma- hairi, Y asmine Babaei, Nikolay Bashlykov , Soumya Batra, Prajjwal Bhargava, Shruti Bhosale, et al . 2023. Llama 2: Open foundation and ne-tuned chat models. arXiv preprint arXiv:2307.09288 (2023). [43] Guanzhi W ang, Yuqi Xie, Yunfan Jiang, Ajay Mandlekar , Chaowei Xiao, Y uke Zhu, Linxi Fan, and Anima Anandkumar . 2023. V oyager: An open-ended embodie d agent with large language models. arXiv preprint arXiv:2305.16291 (2023). [44] Liang W ang, Mengyuan Li, Yinqian Zhang, Thomas Ristenpart, and Michael Swift. 2018. Peeking behind the curtains of serverless plat- forms. In USENIX A TC . [45] Xingyao W ang, Boxuan Li, Yufan Song, Frank F Xu, Xiangru T ang, Mingchen Zhuge , Jiayi Pan, Y ueqi Song, Bowen Li, Jaskirat Singh, et al . 2024. Openhands: An open platform for ai software developers as generalist agents. arXiv preprint arXiv:2407.16741 (2024). [46] Bingyang Wu, Zili Zhang, Yinmin Zhong, Guanzhe Huang, Yibo Zhu, Xuanzhe Liu, and Xin Jin. 2025. T okenLake: A Unied Segment-level Prex Cache Pool for Fine-grained Elastic Long-Context LLM Serving. arXiv preprint arXiv:2508.17219 (2025). [47] Bingyang Wu, Yinmin Zhong, Zili Zhang, Gang Huang, Xuanzhe Liu, and Xin Jin. 2023. Fast distributed inference serving for large language models. arXiv preprint arXiv:2305.05920 (2023). [48] An Y ang, Anfeng Li, Baosong Y ang, Beichen Zhang, Binyuan Hui, Bo Zheng, Bo wen Yu, Chang Gao , Chengen Huang, Chenxu Lv , et al . 2025. Qwen3 technical report. arXiv preprint arXiv:2505.09388 (2025). [49] John Y ang, Carlos E Jimenez, Alexander W ettig, Kilian Lieret, Shunyu Y ao, Karthik Narasimhan, and Or Press. 2024. Swe-agent: Agent- computer interfaces enable automate d software engineering. Advances in Neural Information Processing Systems (2024). [50] Zhilin Y ang, Peng Qi, Saizheng Zhang, Y oshua Bengio, William W . Cohen, Ruslan Salakhutdinov , and Christopher D. Manning. 2018. HotpotQA: A Dataset for Diverse, Explainable Multi-hop Question Answering. In Conference on Empirical Methods in Natural Language Processing . [51] Jiayi Y ao, Hanchen Li, Y uhan Liu, Siddhant Ray , Yihua Cheng, Qizheng Zhang, Kuntai Du, Shan Lu, and Junchen Jiang. 2025. Cacheblend: Fast large language model ser ving for rag with cached knowledge fusion. In Proceedings of the twentieth European conference on computer systems . [52] Shunyu Y ao, Jerey Zhao, Dian Y u, Nan Du, Izhak Shafran, K arthik Narasimhan, and Y uan Cao. 2023. ReAct: Synergizing Reasoning and Acting in Language Models. In International Conference on Learning Representations (ICLR) . [53] Gyeong-In Y u, Jo o Seong Jeong, Geon- W o o Kim, Soojeong Kim, and Byung-Gon Chun. 2022. Orca: A distribute d serving system for { Transformer-Based } generative models. In USENIX OSDI . [54] Qiying Yu, Zheng Zhang, Ruofei Zhu, Yufeng Y uan, Xiaochen Zuo, Y u Yue, W einan Dai, Tiantian Fan, Gaohong Liu, Lingjun Liu, et al . 2025. Dapo: An op en-source llm reinforcement learning system at scale. arXiv preprint arXiv:2503.14476 (2025). [55] Zili Zhang, Chao Jin, and Xin Jin. 2024. Jolte on: Unleashing the promise of serverless for serverless workows. In USENIX NSDI . [56] Zili Zhang, Yinmin Zhong, Yimin Jiang, Hanpeng Hu, Jianjian Sun, Zheng Ge, Yibo Zhu, Daxin Jiang, and Xin Jin. 2025. Disttrain: Ad- dressing model and data heterogeneity with disaggregated training for multimodal large language models. In ACM SIGCOMM . [57] Lianmin Zheng, Liangsheng Yin, Zhiqiang Xie, Chuyue Sun, Je Huang, Cody H Y u, Shiyi Cao, Christos Kozyrakis, Ion Stoica, Joseph E Gonzalez, et al . 2024. Sglang: Ecient execution of structured lan- guage model programs. Advances in Neural Information Processing Systems (2024). [58] Yinmin Zhong, Shengyu Liu, Junda Chen, Jianbo Hu, Yibo Zhu, Xu- anzhe Liu, Xin Jin, and Hao Zhang. 2024. DistServe: Disaggregating Prell and Decoding for Goodput-optimized Large Language Model Serving. In USENIX OSDI . [59] Yinmin Zhong, Zili Zhang, Xiaoniu Song, Hanpeng Hu, Chao Jin, Bingyang Wu, Nuo Chen, Y ukun Chen, Yu Zhou, Changyi W an, et al . 2025. Streamrl: Scalable, heterogeneous, and elastic rl for llms with disaggregated stream generation. arXiv preprint (2025). [60] Yinmin Zhong, Zili Zhang, Bingyang Wu, Shengyu Liu, Y ukun Chen, Changyi W an, Hanpeng Hu, Lei Xia, Ranchen Ming, Yibo Zhu, et al . 2025. Optimizing RLHF training for large language models with stage fusion. In USENIX NSDI . [61] Ruidong Zhu, Ziheng Jiang, Chao Jin, Peng Wu, Cesar A Stuardo, Dongyang W ang, Xinlei Zhang, Huaping Zhou, Haoran W ei, Y ang Cheng, et al . 2025. Megascale-infer: Ser ving mixture-of-experts at scale with disaggregated expert parallelism. arXiv preprint (2025). 14

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment