InkDrop: Invisible Backdoor Attacks Against Dataset Condensation

Dataset Condensation (DC) is a data-efficient learning paradigm that synthesizes small yet informative datasets, enabling models to match the performance of full-data training. However, recent work exposes a critical vulnerability of DC to backdoor a…

Authors: He Yang, Dongyi Lv, Song Ma

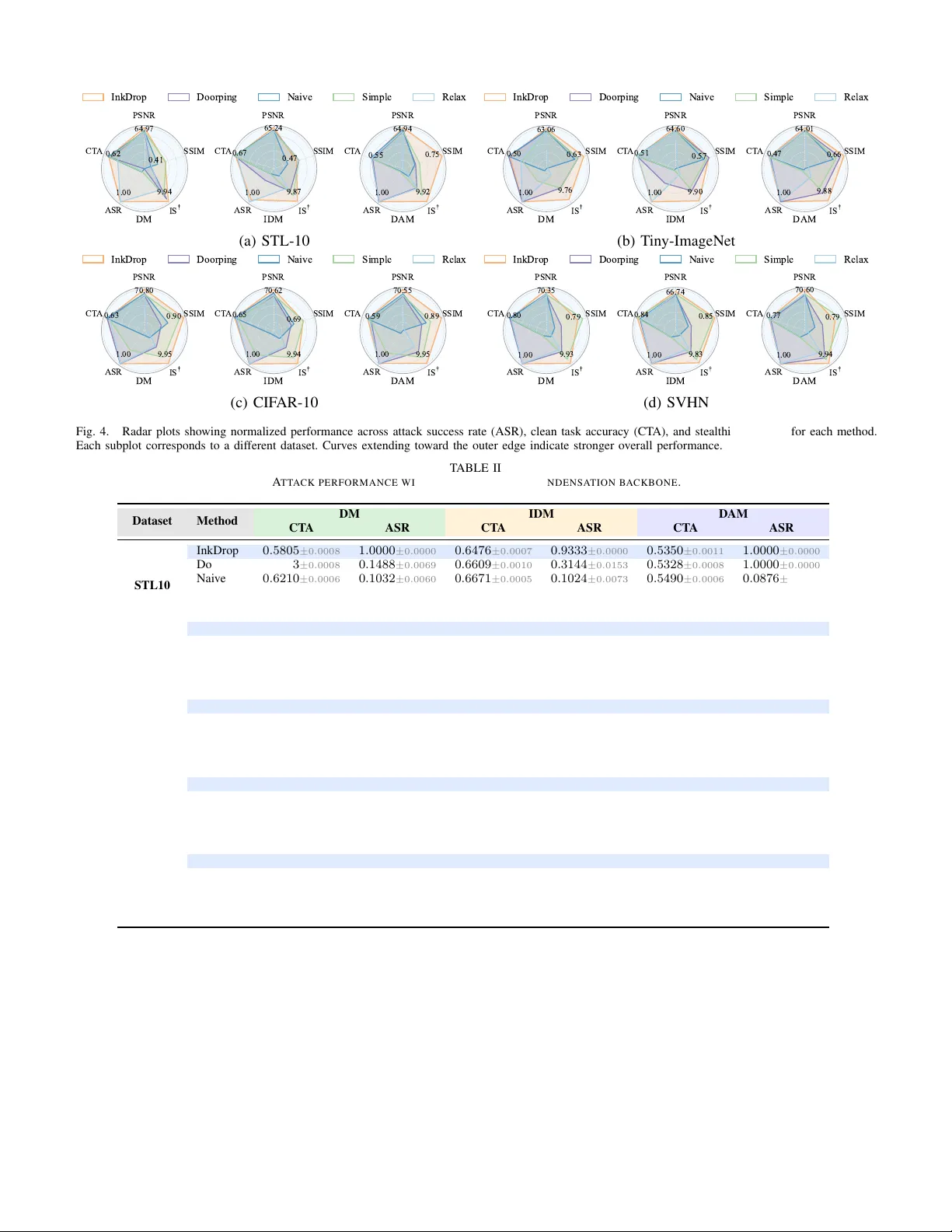

JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 1 InkDrop: In visible Backdoor Attacks Against Dataset Condensation He Y ang, Dongyi Lv , Song Ma, W ei Xi, Member , IEEE, Zhi W ang, Hanlin Gu, Y ajie W ang Abstract —Dataset Condensation (DC) is a data-efficient lear n- ing paradigm that synthesizes small yet inf ormative datasets, enabling models to match the performance of full-data training. Howev er , recent work exposes a critical vulnerability of DC to backdoor attacks, where malicious patter ns ( e.g. , triggers) are implanted into the condensation dataset, inducing targeted misclassification on specific inputs. Existing attacks always prioritize attack effectiveness and model utility , overlooking the crucial dimension of stealthiness. T o bridge this gap, we propose InkDr op, which enhances the imperceptibility of malicious manipulation without degrading attack effectiveness and model utility . InkDrop leverages the inherent uncertainty near model decision boundaries, wher e minor input perturbations can induce semantic shifts, to construct a stealth y and effectiv e backdoor attack. Specifically , InkDrop first selects candidate samples near the target decision boundary that exhibit latent semantic affinity to the target class. It then learns instance-dependent perturbations constrained by perceptual and spatial consistency , embedding targeted malicious behavior into the condensed dataset. Extensive experiments across di verse datasets validate the overall effectiveness of InkDr op, demonstrating its ability to integrate adversarial intent into condensed datasets while pr eserving model utility and minimizing detectability . Our code is available at https://github .com/lvdongyi/InkDrop. Index T erms —Dataset condensation, stealthy backdoor attack, visual imperceptibility . I . I N T RO D U C T I O N D A T ASET Condensation (DC) is an emerging paradigm that aims to synthesize a compact set of representati ve samples while preserving the training utility of large-scale datasets [1]–[5]. It distills core data characteristics into a re- duced form, enabling efficient model training with low memory and computation costs [6]–[8]. DC also enhances priv acy by removing the need to share raw data, making it well-suited for collaborati ve and distrib uted learning where transferring original datasets is impractical [9]. Despite its ef ficiency , DC introduces critical security risks [10]–[14]. The condensation process can be e xploited to implant backdoor triggers into synthetic samples [15]–[18]. These samples directly influence model behavior , making even minor manipulations sufficient to embed persistent malicious functions. Such vulnerabilities compromise the inte grity of do wnstream models and are especially concerning in safety-critical applications. Recent studies [15]–[17], [19], [20] demonstrate that adver - sarial triggers embedded in synthetic data can persist through downstream training and induce targeted misclassifications at inference (See Figure 1). Among the earliest explorations in this domain is the Naiv e Attack [15], which demonstrates that simply ov erlaying a fixed visual pattern onto clean samples before condensation can effecti vely embed persistent backdoor This paper was produced by the IEEE Publication T echnology Group. They are in Piscataway , NJ. Manuscript received April 19, 2021; revised August 16, 2021. Trainable P ar ameters Froze n Parame ters Triggered Test Set Clean Test Set Clean Test Accurac y Training Stage DM IDM DAM … Random Network Distilled Train Set Original Train Set Trained Network Optimizer Batch Size … Trigger Injection Attack Succe ss Rate Evaluation Stage Condensati on Stage Fig. 1. Illustration of backdoor attacks against dataset condensation. behavior . Although conceptually simple, Naive Attack provides a foundational proof, v alidating the feasibility of backdoor injection within the condensation pipeline. Building on this foundation, more advanced strategies ha ve been proposed to amplify the attack effecti veness. A prominent e xample is Doorping [15], which leverages a bilev el optimization framew ork to jointly optimize the synthetic dataset and the backdoor trigger . This approach more effecti vely preserves trigger semantics throughout the condensation process, resulting in substantially higher attack success rates. More recent advances shift to ward a theoretical lens, leveraging kernel- based methods to analyze backdoor vulnerabilities in DC. The Simple-T rigger [16] minimizes the Generalization Gap, defined as the discrepancy between the expected loss over the full manipulated dataset and the empirical loss on a sampled subset, promoting backdoor generalization beyond seen samples. The Relax-T rigger [16] additionally reduces projection loss, which measures the extent to which a model trained on distilled data generalizes to the original clean distrib ution, and conflict loss, which captures feature-space interference between clean and poisoned samples. This joint optimization enhances the stability and transferability of the backdoor attack. Existing approaches always prioritize optimizing model behavior and attack ef ficacy , while o verlooking a critical and underexplored limitation: stealthiness , either in the synthetic data or in triggered test samples. During the condensation process, synthetic data often contain unnatural patterns that expose the presence of the trigger, compromising the covert nature of the attack (See Figure 2). At inference time, the in- serted triggers may produce noticeable visual artifacts, making them easily identifiable by human observers or basic anomaly detection systems. This lack of visual subtlety significantly weakens the practical applicability of these attacks. T o ensure long-term persistence and real-world viability , future backdoor strategies must account for both functional ef fectiv eness and visual inconspicuity . JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 2 D o o rp i n g S i m p l e N a i v e Re l a x Co n d e n s e d Im a g e Cl e a n Im a g e Ba c k d o o re d Im a g e Fig. 2. V isualization of the stealth limitations in e xisting backdoor condensation methods. Both synthetic data and trigger-injected test samples exhibit visible artifacts or unnatural structures, exposing the trigger and undermining the attack’ s stealthiness. Therefore, we propose an in visible backdoor attack InkDrop against DC. InkDrop is motiv ated by the key observation that models exhibit intrinsic uncertainty near decision boundaries, where samples are highly sensitive to small perturbations and susceptible to label shifts. Leveraging this property , InkDrop initiates its attack by identifying source-class samples that display latent af finity to the target class, as indicated by slightly elev ated confidence in the target class dimension. These boundary-adjacent samples form a candidate pool that is inherently vulnerable to misclassification, offering a natural foothold for backdoor injection. Although these candidate samples are inherently susceptible, embedding triggers that are both effecti ve and stealthy poses a significant challenge. Subtle perturbations risk being attenuated or removed during the condensation process, while stronger triggers often introduce visible artifacts that reveal the attack. Existing approaches often fail to strike a balance between attack success and stealthiness. Consequently , achieving this balance remains a fundamental obstacle for stealthy and reliable backdoor attacks against DC. T o address this challenge, InkDrop introduces a learnable at- tack model designed to generate customized, low-perceptibility perturbations for each input. The attack model is trained under four complementary objectives: a contrastiv e InfoNCE loss to align poisoned features with the target class while repelling clean features; an Earth Mover’ s Distance loss to align output distributions with target soft labels; L2 regularization to limit perturbation strength; and an LPIPS perceptual loss to maintain visual consistency . These constraints jointly ensure that the generated triggers are both stealthy and ef fectiv e. The attack model then applies these instance-dependent triggers to candidate samples, which are combined with clean target-class data and fed into the condensation pipeline, embedding the backdoor covertly into the synthetic dataset. Main contributions of this paper are summarized belo w: • W e present InkDrop, a stealthy backdoor attack frame- work targeting dataset condensation, providing the first comprehensi ve inv estigation into jointly optimizing attack effecti veness, model utility , and stealthiness. • InkDrop introduces an instance-dependent attack model that generates visually imperceptible yet ef fectiv e pertur- bations via multi-objective optimization, enabling rob ust and stealthy backdoor injection without compromising condensation quality . • Empirical results on four standard benchmarks show that InkDrop consistently achiev es superior trade-offs com- pared to prior methods, ef fectiv ely balancing stealthiness, attack effecti veness, and model utility . I I . R E L A T E D W O R K A. Dataset Condensation Dataset condensation (DC) [21]–[23] aims to generate a compact synthetic dataset that retains the training utility of the original. Among v arious paradigms, distrib ution matching (DM)-based methods are prominent for their scalability , gener- ality , and empirical effecti veness. These methods align feature- lev el statistics between real and synthetic data, commonly using the maximum mean discrepanc y (MMD) to match distributions within a low-dimensional embedding space. A typical objectiv e that minimizes the MMD between augmented feature embeddings of real samples T and synthetic samples S is: min S E θ ∼ P θ 1 |T | P i ψ ( A ( x i )) − 1 |S | P j ψ ( A ( s j )) 2 , where ψ denotes randomly initialized encoders and A ( · ) represents a differentiable augmentation operator . This formu- lation encourages the synthetic dataset to retain the statistical characteristics of the original data, ensuring generalization across div erse model initializations. Subsequent extensions, such as IDM [24] and DAM [25], enhance class-conditional alignment through kernel-based moment matching, adaptive feature regularization, and encoder updates, yielding improved performance. IDM introduces practical enhancements to the original distribution matching frame work, incorporating progressiv e feature extractor updates, stronger data augmentations, and dynamic class balancing to improv e generalization. In parallel, DAM leverages attention map alignment to better preserve spatial semantics, guiding synthetic samples to activ ate similar regions as real data while maintaining computational efficiency . These methods advance the state of dataset condensation by demonstrating that richer supervision and adaptiv e training dynamics are critical for generating high-fidelity synthetic datasets. B. Bac kdoor against Dataset Condensation Backdoor attacks aim to manipulate model behavior at inference time by injecting ca refully crafted triggers into a subset of training data. When effecti ve, the model per - forms normally on clean inputs b ut consistently misclassifies inputs containing the trigger . While extensi vely studied in standard supervised learning, backdoor attacks in the context of dataset condensation have only recently recei ved attention. A pioneering study by Liu et al. [15] by poisoning real data prior to distillation. Their Naive Attack appends a fixed trigger to target-class samples before condensation, b ut suf fers from trigger degradation and reduced attack efficac y due to the synthesis process. T o address this, Doorping employs a JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 3 bilev el optimization scheme that jointly refines the trigger and the synthetic data. Although more effecti ve, it incurs substantial computational overhead. More recently , Chung et al. [16] provide a kernel-theoretic perspectiv e on backdoor persistence in condensation. They propose Simple-Trigger , which minimizes the generalization gap of the backdoor effect, and Relax-T rigger , which further reduces projection and conflict losses for improved robustness. Ho wever , these methods prioritize either attack effecti veness or model utility , while often neglecting stealthiness. In con- trast, we introduce InkDrop, a unified frame work that jointly optimizes for attack ef fectiveness, model utility , and visual stealthiness. I I I . M E T H O D O L O GY T o enhance clarity and facilitate understanding of the proposed method, we provide a detailed list of notations used throughout the paper, presented in T able I. T ABLE I N OTA T I O N S A N D D E FIN I T I ON S Notation Definition S Condensed dataset e S Poisoned condensed dataset D Original dataset [ S / e S / D ] o Subset of S / e S / D containing samples labeled as class o o ∗ Source class selected for crafting poisoned samples ψ Downstream model f , h, ϕ Surrogate model f of ψ , where h is the classifier and ϕ is the feature e xtractor; f = h ◦ ϕ D T P τ Subset where samples in class τ correctly classified as τ by f C Set of dataset labels g θ Attack model used to generate trigger patterns A. Thr eat Model 1) Attac k Scenario: Follo wing the previous work [15], [16], we consider a scenario in which two parties collaborate on model training through data sharing. One party , possessing a large, high-quality dataset, agrees to share data with the other, who lacks access to such resources. Howe ver , due to practical constraints such as pri vac y concerns, proprietary limitations, or bandwidth restrictions, transferring the full dataset is infeasible. Instead, the data provider supplies a condensed version of the dataset that retains the essential characteristics of the original distribution. This is accomplished through dataset condensation, which synthesizes a small set of representative samples that preserv e class semantics and learning utility . Condensed datasets are particularly valuable in settings where computational efficienc y , storage constraints, or communication costs are critical. The recipient treats the condensed data as a reliable surrogate for the original dataset and incorporates it directly into the training pipeline, assuming it supports effecti ve model learning. In this setting, a malicious data pro vider can exploit their control ov er the condensation process to execute a backdoor attack. W ith full access to the original dataset and complete authority o ver the condensation pipeline , the attack er generates condensed data embedded with backdoor triggers. Although the attacker does not participate in or observe the recipient’ s downstream training, their influence persists through the compromised condensed dataset. These triggers induce the trained model to misbehav e on specific inputs while maintaining high accuracy on standard e valuation benchmarks. This poses a significant security risk in scenarios where condensed data is assumed to be a trustworthy and safe substitute for pri vate datasets. 2) Attacker’ s Goal: This objectiv e inv olves three essential goals: stealthiness, attack effecti veness, and the preserv ation of clean model utility . a) Stealthiness (STH): A fundamental requirement is that the injected backdoor remains stealthy . This entails two aspects. Firstly , the poisoned condensed dataset e S must be visually and statistically indistinguishable from its clean counterpart S . This requirement is critical, as the typically small size of condensed datasets makes them particularly susceptible to anomaly detection through manual inspection or automated analysis. Secondly , test samples containing the trigger should closely resemble normal inputs in both in appearance and statistics, ensuring the attack remains inconspicuous during ev aluation or deployment. b) Attac k Effectiveness: Simultaneously , another objectiv e of the attacker is to implant a latent backdoor that reliably acti vates when a specific trigger pattern is applied, while remain- ing dormant otherwise. Specifically , the attack effectiv eness is quantified by the attac k success rate (ASR) . Let ψ denote the do wnstream model trained on the poisoned condensed dataset e S , and let ∆ represent the trigger . The ASR is defined as: AS R = 1 N p P N p i =1 I ( ψ ( x i + ∆) = τ ) , where τ is the target label and N p is the number of triggered test inputs. A high ASR indicates that the backdoor consistently induces misclassification to the target class. c) Clean Model Utility: In parallel, the attacker must ensure that the model maintains high accuracy on clean, unaltered data. This ensures that the poisoned condensed dataset remains effecti ve for standard learning tasks and does not impair performance on benign inputs. Concretely , the clean model utility is measured by clean test accuracy (CT A) . Let x i be a clean test sample with true label y i , and N c denote the total number of such samples. The CT A is defined as: C T A = 1 N c P N c i =1 I ( ψ ( x i ) = y i ) , where ψ denote the downstream model trained on the poisoned condensed dataset e S . B. Overvie w of InkDrop InkDrop consists of three stages: (1) candidate pool construc- tion , (2) attack model optimization , and (3) stealthy malicious condensation . In the first stage, giv en a target class τ , we identify a source class o ∗ whose samples are most susceptible to being misclassified as τ by a pre-trained model. This le verages the inherent susceptibility of class o to misclassification as τ . W ithin class o ∗ , samples are rank ed by their predicted confidence scores in the τ -th softmax dimension, and the top κ 1 fraction is selected as candidate samples for backdoor injection. JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 4 In the second stage, an attack model is trained to generate imperceptible, instance-dependent perturbations ( e.g . , triggers) that, when applied to source samples, consistently induce misclassification into the target class. The training objective integrates four components: (i) a contrastive InfoNCE loss [26] to align poisoned embeddings with the target class while repelling clean samples; (ii) a label alignment loss using Earth Mov er’ s Distance [27] between predicted and target soft labels; (iii) L2 regularization to limit trigger magnitude; and (iv) a learned perceptual image patch similarity loss (LPIPS) [28] to maintain visual fidelity . In the third stage, the trained attack model generates triggers that are applied to selected candidate samples, producing poisoned instances. These are combined with clean target- class data and fed into a dataset condensation framew ork. The condensation process distills this mixture into a compact synthetic dataset, embedding the backdoor functionality within the target class representation while preserving downstream task performance. C. Detailed Design of InkDr op 1) Candidate P ool Construction: The first stage of of InkDrop in volv es constructing a candidate sample pool (See Figure 3). The detailed procedure for constructing the candidate pool is presented in Algorithm 1. The objecti ve is to isolate a subset of data points from the ov erall distribution that exhibit high “inducibility”. Since the success of backdoor injection hinges on the perturbation’ s ability to cross the model’ s decision boundary , the selection process emphasizes samples that already exhibit boundary ambiguity with the target class. Applying triggers indiscriminately across the dataset not only reduces injection efficienc y but also increases the risk of detection by anomaly detectors. Therefore, constructing a candidate pool that responds sensitively to perturbations is a critical foundation for effecti ve and stealthy attacks. a) Source Class Selection: The first step in candidate pool construction in volves selecting the most attack-susceptible source class o ∗ from all non-tar get classes o = τ . The strategy is grounded in analyzing the model’ s misclassification patterns through the lenses of information flow and decision uncertainty . By exploiting inter-class information confusion, this step establishes the most fav orable pathway for effecti ve backdoor injection. T o quantify the informational proximity between the source and target classes, we measure the posterior shift between the source class distribution and the target class representation. This process inv olves two steps: (i) constructing a reference dis- tribution for the target class, and (ii) computing the information shift using Kullback–Leibler (KL) diver gence. (i) reference distrib ution construction. Firstly , we construct a reference output distribution q τ for the target class τ , defined as the model’ s a verage predicti ve distribution o ver true positive samples within the target class τ . Let f ( x ) denote the model’ s predicted probability for an input x . Then, q τ ( y ) ≜ E x ∼D T P τ [ f ( y | x )] D TP τ = { x ∈ D τ | arg max f ( x ) = τ } (1) target class candidate pool decision boundary Fig. 3. Illustration of constructing candidate pool. where D T P τ denotes the subset of target-class samples correctly classified as τ . This formulation excludes low-confidence samples near the decision boundary and captures a stable, high- confidence representation of the target class in the model’ s output space. (ii) information shift measurement. Secondly , for any sample x , we quantify its information shift tow ard the target class τ by computing the KL di ver gence between its predictiv e distribution f ( x ) and the target class reference distribution q τ : D KL ( f ( x ) ∥ q τ ) = C X c =1 f c ( x ) log f c ( x ) q τ ( c ) (2) where C represents the number of the classes in the dataset. Smaller D KL ( f ( x ) ∥ q τ ) indicates that the sample’ s prediction aligns more closely with the model’ s typical output for class τ , implying an inherent bias tow ard the target class τ in the model’ s decision space. T o assess the overall informational proximity of a source class o to the target class τ , we compute the expected KL div ergence over the source class distrib ution: I o → τ ≜ E x ∼D o [ D KL ( f ( x ) ∥ q τ )] (3) where D o represents the source class data. This expectation reflects the posterior information closeness of class o to τ . Lower v alues indicate that the source class is more easily absorbed into the tar get class distribution, highlighting a more e xploitable pathway for backdoor injection. T o further characterize the misclassification tendency of a source class o tow ard the target class τ , we define the misclassification rate as: π o → τ = P x ∼D o [arg max f ( x ) = τ ] (4) This metric captures the probability that samples from D o are classified as τ , quantifying the extent to which class o intrudes into the decision region of the tar get class τ . Finally , we unify posterior information proximity and misclassification probability into a joint selection criterion: o ∗ = arg min o = τ ( I o → τ − λπ o → τ ) (5) JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 5 Algorithm 1 Candidate Pool Construction Input: Original data D , target class τ , label set C , hyper- parameters λ , κ 1 , κ 2 Output: Candidate pool P τ , 1: T rain the surrogate model f on D 2: Construct D T P τ as the set of samples in class τ correctly classified by f 3: Construct T κ 2 τ = T op- κ 2 D T P τ ; f τ ( x ) , with |T κ 2 τ | = m 4: Estimate the reference distribution: q τ ≈ 1 m P m j =1 f ( y | x j ) , x j ∈ T κ 2 τ 5: Initialize Γ = ∅ 6: f or each o ∈ C do 7: ˆ I o → τ = 1 n P n i =1 D KL ( f ( x i ) ∥ q τ ) // Informational proximity 8: ˆ π o → τ = 1 n P n i =1 I [arg max f ( x i ) = τ ] // Misclassifi- cation rate 9: e o = ˆ I o → τ − λ ˆ π o → τ // Joint criterion score 10: Add e o to Γ 11: end for 12: o ∗ ← arg min o Γ // Select the source class with minimal score 13: P τ = { x i ∈ D o ∗ | rank ( f τ ( x i )) ≤ κ 1 · |D o ∗ |} // Con- struct candidate pool 14: r eturn P τ Here, λ is a trade-off parameter that balances structural alignment with the target class and the ability to penetrate the decision boundary . This criterion identifies the most vulnerable inter-class pathway within the model, offering both theoretical grounding and structural guidance for downstream attack sample construction. Since the expectations above are not analytically tractable, we adopt Monte Carlo estimation in practice: Reference Distribution q τ : W e select true positive samples from the target class that are predicted as τ , then retain the top- κ 2 samples ranked by confidence in class τ . A veraging their softmax outputs yields the approximation: q τ ( y ) ≈ 1 m m X j =1 f ( y | x j ) , x j ∈ T κ 2 τ T κ 2 τ = T op- κ 2 D TP τ ; f τ ( x ) (6) where T op- κ 2 ( · ) denotes selecting the top κ 2 proportion ranked by f τ ( x ) in descending order . Information Proximity I o → τ : W e draw n samples from the source class D o and estimate the expected KL diver gence: ˆ I o → τ = 1 n n X i =1 D KL ( f ( x i ) ∥ q τ ) (7) Misclassification Rate π o → τ : This is estimated using an indicator function: ˆ π o → τ = 1 n n X i =1 I [arg max f ( x i ) = τ ] (8) These three estimators constitute a practical and theoretically grounded mechanism for source class selection, designed to ensure structural optimality for backdoor injection. Moreov er , in Subsection III-A , we assume the attacker lacks direct access to the downstream model. In practice, a surrogate model f ( · ) is trained on accessible data to approximate the downstream model’ s decision behavior . This model is trained con ventionally on the original dataset D and captures, to some extent, the do wnstream model’ s class confusion patterns and decision boundary structure. All subsequent analysis and sample construction are performed exclusi vely on this surrogate. Despite lacking access to the downstream model’ s parameters and training specifics, carefully crafted strategies on the surrogate can produce transferable attack effects. b) Candidate Attac k Sample Selection: After determining the source class o ∗ , we further extract the most attack-prone subset from its sample set D o ∗ to form the final candidate pool. The goal is to identify samples that naturally reside near the decision boundary of the target class τ , making them more susceptible to misclassification under minimal perturbation. T o identify optimal attack candidates, we use the model’ s output on the tar get class dimension, f τ ( x ) , as a confidence score indicating the likelihood assigned to class τ . For each sample x ∈ D o ∗ , we define its tar get-class confidence as ζ = f τ ( x ) . Higher values of ζ suggest that the model already exhibits a strong bias toward the target class, characterized by low predictiv e uncertainty and feature representations concentrated near the center of the target class distribution. These samples are located near the decision boundary but ske wed toward the target class, making them prime candidates for backdoor injection. Minimal perturbations are suf ficient to alter their predicted label, facilitating effecti ve attack transfer . Based on the above analysis, we rank all samples in D o ∗ in descending order of f τ ( x i ) , and select the top κ 1 quantile to construct the candidate pool P τ : P τ = { x i ∈ D o ∗ | rank ( f τ ( x i )) ≤ κ 1 · |D o ∗ |} (9) This procedure serves as a form of posterior entropy com- pression, fav oring samples where the model exhibits a strong discriminativ e tendency to ward the target class. Compared to low-confidence instances, these samples are more susceptible to perturbation, more likely to trigger misclassification, and require minimal injection effort. The resulting candidate pool P τ forms a subset that is naturally close to the tar get class, pro viding a stable and efficient medium for backdoor trigger injection. 2) Attack Model T raining: T o enable imperceptible and controllable backdoor injection, we train an attack model g θ that generates instance-dependent perturbations for source samples while maintaining visual fidelity . The objectiv e is to induce misclassification into a designated target class τ under the surrogate model. The attack model learns a perturbation mapping from the input space to the posterior output space, optimizing structural alignment and discriminative transfer to facilitate effecti ve class induction. The training procedure for optimizing the attack model is detailed in Algorithm 2. For clarity , we represent the surrogate model f ( · ) as a composition of a feature extractor and a classifier , that is, f = h ◦ ϕ , where ϕ ( · ) denotes the feature extractor and h ( · ) JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 6 Algorithm 2 Attack Model Training Input: Candidate pool P τ , T κ 2 τ , untrained attack model g θ , hyper-parameters λ 1 , λ 2 , λ 3 , λ 4 Output: T rained attack model g θ 1: Z τ = { ϕ ( x ) | x ∈ T κ 2 τ } // T arget embedding set 2: Q τ = { f ( x ) | x ∈ T κ 2 τ } // T arget soft label set 3: f or epoch e from 1 to E do 4: Compute a feature-space contrastive loss L contrast using Eq (13) 5: Compute a label-space alignment loss L sof t using Eq (14) 6: Compute a perturbation regularization term using Eq (15) 7: Compute a perceptual consistency loss L LP I P S using Eq (16) 8: L = λ 1 · L contrast + λ 2 · L soft + λ 3 · L L2 + λ 4 · L LPIPS 9: Update parameters of g θ using L 10: end for 11: r eturn g θ the classifier . The model’ s prediction for an input image x is expressed as: f ( x ) = h ( ϕ ( x )) ∈ ∆ C (10) where ∆ C denotes the probability simplex over C classes. T o extract representati ve structural information for the target class τ , we compute the feature representation ϕ ( x ) and model output f ( x ) for each sample x ∈ T κ 2 τ , forming two reference sets: T ar get embedding set: Z τ = { ϕ ( x ) | x ∈ T κ 2 τ } (11) T ar get soft label set: Q τ = { f ( x ) | x ∈ T κ 2 τ } (12) These sets serve as supervisory signals during the training of the attack model g θ , guiding poisoned samples to conv erge tow ard the target class in both the feature and output spaces via contrastiv e learning and label alignment. Subsequently , lev eraging the previously constructed target- class reference sets Z τ and Q τ , we train an attack model g θ to learn a instance-dependent perturbation strategy . Gi ven a source-class input x ∈ D o ∗ , the model generates a perturbation δ = g θ ( x ) , yielding a poisoned sample x atk = x + δ . The perturbation must satisfy two key constraints: it should (1) reliably induce misclassification into the target class τ , ensuring target alignment in both representation and output space, and (2) remain imperceptible and structurally consistent to av oid triggering detection mechanisms or visual anomalies. T o achiev e both objecti ves, we design a multi-component loss function to optimize g θ , integrating four complementary terms: • Feature-space contrastiv e loss (InfoNCE): Encourages the representation ϕ ( x atk ) to align with embeddings in Z τ while remaining distant from clean features. • Label-space alignment loss (EMD): Aligns the model output f ( x atk ) with the soft label set P τ . • Perturbation regularization ( L 2 ): Penalizes the norm of δ to constrain perturbation magnitude. • Perceptual consistency loss (LPIPS): Maintains visual fidelity by minimizing perceptual distance between x and x atk . a) F eature-space contrastive loss (InfoNCE): T o guide poisoned samples to ward the tar get class in feature space while repelling them from the source class, we incorporate a contrastive loss based on the InfoNCE formulation. This objectiv e maximizes similarity between poisoned and tar get- class embeddings while minimizing similarity with embeddings from clean source-class samples. Let { x atk i } B i =1 denote a batch of poisoned samples generated by the attack model, with normalized feature embeddings { ˆ z atk i = ϕ ( x atk i ) } B i =1 . For each x atk i , we sample a positiv e embedding ˆ z target i from true positi ve target-class instances (i.e., Z τ ), and its corresponding negati ve embeddings { ˆ z clean j = ϕ ( x j ) } B j =1 . The contrasti ve loss for the i -th sample is defined as: L contrast = − log exp ⟨ ˆ z atk i , ˆ z target i ⟩ /ν P B j =1 exp ⟨ ˆ z atk i , ˆ z clean j ⟩ /ν (13) Here, ν is a temperature parameter that controls distribution sharpness. All embeddings are normalized to unit norm, making the inner product equiv alent to cosine similarity . b) Label-space alignment loss: T o improv e the prediction consistency of poisoned samples toward the target class, we introduce a label-space alignment objective based on Earth Mover’ s Distance (EMD). This metric quantifies the discrepancy between the output distribution of a poisoned sample and that of a high-confidence target-class sample under the surrogate model. During training, we select samples from T κ 2 τ and extract their classifier outputs { q i } as target soft labels. Concurrently , we sample source-class candidates, apply perturbations from the attack model to obtain poisoned samples { x atk i } , and collect their outputs { p i } . The EMD loss for each pair ( p i , q i ) is computed as: L sof t = min T ∈ Π( p i ,q i ) X c 1 ,c 2 T ( c 1 , c 2 ) · d ( c 1 , c 2 ) (14) Here, T is the transport matrix, d ( c 1 , c 2 ) denotes the ground distance between labels, and Π( p i , q i ) is the set of transport plans satisfying marginal constraints. c) P erturbation Re gularization: T o constrain the perturba- tion magnitude in image space and reduce the risk of detection by anomaly detectors or human observ ers, we incorporate an L 2 regularization term on the trigger generated by the attack model. Let δ i = g θ ( x i ) denote the perturbation for each input sample. The regularization loss is defined as: L L2 = 1 B B X i =1 ∥ δ i ∥ 2 2 (15) This term encourages the perturbations to be minimal in norm, promoting sparsity and locality , which enhances the imperceptibility and stealth of the attack. JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 7 d) P er ceptual Consistency Loss: While the L 2 regular - ization term constrains perturbation magnitude in Euclidean space, it fails to capture perceptual sensitivity to structural and semantic changes in images. Therefore, we incorporate a structure-aw are constraint using the Learned Perceptual Image Patch Similarity (LPIPS) loss, which promotes consistency between poisoned and clean images in the perceptual space. LPIPS measures structural discrepancies by comparing normal- ized activ ations from multiple deep feature layers, of fering finer sensiti vity to semantic and te xtural dif ferences than pixel-based metrics. Let ˆ y l and ˆ y l 0 hw denote the normalized output of the original and poisoned images from the l -th layer of a pre-trained perceptual network, with spatial dimensions H l and W l , and let w l denote channel-wise weighting. For a pair of original and poisoned images ( x i , x atk i ) , the LPIPS loss is defined as: L LPIPS ( x atk i , x i ) = X l 1 H l W l X h,w w l ⊙ ˆ y l hw − ˆ y l 0 hw 2 2 (16) where ⊙ denotes element-wise (Hadamard) multiplication across channels, and the differences are averaged over spatial dimensions. The final loss is computed as the batch mean across all samples. This constraint mitigates visually perceptible dis- tortions, enhancing both the stealth and perceptual integrity of the injected backdoor . The o verall training objective combines four complementary loss terms: L = λ 1 · L contrast + λ 2 · L soft + λ 3 · L L2 + λ 4 · L LPIPS (17) where λ 1 , λ 2 , λ 3 , λ 4 are scalar weights that balance the contri- butions of contrastive loss, soft label alignment, perturbation magnitude control, and perceptual consistenc y , respecti vely . 3) Stealthy Malicious Condensation: Once the attack model g θ is trained to generate perturbations that induce targeted mis- classification, we integrate the resulting adversarial examples into the condensation pipeline. Specifically , the condensation process for the target class is modified to include triggered samples, ensuring that the backdoor behavior is encoded in the surrogate model and subsequently transferred to the downstream model. a) Constructing the Mixed Data: T o embed backdoor behavior into the condensation data, we begin by constructing a mixed dataset that combines both clean and triggered samples. Giv en the candidate pool P τ , a set of corresponding trigger patterns ∆ ≜ { δ i = g θ ( x i ) | x i ∈ P τ } κ 1 ·|D o ∗ | i =1 is generated by the attack model g θ . Each pattern δ i is applied to the corresponding sample x i ∈ P τ , yielding the triggered set: T trigger = { x i + δ i | x i ∈ P τ , δ i ∈ ∆ } (18) T o a void ov erwhelming the original class distribution while still injecting the backdoor signal, only a small fraction of the triggered samples is incorporated into the clean target class. Specifically , we randomly select ρ · κ 1 · |D o ∗ | samples from T trigger and merge them with the clean samples from D τ . The resulting mixed dataset is defined as: T mixed = D τ ∪ { ( x atk i , τ ) } ρ · κ 1 ·|D o ∗ | i =1 , x atk i ∈ T trigger (19) This blending mechanism introduces the trigger pattern into the target class distribution in a controlled manner . It biases the model to ward associating the trigger with class τ while preserving generalization to clean inputs. b) Malicious Condensation: In the next step, we syn- thesize a condensed dataset e S that preserves model utility while embedding the intended backdoor behavior . For each class o = τ , standard dataset condensation is performed using only the clean data D o . The objective is to match the feature distributions of the real and synthetic samples: S o =arg min S o E x ∼ p D o ,x ′ ∼ p S o ,θ ∼ p θ D ( P D o ( x ; θ ) , P S o ( x ′ ; θ )) + λ R ( S o ) (20) P D o ( x ; θ ) and P S o ( x ′ ; θ ) denote the model-induced feature distributions over real and synthetic samples, respectiv ely , and R ( S o ) is a regularization term. For the target class τ , condensation is carried out using the mixed dataset T mixed constructed in the previous step. This allows the resulting synthetic subset e S τ to capture both the clean semantics and the adversarial characteristics introduced via the trigger . The optimization objecti ve is: e S τ =arg min e S τ E x ∼ p T mixed ,x ′ ∼ p e S τ ,θ ∼ p θ D P T mixed ( x ; θ ) , P e S τ ( x ′ ; θ ) + λ R ( e S τ ) (21) The final condensed dataset is assembled as e S = S o = τ S o ∪ e S τ , combining clean synthetic subsets for non-target classes with the backdoor -aware condensed subset for the tar get class. I V . E X P E R I M E N T S A. Dataset and Networks W e ev aluate InkDrop on four standard image classification benchmarks: STL-10 [29], T iny-ImageNet [30], CIF AR-10 [31], and SVHN [32]. These datasets cover a wide spectrum of visual complexity , semantic granularity , and image resolution, offering a robust basis for assessing the generalizability of InkDrop. Specifically , we retain 50 synthetic samples per class. T o construct the synthetic data, we employ two commonly adopted architectures: Con vNet , representing a compact model, and AlexNetBN [33], which represent lightweight and moderately expressi ve condensation encoders. For downstream model training and ev aluation, we utilize four network architectures of increasing complexity: Con vNet, AlexNetBN, VGG11 [34], and ResNet18 [35]. W e compare InkDrop against four recent state- of-the-art backdoor injection methods: Naive [15], Doorping [15], Simple [16], and Relax [16], enabling a comprehensive ev aluation of its ef fectiv eness across diverse settings. B. Evaluation Metrics W e ev aluate the attack across three primary dimensions: attack success rate (ASR), clean task accuracy (CT A), and stealthiness (STH). Following prior work [36], STH is quanti- fied using three complementary metrics. Firstly , Peak Signal- to-Noise Ratio (PSNR) measures pixel-le vel similarity between clean and triggered samples, with higher v alues indicating JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 8 PSNR SSIM I S ASR CT A 64.97 0.41 9.94 1.00 0.62 DM PSNR SSIM I S ASR CT A 65.24 0.47 9.87 1.00 0.67 IDM PSNR SSIM I S ASR CT A 64.94 0.75 9.92 1.00 0.55 DAM InkDrop Doorping Naive Simple Relax (a) STL-10 PSNR SSIM I S ASR CT A 63.06 0.63 9.76 1.00 0.50 DM PSNR SSIM I S ASR CT A 64.60 0.57 9.90 1.00 0.51 IDM PSNR SSIM I S ASR CT A 64.01 0.66 9.88 1.00 0.47 DAM InkDrop Doorping Naive Simple Relax (b) T iny-ImageNet PSNR SSIM I S ASR CT A 70.80 0.90 9.95 1.00 0.63 DM PSNR SSIM I S ASR CT A 70.62 0.69 9.94 1.00 0.65 IDM PSNR SSIM I S ASR CT A 70.55 0.89 9.95 1.00 0.59 DAM InkDrop Doorping Naive Simple Relax (c) CIF AR-10 PSNR SSIM I S ASR CT A 70.35 0.79 9.93 1.00 0.80 DM PSNR SSIM I S ASR CT A 66.74 0.85 9.83 1.00 0.84 IDM PSNR SSIM I S ASR CT A 70.60 0.79 9.94 1.00 0.77 DAM InkDrop Doorping Naive Simple Relax (d) SVHN Fig. 4. Radar plots showing normalized performance across attack success rate (ASR), clean task accurac y (CT A), and stealthiness (STE) for each method. Each subplot corresponds to a different dataset. Curves extending toward the outer edge indicate stronger ov erall performance. T ABLE II A T T A CK P ER F O RM A N C E W I TH C ON V N E T A S T H E C O ND E N S A T I O N B AC KB O N E . DM IDM D AM Dataset Method CT A ASR CT A ASR CT A ASR STL10 InkDrop 0 . 5805 ± 0 . 0008 1 . 0000 ± 0 . 0000 0 . 6476 ± 0 . 0007 0 . 9333 ± 0 . 0000 0 . 5350 ± 0 . 0011 1 . 0000 ± 0 . 0000 Doorping 0 . 5773 ± 0 . 0008 0 . 1488 ± 0 . 0069 0 . 6609 ± 0 . 0010 0 . 3144 ± 0 . 0153 0 . 5328 ± 0 . 0008 1 . 0000 ± 0 . 0000 Naiv e 0 . 6210 ± 0 . 0006 0 . 1032 ± 0 . 0060 0 . 6671 ± 0 . 0005 0 . 1024 ± 0 . 0073 0 . 5490 ± 0 . 0006 0 . 0876 ± 0 . 0085 Simple 0 . 5972 ± 0 . 0005 0 . 0964 ± 0 . 0094 0 . 6576 ± 0 . 0005 0 . 1000 ± 0 . 0068 0 . 5346 ± 0 . 0007 0 . 1032 ± 0 . 0037 Relax 0 . 5962 ± 0 . 0009 0 . 9996 ± 0 . 0008 0 . 6582 ± 0 . 0008 0 . 9536 ± 0 . 0110 0 . 5348 ± 0 . 0011 1 . 0000 ± 0 . 0000 Tiny ImageNet InkDrop 0 . 4764 ± 0 . 0024 1 . 0000 ± 0 . 0000 0 . 5000 ± 0 . 0047 1 . 0000 ± 0 . 0000 0 . 4688 ± 0 . 0037 1 . 0000 ± 0 . 0000 Doorping 0 . 4958 ± 0 . 0023 1 . 0000 ± 0 . 0000 0 . 5120 ± 0 . 0048 1 . 0000 ± 0 . 0000 0 . 4490 ± 0 . 0030 1 . 0000 ± 0 . 0000 Naiv e 0 . 4968 ± 0 . 0020 0 . 0704 ± 0 . 0020 0 . 5014 ± 0 . 0075 0 . 0424 ± 0 . 0038 0 . 4620 ± 0 . 0029 0 . 0418 ± 0 . 0019 Simple 0 . 4932 ± 0 . 0032 0 . 1000 ± 0 . 0038 0 . 5086 ± 0 . 0028 0 . 0462 ± 0 . 0015 0 . 4582 ± 0 . 0030 0 . 0820 ± 0 . 0024 Relax 0 . 4942 ± 0 . 0029 0 . 9960 ± 0 . 0000 0 . 4840 ± 0 . 0056 0 . 9412 ± 0 . 0019 0 . 4646 ± 0 . 0019 0 . 9730 ± 0 . 0011 CIF AR10 InkDrop 0 . 6217 ± 0 . 0006 0 . 9967 ± 0 . 0000 0 . 6471 ± 0 . 0009 1 . 0000 ± 0 . 0000 0 . 5872 ± 0 . 0005 0 . 9933 ± 0 . 0000 Doorping 0 . 6211 ± 0 . 0005 0 . 9876 ± 0 . 0050 0 . 6539 ± 0 . 0017 0 . 1648 ± 0 . 0070 0 . 5314 ± 0 . 0006 1 . 0000 ± 0 . 0000 Naiv e 0 . 6320 ± 0 . 0008 0 . 1128 ± 0 . 0123 0 . 6520 ± 0 . 0014 0 . 1032 ± 0 . 0063 0 . 5823 ± 0 . 0008 0 . 0864 ± 0 . 0032 Simple 0 . 5837 ± 0 . 0003 0 . 5896 ± 0 . 0118 0 . 6519 ± 0 . 0010 0 . 1424 ± 0 . 0081 0 . 5373 ± 0 . 0009 0 . 6736 ± 0 . 0317 Relax 0 . 5738 ± 0 . 0004 1 . 0000 ± 0 . 0000 0 . 6531 ± 0 . 0018 0 . 5216 ± 0 . 0206 0 . 5592 ± 0 . 0008 0 . 9996 ± 0 . 0008 SVHN InkDrop 0 . 7752 ± 0 . 0003 0 . 9976 ± 0 . 0000 0 . 8187 ± 0 . 0010 1 . 0000 ± 0 . 0000 0 . 7570 ± 0 . 0003 1 . 0000 ± 0 . 0000 Doorping 0 . 7797 ± 0 . 0006 0 . 9996 ± 0 . 0008 0 . 8392 ± 0 . 0012 0 . 0608 ± 0 . 0055 0 . 7209 ± 0 . 0004 1 . 0000 ± 0 . 0000 Naiv e 0 . 7985 ± 0 . 0002 0 . 1108 ± 0 . 0060 0 . 8401 ± 0 . 0004 0 . 1220 ± 0 . 0101 0 . 7703 ± 0 . 0003 0 . 1116 ± 0 . 0064 Simple 0 . 7479 ± 0 . 0002 0 . 1104 ± 0 . 0067 0 . 8415 ± 0 . 0009 0 . 1140 ± 0 . 0081 0 . 7589 ± 0 . 0005 0 . 1140 ± 0 . 0051 Relax 0 . 7473 ± 0 . 0004 1 . 0000 ± 0 . 0000 0 . 8336 ± 0 . 0024 0 . 9920 ± 0 . 0028 0 . 7450 ± 0 . 0008 1 . 0000 ± 0 . 0000 FMNIST InkDrop 0 . 8883 ± 0 . 0005 0 . 9972 ± 0 . 0000 0 . 8417 ± 0 . 0007 0 . 9856 ± 0 . 0011 0 . 8841 ± 0 . 0006 0 . 9889 ± 0 . 0000 Doorping 0 . 8764 ± 0 . 0004 0 . 0928 ± 0 . 0064 0 . 8841 ± 0 . 0003 0 . 9976 ± 0 . 0015 0 . 8126 ± 0 . 0007 1 . 0000 ± 0 . 0000 Naiv e 0 . 8868 ± 0 . 0005 0 . 0900 ± 0 . 0075 0 . 8867 ± 0 . 0004 0 . 0932 ± 0 . 0071 0 . 8811 ± 0 . 0004 0 . 0980 ± 0 . 0046 Simple 0 . 8682 ± 0 . 0004 0 . 1784 ± 0 . 0053 0 . 8786 ± 0 . 0002 0 . 1588 ± 0 . 0069 0 . 8801 ± 0 . 0002 0 . 1508 ± 0 . 0119 Relax 0 . 8279 ± 0 . 0003 1 . 0000 ± 0 . 0000 0 . 8750 ± 0 . 0005 1 . 0000 ± 0 . 0000 0 . 8737 ± 0 . 0003 1 . 0000 ± 0 . 0000 lower visual distortion. Secondly , Structural Similarity Index Measure (SSIM) assesses structural fidelity , where values closer to 1 reflect stronger perceptual alignment. Thirdly , Inception Score (IS) captures the KL diver gence between the predicted class distribution of an individual sample and the marginal distribution, serving as an indicator of semantic recognizability . For interpretability , we define a transformed stealth score as IS † = (10 − 3 − IS )e − 4 , such that higher values correspond to greater stealth and lower detectability . C. Experimental Results 1) Overall Attack P erformance: W e begin by ev aluating the overall attack performance of each backdoor method in balancing three fundamental objecti ves: ASR, CT A, and STH. T o illustrate the trade-offs among these competing factors, we present radar charts (Figure 4) that depict the normalized scores of each approach across all e valuation dimensions. These visualizations provide a unified vie w of how well each attack achieves high ASR, preserves CT A, and maintains visual STH. While existing methods exhibit reasonable performance in specific settings, they often fail to consistently inject JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 9 T ABLE III A T T A CK P ER F O RM A N C E W I TH A LE X N E T B N AS T HE C ON D E NS ATI O N B AC K BO N E . DM IDM D AM Dataset Method CT A ASR CT A ASR CT A ASR STL10 InkDrop 0 . 5553 ± 0 . 0040 0 . 9667 ± 0 . 0000 0 . 6774 ± 0 . 0012 0 . 9067 ± 0 . 0133 0 . 5597 ± 0 . 0067 1 . 0000 ± 0 . 0000 Doorping 0 . 5565 ± 0 . 0038 1 . 0000 ± 0 . 0000 0 . 6462 ± 0 . 0030 1 . 0000 ± 0 . 0000 0 . 5650 ± 0 . 0004 1 . 0000 ± 0 . 0000 Naiv e 0 . 5732 ± 0 . 0041 0 . 1044 ± 0 . 0097 0 . 7292 ± 0 . 0033 0 . 1000 ± 0 . 0067 0 . 6029 ± 0 . 0035 0 . 1008 ± 0 . 0097 Simple 0 . 5436 ± 0 . 0022 0 . 0924 ± 0 . 0072 0 . 7238 ± 0 . 0026 0 . 1016 ± 0 . 0128 0 . 5683 ± 0 . 0025 0 . 0984 ± 0 . 0096 Relax 0 . 5496 ± 0 . 0027 0 . 7064 ± 0 . 0097 0 . 7191 ± 0 . 0020 0 . 6676 ± 0 . 0287 0 . 5658 ± 0 . 0050 0 . 8724 ± 0 . 0223 Tiny ImageNet InkDrop 0 . 4474 ± 0 . 0015 1 . 0000 ± 0 . 0000 0 . 2652 ± 0 . 0044 1 . 0000 ± 0 . 0000 0 . 4048 ± 0 . 0062 1 . 0000 ± 0 . 0000 Dooring 0 . 4854 ± 0 . 0017 1 . 0000 ± 0 . 0000 0 . 2930 ± 0 . 0055 1 . 0000 ± 0 . 0000 0 . 4186 ± 0 . 0101 1 . 0000 ± 0 . 0000 Naiv e 0 . 4628 ± 0 . 0064 0 . 0082 ± 0 . 0013 0 . 2836 ± 0 . 0073 0 . 0000 ± 0 . 0000 0 . 4302 ± 0 . 0129 0 . 0100 ± 0 . 0013 Simple 0 . 4566 ± 0 . 0034 0 . 0110 ± 0 . 0018 0 . 3372 ± 0 . 0063 0 . 0534 ± 0 . 0082 0 . 4434 ± 0 . 0069 0 . 0128 ± 0 . 0023 Relax 0 . 4486 ± 0 . 0029 0 . 8354 ± 0 . 0167 0 . 3126 ± 0 . 0074 0 . 7594 ± 0 . 0577 0 . 4410 ± 0 . 0038 0 . 7870 ± 0 . 0273 CIF AR10 InkDrop 0 . 6037 ± 0 . 0042 0 . 9773 ± 0 . 0053 0 . 7263 ± 0 . 0016 1 . 0000 ± 0 . 0000 0 . 5941 ± 0 . 0023 0 . 9967 ± 0 . 0000 Doorping 0 . 5046 ± 0 . 0009 1 . 0000 ± 0 . 0000 0 . 6387 ± 0 . 0026 1 . 0000 ± 0 . 0000 0 . 5646 ± 0 . 0003 1 . 0000 ± 0 . 0000 Naiv e 0 . 6077 ± 0 . 0019 0 . 0928 ± 0 . 0111 0 . 7392 ± 0 . 0017 0 . 1044 ± 0 . 0086 0 . 6089 ± 0 . 0003 0 . 0964 ± 0 . 0101 Simple 0 . 5807 ± 0 . 0012 0 . 1828 ± 0 . 0127 0 . 7268 ± 0 . 0008 0 . 1456 ± 0 . 0094 0 . 5843 ± 0 . 0010 0 . 2040 ± 0 . 0236 Relax 0 . 6030 ± 0 . 0007 0 . 7040 ± 0 . 0219 0 . 2515 ± 0 . 0022 0 . 6356 ± 0 . 0239 0 . 5905 ± 0 . 0016 0 . 9776 ± 0 . 0043 SVHN InkDrop 0 . 8049 ± 0 . 0003 0 . 9941 ± 0 . 0000 0 . 8846 ± 0 . 0006 0 . 9796 ± 0 . 0027 0 . 8079 ± 0 . 0002 0 . 9863 ± 0 . 0000 Doorping 0 . 7741 ± 0 . 0013 1 . 0000 ± 0 . 0000 0 . 7811 ± 0 . 0016 1 . 0000 ± 0 . 0000 0 . 5931 ± 0 . 0033 1 . 0000 ± 0 . 0000 Naiv e 0 . 6973 ± 0 . 0068 0 . 1236 ± 0 . 0059 0 . 8858 ± 0 . 0006 0 . 1164 ± 0 . 0099 0 . 7007 ± 0 . 0021 0 . 1124 ± 0 . 0077 Simple 0 . 4844 ± 0 . 0097 0 . 0712 ± 0 . 0045 0 . 8797 ± 0 . 0008 0 . 1176 ± 0 . 0077 0 . 6932 ± 0 . 0062 0 . 0924 ± 0 . 0069 Relax 0 . 6723 ± 0 . 0092 0 . 9776 ± 0 . 0074 0 . 8736 ± 0 . 0008 0 . 9996 ± 0 . 0008 0 . 6921 ± 0 . 0028 0 . 9956 ± 0 . 0027 FMNIST InkDrop 0 . 8088 ± 0 . 0035 0 . 9833 ± 0 . 0000 0 . 8569 ± 0 . 0004 0 . 9844 ± 0 . 0014 0 . 8171 ± 0 . 0021 1 . 0000 ± 0 . 0000 Doorping 0 . 6356 ± 0 . 0047 1 . 0000 ± 0 . 0000 0 . 7364 ± 0 . 0011 1 . 0000 ± 0 . 0000 0 . 7579 ± 0 . 0029 1 . 0000 ± 0 . 0000 Naiv e 0 . 8435 ± 0 . 0010 0 . 0904 ± 0 . 0098 0 . 8578 ± 0 . 0008 0 . 1132 ± 0 . 0030 0 . 8208 ± 0 . 0022 0 . 1000 ± 0 . 0028 Simple 0 . 8122 ± 0 . 0064 0 . 9516 ± 0 . 0090 0 . 8489 ± 0 . 0012 0 . 2308 ± 0 . 0281 0 . 8063 ± 0 . 0023 0 . 4816 ± 0 . 1284 Relax 0 . 8155 ± 0 . 0028 1 . 0000 ± 0 . 0000 0 . 8558 ± 0 . 0010 0 . 7188 ± 0 . 0148 0 . 8108 ± 0 . 0016 1 . 0000 ± 0 . 0000 effecti ve backdoors across diverse datasets and condensation strategies. Their attack success is inconsistent, particularly under varying le vels of visual complexity . In contrast, InkDrop consistently achiev es high ASR across all ev aluated datasets and condensation strate gies, demonstrating superior rob ustness and adaptability to varying synthetic training distributions. Moreov er , although some existing methods achie ve a reason- able balance between ASR and CT A, they often compromise on stealth. As shown in Figure 4, their performance on perceptual metrics such as PSNR, SSIM, and IS † is notably weak er, indicating lower visual fidelity and higher detectability of the injected triggers. In comparison, InkDrop achiev es a more fa vorable balance, embedding imperceptible perturbations that preserve benign beha vior while sustaining attack efficac y . These findings underscore the necessity of jointly optimizing stealth- iness, model utility , and attack effecti veness when designing backdoor attacks within dataset condensation frameworks. 2) Attac k Effectiveness on Differ ent Datasets and Synthetic Backbone: Based on the results shown in T able II and III, we provide a comprehensi ve ev aluation of attack performance across fiv e datasets, three condensation strategies, and fi ve attack methods. The ev aluation focuses on two key metrics: CT A and ASR, which jointly characterize the trade-off be- tween maintaining model utility and achie ving effectiv e attack injection. Overall, InkDrop consistently achieves near-perfect ASR while maintaining competitiv e CT A, demonstrating its ability to reliably embed backdoors without compromising model utility across di verse scenarios. Among the baselines, Doorping is the strongest competitor , frequently attaining high ASR and reasonable CT A across datasets. Howe ver , it is often DM IDM DAM 0 20 40 60 PSNR DM IDM DAM 0.0 0.2 0.4 0.6 SSIM DM IDM DAM 0 2e-4 4e-4 6e-4 IS Doorping Naive Simple Relax InkDrop (a) STL-10 DM IDM DAM 0 20 40 60 PSNR DM IDM DAM 0.0 0.2 0.4 0.6 SSIM DM IDM DAM 0 1e-4 2e-4 3e-4 IS Doorping Naive Simple Relax InkDrop (b) T iny-ImageNet DM IDM DAM 0 20 40 60 PSNR DM IDM DAM 0.0 0.2 0.4 0.6 0.8 SSIM DM IDM DAM 0 1e-4 2e-4 3e-4 4e-4 IS Doorping Naive Simple Relax InkDrop (c) CIF AR-10 Fig. 5. Comparison of stealthiness across different datasets using PSNR, SSIM, and IS † metrics. accompanied by reduced stealthiness. In contrast, Nai ve, Simple, and Relax exhibit limited ef fectiv eness, with attack success confined to specific configurations. These methods often fail to achieve a fa vorable ASR-CT A balance, particularly on more complex datasets or under complex condensation strategies. For instance, under the DM setting on STL10, Naiv e, JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 10 T ABLE IV C RO S S - A R C HI T E C TU R E A TTAC K T R A N SF E R AB I L I TY W IT H C O N V N ET S YN T H E SI Z E R . DM IDM D AM Dataset Network CT A ASR CT A ASR CT A ASR STL10 AlexNetBN 0 . 6014 ± 0 . 0015 1 . 0000 ± 0 . 0000 0 . 6568 ± 0 . 0017 1 . 0000 ± 0 . 0000 0 . 6312 ± 0 . 0010 1 . 0000 ± 0 . 0000 ResNet18 0 . 4498 ± 0 . 0010 1 . 0000 ± 0 . 0000 0 . 6246 ± 0 . 0014 1 . 0000 ± 0 . 0000 0 . 4267 ± 0 . 0007 1 . 0000 ± 0 . 0000 VGG11 0 . 5772 ± 0 . 0010 1 . 0000 ± 0 . 0000 0 . 6594 ± 0 . 0005 1 . 0000 ± 0 . 0000 0 . 5482 ± 0 . 0003 1 . 0000 ± 0 . 0000 Tiny ImageNet AlexNetBN 0 . 5328 ± 0 . 0023 1 . 0000 ± 0 . 0000 0 . 5472 ± 0 . 0035 1 . 0000 ± 0 . 0000 0 . 5594 ± 0 . 0043 1 . 0000 ± 0 . 0000 ResNet18 0 . 4508 ± 0 . 0016 1 . 0000 ± 0 . 0000 0 . 4826 ± 0 . 0037 1 . 0000 ± 0 . 0000 0 . 4454 ± 0 . 0015 1 . 0000 ± 0 . 0000 VGG11 0 . 4692 ± 0 . 0013 1 . 0000 ± 0 . 0000 0 . 5324 ± 0 . 0016 1 . 0000 ± 0 . 0000 0 . 4456 ± 0 . 0021 1 . 0000 ± 0 . 0000 CIF AR10 AlexNetBN 0 . 6209 ± 0 . 0013 1 . 0000 ± 0 . 0000 0 . 6922 ± 0 . 0008 1 . 0000 ± 0 . 0000 0 . 6240 ± 0 . 0016 1 . 0000 ± 0 . 0000 ResNet18 0 . 5566 ± 0 . 0002 1 . 0000 ± 0 . 0000 0 . 6563 ± 0 . 0004 1 . 0000 ± 0 . 0000 0 . 5244 ± 0 . 0003 1 . 0000 ± 0 . 0000 VGG11 0 . 5827 ± 0 . 0002 1 . 0000 ± 0 . 0000 0 . 6538 ± 0 . 0002 1 . 0000 ± 0 . 0000 0 . 5423 ± 0 . 0002 1 . 0000 ± 0 . 0000 SVHN AlexNetBN 0 . 7540 ± 0 . 0006 1 . 0000 ± 0 . 0000 0 . 8315 ± 0 . 0008 1 . 0000 ± 0 . 0000 0 . 7820 ± 0 . 0009 1 . 0000 ± 0 . 0000 ResNet18 0 . 8074 ± 0 . 0002 1 . 0000 ± 0 . 0000 0 . 8348 ± 0 . 0005 1 . 0000 ± 0 . 0000 0 . 7949 ± 0 . 0003 1 . 0000 ± 0 . 0000 VGG11 0 . 8263 ± 0 . 0001 1 . 0000 ± 0 . 0000 0 . 8402 ± 0 . 0001 1 . 0000 ± 0 . 0000 0 . 7970 ± 0 . 0001 1 . 0000 ± 0 . 0000 T ABLE V C RO S S - A R C HI T E C TU R E A TTAC K T R A N SF E R AB I L I TY W IT H A L E X N ET B N S Y N T HE S I ZE R . DM IDM D AM Dataset Network CT A ASR CT A ASR CT A ASR STL10 Con vNet 0 . 5392 ± 0 . 0002 1 . 0000 ± 0 . 0000 0 . 6768 ± 0 . 0007 1 . 0000 ± 0 . 0000 0 . 5413 ± 0 . 0004 1 . 0000 ± 0 . 0000 ResNet18 0 . 4340 ± 0 . 0009 1 . 0000 ± 0 . 0000 0 . 6333 ± 0 . 0004 1 . 0000 ± 0 . 0000 0 . 4808 ± 0 . 0011 1 . 0000 ± 0 . 0000 VGG11 0 . 5332 ± 0 . 0008 1 . 0000 ± 0 . 0000 0 . 6596 ± 0 . 0004 1 . 0000 ± 0 . 0000 0 . 5425 ± 0 . 0007 1 . 0000 ± 0 . 0000 Tiny ImageNet Con vNet 0 . 4284 ± 0 . 0024 1 . 0000 ± 0 . 0000 0 . 3728 ± 0 . 0071 1 . 0000 ± 0 . 0000 0 . 4492 ± 0 . 0020 0 . 9667 ± 0 . 0000 ResNet18 0 . 3980 ± 0 . 0030 1 . 0000 ± 0 . 0000 0 . 3086 ± 0 . 0100 1 . 0000 ± 0 . 0000 0 . 4058 ± 0 . 0013 1 . 0000 ± 0 . 0000 VGG11 0 . 4042 ± 0 . 0018 1 . 0000 ± 0 . 0000 0 . 4638 ± 0 . 0037 1 . 0000 ± 0 . 0000 0 . 4122 ± 0 . 0016 0 . 9667 ± 0 . 0000 CIF AR10 Con vNet 0 . 5836 ± 0 . 0008 1 . 0000 ± 0 . 0000 0 . 6656 ± 0 . 0008 1 . 0000 ± 0 . 0000 0 . 5744 ± 0 . 0008 1 . 0000 ± 0 . 0000 ResNet18 0 . 5305 ± 0 . 0005 0 . 9967 ± 0 . 0000 0 . 7011 ± 0 . 0002 1 . 0000 ± 0 . 0000 0 . 5151 ± 0 . 0003 1 . 0000 ± 0 . 0000 VGG11 0 . 5479 ± 0 . 0000 1 . 0000 ± 0 . 0000 0 . 6862 ± 0 . 0006 1 . 0000 ± 0 . 0000 0 . 5449 ± 0 . 0001 1 . 0000 ± 0 . 0000 SVHN Con vNet 0 . 7951 ± 0 . 0004 1 . 0000 ± 0 . 0000 0 . 8412 ± 0 . 0015 1 . 0000 ± 0 . 0000 0 . 7963 ± 0 . 0002 1 . 0000 ± 0 . 0000 ResNet18 0 . 8193 ± 0 . 0002 0 . 9980 ± 0 . 0000 0 . 8964 ± 0 . 0007 1 . 0000 ± 0 . 0000 0 . 8177 ± 0 . 0003 1 . 0000 ± 0 . 0000 VGG11 0 . 8253 ± 0 . 0001 1 . 0000 ± 0 . 0000 0 . 8811 ± 0 . 0003 1 . 0000 ± 0 . 0000 0 . 8347 ± 0 . 0001 1 . 0000 ± 0 . 0000 Simple, and Relax achie ve ASRs of only 0.1488, 0.1032, and 0.0964, respectiv ely , indicating an inability to inject reliable triggers. Moreover , their performance further deteriorates under more adv anced strategies such as IDM and D AM, where maintaining both ASR and CT A becomes increasingly difficult. The results underscore the consistent superiority of InkDrop, which deli vers stable, high-performance backdoor attacks across all datasets and condensation methods. While Doorping remains a viable baseline, its reduced stealthiness limits its practicality in sensiti ve settings. The remaining methods prove fragile and unreliable in realistic or challenging en vironments. These findings highlight the necessity of designing backdoor strategies that are not only ef fectiv e b ut also rob ust to v ariations in data distribution. 3) Effectiveness on Cr oss Arc hitectur es: T o ev aluate the cross-architecture robustness of InkDrop, we perform exper- iments in which the condensation and downstream models differ in architectural capacity . This setting mirrors real-world deployment scenarios, where synthetic datasets are often reused across heterogeneous model families. Following [15], we con- sider four representativ e architectures: Con vNet, AlexNetBN, VGG11, and ResNet18, and construct all non-identical model pairs by using one network for condensation and a dif ferent one for downstream ev aluation. As reported in T able IV and V, I N K D RO P consistently achiev es high ASR and maintains reasonable CT A across the majority of model combinations. This indicates that the backdoor signal is not narrowly tailored to the condensation model but instead transfers reliably to div erse architectures. Even in more challenging settings, such as transferring from a lightweight encoder like ConvNet to a deeper network such as ResNet18, InkDrop preserves strong attack efficac y . While slight variations in performance are observed under certain architecture pairs, these fluctuations are minor and do not compromise the overall integrity of the attack. These results underscore the adaptability of InkDrop, demonstrating its ability to maintain both attack strength and model utility under cross-architecture settings. 4) Evaluation of Stealthiness: As shown in Figure 5, InkDrop exhibits superior stealthiness performance across all condensation strategies, consistently surpassing baseline meth- ods in perceptual fidelity . The generated perturbations preserve high visual quality , as reflected in results across all three stealthiness metrics. This indicates that the injected triggers are subtle and non-disruptiv e, maintaining both local structure and global perceptual coherence. Unlik e prior methods, which often introduce conspicuous artifacts or semantic inconsistencies, InkDrop produces natural-looking modifications that closely resemble clean samples. While certain baselines achie ve mod- JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 11 T ABLE VI A T T A CK E FFE C T IV E N E SS W IT H V A RYI N G T A RG E T . T arget Class 1 T arget Class 2 T arget Class 3 Dataset Method CT A ASR CT A ASR CT A ASR STL10 DM 0 . 5846 ± 0 . 0008 1 . 0000 ± 0 . 0000 0 . 5762 ± 0 . 0006 0 . 9667 ± 0 . 0000 0 . 5836 ± 0 . 0007 0 . 9667 ± 0 . 0000 IDM 0 . 6491 ± 0 . 0010 0 . 9133 ± 0 . 0163 0 . 6454 ± 0 . 0011 1 . 0000 ± 0 . 0000 0 . 6619 ± 0 . 0009 0 . 9333 ± 0 . 0000 D AM 0 . 5355 ± 0 . 0008 1 . 0000 ± 0 . 0000 0 . 5440 ± 0 . 0011 1 . 0000 ± 0 . 0000 0 . 5280 ± 0 . 0010 1 . 0000 ± 0 . 0000 Tiny ImageNet DM 0 . 4910 ± 0 . 0017 1 . 0000 ± 0 . 0000 0 . 4992 ± 0 . 0022 1 . 0000 ± 0 . 0000 0 . 5196 ± 0 . 0017 1 . 0000 ± 0 . 0000 IDM 0 . 5084 ± 0 . 0040 1 . 0000 ± 0 . 0000 0 . 4968 ± 0 . 0050 0 . 9667 ± 0 . 0000 0 . 5140 ± 0 . 0021 0 . 9667 ± 0 . 0000 D AM 0 . 4616 ± 0 . 0022 1 . 0000 ± 0 . 0000 0 . 4710 ± 0 . 0044 1 . 0000 ± 0 . 0000 0 . 4814 ± 0 . 0014 1 . 0000 ± 0 . 0000 CIF AR10 DM 0 . 6038 ± 0 . 0005 1 . 0000 ± 0 . 0000 0 . 6037 ± 0 . 0002 1 . 0000 ± 0 . 0000 0 . 6006 ± 0 . 0007 1 . 0000 ± 0 . 0000 IDM 0 . 6494 ± 0 . 0016 1 . 0000 ± 0 . 0000 0 . 6487 ± 0 . 0015 1 . 0000 ± 0 . 0000 0 . 6525 ± 0 . 0010 0 . 9967 ± 0 . 0000 D AM 0 . 5774 ± 0 . 0004 1 . 0000 ± 0 . 0000 0 . 5857 ± 0 . 0004 1 . 0000 ± 0 . 0000 0 . 5817 ± 0 . 0008 1 . 0000 ± 0 . 0000 SVHN DM 0 . 7692 ± 0 . 0005 1 . 0000 ± 0 . 0000 0 . 7796 ± 0 . 0005 1 . 0000 ± 0 . 0000 0 . 8077 ± 0 . 0005 1 . 0000 ± 0 . 0000 IDM 0 . 8176 ± 0 . 0016 1 . 0000 ± 0 . 0000 0 . 8238 ± 0 . 0012 1 . 0000 ± 0 . 0000 0 . 8241 ± 0 . 0013 1 . 0000 ± 0 . 0000 D AM 0 . 7740 ± 0 . 0006 1 . 0000 ± 0 . 0000 0 . 7595 ± 0 . 0006 1 . 0000 ± 0 . 0000 0 . 7662 ± 0 . 0005 1 . 0000 ± 0 . 0000 T ABLE VII I N DI V I D UA L C O NT R I BU T I O NS O F E AC H L O S S C O MP O N EN T IN I NK D RO P . Loss Func CT A ASR PSNR SSIM IS L contrast 0 . 6048 ± 0 . 0003 0 . 8667 ± 0 . 0000 65 . 7008 0 . 1559 8 . 7760 × 10 − 6 L contrast + L sof t 0 . 6071 ± 0 . 0009 1 . 0000 ± 0 . 0000 58 . 9198 − 0 . 0257 5 . 9028 × 10 − 5 L contrast + L sof t + L L 2 0 . 6081 ± 0 . 0002 1 . 0000 ± 0 . 0000 63 . 5818 0 . 1028 1 . 2014 × 10 − 5 L contrast + L sof t + L L 2 + L LP I P S 0 . 6092 ± 0 . 0003 1 . 0000 ± 0 . 0000 64 . 9668 0 . 1324 6 . 2835 × 10 − 6 T ABLE VIII I M P AC T O F V A RY IN G I P C ( I M AG E P E R C L A SS , N U M B E R O F S Y N TH E T IC S AM P L E S P E R C L AS S ) O N A S R . IPC = 10 IPC = 20 IPC = 50 Method CT A ASR CT A ASR CT A ASR DM 0 . 4469 ± 0 . 0006 1 . 0000 ± 0 . 0000 0 . 5072 ± 0 . 0005 0 . 9667 ± 0 . 0000 0 . 5805 ± 0 . 0008 1 . 0000 ± 0 . 0000 IDM 0 . 5851 ± 0 . 0010 0 . 9333 ± 0 . 0000 0 . 6223 ± 0 . 0015 0 . 9667 ± 0 . 0000 0 . 6476 ± 0 . 0007 0 . 9333 ± 0 . 0000 D AM 0 . 3909 ± 0 . 0003 0 . 9667 ± 0 . 0000 0 . 4508 ± 0 . 0008 1 . 0000 ± 0 . 0000 0 . 5350 ± 0 . 0011 1 . 0000 ± 0 . 0000 Clean Ima ges Triggered Image s Fig. 6. V isual comparison between clean and triggered samples on STL10. erate scores on indi vidual stealth indicators, they compromise on ASR. In contrast, InkDrop achieves a fa vorable balance, embedding imperceptible triggers without compromising ASR or CT A. These findings underscore the ef fectiv eness of jointly optimizing for stealthiness and functionality during attack construction. Moreov er , Figure 6 provides a side-by-side visualization of clean samples and their corresponding triggered versions synthesized by InkDrop on the STL10 dataset. The injected perturbations are visually subtle, preserving both local texture and global structure with minimal perceptual deviation. Notably , the modifications introduce no apparent artifacts or semantic inconsistencies, highlighting the ef fectiv eness of the stealth- aware objectiv e. This visual e vidence supports the claim that InkDrop generates imperceptible triggers while maintaining high visual fidelity . 5) Attack Ef fectiveness with V arying T ar get: T o further ev aluate the robustness and adaptability of InkDrop, we conduct comprehensiv e experiments in which the target class is randomly selected across various datasets and condensation strategies, as shown in T able VI. This setting simulates realistic scenarios where the attacker can flexibly choose an effecti ve target class rather than relying on fixed assumptions. Despite the variability introduced by dif ferent target classes, InkDrop consistently achie ves high ASR. These results demonstrate that InkDrop can effecti vely synthesize trigger patterns that align with di verse class distrib utions, without relying on class-specific heuristics or manual adjustments. This resilience to target class v ariability , combined with its compatibility across condensation JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 12 T ABLE IX A T T A CK E FFE C T IV E N E SS O F I N K D RO P WH E N A P P L Y I NG D EF E N S ES S UC H AS P DB A ND R NP . DM IDM D AM PDB RNP PDB RNP PDB RNP Dataset CT A ASR CT A ASR CT A ASR CT A ASR CT A ASR CT A ASR STL10 0.0940 0.1000 0.4641 1.0000 0.1658 0.9000 0.3015 0.6333 0.0800 0.2333 0.5343 1.0000 Tiny-ImageNet 0.0540 0.0333 0.2100 0.0000 0.0950 0.2333 0.0820 0.0000 0.0390 0.1333 0.2200 0.8667 CIF AR10 0.0723 0.1333 0.2900 0.9933 0.1994 0.5267 0.2407 0.5867 0.0647 0.1033 0.3972 1.0000 SVHN 0.0657 0.1429 0.5192 1.0000 0.2323 0.6538 0.6082 0.9952 0.0547 0.0799 0.6120 1.0000 framew orks, underscores the practicality of InkDrop for real- world deployment, where attackers may seek to optimize impact under dynamic or uncertain conditions. 6) Ablation Study: T able VII reports an ablation study on the STL10 dataset, ev aluating the individual contribution of each loss component in InkDrop. With only the contrastiv e loss, the model achie ves a moderate ASR of 0.8667, indicating initial effecti veness in encoding backdoor beha vior . Introducing soft label alignment loss L soft elev ates ASR to 1, but at the expense of PSNR, SSIM, and IS, reflecting a reduction in visual fidelity . This indicates that the trigger becomes more effecti ve, but also more conspicuous. Adding the L2 regularization term L L2 improv es stealthiness by suppressing the magnitude of excessi ve perturbation, yielding better stealthiness metrics while preserving attack efficacy . The final addition of perceptual loss L LPIPS further enhances visual realism without sacrificing ASR or CT A. Collectively , these results demonstrate the complementary nature of loss components: L contrast and L soft promote effecti ve backdoor embedding, while L L2 and L LPIPS refine stealth and perturbation quality . T ABLE X A T T A CK E FFE C T IV E N E SS O F I N K D RO P WH E N A P P L Y I NG P IX E L A N D A B S . DM IDM D AM Dataset PIXEL ABS PIXEL ABS PIXEL ABS STL10 1.7860 0.20 0.9122 0.18 1.6289 0.16 Tiny-ImageNet 1.1632 0.22 1.8707 0.10 1.6165 0.10 CIF AR10 1.4333 0.44 1.9543 0.38 1.0792 0.51 SVHN 1.7061 0.30 0.8672 0.38 0.9636 0.39 7) Influence of the Size of Condensation Data: T able VIII analyzes how varying the number of images per class (IPC) in the distilled dataset influences the attack performance of InkDrop. Across all condensation strategies and IPC settings of 10, 20, and 50, the attack consistently achieves high ASR. This demonstrates that InkDrop reliably embeds effecti ve backdoors independent of the synthetic dataset size. While CT A increases with larger IPC values, the attack remains effecti ve ev en under constrained data conditions. These results highlight InkDrop’ s effecti veness in handling dif ferent data volumes. 8) Rob ust to Existing Defenses: T o ev aluate the rob ustness of InkDrop against existing defenses, we systematically assess its performance under a comprehensive set of state-of-the-art strategies, including model-lev el, input-le vel, and dataset-level defenses (as shown in T able X and IX). Across all ev aluated settings, InkDrop maintains high ASR and competitiv e CT A, demonstrating strong resilience. For model-level defenses such as PIXEL [37], InkDrop consistently yields anomaly scores belo w detection thresholds, indicating that the injected triggers do not induce recognizable deviations. Input-level defenses like ABS [38], which aim to reconstruct clean inputs, exhibit limited effecti veness; the regenerated inputs f ail to remove the backdoor effect, resulting in sustained attack success. Dataset- le vel methods such as RNP [39] and PDB [40], which attempt to filter poisoned samples, occasionally suppress ASR but simultaneously reduce CT A, reflecting a substantial trade-off. These results underscore the difficulty of defending against the subtle, input-adaptiv e perturbations introduced by InkDrop and highlight its robustness across div erse defense paradigms. V . C O N C L U S I O N This paper presents InkDrop, a stealthy backdoor attack tailored for dataset condensation. Motiv ated by the insight that samples near decision boundaries are inherently susceptible to class shifts, InkDrop selectively perturbs these boundary- adjacent instances. It employs a learnable, instance-specific trig- ger generator trained via a multi-objecti ve loss that integrates contrastiv e alignment, distribution matching, and perceptual consistency . Comprehensive ev aluations across diverse datasets, condensation strategies, and defense mechanisms demonstrate that InkDrop consistently achie ves a fav orable balance between attack effecti veness, model utility , and stealthiness. These find- ings highlight the crucial role of decision-boundary sensiti vity and stealth-aware design in developing effecti ve and resilient backdoor attacks under realistic constraints. R E F E R E N C E S [1] T . W ang, J.-Y . Zhu, A. T orralba, and A. A. Efros, “Dataset distillation, ” arXiv preprint arXiv:1811.10959 , 2018. [2] B. Zhao, K. R. Mopuri, and H. Bilen, “Dataset condensation with gradient matching, ” arXiv pr eprint arXiv:2006.05929 , 2020. [3] Y . He, L. Xiao, J. T . Zhou, and I. W . Tsang, “Multisize dataset condensation, ” in The T welfth International Conference on Learning Repr esentations, ICLR 2024, V ienna, Austria, May 7-11, 2024 . Open- Revie w .net, 2024. [4] D. Qi, J. Li, J. Peng, B. Zhao, S. Dou, J. Li, J. Zhang, Y . W ang, C. W ang, and C. Zhao, “Fetch and forge: Ef ficient dataset condensation for object detection, ” Advances in Neural Information Pr ocessing Systems , v ol. 37, pp. 119 283–119 300, 2024. [5] H. Zhang, S. Li, P . W ang, D. Zeng, and S. Ge, “M3d: Dataset conden- sation by minimizing maximum mean discrepanc y , ” in Pr oceedings of the AAAI Confer ence on Artificial Intelligence , vol. 38, no. 8, 2024, pp. 9314–9322. [6] P . Sun, B. Shi, D. Y u, and T . Lin, “On the diversity and realism of distilled dataset: An efficient dataset distillation paradigm, ” in Pr oceedings of the IEEE/CVF Confer ence on Computer V ision and P attern Recognition , 2024, pp. 9390–9399. JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 13 [7] D. Su, J. Hou, W . Gao, Y . Tian, and B. T ang, “Dˆ 4: Dataset distillation via disentangled dif fusion model, ” in Pr oceedings of the IEEE/CVF Confer ence on Computer V ision and P attern Recognition , 2024, pp. 5809–5818. [8] J. Du, J. Hu, W . Huang, J. T . Zhou et al. , “Di versity-driv en synthesis: Enhancing dataset distillation through directed weight adjustment, ” Advances in neural information pr ocessing systems , v ol. 37, pp. 119 443– 119 465, 2024. [9] T . Dong, B. Zhao, and L. Lyu, “Priv acy for free: How does dataset condensation help pri vacy?” in International Confer ence on Machine Learning . PMLR, 2022, pp. 5378–5396. [10] S. Sun, H. Sun, H. Zhang, and Y . Zhang, “ A study of backdoor attacks on data distillation for text classification tasks, ” in 2024 IEEE 23r d International Conference on T rust, Security and Privacy in Computing and Communications (TrustCom) . IEEE, 2024, pp. 2517–2522. [11] K. Zhang, Y . Song, S. Xu, P . Li, R. Qian, P . Han, and L. Xu, “Risk of text backdoor attacks under dataset distillation, ” in International Confer ence on Information Security . Springer, 2024, pp. 127–144. [12] E. Xue, Y . Li, H. Liu, P . W ang, Y . Shen, and H. W ang, “T ow ards adversarially robust dataset distillation by curvature regularization, ” in Pr oceedings of the AAAI Confer ence on Artificial Intelligence , vol. 39, no. 9, 2025, pp. 9041–9049. [13] Z. Zhou, W . Feng, S. L yu, G. Cheng, X. Huang, and Q. Zhao, “Beard: Benchmarking the adversarial robustness for dataset distillation, ” arXiv pr eprint arXiv:2411.09265 , 2024. [14] Y . W u, J. Du, P . Liu, Y . Lin, W . Xu, and W . Cheng, “Dd-rob ustbench: An adversarial robustness benchmark for dataset distillation, ” IEEE T ransactions on Image Pr ocessing , 2025. [15] Y . Liu, Z. Li, M. Backes, Y . Shen, and Y . Zhang, “Backdoor attacks against dataset distillation, ” arXiv preprint , 2023. [16] M.-Y . Chung, S.-Y . Chou, C.-M. Y u, P .-Y . Chen, S.-Y . Kuo, and T .-Y . Ho, “Rethinking backdoor attacks on dataset distillation: A kernel method perspective, ” in The T welfth International Conference on Learning Representations , 2024. [Online]. A vailable: https: //openrevie w .net/forum?id=iCNOK45Csv [17] T . Zheng and B. Li, “Rdm-dc: poisoning resilient dataset condensation with robust distrib ution matching, ” in Uncertainty in Artificial Intelligence . PMLR, 2023, pp. 2541–2550. [18] M. Arazzi, M. Cihangiroglu, S. Nicolazzo, and A. Nocera, “Secure federated data distillation, ” arXiv pr eprint arXiv:2502.13728 , 2025. [19] Z. Y ang, M. Y an, Y . Zhang, and J. T . Zhou, “Dark distillation: Backdooring distilled datasets without accessing raw data, ” arXiv preprint arXiv:2502.04229 , 2025. [20] Z. Chai, Z. Gao, Y . Lin, C. Zhao, X. Y u, and Z. Xie, “ Adaptive backdoor attacks against dataset distillation for federated learning, ” in ICC 2024- IEEE International Conference on Communications . IEEE, 2024, pp. 4614–4619. [21] S. Lei and D. T ao, “ A comprehensive survey of dataset distillation, ” IEEE T ransactions on P attern Analysis and Machine Intelligence , vol. 46, no. 1, pp. 17–32, 2023. [22] R. Y u, S. Liu, and X. W ang, “Dataset distillation: A comprehensive revie w , ” IEEE transactions on pattern analysis and machine intelligence , vol. 46, no. 1, pp. 150–170, 2023. [23] Y . Liu, J. Gu, K. W ang, Z. Zhu, W . Jiang, and Y . Y ou, “Dream: Efficient dataset distillation by representative matching, ” in Pr oceedings of the IEEE/CVF International Conference on Computer V ision , 2023, pp. 17 314–17 324. [24] G. Zhao, G. Li, Y . Qin, and Y . Y u, “Impro ved distribution matching for dataset condensation, ” in Pr oceedings of the IEEE/CVF Confer ence on Computer V ision and P attern Recognition , 2023, pp. 7856–7865. [25] A. Sajedi, S. Khaki, E. Amjadian, L. Z. Liu, Y . A. Lawryshyn, and K. N. Plataniotis, “Datadam: Efficient dataset distillation with attention matching, ” in Pr oceedings of the IEEE/CVF International Confer ence on Computer V ision , 2023, pp. 17 097–17 107. [26] A. v . d. Oord, Y . Li, and O. V inyals, “Representation learning with contrastiv e predictive coding, ” arXiv pr eprint arXiv:1807.03748 , 2018. [27] Y . Rubner, C. T omasi, and L. J. Guibas, “The earth mover’ s distance as a metric for image retrie val, ” International journal of computer vision , vol. 40, pp. 99–121, 2000. [28] R. Zhang, P . Isola, A. A. Efros, E. Shechtman, and O. W ang, “The unreasonable effecti veness of deep features as a perceptual metric, ” in Pr oceedings of the IEEE conference on computer vision and pattern r ecognition , 2018, pp. 586–595. [29] A. Coates, A. Ng, and H. Lee, “ An analysis of single-layer networks in unsupervised feature learning, ” in Proceedings of the fourteenth international conference on artificial intellig ence and statistics . JMLR W orkshop and Conference Proceedings, 2011, pp. 215–223. [30] Y . Le and X. Y ang, “T iny imagenet visual recognition challenge, ” CS 231N , vol. 7, no. 7, p. 3, 2015. [31] A. Krizhevsk y , G. Hinton et al. , “Learning multiple layers of features from tiny images, ” 2009. [32] Y . Netzer , T . W ang, A. Coates, A. Bissacco, B. W u, A. Y . Ng et al. , “Reading digits in natural images with unsupervised feature learning, ” in NIPS workshop on deep learning and unsupervised featur e learning , vol. 2011, no. 2. Granada, 2011, p. 4. [33] A. Krizhevsky , I. Sutskever , and G. E. Hinton, “Imagenet classification with deep conv olutional neural networks, ” in Advances in Neural Information Pr ocessing Systems , vol. 25. Curran Associates, Inc., 2012. [34] K. Simonyan and A. Zisserman, “V ery deep con volutional networks for large-scale image recognition, ” arXiv pr eprint arXiv:1409.1556 , 2014. [35] K. He, X. Zhang, S. Ren, and J. Sun, “Deep residual learning for image recognition, ” in Pr oceedings of the IEEE conference on computer vision and pattern recognition , 2016, pp. 770–778. [36] J. Xia, Z. Y ue, Y . Zhou, Z. Ling, Y . Shi, X. W ei, and M. Chen, “W aveattack: Asymmetric frequency obfuscation-based backdoor attacks against deep neural networks, ” in Advances in Neural Information Pr ocessing Systems , vol. 37. Curran Associates, Inc., 2024, pp. 43 549– 43 570. [37] G. T ao, G. Shen, Y . Liu, S. An, Q. Xu, S. Ma, P . Li, and X. Zhang, “Better trigger inversion optimization in backdoor scanning, ” in 2022 Confer ence on Computer V ision and P attern Recognition (CVPR 2022) , 2022. [38] Y . Liu, W .-C. Lee, G. T ao, S. Ma, Y . Aafer, and X. Zhang, “ Abs: Scanning neural netw orks for back-doors by artificial brain stimulation, ” in Pr oceedings of the 2019 A CM SIGSAC Confer ence on Computer and Communications Security , ser . CCS ’19. New Y ork, NY , USA: Association for Computing Machinery , 2019, p. 1265–1282. [39] Y . Li, X. L yu, X. Ma, N. Koren, L. Lyu, B. Li, and Y .-G. Jiang, “Reconstructiv e neuron pruning for backdoor defense, ” in ICML , 2023. [40] S. W ei, H. Zha, and B. W u, “Mitigating backdoor attack by injecting proactiv e defensive backdoor , ” in Thirty-eighth Confer ence on Neur al Information Pr ocessing Systems , 2024.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment