Koopman-based surrogate modeling for reinforcement-learning-control of Rayleigh-Bénard convection

Training reinforcement learning (RL) agents to control fluid dynamics systems is computationally expensive due to the high cost of direct numerical simulations (DNS) of the governing equations. Surrogate models offer a promising alternative by approx…

Authors: Tim Plotzki, Sebastian Peitz

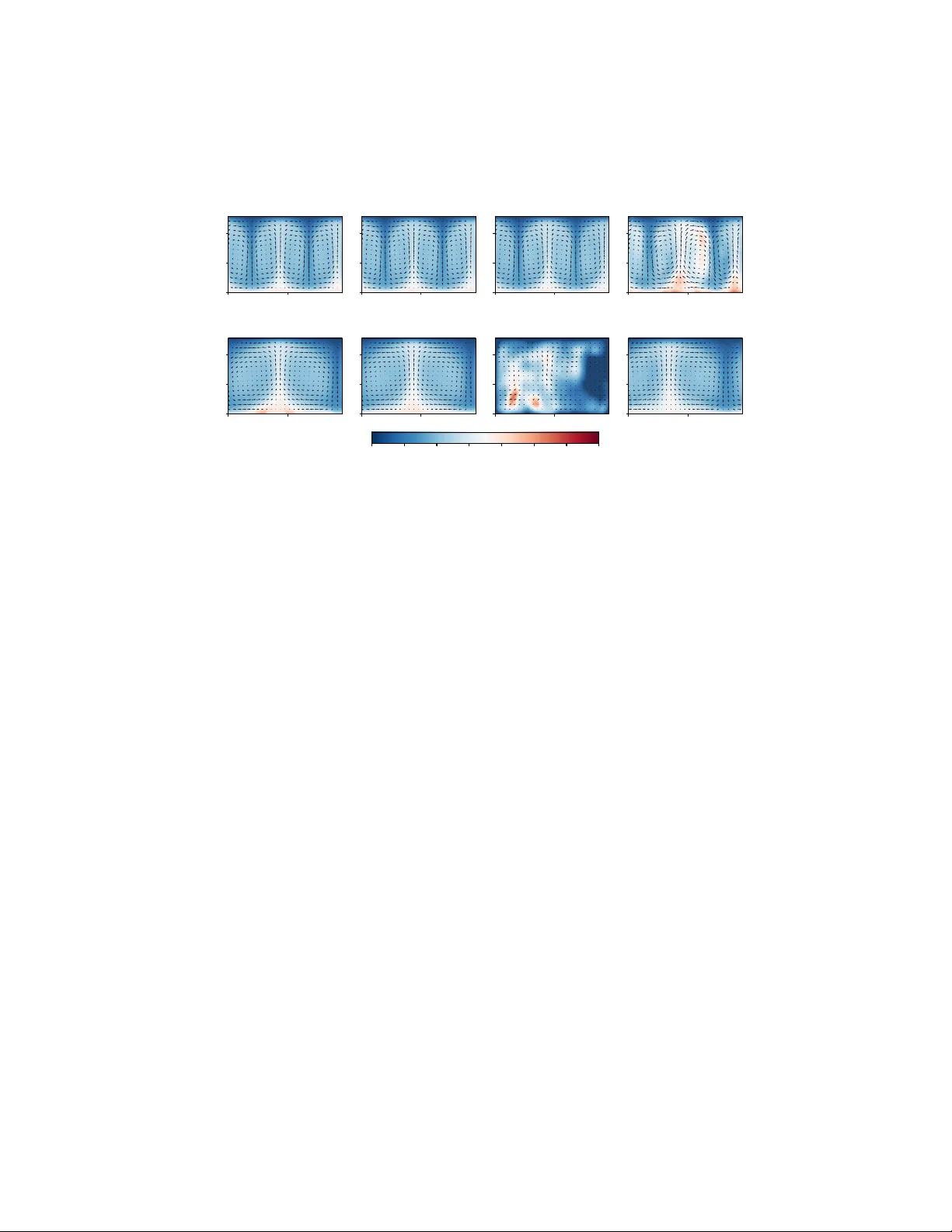

Tim Plotzki and Sebastian Peitz, TU Dortmund University & Lamarr Institute for Machine Learning and Artificial Intelligence, Dortmund, Germany

Abstract. Training reinforcement learning (RL) agents to control fluid dynamics systems is computationally expensive due to the high cost of direct numerical simulations (DNS) of the governing equations. Surrogate models offer a promising alternative by approximating the dynamics at a fraction of the computational cost, but their feasibility as training environments for RL is limited by distribution shifts, as policies induce state distributions not covered by the surrogate training data. In this work, we investigate the use of Linear Recurrent Autoencoder Networks (LRANs) for accelerating RL-based control of 2D Rayleigh-Bénard convection. We evaluate two training strategies: a surrogate trained on precomputed data generated with random actions, and a policy-aware surrogate trained iteratively using data collected from an evolving policy. Our results show that while surrogate-only training leads to reduced control performance, combining surrogates with DNS in a pretraining scheme recovers state-of-the-art performance while reducing training time by more than 40%. We demonstrate that policy-aware training mitigates the effects of distribution shift, enabling more accurate predictions in policy-relevant regions of the state space.

Keywords: Reinforcement Learning · Koopman Operator · Surrogate Modeling · Partial Differential Equations · Rayleigh-Bénard Convection.

1 Introduction

Recent studies have shown that Reinforcement Learning (RL) is capable of controlling complex systems governed by fluid dynamics.
Deep RL algorithms such as Proximal Policy Optimization (PPO) have been shown to produce state-of-the-art control strategies for fluid flows, and in particular for Rayleigh-Bénard convection (RBC), which describes systems that are driven by buoyancy forces. Such systems occur in many settings, for example in the earth's atmosphere, in rooms with heated floors, or in nuclear fusion devices. The dynamics are governed by nonlinear partial differential equations (PDEs) whose state is a function of both space and time, rendering direct numerical simulations (DNS) costly. Multi-query tasks such as uncertainty quantification, optimization or control are thus either limited to small problem setups or require massive computational resources. At the same time, surrogate modeling has emerged as a computationally efficient alternative to DNS, capable of predicting the evolution of dynamical systems with reasonable accuracy, but existing research has largely focused on prediction rather than closed-loop control [7, 13, 19].

Rayleigh-Bénard convection describes the dynamics of a fluid subject to a vertical temperature difference [1, 18]. With increasing driving forces, the flow becomes increasingly turbulent and more difficult to control, making it an ideal testbed for studying feedback control of large distributed systems [2].

A key challenge in surrogate modeling for RL is the distribution shift. As the policy improves during training, the agent explores regions of the state space that differ from states typically seen in an uncontrolled RBC system. As a result, surrogates can become unreliable as they are asked to predict in a region of the state space not covered by the training data, limiting their usefulness for policy optimization and introducing performance-degrading biases.
This work addresses three key questions: (1) Can surrogate models provide sufficient accuracy to replace costly DNS during agent training? (2) Is it possible to combine surrogate predictions with DNS to reduce the agent's training time without degrading control performance? (3) How can the distribution shift be mitigated to maintain surrogate reliability as the policy explores new regions of the state space? Using the example of RBC, we perform numerical experiments to address these challenges, and give recommendations on how to intertwine surrogate modeling and reinforcement learning.

2 Related Work

RBC serves as a benchmark for surrogate modeling in fluid dynamics, particularly for evaluating data-driven reduced-order models under increasingly turbulent conditions [15]. The linear recurrent autoencoder network (LRAN) has emerged as a popular architectural choice for nonlinear dynamical systems [17]. LRANs approximate the underlying Koopman operator [3] of a system by simultaneously learning a nonlinear autoencoder and linear recurrent dynamics in the latent space. They have been shown to produce good quantitative and qualitative predictions for the RBC system at a comparatively low computational cost [13].

At the same time, RBC has also been used for testing deep RL methods aimed at controlling complex dynamical systems [14, 27, 28]. Agents trained on high-fidelity DNS using PPO have been shown to outperform traditional control strategies and to generalize across a wide range of states. In addition, RL has been applied to a wide range of fluid systems such as channel flows [8], decaying turbulence [20], aerodynamics [21] or the Kuramoto-Sivashinsky equation [4]. The area of model-based RL has become increasingly important in recent years, examples being the PILCO [6] and Dreamer [9] algorithms as well as many recent developments on latent dynamics [25].
Surrogate-based RL for PDEs has been investigated using deep learning (e.g., LSTMs [29]) and neural operators [30]. A control framework combining data-driven manifold dynamics with RL (DManD-RL) [5] has been successful in controlling the two-dimensional RBC system by learning a policy inside a latent space. The Koopman-operator framework has been applied to RL in various flavors, mainly to introduce linearity through lifting [16, 23].

While Koopman-based surrogate modeling and deep RL have proven effective on the RBC system in isolation, this work investigates whether they can be combined to efficiently train RL agents. We train surrogates that predict the full state, which allows for cheap rollouts while maintaining full compatibility with DNS-based control frameworks. We evaluate two training strategies for these surrogates. First, we train models on a static pre-generated dataset obtained from DNS. Second, we use a policy-aware training procedure that adapts the surrogate to policy-induced state distributions in order to mitigate distribution shift and produce models better suited for RL training.

3 Methods

3.1 Rayleigh-Bénard Convection

In RBC, a fluid between two plates is heated from below and cooled from above, creating buoyancy forces. Once the thermal forcing exceeds a critical threshold, buoyancy-driven convection sets in, observable as organized flow structures called convection cells [1]. The dynamics are governed by a system of partial differential equations derived from the incompressible Navier-Stokes equations. The state $s_t = (T, u, w)$ at time $t$ is described by the temperature and velocity fields. We use standard fixed-temperature and no-slip boundary conditions (BCs) at the top and bottom boundaries and periodic BCs along the horizontal direction. The system is characterized by two key quantities.
The Rayleigh number $Ra$ measures the ratio of buoyancy-driven forces to dissipative effects caused by viscosity and thermal diffusion, where larger values lead to increasingly complex flow structures. We focus here on the moderately convective regime at $Ra = 10^4$. The second quantity is the Nusselt number $Nu$, which quantifies the enhancement of heat transport due to convection relative to pure conduction:

$$Nu = \frac{\langle w\theta \rangle - \kappa\, \partial\theta/\partial z}{\kappa\,(T_b - T_t)/d},$$

where $\langle\cdot\rangle$ denotes a spatial average, $T_b$ and $T_t$ are the (mean) temperatures at the bottom and top boundary, $\theta$ is the nondimensionalized temperature, $\kappa$ the thermal diffusivity, $z$ the vertical spatial coordinate, and $d$ the system height. $Nu = 1$ corresponds to purely conductive heat transfer, while larger values indicate progressively stronger convective transport and flow complexity [1].

Direct numerical simulation (DNS) is performed via the Oceananigans.jl package [26] using a grid resolution of $96 \times 64$. To avoid transient effects, all experiments are initialized from precomputed checkpoints where the system is already in its convective phase. Separate sets of checkpoints are generated in advance for training, validation, and testing.

3.2 Control of RBC

Similar to [14, 28], we focus on reducing $Nu$ by controlling 12 thermal actuators located at the bottom boundary of the domain. Each actuator can locally set a temperature $T_i \in [1.25, 2.75]$, subject to the constraint that their mean satisfies $\langle T \rangle = 2$. The actuators are driven by a normalized control signal $a_i \in [-1, 1]$, which is mapped to the corresponding temperature range [14]:

$$\hat{T}'_i = a_i - \frac{\sum_{i=1}^{N} a_i}{N}, \qquad \hat{T}_i = 0.75\, \frac{\hat{T}'_i}{\max(1, |\hat{T}'|)}. \tag{1}$$

The time between successive control inputs of the RL agent is set to 1.5 seconds. The policy is optimized using Proximal Policy Optimization (PPO) [24].
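The action-to-temperature mapping of Eq. (1) can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation: the function name `actions_to_temperatures` is hypothetical, $|\hat{T}'|$ is assumed to mean the largest absolute entry over all actuators, and the resulting fluctuation is assumed to be added to the mean bottom temperature $\langle T \rangle = 2$.

```python
import numpy as np

def actions_to_temperatures(a, t_mean=2.0, amplitude=0.75):
    """Map normalized actions a_i in [-1, 1] to actuator temperatures (sketch of Eq. (1))."""
    a = np.clip(np.asarray(a, dtype=float), -1.0, 1.0)
    # \hat{T}'_i: subtract the mean action so the average heating stays unchanged.
    centered = a - a.mean()
    # Assumption: |\hat{T}'| is the largest absolute entry over all actuators.
    scale = max(1.0, float(np.max(np.abs(centered))))
    # \hat{T}_i lies in [-0.75, 0.75] and has zero mean.
    fluctuation = amplitude * centered / scale
    # Assumption: the fluctuation is added to the mean bottom temperature <T> = 2.
    return t_mean + fluctuation

# One actuator heats as much as possible while the other eleven cool.
temps = actions_to_temperatures(np.array([1.0] + [-1.0] * 11))
print(temps.min(), temps.max(), temps.mean())
```

Under these assumptions every temperature stays in $[1.25, 2.75]$ and the mean remains at 2, whatever the agent outputs, so the constraint is enforced by construction rather than learned.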
PPO uses a clipped objective function that prevents large, potentially destabilizing policy updates. For model-free, continuous RL problems such as RBC, PPO has emerged as a widely used method due to its stability and robustness [14]. The policy is approximated by a multilayer perceptron that takes coarsened temperature and velocity fields as input ($48 \times 8$, then flattened). It has a hidden layer with 64 neurons, followed by the action values as outputs. ReLU is used as the activation function. The agent's reward signal is

$$R(s_t) = 1 - \frac{Nu(s_t)}{Nu_{\mathrm{base}}(Ra)},$$

which corresponds to the negative Nusselt number normalized to the interval $[0, 1]$. $Nu_{\mathrm{base}}(Ra)$ denotes the maximum Nusselt number.

3.3 Surrogate Architecture

We extend the Linear Recurrent Autoencoder Network (LRAN) architecture, which has previously demonstrated strong predictive performance [13, 17], to the control context. The LRAN learns a latent space in which the encoded variables can be advanced linearly in time. The encoder and decoder are convolutional neural networks (CNNs) with five and six layers, respectively. The primary modification is the inclusion of control actions at each hidden state, as illustrated in Figure 1. The model is optimized with respect to the loss

$$\mathcal{L} = \sum_{i=0}^{T-1} \frac{\delta^i}{T}\, \frac{\lVert \hat{s}_{t+i} - s_{t+i} \rVert^2}{\lVert s_{t+i} \rVert^2 + \epsilon},$$

which corresponds to a normalized reconstruction error and encourages accurate prediction of all observed states within a temporal sequence of length $T$. The loss function introduces two additional hyperparameters: the sequence length $T$ and a temporal discount factor $\delta$, which progressively down-weights reconstruction errors at later time steps in the sequence. The architecture is optimized with Adam [12] with L2 regularization $\lambda = 10^{-4}$, using gradients accumulated over an entire sequence of length $T$.

Fig. 1. Extension of the LRAN architecture to incorporate control actions as additional inputs. The action vector $a_t$ is added to the next hidden state after an affine transformation defined by an input-to-hidden matrix $U$ and a bias term $b_u$.

Training. After a hyperparameter search, the configuration chosen for training the LRANs is $(\dim(h) = 200, \delta = 0.9, T = 10)$, where $\dim(h)$ refers to the dimensionality of the latent space. Furthermore, a learning rate of $\alpha = 5 \cdot 10^{-5}$ is used. Two LRANs are trained (see Fig. 1 for details): one using a precomputed dataset with 3300 episodes containing 400 steps each using random actions, and one using a policy-aware training scheme inspired by Model-Based Policy Optimization (MBPO) [11], in which training data is generated on the fly using actions from a policy that is optimized alongside the surrogate. Figure 2 illustrates the training loop of this policy-aware training scheme.

[Figure 2: diagram with the components Surrogate, Agent, DNS and Replay Buffer, connected by arrows labeled "new data", "training data, initial states", "actions", "feedback on actions", "optimize w.r.t. training data" and "optimize based on feedback"]

Fig. 2. Training loop of the policy-aware surrogate training scheme. The surrogate model is optimized with data from DNS using actions from the policy, while the policy is optimized by interacting with the surrogate using PPO.

Data Augmentation. Since data generation using DNS is computationally expensive, data efficiency is improved by augmenting existing episodes using translation and reflection. Because of the periodic boundary conditions along the horizontal direction, translation preserves the RBC dynamics if grid points shifted out of the domain are wrapped around to the opposite side. In our setup, with a horizontal grid resolution of 96 and 12 thermal actuators, the domain can be partitioned into segments of eight grid points, corresponding to the width of a single actuator.
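The translation and reflection augmentation described in this paragraph can be sketched as follows. This is a minimal sketch under stated assumptions rather than the authors' code: the function name `augment_episode` is hypothetical, states are assumed to be stored as `[steps, 3, 64, 96]` arrays with channels $(T, u, w)$, actuator $i$ is assumed to cover grid columns $8i$ to $8i + 7$, and channel 1 is assumed to be the horizontal velocity, which changes sign under a horizontal mirror.

```python
import numpy as np

N_ACTUATORS = 12
SEGMENT = 8  # grid points per actuator: 96 / 12

def augment_episode(states, actions):
    """Return synthetic copies of one episode (all shifts and mirrors except the original).

    states:  array [steps, 3, 64, 96] with channels (T, u, w)  (assumed layout)
    actions: array [steps, 12]
    """
    copies = []
    for reflect in (False, True):
        s, a = states, actions
        if reflect:
            s = s[..., ::-1].copy()  # mirror the horizontal axis
            s[:, 1] *= -1.0          # assumption: channel 1 is horizontal velocity
            a = a[..., ::-1]         # actuator order is mirrored as well
        for k in range(N_ACTUATORS):
            if not reflect and k == 0:
                continue             # skip the unmodified original
            # Periodic shift by k actuator widths; actions shift by k slots.
            copies.append((np.roll(s, k * SEGMENT, axis=-1), np.roll(a, k, axis=-1)))
    return copies

episode_states = np.zeros((5, 3, 64, 96))
episode_actions = np.zeros((5, 12))
synthetic = augment_episode(episode_states, episode_actions)
print(len(synthetic))  # 11 shifted copies + 12 mirrored copies = 23
```

Shifting the action vector together with the fields keeps each actuator aligned with the grid columns it heats, which is what makes the synthetic episodes valid training data.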
Partitioning the domain in this way allows for discrete translations by whole segments, yielding 12 distinct configurations. In addition, each configuration can be reflected about the vertical axis, giving $12 \times 2 = 24$ configurations in total. One of these is the original episode, so each simulated episode yields 23 distinct synthetic episodes.

4 Experiments and Results

We test both LRANs in two separate experiments to evaluate their suitability as training environments for RL control. First, we measure the control performance and training times of agents trained exclusively on the surrogates. Second, we employ the surrogates in a pretraining scheme in which training initially starts on the surrogate before finishing in a DNS environment. The idea is to start learning on the computationally efficient surrogate and only use expensive DNS to fine-tune control performance once surrogate imperfections prevent further progress. To accurately determine an agent's capabilities, the reported control performance is the mean Nusselt number measured in 20 DNS environments initialized from random test checkpoints.

The PPO setup is largely identical to that used in [14]. The algorithm is implemented using the Stable-Baselines3 library [22]. We use 20 parallel environments, an episode length of $t = 200$, a clipping range of $\epsilon = 0.2$, a discount factor of $\gamma = 0.99$, a learning rate of $\alpha = 10^{-3}$, and an entropy loss coefficient of $\beta = 0.01$. All other parameters are kept at their default values. Experiments are conducted at a Rayleigh number of $Ra = 10^4$. Time measurements are from 20 cores of an Intel Xeon E5-2690v4 CPU together with an NVIDIA Tesla P100 GPU.

4.1 Experiment 1: Exclusive Training on Surrogates

We evaluate the control performance of agents after being trained in different environments. Training lasts until performance stagnates and no more progress is made.
Agents are compared to two baselines: "Zero" denotes a policy that always outputs $a_t = \{0\}^{12}$, which is equivalent to letting the system evolve without control. The "Random" baseline corresponds to a policy that samples actions uniformly from the action space, $a_t \sim U([-1, 1]^{12})$, at every time step. Additionally, we consider the control performance and training time of an agent trained directly in a DNS environment using 400,000 interactions, following the experimental setup of [14]. The results are summarized in Table 2.

Table 1. Encoder and decoder architectures of the LRAN. Here, $B$ denotes the batch size and $\dim(h)$ the latent dimension. Layers correspond to individual PyTorch classes. A Gaussian Error Linear Unit (GELU) [10] is used as the activation function.

| Encoder layer | Output shape | Decoder layer | Output shape |
| --- | --- | --- | --- |
| Input | [B, 3, 64, 96] | Input | [B, dim(h)] |
| Conv2d (3 → 32, 5 × 5) | [B, 32, 64, 96] | Linear | [B, 12288] |
| MaxPool 2 × 2 | [B, 32, 32, 48] | Unflatten | [B, 32, 16, 24] |
| Conv2d (32 → 64, 5 × 5) | [B, 64, 32, 48] | Conv2d (32 → 64, 5 × 5) | [B, 64, 16, 24] |
| Conv2d (64 → 32, 5 × 5) | [B, 32, 32, 48] | Upsample × 2 | [B, 64, 32, 48] |
| MaxPool 2 × 2 | [B, 32, 16, 24] | Conv2d (64 → 32, 5 × 5) | [B, 32, 32, 48] |
| Conv2d (32 → 32, 5 × 5) | [B, 32, 16, 24] | Conv2d (32 → 32, 3 × 3) | [B, 32, 32, 48] |
| Flatten | [B, 12288] | Upsample × 2 | [B, 32, 64, 96] |
| Linear | [B, dim(h)] | Conv2d (32 → 16, 3 × 3) | [B, 16, 64, 96] |
| | | Conv2d (16 → 3, 5 × 5) | [B, 3, 64, 96] |

Table 2. Control performance measured by the Nusselt number Nu and total training time for policies from different environments.

| Environment | Nu | Training time | Interactions |
| --- | --- | --- | --- |
| Random-Action | 3.31 | 0 h 06 min | 200,000 |
| Policy-Aware | 2.97 | 0 h 17 min | 600,000 |
| DNS | 2.74 | 4 h 11 min | 400,000 |
| Zero | 4.00 | - | - |
| Random | 4.05 | - | - |

All surrogate environments produce agents that significantly outperform both baselines. However, their control performance still remains below that of the DNS-trained agent. Because surrogate rollouts are on average 25.6 times faster than DNS simulations, the total training time is substantially reduced even when more interactions are performed.

The Random-Action surrogate quickly reaches a control performance of $Nu = 3.31$ after only 200,000 interactions, after which learning plateaus. Additional PPO updates fail to further improve the agent's true performance when evaluated in DNS. In contrast, the Policy-Aware surrogate exhibits slower initial learning but improves steadily throughout training. After approximately 350,000 interactions it surpasses the Random-Action surrogate and continues to improve thereafter. Using the Policy-Aware surrogate as the training environment, the agent eventually reaches a control performance of $Nu = 2.97$ after 600,000 interactions, corresponding to a total training time of 17 minutes. Figure 3 compares policy performance during training on the surrogates.

[Figure 3: plot titled "Control Performance over timesteps"; x-axis: timesteps (in thousands), y-axis: Nu; curves: Random-Action, Policy-Aware]

Fig. 3. Control performance of policies from both surrogates over the course of training.

4.2 Experiment 2: Pretraining on the Surrogates

In this experiment, the surrogates are used in a pretraining scheme in which training starts in a surrogate environment before continuing in DNS. The goal is to exploit the rapid early learning enabled by the surrogate while relying on DNS to fine-tune the policy once surrogate inaccuracies begin to limit further improvements. Table 3 summarizes the results.

Table 3. Control performance and total training time for policies from two different pretraining configurations compared to a DNS environment.

| Environment | Nu | Training time | Interactions (surrogate + DNS) |
| --- | --- | --- | --- |
| Random-Action + DNS | 2.73 | 3 h 06 min | 120,000 + 280,000 |
| Policy-Aware + DNS | 2.75 | 2 h 24 min | 400,000 + 200,000 |
| DNS | 2.74 | 4 h 11 min | 400,000 |

Both surrogates are capable of reaching the control performance of a purely DNS-trained agent in a pretraining scheme while reducing the overall training time. Relative to the 4 h 11 min of pure DNS training, the Random-Action and Policy-Aware pretraining schemes reduce the training time by about 26% and 43%, respectively. As in the previous experiment, the Random-Action surrogate provides faster performance gains during the early stages of training. This allows the agent to achieve a slightly improved $Nu$ within the same total number of interactions, of which 30% are performed on the surrogate model.

The Policy-Aware surrogate, however, requires more total interaction steps to compensate for slower early learning. Nevertheless, the stronger policies eventually obtained when training on this surrogate allow the number of required DNS interactions to be reduced further. As a result, a similar control performance is achieved with an even lower total training time.

5 Discussion

Our results demonstrate that surrogate models, specifically the LRAN, are capable of producing agents significantly better than baseline policies. In conjunction with DNS using a pretraining scheme, surrogates allow policies to match state-of-the-art control performance while requiring fewer DNS interactions and consequently less total training time.

Interestingly, the two LRANs demonstrate different strengths. All agents discover the cell-merging strategy during training, which has already been observed in [14]. This strategy collapses the initial four-cell RBC state into two larger cells, ultimately reducing convection and providing a visible example of the distribution shift.
However, since the static dataset used for the Random-Action surrogate is generated from random actions that follow no strategy, such states are severely underrepresented. As a consequence, the Random-Action surrogate is unable to predict two-cell states. In contrast, the Policy-Aware model has learned to predict two-cell RBC states, but its accuracy degrades in the initial four-cell state space, where it overestimates the chaotic behavior caused by unoptimized zero-input actions. The Random-Action surrogate does not display this issue to the same degree. A plausible explanation is that the Policy-Aware surrogate overfits to optimized actions, since its training data samples actions from a policy trained alongside the model. These effects are illustrated in Figure 4.

This observation explains the training behavior of agents in the previous experiments. Policies optimized with the Policy-Aware surrogate exhibit slower initial learning due to the reduced model accuracy in the initial four-cell state space. However, once two-cell states become relevant for further optimization, the Policy-Aware model begins to outperform the Random-Action surrogate, whose training plateaus because it cannot predict these states.

These findings suggest that surrogate models used as RL training environments should account for policy-induced state distributions during training to mitigate the effects of distribution shift. Otherwise, the surrogate may fail to predict states that emerge as the policy improves and therefore lose reliability as an RL environment. However, the extent to which policy-aware training is necessary will likely depend on the characteristics of the underlying system and the degree to which policy optimization alters the relevant state distribution.
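To make the structure of a policy-aware training loop concrete, the following toy sketch alternates between collecting simulator data with the current policy, refitting a surrogate on a replay buffer, and improving the policy on the surrogate alone. Everything in it is a deliberately simplified stand-in and an assumption for illustration: a scalar linear system replaces the DNS, a least-squares linear model replaces the LRAN, and a grid search over a feedback gain replaces PPO. All names (`dns_step`, `rollout_cost`, `A_TRUE`, `B_TRUE`) are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
A_TRUE, B_TRUE = 1.1, 0.5  # hypothetical stand-in for the expensive simulator

def dns_step(s, u):
    """Toy 'DNS': an unstable scalar linear system."""
    return A_TRUE * s + B_TRUE * u

def rollout_cost(step_fn, k, s0=1.0, horizon=20):
    """Accumulated quadratic cost of the feedback policy u = -k * s."""
    s, cost = s0, 0.0
    for _ in range(horizon):
        u = -k * s
        cost += s**2 + 0.1 * u**2
        s = step_fn(s, u)
    return cost

buffer = []  # replay buffer of (s, u, s_next) transitions
k = 0.0      # initial policy: no control
candidates = np.linspace(0.0, 4.0, 81)

for iteration in range(5):
    # 1) Collect simulator data with the current policy plus exploration noise.
    s = 1.0
    for _ in range(20):
        u = -k * s + 0.1 * rng.normal()
        s_next = dns_step(s, u)
        buffer.append((s, u, s_next))
        s = s_next
    # 2) Refit the surrogate on the whole buffer (least squares instead of an LRAN).
    data = np.array(buffer)
    theta, *_ = np.linalg.lstsq(data[:, :2], data[:, 2], rcond=None)
    surrogate_step = lambda s, u, th=theta: th[0] * s + th[1] * u
    # 3) Improve the policy using cheap surrogate rollouts only (grid search instead of PPO).
    k = float(candidates[np.argmin([rollout_cost(surrogate_step, c) for c in candidates])])

print(theta, k)
```

The point of the sketch is the data flow: the buffer is refilled with states visited under the evolving policy, so the surrogate is refit where the policy actually operates. The toy surrogate is exact for this linear system, so it cannot reproduce the distribution-shift or overfitting effects discussed above.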
[Figure 4: temperature fields (color scale from 1.00 to 2.75) in four columns: Initial State, DNS (ground truth), Random-Action, Policy-Aware. Panel (a): predicting 200 steps in an initial state. Panel (b): predicting one step in a two-cell state.]

Fig. 4. Qualitative comparison of both LRANs. (a) Surrogates predict 200 steps with zero action input in an initial four-cell RBC state. (b) Surrogates predict one step with zero action input in a two-cell RBC state. Control with zero action inputs is equivalent to uncontrolled forward prediction.

6 Conclusion

This work showed that surrogate models can be used as environments for training RL agents. We examined different training approaches for the surrogates, highlighted the impact of distribution shift on downstream policy performance, and presented an approach to mitigate it. Furthermore, we demonstrated that surrogates can be combined with DNS in a pretraining scheme to match state-of-the-art control performance while reducing agent training time.

While this study focused on 2D RBC with a moderate Rayleigh number, future work could investigate the LRAN's ability to generalize to higher Rayleigh numbers or to different dynamical systems altogether. Furthermore, although this work trained surrogates better suited for RL using a policy-aware training approach, future work could explore alternative policy-aware schemes that reduce the overfitting problem observed here. This may further reduce training times and lead to surrogate models capable of producing even stronger control policies.

Acknowledgments. SP acknowledges funding from the European Research Council (ERC Starting Grant "KoOpeRaDE") under the European Union's Horizon 2020 research and innovation programme (Grant agreement No. 101161457).
We gratefully acknowledge the computing time provided on the Linux HPC cluster at Technical University Dortmund (LiDO3), partially funded in the course of the Large-Scale Equipment Initiative by the German Research Foundation (DFG, Deutsche Forschungsgemeinschaft) as project 271512359.

References

1. Ahlers, G., Grossmann, S., Lohse, D.: Heat transfer and large scale dynamics in turbulent Rayleigh-Bénard convection. Rev. Mod. Phys. 81, 503–537 (2009)
2. Becktepe, J., Franz, A., Thuerey, N., Peitz, S.: Plug-and-play benchmarking of reinforcement learning algorithms for large-scale flow control. (2026)
3. Brunton, S.L., Budišić, M., Kaiser, E., Kutz, J.N.: Modern Koopman theory for dynamical systems. SIAM Review 64(2), 229–340 (2022)
4. Bucci, M.A., Semeraro, O., Allauzen, A., Wisniewski, G., Cordier, L., Mathelin, L.: Control of chaotic systems by deep reinforcement learning. Proceedings of the Royal Society A: Mathematical, Physical and Engineering Sciences 475(2231), 20190351 (2019)
5. Chen, Q., Constante-Amores, C.R.: Stabilizing Rayleigh-Benard convection with reinforcement learning trained on a reduced-order model. arXiv:2510.26705 (2026)
6. Deisenroth, M., Rasmussen, C.E.: PILCO: A model-based and data-efficient approach to policy search. In: Proceedings of the 28th International Conference on Machine Learning (ICML-11). pp. 465–472 (2011)
7. Fromme, F., Harder, H., Allen-Blanchette, C., Peitz, S.: Surrogate modeling of 3D Rayleigh-Bénard convection with equivariant autoencoders. (2025)
8. Guastoni, L., Rabault, J., Schlatter, P., Azizpour, H., Vinuesa, R.: Deep reinforcement learning for turbulent drag reduction in channel flows. The European Physical Journal E 46(4), 27 (2023)
9. Hafner, D., Lillicrap, T., Ba, J., Norouzi, M.: Dream to control: Learning behaviors by latent imagination. arXiv:1912.01603 (2019)
10. Hendrycks, D., Gimpel, K.: Gaussian error linear units (GELUs). (2023)
11. Janner, M., Fu, J., Zhang, M., Levine, S.: When to trust your model: Model-based policy optimization. arXiv:1906.08253 (2021)
12. Kingma, D.P., Ba, J.: Adam: A method for stochastic optimization. arXiv:1412.6980 (2017)
13. Markmann, T., Straat, M., Hammer, B.: Koopman-based surrogate modelling of turbulent Rayleigh-Bénard convection. In: 2024 International Joint Conference on Neural Networks (IJCNN). pp. 1–8 (2024)
14. Markmann, T., Straat, M., Peitz, S., Hammer, B.: Control of Rayleigh-Bénard convection: Effectiveness of reinforcement learning in the turbulent regime. arXiv:2504.12000 (2025)
15. Markmann, T., Straat, M., Peitz, S., Hammer, B.: Fourier neural operators as data-driven surrogates for two- and three-dimensional Rayleigh–Bénard convection. Neurocomputing p. 133201 (2026)
16. Mondal, A.K., Panigrahi, S.S., Rajeswar, S., Siddiqi, K., Ravanbakhsh, S.: Efficient dynamics modeling in interactive environments with Koopman theory. In: The Twelfth International Conference on Learning Representations (2024)
17. Otto, S.E., Rowley, C.W.: Linearly-recurrent autoencoder networks for learning dynamics. arXiv:1712.01378 (2019)
18. Pandey, A., Scheel, J.D., Schumacher, J.: Turbulent superstructures in Rayleigh-Bénard convection. Nature Communications 9(1), 2118 (2018)
19. Pandey, S., Teutsch, P., Mäder, P., Schumacher, J.: Direct data-driven forecast of local turbulent heat flux in Rayleigh–Bénard convection. Physics of Fluids 34(4), 045106 (2022)
20. Peitz, S., Stenner, J., Chidananda, V., Wallscheid, O., Brunton, S.L., Taira, K.: Distributed control of partial differential equations using convolutional reinforcement learning. Physica D: Nonlinear Phenomena 461, 134096 (2024)
21. Rabault, J., Kuchta, M., Jensen, A., Réglade, U., Cerardi, N.: Artificial neural networks trained through deep reinforcement learning discover control strategies for active flow control. Journal of Fluid Mechanics 865, 281–302 (2019)
22. Raffin, A., Hill, A., Gleave, A., Kanervisto, A., Ernestus, M., Dormann, N.: Stable-Baselines3: Reliable reinforcement learning implementations. Journal of Machine Learning Research 22(268), 1–8 (2021)
23. Rozwood, P., Mehrez, E., Paehler, L., Sun, W., Brunton, S.L.: Koopman-assisted reinforcement learning. arXiv preprint arXiv:2403.02290 (2024)
24. Schulman, J., Wolski, F., Dhariwal, P., Radford, A., Klimov, O.: Proximal policy optimization algorithms. arXiv:1707.06347 (2017)
25. Schwarzer, M., Anand, A., Goel, R., Hjelm, R.D., Courville, A., Bachman, P.: Data-efficient reinforcement learning with self-predictive representations. In: International Conference on Learning Representations (2021)
26. Silvestri, S., Wagner, G.L., Hill, C., Ardakani, M.R., Blaschke, J., Campin, J.M., Churavy, V., Constantinou, N.C., Edelman, A., Marshall, J., Ramadhan, A., Souza, A., Ferrari, R.: Oceananigans.jl: A Julia library that achieves breakthrough resolution, memory and energy efficiency in global ocean simulations. (2024)
27. Vasanth, J., Rabault, J., Alcántara-Ávila, F., Mortensen, M., Vinuesa, R.: Multi-agent reinforcement learning for the control of three-dimensional Rayleigh–Bénard convection. Flow, Turbulence and Combustion (2024)
28. Vignon, C., Rabault, J., Vasanth, J., Alcántara-Ávila, F., Mortensen, M., Vinuesa, R.: Effective control of two-dimensional Rayleigh–Bénard convection: Invariant multi-agent reinforcement learning is all you need. Physics of Fluids 35(6) (2023)
29. Werner, S., Peitz, S.: Numerical evidence for sample efficiency of model-based over model-free reinforcement learning control of partial differential equations. In: European Control Conference (ECC). pp. 2958–2964. IEEE (2024)
30. Zhao, Z., Li, Z., Hassibi, K., Azizzadenesheli, K., Yan, J., Bae, H.J., Zhou, D., Anandkumar, A.: Physics-informed neural-operator predictive control for drag reduction in turbulent flows. arXiv:2510.03360 (2025)
