Quantum-inspired Tensor Network for QUBO, QUDO and Tensor QUDO Problems with k-neighbors

This work presents a novel tensor network algorithm for solving Quadratic Unconstrained Binary Optimization (QUBO) problems, Quadratic Unconstrained Discrete Optimization (QUDO) problems, and Tensor Quadratic Unconstrained Discrete Optimization (T-QU…

Authors: Sergio Muñiz Subiñas, Alej, ro Mata Ali

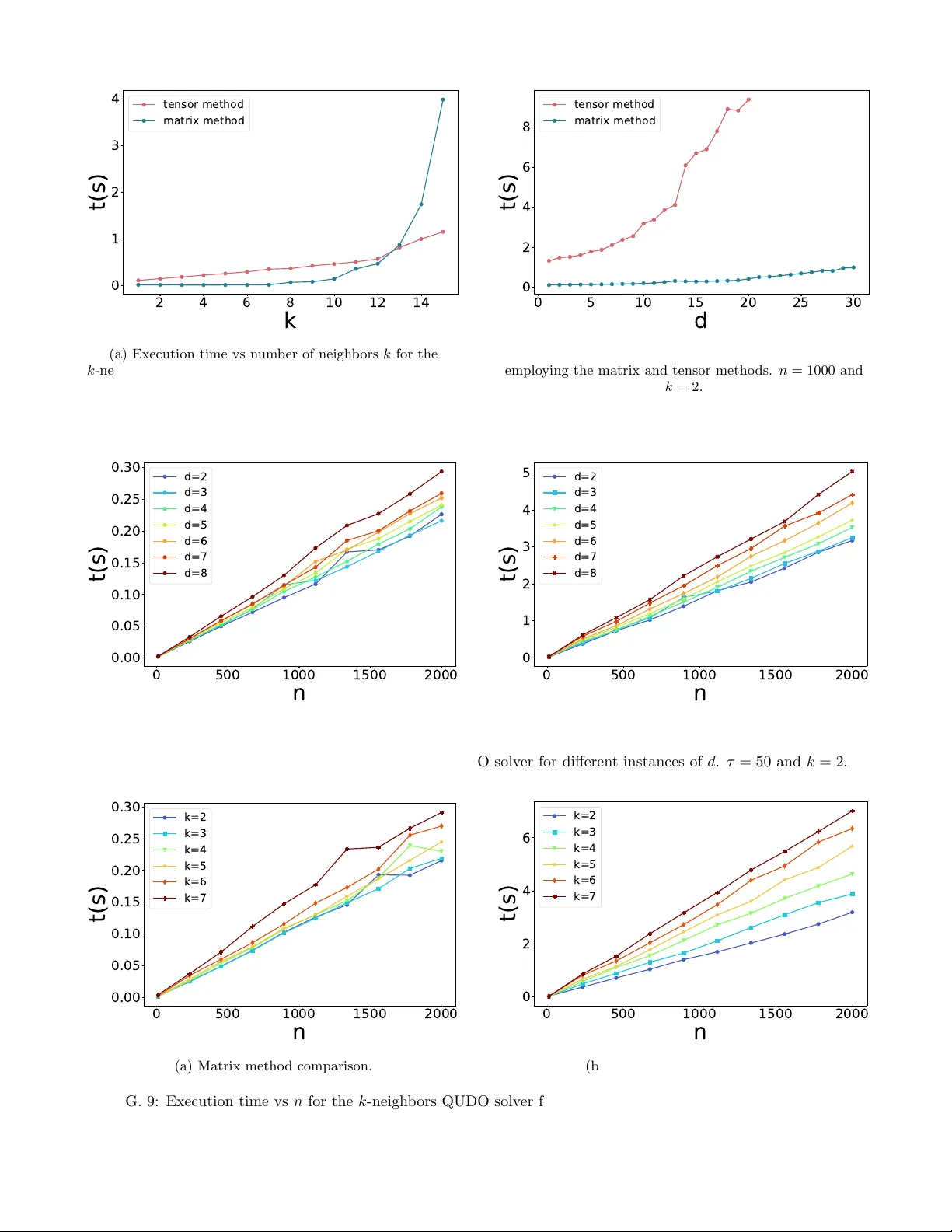

Quan tum-inspired T ensor Net w ork for QUBO, QUDO and T ensor QUDO Problems with k -neigh b ors Sergio Mu ˜ niz Subi ˜ nas, ∗ Alejandro Mata Ali, † Jorge Mart ´ ınez Mart ´ ın, ‡ Miguel F ranco Hernando, § and Javier Sedano ¶ Instituto T e cnol´ ogic o de Castil la y L e´ on, Bur gos, Sp ain ´ Angel Miguel Garc ´ ıa-Vico ∗∗ Andalusian Rese ar ch Institute in Data Scienc e and Computational Intel ligenc e (DaSCI), University of Ja´ en, 23071 Ja´ en, Sp ain (Dated: Marc h 31, 2026) This w ork presen ts a no vel tensor net work algorithm for solving Quadratic Unconstrained Bi- nary Optimization (QUBO) problems, Quadratic Unconstrained Discrete Optimization (QUDO) problems, and T ensor Quadratic Unconstrained Discrete Optimization (T-QUDO) problems. The algorithm proposed is based on the MeLoCoT oN methodology , which solv es combinatorial optimiza- tion problems by employing sup erposition, imaginary time evolution, and pro jectiv e measurements. Additionally , tw o different approaches are presented to solve the QUBO and QUDO problems with k -neigh bors interactions in a lineal c hain, one based on 4-order tensor contraction and the other based on matrix-vector multiplication, including sparse computation and a new technique called “W aterfall”. F urthermore, the p erformance of b oth implementations is compared with a quadratic optimization solver to demonstrate the p erformance of the metho d, showing adv an tages in several instances of problems. Keywords: T ensor netw orks, QUBO, QUDO, Combinatorial optimization I. INTR ODUCTION Com binatorial optimization is a branch of mathemat- ics that plays a central role in a wide range of real-world problems, including scheduling [1], logistics [2], and re- source allo cation [3]. Man y of these problems are classi- fied as NP-hard problems [4, 5], which implies that ex- act metho ds are computationally infeasible for large-scale instances. Consequently , the developmen t of algorithms capable of pro ducing near-optimal solutions constitutes an imp ortan t ob jective of contemporary research. An in teresting family of problems is the class of quadratic optimization problems [6], in whic h the de- cision v ariables are restricted to take v alues from a fi- nite set of discrete num bers. Many imp ortan t combina- torial problems, such as the T ra veling Salesman Problem (TSP) or the Knapsac k Problem, can b e form ulated in this wa y [7, 8], which underscores the practical relev ance of this class of problems. Within the family of quadratic in teger optimization problems, sev eral v arian ts can b e distinguished. When v ariables are restricted to binary v alues, the problem is kno wn as Quadratic Unconstrained Binary Optimization (QUBO) [9]. If v ariables can take arbitrary integer v alues from a finite set, the formulation is kno wn as Quadratic Unconstrained Discrete Optimiza- tion (QUDO) or Quadratic Integer Optimization (QIO). Curren t state-of-the-art metho ds for solving these problems often rely on different classical optimization ∗ sergio.muniz@itcl.es † alejandro.mata@itcl.es ‡ jorge.martinez@itcl.es § miguel.franco@itcl.es ¶ javier.sedano@itcl.es ∗∗ agvico@ujaen.es tec hniques. Exact approaches, including branch-and- b ound [10] and linear programming [11], can obtain opti- mal solutions for small and medium-sized instances, but their scalabilit y is limited. F or larger problems, it is common to employ heuristic and metaheuristic strate- gies, suc h as sim ulated annealing [12], tabu searc h [13] or genetic algorithms [14]. More recently , hybrid tech- niques that in tegrate lo cal searc h with mathematical pro- gramming ha ve shown strong p erformance. Some of the most used and specialized solv ers are CPLEX [15], GUR OBI [16] or OR-T o ols [17]. How ever, some of them ha ve high associated costs and hard integration due to licensing, require p o w erful mac hines for parallelization, or are less adapted to certain types of hard and sp ecific problems. The method employ ed in this w ork is based on ten- sor netw orks [18–20], a widely dev elop ed mathemati- cal formalism in condensed matter ph ysics [21]. T en- sor net works are particularly p o w erful b ecause they en- able the efficient representation and manipulation of high-dimensional data structures. Their strength lies in the abilit y to exploit the lo calit y and constrained con- nectivit y patterns inherent in the problem. In this study , the adopted tensor netw ork technique is Mo dulated Log- ical Com binatorial T ensor Netw orks (MeLoCoT oN) [22], a metho dology originally inspired by quantum computa- tion concepts such as sup erposition and imaginary time ev olution, but implemented entirely in a classical com- putational setting. Building on these considerations, this pap er introduces a tensor netw ork capable of exactly form ulating general QUBO and QUDO problems. It pro vides the exact equa- tion that solves the k-nearest-neighbor case, dev elop ed in t wo alternative forms: one emplo ying higher-order tensor con tractions and another based on matrix–vector multi- 2 plications. In addition, it presents a new technique that impro ves the pro cess of determining the optimal assign- men t of v ariables within the MeLoCoT oN algorithm, re- ducing the computational memory and the ov erflow un- der some circumstances. Briefly , the main contributions of this work are: 1. An exact and explicit equation whic h solv es gen- eral QUBO, QUDO and T ensor-QUDO (T-QUDO) problems, based on a tensor net work lattice, and t wo equations for the particular case of the k - neigh b ors in teraction in a lineal chain. 2. Two contraction schemes and their computational complexit y for b oth problems, with a comparative study of which one is b etter in which problem sizes. 3. A nov el algorithm for the determination of the op- timal v ariable v alues for MeLoCoT oN-type algo- rithms without contracting all the tensor netw ork and av oiding in some circumstances the ov erflo w due to the imaginary time evolution. Additionally , it is pro vided an open-source im- plemen tation of both algorithms and the experi- men tation of the pap er in the GitHub rep ository h ttps://github.com/SergioITCL/QUDO-tensor- net work-solv er. The remainder of this pap er is organized as follows: Sec. I I presents the necessary bac kground and formal definitions of QUBO, QUDO, and T-QUDO, and also briefly explains the bra-ket notation and the MeLoCo- T oN metho dology . Sec. I I I describ es the tensor netw ork form ulation used in this work for QUBO, QUDO and T-QUDO problems, the calculation algorithm and their computational complexity . Sec. IV in tro duces the new v ariation of MeLoCoT oN, the w aterfall metho d. Sec. V rep orts the experimental ev aluation and comparisons with a baseline solver. Finally , Sec. VI concludes the pap er with p ersp ectiv es for future research. I I. PR OBLEM FORMULA TION, PRELIMINARIES AND BACK GR OUND T o provide the necessary context, this section reviews the formulations of QUBO, QUDO, and T ensor-QUDO problems. A brief o v erview of bra–ket notation, along with a summary of the MeLoCoT oN methodology , ex- plaining the fundamental principles of the algorithm and its application to combinatorial optimization problems. A. QUBO, QUDO and T ensor-QUDO form ulations The QUBO problem consists in finding the binary v ec- tor x ∈ { 0 , 1 } n that minimizes a cost function of the form C ( x ) = n − 1 X i,j =0 i ≤ j Q ij x i x j . (1) The definition of the problem is fully characterized by a symmetric matrix Q . An in teresting prop ert y satisfied b y QUBO problems is x 2 i = x i , resulting in the absorption of the linear term into the quadratic comp onent. The QUDO problem generalizes QUBO by allo wing eac h v ariable to take integer v alues from a finite set x i ∈ { 0 , 1 , ..., d − 1 } . Unlik e QUBO, QUDO do es not satisfy the identit y x 2 i = x i , whic h prev en ts the absorption of the linear term into the quadratic term. The cost function is C ( x ) = n − 1 X i,j =0 i ≤ j Q ij x i x j + n − 1 X i =0 D i x i , (2) b eing Q a symmetric matrix and D a vector. A more complex extension is the T-QUDO problem, where the cost is the sum of tensor elements s elected by the v alues of the v ariables. The expression is C ( x ) = n − 1 X i,j =0 i ≤ j ˆ Q i,j,x i ,x j , (3) where ˆ Q is the cost tensor. The QUBO and QUDO form ulations are particular cases where ˆ Q i,j,x i ,x j = Q i,j x i x j . In previous work, similar problems with inter- actions in a linear c hain hav e b een solved [23], in which the matrix Q is tri-diagonal and the cost tensor ˆ Q has only terms ˆ Q i,i,j,k and ˆ Q i,i +1 ,j,k . Sev eral metho ds are commonly applied to these t ype s of problems. Exact methods include branc h-and-b ound [10] and branch-and-cut [24], which systematically explore the solution space while pruning sub optimal branc hes, and cutting-plane tec hniques that iterativ ely tighten the feasible region. Those are the core approaches of some solvers suc h as CPLEX [15] or Gurobi [16]. The most common heuristic techniques are: sim ulated annealing [12], whic h simulates the ph ysical pro cess of physical annealing to escap e lo cal minima by accepting w orse solutions with a certain probabilit y; tabu search [13], which guides a lo cal searc h b y c hecking immediate neighbors; and genetic algorithms [14], which evolv e a p opulation of solutions through selection, crossov er, and m utation op erators [25]. In addition, some emerging technologies prop ose no vel algorithms that sho w promising computational adv antages ov er traditional metho ds. F or example, quan tum computing [26–28] in troduces algorithms that in theory can solv e certain problems more efficiently . 3 Quan tum annealing [29], an algorithm based on the adiabatic theorem that p erforms the evolution of the quan tum state under some conditions in order to obtain the ground state of the Ising Hamiltonian related to the QUBO problem. Or the Quantum Approximate Optimization Algorithm (QAO A) [30] which discretizes the adiabatic theorem and performs an optimization pro cess to obtain the ground state as in the quantum annealing. B. Bra-k et notation In the tensor netw ork formalism, bra-ket notation is a concise wa y of representing tensors and their manipula- tion. In this work, only a brief ov erview of the notation is needed; for a more precise and detailed treatment, the reader s hould consult the references listed in the bibliog- raph y [31]. A k et | ψ ⟩ denotes a column v ector in a v ector space. A bra ⟨ ψ | denotes the hermitian conjugate of the ket. The inner pro duct b et ween t w o v ectors is denoted as a bra-k et op eration ⟨ ϕ | ψ ⟩ , resulting in a num b er. The outer pro d- uct betw een t wo vectors is denoted as a ket-bra op eration | ϕ ⟩ ⟨ ψ | , representing a matrix. F or a system of one v ariable, let H d b e a d -dimensional v ector space, its standard basis consists of d orthonor- mal vectors {| 0 ⟩ , | 1 ⟩ , ..., | d − 1 ⟩} , where each | x ⟩ is rep- resen ted as a column vector with a 1 in p osition x and 0 elsewhere. It is p ossible to express any v ector | ψ ⟩ as | ψ ⟩ = d − 1 X x =0 α x | x ⟩ , α x ∈ C . (4) F or a system of n v ariables, the Hilb ert space is H = H d 0 ⊗ H d 1 ⊗ · · · ⊗ H d n − 1 , where ⊗ denotes the tensorial pro duct, and the dimension of the total space is dim( H ) = n − 1 Y i =0 d i . (5) The standard basis in this situation consists of all p ossi- ble tensor pro ducts of the lo cal basis vectors | x ⟩ = | x 0 x 1 . . . x n − 1 ⟩ = | x 0 ⟩ ⊗ | x 1 ⟩ ⊗ · · · ⊗ | x n − 1 ⟩ . (6) Eac h | x 0 x 1 . . . x n − 1 ⟩ can b e in terpreted as a tensor of n indices, which is very conv enient to represent in the tensor net work formalism. It is p ossible to express an arbitrary tensor within H using this basis | ψ ⟩ = d − 1 X x 0 =0 · · · d − 1 X x n − 1 =0 α x 0 ,...,x n − 1 | x ⟩ , α x 0 ,...,x n − 1 ∈ C . (7) If the state satisfies the condition of b eing expressible as a tensor pro duct of individual subsystems, it can b e written as | ψ ⟩ = n − 1 O i =0 d − 1 X x i =0 α i,x i | x i ⟩ ! , α i,x i ∈ C . (8) C. MeLoCoT oN formalism The MeLoCoT oN method [22] is an approac h that con- v erts any combinatorial problem, such as optimization, in version, or constraint satisfaction, into an exact and explicit tensor netw ork equation. The application of this formalism to a combinatorial optimization problem con- sists of the following steps: 1. Creation of a classical logical circuit. This circuit receiv es as input a p ossible solution and asso ciates an in ternal num ber exp onentially low er with its cost. 2. T ensorization of the logical circuit of the problem, transforming every logical op erator in to a restricted tensor. This provides the tensor netw ork that rep- resen ts the tensor T with elements T = e − τ C ( x ) | x ⟩ ⟨ x | . (9) 3. Initialization and half partial trace, to consider at the same time all p ossible inputs and outputs given a set of v alues for the v ariable to determine. This represen ts the tensor | P i ⟩ = d i − 1 X j =0 X x | x i = j e − τ C ( x ) | j ⟩ . (10) 4. Extraction of v ariable v alues with an argmax func- tion on the P i tensor, or with binary extraction with ( − 1 , 1) vectors, to obtain a tensor netw ork that contr acted is equal to Ω i ( τ ). 5. Iteration to determine all the v ariables. The final expression pro vided by this metho dology usu- ally is an explicit equation of the type x i = lim τ →∞ H (Ω i ( τ )) , (11) b eing H ( · ) the Hea viside step function. This equation is exact in the limit. How ev er, outside the limit ca n b e computed with con traction schemes and provide an ap- pro ximate solution, go o d for large enough v alues of τ . I II. TENSOR NETWORK ALGORITHM This section provides the main contribution of this w ork, the tensor net w ork algorithm for solving QUBO, QUDO, and T ensor QUDO problems. Sec. I I I A fo cus in 4 the resolution of QUDO problems and Sec. II I B presen ts the resolution for QUDO problems with interaction of k - neigh b ors in a linear chain. Both sections include the de- duction of the tensor netw ork equation, the computation algorithm, and its computational complexit y . Sec. I I I C presen ts the generalization of the previous metho ds for the T ensor QUDO problems, and Sec. I I I D presents the final exact and explicit tensor netw ork equation expres- sion summarized. A. General QUDO problems 1. T ensor network de duction As in other algorithms based on MeLoCoT oN [22], the ob jective is to generate a tensor net work that represents the tensor that contains ev ery p ossible com bination with an amplitude asso ciated with its cost. | ψ ⟩ = X x e − τ C ( x ) | x ⟩ , (12) b eing C ( x ) the cost asso ciated with the combination x and τ the imaginary time evolution constant. In this w ay , the amplitude asso ciated with each com bination de- p ends on its cost. Thus, for a sufficiently high v alue of τ , the combination that minimizes the cost function has an exp onentially higher tensor element than other con- figurations. The extraction process and demonstration are explained in detail in [22]. The construction is based in applying lay ers of tensors that p erform sp ecific parts of the resolution. Fig. 1 sho ws the total tensor net work with the lay ers that will b e explained in the follo wing. The tensor construction starts with a sup erp osition la yer, the first lay er in Fig. 1, where each row carries the information of the v ariable x j horizon tally . T aking in to account that each v ariable can take v alues b et w een 0 and d − 1, the sup erp osition tensor is defined as | ψ 0 ⟩ = n − 1 O i =0 d − 1 X x i =0 | x i ⟩ ! = X x ∈{ 0 ,...,d − 1 } n | x ⟩ (13) F or example, for tw o v ariables of dimension three, the tensor is | ψ 0 ⟩ = | 00 ⟩ + | 01 ⟩ + | 02 ⟩ + | 10 ⟩ + | 11 ⟩ + | 12 ⟩ + | 20 ⟩ + | 21 ⟩ + | 22 ⟩ . In the general case, the v ariable i -th can tak e v alues b etw een 0 and d i − 1, simply by c hanging the corresp onding dimension of eac h of the tensors. How ev er, to simplify the explanations, the rest of the pap er deals only with the case where all the v ariables hav e the same range of v alues. Next, the second lay er in Fig. 1 computes the diagonal terms Q ii . That is, self-in teractions of the type Q ii x i x i , resulting in the state | ψ 1 ⟩ = n − 1 O i =0 d − 1 X x i =0 e − τ ( Q ii x 2 i + D i x i ) | x i ⟩ ! . (14) T o implemen t the in teractions b etw een the cross v ari- ables Q ij x i x j , a stair structure is proposed, in which each in teraction is given by the tensors S ij of Fig. 1. The C tensors pass the information of the v ariable in its row do wnw ard. Thus, the tensor S ij computes the in terac- tion b etw een the v ariable x i (horizon tally) and x j (v erti- cally), passing do wnw ard the v alue of x j and horizon tally the v alue of x i . The resultant state of applying all those la yers is | ψ 2 ⟩ = e − τ C ( x ) | x ⟩ , (15) e − τ C ( x ) = n − 1 Y i =0 ϕ ii ( x i ) n − 1 Y j = i +1 ϕ ij ( x i , x j ) , (16) ϕ ii ( x i ) = e − τ ( Q ii x 2 i + D i x i ) , (17) ϕ ij ( x i , x j ) = e − τ Q ij x i x j . (18) Eac h term of the pro duct ϕ ij ( x i , x j ) is pro duced by the action of the corresp onding S ij tensor, and each term of the pro duct ϕ ii ( x i ) is implemented by the action of its corresp onding S ii tensor. App endix A presents a precise definition of each tensor. Finally , in order to determine the v alue of the v ari- able x i , sup erp osition no des are imp osed on all ro ws but the row asso ciated with the v ariable to determine. The tensor netw ork of Fig. 1 represents the equation for ob- taining the first v ariable v alue. This leads to the tensor whose vector representation is | P 0 ⟩ = d − 1 X j =0 X x | x 0 = j e − τ C ( x ) | j ⟩ . (19) This enables the efficient computation of a marginal ‘probabilit y’ of measuring eac h v alue for that v ariable, a voiding the storage of all possible configurations. F or a sufficiently large v alue of τ , it is possible to ensure that the v alue of x 0 is the comp onen t of | P 0 ⟩ with a higher amplitude. Each v ariable x i of the solution vec- tor has an equiv alent tensor netw ork that generates its corresp onding | P i ⟩ leaving free only the index of the i -th v ariable, tracing ov er the other indices. This means that the solution can b e obtained with the iterativ e pro cess prop osed in [22], consisting of determining each v ariable, eliminating in its iteration the tensors of those already determined, and in tro ducing their v alue information into the remaining tensor netw ork. These tensor netw orks are the exact equation that solves the QUDO problem. 2. Calculation and c omputational complexity analysis T o compute the solution v alues, the tensor net work m ust b e contracted. T o contract this tensor net w ork, the 5 + + + + + + + + + + + + + + + + FIG. 1: T ensor netw ork for a dense QUDO problem with 5 v ariables. The mathematical description of eac h tensor is in App endix A. + + + + + + + + a) b) + + + + + c) FIG. 2: a) Con traction scheme of the last row MPS. b) Con traction sc heme b et w een the last row tensor and the MPO of the lay er ab o v e. c) Con traction scheme b et w een a row tensor and the MPO of the la yer ab o ve in the k-neighbours case. con traction scheme consists in contracting the last ro w of the netw ork from right to left, as shown in Fig. 2 a, and then contracting it with the previous ro w, also from righ t to left as sho wn in Fig. 2 b, follo wing a similar approac h to [32]. T o obtain the best performance, the con traction must tak e into accoun t the sparsity of the tensors. Eac h S tensor with more than t wo indices has only O ( d 2 ) non-zero elements, and C tensors ha ve only d non-zero elements. This is due to the v alue transmission constrain ts. The income v alue in a tensor must b e the same in the outcome of the same direction. The contrac- tion of the one- and tw o-indices tensors is less costly and is performed at the start, so they are neglected in the analysis. T o determine the first v ariable v alue, it is necessary to con tract the entire tensor net w ork. The con traction of the last tensor of the last row requires O ( d 3 ) op erations in the sparse format, versus O ( d 4 ) in the full dense format. Con tracting the n − 2 last tensors of the lay er requires O n − 3 X m =1 d 2 d m ! = O d n − 1 . (20) In the full dense case, it would b e O n − 3 X m =1 d 3 d m ! = O ( d n ) . (21) The first tensor of the row is con tracted in O ( d n ) in b oth formats, so this is the total complexity of this step. The con traction of the last ro w with the previous one is more complex. The following considers the contraction of the m -th row, no w a large m -tensor, with the previous ro w, with m tensors. In the contraction sc heme, the large tensor is con tracted with the previous row from right to left, to reduce the num ber of free indices. First, the last tensor of the ro w, which has O ( d ) non- zero elements, is con tracted with the large tensor in O ( d m ). Eac h contraction of the large tensor with a tensor of the previous row is p erformed again in O ( d m ) op era- tions, due to the contraction of t wo indices of the large tensor with the row tensor of O ( d 2 ) non-zero elements. The total complexit y of contracting the large tensor with a complete row is O ( md m ) taking adv an tage of the spar- sit y . If it is contracted in a full dense format, it grows to O ( d m +2 ). Adding the op erations for all the n lay- ers, the con traction of the entire tensor netw ork requires O ( nd n − 1 ) op erations in sparse format and O ( nd n +1 ) in full dense format, making the last tw o lay ers the bot- tlenec k of the con traction. The following v ariables re- quire smaller tensor net works, so the determination of the m -th v ariable, without reuse of intermediate com- putations, requires O (( n − m ) d n − 1 − m ) op erations with sparsit y and O (( n − m ) d n +1 − m ) with full dense format. The total complexity of the algorithm is the same of the first v ariable determination due to this exponential re- duction, with and without reuse of intermediate com- putations. The difference b etw een b oth formats is neg- ligible in complexity , but imp ortan t in practical appli- cations and for further algorithms in this work. Solving the general case exactly is feasible only for a very reduced n umber of v ariables due to the exp onen tial complexity . The computational complexit y of con tracting the general QUDO problem of Fig. 1 is O ( nd n − 1 ), and this is also the complexity of the whole algorithm. The spatial complexity of the initial tensor netw ork no des is O n 2 d 2 , b ecause it is a lattice of O ( n 2 ) ten- sors of O ( d 2 ) non-zero elements. The tensor of the last ro w con tracted has n − 1 indices of dimension d , so it has O ( d n − 1 ) elements. This is the most costly tensor of con traction and can b e rewritten in the following con trac- tion steps. This means that the total spatial complexity of one determination step is O d n − 1 . If there is no reuse of intermediate computations, this is the total spa- tial complexity of the algorithm. Ho w ever, with the reuse of in termediate computations, there is a need to store the tensor obtained after each row contraction step. The m - th row has m indices, so its size is O ( d m ). Then, the total size for storing all of them is O P n − 1 m =0 d m = O d n − 1 , whic h is the total spatial complexity . 6 + + + + + + + + + + + + + + + + FIG. 3: T ensor netw ork for a k -neigh b ors QUDO problem with 5 v ariables and k = 2. The mathematical description of each tensor is in App endix A. + + + a) b) c) + + + FIG. 4: Sc hematic representation of the 5 v ariable k -neigh b ors QUDO tensor netw ork for a) 1, b) 2 and c) 3-neigh b ors. B. k -neigh bors QUDO problems The computational cost of contracting this tensor net- w ork gro ws exp onen tially with the n umber of v ariables, so it is not p ossible to contract the tensor net work with- out employing some kind of approximation. Although there are several approximate techniques that could ad- dress the con traction of the tensor netw ork, the remain- der of the pap er is restricted to solving the particular case of the k -neigh b ors in a lineal c hain QUDO problem. In this case, this section introduces tw o alternative tensor net work constructions to solv e the problem, as illustrated in Figs. 4 and 5. 1. T ensor network de duction This section approaches the k -neighbors QUDO prob- lem. Basically , the difference from the general one is that eac h v ariable interacts only with its k nearest neighbors in a linear chain. The cost function is expressed as C ( x ) = n − 1 X i =0 k X j =0 Q i,i + j x i x i + j + n − 1 X i =0 D i x i . (22) This v arian t is computationally more approachable de- spite the size of the solution space b eing the same. a) b) d) c) FIG. 5: Sc hematic representation of the 5 v ariable k -neigh b ors QUDO tensor netw ork for 1 a), 2 b) and 3 c) neighbors and its representation in the form of a succession of matrices d). The tensor netw ork that implements this v arian t is similar to the netw ork in Fig. 1 by removing the inter- actions betw een the v ariables not connected, as sho wn in Fig. 3. It is p ossible to simplify this tensor netw ork b y contracting the sup erposition and S ii tensors with its corresp onding C and S ij no des. In Fig. 4, the schematic tensor netw ork asso ciated with one, t w o, and three neigh- b ors is represen ted. As expected, as the num b er of neigh- b ors increases, the tensor netw ork becomes more complex and therefore harder to contract. How ev er, for problems where the num b er of neighbors is lo w enough, it is p os- sible to contract the tensor netw ork exactly . F ollo wing an optimal contraction scheme, it is p ossible to contract the entire tensor netw ork in such a wa y that the compu- tational complexit y is O ( knd k ), as sho wn in Sec. I II B 2. In addition, another implementation of the same ten- sor netw ork is prop osed but with a differen t structure. The idea is to use matrices instead of 4-order tensors b e- cause they are easier to work with and the libraries that handle them are highly optimized. Each matrix repre- sen ts all the information of its row of tensors. Fig. 5 sho ws how it is p ossible to transform from a tensor to a matrix representation . 2. Calculation and c omputational complexity analysis It is imp ortant to keep in mind that, in the matrix metho d, the b ond dimension dep ends on the num ber of neigh b ors, as d k . F or example, to translate the tensor M 3 in Fig. 5 c with 6 = 3 · 2 indices into the matrix N 3 , it is necessary to create a matrix d 3 × d 3 . Therefore, contract- ing the whole chain is equiv alen t to computing n matrix- v ector multiplications. Since determining each v ariable in the QUDO solution requires the con traction of a ten- sor chain, in principle, n − 1 chains must b e contracted. Ho wev er, using the technique of taking adv an tage of the in termediate calculations e xplained in [22, 32], only one 7 con traction of the whole tensor net work is needed. The same idea can b e applied to the tensor metho d. F or the method based on matrix-vector m ultiplication, the computational complexit y of contracting the tensor net work and of the algorithm is O ( nd 2 k ). It requires con tracting n times a vector with a d k × d k matrix in the determination of the first v ariable, and the following is simply a single matrix-vector multiplication of dimen- sions d × d p er v ariable. This is acceptable for problems where each v ariable interacts only with a small num ber of neigh b ors. How ev er, taking into account that each ten- sor has k − 1 pairs of input-ouput indices with the same v alue, the tensors are sparse and hav e O ( d k +1 ) elements, so eac h multiplication requires only O ( d k +1 ) op erations. With the n multiplications for the complete con traction, the computational complexity p er v ariable is O ( nd k +1 ), b eing the total O ( n 2 d k +1 ) without reuse and O ( nd k +1 ) with reuse of intermediate computations. The spatial complexit y of this metho d is O ( nd k +1 ), b ecause the first tensors generated hav e the more non-zero elements than the follo wing contracted vectors. The complexit y of contracting the stair structured ten- sor net work in Fig. 4 is more difficult to determine. In the case k = 1, it is directly verified that the com- putational complexity of the algorithm using sparsity is O ( nd 2 ) and for k = 2 is O ( nd 3 ). In cases where k ≥ 3, the computational complexit y of contracting the tensor net work b y rows, from bottom to top, is O ( nkd k ). Fig. 2 a sho ws how the last ro w is contracted. This row is made up of k no des, so the computational complexity of con- tracting the whole row is O ( d k ), resulting in a tensor lik e the one shown in the b ottom of Fig. 2 with k indices. The pro cess of contracting this tensor with the tensor of the ro w ab ov e can b e seen in Fig. 2 b. The con traction with the last tensor of the ro w requires O ( d k ) operations, each con traction in the ro w also requires O ( d k ) operations, and the last one requires O ( d k +1 ) op erations. The sum is O ( k d k + d k +1 ) op erations, and for the n rows, it grows to O ( k nd k + nd k +1 ). T aking that k is a parameter that can grow with the size of the problem, the complexit y of the con traction of the tensor netw ork can b e reduced to O ( k nd k ) using the sparsity of the tensors. Without sparsit y , the contraction complexity is O ( knd k +2 ). This pro cess must b e rep eated for each v ariable, reducing the size of the tensor net work. With reuse of intermediate computations, the total complexity of the algorithm is O ( knd k ), and without reuse, it is O ( k n 2 d k ). The spatial complexit y of this metho d implies that the O ( knd 2 ) elemen ts of the initial tensors and then the O ( d k ) elemen ts of the last ro w tensor con tracted with the last tw o tensors of the previous ro w, so the total spatial complexit y is O k nd 2 + d k . Finally , reusing in termedi- ate computations, it needs storing n in termediate tensors, the spatial complexity is O ( nd k ). When comparing the complexities of b oth metho ds without the use of sparsity , it can b e pro ven that eac h metho d p erforms b etter under different num bers of neigh b ors and dimensions of the v ariables, but the tensor- based metho d alwa ys scales b etter for k > 4. F or d = 2, b oth algorithms hav e the same scaling at k = 4, matrix- based is b etter for k < 4 and tensor-based is b etter for k > 4. F or d = 3, b oth algorithms ha ve the same scaling at k = 3, matrix-based is b etter for k < 3 and tensor- based is b etter for k > 3. F or d > 3, matrix-based is b etter for k < 3 and tensor-based is b etter for k ≥ 3. Ho wev er, with the use of the sparsity , the matrix-vector metho d is alwa ys cheaper in computational complexity . The main adv an tage of the tensor metho d is that it has lo wer spatial complexity , which can b e interesting in cer- tain types of instances, and the p ossibilit y of using com- pression metho ds to approximate the solution. C. T ensor QUDO extension This algorithm can b e extended to a more general problem, the T ensor Quadratic Unconstrained Discrete Optimization (T-QUDO) formulation. This problem has b een approached in [22] with a circular tensor netw ork. Ho wev er, it can b e s olv ed with exactly the same type of tensor netw ork as in the QUDO case presented in Figs. 1, 4, and 5. This time, eac h tensor receiv es the same input and passes the same output as in the QUDO case, the only c hange is the v alue of the tensor elements to implement the cost function (3). Now, the tensor elements of S ij implemen t the pro duct terms ϕ ij ( x i , x j ) = e − τ C i,j,x i ,x j and the tensor elemen ts of S ii implemen t the pro duct terms ϕ ii ( x i ) = e − τ D i,x i . The rest of the algorithm im- plemen tation and op eration is the same. D. Exact explicit equations Giv en these tw o tensor netw ork constructions, it is p os- sible to extract an exact and explicit equation for the problem. Given the P i tensor represented by the tensor net work, the p osition of its largest element corresp onds to the optimal v alue for the i -th v ariable. This means that it is p ossible to extract the searc hed v alue from it. Ho wev er, to obtain a simple and exact equation, the v alue of τ must tend to infinity , which allo ws to av oid having to imp ose the results of the previous v ariables. ω i ( τ ) is defined as the vector-v alued function defined by the ten- sor net w ork represen ting P i , dep ending only on the v alue of τ and the definition of the problem. This implies the equation x i = arg max j lim τ →∞ ω i j ( τ ) . (23) Ho wev er, by binarizing the v ariable x i in to its bits x i,j , the problem solution equation is x i,j = lim τ →∞ H (Ω i,j ( τ )) , (24) 8 = FIG. 6: Con traction of the candidate tensor M t of the p ossible solution t ∈ { 0 , T } and the tensor B m to obtain the tensor B m t . b eing Ω i,j ( τ ) the tensor netw ork obtained by con tracting the tensor netw ork of ω i with a ( − 1 , 1) v ector in the j - th bit index for the i -th v ariable and H ( · ) the Heaviside step function. IV. W A TERF ALL METHOD In order to extend the MeLoCoT oN metho dology , an alternativ e strategy to calculate the solution is prop osed. The basic optimization in MeLoCoT oN relies on taking adv antage of in termediate calculations: while con tracting the first tensor net work to determine the v alue of the first v ariable, the intermediate tensors generated during this con traction are stored in memory . F or the follo wing v ari- ables, it is sufficien t to construct a new tensor that incor- p orates the information of the previous known v ariable solutions and contracts it with the corresp onding stored tensor. This approach av oids the need to p erform n full tensor netw ork con tractions, instead requiring only one initial contraction plus n additional tensor contractions. Ho wev er, this optimization entails storing n intermediate tensors in memory , which can b ecome a limiting factor for certain problem instances. T o address this limitation, a nov el metho d for deter- mining the solution is prop osed. As in the standard MeLoCoT oN approach, the tensor netw ork is contracted from the b ottom to the top. How ever, instead of stor- ing the intermediate contracted tensor at each step, the metho d generates T new vectors for each contraction lev el, each corresp onding to a different p ossible configu- ration of the future determined v ariables. In other words, a separate tensor is created for every p ossible prior so- lution that the contraction pro cess might encounter, as sho wn in Fig. 6. F or the case of k neighbors, the num b er of tensors for the con traction is T = d k , because that is the num b er of p ossible combinations of the v alues of the previous v ariables. The t -th new tensor is contracted with the curren t B m tensor, obtaining a new B t m ten- sor, and the p osition X t m of its largest element is the only stored result. So, the v alue X t m is the correct result if the previous k v ariables ha ve the t -th combination of v alues. By ev aluating all suc h possibilities in adv ance, the v alue of each v ariable for an y given preceding assign- men t can b e determined immediately and stored in a list Y m = ( X 0 m , X 1 m , . . . , X T − 1 m ). Consequen tly , if the v alue of all the previous v ariables (with low er m ) is fixed to the v alue, the subsequent v alue is immediately determined without requiring further contraction. This is p erformed b y selecting the v alue x m = X t m for the t -th p ossible com bination, determined as correct for the k previous v ariables. A notable feature of this metho d is that if Y m is a constan t vector of X t m = X m for a given v ariable, then the information for that v ariable is determined directly as x m = X m . This is b ecause if all p ossible v alues of the previous v ariables yield the same result, this means that this v ariable v alue is indep endent of the rest of the problem, so its optimal v alue is trivial. Consequently , if k = 1 and ev ery v ariable dep ends only on the previous one, the space solution of all the next v ariables is auto- matically reduced, even under fav orable conditions, and it is p ossible to determine the solution unambiguously . This cascading effect, which inspires the term “w ater- fall”, significantly reduces the remaining searc h space. Once the w aterfall pro cess is triggered, the problem effec- tiv ely b ecomes smaller, allowing the use of larger v alues of the imaginary time ev olution parameter τ for the re- maining v ariables, improving the optimization and thus mitigating o verflo w issues. The trade-off is an increase in computational cost: whereas the in termediate-calculation approac h only re- quires con tracting tw o tensors p er v ariable after the first, the waterfall metho d requires T contractions. F or this reason, the metho d is b est suited to problems in whic h T is small. F or example, in the 1-neighbor QUDO problem, the p ossible solutions of the previous no de are { 0 , 1 , . . . , d − 1 } , making the waterfall metho d efficien t and practical in this case. How ev er, instead of contract- ing the tensors to obtain the vectors, it is more simple to access the corresp onding row of the tensor fixing all other indices to their v ariable v alues, which can b e done in O (1), and then multiplying the corresp onding vector b y a diagonal matrix of the extra cost asso ciated with their k neighbors interaction in O ( dk ). In pseudo co de terms t ← k X j =0 d j a j B t m [ z ] ← B m [ a 0 , a 1 , a 2 , . . . , z ] e − P k j =0 Q m,m + j a j z , (25) a j b eing the v alue of the j -th neighbor and z the v alue of the curren t v ariable. This is rep eated for eac h of the T terms, so it has a computational complexit y of O nk d k +1 for the whole algorithm. Ho wev er, for k > 1, activ ating the waterfall effect in the m -th v ariable for all the follo wing v ariables re- quires that all v ectors { Y m , Y m +1 , . . . , Y m + k − 1 } b e con- stan t vectors. This can b e improv ed taking into account that, if Y m is constant in the v alue X m , then the vec- tor Y m +1 only requires to b e constant in X m +1 for the comp onen ts where x m = X m , the vector Y m +2 only re- 9 T ABLE I: Computational complexity for differen t metho ds and neighbor configurations. n is the num b er of v ariables with integer v alues in [0 , d − 1], and k is the num ber of neighbors in the interaction. Metho d T ensor metho d (Dense) Matrix metho d ( k -neigh bors) T ensor metho d ( k -neigh bors) Computational complexity no reuse O ( nd n − 1 ) O ( n 2 d k +1 ) O ( kn 2 d k ) Computational complexity reuse O ( nd n − 1 ) O ( nd k +1 ) O ( knd k ) Computational complexity waterfall O ( nd n ) O ( knd k +1 ) O ( knd k +1 ) Spatial complexity no reuse O ( d n − 1 ) O ( nd k +1 ) O k nd 2 + d k Spatial complexity reuse O ( d n − 1 ) O ( nd k +1 ) O ( nd k ) Spatial complexity waterfall O ( d n ) O md k +1 O md k +1 quires to b e constant in X m +2 for the comp onen ts where x m = X m , x m +1 = X m +1 , and so on. In other words, eac h vector requires d times less comp onents than the previous one to b e equal. Nevertheless, the num b er of comp onen ts that need to satisfy the condition remains O d k . This implies that the probability of success of the waterfall in a v ariable decreases as O P k , P b eing the probability of obtaining a constant v ector Y m , mak- ing it unfeasible for large v alues of k . This means that the effectiv eness of the waterfall metho d dep ends on the probabilit y of having the same result for all new tensors generated from all p ossible previous configurations. The cascade probability for k = 1, which plays a key role in the efficiency of the method, will b e empirically measured and discussed in Sec. V. F or spatial complexity , in eac h step the k -tensor of the contracted row is required, and the extraction of the com bination returns a vector of d components. F rom this v ector, only the v alue X t m is extracted. F or the T = d k com binations, this requires a k -tensor, with a total of O ( d k ) elemen ts. In the worst case scenario, O ( nd k +1 ) tensor elements are required, worse than the original al- gorithm of reuse of in termediate states. How ev er, if the tensor is uniform at some p oin t, all stored Y tensors can b e remov ed. If P is the probability of obtaining a uniform tensor, the num ber of tensors stored until obtaining a uni- form tensor is m ∈ [1 , n ], decreasing when P increases. Then the spatial complexity is O md k +1 , impro ving the existing spatial complexity if m < d , without an extreme increase in computational complexity . The b est-case sce- nario has a spatial complexity of O ( d k +1 ), whic h is better than all other metho ds. T able I summarizes the computational and spatial complexities of the prop osed metho ds. V. EXPERIMENT A TION This section presents a series of exp eriments designed to ev aluate the p erformance of the prop osed algorithms. F or simplicity and analogy with the QUBO case, the term D i x i is zero. The matrix metho d is implemented with Nump y [33] and the tensor metho d with T ensorKrow c h [34]. The sparsity of the tensors is not implemented in these tests b ecause, in practice, the sparse metho d is no- tably slo wer than the full dense metho d. A simple v er- sion of the w aterfall for k = 1 is also implemen ted in Nump y , only for the matrix metho d. Sec. V A presen ts the ev aluation of the scaling of the run time of the algo- rithms, compares them with each other, and benchmarks them against a traditional metho d based on a quadratic optimization implementation of OR-to ols. Sec. V B ev al- uates the p erformance of the waterfall metho d to show the probabilit y of obtaining a uniform tensor. A. General algorithm ev aluation The first exp erimen ts consist of ev aluating the execu- tion time as a function of some parameters, such as the n umber of v ariables n , the num b er of neighbors k , and the dimension d . Since the algorithms dep end on several parameters, only the exp erimen ts that show the most relev ant b ehaviors hav e b een selected. First, Fig. 7 show ho w the execution time t of b oth mo dels b ehav es under the dep endence of k and d . As discussed in Sec. I I I B 2, in case of not using the sparsity , the tensor metho d scales b etter with k and d . How ever, since the matrix implementation is more efficient despite ha ving a worse scaling without using the sparsity , the matrix implementation is faster for sufficiently small v al- ues of k and d . An additional comment is that increasing k is computationally more expensive than increasing d (recall that n = 100 in Fig. 7a and n = 1000 in Fig. 7b). The following exp erimen t ev aluates the dep endency b et w een the execution time and the num b er of v ariables n for b oth metho ds, shown in Figs. 8 and 9. In particu- lar, tw o different tests hav e b een prop osed, one fixing k for different v alues of d , shown in Fig. 8, and the other fixing d for different v alues of k , shown in Fig. 9. In general, the results obtained are quite expected, and as n , k or d increases, so do es the execution time. How- ev er, it is imp ortan t to highligh t some in teresting details. Both methods sho w a linear b eha vior, whic h is consisten t with the theoretical complexit y . Additionally , for the in- stances used, the matrix metho d outp erforms the tensor metho d in all cases. How ev er, this o ccurs only in the cases where k and d are sufficiently small. Finally , the last experiment consists of sho wing the accuracy of the solutions obtained b y the algorithms 10 2 4 6 8 10 12 14 k 0 1 2 3 4 t(s) tensor method matrix method (a) Execution time vs num b er of neigh b ors k for the k -neigh bors QUDO solver employing the matrix and tensor metho ds. n = 100 and d = 2. 0 5 10 15 20 25 30 d 0 2 4 6 8 t(s) tensor method matrix method (b) Execution time vs d for the k -neigh b ors QUDO solver emplo ying the matrix and tensor metho ds. n = 1000 and k = 2. FIG. 7: Execution time vs d and k for a fixed n for the QUDO solver with τ = 400. 0 500 1000 1500 2000 n 0.00 0.05 0.10 0.15 0.20 0.25 0.30 t(s) d=2 d=3 d=4 d=5 d=6 d=7 d=8 (a) Matrix metho d comparison. 0 500 1000 1500 2000 n 0 1 2 3 4 5 t(s) d=2 d=3 d=4 d=5 d=6 d=7 d=8 (b) T ensor metho d comparison. FIG. 8: Execution time vs n for the k -neigh b ors QUDO solver for different instances of d . τ = 50 and k = 2. 0 500 1000 1500 2000 n 0.00 0.05 0.10 0.15 0.20 0.25 0.30 t(s) k=2 k=3 k=4 k=5 k=6 k=7 (a) Matrix metho d comparison. 0 500 1000 1500 2000 n 0 2 4 6 t(s) k=2 k=3 k=4 k=5 k=6 k=7 (b) T ensor metho d comparison. FIG. 9: Execution time vs n for the k -neigh b ors QUDO solver for different instances of k . τ = 50 and d = 2. 11 (a) Relative error vs n for the k -neigh b ors QUDO solver emplo ying the matrix method for different instances of d . k = 2. (b) Relative error vs n for the k -neigh b ors QUDO solver emplo ying the matrix method for different instances of k . d = 2. FIG. 10: Relativ e error ε rel ative = 1 − C ( x tn ) C ( x OR ) analysis for QUDO solver. in Figs. 10. The comparison is p erformed against the Go ogle OR-TOOLS solver, taking the OR-TOOLS so- lution as the correct solution. The searc h time of the OR-TOOLS solv er is set to 5 seconds, b ecause for the cases where d > 2 the algorithm takes to o long to find the most optimal solution and to make a fair comparison b et w een metho ds. The selection of τ was precomputed b efore the algorithm was executed according to the size of the problem instance. Since b oth tensor netw ork implementations obtain ex- actly the same result, the following exp erimen ts are em- plo yed with the matrix metho d. The accuracy measure is the relative error defined as ε rel ative = 1 − C ( x tn ) C ( x OR ) , b e- ing C ( x tn ) the cost of the tensor net w ork solution and C ( x OR ) the cost of the OR-TOOLS solution. Therefore, if ε rel ative < 0, the tensor netw ork algorithm obtains a b etter solution than the OR-TOOLS. Figs. 10 show that the tensor netw ork algorithm outp erforms OR-TOOLS’s in several instances, ha ving at least the same p erformance for most of them. The exp eriments carried out consist of represen ting the dep endence b etw een n and ε rel ative for some d for a given k , shown in Fig. 10a, and for some k for a given d , shown in Fig. 10b. Other optimizers ha ve b een considered, but OR- TOOLS is selected due to its fitness to the problem and a v ailabilit y for repro ducibilit y . F or example, dimo d optimizer from D-W a ve only is not general enough to tac kle QUDO problems, and it pro vides worse solutions. Quan tum computing optimizers hav e the same problems. GUR OBI and CPLEX optimizers provide high quality solutions, but their free versions do not allo w to solve instances with large num b er of v ariables. How ever, the increase in the quality of the solutions is due to a small τ v alue, so it could b e solved with an adaptive metho d to choose it, which also explains the few instances where OR-TOOLS is b etter than the tensor netw ork. B. W aterfall metho d ev aluation This subsection presents the experimental ev aluation of the waterfall metho d. As discussed in IV, the key fac- tor of the metho d is the probability of obtaining a uni- form tensor and triggering the cascade effect. In partic- ular, when a cascade even t o ccurs and it is a 1-neighbor problem, no in termediate tensors need to b e stored in memory up to that p oint. Moreov er, the problem can b e effectiv ely ‘re-started’ as a smaller subproblem b ecause sev eral v ariables hav e already b een determined. This al- lo ws the selection of a larger imaginary time evolution parameter τ for the remaining v ariables, which can im- pro ve the accuracy of the solution. T o quan tify this effect, the follo wing exp eriment tries to empirically measure the waterfall probabilit y for a 1-neigh b or QUDO problem. The waterfall probability w prob is defined as the n umber of times the waterfall ef- fect o ccurs divided by the n umber of nodes. Fig. 11 shows the av eraged measured probability as a function of d for 50 instances of random problems. The result shows a ten- dency for w prob to decrease as d increases. This b eha vior is consistent with the fact that, as the possible dimen- sional solution space increases, it b ecomes less probable for all solutions to b e the same. VI. CONCLUSIONS This pap er presen ts a tensor net w ork algorithm that solv es QUBO, QUDO and T ensor-QUDO problems us- ing the MeLoCoT oN. It has b een concluded that the tensor net work of a dense QUDO problem has a com- putational complexity of O ( nd n − 1 ), which cannot b e ef- ficien tly contracted. F or this reason, a simpler problem with fewer densit y has been prop osed, such as the k - 12 5 10 15 20 d 0.55 0.60 0.65 0.70 0.75 w p r o b FIG. 11: W aterfall av eraged probabilit y vs d for the 1-neigh b or QUDO solv er employing the waterfall matrix metho d ov er 50 random problem instances. Eac h instance is solved for 100 different v alues of τ , and only the b est solution is considered. n = 200. neigh b ors QUDO. Two differen t tensor netw orks hav e b een prop osed, one based on tensors with up to 4 in- dices, the other based on matrices and vectors, and a no vel metho dology for reducing the required memory . The computational complexity of b oth implementations for a n umber of neighbors k has b een studied as O ( nd k +1 ) (matrix method) and O ( nk d k ) (tensor metho d). In order to compare b oth algorithms, a series of exp erimen ts ha ve b een carried out without sparsit y implementation, prov- ing that the tensor-based metho d scales b etter as k and d grow. How ever, for problems where k and d take small v alues, the matrix-based metho d is faster even in this implemen tation. F urthermore, there is a comparison b e- t ween the relative error of the results with an OR-TOOLS solv er, concluding that, for most of the instances of prob- lems, the tensor netw ork algorithm p erforms equal to or b etter. F uture research lines ma y include the inv estigation of an efficient algorithm to determine the optimal v alue τ to scale the resolution and av oid the describ ed ov erflow limitation. Another line may b e the determination of an efficien t tensor netw ork that solves the case of k interac- tions other than the nearest neigh bors, which could b e applied to several known problems. This could be ap- proac hed by taking adv an tage of the symmetries of the problem. Also, the relation b et ween this problem and the Ising model in physics could allo w this tensor netw ork al- gorithm to find the ground state of interesting ph ysical systems. Finally , the application of compression meth- o ds in approximate representations can also b e studied in order to scale the algorithm for larger or more complex problems. A CKNOWLEDGMENT The research has b een funded by the Ministry of Sci- ence and Innov ation and CDTI under ECOSISTEMAS DE INNOV A CI ´ ON pro ject ECO-20241017 (EIFEDE) and ICECyL (Junta de Castilla y Le´ on) under pro ject CCTT5/23/BU/0002 (QUANTUMCRIP). This pro ject has b een funded by the Spanish Ministry of Science, In- no v ation and Universities under the pro ject PID2023- 149511OB-I00. [1] S. Dauz ` ere-P ´ er` es, J. Ding, L. Shen, and K. T amssaouet, The flexible job shop scheduling problem: A review, Eu- rop ean Journal of Op erational Researc h 314 , 409 (2024). [2] H. Zhang, H. Ge, J. Y ang, and Y. T ong, Review of vehi- cle routing problems: Mo dels, classification and solving algorithms, Archiv es of Computational Metho ds in En- gineering 29 , 195 (2022). [3] D. G. Cattrysse and L. N. V an W assenho ve, A survey of algorithms for the generalized assignment problem, Eu- rop ean Journal of Op erational Research 60 , 260 (1992). [4] C. Papadimitriou and K. Steiglitz, Combinatorial Opti- mization: Algorithms and Complexity , V ol. 32 (1982). [5] M. R. Garey and D. S. Johnson, Computers and In- tr actability; A Guide to the The ory of NP-Comple teness (W. H. F reeman & Co., USA, 1990). [6] P . A. Y agi, E. A. P . Quiroz, and M. A. C. Lengua, A sys- tematic literature review on quadratic programming, in Pr oc e e dings of Seventh International Congr ess on Infor- mation and Communication T e chnolo gy , edited by X.-S. Y ang, S. Sherratt, N. Dey , and A. Joshi (Springer Nature Singap ore, Singap ore, 2023) pp. 739–747. [7] S. Gonz´ alez-Bermejo, G. Alonso-Lina je, and P . Atc hade- Adelomou, Gps: A new tsp formulation for its general- izations type qub o (2022), arXiv:2110.12158 [quant-ph]. [8] T. Bontek oe, F. Phillipson, and W. v. d. Schoot, T rans- lating constraints into qub os for the quadratic knapsack problem, in Computational Scienc e – ICCS 2023: 23r d International Confer enc e, Pr ague, Cze ch R epublic, July 3–5, 2023, Pro c e e dings, Part V (Springer-V erlag, Berlin, Heidelb erg, 2023) p. 90–107. [9] G. Ko c hen b erger, J.-K. Hao, F. Glov er, Z. L ¨ u, H. W ang, and Y. W ang, The unconstrained binary quadratic pro- gramming problem: A surv ey , Journal of Combinatorial Optimization 28 (2014). [10] G. L. Nemhauser and L. A. W olsey , Inte ger and Com- binatorial Optimization (Wiley-Interscience, New Y ork, 1988). [11] J.-M. R´ eveillac, 8 - linear programming, in Optimization T o ols for L o gistics , edited by J.-M. R´ eveillac (Elsevier, 2015) pp. 209–237. [12] S. Kirkpatric k, C. D. Gelatt, and M. P . V ecchi, Optimiza- tion by sim ulated annealing, Science 220 , 671 (1983), h ttps://www.science.org/doi/p df/10.1126/science.220.4598.671. [13] F. Glov er, T abu search—part i, ORSA Journal on Com- puting 1 , 190 (1989). [14] J. H. Holland, A daptation in Natur al and Artificial Sys- tems (Universit y of Michigan Press, Ann Arb or, 1975). 13 [15] IBM Corporation, IBM ILOG CPLEX Optimization Stu- dio CPLEX User’s Manual (2025), accessed: 2025-08-05. [16] Gurobi Optimization, LLC, Gur obi Optimizer R efer enc e Manual (2025), accessed: 2025-08-05. [17] Go ogle LLC, OR-T o ols User’s Manual (2025), accessed: 2025-08-05. [18] J. Biamonte and V. Bergholm, T ensor netw orks in a nut- shell (2017), arXiv:1708.00006 [quant-ph]. [19] J. Biamonte, Lectures on quan tum tensor netw orks (2020), arXiv:1912.10049 [quant-ph]. [20] R. Or ´ us, A practical in tro duction to tensor netw orks: Matrix pro duct states and pro jected entangled pair states, Annals of Physics 349 , 117–158 (2014). [21] R. Or ´ us, T ensor netw orks for complex quantum systems, Nature Reviews Physics 1 , 538–550 (2019). [22] A. M. Ali, Explicit solution equation for every combina- torial problem via tensor netw orks: Melo coton (2025), arXiv:2502.05981 [cs.ET]. [23] A. M. Ali, I. P . Delgado, M. R. Roura, and A. M. F. de Leceta, Polynomial-time solver of tridiagonal qub o and qudo problems with tensor netw orks (2024), arXiv:2309.10509 [quant-ph]. [24] H. Luo, S. Chen, and H. W u, A new branch-and-cut al- gorithm for non-conv ex quadratic programming via al- ternativ e direction metho d and semidefinite relaxation, Numerical Algorithms 88 , 993 (2021). [25] M. Hasan, T. AlKhamis, and J. Ali, A comparison b e- t ween simulated annealing, genetic algorithm and tabu searc h metho ds for the unconstrained quadratic pseudo- b oolean function, Computers & Industrial Engineering 38 , 323 (2000). [26] M. A. Nielsen and I. L. Chuang, Quantum Computation and Quantum Information: 10th A nniversary Edition (Cam bridge Universit y Press, 2011). [27] F. Glov er, G. Ko c henberger, and Y. Du, A tuto- rial on formulating and using qub o mo dels (2019), arXiv:1811.11538 [cs.DS]. [28] J. Sevilla and C. J. Riedel, F orecasting timelines of quan- tum computing (2020), arXiv:2009.05045 [quant-ph]. [29] T. Kado waki and H. Nishimori, Quan tum annealing in the transverse ising mo del, Physical Review E 58 , 5355 (1998). [30] E. F arhi, J. Goldstone, and S. Gutmann, A quan- tum appro ximate optimization algorithm (2014), arXiv:1411.4028 [quant-ph]. [31] C. Cohen-T annoudji, B. Diu, and F. Lalo¨ e, Quantum Me- chanics , V ol. 1 (Wiley-Interscience, 1977). [32] A. M. Ali, I. P . Delgado, and A. M. F. de Leceta, T rav el- ing salesman problem from a tensor net works persp ective (2024), arXiv:2311.14344 [quant-ph]. [33] C. R. Harris, K. J. Millman, S. J. v an der W alt, R. Gom- mers, P . Virtanen, D. Cournap eau, E. Wieser, J. T ay- lor, S. Berg, N. J. Smith, R. Kern, M. Picus, S. Hoy er, M. H. v an Kerkwijk, M. Brett, A. Haldane, J. F. del R ´ ıo, M. Wiebe, P . Peterson, P . G´ erard-Marc han t, K. Shep- pard, T. Reddy , W. W ec kesser, H. Abbasi, C. Gohlke, and T. E. Oliphant, Array programming with NumPy, Nature 585 , 357 (2020). [34] J. R. Pareja Mon turiol, D. P´ erez-Garc ´ ıa, and A. Pozas- Kerstjens, T ensorKro wc h: Smo oth integration of tensor net works in machine learning, Quantum 8 , 1364 (2024), [35] P . W. Shor, P olynomial-time algorithms for prime factor- ization and discrete logarithms on a quantum computer, SIAM Journal on Computing 26 , 1484–1509 (1997). [36] X. Xu, S. Benjamin, J. Sun, X. Y uan, and P . Zhang, A herculean task: Classical sim ulation of quan tum comput- ers (2023), arXiv:2302.08880 [quant-ph]. [37] J. Tilly , H. Chen, S. Cao, D. Picozzi, K. Setia, Y. Li, E. Gran t, L. W ossnig, I. Rungger, G. H. Bo oth, and J. T ennyson, The v ariational quantum eigensolv er: A re- view of methods and best practices, Ph ysics Rep orts 986 , 1–128 (2022). 14 + + + a) b) c) d) e) f) FIG. 12: T ensor index naming conv en tion. App endix A: T ensor netw ork definition This App endix pro vides the definition of the tensors used in this pap er. The notation follows the conv en tions established in [22], which should b e consulted for a com- plete understanding. Essen tially , there are five different t yp es of tensor, shown in Fig. 12, the internal logic of eac h is explained b elow. a. Initial sup erp osition tensor The tensor + corresp onding to Fig. 12 a, is defined as + d + i = 1 . (A1) b. Partial tr ac e sup erp osition tensor The tensor P + corresp onding to Fig. 12 b, is another sup erposition tensor as +. How ev er, there is a distinction to mak e it clear that they are used in tw o different places in the tensor netw ork. It is defined as P + d , P + i = 1 . (A2) c. Non-cr oss inter action imaginary time evolution tensor This tensor implemen ts the imaginary time evolution of the QUDO interaction Q ll and D l , shown in Fig. 12 c. The tensor is defined as S l d × d , µ = i, S l iµ = e − ( τ Q ll i 2 + D l i ) . (A3) d. L ast r ow cr oss inter action imaginary time evolu- tion tensor This tensor implemen ts the imaginary time evolution of the QUDO interaction of the last v ariable Q n − 1 ,m , sho wn in Fig. 12 d. The tensor is defined as S n − 1 ,m d × d × d , µ = i S n − 1 ,m iµj = e − τ Q n − 1 ,m ij . (A4) e. 3-index c ontr ol tensor This tensor, shown in Fig. 12 e, receives the informa- tion from i and sends it without mo difying it through µ and ν . It is defined as C d × d × d , µ = ν = i C iµν = 1 . (A5) f. Cr oss inter action imaginary time evolution ten- sor This tensor implemen ts the imaginary time evolution of the qub o interaction Q lm (Fig. 12 f ). The tensor is defined as S lm d × d × d × d , µ = i ν = j S lm iµj ν = e − τ Q lm ij . (A6)

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment