CARLA-Air: Fly Drones Inside a CARLA World -- A Unified Infrastructure for Air-Ground Embodied Intelligence

The convergence of low-altitude economies, embodied intelligence, and air-ground cooperative systems creates growing demand for simulation infrastructure capable of jointly modeling aerial and ground agents within a single physically coherent environ…

Authors: Tianle Zeng, Hanxuan Chen, Yanci Wen

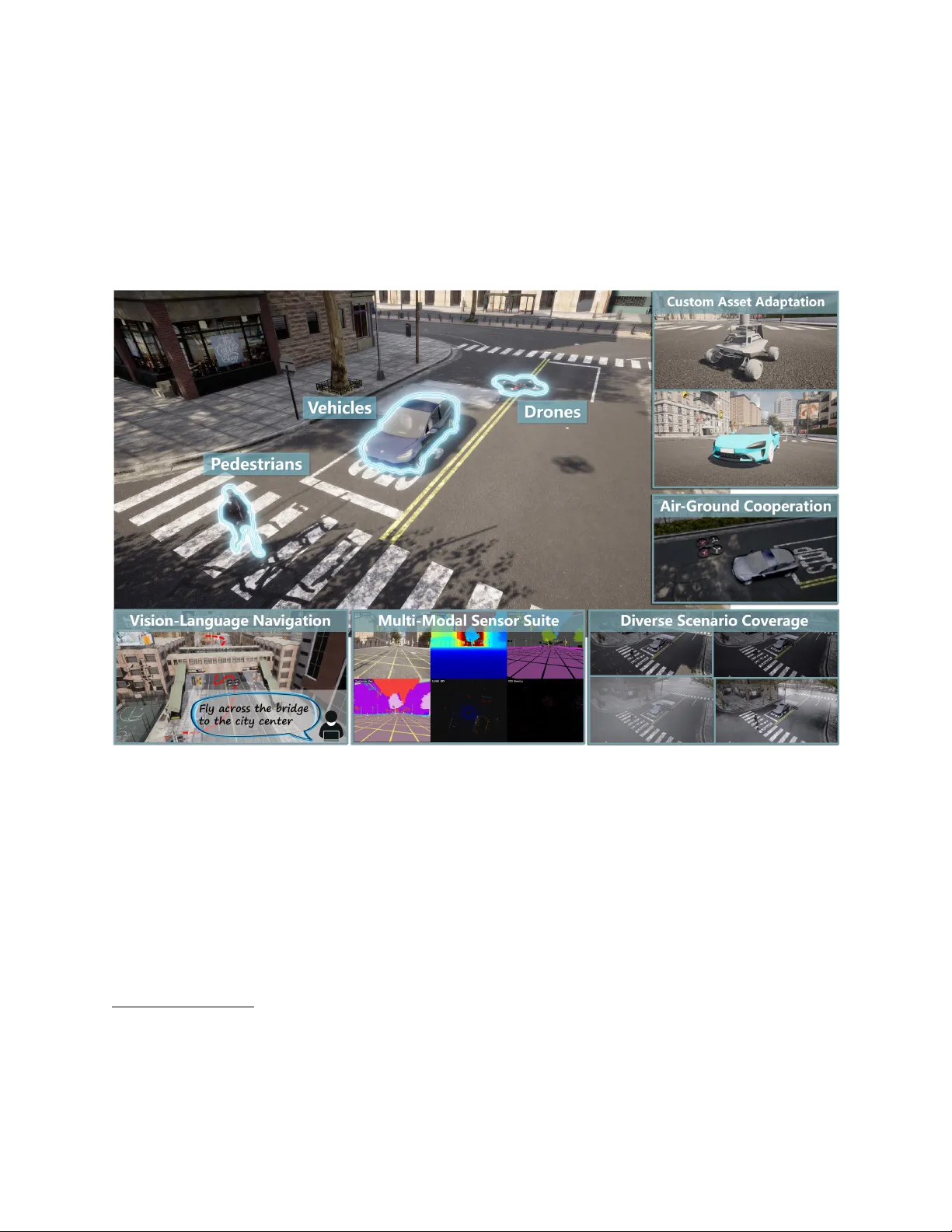

Tianle Zeng¹ ∗, Hanxuan Chen² ∗, Yanci Wen¹, Hong Zhang¹ †

∗ Equal contribution. † Corresponding author (hzhang@sustech.edu.cn).
¹ Shenzhen Key Laboratory of Robotics and Computer Vision, Southern University of Science and Technology.
² College of Electrical and Information Engineering, Hunan University.
For questions and technical inquiries, please contact louiszeng16@163.com.

Figure 1: Overview of CARLA-Air, a unified simulation infrastructure for air-ground embodied intelligence. The examples shown here illustrate representative capabilities of the platform, including unified air-ground simulation, multi-modal sensing, embodied navigation, asset adaptation, and diverse urban scenarios within a single physically coherent environment.

Abstract

The convergence of low-altitude economies, embodied intelligence, and air-ground cooperative systems creates a growing need for simulation infrastructure capable of jointly modeling aerial and ground agents within a single physically coherent environment. Existing open-source platforms remain domain-segregated: urban driving simulators provide rich traffic populations but no aerial dynamics, while multirotor simulators offer physics-accurate flight but lack realistic ground scenes. Bridge-based co-simulation can connect heterogeneous backends, yet it introduces synchronization overhead and cannot guarantee the strict spatial-temporal consistency required by modern perception and learning pipelines. We present CARLA-Air, an open-source infrastructure that unifies high-fidelity urban driving and physics-accurate multirotor flight within a single Unreal Engine process, providing a practical simulation foundation for air-ground embodied intelligence research.
The platform preserves both CARLA and AirSim native Python APIs and ROS 2 interfaces, enabling zero-modification reuse of existing codebases. Within a shared physics tick and rendering pipeline, CARLA-Air delivers photorealistic urban and natural environments populated with rule-compliant traffic flow, socially-aware pedestrians, and aerodynamically consistent UAV dynamics, while synchronously capturing up to 18 sensor modalities (including RGB, depth, semantic segmentation, LiDAR, radar, IMU, GNSS, and barometry) across all aerial and ground platforms at each simulation tick. Building on this foundation, the platform provides out-of-the-box support for representative air-ground embodied intelligence workloads, spanning air-ground cooperation, embodied navigation and vision-language action, multi-modal perception and dataset construction, and reinforcement-learning-based policy training. An extensible asset pipeline further allows researchers to integrate custom robot platforms, UAV configurations, and environment maps into the shared simulation world. By inheriting and extending the aerial simulation capabilities of AirSim, whose upstream development has been archived, CARLA-Air also ensures that this widely adopted flight stack continues to evolve within a modern, actively maintained infrastructure. CARLA-Air is released with both prebuilt binaries and full source code to support immediate adoption: https://github.com/louiszengCN/CarlaAir

1 Introduction

Three converging frontiers are reshaping autonomous systems research. The low-altitude economy demands scalable infrastructure for urban air mobility, drone logistics, and aerial inspection. Embodied intelligence requires agents that perceive and act in shared physical environments through vision, language, and control.
Air-ground cooperative systems bring these threads together, calling for heterogeneous robots that operate jointly across aerial and ground domains. Simulation is essential for advancing all three frontiers, as real-world deployment is costly, safety-critical, and difficult to scale. Yet no widely adopted open-source platform provides a unified infrastructure capable of jointly modeling aerial and ground agents within a single physically coherent environment. CARLA-Air is designed to fill this gap.

Figure 2: Per-frame inter-process data transfer time (ms) as a function of the number of concurrent sensors (1 to 16), comparing bridge co-simulation [17] with CARLA-Air (ours). Bridge-based co-simulation [17] exhibits near-linear growth with sensor count due to cross-process serialization, while CARLA-Air remains effectively constant (< 0.5 ms) owing to its single-process architecture.

The simulation landscape and its gap. Existing open-source simulators address complementary domains without overlap. CARLA [2], built on Unreal Engine 4 [3], has become the de facto standard for urban autonomous driving research, offering photorealistic environments, rich traffic populations, and a mature Python API. AirSim [15], also built on UE4, provides physics-accurate multirotor simulation with high-frequency dynamics and a comprehensive aerial sensor suite. The limitation of each platform is precisely the strength of the other: CARLA lacks aerial agents, while AirSim lacks realistic ground traffic and pedestrian interactions. Meanwhile, AirSim's upstream development has been archived by its original maintainers, leaving a widely adopted flight simulation stack without an active evolution path. Other simulators across driving, UAV, and embodied AI domains similarly remain confined to a single agent modality (see Section 2 for a comprehensive survey).
As a result, emerging workloads that span both air and ground domains (air-ground cooperation, cross-domain embodied navigation, joint multi-modal data collection, and cooperative reinforcement learning) lack a shared simulation foundation.

Why not bridge-based co-simulation? A common workaround connects heterogeneous simulators through bridge-based co-simulation, typically via ROS 2 [9] or custom message-passing interfaces. While functionally viable, such approaches introduce inter-process synchronization complexity, communication overhead, and duplicated rendering pipelines. More critically, independent simulation processes cannot guarantee strict spatial-temporal consistency across sensor streams, which perception, learning, and evaluation in embodied intelligence systems require. Fig. 2 quantifies the per-frame inter-process overhead of bridge-based co-simulation against the single-process design adopted by CARLA-Air.

CARLA-Air: a unified infrastructure for air-ground embodied intelligence. We present CARLA-Air, an open-source platform that integrates CARLA and AirSim within a single Unreal Engine process, purpose-built as a practical simulation foundation for air-ground embodied intelligence research. By inheriting and extending AirSim's aerial simulation capabilities within a modern, actively maintained infrastructure, CARLA-Air also provides a sustainable evolution path for the large body of existing AirSim-based research. Key capabilities of the platform include:
(i) Single-process air-ground integration. CARLA-Air resolves a fundamental engine-level conflict (UE4 permits only one active game mode per world) through a composition-based design that inherits all ground simulation subsystems from CARLA while spawning AirSim's aerial flight actor as a regular world entity. This yields a shared physics tick, a shared rendering pipeline, and strict spatial-temporal consistency across all sensor viewpoints.
(ii) Full API compatibility and zero-modification code migration. Both CARLA and AirSim native Python APIs and ROS 2 interfaces are fully preserved, allowing existing research codebases to run on CARLA-Air without modification.
(iii) Photorealistic, physically coherent simulation world. The platform delivers rich urban and natural environments populated with rule-compliant traffic flow, socially-aware pedestrians, and aerodynamically consistent multirotor dynamics, with synchronized capture of up to 18 sensor modalities across all aerial and ground platforms at each simulation tick.
(iv) Extensible asset pipeline. Researchers can import custom robot platforms, UAV configurations, vehicles, and environment maps into the shared simulation world, enabling flexible construction of diverse air-ground interaction scenarios.

Building on these capabilities, CARLA-Air provides out-of-the-box support for representative air-ground embodied intelligence workloads across four research directions:
(a) Air-ground cooperation: heterogeneous aerial and ground agents coordinate within a shared environment for tasks such as cooperative surveillance, escort, and search-and-rescue.
(b) Embodied navigation and vision-language action: agents navigate and act grounded in visual and linguistic input, leveraging both aerial overview and ground-level detail.
(c) Multi-modal perception and dataset construction: synchronized aerial-ground sensor streams are collected at scale to build paired datasets for cross-view perception, 3D reconstruction, and scene understanding.
(d) Reinforcement-learning-based policy training: agents learn cooperative or individual policies through closed-loop interaction in physically consistent air-ground environments.
As a lightweight and practical infrastructure, CARLA-Air lowers the barrier for developing and evaluating air-ground embodied intelligence systems, and provides a unified simulation foundation for emerging applications in low-altitude robotics, cross-domain autonomy, and large-scale embodied AI research.

2 Related Work

Simulation platforms relevant to autonomous systems span autonomous driving, aerial robotics, joint co-simulation, and embodied AI. From the perspective of air-ground embodied intelligence, the central question is not whether a platform supports driving or flight in isolation, but whether aerial and ground agents can be jointly simulated within a unified, physically coherent, and practically usable environment. As illustrated in Fig. 3 and Table 1, existing open-source platforms largely remain separated by domain focus, and none simultaneously provides realistic urban traffic, socially-aware pedestrians, physics-based multirotor flight, preserved native APIs, and single-process execution in one shared simulation world.

2.1 Autonomous Driving Simulators

Autonomous driving simulators provide strong support for realistic urban scenes, traffic agents, and ground-vehicle perception. CARLA [2], built on Unreal Engine [3], has become the de facto open-source platform for urban driving research due to its photorealistic environments, rich actor library, and mature Python API. LGSVL [13] offers full-stack integration with Autoware and Apollo on the Unity engine. SUMO [8] provides lightweight microscopic traffic flow modeling. MetaDrive [7] enables procedural environment generation for generalizable RL, and VISTA [1] supports data-driven sensor-view synthesis for autonomous vehicles. These platforms collectively cover a broad range of ground-autonomy research needs, but none natively supports physics-based UAV flight, leaving air-ground cooperative workloads outside their scope.
2.2 Aerial Vehicle Simulators

Aerial simulators provide the complementary capability: accurate multirotor dynamics, onboard aerial sensing, and UAV-oriented control interfaces. AirSim [15] remains one of the most widely adopted open-source UAV simulators, offering physics-accurate multirotor flight and a comprehensive sensor suite on Unreal Engine, though its upstream development has since been archived. Flightmare [16] combines Unity-based photorealistic rendering with highly parallel dynamics for fast RL training. FlightGoggles [5] provides photogrammetry-based environments for perception-driven aerial robotics. Gazebo [6], together with MAV-specific packages such as RotorS [4], offers a mature ROS-integrated simulation stack for multirotor control and state estimation. OmniDrones [19] and gym-pybullet-drones [12] target scalable, GPU-accelerated or lightweight RL-oriented multi-agent UAV training. While these systems are well suited to aerial autonomy in isolation, they generally lack realistic urban traffic populations, pedestrian interactions, and richly populated ground environments, limiting their use as infrastructure for air-ground cooperation or cross-domain data collection.

2.3 Joint and Co-Simulation Platforms

The most relevant prior efforts attempt to combine aerial and ground simulation through co-simulation. TranSimHub [17] connects CARLA with SUMO and aerial agents via a multi-process architecture supporting synchronized multi-view rendering. Other representative approaches include ROS-based pairings of AirSim with Gazebo [15, 6, 9]. These systems demonstrate that heterogeneous simulation backends can be functionally connected, but their integration typically depends on bridges, RPC layers, or message-passing middleware across independent processes.
As summarized in Table 2, such designs do not preserve a single rendering pipeline, do not provide strict shared-tick execution, and often require adapting existing code to new interfaces. By contrast, CARLA-Air integrates both simulation backends within a single Unreal Engine process, preserving both native APIs while maintaining a shared world state, shared renderer, and synchronized sensing, a system-level distinction detailed in Section 3.

2.4 Embodied AI and Robot Learning Platforms

Embodied AI platforms prioritize a different design objective: scalable policy training rather than realistic urban air-ground infrastructure. Isaac Lab [11] and Isaac Gym [10] emphasize massively parallel GPU-accelerated reinforcement learning for locomotion and manipulation. Habitat [14] and SAPIEN [18] target indoor navigation and articulated object interaction, while RoboSuite [20] focuses on tabletop manipulation benchmarks. These platforms are valuable for embodied intelligence research, but they do not provide the urban traffic realism, socially-aware pedestrian populations, or integrated aerial-ground simulation required by low-altitude cooperative robotics. In this sense, they address complementary research needs and are not direct substitutes for CARLA-Air.

2.5 Summary

Fig. 3 positions CARLA-Air and representative platforms along two principal design axes: simulation fidelity and agent domain breadth. Driving simulators provide realistic urban ground environments without aerial dynamics; UAV simulators provide flight realism without populated ground worlds; joint simulators generally rely on multi-process bridging that sacrifices interface compatibility or synchronization fidelity; and embodied AI platforms focus on scalable learning rather than air-ground infrastructure.
CARLA-Air is designed to sit at the intersection of these domains, combining realistic urban traffic, socially-aware pedestrians, physics-based multirotor flight, preserved native APIs, and single-process execution within one unified simulation environment. Table 1 provides a detailed feature-level comparison across all platforms discussed above.

Figure 3: Platform positioning along simulation fidelity (lightweight to high-fidelity) and agent domain breadth (single domain to multi-domain). Platforms shown: CARLA [2] (ground), AirSim [15] (aerial), FlightGoggles [5], Isaac Lab [11], Habitat [14], SUMO [8], TranSimHub [17], OmniDrones [19], MetaDrive [7], and CARLA-Air (Ours, ⋆). CARLA-Air (⋆) occupies the high-fidelity, multi-domain quadrant without inter-process bridging. Dashed arrows indicate subsumption of upstream capabilities.

3 System Architecture

CARLA-Air integrates CARLA [2] and AirSim [15] within a single Unreal Engine [3] process through a minimal bridging layer that resolves a fundamental engine-level initialization conflict while keeping both platforms' native APIs, physics engines, and rendering pipelines intact. Fig. 4 presents the high-level runtime structure; the following subsections elaborate each design decision.

Table 1: Consolidated comparison of representative open-source simulation platforms for air-ground embodied intelligence research. ✓ = supported; ∼ = partial or constrained; × = not supported; — = not applicable.
| Platform | Urban Traffic | Pedestrians | UAV Flight | Single Process | Shared Renderer | Native APIs | Joint Sensors | Prebuilt Binary | Test Suite | Custom Assets | Open Source |
|---|---|---|---|---|---|---|---|---|---|---|---|
| Autonomous Driving | | | | | | | | | | | |
| CARLA [2] | ✓ | ✓ | × | ✓ | ✓ | ✓ | × | ✓ | × | ✓ | ✓ |
| LGSVL [13] | ✓ | ✓ | × | ✓ | ✓ | ✓ | × | ✓ | × | ∼ | ✓ |
| SUMO [8] | ✓ | ✓ | × | ✓ | × | ✓ | × | ✓ | × | × | ✓ |
| MetaDrive [7] | ✓ | ∼ | × | ✓ | ∼ | ✓ | × | × | × | × | ✓ |
| VISTA [1] | ∼ | × | × | ✓ | ∼ | ✓ | × | × | × | × | ✓ |
| Aerial / UAV | | | | | | | | | | | |
| AirSim [15] | × | × | ✓ | ✓ | ✓ | ✓ | × | ✓ | × | ✓ | ✓ |
| Flightmare [16] | × | × | ✓ | ✓ | ✓ | ✓ | × | × | × | ∼ | ✓ |
| FlightGoggles [5] | × | × | ✓ | ✓ | ✓ | ✓ | × | × | × | ∼ | ✓ |
| Gazebo / RotorS [6, 4] | ∼ | ∼ | ✓ | ✓ | ∼ | ✓ | × | ✓ | × | ✓ | ✓ |
| OmniDrones [19] | × | × | ✓ | ✓ | ✓ | ✓ | × | × | × | ✓ | ✓ |
| gym-pybullet-drones [12] | × | × | ✓ | ✓ | ∼ | ✓ | × | × | × | ∼ | ✓ |
| Joint / Co-Simulation | | | | | | | | | | | |
| TranSimHub [17] | ✓ | ✓ | ✓ | × | × | × | ∼ | — | × | × | ✓ |
| CARLA+SUMO [2, 8] | ✓ | ✓ | × | × | × | ∼ | × | — | × | × | ✓ |
| AirSim+Gazebo [15, 6, 9] | ∼ | ∼ | ✓ | × | × | ∼ | ∼ | — | × | ∼ | ✓ |
| Embodied AI & RL | | | | | | | | | | | |
| Isaac Lab [11] | × | × | ∼ | ✓ | ✓ | ✓ | × | × | ✓ | ✓ | ✓ |
| Isaac Gym [10] | × | × | ∼ | ✓ | ✓ | ✓ | × | × | × | × | ✓ |
| Habitat [14] | × | ∼ | × | ✓ | ✓ | ✓ | × | × | × | × | ✓ |
| SAPIEN [18] | × | × | × | ✓ | ✓ | ✓ | × | × | × | ∼ | ✓ |
| RoboSuite [20] | × | × | × | ✓ | ✓ | ✓ | × | × | × | ∼ | ✓ |
| CARLA-Air (Ours) | ✓ | ✓† | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ |

† Pedestrian AI is inherited from CARLA and fully functional; behavior under high actor density in joint scenarios is an active engineering target (Section 6).

Figure 4: Runtime architecture of CARLA-Air. [Diagram: the Python CARLA client and the Python AirSim client connect to the CARLA RPC server and the AirSim RPC server inside a single UE4 process. The CARLA-Air game mode (CARLAAirGameMode) acquires the ground subsystems (episode, weather, traffic actors, scenario recorder) via inheritance and composes the aerial flight actor (physics engine, flight pawn, aerial sensor suite) in BeginPlay. All actors share one UE4 rendering pipeline (RGB, depth, segmentation, weather effects).] A single engine process hosts both simulation backends, each communicating with its respective Python client via an independent RPC server. CARLAAirGameMode acquires ground simulation functionality through class inheritance and integrates the aerial flight actor through composition. All world actors share a single rendering pipeline.
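The core idea of Fig. 4, two independent RPC front ends that read and write one in-process world state, can be illustrated with a toy Python analogy. This is a minimal sketch under stated assumptions, not CARLA-Air code: Python's standard-library XML-RPC stands in for the simulators' actual RPC layers and wire protocols, and the `world` dictionary and the `set_drone_position`, `get_drone_position`, `start_server`, and `connect` functions are hypothetical.

```python
import threading
from xmlrpc.client import ServerProxy
from xmlrpc.server import SimpleXMLRPCServer

# Hypothetical in-process world state shared by both RPC front ends.
# In this analogy it plays the role of the single UE4 world: no bridge
# has to copy state between two simulator processes.
world = {"drone_ned_m": [0.0, 0.0, 0.0]}
world_lock = threading.Lock()


def set_drone_position(x, y, z):
    """Hypothetical 'aerial API' call that writes the shared state."""
    with world_lock:
        world["drone_ned_m"] = [float(x), float(y), float(z)]
    return True


def get_drone_position():
    """Hypothetical 'ground API' call that reads the same shared state."""
    with world_lock:
        return list(world["drone_ned_m"])


def start_server(functions):
    """Serve the given functions on an OS-assigned localhost port in a daemon thread."""
    server = SimpleXMLRPCServer(("127.0.0.1", 0), logRequests=False)
    for fn in functions:
        server.register_function(fn)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server, server.server_address[1]


def connect(port):
    """Return a client proxy for one of the in-process servers."""
    return ServerProxy(f"http://127.0.0.1:{port}")


# Two independent servers, one per "simulator", living in the same process.
aerial_server, aerial_port = start_server([set_drone_position])
ground_server, ground_port = start_server([get_drone_position])
```

A client connected only to the aerial port can move the drone, and a second client connected only to the ground port reads the new position immediately, because both servers operate on the same object in memory rather than on synchronized copies.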
Table 2: Architectural comparison of representative joint and co-simulation platforms.ᵃ

| Platform | Single Proc. | API Kept | Shared Render | Sync Mode | Open Source |
|---|---|---|---|---|---|
| TranSimHub [17] | × | × | × | Msg. | ✓ |
| CARLA+SUMO [2, 8] | × | ∼ | × | RPC | ✓ |
| AirSim+Gazebo [15, 6, 9] | × | ∼ | × | Decpl. | ✓ |
| AirSim+CARLA ᵇ | × | × | × | Decpl. | ✓ |
| CARLA-Air (Ours) | ✓ | ✓ | ✓ | Shared | ✓ |

ᵃ Single Proc. = single-process execution; API Kept = preservation of native simulator APIs; Shared Render = shared rendering pipeline; Sync Mode: Msg. = message passing, Decpl. = decoupled execution, Shared = shared tick within one process.
ᵇ Refers to community bridge solutions that run AirSim and CARLA as independent processes connected via ROS 2 or custom middleware; not an official product of either project.

3.1 Plugin and Dependency Structure

The system comprises two plugin modules that load sequentially during engine startup. The ground simulation plugin initializes first, establishing its world management subsystems before any game logic executes. The aerial simulation plugin declares a compile-time dependency on the ground plugin, enabling CARLAAirGameMode to access the ground platform's initialization interfaces during its own startup phase. This dependency is strictly one-directional: no ground-platform source file references any aerial component, preserving the upstream CARLA codebase's update path without modification. Two independent RPC servers run concurrently within the single process, one per simulator, allowing the native Python clients of each platform to connect without modification. Version compatibility across the two upstream codebases, network configuration, and port assignments are documented in Appendix A.1.

3.2 The GameMode Conflict and Its Resolution

UE4 enforces a strict invariant: each world may have exactly one active game mode.
CARLA's game mode orchestrates episode management, weather control, traffic simulation, the actor lifecycle, and the RPC interface through a deep inheritance chain. AirSim's game mode performs a separate startup sequence: reading configuration files, adjusting rendering settings, and spawning its flight actor. Because the two game modes are unrelated by inheritance, assigning either to the world map silently skips the other's initialization, rendering a large portion of its API surface inoperative.

Architectural asymmetry. A structural difference between the two systems makes resolution tractable and constitutes the central design insight of CARLA-Air. CARLA's subsystems are tightly coupled to its game mode through inheritance and privileged class relationships; they cannot be relocated outside the game mode slot without invasive upstream refactoring. AirSim's flight logic, by contrast, resides in a class derived from the generic actor base (not the game mode base) and can therefore be spawned as a regular world actor at any point after world initialization.

Solution: single inheritance plus composition. We introduce CARLAAirGameMode, which inherits from CARLA's game mode base and occupies the single available slot. All ground simulation subsystems are thereby acquired through the standard UE4 lifecycle. The aerial flight actor is then composed into the world during the engine's BeginPlay phase, after ground initialization is complete, and never competes for the game mode slot. Fig. 5 contrasts the naive conflict with the adopted solution.

Source footprint. The integration modifies exactly two files in the upstream CARLA source tree: two previously private members are promoted to protected visibility and one privileged class declaration is added. All remaining integration code resides within the aerial plugin as purely additive content. The complete modification summary is provided in Appendix A.2.
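The inheritance-plus-composition pattern can be mimicked in a few lines of Python. This is an analogy under stated assumptions, not the UE4 C++ implementation: `World`, `CarlaGameModeBase`, `AirSimFlightActor`, and `CarlaAirGameMode` are hypothetical stand-ins whose only job is to show why a single-slot world accepts the combined mode while still hosting the aerial logic.

```python
class World:
    """Hypothetical stand-in for a UE4 world: exactly one game-mode slot."""

    def __init__(self):
        self.game_mode = None
        self.actors = []

    def set_game_mode(self, mode):
        # Mirrors UE4's invariant: a second game mode cannot be installed.
        if self.game_mode is not None:
            raise RuntimeError("world already has an active game mode")
        self.game_mode = mode
        mode.init_game(self)

    def begin_play(self):
        self.game_mode.begin_play(self)

    def spawn_actor(self, actor):
        self.actors.append(actor)
        return actor


class CarlaGameModeBase:
    """Stand-in for CARLA's game mode, which owns the ground subsystems."""

    def init_game(self, world):
        self.ground_subsystems = ["episode", "weather", "traffic", "recorder"]

    def begin_play(self, world):
        pass


class AirSimFlightActor:
    """Stand-in for AirSim's flight logic: an ordinary actor, not a game mode."""

    def __init__(self, ground_subsystems):
        if not ground_subsystems:
            raise RuntimeError("ground subsystems must be initialized first")
        self.visible_ground_subsystems = list(ground_subsystems)


class CarlaAirGameMode(CarlaGameModeBase):
    """Inherits ground setup; composes the flight actor during begin_play."""

    def begin_play(self, world):
        super().begin_play(world)
        # begin_play runs after init_game, so the inherited ground
        # subsystems already exist when the flight actor is spawned.
        self.flight_actor = world.spawn_actor(
            AirSimFlightActor(self.ground_subsystems))
```

Installing a second game mode on the same `World` raises, mirroring the naive conflict of Fig. 5(a); installing only `CarlaAirGameMode` and calling `begin_play()` yields both the inherited ground subsystems and one spawned flight actor, mirroring Fig. 5(b).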
3.3 Coordinate System Mapping

CARLA and AirSim employ incompatible spatial reference frames that must be reconciled to co-register aerial and ground sensor data. CARLA inherits UE4's left-handed system with X forward, Y right, and Z up, in centimeters. AirSim adopts a right-handed North-East-Down (NED) frame with X north, Y east, and Z down, in meters. Fig. 6 illustrates both frames and their geometric relationship.

Let $\mathbf{p} \in \mathbb{R}^3$ denote a point in the UE4 world frame and $\mathbf{o}$ the shared world origin established during initialization. The equivalent NED position is

$$
\mathbf{p}_{\mathrm{NED}} = \frac{1}{100}\begin{bmatrix} p_x - o_x \\ p_y - o_y \\ -(p_z - o_z) \end{bmatrix}, \tag{1}
$$

where the scale factor converts centimeters to meters and the sign reversal on the third component reflects the Z-axis inversion. Because the X and Y axes are directionally aligned, no axis permutation is required.

For orientation, let $\mathbf{q} = (w, q_x, q_y, q_z)$ denote a unit quaternion in the UE4 frame. The equivalent NED quaternion is

$$
\mathbf{q}_{\mathrm{NED}} = \left(w,\; q_x,\; q_y,\; -q_z\right), \tag{2}
$$

where negating $q_z$ accounts for the Z-axis reversal and the associated change of frame handedness. Eqs. (1) and (2) together fully specify the pose transform, enabling consistent fusion of drone attitude from the aerial API with vehicle heading from the ground API across all joint simulation workflows.

3.4 Asset Import Pipeline

CARLA-Air provides an extensible asset import pipeline that allows researchers to bring custom robot platforms, UAV models, vehicles, and environment assets into the shared simulation world. Imported assets are fully integrated into the joint simulation environment: they participate in the same physics tick and rendering pass as all built-in actors, respond to both ground and aerial API calls, and are visible to all sensor modalities across both simulation backends.
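Because an imported asset answers pose queries through both APIs, its position has to be expressed in both frames of Section 3.3. The position part of that mapping, Eq. (1), and its inverse can be sketched in Python as follows. This is a minimal illustration assuming UE4 world coordinates in centimeters, exactly as in Eq. (1); the names `ue_to_ned`, `ned_to_ue`, and `CM_PER_M` are hypothetical, and the orientation transform of Eq. (2) is not covered.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]
CM_PER_M = 100.0  # Eq. (1) scale factor: UE4 centimeters -> NED meters


def ue_to_ned(p_ue_cm: Vec3, origin_ue_cm: Vec3 = (0.0, 0.0, 0.0)) -> Vec3:
    """Eq. (1): UE4 world position (cm, Z up) -> NED position (m, Z down)."""
    dx, dy, dz = (p - o for p, o in zip(p_ue_cm, origin_ue_cm))
    # X and Y are directionally aligned in both frames; only Z flips sign.
    return (dx / CM_PER_M, dy / CM_PER_M, -dz / CM_PER_M)


def ned_to_ue(p_ned_m: Vec3, origin_ue_cm: Vec3 = (0.0, 0.0, 0.0)) -> Vec3:
    """Inverse of Eq. (1): NED position (m) -> UE4 world position (cm)."""
    x, y, z = p_ned_m
    ox, oy, oz = origin_ue_cm
    return (x * CM_PER_M + ox, y * CM_PER_M + oy, -z * CM_PER_M + oz)
```

For example, with the shared origin at $\mathbf{o} = (0, 0, 100)$ cm, the UE4 point $(1000, -250, 600)$ cm, which lies 5 m above the origin, maps to $(10.0, -2.5, -5.0)$ m in NED; the negative third component means "up" in a Z-down frame.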
The pipeline thereby enables evaluation of custom hardware designs, such as novel multirotor configurations or application-specific ground robots, within realistic air-ground scenarios without modifying the core CARLA-Air codebase. Fig. 14 shows two examples of user-imported assets operating within the platform.

Figure 5: Resolving the UE4 single-game-mode constraint. [Diagram: (a) Naive approach: the CARLA game mode and the AirSim game mode compete for the single UE4 game-mode slot; one mode is silently discarded and the corresponding API becomes inoperative. (b) CARLA-Air solution: CARLAAirGameMode, derived from CARLA's game mode base, occupies the slot; ground subsystems are inherited and the aerial flight actor is composed.] (a) Both backends provide independent game mode classes; assigning either silently discards the other. (b) CARLAAirGameMode inherits all ground functionality from CARLA's game mode base while composing the aerial flight actor as a spawned world actor.

Figure 6: Coordinate frames of the two simulation backends. The transform $T$ requires only a Z-axis sign flip and a centimeter-to-meter scale factor; the forward ($X$ / $X_N$) and rightward ($Y$ / $Y_E$) axes are aligned across both conventions.

4 Performance Evaluation

This section evaluates CARLA-Air under representative joint air-ground workloads across three experiments: frame-rate and resource scaling (Section 4.2), memory stability under sustained operation (Section 4.3), and communication latency (Section 4.4). Full configuration parameters and raw data are deferred to Appendix A.1.

Reference hardware. All measurements are collected on a workstation equipped with an NVIDIA RTX A4000 (16 GB GDDR6), AMD Ryzen 7 5800X (8-core, 4.7 GHz), and 32 GB DDR4-3200, running Ubuntu 20.04 LTS. The simulator runs in Epic quality mode with Town10HD loaded unless stated otherwise. All aerial experiments use the built-in SimpleFlight controller with default PID gains.
CPU affinity and GPU power limits are left at system defaults to reflect realistic research deployment conditions.

4.1 Benchmark Methodology

Reliable performance measurement requires eliminating startup transients: map-loading jitter, first-frame shader compilation, and actor lifecycle initialization must be discarded before steady-state sampling begins. Algorithm 1 formalizes the benchmark harness used throughout this section.

Algorithm 1: Simulation Performance Benchmark Harness
Require: workload $W$, warm-up ticks $T_w$, measurement ticks $T_m$
Ensure: per-tick frame rates $f$, VRAM samples $v$, API latencies $\ell$
Connect to simulation; load map; wait for shader compilation
Spawn actors/sensors per $W$; enable synchronous mode
for $t = 1$ to $T_w$ do ▷ warm-up, discarded
  Advance one tick
end for
$f, v, \ell \leftarrow \langle\rangle$
for $t = 1$ to $T_m$ do
  $t_0 \leftarrow$ Now(); advance one tick
  $f$.Append($1 / (\text{Now}() - t_0)$)
  if VRAM sample interval reached then
    Record GPU memory → $v$; measure API round-trip → $\ell$
  end if
end for
Destroy all actors; disable synchronous mode
return $f, v, \ell$

Each profile uses $T_w = 200$ warm-up ticks and $T_m = 2{,}000$ measurement ticks. VRAM is sampled every 60 s. All reported frame rates are the harmonic mean of $f$, the appropriate central tendency for rate quantities, with standard deviations alongside. The latency benchmark issues 500 warm-up calls followed by 5,000 measurement calls; each actor spawn call is paired with an immediate destroy to prevent scene-state accumulation from contaminating subsequent measurements.

4.2 Experiment 1: Frame Rate and Resource Scaling

Workload design. Under synchronous-mode operation, per-tick wall time is bounded by the slowest of three concurrent contributors: the rendering thread (GPU-bound), the ground-actor dispatch loop (CPU-bound), and the aerial physics engine (CPU-bound, asynchronous at ≈1,000 Hz on a dedicated thread).
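Algorithm 1 measures exactly this bounded per-tick wall time, whichever contributor happens to be slowest. Its timing loop can be sketched in Python as below. This is a minimal illustration, not the authors' harness: `advance_tick` is a hypothetical callable standing in for one synchronous-mode tick, the injectable `clock` exists only so the sketch can be tested deterministically, and VRAM and latency sampling are omitted. The harmonic mean is used because, for per-tick rates, it equals the number of ticks divided by the total elapsed time.

```python
import statistics
import time
from typing import Callable, List


def run_benchmark(advance_tick: Callable[[], None],
                  warmup_ticks: int = 200,
                  measure_ticks: int = 2000,
                  clock: Callable[[], float] = time.perf_counter) -> dict:
    """Timing loop of Algorithm 1 (VRAM and latency sampling omitted).

    advance_tick is a hypothetical callable that advances the simulator by
    one synchronous-mode tick and returns when that tick is complete.
    """
    if measure_ticks < 2:
        raise ValueError("need at least two measured ticks for a stdev")

    # Warm-up (T_w): tick but discard timings, so shader compilation and
    # actor-initialization transients do not bias the steady-state result.
    for _ in range(warmup_ticks):
        advance_tick()

    # Measurement (T_m): record the instantaneous frame rate of every tick.
    rates: List[float] = []
    for _ in range(measure_ticks):
        t0 = clock()
        advance_tick()
        rates.append(1.0 / (clock() - t0))

    return {
        # Harmonic mean of per-tick rates == ticks / total measured time,
        # i.e. the true average throughput in frames per second.
        "fps_harmonic": statistics.harmonic_mean(rates),
        "fps_stdev": statistics.stdev(rates),
        "ticks": len(rates),
    }
```

With ticks alternating between 40 ms and 60 ms, the harmonic mean is 20 FPS (the true throughput), whereas the arithmetic mean of the per-tick rates would overstate it at about 20.8 FPS.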
Sensor rendering dominates at high resolution due to GPU memory bandwidth saturation; actor population contributes via per-mesh draw calls. These relationships motivate the workload stratification in Table 3.

Analysis. The moderate joint configuration, which combines ground traffic, an aerial agent, and a full sensor suite, sustains 19.8 ± 1.1 FPS, sufficient for closed-loop policy evaluation at standard RL episode lengths. Comparing the standalone ground baseline (28.4 FPS) against the moderate joint profile (19.8 FPS) quantifies total integration overhead at 8.6 FPS (30.3%). Of this, 2.1 FPS is attributable to ground co-hosting (28.4 FPS standalone versus 26.3 FPS for the ground-only joint profile) and the remaining 6.5 FPS to the aerial physics engine (26.3 FPS versus 19.8 FPS). Crucially, this aerial overhead manifests in CPU utilization (54% vs. 38%) rather than GPU memory: VRAM differs by only 39 MiB between the ground-only and moderate-joint profiles. The traffic surveillance profile retains comparable throughput (20.1 FPS) despite more than doubling the vehicle count (8 vs. 3), confirming that sensor rendering, not actor population, is the dominant cost driver at 1920 × 1080. The 3-hour endurance profile (19.7 ± 1.3 FPS) confirms that throughput does not degrade under sustained operation.

4.3 Experiment 2: Memory Stability

Steady-state VRAM profile. The base map asset load accounts for approximately 3,702 MiB at idle (Town10HD, Epic quality); each additional ground sensor contributes 4–10 MiB at 1280 × 720. A transient peak of approximately 5,000 MiB occurs during map loading and resolves within 30 s. All steady-state profiles remain within 3,878 MiB, leaving approximately 12,506 MiB (76%) of the 16 GB (16,384 MiB) device budget for co-located workloads such as GPU-based policy training.

Leak verification. Table 4 summarizes the 3-hour endurance run across 357 actor spawn-and-destroy cycles. A linear regression of VRAM against cycle index yields a slope of 0.49 MiB/cycle with R² = 0.11, indicating no statistically significant accumulation trend. Fig. 7 visualizes the VRAM trace: the negligible early-to-late drift (3,868 → 3,878 MiB, approximately 10 MiB over 327 cycles) is attributable to residual render-target caching rather than lifecycle leakage.

Figure 7: VRAM (MiB) versus time (min) over a 3-hour stability run (357 spawn/destroy cycles, moderate joint configuration, RTX A4000), sampled at 60 s intervals, with early- and late-phase means ($\bar{v}_{\text{early}} = 3868$ MiB, $\bar{v}_{\text{late}} = 3878$ MiB). Early-to-late drift is ≈10 MiB; linear regression yields R² = 0.11, confirming no significant memory accumulation.

Zero API errors and zero simulation crashes across 357 cycles validate the robustness of the joint environment under the repeated agent-reset patterns typical of reinforcement-learning training.

4.4 Experiment 3: Communication Latency

Both RPC servers run inside the same engine process and are reached over the loopback interface, so no serialization is needed between the two simulation backends. The two RPC servers use distinct wire protocols and port assignments (detailed in Appendix A.1). Table 5 reports round-trip latency for representative API calls.

Analysis. Lightweight state queries complete in 280–490 µs, well below the per-tick budget at 20 FPS (50 ms), confirming that API overhead does not contribute meaningfully to frame-time variance. Actor spawn latency (1,850 µs) reflects a one-time GPU synchronization point for render-asset registration at episode reset. Image capture latency (3,200 µs) covers the full sensor pipeline (rendering, buffer readback, and serialization) and is overlapped with the rendering thread at synchronous tick rates. The lightweight state queries fall well below the lower bound of the bridge-based cross-process synchronization costs reported in [17] (1,000–5,000 µs per frame), and the spawn and image-capture calls lie within that range.

Tick-rate reconciliation.
The aerial physics engine advances at ≈1 000 Hz on its dedicated thread, while the rendering tick runs at ≈20 Hz under the moderate joint workload. Sensor callbacks read the aerial state at rendering tick boundaries. Each ground-aerial sensor frame pair therefore reflects the drone's physical state integrated over ≈50 aerial physics steps (1 000 Hz / 20 Hz). Ground actor states are also resolved at tick boundaries, ensuring temporal co-registration across all sensor modalities within a single tick. Applications requiring finer aerial state resolution should reduce the fixed simulation delta-time accordingly; the throughput trade-off follows from Table 3.

Table 3: Frame rate and resource consumption across representative joint workloads (RTX A4000, Town10HD, Epic quality, synchronous mode). Harmonic mean ± 1 SD over 2 000 ticks after 200 warm-up ticks.

| Profile | Configuration | FPS | VRAM (MiB) | CPU (%) |
|---|---|---|---|---|
| Standalone baselines | | | | |
| Ground sim only (s) | 3 vehicles + 2 pedestrians; 8 sensors @ 1280 × 720 | 28.4 ± 1.2 | 3 821 ± 10 | 31 ± 3 |
| Aerial sim only (s) | 1 drone; 8 sensors @ 1280 × 720 | 44.7 ± 2.1 | 2 941 ± 8 | 29 ± 3 |
| Joint workloads | | | | |
| Idle | Town10HD; no actors; no sensors | 60.0 ± 0.4 | 3 702 ± 8 | 12 ± 2 |
| Ground only | 3 vehicles + 2 pedestrians; 8 sensors @ 1280 × 720 | 26.3 ± 1.4 | 3 831 ± 11 | 38 ± 4 |
| Moderate joint | 3 vehicles + 2 pedestrians + 1 drone; 8 sensors @ 1280 × 720 | 19.8 ± 1.1 | 3 870 ± 13 | 54 ± 5 |
| Traffic surveillance | 8 autopilot vehicles + 1 drone; 1 aerial RGB @ 1920 × 1080 | 20.1 ± 1.8 | 3 874 ± 15 | 61 ± 6 |
| Stability endurance | Moderate joint; 357 spawn/destroy cycles; 3 h continuous | 19.7 ± 1.3 | 3 878 ± 17 | 55 ± 5 |

(s) Standalone baseline: single simulator running without CARLA-Air integration.

Table 4: Stability endurance results over 3 hours and 357 actor lifecycle cycles (moderate joint configuration).

| Metric | Early (cycles 1–30) | Late (cycles 328–357) |
|---|---|---|
| Frame rate (FPS) | 19.9 ± 1.2 | 19.7 ± 1.3 |
| VRAM (MiB) | 3 868 ± 14 | 3 878 ± 17 |
| CPU utilization (%) | 53 ± 5 | 55 ± 5 |
| API error count | 0 | 0 |
| Crash count | 0 | 0 |

VRAM regression slope (all cycles): 0.49 MiB/cycle, R² = 0.11.

5 Representative Applications

CARLA-Air is validated through five representative workflows. Together they exercise the platform's core capabilities across the four research directions identified in Section 1:

- air-ground cooperation;
- embodied navigation and vision-language action;
- multi-modal perception and dataset construction;
- reinforcement-learning-based policy training.

Table 6 provides a structured overview; detailed descriptions follow in Sections 5.1–5.5.

All workflows share a common dual-client architecture. Both API clients execute within the same Python process and operate on the same world state, with no bridge process between the two simulators. The following minimal pattern is common to every workflow:

    import carla
    import airsim

    ground = carla.Client('localhost', 2000)  # CARLA RPC server (TCP 2000)
    ground.set_timeout(10.0)                  # seconds to wait for the server
    aerial = airsim.MultirotorClient()        # AirSim RPC server (TCP 41451)
    world = ground.get_world()                # shared world, also hosts the drone
    aerial.enableApiControl(True)             # take programmatic flight control

[Figure 8 diagram: a user Python script drives a ground client and an aerial client, which connect to the ground RPC and aerial RPC servers inside the unified UE4 process; the ground game mode and aerial sim mode share one world (actors, physics, rendering, weather).]
Figure 8: Dual-client architecture shared by all five workflows. A single Python script drives both clients, connected via TCP to independent RPC servers inside the unified engine process.

5.1 W1: Air-Ground Cooperative Precision Landing

Autonomous precision landing of a UAV on a moving ground vehicle is a representative and challenging scenario for air-ground cooperation. It has direct applications in drone-assisted logistics, mobile recharging, and multi-agent coordination. This workflow demonstrates real-time cross-domain coordination within CARLA-Air's tick-synchronous control loop. The drone must continuously track the vehicle's position, plan a descent trajectory, and execute a smooth touchdown, all while the vehicle moves through urban traffic.

Setup.
A ground vehicle follows an autopilot route through Town10HD at moderate speed. A drone starts at ≈12 m altitude above the vehicle and is tasked with landing on the vehicle's roof. At each synchronous tick, the vehicle's 3D pose is queried via the ground API. It is then transformed into the aerial NED frame using the coordinate mapping from Section 3.3 and used to compute a descent command issued to the aerial flight controller.

Table 5: Round-trip API call latency on the loopback interface (median and IQR; 5 000 calls after 500 warm-up; RTX A4000; idle scene).

| Backend | API call | Median (µs) | IQR (µs) |
|---|---|---|---|
| Ground sim | World state snapshot | 320 | 40 |
| Ground sim | Actor transform query | 280 | 35 |
| Ground sim | Actor spawn (+ paired destroy) | 1 850 | 210 |
| Ground sim | Actor destroy | 920 | 95 |
| Aerial sim | Multirotor state query | 410 | 55 |
| Aerial sim | Image capture (1 RGB stream) | 3 200 | 380 |
| Aerial sim | Velocity command dispatch | 490 | 60 |
| Bridge IPC [17] | Cross-process state sync (ref.) | 3 000 | 2 000 |

Table 6: Summary of five representative workflows validated on CARLA-Air, mapped to the four research directions from Section 1.

| Workflow | Research direction | Platform features exercised | FPS | Key outcome |
|---|---|---|---|---|
| W1 Precision landing | Air-ground cooperation | Tick-sync control, descent planning, cross-frame coordination | ≈19 | < 0.5 m final landing error |
| W2 VLN/VLA data generation | Embodied navigation | Dual-view sensing, waypoint planning, semantic annotation | n/a | Cross-view VLN data pipeline |
| W3 Multi-modal dataset | Perception & dataset | 12-stream sync capture, shared tick | ≈17 | ≤ 1-tick alignment error |
| W4 Cross-view perception | Perception & dataset | Shared renderer, weather consistency | ≈18 | 14/14 weather presets verified |
| W5 RL training env. | RL policy training | Sync stepping, stable resets, cross-domain reward | n/a | 357 reset cycles, 0 crashes |

Control architecture.
The landing controller operates in three phases:

- approach: horizontal alignment with the vehicle;
- descent: controlled altitude reduction while maintaining horizontal tracking;
- touchdown: final landing.

Let $q_k \in \mathbb{R}^2$ denote the vehicle's horizontal position at tick $k$ in the NED frame. The drone's commanded horizontal target tracks the vehicle continuously:

$$\hat{q}_k = q_k + d_{xy}, \qquad \hat{z}_k = z^{\mathrm{ref}}_k, \tag{3}$$

where $d_{xy} \in \mathbb{R}^2$ is the horizontal part of the coordinate origin offset $d$ from Eq. (4). The reference altitude $z^{\mathrm{ref}}_k$ decreases according to a smooth descent profile from the initial altitude to ground level.

Results. Fig. 9 shows the complete landing sequence. The drone descends from ≈12 m to touchdown over ≈20 s. Over the same interval, the horizontal convergence error decreases from ≈6 m to within the ±0.5 m tolerance band. The 3D trajectory overview confirms that the UAV trajectory converges smoothly onto the vehicle trajectory throughout the descent. Table 7 summarizes the landing performance.

Table 7: W1 cooperative precision landing results (Town10HD, RTX A4000).

| Metric | Value | Notes |
|---|---|---|
| Mean FPS | 19.3 | Harmonic mean |
| Initial altitude | ≈12 m | Start of descent |
| Landing duration | ≈20 s | Approach to touchdown |
| Final horiz. error | < 0.5 m | Within tolerance band |
| Initial horiz. error | ≈6 m | At descent start |
| RPC errors | 0 | Both clients |

Figure 9: W1: Air-ground cooperative precision landing on a moving vehicle. (a) Time-lapse sequence (t1–t5) showing the drone (red box) descending toward and landing on a moving ground vehicle through the approach, descent, and touchdown phases. (b) 3D trajectory overview: the UAV trajectory (blue) converges onto the vehicle trajectory (orange), with the ground projection (dashed) showing horizontal alignment. (c) Altitude profile over time, illustrating smooth descent from 12 m to ground level. (d) Horizontal convergence error, decreasing from ≈6 m to within the ±0.5 m tolerance band. All data are recorded within CARLA-Air's synchronous tick loop.

5.2 W2: Embodied Navigation and VLN/VLA Data Generation

Vision-language navigation (VLN) and vision-language action (VLA) are among the most active research directions in embodied intelligence. They require agents to navigate and act in realistic environments grounded in natural language instructions and visual observations. A key bottleneck is the availability of large-scale, diverse training data that pairs language instructions with rich visual observations from multiple viewpoints. CARLA-Air provides a natural data generation infrastructure for this purpose. Its photorealistic urban environments, socially-aware pedestrians, dynamic traffic, and simultaneous aerial-ground sensing enable VLN/VLA datasets with cross-view visual grounding that single-domain platforms cannot provide.

Platform capabilities for VLN/VLA. CARLA-Air supports VLN/VLA data generation through several platform-level features:

(i) Both aerial and ground agents can be equipped with egocentric RGB, depth, and semantic segmentation cameras. This provides paired bird's-eye and street-level visual observations along any navigation trajectory.
(ii) The ground API exposes waypoint-based route planning with lane-level precision, which can serve as the basis for generating language-grounded navigation instructions.
(iii) The shared rendering pipeline guarantees that all visual observations are captured under identical weather, lighting, and scene conditions at each tick.
(iv) The aerial overview provides a natural "oracle view" for generating spatial referring expressions and verifying navigation progress.

5.3 W3: Synchronized Multi-Modal Dataset Collection

Generating large-scale paired aerial-ground datasets is a bottleneck for training and evaluating cooperative perception models.
Manual synchronization across separate simulator processes introduces alignment errors that corrupt correspondence annotations. CARLA-Air removes this source of error: both sensor suites are driven by the same tick counter, so each dataset record carries per-tick correspondence without any timestamp interpolation.

Setup. Eight ground sensors (RGB, semantic segmentation, depth, LiDAR, radar, GNSS, IMU, collision) and four aerial sensors (RGB, depth, IMU, GPS) are attached concurrently. The simulation runs in synchronous mode at a fixed timestep, and all 12 sensor callbacks are registered before the first tick advance. Ground traffic is populated with 30 autopilot vehicles and 10 pedestrians.

Dataset structure. Each record $R_k$ at tick $k$ contains all 12 sensor observations sharing a common tick index, plus the vehicle and drone poses in the unified world frame. Records are serialized to disk in a flat per-tick directory structure with metadata in JSON. No timestamp interpolation is required, because the shared tick index serves as the alignment key.

Figure 10: W2: Embodied navigation with aerial reasoning. A UAV autonomously tracks a pedestrian (red box, bottom) through an urban environment using bird's-eye visual observations. Each frame is annotated with the drone's chain-of-thought reasoning. The annotations illustrate how the agent interprets the scene, anticipates occlusions, and adjusts its flight path to maintain persistent visual contact with the target. All frames are rendered within CARLA-Air's shared simulation world under consistent lighting and physics.

Results. Over 1 000 ticks, the workflow produces 1 000 fully synchronized 12-stream records at a mean collection rate of ≈17 Hz. The maximum observed tick-alignment deviation across all 12 streams is one tick, occurring transiently under disk-write saturation. Table 8 summarizes collection performance.
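Tick-keyed record assembly can be sketched in plain Python. This is a minimal illustration under stated assumptions, not CARLA-Air's implementation. It assumes each sensor callback can be tagged with the tick index at which its data was rendered. The `TickAligner` class, the stream names, and the JSON fields are hypothetical. The sketch releases a record only when every expected stream has reported for the same tick. It also tracks the worst-case gap between the tick being collected and a frame's own tick, the quantity reported above as alignment deviation.

```python
import json
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class TickRecord:
    """One dataset record R_k: every stream's observation for tick k."""
    tick: int
    observations: dict = field(default_factory=dict)  # stream name -> payload


class TickAligner:
    """Hypothetical helper (not a CARLA-Air API): buffers per-stream sensor
    callbacks and releases a record once all expected streams have delivered
    data for the same tick index. Stream names and payloads are placeholders."""

    def __init__(self, streams):
        self.streams = frozenset(streams)
        self.pending = defaultdict(dict)  # tick -> {stream: payload}
        self.max_deviation = 0            # worst |collecting tick - frame tick|

    def on_sensor(self, stream, frame_tick, payload, collecting_tick):
        """Store one callback; frame_tick is the tick the data was rendered at."""
        if stream not in self.streams:
            raise KeyError(f"unexpected stream: {stream}")
        self.max_deviation = max(self.max_deviation,
                                 abs(collecting_tick - frame_tick))
        self.pending[frame_tick][stream] = payload

    def pop_complete(self, tick):
        """Return the TickRecord for `tick` if every stream reported, else None."""
        obs = self.pending.get(tick)  # .get() does not create a defaultdict entry
        if obs is None or set(obs) != self.streams:
            return None
        del self.pending[tick]
        return TickRecord(tick, obs)


def record_metadata(record, vehicle_pose, drone_pose):
    """Per-tick JSON metadata (hypothetical schema); the tick is the alignment key."""
    return json.dumps({
        "tick": record.tick,
        "streams": sorted(record.observations),
        "vehicle_pose": vehicle_pose,  # e.g. [x, y, z] in the unified world frame
        "drone_pose": drone_pose,
    })
```

Keying on the integer tick rather than on wall-clock timestamps is what makes interpolation unnecessary. A frame that arrives late, for example under disk-write pressure, shows up as a nonzero `max_deviation` instead of as a silently misaligned pair.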
Table 8: W3 multi-modal dataset collection results (1 000 ticks, 30 vehicles, 10 pedestrians, Town10HD, RTX A4000).

| Metric | Value | Notes |
|---|---|---|
| Mean FPS | 17.1 | Harmonic mean |
| Concurrent streams | 12 | 8 ground + 4 aerial |
| Records collected | 1 000 | One per tick |
| Max alignment dev. | ≤ 1 tick | Normal disk-write load |
| RPC errors | 0 | Both clients |
| Per-tick write latency | 61 ± 9 ms | Incl. serialization |

5.4 W4: Air-Ground Cross-View Perception

Cross-view perception fuses aerial bird's-eye observations with ground-level sensing. It is an emerging research direction for cooperative autonomous driving, urban scene understanding, and 3D reconstruction. This workflow demonstrates that CARLA-Air can provide spatially and temporally co-registered multi-modal sensor streams from both aerial and ground viewpoints within a single shared environment.

Setup. A drone equipped with a depth camera hovers above a road segment. A ground ego vehicle equipped with a semantic segmentation camera traverses the same segment. Both sensors are queried at each synchronous tick.

Sensor co-registration. Co-registration uses the coordinate mapping from Section 3.3. The origin offset $d \in \mathbb{R}^3$ between the aerial NED frame and the ground world frame is computed once at initialization:

$$d = T\left(p^{\mathrm{world}}_{\mathrm{spawn}}\right) - p^{\mathrm{NED}}_{\mathrm{spawn}}, \tag{4}$$

where $T(\cdot)$ applies the scale conversion and axis remapping. When the drone spawns at the world origin, $d = 0$.

Weather consistency. To verify rendering consistency across both sensor layers, the workflow iterates through all 14 official weather presets. For each preset involving a significant illumination change, the mean pixel intensity of the aerial RGB frame shifts by more than 5% relative to the previous preset, confirming single-pass weather propagation. Because both sensors share the same rendering pipeline, temporal alignment is guaranteed:

$$\epsilon_k = t^{\mathrm{gnd}}_k - t^{\mathrm{air}}_k = 0. \tag{5}$$

Bridge-based architectures cannot guarantee this property.

Results.
Over 500 ticks, the workflow produces 500 co-registered aerial-depth / ground-segmentation pairs at ≈18 Hz with zero RPC errors. All 14 weather presets pass the illumination consistency assertion. Table 9 summarizes the measured outcomes.

Table 9: W4 cross-view perception results (500 ticks, Town10HD, RTX A4000).

| Metric | Value | Notes |
|---|---|---|
| Mean FPS | 18.2 | Harmonic mean |
| Co-registered pairs | 500 | Aerial depth + ground seg. |
| Per-tick latency | 52 ± 6 ms | Full collection loop |
| Sensor alignment | 0 ticks | Sync mode guarantee |
| Weather presets passed | 14/14 | All official presets |
| RPC errors | 0 | Both clients |

Figure 11: W3: Synchronized multi-modal dataset collection at a single simulation tick. Top row (vehicle perspective): RGB, semantic segmentation, depth, LiDAR bird's-eye view, surface normals, and instance segmentation. Bottom row (drone perspective): the same six modalities captured simultaneously from the aerial viewpoint. All 12 sensor streams share an identical tick index and are rendered under the same lighting and weather conditions through CARLA-Air's shared rendering pipeline. This guarantees per-tick spatial-temporal correspondence without interpolation.

Figure 12: W4: Air-ground cross-view perception across diverse environments and weather conditions. Each row shows an aerial RGB view from the drone across six representative CARLA maps (Town01–05 and Town10HD). Each column corresponds to a different weather preset (Clear Noon, Cloudy Noon, Dense Fog, Hard Rain, Night, Soft Rain, and Sunset).

5.5 W5: Reinforcement Learning Training Environment

Reinforcement learning in air-ground cooperative settings requires a simulation environment with three properties: closed-loop interaction, consistent state observations across heterogeneous agents, and stable long-horizon episode execution without memory leaks or synchronization drift.
CARLA-Air's single-process architecture satisfies these requirements. The stability results from Section 4.3 (zero crashes and no significant memory accumulation over 357 reset cycles) directly support its suitability as an RL training environment.

Platform capabilities for RL. CARLA-Air supports RL training workflows through several platform-level features:

(i) Synchronous stepping mode provides lock-step state transitions compatible with standard Gym-style training loops.
(ii) Both aerial and ground agents expose programmatic control interfaces (velocity commands, waypoint targets, autopilot toggles) that can serve as action spaces.
(iii) The full sensor suite (RGB, depth, segmentation, LiDAR, IMU, GPS) provides rich observation spaces for both state-based and vision-based policies.
(iv) Episode resets via actor spawn/destroy are validated for stability across hundreds of consecutive cycles (Section 4.3).
(v) The shared world state ensures that reward signals computed from cross-domain interactions (e.g., aerial-ground relative positioning) are physically consistent.

Example: cooperative positioning. As a representative RL scenario, consider a drone learning to maintain an optimal aerial observation position relative to a moving ground vehicle under varying traffic conditions. The observation space comprises the drone's pose, the vehicle's pose, and the surrounding traffic state. The action space is a 3D velocity command, and the reward encodes lateral tracking error and altitude maintenance. This scenario exercises the full air-ground control loop within CARLA-Air's synchronous tick. It can be implemented using standard RL libraries (e.g., Stable-Baselines3, RLlib) with minimal wrapper code.

6 Limitations and Future Work

The current release of CARLA-Air is validated for the workflows presented in Section 5: single- and dual-drone aerial operations over moderate-density urban traffic scenes.

• Actor density. Joint simulation performance is characterized at moderate traffic loads; high-density scenes with large simultaneous actor populations remain an active engineering target.
• Environment resets. Map switching requires a full process restart because each simulator backend manages its actor lifecycle independently; staged in-session resets are planned for a future release.
• Multi-drone scale. Configurations beyond two drones are functional but not yet formally validated across a wide range of scenarios; expanded multi-drone characterization will be documented once inter-agent behavior has been fully profiled.

None of these constraints affect the workflows in Section 5, all of which operate within the current boundaries.

CARLA-Air inherits and extends AirSim's aerial capabilities, and upstream AirSim development has been archived. Long-term maintenance of the aerial stack is therefore managed within the CARLA-Air project itself. Bug fixes, compatibility updates, and feature extensions to the aerial subsystem are released as part of CARLA-Air's regular update cycle, so the flight simulation capabilities continue to evolve independently of the original upstream repository.

Looking ahead, near-term work will address physics-state synchronization between the two engines. It will also add a ROS 2 [9] bridge that republishes both simulator streams as standard topics for broader ecosystem integration. Longer-term, we aim to support GPU-parallel multi-environment execution in the spirit of Isaac Lab [11] and OmniDrones [19], bringing CARLA-Air closer to the episode throughput required for large-scale reinforcement learning.

7 Conclusion

Simulation platforms for autonomous systems have historically fragmented along domain boundaries. Researchers whose work spans ground and aerial domains have had to maintain inter-process bridge infrastructure or accept capability compromises.
CARLA-Air resolves this fragmentation by integrating CARLA [2] and AirSim [15] within a single Unreal Engine process [3]. It exposes both native Python APIs concurrently over a shared physics tick and rendering pipeline.

Technical contributions. The central technical contribution is a principled resolution of the single-GameMode constraint through a composition-based design. CARLAAirGameMode inherits CARLA's ground simulation subsystems while composing AirSim's aerial flight actor as a standard world entity. Upstream changes are confined to two header files and one source file (≈35 lines; Appendix A.2). This design yields three properties unavailable in bridge-based approaches:

- a shared physics tick that eliminates inter-process clock drift;
- a shared rendering pipeline that guarantees consistent weather and lighting across all sensor viewpoints;
- full preservation of both upstream Python APIs.

Platform capabilities. Building on this architecture, CARLA-Air provides a photorealistic, physically coherent simulation world with rule-compliant traffic, socially-aware pedestrians, and aerodynamically consistent multirotor dynamics. Up to 18 sensor modalities can be synchronously captured across aerial and ground platforms at each simulation tick. The platform directly supports four research directions in air-ground embodied intelligence:

- air-ground cooperation;
- embodied navigation and vision-language action;
- multi-modal perception and dataset construction;
- reinforcement-learning-based policy training.

Validation. The platform is validated in three ways:

- performance benchmarks demonstrating stable operation at ≈20 FPS under joint workloads;
- a 3-hour continuous stability run with zero crashes across 357 reset cycles;
- five representative workflows that exercise the platform's core capabilities.

A consolidated feature comparison with representative platforms is provided in Table 1 (Section 2).

Broader impact.
By providing a shared world state for aerial and ground agents, CARLA-Air enables research directions that are structurally inaccessible in single-domain platforms:

- paired aerial-ground perception datasets from physically consistent viewpoints;
- coordination policies over joint multi-modal observation spaces;
- embodied navigation grounded in cross-view visual and linguistic input.

CARLA-Air also inherits and extends the aerial simulation capabilities of AirSim, whose upstream development has been archived. This ensures that the widely adopted flight stack continues to evolve within a modern, actively maintained infrastructure.

Figure 13: W5: Reinforcement learning training environment. Top: a drone learns to maintain an optimal aerial observation position above a moving ground vehicle within CARLA-Air's urban traffic environment. Bottom: the closed-loop RL pipeline. At each synchronous tick, CARLA-Air provides an observation space (drone and vehicle poses, relative distance, surrounding traffic state) to the policy network, which outputs 3D velocity commands as actions. The reward signal encodes tracking accuracy, altitude maintenance, and collision avoidance.

References

[1] Alexander Amini, Tsun-Hsuan Wang, Igor Gilitschenski, Wilko Schwarting, Zhijian Liu, Song Han, Sertac Karaman, and Daniela Rus. VISTA 2.0: An open, data-driven simulator for multimodal sensing and policy learning for autonomous vehicles. In IEEE International Conference on Robotics and Automation (ICRA), pages 4349–4356, 2022.
[2] Alexey Dosovitskiy, German Ros, Felipe Codevilla, Antonio Lopez, and Vladlen Koltun. CARLA: An open urban driving simulator. In Proceedings of the Conference on Robot Learning (CoRL), pages 1–16, 2017.
[3] Epic Games. Unreal Engine 4 documentation. https://docs.unrealengine.com/4.26/, 2021.
[4] Fadri Furrer, Michael Burri, Markus Achtelik, and Roland Siegwart. RotorS—a modular Gazebo MAV simulator framework. In Robot Operating System (ROS): The Complete Reference, volume 1, pages 595–625. Springer, 2016.
[5] Winter Guerra, Ezra Tal, Varun Murali, Gilhyun Ryou, and Sertac Karaman. FlightGoggles: Photorealistic sensor simulation for perception-driven robotics using photogrammetry and virtual reality. In IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pages 6941–6948, 2019.
[6] Nathan Koenig and Andrew Howard. Design and use paradigms for Gazebo, an open-source multi-robot simulator. In IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pages 2149–2154, 2004.
[7] Quanyi Li, Zhenghao Peng, Lan Feng, et al. MetaDrive: Composing diverse driving scenarios for generalizable reinforcement learning. IEEE Transactions on Pattern Analysis and Machine Intelligence, 45(3):3461–3475, 2023.
[8] Pablo Alvarez Lopez, Michael Behrisch, Laura Bieker-Walz, et al. Microscopic traffic simulation using SUMO. In IEEE International Conference on Intelligent Transportation Systems (ITSC), pages 2575–2582, 2018.
[9] Steve Macenski, Tully Foote, Brian Gerkey, Chris Lalancette, and William Woodall. Robot operating system 2: Design, architecture, and uses in the wild. Science Robotics, 7(66):eabm6074, 2022.
[10] Viktor Makoviychuk, Lukasz Wawrzyniak, Yunrong Guo, et al. Isaac Gym: High performance GPU-based physics simulation for robot learning. In NeurIPS Datasets and Benchmarks Track, 2021.
[11] NVIDIA. NVIDIA Isaac Lab: A unified and modular framework for robot learning. arXiv preprint arXiv:2511.04831, 2025.
[12] Jacopo Panerati, Hehui Zheng, SiQi Zhou, Amanda Prorok, and Angela P. Schoellig. Learning to fly—a gym environment with PyBullet physics for reinforcement learning of multi-agent quadcopter control. In IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pages 7512–7519, 2021.
[13] Guodong Rong, Byung Hyun Shin, Hadi Tabatabaee, et al. LGSVL Simulator: A high fidelity simulator for autonomous driving. In IEEE International Conference on Intelligent Transportation Systems (ITSC), pages 1–6, 2020.
[14] Manolis Savva, Abhishek Kadian, Oleksandr Maksymets, et al. Habitat: A platform for embodied AI research. In IEEE/CVF International Conference on Computer Vision (ICCV), pages 9339–9347, 2019.
[15] Shital Shah, Debadeepta Dey, Chris Lovett, and Ashish Kapoor. AirSim: High-fidelity visual and physical simulation for autonomous vehicles. In Field and Service Robotics, pages 621–635. Springer, 2018.
[16] Yunlong Song, Selim Naji, Elia Kaufmann, Antonio Loquercio, and Davide Scaramuzza. Flightmare: A flexible quadrotor simulator. In Proceedings of the Conference on Robot Learning (CoRL), pages 1–16, 2021.
[17] Maonan Wang, Yirong Chen, Yuxin Cai, Aoyu Pang, Yuejiao Xie, Zian Ma, Chengcheng Xu, Kemou Jiang, Ding Wang, Laurent Roullet, Chung Shue Chen, Zhiyong Cui, Yuheng Kan, Michael Lepech, and Man-On Pun. TranSimHub: A unified air-ground simulation platform for multi-modal perception and decision-making. arXiv preprint arXiv:2510.15365, 2025.
[18] Fanbo Xiang, Yuzhe Qin, Kaichun Mo, et al. SAPIEN: A SimulAted Part-based Interactive ENvironment. In IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 11097–11107, 2020.
[19] Botian Xu, Feng Gao, et al. OmniDrones: An efficient and flexible platform for reinforcement learning in drone control. arXiv preprint arXiv:2309.12825, 2023.
[20] Yuke Zhu, Josiah Wong, Ajay Mandlekar, Roberto Martín-Martín, et al. robosuite: A modular simulation framework and benchmark for robot learning. arXiv preprint arXiv:2009.12293, 2020.

A Appendix

A.1 System Configuration

Figure 14: Custom assets imported into CARLA-Air through the extensible asset pipeline. Top: a four-wheeled mobile robot with onboard LiDAR, imported from an external FBX model.
Bottom: a custom electric sport car with user-defined vehicle dynamics. Both assets operate within the shared simulation world alongside all built-in CARLA traffic and AirSim aerial agents, and are visible to all sensor modalities.

Reference hardware. All experiments in this report were conducted on the following configuration: Ubuntu 20.04/22.04 LTS, NVIDIA RTX A4000 (16 GB GDDR6), AMD Ryzen 7 5800X (8-core, 4.7 GHz), 32 GB DDR4-3200.

Software stack. CARLA 0.9.16, AirSim 1.8.1 (final stable open-source release), Unreal Engine 4.26, Python 3.8+.

Network configuration. Table 10 lists the default port assignments. Both RPC servers bind to localhost by default; remote connections require explicit IP configuration.

Table 10: Default network port assignments.

| Service | Protocol | Port |
|---|---|---|
| CARLA RPC Server | TCP | 2000 |
| CARLA Streaming | UDP | 2001 |
| AirSim RPC Server | TCP | 41451 |

Distribution. The prebuilt binary package is approximately 19 GB and includes a one-command launcher (CarlaAir.sh). The source distribution is approximately 651 MB and is released under the MIT license.

A.1.1 API Compatibility Summary

Table 11 summarizes the API compatibility status and test coverage of the current CARLA-Air release. All 89 automated CARLA API tests pass without modification. The full AirSim flight control and sensor access API has been verified through manual and scripted testing. A total of 63 ROS 2 topics are published across both simulation backends.

Table 11: API compatibility and test coverage.
| Component | Status |
|---|---|
| CARLA API | 89/89 automated tests passing |
| AirSim API | Full flight control and sensor access verified |
| ROS 2 topics | 63 total (43 CARLA + 14 AirSim + 6 generic) |
| Key upstream scripts confirmed working | |
| manual_control.py | CARLA manual driving interface |
| automatic_control.py | CARLA autopilot demonstration |
| dynamic_weather.py | CARLA weather preset cycling |
| hello_drone.py | AirSim basic flight demonstration |

A.2 Upstream Source Modifications

CARLA-Air is designed to minimize modifications to the upstream CARLA codebase. The integration touches only two header files and one source file, totaling approximately 35 lines of changes. All other integration code is purely additive. It resides within the aerial simulation plugin as the CARLAAirGameMode class (∼1 405 lines of C++). Table 12 provides the complete modification summary.

Table 12: Upstream CARLA source modifications.

| File | Modification |
|---|---|
| CarlaGameModeBase.h | friend declaration for CARLAAirGameMode |
| CarlaEpisode.h | Sensor tagging visibility: private → protected |
| CarlaGameModeBase.cpp | State flag assignment (1 line) |
| New integration layer (no upstream modification) | |
| CARLAAirGameMode | Unified game mode class; ∼1 405 lines C++ |

A.3 Custom Asset Import

CARLA-Air supports importing custom 3D assets (robot platforms, vehicles, UAV models, and environment objects) through an asset pipeline built on Unreal Engine's content framework. Imported assets are registered as spawnable actor classes and become accessible through the standard CARLA Python API (world.spawn_actor()). Once registered, custom assets share the same physics tick, rendering pass, and sensor visibility as all built-in actors, ensuring full consistency within the joint simulation environment.
