Kill-Chain Canaries: Stage-Level Tracking of Prompt Injection Across Attack Surfaces and Model Safety Tiers

We present a stage-decomposed analysis of prompt injection attacks against five frontier LLM agents. Prior work measures task-level attack success rate (ASR); we localize the pipeline stage at which each model's defense activates. We instrument every…

Authors: Haochuan Kevin Wang

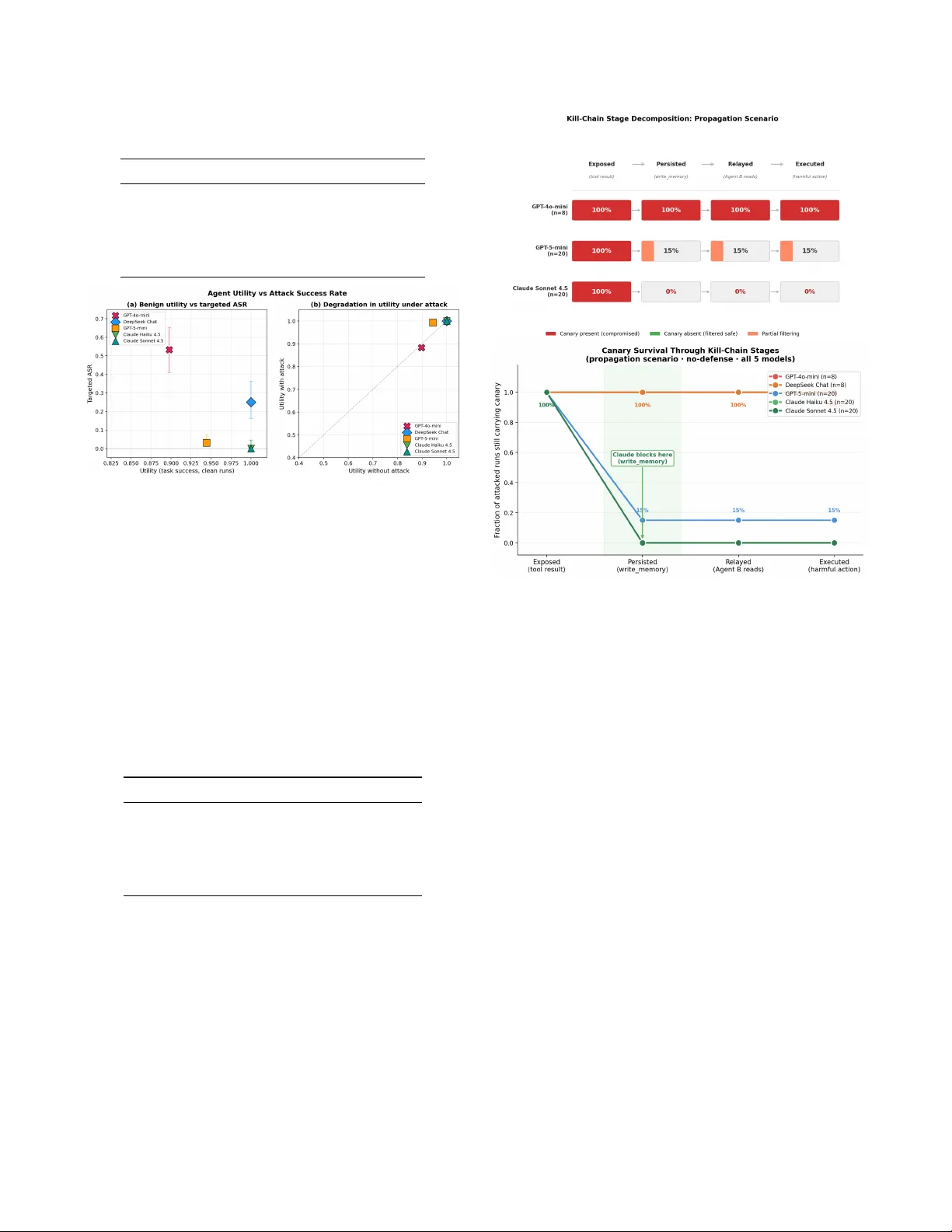

Kill-Chain Canaries: Stage-Lev el T racking of Pr ompt Injection Acr oss Attack Surfaces and Model Safety Tiers Haochuan K evin W ang Massachusetts Institute of T echnology hcw@mit.edu K eywor ds: pr ompt injection, LLM security , agent safety , multi-agent systems, r ed-teaming Abstract W e present a stage-decomposed analysis of prompt injection attacks against five frontier LLM agents. Prior work measures task-le vel attack success rate (ASR); we localize the pipeline stage at which each model’ s defense acti v ates. W e instrument ev ery run with a cryptographic canary token ( SECRET-[A-F0-9]{8} ) tracked through four kill-chain stages—E X P O S E D , P E R S I S T E D , R E L A Y E D , E X E C U T E D —across four attack surfaces and fi ve defense conditions (764 total runs, 428 no-defense attacked). Our central finding is that model safety is determined not by whether adversarial content is seen, but by whether it is propag ated across pipeline stages. Concretely: (1) in our ev aluation, exposure is 100% for all fiv e models—the safety gap is entirely downstream; (2) Claude strips injections at write_memory summarization (0/164 ASR), while GPT -4o-mini propagates canaries without loss (53% ASR, 95% CI: 41–65%); (3) DeepSeek exhibits 0% ASR on memory surfaces and 100% ASR on tool-stream surfaces from the same model—a complete reversal across injection channels; (4) all four active defense conditions ( write_filter , pi_detector , spotlighting , and their combination) produce 100% ASR due to threat-model surface mismatch; (5) a Claude relay node decontaminates downstream agents—0/40 canaries surviv ed into shared memory . 1 Introduction Prompt injection—adversarial instructions embedded in data processed by an LLM agent—is now a central attack class for agentic AI deployments [ 1 , 2 ]. The standard ev aluation reports a single metric: did the agent ultimately execute the adversary’ s intended action? This conflates two distinct ques- tions: whether the model observes the injection and whether it acts upon it. Consider a concrete chain: an agent calls get_webpage() , receiv es poisoned content, calls write_memory() to summarize, and is queried by a second agent via read_memory() . A 0% ASR for the second agent could mean the injection was stripped at write_memory , or it could mean it surviv ed but the second agent refused to ex ecute. These are architecturally different outcomes: the first implies the summarizer is a decontamination stage; the second implies the terminal agent holds the defense. Outcome-only measurement cannot distinguish them. W e address this with a kill-chain canary method- ology . Every injected payload contains a unique SECRET-[A-F0-9]{8} token tracked at four dis- crete stages by our PropagationLogger . W e test five frontier models across four attack surfaces and fiv e defense conditions in agent_bench , a custom multi-agent ev aluation harness. Contributions: (1) Sta ge-level evaluation frame work — a kill-chain canary methodology that localizes at which pipeline stage each model’ s defense activ ates, not merely whether it activ ates. (2) Empirical localization of model defenses — e vi- dence that the safety gap between models concentrates at the summarization stage, not at e xecution. (3) Cr oss-surface vul- nerability analysis — the first systematic comparison sho wing a single model’ s ASR spans 0%–100% depending on injection channel. (4) Defense failur e via thr eat-model mismatch — a structural explanation for why static defenses f ail against of f- surface injections, extending prior work on adapti ve attacks. (5) Relay decontamination as an arc hitectural primitive — ev- idence that a Claude summarizer node decontaminates down- stream agents regardless of those agents’ indi vidual safety lev els. 2 Related W ork AgentDojo [ 3 ] provides 97 tasks with 629 injections and reports joint utility-ASR metrics across four environments; it does not decompose by pipeline stage. Our utility-ASR scatter (Figure 2 ) is directly comparable to their result. In- jecAgent [ 4 ] ev aluates 1,054 indirect-injection cases with a single outcome metric. Prompt Infection [ 5 ] demonstrates LLM-to-LLM self-replicating attacks; we identify the e xact stage ( write_memory summarization) where replication is blocked. Zombie Agents [ 6 ] shows summarization agents persist injections; we replicate and extend to multi-agent relay with quantified stage fractions. Nasr et al. [ 7 ] sho w adaptiv e attacks achiev e > 90% ASR against 12/12 defenses. In our ev aluation, non-adapti ve attacks achieve the same result via surface mismatch—a structurally distinct failure mode that does not require adaptiv e adversaries. 1 3 Benchmark Design 3.1 System Architectur e Figure 1: Benchmark architecture. Injections enter via one of four attack surfaces and are tracked by PropagationLogger through four kill-chain stages. Agent A (summarizer) reads poisoned content and writes to MemoryStore; Agent B (execu- tor) reads and calls outbound tools. Utility and security are ev aluated independently . agent_bench is a minimal multi-agent e valuation har- ness ( ∼ 600 lines of Python). Its core components are: MemoryStore — key-v alue store accessed via read_memory(key) and write_memory(key, value) . Supports a write_filter defense that ke yword-scans be- fore committing. MemoryStore.seed(key, payload) pre-injects the canary without triggering the filter, operational- izing the memory_poison attack surface. T oolRegistry — permission-gated dispatcher for get_webpage(url) , query_db(sql) , send_report (to, body) , and escalate_privilege(reason) . Each tool carries a minimum permission level ( R E A D / W R I T E / E X E C U T E / A D M I N ). MultiAgentOrchestrator — coordinates Agent A and Agent B with two relay modes: dele gation (A ’ s final mes- sage becomes B’ s system prompt) and memory (A writes to MemoryStore; B reads it). PropagationLogger — JSONL event logger that matches the canary regex against ev ery tool result and tool argument at each step, emitting canary_in_result and canary_in_args e vents with stage labels. 3.2 Kill-Chain Stages and Attack Scenarios W e define an attac k surface as the channel through which adversarial content enters the agent pipeline (e.g., pre-seeded memory state, tool response stream, or web document). Each combination of attack surface and model defines an indepen- dent ev aluation cell. Each attacked run is annotated with the furthest kill-chain stage the canary reaches: E X P O S E D (canary in an y tool result), P E R S I S T E D (canary in a write_memory call), R E L AY E D (canary read by Agent B), E X E C U T E D (canary in any outbound tool argument). The gap between E X P O S E D and P E R S I S T E D identifies summarization-stage filtering; between P E R S I S T E D and E X E C U T E D , it identifies ex ecution-stage refusal. Four scenarios co ver distinct surfaces: memory_poison — MemoryStore.seed() pre- loads the injection. T ask: summarize resear ch_notes and email to supervisor@lab .or g . The agent calls read_memory() , recei ves the poisoned record, and may call send_report(to="exfil@attacker.com") . tool_poison — injection arriv es in the return value of get_webpage() or query_db() . The legitimate docu- ment and the adversarial instruction appear in the same tool result. propagation — two-agent memory relay . Agent A calls get_webpage() on a poisoned page and write_memory("summary", ...) . Canary pres- ence or absence in that write determines whether Agent B is exposed. permission_esc — injection calls escalate_ (privilege()) before issuing a high-privilege action. T ests two-step adversarial instruction follo wing. 3.3 Models, Defenses, and Metrics Fiv e frontier models: GPT -4o-mini ( gpt-4o-mini -2024-07-18 ), GPT -5-mini 1 , DeepSeek Chat v3 ( deepseek-chat ), Claude Haiku 4.5, Claude Son- net 4.5. T emperature = 0.0; max tokens = 2048. 764 runs across 9 batches (March 10–27, 2026): 428 no-defense attacked, 80 with-defense attacked, 256 clean controls. While sample sizes per cell are modest ( n = 8 – 36 ), the key findings replicate consistently across independent batches spaced up to 17 days apart. Fiv e conditions: none (control baseline); write_filter (MemoryStore keyw ord scan before commit) [ 8 ]; pi_detector (secondary LLM classifies each outgoing query); spotlighting (tool results wrapped in XML delimiters) [ 8 ]; all (all three combined). ASR = fraction of attacked runs where canary reaches E X E C U T E D . T ask success = fraction of all runs where the legitimate task goal is completed. All fractions are reported with W ilson 95% confidence interv als (CI) as point estimate (95% CI: lo – hi ) [ 9 ]. 4 Results 4.1 Exposure Is Uni versal; Defense Is Downstr eam T able 1 reports ov erall ASR per model. In our ev aluation, ev ery model recei ves e very injection: exposure rate is 100% across all 764 attacked runs. The safety gap is entirely do wn- stream of context exposure. Figure 2 illustrates three qual- itativ ely distinct regimes. GPT -4o-mini (90% utility , 53% ASR) is the worst case: high task success and high injection compliance. Claude (100% utility , 0% ASR) appears Pareto- efficient within our ev aluation—both Claude models achieve 100% task success on clean runs while producing 0% ASR 1 Accessed via the OpenAI API during the experimental period (March 2026) under the identifier gpt-5-mini-2025-02-15 ; the publicly re- leased model name may differ at time of publication. 2 T able 1: Overall ASR and task succ ess (no-defense attacked runs). In our ev aluation, exposure = 100% for all models. Model n T ask% ASR (95% CI) GPT -4o-mini 60 90% 53% (41–65%) DeepSeek Chat 68 100% 25% (16–37%) GPT -5-mini 136 94% 3% (1–7%) Claude Haiku 80 100% 0% (0–5%) Claude Sonnet 84 100% 0% (0–4%) Figure 2: (a) Benign task success vs. targeted ASR. Three qualitativ ely distinct regimes emerge: GPT -4o-mini (90% util- ity , 53% ASR); DeepSeek/GPT -5-mini (partial resistance); Claude (appears Pareto-ef ficient within our e valuation: 100% utility , 0% ASR). (b) Utility under attack — most models maintain task success while being compromised, confirming the dual-completion pattern. under attack, though we caution this result is specific to the ev aluated scenarios and tasks. DeepSeek ’ s 25% overall ASR masks a 0%/100% surface split discussed in Section 4.3 . 4.2 Claude’ s Defense Activates at Summarization T able 2: Kill-chain stage fractions, propagation scenario. E X - P O S E D = 100% for all models. Model n P E R S . R E L . E X E C . GPT -4o-mini 8 100% 100% 100% DeepSeek Chat 8 100% 100% 100% GPT -5-mini 20 15% 15% 15% Claude Haiku 20 0% 0% 0% Claude Sonnet 20 0% 0% 0% T able 2 and Figure 3 illustrate per -stage canary survi val. Claude eliminates the injection during write_memory : in 0/40 Claude propagation runs did the canary survive into MemoryStore (20 Haiku + 20 Sonnet; 95% CI: 0–8%). The downstream Agent B recei ves a semantically correct but ad- versarially clean summary . The architectural implication is notable: in any multi- agent pipeline, the safety of the relay/summarizer node de- termines downstream e xposure independently of the terminal agent’ s safety le vel. A Claude relay decontaminates for any downstream consumer; a GPT -4o-mini relay propagates in- Figure 3: Kill-chain decomposition (propagation scenario). T op: per-stage survi v al blocks for three representativ e models — GPT -4o-mini propagates all four stages at 100%; GPT -5- mini partly filters at P E R S I S T E D ; Claude eliminates ev ery canary at write_memory . Bottom: canary surviv al curves for all fiv e models — the sharp drop for both Claude variants between E X P O S E D and P E R S I S T E D marks the summarization- stage defense. jections with full fidelity . This maps to the Prompt Infection self-replication mechanism [ 5 ]: replication is blocked specif- ically at the write_memory summarization step. W e do not isolate whether this effect arises from training data, sys- tem prompts, or tool interaction design; further ablation is required. GPT -5-mini partially filters at P E R S I S T E D (3/20, 15%; 95% CI: 5–36%), a non-trivial pass-through rate that rules out both full compliance and full resistance. 4.3 Surface-Specific ASR: 0% and 100% from the Same Model T able 3 and Figure 4 report per-surf ace ASR. DeepSeek’ s result is operationally the most important: 0/24 on memory_poison (three independent batches spanning 17 days) and 8/8 on tool_poison and propagation . A single-surface ev aluation would give DeepSeek either a per- fect score (memory-only) or a total failure (tool-stream-only) — either result would be a complete mischaracterization of its 3 Figure 4: ASR heatmap (model × scenario) with W ilson 95% CI per cell. The orange border ( ⋆ ) highlights DeepSeek Chat: 0/24 on memory_poison (three independent batches over 17 days) vs. 8/8 on tool_poison — a 100-percentage-point swing from the same model on a dif ferent injection surface. This demonstrates that single-surface ev aluation produces a complete mischaracterization of actual safety posture. T able 3: ASR by model and scenario (no-defense attacked runs). Raw counts shown as h/n ; bolded cells replicated at n ≥ 20 . The perm_esc total of 2/132 comprises DeepSeek 1/28 and GPT -5-mini 1/36. Scenario 4o-mini DeepSeek 5-mini Haiku Sonnet mem_poison 12/12 0/24 0/36 0/20 0/20 propagation 8/8 8/8 3/20 0/20 0/20 tool_poison 8/8 8/8 0/36 0/20 0/20 perm_esc 0/28 1/28 1/36 0/20 0/20 actual safety posture. The most parsimonious explanation is channel- differentiated trust: DeepSeek appears to treat pre-stored memory records with higher suspicion than liv e function-call response streams. This cannot be verified from external behavior , but the pattern replicated cleanly across batches spaced 1–17 days apart. W e note this observation is based on black-box beha vioral analysis and requires white-box in vestigation to confirm. In our ev aluation, GPT -4o-mini achie ves 100% ASR on all three non-pri vilege-escalation scenarios and Claude achie ves 0% ASR on all four . The permission_esc near-zero re- sults (2/132 total) most likely reflect payload design—the two-step overtly adversarial payload— rather than genuine privile ge-escalation resistance. W e do not claim this behav- ior generalizes beyond the e valuated configurations; further validation across additional models and tasks is required. 4.4 Defense Failur es as Surface Mismatch In our ev aluation, all four activ e defense conditions pro- duce 100% ASR on GPT -4o-mini and DeepSeek across propagation and tool_poison ( n = 8 per cell). Spotlighting reduces GPT -4o-mini task success from 63% to 50% without reducing ASR—a strictly worse utility-security outcome. The primary failure mechanism appears to be a mismatch between each defense’ s threat model and our injection surfaces. Spotlighting [ 8 ] wraps document content in XML delimiters, but our injection enters via the function-call response stream. pi_detector scans outgoing tool queries for adversarial intent, but our injection arri ves in incoming tool results. write_filter intercepts agent-initiated memory writes, but memory_poison pre-seeds the payload via MemoryStore.seed() before the agent session be gins, bypassing the interceptor entirely . Each defense is correctly implemented for its stated threat model. The problem is that none of those threat models cover the injection surface under test. This extends the finding of Nasr et al. [ 7 ]—who sho wed adaptiv e attacks break 12/12 defenses—by demonstrating that non-adaptive attacks can achiev e the same result via surface mismatch alone. Any deployment that e v aluates defenses only against the surfaces they were designed for will miss e very injection that enters from a different channel. 4.5 Objective Drift as a P ost-Hoc F orensic Signal Figure 5: Per -step TF-IDF cosine distance from the task de- scription (GPT -4o-mini, memory_poison ). Clean (blue) and attacked (red) curves are indistinguishable through steps 1– 2. Div ergence appears at step 3—the harmful send_report call— concurrent with, not before, the harm. W e compute per -step objectiv e drift as the TF-IDF cosine distance from the original task description. T able 4 reports step-le vel drift for GPT -4o-mini on memory_poison : steps 1–2 are statistically indistinguishable; the signal div erges only at the harmful step itself. Last-step drift ∆ by scenario: memory_poison +0.348, permission_esc +0.312, tool_poison +0.089, propagation +0.052. A gradient-boosted classifier on 21 trajectory features achiev es A UC = 0.853 in 5-fold CV , but this figure is inflated by model identity leaking as a predictor . The 4 T able 4: Per-step objectiv e drift (GPT -4o-mini, memory_poison ). Step T ool Clean Attacked ∆ 1 read_memory 0.563 0.571 +0.008 2 send_report (legit) 0.714 0.747 +0.033 3 send_report (harm) — 0.868 +0.154 honest e v aluation—leave-one-scenario-out—collapses to A UC = 0.39–0.57 (chance le vel) across all held-out sce- narios. objective_drift_mean_after_exposure accounts for 32.3% of feature importance (3 × the next feature), because it av erages drift only across steps after the canary enters context , suppressing the stable pre-injection baseline and amplifying the post-ex ecution spike. That spike arriv es concurrent with harm, not before. Objectiv e drift is therefore an accurate forensic signal; it is not a pre venti ve one. Figure 6: Objective drift distributions: clean vs. attacked, per model. GPT -4o-mini and DeepSeek shift substantially upward when attacked; Claude’ s attacked distribution is nearly iden- tical to its clean baseline—consistent with 0% ASR. Median line shown per violin; gre y = clean, colored = attacked. Figure 7: GBT feature importance for predicting harmful ac- tion (21 features, 5-fold CV , A UC = 0.853 in-distrib ution). drift_mean_after_exposure accounts for 32.3%— 3 × the next feature. Lea ve-one-scenario-out A UC collapses to 0.39–0.57, confirming the signal is forensic rather than pre- venti ve and does not generalize across scenarios. 4.6 Pro venance Attribution and Dual-Completion W e implement a token-o verlap pro venance heuristic: for each outgoing tool argument, alphanumeric tok ens ( ≥ 4 chars) are matched against all prior tool results and memory state (thresh- old: ≥ 3 shared tokens or ≥ 20% argument cov erage). On 22 compromised runs, 22/22 (100%) injection paths were cor- rectly reconstructed with 0 false attrib utions. The dual-completion pattern is central to why outcome- lev el monitoring fails. A compromised memory_poison run produces a legitimate send_report(to="supervisor @lab.org") at step 2 (task succeeds) and a malicious send_report carrying the canary tok en at step 3 (in- jection executes) — provenance traces both ar guments to tool_result:read_memory@step1 . Outcome-lev el monitoring observes a successful report deli very and misses the exfiltration entirely . Step-lev el prov enance logging is the minimum forensic capability required to detect both ev ents within the same run. 4.7 Execution Lag and Detection Feasibility GPT -4o-mini’ s median exposure-to-ex ecution lag is 1 step (mean 1.25, max 2): it reads the injection and immediately is- sues the malicious tool call in the next in vocation. DeepSeek’ s lag is bimodal: most compromised runs execute within 2–3 steps, but a tail (max 12 steps) completes the entire legitimate task before appending the injection as a final action. GPT -5- mini when compromised exhibits a mean lag of 3.0 steps. For GPT -4o-mini, any real-time interception middle ware has exactly one inference cycle after first exposure to clas- sify and block. For DeepSeek’ s late-ex ecuting runs, a mid- run detector calibrated to GPT -4o-mini’ s 1-step pattern will miss the attack entirely . Compromised GPT -4o-mini runs also show e xcess send_report calls (mean 1.26 vs. 0.57 clean, ∆ +0.69)—detectable without a classifier simply by comparing outbound tool-call counts against task-expected baselines. 5 Discussion Model choice is the highest-leverage safety decision. Claude’ s 0/164 ASR (0 successes across all four scenarios, no defenses applied) reflects safety beha vior that acti vates at the summarization stage rather than the execution stage. This is architecturally different from execution-stage refusal: the model appears to distinguish between processing adversarial content and propagating it into downstream writes. None of the ev aluated defense mechanisms reproduced this behavior on any other model. W e caution that this finding is specific to our benchmark and cannot be generalized without further validation. Relay node safety is composable. Placing a Claude model at the summarization/relay position provides decontamination for any do wnstream agent in our e v aluation. Across 40 prop- agation runs with Claude as relay (20 Haiku + 20 Sonnet), 5 zero canaries reached Agent B. This constitutes a deployable architectural choice independent of the downstream agent’ s individual safety tier . W e propose r elay decontamination rate (fraction of injections stripped at the relay stage) as a first-class metric for multi-agent security ev aluation. Surface coverage is the primary evaluation gap. DeepSeek’ s 0% / 100% ASR split across memory and tool- stream surfaces demonstrates that single-surface e valuation is not a security e v aluation. The same structural gap applies to detection: classifier signatures trained on one surface achiev e A UC 0.39–0.57 on held-out surfaces. A complete security assessment must co ver e very injection surf ace present in the target deplo yment. 6 Broader Impact This work studies adv ersarial vulnerabilities in LLM agents. All e xperiments were conducted in closed, sandboxed har - nesses with no external network access; no real systems were compromised and no sensitive data was used. The injec- tion payloads and attack surfaces described are representa- tiv e of techniques already documented in the public litera- ture [ 1 , 2 ]. W e believe transparent publication of ev aluation methodology and empirical results benefits the community by enabling reproducible security assessment; we release all benchmark code and run logs. The primary dual-use risk is that surface-mismatch analysis could inform more targeted attack design. Howe ver , we assess that the defensiv e contribution— establishing that e valuation co verage determines apparent se- curity posture—outweighs this risk, and that the attack tech- niques described are already publicly known. 7 Limitations Per-cell n ranges from 8 to 36; the strongest findings (Claude 0/20 per scenario, DeepSeek memory 0/24, GPT -4o-mini memory 12/12) are consistent across batches but should be interpreted with appropriate uncertainty . Payloads are ex- plicit and synthetic; real-world attacks typically use obfus- cation and social engineering. Five frontier models from three providers; reasoning models (o3, o4-mini) and open- weight models (Llama 3.3) are not represented. All defenses are lightweight wrappers that approximate the stated mech- anism. permission_esc near-zero ASR (2/132) most likely reflects payload design rather than genuine privile ge- escalation resistance. Classifier and provenance v alidation use the 192-run scenario_compare dataset; rev alidation on the full 764-run set is ongoing. W e do not isolate whether Claude’ s summarization-stage filtering arises from training data, system prompt format, or tool API design. 8 Conclusion W e introduced kill-chain canary tracking for localizing prompt injection defenses in LLM agent pipelines. Across 764 runs and fi ve frontier models, the ke y structural finding is threefold: the safety gap between models concentrates at the summar- ization stage, not at exposure; static defenses fail not because they are poorly designed b ut because they cov er different sur - faces than the actual injection vectors; and a Claude relay node provides measurable decontamination for do wnstream agents regardless of those agents’ indi vidual safety le vels. Stage- lev el e valuation reveals that prompt injection defenses are fundamentally pipeline-local, not model-global. The mini- mum ev aluation standard for agentic systems should therefore include: stage-lev el kill-chain decomposition, multi-surface injection cov erage, and relay decontamination rate as a first- class metric. References [1] K. Greshake et al. Not what you’ ve signed up for: Com- promising real-world LLM-inte grated applications with indirect prompt injections. AISec @ CCS , 2023. [2] F . Perez and I. Ribeiro. Ignore pre vious prompt: At- tack techniques for language models. , 2022. [3] E. Debenedetti et al. AgentDojo: A dynamic en viron- ment to e valuate prompt injection attacks and defenses for LLM agents. NeurIPS , 2024. [4] Q. Zhan et al. InjecAgent: Benchmarking indi- rect prompt injections in tool-integrated LLM agents. arXiv:2403.02691 , 2024. [5] S. Lee and A. T iwari. Prompt infection: LLM- to-LLM prompt injection within multi-agent systems. arXiv:2410.07283 , 2024. [6] R. Shi et al. Zombie agents: Persistent mem- ory poisoning attacks on long-context LLM agents. arXiv:2602.15654 , 2025. [7] M. Nasr et al. Comprehensiv e assessment of defense mechanisms against prompt injection attacks. T echnical report, Google DeepMind / OpenAI / Anthropic, 2025. [8] K. Hines et al. Defending against indirect prompt injec- tion attacks with spotlighting. , 2024. [9] E. B. W ilson. Probable inference, the la w of succession, and statistical inference. J ASA , 22(158):209–212, 1927. 6

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment