Beyond Dataset Distillation: Lossless Dataset Concentration via Diffusion-Assisted Distribution Alignment

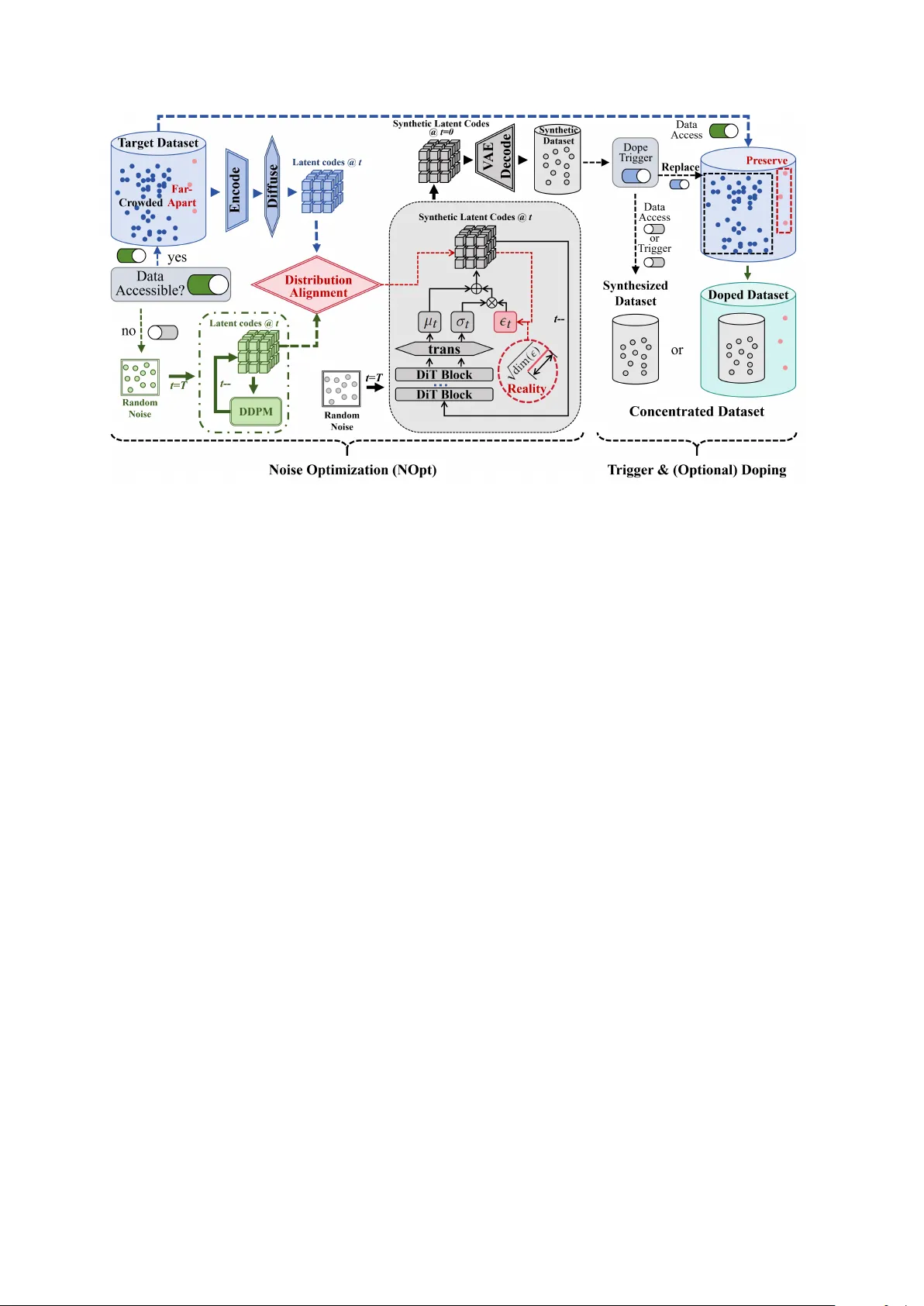

The high cost and limited accessibility of large datasets hinder the development of large-scale visual recognition systems. Dataset Distillation addresses these problems by synthesizing compact surrogate datasets for efficient training, …

Authors: Tongfei Liu, Yufan Liu, Bing Li