FedFG: Privacy-Preserving and Robust Federated Learning via Flow-Matching Generation

Federated learning (FL) enables distributed clients to collaboratively train a global model using local private data. Nevertheless, recent studies show that conventional FL algorithms still exhibit deficiencies in privacy protection, and the server l…

Authors: Ruiyang Wang, Rong Pan, Zhengan Yao

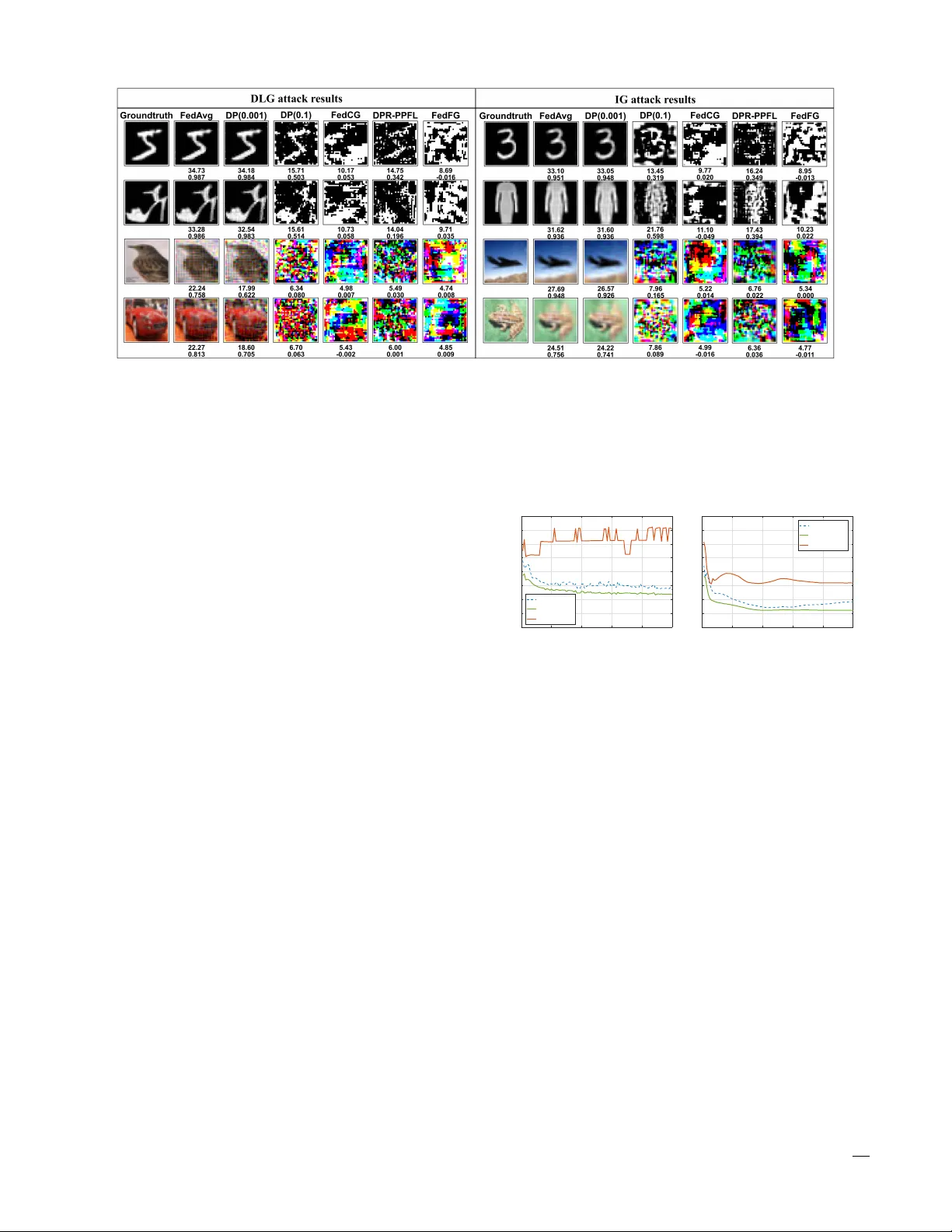

IEEE Transactions on Dependable and Secure Computing

Abstract: Federated learning (FL) enables distributed clients to collaboratively train a global model using local private data. Nevertheless, recent studies show that conventional FL algorithms still exhibit deficiencies in privacy protection, and the server lacks a reliable and stable aggregation rule for updating the global model. This situation creates opportunities for adversaries: on the one hand, they may eavesdrop on uploaded gradients or model parameters, potentially leaking benign clients' private data; on the other hand, they may compromise clients to launch poisoning attacks that corrupt the global model. To balance accuracy and security, we propose FedFG, a robust FL framework based on flow-matching generation that simultaneously preserves client privacy and resists sophisticated poisoning attacks. On the client side, each local network is decoupled into a private feature extractor and a public classifier. Each client is further equipped with a flow-matching generator that replaces the extractor when interacting with the server, thereby protecting private features while learning an approximation of the underlying data distribution. Complementing the client-side design, the server employs a client-update verification scheme and a novel robust aggregation mechanism driven by synthetic samples produced by the flow-matching generator. Experiments on MNIST, FMNIST, and CIFAR-10 demonstrate that, compared with prior work, our approach adapts to multiple attack strategies and achieves higher accuracy while maintaining strong privacy protection.

Index Terms: Federated learning, privacy preservation, poisoning attacks, robust aggregation, flow-matching generation.

Ruiyang Wang is with the School of Mathematics, Sun Yat-sen University, Guangzhou 510275, China. E-mail: wangry25@mail2.sysu.edu.cn.
Rong Pan is with the School of Computer Science and Engineering, Sun Yat-sen University, Guangzhou 510006, China. E-mail: panr@sysu.edu.cn. (Corresponding author: Rong Pan)
Zhengan Yao is with the School of Mathematics, Sun Yat-sen University, Guangzhou 510275, China, and also with the Institute of Advanced Studies Hong Kong, Sun Yat-sen University, Hong Kong. E-mail: mcsyao@mail.sysu.edu.cn.
The source code is available at https://github.com/rywangcn/FedFG

I. INTRODUCTION

Federated learning (FL) [1], [2] has emerged as a key paradigm for collaborative model training across distributed clients, where raw data remain local and only model updates are exchanged. This design aligns with data protection regulations such as GDPR [3] and HIPAA [4], and has enabled deployments in security-critical domains, including healthcare, autonomous driving, and financial services [5]–[9]. However, as FL is increasingly used to support high-stakes decision making, its security assumptions are being challenged by adversaries operating over open networks and in partially compromised client populations.

A central challenge is that FL must simultaneously ensure data confidentiality and model integrity. On the one hand, adversaries can reconstruct high-fidelity private images or text from observed client gradients via privacy attacks, leading to sensitive information leakage [10]–[12]; on the other hand, adversaries can control malicious clients to launch advanced poisoning attacks [13]–[17], thereby degrading global model performance and undermining system security.
These two risks are tightly coupled: mechanisms that expose more information for server-side inspection may improve robustness but worsen privacy, whereas mechanisms that conceal updates can strengthen privacy but reduce the server's ability to detect malicious contributions.

Existing defenses often address only one side of this coupled problem or rely on assumptions that break down in practical non-IID settings. Cryptographic protocols can prevent plaintext exposure [18]–[20], but their overhead can be prohibitive; more fundamentally, encrypted updates limit the server's ability to verify client behavior [21]. Differential privacy (DP) provides formal protection by perturbing updates [22]–[24], yet stringent privacy budgets can substantially degrade accuracy. Byzantine-robust aggregators such as Krum [25] and the coordinate-wise median [26] rely on geometric clustering of benign updates, an assumption often violated under heterogeneous client distributions, and can be bypassed by sophisticated attacks [14]. Validation-based schemes can improve robustness by screening updates [27], [28], but typically require auxiliary clean data or trusted validation signals, which may conflict with the "no extra data" premise and introduce additional privacy concerns.

Motivated by this tension, we explore a different design axis: using synthetic data as a privacy–robustness bridge. Unlike raw updates, synthetic samples can be kept in plaintext to enable server-side verification, while avoiding a one-to-one correspondence with original instances, thereby reducing direct leakage risks. This perspective suggests a unified architecture in which clients provide behavioral evidence via generated samples rather than revealing sensitive feature representations or full parameter updates. As a result, the server can assess update quality and detect poisoning attempts without directly inspecting private client updates.

Building on this insight, we propose FedFG, a robust federated learning framework based on flow-matching generation that jointly preserves privacy and resists sophisticated poisoning attacks. On the client side, each model is decoupled into a private extractor and a public classifier, and a flow-matching generator is trained to replace the extractor when interacting with the server, protecting private features while learning an approximation to the local data distribution. On the server side, we design a client update verification scheme and a robust aggregation mechanism driven by synthetic samples produced by these generators, enabling anomaly detection and accuracy-aware reweighting under diverse attack strategies.

The main contributions of this paper are summarized as follows:

• We propose FedFG, a federated learning framework that leverages flow-matching generators to unify client-side privacy protection and server-side robustness within a single design.
• We design a server-side verification and aggregation scheme that uses synthetic samples to compute outlier and accuracy scores, detect malicious clients, and perform accuracy-aware reweighting over the remaining clients.
• We establish a first-order stationarity guarantee for FedFG under nonconvex objectives, providing a rigorous theoretical foundation for federated learning frameworks that simultaneously address privacy preservation and model poisoning defense.
• To the best of our knowledge, this work is the first to adopt flow matching as the generative backbone for a unified federated learning architecture capable of mitigating both privacy leakage and poisoning attacks. Extensive experiments and thorough evaluations demonstrate the effectiveness and superiority of our approach.

The remainder of this paper is organized as follows. In Section II, we review related work and introduce the flow-matching model.
In Section III, we present the proposed framework, which uses flow-matching generators as a cornerstone for client-side privacy protection and server-side robustness, and analyze its convergence in Section IV. In Section V, we detail the experimental settings and report results from comparative evaluations. Finally, in Section VI, we conclude and discuss potential directions for future work.

II. RELATED WORK

In this section, we review prior work on privacy-preserving and robust federated learning, covering major security threats and corresponding defense strategies. We also discuss generative federated learning and flow-matching models, the latter of which provides the foundation for our method.

A. Privacy Leakage Protection

Despite keeping raw data local, FL remains vulnerable to gradient inversion attacks [10]–[12] that reconstruct training samples from shared updates. Consequently, explicit protection mechanisms are required, typically involving trade-offs among accuracy, robustness, and communication overhead. To mitigate privacy leakage caused by directly uploading local updates, existing defenses are commonly categorized into three groups: cryptography-based methods, differential privacy-based methods, and model-masking methods.

1) Cryptography-based protection: Cryptography-based methods enable aggregation without exposing plaintext updates, blocking gradient inversion at the protocol level. Recent studies have explored improvements along the efficiency–security trade-off. Hu and Li [18] propose a gradient-guided mask-selection mechanism that encrypts only a small portion of updates while maintaining protection. FedPHE [19] builds contribution-aware secure aggregation based on homomorphic encryption with sparsification and obfuscation techniques. Dong et al. [20] leverage secure multi-party computation to implement Byzantine-robust aggregation while preserving parameter privacy.
Nevertheless, cryptography-based protection is not foolproof. Lam et al. [29] show that even with secure aggregation, the server may still infer individual updates under certain system conditions.

2) Differential privacy-based protection: Differential privacy (DP) provides a quantifiable privacy guarantee by injecting noise into client updates. A key advantage of DP is computational efficiency; however, tighter privacy budgets typically reduce model utility. Xue et al. [23] propose an adaptive noise mechanism that allocates noise according to parameter sensitivity, achieving higher accuracy under the same privacy budget. Wei et al. [24] develop a privacy-budget allocation scheme for personalized FL based on composition theory and derive convergence bounds. Yang et al. [30] propose dynamic personalization with adaptive constraints to reduce the adverse impact of noise on critical parameters.

3) Model masking methods: Model masking methods avoid uploading complete updates by concealing a subset of network parameters. Chen et al. [31] propose FedRSM, which applies representational similarity analysis to estimate layer importance and selectively hide a subset of layers. Building on this, DPR-PPFL [32] extends the approach to IoT settings using layer-wise and model-based similarity analysis to resist both inversion and poisoning attacks. Another strategy is to split the network and conceal feature information. Wu et al. [33] adopt split learning by partitioning the local model into a private extractor and a public classifier, and employ a cGAN generator as a proxy for the extractor during server interaction, thereby mitigating privacy attacks while maintaining accuracy. Moreover, Ma et al. [34] map images into a target domain via block encryption and train a GAN to model the distribution in that domain, uploading only the generator parameters to support collaboration.
Overall, masking-based methods are typically more computation-friendly and can enable the server to perform limited quality assessment using available plaintext information; however, sustaining stable generalization under non-IID data distributions requires careful mechanism design.

B. Model Poisoning Defense

In model poisoning attacks, adversaries manipulate local updates to degrade global model performance. Representative strategies include sign flipping [13], inner product manipulation [14], and optimization-based attacks [15]–[17] that maximize deviation or steer the model toward attacker-chosen low-accuracy states over multiple rounds. To defend against model poisoning in federated learning, existing studies can be broadly grouped into three lines: statistically robust aggregation, similarity-based detection, and validation-based filtering.

1) Statistical robust aggregation: Statistical approaches rely on robust statistics to suppress the impact of outlier updates. Representative examples include Krum [25], which selects the update closest to the majority; coordinate-wise Median and TrimmedMean [26], which compute per-coordinate statistics after removing extremes; and geometric-median-based aggregators [35], which improve the breakdown point but may suffer irreducible error under highly heterogeneous data. While simple to implement, these methods can be sensitive to non-IID heterogeneity and may degrade when benign clients naturally exhibit diverse update patterns.

2) Similarity-based detection: Similarity-based methods exploit consistency patterns that coordinated attackers often exhibit. FoolsGold [15] addresses Sybil poisoning by adaptively down-weighting clients that exhibit high gradient similarity across rounds, under the assumption of colluding attackers.
DPR-PPFL [32] moves similarity assessment from parameter space to representation space, applying representational similarity analysis to measure consistency and filter anomalous models in IoT scenarios.

3) Validation-based filtering: Validation-based methods authenticate updates using trusted or synthesized validation signals. FLTrust [27] assumes the server holds a small clean dataset and assigns trust scores based on directional agreement. GANDefense [28] generates audit data with a server-side GAN and removes malicious updates using an audit-accuracy threshold. GAN-Filter [36] avoids external datasets by letting the global model guide synthetic sample generation for adaptive filtering. However, these approaches often require auxiliary datasets or depend on an unpoisoned global model to guide the generator, which may conflict with strict FL privacy assumptions or fail under early-round attacks.

C. Generative Federated Learning and Flow-Matching Models

Existing generative federated learning (GFL) methods often address privacy or robustness in isolation rather than jointly. Client-side generation approaches [33], [34] use generators to replace privacy-sensitive representations, but typically lack robust server-side verification mechanisms. Server-side generation approaches [28], [36] produce audit samples for scoring client updates, but may depend on auxiliary clean validation data and can be unstable under non-IID heterogeneity. These limitations motivate a unified architecture in which the generative component supports both privacy protection and robust aggregation.

Recent flow-matching methods provide a promising foundation by enabling simulation-free training of continuous normalizing flows (CNFs) [37], [38]. A CNF defines a deterministic probability-flow ODE

$$\frac{dx_t}{dt} = v_\theta(t, x_t), \tag{1}$$

which transports samples from a source distribution to the data distribution.
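
To make Eq. (1) concrete, the sketch below integrates the ODE from $t = 0$ to $t = 1$ with explicit Euler steps. It is only an illustration under stated assumptions: `euler_sample` and `constant_field` are hypothetical names, the vector field is a toy stand-in for a trained network $v_\theta$, and the choice of a fixed-step Euler solver is ours rather than something the paper specifies.

```python
from typing import Callable, List

Vector = List[float]


def euler_sample(v: Callable[[float, Vector], Vector],
                 x0: Vector,
                 steps: int = 100) -> Vector:
    """Integrate dx/dt = v(t, x) from t = 0 to t = 1 with explicit Euler steps."""
    x = list(x0)
    dt = 1.0 / steps
    for k in range(steps):
        t = k * dt
        velocity = v(t, x)
        # One Euler step: x <- x + dt * v(t, x)
        x = [xi + dt * vi for xi, vi in zip(x, velocity)]
    return x


def constant_field(t: float, x: Vector) -> Vector:
    """Toy vector field (an assumption for illustration): constant velocity.

    A straight-line flow like this moves every start point by the same
    displacement, so the integration result is easy to check by hand.
    """
    return [2.0, -1.0]


# Starting at the origin, a constant velocity (2, -1) over unit time ends at (2, -1).
x1 = euler_sample(constant_field, [0.0, 0.0], steps=50)
```

With a straight (constant-velocity) trajectory, even a coarse step count reproduces the end point, which is the intuition behind the claim that straighter paths need fewer function evaluations.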

Flow matching trains $v_\theta$ via regression to a target vector field:

$$\mathcal{L}_{\mathrm{FM}}(\theta) = \mathbb{E}_{t,\, x \sim p_t} \big\| v_\theta(t, x) - u_t(x) \big\|_2^2. \tag{2}$$

Importantly, optimal-transport displacement interpolation can yield straighter conditional trajectories, enabling faster and more stable generation with fewer neural function evaluations [37]–[39]. These properties make flow matching well suited to FL: it supports stable sample synthesis under non-IID settings and produces plaintext synthetic data that the server can use for update verification and robust aggregation. Accordingly, we adopt flow matching as the generative backbone and propose FedFG, which tightly couples client-side privacy protection with server-side robustness via flow-matching generated synthetic samples.

TABLE I
KEY NOTATIONS FOR THE PROPOSED METHOD

| Symbol | Meaning |
| --- | --- |
| $N$ | Number of clients |
| $K$ | Number of classes |
| $R$ | Number of communication rounds |
| $\mathcal{B}, \mathcal{M}$ | Set of benign clients and set of malicious clients |
| $\mathcal{D}_i$ | Local dataset of client $i$ |
| $E_i$ | Private feature extractor of client $i$ |
| $C_i, FG_i$ | Public classifier and flow-matching generator of client $i$ |
| $C_g, FG_g$ | Global classifier and global flow-matching generator |
| $\theta_{(\cdot)}$ | Model parameters of the corresponding network module |
| $z \sim p(z)$ | Noise sampled from the prior distribution |
| $t \in [0, 1]$ | Time variable in flow matching |
| $h, \tilde{h}$ | Real feature representation and synthetic feature probe |
| $h_0, h_1, h_t$ | Initial noise feature, target real feature, and intermediate feature on the flow path |
| $v_{\theta_{FG_i}}(\cdot)$ | Conditional vector field of the generator |
| $u_t(\cdot)$ | Conditional target flow for flow matching |
| $\mathcal{L}_{\mathrm{cls}}, \mathcal{L}_{\mathrm{FM}}$ | Classification loss and flow-matching loss |
| $w_i^{(r)}$ | Aggregation weight of client $i$ at round $r$ |
| $s_i^r$ | Accuracy score of client $i$ at round $r$ |
| $\alpha_i^r$ | Relative accuracy score of client $i$ at round $r$ |
| $\bar{\alpha}_i^r$ | Renormalized accuracy weight for benign client $i$ |
| $p_i$ | Predictive probability vector of client $i$ |
| $d_H$ | Hellinger distance between client classifiers |
| $o_i^r$ | Outlier score of client $i$ at round $r$ |
| $\tau^r$ | Adaptive threshold computed via the Hampel rule |
| $\gamma$ | Tunable cutoff parameter for the Hampel rule |
| $\kappa$ | Accuracy threshold for filtering |

III. PROPOSED METHOD

In this section, we present FedFG, a secure and privacy-preserving federated learning framework based on flow-matching generation. As illustrated in Fig. 1, the framework consists of two iterative procedures.

We consider a standard cross-silo FL system consisting of a central server and $N$ clients. Client $i$ holds a private dataset $\mathcal{D}_i = \{(x, y)\}$, where $x$ and $y \in \{1, \ldots, K\}$ denote an input sample and its label, respectively. FedFG decouples each client model into a private feature extractor $E_i : \mathcal{X} \to \mathbb{R}^d$ and a public classifier $C_i : \mathbb{R}^d \to \mathbb{R}^K$, and further equips each client with a flow-matching generator $FG_i : \mathcal{Z} \times \{1, \ldots, K\} \to \mathbb{R}^d$ that approximates the feature distribution induced by the extractor, where $\mathcal{Z}$ is a simple noise distribution (e.g., a standard Gaussian). The server maintains a global generator $FG_g$ and a global classifier $C_g$. In each communication round $r$, clients download $(\theta^{r-1}_{FG_g}, \theta^{r-1}_{C_g})$, perform local updates, and upload $(\theta^{r}_{FG_i}, \theta^{r}_{C_i})$.
The server then verifies client updates using synthetic feature probes produced by $FG_g$ and performs robust aggregation to obtain $(\theta^{r}_{FG_g}, \theta^{r}_{C_g})$. Details are provided below for both the client-side and server-side procedures.

Fig. 1. Overview of FedFG. On each client, the local model is decoupled into a private feature extractor and a public classifier, and is equipped with a flow-matching generator that replaces the extractor during communication with the server. The server collects model parameters from clients and performs robust aggregation with three modules: 1) evaluation of outlier and accuracy scores via synthetic samples generated by the flow-matching generator; 2) detection of malicious clients based on the Hampel rule and accuracy threshold; and 3) accuracy-aware reweighting aggregation over the remaining benign clients.

A. Client Side: Privacy-Preserving Local Update

A key privacy risk in FL stems from sharing parameters that directly interact with raw data, enabling gradient inversion and related reconstruction attacks. FedFG mitigates this risk by keeping the extractor $E_i$ strictly local. Only $(\theta_{FG_i}, \theta_{C_i})$ are exchanged with the server. Since $FG_i$ operates in feature space and does not process raw inputs, and since $C_i$ maps features to logits, the information directly exposed about raw samples is substantially reduced compared with sharing an end-to-end model. Algorithm 1 describes the procedure in detail.

At round $r$, client $i$ initializes its classifier from the global classifier and then updates $\theta_{E_i}$ and $\theta_{C_i}$ on its private data by minimizing the supervised classification loss:

$$\mathcal{L}_{\mathrm{cls}}(\theta_{E_i}, \theta_{C_i}) = \mathbb{E}_{(x, y) \sim \mathcal{D}_i} \Big[ \Omega\big( C_i(E_i(x; \theta_{E_i}); \theta_{C_i}),\, y \big) \Big], \tag{3}$$

where $\Omega(\cdot, \cdot)$ denotes the cross-entropy loss. The local update is

$$(\theta_{E_i}, \theta_{C_i}) \leftarrow (\theta_{E_i}, \theta_{C_i}) - \eta_1 \nabla \mathcal{L}_{\mathrm{cls}}, \tag{4}$$

with learning rate $\eta_1$.

After updating the extractor, client $i$ trains a flow-matching generator $FG_i$ to approximate the conditional feature distribution of the extractor outputs given label $y$. Let

$$h = E_i(x; \theta_{E_i}) \tag{5}$$

denote the real feature. Client $i$ optimizes $\theta_{FG_i}$ via a flow-matching objective:

$$\mathcal{L}_{\mathrm{FM}}(\theta_{FG_i}; \theta_{E_i}) = \mathbb{E}_{(x, y) \sim \mathcal{D}_i,\, z \sim p(z)} \Big[ \ell_{\mathrm{FM}}\big( FG_i, E_i(x), y, z \big) \Big], \tag{6}$$

where $\ell_{\mathrm{FM}}(\cdot)$ is the per-sample flow-matching loss implemented by the client-side generator module. The generator update is

$$\theta_{FG_i} \leftarrow \theta_{FG_i} - \eta_2 \nabla \mathcal{L}_{\mathrm{FM}}, \tag{7}$$

with learning rate $\eta_2$.

To instantiate a standard flow-matching model, we parameterize the generator as a conditional, time-dependent vector field $v_{\theta_{FG_i}}(h, t, y)$ and define generation through the ordinary differential equation

$$\frac{dh(t)}{dt} = v_{\theta_{FG_i}}(h(t), t, y), \quad t \in [0, 1], \tag{8}$$

where the initial state $h(0)$ is sampled from a simple prior induced by $z \sim p(z)$ and a lightweight mapping from noise space to feature space. Sampling corresponds to integrating (8) from $t = 0$ to $t = 1$ to obtain $h(1)$.

For training, we adopt an independent conditional flow-matching probability path between a noise sample $h_0$ and a real feature $h_1 = h$ as defined in Eq. (5):

$$h_t = t h_1 + (1 - t) h_0 + \sigma \epsilon, \tag{9}$$

$$h_0 \sim \mathcal{N}(0, I), \quad \epsilon \sim \mathcal{N}(0, I), \quad t \sim \mathrm{Unif}(0, 1), \tag{10}$$

where $\sigma \ge 0$ is a scalar that controls the stochasticity of the path.
Under this construction, the conditional target flow is

$$u_t(h_1 \mid h_0) = h_1 - h_0, \tag{11}$$

and the per-sample flow-matching loss can be written as the mean-squared error between the predicted and target flows:

$$\ell_{\mathrm{FM}}\big( FG_i, h_1, y, z \big) = \mathbb{E}_t \Big[ \big\| v_{\theta_{FG_i}}(h_t, t, y) - u_t(h_1 \mid h_0) \big\|_2^2 \Big]. \tag{12}$$

```
Algorithm 1: Client-Side Procedure of FedFG
Require: Local dataset D_i; communication rounds R; local epochs E_loc;
         flow epochs E_flow; learning rates η1, η2; malicious client set M.

for r = 1, 2, ..., R do
    /* Step 1: Extractor and classifier training */
    for e = 1, 2, ..., E_loc do
        for each minibatch (x, y) ~ D_i do
            Compute L_cls using Eq. (3)
            Update (θ_Ei, θ_Ci) using Eq. (4)
        end for
    end for
    /* Step 2: Flow-matching generator training */
    for e = 1, 2, ..., E_flow do
        for each minibatch (x, y) ~ D_i do
            Extract real features h1 ← E_i(x; θ_Ei)
            Sample t, h0, ε using Eq. (10) and form h_t using Eq. (9)
            Compute the FM loss ℓ_FM using Eq. (12)
            Update θ_FGi using Eq. (7)
        end for
    end for
    /* Step 3: Model poisoning by malicious clients */
    if i ∈ M then
        (θ^r_FGi, θ^r_Ci) ← A(θ^r_FGi, θ^r_Ci; θ^{r-1}_FGg, θ^{r-1}_Cg)
    end if
    /* Step 4: Upload public components */
    Send (θ^r_FGi, θ^r_Ci) to the server
    /* Step 5: Download global models */
    Receive (θ^r_FGg, θ^r_Cg) from the server
    Update public modules: θ^r_Ci ← θ^r_Cg, θ^r_FGi ← θ^r_FGg
end for
return θ^R_Ei, θ^R_Ci, θ^R_FGi
```

The condition $y$ is injected into $v_{\theta_{FG_i}}$ via a learnable label embedding, and time $t$ is provided through a suitable time embedding, enabling the model to learn class-specific transport dynamics in the extractor feature space.
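
The path in Eqs. (9)–(10), the target flow in Eq. (11), and the loss in Eq. (12) can be sketched in a few lines of plain Python. This is a minimal illustration, not the authors' implementation: `sample_path_point`, `cfm_loss`, `oracle_field`, and the class means in `mu` are hypothetical, the vector field is an arbitrary callable rather than a neural network with label and time embeddings, and the expectation over $t$ is approximated with a single draw per sample.

```python
import random
from typing import Callable, Dict, List, Optional, Sequence

Vector = List[float]
Field = Callable[[Vector, float, int], Vector]  # v(h_t, t, y) -> predicted flow


def sample_path_point(h0: Vector, h1: Vector, t: float,
                      sigma: float, rng: random.Random) -> Vector:
    """Eq. (9): h_t = t*h1 + (1 - t)*h0 + sigma*eps with eps ~ N(0, I)."""
    return [t * b + (1.0 - t) * a + sigma * rng.gauss(0.0, 1.0)
            for a, b in zip(h0, h1)]


def cfm_loss(v: Field, features: Sequence[Vector], labels: Sequence[int],
             sigma: float = 0.0, rng: Optional[random.Random] = None) -> float:
    """Monte Carlo estimate of Eq. (12), one (t, h0, eps) draw per sample."""
    rng = rng or random.Random(0)
    total = 0.0
    for h1, y in zip(features, labels):
        t = rng.random()                               # t ~ Unif[0, 1)
        h0 = [rng.gauss(0.0, 1.0) for _ in h1]          # h0 ~ N(0, I), Eq. (10)
        ht = sample_path_point(h0, h1, t, sigma, rng)
        target = [b - a for a, b in zip(h0, h1)]        # Eq. (11): h1 - h0
        pred = v(ht, t, y)
        total += sum((p - u) ** 2 for p, u in zip(pred, target))
    return total / len(features)


# Hypothetical toy data: every feature of class y equals a fixed class mean mu[y].
mu: Dict[int, Vector] = {0: [1.0, 2.0], 1: [-1.0, 0.5]}
labels = [k % 2 for k in range(200)]
features = [mu[y] for y in labels]


def oracle_field(h: Vector, t: float, y: int) -> Vector:
    """With sigma = 0 and h1 = mu[y], (mu[y] - h_t) / (1 - t) equals h1 - h0."""
    return [(m - hi) / (1.0 - t) for m, hi in zip(mu[y], h)]


def zero_field(h: Vector, t: float, y: int) -> Vector:
    return [0.0 for _ in h]


loss_oracle = cfm_loss(oracle_field, features, labels, sigma=0.0)
loss_zero = cfm_loss(zero_field, features, labels, sigma=0.0)
```

In this toy setting the oracle field reproduces the target flow exactly, so its loss is zero up to rounding, while the zero field incurs a strictly larger loss. A trained generator would sit between the two and would be updated by the gradient step of Eq. (7).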
During this stage, $\theta_{E_i}$ is fixed and only $\theta_{FG_i}$ is updated, so the generator learns the conditional feature distribution induced by the current extractor.

After the two-stage update, client $i$ uploads only the public modules $(\theta^{r}_{FG_i}, \theta^{r}_{C_i})$, while keeping $\theta^{r}_{E_i}$ local. This design preserves privacy by preventing the server from accessing the module that directly transforms raw inputs into intermediate representations.

B. Server Side: Update Verification and Robust Aggregation

As illustrated in Fig. 1, malicious clients ($i \in \mathcal{M}$) may apply a poisoning function $\mathcal{A}(\cdot)$ to their public parameters before uploading (e.g., inner product manipulation), aiming to degrade the global model accuracy through corrupted updates. To counter such threats, FedFG verifies client updates using synthetic feature probes generated by the aggregated generator and filters outliers prior to aggregation. The key idea is to let $FG_g$ act as a judge that produces class-conditional synthetic features, which are then fed into each client classifier to obtain comparable predictive distributions for distinguishing benign updates from poisoned ones.

The server first forms preliminary global models by weighted averaging:

$$\theta^{r}_{FG_g} \leftarrow \sum_{i=1}^{N} w_i^{(r-1)} \theta^{r}_{FG_i}, \qquad \theta^{r}_{C_g} \leftarrow \sum_{i=1}^{N} w_i^{(r-1)} \theta^{r}_{C_i}, \tag{13}$$

where $w_i^{(r-1)}$ is the server's current aggregation weight for client $i$ (initialized proportionally to local data sizes and later updated using accuracy scores; see below). This preliminary $FG_g^r$ is then used to generate synthetic feature probes for verification. The server samples labels $y \sim \mathrm{Unif}(\{1, \ldots, K\})$ and noise $z \sim p(z)$, and generates synthetic features

$$\tilde{h} = FG_g^r(z, y; \theta^{r}_{FG_g}). \tag{14}$$

For each client classifier $C_i^r$, the server evaluates its correctness on these synthetic pairs:

$$s_i^r = \mathbb{E}_{y, z} \Big[ \mathbb{I}\big( \arg\max C_i^r(\tilde{h}) = y \big) \Big], \tag{15}$$

where $\mathbb{I}(\cdot)$ is the indicator function and the expectation is approximated via Monte Carlo batches. The scores are normalized to obtain the relative accuracy score:

$$\alpha_i^r = \frac{s_i^r}{\sum_{j=1}^{N} s_j^r + \varepsilon}. \tag{16}$$

Using the same synthetic probes $(\tilde{h}, y)$, the server computes the predictive probability vector of client $i$:

$$p_i(\tilde{h}) = \mathrm{softmax}\big( C_i^r(\tilde{h}) \big) \in \Delta^{K-1}. \tag{17}$$

For two clients $i$ and $j$, their predictive discrepancy is measured by the Hellinger distance:

$$d_H(p_i, p_j) = \frac{1}{\sqrt{2}} \big\| \sqrt{p_i} - \sqrt{p_j} \big\|_2. \tag{18}$$

We define the outlier score of client $i$ as the average pairwise distance to all other clients:

$$o_i^r = \frac{1}{N - 1} \sum_{j \ne i} \mathbb{E}_{y, z} \Big[ d_H\big( p_i(\tilde{h}), p_j(\tilde{h}) \big) \Big]. \tag{19}$$

Intuitively, poisoning often yields classifiers whose predictive behavior on shared probes deviates from that of benign clients, leading to larger $o_i^r$.

To robustly identify outliers without assuming a parametric distribution, the server applies a Hampel rule based on the median and the median absolute deviation (MAD):

$$m^r = \mathrm{median}\{ o_i^r \}_{i=1}^{N}, \tag{20}$$

$$\mathrm{MAD}^r = \mathrm{median}\{ | o_i^r - m^r | \}_{i=1}^{N} + \varepsilon, \tag{21}$$

$$\tau^r = m^r + \gamma \cdot 1.4826 \cdot \mathrm{MAD}^r. \tag{22}$$

Here, $\gamma$ is a tunable cutoff in units of the MAD-based scale, and $1.4826$ rescales MAD to be $\sigma$-equivalent under Gaussian noise. Client $i$ is flagged as an outlier if $o_i^r > \tau^r$.

In addition to the outlier test, FedFG filters low-accuracy updates by thresholding the relative accuracy scores: client $i$ is filtered if $\alpha_i^r < \kappa$. The two filters are combined by set union. Let $\mathcal{B}^r$ denote the set of remaining benign clients after filtering. The server renormalizes the accuracy weights over $\mathcal{B}^r$:

$$\bar{\alpha}_i^r = \frac{\alpha_i^r}{\sum_{j \in \mathcal{B}^r} \alpha_j^r + \varepsilon}, \quad i \in \mathcal{B}^r. \tag{23}$$

Finally, the server aggregates only benign updates:

$$\theta^{r}_{FG_g} \leftarrow \sum_{i \in \mathcal{B}^r} \bar{\alpha}_i^r \theta^{r}_{FG_i}, \qquad \theta^{r}_{C_g} \leftarrow \sum_{i \in \mathcal{B}^r} \bar{\alpha}_i^r \theta^{r}_{C_i}. \tag{24}$$

The resulting $(\theta^{r}_{FG_g}, \theta^{r}_{C_g})$ are broadcast to all clients for the next round. Moreover, we set the next-round prior weights as $w_i^{(r)} = \alpha_i^r$, yielding an adaptive, history-aware aggregation scheme. The full procedure is presented in Algorithm 2.

```
Algorithm 2: Server-Side Procedure of FedFG
Require: Number of clients N; communication rounds R; accuracy threshold κ;
         Hampel cutoff γ.

for r = 1, 2, ..., R do
    /* Step 1: Collect client updates */
    Receive {(θ^r_Ci, θ^r_FGi)} for i = 1, ..., N
    /* Step 2: Preliminary aggregation */
    Compute preliminary (θ^r_FGg, θ^r_Cg) using Eq. (13)
    /* Step 3: Synthetic feature probes */
    Sample (z, y) and generate synthetic features h̃ using Eq. (14)
    /* Step 4: Evaluate client updates on probes */
    for i = 1 to N do
        Compute accuracy score s^r_i using Eq. (15)
        Compute relative accuracy score α^r_i using Eq. (16)
        Compute outlier score o^r_i using Eqs. (18) and (19)
    end for
    /* Step 5: Malicious client detection */
    Compute threshold τ^r using Eq. (22)
    B^r ← { i | (o^r_i < τ^r) ∧ (α^r_i > κ) }
    /* Step 6: Accuracy-aware reweighting */
    For i ∈ B^r, compute renormalized weights ᾱ^r_i using Eq. (23)
    Aggregate (θ^r_FGg, θ^r_Cg) over B^r using Eq. (24)
    Update next-round prior weights: w^(r)_i ← α^r_i
    /* Step 7: Broadcast global models */
    Send (θ^r_FGg, θ^r_Cg) to all clients
end for
return θ^R_Cg, θ^R_FGg
```

IV. CONVERGENCE ANALYSIS

We establish a first-order stationarity guarantee for FedFG. Let $\mathcal{B}$ and $\mathcal{M}$ partition the $N$ clients into benign and malicious sets. We stack the server-maintained public parameters as $\theta^r := [\theta^{r}_{C_g}; \theta^{r}_{FG_g}] \in \mathbb{R}^p$ and define each benign client's local objective as $F_i(\theta) := \mathbb{E}_{\xi \sim \mathcal{D}_i}[\ell(\theta; \xi)]$ for $i \in \mathcal{B}$.
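
Before the analysis continues, the server-side filter of Section III-B (Eqs. (16)–(23) and Step 5 of Algorithm 2) can be made concrete with a small standard-library sketch. The function names, the default `gamma = 3.0`, the value of `eps`, and the toy scores are all assumptions chosen for illustration; the paper does not prescribe them here. Note that because the relative scores $\alpha_i^r$ sum to roughly one over $N$ clients, a usable $\kappa$ has to be chosen on that scale.

```python
import math
from statistics import median
from typing import Dict, List, Sequence, Tuple

Probs = Sequence[Sequence[float]]  # one probability vector per synthetic probe


def hellinger(p: Sequence[float], q: Sequence[float]) -> float:
    """Eq. (18): (1 / sqrt(2)) * || sqrt(p) - sqrt(q) ||_2."""
    sq = sum((math.sqrt(a) - math.sqrt(b)) ** 2 for a, b in zip(p, q))
    return math.sqrt(sq) / math.sqrt(2.0)


def outlier_scores(probs: Sequence[Probs]) -> List[float]:
    """Eq. (19): mean Hellinger distance from client i to every other client,
    averaged over the shared probes (probs[i][m] is client i on probe m)."""
    n = len(probs)
    scores = []
    for i in range(n):
        total = 0.0
        for j in range(n):
            if j != i:
                dists = [hellinger(pi, pj) for pi, pj in zip(probs[i], probs[j])]
                total += sum(dists) / len(dists)
        scores.append(total / (n - 1))
    return scores


def hampel_threshold(o: Sequence[float], gamma: float = 3.0,
                     eps: float = 1e-12) -> float:
    """Eqs. (20)-(22): tau = median + gamma * 1.4826 * MAD."""
    m = median(list(o))
    mad = median([abs(x - m) for x in o]) + eps
    return m + gamma * 1.4826 * mad


def select_and_reweight(acc: Sequence[float], o: Sequence[float], kappa: float,
                        gamma: float = 3.0,
                        eps: float = 1e-12) -> Tuple[List[int], Dict[int, float]]:
    """Keep clients passing both tests, then renormalize their weights."""
    total = sum(acc) + eps
    alpha = [s / total for s in acc]                      # Eq. (16)
    tau = hampel_threshold(o, gamma, eps)                  # Eq. (22)
    benign = [i for i in range(len(acc))
              if o[i] < tau and alpha[i] > kappa]          # Algorithm 2, Step 5
    denom = sum(alpha[i] for i in benign) + eps
    weights = {i: alpha[i] / denom for i in benign}        # Eq. (23)
    return benign, weights


# Toy round with four clients; the last one has low accuracy and a large outlier score.
benign, weights = select_and_reweight(
    acc=[0.9, 0.8, 0.85, 0.2], o=[0.10, 0.12, 0.11, 0.60], kappa=0.1)
```

In this toy round the fourth client fails both the Hampel test and the accuracy test, so only the first three clients receive nonzero weight in the aggregation of Eq. (24).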

The accuracy-weighted global objective at round $r$ is

$$\tilde{F}^r(\theta) := \sum_{i \in \mathcal{B}} \bar{\alpha}_i^r F_i(\theta), \tag{25}$$

where $\bar{\alpha}_i^r \ge 0$, $\sum_{i \in \mathcal{B}} \bar{\alpha}_i^r = 1$ are the renormalized accuracy-aware weights from Eq. (23). Let $F^{*,r}(\theta) := \sum_{i \in \mathcal{B}} \bar{\alpha}_{i,*}^r F_i(\theta)$ denote the population-weight counterpart obtained with infinitely many synthetic probes, and let $\mathcal{E}_r := \{\mathcal{B}^r = \mathcal{B}\}$ be the event that the server correctly identifies all benign clients at round $r$.

We introduce the following assumptions required for the analysis. Assumptions IV.1–IV.4 are standard in federated optimization [40], [41], while Assumptions IV.5–IV.6 capture the specific properties of FedFG's verification mechanism.

Assumption IV.1 (Smoothness and boundedness). For each $i \in \mathcal{B}$, $F_i$ is $L$-smooth, lower bounded by $F_{\inf} > -\infty$, and has bounded gradients: $\|\nabla F_i(\theta)\| \le G$ for all $\theta$.

Assumption IV.2 (Stochastic gradients). Each benign client samples unbiased stochastic gradients with bounded variance: $\mathbb{E}[g_i(\theta; \xi)] = \nabla F_i(\theta)$ and $\mathbb{E}\|g_i(\theta; \xi) - \nabla F_i(\theta)\|^2 \le \sigma^2$.

Assumption IV.3 (Bounded heterogeneity). Defining $F_{\mathcal{B}}(\theta) := \frac{1}{|\mathcal{B}|} \sum_{i \in \mathcal{B}} F_i(\theta)$, we have

$$\frac{1}{|\mathcal{B}|} \sum_{i \in \mathcal{B}} \big\| \nabla F_i(\theta) - \nabla F_{\mathcal{B}}(\theta) \big\|^2 \le \zeta^2, \quad \forall \theta. \tag{26}$$

Assumption IV.4 (Local update). Let $Q \ge 1$ denote the number of local SGD steps per round, and let $\eta_r > 0$ be the local learning rate at round $r$. Conditioned on $(\theta^r, \mathcal{E}_r)$, the aggregated benign update $\Delta_{\mathcal{B}}^r := \theta^{r+1} - \theta^r$ satisfies, for constants $B, V \ge 0$ independent of $r$:

$$\Big\| \mathbb{E}\big[ \Delta_{\mathcal{B}}^r \mid \theta^r, \mathcal{E}_r \big] + \eta_r Q \nabla \tilde{F}^r(\theta^r) \Big\| \le B \eta_r^2 Q^2 \zeta, \tag{27}$$

$$\mathbb{E}\big[ \| \Delta_{\mathcal{B}}^r \|^2 \mid \theta^r, \mathcal{E}_r \big] \le V \eta_r^2 \big( Q \sigma^2 + Q^2 \zeta^2 \big). \tag{28}$$

Assumption IV.5 (Robust verification). For all $r$, $\mathbb{P}(\mathcal{E}_r) \ge 1 - \delta_f$ for some $\delta_f \in (0, 1)$. On $\neg\mathcal{E}_r$, the possibly adversarial update satisfies $\mathbb{E}[\|\Delta_{\mathcal{M}}^r\|^2 \mid \neg\mathcal{E}_r] \le G_{\max}^2$.

Assumption IV.6 (Weight estimation and objective stability). Using $S$ i.i.d. synthetic probes, $\mathbb{E}[\|\bar{\alpha}^r - \bar{\alpha}_*^r\|_1^2] \le C_\alpha / S$ for some constant $C_\alpha > 0$.
Moreo ver , E [sup θ | e F r +1 ( θ ) − e F r ( θ ) | ] ≤ ∆ r , with ∆ 0: R := P R − 1 r =0 ∆ r < ∞ . Based on these assumptions, we present two supporting lemmas. Lemma IV .1 (One-step descent) . Under Assumptions IV .1 and IV .4, if η r Q ≤ min { 1 / (2 L ) , 1 } , then on the event E r : E e F r ( θ r +1 ) | θ r , E r ≤ e F r ( θ r ) − c 1 η r Q ∥∇ e F r ( θ r ) ∥ 2 + c 2 η 2 r ( Qσ 2 + Q 2 ζ 2 ) , (29) wher e c 1 = 3 / 4 and c 2 = LV / 2 + B 2 . Pr oof. By L -smoothness and the conditional expectation, E e F r ( θ r +1 ) | θ r , E r ≤ e F r ( θ r ) + ∇ e F r ( θ r ) , E [∆ r B | θ r , E r ] + L 2 E ∥ ∆ r B ∥ 2 | θ r , E r . (30) IEEE TRANSA CTIONS ON DEPEND ABLE AND SECURE COMPUTING 7 Define b r := E [∆ r B | θ r , E r ] + η r Q ∇ e F r ( θ r ) , so that ∥ b r ∥ ≤ B η 2 r Q 2 ζ by (27). The inner product term becomes ∇ e F r ( θ r ) , E [∆ r B | θ r , E r ] = − η r Q ∥∇ e F r ( θ r ) ∥ 2 + ∇ e F r ( θ r ) , b r . (31) Applying Y oung’ s inequality ⟨ a, b ⟩ ≤ α 2 ∥ a ∥ 2 + 1 2 α ∥ b ∥ 2 with α = η r Q/ 2 giv es ∇ e F r ( θ r ) , b r ≤ η r Q 4 ∥∇ e F r ( θ r ) ∥ 2 + 1 η r Q ∥ b r ∥ 2 . (32) Since η r Q ≤ 1 , we hav e 1 η r Q ∥ b r ∥ 2 ≤ B 2 η 3 r Q 3 ζ 2 ≤ B 2 η 2 r Q 2 ζ 2 . Substituting back and combining with the second-moment bound (28) in (30) yields (29). Lemma IV .2 (W eight perturbation) . Under Assumptions IV .1 and IV .6, for all θ and r : E ∥∇ e F r ( θ ) − ∇ F ∗ ,r ( θ ) ∥ 2 ≤ G 2 C α S . (33) Pr oof. By definition, ∇ e F r ( θ ) − ∇ F ∗ ,r ( θ ) = P i ∈B ( ¯ α r i − ¯ α r i, ∗ ) ∇ F i ( θ ) . Under Assumption IV .1, the triangle inequality implies that ∥∇ e F r ( θ ) − ∇ F ∗ ,r ( θ ) ∥ ≤ G ∥ ¯ α r − ¯ α r ∗ ∥ 1 . (34) Squaring both sides and taking expectations, the result follows from Assumption IV .6. W e now state the main con ver gence result. Theorem IV .1 (Conv ergence of F E D F G ) . 
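Under Assumptions IV.1–IV.6 and the condition in Lemma IV.1, for any $R \ge 1$:

$$\frac{1}{\sum_{r=0}^{R-1} \eta_r Q} \sum_{r=0}^{R-1} \eta_r Q\, \mathbb{E}\|\nabla F^{*,r}(\theta^{r})\|^2 \le \underbrace{\frac{C_0}{\sum_{r=0}^{R-1} \eta_r Q}}_{\text{initialization}} + \underbrace{\frac{C_1 \left(Q\sigma^2 + Q^2\zeta^2\right) \sum_{r=0}^{R-1} \eta_r^2}{\sum_{r=0}^{R-1} \eta_r Q}}_{\text{noise \& heterogeneity}} + \underbrace{\frac{C_2 \Delta_{0:R} + C_3 R\, \delta_f}{\sum_{r=0}^{R-1} \eta_r Q}}_{\text{drift \& verification failure}} + \underbrace{\frac{C_4}{S}}_{\text{probe estimation}}, \tag{35}$$

where $C_0 = 2\left(\mathbb{E}[\widetilde{F}^{0}(\theta^{0})] - \widetilde{F}_{\inf}\right)/c_1$ with $\widetilde{F}_{\inf} := \inf_{r,\theta} \widetilde{F}^{r}(\theta)$, and $C_i > 0$ ($i = 1, \ldots, 4$) depend on $L, G, G_{\max}, B, V, C_\alpha$ but not on $R$ or $S$.

Proof. We decompose the expected function value decrease via the law of total expectation:

$$\mathbb{E}\left[\widetilde{F}^{r}(\theta^{r+1})\right] = \mathbb{E}\left[\widetilde{F}^{r}(\theta^{r+1})\, \mathbb{1}_{\mathcal{E}_r}\right] + \mathbb{E}\left[\widetilde{F}^{r}(\theta^{r+1})\, \mathbb{1}_{\neg\mathcal{E}_r}\right]. \tag{36}$$

Correct verification ($\mathcal{E}_r$). Using Lemma IV.1 and the tower property,

$$\mathbb{E}\left[\widetilde{F}^{r}(\theta^{r+1})\, \mathbb{1}_{\mathcal{E}_r}\right] \le \mathbb{E}\left[\widetilde{F}^{r}(\theta^{r})\, \mathbb{1}_{\mathcal{E}_r}\right] - c_1 \eta_r Q\, \mathbb{E}\left[\|\nabla \widetilde{F}^{r}(\theta^{r})\|^2\, \mathbb{1}_{\mathcal{E}_r}\right] + c_2 \eta_r^2 \left(Q\sigma^2 + Q^2\zeta^2\right), \tag{37}$$

where $\mathbb{P}(\mathcal{E}_r) \le 1$ is used to drop the indicator from the last term.

Verification failure ($\neg\mathcal{E}_r$). On $\neg\mathcal{E}_r$, by $L$-smoothness of $\widetilde{F}^{r}$,

$$\widetilde{F}^{r}(\theta^{r+1}) \le \widetilde{F}^{r}(\theta^{r}) + \left\langle \nabla \widetilde{F}^{r}(\theta^{r}),\, \Delta_{M}^{r} \right\rangle + \frac{L}{2}\|\Delta_{M}^{r}\|^2. \tag{38}$$

Then by Cauchy–Schwarz and Assumption IV.1,

$$\left\langle \nabla \widetilde{F}^{r}(\theta^{r}),\, \Delta_{M}^{r} \right\rangle \le \|\nabla \widetilde{F}^{r}(\theta^{r})\|\, \|\Delta_{M}^{r}\| \le G\, \|\Delta_{M}^{r}\|. \tag{39}$$

Under Assumption IV.5, we have

$$\mathbb{E}\left[\widetilde{F}^{r}(\theta^{r+1})\, \mathbb{1}_{\neg\mathcal{E}_r}\right] \le \mathbb{E}\left[\widetilde{F}^{r}(\theta^{r})\, \mathbb{1}_{\neg\mathcal{E}_r}\right] + \left(G\, G_{\max} + \frac{L}{2} G_{\max}^2\right) \mathbb{P}(\neg\mathcal{E}_r) \le \mathbb{E}\left[\widetilde{F}^{r}(\theta^{r})\, \mathbb{1}_{\neg\mathcal{E}_r}\right] + \underbrace{\left(G\, G_{\max} + \frac{L}{2} G_{\max}^2\right)}_{=:\, C_f} \delta_f. \tag{40}$$

Per-round recursion. Adding (37) and (40), and bridging consecutive objectives via the drift bound in Assumption IV.6 (i.e., $\mathbb{E}[\widetilde{F}^{r+1}(\theta^{r+1})] \le \mathbb{E}[\widetilde{F}^{r}(\theta^{r+1})] + \Delta_r$), we obtain

$$\mathbb{E}\left[\widetilde{F}^{r+1}(\theta^{r+1})\right] \le \mathbb{E}\left[\widetilde{F}^{r}(\theta^{r})\right] - c_1 \eta_r Q\, \mathbb{E}\left[\|\nabla \widetilde{F}^{r}(\theta^{r})\|^2\, \mathbb{1}_{\mathcal{E}_r}\right] + c_2 \eta_r^2 \left(Q\sigma^2 + Q^2\zeta^2\right) + C_f \delta_f + \Delta_r. \tag{41}$$

Telescoping. Summing (41) over $r = 0, \ldots, R-1$ and using $\widetilde{F}^{R}(\theta^{R}) \ge \widetilde{F}_{\inf}$:

$$c_1 \sum_{r=0}^{R-1} \eta_r Q\, \mathbb{E}\left[\|\nabla \widetilde{F}^{r}(\theta^{r})\|^2\, \mathbb{1}_{\mathcal{E}_r}\right] \le \mathbb{E}[\widetilde{F}^{0}(\theta^{0})] - \widetilde{F}_{\inf} + c_2 \left(Q\sigma^2 + Q^2\zeta^2\right) \sum_{r=0}^{R-1} \eta_r^2 + R\, C_f \delta_f + \Delta_{0:R}. \tag{42}$$

Removing the indicator. By Assumption IV.1, $\|\nabla \widetilde{F}^{r}(\theta^{r})\|^2\, \mathbb{1}_{\neg\mathcal{E}_r} \le G^2\, \mathbb{1}_{\neg\mathcal{E}_r}$. Hence,

$$\mathbb{E}\left[\|\nabla \widetilde{F}^{r}(\theta^{r})\|^2\, \mathbb{1}_{\mathcal{E}_r}\right] \ge \mathbb{E}\|\nabla \widetilde{F}^{r}(\theta^{r})\|^2 - G^2 \delta_f. \tag{43}$$

Substituting (43) into (42), using $\eta_r Q \le 1$ so that $\sum_{r=0}^{R-1} \eta_r Q \le R$, and letting $C_f' := C_f + c_1 G^2$ absorb the additional term yields

$$\sum_{r=0}^{R-1} \eta_r Q\, \mathbb{E}\|\nabla \widetilde{F}^{r}(\theta^{r})\|^2 \le \frac{1}{c_1}\left(\mathbb{E}[\widetilde{F}^{0}(\theta^{0})] - \widetilde{F}_{\inf}\right) + \frac{c_2}{c_1}\left(Q\sigma^2 + Q^2\zeta^2\right) \sum_{r=0}^{R-1} \eta_r^2 + \frac{C_f' R\, \delta_f}{c_1} + \frac{\Delta_{0:R}}{c_1}. \tag{44}$$

Connecting to the population objective. By the inequality $\|a\|^2 \le 2\|b\|^2 + 2\|a - b\|^2$ and Lemma IV.2,

$$\mathbb{E}\|\nabla F^{*,r}(\theta^{r})\|^2 \le 2\, \mathbb{E}\|\nabla \widetilde{F}^{r}(\theta^{r})\|^2 + \frac{2 G^2 C_\alpha}{S}. \tag{45}$$

Multiplying (45) by $\eta_r Q$, summing over $r = 0, \ldots, R-1$, dividing by $\sum_{r=0}^{R-1} \eta_r Q$, and substituting (44) completes the proof with $C_1 = 2c_2/c_1$, $C_2 = 2/c_1$, $C_3 = 2C_f'/c_1$, and $C_4 = 2G^2 C_\alpha$.

TABLE II
OVERALL COMPARISON OF THE COMPARED METHODS (✓ SUPPORTED, ✗ NOT SUPPORTED)

| Method | FedAvg | DP | FedCG | Median | TrimmedMean | Geometric | FoolsGold | GAN-Filter | DPR-PPFL | FedFG (Ours) |
|---|---|---|---|---|---|---|---|---|---|---|
| Privacy Preservable | ✗ | ✓ | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ | ✓ |
| Model Poisoning Resistible | ✗ | ✗ | ✗ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ |

Remark IV.1 (Convergence rate interpretation). The bound in (35) comprises five interpretable terms: (i) the initial optimality gap, decaying with the total effective step budget; (ii) irreducible noise from stochastic gradients and client heterogeneity; (iii) objective drift caused by evolving aggregation weights; (iv) a penalty for verification failure; and (v) weight estimation error from finite synthetic probes. With a constant step size $\eta_r = \eta = \Theta(1/\sqrt{QR})$, the first two terms yield the standard $O(1/\sqrt{QR})$ rate for nonconvex federated optimization.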
The remaining terms vanish as the weights stabilize ($\Delta_{0:R}/\sqrt{QR} \to 0$), the verification mechanism succeeds with increasing probability ($\delta_f \to 0$), and the probe budget grows ($S \to \infty$), thereby recovering the canonical rate.

V. EXPERIMENTAL ANALYSIS

In this section, we evaluate the performance of FedFG in terms of privacy protection and robustness to poisoning attacks on three representative federated learning datasets.

A. Experiment Settings

1) Datasets and Model: We evaluate FedFG on three standard benchmark datasets: MNIST, FMNIST (Fashion-MNIST), and CIFAR10.

• MNIST [42] is a classic 10-class handwritten digit dataset (0–9) with 60,000 training and 10,000 test grayscale images of size $28 \times 28$. In our experiments, images are resized to $32 \times 32$ to unify the input resolution.
• FMNIST [43] is a balanced 10-class fashion image dataset with 60,000 training and 10,000 test grayscale images of size $28 \times 28$. We also resize images to $32 \times 32$ to ensure a consistent model input.
• CIFAR10 [44] is a widely used 10-class object recognition benchmark containing 50,000 training and 10,000 test RGB images of size $32 \times 32$, with a balanced class distribution.

To simulate realistic non-IID scenarios in FL, we partition the training data across clients using a Dirichlet distribution following prior work [45]. We set $\beta = 0.5$ to distribute data unevenly across clients, resulting in MNIST-0.5, FMNIST-0.5, and CIFAR10-0.5. We further construct MNIST-0.2 (i.e., $\beta = 0.2$) to model more extreme data heterogeneity among clients.

In our experiments, we adopt the canonical LeNet-5 CNN [42] as the backbone model. We treat the first two convolutional layers as the private extractor and the last three fully connected layers as the public classifier.
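As an illustration of the Dirichlet-based non-IID split described above, the following minimal sketch assigns the samples of each class to clients according to proportions drawn from a Dirichlet distribution with concentration $\beta$. The function name `dirichlet_partition`, the fixed seed, and the per-class sampling scheme are assumptions made for illustration; the exact procedure of [45] may differ in details.

```python
import random
from collections import defaultdict


def dirichlet_partition(labels, num_clients, beta, seed=0):
    """Split sample indices across clients, class by class.

    Hypothetical helper: for every class, client shares are drawn from a
    symmetric Dirichlet(beta) distribution, so a smaller beta gives a more
    skewed (more non-IID) label distribution per client.
    """
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    clients = [[] for _ in range(num_clients)]
    for label in sorted(by_class):
        idxs = by_class[label]
        rng.shuffle(idxs)
        # A Dirichlet sample is a vector of independent Gamma(beta, 1)
        # draws divided by their sum.
        draws = [rng.gammavariate(beta, 1.0) for _ in range(num_clients)]
        total = sum(draws) or 1.0
        start, cumulative = 0, 0.0
        for c in range(num_clients):
            cumulative += draws[c] / total
            # The last client takes whatever remains, so no index is lost.
            end = len(idxs) if c == num_clients - 1 else round(cumulative * len(idxs))
            end = max(end, start)
            clients[c].extend(idxs[start:end])
            start = end
    return clients


if __name__ == "__main__":
    toy_labels = [i % 10 for i in range(1000)]
    parts = dirichlet_partition(toy_labels, num_clients=10, beta=0.5)
    print([len(p) for p in parts])
```

Calling the helper with `beta=0.2` instead of `beta=0.5` would, under the same assumptions, produce the more extreme heterogeneity that the MNIST-0.2 setting is meant to model.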
Our flow-matching model architecture is adapted from TorchCFM [38], [46]; specifically, we adjust the vector field dimensionality and step size to match the output dimensions of the extractor and the generator.

2) Baseline Methods: To enable a comprehensive evaluation of both privacy protection and resistance to poisoning attacks, we compare our approach with nine representative state-of-the-art baselines summarized in Table II. Among them, FedAvg [2] serves as the canonical FL benchmark without any explicit defense. The remaining methods are grouped into three categories:

• Privacy-protective defenses, including Differential Privacy (DP) [22], which mitigates data leakage by injecting Gaussian noise into local models (two noise levels: $\sigma^2 = 0.1$ and $\sigma^2 = 0.001$), and FedCG [33], which splits CNNs into a private feature extractor and a classifier and uses cGAN-based imitation for the latter part of the local model.
• Poisoning-resistant defenses, including coordinate-wise Median and TrimmedMean [26], where the server takes the median or removes a fixed fraction of extreme values before averaging; Geometric aggregation [35], which reduces sensitivity to outliers via a geometric-type combination of updates; FoolsGold [15], which down-weights clients exhibiting high gradient similarity across rounds under the assumption of colluding attackers; and GAN-Filter [36], which employs a server-side cGAN to synthesize validation data for detecting and filtering poisoned updates without relying on external datasets.
• Defenses with both capabilities, represented by DPR-PPFL [32], which leverages representational similarity analysis to support asymmetric local models against data inversion while enabling the server to identify benign updates and robustly aggregate them under poisoning.
3) Experimental Environment: All experiments were conducted in Python and PyTorch on a server equipped with four NVIDIA GeForce RTX 4090 GPUs and an Intel(R) Xeon(R) Platinum 8352V CPU @ 2.10 GHz.

B. Evaluation on Privacy Protection Ability

FedFG provides privacy protection by updating the flow-matching generator $FG_i^t$ rather than directly updating the private extractor $E_i^t$. To evaluate its effectiveness, we conduct experiments against gradient-based privacy attacks as follows.

1) Compared Methods: We consider FedAvg [2], DP with different noise levels [22], FedCG [33], and DPR-PPFL [32] as baselines.

2) Attack Setup: We employ two representative gradient inversion attacks to assess the effectiveness of our defense. Deep Leakage from Gradients (DLG) [10] attempts to reconstruct clients' training samples from the model gradients shared with the server. Inverting Gradients (IG) [12] improves upon DLG by matching the observed and reconstructed gradients using a cosine-similarity-based objective, typically yielding higher-quality reconstructions. To strengthen the attacks in our evaluation, we add a total variation (TV) [47] regularization term to the loss function for both methods and apply them with customized hyperparameter settings. Specifically, all attacks use the Adam optimizer; DLG runs for 10,000 iterations and IG runs for 5,000 iterations.

Fig. 2. Comparison of ground truth images and recovered images under DLG and IG attacks. The PSNR (in dB) and SSIM values are listed sequentially below each image. The first row reports results on MNIST, the second row reports results on FMNIST, and the third and fourth rows report results on CIFAR10.

3) Experimental Results: Fig. 2 reports both qualitative reconstructions and quantitative metrics (PSNR/SSIM; lower values indicate stronger privacy protection). The left part corresponds to DLG, and the right part corresponds to IG.
Without any defense, FedAvg exhibits the most severe leakage: reconstructed samples remain visually close to the ground truth, with consistently high PSNR and SSIM. The protection offered by DP strongly depends on the noise level. With a small noise scale ($\sigma^2 = 0.001$), reconstructions are still highly recognizable and the metric reduction is marginal, indicating that the perturbation is insufficient to suppress inversion. Increasing the noise to $\sigma^2 = 0.1$ substantially degrades reconstructed images and leads to a clear drop in PSNR and SSIM. Nevertheless, this protection is not uniform on grayscale datasets: for MNIST and FMNIST, the PSNR under DP ($\sigma^2 = 0.1$) mostly remains in the range of 15–21 dB with SSIM above 0.5, suggesting that structural cues may still be exploitable, and faint contour details can be discerned in the recovered images. DPR-PPFL further disrupts the inversion process, leading to visibly corrupted, blob-like reconstructions with reduced metrics, although some cases still retain non-negligible PSNR and SSIM. In contrast, FedCG and FedFG consistently produce near-noise reconstructions with lower PSNR and SSIM.

Although neither FedCG nor FedFG transmits the extractor, the generator can still act as a prior over the feature distribution for gradient inversion attacks. In FedCG, the cGAN tends to learn a more concentrated conditional feature manifold, which strengthens the prior constraints and yields more discriminative structure for inversion. By contrast, FedFG learns a time-dependent vector field via flow matching and performs ODE-based sampling; the resulting generated distribution is typically smoother, making gradient matching more underconstrained and the reconstruction process less stable. Consequently, under both DLG and IG, FedFG achieves lower reconstruction-quality scores, indicating stronger privacy protection.
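For readers who want to reproduce the flavor of this evaluation, the sketch below shows how PSNR, one of the two reconstruction-quality metrics used above, and the cosine-based gradient-matching term that IG minimizes can be computed. It assumes images and gradients are flattened into lists of floats and that pixel values lie in $[0, 1]$; the function names are illustrative, and the TV regularizer and SSIM are omitted.

```python
import math


def psnr(reference, reconstruction, max_value=1.0):
    """Peak signal-to-noise ratio in dB between two flattened images.

    Lower PSNR means the reconstruction is further from the ground truth,
    i.e. the defense leaks less.
    """
    if not reference or len(reference) != len(reconstruction):
        raise ValueError("inputs must be non-empty and of equal length")
    mse = sum((a - b) ** 2 for a, b in zip(reference, reconstruction)) / len(reference)
    if mse == 0:
        return math.inf
    return 10.0 * math.log10(max_value ** 2 / mse)


def cosine_distance(observed_grad, candidate_grad):
    """1 - cosine similarity between two flattened gradients.

    This is the gradient-matching term of a cosine-similarity-based
    inversion objective; the full attack adds a regularizer such as TV.
    """
    dot = sum(a * b for a, b in zip(observed_grad, candidate_grad))
    norm = math.sqrt(sum(a * a for a in observed_grad)) * math.sqrt(
        sum(b * b for b in candidate_grad)
    )
    if norm == 0:
        raise ValueError("gradients must be non-zero")
    return 1.0 - dot / norm


if __name__ == "__main__":
    print(psnr([0.0, 0.0], [0.1, 0.1]))            # about 20 dB
    print(cosine_distance([1.0, 0.0], [0.0, 1.0]))  # orthogonal gradients
```

Because the cosine term ignores gradient magnitude, it depends only on direction, which is one reason cosine-based matching is generally regarded as more stable than a plain squared-error objective.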
Fig. 3. Outlier scores of malicious and benign clients, together with the dynamic threshold, over communication rounds on MNIST under attack. Panels: (a) SF, (b) IPM. Axes: communication rounds (0–100) versus outlier score.

C. Evaluation on Model Poisoning Resistibility

1) Compared Methods: We compare FedFG with six baselines that are designed to resist model poisoning: Median [26], TrimmedMean [26], Geometric [35], FoolsGold [15], DPR-PPFL [32], and GAN-Filter [36].

2) Attack Setup: To assess robustness, we implement three representative poisoning attacks that cover both gradient-level and model-level adversarial behaviors, as summarized below:

• Sign Flipping (SF) [13]: Malicious clients reverse the sign of their local updates to push the aggregated global update in the opposite direction.
• Inner Product Manipulation (IPM) [14]: Malicious clients manipulate the direction of the aggregated update by making its inner product with the true gradient small or negative.
• Model Poisoning Attack based on Fake clients (MPAF) [16]: Fake clients steer global updates toward an attacker-chosen low-accuracy base model and scale their updates to maximally degrade overall performance.
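To make the first two attacks in the list above concrete, the sketch below simulates them on flat update vectors. It is an illustration under stated assumptions rather than the implementation used in the experiments: the IPM variant shown is the commonly used form in which every malicious client submits $-\varepsilon$ times the mean benign update, and the names `sign_flip`, `ipm_update`, and `eps` are hypothetical. MPAF is omitted because it additionally requires an attacker-chosen base model.

```python
def mean_update(updates):
    """Coordinate-wise mean of a list of flat update vectors."""
    n = len(updates)
    return [sum(coord) / n for coord in zip(*updates)]


def sign_flip(update):
    """Sign Flipping: a malicious client negates its own local update."""
    return [-v for v in update]


def ipm_update(benign_updates, eps):
    """Inner Product Manipulation (assumed common formulation).

    The malicious update is -eps times the benign mean, so its inner
    product with the benign mean is non-positive for eps >= 0.
    """
    return [-eps * v for v in mean_update(benign_updates)]


def inner_product(a, b):
    return sum(x * y for x, y in zip(a, b))


if __name__ == "__main__":
    benign = [[1.0, 2.0], [3.0, 4.0]]
    honest_mean = mean_update(benign)
    malicious = ipm_update(benign, eps=0.5)
    print(sign_flip(benign[0]))
    print(malicious, inner_product(malicious, honest_mean))
```

In this simplified form, a small `eps` keeps the malicious vector short and therefore harder to spot by norm-based checks, which matches the narrower score gap reported for IPM in Fig. 3(b).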
In our implementation, the MAD-based cutoff multiplier $\gamma$ is set to 3, corresponding to the classical three-scale cutoff, and the accuracy score threshold $\kappa$ is empirically set to $\frac{1}{2N}$, where $N$ denotes the number of clients. Following common FL evaluation practice, we instantiate an FL system consisting of 10 clients and one central server. Each client performs local training for 10 epochs using a batch size of 64 and a learning rate of 0.001. We further vary the fraction of malicious clients in $\{10\%, 20\%, 30\%\}$. All experiments run for 100 global communication rounds; unless otherwise specified, adversaries start behaving maliciously from round 20.

Fig. 4. Test-accuracy trajectories of FL defense methods under different distribution settings, with the attack starting at round 20. Panels: (a) MNIST, SF; (b) MNIST, IPM; (c) MNIST, MPAF; (d) MNIST-0.5, SF; (e) MNIST-0.5, IPM; (f) MNIST-0.5, MPAF; (g) MNIST-0.2, SF; (h) MNIST-0.2, IPM; (i) MNIST-0.2, MPAF. Axes: communication rounds versus accuracy. Methods shown: Median, TrimmedMean, Geometric, FoolsGold, DPR-PPFL, GAN-Filter, and FedFG.

3) Preliminary Verification: Fig. 3 provides a preliminary verification of FedFG by analyzing the evolution of clients' outlier scores over 100 global communication rounds on MNIST under SF and IPM, where 30% of participating clients are malicious. In this stress test, adversaries launch attacks from communication round 0 to evaluate the detection mechanism throughout the entire training trajectory.

As shown in Fig. 3(a), under SF, malicious clients consistently exhibit substantially larger and more volatile outlier scores than benign clients across rounds. The MAD-based threshold adapts to the score statistics while remaining above the benign maximum and below the malicious minimum, thereby maintaining a clear separation margin. Under IPM in Fig. 3(b), the gap between malicious and benign scores is comparatively narrower, reflecting the more subtle manipulation pattern of IPM; nevertheless, the threshold remains between the maximum benign score and the minimum malicious score over the entire training process. This persistent ordering indicates that the proposed score-and-threshold mechanism can reliably distinguish malicious updates from benign ones even when attacks start from round 0.

Overall, Fig.
3 shows that the MAD-driven dynamic threshold effectively tracks the evolving score distribution and continuously separates malicious clients from benign clients in both SF and IPM scenarios. This enables FedFG to detect and filter compromised clients on the fly, providing an initial empirical validation of its robustness and supporting its use as a robust aggregation component.

TABLE III
PERFORMANCE (ACC) UNDER DIFFERENT ATTACK RATES $\epsilon$ ON MNIST, FMNIST AND CIFAR10

| Dataset | Method | SF ($\epsilon$=10%) | IPM ($\epsilon$=10%) | MPAF ($\epsilon$=10%) | SF ($\epsilon$=20%) | IPM ($\epsilon$=20%) | MPAF ($\epsilon$=20%) | SF ($\epsilon$=30%) | IPM ($\epsilon$=30%) | MPAF ($\epsilon$=30%) |
|---|---|---|---|---|---|---|---|---|---|---|
| MNIST | FedAvg | 0.1742 | 0.8949 | 0.0964 | 0.0950 | 0.1050 | 0.0960 | 0.0831 | 0.0957 | 0.0954 |
| MNIST | Median | 0.9362 | 0.9422 | 0.9418 | 0.9013 | 0.9390 | 0.9408 | 0.8166 | 0.9383 | 0.9363 |
| MNIST | TrimmedMean | 0.9118 | 0.9413 | 0.9418 | 0.8655 | 0.9390 | 0.9400 | 0.7857 | 0.9386 | 0.9357 |
| MNIST | Geometric | 0.1104 | 0.1104 | 0.1104 | 0.1113 | 0.1113 | 0.1113 | 0.1137 | 0.1137 | 0.1137 |
| MNIST | FoolsGold | 0.7073 | 0.5596 | 0.4802 | 0.8863 | 0.5230 | 0.5115 | 0.9060 | 0.4377 | 0.6969 |
| MNIST | DPR-PPFL | 0.9416 | 0.9378 | 0.9416 | 0.9420 | 0.9085 | 0.9420 | 0.9417 | 0.0951 | 0.9417 |
| MNIST | GAN-Filter | 0.9451 | 0.9400 | 0.0964 | 0.9435 | 0.9293 | 0.0960 | 0.9434 | 0.9100 | 0.0383 |
| MNIST | FedFG (Ours) | 0.9493 | 0.9496 | 0.9558 | 0.9490 | 0.9490 | 0.9558 | 0.9466 | 0.9463 | 0.9551 |
| FMNIST | FedAvg | 0.3631 | 0.8122 | 0.0953 | 0.0988 | 0.1035 | 0.0963 | 0.0983 | 0.0983 | 0.0966 |
| FMNIST | Median | 0.8113 | 0.8227 | 0.8249 | 0.7385 | 0.8238 | 0.8165 | 0.3106 | 0.8217 | 0.8146 |
| FMNIST | TrimmedMean | 0.7973 | 0.8273 | 0.8273 | 0.7320 | 0.8248 | 0.8200 | 0.6746 | 0.8180 | 0.8163 |
| FMNIST | Geometric | 0.7967 | 0.8253 | 0.8204 | 0.7055 | 0.8233 | 0.8128 | 0.5514 | 0.8226 | 0.8057 |
| FMNIST | FoolsGold | 0.2022 | 0.5924 | 0.4873 | 0.7733 | 0.5733 | 0.5945 | 0.7994 | 0.4834 | 0.5426 |
| FMNIST | DPR-PPFL | 0.8300 | 0.7862 | 0.8322 | 0.8273 | 0.8178 | 0.8303 | 0.8337 | 0.0983 | 0.8326 |
| FMNIST | GAN-Filter | 0.8311 | 0.8273 | 0.0953 | 0.8300 | 0.7803 | 0.0963 | 0.8286 | 0.7420 | 0.0966 |
| FMNIST | FedFG (Ours) | 0.8429 | 0.8429 | 0.8449 | 0.8430 | 0.8430 | 0.8463 | 0.8417 | 0.8417 | 0.8423 |
| CIFAR10 | FedAvg | 0.0949 | 0.4473 | 0.1033 | 0.0953 | 0.1053 | 0.1053 | 0.0963 | 0.1034 | 0.1063 |
| CIFAR10 | Median | 0.4893 | 0.5104 | 0.5084 | 0.4040 | 0.5240 | 0.4958 | 0.2840 | 0.5034 | 0.4866 |
| CIFAR10 | TrimmedMean | 0.4442 | 0.5096 | 0.5047 | 0.3993 | 0.5185 | 0.4980 | 0.2349 | 0.4989 | 0.4780 |
| CIFAR10 | Geometric | 0.4587 | 0.5176 | 0.5020 | 0.3073 | 0.5130 | 0.4853 | 0.1251 | 0.4883 | 0.4780 |
| CIFAR10 | FoolsGold | 0.1769 | 0.1929 | 0.1400 | 0.2820 | 0.2680 | 0.2148 | 0.3531 | 0.2894 | 0.2606 |
| CIFAR10 | DPR-PPFL | 0.5171 | 0.5136 | 0.5187 | 0.5070 | 0.5123 | 0.5218 | 0.1060 | 0.0960 | 0.5220 |
| CIFAR10 | GAN-Filter | 0.5147 | 0.5053 | 0.3484 | 0.5108 | 0.4785 | 0.1053 | 0.5146 | 0.3143 | 0.1063 |
| CIFAR10 | FedFG (Ours) | 0.5611 | 0.5456 | 0.5327 | 0.5558 | 0.5478 | 0.5570 | 0.5234 | 0.5631 | 0.5571 |

4) Experimental Results: Table III summarizes the results on IID MNIST, FMNIST, and CIFAR10. FedFG achieves the highest accuracy across all attack types and malicious-client fractions. On MNIST, FedFG remains stable with an accuracy of 0.946–0.956 across all settings, including $\epsilon = 30\%$ under the strong MPAF attack. Conventional robust aggregators, however, degrade to varying degrees. Median and TrimmedMean exhibit a clear accuracy drop under SF as $\epsilon$ increases. FoolsGold is sensitive to the attack type and exhibits instability.
Geometric aggregation nearly collapses to random-guessing performance on MNIST (approximately 0.11) across all attacks, indicating high sensitivity under the considered threat model and training configuration. Similar trends are observed on FMNIST, where FedFG achieves an accuracy of approximately 0.84 across different attacks and values of $\epsilon$, outperforming both Median and TrimmedMean while remaining robust in settings where GAN-Filter and DPR-PPFL fail. On CIFAR10, FedFG maintains an accuracy of 0.52–0.56 across all attacks and malicious fractions, exceeding the best-performing baseline in every setting.

Table IV reports results under Dirichlet partitioning ($\beta = 0.5$), which introduces client heterogeneity and further complicates robust aggregation. On MNIST-0.5, FedFG maintains high accuracy (approximately 0.90) across all attacks and values of $\epsilon$. Several baselines, however, become fragile. Median collapses to 0.1002 under IPM with $\epsilon = 10\%$ and drops to 0.1051 under MPAF with $\epsilon = 10\%$, while Geometric aggregation is likewise unstable. DPR-PPFL and GAN-Filter perform well under specific configurations (for example, on MNIST-0.5 with $\epsilon = 20\%$, DPR-PPFL still reaches 0.9628 under MPAF, and GAN-Filter exceeds 0.91 under SF and IPM) but can fail severely under others. When $\epsilon = 30\%$, for instance, DPR-PPFL collapses to near-random performance, and GAN-Filter fails under nearly all MPAF settings. These failures point to practical limitations of each method. Because DPR-PPFL relies on similarity-based filtering, its reliability can degrade under non-IID heterogeneity. GAN-Filter, on the other hand, depends on an unpoisoned global model to guide the server-side generator in producing trustworthy samples and thus can be undermined by multi-round, consistent attacks such as MPAF.

On FMNIST-0.5, FedFG achieves an accuracy of 0.760–0.781 across all attacks and values of $\epsilon$, outperforming the other methods in all but one setting (Median reaches 0.7776 under IPM with $\epsilon = 10\%$).
Under SF with larger $\epsilon$, many defenses degrade rapidly, whereas FedFG remains substantially more stable. On CIFAR10-0.5, FedFG attains the best results in all settings. Even with $\epsilon = 30\%$, FedFG remains above 0.51 under all three attacks, while other defenses are often below 0.45 or fluctuate markedly. Taken together, these results confirm that FedFG handles complex visual data, strong client heterogeneity, and adversarial poisoning more reliably than existing methods.

TABLE IV
PERFORMANCE (ACC) UNDER DIFFERENT ATTACK RATES $\epsilon$ ON MNIST-0.5, FMNIST-0.5 AND CIFAR10-0.5

| Dataset | Method | SF ($\epsilon$=10%) | IPM ($\epsilon$=10%) | MPAF ($\epsilon$=10%) | SF ($\epsilon$=20%) | IPM ($\epsilon$=20%) | MPAF ($\epsilon$=20%) | SF ($\epsilon$=30%) | IPM ($\epsilon$=30%) | MPAF ($\epsilon$=30%) |
|---|---|---|---|---|---|---|---|---|---|---|
| MNIST-0.5 | FedAvg | 0.0922 | 0.3469 | 0.1022 | 0.0920 | 0.1063 | 0.1020 | 0.0900 | 0.0900 | 0.1049 |
| MNIST-0.5 | Median | 0.9089 | 0.1002 | 0.1051 | 0.6008 | 0.8758 | 0.1063 | 0.3831 | 0.8389 | 0.8520 |
| MNIST-0.5 | TrimmedMean | 0.8447 | 0.9098 | 0.9182 | 0.5683 | 0.8258 | 0.8703 | 0.2886 | 0.8343 | 0.8497 |
| MNIST-0.5 | Geometric | 0.1051 | 0.0920 | 0.0920 | 0.4540 | 0.1063 | 0.1063 | 0.1197 | 0.1066 | 0.1066 |
| MNIST-0.5 | FoolsGold | 0.9151 | 0.2040 | 0.7380 | 0.8923 | 0.8128 | 0.8993 | 0.8700 | 0.8366 | 0.8600 |
| MNIST-0.5 | DPR-PPFL | 0.9211 | 0.3907 | 0.9271 | 0.0935 | 0.8030 | 0.9628 | 0.0934 | 0.0900 | 0.0934 |
| MNIST-0.5 | GAN-Filter | 0.9169 | 0.9242 | 0.1022 | 0.9175 | 0.9190 | 0.1020 | 0.8634 | 0.8354 | 0.0740 |
| MNIST-0.5 | FedFG (Ours) | 0.9242 | 0.9240 | 0.9238 | 0.9023 | 0.9045 | 0.9065 | 0.8983 | 0.8991 | 0.8971 |
| FMNIST-0.5 | FedAvg | 0.6453 | 0.7498 | 0.1022 | 0.0998 | 0.2303 | 0.0998 | 0.1011 | 0.1011 | 0.1011 |
| FMNIST-0.5 | Median | 0.7080 | 0.7776 | 0.7464 | 0.6073 | 0.7530 | 0.7410 | 0.6529 | 0.6829 | 0.6577 |
| FMNIST-0.5 | TrimmedMean | 0.6318 | 0.7609 | 0.7609 | 0.4625 | 0.7285 | 0.7300 | 0.2780 | 0.6731 | 0.7023 |
| FMNIST-0.5 | Geometric | 0.7396 | 0.7540 | 0.7540 | 0.5885 | 0.7508 | 0.7398 | 0.2331 | 0.7151 | 0.7151 |
| FMNIST-0.5 | FoolsGold | 0.5664 | 0.4960 | 0.6369 | 0.4960 | 0.3130 | 0.6395 | 0.7083 | 0.7214 | 0.7249 |
| FMNIST-0.5 | DPR-PPFL | 0.7516 | 0.7413 | 0.7520 | 0.7450 | 0.7413 | 0.7473 | 0.7377 | 0.3549 | 0.7354 |
| FMNIST-0.5 | GAN-Filter | 0.7518 | 0.7567 | 0.1022 | 0.7095 | 0.7433 | 0.0998 | 0.7274 | 0.7463 | 0.1011 |
| FMNIST-0.5 | FedFG (Ours) | 0.7620 | 0.7620 | 0.7780 | 0.7603 | 0.7608 | 0.7675 | 0.7731 | 0.7731 | 0.7806 |
| CIFAR10-0.5 | FedAvg | 0.1124 | 0.3542 | 0.0967 | 0.0965 | 0.2978 | 0.0963 | 0.0951 | 0.1037 | 0.0971 |
| CIFAR10-0.5 | Median | 0.4318 | 0.4696 | 0.4798 | 0.3298 | 0.4680 | 0.4690 | 0.1803 | 0.4006 | 0.3991 |
| CIFAR10-0.5 | TrimmedMean | 0.3593 | 0.3971 | 0.4416 | 0.2173 | 0.4295 | 0.4275 | 0.1174 | 0.3683 | 0.4326 |
| CIFAR10-0.5 | Geometric | 0.4233 | 0.4627 | 0.4671 | 0.3405 | 0.4680 | 0.4570 | 0.1506 | 0.4146 | 0.4220 |
| CIFAR10-0.5 | FoolsGold | 0.2818 | 0.2136 | 0.2556 | 0.2233 | 0.2650 | 0.4535 | 0.4149 | 0.4177 | 0.4166 |
| CIFAR10-0.5 | DPR-PPFL | 0.4809 | 0.2571 | 0.4858 | 0.4738 | 0.2688 | 0.4913 | 0.4486 | 0.2731 | 0.4440 |
| CIFAR10-0.5 | GAN-Filter | 0.4442 | 0.4138 | 0.0791 | 0.4585 | 0.4913 | 0.0753 | 0.4406 | 0.4363 | 0.0971 |
| CIFAR10-0.5 | FedFG (Ours) | 0.5080 | 0.4827 | 0.5042 | 0.5263 | 0.5143 | 0.5188 | 0.5163 | 0.5154 | 0.5223 |

Fig. 4 shows the test-accuracy trajectories over 100 rounds under three distribution settings: IID MNIST, non-IID MNIST-0.5, and extremely non-IID MNIST-0.2. The vertical line at round 20 marks the onset of the attack.

For IID MNIST (Fig. 4(a)–(c)), coordinate-wise robust methods are generally effective under IPM and MPAF but become unstable under SF as the adversarial contribution increases, consistent with the results in Table III. FoolsGold exhibits large fluctuations and is strongly affected by attacks. FedFG, in contrast, remains among the top-performing methods throughout training, suggesting that its filtering and reweighting mechanisms suppress poisoned updates while preserving useful learning signals. For MNIST-0.5 (Fig. 4(d)–(f)), client heterogeneity amplifies the damage caused by attacks. Multiple baselines drop noticeably after round 20 and recover slowly, suggesting that even a small number of poisoned updates can have a disproportionate impact under heterogeneous conditions. FedFG maintains a smooth, high-accuracy trajectory, indicating that the similarity-based filtering remains effective under Dirichlet partitioning despite the naturally increased dispersion among benign clients. For the extreme MNIST-0.2 setting (Fig. 4(g)–(i)), the task becomes even more challenging, as defenses that rely on simplistic assumptions are more likely to misclassify benign clients or fail during aggregation. Several baselines collapse to low accuracy or remain unstable for extended periods, whereas FedFG sustains high accuracy with relatively small fluctuations. These results suggest that our distribution-distance score remains discriminative even under severe heterogeneity, allowing FedFG to maintain stability across diverse client distributions and adversarial conditions.

Fig. 5. Test accuracy of FL methods on FMNIST and FMNIST-0.5 under SF and IPM as the proportion of malicious clients increases. Panels: (a) FMNIST, SF; (b) FMNIST, IPM; (c) FMNIST-0.5, SF; (d) FMNIST-0.5, IPM. Axes: ratio of malicious clients (0–50%) versus accuracy. Methods shown: FedAvg, Median, Geometric, FoolsGold, DPR-PPFL, GAN-Filter, and FedFG.

5) Further Robustness Analysis: In this subsection, we vary two factors to stress-test FedFG under more demanding conditions: the proportion of malicious clients and the timing of the attack onset.

a) Impact of malicious-client fraction: Fig. 5 reports accuracy on FMNIST and FMNIST-0.5 under SF and IPM as $\epsilon$ increases from 0% to 50%. Most baselines degrade rapidly as $\epsilon$ grows, and some collapse to near-random performance at moderate-to-high malicious fractions.
Coordinate-wise robust aggregators and similarity-based methods are particularly vulnerable, as they can fail abruptly when attackers dominate the aggregation statistics or when too few benign clients remain to form a stable consensus. FedFG, in contrast, exhibits little to no accuracy loss and maintains high accuracy across the entire range of $\epsilon$. Rather than relying on fixed trimming thresholds or brittle clustering assumptions, FedFG combines a generator-driven accuracy score with distribution-similarity detection to identify inconsistent behaviors. These two signals complement each other and together preserve robustness as $\epsilon$ increases.

Fig. 6. Accuracy comparison of Median, DPR-PPFL, GAN-Filter, and FedFG on MNIST and MNIST-0.5 under SF and IPM when the attack starts at round 0. Panels: (a) SF, MNIST; (b) SF, MNIST-0.5; (c) IPM, MNIST; (d) IPM, MNIST-0.5. Axes: attack ratio (10%, 20%, 30%) versus accuracy.

b) Robustness to attacks starting at round 0: In the default setting, attacks begin at round 20, allowing the system to establish a benign warm-up trajectory. We further consider a stronger adversary in which poisoning begins at round 0, thereby preventing any such initialization. Fig. 6 compares representative baselines (Median, DPR-PPFL, and GAN-Filter) with FedFG on MNIST and MNIST-0.5 under SF and IPM.
When poisoning is present from the outset, several baselines suffer severe accuracy collapse, especially on heterogeneous MNIST-0.5, where early optimization dynamics are more fragile. GAN-Filter is particularly affected because it depends on an unpoisoned global model to guide the server-side generator and thus cannot cope with attacks in the initial rounds. In our experiments, FedFG fails in only one case (MNIST-0.5, SF, ϵ = 30%) and maintains high accuracy in all remaining scenarios. This is consistent with the preliminary validation in Fig. 3, where the MAD-based dynamic threshold effectively separates malicious clients from benign ones throughout training, even when attacks begin in the first communication rounds. FedFG therefore serves not only as a post-attack defense but also as a viable training strategy when poisoning is present from the start.

Overall, the robustness of FedFG rests on two complementary signals, both computed on synthetic features produced by the global generator. The first is a probability-distribution distance that measures how each client's predictive distribution deviates from the majority; a MAD-driven adaptive threshold then identifies and filters anomalous clients. The second is an accuracy score that evaluates each client classifier on label-conditioned synthetic features and down-weights unreliable clients accordingly. This design tolerates both gradient-level and model-level attacks while preserving split-learning-style privacy, as the private feature extractor remains local. Across multiple datasets and heterogeneity settings, FedFG outperforms representative robust aggregation and privacy-preserving baselines, providing a federated optimization framework that combines privacy preservation with strong resistance to poisoning.

VI. CONCLUSION

In this paper, we propose FedFG, a robust federated learning framework based on flow-matching generation that jointly preserves client privacy and defends against sophisticated poisoning attacks. FedFG decouples each client model into a private extractor and a public classifier, and uses a flow-matching generator to replace the extractor during server communication, thereby reducing privacy leakage from shared updates. On the server side, FedFG verifies client updates using synthetic feature probes and performs robust aggregation via outlier filtering and accuracy-aware reweighting. Extensive experiments under both IID and non-IID settings show that FedFG consistently outperforms state-of-the-art defenses under multiple Byzantine attack strategies. Despite these promising results, FedFG introduces additional computation and communication overhead due to generator training and probe-based verification, which may be challenging for large-scale client populations. Future work will focus on improving efficiency for large-scale deployments and extending the framework to more adaptive threats and more complex real-world scenarios.

REFERENCES

[1] K. Bonawitz, V. Ivanov, B. Kreuter, A. Marcedone, H. B. McMahan, S. Patel, D. Ramage, A. Segal, and K. Seth, “Practical secure aggregation for privacy-preserving machine learning,” in Proceedings of the 2017 ACM SIGSAC Conference on Computer and Communications Security, 2017, pp. 1175–1191.
[2] B. McMahan, E. Moore, D. Ramage, S. Hampson, and B. A. y Arcas, “Communication-efficient learning of deep networks from decentralized data,” in Artificial intelligence and statistics. PMLR, 2017, pp. 1273–1282.
[3] P. Regulation, “Regulation (eu) 2016/679 of the european parliament and of the council,” Regulation (eu), vol. 679, no. 2016, pp. 10–13, 2016.
[4] A. Act et al., “Health insurance portability and accountability act of 1996,” Public law, vol. 104, p. 191, 1996.
[5] N.
Rieke, J. Hancox, W. Li, F. Milletari, H. R. Roth, S. Albarqouni, S. Bakas, M. N. Galtier, B. A. Landman, K. Maier-Hein et al., “The future of digital health with federated learning,” NPJ digital medicine, vol. 3, no. 1, p. 119, 2020.
[6] D. C. Nguyen, M. Ding, P. N. Pathirana, A. Seneviratne, J. Li, and H. V. Poor, “Federated learning for internet of things: A comprehensive survey,” IEEE communications surveys & tutorials, vol. 23, no. 3, pp. 1622–1658, 2021.
[7] B. Dash, P. Sharma, and A. Ali, “Federated learning for privacy-preserving: A review of pii data analysis in fintech,” International Journal of Software Engineering & Applications (IJSEA), vol. 13, no. 4, 2022.
[8] J. Liu, Y. Li, M. Zhao, L. Liu, and N. Kumar, “Epffl: enhancing privacy and fairness in federated learning for distributed e-healthcare data sharing services,” IEEE Transactions on Dependable and Secure Computing, vol. 22, no. 2, pp. 1239–1252, 2024.
[9] C. Fan, J. Cui, H. Jin, H. Zhong, I. Bolodurina, and D. He, “Robust intrusion detection system for vehicular networks: A federated learning approach based on representative client selection,” IEEE Transactions on Mobile Computing, vol. 24, no. 11, pp. 11 942–11 956, 2025.
[10] L. Zhu, Z. Liu, and S. Han, “Deep leakage from gradients,” Advances in neural information processing systems, vol. 32, 2019.
[11] B. Zhao, K. R. Mopuri, and H. Bilen, “idlg: Improved deep leakage from gradients,” arXiv preprint, 2020.
[12] J. Geiping, H. Bauermeister, H. Dröge, and M. Moeller, “Inverting gradients-how easy is it to break privacy in federated learning?” Advances in neural information processing systems, vol. 33, pp. 16 937–16 947, 2020.
[13] S. P. Karimireddy, L. He, and M. Jaggi, “Learning from history for byzantine robust optimization,” in International conference on machine learning. PMLR, 2021, pp. 5311–5319.
[14] C. Xie, O. Koyejo, and I.
Gupta, “Fall of empires: Breaking byzantine-tolerant sgd by inner product manipulation,” in Uncertainty in artificial intelligence. PMLR, 2020, pp. 261–270.
[15] M. Fang, X. Cao, J. Jia, and N. Gong, “Local model poisoning attacks to Byzantine-Robust federated learning,” in 29th USENIX Security Symposium (USENIX Security 20). USENIX Association, 2020, pp. 1605–1622.
[16] X. Cao and N. Z. Gong, “Mpaf: Model poisoning attacks to federated learning based on fake clients,” in Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2022, pp. 3396–3404.
[17] Y. Xie, M. Fang, and N. Z. Gong, “Model poisoning attacks to federated learning via multi-round consistency,” in Proceedings of the Computer Vision and Pattern Recognition Conference, 2025, pp. 15 454–15 463.
[18] C. Hu and B. Li, “Maskcrypt: Federated learning with selective homomorphic encryption,” IEEE Transactions on Dependable and Secure Computing, vol. 22, no. 1, pp. 221–233, 2024.
[19] Y. Li, N. Yan, J. Chen, X. Wang, J. Hong, K. He, W. Wang, and B. Li, “Fedphe: A secure and efficient federated learning via packed homomorphic encryption,” IEEE Transactions on Dependable and Secure Computing, vol. 22, no. 5, pp. 5448–5463, 2025.
[20] C. Dong, J. Weng, M. Li, J.-N. Liu, Z. Liu, Y. Cheng, and S. Yu, “Privacy-preserving and byzantine-robust federated learning,” IEEE Transactions on Dependable and Secure Computing, vol. 21, no. 2, pp. 889–904, 2023.
[21] J. So, B. Güler, and A. S. Avestimehr, “Byzantine-resilient secure federated learning,” IEEE Journal on Selected Areas in Communications, vol. 39, no. 7, pp. 2168–2181, 2020.
[22] K. Wei, J. Li, M. Ding, C. Ma, H. H. Yang, F. Farokhi, S. Jin, T. Q. Quek, and H. V. Poor, “Federated learning with differential privacy: Algorithms and performance analysis,” IEEE transactions on information forensics and security, vol. 15, pp.
3454–3469, 2020.
[23] R. Xue, K. Xue, B. Zhu, X. Luo, T. Zhang, Q. Sun, and J. Lu, “Differentially private federated learning with an adaptive noise mechanism,” IEEE Transactions on Information Forensics and Security, vol. 19, pp. 74–87, 2023.
[24] K. Wei, J. Li, C. Ma, M. Ding, W. Chen, J. Wu, M. Tao, and H. V. Poor, “Personalized federated learning with differential privacy and convergence guarantee,” IEEE Transactions on Information Forensics and Security, vol. 18, pp. 4488–4503, 2023.
[25] P. Blanchard, E. M. El Mhamdi, R. Guerraoui, and J. Stainer, “Machine learning with adversaries: Byzantine tolerant gradient descent,” Advances in neural information processing systems, vol. 30, 2017.
[26] D. Yin, Y. Chen, R. Kannan, and P. Bartlett, “Byzantine-robust distributed learning: Towards optimal statistical rates,” in International conference on machine learning. PMLR, 2018, pp. 5650–5659.
[27] X. Cao, M. Fang, J. Liu, and N. Z. Gong, “Fltrust: Byzantine-robust federated learning via trust bootstrapping,” arXiv preprint arXiv:2012.13995, 2020.
[28] Y. Zhao, J. Chen, J. Zhang, D. Wu, M. Blumenstein, and S. Yu, “Detecting and mitigating poisoning attacks in federated learning using generative adversarial networks,” Concurrency and Computation: Practice and Experience, vol. 34, no. 7, p. e5906, 2022.
[29] M. Lam, G.-Y. Wei, D. Brooks, V. J. Reddi, and M. Mitzenmacher, “Gradient disaggregation: Breaking privacy in federated learning by reconstructing the user participant matrix,” in International Conference on Machine Learning. PMLR, 2021, pp. 5959–5968.
[30] X. Yang, W. Huang, and M. Ye, “Dynamic personalized federated learning with adaptive differential privacy,” Advances in Neural Information Processing Systems, vol. 36, pp. 72 181–72 192, 2023.
[31] G. Chen, S. Liu, X. Yang, T. Wang, L. You, and F.
Xia, “Fedrsm: Representational-similarity-based secured model uploading for federated learning,” in 2023 IEEE 22nd International Conference on Trust, Security and Privacy in Computing and Communications (TrustCom), 2023, pp. 189–196.
[32] G. Chen, X. Li, L. You, A. M. Abdelmoniem, Y. Zhang, and C. Yuen, “A data poisoning resistible and privacy protection federated-learning mechanism for ubiquitous iot,” IEEE Internet of Things Journal, vol. 12, no. 8, pp. 10 736–10 750, 2025.
[33] Y. Wu, Y. Kang, J. Luo, Y. He, L. Fan, R. Pan, and Q. Yang, “Fedcg: Leverage conditional gan for protecting privacy and maintaining competitive performance in federated learning,” in Proceedings of the Thirty-First International Joint Conference on Artificial Intelligence, 2022, pp. 2334–2340.
[34] Y. Ma, Y. Yao, and X. Xu, “Ppidsg: A privacy-preserving image distribution sharing scheme with gan in federated learning,” in Proceedings of the AAAI Conference on Artificial Intelligence, vol. 38, no. 13, 2024, pp. 14 272–14 280.
[35] K. Pillutla, S. M. Kakade, and Z. Harchaoui, “Robust aggregation for federated learning,” IEEE Transactions on Signal Processing, vol. 70, pp. 1142–1154, 2022.
[36] U. Zafar, A. Teixeira, and S. Toor, “Robust federated learning against poisoning attacks: a gan-based defense framework,” arXiv preprint arXiv:2503.20884, 2025.
[37] Y. Lipman, R. T. Q. Chen, H. Ben-Hamu, M. Nickel, and M. Le, “Flow matching for generative modeling,” in The Eleventh International Conference on Learning Representations, 2023.
[38] A. Tong, K. Fatras, N. Malkin, G. Huguet, Y. Zhang, J. Rector-Brooks, G. Wolf, and Y. Bengio, “Improving and generalizing flow-based generative models with minibatch optimal transport,” Transactions on Machine Learning Research, 2024.
[39] X. Liu, C. Gong, and Q. Liu, “Flow straight and fast: Learning to generate and transfer data with rectified flow,” arXiv preprint arXiv:2209.03003, 2022.
[40] X.
Li, K. Huang, W. Yang, S. Wang, and Z. Zhang, “On the convergence of fedavg on non-iid data,” in International Conference on Learning Representations, 2020.
[41] S. P. Karimireddy, S. Kale, M. Mohri, S. Reddi, S. Stich, and A. T. Suresh, “Scaffold: Stochastic controlled averaging for federated learning,” in International conference on machine learning. PMLR, 2020, pp. 5132–5143.
[42] Y. Lecun, L. Bottou, Y. Bengio, and P. Haffner, “Gradient-based learning applied to document recognition,” Proceedings of the IEEE, vol. 86, no. 11, pp. 2278–2324, 1998.
[43] H. Xiao, K. Rasul, and R. Vollgraf, “Fashion-mnist: a novel image dataset for benchmarking machine learning algorithms,” arXiv preprint arXiv:1708.07747, 2017.
[44] A. Krizhevsky, G. Hinton et al., “Learning multiple layers of features from tiny images,” 2009.
[45] T.-M. H. Hsu, H. Qi, and M. Brown, “Measuring the effects of non-identical data distribution for federated visual classification,” arXiv preprint arXiv:1909.06335, 2019.
[46] A. Tong, N. Malkin, K. Fatras, L. Atanackovic, Y. Zhang, G. Huguet, G. Wolf, and Y. Bengio, “Simulation-free schrödinger bridges via score and flow matching,” arXiv preprint arXiv:2307.03672, 2023.
[47] L. I. Rudin, S. Osher, and E. Fatemi, “Nonlinear total variation based noise removal algorithms,” Physica D: nonlinear phenomena, vol. 60, no. 1-4, pp. 259–268, 1992.
