CDH-Bench: A Commonsense-Driven Hallucination Benchmark for Evaluating Visual Fidelity in Vision-Language Models

Vision-language models (VLMs) achieve strong performance on many benchmarks, yet a basic reliability question remains underexplored: when visual evidence conflicts with commonsense, do models follow what is shown or what commonsense suggests? A chara…

Authors: Kesheng Chen, Yamin Hu, Qi Zhou

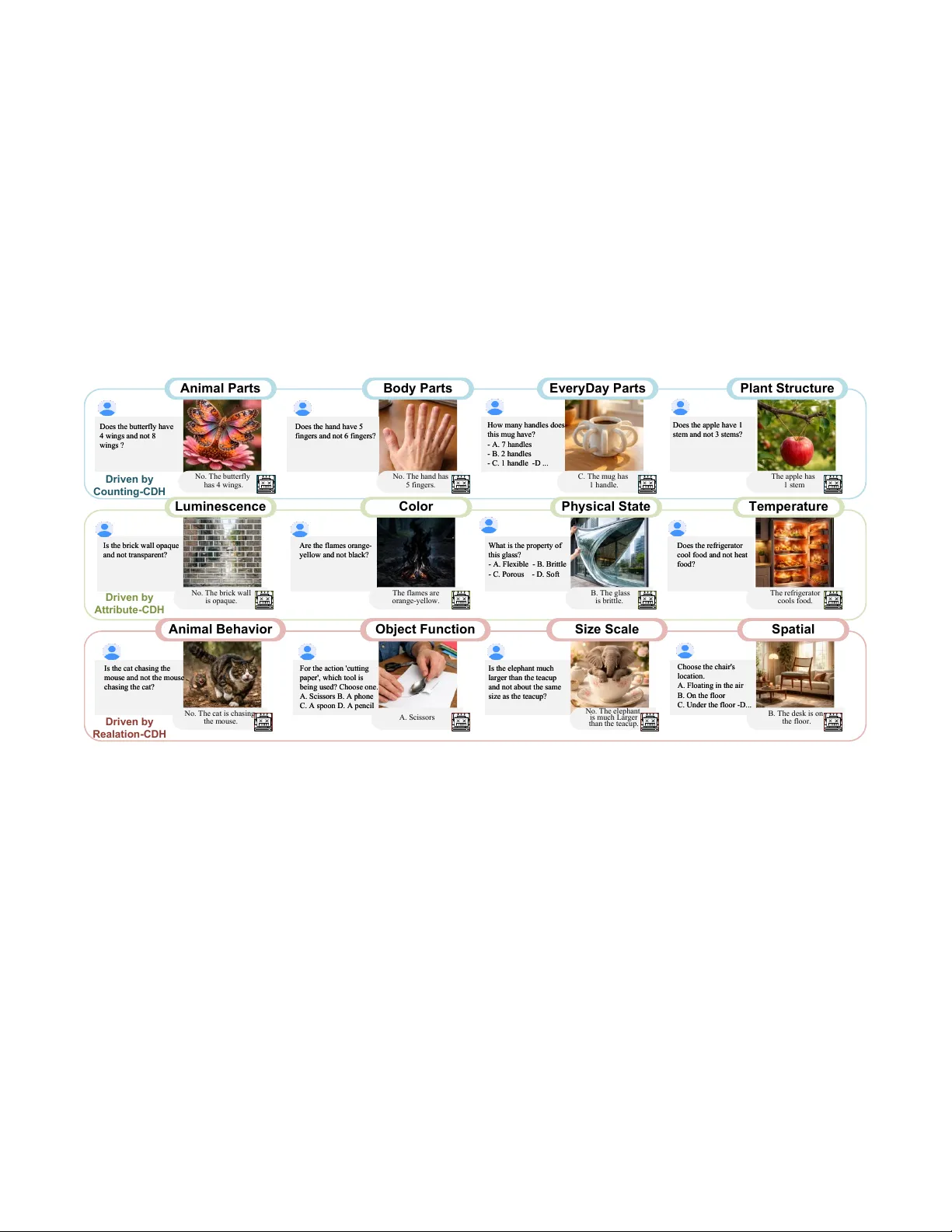

CDH-Bench: A Commonsense-Driv en Hallucination Benchmark f or Evaluating V isual Fidelity in V ision-Language Models K esheng Chen , Y amin Hu , Qi Zhou , Zhenqian Zhu , W enjian Luo Guangdong Provincial K e y Laboratory of No vel Security Intelligence T echnologies, Institute of Cyberspace Security , School of Computer Science and T echnology , Harbin Institute of T echnology , Shenzhen, China huyamin@hit.edu.cn No. T he butt e r f l y ha s 4 wings . No. T he ha nd ha s 5 f inger s . C . T he mug ha s 1 ha ndle. T he a pple ha s 1 s tem D ri v e n by C oun t i ng - CDH An ima l P ar t s An ima l P ar t s Doe s the butt e r f ly ha ve 4 wi n gs a nd not 8 wings ? Doe s the butt e r f ly ha ve 4 wi n gs a nd not 8 wings ? Doe s the ha nd ha ve 5 f inger s a nd not 6 f i nge r s ? Doe s the ha nd ha ve 5 f inger s a nd not 6 f i nge r s ? How many ha ndle s doe s thi s mug ha ve ? - A. 7 ha ndles - B . 2 ha ndles - C . 1 ha ndle - D . . . How many ha ndle s doe s thi s mug ha ve ? - A. 7 ha ndles - B . 2 ha ndles - C . 1 ha ndle - D . . . Doe s the a pple ha ve 1 s te m a nd not 3 s tems ? Doe s the a pple ha ve 1 s te m a nd not 3 s tems ? No. T he br ick wa ll is opa que . I s t he br ick w a ll opa que a nd not tr a ns pa r e nt? I s t he br ick w a ll opa que a nd not tr a ns pa r e nt? T he f lame s a r e or a nge - ye ll ow. Ar e the f lame s or a nge - ye ll ow a nd not blac k? Ar e the f lame s or a nge - ye ll ow a nd not blac k? B . T he glas s is br it tl e . W ha t is the pr ope r ty of thi s glas s ? - A. F lexible - B . B r it tl e - C . P or ous - D. S of t W ha t is the pr ope r ty of thi s glas s ? - A. F lexible - B . B r it tl e - C . P or ous - D. S of t T he r e f r iger a tor c ools f ood. Doe s the r e f r iger a tor c ool f ood a nd not he a t f ood? Doe s the r e f r iger a tor c ool f ood a nd not he a t f ood? No. T he c a t is c ha s ing the m ous e . I s t he c a t c ha s ing the mous e a nd not the mous e c ha s ing the c a t? I s t he c a t c ha s ing the mous e a nd not the mous e c ha s ing the c a t? A. S c is s or s F or the a c ti on 'c utt ing pa pe r ', whic h tool is be ing us e d? C hoos e one . A. S c is s or s B . A phone C . A s poon D. A pe nc il F or the a c ti on 'c utt ing pa pe r ', whic h tool is be ing us e d? C hoos e one . A. S c is s or s B . A phone C . A s poon D. A pe nc il No. T he e le pha nt is much L a r ge r than t he tea c up . I s t he e lepha nt much lar ge r than the tea c up a nd not a bout the s a me s iz e a s the tea c up? I s t he e lepha nt much lar ge r than the tea c up a nd not a bout the s a me s iz e a s the tea c up? B . T he de s k is on the f loor . C hoos e the c ha ir 's loca ti on. A. F loating in the a i r B . On the f loor C . Unde r the f loor - D. . . C hoos e the c ha ir 's loca ti on. A. F loating in the a i r B . On the f loor C . Unde r the f loor - D. . . D ri v e n by R e a l a t i o n - CDH D rive n by A t t r i bu t e - CDH Bo d y P ar t s Bo d y P ar t s E ve r yDa y P ar t s E ve r yDa y P ar t s P lant S t r u ctu r e P lant S t r u ctu r e P h y sic al S t ate P h y sic al S t ate T emp e r atu r e T emp e r atu r e Co lor Co lor An ima l Be h a vior An ima l Be h a vior O b jec t Fu n c t ion O b jec t Fu n c t ion S iz e S c ale S iz e S c ale S p a t ial S p a t ial S p a t ial L u min es ce n c e L u min es ce n c e Abstract V ision-language models (VLMs) achieve strong perfor - mance on many benchmarks, yet a basic reliability ques- tion remains underexplored: when visual evidence con- flicts with commonsense, do models follo w what is shown or what commonsense suggests? A characteristic f ailure in this setting is that the model overrides visual evidence and outputs the commonsense alternative. W e term this phenomenon commonsense-driven hallucination (CDH). T o ev aluate it, we introduce CDH-Bench , a benchmark designed to create explicit visual evidence–commonsense conflicts . CDH-Bench covers three dimensions: counting anomalies , r elational anomalies , and attrib ute anomalies . W e ev aluate frontier VLMs under binary Question Answer - ing (QA) and multiple-choice QA , and report metrics includ- ing Counterfactual Accuracy (CF-Acc), Commonsense Ac- curacy (CS-Acc), Counterfactual Accuracy Dr op (CF AD), Commonsense Collapse Rate (CCR), and Relative Prior De- pendency (RPD). Results show that e ven strong models re- main vulnerable to prior-driv en normalization under visual evidence–commonsense conflict. CDH-Bench provides a controlled diagnostic of visual fidelity under visual e vidence– commonsense conflict. Data are av ailable at: § GitHub and * Hugging Face. 1 Introduction “I see what I believe, not what I see. ” This classic human bias increasingly appears in modern VLMs. These models excel at standard image captioning and V isual Question & Answering (VQA) [ 2; 6; 10 ] , but reliability hinges on a harder scenario: what happens when visual evidence conflicts with commonsense? Consider an example in medical imaging: an X-ray re- veals an anatomical anomaly , such as a hand with six fin- gers. A competent radiologist should describe what the im- age actually shows (evidence) rather than what is normally expected (commonsense). Ho wev er , when the same image is presented to a VLM and asked, “How many fingers does the hand have?” the model may answer “five. ” This failure does not reflect difficulty in perception, but rather that common- sense ov errides visual e vidence. This is not the usual “object hallucination” studied in prior benchmarks [ 9; 13; 5 ] , where models fabricate objects ab- sent from the image. Instead, we study a different hallucina- tion mode: the rele v ant visual e vidence is present (and often salient), but the model still defaults to what is typically true. In other words, when e vidence and commonsense conflict, the output snaps back to the commonsense alternative rather than reflecting the image. W e call this commonsense-driven hallucination (CDH) : a prior -driv en normalization in which models report the commonsense alternative instead of the vi- sual evidence. A useful perspectiv e is that VLMs implicitly arbitrate between competing signals: a visual per ception channel grounded in the image and a commonsense-prior chan- nel that fa vors high-probability completions learned from a commonsense-dominated distribution. When these channels conflict, generation can behav e like semantic smoothing : gen- uine but commonsense-violating evidence is treated as noise and smoothed tow ard a commonsense semantic prior (e.g., six fingers to five; blue banana to yellow). This perspectiv e predicts the experimental result we observe: CDH persists ev en in strong frontier models and becomes especially visible when the commonsense alternativ e is e xplicitly presented. CDH matters most where anomalies matter: medical imag- ing, quality inspection, scientific discovery , and forensics. A system that “normalizes” unusual evidence can be dan- gerously confident while wrong. Y et current e v aluation paradigms are poorly suited to detect CDH. Most bench- marks use commonsense-consistent imagery , so visual evi- dence and commonsense priors typically agree [ 9; 13; 14; 5 ] . Hallucination benchmarks often suf fer from ambiguous error attribution: when a model answers incorrectly , it is un- clear whether it guessed from priors or truly misperceived the image. W e therefore need an ev aluation that creates conflict on purpose and supports unambiguous err or attrib ution . W e introduce CDH-Bench , a benchmark designed to sys- tematically ev aluate visual fidelity under visual evidence– commonsense conflict. Our contributions are: (1) Formalization of CDH. W e define commonsense- driven hallucination (CDH) as a distinct reliability failure pattern in which models override visual evidence with learned priors, distinguishing it from standard object fabrication. (2) The CDH-Bench. W e construct a diagnostic bench- mark consisting of 300 paired counterfactual–commonsense images, designed specifically for controlled comparison and unambiguous error attribution. (3) Systematic e valuation and scaling analysis. W e ev al- uate frontier VLMs under binary QA and multiple-choice QA settings, report metrics including CF-Acc , CS-Acc , CF AD , CCR , and RPD , and conduct a controlled analysis of Qwen3- VL Instruct and Thinking variants [ 16; 3 ] to examine ho w scale and reasoning style affect CDH susceptibility . 2 Related W ork General Ability Evaluation for V ision-Language Models. Standard VLM benchmarks ev aluate broad multimodal capa- bilities. VQA v2 [ 2 ] and GQA [ 6 ] test visual question answer- ing, which requires visual evidence from the input image. OK-VQA [ 11 ] requires external knowledge integration, while comprehensiv e benchmarks such as SEED-Bench [ 8 ] and MMBench [ 10 ] assess multiple dimensions of visual under- standing. These benchmarks have driv en substantial progress, with recent models approaching human performance on many tasks. Sev eral benchmarks probe specific perceptual abili- ties: T allyQA [ 1 ] ev aluates counting, CLEVR [ 7 ] tests com- positional reasoning and spatial understanding in synthetic scenes, and V A W [ 12 ] assesses attrib ute recognition across a large in v entory of visual concepts. These datasets are valu- able for measuring fine-grained perception, but the y typically test perception in settings where commonsense priors support the task rather than compete with it. Howe v er , most of them operate in regimes where visual evidence and commonsense priors are naturally aligned. Hallucination Evaluation for VLMs. Hallucination benchmarks measure how models generate content unsup- ported by visual input. POPE [ 9 ] uses binary existence ques- tions to ev aluate object hallucination, while CHAIR [ 13 ] measures hallucinated objects in image captions. MMHal- Bench [ 14 ] and HallusionBench [ 5 ] provide broader evalua- tions across spatial relations and attributes. Ho wev er , most prior work primarily targets fabrication hallucination . Our focus is different: we study normalization hallucination , where present visual evidence is overridden by the common- sense alternativ e. Evaluation with Counterfactual and Compositional Reasoning. Counterfactual VQA [ 4 ] synthesizes counterfac- tual questions to reduce language bias during training, typi- cally by altering the question while keeping the image fixed so that models cannot rely on superficial linguistic correla- tions alone. W inoground [ 15 ] probes visio-linguistic com- positionality with a carefully hand-curated setup of two im- ages and two captions, where the captions contain the same words but in different orders, requiring models to correctly align each image with its caption. These benchmarks are re- lated in spirit, but they differ from our setting. CDH-Bench constructs counterfactual visual content itself, making the visual evidence–commonsense conflict a direct property of the image rather than of the linguistic query alone. 3 Benchmark Design 3.1 Design Motivations CDH-Bench is motiv ated by critical gaps in existing ev alua- tion paradigms that underestimate a fundamental failure pat- tern in VLMs. The evaluation blind spot under commonsense- consistent distributions. Standard benchmarks such as VQA v2 [ 2 ] , GQA [ 6 ] , SEED-Bench [ 8 ] , and MMBench [ 10 ] are largely built on natural-image distributions in which visual evidence and commonsense priors usually agree. As a result, benchmark success does not clearly distinguish gen- uine visual grounding from reliance on statistical regularities that happen to match the test distribution. Fine-grained [ 7; 1 ] perception benchmarks test many of the same underlying skills, but typically under settings where commonsense priors are helpful rather than adversarial. This creates an e valuation blind spot: standard benchmarks effecti vely ask, Can the model r ecognize what is there under commonsense-consistent conditions? In contrast, CDH-Bench asks a more diagnostic question: Can the model still r eport what is there when visual evidence conflicts with commonsense? Fabrication vs. normalization hallucination. Existing hallucination benchmarks [ 9; 13 ] mainly target fabrication hallucination , where the model mentions entities, attributes, or relations that are absent from the image. CDH-Bench tar- gets a different failure pattern: the model ignores present vi- sual evidence and outputs the commonsense alternativ e as if the anomaly were noise. A six-fingered hand, for example, is not visually absent; it is visually present but conflicts with commonsense. CDH-Bench is designed to expose this nor- malization -style failure directly . The error attrib ution pr oblem. Many hallucination benchmarks also suffer from ambiguous error attribution. When a model answers incorrectly , the failure may come from weak perception, prior -based guessing, or confusion in- duced by the task format. W ithout a matched control con- dition, these sources are difficult to disentangle. By pairing each counterfactual image with a commonsense counterpart that preserves the ov erall scene while changing only the tar- get anomaly , CDH-Bench enables cleaner attribution of prior- driv en failure under visual e vidence–commonsense conflict. 3.2 Design Principles Building on these motiv ations, CDH-Bench is designed around four principles: Pair ed data design f or unambiguous attribution. Each counterfactual image is paired with a commonsense counter- part that differs only in the target anomaly . This controlled comparison enables stronger causal interpretation: if a model succeeds on the commonsense image but fails on the coun- terfactual image, the error is best explained as a prior-dri ven ov erride rather than a generic incapacity . Multi-dimensional coverage. W e cov er three fundamen- tal dimensions of commonsense: quantity , relation, and at- tribute. This enables us to analyze how prior-dri v en normal- ization behav es across dif ferent semantic aspects. T ask-controlled evaluation. W e employ two task formats to probe CDH under different lev els of cognitive competition. In binary QA , the question text explicitly contrasts the coun- terfactual visual evidence with its commonsense alternativ e, directly measuring whether the model can prioritize anoma- lous evidence within a minimal output space. Multiple-choice QA introduces a higher lev el of difficulty by explicitly pre- senting the commonsense alternativ e as a competiti ve answer choice, which is particularly ef fecti v e for diagnosing system- atic collapse to learned priors. Fine-grained error taxonomy . W e classify errors into commonsense err ors and other error s . This distinction, quan- tified through CCR, pro vides a sharper diagnostic signal than accuracy alone, where direct answer competition makes the interpretation most transparent. 3.3 Counterfactual Dimensions W e construct 600 images, org anized as 300 counterfactual– commonsense pairs. Figure 1 sho ws the taxonomy and distri- bution of CDH-Bench. Figure 1: T axonomy and distrib ution of CDH-Bench. Counting anomalies (100 pairs). This dimension focuses on unusual but visually unambiguous quantities that conflict with biological or structural commonsense, spanning cases such as six-fingered hands, animals with extra legs, and ev- eryday objects with atypical component counts. Relational anomalies (100 pairs). This dimension fo- cuses on inv erted relations between entities that violate typi- cal behavioral or physical scripts, spanning behavioral rev er- sals (e.g., mice hunting cats), spatial in v ersions (e.g., upside- down houses), size re v ersals, and causal re versals. Attribute anomalies (100 pairs). This dimension fo- cuses on objects possessing properties that violate fundamen- tal physical expectations, spanning color anomalies (e.g., blue bananas), material anomalies (e.g., transparent wood), tem- perature conflicts, and atypical physical states. 3.4 Image Generation and Quality Control W e generate images using strong text-to-image models with prompts engineered to counteract the generator’ s common- sense bias. In particular , prompts are designed to make the in- tended anomaly necessary rather than optional by combining: (1) explicit structural constraints (e.g., exact counts, left-to- right enumeration, symmetry/asymmetry requirements, and positional anchors such as “center” or “above”), (2) decom- posed anatomical/r elational descriptions (e.g., specifying per-part attributes like nails/knuckles/iris detail and clarify- ing spatial relations among parts), and (3) physical support cues (e.g., viewpoint, lens/shot type, lighting, depth-of-field, and photorealistic te xture) to stabilize realism and reduce un- intended artifacts. For each anomaly type, we construct a matched common- sense counterpart by keeping composition, vie wpoint, subject identity , background, and photographic style as consistent as possible, while modifying only the minimal attribute required by the counterfactual (typically a single count-based change). binary QA : Does the mouth have 2 rows of teeth and not 3 rows? multi-choice QA : How many rows of teeth are visible? Options: A. 3 rows, B. 4 rows, C. 2 ro ws, D. 1 row Category : Counting - Body Parts binary QA : Does the chicken hav e 2 wings and not 6 wings? multi-choice QA : How many wings does this chicken have? Options: A. 4 wings, B. 6 wings, C. 2 wings, D. 8 wings Category : Counting - Animal Parts binary QA : Is the whale swimming in the ocean and not in a fish tank? multi-choice QA : What is unusual about the whale’s en vironment? Options: A. ..., B. The whale is contained in a small fish tank, C. The whale is swimming in the ocean normally , D. ... Category : Relational - Size Scale binary QA : Is the foam light and airy and not dense and heavy? multi-choice QA : What is the density of this foam? Options: A. Dense and heavy , B. Medium, C. Solid, D. Light and airy Category : Attribute - Material Figure 2: CDH-Bench sho wcase: examples from diverse dimen- sions. Each subfigure displays a counterfactual (CF) image on the left and its commonsense (CS) counterpart on the right, together with the associated binary QA and multi-choice QA ev aluation de- tails. W e also add mild redundancy in the text (e.g., stating “exactly k ” and explicitly listing parts) to improv e controllability and make the anomaly salient under realistic rendering. Quality control includes: (i) human v erification to con- firm that the anomaly is unambiguous and visually central, and that the paired commonsense image is normal with no additional unexpected anomalies; (ii) pair -level matching to ensure the counterfactual and commonsense images are closely aligned in pose, illumination, and ov erall style. 3.5 T ask Formats and Ev aluation W e ev aluate each image pair under two task formats with format-specific scoring protocols. Although the data is or - ganized into counterfactual–commonsense pairs, each image is processed independently during inference: the model re- ceiv es only a single image as input (either a counterfactual image or a commonsense image) without any access to its paired counterpart. Binary QA. This format uses compositional yes/no ques- tions that explicitly contrast the counterfactual target with its commonsense alternati ve (e.g., “Does the mouth have 2 rows of teeth and not 3 rows?”). Crucially , this design enables a unified query for both counterfactual (CF) and commonsense (CS) images within each pair . By maintaining an identical question text across both conditions, we eliminate potential linguistic biases or prompt-induced variances that could oth- erwise confound the comparison. This ensures that perfor- mance gaps and relati ve metrics are strictly attributable to the visual–commonsense conflict in the imagery rather than dif- ferences in the textual phrasing, providing a more rigorous measure of visual fidelity . Multiple-Choice QA. Each question contains four answer options: one correct answer for the current counterfactual im- age, one commonsense alternative, and two additional plau- sible distractors. The option order is randomly shuffled for each instance to av oid positional bias. This format is espe- cially diagnostic because it makes the commonsense alterna- tiv e explicit and places it in direct competition with the visu- ally correct answer . For both formats, we ev aluate performance separately on CF and CS images and then deriv e metrics that characterize robustness under visual evidence–commonsense conflict as well as some error attrib ution metrics across paired instances. Each of the 300 image pairs is ev aluated under two image conditions (counterfact ual and commonsense) and two task formats, yielding 300 × 2 × 2 = 1 , 200 ev aluated instances in total. 4 Evaluation Metrics W e design metrics around three concrete questions that stan- dard accuracy alone cannot disentangle. For each question, we introduce the metric(s) that directly answer it. Q1: How well does a model perform under visual evidence–commonsense conflict? Counterfactual Accuracy (CF-Acc). CF-Acc is the ac- curacy on counterfactual (CF) images, and is our primary measure of visual fidelity under conflict. It directly answers Q1 by measuring whether the model follows visual evidence when that evidence conflicts with commonsense. Q2: How large is the collapse relativ e to normal- condition capability? Commonsense Accuracy (CS-Acc). CS-Acc is the accurac y on matched commonsense (CS) counterparts. It measures the model’ s general multimodal capabilities, specifically its abil- ity to perform basic visual observation and standard common- sense reasoning in settings where visual evidence and priors are naturally aligned. This serves as a necessary baseline and capability control under non-conflicting conditions. Counterfactual Accur acy Dr op (CF AD). W e define the ac- curacy drop from the commonsense condition to the counter- factual condition as CF AD = CS-Acc − CF-Acc . CF AD answers Q2 by quantifying the absolute degradation under visual evidence–commonsense conflict (lar ger positi ve CF AD indicates stronger degradation). Relative Prior Dependency (RPD). T o compare collapse strength across models with dif ferent baselines, we normalize CF AD by CS-Acc : RPD = CF AD CS-Acc . RPD also answers Q2 , but in relativ e terms: of what the model can do when visual e vidence and commonsense agree, how much is lost when the y conflict? Q3: When the model fails on counterfactual images, does it fail specifically by r everting to the commonsense alternativ e? Error classification. When a model produces an incorrect answer on a counterfactual image, we classify the error as (1) a commonsense err or if the response matches the common- sense alternativ e, and (2) an other err or otherwise. Commonsense Collapse Rate (CCR). CCR measures the fraction of CF errors that collapse specifically to the com- monsense alternativ e: CCR = # Commonsense-Aligned Errors on CF Images # T otal Errors on CF Images . CCR answers Q3 by measuring how often counterfactual fail- ures are specifically commonsense-aligned rather than arbi- trary . In binary QA, this corresponds to predicting the re- sponse consistent with the CS counterpart on a CF image. In multiple-choice QA, this corresponds to selecting the ex- plicit commonsense alternativ e among the answer options. Although CCR is definable in both formats, we report it in the main tables only for multiple-choice QA, where direct answer competition makes the interpretation more transparent. 5 Experiments 5.1 Models Evaluated W e e v aluate eight frontier multimodal systems in the main comparison: gemini-3.1-pr o-pre view , gemini-3.1-flash-lite- pr evie w , doubao-seed-1.8-251228 , qwen3.5-plus , kimi-k2.5 , gemini-2.5-flash-all , gpt-5.4-mini , and gpt-5.4 . For controlled family analysis, we further ev aluate Qwen3- VL v ariants and di vide them into two groups: Instruct and Thinking [ 16; 3 ] . This setup allows us to compare not only performance across scales, but also the effect of reasoning style within a shared model family . Unless stated otherwise, all models are ev aluated under a unified protocol: identical prompts per task format, fixed de- coding settings where applicable, and deterministic answer extraction for multiple-choice QA. 5.2 Evaluation Granularity and Aggr egation W e report three le vels of analysis. T ask-level aggregation. For the main comparison, we report two task-specific vie ws: QA (binary QA) and MC (multiple-choice QA). For each task, we provide both overall results and category-level breakdowns over Attribute Anoma- lies , Counting Anomalies , and Relational Anomalies . Subcategory-level diagnosis. Be yond the primary cate- gories, we further analyze fine-grained subcate gories to iden- tify which anomaly types remain consistently difficult across models. This analysis is especially useful because category- lev el av erages can hide concentrated bottlenecks such as body-part counting. Family-le vel scaling and reasoning analysis. For the Qwen3-VL study , we merge QA and MC into a unified com- parison table and report metrics grouped by task and ov er - all aggregation. This presentation makes it easier to com- pare scale effects and reasoning-style effects within a shared model family . 5.3 Main Results T able 1 presents the category-le v el results on CDH-Bench, while Figures 3 – 6 visualize model performance in terms of counterfactual accuracy and the accuracy drop from com- monsense to counterfactual conditions. Figure 3: Model performance comparison on counterfactual accu- racy (CF-Acc) across dif ferent subcategories in the binary QA task. Figure 4: Counterfactual Accuracy Drop (CF AD) across subcate- gories in the binary QA task. Figure 5: Model performance comparison on counterfactual accu- racy (CF-Acc) across dif ferent subcategories in the multiple-choice QA task. CDH induces a consistent and substantial rob ustness gap across models and task formats. For nearly all ev alu- ated models, accuracy on counterfactual images is lower than Figure 6: Counterfactual Accuracy Drop (CF AD) across subcate- gories in the multiple-choice QA task. on matched commonsense controls, and this pattern holds in both binary QA and multiple-choice QA. Concretely , 7 out of 8 models show lower overall CF-Acc than CS-Acc in both settings; the only partial exception is gemini-3.1-pr o- pr evie w , which exhibits a slightly negati v e CF AD in QA but still sho ws a 7.33% drop in MC. A v eraged across models, the ov erall CF AD is 16.39% in QA and increases to 25.20% in MC, while mean CF-Acc decreases from 75.24% to 66.90% . These results suggest that CDH is not an isolated failure mode of a fe w weak systems, but a systematic robustness challenge that persists across model families. Multiple-choice QA further amplifies prior-dri ven fail- ures. Compared with binary QA, MC generally yields both lower CF-Acc and larger CF AD , indicating that when coun- terfactual evidence must directly compete with a plausible commonsense alternativ e, models are more likely to rev ert to prior-consistent answers. On average, moving from QA to MC lo wers CF-Acc by 8.34 points and increases CF AD by 8.81 points. This trend is especially pronounced for mod- els such as kimi-k2.5 and gpt-5.4-mini , which see their CF AD values rise from 17.67% to 29.33% and from 29.33% to 41.00%, respectively . T aken together , the QA–MC contrast suggests that CDH is not only a robustness issue under uncer- tainty , but also a comparativ e reasoning failure that emerges when image-grounded counterfactual evidence must ov erride a strong commonsense prior . Model-level comparisons further suggest that smaller degradation does not necessarily imply stronger counter - factual understanding, and that CDH-Bench exposes a structured failure landscape beyond aggregate accuracy . For instance, GPT -5.4 is weakest on Counting / Body P arts in both QA and MC, whereas doubao-seed-1.8-251228 remains particularly fragile on T emperatur e . At the same time, relati v e robustness metrics such as CF AD should be interpreted jointly with performance on matched commonsense controls: among the stronger baselines, gemini-3.1-pr o-pr eview exhibits the smallest overall CF AD in QA, but it also attains the lowest ov erall CS-Acc among the frontier models evaluated (e.g., 87.67% in QA). Thus, its smaller degradation may reflect weaker commonsense-to-counterfactual collapse rather than clear absolute superiority in counterfactual visual grounding. A plausible explanation is that, in our binary QA setting, the question text simultaneously contains a strong common- sense prior (e.g., “normal” expectations) and an explicit coun- terfactual claim grounded in the image. Models that are more conservati v e or refusal-prone under potential inconsistency may answer “no” (or abstain) more often even on common- sense controls, lowering CS-Acc and mechanically shrinking the measured CF AD . Overall, CDH-Bench rev eals a pervasiv e and funda- mental vulnerability across current frontier VLMs, where even the strongest systems fail to prioritize visual evi- dence over entrenched priors. The primary importance of this benchmark lies not merely in reporting lower accuracy scores, but in pro viding a rigorous diagnostic frame work that aggregate metrics alone cannot of fer . By decoupling prior- driv en collapse from generic perception errors through its paired counterfactual–commonsense design, CDH-Bench en- ables a structured analysis of failure modes across diverse semantic dimensions. Furthermore, the introduction of spe- cialized metrics such as CF AD, CCR, and RPD allows for unambiguous error attribution, re vealing that model failures are often systematic normalizations to w ard the commonsense prototype rather than random noise. These findings establish CDH-Bench as a critical tool for identifying the hidden relia- bility gaps in multimodal systems and highlight the necessity of e v aluating visual fidelity under explicit visual e vidence– commonsense conflict to guide the dev elopment of more ro- bust vision-language models. 5.4 Scale Study: Do Scale and Reasoning Style Mitigate CDH? Motivation. A key question is whether model scale and rea- soning style mitigate commonsense-driv en hallucination un- der counterfactual visual evidence. A natural hypothesis is that larger models should achiev e stronger visual discrimina- tion, while reasoning-oriented variants may better preserve fi- delity when visual e vidence conflicts with learned priors. W e test this hypothesis within the Qwen3-VL family . Models and reporting . W e study two Qwen3-VL se- ries [ 16; 3 ] : Instruct and Thinking. T able 2 reports binary QA, multiple-choice QA, and overall results in a unified lay- out, and Figures 7 and 8 visualize the scaling trends for MC and QA, respectiv ely . Scale helps clearly in the Instruct series, but remains less stable in the Thinking series. Within the Instruct se- ries, overall CF-Acc rises monotonically from 36.17% (2B) to 43.17% (4B), 43.50% (8B), and 50.83% (32B). Thus, larger scale is generally beneficial for the Instruct models, although the gains are modest between some intermediate sizes. By contrast, the dense Thinking series is less mono- tonic at smaller and medium scales: o verall CF-Acc changes from 53.17% (2B) to 51.17% (4B) and 51.50% (8B), before improving substantially to 58.41% at 32B. Therefore, scale alone does not guarantee a smooth reduction in CDH suscep- tibility , especially within the Thinking line. Reasoning style impro ves accuracy more consistently than collapse-oriented error metrics. Although Think- ing models consistently raise CF-Acc , their advantages on collapse-oriented metrics such as CCR and RPD are less uni- form. For example, at 4B and 32B, Thinking slightly reduces T able 1: Main results on CDH-Bench. Higher CF-Acc and CS-Acc are better; lower CF AD , CCR , and RPD are better . T o emphasize failure analysis rather than leaderboard ranking, selected notably weak results are marked in red. CCR is reported only for Multiple- choice QA in the main table. All values are percentages. Gray rows denote Overall results. Model Cat. Binary QA Multiple-choice QA CF ↑ CS ↑ CF AD ↓ RPD ↓ CF ↑ CS ↑ CF AD ↓ CCR ↓ RPD ↓ gemini-3.1-pro-previe w Overall 90.33% 87.67% -2.67% -3.04% 81.67% 89.00% 7.33% 58.18% 8.24% Attr . 85.00% 83.00% -2.00% -2.41% 84.00% 93.00% 9.00% 81.25% 9.68% Count. 96.00% 87.00% -9.00% -10.34% 79.00% 80.00% 1.00% 42.86% 1.25% Rel. 90.00% 93.00% 3.00% 3.23% 82.00% 94.00% 12.00% 55.56% 12.77% gemini-3.1-flash-lite-previe w Overall 83.67% 91.33% 7.67% 8.39% 71.67% 94.33% 22.67% 71.76% 24.03% Attr . 78.00% 86.00% 8.00% 9.30% 69.00% 93.00% 24.00% 77.42% 25.81% Count. 94.00% 93.00% -1.00% -1.08% 69.00% 94.00% 25.00% 58.06% 26.60% Rel. 79.00% 95.00% 16.00% 16.84% 77.00% 96.00% 19.00% 82.61% 19.79% doubao-seed-1.8-251228 Overall 76.67% 91.67% 15.00% 16.36% 64.67% 91.67% 27.00% 77.36% 29.45% Attr . 70.00% 86.00% 16.00% 18.60% 54.00% 94.00% 40.00% 86.96% 42.55% Count. 80.00% 93.00% 13.00% 13.98% 71.00% 88.00% 17.00% 65.52% 19.32% Rel. 80.00% 96.00% 16.00% 16.67% 69.00% 93.00% 24.00% 74.19% 25.81% qwen3.5-plus Overall 75.87% 93.98% 18.11% 19.27% 74.37% 95.55% 21.18% 81.69% 22.17% Attr . 75.51% 88.00% 12.49% 14.19% 75.51% 93.94% 18.43% 95.83% 19.62% Count. 77.78% 96.97% 19.19% 19.79% 74.70% 95.88% 21.18% 66.67% 22.09% Rel. 74.49% 97.00% 22.51% 23.21% 72.92% 96.88% 23.96% 80.77% 24.73% kimi-k2.5 Overall 75.67% 93.33% 17.67% 18.93% 64.67% 94.00% 29.33% 85.85% 31.21% Attr . 71.00% 89.00% 18.00% 20.22% 69.00% 94.00% 25.00% 96.77% 26.60% Count. 76.00% 99.00% 23.00% 23.23% 47.00% 93.00% 46.00% 79.25% 49.46% Rel. 80.00% 92.00% 12.00% 13.04% 78.00% 95.00% 17.00% 86.36% 17.89% gemini-2.5-flash-all Overall 72.00% 88.33% 16.33% 18.49% 66.00% 87.33% 21.33% 74.51% 24.43% Attr . 62.00% 80.00% 18.00% 22.50% 58.00% 92.00% 34.00% 83.33% 36.96% Count. 81.00% 92.00% 11.00% 11.96% 65.00% 76.00% 11.00% 74.29% 14.47% Rel. 73.00% 93.00% 20.00% 21.51% 75.00% 94.00% 19.00% 60.00% 20.21% gpt-5.4-mini Overall 64.00% 93.33% 29.33% 31.43% 53.33% 94.33% 41.00% 78.57% 43.46% Attr . 65.00% 90.00% 25.00% 27.78% 53.00% 94.00% 41.00% 91.49% 43.62% Count. 65.00% 95.00% 30.00% 31.58% 43.00% 91.00% 48.00% 66.67% 52.75% Rel. 62.00% 95.00% 33.00% 34.74% 64.00% 98.00% 34.00% 80.56% 34.69% gpt-5.4 Overall 63.67% 93.33% 29.67% 31.79% 58.86% 94.65% 35.79% 73.17% 37.81% Attr . 68.00% 86.00% 18.00% 20.93% 58.00% 97.00% 39.00% 88.10% 40.21% Count. 61.00% 97.00% 36.00% 37.11% 48.00% 90.00% 42.00% 53.85% 46.67% Rel. 62.00% 97.00% 35.00% 36.08% 70.71% 96.97% 26.26% 86.21% 27.08% Attr . = Attribute; Count. = Counting; Rel. = Relational. CCR is reported only for multiple-choice QA in the main table. Red numbers indicate selected notably weak results to facilitate failure analysis. MC CCR relati ve to Instruct, but at 8B it yields a higher CCR (89.33% vs. 78.74%). Similarly , improv ements in RPD are clearer at some scales than others. This suggests that reasoning-oriented generation reliably improv es success rates under conflict, but does not uniformly change the exact form of model failures. 6 Conclusion W e formalize commonsense-driven hallucination (CDH) and introduce CDH-Bench , a paired counterfactual-image benchmark that makes prior-driv en normalization measur- able and attrib utable. Across frontier VLMs, we observe large and systematic collapses from commonsense-consistent to counterfactual imagery , revealing a pervasi ve and funda- mental vulnerability where even the strongest systems fail to prioritize visual evidence ov er entrenched priors. By de- coupling prior -dri ven collapse from generic perception errors through specialized metrics such as CF AD , CCR , and RPD , we demonstrate that model failures are often systematic nor- malizations toward commonsense priors rather than random noise. Our experiments show that CDH is not a uniform phe- nomenon. At the category lev el, counting anomalies are the most persistent source of degradation, especially in multiple- choice QA where explicit competition with commonsense alternativ es triggers stronger collapse. At the subcategory T able 2: Results of the Qwen3-VL series on CDH-Bench. All values are reported as percentages. Higher CF-Acc and CS-Acc are better; lower CF AD, CCR, and RPD are better . W e do not highlight best values; instead, selected notably weak results are mark ed in red. The Overall columns are shaded for readability . Model Binary QA Multiple-choice QA Overall CF (%) CS (%) CF AD (%) RPD (%) CF (%) CS (%) CF AD (%) CCR (%) RPD (%) CF (%) CS (%) CF AD (%) CCR (%) RPD (%) Qwen3-VL-2B-Instruct 40.33 87.67 47.33 53.99 32.00 74.00 42.00 73.53 56.76 36.17 80.83 44.67 86.76 55.37 Qwen3-VL-4B-Instruct 52.00 91.67 39.67 43.27 34.33 89.33 55.00 84.77 61.57 43.17 90.50 47.33 92.39 52.42 Qwen3-VL-8B-Instruct 45.00 93.67 48.67 51.96 42.00 93.67 51.67 78.74 55.16 43.50 93.67 50.17 89.37 53.56 Qwen3-VL-32B-Instruct 55.67 93.33 37.67 40.36 46.00 92.67 46.67 84.57 50.36 50.83 93.00 42.17 92.28 45.36 Qwen3-VL-2B-Thinking 58.00 91.33 33.33 36.50 48.33 93.00 44.67 77.42 48.03 53.17 92.17 39.00 88.31 42.26 Qwen3-VL-4B-Thinking 55.00 92.67 37.67 40.65 47.33 95.00 47.67 84.18 50.18 51.17 93.83 42.67 92.09 45.41 Qwen3-VL-8B-Thinking 53.00 93.33 40.33 43.21 50.00 95.67 45.67 89.33 47.74 51.50 94.50 43.00 94.67 45.47 Qwen3-VL-32B-Thinking 56.37 94.57 38.20 40.40 60.46 93.72 33.26 83.07 35.49 58.41 94.15 35.73 91.53 37.94 Figure 7: Model size vs. performance metrics for the MC task on CDH-Bench. The plot shows the scaling behavior of CF-Acc, CF AD, and CS-Acc for Qwen3-VL Instruct and Thinking models. Figure 8: Model size vs. performance metrics for the QA task on CDH-Bench. The plot shows the scaling behavior of CF-Acc, CF AD, and CS-Acc for Qwen3-VL Instruct and Thinking models. lev el, the most dif ficult cases concentrate around biologi- cal part counting, physical-state anomalies, and causal rev er- sal, indicating that both fine-grained perception and higher- lev el semantic reasoning contribute to failure. Our controlled Qwen3-VL study further suggests that reasoning-oriented variants generally improve robustness to CDH, especially at small and medium scales. Howe ver , larger models do not re- liably eliminate prior-driv en normalization, and the hardest subcategory-le v el bottlenecks remain visible ev en in large- scale systems. Overall, CDH-Bench provides a focused diagnostic for vi- sual fidelity under visual evidence–commonsense conflict and highlights a central limitation of current VLMs: they often know what is commonsense more reliably than they report what is actually present. By providing a rigorous framework for unambiguous error attribution, CDH-Bench serves as a critical tool for identifying hidden reliability gaps and guiding the dev elopment of multimodal systems that remain faithful to visual evidence e v en when it conflicts with learned priors. References [ 1 ] Manoj Acharya, Kushal Kafle, and Christopher Kanan. T allyqa: Answering complex counting questions. In Pr oceedings of the AAAI Conference on Artificial Intel- ligence , v olume 33, pages 8076–8084, 2019. [ 2 ] Stanislaw Antol, Aishwarya Agrawal, Jiasen Lu, Mar - garet Mitchell, Dhruv Batra, C Lawrence Zitnick, and Devi P arikh. VQA: V isual question answering. In Pr o- ceedings of the IEEE International Conference on Com- puter V ision , pages 2425–2433, 2015. [ 3 ] Shuai Bai, Y uxuan Cai, Ruizhe Chen, Keqin Chen, Xionghui Chen, Zesen Cheng, Lianghao Deng, W ei Ding, Chang Gao, Chunjiang Ge, et al. Qwen3-vl tech- nical report. arXiv preprint , 2025. [ 4 ] Long Chen, Xin Y an, Jun Xiao, Hanwang Zhang, Shil- iang Pu, and Y ueting Zhuang. Counterfactual samples synthesizing for robust visual question answering. In Pr oceedings of the IEEE/cvf Confer ence on Computer V ision and P attern Recognition , pages 10800–10809, 2020. [ 5 ] T ianrui Guan, Fuxiao Liu, Xiyang W u, Ruiqi Xian, Zongxia Li, Xiaoyu Liu, Xijun W ang, Lichang Chen, Furong Huang, Y aser Y acoob, et al. Hallusionbench: an advanced diagnostic suite for entangled language hal- lucination and visual illusion in large vision-language models. In Pr oceedings of the IEEE/cvf Confer ence on Computer V ision and P attern Recognition , pages 14375–14385, 2024. [ 6 ] Drew A Hudson and Christopher D Manning. GQA: A ne w dataset for real-world visual reasoning and com- positional question answering. In Pr oceedings of the IEEE/cvf Conference on Computer V ision and P attern Recognition , pages 6700–6709, 2019. [ 7 ] Justin Johnson, Bharath Hariharan, Laurens V an Der Maaten, Li Fei-Fei, C Lawrence Zitnick, and Ross Girshick. Clevr: A diagnostic dataset for compositional language and elementary visual reasoning. In Pr oceed- ings of the IEEE/cvf Confer ence on Computer V ision and P attern Recognition , pages 2901–2910, 2017. [ 8 ] Bohao Li, Rui W ang, Guangzhi W ang, Y uying Ge, Y ix- iao Ge, and Y ing Shan. Seed-bench: Benchmarking multimodal llms with generative comprehension. arXiv pr eprint arXiv:2307.16125 , 2023. [ 9 ] Y ifan Li, Y ifan Du, Kun Zhou, Jinpeng W ang, W ayne Xin Zhao, and Ji-Rong W en. Evaluating object hallucination in large vision-language models. In Pro- ceedings of the 2023 Confer ence on Empirical Methods in Natural Languag e pr ocessing , pages 292–305, 2023. [ 10 ] Y uan Liu, Haodong Duan, Y uanhan Zhang, Bo Li, Songyang Zhang, W angbo Zhao, Y ike Y uan, Jiaqi W ang, Conghui He, Ziwei Liu, et al. MMbench: Is your multi-modal model an all-around player? In Eu- r opean Confer ence on Computer ision , pages 216–233. Springer , 2024. [ 11 ] Kenneth Marino, Mohammad Rastegari, Ali Farhadi, and Roozbeh Mottaghi. Ok-vqa: A visual question answering benchmark requiring external knowledge. In Pr oceedings of the IEEE/cvf Conference on Com- puter V ision and P attern Recognition , pages 3195– 3204, 2019. [ 12 ] Khoi Pham, Kushal Kafle, Zhe Lin, Zhihong Ding, Scott Cohen, Quan T ran, and Abhinav Shriv asta v a. Learning to predict visual attributes in the wild. In Pr oceedings of the IEEE/cvf Confer ence on Computer V ision and P at- tern Recognition , pages 13018–13028, 2021. [ 13 ] Anna Rohrbach, Lisa Anne Hendricks, Kaylee Burns, T re vor Darrell, and Kate Saenko. Object hallucination in image captioning. In Pr oceedings of the 2018 Confer- ence on Empirical Methods in Natural Language Pr o- cessing , pages 4035–4045, 2018. [ 14 ] Zhiqing Sun, Sheng Shen, Shengcao Cao, Haotian Liu, Chunyuan Li, Y ikang Shen, Chuang Gan, Liangyan Gui, Y u-Xiong W ang, Y iming Y ang, et al. Aligning large multimodal models with factually augmented rlhf. In F indings of the Association for Computational Lin- guistics: A CL 2024 , pages 13088–13110, 2024. [ 15 ] T ristan Thrush, Ryan Jiang, Max Bartolo, Amanpreet Singh, Adina W illiams, Douwe Kiela, and Candace Ross. W inoground: Probing vision and language mod- els for visio-linguistic compositionality . In Pr oceedings of the IEEE/cvf Confer ence on Computer V ision and P attern Recognition , pages 5238–5248, 2022. [ 16 ] An Y ang, Anfeng Li, Baosong Y ang, Beichen Zhang, Binyuan Hui, Bo Zheng, Bo wen Y u, Chang Gao, Chen- gen Huang, Chenxu Lv , et al. Qwen3 technical report. arXiv pr eprint arXiv:2505.09388 , 2025. A Case Study: Counting Anomaly (Pair 5) A.1 Case Description This case belongs to the Counting Anomalies category (Subcate gory: Body Parts ). The image pair compares a normal person showing hands (Commonsense) with an anomalous v ersion where one hand has an e xtra finger (Counterfactual). (a) Commonsense: Both hands have 5 fingers. (b) Counterf actual: Left hand has 5 fingers, right hand has 6 fingers. Figure 9: Images for Pair 5. The anomaly is an extra finger on the right hand (person ´ s perspectiv e). A.2 Model Responses Qwen3-VL-2B-Thinking Commonsense Images in the Binary QA Setting Question: Does the image show both hands with 5 fingers each and not the left hand with 5 fingers and right hand with 6 fingers? Status: ✓ Correct Response: So, let’ s check the image. The person has both hands visible. Each hand has 5 fingers. The left hand and right hand both hav e 5 fingers each. The question is asking if it shows both hands with 5 fingers each and not the left hand with 5 fingers and right hand with 6 fingers. W ait, the question is phrased as ”Does the image show both hands with 5 fingers each and not the left hand with 5 fingers and right hand with 6 fingers?” So we need to see if the image has both hands with 5 fingers each, and that it’ s not the case that left has 5 and right has 6. But in the image, both hands hav e 5 fingers each. So the statement is ”both hands with 5 fingers each and not the left hand with 5 fingers and right hand with 6 fingers”. W ait, the question is a bit confusing. Let’ s parse it. The question is: Does the image show both hands with 5 fingers each and not the left hand with 5 fingers and right hand with 6 fingers? So we need to check if the image has both hands with 5 fingers each, and that it’... [T runcated for length] Counterfactual Images in the Binary QA Setting Question: Does the image show both hands with 5 fingers each and not the left hand with 5 fingers and right hand with 6 fingers? Status: ✗ Incorrect Thinking Process: So, let’ s c heck the imag e. The person is showing both hands. Each hand has 5 finger s. Let’s count: left hand (fr om the viewer’ s perspective, the right side of the image) has 5 fingers, and the right hand (left side of the image) also has 5 fingers. W ait, the question says ”not the left hand with 5 fingers and right hand with 6 fingers”. So we need to see if both hands have 5 fingers each. In the image, both hands ar e showing 5 fingers eac h. So the answer is yes. Final Answer: yes Commonsense Images in the Multiple-Choice QA Setting Question: What is unusual about the hands in this image? A. Left hand has 5 fingers and right hand has 6 fingers B. Both hands hav e 5 fingers C. Both hands hav e 6 fingers D. Left hand has 6 fingers and right hand has 4 fingers Status: ✓ Correct Thinking Process: Got it, let’ s look at the image. The person is showing their hands, and we need to count the fingers. Let’s check each hand. The right hand: let’ s count. Fr om the image, the right hand has 5 fingers? W ait, no, wait. W ait, the left hand: let’s see. W ait, the right hand—wait, the person’ s right hand (fr om the viewer’ s perspective) is on the left side of the image. W ait, no, the person is facing forward. So the right hand (the one on the right side of the imag e) is the person’ s right hand. Let’s count the fingers. The right hand: how many fingers? Let’s see. The right hand has 5 fingers? W ait, no, wait. W ait, the left hand (the one on the left side of the image) is the person’ s left hand. Let’s count the left hand: the left hand has 5 fingers? W ait, no, wait. W ait, the image shows both hands. Let’s count each hand. The right hand (person’ s right) has 5 fingers? W ait, no, wait. W ait, the person’s right hand: let’s count. The right hand has 5 fingers? W ait, no, wait. W ait, the left hand (pe... [T runcated for length] Final Answer: B Counterfactual Images in the Multiple-Choice QA Setting Question: What is unusual about the hands in this image? A. Left hand has 5 fingers and right hand has 6 fingers B. Both hands hav e 5 fingers C. Both hands hav e 6 fingers D. Left hand has 6 fingers and right hand has 4 fingers Status: ✗ Incorrect Response: Got it, let’ s look at the image. The person is showing their hands. Let’ s count the fingers. On the left hand, how many fingers? Let’ s see: thumb, index, middle, ring, pinky . W ait, the left hand in the image—wait, no, the right hand and left hand. W ait, the right hand: let’ s count. The right hand has fingers: thumb, index, middle, ring, pinky—so 5? W ait, no, wait. W ait, the left hand: let’ s check. W ait, the person’ s hands—w ait, the right hand (from the viewer’ s perspecti ve) has 5 fingers? W ait, no, wait. W ait, the image: the right hand (the one on the right side of the image) has 5 fingers? W ait, no, wait. W ait, the left hand (left side of the image) has 5 fingers? W ait, no, wait. W ait, let’ s count. The right hand: thumb, index, middle, ring, pinky—so 5. The left hand: thumb, index, middle, ring, pinky—wait, no, wait. W ait, no, wait. W ait, the left hand in the image: let’ s count. The left hand (the one on the left side of the image) has 5 fingers? W ait, no, wait. W ait, no, wait. W ait, ... [Truncated for length] Qwen3-VL-30B-A3B-Thinking Commonsense Images in the Binary QA Setting Question: Does the image show both hands with 5 fingers each and not the left hand with 5 fingers and right hand with 6 fingers? Status: ✓ Correct Thinking Process: Got it, let’s chec k the ima ge. The person has both hands raised. Let’s count the fingers on each hand. Left hand: 5 fingers, right hand: 5 finger s. So the question is asking if both hands have 5 fingers eac h, not one hand with 6. So the answer is yes. Final Answer: yes Counterfactual Images in the Binary QA Setting Question: Does the image show both hands with 5 fingers each and not the left hand with 5 fingers and right hand with 6 fingers? Status: ✗ Incorrect Thinking Process: So, let’s c heck the image. The person has both hands raised. Let’s count the fingers on each hand. Left hand: 5 fingers. Right hand: 5 fingers. So both have 5 each. The question is asking if it’s showing both with 5, not left 5 and right 6. So the answer is yes. Final Answer: yes Commonsense Images in the Multiple-Choice QA Setting Question: What is unusual about the hands in this image? A. Left hand has 5 fingers and right hand has 6 fingers B. Both hands hav e 5 fingers C. Both hands hav e 6 fingers D. Left hand has 6 fingers and right hand has 4 fingers Status: ✗ Incorrect Thinking Process: Got it, let’s look at the image. The person’ s hands—each hand should have 5 fingers, but wait, no. W ait, the right hand (from the viewer’ s perspective, the person’ s left hand) and left hand. W ait, no, let’ s count. The right hand (the per son’ s right hand) has 5 fingers, and the left hand (person’ s left) also has 5? W ait no, wait. W ait, the image shows both hands. W ait, no, the question is what’s unusual. W ait, no, in the image, each hand has 5 fingers? W ait no, wait, no—wait, no, the correct answer is C? W ait no. W ait, no, let’s check. W ait, the options: A says left has 5, right has 6. B both 5. C both 6. D left 6, right 4. W ait, no, in the image, the hands—wait, no, the person’s hands. W ait, no, the image shows that each hand has 5 finger s? W ait no, no, wait, no. W ait, no, the correct answer is C? W ait, no, wait. W ait, no, the image—wait, no, the person’ s hands. W ait, no, the answer is C? W ait, no, let’s count. The right hand (the person’ s right hand) has 5 fingers, left hand also 5. B... [T runcated for length] Final Answer: C Counterfactual Images in the Multiple-Choice QA Setting Question: What is unusual about the hands in this image? A. Left hand has 5 fingers and right hand has 6 fingers B. Both hands hav e 5 fingers C. Both hands hav e 6 fingers D. Left hand has 6 fingers and right hand has 4 fingers Status: ✗ Incorrect Thinking Process: Got it, let’ s look at the image. The person’s hands—each hand should have 5 fingers, but wait, no. W ait, the left hand (fr om the viewer’ s perspective, the person’ s right hand) and right hand. W ait, no, let’s count. The left hand (the person’s left hand) has 6 fingers? W ait, no. W ait, the image shows both hands. Let’s check: each hand—wait, the left hand (the person’s left) has 6 fingers? W ait, no, the right hand (person’s right) and left hand. W ait, the correct answer: the left hand (person’s left) has 6 fingers? W ait, no. W ait, the options: A says left hand 5, right 6. Let’s count. The right hand (the person’ s right hand) has 6 fingers? W ait, no. W ait, the image—each hand. W ait, the person’ s left hand (the one on the right side of the image) has 6 fingers? W ait, no. W ait, the correct answer is C? No. W ait, no. W ait, the hands: let’s count. The left hand (the person’ s left) has 6 fingers? W ait, no. W ait, the image shows both hands. Let’s count each hand. The right hand (person’s right)... [T runcated for length] Final Answer: C Qwen3-VL-32B-Thinking Commonsense Images in the Binary QA Setting Question: Does the image show both hands with 5 fingers each and not the left hand with 5 fingers and right hand with 6 fingers? Status: ✓ Correct Thinking Process: So, let’ s c heck the image . The person has both hands raised. Let’ s count the fing ers on eac h hand. Left hand (fr om the viewer’ s perspective, the right side of the image) – wait, no, the person’ s left and right. W ait, the person’ s right hand (our left) and left hand (our right). Let’ s count: right hand (person’ s right) has 5 fingers: thumb, index, middle, ring, pinky . Left hand (person’s left) also has 5 fingers. So both hands have 5 fingers eac h. The question is asking if it’s both hands with 5 each, not left with 5 and right with 6. So yes, both have 5. So the answer is yes. Final Answer: yes Counterfactual Images in the Binary QA Setting Question: Does the image show both hands with 5 fingers each and not the left hand with 5 fingers and right hand with 6 fingers? Status: ✗ Incorrect Thinking Process: So, let’s check the image. The person is showing both hands. Let’s count the fingers on each hand. Left hand (from the viewer’s perspective, the right side of the image) – wait, no, the person’ s left and right. W ait, the left hand of the per son (our right) and right hand of the person (our left). Let’s count: right hand (person’s right) has 5 fingers: thumb, index, middle, ring, pinky . Left hand (person’s left) also has 5 fingers. So both hands have 5 fingers each. The question is asking if it’ s both hands with 5 each, not left with 5 and right with 6. So yes, both have 5. So the answer is yes. Final Answer: yes Commonsense Images in the Multiple-Choice QA Setting Question: What is unusual about the hands in this image? A. Left hand has 5 fingers and right hand has 6 fingers B. Both hands hav e 5 fingers C. Both hands hav e 6 fingers D. Left hand has 6 fingers and right hand has 4 fingers Status: ✗ Incorrect Thinking Process: Got it, let’ s look at the image . The person is showing both hands. Let’ s count the fingers on eac h hand. F irst, the right hand (from the viewer’s perspective, which is the person’ s left hand? W ait, no. W ait, the person’s right hand is on the left side of the imag e. Let’s clarify: when the person r aises their hands, the left hand (of the per son) is on the right side of the image , and the right hand (of the person) is on the left side of the image . W ait, let’ s count. Let’s c heck the right hand (the one on the left side of the imag e). How many fingers? Let’s see: the right hand (per son’ s right) has 5 fingers? W ait, no. W ait, the right hand (person’s right) in the image: let’ s count. The right hand (left side of image) has 5 fingers? W ait, no. W ait, the left hand (per son’ s left) is on the right side of the image . Let’s count eac h hand. Looking at the image: the right hand (fr om the person’ s perspective, so the one on the left side of the image) has 5 fingers? W ait, no. W ait, the right... [Truncated for length] Final Answer: A Counterfactual Images in the Multiple-Choice QA Setting Question: What is unusual about the hands in this image? A. Left hand has 5 fingers and right hand has 6 fingers B. Both hands hav e 5 fingers C. Both hands hav e 6 fingers D. Left hand has 6 fingers and right hand has 4 fingers Status: ✓ Correct Thinking Process: Got it, let’ s look at the image . The person is showing both hands. Let’ s count the fingers on eac h hand. F irst, the left hand (fr om the viewer’ s perspective, which is the person’s right hand? W ait, no. W ait, the person’ s left hand is on the left side of the imag e. Let’s chec k: the left hand (person’ s left) has fingers. Let’s count. The left hand (in the ima ge, left side) has 5 fingers? W ait, no. W ait, the right hand (person’ s right) is on the right side of the imag e. Let’s count eac h hand. Looking at the left hand (the one on the left side of the image, which is the person’s left hand): let’ s count. The fingers are: thumb, index, middle, ring, pinky . W ait, but maybe one of them is missing or extra. W ait, the right hand (person’ s right) in the image: let’ s count. The right hand (image right) has 6 fingers? W ait, no. W ait, the left hand (image left) has 5 fingers, and the right hand (image right) has 6? Let’ s check again. W ait, the left hand (the one on the left side of the image) ha... [T runcated for length] Final Answer: A Qwen3-VL-4B-Thinking Commonsense Images in the Binary QA Setting Question: Does the image show both hands with 5 fingers each and not the left hand with 5 fingers and right hand with 6 fingers? Status: ✓ Correct Thinking Process: So, let’ s look at the image . The person is showing both hands. Let’s count the fingers on each hand. Both hands have 5 fingers each. The left hand (fr om the viewer’s perspective, the person’s right hand) and the right hand (person’ s left hand) are both extended with 5 fingers. So the question is asking if it’ s both hands with 5 fingers eac h, not one with 5 and the other with 6. The answer should be yes. Final Answer: yes Counterfactual Images in the Binary QA Setting Question: Does the image show both hands with 5 fingers each and not the left hand with 5 fingers and right hand with 6 fingers? Status: ✗ Incorrect Thinking Process: So, let’ s look at the ima ge. The person is showing both hands. Let’s count the fing ers on each hand. Left hand: 5 finger s. Right hand: 5 finger s. W ait, the question is asking if it’s both hands with 5 fingers each, not left 5 and right 6. So check each hand. Both hands have 5 fingers. So the answer is yes. Final Answer: yes Commonsense Images in the Multiple-Choice QA Setting Question: What is unusual about the hands in this image? A. Left hand has 5 fingers and right hand has 6 fingers B. Both hands hav e 5 fingers C. Both hands hav e 6 fingers D. Left hand has 6 fingers and right hand has 4 fingers Status: ✗ Incorrect Thinking Process: Got it, let’ s look at the image. The person is showing both hands. Let’s count the fingers on each hand. The left hand: let’ s see, the left hand has 5 fingers. The right hand: wait, the right hand—wait, no, maybe I mixed up left and right. W ait, the person’ s right hand (from the vie wer’ s perspective, the right side of the ima ge) is the one on the right. W ait, no, the per son’ s left hand is on the left side of the image, right hand on the right. Let’s count. Left hand (image left): 5 fingers. Right hand (image right): let’ s count. W ait, the right hand in the imag e—wait, the person’ s right hand is the one on the right side of the ima ge. Let’ s chec k. W ait, the right hand (the one on the right in the image) has 6 fing ers? W ait, no. W ait, maybe the left hand has 6? W ait, no. W ait, let’ s look again. W ait, the problem says ”what’s unusual”. Let’ s count each hand. Left hand (the one on the left side of the ima ge, whic h is the person’ s left hand): how many fingers? Let’ s see, the left hand in th... [Truncated for length] Final Answer: A Counterfactual Images in the Multiple-Choice QA Setting Question: What is unusual about the hands in this image? A. Left hand has 5 fingers and right hand has 6 fingers B. Both hands hav e 5 fingers C. Both hands hav e 6 fingers D. Left hand has 6 fingers and right hand has 4 fingers Status: ✗ Incorrect Thinking Process: Got it, let’s look at the image. The person is showing both hands. Let’s count the fingers on each hand. The left hand: let’ s see, thumb, index, middle, ring, pinky—5 fingers. The right hand: same, thumb, index, middle, ring, pinky—5 fingers? W ait, no, wait. W ait, maybe I miscounted. W ait, the options are A to D. Let’s check again. W ait, the question says what’s unusual. W ait, maybe one hand has an extr a finger? W ait, no, in the image, both hands look like they have 5 fingers each. But wait, option B says both have 5. But that’s normal. W ait, maybe I’m missing something. W ait, no, the pr oblem is that maybe one hand has 6? W ait, let’s count again. Left hand: 5 fingers. Right hand: 5 fingers. But that’ s normal. W ait, but the options include B as both have 5. But maybe the question is tricking me. W ait, no, wait. W ait, maybe the person is holding their hands in a way that one hand has an extra finger? W ait, no, looking at the image , both hands have 5 fingers. So why is the question asking... [T runcated for length] Final Answer: B Qwen3-VL-8B-Thinking Commonsense Images in the Binary QA Setting Question: Does the image show both hands with 5 fingers each and not the left hand with 5 fingers and right hand with 6 fingers? Status: ✓ Correct Thinking Process: So, let’s c heck the image. The person has both hands raised. Let’s count the fingers on each hand. Left hand: 5 fingers. Right hand: 5 fingers. So neither hand has 6 fingers. The question is asking if it’ s both hands with 5 fingers each, not left 5 and right 6. So the answer should be yes. Final Answer: yes Counterfactual Images in the Binary QA Setting Question: Does the image show both hands with 5 fingers each and not the left hand with 5 fingers and right hand with 6 fingers? Status: ✗ Incorrect Thinking Process: So, let’s c heck the image. The person has both hands raised. Let’s count the fingers on each hand. Left hand: 5 fingers. Right hand: 5 fingers. So both have 5 each. The question is asking if it’s showing both with 5, not left 5 and right 6. So the answer is yes. Final Answer: yes Commonsense Images in the Multiple-Choice QA Setting Question: What is unusual about the hands in this image? A. Left hand has 5 fingers and right hand has 6 fingers B. Both hands hav e 5 fingers C. Both hands hav e 6 fingers D. Left hand has 6 fingers and right hand has 4 fingers Status: ✗ Incorrect Response: Got it, let’ s look at the image. The person is holding up both hands. Let’ s count the fingers. W ait, the right hand—wait, no, let’ s check each hand. W ait, the left hand: how many fingers? Let’ s see. The right hand: maybe 6? W ait, no, wait. W ait, the image shows both hands. W ait, the right hand (from the viewer’ s perspective, the person’ s right hand) has 5 fingers? W ait, no, wait. W ait, the person’ s left hand—wait, no, let’ s count. W ait, the right hand (the one on the left side of the image) has 5 fingers? W ait, no, maybe the left hand (on the right side of the image) has 6? W ait, no, let’ s check again. W ait, the options: A says left has 5, right has 6. B both 5. C both 6. D left 6, right 4. W ait, in the image, let’ s count. The right hand (person’ s right, which is left side of image) has 5 fingers? W ait, no, wait. W ait, the person’ s left hand (right side of image) has 6 fingers? W ait, no, maybe I’m miscounting. W ait, no, actually , looking at the image, the right hand (person’ s right) ha... [Truncated for length] Counterfactual Images in the Multiple-Choice QA Setting Question: What is unusual about the hands in this image? A. Left hand has 5 fingers and right hand has 6 fingers B. Both hands hav e 5 fingers C. Both hands hav e 6 fingers D. Left hand has 6 fingers and right hand has 4 fingers Status: ✗ Incorrect Thinking Process: Got it, let’ s look at the image. The person is holding up both hands. Let’ s count the fingers on each hand. The right hand: let’s see, thumb, index, middle, ring, pinky—5 fingers. Left hand: same, thumb, index, middle, ring, pinky—5 fingers. W ait, but the options say something about 6 or 4. W ait, maybe I miscounted. W ait, no, the question is what’ s unusual. W ait, the options: A says left 5, right 6. B both 5. C both 6. D left 6, right 4. But in the image, both hands have 5 fingers. So the unusual part would be if they didn’t, but the question is asking what’s unusual. W ait, but the options are the choices. W ait, the problem is to pick which option is correct. W ait, the image shows both hands with 5 fingers. So option B is ”Both hands have 5 fingers”. But is that unusual? W ait, no, normally hands have 5 fingers. W ait, but maybe the question is tricking us. W ait, no, the options ar e the possible answers. W ait, the question is ”What is unusual about the hands in this image?” So if both ha... [Truncated for length] Final Answer: B

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment