SARL: Label-Free Reinforcement Learning by Rewarding Reasoning Topology

Reinforcement learning has become central to improving large reasoning models, but its success still relies heavily on verifiable rewards or labeled supervision. This limits its applicability to open ended domains where correctness is ambiguous and c…

Authors: Yifan Wang, Bolian Li, David Cho

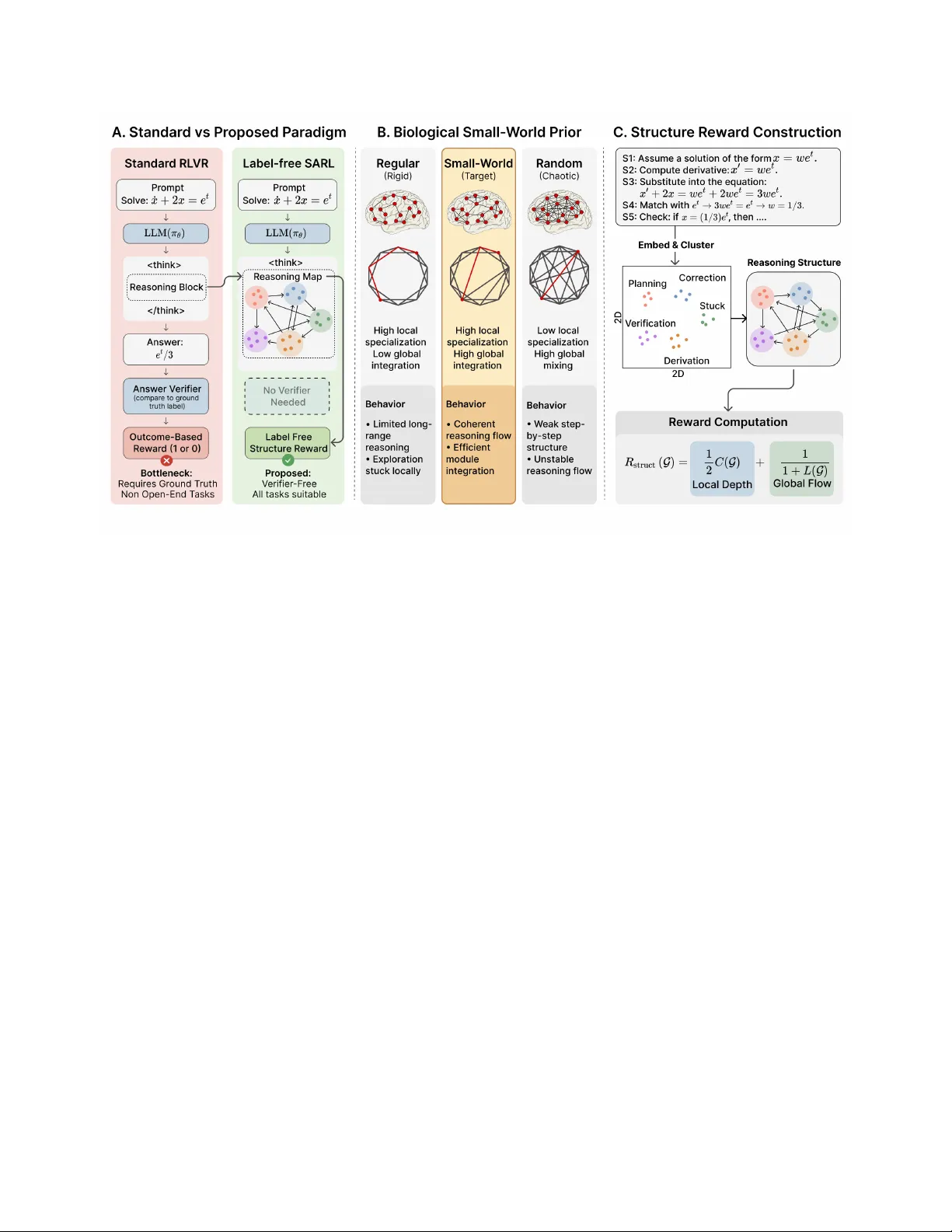

Yifan Wang¹, Bolian Li¹, David Cho¹, Ruqi Zhang¹, Fanping Sui², Ananth Grama¹
¹Purdue University, ²Texas Instruments

Reinforcement learning has become central to improving large reasoning models, but its success still relies heavily on verifiable rewards or labeled supervision. This limits its applicability to open-ended domains where correctness is ambiguous and cannot be verified. Moreover, reasoning trajectories remain largely unconstrained, and optimization toward the final answer can favor early exploitation over generalization. In this work, we ask whether general reasoning ability can be improved by teaching models how to think (the structure of reasoning) rather than what to produce (the outcome of reasoning), and we extend traditional RLVR to open-ended settings. We introduce structure-aware reinforcement learning (SARL), a label-free framework that constructs a per-response Reasoning Map from intermediate thinking steps and rewards its small-world topology, inspired by complex networks and the functional organization of the human brain. SARL encourages reasoning trajectories that are both locally coherent and globally efficient, shifting supervision from destination to path. Our experiments on Qwen3-4B show that SARL surpasses ground-truth-based RL and prior label-free RL baselines, achieving the best average gain of 9.1% under PPO and 11.6% under GRPO on math tasks and 34.6% under PPO and 30.4% under GRPO on open-ended tasks. Beyond strong performance, SARL also exhibits lower KL divergence and higher policy entropy, indicating more stable and exploratory training and more general reasoning ability.
Keywords: Structure-aware reinforcement learning, label-free RLVR, LLM post-training

Date: 2026-03-29
Code: https://github.com/cacayaya/SARL
Contact: wang5617@purdue.edu

1 Introduction

Recently, Large Reasoning Models (LRMs) have demonstrated transformative capabilities in tackling complex, reasoning-intensive tasks, especially in mathematics and code generation [5, 29]. Central to this progress is the Chain-of-Thought (CoT) paradigm, where models generate explicit, step-by-step reasoning steps before producing a final answer [27, 25]. These reasoning capabilities are typically catalyzed by supervised fine-tuning (SFT) and, more crucially, by reinforcement learning (RL) [15, 18]. By rewarding correct final outcomes, RL allows models to autonomously explore and refine their internal logic, leading to the emergence of sophisticated “thinking” behaviors [10, 23].

However, the current success of reasoning models is largely tethered to the Reinforcement Learning from Verifiable Rewards (RLVR) framework [5, 18]. RLVR relies heavily on tasks with objective ground truths and automated verifiers to provide reward signals. This dependency creates a “bottleneck of verifiability”: in domains where ground truth is ambiguous, expensive to label, or non-existent, such as open-ended strategic planning or complex philosophical inquiry, the RL signal largely vanishes. Furthermore, current reward modeling predominantly focuses on outcome-level rewards, which treat the reasoning process as a “black box” and offer limited control over intermediate steps. While Process Reward Models (PRMs) [10, 13, 7] have been introduced to provide more granular feedback, they are still constrained by the cost of step-level supervision and by limited scalability to tasks without clear, rule-based verification.
Moreover, PRMs still largely optimize reasoning through its contribution to final-answer correctness, offering limited control over how intermediate thoughts are structured or organized. This raises a central question: Can complex reasoning be optimized not through supervision derived from ground-truth answers, but by directly shaping how the model thinks?

A compelling answer to this question lies in the macro-scale functional architecture of the human brain [20]. Neuroscientific evidence suggests that human high-level cognition is facilitated by the small-world organization of the brain’s connectome [3]. This topology is characterized by dense local clusters responsible for specialized information processing and a few strategic long-range shortcuts that minimize the path length between disparate functional modules [26]. Such a structure allows the brain to achieve an optimal balance between functional segregation and global integration, which enables efficient relational reasoning and creative insight even in the absence of external feedback [24]. In this biological framework, a thinking process is essentially a traversal across this small-world network, and the quality of reasoning is determined by its navigational efficiency and topological coherence [17]. Inspired by this architecture, we hypothesize that reasoning models can benefit from structural priors that encourage more efficient exploration in latent conceptual space.

In this work, we introduce Structure-Aware Reinforcement Learning (SARL), a label-free training framework that improves reasoning by optimizing the topology of the reasoning process itself rather than relying on ground-truth labels. SARL constructs a per-response Reasoning Map from intermediate thinking steps and rewards its small-world structure, encouraging reasoning trajectories that are both locally coherent and globally efficient.
Our main contributions are as follows:

• We propose SARL, a label-free RL framework that optimizes the structure of reasoning rather than its outcome, by rewarding the small-world topology of per-response Reasoning Maps.
• We show that SARL is effective in both mathematical and open-ended reasoning, outperforming prior label-free baselines and matching or surpassing ground-truth-based RL in several settings.
• We find that SARL induces a more stable training regime, with lower policy drift and higher entropy, indicating more exploration and better generalization ability.

2 Related Work

Reasoning Structure in Human Cognition and the Topology of LRM Reasoning. The human brain’s connectome exhibits a small-world topology [26, 3]: dense local clustering within specialized functional modules coexists with short global path lengths across the entire network, enabling simultaneous functional segregation and rapid cross-module integration [24]. This structural property is not merely architectural; it underlies the brain’s capacity to navigate abstract cognitive spaces efficiently, coordinating between specialized processing units at minimal energetic cost [17, 20].

Recent empirical work has begun uncovering analogous structural signatures in Large Reasoning Models (LRMs). Minegishi et al. [14] extract reasoning graphs from the hidden-state trajectories of LRMs and find that distilled reasoning models exhibit substantially higher cyclicity, diameter, and small-world index than base models, with these structural advantages scaling with task difficulty and correlating positively with accuracy. From a geometric perspective, Zhou et al. [33] model chain-of-thought reasoning as smooth flows in the model’s representation space, showing that logical transitions induce structured geometric trajectories governed by the semantics of intermediate propositions. At the level of trace analysis, Feng et al. [4] introduce graph-based structural metrics for CoT traces and demonstrate that the fraction of abandoned reasoning branches, rather than trace length or self-review frequency, is the strongest predictor of correctness, providing causal evidence that structural organization shapes reasoning outcomes. Tan et al. [21] further show that topological data analysis features of reasoning traces carry substantially higher predictive power for reasoning quality than standard graph-connectivity metrics. Collectively, these studies establish a robust empirical link between the structural organization of reasoning trajectories and reasoning quality. Our work takes the natural next step: rather than analyzing these structural properties post hoc, we optimize the small-world topology of a reasoning graph built from thinking steps, using it as a direct intrinsic signal of reasoning quality.

Figure 1: Overview of Structure-Aware Reinforcement Learning (SARL). Left: SARL replaces outcome-based supervision with a label-free structure reward computed from the model’s reasoning process. Middle: the reward is motivated by the small-world prior, which balances local specialization and global integration. Right: construction of the SARL Reasoning Map and Structure Reward.

Label-Free RLVR. Reinforcement Learning from Verifiable Rewards (RLVR) has emerged as the dominant post-training paradigm for LRMs [5], using binary outcome rewards in domains, primarily mathematics and code, where correctness can be automatically verified. Algorithms such as GRPO [18] enable stable, scalable RL training; yet RLVR is fundamentally constrained to verifiable tasks. In open-ended domains such as creative writing, strategic planning, or advisory reasoning, no automated verifier exists and the RL signal vanishes entirely. Alternative label-free approaches have emerged to enable RLVR without ground-truth annotations.
TTRL [34] derives reward signals from majority-vote consistency across multiple rollouts, treating the consensus answer as a pseudo-label. While effective on closed-form reasoning tasks, this voting-based binary reward is ill-suited for open-ended generation where no single canonical answer exists. Entropy-based methods offer a more general alternative: EMPO [30] and concurrent work [1] minimize the predictive entropy of model outputs in semantic space, using the model’s own uncertainty as the sole training signal without requiring any labeled data. More directly targeting the open-ended setting, Intuitor [31] introduces Reinforcement Learning from Internal Feedback (RLIF), where the model’s self-certainty scores serve as reward signals within a GRPO framework, demonstrating generalization to diverse out-of-domain tasks such as code generation where verifiable external rewards are unavailable. Other work uses output format compliance and response length as surrogate rewards [28], limiting applicability to math tasks with prescribed answer formats. In contrast to these methods, our approach derives the label-free reward signal from the structure of the model’s reasoning process, and it applies not only to highly verifiable tasks such as math but also to open-ended, complex tasks in broader real-world reasoning settings. Table 1 summarizes the key differences between these label-free approaches.

Table 1: Comparison of label-free RL methods. “Algorithm-agnostic” indicates whether the reward can be used beyond some specific RL methods (e.g., not limited to GxPO).

| Method | Reward Signal | Algorithm-agnostic | Open-ended | Verifiable |
|---|---|---|---|---|
| EMPO [30] | Entropy minimization | ✗ | ✓ | ✓ |
| TTRL [34] | Majority voting | ✗ | ✗ | ✓ |
| Format & Length [28] | Format + length | ✓ | ✗ | ✓ |
| SARL (Ours) | Reasoning Structure | ✓ | ✓ | ✓ |

3 Method

SARL replaces outcome-based supervision with structural supervision on the reasoning process itself.
Given a generated trajectory, SARL constructs a per-response Reasoning Map from the intermediate thinking steps and assigns a reward according to its small-world topology. Intuitively, SARL favors reasoning traces that are locally coherent within functional subproblems while remaining globally well connected across different reasoning modes. This section describes the training objective, the construction of the Reasoning Map, and the resulting reward.

3.1 Problem Formulation

Let $x$ denote an input question and let $\pi_\theta$ be a language model policy parameterized by $\theta$. Following the chain-of-thought paradigm, the model produces a trajectory $\tau = (s_1, s_2, \dots, s_T, a)$, where $s_1, \dots, s_T$ are intermediate reasoning steps inside the `<think>` block and $a$ is the final answer. Standard reinforcement learning from verifiable rewards optimizes an outcome-level objective of the form

$$\max_{\theta} \; \mathbb{E}_{x \sim \mathcal{D},\, \tau \sim \pi_\theta(\cdot \mid x)} \big[ \mathbb{1}[a = y^*] \big], \tag{1}$$

where $y^*$ is a ground-truth answer. While effective in verifiable domains, Eq. (1) depends on access to labels and provides no direct signal about the quality of the reasoning trace itself.

SARL replaces the outcome reward with a structural reward computed from the generated reasoning trajectory. For each rollout, we build a Reasoning Map $\mathcal{G}(\tau) = (\mathcal{V}, \mathcal{E})$ whose nodes represent latent reasoning types and whose edges represent transitions between them. The SARL objective is

$$\max_{\theta} \; \mathbb{E}_{x \sim \mathcal{D},\, \tau \sim \pi_\theta(\cdot \mid x)} \big[ \mathrm{SR}\big(\mathcal{G}(\tau)\big) \big], \tag{2}$$

where SR denotes the Structure Reward and measures the small-world organization of the resulting graph. Crucially, Eq. (2) does not rely on $y^*$, allowing the policy to be trained even when correctness labels are unavailable or expensive to obtain.

3.2 Structure-Aware Reinforcement Learning

We next present the overall SARL pipeline, from Reasoning Map construction (§3.2.1) to the definition of the Structure Reward (SR) (§3.2.2). The full pipeline is summarized in Algorithm 1.
Algorithm 1 Structure-Aware Reinforcement Learning (label-free)
Require: Policy $\pi_\theta$, embedding model $\mathcal{M}$, training set $\mathcal{D}$, RL algorithm $\mathcal{A}$, rollouts per question $G$
1: for each training iteration do
2:   Sample batch $\{x_i\}$ from $\mathcal{D}$
3:   for each question $x_i$ do
4:     Generate $G$ trajectories $\{\tau_i^{(g)}\}_{g=1}^{G} \sim \pi_\theta(\cdot \mid x_i)$
5:     for each trajectory $\tau_i^{(g)}$ do
6:       Extract reasoning steps $\{s_t\}$ from the `<think>` block
7:       Compute step embeddings $\{e_t\}$ and cluster them into nodes $\mathcal{V}$ with assignments $\{z_t\}$
8:       Build $\mathcal{G}(\tau_i^{(g)})$ from transitions $\{(v_{z_t}, v_{z_{t+1}}) : z_t \neq z_{t+1}\}$
9:       Compute $r_i^{(g)} \leftarrow \mathrm{SR}\big(\mathcal{G}(\tau_i^{(g)})\big)$
10:    end for
11:  end for
12:  Update $\theta \leftarrow \mathcal{A}\big(\theta, \{(\tau_i^{(g)}, r_i^{(g)})\}\big)$
13: end for

3.2.1 Reasoning Map Construction

Unlike corpus-level graph analyses that aggregate traces across many examples, SARL constructs a separate Reasoning Map for each generated response. This design makes the reward fully online and compatible with standard policy optimization.

Step Extraction. We first extract the content of the `<think>` block and split it into reasoning steps at newline boundaries. This choice provides a practical middle ground: token-level segmentation is too fine and tends to produce degenerate dense graphs, while paragraph-level segmentation is too coarse and can merge distinct operations into a single unit.

Step Embedding. Each step $s_t$ is mapped to a unit-normalized embedding $e_t \in \mathbb{R}^d$,

$$e_t = \frac{\mathcal{M}(s_t)}{\lVert \mathcal{M}(s_t) \rVert_2},$$

where $\mathcal{M}$ is a text embedding model. In practice, we compute these embeddings with a lightweight external encoder so that reward construction does not interfere with the main rollout engine. Implementation details are provided in Appendix A.1.

Latent reasoning types. Individual steps may differ in wording while still serving the same functional role. For example, two algebraic manipulations may appear lexically different but correspond to the same reasoning type.
SARL captures this structure by clustering the step embeddings $\{e_t\}_{t=1}^{T}$ into $K$ latent reasoning types,

$$\mathcal{V} = \{v_1, v_2, \dots, v_K\}. \tag{3}$$

Each node $v_k$ corresponds to one cluster of semantically similar steps, and we use $z_t \in \{1, \dots, K\}$ to denote the cluster assignment of step $s_t$. Clustering is performed independently for each response. We consider both KMeans and HDBSCAN for this step; the specific settings and the regimes in which each choice is more suitable are discussed in Appendix A.2.

Reasoning transitions. The edge set $\mathcal{E}$ captures transitions between reasoning types. We add an undirected edge $(v_i, v_j) \in \mathcal{E}$ whenever two consecutive steps $s_t$ and $s_{t+1}$ belong to different clusters, namely when $z_t = i \neq j = z_{t+1}$. Repeated transitions between the same pair of reasoning types contribute only one edge. We use an undirected graph because the reward is intended to measure structural organization and connectivity rather than directional control flow.

3.2.2 Structure Reward (SR)

Small-world prior. SARL is motivated by the observation that effective reasoning should balance local specialization with global integration. In graph terms, this suggests two desirable properties: high local clustering, which reflects coherent functional substructures, and short global path lengths, which reflect efficient transitions across different reasoning modes.

Let $\mathcal{N}(v_k)$ denote the neighbor set of node $v_k$, and let $\mathcal{V}_{\geq 2} = \{v_k \in \mathcal{V} : |\mathcal{N}(v_k)| \geq 2\}$ be the set of nontrivial nodes. We define the average clustering coefficient as

$$C(\mathcal{G}) = \frac{1}{|\mathcal{V}_{\geq 2}|} \sum_{v_k \in \mathcal{V}_{\geq 2}} \frac{\big|\{(v_i, v_j) \in \mathcal{E} : v_i, v_j \in \mathcal{N}(v_k)\}\big|}{|\mathcal{N}(v_k)|\,\big(|\mathcal{N}(v_k)| - 1\big)/2}, \tag{4}$$

which measures how strongly neighboring reasoning types form locally coherent modules.
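The graph construction and Eq. (4) can be sketched in a few lines of plain Python. This is a minimal illustration, not the authors' implementation: the function names are hypothetical, and the cluster assignments are taken as given rather than computed with KMeans or HDBSCAN. For completeness, the sketch also evaluates the path-length term and the combined reward that this subsection defines in Eqs. (5) and (6). It assumes Eq. (6) is read as one half of the sum of the clustering term and 1/(1 + L), and it assumes both terms default to 0 when they are undefined (no node with two neighbors, or no reachable pair); the text does not specify these edge cases.

```python
from collections import deque
from itertools import combinations


def build_reasoning_map(assignments):
    """Adjacency sets of the undirected Reasoning Map.

    assignments: cluster ids z_1..z_T, one per reasoning step.
    Consecutive steps in different clusters add one undirected edge;
    repeated transitions between the same pair add nothing new.
    """
    adj = {z: set() for z in assignments}
    for a, b in zip(assignments, assignments[1:]):
        if a != b:
            adj[a].add(b)
            adj[b].add(a)
    return adj


def average_clustering(adj):
    """Eq. (4): mean local clustering over nodes with >= 2 neighbors."""
    coeffs = []
    for nbrs in adj.values():
        k = len(nbrs)
        if k < 2:
            continue
        # Count edges that connect two neighbors of this node.
        links = sum(1 for u, v in combinations(nbrs, 2) if v in adj[u])
        coeffs.append(links / (k * (k - 1) / 2))
    # Assumption: 0.0 when no node has two neighbors.
    return sum(coeffs) / len(coeffs) if coeffs else 0.0


def average_path_length(adj):
    """Eq. (5): mean hop distance over reachable ordered pairs (BFS)."""
    total, pairs = 0, 0
    for src in adj:
        dist = {src: 0}
        queue = deque([src])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total += sum(dist.values())
        pairs += len(dist) - 1
    return total / pairs if pairs else None


def structure_reward(adj):
    """Eq. (6), read as 0.5 * (C + 1 / (1 + L)); this reading is an assumption."""
    path_len = average_path_length(adj)
    # Assumption: a graph with no reachable pair earns no global-flow credit.
    flow = 1.0 / (1.0 + path_len) if path_len is not None else 0.0
    return 0.5 * (average_clustering(adj) + flow)


# Five steps assigned to four clusters: edges 0-1, 1-2, 2-0, 0-3.
adj = build_reasoning_map([0, 1, 2, 0, 3])
print(round(structure_reward(adj), 4))  # 0.6032
```

In this toy trace the triangle 0-1-2 gives a clustering coefficient of 7/9, the average hop distance is 4/3, and the reward is 38/63. A trace that never leaves a single cluster yields a one-node graph and, under the stated assumptions, a reward of 0.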
We also define the average shortest path length over all reachable node pairs $\mathcal{P} = \{(v_i, v_j) : v_i \neq v_j,\ v_j \text{ reachable from } v_i\}$ as

$$L(\mathcal{G}) = \frac{1}{|\mathcal{P}|} \sum_{(v_i, v_j) \in \mathcal{P}} \delta(v_i, v_j), \tag{5}$$

where $\delta(v_i, v_j)$ denotes hop-count distance. Lower values of $L(\mathcal{G})$ indicate more efficient communication across distinct reasoning types.

Classical small-world indices compare a graph against a random reference graph, but they are not ideal as direct reinforcement learning rewards. In particular, the resulting score is unbounded and depends on a stochastic reference graph, which can increase reward variance and make training less stable. We therefore define the Structure Reward (SR) directly as a combination of the two desired structural properties:

$$\mathrm{SR}(\mathcal{G}) = \frac{1}{2}\Big( \underbrace{C(\mathcal{G})}_{\text{Local Depth}} + \underbrace{\frac{1}{1 + L(\mathcal{G})}}_{\text{Global Flow}} \Big). \tag{6}$$

As defined in Eq. (4) and Eq. (5), $C(\mathcal{G})$ captures local specialization, while $L(\mathcal{G})$ captures global efficiency. Together, the two terms discourage traces that collapse into a single reasoning mode or drift through long unstructured chains of transitions.

4 Experiments

4.1 Experimental Setup

Our experiments are designed to answer a key question: Can optimizing the structure of reasoning processes, rather than solely focusing on final outcomes, lead to good reasoning performance? Specifically, we investigate small-world topology as a structural prior for reasoning processes and evaluate its effectiveness on two different types of reasoning tasks: Verifiable Math Reasoning and Open-Ended Reasoning.

Models. We conduct all experiments using Qwen3-4B [29], a strong reasoning model that generates explicit chain-of-thought reasoning within `<think>` blocks. This reasoning format enables us to directly construct Reasoning Maps from intermediate reasoning traces, which are then used to compute the proposed Structure Reward.

Training Data.
We evaluate SARL under two distinct training regimes that cover both verifiable and non-verifiable reasoning domains.

• Verifiable reasoning (Math). We train on mathematical reasoning problems drawn from historical AIME competitions (1983–2024). These problems require multi-step symbolic reasoning and provide deterministic correctness signals, making them a standard benchmark for evaluating reasoning ability.
• Non-verifiable reasoning (Open-ended). We train on OpenRubrics-v2 [12], a large-scale preference dataset spanning diverse domains including creative writing, planning, coding, advice, and analytical reasoning. Unlike mathematical tasks, these problems lack explicit correctness labels, making them suitable for evaluating label-free training methods.

Dataset filtering and preparation details are provided in Appendix B.1.

Baselines. For the verifiable setting (Math Reasoning), we compare against two prominent label-free RL baselines: EMPO [30] and TTRL [34]. For the non-verifiable setting (Open-Ended Reasoning), we additionally compare against DPO [16] trained on preference data. TTRL is not applicable to open-ended reasoning because it requires guessing binary labels.

Training and Evaluation. All reinforcement learning experiments are implemented using the veRL framework [19]. Preference-based training with DPO is implemented using LlamaFactory [32]. Full training details, including hyperparameters and optimization settings, are provided in Appendix B.2. For evaluation, we assess mathematical reasoning performance on four benchmarks: MATH500 [6], AIME25 [2], AMC23 [8], and Minerva Math [9]. We adopt avg@8 as our primary evaluation metric, which computes the expected accuracy across multiple samples and provides a more stable assessment of model capability than single-run pass@1 scores.
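As a rough illustration of the avg@8 metric, the sketch below assumes it is computed as the per-problem mean correctness over k sampled responses, averaged across problems and reported as a percentage. The function name and input layout are hypothetical, and the exact evaluation code used in the experiments is not described here.

```python
def avg_at_k(correct):
    """Mean accuracy (in percent) over k samples per problem.

    correct: one list per problem, each holding k booleans that mark
    whether the corresponding sampled response was judged correct.
    """
    per_problem = [sum(samples) / len(samples) for samples in correct]
    return 100.0 * sum(per_problem) / len(per_problem)


# Two problems, eight samples each: one always solved, one solved half the time.
scores = [[True] * 8, [True] * 4 + [False] * 4]
print(avg_at_k(scores))  # 75.0
```

Averaging over several samples smooths out the sampling noise that a single pass@1 run would carry, which matters most on small benchmarks.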
For open-ended reasoning evaluation, we employ the WildBench Elo rating system and task-category macro scores from the WildBench leaderboard [11].

4.2 Verifiable Reasoning (Mathematical Tasks) Results

We first validate the effectiveness of Structure Reward in the well-established verifiable setting of mathematical reasoning, where RLVR methods are most commonly applied.

Structure reward closes the gap to ground-truth supervision. As shown in Table 2, across both optimization algorithms, Structure Reward achieves performance that matches or surpasses ground-truth RL despite requiring no correctness labels. Under GRPO, Structure Reward achieves the best performance among all label-free methods, reaching an average improvement of +7.65, outperforming EMPO (+6.94) and TTRL (+6.61), and even exceeding ground-truth RL (+7.15). Under PPO, Structure Reward achieves an improvement of +5.87, again surpassing ground-truth PPO training (+5.67). These results indicate that structural signals alone can provide sufficiently strong learning guidance to rival, and in some cases exceed, correctness-based supervision. Notably, unlike EMPO and TTRL, which rely on group-level optimization and are restricted to GRPO-style training, SARL generalizes across both PPO and GRPO frameworks. This algorithm-agnostic compatibility highlights its practical applicability across diverse reinforcement learning pipelines.

| Supervision | Method | AIME25 | AMC23 | MATH500 | Minerva | Avg (Δ) |
|---|---|---|---|---|---|---|
| | Base | 31.67 | 82.81 | 90.10 | 53.45 | 64.51 |
| Label-free RL | PPO w/ Structure Reward (Ours) | 42.92 | 85.00 | 92.53 | 61.08 | 70.38 (+5.87) |
| Ground-Truth RL (Oracle) | PPO w/ Ground-Truth | 41.67 | 86.56 | 92.75 | 59.74 | 70.18 (+5.67) |
| Label-free RL | GRPO w/ Entropy Minimization (EMPO) | 44.58 | 86.56 | 93.23 | 61.44 | 71.45 (+6.94) |
| Label-free RL | GRPO w/ Majority Voting (TTRL) | 42.91 | 86.56 | 93.15 | 61.86 | 71.12 (+6.61) |
| Label-free RL | GRPO w/ Structure Reward (Ours) | 45.83 | 87.50 | 93.30 | 61.99 | 72.16 (+7.65) |
| Ground-Truth RL (Oracle) | GRPO w/ Ground-Truth | 46.67 | 84.38 | 93.15 | 62.45 | 71.66 (+7.15) |

Table 2: Results on mathematical reasoning benchmarks. EMPO and TTRL rely on group-level optimization signals and are therefore only applicable to GRPO-style training, while Structure Reward is compatible with both PPO and GRPO.

The largest gains from Structure Reward training occur on AIME25, the most challenging benchmark, which requires long multi-step mathematical derivations. Under GRPO, performance improves from 31.67 to 45.83, corresponding to a gain of +14.16 points (+45% relative improvement). Such improvements are substantially larger than those observed on easier benchmarks such as MATH500 and AMC23, suggesting that structural constraints become increasingly beneficial as reasoning becomes more complex. This pattern is consistent with the small-world structural prior: complex problems require coordinated multi-phase reasoning, where local coherence and global connectivity must be balanced across long reasoning chains.

4.3 Non-Verifiable Reasoning (Open-Ended Tasks) Results

A core motivation of our work is to enable reinforcement learning beyond verifiable domains using label-free structural rewards. To test this, we apply SARL on OpenRubrics-v2 [12], a diverse open-ended QA dataset.

| Method | Creative | Planning | Math | Info | Code | WB Score (Δ) |
|---|---|---|---|---|---|---|
| Base | 51.01 | 36.23 | 16.35 | 48.71 | 14.72 | 29.91 |
| DPO | 51.16 | 37.82 | 17.06 | 48.37 | 14.34 | 30.34 (+0.43) |
| PPO w/ Structure Reward (Ours) | 57.05 | 45.87 | 27.70 | 53.47 | 30.05 | 40.26 (+10.35) |
| GRPO w/ Entropy Minimization (EMPO) | 51.01 | 36.11 | 17.62 | 45.69 | 12.92 | 29.20 (-0.71) |
| GRPO w/ Structure Reward (Ours) | 55.34 | 43.56 | 26.83 | 51.98 | 29.91 | 39.01 (+9.10) |

Table 3: WildBench evaluation results for open-ended reasoning training. Numbers in parentheses indicate absolute improvement over the base model.

Structure reward enables reinforcement learning beyond verifiable domains.
The most striking observation is that Structure Reward achieves substantial performance gains despite receiving no labels or preference signals. Under PPO, the WB Score improves from 29.91 to 40.26 (+10.35), while under GRPO it improves to 39.01 (+9.10). These improvements are significantly larger than those obtained by alternative training strategies, indicating that structural optimization alone can provide a sufficiently strong learning signal for open-ended reasoning.

Performance improvements are broad across diverse reasoning tasks. Structure Reward improves performance across all five task categories under both PPO and GRPO. Beyond notable improvements on Math and Coding tasks, which we have established benefit from structured reasoning, the most significant finding is the substantial gains in truly open-ended domains: Creative writing (+6.04 for PPO, +4.33 for GRPO), Planning (+9.64 and +7.33), and Info/Advice (+4.76 and +3.27). These domains are less formally structured and require flexible reasoning and knowledge synthesis. The consistent gains across such diverse categories indicate that the structural prior does not merely benefit mathematical reasoning, but generalizes to a wide range of open-ended cognitive tasks.

Comparison with applicable baselines in the open-ended setting. Our primary comparison focuses on methods that are genuinely label-free. However, TTRL [34] requires voting on binary labels, and format-based label-free rewards [28] only define math-specific output formats, so neither generalizes to open-ended tasks. We therefore compare primarily against EMPO [30], which can be extended to open-ended tasks by clustering multiple sampled responses in semantic space and using the resulting semantic entropy as the reward signal. We additionally report DPO [16] as a stronger reference that uses preference labels.
Even with this extra supervision, DPO yields only a marginal improvement (+0.43 WB Score), while EMPO slightly decreases performance (-0.71). In contrast, Structure Reward consistently delivers large gains, suggesting that the structural prior provides a more general and effective signal for open-ended tasks.

4.4 Analysis

Figure 2: Training dynamics of different methods (reward signals) under GRPO.

Training dynamics analysis. Figure 2 reveals a clear pattern: SARL achieves the strongest performance while inducing the smallest policy drift. Its KL divergence remains near zero throughout training, whereas TTRL and EMPO exhibit steadily increasing KL, indicating much larger deviation from the reference policy. This behavior reflects a common failure mode of label-free RL methods: excessive policy drift can destabilize optimization and eventually lead to model collapse.

At the same time, SARL maintains high policy entropy over most of training, indicating sustained exploration rather than early collapse to narrow reasoning patterns. In contrast, training directly on ground-truth rewards often drives entropy down quickly, suggesting premature exploitation that may hurt generalization. The central insight is that SARL improves reasoning through a stable optimization regime that combines minimal policy shift with relatively high exploration.

| Setting | Method | Avg Tokens | Δ vs. Base |
|---|---|---|---|
| Verifiable reasoning (Math) | Base | 5736.6 | 0.0 |
| Verifiable reasoning (Math) | PPO w/ Structure Reward | 5075.6 | -661.0 |
| Verifiable reasoning (Math) | GRPO w/ Structure Reward | 4587.8 | -1148.8 |
| Non-verifiable reasoning (Open-ended) | Base | 2677.91 | 0.00 |
| Non-verifiable reasoning (Open-ended) | PPO w/ Structure Reward | 2303.17 | -374.74 |
| Non-verifiable reasoning (Open-ended) | GRPO w/ Structure Reward | 2285.87 | -392.04 |

Table 4: Average response length comparison.

Length analysis. One possible concern is that the gains from Structure Reward may come simply from generating longer responses. Table 4 shows that this is not the case.
Structure Reward substantially reduces average response length in both open-ended and mathematical reasoning settings while achieving much higher performance. These results suggest that the benefit comes from producing more efficient and better organized reasoning traces rather than simply extending length. Full length comparisons against other baselines are provided in Appendix B.3.

5 Limitations

SARL is most effective for tasks that involve sufficiently long and complex reasoning processes. For very straightforward or simple tasks with only a few steps, the benefit of enforcing structural organization may be limited, as such tasks do not require complex reasoning. In addition, although the small-world structure proves effective across the benchmarks considered in this work, it may not always be the optimal structural prior for every domain. Different tasks may favor distinct reasoning structures, and more fine-grained or domain-specific structural priors could further improve performance. We emphasize that structure-aware training should be viewed as a general framework rather than a fixed design. Our results suggest that structural priors offer a promising direction for reinforcement learning in reasoning tasks, while leaving substantial room for future work to explore richer and more specialized structural priors tailored to different problem domains.

6 Conclusion

In this work, we introduced structure-aware reinforcement learning (SARL), a label-free framework that improves reasoning by rewarding the organization of the reasoning process rather than the correctness of final answers. By constructing Reasoning Maps and optimizing their small-world topology, SARL provides a scalable training signal that applies to both verifiable and non-verifiable tasks.
Empirically , SARL matches or surpasses ground truth based RL on mathematical reasoning benchmarks, outperforms existing label free baselines such as EMPO and TTRL, and delivers large gains on open ended W ildBench evaluation without using correctness labels or preference supervision. Our analysis further shows that these improvements are not driven by longer outputs, but by a more stable optimization regime with minimal policy drift and sustained exploration. T aken together , these results suggest that reasoning structure itself is a meaningful and general learning signal, opening a path toward reinforcement learning beyond the bottleneck of verifiability . 10 R eferences [1] Shivam Agarwal, Zimin Zhang, Lifan Y uan, Jiawei Han, and Hao P eng. The unreasonable effectiveness of entropy minimization in llm reasoning. arXiv preprint , 2025. [2] Mislav Balunovi ´ c, Jasper Dekoninck, Ivo P etrov , Nikola Jovanovi ´ c, and Martin V echev . Matharena: Evaluating llms on uncontaminated math competitions, F ebruary 2025. URL https://matharena. ai/ . [3] Danielle S Bassett and Edward T Bullmore. Small-world brain networks revisited. The Neuroscien- tist , 23(5):499–516, 2017. [4] Y unzhen F eng, Julia Kempe, Cheng Zhang, P arag Jain, and Anthony Hartshorn. What characterizes effective reasoning? revisiting length, review , and structure of cot. arXiv preprint , 2025. [5] Daya Guo, Dejian Y ang, Haowei Zhang, Junxiao Song, P eiyi W ang, Qihao Zhu, R unxin Xu, R uoyu Zhang, Shirong Ma, Xiao Bi, et al. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning. arXiv preprint , 2025. [6] Dan Hendrycks, Collin Burns, Saurav Kadavath, Akul Arora, Steven Basart, Eric T ang, Dawn Song, and Jacob Steinhardt. Measuring mathematical problem solving with the math dataset. arXiv preprint arXiv:2103.03874 , 2021. [7] Muhammad Khalifa, Rishabh Agarwal, Lajanugen Logeswaran, Jaekyeom Kim, Hao P eng, Moon- tae Lee, Honglak Lee, and Lu W ang. 
Process reward models that think. arXiv preprint arXiv:2504.16828, 2025.
[8] knoveleng. Amc-23. https://huggingface.co/datasets/knoveleng/AMC-23, 2025.
[9] Aitor Lewkowycz, Anders Andreassen, David Dohan, Ethan Dyer, Henryk Michalewski, Vinay Ramasesh, Ambrose Slone, Cem Anil, Imanol Schlag, Theo Gutman-Solo, et al. Solving quantitative reasoning problems with language models. Advances in neural information processing systems, 35:3843–3857, 2022.
[10] Hunter Lightman, Vineet Kosaraju, Yuri Burda, Harrison Edwards, Bowen Baker, Teddy Lee, Jan Leike, John Schulman, Ilya Sutskever, and Karl Cobbe. Let’s verify step by step. In The Twelfth International Conference on Learning Representations (ICLR), 2024.
[11] Bill Yuchen Lin, Yuntian Deng, Khyathi Chandu, Faeze Brahman, Abhilasha Ravichander, Valentina Pyatkin, Nouha Dziri, Ronan Le Bras, and Yejin Choi. Wildbench: Benchmarking llms with challenging tasks from real users in the wild. arXiv preprint, 2024.
[12] Tianci Liu, Ran Xu, Tony Yu, Ilgee Hong, Carl Yang, Tuo Zhao, and Haoyu Wang. Openrubrics: Towards scalable synthetic rubric generation for reward modeling and llm alignment. arXiv preprint arXiv:2510.07743, 2025.
[13] Qianli Ma, Haotian Zhou, Tingkai Liu, Jianbo Yuan, Pengfei Liu, Yang You, and Hongxia Yang. Let’s reward step by step: Step-level reward model as the navigators for reasoning. arXiv preprint arXiv:2310.10080, 2023.
[14] Gouki Minegishi, Hiroki Furuta, Takeshi Kojima, Yusuke Iwasawa, and Yutaka Matsuo. Topology of reasoning: Understanding large reasoning models through reasoning graph properties. arXiv preprint arXiv:2506.05744, 2025.
[15] Long Ouyang, Jeffrey Wu, Xu Jiang, Diogo Almeida, Carroll Wainwright, Pamela Mishkin, Chong Zhang, Sandhini Agarwal, Katarina Slama, Alex Ray, et al. Training language models to follow instructions with human feedback. Advances in neural information processing systems, 35:27730–27744, 2022.
[16] Rafael Rafailov, Archit Sharma, Eric Mitchell, Christopher D Manning, Stefano Ermon, and Chelsea Finn. Direct preference optimization: Your language model is secretly a reward model. Advances in neural information processing systems, 36:53728–53741, 2023.
[17] Caio Seguin, Martijn P Van Den Heuvel, and Andrew Zalesky. Navigation of brain networks. Proceedings of the National Academy of Sciences, 115(24):6297–6302, 2018.
[18] Zhihong Shao, Peiyi Wang, Qihao Zhu, Runxin Xu, Junxiao Song, Xiao Bi, Haowei Zhang, Mingchuan Zhang, YK Li, Yang Wu, et al. Deepseekmath: Pushing the limits of mathematical reasoning in open language models. arXiv preprint, 2024.
[19] Guangming Sheng, Chi Zhang, Zilingfeng Ye, Xibin Wu, Wang Zhang, Ru Zhang, Yanghua Peng, Haibin Lin, and Chuan Wu. Hybridflow: A flexible and efficient rlhf framework. arXiv preprint arXiv:2409.19256, 2024.
[20] Olaf Sporns. Networks of the Brain. MIT press, 2016.
[21] Xue Wen Tan, Nathaniel Tan, Galen Lee, and Stanley Kok. The shape of reasoning: Topological analysis of reasoning traces in large language models. arXiv preprint, 2025.
[22] Leandro von Werra, Younes Belkada, Lewis Tunstall, Edward Beeching, Tristan Thrush, Nathan Lambert, Shengyi Huang, Kashif Rasul, and Quentin Gallouédec. TRL: Transformers Reinforcement Learning, 2020. URL https://github.com/huggingface/trl.
[23] Peiyi Wang, Lei Li, Zhihong Shao, Runxin Xu, Damai Dai, Yifei Li, Deli Chen, Yu Wu, and Zhifang Sui. Math-shepherd: Verify and reinforce llms step-by-step without human annotations. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 9426–9439, 2024.
[24] Rong Wang, Mianxin Liu, Xinhong Cheng, Ying Wu, Andrea Hildebrandt, and Changsong Zhou. Segregation, integration, and balance of large-scale resting brain networks configure different cognitive abilities.
Proceedings of the National Academy of Sciences, 118(23):e2022288118, 2021.
[25] Xuezhi Wang, Jason Wei, Dale Schuurmans, Quoc Le, Ed Chi, Sharan Narang, Aakanksha Chowdhery, and Denny Zhou. Self-consistency improves chain of thought reasoning in language models. arXiv preprint arXiv:2203.11171, 2022.
[26] Duncan J Watts and Steven H Strogatz. Collective dynamics of ‘small-world’ networks. Nature, 393(6684):440–442, 1998.
[27] Jason Wei, Xuezhi Wang, Dale Schuurmans, Maarten Bosma, Fei Xia, Ed Chi, Quoc V Le, Denny Zhou, et al. Chain-of-thought prompting elicits reasoning in large language models. Advances in neural information processing systems, 35:24824–24837, 2022.
[28] Rihui Xin, Han Liu, Zecheng Wang, Yupeng Zhang, Dianbo Sui, Xiaolin Hu, and Bingning Wang. Surrogate signals from format and length: Reinforcement learning for solving mathematical problems without ground truth answers. arXiv preprint, 2025.
[29] An Yang, Anfeng Li, Baosong Yang, Beichen Zhang, Binyuan Hui, Bo Zheng, Bowen Yu, Chang Gao, Chengen Huang, Chenxu Lv, et al. Qwen3 technical report. arXiv preprint, 2025.
[30] Qingyang Zhang, Haitao Wu, Changqing Zhang, Peilin Zhao, and Yatao Bian. Right question is already half the answer: Fully unsupervised llm reasoning incentivization. arXiv preprint arXiv:2504.05812, 2025.
[31] Xuandong Zhao, Zhewei Kang, Aosong Feng, Sergey Levine, and Dawn Song. Learning to reason without external rewards. arXiv preprint, 2025.
[32] Yaowei Zheng, Richong Zhang, Junhao Zhang, Yanhan Ye, Zheyan Luo, Zhangchi Feng, and Yongqiang Ma. Llamafactory: Unified efficient fine-tuning of 100+ language models. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 3: System Demonstrations), Bangkok, Thailand, 2024. Association for Computational Linguistics. URL http://arxiv.org/abs/2403.13372.
[33] Yufa Zhou, Yixiao Wang, Xunjian Yin, Shuyan Zhou, and Anru R Zhang.
The geometry of reasoning: Flowing logics in representation space. arXiv preprint, 2025.
[34] Yuxin Zuo, Kaiyan Zhang, Li Sheng, Shang Qu, Ganqu Cui, Xuekai Zhu, Haozhan Li, Yuchen Zhang, Xinwei Long, Ermo Hua, et al. Ttrl: Test-time reinforcement learning. arXiv preprint arXiv:2504.16084, 2025.

A Implementation Details

A.1 Step embedding extraction

We provide two practical implementations for computing the step embeddings $e_t$ used in §3.

Implementation A (directly from the policy model hidden states during training). Given a generated trajectory $\tau = (s_0, \ldots, s_T)$, we run the policy model itself on the full sequence (or on per-step chunks) and extract the token hidden states from layer $\ell$. Thus, the step embedding can be obtained directly from the policy model, without requiring a separate embedding model. Let $L_t$ denote the number of tokens in step $s_t$. If $h^{\ell}_{t,j}$ is the hidden state of the $j$-th token in step $s_t$ at layer $\ell$, we define

$$e_t = \frac{1}{L_t} \sum_{j=1}^{L_t} h^{\ell}_{t,j}.$$

This implementation is straightforward during training, when the policy model’s hidden states are accessible, for example when using the Hugging Face TRL library [22] for RL training.

Implementation B (serving-style rollout in RL using vLLM / sglang). In many RL pipelines, rollouts are generated by high-throughput inference engines (e.g., vLLM or sglang). In practice, these serving stacks may not expose the current policy model’s intermediate hidden states. In that case, we can compute step embeddings by running a separate text embedding model on each step string (after grouping tokens into steps using the newline delimiter) and using the resulting sentence embedding as $e_t$. In our experiments we use Qwen/Qwen3-Embedding-0.6B as this separate embedding model.

Remarks.
Both implementations yield similar performance. Implementation B is preferred for efficiency: it is typically much faster than Implementation A because rollouts can be generated with high-throughput inference engines (e.g., vLLM or sglang).

A.2 Clustering details for functional nodes

We consider two practical choices for clustering step embeddings into latent reasoning types: KMeans and HDBSCAN.

KMeans. KMeans is well suited to settings where the number of reasoning steps is not very large and the step structure is relatively regular. In our experiments, we use KMeans for the mathematical reasoning setting, where trajectories often follow a more regular progression such as setup, derivation, verification, and answer extraction, and we find KMeans empirically stable and reproducible across runs. Since KMeans requires specifying the number of clusters, we use $k \approx \sqrt{M}$, where $M$ is the number of reasoning steps in the current response.

HDBSCAN. HDBSCAN is attractive when the step structure is more heterogeneous because it can infer the number of clusters automatically and can better capture non-spherical cluster geometry. In our experiments, we use HDBSCAN for the open-ended setting, where the reasoning patterns are more diverse across prompts. For HDBSCAN, we use Euclidean distance (metric=’euclidean’), i.e., $d(e, e') = \lVert e - e' \rVert_2$. We set min_cluster_size = max(2, min(5, M/4)) and min_samples = min_cluster_size − 1, where $M$ is the total number of step embeddings. Noise points (assigned label −1) are either grouped into a separate cluster or, if all points are noise, assigned to a default cluster.

B Experimental Details

B.1 Data Preparation

AIME Historical Problems (Math Setting). We use competition problems from the American Invitational Mathematics Examination (AIME) spanning 1983 to 2024, sourced from the publicly available AIME Problems 1983–2024 dataset on Kaggle.¹ This dataset contains around 1,000 problems in total.
Each problem is formatted as a single-turn prompt with the instruction “Let’s think step by step and output the final answer within \boxed{}.” appended to guide the model to solve the problem and box the final answer.

OpenRubrics-v2 (Open-Ended Setting). We use the OpenRubrics/OpenRubric-v2 dataset from Hugging Face,² which contains preference pairs across diverse task categories including creative writing, planning, coding, and advice. We use only the instruction field as the training prompt and apply the following filtering pipeline to select high-quality prompts. First, we perform response length filtering: since this dataset contains reference responses, we use response length as a proxy to identify questions that elicit sufficiently complex reasoning. We require both reference responses to fall within the token range [512, 4096]. Responses shorter than 512 tokens likely correspond to trivial questions that do not require deep reasoning, while prompts with responses longer than 4096 tokens are excluded to keep training efficient. This filtering ensures we select prompts that generate appropriately complex reasoning traces. Second, we deduplicate instructions (identified by hash) to prevent repeated training samples. After filtering, we retain around 3,000 samples.

B.2 Training Configuration

Training is implemented primarily with the verl library [19]; a TRL [22] version is also available. All experiments are conducted on a single node equipped with 8 NVIDIA A100 GPUs using Fully Sharded Data Parallel (FSDP2) for model parallelism. The Structure Reward requires step embeddings computed from each rollout’s block. To avoid interfering with the main policy rollout (which runs under vLLM), we launch a standalone embedding server using vllm.entrypoints.openai.api_server with the Qwen/Qwen3-Embedding-0.6B model on a dedicated port, consuming only 5% of one GPU’s memory.
The reward function queries this server via HTTP for each generated trajectory.

Tables 5 and 6 summarize the training hyperparameters used in our PPO and GRPO experiments, respectively. Across both algorithms, the Math and Open-Ended settings mainly differ in the training data, maximum response length, total epochs, and clustering method.

¹ https://www.kaggle.com/datasets/tourist800/aime-problems-1983-to-2024
² https://huggingface.co/datasets/OpenRubrics/OpenRubric-v2

| Hyperparameter | Math Setting | Open-Ended Setting |
|---|---|---|
| RL algorithm | PPO | PPO |
| Advantage estimator | GAE | GAE |
| Training data | AIME 1983–2024 | OpenRubrics-v2 |
| Base model | Qwen/Qwen3-4B | Qwen/Qwen3-4B |
| Rollout engine | vLLM | vLLM |
| Rollouts per prompt ($G$) | 8 | 8 |
| Train batch size | 256 | 256 |
| Policy mini batch size | 256 | 256 |
| Policy micro batch size / GPU | 32 | 32 |
| Critic micro batch size / GPU | 32 | 32 |
| Actor learning rate | $1 \times 10^{-6}$ | $1 \times 10^{-6}$ |
| Critic learning rate | $1 \times 10^{-5}$ | $1 \times 10^{-5}$ |
| Max prompt length | 1,024 tokens | 1,024 tokens |
| Max response length | 8,192 tokens | 4,096 tokens |
| Filter overlong prompts | True | True |
| Truncation mode | error | error |
| Data shuffle | False | False |
| KL loss coefficient | 0.001 | 0.001 |
| KL loss type | low_var_kl | low_var_kl |
| Entropy coefficient | 0 | 0 |
| Use KL in reward | False | False |
| Critic warmup | 0 | 0 |
| Total epochs | 15 | 5 |
| Save frequency | 20 | 20 |
| Test frequency | 5 | 5 |
| Parallelism strategy | FSDP2 | FSDP2 |
| Dynamic batch sizing | True | True |
| Gradient checkpointing | True | True |
| Activation offload | True | True |
| Rollout GPU memory utilization | 0.7 | 0.7 |
| Embedding model | Qwen/Qwen3-Embedding-0.6B | Qwen/Qwen3-Embedding-0.6B |
| Embedding server | standalone vLLM API server | standalone vLLM API server |
| Clustering method | KMeans | HDBSCAN |

Table 5: PPO training hyperparameters for the Math and Open-Ended settings.
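The clustering rules stated in A.2 (and selected per setting in the "Clustering method" row of the hyperparameter tables) can be sketched in plain Python. This is a minimal illustration, not the authors' implementation: the function names are hypothetical, rounding $\sqrt{M}$ to the nearest integer and taking the integer floor of $M/4$ are assumptions, and noise points are always placed in one extra cluster here, which is only one of the options the text allows.

```python
import math


def kmeans_num_clusters(num_steps: int) -> int:
    """Number of KMeans clusters, k ~ sqrt(M), clamped to [1, M].

    Rounding to the nearest integer is an assumption; the text only says k ~ sqrt(M).
    """
    return max(1, min(num_steps, round(math.sqrt(num_steps))))


def hdbscan_params(num_steps: int) -> dict:
    """HDBSCAN settings from A.2 for M step embeddings.

    min_cluster_size = max(2, min(5, M / 4)); the integer floor of M / 4 is an assumption.
    min_samples = min_cluster_size - 1.
    """
    min_cluster_size = max(2, min(5, num_steps // 4))
    return {
        "min_cluster_size": min_cluster_size,
        "min_samples": min_cluster_size - 1,
        "metric": "euclidean",
    }


def relabel_noise(labels: list) -> list:
    """Handle points labelled -1 (noise) by a density-based clusterer.

    If every point is noise, all points go to a default cluster 0.
    Otherwise noise points share one new cluster id after the largest real id.
    """
    if all(label == -1 for label in labels):
        return [0] * len(labels)
    noise_id = max(labels) + 1
    return [noise_id if label == -1 else label for label in labels]


if __name__ == "__main__":
    for m in (3, 16, 40):
        print(m, kmeans_num_clusters(m), hdbscan_params(m))
    print(relabel_noise([0, -1, 1, -1]))
```

The returned dictionary uses the parameter names given in A.2, so it could be passed as keyword arguments to an HDBSCAN implementation that accepts them; which library the authors used is not stated.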
| Hyperparameter | Math Setting | Open-Ended Setting |
|---|---|---|
| RL algorithm | GRPO | GRPO |
| Training data | AIME 1983–2024 | OpenRubrics-v2 |
| Base model | Qwen/Qwen3-4B | Qwen/Qwen3-4B |
| Rollout engine | vLLM | vLLM |
| Rollouts per prompt ($G$) | 8 | 8 |
| Train batch size | 256 | 256 |
| Policy mini batch size | 256 | 256 |
| Policy micro batch size / GPU | 32 | 32 |
| Actor learning rate | $1 \times 10^{-6}$ | $1 \times 10^{-6}$ |
| Max prompt length | 1,024 tokens | 1,024 tokens |
| Max response length | 8,192 tokens | 4,096 tokens |
| Filter overlong prompts | True | True |
| Truncation mode | error | error |
| Data shuffle | False | False |
| KL loss coefficient | 0.001 | 0.001 |
| KL loss type | low_var_kl | low_var_kl |
| Entropy coefficient | 0 | 0 |
| Use KL in reward | False | False |
| Critic warmup | 0 | 0 |
| Total epochs | 15 | 5 |
| Save frequency | 20 | 20 |
| Test frequency | 5 | 5 |
| Parallelism strategy | FSDP2 | FSDP2 |
| Dynamic batch sizing | True | True |
| Gradient checkpointing | True | True |
| Activation offload | True | True |
| Rollout GPU memory utilization | 0.7 | 0.7 |
| Embedding model | Qwen/Qwen3-Embedding-0.6B | Qwen/Qwen3-Embedding-0.6B |
| Embedding server | standalone vLLM API server | standalone vLLM API server |
| Clustering method | KMeans | HDBSCAN |

Table 6: GRPO training hyperparameters for the Math and Open-Ended settings.

B.3 Additional Length Analysis

For completeness, we report the full response length comparisons against all available baselines for both the open-ended and math settings.

| Method | Avg Tokens | Δ vs. Base |
|---|---|---|
| Base | 2677.91 | 0.00 |
| DPO | 2661.46 | -16.45 |
| EMPO | 2731.92 | +54.01 |
| PPO w/ Structure Reward | 2303.17 | -374.74 |
| GRPO w/ Structure Reward | 2285.87 | -392.04 |

Table 7: Full average response length comparison on WildBench.

| Method | AIME25 | AMC23 | MATH500 | Minerva | Avg | Δ vs. Base |
|---|---|---|---|---|---|---|
| Base | 7728.0 | 5884.1 | 4182.6 | 5151.7 | 5736.6 | 0.0 |
| PPO w/ Ground-Truth | 7047.7 | 4634.7 | 3124.9 | 4021.7 | 4707.3 | -1029.3 |
| PPO w/ Structure Reward | 7296.6 | 5069.4 | 3451.1 | 4485.4 | 5075.6 | -661.0 |
| GRPO w/ Ground-Truth | 6477.7 | 3956.6 | 2559.0 | 3143.8 | 4034.3 | -1702.3 |
| GRPO w/ Entropy Minimization (EMPO) | 6651.5 | 4058.6 | 2702.4 | 3327.9 | 4185.1 | -1551.5 |
| GRPO w/ Majority Voting (TTRL) | 6635.0 | 4057.8 | 2663.5 | 3258.5 | 4153.7 | -1582.9 |
| GRPO w/ Structure Reward | 6984.9 | 4506.9 | 3029.4 | 3829.9 | 4587.8 | -1148.8 |

Table 8: Full average response length comparison on mathematical reasoning benchmarks.
