Principal Prototype Analysis on Manifold for Interpretable Reinforcement Learning

Recent years have witnessed the widespread adoption of reinforcement learning (RL), from solving real-time games to fine-tuning large language models using human preference data, significantly improving alignment with user expectations. However, as mo…

Authors: Bodla Krishna Vamshi, Haizhao Yang

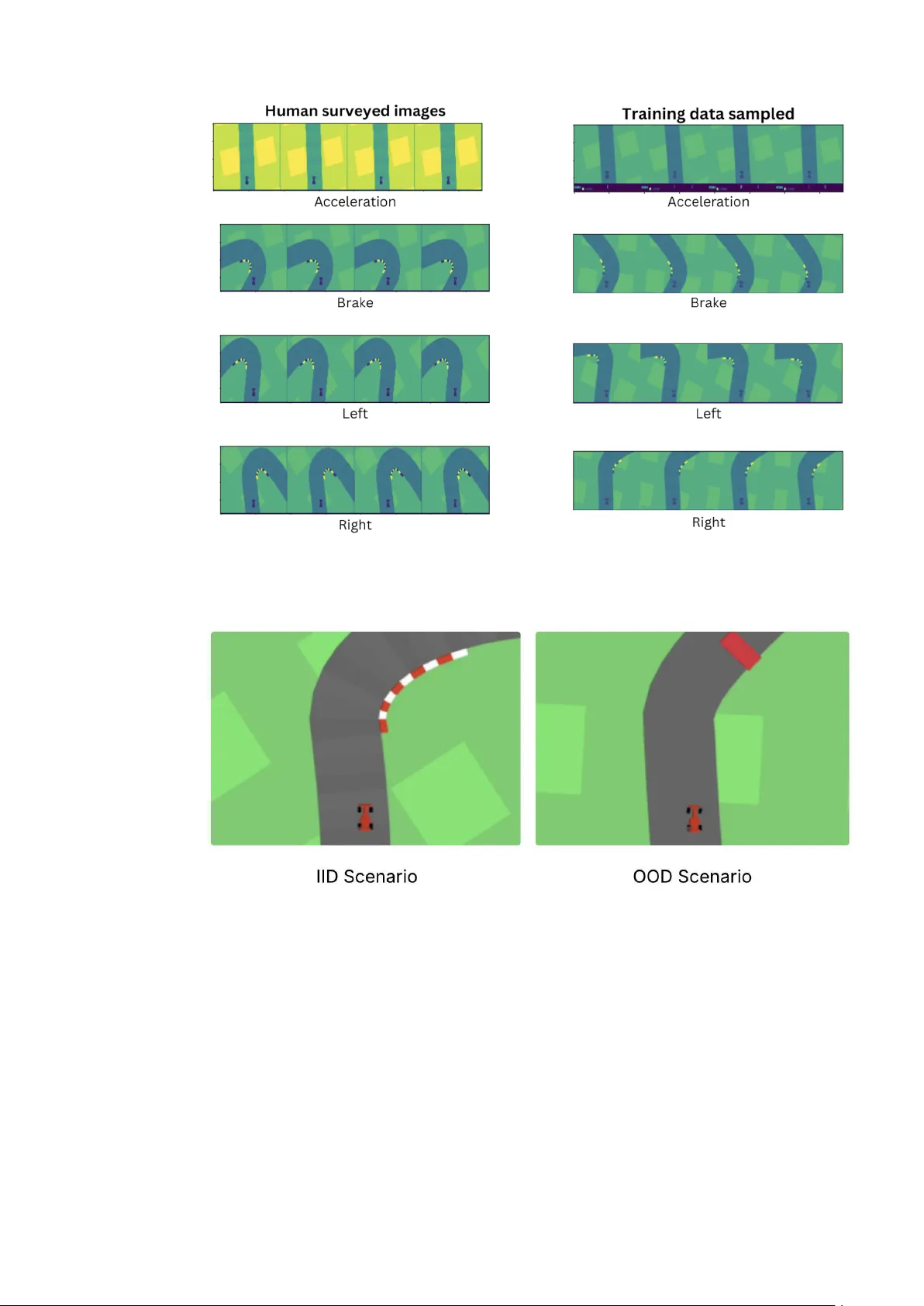

Bodla Krishna Vamshi (1) and Haizhao Yang (2)
(1) Department of Computer Science, University of Maryland, College Park, USA
(2) Department of Mathematics, University of Maryland, College Park, USA

Keywords: Interpretability, Reinforcement learning agents, Manifold learning

Abstract

Recent years have witnessed the widespread adoption of reinforcement learning (RL), from solving real-time games to fine-tuning large language models using human preference data, significantly improving alignment with user expectations. However, as model complexity grows exponentially, the interpretability of these systems becomes increasingly challenging. While numerous explainability methods have been developed for computer vision and natural language processing to elucidate both local and global reasoning patterns, their application to RL remains limited. Direct extensions of these methods often struggle to maintain the delicate balance between interpretability and performance within RL settings. Prototype-Wrapper Networks (PW-Nets) have recently shown promise in bridging this gap by enhancing explainability in RL domains without sacrificing the efficiency of the original black-box models. However, these methods typically require manually defined reference prototypes, which often necessitate expert domain knowledge. In this work, we propose a method that removes this dependency by automatically selecting optimal prototypes from the available data. Preliminary experiments on standard Gym environments demonstrate that our approach matches the performance of existing PW-Nets, while remaining competitive with the original black-box models.

1 Introduction

Deep reinforcement learning (RL) models have achieved state-of-the-art performance in domains such as Go Silver et al. [2016], Chess Silver et al.
[2017], inverse scattering Jiang et al. [2022], and self-driving cars Kiran et al. [2021]. More recently, RL has been successfully applied to align large language models with human preferences, receiving considerable attention as a powerful post-training strategy using extensive human feedback data Ouyang et al. [2022], Rafailov et al. [2024]. However, despite these advances, the deployment of RL agents in sensitive domains remains limited due to the opaque nature of their decision-making processes. Extracting the rationale behind an agent's actions in a human-interpretable format remains a significant challenge, yet doing so is crucial for understanding failure modes and ensuring trust in these systems.

To address this challenge, prototype-based networks have emerged as a promising approach for enhancing the interpretability of deep learning models. ProtoPNet Chen et al. [2019], initially proposed for image classification tasks, introduced pre-hoc interpretability by associating predictions with learned prototype representations. This idea was later extended to deep RL with Prototype-Wrapper Networks (PW-Nets) Kenny et al. [2023], which provide post-hoc interpretability while preserving the performance of the underlying black-box agent. By incorporating exemplar-based reasoning, PW-Nets allow users to inspect and understand the agent's actions through user-defined reference examples, without degrading task performance.

Despite these recent advances, automatically and efficiently discovering data-adaptive reference examples for interpreting RL behaviors remains an open challenge, since manually curated prototypes present several limitations: human-selected prototypes are costly to acquire, difficult to scale, and often lack consistency across environments, reducing the reproducibility and generalization of explanations.
To overcome the above limitations, we propose principal prototype analysis on manifold: an automated prototype sampling method that eliminates the need for manual intervention and selects prototypes adapted to RL tasks on the data manifold. To the best of our knowledge, this is among the first works to explore automated prototype discovery in reinforcement learning settings while retaining the performance of the black-box agent. Our approach leverages a combination of metric and manifold learning objectives to select prototypes directly from the encoded state space, which reflects a low-dimensional geometric representation of the RL task, providing a more scalable and principled mechanism for prototype discovery. The code is publicly available (see the Code link). Our contributions are as follows:

• Automated and Decoupled Prototype Discovery: Our method proposes a novel two-stage architecture that decouples prototype discovery from policy optimization. In the first stage, it automatically selects prototypes from the agent's trajectory data using a lightweight neural network trained with combined manifold and metric learning objectives, removing the need for human-curated examples. In the second stage, these prototypes are fixed and integrated into the PW-Net for interpretable action prediction, preserving black-box performance.

• Geometry-Aware and Faithful Prototypes via Real Instances: Instead of learning abstract embeddings, our method grounds each learned proxy vector in real training samples by mapping it to its nearest encoded instance. This ensures prototypes are both geometry-aware, by leveraging piecewise-linear manifold approximations, and semantically faithful, enabling more intuitive and interpretable behavior analysis of RL agents.
2 Related Works

Interpretability in neural network architectures, particularly in computer vision (CV) and natural language processing (NLP), has advanced substantially, encompassing both pre-hoc and post-hoc strategies. In CV, post-hoc methods such as Grad-CAM Selvaraju et al. [2019], RISE Petsiuk et al. [2018], and occlusion-based techniques like Meaningful Perturbations Fong and Vedaldi [2017] have enabled visual explanations by highlighting image regions most influential to predictions. However, these methods provide explanations only after decisions are made, offering limited insight into the decision-making process itself. In NLP, pre-hoc approaches include interpretable rule-based decision sets Lakkaraju et al. [2016] and, more recently, Proto-LM Xie et al. [2023], which embeds prototypical reasoning directly into large language models. Post-hoc methods such as LIME Ribeiro et al. [2016] and Integrated Gradients Sundararajan et al. [2017] are widely used to approximate local model behavior and attribute predictions to input features. Other efforts have challenged conventional practices; for instance, Jain and Wallace [2019] questioned the reliability of attention weights as explanations, while Arras et al. [2016] applied Layer-wise Relevance Propagation to trace decision origins in text classifiers.

Although several interpretability techniques have been proposed for reinforcement learning (RL) models Vouros [2022], Milani et al. [2022], most prior work relies on interpretable surrogate models, such as decision trees, that imitate agent behavior in symbolic domains. These approaches, however, do not scale to complex environments with high-dimensional observations such as pixel-based inputs. In deep RL settings, most interpretability research has focused on post-hoc methods utilizing attention mechanisms Zambaldi et al. [2019], Mott et al.
[2019] or tree-based surrogates Liu et al. [2018], but these often fall short in revealing the underlying reasoning or intent of the agent Rudin et al. [2021]. Some approaches attempt to distill recurrent neural network (RNN) policies into finite-state machines Danesh et al. [2021], Koul et al. [2018], but such methods can yield opaque explanations and are constrained to specific architectures.

Our work builds on prototype-based neural networks, which are inherently interpretable by design. These models associate test instances with prototypical examples during the forward pass, enabling intuitive, exemplar-based reasoning. A foundational example of this approach was presented by Li et al. [2017], who introduced a pre-hoc method that learns prototypes in latent space and classifies inputs based on their L2 distance to these prototypes. This method also required a decoder to visualize prototype representations. A notable extension was ProtoPNet Chen et al. [2019], which associated prototypes with image parts rather than entire images, enhancing fine-grained interpretability.

The prototype network paradigm has been substantially extended since ProtoPNet, with several lines of work addressing its core limitations. ProtoTree Nauta et al. [2021] embedded prototype reasoning within a hierarchical decision tree structure, enabling global explanations through a sequence of prototype comparisons rather than a single similarity score. PIPNet Nauta et al. [2023] further improved visual coherence by enforcing that prototypes correspond to contiguous, human-interpretable image patches rather than distributed feature vectors. In the few-shot learning literature, Prototypical Networks Snell et al.
[2017] demonstrated that class-level prototype representations computed as the mean of support set embeddings provide a powerful inductive bias for generalization, establishing the theoretical basis for prototype-based reasoning in low-data regimes. Building on this, PAL Arik and Pfister [2019] introduced prototype-based attentive learning to dynamically weight prototype contributions, improving robustness to intra-class variation. A common limitation across these methods is that prototypes are either learned end-to-end alongside the classification objective, risking a performance-interpretability tradeoff, or fixed as simple class statistics such as means or medoids, which fail to capture the intrinsic geometry of the learned representation space. Our method addresses this gap by decoupling prototype discovery from task optimization and grounding prototype selection in the manifold structure of the encoded state space, ensuring that prototypes are both geometrically faithful and discriminative without degrading task performance.

A related but distinct line of interpretability research focuses on concept-based explanations, which seek to explain model behavior in terms of high-level semantic concepts rather than individual training instances. Testing with Concept Activation Vectors (TCAV) Kim et al. [2018] quantifies the sensitivity of model predictions to user-defined concept directions in activation space, enabling global explanations without modifying the model architecture. Concept Bottleneck Models (CBMs) Koh et al. [2020] extend this idea by explicitly conditioning predictions on a learned concept layer, allowing human intervention at inference time. More recent work such as ConceptSHAP Yeh et al. [2022] has sought to automate concept discovery by identifying a complete and minimal set of concepts that explain model behavior without requiring predefined concept annotations.
While these approaches provide semantically rich explanations, they share two limitations that constrain their applicability to RL settings: they typically require either predefined concept vocabularies or auxiliary concept-annotated datasets, and they do not ground explanations in concrete training instances, making it difficult for non-expert users to interpret agent behavior through direct analogy. Our prototype-based approach complements concept-level methods by providing instance-level, exemplar-based explanations that are immediately interpretable without domain-specific concept supervision, while the geometry-aware selection mechanism ensures that chosen exemplars faithfully represent the decision-relevant structure of the agent's learned state representation.

3 Methodology

3.1 Motivation

Prototype-based methods offer an interpretable way to associate each class with representative examples, which are termed prototypes. A straightforward baseline is to define prototypes using simple statistics such as the class mean or medoid in the embedding space. However, such naive approaches fail to capture the intrinsic geometry of encoded representations: they are biased by outliers, insensitive to multi-modal distributions within classes, and often yield prototypes that are statistically central but semantically uninformative.

To construct meaningful prototypes, it is essential to account for the geometry of the data distribution itself. According to the manifold hypothesis Cayton [2005], high-dimensional representations typically reside on lower-dimensional manifolds. Leveraging this property enables geometry-aware prototype sampling. Classical manifold learning techniques, however, come with limitations: methods like t-SNE van der Maaten and Hinton [2008], UMAP McInnes et al.
[2020], and LLE Roweis and Saul [2000] emphasize neighborhood preservation but often distort local dependencies or fail to provide consistent global structure. To address this, we adopt a piecewise-linear manifold learning approach in which nonlinear manifolds are decomposed into locally linear regions. This design ensures that prototypes are drawn from regions that reflect local geometry, avoiding the pitfalls of global averages or distorted embeddings.

While manifold learning preserves geometric structure, prototypes must also be discriminative across classes. Geometry alone does not guarantee that prototypes tightly capture intra-class consistency or maximize inter-class separation. To achieve this, we incorporate a metric learning objective. Methods such as triplet or contrastive loss require predefined prototypes and extensive sample mining, which is inefficient and often unstable. Instead, we employ Proxy-Anchor loss, which introduces learnable class-level proxy vectors that directly enforce compact clustering within a class and clear separation between classes. After training, each proxy is mapped to its nearest training instance, yielding prototypes that are simultaneously geometry-aware and discriminative.

In Chen et al. [2019], the notion of learnable prototypes was introduced for image classification, where prototype learning was jointly optimized alongside the classification objective. While this approach proved effective for supervised image tasks, its adaptation to reinforcement learning in Kenny et al. [2023] (PW-Net*) resulted in noticeably weaker performance compared to black-box RL models. To overcome this limitation, we propose to decouple these objectives into two sequential stages. In the first stage, we focus on sampling prototypes that serve as robust and representative anchors for each class.
In the second stage, these prototypes are fixed and used within PW-Net, which is then trained exclusively on the RL objective.

3.2 Dataset

Our method begins with the assumption that we have access to a pre-trained policy $\pi_{bb}$ operating within a Markov Decision Process (MDP) Sutton and Barto [2015]. Since all policies used in our experiments are implemented as neural networks, we assume that each policy ends with a final linear layer. Under this setting, the policy $\pi_{bb}$ can be decomposed into two components: an encoder $f_{enc}$, which maps the input state $s$ to a latent representation $z$, and a final linear layer defined by weights $W$ and bias $b$. The resulting policy function can be expressed as

$$\pi_{bb}(s) = W f_{enc}(s) + b,$$

where $z = f_{enc}(s)$ is the encoded state. To construct the dataset used for training our prototype selection mechanism, we execute the pre-trained agent in its original environment for $n$ time steps. During this rollout, we collect encoded state-action pairs, resulting in a dataset $D$:

$$D \leftarrow \{(z_i, \pi_{bb}(s_i))\}_{i=1}^{n}.$$

3.3 Training overview

Figure 1. Overview of the proposed method.

As mentioned in Section 3.1, our method consists of two stages. In the first stage, we initialize a simple neural network $h_\theta$ and train it on the dataset $D$ to jointly optimize the manifold learning objective (Eq. 4) and the metric learning objective (Eq. 2). The network $h_\theta$ learns to map the high-dimensional encoded representations into a lower-dimensional space. Before training, we initialize the proxies $\theta_q$ and $\theta_m$; both are unique to each class and are initialized randomly with $\theta_q = \theta_m$. The proxy vector $\theta_q$ is learned using the metric learning objective (Eq. 2) and updated via back-propagation. The proxy vector $\theta_m$ is updated via the momentum update He et al. [2020], where $\gamma$ is the momentum constant:
$$\theta_m \leftarrow \gamma\, \theta_m + (1 - \gamma)\, \theta_q \qquad (1)$$

Before training our model $h_\theta$, we reformat the dataset $D$ to consist of pairs of encoded state representations and their corresponding discretized actions (Section 4.1). This discretization allows the use of a metric learning objective (Eq. 2) that clusters encoded states with similar actions and separates those with dissimilar ones, and it also enables learning discriminative prototypes.

During training, for every mini-batch $B$ we build piecewise-linear manifolds as outlined in Section 3.4. For every point in $B$, we then compute the manifold-based similarity following the procedure in Section 3.5. This similarity measure is used to compute the manifold point-to-point loss $L_{manifold}$. At the same time, we compute the Proxy Anchor loss $L_{PA}$ using the randomly initialized class proxies $\theta_q$ and the latent representations $z$ in batch $B$. The final loss is computed as $L_{total} = L_{PA} + L_{manifold}$.

The manifold point-to-point loss is designed to reduce the distance between points lying on the same manifold, thus preserving local geometric structure, while increasing the distance between points on different manifolds. In contrast, the Proxy Anchor loss encourages samples from the same class to cluster closer together while pushing samples from different classes further apart; this encourages the discriminative learning of prototypes. In every epoch, the network $h_\theta$ is updated through backpropagation, and the proxy vectors are updated according to Eq. 1. Once training is complete, we use the learned proxy vectors $\theta_m$ to select the nearest training sample as the prototype for each class, to be used in stage two when training PW-Net. Since only one proxy $\theta_m$ is initialized per class, we obtain exactly one prototype per class.
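The momentum update of Eq. 1 and the final proxy-to-prototype mapping can be sketched as follows. This is a minimal NumPy illustration, not the authors' implementation: the function names, the default value of `gamma`, and the choice to search for the nearest sample only among samples of the proxy's own class are assumptions made for the example.

```python
import numpy as np

def momentum_update(theta_m, theta_q, gamma=0.99):
    """Eq. 1: theta_m <- gamma * theta_m + (1 - gamma) * theta_q.

    gamma=0.99 is a hypothetical default; the text does not state its value.
    """
    return gamma * theta_m + (1.0 - gamma) * theta_q

def select_prototypes(theta_m, embeddings, labels):
    """Map each class proxy to the index of its nearest training embedding.

    Assumption: the search is restricted to samples carrying the proxy's
    class label, so the prototype is a real instance of that class.
    """
    prototype_index = {}
    for c in range(theta_m.shape[0]):
        class_idx = np.flatnonzero(labels == c)
        if class_idx.size == 0:
            continue  # no sample of this class was collected
        dists = np.linalg.norm(embeddings[class_idx] - theta_m[c], axis=1)
        prototype_index[c] = int(class_idx[np.argmin(dists)])
    return prototype_index
```

Returning dataset indices rather than embedding vectors keeps the link to the original state, which is what makes the prototype inspectable by a user.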
3.4 Manifold construction

Based on the manifold hypothesis, we assume that the encoded state representations produced by the policy $\pi_{bb}$, though inherently complex and non-linear, can be locally approximated by small linear regions. Our approach leverages this structural assumption to automatically identify representative prototypes that capture the essential characteristics of each action class.

To efficiently approximate the structure of the data manifold, we adopt a piecewise-linear manifold learning method, which constructs localized $m$-dimensional linear submanifolds around selected anchor points. Given a batch $B$ containing $N$ data points, we randomly select $n$ of them to serve as anchors. For each anchor point $h_\theta(z_i)$, we initially collect its $m-1$ nearest neighbors in the encoded representation space, based on Euclidean distance, to form the neighborhood set $X_i$.

The manifold expansion process proceeds iteratively by attempting to add the $m$-th nearest neighbor to $X_i$. After each addition, we recompute the best-fit $m$-dimensional submanifold using PCA and assess whether all points in $X_i$ can be reconstructed with a quality above a threshold $T\%$. If the reconstruction quality remains acceptable, the new point is retained in $X_i$; otherwise, it is excluded. This evaluation is repeated for subsequent neighbors $\mathcal{N}(h_\theta(z_i))_j$ for $j \in \{m+1, \dots, k\}$, gradually constructing a local linear approximation of the manifold. The final set $X_i$ comprises all points in the anchor's neighborhood that lie well within an $m$-dimensional linear submanifold. A basis for this submanifold is computed by applying PCA to $X_i$ and extracting the top $m$ eigenvectors.
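The expansion procedure above can be sketched in NumPy as follows. This is an illustrative sketch under stated assumptions rather than the authors' code: the text does not define the reconstruction-quality measure, so the version here (one minus the residual norm relative to the neighborhood radius) is an assumption, as are the function names and the default `k`.

```python
import numpy as np

def pca_basis(points, m):
    """Return the mean and the top-m principal directions (rows) of points."""
    mean = points.mean(axis=0)
    _, _, vt = np.linalg.svd(points - mean, full_matrices=False)
    return mean, vt[:m]

def reconstruction_quality(points, mean, basis):
    """Assumed metric: 1 - residual norm / neighborhood radius, per point."""
    centered = points - mean
    residual = centered - centered @ basis.T @ basis
    radius = np.linalg.norm(centered, axis=1).max()
    if radius < 1e-12:  # all points coincide, reconstruction is trivially exact
        return np.ones(len(points))
    return 1.0 - np.linalg.norm(residual, axis=1) / radius

def grow_neighborhood(embeddings, anchor, m=3, k=20, threshold=0.9):
    """Greedily grow the neighborhood X_i of one anchor, as in Section 3.4."""
    dists = np.linalg.norm(embeddings - embeddings[anchor], axis=1)
    order = np.argsort(dists)       # order[0] is the anchor itself
    members = list(order[:m])       # anchor plus its m-1 nearest neighbors
    for candidate in order[m:k + 1]:
        trial = members + [candidate]
        mean, basis = pca_basis(embeddings[trial], m)
        quality = reconstruction_quality(embeddings[trial], mean, basis)
        if np.all(quality >= threshold):
            members = trial         # keep the point; otherwise it is skipped
    mean, basis = pca_basis(embeddings[members], m)
    return np.array(members), mean, basis
```

The returned `basis` rows are orthonormal, which is what the projection formula in Section 3.5 relies on.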
We choose PCA for this task as it is computationally efficient and well-suited for capturing linear approximations of non-linear data, in alignment with our assumption of locally linear structure within the high-dimensional state space.

3.5 Loss Functions

Proxy Anchor Loss: We use a modified version of proxy anchor loss with Euclidean distance instead of cosine similarity:

$$
\begin{aligned}
L_{PA} = {} & \frac{1}{|\Theta^{+}|} \sum_{\theta_q \in \Theta^{+}} \log\Big(1 + \sum_{z \in \mathcal{Z}^{+}_{\theta_q}} \exp\big(-\alpha \cdot (\lVert h_\theta(z) - \theta_q \rVert_2 - \epsilon)\big)\Big) \qquad (2) \\
& + \frac{1}{|\Theta|} \sum_{\theta_q \in \Theta} \log\Big(1 + \sum_{z \in \mathcal{Z}^{-}_{\theta_q}} \exp\big(\alpha \cdot (\lVert h_\theta(z) - \theta_q \rVert_2 - \epsilon)\big)\Big) \qquad (3)
\end{aligned}
$$

Here, $\Theta$ denotes the set of all proxies, where each proxy $\theta_q \in \Theta$ serves as a representative vector for a class. The subset $\Theta^{+} \subseteq \Theta$ includes only those proxies that have at least one positive embedding in the current batch $B$. For a given proxy $\theta_q$, the latent representations $\mathcal{Z}$ in $B$ (where $z \in \mathcal{Z}$) are partitioned into two sets: $\mathcal{Z}^{+}_{\theta_q}$, the positive embeddings belonging to the same class as $\theta_q$, and $\mathcal{Z}^{-}_{\theta_q} = \mathcal{Z} \setminus \mathcal{Z}^{+}_{\theta_q}$, the negative embeddings. The scaling factor $\alpha$ controls the sharpness of optimization: a large value amplifies hard examples (focusing gradients on difficult pairs), while a small value smooths training (spreading weight across all pairs). The margin $\epsilon$ enforces a buffer zone between positives and negatives by requiring positives to be closer to their proxies and negatives to be sufficiently farther away.
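As a rough illustration of a distance-based Proxy Anchor loss, the sketch below computes the two averaged log-sum terms for one batch. It is not the authors' implementation. One point is an explicit assumption: because the exponents are written with a distance rather than a similarity, the sketch fixes the signs so that the loss matches the behavior described in the text (the positive term grows as positives move away from their proxy, and the negative term grows as negatives come closer than the margin). The default values of `alpha` and `eps` are hypothetical.

```python
import numpy as np

def proxy_anchor_loss(emb, labels, proxies, alpha=2.0, eps=0.1):
    """Distance-based Proxy Anchor loss for one batch (illustrative sketch).

    emb:     (B, d) embeddings h_theta(z)
    labels:  (B,)   integer class labels
    proxies: (C, d) class proxies theta_q
    """
    # Pairwise Euclidean distances between embeddings and proxies: (B, C).
    dist = np.linalg.norm(emb[:, None, :] - proxies[None, :, :], axis=2)
    pos_mask = labels[:, None] == np.arange(proxies.shape[0])[None, :]

    # Assumed sign convention: penalize far positives and near negatives.
    pos_exp = np.where(pos_mask, np.exp(alpha * (dist - eps)), 0.0)
    neg_exp = np.where(~pos_mask, np.exp(-alpha * (dist - eps)), 0.0)

    has_pos = pos_mask.any(axis=0)  # proxies in Theta^+
    pos_term = np.log1p(pos_exp.sum(axis=0))[has_pos].sum() / max(has_pos.sum(), 1)
    neg_term = np.log1p(neg_exp.sum(axis=0)).sum() / proxies.shape[0]
    return float(pos_term + neg_term)
```

With large `alpha` and large distances the exponentials can overflow; a production version would use a log-sum-exp formulation instead.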
Manifold Point-to-Point Loss: This loss estimates point-to-point similarities while preserving the geometric structure:

$$L_{manifold} = \sum_{i,j} \big(\delta \cdot (1 - s(z_i, z_j)) - \lVert h_\theta(z_i) - h_\theta(z_j) \rVert_2\big)^2 \qquad (4)$$

where $s(z_i, z_j)$ is the manifold similarity, computed as

$$s(z_i, z_j) = \frac{s'(z_i, z_j) + s'(z_j, z_i)}{2}, \qquad s'(z_i, z_j) = \alpha(z_i, z_j) \cdot \beta(z_i, z_j),$$

with

$$\alpha(z_i, z_j) = \frac{1}{(1 + o(z_i, z_j)^2)^{N_\alpha}}, \qquad \beta(z_i, z_j) = \frac{1}{(1 + p(z_i, z_j))^{N_\beta}}.$$

Here $\delta$ is a scaling factor that determines the maximum separation between dissimilar points. The loss encourages Euclidean distances in the embedding space to match the manifold-based dissimilarities $1 - s(z_i, z_j)$, ensuring that the learned metric space respects the underlying manifold structure. $o(z_i, z_j)$ is the orthogonal distance from point $z_i$ to the manifold of point $z_j$, and $p(z_i, z_j)$ is the projected distance between point $z_j$ and the projection of $z_i$ on the manifold. The parameters $N_\alpha$ and $N_\beta$ control how rapidly similarity decays with distance, with $N_\alpha > N_\beta$ ensuring that similarity decreases more rapidly for points lying off the manifold than for points on the same manifold.

Distance Calculation. For each point pair $(z_i, z_j)$, the distances $o(z_i, z_j)$ and $p(z_i, z_j)$ are calculated using the manifold basis vectors $P_j$ associated with point $z_j$. The projection of $z_i$ onto $P_j$ is computed as

$$\mathrm{proj}_{P_j}(z_i) = z_j + \sum_k \langle z_i - z_j, v_k \rangle\, v_k,$$

where $v_k$ are the basis vectors of $P_j$. The orthogonal distance is then $o(z_i, z_j) = \lVert z_i - \mathrm{proj}_{P_j}(z_i) \rVert_2$, and the projected distance is $p(z_i, z_j) = \lVert \mathrm{proj}_{P_j}(z_i) - z_j \rVert_2$. This process is repeated for all point pairs, capturing the full geometric structure of the data manifold.
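The distance calculation and the loss of Eq. 4 can be sketched directly from the formulas above. The NumPy code below is an illustration under assumptions: each basis is taken to have orthonormal rows (as PCA provides), and the default values of `n_alpha`, `n_beta`, and the function names are hypothetical, chosen only to satisfy $N_\alpha > N_\beta$.

```python
import numpy as np

def directed_similarity(z_i, z_j, basis_j, n_alpha=4.0, n_beta=0.5):
    """s'(z_i, z_j): similarity of z_i measured on the manifold of z_j.

    basis_j has shape (m, d) with orthonormal rows v_k.
    """
    coords = basis_j @ (z_i - z_j)        # <z_i - z_j, v_k> for each k
    proj = z_j + basis_j.T @ coords       # projection of z_i onto P_j
    o = np.linalg.norm(z_i - proj)        # orthogonal distance
    p = np.linalg.norm(proj - z_j)        # projected distance
    alpha = 1.0 / (1.0 + o ** 2) ** n_alpha
    beta = 1.0 / (1.0 + p) ** n_beta
    return alpha * beta

def manifold_similarity(z_i, z_j, basis_i, basis_j, n_alpha=4.0, n_beta=0.5):
    """Symmetric similarity s(z_i, z_j): average of the two directed values."""
    return 0.5 * (directed_similarity(z_i, z_j, basis_j, n_alpha, n_beta)
                  + directed_similarity(z_j, z_i, basis_i, n_alpha, n_beta))

def manifold_loss(z, h, bases, delta=2.0, n_alpha=4.0, n_beta=0.5):
    """Eq. 4 summed over all ordered pairs (the i == j terms are zero)."""
    total = 0.0
    for i in range(len(z)):
        for j in range(len(z)):
            s = manifold_similarity(z[i], z[j], bases[i], bases[j], n_alpha, n_beta)
            d = np.linalg.norm(h[i] - h[j])
            total += (delta * (1.0 - s) - d) ** 2
    return total
```

The double loop is quadratic in the batch size; it is written this way for readability, and a vectorized version would be preferable for batches of 128 points.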
The total loss is the sum of these two components, allowing the model to simultaneously learn a metric space that respects action classes while preserving the geometric structure of the data.

3.6 Performance review

The action output $a'$ from the Prototype-Wrapper Network (PW-Net) can in some cases generalize better than the original black-box model's action $a$ Snell et al. [2017], Li et al. [2021], due to improved alignment with class-representative prototypes, even without further interaction with the environment. This generalization is critically influenced by the quality and representativeness of the selected prototypes. The black-box policy $\pi_{bb}$ computes the action as

$$a = W f_{enc}(s) + b,$$

where $z = f_{enc}(s)$ is the latent state representation obtained from the encoder. PW-Net enforces structured reasoning through prototypes and computes similarity scores as

$$a'_i = \sum_{j=1}^{N_i} W'_{i,j}\, \mathrm{sim}(z_{i,j}, p_{i,j}).$$

The similarity function is defined as

$$\mathrm{sim}(z_{i,j}, p_{i,j}) = \log\left(\frac{(z_{i,j} - p_{i,j})^2 + 1}{(z_{i,j} - p_{i,j})^2 + \epsilon}\right).$$

This ensures actions are chosen based on structured prototype distances rather than raw neural activations. The model uses prototype-based regularization, which provides better generalization by using the learned policy $\pi_{bb}$ as an additional input signal. For simplicity, assume a deep RL domain with only two possible actions; the first component of $a'$ can then be computed as

$$a'_1 = W'_{1,1} \log\left(\frac{d_{1,1}^2 + 1}{d_{1,1}^2 + \epsilon}\right) + W'_{1,2} \log\left(\frac{d_{1,2}^2 + 1}{d_{1,2}^2 + \epsilon}\right), \qquad d_{i,j} = z_{i,j} - p_{i,j},$$

where $W'$ is the manually defined weight matrix for each action. The output $a'$ depends heavily on the similarity score between $z_{i,j}$ and $p_{i,j}$. This metric enables PW-Net to avoid completely mimicking the policy $\pi_{bb}$ and instead use it as an additional input signal, along with the choice of prototype, to better align responses with human choices.
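The similarity function and the weighted action score can be illustrated with a short NumPy sketch. The array layout, the function names, and the default `eps` are assumptions for the example; the squared difference is implemented as a squared Euclidean distance over the last axis, which reduces to $(z - p)^2$ for scalars.

```python
import numpy as np

def prototype_similarity(z, p, eps=1e-5):
    """sim(z, p) = log((d^2 + 1) / (d^2 + eps)), with d^2 = ||z - p||^2.

    eps=1e-5 is a hypothetical default; it bounds the score at log(1 / eps)
    when z coincides with the prototype.
    """
    d2 = np.sum((np.asarray(z) - np.asarray(p)) ** 2, axis=-1)
    return np.log((d2 + 1.0) / (d2 + eps))

def action_scores(z, prototypes, weights, eps=1e-5):
    """a'_i = sum_j W'_{i,j} * sim(z_{i,j}, p_{i,j}).

    z, prototypes: (A, N, d) per-action, per-prototype latent vectors
    weights:       (A, N)    manually defined weight matrix W'
    """
    sim = prototype_similarity(z, prototypes, eps)  # (A, N)
    return np.sum(weights * sim, axis=1)            # (A,)
```

The score is largest when the latent vector sits on a prototype and decays toward zero as the distance grows, so each output component can be read as "how close is this state to the prototypes of action i".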
3.7 Model Architecture

This section includes details about the black-box models, user study, and the model architecture ($h_\theta$) used in our method. We used a single-layer network with intermediate normalizations. The prototype size is set to 50 for all the environments. The motivation for using a simpler model is to avoid losing information in the encoded vectors during manifold construction.

Table 1. Model Architecture

| Layer | Layer Parameters |
| --- | --- |
| Linear | (latent size z, prototype size) |
| InstanceNorm1d | prototype size |
| ReLU | - |

4 Experiments

4.1 Action Discretization

Our prototype discovery framework requires discrete class labels to enable metric learning and clustering of encoded state representations. While such labels are naturally available in discrete-action environments, continuous control settings lack an explicit class structure. To address this, we discretize the continuous action space by mapping each action vector to a single dominant action class. Specifically, we transform the action values using a sigmoid function and assign each encoded state to the class corresponding to the maximum activation. This process identifies the most influential control dimension at each state, thereby preserving the primary decision signal of the policy. We use absolute values of the action components prior to transformation to emphasize action magnitude rather than direction. This design choice aligns with our objective of capturing dominant behavioral modes for interpretability, rather than modeling fine-grained directional variations in control.

For instance, in the Car Racing environment, the original action output is represented as a tuple [(acc, brake), left, right]. We first restructure this into a unified vector format: [acc, brake, left, right].
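The discretization rule described in this section (flatten, take absolute values, apply a sigmoid, pick the index of the maximum) can be sketched as follows. The helper names are hypothetical and the code is an illustration rather than the authors' preprocessing script.

```python
import numpy as np

def flatten_action(action):
    """Flatten a possibly nested action, e.g. [(acc, brake), left, right]."""
    flat = []
    for item in action:
        if isinstance(item, (list, tuple, np.ndarray)):
            flat.extend(flatten_action(item))
        else:
            flat.append(float(item))
    return flat

def discretize_action(action):
    """Return the index of the dominant action component.

    Steps: flatten -> absolute value -> sigmoid -> argmax.
    """
    magnitudes = np.abs(np.array(flatten_action(action)))
    activations = 1.0 / (1.0 + np.exp(-magnitudes))
    return int(np.argmax(activations))
```

Because the sigmoid is monotonically increasing, the selected index is the same as the index of the largest absolute component; the sigmoid only rescales the values into the interval (0.5, 1).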
The encoded state representation is then assigned a discrete label based on the index of the maximum value obtained after applying the sigmoid function to this transformed vector. This discretization procedure is consistently applied across all continuous action environments, including the Bipedal Walker and Humanoid Standup environments, enabling compatibility with our prototype selection and metric learning pipeline.

Importantly, our goal is not to exactly reconstruct the continuous action space, but to enable interpretable grouping of states based on dominant action tendencies. This discretization provides a consistent and scalable mechanism for prototype discovery across both discrete and continuous domains, while maintaining alignment with the agent's decision structure. Empirically, we observe that this transformation does not degrade policy performance, as demonstrated in Section 4.2.

4.2 Numerical Results

| Method | Car Racing (Reward) | BipedalWalker (Reward) | HumanoidStandup (Reward) |
| --- | --- | --- | --- |
| Our method | 220.91 ± 0.85 | 312.10 ± 0.17 | 75112.60 ± 840.25 |
| PW-Net | 220.72 ± 0.34 | 308.27 ± 3.41 | 74980.37 ± 816.84 |
| VIPER | N/A | -92.36 ± 10.09 | - |
| PW-Net* | -10.23 ± 2.20 | 197.85 ± 52.19 | - |
| k-means | -1.56 ± 0.81 | -105.68 ± 0.54 | - |
| Black-Box | 219.56 ± 0.85 | 312.32 ± 0.21 | 74930.50 ± 837.61 |

Table 2. Reward comparison on Car Racing, Bipedal Walker, and Humanoid Standup tasks.

Tables 2 and 3 present the performance comparison across six reinforcement learning environments. All methods are evaluated under a controlled setting using identical encoder architectures (black-box), training procedures, hyperparameters (including epochs), and random seeds. The only difference between methods lies in the prototype selection strategy. Our method consistently matches or outperforms PW-Net across all environments, while achieving performance comparable to the original black-box policies.
This demonstrates that automated prototype selection can improve interpretability without sacrificing policy performance.

| Method | Pong (Reward) | Lunar Lander (Reward) | Acrobot (Reward) |
| --- | --- | --- | --- |
| Our method | 14.96 ± 0.45 | 218.01 ± 1.47 | -83.12 ± 2.39 |
| PW-Net | 10.72 ± 0.26 | 216.38 ± 1.69 | -84.67 ± 2.42 |
| VIPER | N/A | -423.22 ± 53.91 | - |
| PW-Net* | 8.64 ± 1.27 | 131.01 ± 89.52 | - |
| k-means | -19.47 ± 2.49 | -423.41 ± 97.85 | - |
| Black-Box | 12.07 ± 0.39 | 214.75 ± 1.08 | -85.54 ± 3.37 |

Table 3. Reward comparison on Pong, Lunar Lander, and Acrobot environments.

We do not report results for VIPER, PW-Net*, and k-means in certain high-dimensional environments (e.g., HumanoidStandup and Acrobot), as these methods fail to scale to such settings and do not produce meaningful policies in preliminary experiments.

The PW-Net Kenny et al. [2023] relied on human-curated prototypes in visually interpretable environments such as Car Racing. However, this approach becomes infeasible in complex domains with high-dimensional, non-visual state spaces and large continuous action sets. For instance, the Humanoid Standup environment (Section 4.3) features a high-dimensional vector input and 17 continuous control actions across joints and rotors, making manual prototype selection impractical without domain-specific tools or expertise. Our automated prototype selection method overcomes this limitation by leveraging geometric and class-level structure in the latent space. Notably, in the Humanoid Standup task, our approach achieves a mean reward of 75,112.60 (SE = 840.25), closely matching the original black-box model's performance of 74,930.50 (SE = 837.61). This result demonstrates that our method retains performance even in settings where manual prototype curation is infeasible. For the new environments (Humanoid Standup and Acrobot) without manually curated prototypes, we use class-mean prototypes as a reproducible heuristic baseline.
This class-mean baseline does not reflect the original PW-Net design but provides a consistent comparison in settings where manual selection is infeasible. It follows the approach that the PW-Net authors used for training on the BipedalWalker and Lunar Lander environments (Section 4.6). To analyze the effect of varying hyperparameters, we performed an ablation study (Section 5) on the BipedalWalker and Atari Pong environments.

To ensure a fair comparison, PW-Net and our method share the same encoder architecture, training procedure, hyperparameters (including learning rate and number of epochs), and random seeds. The only difference between the two methods lies in prototype selection: PW-Net relies on manually or heuristically selected prototypes, whereas our method automatically discovers prototypes through the proposed geometry-aware approach. This controlled setup isolates the effect of prototype selection on performance and interpretability.

While the original PW-Net work evaluates on four environments with manually selected prototypes, we extend the evaluation to six environments. For environments without predefined prototypes, we employ class-mean representations as a standardized baseline. This choice ensures reproducibility and reflects realistic settings where expert-defined prototypes are unavailable. Importantly, this highlights a key limitation of PW-Net (its reliance on manual prototype selection), which our method addresses through automated, geometry-aware prototype discovery.

4.3 Black-Box Models

For the CarRacing environment, we used a CNN model trained using PPO JinayJain [2025]. This pre-trained model was evaluated under both IID and OOD settings during the user study. For Atari Pong, we used a simple CNN trained with the Double Dueling DQN method bhctsntrk [2025].
The model used for BipedalWalker was trained using TD3 nikhilbarhate99 [2025b], and the LunarLander model was trained using the Actor-Critic method nikhilbarhate99 [2025a]. These networks are relatively simple, reflecting the symbolic nature of their respective environments. For the HumanoidStandup and CartPole environments, we used models from Stable-Baselines3 Raffin et al. [2021], trained using PPO with an MLP policy. The diversity of environments, models, and algorithms demonstrates the robustness of our approach.

4.4 Training parameters

For the first phase of training (prototype discovery), we train our network for 200 epochs on the training dataset (Section 3.2) using two separate Adam optimizers: one for the network parameters and one for the proxy parameters. Both optimizers use a learning rate of 1e-3, accompanied by a learning rate scheduler with decay rate η_t = 0.97. The dimensionality of the encoded vector z varies depending on the environment and the encoder model, but is generally on the order of 100. We use a mini-batch size of 128 samples and set the reconstruction threshold T to 90%. The scale parameter δ is set to 2 (the maximum distance between two points on a unit sphere), and the submanifold dimension m is fixed at 3.

For the second phase (training and evaluating the sampled prototypes within the PW-Net framework), we use the Adam optimizer with a learning rate of 1e-2, again paired with a scheduler using η_t = 0.97. Training and evaluation are conducted over 5 independent iterations. In each iteration, the PW-Net model is trained for 10 epochs and evaluated over 30 simulation runs to compute the mean and standard deviation of the resulting rewards. All experiments were conducted on an NVIDIA RTX A6000 GPU.
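To make the schedule and the reporting protocol above concrete, the following is a small sketch under stated assumptions. It assumes the scheduler multiplies the learning rate by 0.97 once per epoch (the text gives only the decay rate) and that rewards from all iterations are pooled before computing a population standard deviation (the text does not say how runs are aggregated). The helper names and the reward values are hypothetical.

```python
import statistics


def decayed_learning_rate(initial_lr, decay_rate, epoch):
    """Learning rate after `epoch` multiplicative decay steps (assumed per-epoch)."""
    return initial_lr * decay_rate ** epoch


def summarize_rewards(rewards_per_iteration):
    """Pool episode rewards from all iterations and return (mean, std)."""
    pooled = [r for iteration in rewards_per_iteration for r in iteration]
    return statistics.mean(pooled), statistics.pstdev(pooled)


# Phase 1 settings from the text: lr = 1e-3, decay 0.97, 200 epochs.
final_lr = decayed_learning_rate(1e-3, 0.97, 200)

# Made-up rewards for illustration; the paper uses 5 iterations x 30 runs.
example = [[310.0, 312.0, 314.0], [311.0, 313.0]]
mean_reward, std_reward = summarize_rewards(example)
```

Under the per-epoch assumption, the phase-one learning rate shrinks by roughly three orders of magnitude over 200 epochs, so late epochs make only small updates to the network and proxies.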
In the first stage of our method, we train a lightweight neural network h_θ to sample prototypes, which requires approximately 640 MB of GPU memory and about 7 hours of training time without parallelization. With parallelized estimation of manifold-based similarities, the training time is reduced to roughly 2 hours, with a peak GPU memory usage of about 4700 MB across all environments.

4.5 User Study

Figure 2. IID and OOD distribution plots for both user groups

The interpretability of PW-Nets arises from their case-based reasoning approach, where decisions are explained through analogies to representative prototypical states. Prior work Kenny et al. [2023] demonstrated that human-selected prototypes enable users to form accurate mental models of agent behavior, supporting effective prediction of both successes and failures. Our automated prototype selection is designed to preserve this interpretability mechanism by identifying states that capture the same decision-critical features that human experts would highlight.

To evaluate the plausibility and faithfulness of the sampled prototypes, and to analyze how prototype-based explanations influence participants' ability to interpret and anticipate the agent's decisions in both IID and OOD conditions, we conducted a user study in the CarRacing environment (Figure 5). Out of the six environments considered in our experiments, four are symbolic domains where states are represented as vectors of physical properties, while CarRacing and Atari Pong operate on raw pixels that can be visually interpreted. CarRacing was chosen because its driving actions are naturally understandable to non-expert users, making it suitable for visual inspection and evaluation Rudin et al. [2021]. Two groups of 25 participants were recruited.
The first group interacted with PW-Nets using our sampled prototypes as global explanations, while the second group was assigned to a black-box condition in which participants were told: “The car has learned to complete the track as fast as possible in this environment by learning from millions of simulations, but no explanation is available.” In this condition, prototype images were replaced with text-only information, while the prototype group received visual exemplars that directly conveyed the agent's reasoning process. This design isolates the contribution of prototypes to interpretability by contrasting a case-based explanation with no explanation.

Figure 3. Comparison of visual similarity between prototypes

Participants were presented with 20 scenarios: 10 in-distribution (ID) from the standard CarRacing-v0 environment where the agent drove safely, and 10 out-of-distribution (OOD) from a modified environment NotAnyMike [2025] introducing new road types and red obstacles that led to actual failure cases. After viewing the car's current state and the corresponding explanatory condition, participants predicted whether the vehicle would operate safely on a five-point Likert scale. This setup assessed how well explanations enabled users to anticipate agent behavior in both familiar and novel situations.

Results are summarized in Figure 5. In the ID scenarios, both groups produced similar ratings, indicating that participants could reliably interpret safe behavior in either condition. In contrast, for the OOD cases where the agent failed, participants in the prototype condition were more sensitive to these failures: their ratings more closely reflected the unsafe ground truth, while the black-box group tended to overestimate safety. This demonstrates that prototype-based explanations enhance interpretability by helping users anticipate failure modes, even if they do not increase overall reported confidence.
In addition, we evaluated the interpretability of our sampled prototypes relative to the human-curated prototypes used in PW-Nets (Figure 4.5). For each action class, participants rated on a 1–5 scale how well the prototype represented the corresponding decision. For the acceleration class, ratings were comparable across both methods, while for the other classes human-curated prototypes were slightly preferred. Although human-curated prototypes are slightly preferred in some cases, the observed differences are marginal. This suggests that our method achieves comparable interpretability in practice, while eliminating the need for manual prototype selection. Importantly, our method delivers this interpretability benefit in high-dimensional settings where human prototype selection is infeasible.

4.5.1 User Study details

Two groups of 25 users each participated in the study. All participants provided informed consent. No personally identifiable information was collected. One group was presented with the black-box model (the “Black-Box Group”) (Figure 4), while the other was presented with sampled prototypes (the “Sampled Prototypes Group”) (Figure 4). Both groups were given identical scenarios and instructions on how to rate them independently. The figure below shows a sample of the IID and OOD cases shown to users.

4.6 Method comparisons

VIPER Bastani et al. [2019] extracts interpretable decision-tree policies from trained RL agents via imitation learning. Although it offers global explanations, it is limited to low-dimensional settings and does not scale well to high-dimensional or continuous control environments. The k-means method selects prototypes by choosing the cluster centers within each action class. In contrast, PW-Net* learns prototypes through a joint objective that combines a clustering loss and a separation loss while simultaneously optimizing for RL performance. Moreover, our approach significantly reduces the reliance on subjective inputs, thereby promoting a more objective assessment of the prototypes.

Figure 4. Visual comparison of human-surveyed and automated prototypes

Figure 5. User Study Overview

For all the environments, we used the same black-box models (Section 4.3) used in PW-Net. For the Car Racing and Atari Pong environments, we recomputed the performance of the black-box models, but we retain the reported PW-Net Kenny et al. [2023] results for CarRacing and Pong, as their evaluation depends on human-surveyed prototypes, which cannot be faithfully reproduced. For all other environments, we recompute PW-Net under identical settings. In the case of symbolic domains, we constructed canonical prototypical action-space examples, where the action of interest was set to 1 or -1 and all others to 0, and subsequently mapped these to the closest training samples. These prototypes were then used to reevaluate PW-Net's performance across the four symbolic domains in this work.

5 Ablation Study

To analyze the effect of each individual parameter, we performed an ablation study on one model each from the continuous and discrete action-space environments, using the BipedalWalker and Atari Pong environments respectively.

[Figure 6 panels, reward on the vertical axis: (a) reward vs m; (b) reward vs γ; (c) reward vs N_β; (d) reward vs N_α; (e) reward vs T; (f) reward vs δ; (g) reward vs α; (h) reward vs ϵ; (i) reward vs number of prototypes]

Figure 6.
Ablation study on the BipedalWalker environment

5.1 Effect of m

The parameter m denotes the dimension of the linear submanifold X_i, which locally approximates the data manifold around a point h_θ(z). To examine its effect, we vary m in the range [2, 8] with a step size of 1. As shown in Figures 6(a) and 7(a), performance consistently decreases in both environments as m increases. This trend arises because X_i is intended to approximate the immediate neighborhood of a point, which is inherently low-dimensional. Larger values of m may lead to overfitting, since only a limited number of nearby samples are available within a batch to reliably estimate X_i, thereby degrading performance. Furthermore, we observe that the computational overhead for prototype sampling increases with larger m, underscoring the trade-off between accuracy and efficiency.

[Figure 7 panels, reward on the vertical axis: (a) reward vs m; (b) reward vs γ; (c) reward vs N_β; (d) reward vs N_α; (e) reward vs T; (f) reward vs δ; (g) reward vs α; (h) reward vs ϵ; (i) reward vs number of prototypes]

Figure 7. Ablation study on the Atari Pong environment

5.2 Effect of γ

The parameter γ denotes the momentum constant used to update the proxy vector θ_m during prototype sampling. Following He et al. [2020], higher values of γ are expected to yield improved performance, as the proxy updates become smoother and more stable. Consistent with this expectation, Figures 6(b) and 7(b) show that in both models, performance improves as γ increases, highlighting the importance of stable momentum updates for effective representation learning.
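The smoothing effect of γ described in Section 5.2 can be illustrated with a short sketch. It assumes the exponential-moving-average rule used in momentum contrast, θ_m ← γ θ_m + (1 − γ) θ; the exact update applied to the proxies is not spelled out in this section, so the function `momentum_update` and the toy vectors are hypothetical.

```python
def momentum_update(proxy, target, gamma):
    """One EMA step: proxy <- gamma * proxy + (1 - gamma) * target."""
    return [gamma * p + (1.0 - gamma) * t for p, t in zip(proxy, target)]


# Toy example: a proxy at the origin is pulled toward a fixed target.
proxy = [0.0, 0.0]
target = [1.0, 1.0]
trajectory = []
for _ in range(3):
    proxy = momentum_update(proxy, target, gamma=0.9)
    trajectory.append(proxy[0])
# The first coordinate moves by a shrinking step each time: about 0.1, 0.19, 0.271.
```

With γ close to 1 each step moves the proxy only a small fraction of the way toward the new value, which is the sense in which larger γ gives smoother, more stable updates.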
5.3 Effect of N_α & N_β

The parameters N_α and N_β control the decay of similarity based on the orthogonal and projected distances, respectively, of a point from the linear submanifold in the neighborhood of another point. We vary N_α in the range [1, 6] with a step size of 1, and N_β in the range [0.5, 3] with a step size of 0.5. As shown in Figures 6(c) and 7(c), increasing N_β leads to a decrease in performance in both environments. In contrast, Figures 6(d) and 7(d) show that performance improves with larger N_α in both environments. This effect can be explained by the relationship between N_α and N_β: as N_α approaches N_β, a point A at distance ε within the linear neighborhood of a point B (and thus sharing many features with B and its neighbors) may be treated as equally dissimilar to B as another point C located at an orthogonal distance ε from the neighborhood of B. In the experiments where N_β was varied, N_α was set to 4; as N_β increases from 0.5 to 3, it moves closer to N_α, which leads to a decrease in performance. When N_α was varied from 1 to 6, N_β was set to 0.5; as N_α increases, the gap between N_α and N_β widens, which leads to an increase in performance.

5.4 Effect of T

The reconstruction threshold T determines the quality of points admitted into the linear submanifold X_i. We vary T in the range [0.7, 0.95] with a step size of 0.05. As shown in Figures 6(e) and 7(e), the models in both environments exhibit a clear upward trend in performance as T increases, underscoring the importance of ensuring that only high-quality points are incorporated into X_i.

5.5 Effect of δ

The scaling factor δ regulates the maximum separation between dissimilar points. We vary δ in the range [0.8, 3.2] with a step size of 0.4.
As shown in Figures 6(f) and 7(f), the performance remains relatively stable across this range in both environments, highlighting the robustness of our method.

5.6 Effect of α

The scaling factor α controls the sharpness of the exponential term in the Proxy Anchor loss. We vary its value over {5, 10, 15, 20, 25, 30, 32}. As shown in Figures 6(g) and 7(g), models in both environments exhibit an overall increasing trend in performance with larger α.

5.7 Effect of ϵ

The margin parameter ϵ enforces that positive embeddings are pulled within this distance from their corresponding class proxies. We vary its value across {0.001, 0.005, 0.05, 0.1, 0.2}. As shown in Figures 6(h) and 7(h), models in both environments demonstrate stable performance across the range of ϵ, indicating that it has only a limited effect in the loss function.

5.8 Effect of number of prototypes

To investigate the effect of prototype count on performance, we conducted an ablation study in the Bipedal Walker and Atari Pong environments. In Bipedal Walker (Figure 6(i)), rewards consistently increased with additional prototypes until reaching a plateau. In contrast, in Atari Pong (Figure 7(i)), rewards initially improved with more prototypes but began to decline beyond a certain point. We attribute this divergence to differences in state representation. Bipedal Walker is a symbolic domain where states encode physical properties such as position and velocity, providing relatively low-noise inputs. By comparison, Atari Pong represents states as raw pixels, which must be encoded by a neural network before prototype selection. This pixel-based encoding introduces noise, and as the number of prototypes increases, the accumulated noise degrades performance.
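As a reference point for Sections 5.6 and 5.7, the commonly used form of the Proxy Anchor loss is reproduced below with the margin written as ϵ to match this section. This is a sketch under assumptions: the symbols s(x, p) (similarity between embedding x and proxy p), P (all proxies), P⁺ (proxies with at least one positive in the batch), and X_p⁺ / X_p⁻ (positive and negative embeddings for proxy p) are notation introduced here, and the variant used in this work, which relies on manifold-based similarities, may differ in its similarity function and margin convention.

```latex
\mathcal{L}
  = \frac{1}{|P^{+}|} \sum_{p \in P^{+}}
      \log\Big(1 + \sum_{x \in X_p^{+}} e^{-\alpha\,(s(x,p) - \epsilon)}\Big)
  + \frac{1}{|P|} \sum_{p \in P}
      \log\Big(1 + \sum_{x \in X_p^{-}} e^{\alpha\,(s(x,p) + \epsilon)}\Big)
```

In this form α multiplies every exponent, so larger α makes the loss concentrate on the hardest positives and negatives, while ϵ only shifts the point at which a pair stops contributing. That is consistent with the observation above that performance is more sensitive to α than to ϵ.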
6 Conclusion and Future work

The application of Deep Reinforcement Learning (Deep RL) spans from automated game simulations to fine-tuning large language models (LLMs) using preference data. However, in the absence of transparency regarding the agent's actions and intentions, deploying such systems in high-stakes or sensitive domains remains impractical Rudin [2019]. PW-Net addresses this challenge by providing interpretability for deep RL agents through example-based reasoning using human-understandable concepts. While relying on human-annotated prototypes offers valuable insights, it is not feasible across all domains. To overcome this limitation, our approach automatically samples prototypes from the training data itself, leveraging the geometric structure of the encoded state space to select representative and discriminative exemplars without requiring expert curation. Through user studies, we demonstrate that trust in the model's behavior, especially under out-of-distribution (OOD) scenarios where failures are likely, can be effectively assessed using our automatically sampled prototypes, with participants better anticipating agent failures compared to a black-box baseline.

Several promising directions remain open for future work. First, while our method supports a variable number of prototypes per class (with performance scaling behavior analyzed across symbolic and pixel-based domains in Section 5), extending the prototype discovery framework to output spaces of significantly larger cardinality, such as those encountered in generative language modeling, presents an important challenge. In such settings, the action space may grow to vocabulary scale, and efficient prototype selection under these conditions would require hierarchical or clustering-based strategies that preserve the geometry-aware properties of our current approach. Xie et al.
[2023] made initial progress in this direction for sentence classification, but prototype-based interpretability for open-ended generation remains largely unsolved. Second, our current framework operates post-hoc on a fixed pre-trained policy; an interesting extension would be to investigate whether geometry-aware prototype discovery can be incorporated as a soft regularizer during policy training itself, potentially guiding the encoder to produce representations that are both task-optimal and inherently more amenable to prototype-based explanation. Third, the piecewise-linear manifold construction currently operates on encoded state representations from a single policy. Extending this to multi-task or transfer learning settings, where a shared encoder serves multiple policies across environments, could yield prototypes that capture transferable, task-agnostic behavioral primitives, broadening the scope of interpretability beyond individual environment instances. Finally, evaluating our approach in higher-stakes real-world domains such as autonomous driving simulation and robotic manipulation, where prototype-based explanations could directly support human oversight and intervention, represents a natural and impactful next step.

References

Sercan O. Arik and Tomas Pfister. Protoattend: Attention-based prototypical learning, 2019. URL https://arxiv.org/abs/1902.06292.

Leila Arras, Franziska Horn, Grégoire Montavon, Klaus-Robert Müller, and Wojciech Samek. Explaining predictions of non-linear classifiers in nlp, 2016. URL https://arxiv.org/abs/1606.07298.

Osbert Bastani, Yewen Pu, and Armando Solar-Lezama. Verifiable reinforcement learning via policy extraction, 2019.

bhctsntrk. OpenAIPong-DQN: Solving atari pong game with duel double dqn in pytorch. https://github.com/bhctsntrk/OpenAIPong-DQN, 2025. Accessed: 2025-09-22.

Lawrence Cayton. Algorithms for manifold learning. 07 2005.
Chaofan Chen, Oscar Li, Chaofan Tao, Alina Jade Barnett, Jonathan Su, and Cynthia Rudin. This looks like that: Deep learning for interpretable image recognition, 2019. URL https://arxiv.org/abs/1806.10574.

Mohamad H. Danesh, Anurag Koul, Alan Fern, and Saeed Khorram. Re-understanding finite-state representations of recurrent policy networks, 2021.

Ruth C. Fong and Andrea Vedaldi. Interpretable explanations of black boxes by meaningful perturbation. In 2017 IEEE International Conference on Computer Vision (ICCV). IEEE, October 2017. doi: 10.1109/iccv.2017.371. URL http://dx.doi.org/10.1109/ICCV.2017.371.

Kaiming He, Haoqi Fan, Yuxin Wu, Saining Xie, and Ross Girshick. Momentum contrast for unsupervised visual representation learning, 2020.

Sarthak Jain and Byron C. Wallace. Attention is not explanation, 2019. URL https://arxiv.org/abs/1902.10186.

Hanyang Jiang, Yuehaw Khoo, and Haizhao Yang. Reinforced inverse scattering, 2022. URL https://arxiv.org/abs/2206.04186.

JinayJain. deepracing: Implementing ppo from scratch in pytorch to solve carracing. https://github.com/JinayJain/deep-racing, 2025. Accessed: 2025-09-22.

Eoin M. Kenny, Mycal Tucker, and Julie Shah. Towards interpretable deep reinforcement learning with human-friendly prototypes. In The Eleventh International Conference on Learning Representations, 2023. URL https://openreview.net/forum?id=hWwY_Jq0xsN.

Been Kim, Martin Wattenberg, Justin Gilmer, Carrie Cai, James Wexler, Fernanda Viegas, and Rory Sayres. Interpretability beyond feature attribution: Quantitative testing with concept activation vectors (tcav), 2018.

B Ravi Kiran, Ibrahim Sobh, Victor Talpaert, Patrick Mannion, Ahmad A. Al Sallab, Senthil Yogamani, and Patrick Pérez. Deep reinforcement learning for autonomous driving: A survey, 2021.

Pang Wei Koh, Thao Nguyen, Yew Siang Tang, Stephen Mussmann, Emma Pierson, Been Kim, and Percy Liang.
Concept bottleneck models, 2020.

Anurag Koul, Sam Greydanus, and Alan Fern. Learning finite state representations of recurrent policy networks, 2018.

Himabindu Lakkaraju, Stephen H. Bach, and Jure Leskovec. Interpretable decision sets: A joint framework for description and prediction. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, KDD '16, page 1675–1684, New York, NY, USA, 2016. Association for Computing Machinery. ISBN 9781450342322. doi: 10.1145/2939672.2939874. URL https://doi.org/10.1145/2939672.2939874.

Gen Li, Varun Jampani, Laura Sevilla-Lara, Deqing Sun, Jonghyun Kim, and Joongkyu Kim. Adaptive prototype learning and allocation for few-shot segmentation, 2021. URL https://arxiv.org/abs/2104.01893.

Oscar Li, Hao Liu, Chaofan Chen, and Cynthia Rudin. Deep learning for case-based reasoning through prototypes: A neural network that explains its predictions, 2017. URL https://arxiv.org/abs/1710.04806.

Guiliang Liu, Oliver Schulte, Wang Zhu, and Qingcan Li. Toward interpretable deep reinforcement learning with linear model u-trees, 2018.

Leland McInnes, John Healy, and James Melville. Umap: Uniform manifold approximation and projection for dimension reduction, 2020.

Stephanie Milani, Nicholay Topin, Manuela Veloso, and Fei Fang. A survey of explainable reinforcement learning, 2022.

Alex Mott, Daniel Zoran, Mike Chrzanowski, Daan Wierstra, and Danilo J. Rezende. Towards interpretable reinforcement learning using attention augmented agents, 2019. URL https://arxiv.org/abs/1906.02500.

Meike Nauta, Ron van Bree, and Christin Seifert. Neural prototype trees for interpretable fine-grained image recognition, 2021.

Meike Nauta, Jörg Schlötterer, Maurice van Keulen, and Christin Seifert. Pip-net: Patch-based intuitive prototypes for interpretable image classification.
In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 2744–2753, June 2023.

nikhilbarhate99. Actor-Critic-PyTorch: Policy gradient actor-critic implementation (lunar lander v2) in pytorch. https://github.com/nikhilbarhate99/Actor-Critic-PyTorch, 2025a. Accessed: 2025-09-22.

nikhilbarhate99. TD3-PyTorch-BipedalWalker-v2: Twin delayed ddpg (td3) pytorch solution for roboschool and box2d environments. https://github.com/nikhilbarhate99/TD3-PyTorch-BipedalWalker-v2, 2025b. Accessed: 2025-09-22.

NotAnyMike. gym: An improvement of carracing-v0 from openai gym for hierarchical reinforcement learning. https://github.com/NotAnyMike/gym, 2025. Accessed: 2025-09-22.

Long Ouyang, Jeff Wu, Xu Jiang, Diogo Almeida, Carroll L. Wainwright, Pamela Mishkin, Chong Zhang, Sandhini Agarwal, Katarina Slama, Alex Ray, John Schulman, Jacob Hilton, Fraser Kelton, Luke Miller, Maddie Simens, Amanda Askell, Peter Welinder, Paul Christiano, Jan Leike, and Ryan Lowe. Training language models to follow instructions with human feedback, 2022.

Vitali Petsiuk, Abir Das, and Kate Saenko. Rise: Randomized input sampling for explanation of black-box models, 2018.

Rafael Rafailov, Archit Sharma, Eric Mitchell, Stefano Ermon, Christopher D. Manning, and Chelsea Finn. Direct preference optimization: Your language model is secretly a reward model, 2024.

Antonin Raffin, Ashley Hill, Adam Gleave, Anssi Kanervisto, Maximilian Ernestus, and Noah Dormann. Stable-baselines3: Reliable reinforcement learning implementations. Journal of Machine Learning Research, 22(268):1–8, 2021. URL http://jmlr.org/papers/v22/20-1364.html.

Marco Tulio Ribeiro, Sameer Singh, and Carlos Guestrin. “why should i trust you?”: Explaining the predictions of any classifier, 2016.

Sam T. Roweis and Lawrence K. Saul. Nonlinear dimensionality reduction by locally linear embedding.
Science, 290(5500):2323–2326, 2000. doi: 10.1126/science.290.5500.2323. URL https://www.science.org/doi/abs/10.1126/science.290.5500.2323.

Cynthia Rudin. Stop explaining black box machine learning models for high stakes decisions and use interpretable models instead, 2019.

Cynthia Rudin, Chaofan Chen, Zhi Chen, Haiyang Huang, Lesia Semenova, and Chudi Zhong. Interpretable machine learning: Fundamental principles and 10 grand challenges, 2021. URL https://arxiv.org/abs/2103.11251.

Ramprasaath R. Selvaraju, Michael Cogswell, Abhishek Das, Ramakrishna Vedantam, Devi Parikh, and Dhruv Batra. Grad-cam: Visual explanations from deep networks via gradient-based localization. International Journal of Computer Vision, 128(2):336–359, October 2019. ISSN 1573-1405. doi: 10.1007/s11263-019-01228-7. URL http://dx.doi.org/10.1007/s11263-019-01228-7.

David Silver, Aja Huang, Christopher J. Maddison, Arthur Guez, Laurent Sifre, George van den Driessche, Julian Schrittwieser, Ioannis Antonoglou, Veda Panneershelvam, Marc Lanctot, Sander Dieleman, Dominik Grewe, John Nham, Nal Kalchbrenner, Ilya Sutskever, Timothy Lillicrap, Madeleine Leach, Koray Kavukcuoglu, Thore Graepel, and Demis Hassabis. Mastering the game of go with deep neural networks and tree search. Nature, 529:484–503, 2016. URL http://www.nature.com/nature/journal/v529/n7587/full/nature16961.html.

David Silver, Thomas Hubert, Julian Schrittwieser, Ioannis Antonoglou, Matthew Lai, Arthur Guez, Marc Lanctot, Laurent Sifre, Dharshan Kumaran, Thore Graepel, Timothy Lillicrap, Karen Simonyan, and Demis Hassabis. Mastering chess and shogi by self-play with a general reinforcement learning algorithm, 2017.

Jake Snell, Kevin Swersky, and Richard S. Zemel. Prototypical networks for few-shot learning, 2017.

Mukund Sundararajan, Ankur Taly, and Qiqi Yan. Axiomatic attribution for deep networks, 2017.

Richard S. Sutton and Andrew G. Barto.
Reinforcement Learning: An Introduction. MIT Press, 2nd edition, 2015. URL https://web.stanford.edu/class/psych209/Readings/SuttonBartoIPRLBook2ndEd.pdf. Draft, in progress.

Laurens van der Maaten and Geoffrey Hinton. Visualizing data using t-sne. Journal of Machine Learning Research, 9(86):2579–2605, 2008. URL http://jmlr.org/papers/v9/vandermaaten08a.html.

George A. Vouros. Explainable deep reinforcement learning: State of the art and challenges. ACM Computing Surveys, 55(5):1–39, December 2022. ISSN 1557-7341. doi: 10.1145/3527448. URL http://dx.doi.org/10.1145/3527448.

Sean Xie, Soroush Vosoughi, and Saeed Hassanpour. Proto-lm: A prototypical network-based framework for built-in interpretability in large language models, 2023. URL https://arxiv.org/abs/2311.01732.

Chih-Kuan Yeh, Been Kim, Sercan O. Arik, Chun-Liang Li, Tomas Pfister, and Pradeep Ravikumar. On completeness-aware concept-based explanations in deep neural networks, 2022.

Vinicius Zambaldi, David Raposo, Adam Santoro, Victor Bapst, Yujia Li, Igor Babuschkin, Karl Tuyls, David Reichert, Timothy Lillicrap, Edward Lockhart, Murray Shanahan, Victoria Langston, Razvan Pascanu, Matthew Botvinick, Oriol Vinyals, and Peter Battaglia. Deep reinforcement learning with relational inductive biases. In International Conference on Learning Representations, 2019. URL https://openreview.net/forum?id=HkxaFoC9KQ.
