Symbolic Density Estimation: A Decompositional Approach

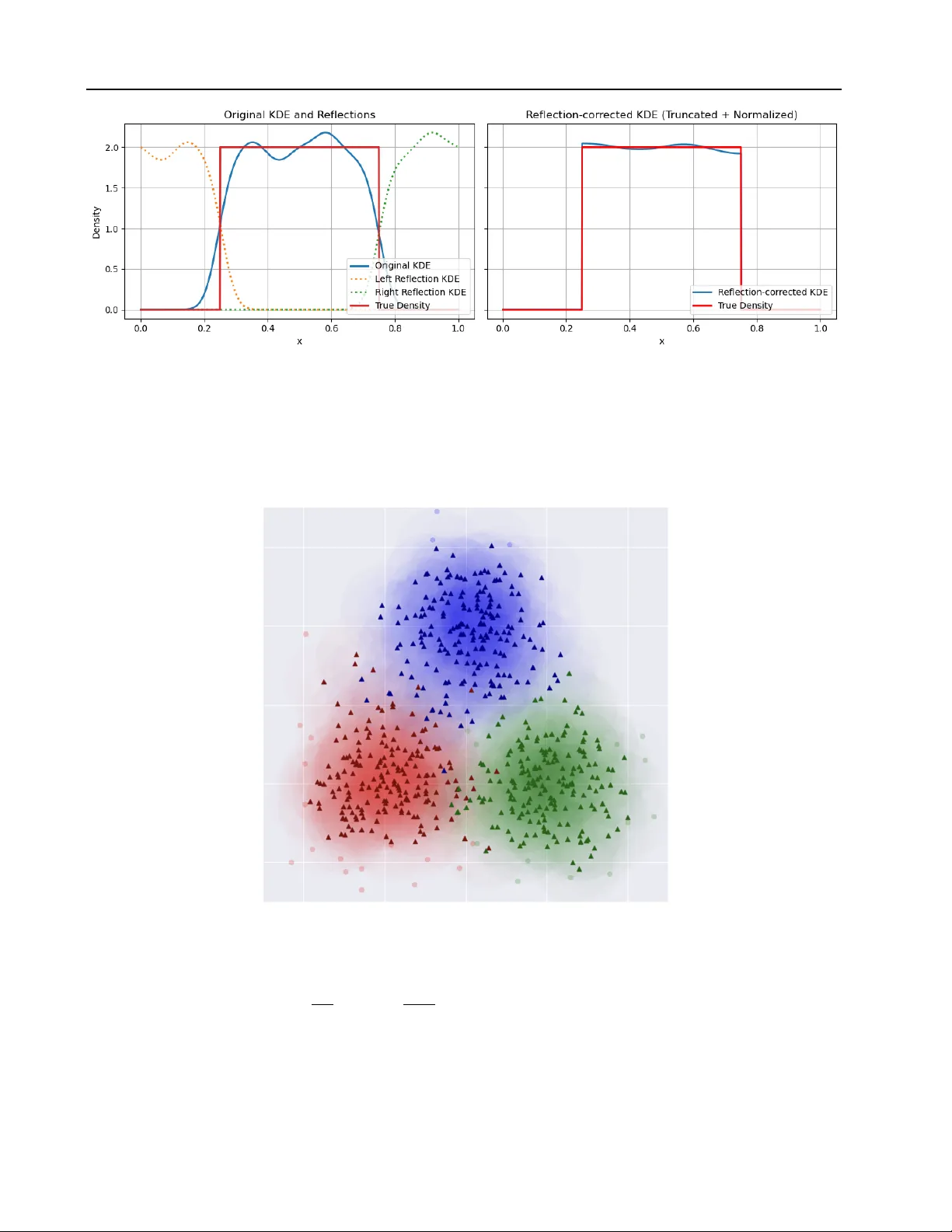

We introduce AI-Kolmogorov, a novel framework for Symbolic Density Estimation (SymDE). Symbolic regression (SR) has been effectively used to produce interpretable models in standard regression settings but its applicability to density estimation task…

Authors: Angelo Rajendram, Xieting Chu, Vijay Ganesh