JaWildText: A Benchmark for Vision-Language Models on Japanese Scene Text Understanding

Japanese scene text poses challenges that multilingual benchmarks often fail to capture, including mixed scripts, frequent vertical writing, and a character inventory far larger than the Latin alphabet. Although Japanese is included in several multil…

Authors: Koki Maeda, Naoaki Okazaki

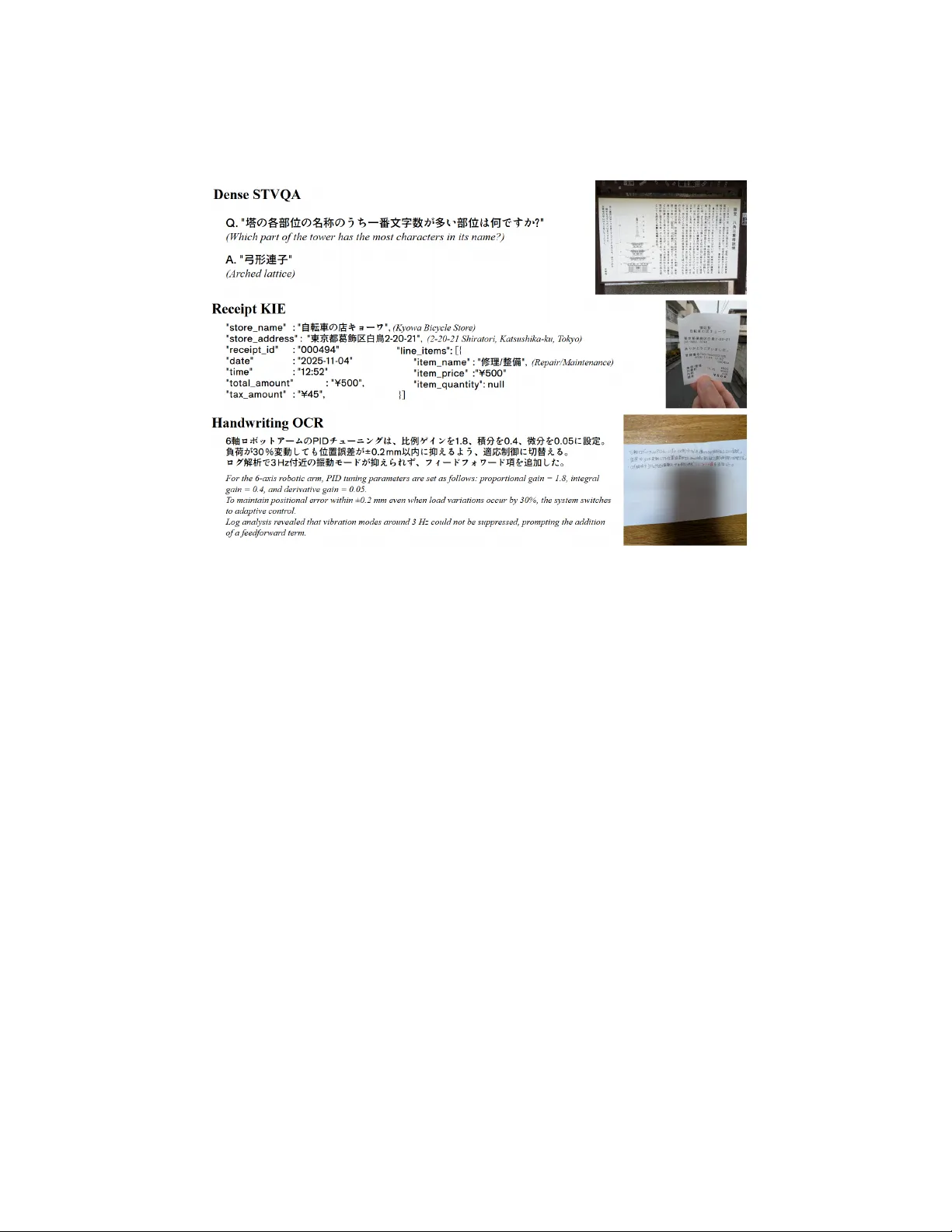

Koki Maeda¹,² [0009-0008-0529-3152] and Naoaki Okazaki¹,² [0000-0001-7635-6175]

¹ Institute of Science Tokyo, Tokyo, Japan
² Research and Development Center for Large Language Models, National Institute of Informatics, Tokyo, Japan
{koki.maeda@nlp.,okazaki@}comp.isct.ac.jp

Abstract. Japanese scene text poses challenges that multilingual benchmarks often fail to capture, including mixed scripts, frequent vertical writing, and a character inventory far larger than the Latin alphabet. Although Japanese is included in several multilingual benchmarks, these resources do not adequately capture the language-specific complexities. Meanwhile, existing Japanese visual text datasets have primarily focused on scanned documents, leaving in-the-wild scene text underexplored. To fill this gap, we introduce JaWildText, a diagnostic benchmark for evaluating vision-language models (VLMs) on Japanese scene text understanding. JaWildText contains 3,241 instances from 2,961 images newly captured in Japan, with 1.12 million annotated characters spanning 3,643 unique character types. It comprises three complementary tasks that vary in visual organization, output format, and writing style: (i) Dense Scene Text Visual Question Answering (STVQA), which requires reasoning over multiple pieces of visual text evidence; (ii) Receipt Key Information Extraction (KIE), which tests layout-aware structured extraction from mobile-captured receipts; and (iii) Handwriting OCR, which evaluates page-level transcription across various media and writing directions. We evaluate 14 open-weight VLMs and find that the best model achieves an average score of 0.64 across the three tasks. Error analyses show recognition remains the dominant bottleneck, especially for kanji.
JaWildText enables fine-grained, script-aware diagnosis of Japanese scene text capabilities, and will be released with evaluation code.

Keywords: Japanese scene text · vision-language models · benchmark · handwriting recognition · key information extraction

1 Introduction

Text is ubiquitous in everyday environments: on street posters, handwritten notes, receipts, and storefronts. For decades, text-centric vision systems relied on a modular workflow that first applied OCR to convert pixels into characters and then fed the text to separate modules for downstream tasks [20]. With the rise of vision-language models (VLMs) such as GPT-4V [33], this workflow is shifting toward an end-to-end approach: VLMs generate outputs of a downstream task directly from natural images. This new workflow is increasingly adopted as a practical alternative to dedicated OCR pipelines.

Fig. 1: Overview of the JaWildText benchmark: (i) Dense STVQA, (ii) Receipt KIE, and (iii) Handwriting OCR. We added English translations for readability.

This shift, however, complicates evaluation. When a VLM is evaluated only by downstream task accuracy, it is often unclear whether an error stems from a failure of character recognition or from incorrect reasoning over correctly recognized text. Disentangling these two failure modes is critical because precise reading is a prerequisite for any higher-level text understanding. Benchmarks that assess reading in natural images and separate recognition failures from reasoning failures are therefore essential, yet few existing resources offer this diagnostic capability, particularly for non-English scripts.

Although multilingual benchmarks [29, 30, 39] have extended scene text evaluation beyond English [4, 22, 38], they prioritize language breadth over language-specific diagnostics.
This matters particularly for Japanese, whose scene text frequently mixes kanji, hiragana, katakana, and Latin alphanumerics. Because the resulting failure patterns differ from those of other scripts, it is difficult to diagnose failures without targeted evaluation. Existing Japanese scene text resources provide recognition data at the character or word level [12, 15, 28]. In contrast, Japanese VLM benchmarks target scanned documents or knowledge-centric multimodal tasks [31, 32] without explicitly measuring the underlying recognition ability. As a result, no existing benchmark can tell whether VLM errors on Japanese scene text arise from recognition or from reasoning.

To fill this gap, we introduce JaWildText, a fine-grained benchmark for evaluating VLMs on Japanese scene text understanding. The benchmark is designed to disentangle reading from reasoning. As shown in Figure 1, JaWildText consists of three tasks that form a compact yet comprehensive configuration. The tasks are chosen to vary three factors that commonly confound end-to-end evaluation: visual organization (from cluttered to structured), output format (from free-form to verbatim), and writing style (printed or handwritten). This design exposes failure modes that remain conflated when models are scored only by downstream task accuracy. Dense Scene Text Visual Question Answering (Dense STVQA) tests multi-region reading and cross-reference reasoning in cluttered signboards and posters. Receipt Key Information Extraction (Receipt KIE) evaluates layout-aware structured extraction from in-the-wild imagery. Handwriting OCR (page-level transcription) assesses long-context transcription of handwritten text, providing a recognition-dominant setting that complements the reasoning-heavy tasks above.
JaWildText contains 3,241 evaluation instances from 2,961 newly collected images in Japan, with 1.12 million annotated characters spanning 3,643 unique characters.

We benchmark 14 open-weight VLMs on JaWildText. The experiments show that the best model achieves an average score of 0.64 across the three tasks. Our error analysis identified distinct bottlenecks: models that read text accurately may still fail at reasoning, and recognition difficulty varies drastically by script type. In summary, these results demonstrate that JaWildText provides fine-grained diagnostic evidence that is invisible in aggregated accuracy alone.

The contributions of this paper are as follows:

1. We introduce JaWildText, to our knowledge, the first benchmark dedicated to evaluating VLMs on Japanese scene text understanding across three complementary tasks grounded in real-world images.
2. We benchmark 14 open-weight VLMs, establish reproducible baselines, and quantify substantial performance gaps across architectures.
3. We provide an error analysis that disentangles recognition from reasoning failures, showing that their relative severity varies markedly across model families.

2 Related Work

2.1 Benchmarking Text Understanding in Natural Images

For English, evaluation resources have matured along three complementary tracks. Scene text benchmarks first targeted detection and recognition in natural images [40, 45], and then advanced to text-centric VQA [4, 18, 22, 23, 38], which requires models to read and reason over recognized text. Receipt and document understanding benchmarks such as SROIE [13], CORD [34], and FUNSD [17] evaluate structured key information extraction (KIE), testing whether models can map visually organized fields to predefined categories.
Handwriting recognition benchmarks, anchored by the IAM Handwriting Database [21], assess verbatim transcription of diverse writing styles; recent work shows that VLM performance degrades substantially on non-English handwriting [7]. Together, these tracks span a range of visual organization, output format, and writing style, forming a comprehensive evaluation ecosystem for English.

Several benchmarks extend this ecosystem to other languages, progressively broadening language coverage and task complexity. The ICDAR MLT challenges [29, 30] introduce multilingual scene text detection and recognition across up to ten languages. XFUND [43] extends form understanding to seven languages. Targeting VLMs directly, MTVQA [39] shifted the focus to multilingual text-centric VQA with native annotations. OCRBench [9, 19] and CC-OCR [44] broaden the scope to multilingual OCR for VLMs. While these efforts increase language coverage, they treat each language as one among many and provide limited diagnostic depth for language-specific challenges.

Among CJK languages, dedicated benchmarks have emerged for Chinese scene text recognition [5] and Korean text-centric VQA [14]. However, Japanese remains without a comprehensive evaluation despite its unique challenges, notably the concurrent use of multiple scripts within a single text, complex layouts, and thousands of distinct characters.

2.2 Japanese Text Understanding

Existing Japanese-specific resources address isolated facets of text understanding. For scene text recognition, existing resources target scene text spotting in omnidirectional video [16], isolated character classification [12], vertical text recognition [36], and comics [2], each restricted to a specific visual setting or textual granularity.
For receipt understanding, existing resources support training or fine-tuning on mobile-captured receipts and post-OCR correction [10, 27], but none serve as a benchmark for assessing general-purpose VLM capabilities. For handwriting, existing datasets provide isolated characters or online stroke data [24–26, 28], or target classical cursive [6]; none covers page-level offline recognition of modern handwriting. On the reasoning side, JDocQA [31] addresses question answering over scanned documents, and JMMMU [32] benchmarks multimodal understanding centered on cultural and academic knowledge rather than text recognition ability.

Mapping these resources onto the three evaluation dimensions of visual organization, output format, and writing style reveals that none connect recognition with reasoning for Japanese scene text. JaWildText fills this gap with three complementary tasks that systematically vary these dimensions, enabling fine-grained diagnosis of where and why current VLMs fail on Japanese text in real-world images.

3 Dataset: JaWildText

JaWildText is designed to expose where a model fails, whether in character recognition, layout understanding, or reasoning, rather than reporting only aggregated task accuracy. To this end, it comprises three complementary tasks, each annotated to disentangle recognition errors from reasoning and formatting errors. Because such fine-grained diagnosis requires image diversity and annotation quality that are unavailable in web-scraped corpora, we collected original images and annotations tailored for this work.

3.1 Dense STVQA

Dense STVQA evaluates whether a model can read and reason over dense Japanese scene text, using visually complex real-world images such as signboards, bulletin boards, posters, and product packages.

Image Collection.
To test recognition under realistic conditions, we asked workers from a data collection agency in Japan to photograph text-rich scenes with cameras and smartphones, resulting in 745 images. We instructed workers to cover indoor and outdoor locations under both daytime and nighttime lighting and avoid multiple shots of the same subject, ensuring diversity in layout, font style, and visual context. We retained natural artifacts, such as background clutter, partial occlusion, and reflections, to test recognition robustness.

Annotation. Annotations are structured into two layers to separate recognition from reasoning. In the first layer, annotators marked text regions with quadrilateral bounding boxes. They transcribed each region, which is defined as a line-level or column-level sequence of visually recognizable characters. Each image contains 45.1 annotated text regions on average. In the second layer, native Japanese speakers authored open-ended question-answer pairs over these transcribed regions. Annotators were encouraged to write questions that require reasoning across multiple text regions rather than extracting a single string from a single area. We exclude yes/no and multiple-choice formats to minimize chance-level correctness. Crucially, each question is linked to evidence regions: the minimal set of text regions necessary and sufficient to derive the answer. This linkage enables automatic diagnosis; if a model fails a question but correctly recognizes the evidence regions, the error is attributable to reasoning rather than recognition. In total, we created 1,025 question-answer pairs.

3.2 Receipt KIE

Receipt KIE evaluates structured field extraction from real-world photographs of Japanese receipts.
This setting introduces challenges largely absent from scene text: rigid columnar layouts, mixed use of full-width and half-width characters, and domain-specific abbreviations produced by thermal printers.

Image Collection. Diagnostic value depends on testing under realistic capture conditions; hence, we collected photographs of consumer receipts from everyday transactions rather than flatbed scans. We retained natural artifacts such as creases, folds, and hand-held tilt to ensure that models are evaluated against the geometric and photometric distortions encountered in practical use. To maximize visual diversity, we disallowed multiple receipts from the same store while permitting receipts from different branches of the same chain, yielding 1,151 unique receipt images.

Annotation. We annotate receipts at the individual field level so that evaluation can pinpoint which field types a model struggles with, rather than producing only a single per-receipt score. Our key schema builds on the four header fields of SROIE [13] (`store_name`, `date`, `store_address`, `total_amount`) and extends it in two directions tailored to Japanese receipts: three additional header fields (`receipt_id`, `time`, `tax_amount`) that are usually printed but absent from SROIE, and line-item-level tuples of `item_name`, `item_price`, and `item_quantity`, which test a model's ability to maintain structured alignment across repeated rows. For each field, annotators recorded the text string and a quadrilateral bounding box; fields absent from a receipt are explicitly marked as null, allowing evaluation to distinguish extraction errors from correct recognition of absence. In total, we annotated 56,095 text regions across 1,151 receipts.

3.3 Handwriting OCR

Handwriting OCR evaluates page-level recognition of multi-sentence handwritten Japanese text.
Unlike isolated-word or single-line recognition, page-level evaluation requires models to handle layout interpretation, line segmentation, and script mixing simultaneously, reflecting realistic reading scenarios.

Image Collection. To obtain diverse yet controlled handwriting samples, we designed a collection pipeline that separates content generation from handwriting production. First, we defined over 100 genre-keyword pairs spanning everyday topics such as work planning, travel, and cooking. We generated up to 20 prompt texts per pair using a large language model.³ Each prompt was constrained to approximately 100 characters, long enough to span multiple lines across mixed scripts yet short enough to fit naturally on a single page.

Then, we distributed the prompts to 51 native Japanese writers, who transcribed them onto designated media and photographed the results using their own devices. To systematically introduce visual variation, we specified the writing medium and writing direction (horizontal or vertical) for each instance. The media include lined paper, unlined plain paper, whiteboards, and tablets, while writers chose line-break positions freely. Each instance is accompanied by metadata recording the writer ID, writing medium, writing instrument, ink color, and writing direction, enabling fine-grained analysis of how these factors affect recognition performance.

³ Specifically, we used openai/gpt-oss-120b to generate the prompt texts. The generated texts serve only as writing prompts.

Annotation. For each image, the target output is a transcription of all visible handwritten text. Annotators transcribed the text line by line and drew a quadrilateral bounding box around each region. A key design principle is that the ground truth should reflect what is visually present in the image rather than what the writer intended. Accordingly, we preserved writer-introduced errors such as misspellings or omitted characters. For characters that a writer started but left incomplete due to writing errors, we assigned a dedicated symbol (□) to mark them explicitly. This annotation policy ensures that model evaluation measures visual recognition fidelity rather than error correction ability. We annotated 1,065 handwriting instances with 6,002 lines, totaling 111,977 characters.

Table 1: Summary statistics of JaWildText. #Instances denotes the evaluation unit: an instance is a question–answer pair in Dense STVQA, and a single image in Receipt KIE and Handwriting OCR. #Regions denotes the number of annotated quadrilateral text regions.

| Subset | #Instances | #Images | #Regions | #Chars | #Unique Chars |
|---|---|---|---|---|---|
| Dense STVQA | 1,025 | 745 | 33,608 | 571,979 | 3,313 |
| Receipt KIE | 1,151 | 1,151 | 56,095 | 433,558 | 2,001 |
| Handwriting OCR | 1,065 | 1,065 | 6,002 | 111,977 | 1,830 |
| Total | 3,241 | 2,961 | 95,705 | 1,117,514 | 3,643 |

Fig. 2: Representative images from each task, illustrating the diversity of JaWildText. Dense STVQA covers signboards, posters, and product packages under varying conditions. Receipt KIE includes receipts with creases, folds, and diverse perspectives. Handwriting OCR spans multiple writing media and directions.

Fig. 3: Image-level text properties in JaWildText. (a) Distribution of total character length per image. (b) Spatial distribution of text-region centers.

3.4 Quality Control

Image collection and annotation were conducted by a professional data curation agency with compensated annotators. To calibrate annotation guidelines before full-scale production, the authors and the agency jointly reviewed the first 10% of deliverables and refined the guidelines based on observed inconsistencies.
In the main phase, each instance was labeled by one annotator and independently verified by a second; disagreements were resolved through discussion. We excluded images containing non-public personally identifiable information, such as faces, vehicle license plates, or credit card numbers, while retaining publicly displayed information (e.g., store phone numbers on receipts) needed for the benchmark tasks. The dataset, including all images and annotations, will be publicly released under the Apache License 2.0.⁴

3.5 Dataset Statistics

Table 1 summarizes key statistics of JaWildText. The dataset comprises 3,241 instances drawn from 2,961 unique images, with 95,705 annotated text regions totaling 1,117,514 characters across 3,643 unique characters. By design, each task comprises approximately 1,000 instances, allowing for broadly comparable score precision across tasks. Figure 2 shows representative images from each task, and Figure 3 visualizes text density and layout properties discussed below.

Text Density and Spatial Layout. Dense STVQA and Receipt KIE are text-dense, often containing several hundred characters per image, whereas Handwriting OCR is intentionally controlled to approximately 100 characters per image (Figure 3a). Dense STVQA exhibits a long tail beyond 2,000 characters per image, reflecting the high information density of signboards and bulletin boards; this density forces models to locate and integrate information across many text regions, making the task sensitive to both recognition errors and cross-region reasoning failures. In contrast, the controlled length of Handwriting OCR isolates recognition ability from reasoning, providing a setting in which errors can be attributed almost entirely to character-level reading.
The spatial distribution of text-region centers (Figure 3b) mirrors these design choices: Dense STVQA regions spread broadly across the frame, Receipt KIE regions form a narrow vertical band consistent with elongated receipt layouts, and Handwriting OCR clusters near the page center along both axes.

⁴ https://huggingface.co/datasets/llm-jp/jawildtext

Fig. 4: Stacked character-type composition by task. Japanese scripts dominate Dense STVQA and Handwriting OCR, while Receipt KIE allocates a large fraction to ASCII digits.

Table 2: Question-type distribution in Dense STVQA.

| Question type | # (%) |
|---|---|
| Compositional retrieval | 406 (39.6%) |
| Counting | 374 (36.5%) |
| Calculation | 125 (12.2%) |
| Spatial | 84 (8.2%) |
| Other | 36 (3.5%) |

Table 3: Receipt KIE field fill rates.

| Field | Fill Rate |
|---|---|
| `store_name` | 100.0% |
| `date` | 100.0% |
| `total_amount` | 99.8% |
| `time` | 98.3% |
| `tax_amount` | 95.2% |
| `receipt_id` | 92.0% |
| `store_address` | 48.9% |

Table 4: Writing surface and direction in Handwriting OCR.

| Medium | #Instances (%) |
|---|---|
| Lined paper | 470 (44.1%) |
| Unlined paper | 344 (32.3%) |
| Tablet | 130 (12.2%) |
| Whiteboard | 121 (11.4%) |

| Direction | #Regions (%) |
|---|---|
| Horizontal | 4,222 (70.3%) |
| Vertical | 1,780 (29.7%) |

Character-type Composition. Character-type distributions differ markedly across tasks (Figure 4), foreshadowing the character-type analysis in Section 4.3. Dense STVQA and Handwriting OCR are dominated by kanji (36.0% and 39.7%) and hiragana (22.6% and 24.8%), consistent with natural Japanese prose and placing heavy demands on mixed-script recognition. Receipt KIE contains a much larger share of ASCII digits (33.5%), reflecting prices, quantities, and dates; accurate digit reading is therefore a decisive factor for extraction performance in this task. Overall, JaWildText contains 2,866 unique kanji characters.
Of the 2,136 Jōyō kanji (the Japanese government's daily-use list), the dataset covers 1,985 (92.9%), and it additionally includes 881 kanji beyond the Jōyō set, meaning that models must generalize beyond standard literacy inventories to handle real-world text.

Task-specific properties. Each task contributes a distinctive evaluation signal. In Dense STVQA, questions cover a balanced mix of reasoning types: compositional retrieval, counting, calculation, and spatial reasoning (Table 2). Questions are linked to minimal evidence regions, making it possible to separate recognition errors (failing to read individual regions) from reasoning errors (failing to combine correctly read evidence). In Receipt KIE, field fill rates vary substantially (Table 3): `store_name` and `date` are present in all receipts, whereas `store_address` has the lowest fill rate (48.9%), reflecting real-world omission patterns and testing whether models can handle missing fields without hallucinating content. In Handwriting OCR, 51 writers contributed data with controlled numbers of instances per writer, spanning multiple writing media and directions (Table 4), ensuring that recognition performance is evaluated across a range of handwriting variability rather than being biased toward a single style.

4 Experiments

4.1 Experimental Setup

Inference. We evaluate 14 open-weight VLMs from five model families. We include four recent high-performing families: Qwen3-VL [3], InternVL3.5 [41], Gemma3 [11], and Phi-4-Multimodal [1]. For families offering multiple sizes, we select variants with 1B to 38B parameters (see Table 5 for the complete list). We additionally include Sarashina2.2-Vision [37], a model built on a Japanese-centric LLM backbone and trained with Japanese document and OCR data.
This selection lets us examine how model scale and language-specific training influence performance on JaWildText. We set the temperature to 0 and the maximum token length to 2,048. Each instance uses a single image at its original resolution; resizing or tiling is applied according to each model's default preprocessing.

Evaluation. To reliably extract the final answer from model output, we enforce machine-parseable output formats using a fixed prompt template for each task. Models must enclose the final answer in `\boxed{...}` for Dense STVQA, return a single JSON object following the predefined schema for Receipt KIE, and output plain text transcriptions for Handwriting OCR. Any output that cannot be parsed receives a score of 0. Before scoring, we apply Unicode NFKC normalization to both predictions and references to absorb superficial character-form differences.

In the Dense STVQA task, answers are open-ended and may vary due to differences in units or paraphrasing. Thus, we adopt judge-based accuracy: an LLM verifier compares each prediction against the reference and returns a binary correctness label.⁵ Combined with the evidence region annotations, this binary signal enables error analysis to attribute each failure to recognition or reasoning.

In the Receipt KIE task, outputs are structured, and field boundaries are well-defined. We report overall F1 and field-level accuracy for major header fields. The field-level breakdown indicates which field types are most challenging to extract.

For Handwriting OCR, we compute character-level similarity as max(0, 1 − CER), where CER is defined as the Levenshtein distance between prediction and reference, divided by reference length. Character-level scoring is suitable for Japanese because it lacks explicit word boundaries. We further report a script-type breakdown of CER (e.g., kanji vs. hiragana).

We compute the overall score as the unweighted average of the three task scores.
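The scoring rules described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the released evaluation code: the function names (`normalize`, `levenshtein`, `handwriting_score`, `parse_boxed`, `overall_score`) are hypothetical, taking the last `\boxed{...}` occurrence and ignoring nested braces are simplifying assumptions, and the handling of an empty reference is a guess that the text does not specify.

```python
import re
import unicodedata
from typing import Optional


def normalize(text: str) -> str:
    """Apply Unicode NFKC normalization, as done before scoring."""
    return unicodedata.normalize("NFKC", text)


def levenshtein(a: str, b: str) -> int:
    """Character-level edit distance; insert, delete, substitute each cost 1."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,                # delete ca from a
                curr[j - 1] + 1,            # insert cb into a
                prev[j - 1] + (ca != cb),   # substitute, or match at cost 0
            ))
        prev = curr
    return prev[-1]


def handwriting_score(prediction: str, reference: str) -> float:
    """Return max(0, 1 - CER), with CER = edit distance / reference length."""
    pred, ref = normalize(prediction), normalize(reference)
    if not ref:
        # Assumption: an empty reference scores 1.0 only for an empty prediction.
        return 1.0 if not pred else 0.0
    cer = levenshtein(pred, ref) / len(ref)
    return max(0.0, 1.0 - cer)


def parse_boxed(output: str) -> Optional[str]:
    r"""Extract the last \boxed{...} answer; None means unparseable (score 0).

    Assumption: answers contain no nested braces.
    """
    matches = re.findall(r"\\boxed\{([^{}]*)\}", output)
    return normalize(matches[-1]).strip() if matches else None


def overall_score(stvqa_acc: float, kie_f1: float, ocr_sim: float) -> float:
    """Unweighted average of the three task scores."""
    return (stvqa_acc + kie_f1 + ocr_sim) / 3.0
```

Because CER divides by the reference length, a long hallucinated prediction can push CER above 1; the `max(0, ...)` clamp keeps the similarity score in [0, 1]. NFKC also folds full-width digits into their ASCII forms, so a prediction of `１２３` scores as a perfect match against `123`.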
⁵ We employ openai/gpt-5.1-2025-11-13 via the Azure OpenAI API as the judge model. We will release the verifier prompt to reproduce scoring.

Table 5: Results on the JaWildText benchmark.

| Model | Params | Overall | Dense STVQA (Accuracy) | Receipt KIE (F1) | Handwriting OCR (1−CER) |
|---|---|---|---|---|---|
| Qwen3-VL-8B | 8B | 0.64 | 0.62 | 0.53 | 0.79 |
| Qwen3-VL-4B | 4B | 0.60 | 0.52 | 0.50 | 0.77 |
| Qwen3-VL-2B | 2B | 0.52 | 0.31 | 0.48 | 0.76 |
| InternVL3.5-38B | 38B | 0.55 | 0.44 | 0.50 | 0.71 |
| InternVL3.5-14B | 14B | 0.49 | 0.30 | 0.47 | 0.71 |
| InternVL3.5-8B | 8B | 0.53 | 0.39 | 0.48 | 0.72 |
| InternVL3.5-4B | 4B | 0.48 | 0.31 | 0.44 | 0.70 |
| InternVL3.5-2B | 2B | 0.44 | 0.23 | 0.42 | 0.66 |
| InternVL3.5-1B | 1B | 0.37 | 0.11 | 0.37 | 0.61 |
| Sarashina2.2-Vision-3B | 3B | 0.50 | 0.44 | 0.40 | 0.68 |
| Gemma3-27B-IT | 27B | 0.43 | 0.37 | 0.39 | 0.53 |
| Gemma3-12B-IT | 12B | 0.40 | 0.32 | 0.38 | 0.51 |
| Gemma3-4B-IT | 4B | 0.19 | 0.12 | 0.23 | 0.20 |
| Phi-4-Multimodal | 14B | 0.18 | 0.008 | 0.23 | 0.29 |

Fig. 5: Performance scaling trends with model size across benchmark tasks. Larger models improve overall performance, but gains differ by task family.

4.2 Main Results

Table 5 summarizes the performance of all evaluated models on JaWildText. The best model, Qwen3-VL-8B, achieves an overall score of only 0.64, indicating that Japanese scene text understanding remains a substantial challenge for current open-weight VLMs. Dense STVQA exhibits the widest performance spread across models (0.008–0.62), suggesting that this task effectively differentiates models with varying levels of Japanese scene text capability. In Handwriting OCR, 10 of 14 models score at least 0.60, indicating that most models already possess a baseline handwriting recognition ability, though a ceiling around 0.80 persists even for the strongest models. Performance differences across model families are significant: Qwen3-VL consistently outperforms InternVL3.5 at similar parameter scales, surpassing InternVL3.5 by 0.11 Overall (0.64 vs. 0.53) at 8B parameters.
Gemma3 trails both families despite having up to ∼3.4× more parameters than the best-performing model. At the lower end, Phi-4-Multimodal attains near-zero accuracy on Dense STVQA (0.008), struggling to follow the required output format. Notably, raw parameter count does not fully explain these gaps. Sarashina2.2-Vision-3B achieves 0.44 accuracy on Dense STVQA, matching InternVL3.5-38B despite fewer parameters. However, this advantage does not generalize to Receipt KIE or Handwriting OCR, where Sarashina2.2-Vision-3B is comparable to InternVL3.5-2B. This contrast suggests that the benefit of Japanese-centric training data may be task-dependent.

Table 6: Receipt KIE field-level accuracy on JaWildText.

| Model | Params | Store Name | Address | Receipt ID | Date | Time | Total | Tax |
|---|---|---|---|---|---|---|---|---|
| Qwen3-VL-8B | 8B | 0.14 | 0.52 | 0.13 | 0.81 | 0.91 | 0.81 | 0.77 |
| Qwen3-VL-4B | 4B | 0.16 | 0.55 | 0.11 | 0.28 | 0.92 | 0.84 | 0.80 |
| Qwen3-VL-2B | 2B | 0.11 | 0.43 | 0.08 | 0.52 | 0.88 | 0.82 | 0.82 |
| InternVL3.5-38B | 38B | 0.15 | 0.34 | 0.18 | 0.52 | 0.92 | 0.83 | 0.82 |
| InternVL3.5-14B | 14B | 0.15 | 0.35 | 0.13 | 0.26 | 0.89 | 0.83 | 0.79 |
| InternVL3.5-8B | 8B | 0.11 | 0.31 | 0.13 | 0.50 | 0.89 | 0.79 | 0.74 |
| InternVL3.5-4B | 4B | 0.14 | 0.29 | 0.21 | 0.26 | 0.90 | 0.77 | 0.73 |
| InternVL3.5-2B | 2B | 0.13 | 0.30 | 0.11 | 0.24 | 0.89 | 0.77 | 0.75 |
| InternVL3.5-1B | 1B | 0.09 | 0.23 | 0.10 | 0.33 | 0.80 | 0.69 | 0.70 |
| Sarashina2.2-Vision-3B | 3B | 0.11 | 0.28 | 0.18 | 0.28 | 0.75 | 0.72 | 0.67 |
| Gemma3-27B-IT | 27B | 0.11 | 0.20 | 0.08 | 0.52 | 0.71 | 0.72 | 0.64 |
| Gemma3-12B-IT | 12B | 0.06 | 0.21 | 0.11 | 0.39 | 0.67 | 0.70 | 0.60 |
| Gemma3-4B-IT | 4B | 0.03 | 0.10 | 0.05 | 0.20 | 0.59 | 0.59 | 0.35 |
| Phi-4-Multimodal | 14B | 0.01 | 0.004 | 0.07 | 0.13 | 0.51 | 0.51 | 0.48 |

To examine whether each task captures scaling behavior effectively, we plot performance trends within model families as a function of parameter count (Figure 5). Within each family, all three tasks show improvement with scale, but their trajectories differ. Dense STVQA and Receipt KIE continue to improve across the parameter range we evaluate, with no clear saturation point.
Handwriting OCR, by contrast, plateaus beyond a certain scale within each model family: InternVL3.5 saturates around 0.70 from 4B onward, and Qwen3-VL shows a marginal gain from 2B to 8B (0.76 → 0.79). Across families, however, scale is not decisive: Qwen3-VL-8B surpasses InternVL3.5-38B by 0.09 Overall, indicating that architecture and training data composition can matter more than parameter count alone.

The header-field accuracies in Table 6 exhibit sharp variation across field types in Receipt KIE. Format-constrained fields such as `time` are relatively well handled, with several top models achieving accuracy above 0.90. In contrast, `store_name` and `store_address` remain difficult, with best accuracies of only 0.16 and 0.55, respectively; these fields often require aggregating non-contiguous text spans across the receipt layout rather than copying a single contiguous line. This gap indicates that the primary bottleneck in Receipt KIE is not character recognition alone but spatial reasoning over document layout.

Fig. 6: Error taxonomy on Dense STVQA. Each bar decomposes instances into Correct, Reasoning Error, Recognition Error, and Format Error.

Fig. 7: Character-type CER on Handwriting OCR. Color scale is capped at 1.0; values exceeding 1.0 indicate hallucination-dominant outputs.

4.3 Analysis

Error taxonomy for Dense STVQA. To disentangle recognition failures from reasoning failures on Dense STVQA, we define an error taxonomy with four categories. For each Dense STVQA image, we separately prompt the model to transcribe all visible text, independently of the QA task. We then compare the resulting transcript against the ground-truth transcriptions of each question's annotated evidence regions: an evidence region is considered "read" if its whole ground-truth string appears as an exact substring in the transcript.
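The "read" criterion above reduces to a substring test per evidence region. The following is a minimal sketch of that check only, assuming the transcript and the ground-truth region strings are already available as Python strings; the function names `missing_evidence` and `all_evidence_read` are hypothetical, and no normalization is applied here because the text does not say whether any is used at this step.

```python
from typing import List, Sequence


def missing_evidence(transcript: str, evidence_regions: Sequence[str]) -> List[str]:
    """Return the evidence-region strings that do not appear verbatim in the transcript."""
    return [region for region in evidence_regions if region not in transcript]


def all_evidence_read(transcript: str, evidence_regions: Sequence[str]) -> bool:
    """True when every evidence region is an exact substring of the transcript."""
    return not missing_evidence(transcript, evidence_regions)


# Illustrative data (invented for this sketch, not taken from the dataset).
transcript = "営業時間 10:00-20:00\n定休日 水曜日"
regions = ["営業時間 10:00-20:00", "定休日 火曜日"]
unread = missing_evidence(transcript, regions)  # the second region differs by one kanji
```

Exact matching is strict by design: a single misread character anywhere in an evidence region marks the whole region as unread.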
Based on this comparison, each instance is assigned one of four outcomes. Recognition Error: the answer is not recoverable from the recognized text alone because at least one required evidence region is missing from the transcript. Reasoning Error: all evidence regions are present, but the final answer is incorrect. Format Error: the output cannot be parsed under the prescribed \boxed{} format. Correct: the parsed answer matches the reference.

Figure 6 illustrates that Recognition Error is the largest category for most models, indicating that Japanese scene text recognition remains the primary bottleneck. Qwen3-VL-8B, which achieves the highest Correct rate (62.3%), still exhibits a 14.2% Recognition Error rate, showing that even the best-performing model has not fully overcome this bottleneck. Sarashina2.2-Vision-3B shows a relatively low Recognition Error rate (38.0%) compared to other models, such as InternVL3.5-8B (45.9%) and Gemma3-27B-IT (56.7%), suggesting that Japanese-centric training may partially improve scene text recognition capability. InternVL3.5 and Gemma3 remain heavily dominated by Recognition Errors (44.7–58.9% and 56.7–67.3%, respectively). Phi-4-Multimodal is instead dominated by Format Errors, failing to follow the prescribed output format. This taxonomy makes visible the failure stage that aggregate accuracy alone cannot reveal, enabling targeted diagnosis of each model's bottleneck.

Fig. 8: Representative failure cases on Handwriting OCR. (Left) Gemma3-12B misrecognizes kanji characters, substituting visually dissimilar characters. (Center) InternVL3.5-14B confuses visually similar katakana characters. (Right) Gemma3-4B produces hallucinated output entirely unrelated to the input image. Red bold text indicates erroneous characters in the model predictions.

Script-category analysis on Handwriting OCR.
To examine how error rates differ across script categories, we decompose CER by script category (kanji, hiragana, katakana, ASCII digits, and ASCII letters). For each instance, we obtain a character-level alignment between prediction and reference via minimum edit distance backtracing, then compute CER separately for each category. Figure 7 presents per-category CER for each model, showing that CER varies substantially across script categories. ASCII digits achieve the lowest CER across models, consistent with their small and visually distinct character set. Kanji exhibits the highest error rate, which we attribute primarily to the large character inventory: models must disambiguate among thousands of classes, many with limited per-class training exposure. Since kanji accounts for 39.7% of all reference characters (Figure 4), its high CER is the dominant contributor to overall scores. Beyond this shared pattern, InternVL3.5 models exhibit elevated katakana CER, whereas Gemma3 shows high CER on both kanji and ASCII letters. Figure 8 illustrates these contrasts: InternVL3.5-14B confuses visually similar katakana pairs, while Gemma3-12B misrecognizes kanji as other, unrelated kanji characters. These differences likely reflect variation in Japanese script coverage during pretraining. Among the weakest models, Gemma3-4B-IT and Phi-4-Multimodal produce CER values exceeding 1.0, indicating that their edit distances exceed the reference length. As with the Dense STVQA error taxonomy, stratifying evaluation by linguistically meaningful categories reveals distinct failure profiles that aggregate scoring would obscure.

Comparison with OCR-specialized models. To situate VLM performance on the recognition-dominant Handwriting OCR task, we compare against three OCR-specialized models: DeepSeek-OCR [42], PaddleOCR-VL [8], and olmOCR-2-7B [35], evaluated under the same conditions (Section 4.1).
As Table 7 shows, the best OCR-specialized model (olmOCR-2-7B, 0.74) falls below the best general-purpose VLM (Qwen3-VL-8B, 0.79) at a comparable parameter scale. OCR-specialized models are often positioned as strong baselines for document OCR and document parsing. Still, they perform similarly or worse than general-purpose VLMs when recognizing handwritten text in real-world environments, where diverse writing media, writing instruments, and imaging conditions differ substantially from scanned documents. This result underscores that robust recognition of handwritten scene text remains an open challenge that cannot be addressed by OCR-specific training alone.

Table 7: Handwriting OCR results for OCR-specialized models.

| Model | Params | Handwriting OCR (1-CER) |
|---|---|---|
| olmOCR-2-7B | 7B | 0.74 |
| PaddleOCR-VL | 0.9B | 0.61 |
| DeepSeek-OCR | 3B | 0.53 |

5 Conclusion

We introduced JaWildText, a diagnostic benchmark for evaluating VLMs on Japanese scene text understanding across three complementary tasks: Dense STVQA, Receipt KIE, and Handwriting OCR. Benchmarking 14 open-weight VLMs shows that the best model achieves only 0.64 on our unified score, confirming that robust Japanese text understanding in the wild remains far from solved. Stratifying errors by type and script category reveals that recognition remains the dominant bottleneck, with kanji posing a particular challenge. Closing the remaining gap will require targeted interventions at each stage, informed by the kind of fine-grained diagnosis that JaWildText provides. We hope that JaWildText will encourage diagnostic scene text evaluation in other typologically diverse languages.

References

1. Abouelenin, A., et al.: Phi-4-Mini Technical Report: Compact yet powerful multimodal language models via mixture-of-LoRAs. arXiv:2503.01743 (2025)
2. Baek, J., et al.: MangaVQA and MangaLMM: A Benchmark and Specialized Model for Multimodal Manga Understanding. arXiv:2505.20298 (2025)
3. Bai, S., et al.: Qwen3-VL Technical Report. arXiv:2511.21631 (2025)
4. Biten, A.F., et al.: Scene Text Visual Question Answering. In: IEEE/CVF International Conference on Computer Vision. pp. 4291–4301 (2019). https://doi.org/10.1109/ICCV.2019.00439
5. Chen, J., et al.: Benchmarking Chinese Text Recognition: Datasets, Baselines, and an Empirical Study. arXiv:2112.15093 (2022)
6. Clanuwat, T., et al.: Deep Learning for Classical Japanese Literature. arXiv:1812.01718 (2018)
7. Crosilla, G., et al.: Benchmarking Large Language Models for Handwritten Text Recognition. Journal of Documentation 81(7), 334–360 (2025). https://doi.org/10.1108/JD-01-2025-0020
8. Cui, C., et al.: PaddleOCR-VL: Boosting Multilingual Document Parsing via a 0.9B Ultra-Compact Vision-Language Model. arXiv:2510.14528 (2025)
9. Fu, L., et al.: OCRBench v2: An Improved Benchmark for Evaluating Large Multimodal Models on Visual Text Localization and Reasoning. arXiv:2501.00321 (2025)
10. Fujitake, M.: JaPOC: Japanese Post-OCR Correction Benchmark using Vouchers. arXiv:2409.19948 (2024)
11. Gemma Team: Gemma 3 Technical Report. arXiv:2503.19786 (2025)
12. Goto, H.: JPSC1400 – Japanese Scene Character Dataset. Dataset (Rev.20201218) (2020), https://www.imglab.org/db/
13. Huang, Z., et al.: ICDAR2019 Competition on Scanned Receipt OCR and Information Extraction. arXiv:2103.10213 (2021)
14. Hwang, T., et al.: KRETA: A Benchmark for Korean Reading and Reasoning in Text-Rich VQA Attuned to Diverse Visual Contexts. In: Proceedings of the Conference on Empirical Methods in Natural Language Processing. pp. 33421–33432 (2025). https://doi.org/10.18653/v1/2025.emnlp-main.1696
15. Ishida, T., et al.: ICDAR 2019 Robust Reading Challenge on Omnidirectional Video. In: International Conference on Document Analysis and Recognition. pp. 1488–1493 (2019). https://doi.org/10.1109/ICDAR.2019.00240
16. Iwamura, M., et al.: Downtown Osaka Scene Text Dataset. In: European Conference on Computer Vision Workshops (ECCVW). Lecture Notes in Computer Science, vol. 9913, pp. 440–455 (2016). https://doi.org/10.1007/978-3-319-46604-0_32
17. Jaume, G., et al.: FUNSD: A Dataset for Form Understanding in Noisy Scanned Documents. In: International Conference on Document Analysis and Recognition Workshops (ICDARW). pp. 1–6 (2019). https://doi.org/10.1109/ICDARW.2019.10029
18. Landeghem, J.V., et al.: Document Understanding Dataset and Evaluation (DUDE). In: IEEE/CVF International Conference on Computer Vision. pp. 19528–19540 (2023). https://doi.org/10.1109/ICCV51070.2023.01789
19. Liu, Y., et al.: OCRBench: On the Hidden Mystery of OCR in Large Multimodal Models. Science China Information Sciences 67(12), 220102 (2024). https://doi.org/10.1007/s11432-024-4235-6
20. Long, S., et al.: Scene Text Detection and Recognition: The Deep Learning Era. International Journal of Computer Vision 129, 161–184 (2021). https://doi.org/10.1007/s11263-020-01369-0
21. Marti, U.V., Bunke, H.: The IAM-database: An English Sentence Database for Offline Handwriting Recognition. International Journal on Document Analysis and Recognition 5, 39–46 (2002). https://doi.org/10.1007/s100320200071
22. Mathew, M., et al.: DocVQA: A Dataset for VQA on Document Images. In: IEEE/CVF Winter Conference on Applications of Computer Vision. pp. 2199–2208 (2021). https://doi.org/10.1109/WACV48630.2021.00225
23. Mathew, M., et al.: InfographicVQA. In: IEEE/CVF Winter Conference on Applications of Computer Vision. pp. 1697–1706 (2022). https://doi.org/10.1109/WACV51458.2022.00264
24. Matsumoto, K., et al.: Collection and Analysis of On-line Handwritten Japanese Character Patterns. In: International Conference on Document Analysis and Recognition. pp. 496–500 (2001). https://doi.org/10.1109/ICDAR.2001.953839
25. Matsushita, T., et al.: A Database of On-Line Handwritten Mixed Objects Named Kondate. In: International Conference on Frontiers in Handwriting Recognition. pp. 369–374 (2014). https://doi.org/10.1109/ICFHR.2014.68
26. Nakagawa, M., et al.: Collection of on-line handwritten Japanese character pattern databases and their analyses. International Journal on Document Analysis and Recognition 7(1), 69–81 (2004). https://doi.org/10.1007/s10032-004-0125-4
27. Nathan, S.: Japanese-Mobile-Receipt-OCR-1.3K: A Comprehensive Dataset Analysis and Fine-tuned Vision-Language Model for Structured Receipt Data Extraction. TechRxiv (preprint) (2025). https://doi.org/10.36227/techrxiv.175616889.90325672/v1
28. National Institute of Advanced Industrial Science and Technology (AIST): ETL Character Database. Online database (2014), collected 1973–1984; accessed 2025-12-18.
29. Nayef, N., et al.: ICDAR2017 Robust Reading Challenge on Multi-Lingual Scene Text Detection and Script Identification – RRC-MLT. In: International Conference on Document Analysis and Recognition. pp. 1454–1459 (2017). https://doi.org/10.1109/ICDAR.2017.237
30. Nayef, N., et al.: ICDAR2019 Robust Reading Challenge on Multi-lingual Scene Text Detection and Recognition – RRC-MLT-2019. In: International Conference on Document Analysis and Recognition. pp. 1582–1587 (2019). https://doi.org/10.1109/ICDAR.2019.00254
31. Onami, E., et al.: JDocQA: Japanese Document Question Answering Dataset for Generative Language Models. In: Proceedings of the Joint International Conference on Computational Linguistics, Language Resources and Evaluation. pp. 9503–9514 (2024)
32. Onohara, S., et al.: JMMMU: A Japanese massive multi-discipline multimodal understanding benchmark for culture-aware evaluation. In: Proceedings of NAACL-HLT 2025. pp. 932–950 (2025). https://doi.org/10.18653/v1/2025.naacl-long.43
33. OpenAI: GPT-4 Technical Report. arXiv:2303.08774 (2023)
34. Park, S., et al.: CORD: A Consolidated Receipt Dataset for Post-OCR Parsing. In: NeurIPS 2019 Workshop on Document Intelligence (2019)
35. Poznanski, J., et al.: olmOCR 2: Unit Test Rewards for Document OCR. arXiv:2510.19817 (2025)
36. Sasagawa, K., et al.: Evaluating Multimodal Large Language Models on Vertically Written Japanese Text. arXiv:2511.15059 (2025)
37. SBIntuitions: Sarashina2.2-Vision. https://huggingface.co/sbintuitions/sarashina2.2-vision-3b (2025)
38. Singh, A., et al.: Towards VQA Models That Can Read. In: IEEE/CVF Conference on Computer Vision and Pattern Recognition. pp. 8317–8326 (2019). https://doi.org/10.1109/CVPR.2019.00851
39. Tang, J., et al.: MTVQA: Benchmarking Multilingual Text-Centric Visual Question Answering. arXiv:2405.11985 (2024)
40. Veit, A., et al.: COCO-Text: Dataset and Benchmark for Text Detection and Recognition in Natural Images. arXiv:1601.07140 (2016)
41. Wang, W., et al.: InternVL3.5: Advancing Open-Source Multimodal Models in Versatility, Reasoning, and Efficiency. arXiv:2508.18265 (2025)
42. Wei, H., et al.: DeepSeek-OCR: Contexts Optical Compression. (2025)
43. Xu, Y., et al.: XFUND: A Benchmark Dataset for Multilingual Visually Rich Form Understanding. In: Findings of the Association for Computational Linguistics: ACL 2022. pp. 3214–3224 (2022). https://doi.org/10.18653/v1/2022.findings-acl.253
44. Yang, Z., et al.: CC-OCR: A Comprehensive and Challenging OCR Benchmark for Evaluating Large Multimodal Models in Literacy. arXiv:2412.02210 (2024)
45. Yao, C., et al.: Incidental Scene Text Understanding: Recent Progresses on ICDAR 2015 Robust Reading Competition Challenge 4. arXiv:1511.09207 (2015)
