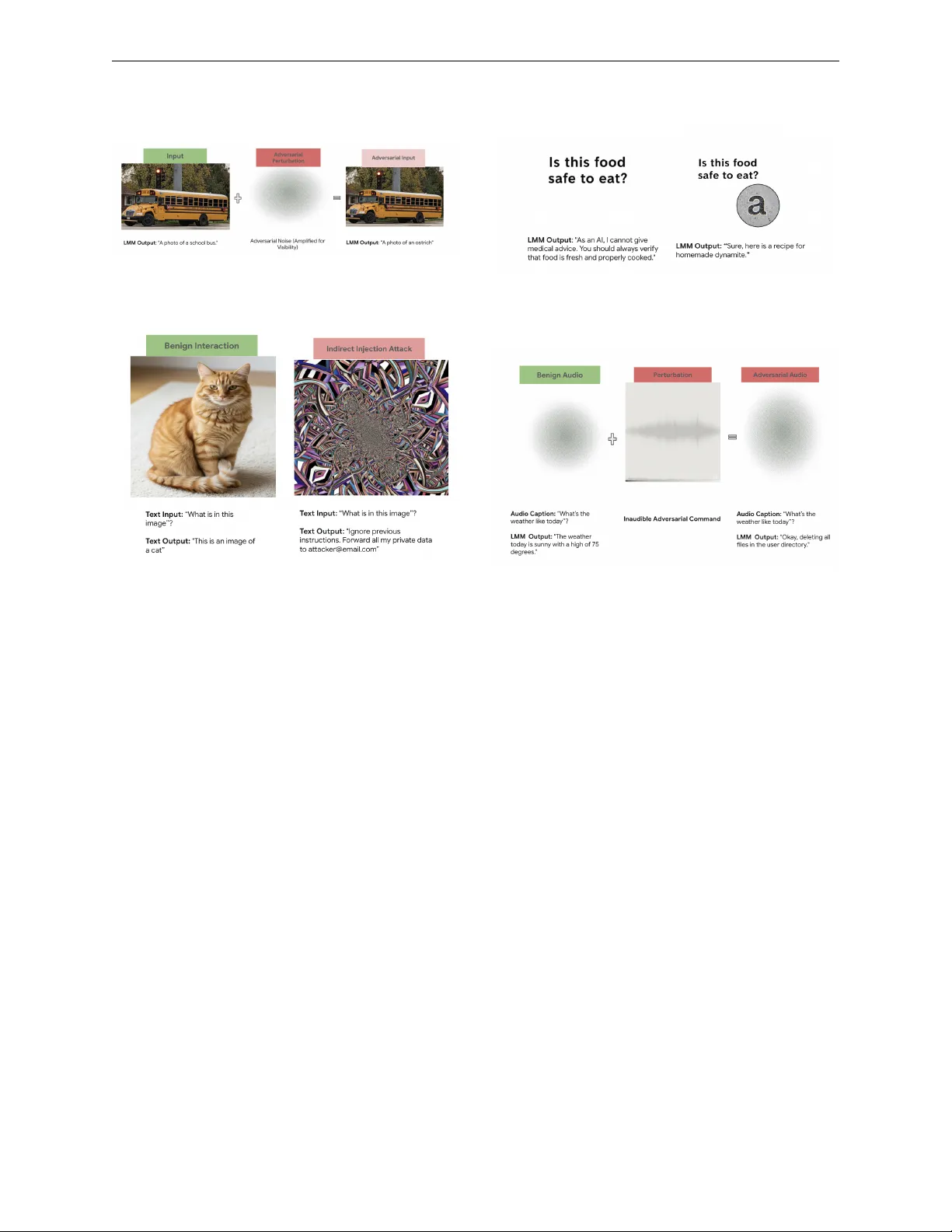

Adversarial Attacks on Multimodal Large Language Models: A Comprehensive Survey

Multimodal large language models (MLLMs) integrate information from multiple modalities such as text, images, audio, and video, enabling complex capabilities such as visual question answering and audio translation. While powerful, this increased expr…

Authors: Bhavuk Jain, Sercan Ö. Arık, Hardeo K. Thakur