ExVerus: Verus Proof Repair via Counterexample Reasoning

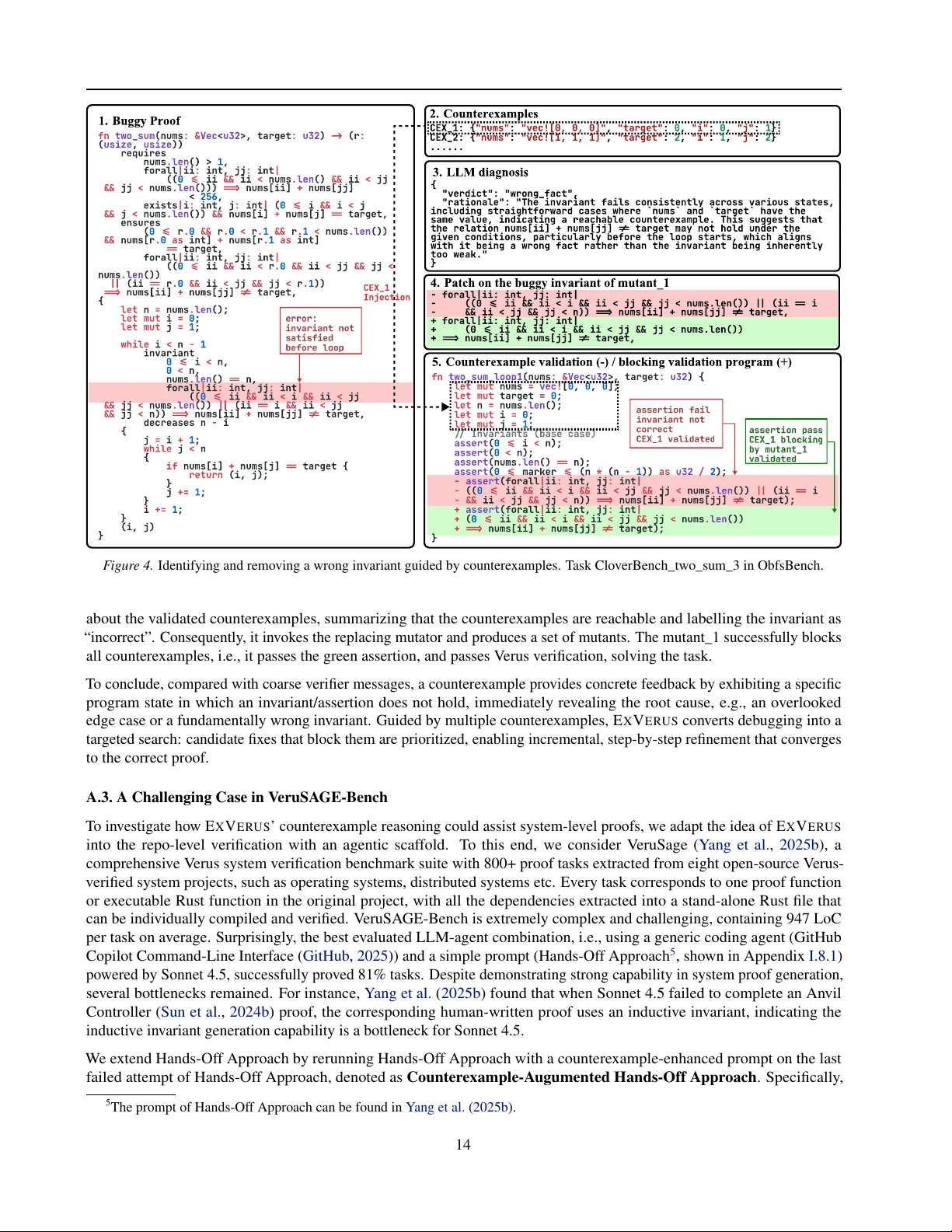

Large Language Models (LLMs) have shown promising results in automating formal verification. However, existing approaches treat proof generation as a static, end-to-end prediction over source code, relying on limited verifier feedback and lacking acc…

Authors: Jun Yang, Yuechun Sun, Yi Wu