Software Supply Chain Smells: Lightweight Analysis for Secure Dependency Management

Modern software systems rely heavily on third-party dependencies, making software supply chain security a critical concern. We introduce the concept of software supply chain smells: structural indicators that signal potential security risks. We des…

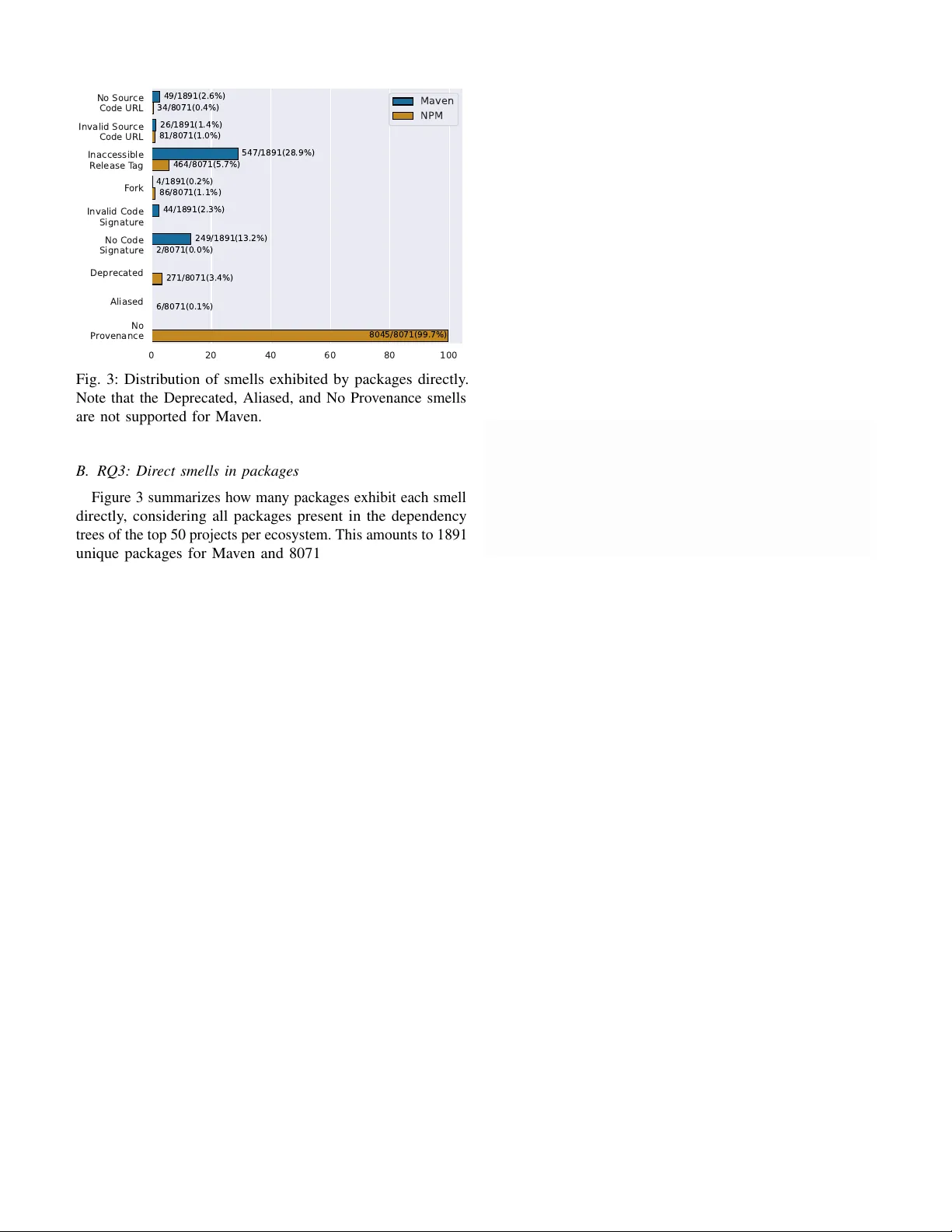

Authors: Larissa Schmid, Diogo Gaspar, Raphina Liu