Code Review Agent Benchmark

Software engineering agents have shown significant promise in writing code. As AI agents permeate code writing, and generate huge volumes of code automatically -- the matter of code quality comes front and centre. As the automatically generated code …

Authors: Yuntong Zhang, Zhiyuan Pan, Imam Nur Bani Yusuf

Yuntong Zhang∗ (zhang.yuntong@u.nus.edu), National University of Singapore
Zhiyuan Pan∗† (zy_pan@zju.edu.cn), Zhejiang University
Imam Nur Bani Yusuf‡ (inbyusuf@nus.edu.sg), National University of Singapore
Haifeng Ruan (haifeng.ruan@u.nus.edu), National University of Singapore
Ridwan Sharideen (sharideenr@acm.org), SonarSource
Abhik Roychoudhury (abhik@nus.edu.sg), National University of Singapore

Abstract

Software engineering agents have shown significant promise in coding tasks, either writing an entire application or writing a code patch to improve a software project by remediating an issue. As AI agents permeate code writing and generate huge volumes of code automatically, the matter of code quality comes front and centre. As the automatically generated code gets integrated into huge code-bases, the issue of code review and, more broadly, quality assurance becomes important. In this paper, we take a fresh look at the problem and curate a code review dataset for AI agents to work with. Our dataset, called c-CRAB (pronounced see-crab), can evaluate agents on code review tasks. Specifically, given a pull request (which could come from code generation agents or humans), if a code review agent produces a review, our evaluation framework can assess the reviewing capability of that agent. Our evaluation framework is used to evaluate the state of the art today: the open-source PR-Agent, as well as commercial code review agents from Devin, Claude Code, and Codex. Our c-CRAB dataset is systematically constructed from human reviews: given a human review of a pull request instance, we generate corresponding tests to evaluate the reviews generated by code review agents. Such a benchmark construction gives us several insights. First of all, all of the existing review agents taken together can solve only around 40% of the c-CRAB tasks, indicating the potential to close this gap by future research.
Secondly, we observe that the agent reviews often consider different aspects from the human reviews, indicating the potential for human-agent collaboration for code inspection and review that could be deployed in future software teams. Last but not least, the agent-generated tests from our dataset act as a held-out test suite and hence a quality gate for agent-generated reviews. What this will mean for future collaboration of code generation agents, test generation agents, and code review agents remains to be investigated.

Keywords: Automated Code Review, Benchmark, LLM Agent

∗ The first two authors contributed equally to this research.
† Work done while author is full-time at National University of Singapore.
‡ Corresponding author.

1 Introduction

Code review is a fundamental quality assurance practice in modern software development. Before a proposed change is merged into the main codebase, developers inspect pull requests (PRs) to detect defects, enforce project standards, and maintain long-term code quality. Traditionally, this process relies on human reviewers who carefully examine PRs and provide feedback to authors. Recent progress in software engineering agents has significantly accelerated the process of writing code. AI systems can now generate large amounts of code automatically, both for implementing new features [8, 31] and for resolving issues [26, 30] in existing repositories. As these systems become increasingly integrated into development workflows, they contribute to a growing volume of generated code and a growing number of submitted PRs [16, 19]. However, the capacity of human reviewers has not scaled at the same pace [4], creating a new bottleneck in the development pipeline: while code generation is increasingly automated, ensuring the quality of generated code still relies heavily on manual inspection.

This challenge has motivated the development of automated code review agents that analyze pull requests and generate review feedback [7, 18, 21, 22]. Several tools and systems have recently been proposed to automatically produce review comments aimed at identifying bugs, design issues, or maintainability concerns. Yet a central question remains: to what extent do these automated review tools identify the same issues that human reviewers would raise?

Existing evaluation methods do not answer this question well. Most benchmarks compare generated comments against human-written reviews using textual overlap or embedding similarity metrics [5, 6, 13, 24, 25]. Such metrics primarily measure resemblance in wording rather than whether a review identifies a meaningful issue in the code. We posit that these evaluation approaches are not well suited for measuring how closely AI-generated reviews align with the concerns raised by human reviewers. Human review comments are often noisy artifacts of the review process: they may include clarification questions, subjective stylistic suggestions, or back-and-forth discussions between reviewers and authors. As a result, the wording of a comment does not always correspond directly to the underlying issue being raised. Measuring similarity between comments is therefore problematic. A review may correctly identify a valid issue using entirely different wording and still receive a low similarity score. Similar limitations also arise in other evaluation methods, such as localization metrics [3, 29] and LLM-as-a-judge [3]. Localization metrics only assess whether an issue is identified at the same code location as the ground-truth human reviews, while ignoring the substance of the review. Furthermore, LLM-as-a-judge approaches can be sensitive to prompt design and inherent randomness, making their judgments difficult to reproduce reliably.

In this paper, we revisit the problem of evaluating automated code review tools and introduce c-CRAB (pronounced see-crab), a benchmark designed to assess how well automated review tools identify the same issues that human reviewers raise in realistic PR settings. Instead of evaluating reviews solely based on similarity to reference comments, c-CRAB adopts a test-based evaluation. Specifically, human review feedback is systematically converted into executable tests that capture the underlying issues identified during the review process. These tests serve as objective evaluation oracles: if a review agent identifies an issue corresponding to a failing test, the issue can be verified automatically in the code. To evaluate a review tool, we provide the tool with a PR and collect the review comments it produces. A separate coding agent then revises the code in the PR based on the review comments generated by the review tool, and the revised version is executed against the curated tests. If the tests pass, this indicates that the review successfully identifies actionable issues that lead to correct code improvement. By doing so, c-CRAB measures the extent to which automated review tools discover issues originally identified by human reviewers. This evaluation therefore measures whether automated reviewers can raise issues that correspond to those raised by human reviewers in real PRs.

Using c-CRAB, we evaluate several state-of-the-art review agents, including the open-source PR-Agent, and the review capabilities of Devin Review, Claude Code, and Codex. Our results show that current systems successfully identify only about 40% of the issues captured in the benchmark, indicating substantial room for improvement in automated code review. We further observe that AI-generated reviews often focus on different aspects of code compared to human reviewers. However, they remain valuable in identifying problems and suggesting improvements.
This difference highlights complementary perspectives that may support future human-AI collaborative review workflows.

In summary, the contributions of this paper are as follows:
• We introduce c-CRAB, a benchmark dataset for evaluating automated code review agents on real-world pull requests.
• We propose an evaluation framework that converts human review feedback into executable tests, enabling objective validation of review quality.
• We evaluate several state-of-the-art review agents and show that current systems solve only about 40% of the benchmark tasks, indicating substantial room for improvement in automated code review.
• We analyze differences between human and AI-generated reviews, revealing complementary perspectives that motivate future human-agent collaboration in code review.

2 Background and Related Work

2.1 Automated Code Review Tools

Automated code review tools can be broadly categorized into static analysis-based approaches and AI-based approaches. Static analysis tools detect vulnerabilities and code quality issues based on pre-defined rules, patterns, or data-flow analysis. In contrast, AI-based review tools typically leverage LLMs to detect issues based on patterns learned during the LLM's training, as well as contextual knowledge provided through prompts or an agentic scaffold. These review tools are typically triggered when a Pull Request (PR) is opened on GitHub, GitLab, or BitBucket. When triggered, AI-based review tools collect the necessary context, such as the modified lines in the PR, the surrounding code, and repository-level context such as dependency graphs. This collected context is then analyzed by an LLM to identify potential issues. Some AI-based review tools may perform additional post-processing, such as false-positive filtering or severity ranking, before the reviews are presented to developers. As output, most review tools present the reviews as a summary comment or as inline comments in the PR.
In this paper, we focus specifically on the evaluation of AI-based code review tools.

2.2 Benchmarks for Automated Code Review

Table 1 summarizes the existing benchmarks for code review. Early work primarily focuses on fine-grained settings, where review comments are aligned with localized code changes such as lines, methods, or diff hunks. Datasets such as those in [5, 9, 13, 24, 25] construct large-scale pairs of code changes and human-written comments mined from open-source repositories. These datasets have been widely used to train and evaluate models for review comment generation. However, such datasets typically have limited context granularity, often isolating individual diff hunks and abstracting away the broader pull request and repository context. Consequently, they do not fully capture the complexity of real-world code review.

More recent benchmarks move toward PR-level and repository-aware evaluation. Datasets such as those in [3, 22, 29] incorporate richer contextual signals, including full pull requests, surrounding code, and repository structure. These benchmarks better reflect practical deployment settings, where review tools must reason over larger contexts and diverse types of issues.

Despite this progress, existing benchmarks differ in how they define evaluation oracles. Most rely on mined human review comments as the reference signal, while more recent work introduces expert annotations [29] or LLM-augmented labels [13] to improve data quality. Nevertheless, the majority of benchmarks still treat natural-language human comments as the primary ground truth, which introduces challenges due to noise, incompleteness, and variability in human review practices. Ours is the only dataset that depends on dynamic program behavior as witnessed by tests (albeit generated from human reviews).

2.3 Evaluation Methods for Code Reviews

A common strategy for evaluating automated code review systems compares generated comments against human-written reviews using similarity metrics such as BLEU [14], ROUGE [10], chrF [17], and embedding-based similarity [5, 6, 13, 24, 25]. While these metrics are easy to compute at scale, recent studies show that the human review comments used as references can be noisy or incomplete [6, 11].

Several studies also use localization to evaluate code review tools [3, 29]. This metric measures whether automated code review tools identify concerns at the same locations as human reviewers. However, it evaluates only the location of identified issues, not the content or nature of the concerns raised. Another study [22] evaluates code review tools using online production metrics, such as acceptance rate. While these metrics more accurately reflect real-world usage, they are difficult to obtain because they require access to a production environment for data collection.

Table 1: Comparison of automated code review benchmarks.

| Dataset | Year | Oracle source | Repo-level support | Evaluation |
|---|---|---|---|---|
| Tufano-21 [25] | 2021 | Mined OSS PR reviews | No | N-gram overlap |
| CodeReviewer [9] | 2022 | Mined OSS PR reviews | No | N-gram overlap |
| Tufano-22 [24] | 2022 | Mined OSS PR reviews | No | N-gram overlap |
| CRScore corpus [13] | 2025 | LLM + static analysis | No | Embedding similarity |
| ContextCRBench [5] | 2025 | Mined OSS PR reviews | No | N-gram overlap |
| SWE-CARE [3] | 2025 | Mined OSS PR reviews | Yes | Localization + N-gram overlap + LLM-as-a-judge |
| AACR-Bench [29] | 2026 | Mined OSS PR reviews | Yes | Localization |
| RovoDev [22] | 2026 | Logs + user feedback | Yes | Online production metrics |
| Qodo Benchmark [27] | 2026 | Mined OSS PR reviews | Yes | LLM-as-a-judge |
| CR-Bench [15] | 2026 | Mined OSS PR issue description | Yes | LLM-as-a-judge |
| c-CRAB (Ours) | 2026 | Mined OSS PR reviews | Yes | Test-based |

Another emerging paradigm uses large language models as judges to directly assess review quality [3, 28]. In this setting, an LLM is prompted to assess the quality of generated review comments by comparing them with human-written feedback or by reasoning about the code changes and the identified issues [3, 28]. Compared with lexical or embedding-based similarity metrics, LLM-based evaluation can consider richer contextual information and may better capture aspects such as correctness, relevance, and usefulness of review feedback. Although promising, LLM-as-judge approaches can suffer from bias, instability, and sensitivity to prompt design [20, 23]. These factors make it difficult to ensure reproducibility and consistency when LLMs are used as evaluation oracles.

In contrast, c-CRAB introduces a test-based evaluation framework for automated code review. Instead of comparing generated reviews against reference comments, we convert human review feedback into executable tests that capture the underlying issues identified by humans during the review process. Each benchmark instance includes an executable environment, enabling automated reviewers to be evaluated in a reproducible setting. These tests serve as objective evaluation oracles, allowing scalable, stable, and verifiable assessment of automated review agents.

3 Motivation

First, we demonstrate that existing evaluation methods fail to capture whether automated reviews align with the intentions of human reviewers. We then show how our test-based evaluation in c-CRAB overcomes this limitation.

3.1 Comparison with Similarity Metrics

Consider the pull request from python-telegram-bot¹, which introduces a check to reject keyboard inputs with more than two dimensions.
A human reviewer raised the following concern on the line shown in Figure 1:

    "At this point we haven't yet established that the rows are sequences as well. check_keyboard_type([1]) would raise an exception with this addition IISC."

¹ https://github.com/python-telegram-bot/python-telegram-bot/pull/3514#discussion_r1083264889

    if isinstance(keyboard[0][0], Sequence) and not isinstance(keyboard[0][0], (str, bytes))

Figure 1: The line of code under review.

This comment identifies a robustness issue: the implementation assumes that nested elements are sequences and may raise an exception on malformed inputs (e.g., [1]) instead of safely rejecting them. Now consider the review generated by Codex for the same patch:

    "keyboard[0][0] is accessed before validating shape/contents, so malformed but common invalid inputs like [], [[]], or [1] can raise IndexError/TypeError instead of returning False (and then producing the expected ValueError in markup constructors)."

We can observe that this comment raises the same concern about indexing that can lead to runtime errors. However, the two comments describe the issue in different ways.

Table 2: Comparison of c-CRAB against prior similarity-based metrics.

| Metric | Value | Interpretation |
|---|---|---|
| BLEU-4 | 0.00 | The degree of n-gram overlap between human and automated tool reviews. |
| ROUGE-L | 7.02 | As above. |
| chrF | 20.74 | As above. |
| Embedding similarity | 54.59 | The relative distance between human and automated tool reviews in the embedding space. |
| Our work: test passed? | Yes | Whether the reviews successfully capture concerns raised by humans during review. |

Table 2 reports the similarity scores obtained using metrics commonly adopted in prior work on code review evaluation. Here, BLEU-4 measures the precision of up to 4-gram overlaps with a brevity penalty, ROUGE-L captures the longest common subsequence (LCS) to reflect sequence-level similarity, and chrF computes an F-score based on character-level 6-gram overlap.
For the embedding similarity, we convert both reviews into embedding vectors using Sentence Transformers² and compute the cosine similarity, following prior work [6]. All scores are normalized to 0–100. The results show that all similarity metrics yield low scores. The low scores on BLEU-4, ROUGE-L, and chrF are expected due to the minimal n-gram overlap between the human and automated tool reviews. The embedding-based similarity yields a better score than chrF. However, the score remains moderate and cannot precisely capture that both reviews raise the same concern. This is because embedding-based similarity measures proximity in the embedding space, and the embedding representation is affected by how the reviews are expressed (e.g., word choices, sentence structure) [1]. As a result, two reviews that describe the same concern can still be relatively distant in the embedding space if they differ in phrasing.

3.2 Comparison with LLM-as-a-Judge

    live_events_columns: ArrayField = ArrayField(models.CharField(max_length=200), null=True, blank=True)

Figure 2: The line of code under review.

Let us consider another pull request from posthog³. As shown in Figure 2, a field with type ArrayField was added to a class. On this line of code change, a human reviewer raised the concern:

    "Let's make it a TextField. Somebody might want to put three 60-character-long columns here, and boom, we're out of space."

Claude Code generated the following review comment:

    "posthog/models/team/team.py:line 114
    live_events_columns uses ArrayField(models.CharField(max_length=200)). Property keys can be arbitrary strings; a hard limit of 200 characters may silently truncate very long property names or cause save failures. Consider aligning this with person_display_name_properties which uses max_length=400, or document the constraint."

Claude Code does a good job at defect detection and points out the same issue as the human review: CharField in the Django library cannot handle arbitrarily long strings. However, the automated review comment is still not fully aligned with the human reviewer's intent. Its suggested fix is different: it only proposes a trivial mitigation, while the human reviewer suggested replacing CharField with TextField, which truly solves the root issue.

In prior work that adopts vanilla LLM-as-a-Judge evaluation, an LLM is typically used to judge whether an automated review points out the same issue as the human review. However, as the above example shows, alignment with human intent means more than this: the correctness and quality of the suggested fix also play an important role in code review, and previous LLM-as-a-Judge methods cannot distinguish between the above two review comments.

² https://sbert.net
³ https://github.com/posthog/posthog/pull/10293#discussion_r897800551

    def test_check_keyboard():
        """
        Test for a bug where check_keyboard_type tries to access keyboard[0][0]
        before validating that rows are sequences. For keyboard=[1], the function
        should not raise an exception and should return False.
        """
        keyboard = [1]
        try:
            result = check_keyboard_type(keyboard)
        except Exception as exc:
            ...
        assert result is False

Figure 3: The test ensuring check_keyboard_type([1]) returns False instead of raising an exception.

3.3 Our benchmark: c-CRAB

The key idea of c-CRAB is to evaluate review comments based on whether they lead to behaviorally correct fixes. During evaluation, we provide the generated review to a coding agent, which revises the patch accordingly. The revised patch is then executed against the synthesized test. If the revised patch passes the test, we consider the review useful, as it successfully captures the underlying issue originally identified by the human reviewer.
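
To make this fail-then-pass idea concrete, the following minimal sketch replays the check from Figure 3 against two simplified versions of the function. Both check_keyboard_type_before and check_keyboard_type_after are hypothetical stand-ins written for illustration, not the actual python-telegram-bot implementation; the helpers _is_sequence and oracle_passes are likewise assumptions.

```python
from collections.abc import Sequence


def _is_sequence(obj):
    """True for list-like sequences, excluding str and bytes (hypothetical helper)."""
    return isinstance(obj, Sequence) and not isinstance(obj, (str, bytes))


def check_keyboard_type_before(keyboard):
    """Hypothetical stand-in for the patch under review."""
    if not _is_sequence(keyboard):
        return False
    # Line from Figure 1: keyboard[0][0] is indexed before rows are validated,
    # so keyboard == [1] raises TypeError here.
    if isinstance(keyboard[0][0], Sequence) and not isinstance(keyboard[0][0], (str, bytes)):
        return False
    return all(_is_sequence(row) for row in keyboard)


def check_keyboard_type_after(keyboard):
    """Hypothetical revision: validate every row before looking inside it."""
    if not _is_sequence(keyboard):
        return False
    for row in keyboard:
        if not _is_sequence(row):
            return False
        if any(_is_sequence(button) for button in row):
            return False  # more than two dimensions
    return True


def oracle_passes(check):
    """Mirror of the Figure 3 test: [1] must yield False without raising."""
    try:
        result = check([1])
    except Exception:
        return False
    return result is False


print(oracle_passes(check_keyboard_type_before))  # False
print(oracle_passes(check_keyboard_type_after))   # True
```

The oracle fails on the stand-in that indexes keyboard[0][0] eagerly and passes on the one that validates rows first, which is the behaviour a useful review is expected to elicit from the coding agent.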

For the human review in Figure 1, we convert the human review into the executable test shown in Figure 3 to capture its intent. This test directly captures the reviewer's concern by asserting that the function must not perform unsafe nested indexing before validating the input structure. Unlike similarity-based metrics, which rely on textual overlap or semantic similarity of text, this test encodes the expected behavior as an executable specification. In this example, the review generated by the automated review tool enables the coding agent to revise the initial patch so that it passes the test. This indicates that, despite low textual similarity, the generated review successfully captures the same underlying issue as the human review and is therefore considered useful under our evaluation.

For the human review in Figure 2, the tool-generated review does not successfully guide the coding agent toward a patch that satisfies the executable test. This is because the test expects the presence of TextField in the source code, whereas the tool-generated review proposes an alternative fix that increases the maximum length. Consequently, the revised patch fails to satisfy the test. In contrast, in our experiment, an LLM-as-a-judge considers both reviews to raise the same concern, even though the two reviews suggest different fixes. This ability to capture the precise review intent using executable tests is what distinguishes c-CRAB from LLM-as-a-judge. Moreover, another concern with LLM-as-a-judge is the inherent randomness of its output, which makes the evaluation unreliable.

4 Methodology

In this section, we first present the high-level design of c-CRAB, followed by a discussion of benchmark construction, the evaluation procedure, and implementation details.

Figure 4: Idea behind Benchmark Construction. (Diagram with two panels: Benchmark Curation and Agent Review Evaluation.)

4.1 c-CRAB Overview

The challenge in evaluating code review lies in the fact that the value of a review comment is not determined by how closely it matches human wording, but by whether it identifies an issue whose resolution improves the code. This observation motivates the design of c-CRAB, a benchmark that evaluates review comments based on whether they lead to verifiable improvements in the code.

At a high level, each benchmark instance consists of a pull request containing a patch to be reviewed and a set of test cases derived from human review feedback. These tests encode issues identified by human reviewers. Each test is constructed to fail on the original patch and pass once the corresponding issue has been resolved. During evaluation, an automated review tool is provided with a PR instance and asked to analyze the PR and generate review comments. These comments are used as guidance for a coding agent to revise the code changes in the PR. The revised code is subsequently executed against the benchmark tests. Because each test represents an issue originally identified by human reviewers, passing a test indicates that the corresponding issue has been successfully addressed. Therefore, if a test fails on the original PR but passes after the revision, we attribute the improvement to the usefulness of the review feedback that guided the modification.

c-CRAB measures review usefulness by whether generated feedback leads to resolving human-identified issues, as shown by the passing of the corresponding tests.

4.2 Benchmark Curation

Figure 4 illustrates our benchmark curation pipeline. For each pull request, the pipeline takes three artifacts as input: the pull request description, the candidate patch, and the associated human review comments. The goal of the pipeline is to construct a benchmark instance that preserves the PR context while providing a set of tests derived from human reviews. This goal is nontrivial for two reasons. First, raw code review discussions are inherently noisy: many comments are conversational or exploratory and therefore cannot be reliably translated into executable tests. Second, generated tests must be executed within a properly constructed environment that reproduces the repository's dependencies and runtime conditions. Without such infrastructure, tests cannot be executed consistently during evaluation.

To address these challenges, we design a structured curation pipeline consisting of four stages: review filtering, executable environment construction, converting NL comments to executable tests, and validation with coding agents. First, we filter review comments to retain only those that correspond to objectively verifiable issues. Second, we construct an executable environment for each PR instance to ensure that generated tests can be executed reliably. Third, we synthesize tests from the retained review comments. The key idea is to encode the issue raised by a human review comment as a test that fails on the candidate patch but passes once the issue is fixed. Finally, we perform additional validation to ensure that benchmark instances contain only comments that can be resolved by a coding agent. We describe each stage of the pipeline below.

4.2.1 Review Filtering.
The objective of the filtering stage is to retain only review comments that can be translated into high-quality test cases serving as benchmark targets. In raw PR discussions, not every human comment reflects a concrete and actionable concern in the candidate patch. Many comments are conversational in nature, such as requests for clarification, acknowledgments, or praise. While such comments may still play an important role in real-world collaboration, they are not suitable for our benchmark because they cannot be mapped unambiguously to executable tests. Furthermore, these types of comments are generally not expected to be produced by automated code review tools. Including them would introduce noise into the curation pipeline and reduce the validity of the resulting tests. Therefore, the goal of the filtering stage is to identify review comments that express verifiable issues whose resolution can, in principle, be validated through execution.

To achieve this in a scalable manner, we design an automated filtering classifier by prompting an LLM. To define the filtering prompt, we first construct a small gold set D through manual annotation, which is used to develop and refine the LLM prompt later. We randomly sample 100 review comments from the candidate pool, and two authors independently label each comment as either high-quality or low-quality for benchmark construction, providing a short justification for each label. A comment is labeled as high-quality if it identifies a specific, actionable, and objectively verifiable issue in the patch. Conversely, comments are labeled as low-quality if they lack a concrete action item, such as conversational exchanges, acknowledgments, or concerns that cannot be reliably validated through testing. After the independent annotation phase, the two authors compare their labels and resolve any disagreements through discussion, resulting in a set of ground-truth labels for D.

Given this gold set with ground-truth labels, we iteratively refine an LLM prompt so that an LLM can make correct classifications on the gold set. This prompt refinement process continues until the LLM-based classification reaches a predefined precision threshold. The resulting prompt is used to classify the remaining review comments outside of the gold set, filtering out low-quality instances.

4.2.2 Execution Environment Construction.
An execution environment is essential in the curation of c-CRAB, as a generated test has to be executed and verified before being used as a reliable oracle for evaluating review comments. During this stage, we construct an isolated Docker environment for each pull request in the dataset. A successfully built environment is a Docker image that consists of a pre-installed operating system and software dependencies, and a pre-configured source code repository, providing out-of-the-box scaffolding for subsequent pipeline stages.

The detailed steps to build such environments are as follows. First, we generate scripts that clone the git repository and check out the commit to be reviewed. Second, we implement a heuristic script builder that automatically generates installation scripts by detecting build tools and inferring software dependencies and their versions from the corresponding specification files (e.g., setup.py and pyproject.toml in Python projects). These scripts, along with Dockerfiles that set up the operating system, form all the components required for execution environment construction. Finally, each built Docker image is tested to ensure that an editable installation of the repository exists and all dependencies are installed. In some cases, especially when it comes to historic pull requests, the automatically generated installation scripts can fail because the original specifications are too loose now.
For example, some projects did not specify the versions, or only specified lower bounds, for some of the dependencies. To overcome this issue for historic commits, we use an automated coding agent running in an isolated environment to audit the dependency requirements, pinpoint the accurate dependency versions, and override the previously generated installation script.

4.2.3 Converting NL Comments to Tests.
The objective of this stage is to transform each retained natural-language review comment into an executable test that captures the issue raised by the human reviewer. For a review comment c associated with file f, the input to this stage consists of three components: (1) the natural-language comment and its corresponding diff hunk, (2) the repository state at the PR head commit (the before version), and (3) the repository state after the issue has been addressed (the after version). Given this information, we invoke an LLM-based loop to synthesize a test case that reflects the issue described in the comment. The goal is to generate a test case t_c that fails in the before version and passes in the after version. The generated test t_c therefore serves as an executable oracle representing the issue originally identified by the human reviewer.

Before generating tests, we construct the contextual information required to interpret each review comment. Review comments in pull requests often refer to specific lines within a diff. Therefore, we align each comment with its corresponding code region using the diff metadata provided by the pull request. Then, we retrieve the full contents of the affected file f at both the before and after commits. This context provides the LLM with sufficient information to understand the intended behavior and the modification that resolves the issue. Using the extracted context, we prompt an LLM to generate a candidate test case that captures the issue described in the comment.
The prompt includes the review comment, the relevant diff hunk, and the file-level code context. After a candidate test is generated, we execute it against both the before and after repository states. The execution outcome determines whether the test satisfies the fail-then-pass requirement. If the test fails on the before version and passes on the after version, it is accepted as a valid oracle.

In practice, the initial generation may not satisfy the fail-then-pass requirement due to issues such as incorrect imports, runtime errors, or assertions that do not distinguish between the two repository states. To address this, we employ an execution-guided refinement loop. When a generated test does not satisfy the required behavior, the execution traces and error messages are fed back to the coding agent, which revises the test accordingly. This process continues for a fixed number of iterations until either a valid test is produced or the attempt budget is exhausted.

4.2.4 Validation with Coding Agents. The final stage of the curation pipeline ensures that each benchmark instance can be reliably resolved by the coding agent used in the evaluation phase when provided with the corresponding human review. This validation step is necessary because the evaluation protocol of c-CRAB relies on a coding agent to modify the patch according to generated review comments. Therefore, we must ensure that when the coding agent fails to make the generated test pass during evaluation, the failure can be attributed to the quality of the review feedback rather than limitations of the coding agent itself.

Concretely, for each instance, we ask a coding agent $\mathcal{A}$ to improve the code using the human review comment as guidance. The agent is provided with the patch under review, the relevant file context, and the natural-language review comment that motivated the generated test.
The tests produced during the test generation stage are not exposed to the agent and are used only for post-hoc verification. An instance is considered valid if the agent produces a revision that causes the corresponding test to pass, thereby satisfying the fail-then-pass property. If the agent fails to produce a revision that passes the test within a fixed number of attempts, the instance is discarded.

Table 3: The number of remaining PR instances and review comments after each processing step.

Pipeline Stage                           # PR   # Comments
Initial Dataset                           671        1,313
Review Filtering                          410          595
Executable Environment Construction       410          595
Converting NL Comments to Tests           339          481
Validation with Coding Agents (Final)     184          234

4.3 Benchmark Usage

4.3.1 Workflow. The goal of the evaluation phase is to measure the usefulness of review feedback generated by automated code review tools. Specifically, we evaluate whether the issues raised by an automated review tool can guide the coding agent $\mathcal{A}$ (the same coding agent used in Section 4.2.4) to improve the patch under review and cause the executable tests to pass.

The evaluation comprises two stages: review generation and review-guided revision. In the first stage, each automated review tool analyzes every instance in the final benchmark and generates review comments given the patch under review and the PR description. In the second stage, the generated reviews are used to guide the coding agent $\mathcal{A}$ to revise the patch. Specifically, we provide the agent with the patch under review and the review comments produced by the tool under evaluation. The agent attempts to modify the patch according to the generated reviews. The revised patch is then executed against the tests associated with the benchmark instance. If the revision causes a test to pass, the corresponding review feedback is considered aligned with human reviews, as it successfully guides the same agent $\mathcal{A}$ to resolve the issue originally identified by human reviewers.
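The two-stage workflow above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the names `Instance`, `evaluate_instance`, `pass_fraction`, `review_tool`, `coding_agent`, and `run_test` are hypothetical, and the review tool, coding agent, and test runner are passed in as plain callables so that the control flow can be exercised with stubs.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Instance:
    """One benchmark instance (hypothetical container, not the c-CRAB schema)."""
    patch: str          # patch under review
    description: str    # PR description
    tests: List[str]    # identifiers of the hidden, validated tests


def evaluate_instance(
    inst: Instance,
    review_tool: Callable[[str, str], List[str]],
    coding_agent: Callable[[str, List[str]], str],
    run_test: Callable[[str, str], bool],
) -> Dict[str, bool]:
    """Run review generation, then review-guided revision, then the tests."""
    # Stage 1: the tool sees only the patch and the PR description.
    comments = review_tool(inst.patch, inst.description)
    # Stage 2: the agent sees the patch and the comments, never the tests.
    revised = coding_agent(inst.patch, comments)
    # Post-hoc verification: each test is run against the revised patch.
    return {t: run_test(t, revised) for t in inst.tests}


def pass_fraction(results: Dict[str, bool]) -> float:
    """Fraction of an instance's tests that pass after revision."""
    return sum(results.values()) / len(results)


if __name__ == "__main__":
    # Stubs standing in for a real tool, agent, and test runner.
    inst = Instance(patch="x = 1", description="demo", tests=["t1", "t2"])
    tool = lambda patch, desc: ["rename x to count"]
    agent = lambda patch, comments: patch.replace("x", "count") if comments else patch
    runner = lambda test, patch: test == "t1" and "count" in patch
    outcome = evaluate_instance(inst, tool, agent, runner)
    print(outcome, pass_fraction(outcome))
```

Keeping the tests out of the arguments to `review_tool` and `coding_agent` mirrors the requirement that tests are used only for post-hoc verification.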
4.3.2 Evaluation Metric. Formally, let $T_i$ denote the validated test set associated with benchmark instance $i$, and let $\hat{P}_i$ denote the patch produced by the coding agent $\mathcal{A}$ when guided by the review findings generated by a tool. We define the instance-level performance as the pass rate of the validated tests:

$$ s_i = \frac{\left|\{\, t \in T_i \mid t(\hat{P}_i) = \mathrm{PASS} \,\}\right|}{|T_i|}. $$

The overall performance of a review tool is measured by the aggregate test pass rate across benchmark instances. We note that the pass rate in c-CRAB reflects the extent to which review tools can identify issues found by human reviewers (i.e., the true positive rate when human reviews are treated as ground truth). Automated review tools may also generate other valuable comments that are not identified by human reviewers; however, like other existing benchmarks, c-CRAB does not directly evaluate these additional comments. To provide a more complete picture, we further discuss the quality of such additional reviews generated by automated tools in Section 5.2.

4.4 Benchmark Implementation

We build c-CRAB on top of SWE-CARE [3] because it already provides PR instances with the necessary commit metadata. More generally, however, our pipeline is dataset-agnostic: any pull request that provides the required metadata could serve as a candidate instance for the curation process.

4.4.1 Implementation of the Curation Pipeline. All the prompts used during review filtering, test generation, and the final validation step are included in the replication package [2]. We leverage GPT-5.2 to perform review filtering (Section 4.2.1). GPT-5.2 is also used for the conversion of NL comments to tests (Section 4.2.3), and up to three LLM attempts with execution feedback are allowed to generate the final test. For validation (Section 4.2.4), we use Claude Code with a Sonnet-4.6 backend as the coding agent.

4.4.2 Benchmark Statistics.
Table 3 summarizes the number of remaining pull requests and review comments after each stage of the curation pipeline. Overall, the resulting benchmark contains 184 pull request instances and 234 validated review comments, each associated with at least one executable test. The reduction in the number of comments from the initial dataset is expected, given the strict requirements imposed at each stage of the pipeline. The final dataset represents a carefully curated subset of the original data and supports a faithful evaluation of review usefulness.

Table 4: Statistics of the final c-CRAB benchmark.

Statistic                            Value
Pull Requests
  Repositories                       67
  Instances (PRs)                    184
  Avg. modified lines                418.1
Tests / Review Comments
  Total review comments (tests)      234
  Behavioral                         42 (17.9%)
  Structural                         192 (82.1%)
  Avg. tests per instance            1.27
  Avg. lines per test                31.8

The detailed statistics of the curated benchmark are shown in Table 4. The 234 tests in c-CRAB are further categorized into behavioral and structural. Behavioral tests import and execute the tested code at runtime. They invoke the tested functions with specific inputs, and check outputs or verify exceptions. Structural tests, on the other hand, inspect source code text, match patterns, and check API surfaces to determine whether the desired code changes have been made.

4.4.3 Test Suites Quality Assurance. To assess the quality of the test cases, we randomly sampled 50 instances, and two authors independently evaluated whether each test faithfully captures the concern expressed in the corresponding human review comment. For each instance, annotators were provided with the generated test, the original review comment, and the relevant diff hunk, and were allowed to check the original pull request when additional context was necessary. The annotators achieved an agreement rate of 84%, indicating that the generated tests generally align well with human-identified issues.
The remaining disagreement cases arise from partial coverage of the review comment and occasional overfitting of tests to specific implementations.

5 Results

We aim to answer the following research questions:

• RQ1: How do different automated review tools perform on c-CRAB? This RQ quantitatively evaluates how effectively automated review tools can capture issues raised by humans, as measured by their ability to help a coding agent pass tests derived from human reviews.
• RQ2: How do reviews generated by automated review tools differ from human reviews? The goal of this RQ is to analyze the types of reviews raised by automated tools and humans and the differences between them.

5.1 Experimental Settings

5.1.1 Evaluated Tools. We select four representative automated code review tools spanning both open-source and proprietary solutions that are actively used in practice: Claude Code⁴, Codex⁵, Devin Review⁶, and PR-Agent⁷. PR-Agent is an open-source tool, while the remaining tools are proprietary solutions. We run each review tool to produce per-instance review comments on the 184 PR instances in c-CRAB.

⁴ https://code.claude.com/docs/en/overview
⁵ https://openai.com/codex

5.1.2 Evaluation Configuration and Environment. We keep the coding agent used in benchmark curation validation (Section 4.2.4) and review evaluation (Section 4.3.1) consistent. Both steps use Claude Code with a fixed Sonnet-4.6 backend. All experiments were conducted on an Ubuntu 20.04 server.

Table 5: Performance comparison of automated review tools.

                   # Comments         Pass Rate (%)
Reviewer           Total   Avg/PR     Behavioral   Structural   Overall
Automated Tool
  Claude-Code      1336    7.3        38.1         30.7         32.1
  Codex            324     1.8        38.1         16.1         20.1
  Devin            1344    7.3        31.0         23.4         24.8
  PR-Agent         524     2.8        38.1         19.8         23.1
Human              234     1.3        100          100          100

Figure 5: Overlap of passed tests for each review tool.
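As a consistency check on Table 5, the Overall column can be recomputed from the Behavioral and Structural columns. The sketch below assumes (this is our reading, not something the text states outright) that the overall rate pools all 234 tests rather than averaging the two percentages. It recovers integer pass counts from the rounded percentages using the test counts in Table 4 (42 behavioral, 192 structural); the function name `overall_from_split` is hypothetical.

```python
# Test counts from Table 4.
N_BEHAVIORAL, N_STRUCTURAL = 42, 192

# (behavioral %, structural %, reported overall %) from Table 5.
TABLE_5 = {
    "Claude-Code": (38.1, 30.7, 32.1),
    "Codex": (38.1, 16.1, 20.1),
    "Devin": (31.0, 23.4, 24.8),
    "PR-Agent": (38.1, 19.8, 23.1),
}


def overall_from_split(behavioral_pct: float, structural_pct: float) -> float:
    """Pool both test groups and return the overall pass rate in percent."""
    # Rounded percentages are mapped back to the nearest whole number of tests.
    passed_b = round(behavioral_pct / 100 * N_BEHAVIORAL)
    passed_s = round(structural_pct / 100 * N_STRUCTURAL)
    total = N_BEHAVIORAL + N_STRUCTURAL
    return round(100 * (passed_b + passed_s) / total, 1)


if __name__ == "__main__":
    for tool, (b, s, reported) in TABLE_5.items():
        print(tool, overall_from_split(b, s), reported)
```

Under this pooled reading, the recomputed values agree with the reported Overall column for all four tools, which suggests that the aggregate is taken over tests rather than over the two category percentages.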
5.2 RQ1: Evaluation Scores on c-CRAB

Table 5 presents the performance of automated code review tools on c-CRAB. Recall that each test encodes a concern originally raised by a human reviewer. Therefore, a higher pass rate indicates that the review comments produced by a tool more often guide the coding agent toward changes that address concerns similar to those identified by human reviewers.

- Clear gap between human and automated review tools. The results show that the automated review tools achieve pass rates ranging from 20.1% to 32.1%, whereas human reviewers achieve a pass rate of 100%. Figure 5 shows the overlap of the passed tests across the four review tools. Considering the union across all four tools, 97 out of the 234 tests were passed by at least one tool (41.5%), which shows there is still a substantial gap between AI-generated reviews and human reviews, even when multiple tools are combined.

⁶ https://app.devin.ai/review
⁷ https://github.com/qodo-ai/pr-agent

Table 6: Manually labelled usefulness of review comments generated by four AI code review tools across six PRs.

Tool           # Comments   # Useful   Useful %
Claude Code    40           31         78%
Codex          8            7          88%
Devin          26           22         85%
PR-Agent       18           17         94%
Total          92           77         84%

- Interpreting the gap: automated tools do not raise the same concerns as humans. This gap should be interpreted carefully. The lower pass rates do not necessarily imply that the generated reviews are low quality and not useful. Instead, they suggest that the reviews produced by automated tools are less aligned with the types of issues that human reviewers typically raise. This difference indicates that automated review tools and humans may be complementary, opening opportunities to combine both within the software development life cycle. We investigate this difference further in RQ2.

- Reviews from automated review tools are useful.
To better interpret the low pass rates, we manually inspected a sample of 92 review comments from the evaluated tools on six randomly selected PRs. Two annotators independently examined each PR along with its generated review comments, with access to the full repository. They then labeled each comment as useful or not, where useful comments are those that point out valid issues or suggest improvements to the code. Disagreements were resolved through discussion. The results in Table 6 show that most comments are useful. This finding indicates that the low pass rates are not a result of poor-quality reviews, but rather arise because automated review tools tend to highlight different concerns during the review process compared to human reviewers. We investigate this difference further in RQ2.

Answer to RQ1: There exists a clear gap between reviews generated by automated tools and reviews produced by humans.

5.3 RQ2: Categories of Review Comments

For RQ2, we aim to analyze the alignment between human and automated code reviews by assigning a category label to each review comment. The categorization is done manually with the following two-stage protocol. In stage 1, two authors individually perform preliminary inspections on human reviews in the base SWE-CARE dataset, and arrive at the following categories.
(1) Functional Correctness: Concerns about incorrect or unexpected high-level behavior, regressions, semantic mismatches, or functional bugs.
(2) Testing: Concerns about incorrect, missing, fragile or flaky test functions, weak assertions, lack of test coverage, or requests for improvements to test functions or files.
(3) Robustness: Concerns about implementation-level edge cases, such as handling of corner cases in input and control flow.
(4) Compatibility: Concerns about version, software or hardware compatibility.
(5) Documentation: Concerns about correctness, wording, structure, or clarity in docs, examples, links, or code comments.
(6) Design: Concerns about interaction with other parts of the software, such as API interface signatures and return values.
(7) Error Handling: Concerns about warning/error messages or exception behavior.
(8) Maintainability: Concerns about readability, information logging, code smells, code complexity, code organization, and consistency with repository-specific coding practices.
(9) Efficiency: Concerns about overhead and computational efficiency.
(10) Security: Concerns about unsafe behavior, security exposure, or safety issues.

Figure 6: Categories of review comments generated by four tools and comparison with human reviews. (Panels: PR-Agent, Devin, Codex, Claude Code. Horizontal axis: share of categories (%). Series: tool reviews and human reviews, over the ten categories above.)

Figure 7: Pass rate of automated review tools grouped by the category of the executable tests. (Panels: PR-Agent, Devin, Codex, Claude Code. Horizontal axis: average test pass rate (%). Values as labelled in the figure: pass rate, with passed/total tests in parentheses.)

Category                 PR-Agent        Devin           Codex           Claude Code
Maintainability          22.2% (14/63)   23.8% (15/63)   7.9% (5/63)     27.0% (17/63)
Functional Correctness   34.0% (18/53)   32.1% (17/53)   39.6% (21/53)   41.5% (22/53)
Documentation            7.7% (2/26)     11.5% (3/26)    15.4% (4/26)    30.8% (8/26)
Testing                  23.1% (6/26)    34.6% (9/26)    15.4% (4/26)    30.8% (8/26)
Design                   20.0% (5/25)    16.0% (4/25)    16.0% (4/25)    24.0% (6/25)
Error Handling           23.1% (3/13)    15.4% (2/13)    23.1% (3/13)    23.1% (3/13)
Robustness               33.3% (4/12)    41.7% (5/12)    41.7% (5/12)    75.0% (9/12)
Compatibility            20.0% (2/10)    30.0% (3/10)    10.0% (1/10)    20.0% (2/10)
Efficiency               0.0% (0/4)      0.0% (0/4)      0.0% (0/4)      0.0% (0/4)
Security                 0.0% (0/2)      0.0% (0/2)      0.0% (0/2)      0.0% (0/2)
In stage 2, the same authors individually assign each review comment in the dataset to one of the categories defined in stage 1. They then discuss and resolve the differences.

- The distribution of review categories produced by automated tools differs from that of human reviewers. Figure 6 presents the percentage distribution of review comment categories from automated code review tools. Automated tools produce proportions of comments on security, efficiency, compatibility, and error handling that are comparable to those of humans; however, they address design, documentation, and maintainability substantially less frequently. A primary reason for this underrepresentation is that these aspects are often highly specific to individual code repositories and reflect coding practices accumulated over time. This observation highlights a potential gap in automated tools' understanding of repository-level coding conventions. From another perspective, automated tools tend to raise concerns related to robustness and testing more frequently than humans, suggesting a greater sensitivity to potential corner cases. While such complementary suggestions can be beneficial, the resulting false positives may also substantially increase the burden on maintainers during software development.

- Automated tools capture human-identified issues with varying efficacy across categories. While the previous analysis examines what types of issues automated tools tend to raise, we now investigate whether these tools successfully capture the issues that human reviewers identified in each category. To this end, Figure 7 reports the pass rate of automated review tools when benchmark instances are grouped by the category of the corresponding human review comments. Recall that each benchmark instance is associated with an executable test derived from a human review. Since human reviews are categorized during the previous labeling process, each executable test inherits the same category.
Therefore, the pass rate for each category reflects how often automated reviews successfully capture issues identified by human reviewers. The results show that automated tools do not capture human concerns uniformly across categories. Overall, across tools, a high pass rate is observed on robustness and functional correctness. One possible explanation is that these categories are more localized in the patch under review, which makes them easier to capture. In contrast, a low pass rate is observed on documentation and design. The pass rate on maintainability is also limited, ranging from 7.9% to 27.0%. These categories often rely on broader repository context and project-specific conventions, which current repositories do not document well and current tools do not utilize well.

Answer to RQ2: Automated review tools differ from human reviewers both in the distribution of issue categories they raise and in the categories of human concerns they capture.

6 Actionable Insights

a. Automated review tools should be viewed as complements to human reviewers rather than replacements. In RQ1, all evaluated tools achieve substantially lower pass rates than human reviewers, despite often producing more review comments. Further analysis in RQ2 suggests that this gap is at least partly explained by differences in the types of issues raised: automated tools tend to emphasize certain categories (e.g., robustness and testing) while covering less well others that humans frequently identify. Taken together, these findings point to the possibility of a division of labor in future review workflows.

b. Repository-specific context is a likely missing ingredient for improving automated review tools. In RQ2, automated tools rarely raise issues related to maintainability, design, and documentation, categories that often depend on knowledge specific to a repository.
A concrete example can be found in a human review from the Lightning-AI project⁸, where the reviewer suggested using os.getenv('COLAB_GPU') instead of IS_COLAB to maintain consistency with existing code. This is not a generic correctness issue; rather, it reflects a local coding convention that is obvious to maintainers but difficult for an automated reviewer to infer from the patch alone. This observation has two implications. For practitioners, documenting repository-specific rules in accessible artifacts, such as architecture notes, style guides, or agent-facing instructions (e.g., AGENTS.md [12]), may improve the effectiveness of automated review tools. For tool developers, review agents should be grounded in richer project context, including architecture documents, prior review history, naming conventions, and similar code patterns, so that generated feedback can better align with human expectations.

⁸ https://github.com/Lightning-AI/pytorch-lightning/pull/4654#discussion_r526484745

c. Tests can be useful artifacts for building code review agents. A key advantage of our benchmark is that it evaluates review comments based on whether they lead to code changes that resolve issues identified during the review process, as captured by executable tests. This provides a clear and objective optimization target for evaluation, allowing automated review tools to be assessed using execution-based signals rather than relying solely on textual similarity or subjective LLM-as-a-judge assessments. More broadly, executable tests also suggest a useful future direction for training review systems, since they reward issue identification that leads to verifiable improvements in code.

7 Threats to Validity

a. Internal Validity. Our evaluation relies on a coding agent to revise patches based on review comments. Therefore, the outcomes may reflect not only the quality of the reviews but also the capability of the agent.
To mitigate this, we validate benchmark instances using human-written comments prior to inclusion, and the same coding agent is used in both the validation and the evaluation pipeline. This ensures that all retained instances are solvable.

b. External Validity. Our benchmark is constructed from SWE-CARE and includes 184 pull request instances with 234 verifiable oracles across 56 repositories. While this curation improves reliability, it may limit representativeness with respect to the broader software ecosystem. Nevertheless, the dataset can be easily extended, as the pipeline can be applied to new pull request instances.

c. Construct Validity. The filtering stage in our pipeline leverages an LLM-based classifier, which may retain borderline comments. To mitigate this, we apply a strict fail-then-pass criterion during test generation and verify whether each problem can be solved during the validation phase using the original human comments. Moreover, manual analysis of the reviews involves human judgment. To mitigate this, two authors independently performed labeling.

8 Conclusion

The rapid advancement of AI-based code generation has made generating code increasingly easy and scalable. On the other hand, the process of reviewing the code remains a bottleneck. Several automated code review tools have been proposed, but evaluating their effectiveness is an open challenge.

In this paper, we revisit the problem of evaluating code review tools. We propose c-CRAB, a benchmark designed to assess how well automated review tools identify the same issues that human reviewers raise in realistic PR settings. c-CRAB adopts a test-based evaluation, where it converts human review comments into executable tests. Our empirical results show that current state-of-the-art review tools identify only a fraction of the issues captured in the benchmark. This finding highlights the limitations in existing code review tools.
Further analysis reveals that tool-generated reviews often highlight different aspects of code compared to human reviewers. This suggests that human and automated review tools are complementary to each other. Looking forward, our findings suggest several directions for future research, such as integrating automated tools into human-centric review workflows and improving the ability of code review tools to better capture relevant context in the codebase.

Data Availability

Our replication package, including source code, benchmark datasets and results, is available at https://github.com/c-CRAB-Benchmark.

Acknowledgments

This work was partially supported by a Singapore Ministry of Education (MoE) Tier 3 grant "Automated Program Repair", MOE-MOET32021-0001.

Disclaimer

The views and conclusions expressed in this paper are those of the authors alone and do not represent the official policies or endorsements of SonarSource. Furthermore, the findings presented herein are independent and should not be interpreted as an evaluation of the quality of products at SonarSource.

References

[1] Maria Antoniak and David Mimno. 2018. Evaluating the Stability of Embedding-based Word Similarities. Trans. Assoc. Comput. Linguistics 6 (2018), 107–119.
[2] Anonymous Authors. 2026. Artifact for paper "c-CRAB: curated Code Review Agent Benchmark". https://doi.org/10.5281/zenodo.19253371
[3] Hanyang Guo, Xunjin Zheng, Zihan Liao, Hang Yu, Peng Di, Ziyin Zhang, and Hong-Ning Dai. 2025. CodeFuse-CR-Bench: A Comprehensiveness-aware Benchmark for End-to-End Code Review Evaluation in Python Projects. CoRR abs/2509.14856 (2025).
[4] Ahmed E. Hassan, Hao Li, Dayi Lin, Bram Adams, Tse-Hsun Chen, Yutaro Kashiwa, and Dong Qiu. 2025. Agentic Software Engineering: Foundational Pillars and a Research Roadmap. CoRR abs/2509.06216 (2025).
[5] Ruida Hu, Xinchen Wang, Xin-Cheng Wen, Zhao Zhang, Bo Jiang, Pengfei Gao, Chao Peng, and Cuiyun Gao. 2025.
Benchmarking LLMs for Fine-Grained Code Review with Enriched Context in Practice. CoRR abs/2511.07017 (2025).
[6] Yanjie Jiang, Hui Liu, Tianyi Chen, Fu Fan, Chunhao Dong, Kui Liu, and Lu Zhang. 2025. Deep Assessment of Code Review Generation Approaches: Beyond Lexical Similarity. CoRR abs/2501.05176 (2025).
[7] Shuochuan Li, Dong Wang, Patanamon Thongtanunam, Zan Wang, Jiuqiao Yu, and Junjie Chen. 2025. Issue-Oriented Agent-Based Framework for Automated Review Comment Generation. CoRR abs/2511.00517 (2025).
[8] Wei Li, Xin Zhang, Zhongxin Guo, Shaoguang Mao, Wen Luo, Guangyue Peng, Yangyu Huang, Houfeng Wang, and Scarlett Li. 2025. FEA-Bench: A Benchmark for Evaluating Repository-Level Code Generation for Feature Implementation. In ACL (1). Association for Computational Linguistics, 17160–17176.
[9] Zhiyu Li, Shuai Lu, Daya Guo, Nan Duan, Shailesh Jannu, Grant Jenks, Deep Majumder, Jared Green, Alexey Svyatkovskiy, Shengyu Fu, and Neel Sundaresan. 2022. Automating code review activities by large-scale pre-training. In ESEC/SIGSOFT FSE. ACM, 1035–1047.
[10] Chin-Yew Lin. 2004. ROUGE: A Package for Automatic Evaluation of Summaries. In Text Summarization Branches Out. Association for Computational Linguistics, Barcelona, Spain, 74–81. https://aclanthology.org/W04-1013/
[11] Junyi Lu, Xiaojia Li, Zihan Hua, Lei Yu, Shiqi Cheng, Li Yang, Fengjun Zhang, and Chun Zuo. 2025. DeepCRCEval: Revisiting the Evaluation of Code Review Comment Generation. In FASE (Lecture Notes in Computer Science, Vol. 15693). Springer, 43–64.
[12] Jai Lal Lulla, Seyedmoein Mohsenimofidi, Matthias Galster, Jie M. Zhang, Sebastian Baltes, and Christoph Treude. 2026. On the Impact of AGENTS.md Files on the Efficiency of AI Coding Agents. CoRR abs/2601.20404 (2026). arXiv:2601.20404 doi:10.48550/ARXIV.2601.20404
[13] Atharva Naik, Marcus Alenius, Daniel Fried, and Carolyn P. Rosé. 2025.
CRScore: Grounding Automated Evaluation of Code Review Comments in Code Claims and Smells. In NAACL (Long Papers). Association for Computational Linguistics, 9049–9076.
[14] Kishore Papineni, Salim Roukos, Todd Ward, and Wei-Jing Zhu. 2002. Bleu: a Method for Automatic Evaluation of Machine Translation. In ACL. ACL, 311–318.
[15] Kristen Pereira, Neelabh Sinha, Rajat Ghosh, and Debojyoti Dutta. 2026. CR-Bench: Evaluating the Real-World Utility of AI Code Review Agents. arXiv:2603.11078 [cs.SE]
[16] Dung Pham and Taher A. Ghaleb. 2026. Code Change Characteristics and Description Alignment: A Comparative Study of Agentic versus Human Pull Requests. CoRR abs/2601.17627 (2026).
[17] Maja Popovic. 2015. chrF: character n-gram F-score for automatic MT evaluation. In WMT@EMNLP. The Association for Computer Linguistics, 392–395.
[18] Zeeshan Rasheed, Malik Abdul Sami, Muhammad Waseem, Kai-Kristian Kemell, Xiaofeng Wang, Anh Nguyen, Kari Systä, and Pekka Abrahamsson. 2024. AI-powered Code Review with LLMs: Early Results. CoRR abs/2404.18496 (2024).
[19] Romain Robbes, Théo Matricon, Thomas Degueule, André C. Hora, and Stefano Zacchiroli. 2026. Agentic Much? Adoption of Coding Agents on GitHub. CoRR abs/2601.18341 (2026).
[20] Lin Shi, Chiyu Ma, Wenhua Liang, Xingjian Diao, Weicheng Ma, and Soroush Vosoughi. 2025. Judging the Judges: A Systematic Study of Position Bias in LLM-as-a-Judge. In IJCNLP-AACL (long papers). The Asian Federation of Natural Language Processing and The Association for Computational Linguistics, 292–314.
[21] Xunzhu Tang, Kisub Kim, Yewei Song, Cedric Lothritz, Bei Li, Saad Ezzini, Haoye Tian, Jacques Klein, and Tegawendé F. Bissyandé. 2024. CodeAgent: Autonomous Communicative Agents for Code Review. In EMNLP. Association for Computational Linguistics, 11279–11313.
[22] Chakkrit Kla Tantithamthavorn, Yaotian Zou, Andy Wong, Michael Gupta, Zhe Wang, Mike Buller, Ryan Jiang, Matthew Watson, Minwoo Jeong, Kun Chen, and Ming Wu. 2026. RovoDev Code Reviewer: A Large-Scale Online Evaluation of LLM-based Code Review Automation at Atlassian. CoRR abs/2601.01129 (2026).
[23] Aman Singh Thakur, Kartik Choudhary, Venkat Srinik Ramayapally, Sankaran Vaidyanathan, and Dieuwke Hupkes. 2024. Judging the Judges: Evaluating Alignment and Vulnerabilities in LLMs-as-Judges. CoRR abs/2406.12624 (2024). arXiv:2406.12624 doi:10.48550/ARXIV.2406.12624
[24] Rosalia Tufano, Simone Masiero, Antonio Mastropaolo, Luca Pascarella, Denys Poshyvanyk, and Gabriele Bavota. 2022. Using Pre-Trained Models to Boost Code Review Automation. In ICSE. ACM, 2291–2302.
[25] Rosalia Tufano, Luca Pascarella, Michele Tufano, Denys Poshyvanyk, and Gabriele Bavota. 2021. Towards Automating Code Review Activities. In ICSE. IEEE, 163–174.
[26] Chengxing Xie, Bowen Li, Chang Gao, He Du, Wai Lam, Difan Zou, and Kai Chen. 2025. SWE-Fixer: Training Open-Source LLMs for Effective and Efficient GitHub Issue Resolution. In ACL (Findings) (Findings of ACL, Vol. ACL 2025). Association for Computational Linguistics, 1123–1139.
[27] Tomer Yanay and Bar Fingerman. 2026. How We Built a Real-World Benchmark for AI Code Review. https://www.qodo.ai/blog/how-we-built-a-real-world-benchmark-for-ai-code-review/ Qodo blog post.
[28] Zhengran Zeng, Ruikai Shi, Keke Han, Yixin Li, Kaicheng Sun, Yidong Wang, Zhuohao Yu, Rui Xie, Wei Ye, and Shikun Zhang. 2025. Benchmarking and Studying the LLM-based Code Review. CoRR abs/2509.01494 (2025).
[29] Lei Zhang, Yongda Yu, Minghui Yu, Xinxin Guo, Zhengqi Zhuang, Guoping Rong, Dong Shao, Haifeng Shen, Hongyu Kuang, Zhengfeng Li, Boge Wang, Guoan Zhang, Bangyu Xiang, and Xiaobin Xu. 2026. AACR-Bench: Evaluating Automatic Code Review with Holistic Repository-Level Context.
arXiv:2601.19494 [cs.SE] https://arxiv.org/abs/2601.19494
[30] Yuntong Zhang, Haifeng Ruan, Zhiyu Fan, and Abhik Roychoudhury. 2024. AutoCodeRover: Autonomous Program Improvement. In ISSTA. ACM, 1592–1604.
[31] Qixing Zhou, Jiacheng Zhang, Haiyang Wang, Rui Hao, Jiahe Wang, Minghao Han, Yuxue Yang, Shuzhe Wu, Feiyang Pan, Lue Fan, Dandan Tu, and Zhaoxiang Zhang. 2026. FeatureBench: Benchmarking Agentic Coding for Complex Feature Development. arXiv:2602.10975 [cs.SE] https://arxiv.org/abs/2602.10975

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

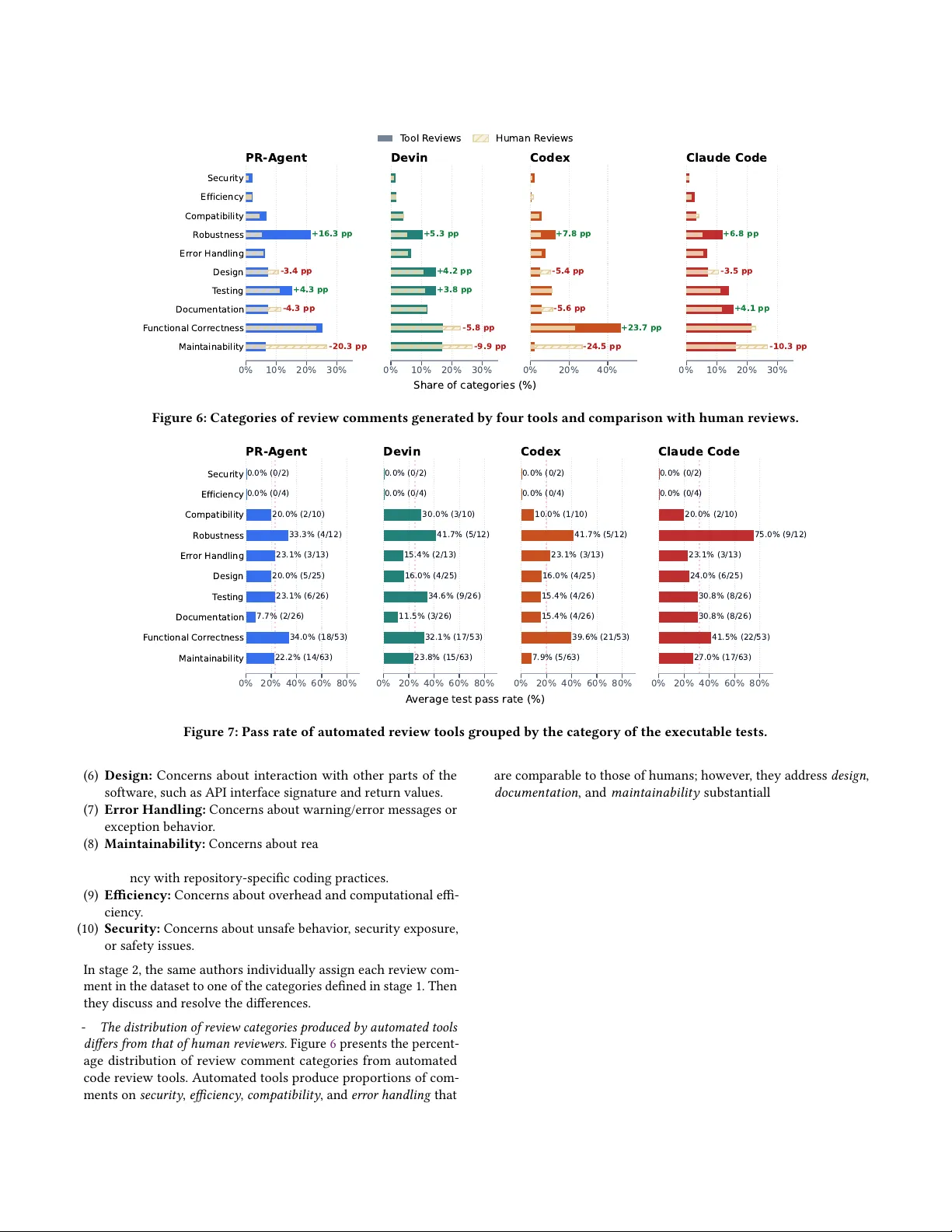

Leave a Comment