Modernizing Amdahl's Law: How AI Scaling Laws Shape Computer Architecture

Classical Amdahl's Law quantifies the limit of speedup under a fixed serial-parallel decomposition and homogeneous replication. Modern systems instead allocate constrained resources across heterogeneous hardware while the workload itself changes: som…

Authors: Chien-Ping Lu

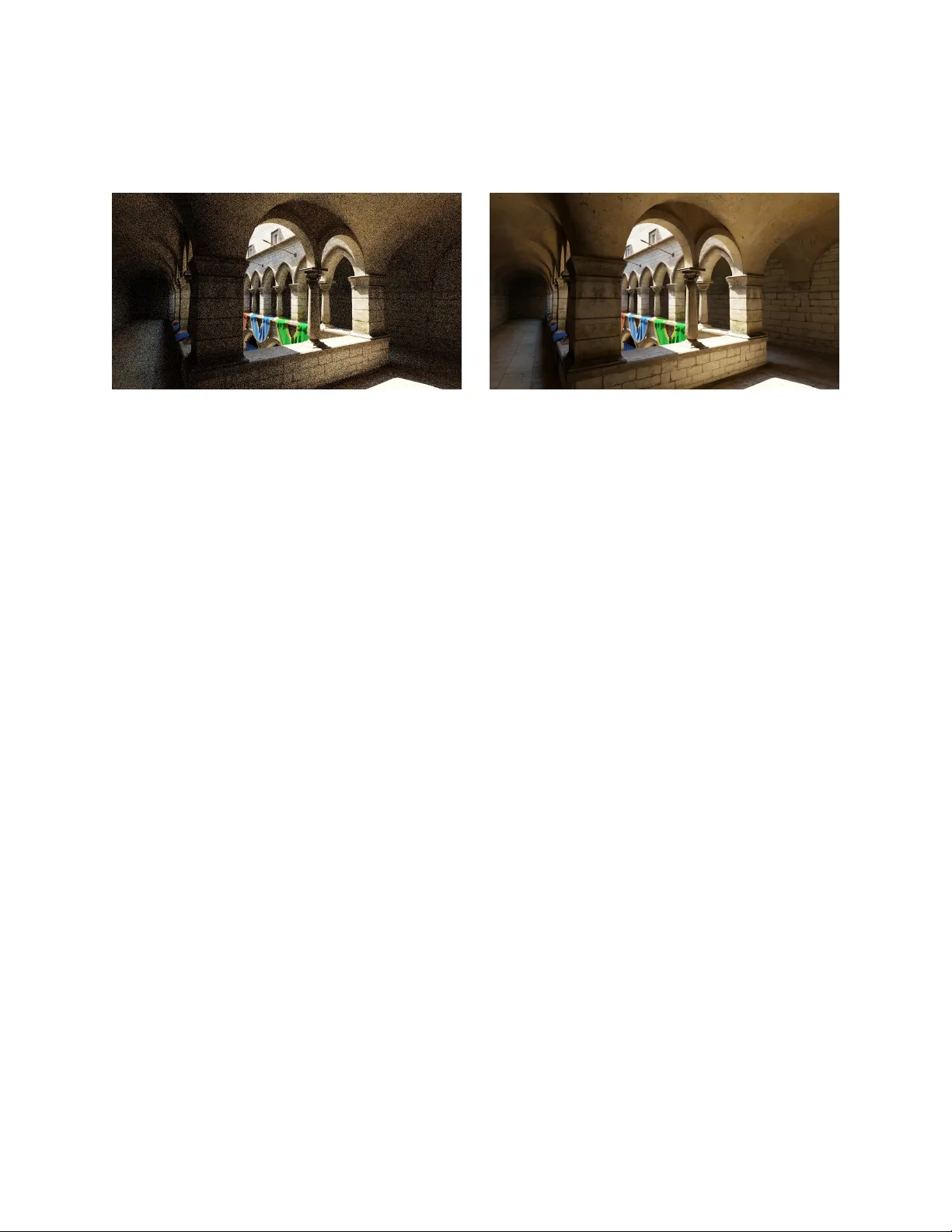

Modernizing Amdahl's Law: How Scaling Laws Reshape Computer Architecture Through Optimal Hardware Allocation

Chien-Ping Lu

Abstract

Classical Amdahl's Law quantifies the limit of speedup under a fixed serial–parallel decomposition and homogeneous replication. Modern systems instead allocate constrained resources across heterogeneous hardware while the workload itself changes: some stages become effectively bounded, whereas others continue to absorb additional compute because more compute still creates value. This paper reformulates Amdahl's Law around that shift. We replace processor count with an allocation variable, replace the classical parallel fraction with a value-scalable fraction, and model specialization by a relative efficiency ratio between dedicated and programmable compute. The resulting objective yields a finite collapse threshold. For a specialized efficiency ratio $R$, there is a critical scalable fraction $S_c = 1 - 1/R$ beyond which the optimal allocation to specialization becomes zero. Equivalently, for a given scalable fraction $S$, the minimum efficiency ratio required to justify specialization is $R_c = 1/(1 - S)$. Thus, as value-scalable workload grows, specialization faces a rising bar. The point is not that programmable hardware is always superior, but that specialization must keep re-earning its place against a moving programmable substrate. The model helps explain increasing GPU programmability, the migration of value-producing work toward learned late-stage computation, and why AI domain-specific accelerators do not simply displace the GPU.

1 Introduction

Amdahl's Law [2] has long served as a foundational model for reasoning about performance scaling:

$$\text{Speedup} = \frac{1}{(1 - P) + \frac{P}{N}} \tag{1}$$

where $P$ denotes the fraction of work assumed to be parallelizable and $N$ denotes the number of processors.
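To make Equation (1) concrete, the following minimal sketch evaluates the classical speedup and its large-$N$ limit $1/(1 - P)$. The function names `amdahl_speedup` and `amdahl_limit` are illustrative choices, not notation from the paper.

```python
def amdahl_speedup(p: float, n: float) -> float:
    """Speedup from Equation (1): 1 / ((1 - p) + p / n)."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must lie in [0, 1]")
    if n < 1:
        raise ValueError("n must be at least 1")
    return 1.0 / ((1.0 - p) + p / n)


def amdahl_limit(p: float) -> float:
    """Limit of the speedup as n grows without bound: 1 / (1 - p)."""
    return float("inf") if p == 1.0 else 1.0 / (1.0 - p)


if __name__ == "__main__":
    # Same parallel fractions as the Amdahl curves discussed in the paper.
    for p in (0.5, 0.9, 0.99):
        row = ", ".join(f"N={n}: {amdahl_speedup(p, n):.2f}" for n in (1, 8, 64))
        print(f"P={p}: {row}; limit {amdahl_limit(p):.0f}")
```

The speedup saturates at a bound set by the serial share alone, however many processors are added. This is the replication-centric framing that the rest of the paper sets aside.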
This formulation assumes:

• homogeneous compute units,
• replication-based scaling, and
• a fixed decomposition between serial and parallel components.

These assumptions no longer reflect modern systems. Modern platforms mix programmable compute, dedicated units, tensor engines, mixed-precision arithmetic, and layered memory systems within a single device. Hennessy and Patterson described the present period as a "new golden age for computer architecture" [6], driven by domain-specific efficiency optimization. Our point is that the central architectural issue is no longer simply whether hardware is domain-specific or general-purpose, but how scaling laws [8] reshape workload structure and, through it, resource allocation between them.

Today's architectures are heterogeneous. They rely on programmable compute augmented by specialized units, and their workload mix evolves with scale. In that setting, "speedup" and "core count" are no longer sufficient physical descriptions. Even the term "core" now covers a wide range of structures, from SIMD lanes and tensor datapaths to fixed-function assist blocks. We therefore return to a more fundamental quantity: total normalized execution time under constrained resources.

The central move of this paper is to replace "How much speedup do more cores buy?" with "How should constrained resources be allocated as the workload changes?" The mathematical center of the paper is the resulting collapse threshold: for a given specialized efficiency ratio $R$, there is a critical value-scalable fraction $S_c = 1 - 1/R$ beyond which the optimal specialized allocation falls to zero; equivalently, for a given $S$, specialization must exceed $R_c = 1/(1 - S)$ to justify a nonzero resource share. The examples that follow are interpretations of that result.
2 From Classical Amdahl to Hardware Allocation

Classical Amdahl analysis writes normalized time as

$$T_A(N) = (1 - P) + \frac{P}{N} \tag{2}$$

under a fixed decomposition between serial and parallel work. Gustafson's Law [5] relaxes that fixed decomposition by allowing the effective workload ratio to change with system scale:

$$\text{Speedup}_G = (1 - P) + P \cdot N. \tag{3}$$

Both are historically important, but both remain framed in the language of replication, processor count, and speedup. Figure 1 places that language in context. The present paper keeps the concern with performance under constraint, but changes the physical question: not how much speedup replication can buy, but how constrained resources should be allocated across heterogeneous hardware when the workload itself is changing.

3 The Modernized Amdahl Model

The central change in physical description is simple. Classical Amdahl-style analysis is written in terms of serial versus parallel work, processor count, and speedup under replication. Modern systems instead face a different design question: how should a constrained hardware budget be allocated between specialized logic and programmable compute when the workload itself is changing? We therefore introduce three variables:

• $x \in [0, 1)$: the fraction of constrained hardware resource allocated to specialized logic;
• $R > 1$: the relative efficiency advantage of specialized hardware over programmable compute on the bounded portion of the workload;
• $S \in (0, 1)$: the value-scalable fraction of the workload, meaning the portion for which additional compute continues to create meaningful value over the design interval under consideration.

[Figure 1 panels: "Speedup Form" (speedup versus processor count $N$) and "Time Form" (normalized time versus $N$), each with Amdahl and Gustafson curves for $P = 0.5$, $0.9$, and $0.99$.]

Figure 1: Historical legacy of classical scaling laws. Amdahl's law (solid) and Gustafson's law (dashed) shown side by side in speedup form and normalized-time form. Both are expressed in terms of processor count $N$ and replication-based speedup, which is the framing the present paper leaves behind.

The corresponding normalized execution time is

$$T(x) = \frac{1 - S}{1 + (R - 1)x} + \frac{S}{1 - x}. \tag{4}$$

This setup makes four assumptions explicit. First, $S$ and $1 - S$ are normalized workload shares over the design interval under consideration. Second, specialization improves only the bounded share through the relative efficiency ratio $R$. Third, the value-scalable share remains on the programmable side because that is where additional compute continues to be productively absorbed. Fourth, the hardware trade-off is reduced to one constrained allocation dimension $x$, so the model is deliberately a first-order allocation law rather than a full chip-level microarchitectural description.

The first term models the effectively bounded portion of the workload, which can benefit from specialization; the second models the value-scalable portion, which remains on the programmable side because it continues to absorb additional compute.

Differentiating twice gives

$$T''(x) = \frac{2(1 - S)(R - 1)^2}{\bigl(1 + (R - 1)x\bigr)^3} + \frac{2S}{(1 - x)^3}. \tag{5}$$

This expression is strictly positive on $[0, 1)$, so the objective is strictly convex and admits a unique global minimizer. The threshold condition is then obtained by differentiating once:

$$T'(x) = -\frac{(1 - S)(R - 1)}{\bigl(1 + (R - 1)x\bigr)^2} + \frac{S}{(1 - x)^2}. \tag{6}$$

At the origin,

$$T'(0) = -(1 - S)(R - 1) + S. \tag{7}$$

So $T'(0) < 0$ exactly when

$$S < 1 - \frac{1}{R}. \tag{8}$$

Since the objective is strictly convex, this condition separates the interior regime from the collapse regime. If $S \ge 1 - 1/R$, the unique optimum is the boundary point $x^* = 0$.
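The threshold test in Equations (7) and (8) can be checked numerically. The sketch below is one possible transcription of Equation (4) and of the slope at the origin; the helper names (`exec_time`, `slope_at_origin`, `critical_fraction`, `regime`) are assumptions introduced here for illustration and do not appear in the paper.

```python
def exec_time(x: float, s: float, r: float) -> float:
    """Normalized execution time T(x) from Equation (4)."""
    if not 0.0 <= x < 1.0:
        raise ValueError("x must lie in [0, 1)")
    return (1.0 - s) / (1.0 + (r - 1.0) * x) + s / (1.0 - x)


def slope_at_origin(s: float, r: float) -> float:
    """T'(0) from Equation (7): -(1 - S)(R - 1) + S."""
    return -(1.0 - s) * (r - 1.0) + s


def critical_fraction(r: float) -> float:
    """Collapse threshold S_c = 1 - 1/R from Equation (8)."""
    return 1.0 - 1.0 / r


def regime(s: float, r: float) -> str:
    """'interior' when some specialization lowers T, otherwise 'collapse'."""
    return "interior" if s < critical_fraction(r) else "collapse"


if __name__ == "__main__":
    r = 10.0
    for s in (0.5, 0.95):
        print(f"S={s}: T'(0)={slope_at_origin(s, r):+.2f}, regime={regime(s, r)}")
```

With $R = 10$, a workload with $S = 0.5$ has a negative slope at the origin, so a small specialized share reduces execution time. At $S = 0.95$ the slope is positive and, by convexity, any specialized share makes things worse.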
In the interior regime, solving $T'(x) = 0$ yields

$$x^* = \frac{\sqrt{\dfrac{(1 - S)(R - 1)}{S}} - 1}{\sqrt{\dfrac{(1 - S)(R - 1)}{S}} + (R - 1)}. \tag{9}$$

For a fixed scalable fraction $S$, specialization is justified only if the efficiency ratio $R$ exceeds

$$R_c = \frac{1}{1 - S}. \tag{10}$$

Equivalently, for a fixed efficiency ratio $R$, specialization collapses once $S$ reaches

$$S_c = 1 - \frac{1}{R}. \tag{11}$$

Within the interior regime, the optimal specialization share decreases monotonically as the value-scalable fraction rises, which follows directly from Equation (9). The threshold is finite rather than asymptotic. For example, when $S = 0.9$, specialization requires more than a 10× relative efficiency advantage; when $S = 0.95$, it requires more than 20×. As the value-scalable fraction rises, the bar for specialization rises rapidly.

The baseline model treats the specialization advantage $R$ as a first-order quantity. A bandwidth-limited extension is given in Appendix A; it strengthens the pressure toward programmable hardware in the interior of the surface but does not alter the threshold condition itself.

4 Interpreting the Value-Scalable Fraction

Value-scalable fraction. The value-scalable fraction $S$ is the share of normalized workload for which additional compute continues to create meaningful task-level value over the design interval under consideration, for a fixed task family, operating regime, and evaluation criterion. Depending on the domain, such value may mean improved accuracy, fidelity, capability, robustness, or utility.

This is broader than the classical parallel fraction. A stage may be highly parallel yet effectively value-bounded if additional compute mainly raises throughput without materially improving the delivered result. Conversely, a stage belongs to $S$ when more compute continues to improve the result itself. The endpoint cases are excluded only to avoid degeneracy: $S = 1$ gives $x^* = 0$ immediately, while $S = 0$ pushes the model to the fully specialized endpoint.
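The two endpoint cases can be read off directly from the execution-time model $T(x) = \frac{1 - S}{1 + (R - 1)x} + \frac{S}{1 - x}$. The short derivation below is a worked check under that model, not an additional result from the paper:

$$
\begin{aligned}
S = 1:&\quad T(x) = \frac{1}{1 - x}, & T'(x) &= \frac{1}{(1 - x)^2} > 0 &&\Rightarrow\; x^* = 0,\\[4pt]
S = 0:&\quad T(x) = \frac{1}{1 + (R - 1)x}, & T'(x) &= -\frac{R - 1}{\bigl(1 + (R - 1)x\bigr)^2} < 0 &&\Rightarrow\; T \text{ decreases toward } \tfrac{1}{R} \text{ as } x \to 1.
\end{aligned}
$$

In the second case the infimum $1/R$ is approached but never attained on $[0, 1)$, which is why $S = 0$ is treated as degenerate rather than as an interior optimum.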
In modern AI systems, that distinction is not merely philosophical. Empirically observed scaling laws [8] show that larger models, richer post-training, and more inference-time compute often continue to produce measurable gains. In that sense, $S$ is partly a design choice, but one anchored in observed value scaling.

[Figure 2 plot: normalized execution time $T(x)$ versus specialization fraction $x$, with curves for $S = 0.2$, $0.5$, $0.8$, $0.9$, and $0.95$, the optimal locus $x^*(S)$, and the collapse point $x^* = 0$.]

Figure 2: Normalized execution time $T(x)$ versus specialization fraction $x$ for $R = 10$ and varying $S$. Dashed markers indicate the optimal allocation $x^*$. For low $S$, the curves are U-shaped and specialization is beneficial; as $S$ approaches $S_c = 0.9$, the optimum collapses toward the origin. The dashed black curve traces the optimal locus, terminating at the collapse point $x^* = 0$. Above the threshold ($S = 0.95$), the curve is monotonically increasing and no investment in dedicated hardware is optimal.

[Figure 3 plot: efficiency ratio $R$ versus value-scalable fraction $S$, showing the curve $R_c = 1/(1 - S)$, the specialization region ($x^* > 0$), and the collapse region ($x^* = 0$).]

Figure 3: Race diagram in $(S, R)$ space for dedicated hardware versus programmable compute. The curve $R_c = 1/(1 - S)$ is the boundary of optimal specialization. As $S$ rises, the required efficiency ratio climbs, placing increasing pressure on dedicated hardware to maintain a larger lead over general compute. Above the curve ($R > R_c$), a nonzero allocation to specialized hardware reduces total execution time; on or below it, specialization collapses to $x^* = 0$.
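The optima marked in Figure 2 and the boundary in Figure 3 can be recomputed from the closed forms for $x^*$ and $R_c$. The following is a minimal sketch assuming the model $T(x) = (1 - S)/(1 + (R - 1)x) + S/(1 - x)$; the function names and the intermediate variable `k` are illustrative and not part of the paper's notation.

```python
import math


def exec_time(x: float, s: float, r: float) -> float:
    """Normalized execution time T(x) of the allocation model."""
    return (1.0 - s) / (1.0 + (r - 1.0) * x) + s / (1.0 - x)


def optimal_share(s: float, r: float) -> float:
    """Optimal specialized share x*: closed form in the interior regime, 0.0 otherwise."""
    if not 0.0 < s < 1.0 or r <= 1.0:
        raise ValueError("expects 0 < s < 1 and r > 1")
    # k is the square-root term of the closed form; k < 1 exactly when s > 1 - 1/r.
    k = math.sqrt((1.0 - s) * (r - 1.0) / s)
    return max(0.0, (k - 1.0) / (k + (r - 1.0)))


def critical_ratio(s: float) -> float:
    """Boundary curve of the race diagram: R_c = 1 / (1 - S)."""
    return 1.0 / (1.0 - s)


if __name__ == "__main__":
    r = 10.0  # efficiency ratio used in Figure 2
    for s in (0.2, 0.5, 0.8, 0.9, 0.95):
        x_star = optimal_share(s, r)
        t_star = exec_time(x_star, s, r)
        print(f"S={s:<4} x*={x_star:.4f}  T(x*)={t_star:.4f}  R_c={critical_ratio(s):.1f}")
```

For $R = 10$ the optimum works out to $x^* = 1/3$, $1/6$, and $1/21$ at $S = 0.2$, $0.5$, and $0.8$, with $T(x^*) = 0.5$, $0.8$, and $0.98$ against a baseline of $T(0) = 1$. The benefit of specialization therefore shrinks from 50% to 2% before vanishing at $S_c = 0.9$.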
5 Architectural Consequences

The reformulation has several immediate architectural consequences:

• $S$ is not the classical parallel fraction; it is the value-scalable fraction: the part of the workload for which additional compute still creates value.
• A rise in $S$ does not imply merely adding more processors; it refers to additional effective compute, which may come from hardware scale, lower precision, sparsity, software optimization, or improved model design.
• $R$ is a relative quantity, not a one-way constant; the programmable substrate also evolves toward the dominant scalable workload, and cannot be treated as standing still while specialization improves.
• The collapse threshold works in both directions: for a given efficiency gap $R$, there is a critical scalable fraction $S_c$; for a given scalable fraction $S$, there is a critical required efficiency gap $R_c$. As value-scalable workload grows, the bar for specialization rises with it.
• Programmability should not be confused with uniform efficiency across all tasks. It is a flexible but still biased substrate for workloads whose value-producing stages keep shifting.

As $S$ increases, the contribution of early-stage, fixed-function computation declines and the dominant portion of execution time shifts toward dynamically scaling computation. This leads to

$$x^* \to 0 \tag{12}$$

as a design tendency. The interpretation is not that bottlenecks disappear, but that bounded stages occupy a shrinking fraction of total execution time. Once the scalable portion dominates, the model biases the optimum toward more general and more reconfigurable compute fabrics.

6 Supporting Examples

In modern AI systems, workload scaling is no longer merely hypothetical. It has become an empirically grounded structural property of dominant workloads. AI scaling laws [8] provide empirical evidence that additional effective compute can continue to improve model quality in a structured way.
Pre-training, post-training, and test-time computation have all become genuine scaling axes, so workload growth is no longer merely an analytical convenience. As computational capacity increases, it is frequently absorbed into larger models, richer training procedures, and more compute-intensive inference rather than solely reducing execution time. In the present model, the parameter $S$ captures the fraction of computation associated with these dynamically scaling workload components.

6.1 Graphics and Reconstruction

Rendering makes the distinction concrete. Classical rasterization is highly parallel in implementation, but once screen-space resolution and visibility have been fixed it is not strongly value-scalable: additional compute adds little new scene information [1]. By contrast, ray tracing and path tracing without learned reconstruction remain value-scalable because additional samples continue to improve fidelity.

Neural denoising and reconstruction change this allocation. Once high perceptual quality can be recovered from low sample counts, brute-force sampling becomes increasingly value-bounded while neural inference becomes the stage where marginal compute still adds visible value [10]. In that sense, modern reconstruction systems do not simply accelerate rendering; they reallocate which stages belong to $S$ and which become effectively bounded. Figure 4 summarizes this shift.

[Figure 4 panels: "Monte Carlo, 16 spp (noisy)" and "Denoised / reconstructed".]

Figure 4: Example rendered images illustrating how neural denoising and reconstruction shift graphics workload structure. Low-sample Monte Carlo rendering provides a noisy acquisition signal, while learned denoising recovers useful image quality from that input; as reconstruction quality improves, brute-force Monte Carlo rendering becomes effectively value-bounded and a larger share of the scalable workload shifts into learned post-processing. The example shown is the Crytek Sponza scene at 16 samples per pixel from the Intel Open Image Denoise gallery.

Across application domains, the growing influence of AI and learned models is driving shifts such as:

• Reconstruction replacing direct computation: systems infer a full result from partial, noisy, or cheaply sampled inputs (e.g. denoising, super-resolution, neural codecs, and inpainting), with close analogues in non-visual signal recovery and imaging, not only in rendering pipelines.
• Late-stage post-processing dominating execution time: the expensive work migrates to stages after a cheaper front end, for example neural passes after rasterization in graphics, large rerankers after retrieval, long or speculative decoding in language models, or heavy fusion layers after lightweight encoders.
• Model-driven output generation: learned models substantially produce or shape the delivered artifact, as in large language or code assistants, speech synthesis, and machine translation, not only in display-centric workloads.

These shifts limit what can be achieved by local optimization of a fixed pipeline within one vertical. As the scalable ratio $S$ rises, more wall-clock time and value-producing work shift into stages that scale with model capacity and inference compute, so end-to-end progress depends less on front-end specialization and more on the shared scalable path.

6.2 Why GPUs Keep Becoming More Programmable

Given the empirical trends summarized in Section 6, the model predicts a gradual erosion of fixed-function pipelines and an expansion of programmable domains as the scalable fraction $S$ rises.

Graphics is especially illustrative. The shift of visual quality generation toward later-stage compute predates AI.
A major milestone was deferred shading, which made front-end visibility and rasterization stages serve increasingly as data-preparation steps for richer downstream computation rather than as the sole locus of image quality generation [1]. AI-generated pixels, frame reconstruction, denoising, and neural upscaling intensify that trend by further reducing brute-force sampling and shifting value-producing work into learned stages whose cost and capability scale with model complexity and inference compute [10]. In the language of the present model, graphics increasingly inherits scaling-law behavior [8] through these AI-driven stages, while front-end visibility and rasterization act more like bounded acquisition stages.

These trends increase $S$, pushing workloads toward the collapse region of Figure 3. This is consistent with the long-run trajectory of GPUs:

• fixed-function graphics pipelines gave way to shaders (for example, hardware transform-and-lighting moved into programmable vertex shaders, and fixed texture combiners and per-pixel lighting moved into programmable pixel (fragment) shaders),
• shaders evolved into unified programmable compute, and
• graphics hardware absorbed tensor acceleration, which is now increasingly presented as programmable matrix machinery rather than rigid frontends.

As rendering quality depends more on neural reconstruction than on fixed multi-pass logic, graphics processors are pulled toward more programmable, matrix-oriented substrates. Programmability is not itself equivalent to AI scaling; rather, it is the architectural response to workloads whose value-producing stages keep moving and whose scalable work increasingly lands on shared tensor- and compute-intensive machinery.
Figure 5 shows this shift at the level of rendering passes: in ray-traced pipelines with learned reconstruction, early visibility stages become bounded acquisition passes, while later stages absorb more of the scalable, compute-intensive workload. Appendix A shows that bandwidth limits reinforce rather than overturn this direction of change: memory friction deforms the interior optimum but does not change the threshold itself. The same logic motivates the next question: if scalable work keeps migrating, why do AI domain-specific accelerators not simply displace the GPU?

[Figure 5 diagram: "Graphics pipeline under rising S". Bounded acquisition stages: Pass 1, primary visibility (bounded / low-res); Pass 2, secondary visibility (bounded / low-sample). Scalable, compute- and tensor-intensive stages: Passes 3+, anti-aliasing, denoising, reconstruction, frame synthesis, post-processing (becoming dominant, $S \uparrow$).]

Figure 5: Shift of graphics workload structure under rising $S$. In ray-traced or path-traced rendering with learned reconstruction, neural denoising and reconstruction compress the classical high-sample rendering regime: once useful image quality can be recovered from low-resolution or low-sample acquisition, brute-force Monte Carlo rendering becomes effectively value-bounded, primary and secondary visibility increasingly behave as bounded acquisition stages, and passes 3+ absorb a larger share of the scalable workload through anti-aliasing, denoising, reconstruction, frame synthesis, and related post-processing. In the extreme limit, many of these later passes collapse into a single learned reconstruction stage.

6.3 Why AI Domain-Specific Accelerators Have Not Displaced the GPU

AI domain-specific accelerators win when $S$ is small, the workload is stable, and computational boundaries remain fixed. In that regime, the bounded portion of the workload is large enough for specialization to matter.
However, when workload structure evolves, their relative contribution diminishes and more of their functionality is absorbed into programmable systems. This helps explain why AI domain-specific accelerators have not simply displaced GPUs. Once the workload mix described in Section 6 shifts toward larger values of $S$, the efficiency advantage of fixed hardware faces a rising bar while scalable work dominates the runtime budget. Figure 3 should therefore be read as a race, not as a winner-take-all verdict: even on the specialization side of the boundary, $x^* > 0$ still implies a mixed system in which programmable compute remains essential.

Google's TPUs are an important boundary case. In the sense emphasized by Hennessy and Patterson, they were introduced as domain-specific architectures [6, 7], but they did not specialize around one particular model. Instead, they elevated dense tensor computation itself to a broad computational substrate. In that sense, they do not refute the present argument; they illustrate that successful specialization in AI often moves upward in abstraction.

The key point is value rather than physical possibility. It is often possible in principle to scale hardware around a fixed mechanism, but its value declines if software and model evolution move the frontier of useful computation elsewhere. In AI, sparsity, routing, cache compression, quantization, and inference-time system optimization can reduce cost per token without requiring a corresponding fixed-function redesign [3, 4]. In that regime, software optimization can outrun narrow hardware advantage, so the contribution of any one dedicated mechanism becomes effectively bounded relative to the evolving programmable workload.

More broadly, this is a statement about AI domain-specific architectures: specialization remains attractive only while the targeted mechanism stays a large and stable share of value-producing computation.
7 Conclusion

The paper's central result is simple. Once Amdahl-style analysis is rewritten in terms of hardware allocation, relative efficiency, and a value-scalable workload fraction, specialization acquires a finite collapse threshold. For a given efficiency ratio $R$, there is a critical scalable fraction $S_c = 1 - 1/R$ beyond which the optimal specialized allocation becomes zero; equivalently, for a given $S$, the required efficiency ratio is $R_c = 1/(1 - S)$.

The claim is therefore not that specialization disappears in every engineering context. It is that specialization faces a rising bar when the value-producing share of computation keeps moving toward workloads that remain worth scaling. In that regime, programmable compute is not a passive baseline but a moving target that also improves. The graphics, GPU, and AI-accelerator examples are consequences of that allocation law.

Modernized Amdahl analysis thus replaces the old question (how much speedup can replication buy despite a serial fraction?) with a more relevant one for modern heterogeneous systems: how should limited hardware resources be allocated when the workload itself keeps relocating where additional compute creates value?

A Bandwidth-Limited Extension

The baseline model treats the specialization advantage $R$ as a constant. That is appropriate for the first-order allocation law, but one obvious objection is that memory bandwidth can prevent specialized compute from realizing its full theoretical efficiency. In Roofline-style terms [9], increasing compute allocation without a corresponding increase in data supply eventually makes performance bandwidth-limited.
One simple way to capture this effect is to replace the constant efficiency ratio by an effective ratio that decays with increasing specialized allocation:

$$R_{\text{eff}}(x) = \frac{R_{\max}}{1 + \gamma R_{\max} x}, \tag{13}$$

where $R_{\max}$ is the peak theoretical efficiency ratio and $\gamma$ is a memory-friction coefficient summarizing the workload's arithmetic intensity relative to available memory bandwidth. The execution-time surface then becomes

$$T_{\text{mem}}(x) = \frac{1 - S}{1 + \bigl(R_{\text{eff}}(x) - 1\bigr)x} + \frac{S}{1 - x}. \tag{14}$$

This extension has two useful implications. First, memory friction pulls the right-hand side of the surface upward, so the interior optimum $x^*$ moves closer to zero even before the collapse threshold is reached. Bandwidth limitations therefore strengthen the pressure toward programmable hardware.

Second, for the friction model in Equations (13)–(14), the collapse threshold itself is unchanged. Evaluating the derivative at the origin gives

$$\left.\frac{dT_{\text{mem}}}{dx}\right|_{x=0} = -(1 - S)(R_{\max} - 1) + S, \tag{15}$$

which yields the same boundary condition

$$S_c = 1 - \frac{1}{R_{\max}}. \tag{16}$$

For this bandwidth-friction extension, memory hierarchy effects deform the interior of the allocation surface without altering the first-order phase boundary. The finite collapse remains governed by workload structure and peak specialization advantage, while bandwidth limitations flatten the approach to that threshold.

References

[1] Tomas Akenine-Möller, Eric Haines, Naty Hoffman, Angelo Pesce, Michał Iwanicki, and Sébastien Hillaire. Real-Time Rendering. A K Peters/CRC Press, 4th edition, 2018.

[2] Gene M. Amdahl. Validity of the single processor approach to achieving large scale computing capabilities. In Proceedings of the April 18–20, 1967, Spring Joint Computer Conference, AFIPS '67 (Spring), pages 483–485. ACM, 1967.

[3] DeepSeek-AI. DeepSeek-V2: A strong, economical, and efficient mixture-of-experts language model. arXiv preprint arXiv:2405.04434, 2024.

[4] William Fedus, Barret Zoph, and Noam Shazeer. Switch transformers: Scaling to trillion parameter models with simple and efficient sparsity. Journal of Machine Learning Research, 23(120):1–39, 2022.

[5] John L. Gustafson. Reevaluating Amdahl's law. Communications of the ACM, 31(5):532–533, 1988.

[6] John L. Hennessy and David A. Patterson. A new golden age for computer architecture. Communications of the ACM, 62(2):48–60, 2019.

[7] Norman P. Jouppi, Cliff Young, Nishant Patil, et al. In-datacenter performance analysis of a tensor processing unit. In Proceedings of the 44th Annual International Symposium on Computer Architecture, pages 1–12, 2017.

[8] Jared Kaplan, Sam McCandlish, Tom Henighan, Tom B. Brown, Benjamin Chess, Rewon Child, Scott Gray, Alec Radford, Jeffrey Wu, and Dario Amodei. Scaling laws for neural language models. arXiv preprint arXiv:2001.08361, 2020.

[9] Samuel Williams, Andrew Waterman, and David Patterson. Roofline: An insightful visual performance model for multicore architectures. Communications of the ACM, 52(4):65–76, 2009.

[10] Lei Xiao, Salah Nouri, Matt Chapman, Alexander Fix, Douglas Lanman, and Anton Kaplanyan. Neural supersampling for real-time rendering. ACM Transactions on Graphics, 39(4):142:1–142:12, 2020.
