Object-Reconstruction-Aware Whole-body Control of Mobile Manipulators

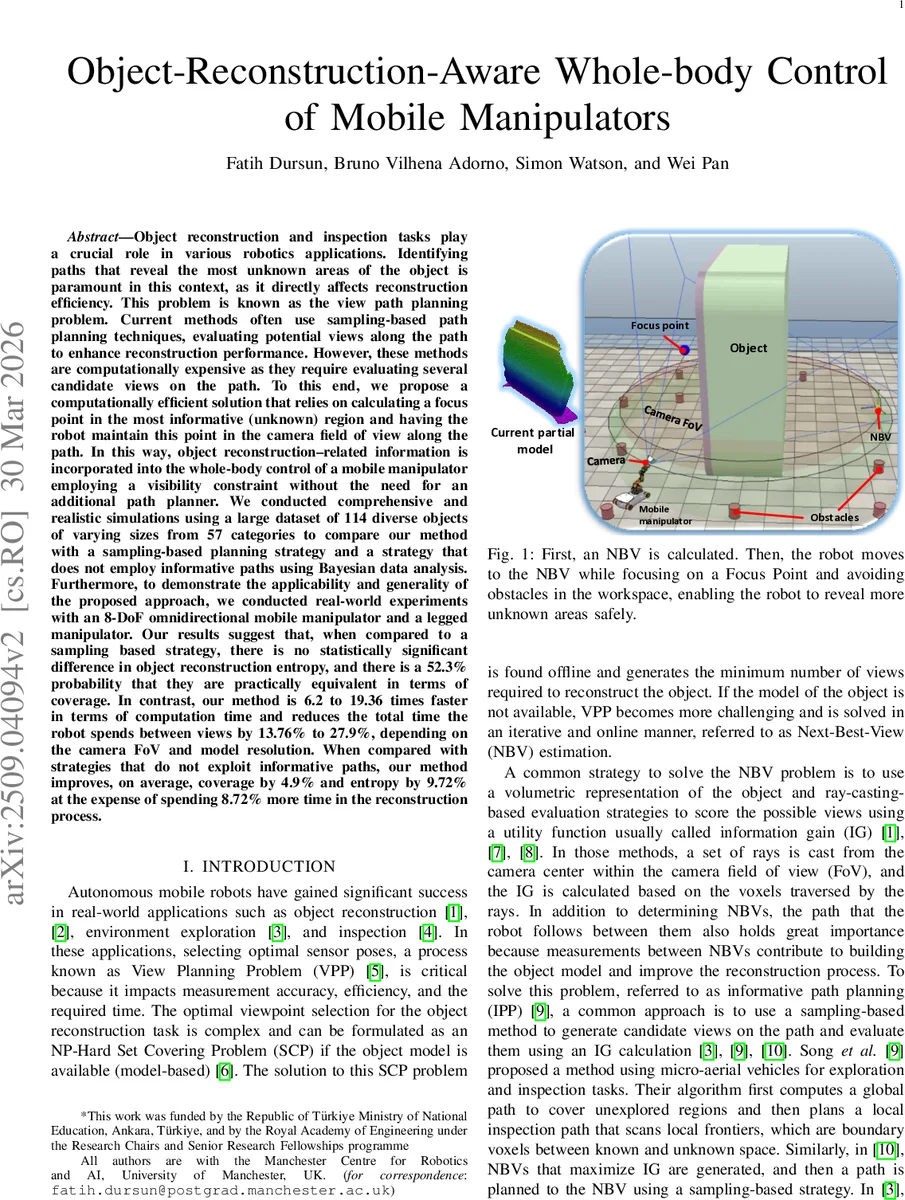

Object reconstruction and inspection tasks play a crucial role in various robotics applications. Identifying paths that reveal the most unknown areas of the object is paramount in this context, as it directly affects reconstruction efficiency. Current methods often use sampling based path planning techniques, evaluating views along the path to enhance reconstruction performance. However, these methods are computationally expensive as they require evaluating several candidate views on the path. To this end, we propose a computationally efficient solution that relies on calculating a focus point in the most informative region and having the robot maintain this point in the camera field of view along the path. In this way, object reconstruction related information is incorporated into the whole body control of a mobile manipulator employing a visibility constraint without the need for an additional path planner. We conducted comprehensive and realistic simulations using a large dataset of 114 diverse objects of varying sizes from 57 categories to compare our method with a sampling based planning strategy and a strategy that does not employ informative paths using Bayesian data analysis. Furthermore, to demonstrate the applicability and generality of the proposed approach, we conducted real world experiments with an 8 DoF omnidirectional mobile manipulator and a legged manipulator. Our results suggest that, compared to a sampling based strategy, there is no statistically significant difference in object reconstruction entropy, and there is a 52.3% probability that they are practically equivalent in terms of coverage. In contrast, our method is 6.2 to 19.36 times faster in terms of computation time and reduces the total time the robot spends between views by 13.76% to 27.9%, depending on the camera FoV and model resolution.

💡 Research Summary

The paper addresses the view‑path planning problem that arises in object reconstruction and inspection tasks performed by mobile manipulators. Conventional approaches treat the problem as a two‑stage pipeline: first a Next‑Best‑View (NBV) is selected, then an informative path (IPP) is generated by densely sampling candidate viewpoints along the trajectory and evaluating each with a ray‑casting based information‑gain (IG) metric. While effective, this sampling‑based IPP becomes computationally prohibitive as the volumetric resolution of the scene increases, because the number of ray‑casting evaluations grows dramatically. Moreover, recent learning‑based NBV methods still rely on a fixed set of candidate views, limiting their applicability to arbitrary viewpoints.

The authors propose a fundamentally different strategy: instead of discretising the motion between NBVs, they embed reconstruction awareness directly into the whole‑body controller of the robot. After an NBV is chosen (any NBV algorithm can be used; the authors employ the rear‑side‑voxel method), the robot computes a “focus point” inside the object’s bounding box that lies in the region of highest uncertainty (highest entropy) of the current partial model. This focus point is obtained by casting rays from a virtual camera frustum into the volumetric map and selecting the voxel that maximally reduces uncertainty. While traveling to the NBV, the controller enforces a visibility constraint that keeps the focus point inside the actual camera field‑of‑view (FoV). Simultaneously, obstacle avoidance is handled with vector‑field inequalities (VFIs) and a circulation constraint to prevent the robot from getting trapped in local minima at obstacle boundaries. Crucially, the authors exploit the observation that the camera’s roll angle has negligible impact on the volume covered by the FoV; therefore, only the camera position and viewing direction need to be controlled, freeing one degree of freedom and simplifying the control problem.

By maintaining the focus point in view, the robot continuously gathers informative measurements during the entire NBV‑to‑NBV motion, eliminating the need to sample and evaluate intermediate viewpoints. This yields a dramatic reduction in computational load: the proposed method avoids the “t_eval + t_exec” components of traditional IPP, where t_eval is the time spent evaluating candidate views and t_exec is the execution time of visiting them. In extensive simulations on 114 objects drawn from 57 categories of the ShapeNet dataset, the authors compare three strategies: (1) the proposed visibility‑constrained whole‑body control, (2) a conventional sampling‑based IPP, and (3) a baseline that does not exploit informative paths. Using Bayesian data analysis, they find no statistically significant difference in reconstruction entropy between (1) and (2), and a 52.3 % probability that coverage is practically equivalent. However, the proposed method is 6.2–19.36 × faster in computation and reduces the robot’s travel time between views by 13.76 % to 27.9 % depending on camera FoV and voxel resolution. When compared to the non‑informative baseline, coverage improves on average by 4.9 % and entropy by 9.72 % at the cost of an 8.72 % increase in total reconstruction time, which is still acceptable given the overall efficiency gains.

Real‑world experiments validate the approach on two platforms: an 8‑DoF omnidirectional mobile manipulator and a legged manipulator. Both robots successfully maintain the focus point within the camera view while navigating around obstacles and reaching NBVs, confirming that the method is platform‑agnostic and robust to complex terrain.

Key contributions are:

- Integration of a visibility constraint into whole‑body control, turning the NBV‑to‑NBV segment into a continuous, information‑aware motion.

- Elimination of candidate‑view sampling and ray‑casting along the path, achieving orders‑of‑magnitude reduction in planning time.

- A control formulation that frees one degree of freedom by ignoring camera roll, enhancing redundancy exploitation and simplifying implementation.

- Demonstration of generality across wheeled and legged mobile manipulators, and thorough quantitative validation via Bayesian analysis on a large, diverse object set.

In summary, the work shows that embedding reconstruction objectives directly into the robot’s motion controller can match or exceed the performance of state‑of‑the‑art sampling‑based IPP while delivering far superior computational efficiency and applicability to a wide range of robotic platforms. This paradigm shift opens the door to real‑time, high‑resolution 3‑D reconstruction in dynamic or time‑critical scenarios such as on‑site inspection, disaster response, and autonomous exploration.

Comments & Academic Discussion

Loading comments...

Leave a Comment