Tracking without Seeing: Geospatial Inference using Encrypted Traffic from Distributed Nodes

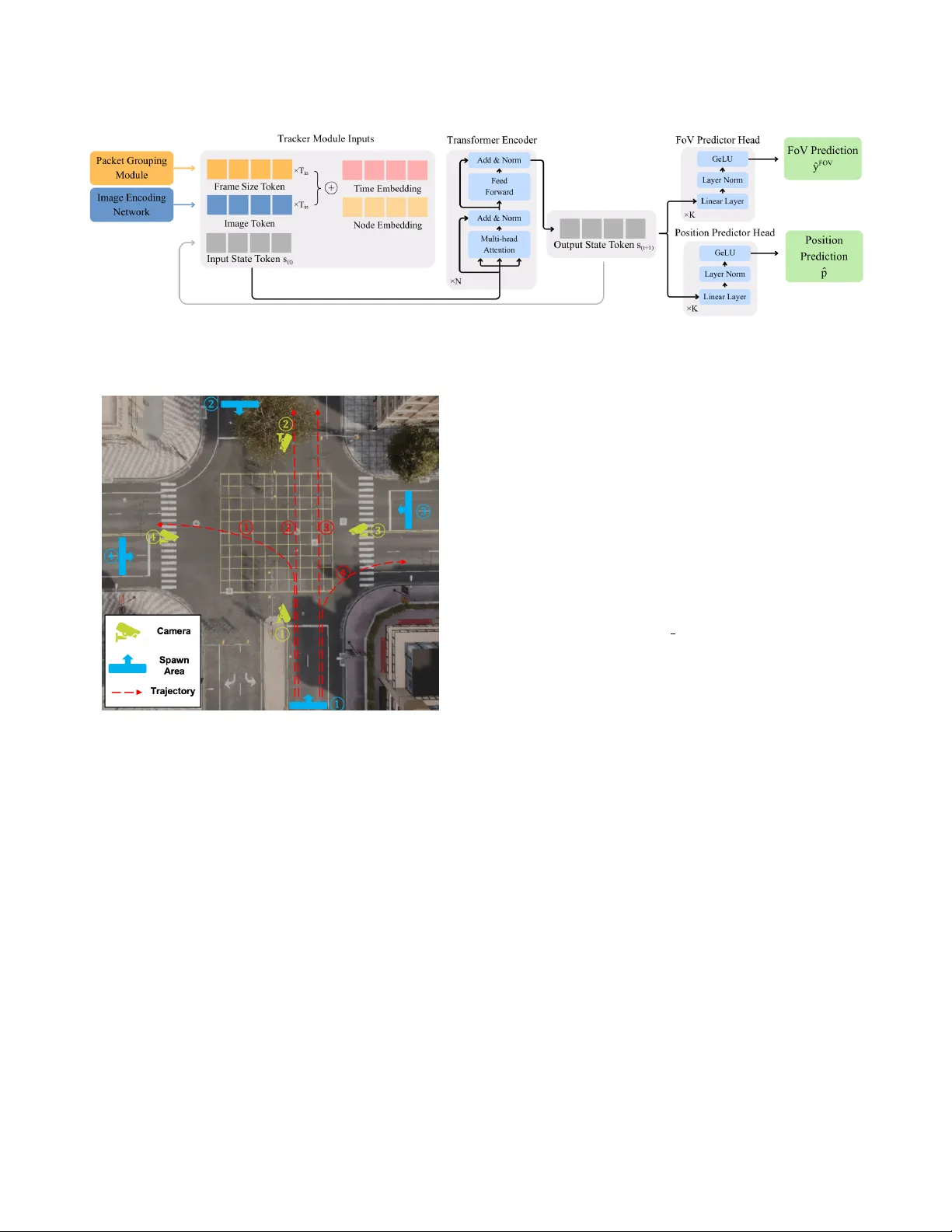

Accurate observation of dynamic environments traditionally relies on synthesizing raw, signal-level information from multiple distributed sensors. This work investigates an alternative approach: performing geospatial inference using only encrypted pa…

Authors: Sadik Yagiz Yetim, Gaofeng Dong, Isaac-Neil Zanoria