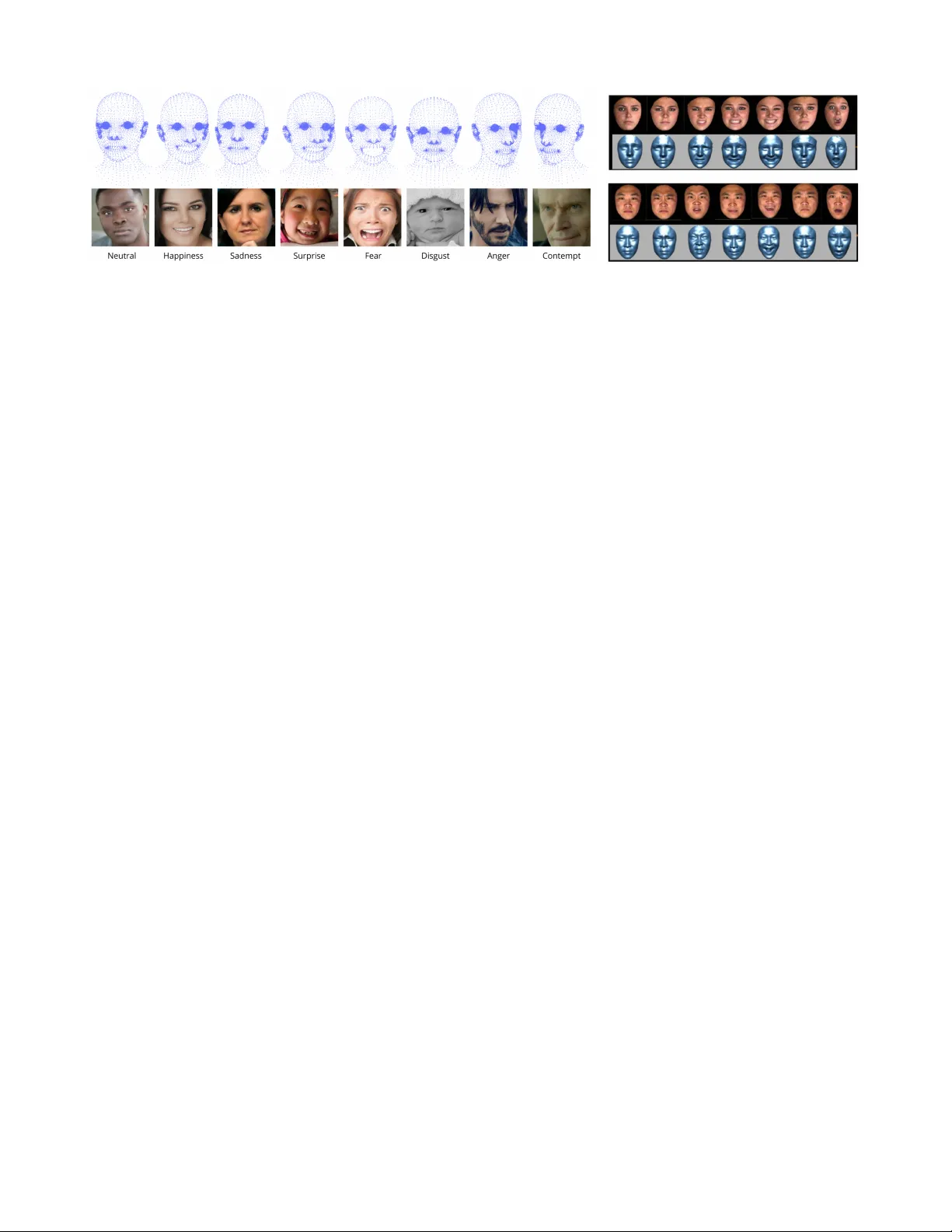

Towards Emotion Recognition with 3D Pointclouds Obtained from Facial Expression Images

Facial Emotion Recognition (FER) is a critical research area within Affective Computing due to its wide-ranging applications in Human-Computer Interaction, mental health assessment and fatigue monitoring. Current FER methods predominantly rely on Deep Lear…

Authors: Laura Rayón Ropero, Jasper De Laet, Filip Lemic