What-If Explanations Over Time: Counterfactuals for Time Series Classification

Counterfactual explanations emerge as a powerful approach in explainable AI, providing what-if scenarios that reveal how minimal changes to an input time series can alter the model's prediction. This work presents a survey of recent algorithms for co…

Authors: Udo Schlegel, Thomas Seidl

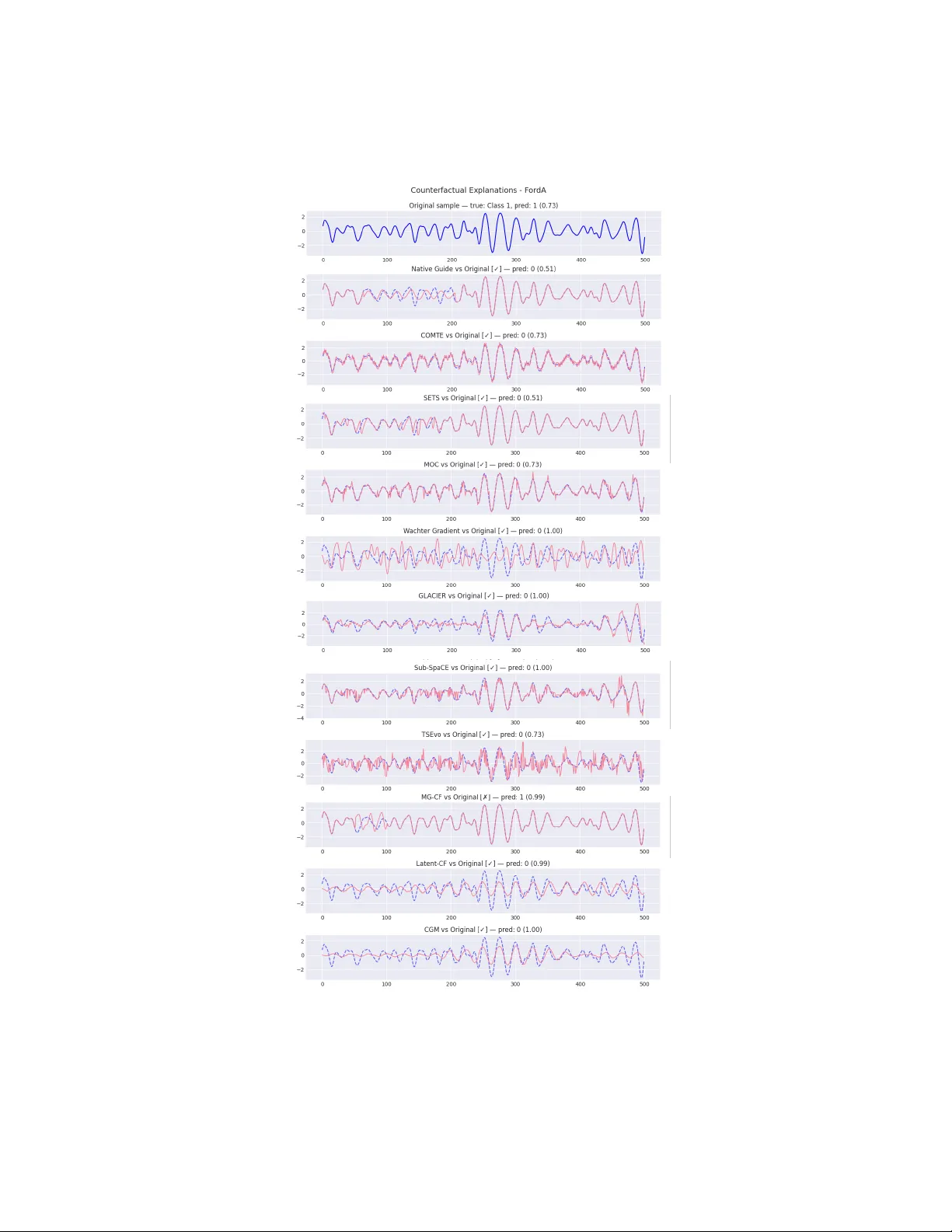

Udo Schlegel 1,2 [0000-0002-8266-0162] and Thomas Seidl 1,2 [0000-0002-4861-1412]

1 LMU Munich, Germany, {schlegel,seidl}@dbs.ifi.lmu.de
2 Munich Center for Machine Learning (MCML), Germany

Abstract. Counterfactual explanations emerge as a powerful approach in explainable AI, providing what-if scenarios that reveal how minimal changes to an input time series can alter the model's prediction. This work presents a survey of recent algorithms for counterfactual explanations for time series classification. We review state-of-the-art methods, spanning instance-based nearest-neighbor techniques, pattern-driven algorithms, gradient-based optimization, and generative models. For each, we discuss the underlying methodology, the models and classifiers they target, and the datasets on which they are evaluated. We highlight unique challenges in generating counterfactuals for temporal data, such as maintaining temporal coherence, plausibility, and actionable interpretability, which distinguish the temporal domain from tabular or image domains. We analyze the strengths and limitations of existing approaches and compare their effectiveness along key dimensions (validity, proximity, sparsity, plausibility, etc.). In addition, we implemented an open-source library, Counterfactual Explanations for Time Series (CFTS), as a reference framework that includes many algorithms and evaluation metrics. We discuss this library's contributions in standardizing evaluation and enabling practical adoption of explainable time series techniques. Finally, based on the literature and identified gaps, we propose future research directions, including improved user-centered design, integration of domain knowledge, and counterfactuals for time series forecasting.

Keywords: Time Series Classification · Explainable AI · Interpretability · What-If Explanations · Counterfactuals · Contrastive Explanations

1 Introduction

Machine learning models for time series classification are increasingly applied in critical domains such as healthcare (e.g., ECG rhythm diagnosis), finance (e.g., anomaly detection in stock prices), and industrial applications (e.g., predictive maintenance) [38]. In these settings, understanding why a model made a particular prediction is as important as the prediction's accuracy [33]. Counterfactual explanations (CFEs) have gained prominence as an explainable AI (XAI) technique to answer what-if questions: they identify how an input instance could be minimally modified to achieve a different outcome [15]. For example, for a patient's heart rate time series classified as "at risk", a counterfactual explanation might demonstrate how a slight reduction of a certain spike in the series would have led the model to predict a normal outcome [38]. Counterfactuals are appealing because they offer actionable insight [22]. They suggest concrete changes to the input that would alter the model's decision, potentially guiding interventions or providing insight into learned patterns [15].

While counterfactual explanation methods have been well-studied for tabular data [30], applying them to time series data is more challenging [12]. Time series classification (TSC) involves sequential, often correlated data points, so any perturbation must consider temporal dependencies to remain realistic [12]. Changing a value at one time step can influence future time steps, so counterfactuals produced by simple generation algorithms can appear implausible to users (e.g., a counterfactual ECG with an abrupt peak) [11].
Additionally, the potential real-world use of time series CFEs often implies algorithmic recourse: guiding users on how to change behavior to achieve a different outcome, such as suggesting lifestyle changes to prevent unfavourable events [8,30]. This possible dual use of CFEs, as both explanations and recommendations, imposes extra requirements like plausibility and user-specific feasibility that go beyond flipping the model's outcome.

Over the past few years, a growing number of methods have been proposed to generate counterfactual explanations for time series classifiers [38]. These methods vary in their approach and assumptions. Some techniques adapt distance-based optimization originally developed for tabular data, for instance, extending the loss from Wachter et al. [40] to time series by incorporating channel-wise distances [1]. Other approaches leverage the structure of time series by using case-based reasoning, e.g., finding a close example from a different class and exchanging its salient subsequence to serve as a counterfactual, an idea introduced as Native Guide [12]. There are pattern-driven methods that exploit shapelets, motifs, or discords (that is, discriminative, recurring, or anomalous subsequences) to guide counterfactual generation. Meanwhile, researchers have explored evolutionary algorithms that search the space of possible time series perturbations using genetic operations, as well as deep learning approaches such as training generative models to produce plausible counterfactuals. This diversity of strategies reflects the complexity of the task and the lack of a one-size-fits-all solution.

In this work, we review the current state of algorithms for counterfactual explanations for time series classification. We provide a structured review of prominent methods, emphasizing how they generate counterfactuals, which models or scenarios they target, and what datasets and metrics are used for evaluation.
We highlight the unique challenges that time series data bring to counterfactual generation: temporal coherence, plausibility, and user actionability, all of which are less prominent in other domains. We then compare the strengths and limitations of different approaches in addressing these challenges. To do so, we developed an open-source library, CFTS (Counterfactual Explanation Algorithms for Time Series Models), which implements many state-of-the-art algorithms under a common framework; the package is available on GitHub at github.com/visual-xai-for-time-series/counterfactual-explanations-for-time-series. By examining results and visualizations from this library, we illustrate differences between methods. Finally, we outline promising directions for future research, including integrating causal reasoning and improving scalability. Through this comprehensive review, we aim to highlight the progress made so far and identify the gaps that remain open for creating effective, human-centered counterfactual explanations for time series.

2 Related Work and Background

Counterfactual Explanations – The concept of counterfactual explanations in machine learning was popularized by Wachter et al. [40] as a way to explain individual predictions without revealing model internals. A counterfactual is typically defined as a perturbed input x′ that yields a different output (prediction) y′ from the original input x with output y, while x′ is as "close" to x as possible. Formally, one can frame finding a counterfactual as an optimization problem minimizing a weighted sum of (a) a validity loss that encourages the model's predicted class to switch to the desired target, and (b) a proximity term that measures the distance between x and x′ [40]. In practice, various distance metrics (L1, L2, ...) or domain-specific measures can be used to quantify the size of the change.
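Written out, the weighted-sum objective described above might look as follows. This is a sketch in the notation of this section, not a formula quoted from the survey: f denotes the classifier, and the trade-off weight λ, the validity loss ℓ, and the distance d are symbols introduced here for illustration. The squared-error validity term and the L1 distance are one common instantiation among several.

```latex
x^{*} \;=\; \arg\min_{x'} \; \lambda \, \ell\bigl(f(x'),\, y'\bigr) \;+\; d(x, x'),
\qquad \text{for example} \quad
\ell\bigl(f(x'),\, y'\bigr) = \bigl(f(x') - y'\bigr)^{2},
\quad
d(x, x') = \lVert x - x' \rVert_{1}.
```

A larger λ pushes the search toward validity (reaching y′), while a smaller λ keeps x′ closer to x.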
Additionally, constraints or regularizers are often applied to promote sparsity (changing as few features or time points as possible) and feasibility (avoiding changes that violate domain knowledge or physical possibility) [11].

Time Series Classification – Explaining time series models poses distinct challenges because time series data inherently have a temporal order. A time series classifier f(x) is often implemented as a deep neural network (CNN or RNN) trained on sequences. Time series often arise from processes in which only certain patterns or contiguous segments are semantically meaningful (e.g., an arrhythmia segment in an ECG) [38]. Unlike tabular data, where any feature can often be changed independently, in time series, an intervention at one time point may necessitate correlated changes in other time points to maintain a realistic pattern [36]. Moreover, many time series datasets have multivariate (multi-channel) inputs, which adds complexity; counterfactuals might involve modifying one or more channels, raising questions about whether to treat channels independently or consider their joint dynamics [38]. Common benchmark datasets used in the literature come from the UCR/UEA Time Series Archive and similar collections [10,2]. These include a wide range of domains (sensor readings, medical signals, synthetic control series, etc.), for example: ECG5000 (heart electrical signals) [10], FordA (automotive sensor) [10], Epilepsy (multichannel EEG) [2], and the Spoken Arabic Digit dataset (multiple-channel audio traces) [2].

Existing Surveys and Position Papers – Counterfactual explanations in general (not specific to time series) have been surveyed by multiple authors [15,22,30]. These works review methods across domains and discuss properties like actionability, sparsity, and evaluation metrics.
However, until recently, the time series domain received relatively little attention in these surveys. A very recent position paper by Chukwu et al. [8] argues that counterfactual explanations for time series must be human-centered and temporally coherent, noting that many current techniques struggle with those aspects. Our work complements such perspectives by providing a deep dive specifically into time series counterfactual methods, summarizing the technical progress to date, and pinpointing how well they meet the desired criteria.

In the following sections, we organize the literature by methodological categories and highlight representative techniques. Table 1 gives a high-level overview of prominent counterfactual explanation methods for time series classification, including the mechanism they use and whether they apply to univariate (U) or multivariate (M) data. We then discuss each category in detail.

3 Collected Methods for Counterfactual Explanations in Time Series Classification and Others

To provide a structured overview of existing approaches, we organize the literature into six methodological categories based on how counterfactuals are generated and the structural constraints imposed on the time series. This taxonomy emphasizes the underlying mechanism rather than specific applications and enables principled comparison across methods. The categories are: (i) optimization-based methods, (ii) evolutionary methods, (iii) instance-based methods, (iv) latent space methods, (v) segment-based methods, and (vi) hybrid methods. Table 1 summarizes the works implemented in CFTS within each category, together with their core ideas.

Methodology – Our overview builds upon the work of Theissler et al. [38] and Chukwu et al. [8], which provide comprehensive overviews of counterfactual explanation methods in machine learning.
Using these works as a conceptual and structural foundation, we extended the literature search to identify more recent, domain-specific studies. To this end, we conducted a systematic search on Google Scholar using keywords related to counterfactual explanations and time series data. Additionally, we examined the citation networks of one of the earliest counterfactual works for time series to uncover subsequent research and extensions. This combination of targeted keyword search and citation tracing ensured broad coverage of both seminal and emerging contributions in the field.

3.1 Optimization-Based Methods

Optimization-based methods formulate counterfactual generation as a direct optimization problem in the input space. Given an input time series and a trained classifier, these approaches search for a modified time series that minimizes a combination of (i) a validity objective, enforcing the model to predict a desired target class, and (ii) a proximity objective, measuring the distance to the original instance. These approaches usually operate in the input space and either assume access to the classifier's gradients or rely on score-based approximations, where a surrogate density model is estimated to approximate the gradient and used to guide the search for valid yet minimally perturbed counterfactuals.

| Method | Year | Data | Category | Core Idea |
|---|---|---|---|---|
| Wachter et al. [40] | 2017 | U/M | Optimization-based | Input-space loss minimization to induce class change |
| CoMTE [1] | 2021 | M | Optimization-based | Channel-wise counterfactual optimization for multivariate time series |
| TS-Tweaking [20] | 2020 | U | Optimization-based | Greedy optimization that tweaks shapelet-aligned segments |
| TSCF | 2024 | U/M | Optimization-based | Custom input-space counterfactual optimization framework |
| MOC [9] | 2020 | U/M | Evolutionary | Multi-objective evolutionary counterfactual search |
| TSEvo [17] | 2022 | U/M | Evolutionary | Evolutionary counterfactual explanations tailored to time series |
| Sub-SpaCE [32] | 2023 | U | Evolutionary | Sparse evolutionary search over contiguous subsequences |
| Multi-SpaCE [31] | 2024 | M | Evolutionary | Multi-objective subsequence-based CFs for multivariate time series |
| Native Guide [12] | 2021 | U | Instance-based | Nearest unlike neighbor–guided subsequence replacement |
| CELS [27] | 2023 | U | Instance-based | Saliency-guided counterfactual edits for univariate series |
| M-CELS [28] | 2024 | M | Instance-based | Multivariate extension of saliency-guided CFs |
| AB-CF [26] | 2023 | M | Instance-based | Attention-guided counterfactual explanation framework |
| Latent-CF [6] | 2020 | U/M | Latent space | Latent-space counterfactual baseline using autoencoders |
| CGM [39] | 2021 | U/M | Latent space | Conditional generative modeling for counterfactual explanations |
| LASTS [16] | 2020 | U/M | Latent space | Latent surrogate explanations for time series classifiers |
| GLACIER [41] | 2024 | U/M | Latent space | Locally constrained, realism-aware latent counterfactuals |
| CounTS [43] | 2023 | U/M | Latent space | Structured generative CFs with interpretable latent variables |
| SG-CF [25] | 2022 | U/M | Segment-based | Shapelet-guided subsequence counterfactual explanations |
| SETS [3] | 2022 | U/M | Segment-based | Efficient shapelet-based counterfactual generation |
| DisCOX [5] | 2024 | U/M | Segment-based | Discord (anomalous segment) replacement for CFs |
| CFWoT [37] | 2024 | M | Segment-based | Subsequence-based CFs without access to training data |
| TS-CEM [23] | 2020 | U/M | Segment-based | Time-series adaptation of CEM with contrastive temporal segments |
| MG-CF [29] | 2022 | U/M | Hybrid | Motif-guided counterfactuals combining segment and instance ideas |
| SPARCE [24] | 2022 | M | Hybrid | Structured sparsity for actionable counterfactual recourse |
| TeRCE [4] | 2022 | M | Hybrid | Symbolic temporal-rule-based counterfactual explanations |
| Time-CF [19] | 2024 | U/M | Hybrid | GAN-based counterfactuals guided by temporal shapelets |

Table 1. Overview of counterfactual explanation methods for time series classification, including publication year and supported data type (U: univariate, M: multivariate).

The formulation introduced by Wachter et al. [40] serves as the conceptual foundation of this family. Although originally proposed for tabular data, it has been widely adapted to time series by defining suitable distance measures and update rules. When applied to temporal data, Wachter-style optimization allows fine-grained pointwise adjustments, enabling counterfactuals with very small distances. However, this often leads to temporally diffuse changes, where small perturbations are spread across many time steps, limiting interpretability.

CoMTE [1] extends optimization-based counterfactual generation to multivariate time series by explicitly performing channel-wise optimization. Instead of treating all dimensions jointly, CoMTE evaluates which subset of channels must be modified to achieve a class change, thereby improving interpretability in settings where channels correspond to distinct physical sensors. This design helps identify which variables matter, but it operates at a relatively coarse temporal granularity and may miss fine-grained temporal patterns within channels.
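The channel-wise idea can be illustrated with a small sketch. It is not CoMTE's actual search procedure: as a simplifying assumption it exhaustively tries channel subsets in increasing size and copies whole channels from a "distractor" series of the target class. The names `toy_classifier`, `minimal_channel_swap`, and the example data are hypothetical.

```python
from itertools import combinations
from typing import Callable, List, Optional, Tuple

Series = List[List[float]]  # one inner list of values per channel


def toy_classifier(series: Series) -> int:
    """Hypothetical stand-in model: class 1 if mean(ch 0) + mean(ch 2) > 1.0."""
    def mean(channel: List[float]) -> float:
        return sum(channel) / len(channel)
    return int(mean(series[0]) + mean(series[2]) > 1.0)


def minimal_channel_swap(
    x: Series,
    distractor: Series,
    predict: Callable[[Series], int],
    target: int,
) -> Tuple[Optional[Series], List[int]]:
    """Return the smallest set of channels whose replacement by the
    distractor's channels makes `predict` output `target`.

    Exhaustive search over subsets (an assumption for clarity); this is
    exponential in the number of channels, so only viable for few channels.
    """
    n_channels = len(x)
    for size in range(1, n_channels + 1):
        for subset in combinations(range(n_channels), size):
            candidate = [
                list(distractor[c]) if c in subset else list(x[c])
                for c in range(n_channels)
            ]
            if predict(candidate) == target:
                return candidate, list(subset)
    return None, []


# Query series (class 0) and a distractor taken from the target class 1.
x = [[0.1] * 4, [0.5] * 4, [0.2] * 4]
distractor = [[0.9] * 4, [0.4] * 4, [0.8] * 4]

cf, channels = minimal_channel_swap(x, distractor, toy_classifier, target=1)
print(channels)  # [0]
```

Here swapping channel 0 alone is enough to flip the toy model, so the explanation reads "the prediction depends on channel 0", which is the coarse, channel-level granularity discussed above.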
TS-Tweaking [20] formulates explainable time series tweaking as an optimization problem that seeks minimal changes to an input series so that a random shapelet forest (the target model) flips its prediction. The local variant greedily edits shapelet-aligned segments for a single instance, while the global variant derives reusable transformation rules by clustering time series and learning common tweaks that generalize across many instances.

TSCF, a custom, optimization-based framework, follows a similar approach but offers greater flexibility in defining temporal constraints and distance measures. By incorporating domain-specific regularization or smoothness penalties, TSCF can partially address the temporal incoherence observed in naïve input-space optimization approaches.

Advantages – Optimization-based methods are computationally efficient, relatively simple to implement, and good at producing low-distance counterfactuals. They can be applied model-agnostically when gradients are approximated.

Limitations – Without strong temporal constraints, these methods often produce diffuse, hard-to-interpret changes and struggle to balance proximity with temporal sparsity, particularly for long time series.

3.2 Evolutionary Methods

Evolutionary methods frame counterfactual generation as a multi-objective optimization problem solved using population-based search algorithms. Rather than optimizing a single weighted objective, these methods seek a set of Pareto-optimal counterfactuals that balance competing criteria such as validity, proximity, sparsity, and temporal compactness.

The MOC framework by Dandl et al. [9] introduced multi-objective counterfactual reasoning, establishing principles that later inspired time series–specific adaptations. TSEvo [17] extends this framework to time series classification by defining mutation and crossover operators tailored to temporal data.
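Two building blocks of such a multi-objective search can be sketched briefly: a Pareto-dominance filter over objective vectors and a mutation operator that perturbs one short contiguous segment. Both are illustrative assumptions rather than the operators used by MOC or TSEvo; the function names, the Gaussian noise, and the example objective tuples (validity loss, proximity, number of changed points) are hypothetical.

```python
import random
from typing import List, Sequence, Tuple


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """True if `a` is no worse than `b` on every objective and strictly
    better on at least one (all objectives are minimized)."""
    return all(p <= q for p, q in zip(a, b)) and any(p < q for p, q in zip(a, b))


def pareto_front(scores: List[Tuple[float, ...]]) -> List[int]:
    """Indices of candidates that no other candidate dominates."""
    return [
        i for i, s in enumerate(scores)
        if not any(dominates(t, s) for j, t in enumerate(scores) if j != i)
    ]


def mutate_segment(
    series: List[float], rng: random.Random, max_len: int = 3, scale: float = 0.5
) -> List[float]:
    """Add Gaussian noise to one contiguous segment of at most `max_len` points,
    so that each mutation stays temporally localized."""
    length = rng.randint(1, min(max_len, len(series)))
    start = rng.randint(0, len(series) - length)
    child = list(series)
    for t in range(start, start + length):
        child[t] += rng.gauss(0.0, scale)
    return child


# Objective vectors: (validity loss, proximity, number of changed points).
scores = [(0.0, 2.0, 5), (0.0, 1.0, 8), (0.0, 2.5, 6), (0.4, 0.5, 2)]
print(pareto_front(scores))  # [0, 1, 3]
```

Candidate 2 is dropped because candidate 0 is at least as good on every objective; the remaining three represent different trade-offs, which is why these methods return a set of counterfactuals rather than a single one.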
Candidate time series are iteratively evolved, with fitness functions evaluating multiple explanation objectives to find suitable counterfactuals.

To improve interpretability, Sub-SpaCE [32] restricts evolutionary edits to a small number of contiguous subsequences. This encourages the algorithm to find explanations that modify localized temporal regions rather than individual points scattered across time. Multi-SpaCE [31] further extends this idea to multivariate time series, allowing simultaneous subsequence edits across multiple channels while preserving temporal alignment.

Evolutionary methods produce diverse counterfactual explanations, offering users multiple plausible alternatives. This diversity is particularly valuable in decision-support scenarios. However, population-based search incurs a high computational cost, as each generation requires numerous model evaluations.

Advantages – Evolutionary methods excel at producing sparse, localized, and diverse counterfactuals, and are fully model-agnostic.

Limitations – They are computationally expensive and may not scale well to long, high-dimensional, or real-time settings.

3.3 Instance-Based Methods

Instance-based methods generate counterfactual explanations by incorporating real examples from the dataset. The central assumption is that plausible counterfactuals resemble existing instances from the target class.

Native Guide Counterfactuals (NG-CF) [12] identify a nearest unlike neighbor from the target class and adapt salient subsequences to form a counterfactual. Importance scores or attribution methods are often used to determine which parts of the time series should be replaced. This results in temporally coherent counterfactuals composed of realistic patterns.
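A minimal sketch of the nearest-unlike-neighbor idea is given below. It is a simplification under stated assumptions, not the Native Guide implementation: the importance score is approximated by the pointwise absolute difference between the query and its neighbor (a hypothetical proxy for an attribution method), and the classifier is a toy threshold rule. All function names and data are illustrative.

```python
import math
from typing import Callable, List, Tuple


def euclidean(a: List[float], b: List[float]) -> float:
    return math.sqrt(sum((p - q) ** 2 for p, q in zip(a, b)))


def nearest_unlike_neighbor(
    x: List[float], train_x: List[List[float]], train_y: List[int], target: int
) -> List[float]:
    """Closest training series (Euclidean) that carries the target label."""
    candidates = [s for s, y in zip(train_x, train_y) if y == target]
    return min(candidates, key=lambda s: euclidean(x, s))


def subsequence_swap(
    x: List[float], nun: List[float], predict: Callable[[List[float]], int], target: int
) -> Tuple[List[float], Tuple[int, int]]:
    """Copy a growing window from `nun` into `x` until the prediction flips.

    The window is centred on the time step where x and nun differ most,
    which stands in for a saliency map (assumption for this sketch).
    """
    n = len(x)
    center = max(range(n), key=lambda t: abs(x[t] - nun[t]))
    for width in range(1, n + 1):
        start = max(0, min(center - width // 2, n - width))
        candidate = x[:start] + nun[start:start + width] + x[start + width:]
        if predict(candidate) == target:
            return candidate, (start, start + width)
    return list(nun), (0, n)


def spike_classifier(series: List[float]) -> int:
    """Hypothetical model: class 1 ("at risk") if any value exceeds 1.0."""
    return int(max(series) > 1.0)


train_x = [
    [0.1, 0.0, 0.1, 0.2, 0.1, 0.0, 0.1, 0.0],   # normal
    [-1.0] * 8,                                  # normal, but far away
    [0.0, 0.0, 1.8, 2.2, 0.0, 0.0, 0.0, 0.0],   # at risk
]
train_y = [0, 0, 1]
x = [0.0, 0.0, 0.0, 2.0, 2.5, 0.0, 0.0, 0.0]    # classified as class 1

nun = nearest_unlike_neighbor(x, train_x, train_y, target=0)
cf, window = subsequence_swap(x, nun, spike_classifier, target=0)
print(window)  # (3, 5)
```

Only time steps 3 and 4 are replaced, and the replacement values come from a real training series, which is what gives instance-based counterfactuals their plausibility.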
Building on this idea, CELS [27] introduces saliency-guided counterfactual generation, where learned importance maps restrict modifications to influential time steps. M-CELS [28] extends this approach to multivariate time series, while AB-CF [26] leverages attention mechanisms within neural classifiers to guide counterfactual edits across channels and time.

Instance-based approaches naturally preserve plausibility and are highly interpretable, as the changes correspond to known examples. However, their success depends on the availability of suitable target-class neighbors and may degrade when dataset coverage is limited.

Advantages – Because counterfactuals are constructed from real observed instances, instance-based methods tend to align well with domain expectations and are often easier for practitioners to trust and validate.

Limitations – Their reliance on nearest neighbors can also introduce bias toward frequently observed patterns, potentially overlooking rare but valid counterfactual alternatives.

3.4 Latent Space Methods

Latent space methods operate on learned representations of time series rather than directly manipulating raw inputs. These representations, typically learned via autoencoders or generative models, capture global temporal structure and statistical dependencies.

Latent-CF [6] provides a simple baseline by generating counterfactuals in an autoencoder's latent space and decoding them back to the input domain. CGM [39] extends this idea using conditional generative models, allowing counterfactuals to be sampled from a learned class-conditional distribution. LASTS [16] introduces surrogate-based explanations, approximating a black-box classifier with an interpretable latent model.

More recent work, such as GLACIER [41], introduces locally constrained latent optimization that explicitly enforces smoothness, plausibility, and neighborhood consistency.
CounTS [43] embeds counterfactual reasoning into a structured generative model, separating mutable and immutable latent factors and enabling self-interpretable explanations.

Latent space methods are particularly effective at maintaining plausibility and global temporal coherence but rely heavily on the quality and interpretability of the learned representations.

Advantages – By operating on compressed representations, latent space methods can capture long-range temporal dependencies that are difficult to enforce explicitly in input-space approaches.

Limitations – The abstract nature of latent variables may obscure the meaning of the suggested changes, complicating their use for actionable recourse.

3.5 Segment-Based Methods

Segment-based methods restrict counterfactual modifications to contiguous subsequences, aligning explanations with human intuition about temporal events. These subsequences may correspond to discriminative shapelets, recurring motifs, or anomalous segments.

SG-CF [25] and SETS [3] rely on shapelet representations to identify informative subsequences and modify them to induce class changes. DisCOX [5] focuses on replacing discord (anomalous) segments that disproportionately influence the classifier. CFWoT [37] enables subsequence-level edits even in the absence of training data, making it suitable for data-limited scenarios.

TS-CEM [23] extends CEM's [14] contrastive explanation framework to deep time series classifiers by optimizing over discriminative temporal segments rather than pixels or tabular features. For a given series and predicted class, TS-CEM searches for contiguous subsequences whose removal or alteration would flip the prediction (pertinent positives/negatives), yielding contrastive "why this vs. that" explanations grounded in specific time intervals.
By focusing on segments rather than individual points, these methods yield compact and interpretable counterfactuals. However, their success depends on robust mechanisms for pattern discovery.

Advantages – Restricting edits to contiguous segments allows these methods to express explanations in terms of patterns rather than isolated values.

Limitations – If the decision boundary depends on subtle global trends rather than localized patterns, segment-based constraints may fail to identify valid counterfactuals.

3.6 Hybrid Methods

Hybrid methods combine elements from multiple methodological families to leverage complementary strengths. These approaches often integrate generative modeling, pattern-based constraints, symbolic reasoning, or sparsity objectives.

MG-CF [29] combines motif-based reasoning with instance-based ideas, while SPARCE [24] introduces structured sparsity constraints to generate actionable recourse. TeRCE [4] uses symbolic temporal rules to express counterfactuals at a high level, and Time-CF [19] integrates generative adversarial modeling with shapelet guidance to produce realistic yet interpretable counterfactuals.

Hybrid methods are highly flexible and expressive, making them suitable for complex and high-stakes applications, though they often introduce additional modeling complexity.

Advantages – By combining complementary mechanisms, hybrid methods can flexibly adapt to different datasets and explanation objectives without being tied to a single inductive bias.

Limitations – This flexibility can make hybrid approaches harder to analyze theoretically and more sensitive to design choices and hyperparameter settings.

3.7 Summary

This extended taxonomy highlights the diversity of counterfactual explanation methods for time series classification and clarifies their respective trade-offs.
Optimization-based methods favor proximity; evolutionary methods emphasize diversity and sparsity; instance- and segment-based methods excel in interpretability; latent space methods enhance plausibility; and hybrid approaches aim to unify these strengths. This structured perspective enables systematic evaluation and provides a solid foundation for future research.

4 Challenges Unique to Time Series Counterfactuals

Designing algorithms for counterfactual explanations for time series poses unique challenges that researchers must address to make these explanations useful and trustworthy. We highlight several key challenges and considerations:

Temporal Coherence – Any counterfactual modification must respect the temporal nature of time series. Changing a single time step in isolation can break temporal patterns or introduce inconsistencies (e.g., a sudden jump that violates physical laws). Counterfactuals should ideally modify contiguous segments to keep the overall sequence smooth. Temporal coherence also means accounting for lead-lag relationships. This means that if a cause precedes an effect in the original series, a meaningful counterfactual likely needs to reflect a plausible adjustment to the following time steps, not just an arbitrary perturbation.

Plausibility – A counterfactual time series should "look like" a time series that could have been observed in the real world (preferably of the target class). This is particularly crucial in domains like medicine: a generated ECG that contains physiologically impossible waveform shapes would not be credible to a doctor. Plausibility can involve simple value constraints (e.g., no negative values for features that can't be negative) and more complex statistical properties (preserving autocorrelation structure, frequency content, or known pattern signatures of the phenomenon) [11].
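Two of the checks mentioned above, a value-range constraint and the preservation of autocorrelation structure, can be sketched in a few lines. This is an illustrative sketch, not a metric defined in the survey or in CFTS: the function names, the choice of lag 1, and the example series are assumptions made here.

```python
from typing import List


def within_bounds(series: List[float], lower: float, upper: float) -> bool:
    """Simple value constraint: every point must lie in [lower, upper]."""
    return all(lower <= v <= upper for v in series)


def lag1_autocorrelation(series: List[float]) -> float:
    """Lag-1 autocorrelation; returns 0.0 for a constant series."""
    n = len(series)
    mean = sum(series) / n
    variance = sum((v - mean) ** 2 for v in series)
    if variance == 0:
        return 0.0
    covariance = sum(
        (series[t] - mean) * (series[t + 1] - mean) for t in range(n - 1)
    )
    return covariance / variance


def autocorrelation_shift(original: List[float], counterfactual: List[float]) -> float:
    """Absolute change in lag-1 autocorrelation caused by the edit.
    Large values hint that the temporal structure was distorted."""
    return abs(lag1_autocorrelation(original) - lag1_autocorrelation(counterfactual))


ramp = [float(t) for t in range(8)]                  # smooth upward trend
zigzag = [0.0, 7.0, 1.0, 6.0, 2.0, 5.0, 3.0, 4.0]    # same values, jagged order

print(lag1_autocorrelation(ramp))            # 0.625
print(lag1_autocorrelation(zigzag) < 0)      # True
print(within_bounds(zigzag, 0.0, 10.0))      # True
```

The two example series contain exactly the same values, so a range check passes for both, yet their autocorrelation differs in sign. This is why value constraints alone are not enough to judge temporal plausibility.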
Many methods address this implicitly by drawing on real data (instance-based, segment-based), or explicitly via constraints and generative models. Nonetheless, ensuring plausibility remains challenging, especially for multivariate series where inter-variable correlations must be maintained [11]. Measures such as domain constraint violations and statistical similarity have been proposed to quantify plausibility, but not all studies evaluate them, risking counterfactuals that optimize the loss but would never occur in reality.

Actionability and User Constraints – In time series contexts, we often interpret counterfactuals as recommendations for action, e.g., "if the patient had walked 1000 extra steps each day, their glucose level time series would fall into a normal pattern" [8]. For such recommendations to be actionable, we must avoid suggesting changes to immutable aspects of the series (like past events or static attributes). We should also consider whether the suggested change can be feasibly carried out by the user or the system. For instance, reducing a manufacturing sensor's vibration at a specific cycle might require a specific intervention, which has a cost. The feasibility of counterfactuals is therefore a challenge: each change must correspond to a real intervention, and its magnitude must be reasonable. This ties into causal considerations. One should generate only those counterfactuals that correspond to interventions in the domain's causal model (changing an effect without changing its cause would be infeasible). Current methods rarely integrate user-specific constraints automatically, leaving this an important gap in making CFEs truly useful in real-world applications.

Sparsity vs. Context – A longstanding principle in counterfactual explanation is that changes should be sparse. However, in time series, sparsity needs to be balanced with temporal context.
Changing a single time point might be minimal, but it could also be meaningless (or too subtle to notice in a sensor signal). Many time series CF methods thus aim for segment-level sparsity, altering a small number of contiguous segments or a key pattern. This yields what some metrics call compactness, measuring whether changes occur in a small part of the time series. Achieving high compactness is challenging with continuous optimization, as it often distributes small changes widely; techniques such as pattern-based constraints have been shown to achieve greater compactness. The challenge is to identify the right granularity for "sparsity" (point-level for some domains, pattern-level for others) and to enforce it without sacrificing validity.

Evaluation Metrics for Time Series CF – Evaluating counterfactual quality in time series requires going beyond the traditional validity, proximity, and sparsity. There is a temporal blind spot in many evaluation protocols: metrics borrowed from tabular settings (such as L1/L2 distances or the number of features changed) do not capture how changes are distributed over time. For example, two counterfactuals might have the same L2 distance from the original, but one might make a one-time shift of a segment, while the other adds high-frequency noise throughout; clearly, the former is more interpretable. Metrics like Dynamic Time Warping (DTW) distance can measure similarity while allowing for slight time shifts, which might be relevant if the timing of patterns can vary. Other specialized metrics include temporal inconsistency (whether the time ordering of events is distorted) and shape-based distance. Additionally, plausibility metrics, as noted above, are critical to measure.
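The temporal blind spot can be made concrete with a short sketch. The three functions below are illustrative stdlib implementations written for this example (an L2 distance, a count of contiguous changed segments as a simple compactness proxy, and a textbook DTW with absolute-difference cost); they are not the metric definitions used by any particular benchmark, and the example series are hypothetical.

```python
import math
from typing import List


def l2_distance(a: List[float], b: List[float]) -> float:
    return math.sqrt(sum((p - q) ** 2 for p, q in zip(a, b)))


def changed_segments(a: List[float], b: List[float], tol: float = 1e-9) -> int:
    """Number of maximal contiguous runs of time steps where a and b differ."""
    segments = 0
    inside = False
    for p, q in zip(a, b):
        changed = abs(p - q) > tol
        if changed and not inside:
            segments += 1
        inside = changed
    return segments


def dtw_distance(a: List[float], b: List[float]) -> float:
    """Classic dynamic-programming DTW with |a_i - b_j| as local cost."""
    inf = float("inf")
    n, m = len(a), len(b)
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            step = abs(a[i - 1] - b[j - 1])
            cost[i][j] = step + min(cost[i - 1][j], cost[i][j - 1], cost[i - 1][j - 1])
    return cost[n][m]


original = [0.0] * 8
segment_cf = [0.0, 0.0, 1.0, 1.0, 1.0, 1.0, 0.0, 0.0]   # one shifted segment
noise_cf = [1.0, 0.0, -1.0, 0.0, 1.0, 0.0, -1.0, 0.0]   # scattered edits

print(l2_distance(original, segment_cf), l2_distance(original, noise_cf))  # 2.0 2.0
print(changed_segments(original, segment_cf), changed_segments(original, noise_cf))  # 1 4

bump = [0.0, 0.0, 1.0, 2.0, 1.0, 0.0, 0.0, 0.0]
shifted_bump = [0.0, 0.0, 0.0, 1.0, 2.0, 1.0, 0.0, 0.0]
print(l2_distance(bump, shifted_bump), dtw_distance(bump, shifted_bump))  # 2.0 0.0
```

Both counterfactuals are equally far from the original in L2, yet one edits a single segment and the other four separate ones. Likewise, a pattern shifted by one time step has a nonzero L2 distance but a DTW distance of zero, which shows how the choice of metric encodes an assumption about whether timing matters.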
Some benchmarking efforts propose multi-faceted evaluations covering validity, proximity, sparsity, plausibility, diversity, stability, etc. [11,13,21]. A challenge is that optimizing all of these criteria at once is impossible, so understanding trade-offs is key. The lack of universal metrics for temporal plausibility makes comparing methods across papers difficult: one method might claim a better $L_2$ distance, another better sparsity. To truly move forward, the field needs evaluation frameworks that reflect the temporal dimension.

User Understanding and Trust – Beyond technical metrics, a core challenge is how humans perceive and trust time series counterfactual explanations. A user (be it a doctor, engineer, or customer) might be presented with an alternative time series and an assertion that "this would change the prediction." If the counterfactual is too complex or unrealistic, the user might disregard it or, worse, lose trust in the AI system. There is early recognition that user studies are needed to evaluate if, say, clinicians find these explanations useful in practice [8]. Time series, with their often incomprehensible lines, are not as immediately interpretable as images; thus, conveying the content of a counterfactual (via visual highlights of changed regions or descriptions) is part of the challenge [35]. So far, most research has focused on algorithmic aspects rather than human factors. Ensuring that time series CFEs are human-centered (aligned with user mental models and decision processes) remains an open challenge.

In summary, counterfactual explanations for time series must overcome issues of temporal logic, plausibility, actionable insight, and evaluation complexity. Many of these challenges are active areas of research. In the next section, we analyze how the current methods fare with respect to these issues, and where improvements are still needed.
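The temporal blind spot described under Evaluation Metrics can be made concrete with a small sketch. The toy series, the helper names (`l2_distance`, `dtw_distance`, `bump_series`), and the noise amplitude below are illustrative assumptions and are not taken from the CFTS library; the DTW routine is the plain textbook recurrence without a warping window. The sketch builds two modifications of the same series that have (numerically) the same $L_2$ distance to the original, one a time-shifted bump and one alternating noise on every time step, and shows that DTW separates them.

```python
import math


def l2_distance(a, b):
    """Pointwise Euclidean distance between two equal-length series."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def dtw_distance(a, b):
    """Textbook dynamic time warping with squared pointwise cost, no window."""
    n, m = len(a), len(b)
    inf = float("inf")
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            step = (a[i - 1] - b[j - 1]) ** 2
            cost[i][j] = step + min(
                cost[i - 1][j], cost[i][j - 1], cost[i - 1][j - 1]
            )
    return math.sqrt(cost[n][m])


def bump_series(start, length=20):
    """Flat series of zeros with a small triangular bump starting at `start`."""
    series = [0.0] * length
    for offset, value in enumerate([1.0, 2.0, 3.0, 2.0, 1.0]):
        series[start + offset] = value
    return series


# Hypothetical toy data: the same bump, once in place and once two steps later.
original = bump_series(5)
shifted = bump_series(7)

# Alternating +/- noise on every step, scaled so its L2 distance to the
# original matches that of the shifted bump (sqrt(18) for this toy series).
amp = math.sqrt(18.0 / 20.0)
noisy = [x + amp * (-1) ** t for t, x in enumerate(original)]

print("L2  shifted:", l2_distance(original, shifted))
print("L2  noisy:  ", l2_distance(original, noisy))
print("DTW shifted:", dtw_distance(original, shifted))
print("DTW noisy:  ", dtw_distance(original, noisy))
```

Under these assumptions, $L_2$ cannot tell the two modifications apart, whereas DTW treats the shifted bump as essentially unchanged and penalizes the noise. Whether that tolerance to shifts is desirable depends on whether timing carries meaning in the application.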
5 Comparative Analysis of Existing Methods

Having reviewed the landscape of counterfactual explanation methods for time series classification, we now analyze their strengths and limitations in light of the challenges discussed. While all surveyed methods aim to produce valid counterfactuals, they differ substantially in how they balance competing properties such as proximity, sparsity, temporal coherence, plausibility, and computational efficiency. Table 2 provides a qualitative comparison of major methodological families across criteria, including validity, temporal coherence, sparsity, plausibility, and computational cost. This comparison is conceptual in nature and is based on reported empirical results in the literature as well as our synthesized understanding of these approaches.

Validity as a Baseline Requirement – First, most methods prioritize validity; a counterfactual that fails to flip the model’s prediction is inherently uninformative. Most approaches explicitly encode validity either as a hard constraint or as a dominant optimization objective, and many report near-100% success rates on benchmark datasets when a counterfactual can be found. Consequently, validity alone is rarely the differentiating factor between methods. Instead, practical differences emerge primarily in the remaining explanation properties.

| Method Family | Validity | Temporal Coherence | Sparsity | Realism (plausibility) | Computational Cost |
|---|---|---|---|---|---|
| Optimization-based | High | Low–Medium | Low | Low–Medium | Low |
| Evolutionary | High | High | High | Medium–High | High |
| Instance-based | Medium–High | High | Medium–High | High | Medium |
| Latent space | High | High | Medium | High | Medium–High |
| Segment-based | Medium–High | Very High | High | High | Medium |
| Hybrid | High | High | High | High | Medium–High |

Table 2. Qualitative comparison of counterfactual explanation method families for time series classification. Assessments are based on reported results in the literature and conceptual analysis rather than absolute quantitative benchmarks.

Proximity vs. Sparsity Trade-offs – A key distinction across methods lies in the trade-off between proximity and sparsity. Optimization-based approaches, including Wachter-style methods [40] and latent-space variants such as Latent-CF [6], tend to excel in proximity by finely adjusting values across the entire sequence. This often leads to very low $L_2$ or DTW distances. However, these gains typically come at the cost of sparsity: many time steps are slightly modified, resulting in diffuse changes that may be difficult to interpret.

In contrast, evolutionary, pattern-based, and segment-based methods are explicitly designed to promote sparsity and temporal compactness. Techniques such as Multi-SpaCE [31], SG-CF [25], or motif-based approaches intentionally restrict modifications to one or a few contiguous regions, yielding explanations that are visually and semantically localized. Our empirical studies illustrate this contrast: evolutionary approaches alter as little as 10% of a sequence, whereas unconstrained optimization methods may modify over 70% of time points, albeit by very small magnitudes. These observations suggest that a multi-objective perspective is essential: users may prefer a slightly larger, localized change over a numerically smaller, globally dispersed modification.

Temporal Plausibility – Temporal plausibility represents another major differentiator. Instance-based and pattern-based approaches, such as NG-CF [12], motif-guided counterfactuals, and discord replacement methods, inherently produce realistic changes because they rely on real subsequences. Their outputs typically preserve the global structure and reduce unnatural noise.
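The difference between a localized, instance-based edit and a diffuse, optimization-style edit can be sketched on toy data. The snippet below is a simplified illustration and not the NG-CF algorithm or any CFTS routine: the function names, the synthetic sine series, the donor segment, and the shift size are all assumptions, and no classifier is involved, so validity is not checked. It only shows how proximity ($L_2$) and sparsity (fraction of modified points) can rank the same two candidates in opposite order.

```python
import math


def l2_distance(a, b):
    """Pointwise Euclidean distance between two equal-length series."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def fraction_modified(original, counterfactual, tol=1e-9):
    """Share of time steps whose value changed by more than `tol`."""
    changed = sum(
        1 for x, y in zip(original, counterfactual) if abs(x - y) > tol
    )
    return changed / len(original)


def substitute_segment(query, donor, start, length):
    """Copy one contiguous segment of a real donor series into the query."""
    counterfactual = list(query)
    counterfactual[start:start + length] = donor[start:start + length]
    return counterfactual


def diffuse_shift(query, delta):
    """Shift every time step by the same small amount."""
    return [x + delta for x in query]


# Hypothetical toy data: the donor stands in for a series of the target class
# that differs from the query only in the window [40, 50).
query = [math.sin(0.2 * t) for t in range(100)]
donor = [
    math.sin(0.2 * t) + (1.0 if 40 <= t < 50 else 0.0) for t in range(100)
]

localized = substitute_segment(query, donor, start=40, length=10)
diffuse = diffuse_shift(query, delta=-0.05)

for name, cf in [("localized", localized), ("diffuse", diffuse)]:
    print(name, "L2:", l2_distance(query, cf),
          "fraction modified:", fraction_modified(query, cf))
```

In this toy setting the diffuse shift wins on $L_2$ while touching every time step, and the segment substitution changes only a tenth of the series at a larger $L_2$ cost, which is why reporting a single distance can be misleading.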
By contrast, unconstrained gradient-based methods can introduce subtle oscillations or artifacts across the entire sequence. While these artifacts may be mathematically optimal, they often fail qualitative plausibility checks. Recent methods such as GLACIER [41] and Time-CF [19] address this limitation by introducing latent constraints or generative models, respectively, resulting in markedly more realistic counterfactuals as confirmed by both quantitative metrics and human evaluation.

Evolutionary methods sit between these extremes: while they do not inherently guarantee plausibility, it can be enforced through fitness terms or crossover operators that recombine real data segments, as in TSEvo [17]. A notable limitation across nearly all methods, however, is the limited treatment of long-range temporal dependencies such as seasonality or trends. Few approaches explicitly ensure that counterfactuals preserve these global temporal structures, highlighting an important open research direction.

Multivariate Coordination – Handling multivariate time series introduces additional complexity. Methods such as CoMTE [1] and shapelet-based approaches like SETS [3] explicitly reason about channels independently, often identifying a subset of variables that require modification while leaving others untouched. This can improve interpretability when channels correspond to distinct sensors or measurements.

In contrast, approaches such as TSEvo [17] or GLACIER [41] often treat multivariate inputs jointly, which can inadvertently introduce correlated changes across channels. This raises subtle concerns: in many real-world systems, modifying one variable may require coordinated changes in others due to physical or causal constraints.
Most current methods do not guarantee such coordination explicitly and instead rely on the predictive model to implicitly penalize unrealistic combinations. Consequently, the appropriateness of channel-wise versus joint modification strategies depends strongly on the application domain.

Computational Efficiency – There are significant differences in computational efficiency across methods. Instance-based approaches and simple pattern-replacement strategies are typically fast, often requiring only nearest-neighbor retrieval and minor adjustments, making them suitable for large-scale use. Gradient-based optimization methods are also efficient in most cases, especially when implemented on GPUs, although latent approaches incur additional preprocessing costs due to representation learning.

Evolutionary algorithms are consistently the most computationally expensive, with reported runtimes ranging from minutes to hours per instance, depending on sequence length and population size. While acceptable for offline analysis, this limits their practicality in time-sensitive settings. Reinforcement-learning-based approaches offer an interesting compromise: although training the policy can be expensive, inference is extremely fast once the agent is learned. From a systems perspective, this distinction becomes critical when explanations are required at scale. The open-source library discussed later in this paper explicitly supports runtime benchmarking, encouraging more transparent reporting of efficiency alongside explanation quality.

Diversity of Explanations – Diversity is an often underemphasized but important property of counterfactual explanations. Multi-objective evolutionary approaches such as MOC [9] and TSEvo [17] produce sets of diverse counterfactuals, representing different ways to alter a prediction.
This aligns well with best practices in XAI, which caution against presenting a single explanation as the sole interpretation. Other methods typically return a single counterfactual unless explicitly re-run with different initializations or constraints. Incorporating user preferences, that is, allowing users to choose among multiple plausible counterfactuals, remains an underexplored but promising direction.

Illustrative Example – To illustrate these trade-offs concretely, consider a univariate time series from the FordA [10] dataset that is initially classified as class 1. A gradient-based counterfactual may achieve class 2 by slightly shifting the entire time series downward, modifying nearly every time step. In contrast, an instance-based method such as NG-CF [12] might replace a single oscillatory segment around time 100 with a segment drawn from a class 2 example, leaving the remainder untouched. An evolutionary approach may generate several alternatives: one modifying the segment at time 100, another altering a valley around time 250, each providing a distinct explanation. The "best" counterfactual depends on context, for example, whether a specific segment corresponds to a known physical event or fault in the system.

Summary and Outlook – In summary, existing counterfactual explanation methods for time series classification exhibit complementary strengths. Optimization-based methods excel in proximity, instance- and segment-based methods prioritize interpretability and plausibility, evolutionary approaches offer principled multi-objective trade-offs at higher computational cost, and latent or hybrid methods seek to unify these properties. The field is increasingly moving toward hybrid frameworks that combine generative plausibility, temporal structure awareness, and optimization efficiency.
At the same time, the diversity of methods underscores the need for standardized benchmarks, shared evaluation protocols, and open libraries to enable systematic comparison.

6 A Unified Library for Counterfactuals for Time Series

To support research and practical application of counterfactual explanations for time series, we developed an open-source library, Counterfactual Explanations for Time Series (CFTS). The library serves as a unified implementation of many of the algorithms discussed in this overview, providing a common codebase and standardized interfaces. Below, we describe the library’s scope, implemented methods, and its contribution in the context of the existing literature.

Implemented Algorithms – The CFTS library is a modular PyTorch-based framework that implements a broad range of counterfactual generation techniques for time series classification, spanning all families described in Section 3. The goal of the library is not to exhaustively implement every published method, but to provide representative approaches that enable systematic comparison across paradigms and showcase their differences.

Currently, the library includes implementations of optimization-based methods, evolutionary methods, instance-based methods, latent space methods, segment-based methods, and hybrid methods. For optimization-based methods, we provide Wachter-style [40] gradient-based counterfactuals, a genetic-algorithm variant of Wachter-style optimization, CoMTE [1] for multivariate time series, TS-CEM [23] as a CEM-style temporal segment approach, and TSCF for input-space optimization with temporal smoothness and sparsity. The evolutionary methods currently implemented are MOC (Multi-Objective Counterfactuals) [9], TSEvo [17] for evolutionary time series counterfactuals, and Sub-SpaCE [32] together with Multi-SpaCE [31] for sparse subsequence search in uni- and multivariate series.
For instance-based methods, the library includes Native Guide counterfactuals [12], saliency-guided methods CELS [27] and M-CELS [28], and AB-CF [26], which leverages attention weights from neural classifiers. The latent space methods comprise Latent-CF [6] as a latent-space baseline, CGM [39] for class-conditional counterfactual sampling, and GLACIER [41] for locally constrained, plausibility-aware latent optimization. Among segment-based methods, we implement shapelet-based counterfactuals SETS [3] and SG-CF [25], discord-based counterfactuals DisCOX [5], and TS-Tweaking [20] for greedy tweaking of shapelet-aligned segments. Finally, the hybrid methods include Time-CF [19] combining generative modeling with pattern guidance, MG-CF [29] integrating motif-guided, instance-, and segment-based reasoning, TeRCE [4] for symbolic temporal-rule-based counterfactuals, and SpArCE [24] with structured sparsity for actionable recourse.

Overall, CFTS currently implements a diverse and methodologically complete set of counterfactual explanation techniques, covering all six categories introduced in this overview. Each algorithm is encapsulated in its own module with a shared interface, enabling consistent execution, evaluation, and visualization across methods. This level of coverage makes CFTS a comprehensive open-source library for counterfactual explanations in time series classification. By consolidating these approaches under a single framework with standardized inputs and outputs, CFTS removes a major barrier to reproducibility and benchmarking that has historically limited progress in this area [7].

Evaluation Metrics and Tools – A central contribution of CFTS is its extensive evaluation framework, designed to reflect the multifaceted nature of counterfactual explanations.
Metrics are grouped into six categories:

– Validity metrics, ensuring that the counterfactual achieves the desired target class.
– Proximity metrics, including $L_1$, $L_2$, Dynamic Time Warping (DTW), and Fréchet distance, capturing both pointwise and shape-based similarity.
– Sparsity and compactness metrics, such as $L_0$ (number of modified points), segment count, and modified segment length.
– Plausibility metrics, measuring distributional similarity, autocorrelation preservation, and spectral consistency.
– Diversity metrics, quantifying differences among multiple counterfactuals generated for the same input.
– Stability metrics, assessing robustness to small perturbations of the input.

The inclusion of DTW and spectral measures reflects an important design choice: in time series analysis, shifting a pattern slightly in time may be semantically acceptable and should not be penalized as harshly as entirely changing its structure. By making such metrics readily available, CFTS encourages researchers to move beyond single-number evaluations and report further results to ensure counterfactual quality.

Visualization and User Interface – CFTS provides built-in visualization methods to support qualitative analysis. These include overlays of original and counterfactual time series that highlight modified regions, as well as side-by-side comparisons across multiple methods. Figure 1 illustrates an example for the FordA dataset, where multiple counterfactuals achieve the same class flip but differ in the segments they modify.

Fig. 1. A FordA sample with various counterfactual methods applied to a CNN trained to classify the data between normal and anomaly classes.

For multivariate data, the library supports channel-wise visualizations, enabling inspection of inter-channel dependencies.
This is particularly important for datasets such as spoken Arabic digit signals, where counterfactuals may alter only a subset of channels while preserving realistic relationships among others. Visualization of evaluation metrics (such as bar charts comparing proximity, sparsity, and plausibility across algorithms) is also supported, closely mirroring comparative figures commonly found in the literature.

Practical Usability and Extensibility – Beyond research, the unified design of CFTS lowers the barrier for practitioners to apply counterfactual explanations to real-world time series models. A user can supply a trained classifier and dataset, select a method (e.g., wachter, native_guide), and obtain counterfactual explanations through a consistent API. A shared CounterfactualEvaluator class ensures that metrics are computed uniformly across methods.

The modular architecture also facilitates extensibility. New algorithms can be added by implementing a standardized interface, and the repository invites community contributions. While the library already supports evolutionary and optimization-based methods, reinforcement-learning-based counterfactuals are not yet included, highlighting an opportunity for future extensions.

Context in the Literature – The CFTS library aligns with a broader movement toward benchmarking and standardization in explainable AI. Prior work has emphasized the need for systematic evaluation of counterfactual explanations, and XTSC-Bench [18] has provided benchmark datasets for explainable time series classification. CFTS complements these efforts by providing the implementations needed to run such benchmarks in practice.
By including both foundational methods (e.g., Wachter-style counterfactuals) and recent advances (e.g., GLACIER, Multi-SpaCE, AB-CF), the library enables researchers to quantify progress across generations of methods, for example, by evaluating whether newer approaches genuinely improve plausibility or sparsity and at what computational cost.

Summary – In summary, CFTS represents a significant contribution to explainable AI for time series. It operationalizes a wide range of counterfactual explanation methods, provides a comprehensive evaluation suite, and supports both qualitative and quantitative analysis. By lowering barriers to reproducibility and benchmarking, the library helps bridge the gap between methodological research and real-world application. We recommend that future counterfactual methods for time series be evaluated within such shared frameworks to facilitate fair and transparent comparison, and we view CFTS as a foundation upon which the community can continue to build.

7 Future Research Directions

Our overview reveals a rapidly evolving field with many promising approaches, but also underscores open problems and opportunities. In this section, we outline several future research directions for counterfactual explanations in time series classification, based on gaps identified in the literature and emerging trends:

User-Centered and Domain-Specific Counterfactuals – A recurring theme is the need for counterfactuals that are meaningful and useful to end-users (be it doctors, engineers, or individuals subject to algorithmic decisions) [35]. Future work should incorporate human-in-the-loop design [36]: for example, interactive tools where users can specify which features or time segments are actionable for them, and the counterfactual generation adapts accordingly.
Domain-specific knowledge should be embedded into the counterfactual generation process, for instance, using clinical constraints in medical time series (do not suggest impossible vital signs). This might involve collaboration with domain experts to craft constraint rules or the use of simulators to verify the plausibility of counterfactual modifications [21]. Ultimately, conducting user studies to evaluate how well counterfactual explanations improve human decision-making and trust in each domain will guide refinements to these methods [35].

Causality and Recourse in Temporal Settings – Building on the initial application of causal modeling (like CounTS [43]), further research should integrate causal inference with time series counterfactuals. One direction is to use causal discovery on time series to learn which variables truly influence outcomes, and then restrict counterfactual changes to those causal drivers. Another is to address the fact that time series observations are not independent and identically distributed: a counterfactual change at time t might influence the model’s prediction not just at t but at future time steps in temporal models (for example, in forecasting models). There is a need for algorithms that provide counterfactual recourse over time, suggesting a series of actions over a timeline that will lead to a desired outcome [8]. This could be framed as a temporal decision-making problem or approached using techniques from time series causal analysis. Additionally, questions of fairness and bias in time series models could be tackled by counterfactual methods that reveal differential treatment: e.g., does a certain type of time series pattern systematically require larger changes for one class vs. another to achieve a favorable outcome? Addressing causality and fairness together might involve counterfactual analysis on generative temporal causal models.
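One simple way to express the user-specified actionability discussed above (which time segments may change, and restricting edits to chosen drivers) is a boolean mask over time steps. The following is a minimal sketch under strong assumptions: the function `masked_counterfactual`, the mean-threshold `toy_predict` classifier, and the upward-only search are hypothetical stand-ins chosen for brevity, and they do not reproduce any surveyed method or the CFTS API.

```python
def masked_counterfactual(series, actionable, predict, target,
                          step=0.1, max_iter=200):
    """Raise only the actionable time steps until `predict` returns `target`.

    Returns the modified series, or None if no valid counterfactual is found
    within `max_iter` steps. Non-actionable steps are never touched.
    """
    counterfactual = list(series)
    for _ in range(max_iter):
        if predict(counterfactual) == target:
            return counterfactual
        counterfactual = [
            x + step if can_change else x
            for x, can_change in zip(counterfactual, actionable)
        ]
    return None


def toy_predict(series):
    """Hypothetical stand-in classifier: class 1 if the mean exceeds 1.0."""
    return int(sum(series) / len(series) > 1.0)


# The first six steps represent the immutable past; only the last four steps
# are marked as actionable by the (hypothetical) user.
series = [0.5] * 10
actionable = [False] * 6 + [True] * 4

cf = masked_counterfactual(series, actionable, toy_predict, target=1)
print("counterfactual:", cf)
print("prediction:", toy_predict(cf))
```

A real system would replace the greedy upward search with one of the optimization, evolutionary, or generative strategies surveyed here; the mask itself carries over unchanged, since it only restricts where edits are allowed.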
Scalability and Real-Time Explanations – As time series datasets grow in length and sampling frequency (e.g., high-resolution sensor streams), existing counterfactual methods may not scale well. Research should focus on computational efficiency. This could mean developing approximate methods that sacrifice some objectives for speed, for example, using segment-level abstraction (dividing the series into larger chunks and only optimizing those) to reduce dimensionality. A reinforcement learning approach could be promising here. Once trained, it could provide near-instantaneous counterfactual generation, which is suitable for real-time systems (e.g., explaining an anomaly detection to an operator immediately so they can take action). Exploring other fast paradigms, like differentiable programming or heuristic search for time series, could yield methods that operate in near-linear time with series length. Moreover, parallel and distributed computing might be leveraged for evolutionary algorithms to handle large populations in less time. The field would benefit from benchmarking not just on small academic datasets, but on longer multivariate series (hundreds or thousands of time steps) to identify bottlenecks and drive optimizations.

Integration with Other Explainability Techniques – Counterfactual explanations need not exist in isolation. Combining them with feature attributions or example-based explanations could produce richer insights [35]. For example, one might use an attribution method to first highlight which time region influenced the prediction, then generate a counterfactual focused on that region. This two-step approach could be more efficient and yield explanations that are easier to communicate ("the model focused on this spike; here’s how altering that spike changes the prediction") [34].
Similarly, counterfactuals could be used to validate and refine concept-based explanations: if a model claims to detect a concept (like "oscillation pattern") for classification, one could generate a counterfactual that removes that pattern to see if the prediction changes. Visual analytics systems that allow users to toggle between different explanation modes (global feature importance, local counterfactual examples, etc.) would also enhance interpretability [35]. Some initial work in visual explainable AI for time series hints at interfaces where users can manipulate parts of a time series and see the model’s response, performing their own counterfactual experiments [35,36].

Robustness and Uncertainty in Counterfactuals – Another research avenue is quantifying the uncertainty or robustness of counterfactual explanations. Time series data often contain noise, and small fluctuations might flip a borderline prediction. It is therefore worth asking whether a counterfactual remains stable under slight noise. Some works have started testing robustness by adding noise to the original and counterfactual to see if the prediction still flips. More formal approaches could use Bayesian neural networks or ensemble models to gauge confidence in the counterfactual’s effect. If a counterfactual only works for one specific fine-tuned change and fails if the user’s action is slightly different, it may not be a reliable recommendation. Developing methods to produce robust counterfactuals (ones that tolerate a range of implementation errors or natural variability) will increase their practical utility. This might involve optimizing for not just a single instance but a neighborhood of instances around the original, or providing a confidence interval for how much change is needed.
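The noise-based robustness test mentioned above can be sketched as a Monte Carlo estimate: perturb the counterfactual many times and record how often the target class is still predicted. The function name `flip_rate_under_noise`, the Gaussian noise model, the noise scale, and the mean-threshold `toy_predict` classifier are assumptions made for illustration only, not an implementation from the cited works or from CFTS.

```python
import random


def flip_rate_under_noise(counterfactual, predict, target,
                          sigma=0.05, trials=200, seed=0):
    """Fraction of noisy copies of the counterfactual still classified as target."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        noisy = [x + rng.gauss(0.0, sigma) for x in counterfactual]
        if predict(noisy) == target:
            hits += 1
    return hits / trials


def toy_predict(series):
    """Hypothetical stand-in classifier: class 1 if the mean exceeds 1.0."""
    return int(sum(series) / len(series) > 1.0)


# Two hypothetical counterfactuals for target class 1: one barely across the
# decision boundary, one with a comfortable margin.
borderline_cf = [1.001] * 10
robust_cf = [1.5] * 10

borderline_rate = flip_rate_under_noise(borderline_cf, toy_predict, target=1)
robust_rate = flip_rate_under_noise(robust_cf, toy_predict, target=1)
print("borderline flip rate:", borderline_rate)
print("robust flip rate:    ", robust_rate)
```

Both candidates are valid for the unperturbed input, yet only the second keeps its class under noise, which is the kind of distinction a stability metric is meant to surface. The margin that buys this robustness comes at the cost of proximity.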
Applications and Case Studies – We advocate for increased application-driven research that demonstrates counterfactual explainability in real-world settings, consistent with the call by Keane et al. [21] to evaluate explanations in use rather than in isolation. For instance, in healthcare, counterfactual explanations could support personalized treatment planning by illustrating how minimal changes in patient trajectories affect model predictions [42]; in finance, they may be used to analyze alternative investment or risk management scenarios; and in environmental science, counterfactual weather or climate trajectories could help interpret complex forecasting models. Importantly, deploying counterfactual explanations in applied domains is likely to surface domain-specific challenges, including regulatory constraints, ethical considerations, and the feasibility of suggested interventions, which are often overlooked in purely methodological work. Moreover, well-documented case studies are essential to assess whether the theoretical advantages of counterfactual explanations translate into practical utility, and whether domain experts and decision-makers find them understandable, trustworthy, and actionable. Such empirical evidence will play a critical role in enabling the broader adoption of counterfactual explanations in real-world decision-making systems.

In summary, the future direction of research on time series counterfactual explanations should strive to make them more user-aligned, causally sound, scalable, and widely applicable. While existing frameworks provide a robust foundation, addressing these future research directions is essential for transitioning from purely algorithmic optimizations to counterfactual explanations that facilitate human-centric interpretation of complex temporal dynamics.
8 Conclusion

Counterfactual explanations for time series classification have grown from an emergent idea to a large field of study in the last few years. We reviewed state-of-the-art methods, ranging from nearest-neighbor case-based reasoning to sophisticated multi-objective evolutionary searches and emerging reinforcement learning approaches. We discussed how these methods tackle the core problem of "how can we change this time series to alter the model’s decision?" through diverse strategies, whether by swapping a key subsequence with one from another class or tweaking latent representations with gradient guidance. We also highlighted the distinctive challenges time series data pose: ensuring temporal coherence, maintaining plausibility, and providing actionable and sparse explanations, all while balancing multiple objectives.

Our comparative analysis showed that no single method dominates in all aspects; each comes with trade-offs. This underscores the importance of continued research and the value of integrated frameworks like the CFTS library, which we featured as a unifying platform for implementation and evaluation. By standardizing algorithms and metrics, such tools allow for clearer identification of strengths and weaknesses and for driving progress in a more organized manner.

Looking ahead, it is clear that the journey toward human-centered, explainable AI for time series is still underway. We envision future counterfactual methods that are even more aligned with user needs, perhaps through incorporating causal knowledge and allowing interactive exploration of “what-if” scenarios over time. The potential impact is significant: from helping physicians understand model-assisted diagnoses by visualizing how a patient’s vitals can affect the outcome, to enabling engineers to preemptively identify how slight changes in machine sensor patterns can prevent failures.
In summary, counterfactual explanations offer a compelling narrative form of explanation. They do not just say “feature A was important”, but rather “if X had been different, Y would have resulted”. Applying this to time series data brings unique difficulties, but also unique opportunities to guide temporal decision-making. We hope this overview provides a comprehensive foundation for researchers entering this area and sparks new ideas that will address current limitations. By combining technical innovation with a focus on real-world usability, the field of time series counterfactual explainability can mature into an indispensable component of trustworthy AI systems for temporal data.

Acknowledgments. We thank the creators of the UCR/UEA Time Series Archive for enabling rigorous evaluation of these methods. We are also grateful to the researchers whose work we reviewed for their contributions to interpretable machine learning.

References

1. Ates, E., Aksar, B., Leung, V.J., Coskun, A.K.: Counterfactual explanations for multivariate time series. In: International Conference on Applied Artificial Intelligence (2021)
2. Bagnall, A., Dau, H.A., Lines, J., Flynn, M., Large, J., Bostrom, A., Southam, P., Keogh, E.: The UEA multivariate time series classification archive, 2018. arXiv preprint arXiv:1811.00075 (2018)
3. Bahri, O., Boubrahimi, S.F., Hamdi, S.M.: Shapelet-based counterfactual explanations for multivariate time series. arXiv preprint arXiv:2208.10462 (2022)
4. Bahri, O., Li, P., Boubrahimi, S.F., Hamdi, S.M.: Temporal rule-based counterfactual explanations for multivariate time series. In: IEEE International Conference on Machine Learning and Applications (ICMLA) (2022)
5. Bahri, O., Li, P., Filali Boubrahimi, S., Hamdi, S.M.: Discord-based counterfactual explanations for time series classification. Data Mining and Knowledge Discovery (2024)
6. Balasubramanian, R., Sharpe, S., Barr, B., Wittenbach, J., Bruss, C.B.: Latent-CF: a simple baseline for reverse counterfactual explanations. arXiv preprint arXiv:2012.09301 (2020)
7. Bhattacharya, A., Verbert, K.: "How Good Is Your Explanation?": Towards a Standardised Evaluation Approach for Diverse XAI Methods on Multiple Dimensions of Explainability. In: ACM Conference on User Modeling, Adaptation and Personalization (2024)
8. Chukwu, E.C., Schouten, R.M., Tabak, M., Pechenizkiy, M.: Counterfactual Explanations for Time Series Should be Human-Centered and Temporally Coherent in Interventions. arXiv preprint arXiv:2512.14559 (2025)
9. Dandl, S., Molnar, C., Binder, M., Bischl, B.: Multi-objective counterfactual explanations. In: International Conference on Parallel Problem Solving from Nature (2020)
10. Dau, H.A., Bagnall, A., Kamgar, K., Yeh, C.C.M., Zhu, Y., Gharghabi, S., Ratanamahatana, C.A., Keogh, E.: The UCR time series archive. IEEE/CAA Journal of Automatica Sinica (2019)
11. Del Ser, J., Barredo-Arrieta, A., Díaz-Rodríguez, N., Herrera, F., Saranti, A., Holzinger, A.: On generating trustworthy counterfactual explanations. Information Sciences (2024)
12. Delaney, E., Greene, D., Keane, M.T.: Instance-based counterfactual explanations for time series classification. In: International Conference on Case-Based Reasoning (2021)
13. Delaney, E., Pakrashi, A., Greene, D., Keane, M.T.: Counterfactual explanations for misclassified images: How human and machine explanations differ. Artificial Intelligence (2023)
14. Dhurandhar, A., Chen, P.Y., Luss, R., Tu, C.C., Ting, P., Shanmugam, K., Das, P.: Explanations based on the missing: Towards contrastive explanations with pertinent negatives. Advances in Neural Information Processing Systems (NeurIPS) (2018)
15. Guidotti, R.: Counterfactual explanations and how to find them: literature review and benchmarking. Data Mining and Knowledge Discovery (2022)
16. Guidotti, R., Monreale, A., Spinnato, F., Pedreschi, D., Giannotti, F.: Explaining any time series classifier. In: International Conference on Cognitive Machine Intelligence (2020)
17. Höllig, J., Kulbach, C., Thoma, S.: TSEvo: Evolutionary counterfactual explanations for time series classification. In: IEEE International Conference on Machine Learning and Applications (ICMLA) (2022)
18. Höllig, J., Thoma, S., Grimm, F.: XTSC-bench: quantitative benchmarking for explainers on time series classification. In: IEEE International Conference on Machine Learning and Applications (ICMLA). pp. 1126–1131. IEEE (2023)
19. Huang, Q., Chen, W., Bäck, T., van Stein, N.: Shapelet-based model-agnostic counterfactual local explanations for time series classification. arXiv preprint arXiv:2402.01343 (2024)
20. Karlsson, I., Rebane, J., Papapetrou, P., Gionis, A.: Locally and globally explainable time series tweaking. Knowledge and Information Systems (2020)
21. Keane, M.T., Kenny, E.M., Delaney, E., Smyth, B.: If only we had better counterfactual explanations: Five key deficits to rectify in the evaluation of counterfactual XAI techniques. In: International Joint Conference on Artificial Intelligence (2021)
22. Keane, M.T., Smyth, B.: Good counterfactuals and where to find them: A case-based technique for generating counterfactuals for explainable AI (XAI). In: International Conference on Case-Based Reasoning (2020)
23. Labaien, J., Zugasti, E., Carlos, X.D.: Contrastive Explanations for a Deep Learning Model on Time-Series Data. In: International Conference on Big Data Analytics and Knowledge Discovery (2020)
24. Lang, J., Giese, M.A., Ilg, W., Otte, S.: Generating sparse counterfactual explanations for multivariate time series. In: International Conference on Artificial Neural Networks (ICANN). Springer (2023)
25. Li, P., Bahri, O., Boubrahimi, S.F., Hamdi, S.M.: SG-CF: Shapelet-Guided Counterfactual Explanation For Time Series Classification. In: IEEE International Conference on Big Data (Big Data) (2022)
26. Li, P., Bahri, O., Boubrahimi, S.F., Hamdi, S.M.: Attention-based counterfactual explanation for multivariate time series. In: International Conference on Big Data Analytics and Knowledge Discovery (2023)
27. Li, P., Bahri, O., Boubrahimi, S.F., Hamdi, S.M.: CELS: Counterfactual explanations for time series data via learned saliency maps. In: IEEE International Conference on Big Data (Big Data). IEEE (2023)
28. Li, P., Bahri, O., Boubrahimi, S.F., Hamdi, S.M.: M-CELS: Counterfactual explanation for multivariate time series data guided by learned saliency maps. In: IEEE International Conference on Machine Learning and Applications (ICMLA) (2024)
29. Li, P., Boubrahimi, S.F., Hamdi, S.M.: Motif-guided time series counterfactual explanations. In: International Conference on Pattern Recognition (ICPR) (2022)
30. Pawelczyk, M., Bielawski, S., Van den Heuvel, J., Richter, T., Kasneci, G.: CARLA: A Python Library to Benchmark Algorithmic Recourse and Counterfactual Explanation Algorithms. In: Conference on Neural Information Processing Systems Datasets and Benchmarks Track (2021)
31. Refoyo, M., Luengo, D.: Multi-SpaCE: Multi-Objective Subsequence-based Sparse Counterfactual Explanations for Multivariate Time Series Classification. arXiv preprint arXiv:2501.04009 (2024)
32. Refoyo, M., Luengo, D.: Sub-SpaCE: Subsequence-based sparse counterfactual explanations for time series classification problems. In: World Conference on Explainable Artificial Intelligence (2024)
33. Rudin, C.: Stop explaining black box machine learning models for high stakes decisions and use interpretable models instead. Nature Machine Intelligence (2019)
34. Schlegel, U., Keim, D.A.: Time Series Model Attribution Visualizations as Explanations. In: Workshop on TRust and EXpertise in Visual Analytics (TREX) (2021)
35. Schlegel, U., Oelke, D., Keim, D.A., El-Assady, M.: Visual Explanations with Attributions and Counterfactuals on Time Series Classification. arXiv preprint arXiv:2307.08494 (2023)
36. Schlegel, U., Rauscher, J., Keim, D.A.: Interactive Counterfactual Generation for Univariate Time Series. In: Workshop on eXplainable Knowledge Discovery in Data Mining (XKDD) (2024)
37. Sun, X., Aoki, R., Wilson, K.H.: Counterfactual explanations for multivariate time-series without training datasets. arXiv preprint arXiv:2405.18563 (2024)
38. Theissler, A., Spinnato, F., Schlegel, U., Guidotti, R.: Explainable AI for time series classification: a review, taxonomy and research directions. IEEE Access (2022)
39. Van Looveren, A., Klaise, J., Vacanti, G., Cobb, O.: Conditional generative models for counterfactual explanations. arXiv preprint arXiv:2101.10123 (2021)
40. Wachter, S., Mittelstadt, B., Russell, C.: Counterfactual explanations without opening the black box: Automated decisions and the GDPR. Harv. JL & Tech. (2017)
41. Wang, Z., Samsten, I., Miliou, I., Mochaourab, R., Papapetrou, P.: Glacier: guided locally constrained counterfactual explanations for time series classification. Machine Learning (2024)
42. Wang, Z., Samsten, I., Papapetrou, P.: Counterfactual explanations for survival prediction of cardiovascular ICU patients. In: International Conference on Artificial Intelligence in Medicine (2021)
43. Yan, J., Wang, H.: Self-interpretable time series prediction with counterfactual explanations. In: International Conference on Machine Learning (ICML) (2023)

| Method | Year | Data | Category | Model access | What it changes | Typical strengths | Typical limitations |
|---|---|---|---|---|---|---|---|
| Wachter et al. | 2017 | U/M | Optimization-based | Gradients / scores | Pointwise input values | Low-distance counterfactuals; simple and efficient | Diffuse temporal changes; low interpretability |
| CoMTE | 2021 | M | Optimization-based | Black-box | Whole channels or channel segments | Identifies relevant variables in multivariate data | Coarse temporal granularity |
| TS-Tweaking | 2020 | U | Optimization-based | Black-box | Shapelet-aligned segments and points | Actionable tweaks with local and global transformation rules | Restricted to random shapelet forests; NP-hard search requires heuristics |
| TSCF | 2024 | U/M | Optimization-based | Black-box / gradients | Input values with temporal constraints | Flexible framework; customizable objectives | Depends on hand-crafted constraints |
| MOC | 2020 | U/M | Evolutionary | Black-box | Flexible (points or segments) | Multi-objective trade-offs; diverse solutions | High computational cost |
| TSEvo | 2022 | U/M | Evolutionary | Black-box | Points and/or segments | Sparse, diverse counterfactuals | Slow for long time series |
| Sub-SpaCE | 2023 | U | Evolutionary | Black-box | Few contiguous subsequences | High temporal compactness | Restricted solution space |
| Multi-SpaCE | 2024 | M | Evolutionary | Black-box | Multivariate subsequences | Sparse and interpretable multivariate CFs | Computationally expensive |
| Native Guide (NG-CF) | 2021 | U | Instance-based | Black-box | Local subsequence replacement | Highly realistic; data-grounded | Fails without suitable neighbors |
| CELS | 2023 | U | Instance-based | Black-box | Salient time points | Targeted edits via learned saliency | Sensitive to saliency noise |
| M-CELS | 2024 | M | Instance-based | Black-box | Salient multivariate regions | Handles multivariate importance | Requires stable saliency learning |
| AB-CF | 2023 | M | Instance-based | Model-internal (attention) | Attention-weighted regions | Uses model’s internal reasoning | Model-dependent |
| Latent-CF | 2020 | U/M | Latent space | Encoder–decoder | Latent representations | Manifold-aware counterfactuals | Latent variables lack semantics |
| CGM | 2021 | U/M | Latent space | Generative model | Generated latent samples | High realism and diversity | Requires training generative models |
| LASTS | 2020 | U/M | Latent space | Surrogate model | Latent surrogate features | Model-agnostic explanations | Approximation error |
| GLACIER | 2024 | U/M | Latent space | Encoder–decoder | Locally constrained latent edits | Realistic, smooth counterfactuals | Additional optimization overhead |
| CounTS | 2023 | U/M | Latent space | Model-based | Mutable latent factors | Feasibility-aware explanations | Strong modeling assumptions |
| SG-CF | 2022 | U/M | Segment-based | Black-box | Shapelet subsequences | Pattern-level interpretability | Shapelet mining overhead |
| SETS | 2022 | U/M | Segment-based | Black-box | Shapelet-based segments | Efficient and interpretable | Dependent on shapelet quality |
| DisCOX | 2024 | U/M | Segment-based | Black-box | Anomalous segments | Clear explanation for anomalies | Limited to discord-driven decisions |
| CFWoT | 2024 | M | Segment-based | Black-box | Subsequences | Works without training data | Limited optimization control |
| TS-CEM | 2020 | U/M | Segment-based | Black-box / gradients | Discriminative temporal segments (PPs/PNs) | CEM-based contrastive, segment-level explanations | Assumes CEM-style optimization; less suited to highly diffuse decisions |
| MG-CF | 2022 | U/M | Hybrid | Black-box | Motif subsequences | Combines realism and sparsity | Motif extraction required |
| SPARCE | 2022 | M | Hybrid | Black-box | Structured sparse features | Actionable recourse focus | Complex constraint design |
| TeRCE | 2022 | M | Hybrid | Rule-based | Symbolic temporal rules | Human-readable explanations | Lower fidelity to complex models |
| Time-CF | 2024 | U/M | Hybrid | Generator + classifier | Generated sequences / segments | High realism via GANs | Training instability |

Table 3. Comprehensive overview of counterfactual explanation methods for time series classification and beyond. U/M denotes univariate (U) or multivariate (M) data. See the other table for references.
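
To make the "local subsequence replacement" entry of the instance-based category more concrete, the following is a minimal sketch in the spirit of that idea, not the Native Guide algorithm as published and not the CFTS library API. It assumes black-box access to a classifier, copies the shortest window from the nearest training series of the target class that flips the prediction, and then reports validity, proximity, and sparsity. All function names, the nearest-centroid toy classifier, and the toy data are hypothetical choices made for illustration only.

```python
import numpy as np


def make_nearest_centroid(X_train, y_train):
    """Toy black-box classifier (an assumption for this sketch): nearest class centroid."""
    labels = np.unique(y_train)
    centroids = np.stack([X_train[y_train == c].mean(axis=0) for c in labels])

    def predict(series):
        # Return the label whose centroid is closest in Euclidean distance.
        return labels[np.argmin(np.linalg.norm(centroids - series, axis=1))]

    return predict


def nearest_unlike_neighbor(x, X_train, y_train, target_label):
    """Return the training series of class `target_label` closest to x."""
    candidates = X_train[y_train == target_label]
    distances = np.linalg.norm(candidates - x, axis=1)
    return candidates[np.argmin(distances)]


def subsequence_swap_counterfactual(x, predict, X_train, y_train, target_label):
    """Copy the shortest window from the nearest unlike neighbor that flips the label.

    Returns (counterfactual, start, length), or (None, None, None) if no window works.
    """
    donor = nearest_unlike_neighbor(x, X_train, y_train, target_label)
    n = len(x)
    for length in range(1, n + 1):              # shorter edits first (sparsity)
        for start in range(0, n - length + 1):  # slide the window over time
            candidate = x.copy()
            candidate[start:start + length] = donor[start:start + length]
            if predict(candidate) == target_label:
                return candidate, start, length
    return None, None, None


# Hypothetical toy data: class 0 is flat, class 1 has a bump at time steps 8..11.
n = 20
bump = np.zeros(n)
bump[8:12] = 3.0
X_train = np.stack([np.zeros(n), np.zeros(n) + 0.1, bump, bump + 0.1])
y_train = np.array([0, 0, 1, 1])

predict = make_nearest_centroid(X_train, y_train)
x = np.zeros(n)  # query series, classified as class 0

cf, start, length = subsequence_swap_counterfactual(
    x, predict, X_train, y_train, target_label=1
)

validity = bool(predict(cf) == 1)           # does the prediction flip?
proximity = float(np.linalg.norm(cf - x))   # L2 distance to the original
sparsity = float(np.mean(cf != x))          # fraction of changed time steps
print(start, length, validity, round(proximity, 3), sparsity)
```

The exhaustive window search costs O(n²) classifier calls, which is acceptable only for short series; the methods in Table 3 replace it with saliency guidance, shapelets, or evolutionary search. The sketch also inherits the limitation listed for instance-based methods: if no suitable neighbor of the target class exists, no counterfactual is returned.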
