Exploring Student Perception on Gen AI Adoption in Higher Education: A Descriptive Study

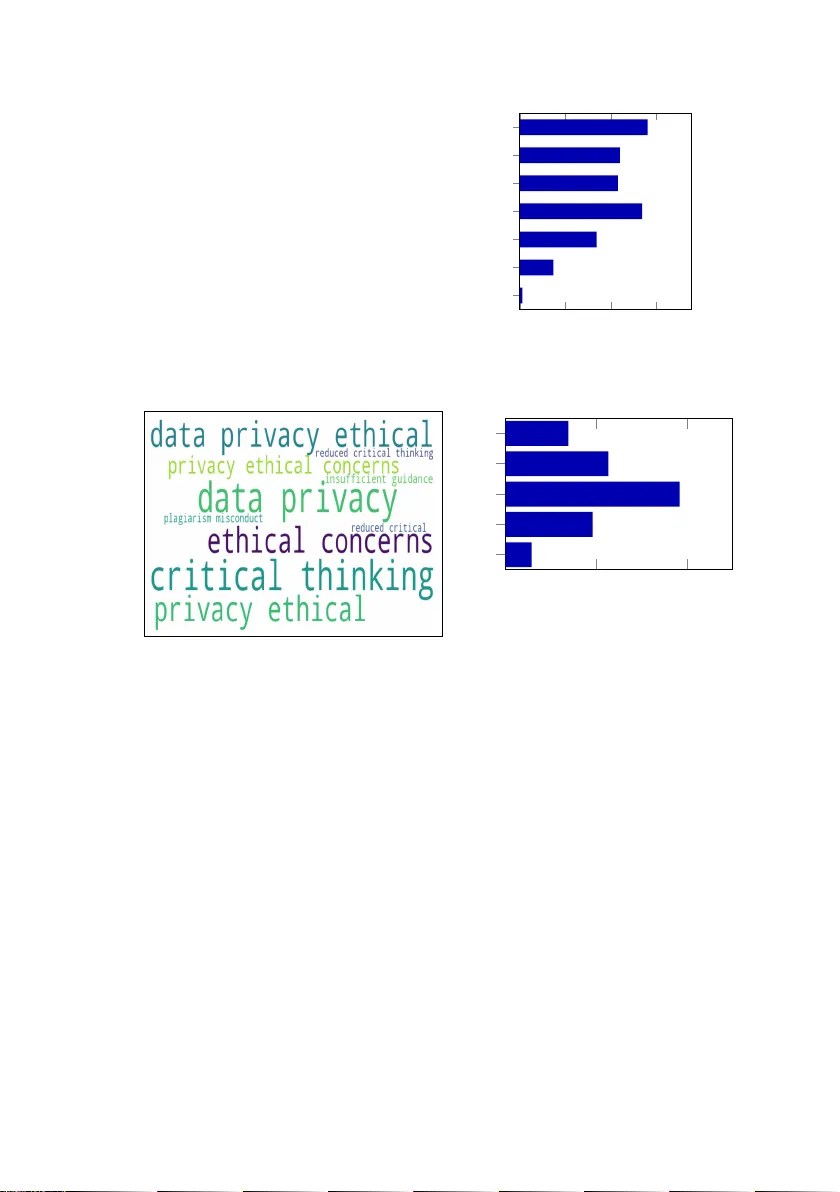

The rapid proliferation of Generative Artificial Intelligence (GenAI) is reshaping pedagogical practices and assessment models in higher education. While institutional and educator perspectives on GenAI integration are increasingly documented, the st…

Authors: Harpreet Singh, Jaspreet Singh, Satwant Singh