Let the Agent Steer: Closed-Loop Ranking Optimization via Influence Exchange

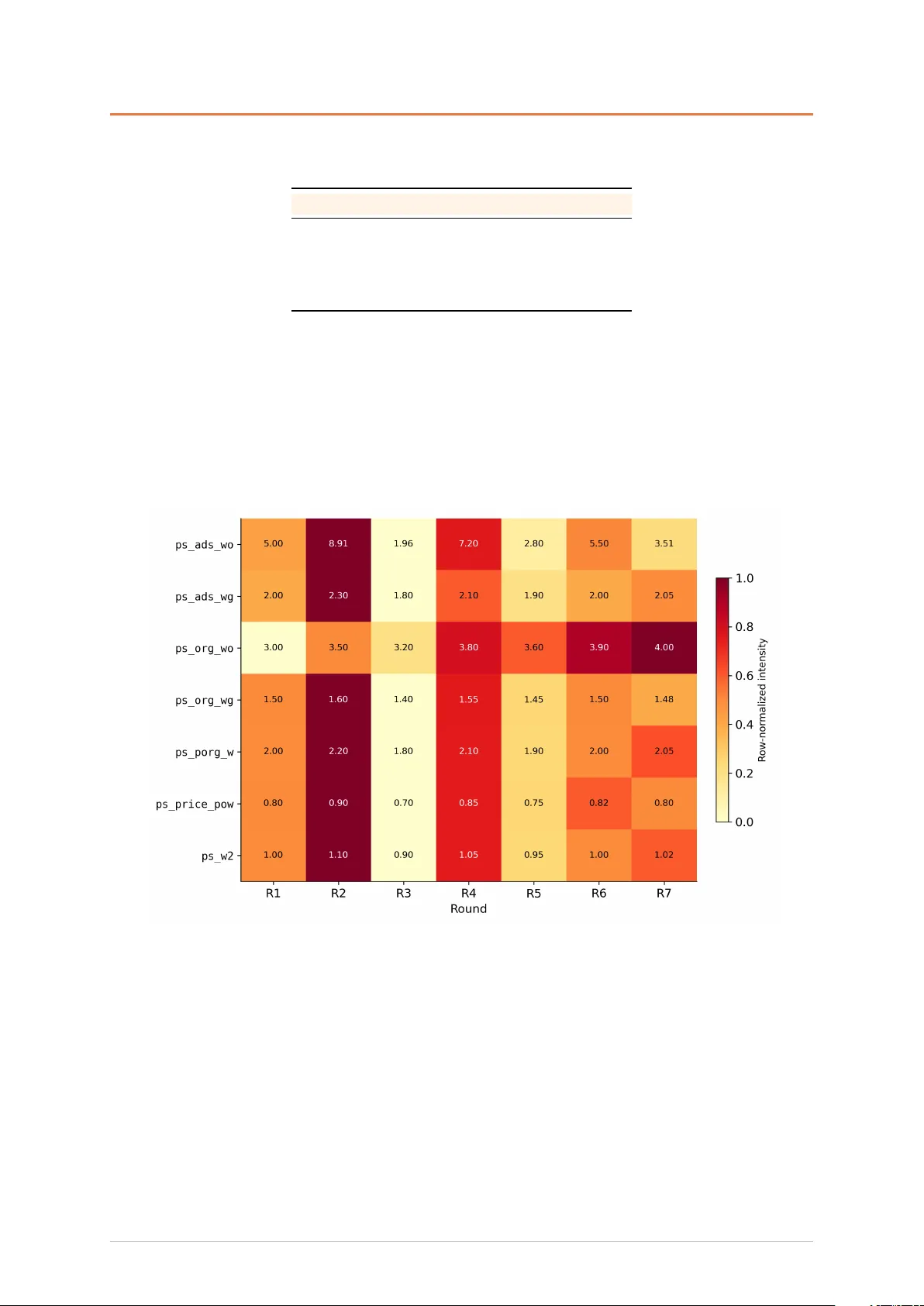

Recommendation ranking is fundamentally an influence allocation problem: a sorting formula distributes ranking influence among competing factors, and the business outcome depends on finding the optimal "exchange rates" among them. However, offline pr…

Authors: Yin Cheng, Liao Zhou, Xiyu Liang