CrossHGL: A Text-Free Foundation Model for Cross-Domain Heterogeneous Graph Learning

Heterogeneous graph representation learning (HGRL) is essential for modeling complex systems with diverse node and edge types. However, most existing methods are limited to closed-world settings with shared schemas and feature spaces, hindering cross…

Authors: Xuanze Chen, Jiajun Zhou, Yadong Li

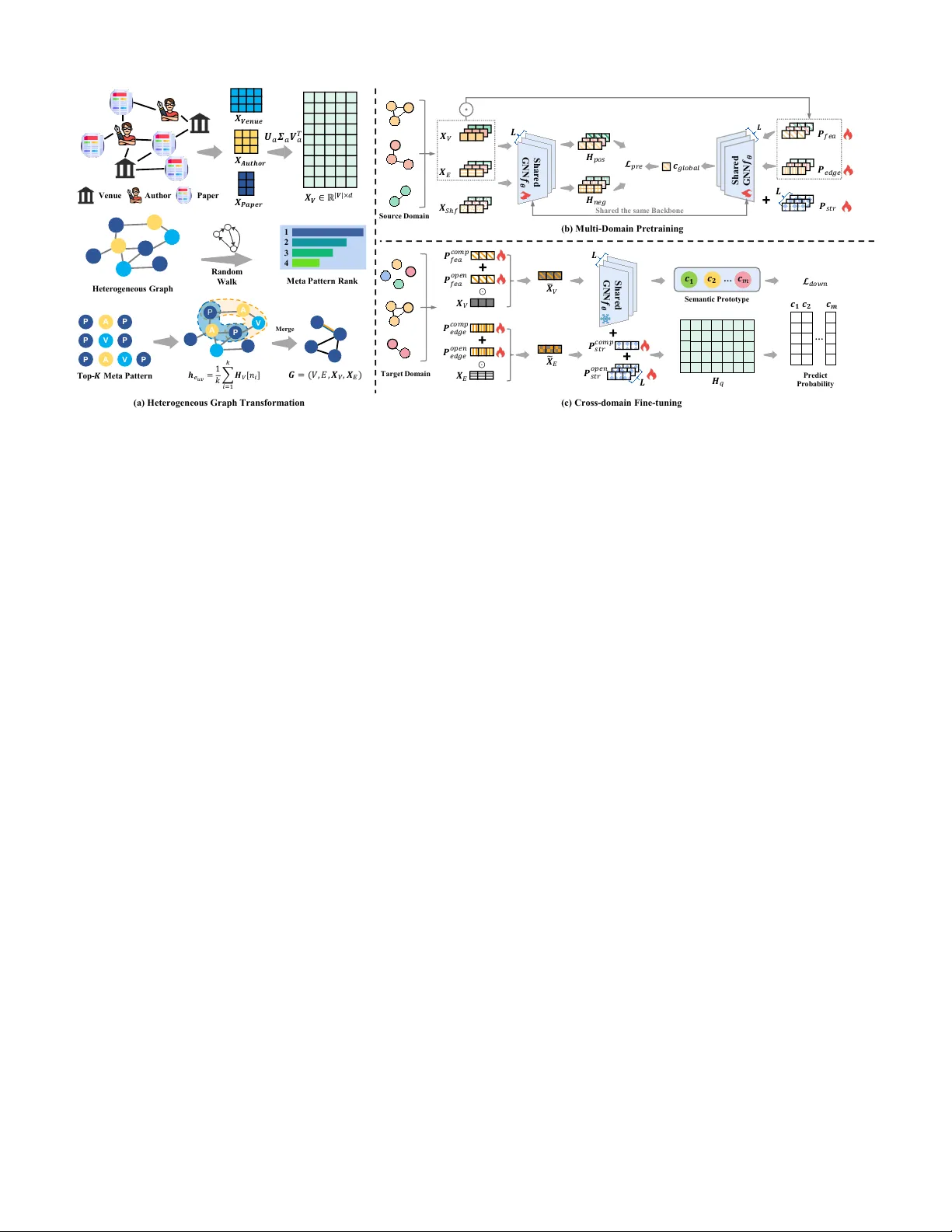

JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 1 CrossHGL: A T e xt-Free F oundation Model for Cross-Domain Heterogeneous Graph Learning Xuanze Chen, Jiajun Zhou, Y adong Li, Shanqing Y u, Qi Xuan, Senior Member , IEEE Abstract —Heterogeneous graph representation learning has attracted incr easing attention, as heterogeneous graphs can model complex systems with div erse node and edge types. Despite recent progr ess, most existing methods ha ve been dev eloped in closed- world settings, in which the training and testing data share similar graph schemas and feature spaces, thereby limiting their cross-domain generalization. Recent graph foundation models hav e impro ved transferability; howe ver , existing appr oaches either focus on homogeneous graphs, r ely on domain-specific heterogeneous schemas, or depend on rich textual attributes for semantic alignment. Consequently , cross-d omain heterogeneous graph learning in text-free and few-shot settings remains insuffi- ciently explor ed. T o address this problem, we propose CrossHGL , a foundation framework that explicitly preser ves and transfers multi-relational structural semantics without relying on external textual supervision. Specifically , a semantic-pr eserving graph transformation strategy is designed to homogenize heterogeneous graphs while encoding heterogeneous interaction semantics into edge features. Based on the transformed graph, a prompt- aware multi-domain pre-training framework with a T ri-Prompt mechanism captur es transferable knowledge from featur e, edge, and structure perspecti ves through self-supervised graph con- trastive learning. For target-domain adaptation, we further develop a parameter -efficient fine-tuning strategy that freezes the pre-trained backbone and performs few-shot classification via prompt composition and prototypical learning. Experiments on downstream node-level and graph-le vel tasks show that CrossHGL consistently outperforms representati ve pr e-training & fine-tuning baselines, yielding a verage r elative improvements of 25.1% and 7.6% in Micr o-F1 f or node classification and graph classification, respectiv ely , while remaining competitive under more challenging feature-degenerated settings. Index T erms —Graph Neural Networks, Foundation Model, Heterogeneous, F ew-shot, Cross-domain I . I N T R O D U C T I O N Heterogeneous graphs, characterized by div erse types of nodes and edges, ha ve become an important data structure for modeling complex interactiv e systems in the real world [1]– [4]. Over the past few years, Heterogeneous Graph Neural This work was supported in part by National Natural Science Foundation of China (No. 62503423), in part by the National K ey Research and De velopment Program of China (No. 2025YF A1510900), in part by the Ke y Research and Dev elopment Program of Zhejiang Province (No. 2026C02A1233), in part by the Y angtze River Delta Science and T echnology Innovation Community Joint Research Project (No. 2026ZY03003, No. 2025CSJGG01000). (Correspond- ing authors: Jiajun Zhou.) Xuanze Chen and Y adong Li are with the Institute of Cyberspace Security , Zhejiang University of T echnology , Hangzhou 310023, China, and also with the Binjiang Cyberspace Security Institute of ZJUT , Hangzhou, 310056, China (e-mail: chenxuanze@zjut.edu.cn). Jiajun Zhou, Shanqing Y u and Qi Xuan are with the Institute of Cyberspace Security , Zhejiang Univ ersity of T echnology , Hangzhou 310023, China, with the Binjiang Cyberspace Security Institute of ZJUT , Hangzhou, 310056, China, and with the Soo var T echnologies Co., Ltd., Hangzhou 310056, China (e-mail: jjzhou@zjut.edu.cn). Schem a Mi sm at ch F eat ure Mi sm at ch R el at i on Mi sm at ch T r a n s f e r C h a l l e n g e s So urce Do m a ins Ve n u e P ap e r Au t h o r M o vie Ac t o r Dir e c t o r … Fig. 1. Challenges in cross-domain heterogeneous pre-training. Networks (HGNNs) ha ve achie ved strong performance in learning e xpressive node and graph representations [5], [6]. Despite their effecti veness, conv entional HGNNs inherently operate under a closed-world assumption, meaning they re- quire the training and testing data to share strictly identical graph schemas and feature spaces, which substantially limits their generalization to unseen domains [7]–[9]. T o alle viate the hea vy reliance on massi ve labeled data, the pre-training and fine-tuning paradigm has recently stimulated the dev elopment of graph foundation models [10]–[12]. Ex- isting studies hav e advanced along se veral main directions, including foundation models for homogeneous graphs [13], [14], foundation models for heterogeneous graphs within spe- cific domains [15], [16], and Large Language Model (LLM) enhanced heterogeneous graph foundation models [17]–[19]. Howe ver , extending foundation models to cross-domain het- erogeneous graphs without external text assistance remains challenging. A major difficulty lies in the severe heterogeneity shift across domains, where graph schemas, interaction pat- terns, and feature dimensions may differ substantially [20]. Many existing heterogeneous foundation models are still tightly coupled with specific meta-paths or predefined relation types [21], which limits their applicability in more general cross-domain settings. As illustrated in Fig. 1, when a model is pre-trained across multiple source heterogeneous graphs, schema mismatch, feature mismatch, and relation mismatch JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 2 naturally arise across domains, making direct cross-domain transfer considerably more dif ficult. Recently , se veral studies ha ve attempted to unify cross- domain heterogeneous graphs by inte grating LLMs [17], [18]. By aligning ra w data into a shared textual semantic space, these LLM-enhanced models can alleviate structural mismatches across domains. Ne vertheless, their effecti veness fundamentally depends on the av ailability of rich textual at- tributes. In many safety-critical and priv acy-sensiti ve domains, such as financial transaction monitoring [22], network anomaly detection [23] and molecular property prediction [24] graph features are purely numerical, cate gorical, or anonymized, resulting in a text-free setting. In such scenarios, LLM- based semantic alignment is no longer applicable. Moreover , although te xt-free cross-domain foundation models have been explored for homogeneous graphs [25], [26], directly applying them to heterogeneous graphs usually only aligns feature spaces and simplified neighborhood structures, while failing to adequately preserv e the rich multi-relational semantics embedded in heterogeneous interactions. Consequently , robust knowledge transfer for text-free heterogeneous graphs under extreme fe w-shot constraints remains under-e xplored. T o address these limitations, a novel and adaptable frame- work, denoted as Cr ossHGL , is proposed for text-free, fe w- shot, cross-domain heterogeneous graph learning. Rather than relying on external textual semantics or indiscriminately flat- tening network topologies, the proposed framework explicitly decouples and transfers the inherent structural and topological semantics within graphs. The overall architecture consists of three phases. First, a semantic-preserving graph transfor- mation strategy is designed to reduce heterogeneity shifts while retaining informative interaction patterns. This phase lev erages SVD-based feature alignment and automated meta- pattern mining to homogenize heterogeneous topologies while compressing multi-relational context into semantics-enriched edges. Second, a prompt-aware multi-domain pre-training mechanism is introduced. By integrating a T ri-Prompt system, including feature, edge, and structure prompts, with a shared Graph Neural Network (GNN) backbone, the frame work captures transferable representations through self-supervised graph contrastive learning. Finally , a parameter-ef ficient fine- tuning strategy is deployed for the target domain. By freezing the pre-trained backbone, the framework employs an attention- based prompt composition to adapt prior knowledge to no vel domains, enabling fe w-shot classification via non-parametric prototypical networks. The primary contributions of this work are consolidated as follo ws: • It proposes Cr ossHGL , a foundation framew ork for text- free, few-shot, and cross-domain learning on heterogeneous graphs, av oiding the reliance on LLMs and reducing the semantic loss caused by direct topology flattening. • A semantic-preserving graph transformation strategy is in- troduced, which couples SVD-based feature alignment with automated meta-pattern mining to unify disparate graph schemas into semantics-enriched homogeneous topologies. • A parameter -efficient Tri-Prompt architecture is devised to decouple multi-dimensional graph semantics during pre- training, alongside an attention-based prompt composition mechanism that enables few-shot adaptation on a frozen GNN backbone. Extensiv e experiments on diverse real- world heterogeneous graph datasets demonstrate the effec- tiv eness and transferability of the proposed framework. I I . R E L AT E D W O R K A. F oundation Models for Homog eneous Graphs Recent years hav e witnessed the rapid de velopment of graph foundation models on homogeneous graphs, where pre-training and prompt-based adaptation hav e become two representativ e paradigms. Early studies such as GPPT [10] and GraphPrompt [11] show that graph prompts can effec- tiv ely bridge pre-training and do wnstream adaptation, thereby improving transferability under limited supervision. Building on this line, more recent works, such as GCOPE [25] and SAMGPT [26], further explore cross-graph or cross-domain generalization, demonstrating the promise of foundation-style learning be yond a single graph domain. Nev ertheless, these methods are primarily designed for homogeneous graphs, where node and edge semantics are relatively unified. As a result, they cannot be directly extended to heterogeneous graphs, whose semantic complexity arises from div erse node types, relation types, and interaction patterns. B. F oundation Models for Heter og eneous Graphs T o better capture heterogeneous semantics, recent studies hav e be gun to in vestigate foundation models tailored for het- erogeneous graphs. Representative methods such as HePa [15] and HetGPT [27] introduce heterogeneous-a ware pre-training strategies, relation-sensitive objectives, or schema-aware mod- eling mechanisms to learn transferable representations from complex multi-type graphs. In addition, HGPrompt [16] fur- ther shows that prompt-based adaptation can also be extended to heterogeneous settings, enabling more flexible do wnstream transfer . Ho wev er , most of these approaches are still dev eloped under fixed-schema or in-domain settings, where the relation types and semantic structures remain largely stable across training and testing. Consequently , although they are more expressi ve than homogeneous graph foundation models, they are not well suited for cross-domain heterogeneous transfer, where graph schemas, feature spaces, and structural semantics may differ substantially across domains. C. LLM-enhanced Heter ogeneous Graph F oundation Models Another emerging direction incorporates large language models into heterogeneous graph learning. Methods such as HiGPT [17] and LMCH [18] leverage textual descriptions and external semantic kno wledge to align heterogeneous graphs from different domains into a shared semantic space. This strategy provides a promising way to mitigate schema mis- match and improve cross-domain transferability . Nevertheless, the effecti veness of these models fundamentally depends on the av ailability of rich textual attributes. In many practical applications, such as financial transaction graphs, network traffic graphs, and sensor interaction graphs, node and edge attributes are numerical, cate gorical, or anon ymized rather JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 3 than textual. In such text-free settings, LLM-based semantic alignment becomes inapplicable. Therefore, existing LLM- enhanced heterogeneous graph foundation models still leave a critical gap in text-free cross-domain heterogeneous learning. Existing studies hav e advanced graph foundation models along three major directions, namely homogeneous graph foundation models, heterogeneous graph foundation models, and LLM-enhanced heterogeneous graph foundation models. Howe ver , homogeneous approaches fail to preserve heteroge- neous semantics, heterogeneous approaches are often limited to fixed-schema settings, and LLM-based approaches rely heavily on textual information. Therefore, a unified frame- work for text-free, few-shot, cross-domain learning on het- erogeneous graphs remains unaddressed. This gap motiv ates Cr ossHGL , which preserves and transfers heterogeneous struc- tural semantics without relying on external textual supervision. I I I . P R E L I M I N A R I E S A. Heter ogeneous and Homogeneous Gr aphs Heterogeneous graphs possess multiple types of nodes and edges, enabling them to model complex interacti ve systems in the real world, which distinguishes them from traditional homogeneous graphs that contain only a single node and edge type. F ormally , we define a heterogeneous graph as G = ( V , E , A , R , ϕ, φ, X V , X E ) , where V and E denote the sets of nodes and edges, respecti vely; A and R represent the sets of node types and edge types. Each node v ∈ V is associated with a specific node type via a mapping function ϕ : V → A . Similarly , each edge e ∈ E is associated with an edge type via φ : E → R . A key characteristic of a heterogeneous graph is that |A| + |R| > 2 , meaning there are at least two types of nodes or edges. W e denote the node feature set as X V = { X a ∈ R |V a |× d a | a ∈ A} , where V a = { v ∈ V | ϕ ( v ) = a } is the set of nodes of type a , X a is the original feature matrix for these nodes, and d a is the feature dimension for type a . The edge feature set is X E = { X r ∈ R |E r |× d r | r ∈ R} , where X r represents the feature matrix for edges of type r . T o address the structural mismatch issues caused by het- erogeneity , we can transform the heterogeneous graph into a homogeneous counterpart. In this context, the homogeneous graph not only implies uniform node and edge types but also dictates that all nodes and edges have been projected into a unified latent feature space. W e define the transformed homogeneous graph as G = ( V , E , A , X V , X E ) . Here, V and E denote the transformed node and edge sets, respec- tiv ely , and A is the adjacency matrix describing the graph connectivity . T o resolv e the feature dimension discrepancy ( d a ) across dif ferent domains and node types, we employ Singular V alue Decomposition (SVD) to reduce and align the original features, obtaining the aligned node feature matrix X V ∈ R | V |× d . The aligned edge feature matrix is denoted as X E ∈ R | E |× f . Importantly , we preserv e the semantic information from the original heterogeneous graph in the form of edges; thus, rich semantic features are encapsulated within the edges of the transformed homogeneous graph. B. Graph Encoder W e adopt a Graph Neural Network (GNN) based on the message-passing mechanism as our backbone encoder . Specif- ically , we utilize a uni versal graph encoder f θ , parameterized by θ , to learn high-order representations of nodes. The com- putation entails stacking multiple message-passing layers. Let h ( l ) v denote the hidden embedding of node v at the l -th layer . The update process is formulated as: h ( l ) v = Aggr h ( l − 1) v , n h ( l − 1) u , e uv : u ∈ N v o ; θ ( l ) (1) where N v denotes the set of neighbors for node v , and e uv represents the semantic edge feature connecting nodes u and v (which inherently corresponds to a row vector in X E ). This edge feature explicitly participates in the aggregation process, ensuring that the model le verages the original heterogeneous information preserved on the edges. The function Aggr ( · ; θ ( l ) ) represents the message-passing and aggregation function at the l -th layer , gov erned by the learnable parameters θ ( l ) ∈ θ . C. T arg et Domain and F ew-shot Classification T ask In cross-domain transfer learning, we assume the model is pre-trained on multiple source domains containing ab undant unlabeled data. Following pre-training, our objecti ve is to transfer the extracted uni versal knowledge to a target graph G T with a different data distribution. T o enhance frame work universality , we model do wnstream node classification and graph classification tasks as instance- level classification . Specifically , an “instance” can refer to a single node in the target graph G T or a local subgraph obtained through multi-hop sampling centered on a specific node. For the fe w-shot instance classification task on the tar get domain, we assume the target dataset comprises | C | distinct instance cate gories, forming a label space Y = { 1 , 2 , ..., | C |} . Under the stringent few-shot constraint, we can only access a small number of labeled instances, K , for each category m ∈ Y in the tar get domain. W e define this collection of | C | × K labeled samples as the training set S for the few-shot fine- tuning phase. The ultimate task of the model is to adapt the prior prompts using this extremely limited labeled set S , and to accurately predict the cate gories of the remaining unknown instances in the tar get dataset, which are strictly partitioned into a validation set and a test set. I V . M E T H O D This section presents the technical details of Cr ossHGL , which consists of three phases: semantic-preserving graph transformation, multi-domain semantic graph pre-training, and cross-domain semantic fine-tuning. First, heterogeneous graphs are con verted into unified homogeneous graphs through SVD-based feature alignment and data-driven meta- pattern mining, with heterogeneous interactions preserved in semantics-enriched edges. Next, a Tri-Prompt mechanism, including feature, edge, and structure prompts, is integrated with a shared GNN backbone for self-supervised multi-domain pre-training under the Deep Graph Infomax (DGI) paradigm. Finally , for the target domain, the pre-trained backbone is JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 4 实验 - 方案 M e ta Pa tte r n Ra n k H e te r o g e n e o u s G r a p h V e n u e Pa p e r Au th o r Ra n d o m W a lk P A P V P A P P P V To p - M e ta Pa tte r n P A P A V M er ge 1 2 3 4 Sh a re d G NN Sh a re d G NN Sh a re d G NN Sh a re d G NN Sh a re d G NN Sh a re d G NN S h a r e d t h e sa m e B a c k b o n e S o u r c e D o m a i n ( a ) H et ero g eneo u s G ra p h T ra n s f o rm a t io n ( b) M ulti - Do m a in P re t ra ini ng ( c) Cro s s - d o m a in Fine - t u n in g Sh a re d G NN Sh a re d G NN Sh a re d G N N T a r g e t D o m a i n … S e m a n t i c Pr o t o t y p e Pr e d i c t Pr o b a b i l i t y … Fig. 2. Overvie w of the Cr ossHGL framework. The workflow consists of three phases: 1) Semantic-preserving graph transf ormation : each source and target heterogeneous graph is con verted into a unified homogeneous graph through SVD-based feature alignment and meta-pattern mining, while heterogeneous semantics are preserv ed in semantics-enriched edge features; 2) Multi-domain semantic graph pre-training : a T ri-Prompt mechanism with feature, edge, and structure prompts is integrated with a shared GNN backbone to capture transferable knowledge from feature, relation, and topology perspecti ves through self-supervised contrastive learning; 3) Cross-domain semantic fine-tuning : the pre-trained backbone is frozen, source-domain prompts are composed with target-specific open prompts for few-shot adaptation, and the adapted representations are used for prototype-based prediction on target-domain instances. frozen and lightweight prompt modules are adapted via prompt composition and prototype-based few-shot learning. The over - all architecture is illustrated in Fig. 2, and the complete cross- domain learning procedure is summarized in Algorithm 1. A. Semantic-Pr eserving Graph T ransformation T o address the issues of inconsistent node feature dimen- sions in heterogeneous graphs and the dif ficulty of directly adapting heterogeneous structures to a unified graph model, a semantic-preserving graph transformation strategy is proposed. This strategy maps a heterogeneous graph into a feature- aligned homogeneous graph G with semantics-enriched edges. The specific process in v olves the following three stages: 1) F eatur e Space Alignment: Nodes of different types in heterogeneous graphs typically possess varying feature dimen- sions, and some nodes may ev en lack features. T o eliminate this feature space heterogeneity , Singular V alue Decomposi- tion (SVD) is first employed to reduce the dimensionality and align the features across all node types. For each node type a ∈ A , given its raw feature matrix X a ∈ R |V a |× d a , SVD is performed as follows: X a ≈ U a Σ a V ⊤ a (2) Specifically , a unified target feature dimension d is predefined. T o deriv e the dense representations, the matrices U a and Σ a are truncated to retain only the top- d singular values and their corresponding left singular vectors, denoted as U a,d ∈ R |V a |× d and Σ a,d ∈ R d × d , respectiv ely . The dimension- reduced feature matrix ˜ X a for node type a is then computed by multiplying the truncated left singular matrix with the diagonal matrix of the top- d singular values: ˜ X a = U a,d Σ a,d (3) Prior to the SVD application, a pre-processing step is ex ecuted to address dimensional deficiencies. For node types completely lacking initial attributes, one-hot vectors are em- ployed for feature initialization. Additionally , in cases where the original feature dimension falls short of the target dimen- sion (i.e., d a < d ), zero-padding is applied directly to the raw feature matrix X a to explicitly e xtend its dimensionality to d . Ultimately , by unifying these pre-processed features through the aforementioned SVD and truncation, a global node feature matrix X V ∈ R | V |× d is constructed, ensuring strict dimensional consistency across all nodes in the graph. 2) Data-Driven Mining of Meta-P atterns: Unlike tradi- tional methods that rely on expert knowledge to predefine meta-paths, we adopt a data-driven approach to automatically mine high-frequency meta-patterns for the target node type. Specifically , we execute constrained random walks on the het- erogeneous graph. For each target-type node, walk sequences of length L are generated. Subsequently , a sliding window of size w is applied to these sequences to systematically e xtract local sub-paths. T o capture interaction patterns with closed- loop semantics, node type sequences within these windo ws where both the starting and ending node types correspond to v target are filtered out as candidate meta-patterns. These can- didates are denoted as P = { P 1 , P 2 , . . . , P m } , where P i rep- resents a specific sequence of node types (e.g., Paper -Author- Paper). Finally , the frequencies of all candidate patterns are counted, and the T op- K key meta-patterns are selected based on their occurrence. This process not only discov ers e xplicit relationships but also captures latent semantic dependencies. 3) Semantic Graph Homogenization: Guided by the mined meta-pattern set P , the target homogeneous graph G = ( V , E , A , X V , X E ) is constructed. Specifically , the node set V exclusi vely retains target-type nodes, defined as V = { v ∈ JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 5 Algorithm 1 Cross-domain Learning Procedure of Cr ossHGL 1: Input: Source heterogeneous graphs {G ( k ) } K k =1 , tar get heterogeneous graph G T , target support set S . 2: Output: Predictions for tar get-domain instances. 3: Initialization: Initialize a shared GNN backbone f θ and source-domain T ri-Prompts { P ( k ) fea , P ( k ) edge , P ( k ) str } K k =1 . 4: // Phase 1: Semantic-preserving graph transformation 5: for each graph G ∈ {G ( k ) } K k =1 ∪ {G T } do 6: Align type-specific node features X V into a unified feature space X V via SVD. 7: Mine the T op- K meta-pattern set P = { P 1 , . . . , P m } by constrained random w alks. 8: Construct the transformed homogeneous graph: G = ( V , E , A , X V , X E ) . 9: end for 10: // Phase 2: Multi-domain semantic graph pre-training 11: for each source domain k = 1 to K do 12: Apply P ( k ) all = { P ( k ) fea , P ( k ) edge , P ( k ) str } to the transformed source graph. 13: Construct positi ve, augmented, and negati ve vie ws: ( X V , A , X E ) , ( ˜ X V , ˜ A , ˜ X E ) , and ( X Shf , A , X E ) . 14: Obtain node representations H pos , H neg , and graph summary c global using f θ . 15: Update f θ , P ( k ) all by minimizing contrastive loss L pre . 16: end for 17: // Phase 3: Cross-domain semantic fine-tuning 18: Freeze the pre-trained backbone f θ . 19: Construct composed prompts P comp ∗ and initialize tar get- specific open prompts P open ∗ , where ∗ ∈ { fea , edge , str } . 20: Adapt target prompts on G T with the few-shot support set S and obtain target representations Z final . 21: Compute class prototypes c m from the adapted target representations. 22: Predict labels for target-domain instances via prototype matching p ( y = m | h q ) . 23: retur n T arget-domain predictions. V | ϕ ( v ) = v target } , with aligned features X V . For each meta- pattern P i ∈ P , a ne w edge ( u, v ) is established in E whenev er a matching path instance connects two distinct target nodes ( u, v ∈ V , u = v ) in the original heterogeneous graph. In densely connected heterogeneous networks, multiple path instances often concurrently connect the same pair of target nodes. Let I uv denote the set of all valid path instances connecting nodes u and v under the pattern set P . T o com- prehensiv ely preserve the rich semantic context during this topological transformation, the features of all intermediate nodes across all path instances in I uv are simultaneously aggregated to formulate the semantic edge feature v ector e uv : e uv = 1 |I uv | X p ∈I uv 1 k p k p X j =1 X V [ n ( p ) j ] (4) where k p represents the number of intermediate nodes in a specific path instance p , and n ( p ) j denotes the j -th intermediate node within that path. Subsequently , the collection of all ag- gregated edge vectors e uv constitutes the semantics-enriched edge feature matrix X E for the target homogeneous graph. Through the aforementioned process, the complex hetero- geneous graph topology is transformed into a homogeneous graph containing only a single node type b ut highly enriched edge features. This enables a univ ersal homogeneous graph encoder to efficiently process the graph structure while simul- taneously percei ving the complex semantic interactions of the original heterogeneous graph. B. Multi-Domain Semantic Graph Pre-tr aining T o enable Graph Neural Networks (GNNs) to learn uni ver- sal graph representations in an unsupervised setting and effec- tiv ely utilize the pre viously constructed homogeneous graphs rich in meta-pattern semantics, a T ri-Prompt Graph Contrasti ve Pre-training Framew ork is proposed. The core idea of this framew ork is to decouple the adaptation processes of node features, graph topologies, and edge semantics. By utilizing lightweight prompts to guide a shared GNN backbone, the model captures universal knowledge from div erse perspectives and optimizes it via Local-Global Mutual Information Maxi- mization in a self-supervised manner . 1) T ri-Pr ompt Design: As detailed in Section IV -A, the original heterogeneous graph is uniformly transformed into a target-node homogeneous graph G = ( V , E , A , X V , X E ) . Here, X V encapsulates the aligned node features, while X E acts as a rich semantic container for the intermediate heteroge- neous interactions. T o fully exploit and seamlessly decouple this multi-dimensional information without altering the pre- trained backbone, the T ri-Prompt module is designed. From a functional perspective, this framew ork operates sequentially across two highly synergistic phases: input-lev el semantic filtration and layer-le vel topological intervention. During the initial phase of input-level semantic filtration , both node features and edge semantics often carry univ ersal knowledge that may contain task-irrelev ant noise for a specific downstream domain. T o address this, a unified element-wise modulation strategy is employed to adaptively filter and align the input spaces. Specifically , the feature prompt P fea and the semantic edge prompt P edge act as learnable dynamic filters that smoothly project the initial features into a task-specific latent space prior to deep encoding: ˜ X V = X V ⊙ P fea , ˜ X E = X E ⊙ P edge (5) While the modulated node feature matrix ˜ X V directly serves as the initial hidden states for the GNN, the purified edge feature matrix ˜ X E requires further mapping to guide struc- tural routing. Because these edge features deeply compress heterogeneous meta-paths, they are subsequently transformed into scalar attention weights α uv via a dedicated edge encoder: α uv = σ ( MLP edge ( ˜ e uv )) , ∀ ( u, v ) ∈ E (6) By unifying the feature and edge prompts at the entry point of the network, this filtration phase ensures that the model selectiv ely acti vat es only the node attrib utes and interaction paths most critical to the current domain, effecti vely stripping away redundant background noise. JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 6 Subsequent to the input space calibration, the framework ex ecutes a layer-level topological intervention dri ven by the structure prompt P str . Operating directly on the hidden layers of the GNN, this prompt deeply intervenes in the topology- aware mechanisms of message passing. The encoder modu- lated by the structure prompt is denoted as f ′ θ . For the hidden state matrix H ( l ) produced by the l -th GNN layer , the layer - specific structure prompt matrix P ( l ) str dynamically updates the node representations through residual modulation: Z ( l ) = Aggregate ( H ( l ) , A , α ) (7) H ( l +1) = ReLU (( Z ( l ) + H ( l ) ) ⊙ P ( l ) str ) (8) Through the integration of these three orthogonal prompts, the framew ork achiev es a highly expressi ve yet parameter -efficient adaptation, elegantly decoupling the initial semantic alignment from the deep structural aggreg ation. 2) Pr ompt-A war e Graph Contrastive Pre-tr aining: Having established the tri-prompt system, the Deep Graph Infomax (DGI) paradigm is adopted to ex ecute unified self-supervised pre-training. The entire pre-training pipeline fundamentally consists of three sequential stages: vie w construction, dual- path encoding, and discriminator optimization. T o construct the positive and negati ve sample pairs required for contrasti ve learning, specific augmentation strate gies [28] are applied in the topological and feature spaces. The positi ve view strictly retains the local structure and features of the orig- inal graph, denoted as the tuple ( X V , A , X E ) . Concurrently , an augmented view is generated by perturbing the topology through random edge dropping with probability p , yielding an augmented adjacency matrix ˜ A and a corresponding edge feature subset ˜ X E . T o establish a contrastiv e baseline, a negati ve vie w is constructed by randomly shuffling the node features along the node dimension. This shuffling operation deliberately breaks the joint correspondence between the graph topology and node attributes to introduce spurious node se- mantics, yielding the shuf fled feature matrix: X Shf = Shuffle ( X V ) (9) The negati ve view thereby preserves the original topology A alongside these corrupted features. During the encoding phase, the shared GNN backbone f θ , coupled with distinct prompt pathways, extracts three lev els of graph representations corresponding to the constructed vie ws. The positiv e anchor vie w is fed into the base backbone to acquire the genuine local context features H pos for all nodes: H pos = f θ ( X V , A , X E ) (10) Similarly , the negati ve view is input into the backbone to obtain the counterfeit node representation matrix H neg : H neg = f θ ( X Shf , A , X E ) (11) For the global graph summary , the augmented vie w is pro- cessed by the backbone equipped with the complete tri-prompt set, denoted collecti vely as P all = { P fea , P edge , P str } . A readout function R subsequently aggre gates all perturbed node representations to generate the graph-le vel global summary: c global = σ 1 | V | X i ∈ V h f θ ˜ X V , ˜ A , ˜ X E , P all i i ! (12) The pre-training optimization objective is to enable the global graph summary c global to accurately identify its gen- uine constituents (positi ve node vectors h pos ) and repel the misaligned counterfeit nodes (ne gativ e node vectors h neg ). T o this end, a bilinear discriminator D , parameterized by a weight matrix W , is i ntroduced to calculate the similarity scores between node-lev el representations and the global summary: D ( h , c ) = σ ( h ⊤ W c ) (13) The final pre-training loss function L pre is defined as a standard Binary Cross-Entropy (BCE) loss: L pre = − 1 | V | | V | X i =1 [log D ( h pos ,i , c global ) + log(1 − D ( h neg ,i , c global ))] (14) Throughout the multi-domain pre-training phase, the model shares the same GNN backbone parameters across K different source domain datasets, but independently optimizes a specific set of tri-prompts { P ( k ) fea , P ( k ) edge , P ( k ) str } K k =1 for each dataset. C. Cr oss-domain Semantic F ine-tuning Upon completion of the multi-domain pre-training, the shared GNN backbone f θ learns transferable graph representations, while a prior prompt kno wledge base { P ( k ) fea , P ( k ) edge , P ( k ) str } K k =1 is obtained from the source domains. For N -way K -shot cross-domain adaptation on the target domain, the backbone parameters are kept frozen, and a Tri- Prompt Composition and Decoupled Fusion Mechanism is introduced to adapt prior kno wledge to the ne w domain. 1) T ri-Pr ompt Composition Mechanism: For prompt mod- ules across all dimensions, a joint construction strate gy com- bining a composed prompt and an open prompt is adopted to balance prior kno wledge transfer with target domain adapta- tion. Specifically , to dynamically distill the universal kno wl- edge from the K source domains, a learnable attention v ector λ ∈ R K is introduced. The normalized attention weight w k for the k -th pre-trained prompt is computed via Softmax, yielding the composed prompt P comp ∗ for any specific prompt type ∗ ∈ { fea , edge , str } through a weighted summation: w ∗ k = exp( λ ∗ k ) P K j =1 exp( λ ∗ j ) , P comp ∗ = K X k =1 w ∗ k P ( k ) ∗ (15) Simultaneously , a task-specific open prompt P open ∗ is randomly initialized to absorb the specific distribution of the ne w target domain. During the forw ard pass of fine-tuning, the edge semantics are first adapti vely filtered. The updated edge feature matrix ˜ X E is jointly modulated by the composed edge prompt and the open edge prompt: ˜ X E = P comp edge ( X E ) + β · P open edge ( X E ) (16) where β is a learnable coefficient controlling the intensity of edge semantic fusion in the target domain. JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 7 Follo wing the edge semantic modulation, the node repre- sentations are inferred through a decoupled dual-branch archi- tecture. In the feature-focused branch, the initial node features X V are mapped using the feature prompt, and combined with the purified semantic edge features ˜ X E as inputs to the frozen backbone f θ . This branch solely reshapes the e xternal feature space to extract the feature-driven embedding matrix Z fea , without injecting any additional structure prompts: ˜ X V = P comp fea ( X V ) + γ fea · P open fea ( X V ) (17) Z fea = f θ ( ˜ X V , A , ˜ X E ) (18) Parallel to the feature branch, the structure-focused branch aims to adjust the message passing preferences under the target domain’ s topology . Here, the original node features X V are kept unchanged, and the structure prompt P str is directly injected into the frozen GNN. The final structure- driv en embedding matrix Z str is inferred jointly by the prior composed structure prompt and the open structure prompt: Z str = f θ ( X V , A , ˜ X E ; P comp str ) + γ str · f θ ( X V , A , ˜ X E ; P open str ) (19) where γ str dynamically balances the prior topological rules with the no vel topological rules of the target domain. Finally , the comprehensiv e representations Z final is obtained through the weighted residual fusion of both branches: Z final = Z fea + α · Z str (20) where α is the branch balancing coefficient. 2) Pr ototype-based Classification and Robust Evaluation: T o enhance the univ ersality of the framework, the downstream tasks are uniformly modeled as instance-lev el classification problems, employing non-parametric Prototypical Networks to mitigate overfitting in few-shot scenarios. After obtaining the final node representations Z final , a unified instance-lev el embedding vector h i is constructed for each target sample i . For node classification tasks, the instance representation is directly deri ved from the final node embedding, such that h i = Z final [ i ] . Con versely , for graph or subgraph classi- fication tasks, a local subgraph G i is e xtracted via multi- hop sampling centered on the tar get node, and a readout function (e.g., mean pooling) aggregates the node represen- tations within the subgraph to yield the global representation h i = Readout ( { Z final [ j ] | j ∈ G i } ) . During the fe w-shot training phase, a support set S is constructed by randomly sampling K labeled instances for each category m . The semantic prototype vector c m for category m is then computed by averaging the embeddings of all instances belonging to the same class within S : c m = 1 K X i ∈S m h i (21) For any unknown query instance h q in the test set, the cosine similarity between its normalized embedding and each cate- gory prototype c m is calculated. This similarity is con verted into a prediction probability via the Softmax function: p ( y = m | h q ) = exp( sim ( h q , c m ) /τ ) P | C | j =1 exp( sim ( h q , c j ) /τ ) (22) The optimization objecti ve for fine-tuning minimizes the cross- entropy loss on the training set. Since the backbone network is completely frozen, only the lightweight prompt parameters are updated. T o eliminate the random bias introduced by few- shot sampling, an early stopping is employed on a validation set, and fine-tuning is independently repeated multiple times using different random seeds to ensure rob ust ev aluation. V . E V A L UAT I O N S A. Experimental Settings 1) Datasets: Extensive e xperiments are conducted on four widely used real-world heterogeneous graph benchmarks for cross-domain ev aluation: ACM, DBLP [29], IMDB [30], and Freebase [29]. During the feature initialization phase, any raw textual attributes associated with the nodes are directly pre-processed into pure numerical vectors. By intentionally stripping away all natural language semantics and precluding the use of LLM-based alignment, this setup forces the models to rely strictly on the inherent topological structures and relational meta-patterns for cross-domain transfer . T able I summarizes the basic statistics of the four hetero- geneous graph benchmarks. These datasets dif fer not only in graph scale, but also in schema comple xity , as reflected by the numbers of node types and edge types. Such di versity provides a suitable testbed for ev aluating the cross-domain transferability of heterogeneous graph foundation models. T ABLE I S U MM A RY O F T H E H E T E RO GE N E O US G RA P H D A T A S E TS U SE D IN O UR C RO S S - D O M AI N EV A L UATI O N . Dataset #Nodes #Node #Edges #Edge #Classes T ypes T ypes A CM 10,942 4 547,872 8 3 DBLP 26,128 4 239,566 6 4 IMDB 11,616 3 34,212 4 3 Freebase 180,098 8 1,057,688 36 7 2) Baselines: T o comprehensi vely demonstrate the superi- ority of CrossHGL , it is e valuated against ten representati ve state-of-the-art baselines, which are systematically catego- rized into four groups: (1) Homogeneous GNNs: GCN [31], GraphSA GE [32], and GA T [33], which inherently flatten the heterogeneous topology to perform message passing; (2) Heterogeneous GNNs: HAN [34] and Simple-HGN [35], which are representativ e HGNNs that account for hetero- geneity through neighborhood aggregation guided by meta- paths or edge-type-specific attention; (3) Graph Contrastiv e Learning: GraphCL [36] and DGI [37], which are repre- sentativ e self-supervised pre-training methods designed for univ ersal graph representation learning; (4) Graph F ounda- tion Models: GPPT [10], GraphPrompt [11], GCOPE [25], and SAMGPT [26], which encompass recent explorations in prompt-based graph learning. 3) Evaluation Pr otocol and F ew-Shot Settings: T o ev aluate cross-domain transferability , a Lea ve-One-Out pre-training and fine-tuning protocol is adopted [25], [26]. Specifically , one JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 8 T ABLE II F E W - S H OT N O D E C L AS S I FI CAT IO N R E S U L T S : AVE R AG E M I C RO - F1 A ND M AC RO - F1 ( %) ± S TA ND AR D DE V I A T I ON AC RO S S F O UR C RO SS - D O MA I N TA S KS . B O LD FAC E L E TT E R S M A R K T HE B ES T PE R F O RM A N C E , W HI L E U N D ER L I N ED L ET T E R S I ND I C A T E T H E S E C ON D BE S T . Paradigm Category Method A CM DBLP IMDB Freebase Micro-F1 Macro-F1 Micro-F1 Macro-F1 Micro-F1 Macro-F1 Micro-F1 Macro-F1 Supervised Learning Heterogeneous HAN 52.66 ± 3.90 49.20 ± 6.99 41.72 ± 5.52 36.68 ± 6.84 37.25 ± 2.15 27.68 ± 5.49 40.12 ± 7.39 8.01 ± 1.29 Simple-HGN 48.15 ± 5.44 45.15 ± 3.67 37.53 ± 2.16 33.26 ± 4.16 35.14 ± 2.42 24.15 ± 4.31 37.73 ± 4.27 7.52 ± 2.32 Homogeneous GCN 61.02 ± 10.23 56.19 ± 12.92 37.95 ± 4.98 34.84 ± 6.16 37.09 ± 2.06 27.20 ± 4.92 22.42 ± 0.19 4.02 ± 0.21 SA GE 47.83 ± 5.38 46.56 ± 6.10 44.01 ± 5.34 41.42 ± 5.82 37.67 ± 1.60 30.36 ± 4.15 29.21 ± 0.15 7.45 ± 0.03 GA T 54.10 ± 6.96 46.75 ± 8.28 38.50 ± 4.47 33.91 ± 5.20 37.24 ± 2.05 31.49 ± 3.81 25.95 ± 0.14 5.30 ± 0.02 Pre-training & Fine-tuning Contrastiv e GraphCL 43.20 ± 6.25 37.12 ± 8.78 36.73 ± 6.74 33.39 ± 7.21 33.88 ± 3.17 27.42 ± 4.51 12.51 ± 5.16 10.51 ± 2.36 DGI 41.16 ± 4.31 34.51 ± 4.15 38.22 ± 2.46 34.21 ± 4.42 34.02 ± 4.21 27.93 ± 3.25 12.87 ± 3.53 11.23 ± 5.32 Foundation Models GraphPrompt 33.15 ± 6.52 16.24 ± 9.12 30.39 ± 6.24 11.65 ± 4.07 27.11 ± 1.35 14.22 ± 3.14 12.24 ± 7.76 11.42 ± 4.94 GCOPE 34.12 ± 1.24 27.05 ± 4.46 39.91 ± 6.74 35.90 ± 8.27 35.98 ± 1.66 25.43 ± 4.62 14.42 ± 3.21 12.41 ± 5.25 SAMGPT 50.23 ± 9.57 46.12 ± 11.89 38.48 ± 6.40 36.40 ± 6.94 34.56 ± 2.47 31.39 ± 3.33 14.68 ± 5.72 10.83 ± 2.87 Ours CrossHGL 66.41 ± 5.01 64.99 ± 5.94 85.18 ± 9.45 83.15 ± 11.43 37.84 ± 5.90 31.45 ± 7.07 17.52 ± 4.14 15.03 ± 3.17 T ABLE III F E W - S H OT G R A PH C LA S S I FIC ATI O N R E S ULT S . A V E R AG E M I CR O - F 1 A N D M AC R O - F 1 ( % ) ± S TAN D AR D DE V I A T IO N AR E RE P O RT ED . B O L D F AC E L E TT E R S M A RK T HE B ES T PE R F O RM A N C E , W HI L E U N D ER L I N ED L ET T E RS I ND I C A T E T H E S E C ON D B E S T . Paradigm Category Method A CM DBLP IMDB Freebase Micro-F1 Macro-F1 Micro-F1 Macro-F1 Micro-F1 Macro-F1 Micro-F1 Macro-F1 Supervised Learning Heterogeneous HAN 47.47 ± 8.42 43.02 ± 10.98 49.13 ± 8.66 45.68 ± 9.38 34.20 ± 2.99 26.48 ± 6.38 34.13 ± 7.39 7.67 ± 2.48 Simple-HGN 44.26 ± 5.16 40.02 ± 7.54 46.12 ± 6.26 43.15 ± 5.21 35.16 ± 3.15 27.34 ± 6.26 31.73 ± 4.27 5.32 ± 1.29 Homogeneous GCN 54.68 ± 9.08 48.26 ± 11.79 43.38 ± 6.28 37.64 ± 7.06 35.02 ± 2.85 27.36 ± 4.89 20.04 ± 0.41 9.40 ± 0.05 SA GE 42.63 ± 5.59 36.44 ± 8.02 47.14 ± 8.32 43.64 ± 9.28 35.24 ± 2.52 28.80 ± 5.35 26.94 ± 4.41 9.87 ± 2.60 GA T 42.61 ± 4.17 34.01 ± 5.57 44.67 ± 7.53 40.61 ± 8.80 34.89 ± 2.63 26.89 ± 5.65 21.19 ± 5.75 8.78 ± 0.94 Pre-training & Fine-tuning Contrastiv e GraphCL 43.87 ± 7.13 37.56 ± 9.99 39.98 ± 8.22 31.20 ± 8.69 35.04 ± 1.79 26.38 ± 5.45 12.31 ± 5.76 7.92 ± 2.35 DGI 40.21 ± 4.26 34.66 ± 6.48 36.41 ± 6.51 28.12 ± 4.21 34.15 ± 2.16 27.33 ± 6.15 11.24 ± 5.53 7.43 ± 1.89 Foundation Models GraphPrompt 31.16 ± 5.16 14.15 ± 6.16 28.56 ± 4.16 11.65 ± 4.07 24.16 ± 4.15 13.82 ± 3.25 10.83 ± 5.46 9.39 ± 4.75 GCOPE 33.15 ± 5.26 25.16 ± 3.16 35.43 ± 3.17 35.90 ± 8.27 34.17 ± 2.83 24.42 ± 3.62 11.42 ± 4.16 10.11 ± 4.36 SAMGPT 42.88 ± 10.48 37.17 ± 10.69 40.28 ± 7.82 33.95 ± 9.27 34.58 ± 3.42 27.77 ± 5.41 13.58 ± 4.51 9.74 ± 2.31 Ours CrossHGL 57.86 ± 11.26 52.16 ± 14.32 55.72 ± 14.69 49.19 ± 15.06 36.01 ± 4.81 29.13 ± 6.23 17.16 ± 9.43 11.47 ± 3.36 dataset is designated exclusi vely as the target domain, while the remaining datasets serve as source domains for multi- domain pre-training. For downstream few-shot adaptation, K labeled nodes per category ( | C | ) are randomly sampled to form the support set S ( |S | = | C | × K ), with the remaining nodes ev enly bisected into validation ( V ) and test ( T ) sets. During this phase, the pre-trained backbone is entirely frozen, and only the lightweight prompts are updated. All baselines are ev aluated using the same few-shot data partitioning strategy . For the homogeneous and heterogeneous GNNs, training is performed in a fully supervised manner on the same fe w-shot training set used during the fine-tuning stage of pre-training, contrastive, and graph foundation model base- lines. For the remaining baselines, we follow the standard pre- training and fine-tuning paradigm: these methods are first pre- trained on the source domains under the lea ve-one-out protocol and then adapted to the target domain using the same fe w- shot training set. Furthermore, standard homogeneous GNNs, contrastiv e learning methods, and homogeneous foundation models are inherently designed for homogeneous structures. For these baselines, the input heterogeneous graphs are directly flattened into homogeneous topologies by ignoring node and edge type distinctions prior to ex ecution. B. Analysis W e answer fi ve R esearch Q uestions ( RQ ) and demonstrate our arguments by extended experiments. 1) RQ1: Does CrossHGL surpass state-of-the-art base- lines in text-free, f ew-shot cross-domain heterogeneous graph learning? : W e ev aluate Cr ossHGL against div erse baseline paradigms under the extreme 1-shot cross-domain setting. The node classification results are reported in T able II, while the graph classification results are reported in T a- ble III. Overall, the results consistently demonstrate the strong positiv e transferability of Cr ossHGL across both node-lev el and graph-le vel tasks. It is worth noting that GCN, Graph- SA GE, GA T , HAN, and Simple-HGN are supervised baselines trained directly on the target few-shot set, whereas Cr ossHGL , GraphCL, DGI, GraphPrompt, GCOPE, and SAMGPT all follow the same pre-training-and-fine-tuning protocol under the lea ve-one-out setting. This distinction is important for interpreting the results: supervised baselines mainly reflect direct adaptation ability under scarce labels, while the latter group ev aluates whether transferable cross-domain knowledge can be effecti vely acquired before tar get adaptation. For node classification, Cr ossHGL achiev es the best results on three of the four tar get datasets, with the clearest gains on A CM and DBLP . On A CM, it improves over the strongest baseline, GCN, by 8.8% in Micro-F1 and 15.7% in Macro-F1. On DBLP , the advantage becomes particularly pronounced, where CrossHGL substantially outperforms both the strongest supervised baseline, GraphSA GE, and the strongest founda- tion baseline, GCOPE, by a wide margin in both Micro- F1 and Macro-F1. On IMDB, although the margin becomes JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 9 smaller , CrossHGL still achieves the best Micro-F1 and the second-best Macro-F1, indicating stable transferability ev en when competing methods perform similarly . Overall, these results suggest that semantic-preserving transformation and T ri-Prompt adaptation enable more effecti ve transfer of hetero- geneous structural priors than direct supervision, contrasti ve pre-training alone, or homogeneous flattening. A similar pattern is observ ed for graph classification, con- firming that the advantage of Cr ossHGL extends beyond node- lev el prediction. As shown in T able III, CrossHGL ranks first on ACM, DBLP , and IMDB in both Micro-F1 and Macro-F1. On A CM, it improves over GCN by 5.8% in Micro-F1 and 8.1% in Macro-F1. On DBLP , it surpasses HAN by 13.4% and 7.7%, respectiv ely . On IMDB, the gains remain consistent but more modest, reaching 2.2% in Micro-F1 and 1.2% in Macro-F1 over the strongest competitor , GraphSAGE. These results indicate that Cr ossHGL can transfer not only node-le vel semantics but also graph-lev el structural patterns. Freebase re veals an important boundary condition. Unlike A CM, DBLP , and IMDB, this dataset is inherently feature- poor , so after one-hot initialization and SVD alignment, the target graph is reduced to a largely structural identifier space with very weak feature semantics. Under such a setting, supervised baselines can more easily ov erfit to local struc- tural cues in the target few-shot set, whereas pre-training- and-fine-tuning methods are required to transfer knowledge across a much larger semantic gap. This helps explain why Cr ossHGL does not outperform HAN on Freebase Micro- F1, e ven though it still achie ves the best Macro-F1 on both node classification and graph classification. Therefore, the Freebase results should be interpreted not simply as weaker ov erall transfer , b ut as e vidence that class-balanced transfer under sev ere feature degeneration remains a challenging case for all pre-training-based paradigms. T aken together , these findings sho w that Cr ossHGL deliv ers consistent and often substantial improvements across both node classification and graph classification, v alidating the effecti veness of preserv- ing heterogeneous semantics throughout transformation, pre- training, and fine-tuning. 2) RQ2: How resilient is CrossHGL to varying degrees of data scarcity during cross-domain adaptation? : T o ex- amine the robustness of Cr ossHGL under different levels of target-domain supervision, we conduct a K -shot analysis on A CM with K ∈ { 1 , 2 , . . . , 10 } . W e compare CrossHGL with representati ve baselines under the “pre-training and fine- tuning” paradigm, and report the Micro-F1 and Macro-F1 trends in Fig. 3. Overall, Cr ossHGL consistently achie ves the best performance across the entire K -shot range. Its advantage is particularly evident in the extremely low-shot regime ( K ≤ 3 ), where only a handful of labeled nodes are av ailable for adaptation. This suggests that Cr ossHGL can exploit scarce supervision more effecti vely than competing methods, benefiting from transferable heterogeneous priors learned during pre-training rather than relying hea vily on target-domain labels. As K increases, all methods improve with more labeled data, but Cr ossHGL maintains a clear lead and exhibits a steady upward trend, reaching 81.06% Micro-F1 and 80.52% MicroF1(%) 10.00 28.75 47.50 66.25 85.00 Shot k 1 2 3 4 5 6 7 8 9 10 CrossHGPT GraphCL GraphPrompt GCOPE SAMGPT G ra phCL G ra phP rom pt G CO P E SAMGPT Cros s H G P T 1 43.2 33.15 34.34 50.23 66.41 2 52.14 34.38 38.56 59.33 68.96 3 58.52 35.52 42.12 66.52 72.81 4 60.93 36.67 44.85 69.52 76.27 5 64.34 37.85 46.72 71.55 77.78 6 66.49 38.94 47.91 75.41 77.39 7 68.83 40.12 48.65 77.08 78.87 8 70.92 41.28 49.12 79.09 80.04 9 71.91 42.31 49.43 79.96 80.72 10 72.62 43.2 49.65 81.13 81.06 MacroF1(%) 10.00 28.75 47.50 66.25 85.00 Shot k 1 2 3 4 5 6 7 8 9 10 CrossHGPT GraphCL GraphPrompt GCOPE SAMGPT G ra phCL G ra phP rom pt G CO P E SAMGPT Cros s H G P T 1 37.12 16.24 26.21 46.12 64.99 2 49.59 18.57 31.45 57.87 66.04 3 57.1 21.03 35.82 65.83 71.35 4 60.28 23.41 38.91 68.92 74.87 5 63.91 25.82 41.15 71.33 77.01 6 66.23 28.15 42.68 75.3 76.52 7 68.65 30.54 43.72 76.92 77.9 8 70.71 32.88 44.41 78.97 79.43 9 71.91 35.05 44.86 79.97 80.21 10 72.49 37.12 45.12 81.05 80.52 1 Fig. 3. Impact of sample size ( k ) on A CM classification performance 表格 1 M i c ro-F 1 M a c ro-F 1 M i c ro-F 1 M a c ro-F 1 M i c ro-F 1 M a c ro-F 1 M i c ro-F 1 M a c ro-F 1 3 66.41 64.99 85.18 83.15 37.84 31.45 17.52 15.03 2 65.86 63.49 85.27 83.07 37.3 32.49 16.92 14.03 1 60.65 54.45 83.49 80.44 36.8 30.21 16.22 14.13 1 61.51 56.47 36.63 31.89 17.52 15.03 ACM F1(%) 50.00 55.00 60.00 65.00 70.00 Micro-F1 Macro-F1 DBLP 75.00 78.75 82.50 86.25 90.00 Micro-F1 Macro-F1 IMDB F1(%) 25.00 28.75 32.50 36.25 40.00 Source 3 2 1 Micro-F1 Macro-F1 Freebase 10.00 12.50 15.00 17.50 20.00 Source 3 2 1 Micro-F1 Macro-F1 1 Fig. 4. Performance comparison under different pre-training source set- tings. The full multi-source setting consistently achie ves the best o verall performance, while reducing the number of source domains leads to clear performance degradation on several target datasets. Macro-F1 at 10-shot. These results indicate that Cr ossHGL not only excels under extreme label scarcity , but also retains strong adaptation capability as supervision becomes less limited. In other words, preserving heterogeneous semantics during pre- training and fine-tuning yields stronger low-shot resilience and superior sample efficiency in cross-domain transfer . 3) RQ3: How important is multi-source pre-training for CrossHGL ? : T o inv estigate the effect of pre-training source domains, we progressiv ely remove source datasets during pre-training while keeping the target-domain fine-tuning and ev aluation protocol unchanged. Fig. 4 shows that the full multi-source setting consistently yields the strongest overall performance, confirming that CrossHGL benefits from di verse source-domain knowledge during pre-training. When only one source domain is remo ved, the performance drop is relati vely limited, especially on DBLP , indicating that the contribution of different source domains is not uniform across target domains. This suggests that different source domains pro vide transfer- able structural and semantic priors with dif ferent degrees of relev ance to each target dataset. When the pre-training setting is further reduced to a single source domain, the degradation becomes much more evi- dent. In particular, the av erage Micro-F1 drop reaches 8.03% on A CM and 1.98% on DBLP . Overall, these results show that although individual source domains contrib ute unev enly , JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 10 T ABLE IV A B LAT IO N S T U DY R E S ULT S O F T R I - P R OM P T C O M P ON E N T S O N F O UR T A R G ET DAT A S ET S . B O L DFA CE D EN OT E S T H E B E S T P E RF O R M AN C E . Method Dataset A CM DBLP IMDB Freebase Micro-F1 Macro-F1 Micro-F1 Macro-F1 Micro-F1 Macro-F1 Micro-F1 Macro-F1 w/o Edge Prompt 64.27 ± 8.60 59.40 ± 10.62 85.03 ± 9.60 83.06 ± 11.78 36.65 ± 6.14 31.37 ± 7.26 15.23 ± 6.08 9.69 ± 2.47 w/o Structure Prompt 62.72 ± 6.25 56.46 ± 8.01 84.87 ± 8.42 84.61 ± 10.16 36.37 ± 5.34 31.30 ± 6.41 15.02 ± 6.72 9.50 ± 2.49 w/o Feature Prompt 60.85 ± 6.60 54.56 ± 8.22 84.92 ± 8.57 84.03 ± 10.74 36.75 ± 5.98 31.27 ± 7.18 15.11 ± 5.92 9.93 ± 2.46 CrossHGL 66.41 ± 5.01 64.99 ± 5.94 85.18 ± 9.45 83.15 ± 11.43 37.84 ± 5.90 31.45 ± 7.07 17.52 ± 4.14 15.03 ± 3.17 表格 1 M i c ro-F 1 A CM D BL P IM D B F re e ba s e Flatten 61.02 37.95 37.09 22.42 O urs 72.04 72.87 37.29 47.72 Performance Comparison on GC N 20.00 35.00 50.00 65.00 80.00 Flatten Ours ACM DBLP IMDB Freebase Performance Comparison on SAGE 25.00 33.75 42.50 51.25 60.00 Flatten Ours ACM DBLP IMDB Freebase 表格 1-1 A CM D BL P IM D B F re e ba s e Flatten 47.83 44.01 36.67 29.21 O urs 49.4 56.08 38.22 40.5 表格 1-1-1 A CM D BL P IM D B F re e ba s e Flatten 54.1 38.5 37.24 25.95 O urs 65.93 64.54 39.03 45.54 Performance Comparison on GA T 20.00 33.75 47.50 61.25 75.00 Flatten Ours ACM DBLP IMDB Freebase 1 Fig. 5. Comparison between direct flattening and our semantic-preserving graph transformation on GCN, GraphSA GE, and GA T under the 1-shot supervised setting, where the remaining nodes are split into validation and test sets following the protocol used in the main results. multi-source pre-training exposes CrossHGL to more diverse heterogeneous structures and relation patterns, enabling it to learn more transferable semantic priors and achiev e better generalization on unseen tar get domains. 4) RQ4. Does the T ri-Prompt in CrossHGL work? : T able IV reports the ablation results of the three prompt components. Overall, the full Cr ossHGL achie ves the best performance on all four target datasets, showing that the gains of Cr ossHGL arise from the complementary effects of the entire T ri-Prompt design rather than any single prompt alone. Among the three v ariants, removing the Feature Prompt causes the largest de gradation on ACM, where Micro-F1 decreases by 8.37%. This indicates that feature-lev el calibration is partic- ularly important when the tar get graph contains informati ve node attributes. Meanwhile, removing the Edge Prompt or the Structure Prompt also consistently weakens performance, especially on DBLP and IMDB, indicating that both relation- aware interactions and topology-aware adaptation are impor - tant for preserving transferable heterogeneous semantics. W e also observe that different datasets sho w dif ferent sen- sitivities to prompt removal. ACM is more affected by remov- ing the Feature Prompt, whereas DBLP and IMDB exhibit relativ ely consistent declines across all v ariants. These results suggest that the three prompts capture complementary aspects of transferable knowledge, and their joint use is necessary for robust cross-domain adaptation. 5) RQ5: How crucial is the Semantic-Preserving Graph T ransformation for retaining multi-relational priors? : T o validate the contribution of the graph transformation module, we compare our semantic-preserving strategy with direct flat- tening using GCN, GraphSA GE, and GA T under the same 1- shot supervised setting. Specifically , for each target dataset, we use 1-shot labeled nodes for training and split the remaining nodes into validation and test sets following the same protocol as in the main results. As sho wn in Fig. 5, the models equipped with our transformation consistently outperform their directly flattened counterparts across all three backbones. This result indicates that directly flattening a heterogeneous graph discards important node- and edge-type semantics, mak- ing it harder for the model to e xploit transferable structural priors under scarce supervision. In contrast, our transformation preserves multi-relational information in a unified homoge- neous graph, allowing ev en standard homogeneous GNNs to better capture heterogeneous dependencies. These results suggest that semantic-preserving transformation pro vides an effecti ve structural inducti ve bias for fe w-shot learning on heterogeneous graphs. V I . C O N C L U S I O N S A N D O U T L O O K In this work, we in vestig ate the problem of text-free, fe w- shot, cross-domain learning on heterogeneous graphs and propose Cr ossHGL , a foundation framew ork that explic- itly preserves and transfers heterogeneous structural seman- tics. By integrating semantic-preserving graph transforma- tion, prompt-a ware multi-domain pre-training, and parameter- efficient cross-domain fine-tuning, Cr ossHGL effecti vely mit- igates heterogeneity shifts across domains without relying on external textual supervision. Extensive experiments on four real-w orld heterogeneous graph benchmarks demonstrate that Cr ossHGL consistently achieves competitive and often superior performance under extremely limited target-domain supervision. The empirical results further indicate that pre- serving multi-relational semantics during both pre-training and adaptation is important for effecti ve kno wledge transfer in het- erogeneous settings. In particular , the results of source-domain ablation, prompt ablation, and graph transformation analysis collectiv ely support the effecti veness of the proposed design. This work suggests that semantic-preserving transformation and prompt-based adaptation offer a practical direction for JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 11 heterogeneous graph foundation models in te xt-free scenarios. In future work, it would be v aluable to extend this frame work to graph-lev el tasks, larger -scale graphs, and more challenging open-world scenarios, as well as to explore more efficient pre-training objecti ves and more general semantic alignment strategies across highly div erse domains. R E F E R E N C E S [1] C. Jin, J. Zhou, C. Xie, S. Y u, Q. Xuan, and X. Y ang, “Enhanc- ing ethereum fraud detection via generativ e and contrastive self- supervision, ” IEEE T r ansactions on Information F or ensics and Security , vol. 20, pp. 839–853, 2024. [2] B. Hu, C. Shi, W . X. Zhao, and P . S. Y u, “Leveraging meta-path based context for top-n recommendation with a neural co-attention model, ” in Pr oceedings of the 24th ACM SIGKDD international confer ence on knowledge discovery & data mining , 2018, pp. 1531–1540. [3] C. Jin, J. Jin, J. Zhou, J. W u, and Q. Xuan, “Heterogeneous feature augmentation for ponzi detection in ethereum, ” IEEE T ransactions on Cir cuits and Systems II: Express Briefs , vol. 69, no. 9, pp. 3919–3923, 2022. [4] Z. Liu, C. Chen, X. Y ang, J. Zhou, X. Li, and L. Song, “Heterogeneous graph neural netw orks for malicious account detection, ” in Pr oceedings of the 27th ACM international confer ence on information and knowledge management , 2018, pp. 2077–2085. [5] M. Schlichtkrull, T . N. Kipf, P . Bloem, R. V an Den Berg, I. T itov , and M. W elling, “Modeling relational data with graph con volutional networks, ” in European semantic web confer ence . Springer , 2018, pp. 593–607. [6] Z. Hu, Y . Dong, K. W ang, and Y . Sun, “Heterogeneous graph trans- former , ” in Pr oceedings of the web confer ence 2020 , 2020, pp. 2704– 2710. [7] Q. Zhang, X. W u, Q. Y ang, C. Zhang, and X. Zhang, “Few-shot heterogeneous graph learning via cross-domain knowledge transfer, ” in Pr oceedings of the 28th A CM SIGKDD Conference on Knowledge Discovery and Data Mining , 2022, pp. 2450–2460. [8] C. Zhang, K. Ding, J. Li, X. Zhang, Y . Y e, N. V . Chawla, and H. Liu, “Few-shot learning on graphs, ” in 31st International J oint Confer ence on Artificial Intelligence, IJCAI 2022 . International Joint Conferences on Artificial Intelligence, 2022, pp. 5662–5669. [9] Q. Dai, X.-M. Wu, J. Xiao, X. Shen, and D. W ang, “Graph transfer learning via adversarial domain adaptation with graph conv olution, ” IEEE T ransactions on Knowledg e and Data Engineering , vol. 35, no. 5, pp. 4908–4922, 2022. [10] M. Sun, K. Zhou, X. He, Y . W ang, and X. W ang, “Gppt: Graph pre-training and prompt tuning to generalize graph neural networks, ” in Pr oceedings of the 28th ACM SIGKDD confer ence on knowledge discovery and data mining , 2022, pp. 1717–1727. [11] Z. Liu, X. Y u, Y . F ang, and X. Zhang, “Graphprompt: Unifying pre- training and downstream tasks for graph neural networks, ” in Proceed- ings of the ACM web confer ence 2023 , 2023, pp. 417–428. [12] P . Jiao, J. Ni, D. Jin, X. Guo, H. Liu, H. Chen, and Y . Bi, “Hgmp: heterogeneous graph multi-task prompt learning, ” in Proceedings of the Thirty-F ourth International Joint Conference on Artificial Intelligence , 2025, pp. 2982–2990. [13] H. Liu, J. Feng, L. Kong, N. Liang, D. T ao, Y . Chen, and M. Zhang, “One for all: T ow ards training one graph model for all classification tasks, ” in 12th International Conference on Learning Repr esentations, ICLR 2024 , 2024. [14] X. Sun, H. Cheng, J. Li, B. Liu, and J. Guan, “ All in one: Multi-task prompting for graph neural networks, ” in Pr oceedings of the 29th ACM SIGKDD conference on knowledge discovery and data mining , 2023, pp. 2120–2131. [15] J. Jinghong, L. Song, J. Li, and Y . Kong, “Hepa: Heterogeneous graph prompting for all-level classification tasks, ” in Pr oceedings of the AAAI Confer ence on Artificial Intelligence , vol. 39, no. 11, 2025, pp. 11 915– 11 923. [16] X. Y u, Y . F ang, Z. Liu, and X. Zhang, “Hgprompt: Bridging homo- geneous and heterogeneous graphs for fe w-shot prompt learning, ” in Pr oceedings of the AAAI confer ence on artificial intelligence , vol. 38, no. 15, 2024, pp. 16 578–16 586. [17] J. T ang, Y . Y ang, W . W ei, L. Shi, L. Xia, D. Yin, and C. Huang, “Higpt: Heterogeneous graph language model, ” in Pr oceedings of the 30th A CM SIGKDD conference on knowledge discovery and data mining , 2024, pp. 2842–2853. [18] J. Y ang, R. W ang, C. Y ang, B. Y an, Q. Zhou, Y . Juan, and C. Shi, “Harnessing language model for cross-heterogeneity graph knowledge transfer , ” in Pr oceedings of the AAAI Confer ence on Artificial Intelli- gence , vol. 39, no. 12, 2025, pp. 13 026–13 034. [19] R. Jia, M. W u, Y . Ding, J. Lu, and Y . Zhang, “Hetgcot: Heterogeneous graph-enhanced chain-of-thought llm reasoning for academic question answering, ” in Findings of the Association for Computational Linguis- tics: EMNLP 2025 , 2025, pp. 15 950–15 963. [20] X. Y u, S. Y e, R. Liang, C. Zhou, H. Cheng, X. Zhang, and Y . Fang, “Evaluating progress in graph foundation models: A comprehensiv e benchmark and new insights, ” arXiv preprint , 2026. [21] Z. Xu, X. Chen, W . Pan, and Z. Ming, “Heterogeneous graph transfer learning for category-aw are cross-domain sequential recommendation, ” in Proceedings of the ACM on W eb Confer ence 2025 , 2025, pp. 1951– 1962. [22] X. Huang, Y . Y ang, Y . W ang, C. W ang, Z. Zhang, J. Xu, L. Chen, and M. V azirgiannis, “Dgraph: A lar ge-scale financial dataset for graph anomaly detection, ” Advances in Neural Information Pr ocessing Sys- tems , vol. 35, pp. 22 765–22 777, 2022. [23] Y . Liu, S. Pan, Y . G. W ang, F . Xiong, L. W ang, Q. Chen, and V . C. Lee, “ Anomaly detection in dynamic graphs via transformer , ” IEEE T ransactions on Knowledge and Data Engineering , vol. 35, no. 12, pp. 12 081–12 094, 2021. [24] Z. Ni, C. Liu, H. W an, and X. Zhao, “Robust heterogeneous graph classification for molecular property prediction with information bottle- neck, ” in Pr oceedings of the AAAI Confer ence on Artificial Intelligence , vol. 39, no. 1, 2025, pp. 640–648. [25] H. Zhao, A. Chen, X. Sun, H. Cheng, and J. Li, “ All in one and one for all: A simple yet effecti ve method towards cross-domain graph pretraining, ” in Proceedings of the 30th A CM SIGKDD Conference on Knowledge Discovery and Data Mining , 2024, pp. 4443–4454. [26] X. Y u, Z. Gong, C. Zhou, Y . Fang, and H. Zhang, “Samgpt: T ext-free graph foundation model for multi-domain pre-training and cross-domain adaptation, ” in Proceedings of the A CM on W eb Confer ence 2025 , 2025, pp. 1142–1153. [27] Y . Ma, N. Y an, J. Li, M. Mortazavi, and N. V . Cha wla, “Hetgpt: Harnessing the po wer of prompt tuning in pre-trained heterogeneous graph neural networks, ” in Proceedings of the ACM W eb Confer ence 2024 , 2024, pp. 1015–1023. [28] J. Zhou, C. Xie, S. Gong, Z. W en, X. Zhao, Q. Xuan, and X. Y ang, “Data augmentation on graphs: A technical survey , ” ACM Computing Surveys , v ol. 57, no. 11, pp. 1–34, 2025. [29] Q. Lv , M. Ding, Q. Liu, Y . Chen, W . Feng, S. He, C. Zhou, J. Jiang, Y . Dong, and J. T ang, “ Are we really making much progress? revisiting, benchmarking and refining heterogeneous graph neural networks, ” in Pr oceedings of the 27th ACM SIGKDD confer ence on knowledge discovery & data mining , 2021, pp. 1150–1160. [30] X. Fu, J. Zhang, Z. Meng, and I. King, “Magnn: Metapath aggregated graph neural network for heterogeneous graph embedding, ” in Pr oceed- ings of the web conference 2020 , 2020, pp. 2331–2341. [31] T . N. Kipf and M. W elling, “Semi-supervised classification with graph con volutional networks, ” in International Confer ence on Learning Rep- r esentations , 2017. [32] W . Hamilton, Z. Y ing, and J. Leskovec, “Inductive representation learning on lar ge graphs, ” Advances in Neural Information Pr ocessing Systems , vol. 30, 2017. [33] P . V eli ˇ ckovi ´ c, G. Cucurull, A. Casanova, A. Romero, P . Lio, and Y . Bengio, “Graph attention networks, ” in International Confer ence on Learning Representations , 2018, pp. 1–12. [34] X. W ang, H. Ji, C. Shi, B. W ang, Y . Y e, P . Cui, and P . S. Y u, “Hetero- geneous graph attention network, ” in The world wide web confer ence , 2019, pp. 2022–2032. [35] X. Y ang, M. Y an, S. P an, X. Y e, and D. Fan, “Simple and efficient heterogeneous graph neural network, ” in Pr oceedings of the Thirty- Seventh AAAI Confer ence on Artificial Intelligence and Thirty-F ifth Confer ence on Innovative Applications of Artificial Intelligence and Thirteenth Symposium on Educational Advances in Artificial Intelli- gence , 2023. [36] Y . Y ou, T . Chen, Y . Sui, T . Chen, Z. W ang, and Y . Shen, “Graph con- trastiv e learning with augmentations, ” Advances in neural information pr ocessing systems , vol. 33, pp. 5812–5823, 2020. [37] P . V elickovic, W . Fedus, W . L. Hamilton, P . Li ` o, Y . Bengio, R. D. Hjelm et al. , “Deep graph infomax. ” ICLR (poster) , vol. 2, no. 3, p. 4, 2019. JOURNAL OF L A T E X CLASS FILES, VOL. 14, NO. 8, A UGUST 2021 12 Xuanze Chen received the B.S. degree from W en- zhou Univ ersity , W enzhou, China, in 2023. He is currently pursuing the Ph.D. degree with the Insti- tute of Cyberspace Security , Zhejiang Univ ersity of T echnology , Hangzhou, China. His current research interests include graph data mining and graph foun- dation models. Jiajun Zhou received the Ph.D de gree in control theory and engineering from Zhejiang Uni versity of T echnology , Hangzhou, China, in 2023. He is currently a Postdoctoral Research Fellow with the Institute of Cyberspace Security , Zhejiang Uni versity of T echnology . His current research interests include graph data mining, c yberspace security and data management. Y adong Li received a BS degree from Tianjin Univ ersity of T echnology , Tianjin, China, in 2024. He is currently pursuing a Master’ s de gree in Control Science and Engineering at Zhejiang Uni versity of T echnology . His current research interests include graph neural networks and large language models. Shanqing Y u received the M.S. degree from the School of Computer Engineering and Science, Shanghai Univ ersity , China, in 2008 and received the M.S. de gree from the Graduate School of In- formation, Production and Systems, W aseda Uni- versity , Japan, in 2008, and the Ph.D. degree, in 2011, respectiv ely . She is currently a Lecturer at the Institute of Cyberspace Security and the College of Information Engineering, Zhejiang University of T echnology , Hangzhou, China. Her research inter- ests cover intelligent computation and data mining. Qi Xuan (M’18) receiv ed the BS and PhD degrees in control theory and engineering from Zhejiang Univ ersity , Hangzhou, China, in 2003 and 2008, respectiv ely . He was a Post-Doctoral Researcher with the Department of Information Science and Electronic Engineering, Zhejiang Uni versity , from 2008 to 2010, respectively , and a Research Assistant with the Department of Electronic Engineering, City Univ ersity of Hong K ong, Hong K ong, in 2010 and 2017. From 2012 to 2014, he was a Post-Doctoral Fellow with the Department of Computer Science, Univ ersity of California at Davis, CA, USA. He is a senior member of the IEEE and is currently a Professor with the Institute of Cyberspace Security , College of Information Engineering, Zhejiang University of T echnology , Hangzhou, China. His current research interests include network science, graph data mining, cyberspace security , machine learning, and computer vision.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment