Energy Score-Guided Neural Gaussian Mixture Model for Predictive Uncertainty Quantification

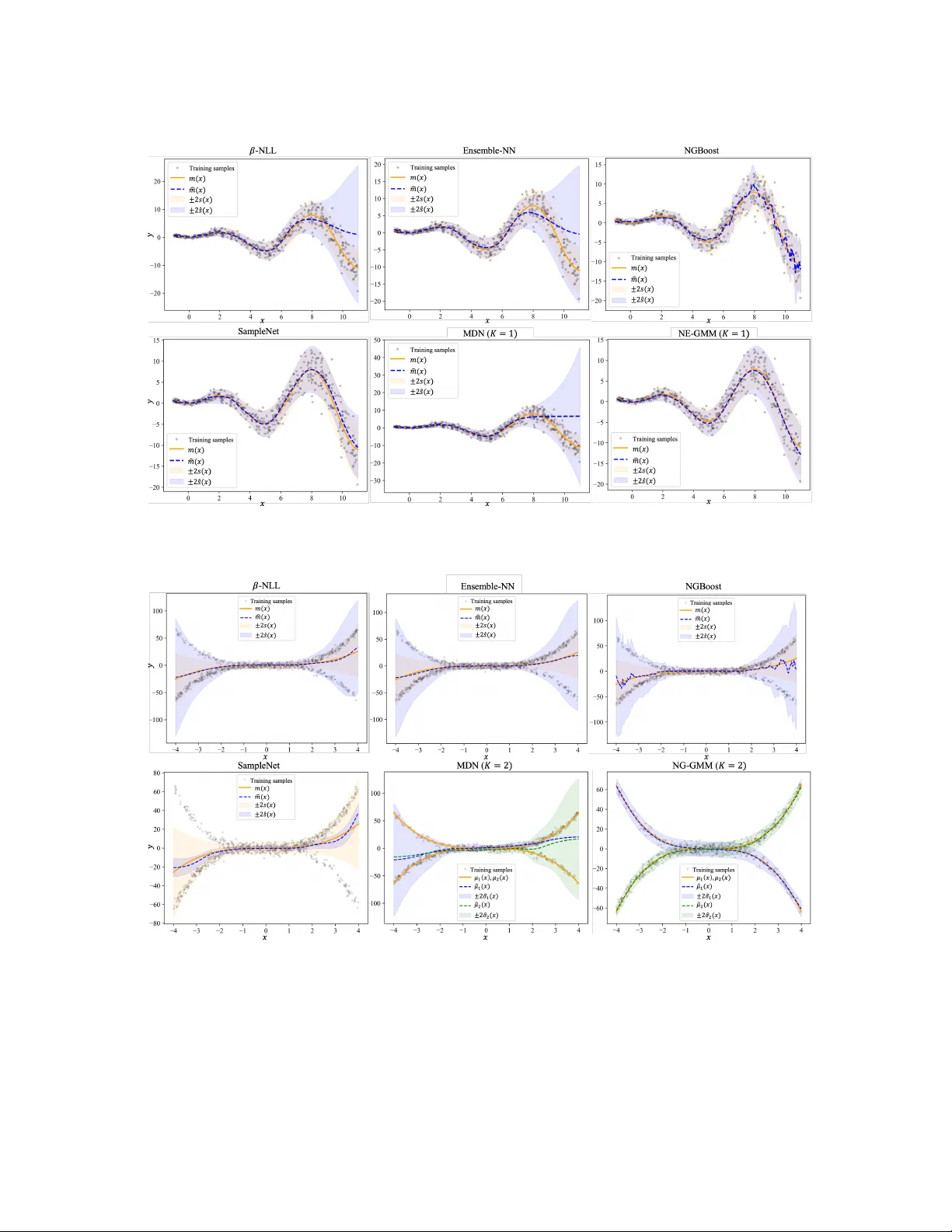

Quantifying predictive uncertainty is essential for real world machine learning applications, especially in scenarios requiring reliable and interpretable predictions. Many common parametric approaches rely on neural networks to estimate distribution…

Authors: Yang Yang, Chunlin Ji, Haoyang Li