Test-Time Instance-Specific Parameter Composition: A New Paradigm for Adaptive Generative Modeling

Existing generative models, such as diffusion and auto-regressive networks, are inherently static, relying on a fixed set of pretrained parameters to handle all inputs. In contrast, humans flexibly adapt their internal generative representations to e…

Authors: Minh-Tuan Tran, Xuan-May Le, Quan Hung Tran

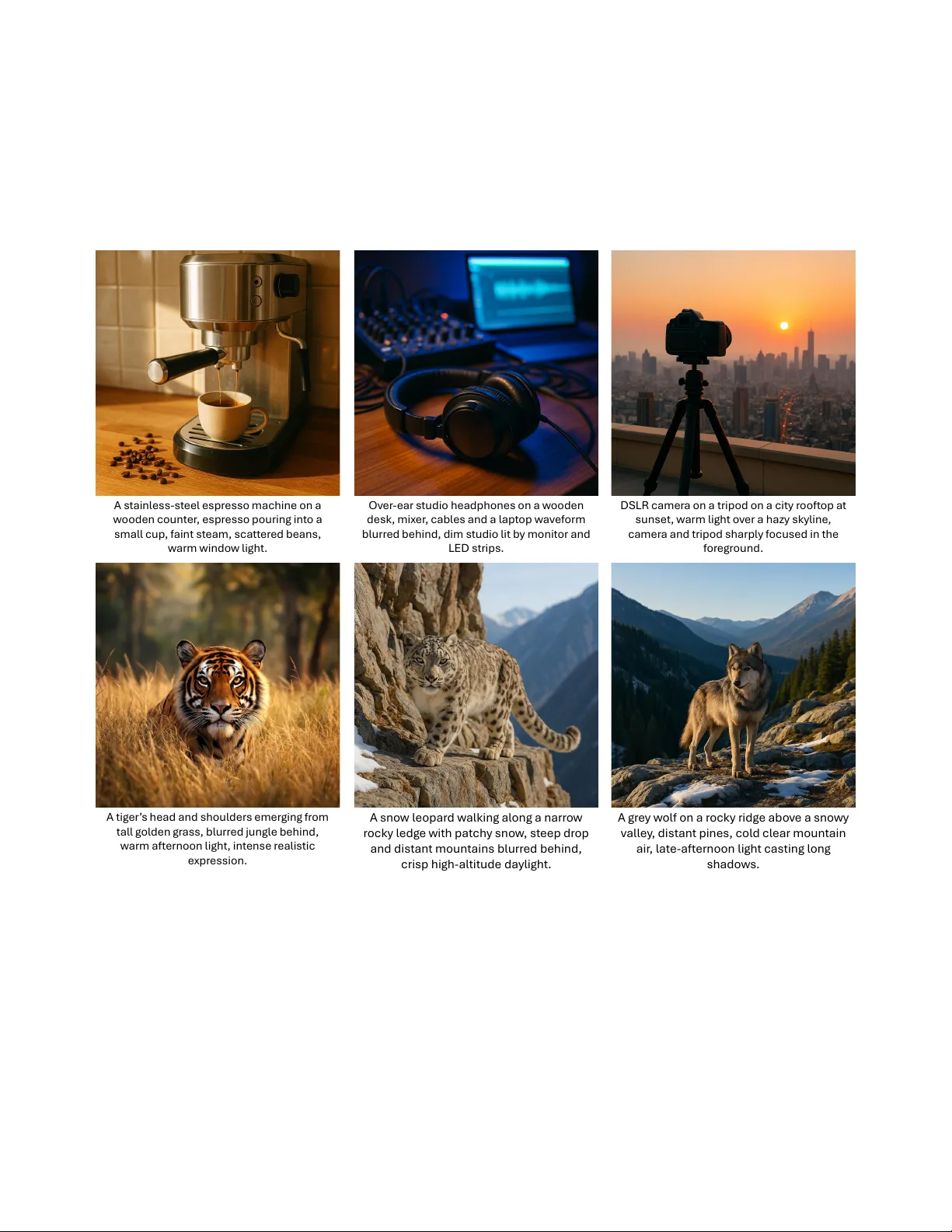

Minh-Tuan Tran¹, Xuan-May Le², Quan Hung Tran³, Mehrtash Harandi¹, Dinh Phung¹, Trung Le¹
¹Monash University, ²The University of Melbourne, ³Meta Inc
{tuan.tran7, mehrtash.harandi, dinh.phung, trunglm}@monash.edu, xuanmay.le@student.unimelb.edu.au, quanhungtran@meta.com

Abstract

Existing generative models, such as diffusion and auto-regressive networks, are inherently static, relying on a fixed set of pretrained parameters to handle all inputs. In contrast, humans flexibly adapt their internal generative representations to each perceptual or imaginative context. Inspired by this capability, we introduce Composer, a new paradigm for adaptive generative modeling based on test-time instance-specific parameter composition. Composer generates input-conditioned parameter adaptations at inference time, which are injected into the pretrained model's weights, enabling per-input specialization without fine-tuning or retraining. Adaptation occurs once prior to multi-step generation, yielding higher-quality, context-aware outputs with minimal computational and memory overhead. Experiments show that Composer substantially improves performance across diverse generative models and use cases, including lightweight/quantized models and test-time scaling. By leveraging input-aware parameter composition, Composer establishes a new paradigm for designing generative models that dynamically adapt to each input, moving beyond static parameterization. The code will be available at https://github.com/tmtuan1307/Composer.

1. Introduction

Generative models such as diffusion [24, 25, 34] and visual auto-regressive (AR) models [10, 20, 26, 27] have achieved remarkable progress in synthesizing high-fidelity and diverse images.
Despite this success, most existing models remain static: a single, fixed set of parameters must accommodate all prompts, scenes, and modalities. This rigidity fundamentally limits adaptability: while humans dynamically adjust their internal generative representations to each perceptual or imaginative context [1, 7], current generative models rely on immutable weights that cannot specialize to the nuances of each input. As a result, they often produce oversmoothed or inconsistent samples under complex or ambiguous conditions.

Figure 1. Comparison of static versus adaptive parameterization. (a) Original inference stage: the prompt and the pretrained weights $W \in \mathbb{R}^{d \times d}$ drive the iterative generating process ($X_T \to X_1$). (b) Our inference stage: Composer maps the prompt to $A \in \mathbb{R}^{d \times r}$ and $B \in \mathbb{R}^{r \times d}$, and the generated weights $AB \in \mathbb{R}^{d \times d}$ are added to the pretrained weights to form the adapted weights used by the same iterative process. Composer dynamically composes instance-specific parameter updates, allowing per-input adaptation without fine-tuning.

Recent advances in test-time training [11, 13, 14, 22] demonstrate that adapting model parameters at inference time can improve performance. While such techniques have become increasingly popular in large language models, they remain largely unexplored for image generative models, where high-resolution diffusion and VAR backbones already demand massive computational budgets, making instance-wise gradient optimization at test time prohibitively expensive. Mixture-of-Experts (MoE) architectures [3, 4, 9, 23] provide conditional computation by activating different experts per input, but their routing is coarse-grained and tied to a fixed expert pool, limiting instance-specific adaptation. In addition, they usually entail architectural changes and full retraining, rather than being applicable to off-the-shelf pretrained models.
In this work, we move beyond these limitations by endowing generative models with the ability to compose parameters dynamically for each input. Instead of iterative fine-tuning, we propose to synthesize instance-specific parameter adaptations directly from the conditioning signal through a lightweight auxiliary network. These low-rank updates are composed with the pretrained model weights, forming an adaptive configuration that better aligns with the semantics of each input.

Table 1. Comparison of average generation quality, inference time, and peak memory for class-conditional image generation on ImageNet 256 × 256. Composer improves FID while introducing negligible overhead in inference time and memory, unlike test-time training, which is significantly more expensive.

| Method | FID ↓ | Inference Time (s) | Peak Memory (GB) |
|---|---|---|---|
| Standard | 3.03 | 15.6 | 7.33 |
| Test-time Training | 2.86 | 84.24 (+540%) | 13.26 (+180%) |
| Composer | 2.77 | 15.63 (+0.2%) | 7.59 (+3.6%) |

We introduce Composer, a plug-in meta-generator that produces compact, input-conditioned parameter updates for arbitrary pretrained generators at inference time. The generated updates serve as adaptive bridges between the conditioning features and the pretrained parameters, enabling per-input specialization without additional optimization. Composer performs this parameter composition once prior to generation, introducing negligible computational overhead while significantly improving fidelity and consistency.

Our framework is model-agnostic and integrates seamlessly into various generative backbones, including diffusion and visual auto-regressive models. Empirically, Composer enhances generation quality, controllability, and context alignment across diverse settings. Furthermore, its adaptive mechanism generalizes to lightweight and quantized backbones, partially restoring generation quality while maintaining efficiency.
Our main contributions are as follows:
• We propose Composer, a plug-in framework for test-time instance-specific parameter composition, enabling pretrained generative models to adapt their weights dynamically per input at inference without architectural changes to the backbone or exhaustive retraining.
• We introduce a meta-generator transformer that maps input conditions to low-rank parameter updates, enabling efficient, context-aware adaptation while leveraging frozen pretrained weights. Moreover, we design a novel training pipeline that encourages consistent adaptation for semantically similar inputs and preserves contrast across different contexts, improving stability and generalization.
• We demonstrate that Composer consistently improves the performance of diverse generative backbones across class-conditional image generation, text-to-image synthesis, post-training quantization, and test-time scaling for diffusion models.

By enabling input-aware parameter composition, Composer advances the field toward adaptive generative modeling, where models can reconfigure their internal parameters on-the-fly, akin to how human cognition dynamically adapts to context and imagination.

Figure 2. Overview of Composer. Given any weight matrix $W$ from the backbone, Composer generates a low-rank update $W' = W + AB$ conditioned on the input. Specifically, the query and value matrices $W_Q$ and $W_V$ from the pretrained model are linearly projected from $\mathbb{R}^{d \times d}$ to $\mathbb{R}^{2r \times d_{\text{model}}}$. The projected representations are then separated to initialize tokens $A^0_i$ and $B^0_i \in \mathbb{R}^{1 \times d_{\text{model}}}$. During training, these tokens are combined with prompt tokens $P_i \in \mathbb{R}^{1 \times d_{\text{model}}}$ and processed by a transformer to produce $W^* = AB$. The adapted parameters $W' = W + W^*$ are used for generation. At inference, the first projection layers are removed, while $A^0_i$ and $B^0_i$ are stored for fast instance-specific adaptation. (Diagram components: prompt/condition, text embedding, query and value matrices, linear encoder, position embedding, transformer encoder, linear decoder, merging. The linear encoder is used in the training stage only; its outputs are stored for the inference stage.)

2. Adaptive Generative Modeling with Composer

Existing generative models, such as diffusion and auto-regressive networks, are inherently static, relying on a fixed set of pretrained parameters to handle all inputs. In contrast, humans flexibly adapt their internal representations for each perceptual or imaginative context. Inspired by this capability, we propose Composer, a new paradigm for adaptive generative modeling based on instance-specific parameter composition.

Composer generates input-conditioned parameter adaptations at inference time, which are injected into the pretrained backbone weights, enabling per-input specialization without fine-tuning or retraining. Adaptation occurs once prior to multi-step generation, producing higher-fidelity, context-aware outputs with minimal computational and memory overhead.

Figure 1 illustrates the Composer workflow, showing how instance-specific updates $W^*$ are generated, composed with the pretrained weights $W$, and used in diffusion-based generation.

2.1. Instance-Specific Parameter Composition in Generative Models

Modern generative architectures, such as diffusion and auto-regressive networks, consist of deep backbones parameterized by numerous weight matrices $W$. In diffusion models, $W$ typically represents the linear projections for the attention components (i.e., $W_Q$, $W_K$, $W_V$) or the convolutional filters within the U-Net.
In this work, we primarily apply our method to the query ($W_Q$) and value ($W_V$) projection matrices within transformer-based architectures [15, 32], as these components have proven most effective in prior fine-tuning approaches [12, 35]. We also conducted an ablation study comparing the effectiveness and efficiency of applying adapters to different combinations of $W_Q$, $W_K$, $W_V$, and $W_O$, as detailed in the Supplementary Material.

Despite their expressiveness, these weights are frozen after pretraining: the same parameters are applied to every input, regardless of content or modality. Such static usage limits contextual adaptability and often requires expensive fine-tuning or specialized adapters to achieve task-specific performance. Moreover, in LoRA [12, 35], the weight update is defined as $W' = W + AB$, where $A$ and $B$ are two low-rank matrices shared across all input data, hence limiting the ability of $A$ and $B$ to adapt to complex tasks.

To overcome this limitation, Composer reformulates the weight matrix $W$ as a composable structure that can be dynamically adapted for each input instance. We introduce two low-rank matrices $A$ and $B$ that define an instance-specific residual update:

$$W' = W + AB, \tag{1}$$

where $A \in \mathbb{R}^{d \times r}$, $B \in \mathbb{R}^{r \times d}$, and $r \ll d$. Here, $A$ and $B$ are two low-rank matrices generated by feeding the original weight matrix $W$ and an individual input (e.g., a text prompt) into Composer, serving as lightweight, learnable corrections that modulate the pretrained weights without altering their underlying knowledge. This formulation allows flexible, per-input specialization during inference, maintaining efficiency while enhancing generative fidelity.

In the following subsection, we describe how Composer leverages a transformer-based generator to produce these instance-specific low-rank matrices conditioned on contextual signals such as prompts or class embeddings.

2.2. Transformer-Based Parameter Generation

Composer generates instance-specific parameter updates $W'$ using a low-rank decomposition:

$$W' = W + AB, \quad A \in \mathbb{R}^{d \times r}, \; B \in \mathbb{R}^{r \times d}, \tag{2}$$

where $d$ denotes the dimension of the backbone weight matrix, and $r$ denotes the rank of the update ($r \ll d$). We view each of $A$ and $B$ as a sequence of $r$ tokens, where each token has a dimension of $d \times 1$.

To enable fast inference while capturing correlations across weight matrices, we generate the instance-specific parameters with a transformer-based model. The architecture of our parameter generator is illustrated in Figure 2.

Token Initialization. During training, each token of $A$ and $B$ is initialized from the pretrained weight matrices of the pretrained transformer (e.g., $W_Q$ and $W_V$ with a dimension of $d \times d$) via a linear projection:

$$[A^0_{Q_1}, \ldots, A^0_{Q_r}, B^0_{Q_1}, \ldots, B^0_{Q_r}] = \mathrm{Linear}(W_Q), \tag{3}$$

$$[A^0_{V_1}, \ldots, A^0_{V_r}, B^0_{V_1}, \ldots, B^0_{V_r}] = \mathrm{Linear}(W_V), \tag{4}$$

where $\mathrm{Linear}(\cdot)$ maps $\mathbb{R}^{d \times d}$ to $\mathbb{R}^{2r \times d_{\text{model}}}$, producing $r$ tokens for $A$ and $r$ for $B$, each in $\mathbb{R}^{1 \times d_{\text{model}}}$. This initialization leverages pretrained knowledge, providing a strong starting point for learning. Each resulting vector forms a token:

$$A^0_Q = \{A^0_{Q_1}, \ldots, A^0_{Q_r}\}, \quad B^0_Q = \{B^0_{Q_1}, \ldots, B^0_{Q_r}\}, \quad A^0_V = \{A^0_{V_1}, \ldots, A^0_{V_r}\}, \quad B^0_V = \{B^0_{V_1}, \ldots, B^0_{V_r}\}. \tag{5}$$

During inference, the linear projection is removed, and the learned $A^0_{Q|V}$ and $B^0_{Q|V}$ tokens are stored for efficient reuse.

Positional Embedding and Prompt Conditioning. Learned positional embeddings are added to each token:

$$A^1_{Q_i} = A^0_{Q_i} + PE(A^0_{Q_i}), \quad B^1_{Q_i} = B^0_{Q_i} + PE(B^0_{Q_i}), \quad A^1_{V_i} = A^0_{V_i} + PE(A^0_{V_i}), \quad B^1_{V_i} = B^0_{V_i} + PE(B^0_{V_i}), \tag{6}$$

and concatenated with input prompt tokens $P = [P_1, \ldots, P_m]$ during training:

$$X = [P_1, \ldots, P_m, A^1_{Q_{1:r}}, B^1_{Q_{1:r}}, A^1_{V_{1:r}}, B^1_{V_{1:r}}]. \tag{7}$$
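The concatenation order in Eq. (7) can be pictured with a small bookkeeping sketch. The helper below only records which positions of $X$ hold prompt tokens and which hold the $r$ tokens of each of $A_Q$, $B_Q$, $A_V$, $B_V$. The function name `sequence_layout` and the block labels are illustrative assumptions, not part of the paper, and the sketch says nothing about how the tokens themselves are computed.

```python
from typing import Dict


def sequence_layout(m: int, r: int) -> Dict[str, range]:
    """Index range of each block in X = [P, A_Q, B_Q, A_V, B_V].

    m: number of prompt tokens; r: rank of the update (tokens per block).
    Hypothetical helper, for illustration only.
    """
    if m < 0 or r <= 0:
        raise ValueError("need m >= 0 and r > 0")
    layout = {"prompt": range(0, m)}
    start = m
    # Component blocks follow the prompt in the order used in Eq. (7).
    for name in ("A_Q", "B_Q", "A_V", "B_V"):
        layout[name] = range(start, start + r)
        start += r
    return layout


layout = sequence_layout(m=3, r=8)
total_len = max(block.stop for block in layout.values())
print(total_len)            # sequence length m + 4r
print(list(layout["A_Q"]))  # positions occupied by the A_Q tokens
```

With $m$ prompt tokens and rank $r$, the sequence has $m + 4r$ positions, so the generator's input length grows with the rank and the prompt length rather than with the backbone width $d$.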
Including prompt tokens allows the transformer to condition parameter generation on input context such as textual or semantic cues.

Transformer Architecture. Composer employs an encoder-style transformer with multi-head attention and feed-forward layers. Attention operates according to the following scheme, as illustrated in Figure 3:
• All component tokens attend to every prompt token to ensure context-aware adaptation.
• Local block-wise attention among component tokens reduces computational cost while preserving intra-block coherence.
• The first token from each block attends across blocks to capture inter-block correlations.

Figure 3. Illustration of the attention scheme (panels: Local Block-wise Attention; Global First Token Attention). Component tokens attend to prompt tokens for context, maintain local block-wise attention, and the first token of each block captures inter-block correlations.

After processing, the output embeddings at the $L$-th layer corresponding to the $A^L_Q, B^L_Q$ and $A^L_V, B^L_V$ tokens are reshaped to reconstruct the low-rank matrices $A_Q, B_Q$ and $A_V, B_V$, yielding the instance-specific update:

$$W'_Q = W_Q + A_Q B_Q \quad \text{and} \quad W'_V = W_V + A_V B_V. \tag{8}$$

2.3. Weight Composition: Training vs. Inference

Training Stage. During training, Composer generates instance-specific low-rank matrices $A$ and $B$ conditioned on the prompt $P$:

$$A, B = \mathrm{Composer}(A^0, B^0, P), \tag{9}$$

which define the update $W' = W + AB$. The model output is computed as:

$$h = Wx + ABx, \tag{10}$$

allowing gradients to propagate through $A$ and $B$ while preserving the original pretrained behavior of $W$. Prompt tokens and positional embeddings provide contextual conditioning during training.

Inference Stage. At inference, the learned tokens $A^0$ and $B^0$ are used to generate the low-rank updates, which are merged with the pretrained weight:

$$A, B = \mathrm{Composer}(A^0, B^0, P), \tag{11}$$

$$W' = W + AB, \quad h = W'x, \tag{12}$$

enabling efficient, per-input, context-aware generation in a single forward pass.

2.4. Context-Aware Training Pipeline for Composer

To train Composer effectively, we introduce a context-aware training strategy that balances consistency and diversity in instance-specific adaptations. We organize this discussion into four parts.

i. Vanilla Training. Standard approaches for training generative models randomly collect (image, text) or (image, class) pairs from the dataset. While straightforward, this random sampling does not explicitly enforce semantic relationships, which can lead to inconsistent generation across similar inputs and unstable adaptations in instance-specific weights.

ii. Full-Class or Similar-Prompt Sampling. An improvement over random sampling is to select a full set of images from a single class or all samples with semantically similar prompts for each batch. This strategy encourages consistent adaptations for inputs sharing the same label or prompt context. However, focusing solely on one class or prompt type can reduce diversity and increase the risk of mode collapse across the dataset.

iii. Context-Aware Pipeline for Class-Conditioned Image Generation. Our approach combines the benefits of consistency and diversity by splitting each batch of size $b$ based on a parameter $\alpha \in [0, 1]$:
• $\alpha \times b$ images are sampled from the same class or with semantically close prompts to enforce consistent instance-specific adaptations.
• $(1 - \alpha) \times b$ images are sampled from different classes or distant contexts to ensure outputs remain distinct.

For a batch of images $x$ with conditioning signals $P$, the adapted weights are generated as:

$$W' = W + AB, \quad A, B = \mathrm{Composer}(A^0, B^0, P). \tag{13}$$
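The batch split described in part iii can be sketched as follows. This is a minimal illustration rather than the authors' sampler: the function name `context_aware_batch`, the flat `labels` list, rounding $\alpha \times b$ to the nearest integer, and sampling without replacement are all assumptions made for the example.

```python
import random
from collections import defaultdict
from typing import List, Optional, Sequence


def context_aware_batch(labels: Sequence[int], batch_size: int, alpha: float,
                        rng: Optional[random.Random] = None) -> List[int]:
    """Pick dataset indices: about alpha*b from one anchor class, the rest from other classes."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    rng = rng or random.Random()

    # Group dataset indices by class label.
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    n_same = round(alpha * batch_size)   # assumption: Python's round-to-nearest
    n_other = batch_size - n_same

    # The anchor class must be large enough to supply the "consistent" part.
    candidates = [c for c, idxs in by_class.items() if len(idxs) >= n_same]
    if not candidates:
        raise ValueError("no class has enough samples for the same-class part")
    anchor = rng.choice(candidates)
    same = rng.sample(by_class[anchor], n_same)

    # The "diverse" part is drawn from every other class.
    others = [i for c, idxs in by_class.items() if c != anchor for i in idxs]
    if len(others) < n_other:
        raise ValueError("not enough samples outside the anchor class")
    return same + rng.sample(others, n_other)


labels = [i % 10 for i in range(200)]  # toy dataset: 10 classes, 20 samples each
batch = context_aware_batch(labels, batch_size=8, alpha=0.75, rng=random.Random(0))
print([labels[i] for i in batch])      # first 6 share a class, last 2 do not
```

Setting `alpha=0.0` in this sketch gives a batch with no enforced same-class group, while `alpha=1.0` reduces to full-class sampling as in part ii.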
The model is trained with the standard diffusion objective:

$$\mathcal{L}_{\text{diffusion}} = \mathbb{E}_{x, \epsilon, t} \left\| \epsilon - \epsilon_\theta(x_t, t; W', P) \right\|_2^2, \tag{14}$$

where $x_t = \sqrt{\gamma_t}\, x + \sqrt{1 - \gamma_t}\, \epsilon$ and $\epsilon \sim \mathcal{N}(0, I)$. This structured pipeline balances consistency among related inputs with diversity across contrasting contexts, and outperforms vanilla random-pair training.

iv. Context-Aware Pipeline for Text-to-Image Generation. For text-to-image tasks, we further refine batch selection by measuring semantic similarity in a learned embedding space (e.g., CLIP embeddings).
• For each input, we select the top $\alpha \times b$ most similar images in embedding space to encourage consistent adaptations.
• The remaining $(1 - \alpha) \times b$ samples are drawn from distant embeddings to enforce diversity.

This dynamic similarity sampling captures semantic relationships beyond exact class labels or prompt overlap, improving the contextual coherence of instance-specific adaptations while preserving output diversity.

3. Further Adaptation

3.1. Composer for Post-Training Quantization

Efficient machine learning [6, 16, 17, 28 ?–31] plays a key role in enabling modern deep models to be deployed under limited memory and computation budgets. As a further application of our proposed method, Composer can be extended to post-training quantization (PTQ) of generative models [2, 5, 16–18, 33]. In quantized models, both weights and activations are reduced to low-precision formats (e.g., INT8 or INT4), which can introduce significant approximation errors and degrade generative fidelity. To address this, we propose a quantization-aware Composer, where the low-rank updates and the Composer weights themselves are trained in the same low-precision format as the backbone.
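To make the source of the approximation error concrete, the sketch below applies symmetric uniform fake-quantization to a toy weight vector at several bitwidths and reports the worst-case rounding error. The quantizer is an assumption chosen for illustration (the text does not specify the scheme used by the quantized backbones), and `fake_quantize` and the toy weights are hypothetical.

```python
from typing import List


def fake_quantize(values: List[float], bits: int) -> List[float]:
    """Symmetric uniform quantization to `bits` bits, followed by dequantization."""
    if bits < 2:
        raise ValueError("need at least 2 bits for a signed symmetric grid")
    qmax = 2 ** (bits - 1) - 1              # 127 for 8 bits, 7 for 4 bits, 1 for 2 bits
    max_abs = max(abs(v) for v in values)
    if max_abs == 0.0:
        return list(values)                 # nothing to quantize
    scale = max_abs / qmax                  # step size of the integer grid
    out = []
    for v in values:
        code = max(-qmax, min(qmax, round(v / scale)))  # clamp to the integer range
        out.append(code * scale)            # map the integer code back to a float
    return out


weights = [0.91, -0.42, 0.07, -0.88, 0.33, 0.005, -0.61, 0.19]  # toy values
for bits in (8, 4, 2):
    deq = fake_quantize(weights, bits)
    err = max(abs(w - d) for w, d in zip(weights, deq))
    print(f"{bits}-bit: max abs error = {err:.4f}")
```

In this toy setting the error is bounded by half the grid step, so it grows quickly as the bitwidth drops; this is the kind of degradation that an input-dependent low-rank update is intended to offset.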
For a quantized backbone weight $W_q$ and full-precision activations $x$, Composer generates instance-specific low-rank updates $A$ and $B$ and a learnable activation scaling factor $\gamma$:

$$W'_q = W_q + AB, \tag{15}$$

$$x'_q = \mathrm{Quantize}(\gamma \cdot x), \tag{16}$$

$$h_q = W'_q x'_q, \tag{17}$$

where $x'_q$ is the scaled and quantized activation, and $h_q$ is the resulting hidden representation. The updates $(A, B, \gamma)$ are generated from the stored tokens $(A^0, B^0, \gamma^0)$ conditioned on the input context $P$:

$$[A, B, \gamma] = \mathrm{Composer}(A^0, B^0, \gamma^0, P). \tag{18}$$

Training Composer in a quantization-aware manner ensures that the low-rank updates are effective under the low-precision setting, enabling input-dependent compensation for quantization errors. We adopt a knowledge distillation loss from the full-precision teacher model:

$$\mathcal{L}_{\text{KD}} = \| h - h_q \|_2^2, \tag{19}$$

where $h = Wx$ is the teacher output and $h_q$ is the output of the quantized backbone with Composer updates.

This framework allows Composer to restore the fidelity lost due to both weight and activation quantization, while keeping memory and computation overhead low, and requiring no retraining of the original quantized model. By training Composer in the same low-precision format as the backbone, we ensure that it is fully compatible and effective in practical quantized deployment scenarios.

3.2. Composer for Test-Time Scaling in Diffusion Models

Another practical application of Composer is test-time scaling for diffusion-based generative models [8, 19, 21, 27]. In this setting, the pretrained backbone weights remain fixed, and Composer is used to generate input-conditioned low-rank updates at inference time, allowing adaptive scaling of the model's behavior for each instance.

In contrast to quantization-aware training, here Composer is applied post hoc at test time, without retraining the backbone or the Composer weights.
This enables instance-specific adaptation and scaling during inference, providing improved flexibility and higher-fidelity outputs in multi-step diffusion generation.

Moreover, Composer can further enhance test-time scaling by producing refined, input-dependent parameter updates, effectively compensating for limitations of naive scaling strategies. By leveraging these adaptive updates, the model can dynamically adjust its internal representations for each input, improving output quality and main

4. Experiments

We evaluate Composer across multiple generative modeling scenarios to demonstrate its effectiveness in instance-specific adaptation, computational efficiency, and high-fidelity generation. Our experiments cover both class-conditioned and text-to-image generation, as well as practical applications in quantization-aware and test-time scaling settings.

Experimental Setup: We compare our method with standard static generative models. To further demonstrate the benefits of Composer, we also conduct experiments using standard test-time training for generative models, where data similar to the input is randomly selected and the model is fine-tuned (using the same low-rank dimension $r$ as in our method) before generating images. In all experiments, we set the low-rank dimension to $r = 8$ and the context-aware sampling ratio to $\alpha = 0.75$. All models are trained on NVIDIA A100 80GB GPUs. Training uses the AdamW optimizer with a weight decay of 0.05 and a learning rate of 1e-4, for 50 epochs in all experiments. For evaluation, FID and IS (Inception Score) are employed for class-conditional image generation, whereas GenEval is used for text-to-image generation.

4.1. Class-Conditional Image Generation

Datasets: We use the ImageNet dataset for class-conditional image generation at resolutions of 256 × 256 and 512 × 512.
All models are evaluated on the standard ImageNet validation set.

Backbone Models: We consider various generative model architectures including VAR (d-16, d-24, d-30) and DiT (L/2, XL/2). For each backbone, we compare three approaches: (i) Standard, the baseline generative model; (ii) Test-time Training (TTT), where the model is fine-tuned on input-specific data at inference; and (iii) Composer, our proposed framework that dynamically composes instance-specific parameters.

Table 2. Comparison of different generative models and methods in class-conditional image generation on ImageNet 256 × 256. Inference time shows absolute and percentage increase relative to Standard.

| Backbone | Method | FID | IS | Pre | Rec | Step | Parameter | Time (s) | Memory |
|---|---|---|---|---|---|---|---|---|---|
| VAR d-16 [27] | Standard | 3.55 | 274.4 | 0.84 | 0.51 | 10 | 310M | 0.4 | 2.37G |
| VAR d-16 [27] | Test-time Training [14] | 3.22 | 277.2 | 0.84 | 0.53 | 10 | 310M | 40.52 (+10,030%) | 4.58G |
| VAR d-16 [27] | Composer | 3.15 | 280.4 | 0.85 | 0.53 | 10 | 412M | 0.42 (+5%) | 2.57G |
| VAR d-24 [27] | Standard | 2.33 | 312.9 | 0.82 | 0.59 | 10 | 1.0B | 0.60 | 8.21G |
| VAR d-24 [27] | Test-time Training [14] | 2.15 | 317.3 | 0.8 | 0.64 | 10 | 1.0B | 75.84 (+12,540%) | 14.41G |
| VAR d-24 [27] | Composer | 2.08 | 319.5 | 0.83 | 0.64 | 10 | 1.15B | 0.63 (+5%) | 8.51G |
| VAR d-30 [27] | Standard | 1.97 | 323.1 | 0.82 | 0.59 | 10 | 2.0B | 1 | 16.57G |
| VAR d-30 [27] | Test-time Training [14] | 1.85 | 327.7 | 0.81 | 0.62 | 10 | 2.0B | 112.37 (+11,137%) | 28.41G |
| VAR d-30 [27] | Composer | 1.79 | 330.4 | 0.83 | 0.63 | 10 | 2.2B | 1.07 (+7%) | 16.97G |
| DiT-L/2 [24] | Standard | 5.02 | 167.2 | 0.75 | 0.57 | 250 | 458M | 31 | 3.4G |
| DiT-L/2 [24] | Test-time Training [14] | 4.55 | 185.0 | 0.74 | 0.6 | 250 | 458M | 75.21 (+142%) | 6.8G |
| DiT-L/2 [24] | Composer | 4.41 | 192.4 | 0.76 | 0.59 | 250 | 560M | 31.02 (+0.06%) | 3.5G |
| DiT-XL/2 [24] | Standard | 2.27 | 278.2 | 0.83 | 0.57 | 250 | 675M | 45 | 6.1G |
| DiT-XL/2 [24] | Test-time Training [14] | 2.12 | 280.0 | 0.82 | 0.60 | 250 | 675M | 117.24 (+160%) | 12.1G |
| DiT-XL/2 [24] | Composer | 2.06 | 285.6 | 0.84 | 0.58 | 250 | 825M | 45.03 (+0.07%) | 6.4G |

Table 3. Comparison in ImageNet 512 × 512.

| Backbone | Method | FID | IS | Time (s) | Memory |
|---|---|---|---|---|---|
| VAR d-36 [27] | Standard | 2.63 | 303.2 | 1.00 | 21.56G |
| VAR d-36 [27] | Test-time Training | 2.54 | 305.4 | 112.37 (+11,100%) | 32.45G |
| VAR d-36 [27] | Composer | 2.51 | 305.7 | 1.07 (+7%) | 21.96G |
| DiT-XL/2 [24] | Standard | 3.04 | 240.8 | 81.00 | 8.3G |
| DiT-XL/2 [24] | Test-time Training | 2.87 | 245.2 | 152.24 (+87%) | 14.3G |
| DiT-XL/2 [24] | Composer | 2.81 | 248.1 | 81.03 (+0.04%) | 8.5G |

Discussion: Table 2 reports results on ImageNet 256 × 256, showing that Composer consistently outperforms both Standard and Test-time Training (TTT) across all model scales. For instance, on VAR d-16, Composer reduces FID from 3.55 (Standard) and 3.22 (TTT) to 3.15, while improving IS and maintaining precision and recall. Similar trends hold for larger backbones (VAR d-24/d-30, DiT-L/2, DiT-XL/2), confirming Composer's ability to produce more realistic and diverse generations.

In addition, Composer incurs negligible computational cost. As shown in Table 2, peak inference memory and runtime remain comparable to Standard, while TTT is significantly more expensive due to repeated fine-tuning. For example, on VAR d-30, Composer requires only 1.07s and 16.97G, compared to TTT's 112.37s and 28.41G.

Table 3 extends the comparison to 512 × 512 resolution and larger models (VAR d-36, DiT-XL/2). Composer again achieves the best trade-off between quality and efficiency, improving FID to 2.51 on VAR d-36 and 2.81 on DiT-XL/2, while keeping inference cost nearly identical to Standard.

Overall, Composer delivers consistent gains in generation quality with minimal overhead, establishing it as a scalable and efficient framework for high-fidelity class-conditional image generation.

Computational and Memory Analysis: We evaluate the efficiency of Composer in terms of inference time and peak memory, comparing it to Standard and Test-time Training (TTT).
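The percentage overheads reported alongside the timings are relative increases over the Standard baseline. As a quick check of that arithmetic, the snippet below recomputes them for the VAR d-16 row of Table 2. `relative_increase` is a hypothetical helper introduced for this example; the inputs are the table values.

```python
def relative_increase(baseline: float, value: float) -> float:
    """Percentage increase of `value` over `baseline`."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return 100.0 * (value - baseline) / baseline


# VAR d-16, ImageNet 256x256 (Table 2): (inference time in s, peak memory in GB)
standard = (0.40, 2.37)
rows = {
    "Test-time Training": (40.52, 4.58),
    "Composer": (0.42, 2.57),
}

for method, (time_s, mem_gb) in rows.items():
    dt = relative_increase(standard[0], time_s)
    dm = relative_increase(standard[1], mem_gb)
    print(f"{method}: time +{dt:.0f}%, memory +{dm:.1f}%")
```

The same helper can be applied to any other row of Tables 2 and 3 to compare the reported overheads across backbones.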
As reported in Tables 2 and 3, Composer introduces negligible overhead while consistently improving generation quality. For instance, on VAR d-16 (256 × 256), Composer increases inference time from 0.40s to 0.42s (+5%) and peak memory from 2.37G to 2.57G (+8.4%), whereas TTT requires 40.52s (+10,030%) and 4.58G (+93%).

This efficiency is maintained across all backbones and resolutions. On larger models such as VAR d-30 and DiT-XL/2, Composer keeps inference time and memory nearly identical to Standard (e.g., VAR d-30: 1.07s, 16.97G vs. Standard 1.00s, 16.57G), while TTT incurs significant computational cost due to per-instance gradient updates. Similarly, at 512 × 512 resolution, Composer achieves improved FID (e.g., VAR d-36: 2.51) with only minor increases in time and memory, whereas TTT consumes over 100× more time and nearly 50% more memory.

These results highlight that Composer's instance-specific parameter composition is highly efficient: low-rank updates generated by a lightweight meta-generator enable adaptive inference without iterative optimization or large memory duplication. Consequently, Composer is a practical solution for high-resolution and large-scale generative models, combining quality improvements with scalability.

4.2. Text-to-Image Generation

Table 4. Comparison of text-to-image generation performance on Stable Diffusion 2.1 (SD2.1) using FID-30K and CLIP-30K metrics.

| Backbone | Method | FID-30K | CLIP-30K | Time (s) | Memory |
|---|---|---|---|---|---|
| SD2.1 [25] | Standard | 13.45 | 0.30 | 1.00 | 8G |
| SD2.1 [25] | Test-time Training | 13.15 | 0.31 | 41.52 (+4,000%) | 55G |
| SD2.1 [25] | Composer | 13.07 | 0.32 | 1.02 (+2%) | 8.4G |

Datasets: We evaluate our method on the MS-COCO 2014 dataset, following the standard 30K prompt evaluation protocol used in prior works. The metrics include FID-30K and CLIP-30K, which assess image fidelity and text–image semantic alignment, respectively.

Discussion: Table 4 summarizes the quantitative results on SD2.1.
Composer consistently improves generation quality over both Standard and TTT. Specifically, it reduces FID-30K from 13.45 (Standard) and 13.15 (TTT) to 13.07, while increasing CLIP-30K from 0.30 to 0.32, indicating better text–image correspondence and overall fidelity.

In terms of efficiency, Composer remains nearly as lightweight as the Standard model, requiring only 1.02s inference time and 8.4G peak memory, far lower than TTT, which demands 41.52s and 55G.

Overall, these results demonstrate that Composer generalizes effectively to text-to-image generation, delivering higher semantic alignment and visual quality with minimal computational overhead, making it a practical framework for adaptive, efficient diffusion-based image synthesis.

4.3. Composer for Quantized Diffusion Models

Datasets and Setting: We evaluate Composer on COCO 2014 for diffusion models under post-training quantization. Bitwidths for weights and activations (W/A) vary across experiments, including 32/32 (full precision), 4/8, and 2/8. We compare standard quantized backbones (Q-Diffusion and CTEC) with their Composer-augmented counterparts. Metrics include Inception Score (IS), FID, sFID, and Precision (%).

Table 5. Quantized diffusion model performance with and without Composer. W/A denotes weight/activation bitwidth. Metrics include IS, FID, sFID, and Precision.

| Bitwidth (W/A) | Method | IS | FID | sFID | Precision (%) |
|---|---|---|---|---|---|
| 32/32 | Full Precision | 364.73 | 11.28 | 7.7 | 93.66 |
| 4/8 | Q-Diffusion [17] | 336.8 | 9.29 | 9.29 | 91.06 |
| 4/8 | + Composer | 347.4 | 8.95 | 8.92 | 92.35 |
| 4/8 | CTEC [18] | 355.62 | 8.52 | 7.31 | 93.81 |
| 4/8 | + Composer | 359.2 | 8.25 | 7.11 | 94.15 |
| 2/8 | Q-Diffusion [17] | 49.08 | 43.36 | 17.15 | 43.18 |
| 2/8 | + Composer | 78.21 | 35.26 | 14.5 | 55.2 |
| 2/8 | CTEC [18] | 176.37 | 7.43 | 7.98 | 80.2 |
| 2/8 | + Composer | 191.74 | 7.11 | 7.45 | 82.5 |

Discussion: Table 5 shows that Composer consistently improves generative quality and stability under quantization.
For 4/8-bit models, Composer increases IS and reduces FID and sFID for both Q-Diffusion and CTEC, while slightly improving Precision. For extreme 2/8 quantization, Composer provides dramatic improvements: Q-Diffusion + Composer increases IS from 49.08 to 78.21 and Precision from 43.18% to 55.2%, while reducing FID and sFID substantially. CTEC + Composer shows similar gains, demonstrating that Composer effectively compensates for quantization-induced degradation.

Overall, these results highlight that Composer enables high-fidelity, quantization-aware generation, restoring quality while maintaining computational efficiency, and is effective across different backbone architectures and extreme low-precision settings.

Figure 4. Impact of low-rank dimension $r$ and context-aware sampling parameter $\alpha$ on ImageNet 256 × 256 class-conditional image generation. (a) Inception Score (IS) vs. $r$, (b) FID vs. $r$, (c) IS vs. $\alpha$, (d) FID vs. $\alpha$. Higher IS and lower FID indicate better generation quality.

4.4. Composer for Test-Time Scaling

Table 6. Comparison of SD2.1 variants on COCO 2014 (30K samples). Metrics include FID, CLIP similarity, inference time (s), and peak GPU memory.

| Method | FID-30K | CLIP-30K | Time (s) | Memory |
|---|---|---|---|---|
| SD2.1 [25] | 13.45 | 0.30 | 1 | 8G |
| + Composer | 13.07 | 0.32 | 1.02 (+2%) | 8.4G (+5%) |
| + ORM [8] | 13.15 | 0.33 | 20 | 8G |
| + ORM + Composer | 12.87 | 0.34 | 20.02 (+0.1%) | 8.4G (+5%) |
| + PARM [8] | 13.07 | 0.33 | 20 | 8G |
| + PARM + Composer | 12.82 | 0.34 | 20.02 (+0.1%) | 8.4G (+5%) |

Table 6 shows that Composer consistently improves generation quality across all settings. For the baseline SD2.1, Composer reduces FID from 13.45 to 13.07 and increases CLIP from 0.30 to 0.32, with minimal overhead (+2% runtime, +5% memory).
When applied on top of ORM [8] and PARM [8], Composer further improves FID and CLIP: SD2.1 + ORM + Composer achieves FID 12.87 and CLIP 0.34, while SD2.1 + PARM + Composer reaches FID 12.82 and CLIP 0.34, with a negligible increase in inference cost. Overall, these results demonstrate that Composer reliably enhances generation quality and semantic alignment for SD2.1, while maintaining efficiency across different model augmentations.

4.5. Ablation Studies

Low-rank Dimension r: We evaluate the effect of the low-rank dimension r on class-conditional image generation using ImageNet 256×256. Figures 4(a-b) show IS and FID, respectively, for r ∈ {4, 8, 16, 32}. Increasing r generally improves IS while slightly reducing FID, indicating that a larger low-rank dimension enhances both fidelity and diversity of generated images.

Context-aware Sampling Parameter α: We further analyze the impact of the context-aware sampling parameter α on generation quality. Figures 4(c-d) show IS and FID for α values from 0.0 to 1.0. Moderate values of α (around 0.5–0.75) achieve the best balance between IS and FID, suggesting that context-aware sampling improves both realism and diversity without overfitting to context-specific prompts.

Standard vs Global-Local Attention. We evaluate the impact of different attention mechanisms on class-conditional image generation for VAR d-16 and VAR d-24. The attention variants considered are Standard Attention, Local Attention, and Global-Local Attention (first block global, rest local). Table 7 reports FID and IS for Standard and Global-Local Attention. Global-Local Attention achieves the lower FID and higher IS on both VAR d-16 and VAR d-24, indicating that combining global and local context improves generative quality over standard attention.

Table 7. FID and IS comparison of attention mechanisms on VAR d-16 and VAR d-24 for ImageNet 256×256.

| Attention | VAR d-16 FID ↓ | VAR d-16 IS ↑ | VAR d-24 FID ↓ | VAR d-24 IS ↑ |
|---|---|---|---|---|
| Standard Attention | 3.55 | 274.4 | 2.33 | 312.9 |
| Global-Local Attention | 3.15 | 280.4 | 2.08 | 319.5 |

Comparing Our Transformer-Based Generator vs Other Architectures. We evaluate the impact of different generator architectures on class-conditional image generation for VAR d-16 and VAR d-24. Architectures include CNN-based, MLP-based, and Transformer-based generators. Table 8 reports FID and IS for each setup. The Transformer-based generator outperforms the CNN- and MLP-based architectures on both VAR d-16 and VAR d-24, achieving the lowest FID and highest IS. This indicates that Transformers are more effective at modeling complex image distributions, producing more realistic and diverse class-conditional images for ImageNet 256×256.

Table 8. FID and IS comparison of different generator architectures on VAR d-16 and VAR d-24 for ImageNet 256×256.

| Architecture | VAR d-16 FID ↓ | VAR d-16 IS ↑ | VAR d-24 FID ↓ | VAR d-24 IS ↑ |
|---|---|---|---|---|
| CNN-based Generator | 3.35 | 276.1 | 2.18 | 315.2 |
| MLP-based Generator | 3.32 | 275.4 | 2.21 | 315.0 |
| Transformer-based Generator | 3.15 | 280.4 | 2.08 | 319.5 |

Comparing Different Training and Sampling Pipelines. Tables 9 and 10 report the performance of different training and sampling pipelines in class-conditional and text-to-image settings, respectively. For ImageNet 256×256, Table 9 shows that the Context-Aware Pipeline outperforms both Vanilla Training and Full-Class on VAR d-16 and VAR d-24, achieving the lowest FID (3.15 and 2.08) and highest IS (280.4 and 319.5).
In the text-to-image setting on COCO 2014 (Table 10), the Context-Aware Pipeline using Composer achieves the best FID-30K and CLIP-30K scores, outperforming both Vanilla Training and Similar-Prompt Sampling. This shows that Composer improves generation quality and text–image alignment while remaining efficient.

Table 9. FID and IS comparison of different training/sampling pipelines on VAR d-16 and VAR d-24 for ImageNet 256×256.

| Pipeline | VAR d-16 FID ↓ | VAR d-16 IS ↑ | VAR d-24 FID ↓ | VAR d-24 IS ↑ |
|---|---|---|---|---|
| Vanilla Training | 3.55 | 274.4 | 2.33 | 312.9 |
| Full-Class | 3.28 | 277.6 | 2.18 | 316.5 |
| Context-Aware Pipeline | 3.15 | 280.4 | 2.08 | 319.5 |

Table 10. Comparison of text-to-image generation pipelines on SD2.1 using COCO 2014.

| Method | FID-30K ↓ | CLIP-30K ↑ |
|---|---|---|
| Vanilla Training | 13.45 | 0.30 |
| Similar-Prompt Sampling | 13.15 | 0.31 |
| Context-Aware Pipeline | 13.07 | 0.32 |

Additional visualizations, ablation studies, and results can be found in the Supplementary Material.

5. Conclusion

We introduced Composer, a plug-in framework that turns static diffusion and visual auto-regressive models into adaptive generators by composing lightweight, low-rank parameter updates per input. Composer sidesteps expensive test-time training, providing fine-grained, instance-specific adaptation on top of frozen pretrained backbones with negligible computational and memory overhead. Experiments on various image generation tasks, including quantization and test-time scaling, show consistent performance gains. By enabling input-aware parameter composition for off-the-shelf generators, Composer moves generative modeling toward genuinely adaptive, context-sensitive behavior.

Limitation and Future work. Despite these gains, Composer introduces a small amount of additional inference time and memory usage.
The relative cost depends on the number of generation steps: for models with many steps, such as DiT with 250 iterations, the overhead remains below 0.1%, whereas for models with very few steps, such as one-step diffusion, the relative overhead becomes more noticeable. In future work, we aim to extend this paradigm to broader vision tasks, including transfer learning for generative modeling, where only Composer is fine-tuned while the backbone remains frozen.

Acknowledgements

Trung Le, Mehrtash Harandi, and Dinh Phung were supported by the ARC Discovery Project grant DP250100262. Trung Le and Mehrtash Harandi were also supported by the Air Force Office of Scientific Research under award number FA9550-23-S-0001.

References

[1] Charlotte Caucheteux, Alexandre Gramfort, and Jean-Rémi King. Evidence of a predictive coding hierarchy in the human brain listening to speech. Nature Human Behaviour, 7(3):430–441, 2023.
[2] Lei Chen, Yuan Meng, Chen Tang, Xinzhu Ma, Jingyan Jiang, Xin Wang, Zhi Wang, and Wenwu Zhu. Q-dit: Accurate post-training quantization for diffusion transformers. In Proceedings of the Computer Vision and Pattern Recognition Conference, pages 28306–28315, 2025.
[3] Kun Cheng, Xiao He, Lei Yu, Zhijun Tu, Mingrui Zhu, Nannan Wang, Xinbo Gao, and Jie Hu. Diff-moe: Diffusion transformer with time-aware and space-adaptive experts. In Forty-second International Conference on Machine Learning.
[4] Damai Dai, Chengqi Deng, Chenggang Zhao, RX Xu, Huazuo Gao, Deli Chen, Jiashi Li, Wangding Zeng, Xingkai Yu, Yu Wu, et al. Deepseekmoe: Towards ultimate expert specialization in mixture-of-experts language models. arXiv preprint arXiv:2401.06066, 2024.
[5] Juncan Deng, Shuaiting Li, Zeyu Wang, Hong Gu, Kedong Xu, and Kejie Huang. Vq4dit: Efficient post-training vector quantization for diffusion transformers. In Proceedings of the AAAI Conference on Artificial Intelligence, pages 16226–16234, 2025.
[6] Jianping Gou, Baosheng Yu, Stephen J Maybank, and Dacheng Tao. Knowledge distillation: A survey. International Journal of Computer Vision, 129(6):1789–1819, 2021.
[7] Antonino Greco, Julia Moser, Hubert Preissl, and Markus Siegel. Predictive learning shapes the representational geometry of the human brain. Nature Communications, 15(1):9670, 2024.
[8] Ziyu Guo, Renrui Zhang, Chengzhuo Tong, Zhizheng Zhao, Rui Huang, Haoquan Zhang, Manyuan Zhang, Jiaming Liu, Shanghang Zhang, Peng Gao, et al. Can we generate images with cot? let's verify and reinforce image generation step by step. arXiv preprint, 2025.
[9] Seokil Ham, Sangmin Woo, Jin-Young Kim, Hyojun Go, Byeongjun Park, and Changick Kim. Diffusion model patching via mixture-of-prompts. In Proceedings of the AAAI Conference on Artificial Intelligence, pages 17023–17031, 2025.
[10] Jian Han, Jinlai Liu, Yi Jiang, Bin Yan, Yuqi Zhang, Zehuan Yuan, Bingyue Peng, and Xiaobing Liu. Infinity: Scaling bitwise autoregressive modeling for high-resolution image synthesis. In Proceedings of the Computer Vision and Pattern Recognition Conference, pages 15733–15744, 2025.
[11] Moritz Hardt and Yu Sun. Test-time training on nearest neighbors for large language models. arXiv preprint arXiv:2305.18466, 2023.
[12] Edward J Hu, Yelong Shen, Phillip Wallis, Zeyuan Allen-Zhu, Yuanzhi Li, Shean Wang, Lu Wang, Weizhu Chen, et al. Lora: Low-rank adaptation of large language models. ICLR, 1(2):3, 2022.
[13] Jinwu Hu, Zhitian Zhang, Guohao Chen, Xutao Wen, Chao Shuai, Wei Luo, Bin Xiao, Yuanqing Li, and Mingkui Tan. Test-time learning for large language models. arXiv preprint arXiv:2505.20633, 2025.
[14] Jonas Hübotter, Sascha Bongni, Ido Hakimi, and Andreas Krause. Efficiently learning at test-time: Active fine-tuning of llms. arXiv preprint, 2024.
[15] Xuan-May Le, Ling Luo, Uwe Aickelin, and Minh-Tuan Tran. Shapeformer: Shapelet transformer for multivariate time series classification. In Proceedings of the 30th ACM SIGKDD Conference on Knowledge Discovery and Data Mining, pages 1484–1494, 2024.
[16] Dongyeun Lee, Jiwan Hur, Hyounguk Shon, Jae Young Lee, and Junmo Kim. Dmq: Dissecting outliers of diffusion models for post-training quantization. In Proceedings of the IEEE/CVF International Conference on Computer Vision, pages 18510–18520, 2025.
[17] Xiuyu Li, Yijiang Liu, Long Lian, Huanrui Yang, Zhen Dong, Daniel Kang, Shanghang Zhang, and Kurt Keutzer. Q-diffusion: Quantizing diffusion models. In Proceedings of the IEEE/CVF International Conference on Computer Vision, pages 17535–17545, 2023.
[18] Yanxi Li and Chengbin Du. Optimizing quantized diffusion models via distillation with cross-timestep error correction. In Proceedings of the AAAI Conference on Artificial Intelligence, pages 18530–18538, 2025.
[19] Fangfu Liu, Hanyang Wang, Yimo Cai, Kaiyan Zhang, Xiaohang Zhan, and Yueqi Duan. Video-t1: Test-time scaling for video generation. arXiv preprint arXiv:2503.18942, 2025.
[20] Jinlai Liu, Jian Han, Bin Yan, Hui Wu, Fengda Zhu, Xing Wang, Yi Jiang, Bingyue Peng, and Zehuan Yuan. Infinitystar: Unified spacetime autoregressive modeling for visual generation. arXiv preprint, 2025.
[21] Zheyuan Liu, Munan Ning, Qihui Zhang, Shuo Yang, Zhongrui Wang, Yiwei Yang, Xianzhe Xu, Yibing Song, Weihua Chen, Fan Wang, et al. Cot-lized diffusion: Let's reinforce t2i generation step-by-step. arXiv preprint arXiv:2507.04451, 2025.
[22] J Pablo Muñoz and Jinjie Yuan. Rttc: Reward-guided collaborative test-time compute. In Findings of the Association for Computational Linguistics: EMNLP 2025, pages 24793–24809, 2025.
[23] Byeongjun Park, Hyojun Go, Jin-Young Kim, Sangmin Woo, Seokil Ham, and Changick Kim. Switch diffusion transformer: Synergizing denoising tasks with sparse mixture-of-experts. In European Conference on Computer Vision, pages 461–477. Springer, 2024.
[24] William Peebles and Saining Xie. Scalable diffusion models with transformers. In Proceedings of the IEEE/CVF International Conference on Computer Vision, pages 4195–4205, 2023.
[25] Robin Rombach, Andreas Blattmann, Dominik Lorenz, Patrick Esser, and Björn Ommer. High-resolution image synthesis with latent diffusion models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 10684–10695, 2022.
[26] Peize Sun, Yi Jiang, Shoufa Chen, Shilong Zhang, Bingyue Peng, Ping Luo, and Zehuan Yuan. Autoregressive model beats diffusion: Llama for scalable image generation. arXiv preprint arXiv:2406.06525, 2024.
[27] Keyu Tian, Yi Jiang, Zehuan Yuan, Bingyue Peng, and Liwei Wang. Visual autoregressive modeling: Scalable image generation via next-scale prediction. Advances in Neural Information Processing Systems, 37:84839–84865, 2024.
[28] Minh-Tuan Tran, Trung Le, Xuan-May Le, Jianfei Cai, Mehrtash Harandi, and Dinh Phung. Large-scale data-free knowledge distillation for imagenet via multi-resolution data generation. arXiv preprint, 2024.
[29] Minh-Tuan Tran, Trung Le, Xuan-May Le, Mehrtash Harandi, and Dinh Phung. Text-enhanced data-free approach for federated class-incremental learning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 23870–23880, 2024.
[30] Minh-Tuan Tran, Trung Le, Xuan-May Le, Mehrtash Harandi, Quan Hung Tran, and Dinh Phung. Nayer: Noisy layer data generation for efficient and effective data-free knowledge distillation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 23860–23869, 2024.
[31] Minh-Tuan Tran, Trung Le, Xuan-May Le, Thanh-Toan Do, and Dinh Phung. Enhancing dataset distillation via non-critical region refinement. In Proceedings of the Computer Vision and Pattern Recognition Conference, pages 10015–10024, 2025.
[32] Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N Gomez, Łukasz Kaiser, and Illia Polosukhin. Attention is all you need. Advances in Neural Information Processing Systems, 30, 2017.
[33] Haoxuan Wang, Yuzhang Shang, Zhihang Yuan, Junyi Wu, Junchi Yan, and Yan Yan. Quest: Low-bit diffusion model quantization via efficient selective finetuning. In Proceedings of the IEEE/CVF International Conference on Computer Vision, pages 15542–15551, 2025.
[34] Lvmin Zhang, Anyi Rao, and Maneesh Agrawala. Adding conditional control to text-to-image diffusion models, 2023.
[35] Zhan Zhuang, Yulong Zhang, Xuehao Wang, Jiangang Lu, Ying Wei, and Yu Zhang. Time-varying lora: Towards effective cross-domain fine-tuning of diffusion models. Advances in Neural Information Processing Systems, 37:73920–73951, 2024.

Test-Time Instance-Specific Parameter Composition: A New Paradigm for Adaptive Generative Modeling
Supplementary Material

Figure 5. Qualitative comparison on additional text-to-image examples. For each prompt, we show images produced by baseline methods and by our approach. The prompts shown in the figure are:
(1) A stainless-steel espresso machine on a wooden counter, espresso pouring into a small cup, faint steam, scattered beans, warm window light.
(2) Over-ear studio headphones on a wooden desk, mixer, cables and a laptop waveform blurred behind, dim studio lit by monitor and LED strips.
(3) DSLR camera on a tripod on a city rooftop at sunset, warm light over a hazy skyline, camera and tripod sharply focused in the foreground.
(4) A tiger's head and shoulders emerging from tall golden grass, blurred jungle behind, warm afternoon light, intense realistic expression.
(5) A snow leopard walking along a narrow rocky ledge with patchy snow, steep drop and distant mountains blurred behind, crisp high-altitude daylight.
(6) A grey wolf on a rocky ridge above a snowy valley, distant pines, cold clear mountain air, late-afternoon light casting long shadows.

6. Visualization

To better understand how Composer affects the generative process beyond aggregate metrics, we provide additional qualitative examples in Figure 5. For each text prompt, we juxtapose images produced by competitive baselines with those generated by our approach. Across a wide range of prompts, including both simple single-object descriptions and more challenging compositional or attribute-heavy queries, our method consistently produces samples that better match the specified content.

Visually, Composer tends to preserve fine-grained object structure and attributes (e.g., pose, color, accessories) while also producing more coherent and contextually appropriate backgrounds. In contrast, baseline models often miss attributes, distort object shapes, or introduce clutter and artifacts in the scene. The improvements are especially noticeable for prompts that require binding multiple constraints (such as specific styles, actions, or environments), where our instance-specific parameter composition yields sharper details and more faithful semantic alignment. These qualitative results complement our quantitative findings and highlight that Composer enhances both realism and prompt adherence in text-to-image generation.

7. Additional Ablation Studies

7.1. Component Analysis

We provide an ablation that isolates each component (see Table 11).
Both the Context-Aware Pipeline and Global-Local Attention individually improve FID/IS, and combining them (full Composer) yields the best performance, indicating complementary gains.

Table 11. Ablation isolating the two improvements in Composer: (i) Context-Aware Pipeline and (ii) Global-Local Attention. All settings use the same VAR backbone and training budget; we add one component at a time, then combine them.

| Method | VAR d-16 FID ↓ | VAR d-16 IS ↑ | VAR d-24 FID ↓ | VAR d-24 IS ↑ |
|---|---|---|---|---|
| Composer | 3.15 | 280.4 | 2.08 | 319.5 |
| Composer w/o Context-Aware Pipeline | 3.28 | 277.6 | 2.18 | 316.5 |
| Composer w/o Global-Local Attention | 3.26 | 276.2 | 2.16 | 314.9 |
| Composer w/o Context-Aware Pipeline, w/o Global-Local Attention | 3.55 | 274.4 | 2.33 | 312.9 |

7.2. Token Initialization

We provide an ablation that quantifies the benefit of different types of token initialization in Table 12. The projected initialization is better on VAR d-16 (FID 3.15 vs. 3.17) and gives higher IS on both backbones, while constant learnable tokens attain a marginally lower FID on VAR d-24 (2.07 vs. 2.08).

Table 12. Ablation on token initialization. Comparing our weight-projected initialization/anchoring vs. using constant learnable tokens (trained as embeddings). Lower FID / higher IS is better.

| Method | VAR d-16 FID ↓ | VAR d-16 IS ↑ | VAR d-24 FID ↓ | VAR d-24 IS ↑ |
|---|---|---|---|---|
| Constant learnable tokens (embeddings) | 3.17 | 279.8 | 2.07 | 318.7 |
| Ours (projected init/anchored to frozen weights) | 3.15 | 280.4 | 2.08 | 319.5 |

7.3. Where to apply Composer: which layers / which weights?

We next study where Composer should be applied inside the self-attention blocks by ablating which projection matrices are modified. Concretely, we inject instance-specific low-rank updates into different subsets of the attention projections {W_Q, W_K, W_V, W_O} and report results on ImageNet 256×256 for VAR d-16 and VAR d-24 in Table 13. Adapting only a single projection (W_Q, W_K, or W_V) already yields competitive performance, with W_V slightly outperforming W_Q and W_K, suggesting that modulating the value content is particularly important.
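The idea of injecting an instance-specific low-rank update into selected attention projections can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the additive form W + B A is the LoRA-style parameterization [12], `ToyGenerator` is a hypothetical stand-in that uses fixed random linear maps instead of a trained network, and the sizes `D_MODEL`, `RANK`, and `COND_DIM` are arbitrary. Only W_Q and W_V are adapted here, mirroring one of the subsets in the ablation.

```python
import numpy as np

D_MODEL, RANK, COND_DIM = 64, 8, 16  # illustrative sizes, not the paper's settings


class ToyGenerator:
    """Hypothetical stand-in for the Composer generator.

    Maps a conditioning vector to low-rank factors A (r x d) and B (d x r)
    through fixed random linear maps; the real generator is a trained network.
    """

    def __init__(self, d, r, cond_dim, seed):
        rng = np.random.default_rng(seed)
        self.d, self.r = d, r
        self.to_a = rng.standard_normal((r * d, cond_dim))
        self.to_b = rng.standard_normal((d * r, cond_dim))

    def __call__(self, cond):
        a = (self.to_a @ cond).reshape(self.r, self.d)
        b = (self.to_b @ cond).reshape(self.d, self.r)
        return a, b


def compose(w_frozen, a, b):
    """Return W + B @ A as a new array; the frozen weight is left untouched."""
    return w_frozen + b @ a


rng = np.random.default_rng(1)
frozen = {name: rng.standard_normal((D_MODEL, D_MODEL)) for name in ("W_Q", "W_V")}
generators = {name: ToyGenerator(D_MODEL, RANK, COND_DIM, seed=i)
              for i, name in enumerate(frozen)}


def adapt(cond):
    """Compose instance-specific W_Q and W_V for one conditioning vector."""
    return {name: compose(w, *generators[name](cond)) for name, w in frozen.items()}


# Two different inputs yield two different sets of composed weights.
cond_1, cond_2 = rng.standard_normal(COND_DIM), rng.standard_normal(COND_DIM)
adapted_1, adapted_2 = adapt(cond_1), adapt(cond_2)

a, b = generators["W_Q"](cond_1)
update_rank = np.linalg.matrix_rank(b @ a)  # at most RANK by construction
extra_params = 2 * D_MODEL * RANK           # entries of A and B for one projection
print(update_rank, extra_params, D_MODEL * D_MODEL)
```

The composition happens once per input, before generation starts, and the per-projection update has 2·d·r entries rather than d², which is why adapting more projections increases the number of adapted parameters and the memory footprint.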
Combining projections consistently improves results: variants that include W_V, such as W_Q + W_V and W_K + W_V, are stronger than W_Q + W_K, and our default choice W_Q + W_V achieves the best or near-best FID/IS across both backbones (e.g., 3.15 FID / 280.4 IS for VAR d-16 and 2.08 FID / 319.5 IS for VAR d-24). Extending Composer to all projections (W_Q + W_K + W_V + W_O) brings only marginal changes relative to W_Q + W_V while increasing the number of adapted parameters and the memory footprint. We therefore adapt W_Q and W_V by default in all main experiments, as this provides the best accuracy–efficiency trade-off.

Table 13. Ablation on modified attention layers for VAR d-16 and VAR d-24 on ImageNet 256×256.

| Layers | VAR d-16 FID ↓ | VAR d-16 IS ↑ | VAR d-24 FID ↓ | VAR d-24 IS ↑ |
|---|---|---|---|---|
| W_Q | 3.29 | 274.4 | 2.18 | 312.9 |
| W_V | 3.28 | 277.6 | 2.18 | 316.5 |
| W_K | 3.32 | 273.8 | 2.21 | 311.2 |
| W_Q + W_K | 3.23 | 276.1 | 2.13 | 315.1 |
| W_K + W_V | 3.21 | 278.9 | 2.13 | 317.8 |
| W_Q + W_V (Our choice) | 3.15 | 280.4 | 2.08 | 319.5 |
| W_Q + W_K + W_V | 3.16 | 279.9 | 2.09 | 318.7 |
| W_Q + W_K + W_V + W_O | 3.15 | 280.7 | 2.08 | 319.4 |

7.4. How large should the Composer generator's d_model be?

We further investigate the capacity of the Composer meta-generator by ablating its transformer width d_model. We vary d_model ∈ {256, 512, 1024, 2048, 4096} while keeping the backbone, low-rank dimension r, and all training settings fixed, and report results on ImageNet 256×256 for VAR d-16 and VAR d-24 in Table 14. As we increase d_model from 256 to 1024, both backbones consistently benefit: FID steadily decreases and IS improves (e.g., from 3.21/276.1 to 3.15/280.4 for VAR d-16, and from 2.14/313.2 to 2.08/319.5 for VAR d-24). Beyond d_model = 1024, further widening yields only marginal changes in FID and slightly fluctuating IS (e.g., VAR d-16 attains a slightly lower FID of 3.14 at 4096 but with lower IS than at 1024), while incurring additional parameters and training cost.
Overall, d_model = 1024 provides the best or near-best FID/IS across both backbones under a modest computational budget, and we therefore adopt it as the default Composer configuration in all main experiments.

Table 14. Ablation on Composer generator width d_model for VAR d-16 and VAR d-24 on ImageNet 256×256.

| d_model | VAR d-16 FID ↓ | VAR d-16 IS ↑ | VAR d-24 FID ↓ | VAR d-24 IS ↑ |
|---|---|---|---|---|
| 256 | 3.21 | 276.1 | 2.14 | 313.2 |
| 512 | 3.18 | 278.4 | 2.12 | 315.4 |
| 1024 (Our choice) | 3.15 | 280.4 | 2.08 | 319.5 |
| 2048 | 3.15 | 279.9 | 2.09 | 318.9 |
| 4096 | 3.14 | 279.4 | 2.09 | 318.4 |

7.5. Composer for OOD image generation

We provide an additional experiment on personalized text-to-image generation in Table 15. Unlike TTT, which fine-tunes the model per subject, Composer directly composes subject-adapted weights at inference time without gradients, achieving stronger gains under the same test-time budget. This extension relies on single-subject training data, benefits from a larger Transformer backbone, and can further improve with optional image-caption descriptor conditioning.

Table 15. FID and IS comparison of different training/sampling pipelines on VAR d-16 and VAR d-24 for ImageNet 256×256.

| Category | Model | FID ↓ | IS ↑ | Precision | Recall | Step | Parameter | Time | Memory |
|---|---|---|---|---|---|---|---|---|---|
| VAR d-16 | Test-time Training | 3.28 | 279.1 | 0.85 | 0.53 | 10 | 310M | 40.52 (+10,030%) | 4.58G |
| VAR d-16 | Composer (ours) | 3.23 | 281.4 | 0.87 | 0.56 | 10 | 412M | 0.42 (+5%) | 2.57G |
