DSevolve: Enabling Real-Time Adaptive Scheduling on Dynamic Shop Floor with LLM-Evolved Heuristic Portfolios

In dynamic manufacturing environments, disruptions such as machine breakdowns and new order arrivals continuously shift the optimal dispatching strategy, making adaptive rule selection essential. Existing LLM-powered Automatic Heuristic Design (AHD) …

Authors: Jin Huang, Jie Yang, XinLei Zhou

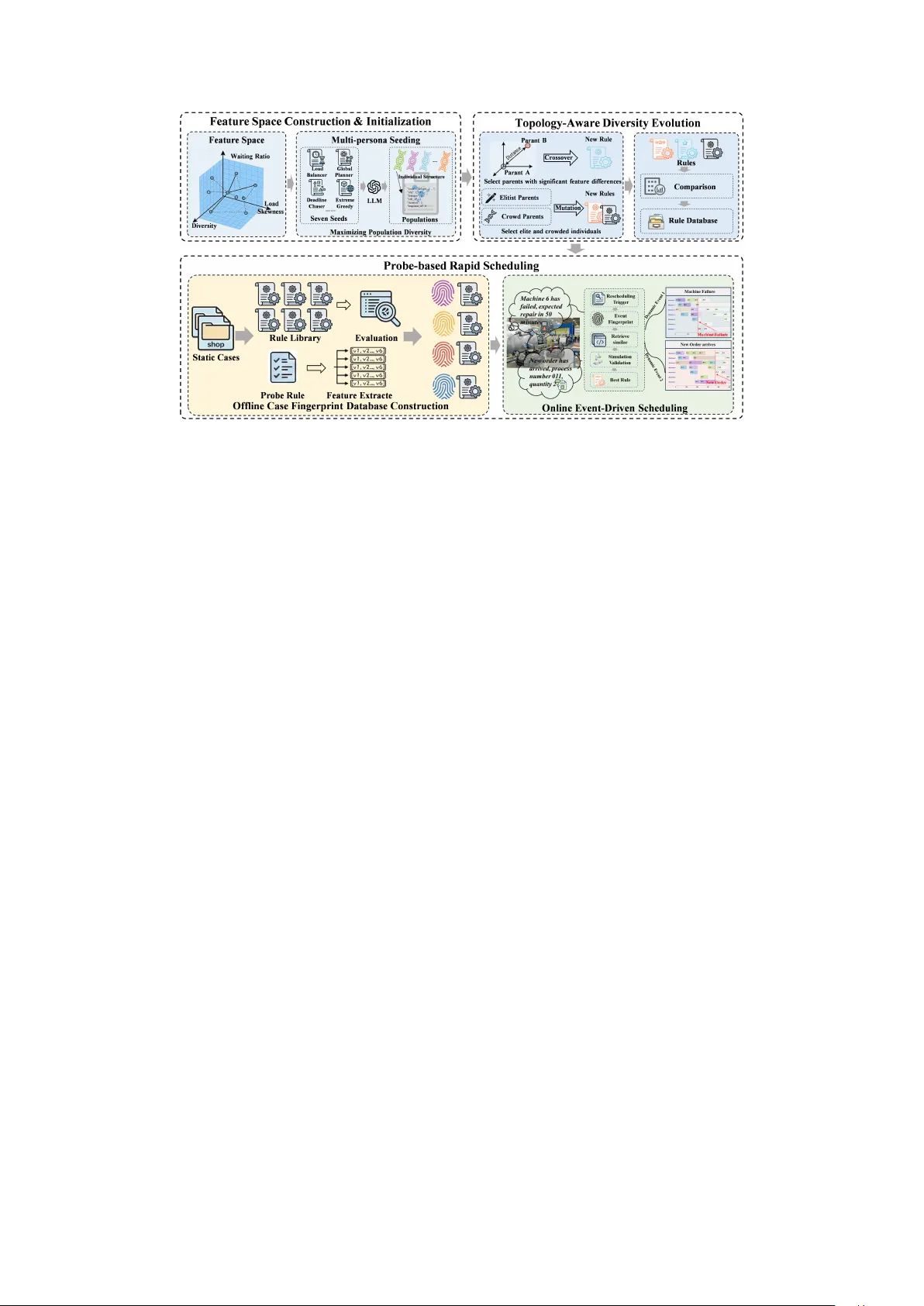

DSev olve: Enabling Real-T ime Adaptive Scheduling on Dynamic Shop Floor with LLM-Evolv ed Heuristic Portf olios Jin Huang 1 , Jie Y ang 1 , XinLei Zhou 1 , Qihao Liu 1 , Liang GA O 1 , Xinyu Li 1 1 School of Mechanical Science and Engineering, Huazhong Uni versity of Science and T echnology , W uhan, 430074, PR, China Correspondence: Xinyu Li, xinyuli@hust.edu.cn Abstract In dynamic manufacturing environments, dis- ruptions such as machine breakdowns and ne w order arriv als continuously shift the optimal dispatching strategy , making adaptiv e rule se- lection essential. Existing LLM-po wered Au- tomatic Heuristic Design (AHD) frameworks ev olve tow ard a single elite rule that cannot meet this adaptability demand. T o address this, we present DSev olve , an industrial scheduling framew ork that e volv es a quality-di verse port- folio of dispatching rules of fline and adapti vely deploys them online with second-lev el response time. Multi-persona seeding and topology- aware e volutionary operators produce a behav- iorally div erse rule archi ve index ed by a MAP- Elites feature space. Upon each disruption ev ent, a probe-based fingerprinting mechanism characterizes the current shop floor state, re- triev es high-quality candidate rules from an offline knowledge base, and selects the best one via rapid look-ahead simulation. Evaluated on 500 dynamic flexible job shop instances deriv ed from real industrial data, DSev olve outperforms state-of-the-art AHD frameworks, classical dispatching rules, genetic program- ming, and deep reinforcement learning, offer - ing a practical and deployable solution for in- telligent shop floor scheduling. 1 Introduction In modern manufacturing, the quality of dispatch- ing decisions on the shop floor directly determines ke y industrial indicators such as throughput, on- time delivery rate, and machine utilization ( Ding et al. , 2023 ). Dynamic disruptions, including ma- chine breakdowns and new order arriv als, contin- uously alter production conditions, requiring the scheduling system to respond within seconds ( Li et al. , 2025c ). This online adaptiv e scheduling capability is essential for maintaining operational ef ficiency in real factories. T raditional approaches to this challenge fall into three categories. Heuristic Dispatching Rules (HDRs) of fer millisecond-lev el response speed ( Hunsucker and Shah , 1992 ), b ut each rule em- bodies a fix ed priority logic that ine vitably under - performs when shop floor conditions de viate from its design assumptions ( Branke et al. , 2015 ). Evo- lutionary methods such as Genetic Programming (GP) ( Zhao et al. , 2025a ) automatically e v olve com- posite HDRs from predefined terminal sets (e.g., processing time, machine load, remaining work), yet the expressi v eness of the HDRs is bounded by the terminal vocab ulary , and the ev olved rule re- mains a single static policy applied uniformly to all states. Deep Reinforcement Learning (DRL) in- troduces adaptability through two paradigms: end- to-end neural dispatching that directly maps states to actions via GNNs ( Li et al. , 2025c ), and rule- selection agents that learn to choose among pre- defined HDRs at each decision point ( Zhao et al. , 2025a ). Ho wev er , end-to-end policies suf fer from poor transferability across unseen scales, while rule-selection agents are constrained by the qual- ity ceiling of their predefined action space. Both paradigms further face the interpretability barrier that hinders deployment on industrial lines. Recent advances in LLM-powered Automatic Heuristic Design (AHD) open a promising direc- tion. Frame works such as FunSearch ( Romera- Paredes et al. , 2024 ), EoH ( Liu et al. , 2024 ), ReEvo ( Y e et al. , 2024 ), and HSEvo ( Dat et al. , 2025 ) demonstrate that LLMs can ev olve nov el, inter- pretable heuristics that surpass human-designed baselines. In the scheduling domain, LSH ( Y u et al. , 2026 ) and SeEv o ( Huang et al. , 2026b ) further con- firm the viability of this paradigm. Howe ver , two gaps pre vent existing AHD frame works from di- rectly serving online dynamic scheduling. First, they con ver ge to ward a single elite heuristic, yet dynamic disruptions continuously alter shop floor conditions, causing the best-performing rule to dif- fer from one production state to the ne xt. Second, LLM-dri ven e volution is an e xpensi ve of fline pro- cess ( Huang et al. , 2026b ), and no e xisting frame- work provides a mechanism to bridge the offline rule archi ve with second-le vel deplo yment. T o address these challenges, we propose DSE- volve ( D ynamic S cheduling Evolve ), which de- couples quality-di versity heuristic e volution from online dispatching via an e xplicit feature space and probe-based retrie val. Our contrib utions are: 1) Multi-Persona Seeding. DSe volv e initial- izes the population using sev en orthogonal persona prompts, each encoding a distinct scheduling phi- losophy , ensuring broad coverage of the beha vioral feature space from the first generation. 2) T opology-A ware Evolutionary Operators. W e define a three-dimensional feature space and introduce distance-maximization crossov er and cro wding-contrastiv e mutation, which jointly pre- vent premature con vergence by pushing the popu- lation to ward underexplored re gions. 3) Probe-Based Instance Fingerprinting. A lightweight SPT probe performs rapid virtual simu- lation to extract a six-dimensional instance finger - print, enabling retrie val of the most suitable HDR from the offline archiv e within seconds of a dy- namic e vent. 2 Related W ork 2.1 Dynamic Flexible Job Shop Scheduling The Dynamic Flexible Job Shop Scheduling Prob- lem (DFJSSP) e xtends classical FJSSP ( Zhao et al. , 2025b ) with stochastic e vents such as ne w order arri vals and machine breakdo wns ( Pinedo , 2016 ). Exact methods struggle with frequent reschedul- ing demands. Figueroa et al. ( 2024 ) proposed a MILP-based frame work for dynamic e vents b ut it requires manual model adjustments under chang- ing configurations. Peng et al. ( 2025 ) proposed LLM-assisted automatic MILP construction, yet it remains limited under high-frequency distur- bances. Metaheuristics like genetic algorithms ( Huang et al. , 2026a ) and particle swarm optimiza- tion ( Zhang and Zhang , 2024 ) produce good so- lutions but their iterati v e nature incurs prohibiti ve latency for rescheduling. HDRs of fer a practical alternati ve due to their speed and simplicity ( Branke et al. , 2015 ), yet no single rule generalizes across di verse disruption pat- terns. GP has been studied for automatic HDR e vo- lution ( Nguyen et al. , 2017 ), but their performance depends heavily on predefined terminal sets that struggle to capture the coupled, multi-dimensional state information in DFJSSP ( Zhao et al. , 2025a ; Huang et al. , 2025 ). DRL has become a mainstream approach for DFJSSP , where agents learn policies through en vi- ronment interaction. Rule-selection DRL learns meta-strategies to choose among HDRs based on current shop floor states ( Li et al. , 2025b ), while GNN-based end-to-end methods learn di- rectly from graph representations of the scheduling en vironment ( Song et al. , 2022 ). Ho wev er , these methods require e xtensiv e training on specific prob- lem distrib utions and show limited transferability when objectiv es or configurations change at de- ployment. Moreover , recent studies have demon- strated that well-designed HDRs can still outper- form DRL-based approaches in certain dynamic scenarios ( Huang et al. , 2026b ), underscoring the importance of high-quality rule design. 2.2 LLM-Driven A utomatic Heuristic Design The use of LLMs as e v olutionary operators for al- gorithm generation has attracted growing attention. Romera-Paredes et al. ( 2024 ) proposed FunSearch, the first approach to employ LLMs as mutation engines within e volutionary loops for disco vering nov el heuristics at scale. Building on this foun- dation, Liu et al. ( 2024 ) introduced Ev olution of Heuristics (EoH), which co-ev olves natural lan- guage thoughts alongside code and achiev es strong performance on bin packing problems. Y e et al. ( 2024 ) proposed ReEv o, incorporating a reflectiv e mechanism that extracts v erbal gradients from per- formance history to guide subsequent mutations. T o better balance exploration and exploitation, Dat et al. ( 2025 ) combined harmony search with ge- netic operators in HSEv o. More recently , Guo et al. ( 2025 ) proposed NeRM, a nested strategy that first refines the task description before optimizing algo- rithm code, and further incorporates a lightweight performance predictor to filter unpromising candi- dates prior to expensi ve ev aluation, substantially improving search ef ficiency . W ithin scheduling, LLM-based AHD is still at an early stage. Y u et al. ( 2026 ) applied e v olution- ary code generation in LSH to static flow shop, job shop, and open shop problems on T aillard bench- marks. Huang et al. ( 2026b ) extended this direction with SeEv o to dynamic fuzzy job shops via teacher - student and self-reflection mechanisms. Qiu et al. ( 2026 ) addressed dynamic fle xible assembly flo w shops with LLM4DRD, a dual-expert architecture. Li et al. ( 2025a ) tackled the lot-streaming hybrid job shop with LLM-AMA by decomposing the problem into batching and sequencing sub-tasks, each handled by LLM-generated heuristics within a memetic computing framew ork. Zhao et al. ( 2026 ) proposed a hybrid framew ork combining LLM- assisted initialization with reinforcement learning for energy-a ware hybrid flo w shop scheduling. De- spite these advances, none of these methods con- struct an explicit beha vioral feature space to main- tain population div ersity , nor do they provide a mechanism for online deployment of ev olv ed rules under stochastic disruptions. 3 Methodology Figure 1 ov ervie ws DSev olve. The framework op- erates in three stages: Feature Space Construction & Initialization, T opology-A ware Div ersity Evolu- tion, and Probe-Based Rapid Scheduling. 3.1 F eature Space Construction & Initialization 3.1.1 Beha vioral Featur e Space T o quantify the phenotypic behavior of LLM- generated HDRs, we construct a three-dimensional behavioral feature space F . For any heuristic code c ∈ C (code space), we define a mapping Φ : C → R 3 that extracts a behavioral descriptor by ex ecuting c on a set of calibration instances and analyzing the resulting schedule: v c = Φ( c ) = [ f ske w , f wait , f div ] ⊤ , (1) where f ske w ( Load Ske wness ) measures the im- balance of workload distrib ution across machines, f wait ( W aiting Ratio ) captures the a verage idle wait- ing proportion of jobs, and f div ( Diversity ) quan- tifies the beha vioral nov elty of the rule relati ve to the existing archi ve population. All feature v alues are normalized to [0 , 1] using corpus-lev el statis- tics maintained by the archiv e database. F ormal definitions are provided in Appendix A . This three- dimensional space serves as the structural foun- dation for both evolutionary parent selection and quality-di verse archi ve inde xing. 3.1.2 Multi-Persona Seeding Standard AHD methods initialize from a sin- gle prompt, producing indi viduals that cluster within a narrow region of F . T o maximize ini- tial cov erage, DSev olve introduces K =7 orthog- onal persona prompts { P 1 , . . . , P 7 } , each encod- ing a distinct scheduling philosoph y: (1) Extr eme Gr eedy , which prioritizes immediate local opti- mality , (2) Load Balancer , which enforces work- load fairness across machines, (3) Global Planner , which injects look-ahead pressure based on do wn- stream burden, (4) Deadline Chaser , which aggres- si vely prioritizes urgenc y to prev ent late deliv eries, (5) Contrarian , which front-loads long operations against con ventional wisdom, (6) F ormula Synthe- sizer , which constructs nonlinear scoring functions, and (7) Hybrid Hier ar chical , which emplo ys con- ditional regime-based decision logic. The initial population is generated as: c ( k ) ∼ LLM ( T , P k ) , k = 1 , . . . , 7 , (2) where T is the task description containing the FJSP definition and code interface. Each per- sona produces multiple variants with ele v ated sam- pling temperature, distrib uting the initial individ- uals across distinct regions of F . The complete persona prompts are provided in Appendix ?? .5. 3.2 T opology-A ware Di versity Evolution Building on the feature space F , DSev olve intro- duces e volutionary operators whose selection pres- sure is governed by the topological structure of the archi ve rather than objecti ve v alue alone. 3.2.1 Distance-Maximization Crosso ver T o encourage behavioral complementarity in off- spring, we enforce maximum feature-space separa- tion when pairing parents. An parent p a is drawn from a top-ranked cell elite, and its complement partner is selected as the indi vidual occupying the farthest cell in F : p b = arg max p ∥ Φ( p ) − Φ( p a ) ∥ 2 , . (3) The LLM receiv es both parents’ code and is prompted to synthesize a unified priority func- tion that combines the strengths of both (see Ap- pendix ?? .6 for the crossov er prompt template). This formulation encourages offspring to inherit complementary scheduling traits. 3.2.2 Dual-T rack Mutation Elitist Mutation. The globally best-performing indi vidual undergoes minor code-level perturba- tions guided by accumulated design insights, serv- ing as a local refinement operator . Contrastive Mutation. W e identify the most cro wded individuals in F by computing each indi- vidual’ s isolation index I ( c ) = min c ′ = c ∥ Φ( c ) − Figure 1: The architecture of our proposed DSEvolve frame work. Φ( c ′ ) ∥ 2 and selecting those with the smallest val- ues. A contrasti ve prompt describes the parent’ s behavioral profile and instructs the LLM to gener - ate a variant with deliberately opposing characteris- tics (e.g., re v ersing monotonic preferences, flipping look-ahead depth). This mechanism maintains con- tinuous outward pressure on the population fron- tier , directing exploration tow ard under-occupied regions (prompt template in Appendix ?? .7-8). 3.2.3 Archi ve Construction W e maintain a quality-diverse archi ve A follo wing the MAP-Elites paradigm. The feature space F is discretized into cells, retaining only the highest- performing individual within each beha vioral niche. Crucially , this structured archiv e serves as the pop- ulation pool for topology-aw are parent selection, facilitating both distance-maximization crosso ver and contrasti ve mutation. 3.3 Probe-Based Rapid Scheduling T o deploy the archi ve A in a dynamic en vironment, DSe volv e employs a two-phase mechanism: of- fline knowledge base construction and online ev ent- dri ven retrie v al. 3.3.1 Probe Finger printing W e define a six-dimensional probe fingerprint to characterize each scheduling state compactly . Gi ven a snapshot S t , we first compute tw o instan- taneous state features from the li ve system: f state = [ f den , f aflex ] ⊤ , (4) where f den (Load Density) is the ratio of pending operations to a vailable machines, and f aflex (A ver- age Flexibility) is the mean number of candidate machines per pending operation. W e then execute a fast SPT simulation from S t to completion and extract four probe-deri ved features: f probe = [ f p ske w , f cpd , f flex , f p wait ] ⊤ , (5) where f p ske w is the max-to-av erage machine work- load ratio indicating bottleneck sev erity , f cpd is the probe makespan divided by the theoretical lower bound, f flex reflects routing flexibility usage, and f p wait captures congestion lev el. The full fingerprint is defined as follo w: f = [ f ⊤ probe , f ⊤ state ] ⊤ ∈ R 6 . (6) Formal definitions of each component are in Ap- pendix B . 3.3.2 Offline Case Fingerprint Database Construction W e generate N static FJSP instances spanning di- verse scales. F or each instance S i , the probe finger- print f i is computed and every rule in A is ev alu- ated. The top- R rules ranked by makespan form the recommended set R ∗ i . The knowledge base KB = { ( f i , R ∗ i ) } N i =1 stores these tuples. All fin- gerprints are Z-score normalized using the corpus- le vel mean µ and standard deviation σ . 3.3.3 Online Event-Driv en Scheduling When a dynamic ev ent (ne w order arri val or ma- chine breakdo wn) occurs at time t , the system clears machine buf fers, returns pending operations to the scheduling pool, captures snapshot S t , and computes the normalized fingerprint ˆ f curr . The top- k most similar cases are retrieved via v ariance- weighted Euclidean distance: d ( i ) = ∥ w ⊙ ( ˆ f curr − ˆ f i ) ∥ 2 , (7) where w ∈ R 6 has w j ∝ V ar ( f j ) . A candidate set of up to k × R rules is aggregated from the retrie ved cases. Each candidate under goes a rapid look-ahead simulation from S t , and the rule yield- ing the minimum makespan is selected: r ∗ = arg min r ∈C Makespan ( Sim ( S t , r )) . (8) This rule dispatches jobs until the next dynamic e vent triggers a ne w retrie v al cycle. The complete pseudocode is provided in Appendix ?? . 4 Experiments 4.1 Experimental Setup Dataset. W e ev aluate DSev olve on a comprehen- si ve benchmark of over 500 dynamic FJSP in- stances deriv ed from real industrial data, incor- porating stochastic disruptions including machine breakdo wns and new order insertions. The dataset is partitioned into three functional subsets: (1) an evolution set (5 instances) for offline HDR disco v- ery , (2) a case library set (100 instances) for con- structing the probe-based fingerprint database, and (3) a test set (500 instances) for final e v aluation. The test set is further di vided by operational com- plexity and event frequency into Easy ( S1 , 70 in- stances), Medium ( S2 , 240 instances), and Hard ( S3 , 190 instances) scenarios. LLM Configurations. Three LLMs are used: Qwen-Plus, DeepSeek-V3, and GPT -4o-mini. Each competing framework is independently run with each LLM on all test cases. Baselines. W e compare DSev olve against three categories of methods: (1) AHD frame works : EoH ( Liu et al. , 2024 ), ReEvo ( Y e et al. , 2024 ), and HSEvo ( Dat et al. , 2025 ), each generating 400 v alid HDRs per LLM configuration. (2) Classical HDRs : SPT , LPT , SRM, SSO, and LSO. (3) Evo- lutionary and learning-based methods : GP ( Zhao et al. , 2025a ) and DRL ( Li et al. , 2025b ). Imple- mentation details for GP and DRL are provided in Appendix D . Evaluation Pr otocol. For all AHD framew orks, we adopt a unified online scheduling protocol. Af- ter each frame work e v olves 400 candidate HDRs, we construct a case library o ver 100 instances using T able 1: Comparison with AHD framew orks on dy- namic FJSP test set. A vg and Best denote the average and best makespan across three LLM configurations, respectiv ely . The lower results are better and bold . Method S1 (Easy) S2 (Medium) S3 (Hard) A vg Best A vg Best A vg Best EoH 2565.8 2526.6 2920.0 2876.0 3172.6 3130.4 HSEvo 2594.9 2541.9 2942.2 2888.8 3192.2 3137.3 ReEvo 2580.4 2539.9 2932.6 2883.5 3187.4 3135.6 DSevolv e 2547.8 2493.1 2890.5 2836.3 3139.4 3085.7 T able 2: A verage makespan comparison ag ainst classi- cal HDRs, GP , and DRL baselines across v arying dif fi- culty scenarios. Method S1 (Easy) S2 (Medium) S3 (Hard) SPT 3251.91 3685.20 3968.67 LPT 3425.84 3903.11 4193.47 SRM 3195.86 3658.33 3944.93 SSO 3284.40 3765.22 4044.32 LSO 3365.78 3835.28 4129.46 GP 2770.37 3160.13 3404.86 DRL 2761.54 3125.13 3380.38 DSevolv e 2547.80 2890.50 3139.40 the SPT probe to e xtract 6D fingerprints and bind the top-4 performing rules per instance. At test time, the probe retrie ves the 5 most similar cases, aggregates their associated rules into a 20-rule can- didate set, simulates all candida tes from the current snapshot, and selects the one yielding the minimum makespan. For each method, we report the average (A vg) and best (Best) mak espan across the three LLM configurations. 4.2 Comparison with AHD Frameworks T able 1 presents the comparison with state-of- the-art AHD frameworks. DSe volv e consistently achie ves the lo west mak espan across all three dif- ficulty levels and both aggregation metrics. On the Hard scenario (S3), DSev olve reduces the av- erage makespan by 33.2 compared to the strongest baseline EoH, and by 44.7 on the best-case met- ric. Among the baselines, EoH outperforms both HSEvo and ReEv o, which we attribute to its use of multiple e volutionary operators that produce more behaviorally di verse rule portfolios. Nev ertheless, DSe volv e surpasses EoH by explicitly optimizing for quality-di versity through its topology-aware op- erators and behavioral feature space, yielding a rule archi ve that better covers the heterogeneous test scenarios. T able 3: Ablation on rule selection strategies across different AHD frame works. T op : select the 20 rules with the lo west av erage makespan from the offline evolution. Random : randomly sample 20 rules. SPT Pr obe : our probe-based retriev al. Results are av erage makespan across three LLM configurations. Strategy S1 (Easy) S2 (Medium) S3 (Hard) EoH HsEvo ReEvo Ours EoH HsEvo ReEvo Ours EoH HsEvo ReEvo Ours T op 2604.5 2634.1 2615.2 2595.0 2963.5 2983.9 2971.5 2947.6 3216.2 3229.4 3215.8 3188.9 Random 2625.4 2769.9 2635.4 2629.8 2981.6 3173.0 2991.3 2986.5 3232.9 3481.5 3244.4 3234.5 Probe 2565.8 2594.9 2580.4 2547.8 2920.0 2942.2 2932.6 2890.5 3172.6 3192.2 3187.4 3139.4 4.3 Comparison with Classical HDRs and Learning-Based Methods T able 2 extends the comparison to classical HDRs and learning-based approaches. The results re- veal a consistent performance hierarchy . Classi- cal HDRs (SPT , LPT , SRM, SSO, LSO) apply a fixed dispatching policy regardless of instance characteristics, which limits their adaptability to dynamic disruptions. GP and DRL achie ve com- petiti ve or marginally better performance than clas- sical HDRs, benefiting from their ability to learn composite priority functions or adapti ve policies. DSe volv e outperforms all baselines by a substan- tial margin, demonstrating that the combination of quality-di verse rule e v olution and probe-based instance-adapti ve retriev al provides a fundamen- tally stronger paradigm for dynamic scheduling. 4.4 Effectiveness of Pr obe-Based Retriev al T o v alidate the effecti veness of probe-based re- trie val, we compare our SPT Probe against the T op and Random baselines. As shown in T able 3 , the probe-based strate gy consistently outperforms both static selection methods across all framew orks and dif ficulty lev els, reducing the a verage mak espan by 41.6 compared to T op. This confirms that instance- adapti ve retrie val is f ar more effecti ve than relying on globally top-ranked rules. Notably , the probe mechanism also yields substantial improvements when applied to EoH, HSEvo, and ReEv o, indi- cating that it is not tied to our ev olution pipeline but can serv e as a broadly applicable retriev al mod- ule for an y AHD frame work. Appendix C further sho ws that retriev al quality is robust to the choice of probe rule. 4.5 Ablation Study T able 4 isolates the contributions of multi-persona seeding and the beha vioral feature space. The w/o P ersona v ariant replaces di verse seeding with a sin- gle prompt, while w/o F eatur e Space remo ves the T able 4: Component ablation study . w/o P ersona : re- mov es multi-persona seeding, using a fixed prompt for initialization. w/o F eature Space : removes the behav- ioral feature space, disabling topology-aw are operators. All variants use the SPT probe for online scheduling. V ariant S1 (Easy) S2 (Medium) S3 (Hard) w/o Feature Space 2558.4 2913.5 3155.6 w/o Persona 2576.3 2919.8 3168.9 DSevolv e (Full) 2547.8 2890.5 3139.4 MAP-Elites archi ve and topology-a ware operators. Results show consistent degradation when either component is absent. Notably , remo ving multi- persona seeding causes a sharper decline, suggest- ing that initial div ersity is critical under a limited e volutionary b udget. The performance drop in w/o F eature Space further validates the necessity of topology-aw are guidance for sustaining population di versity throughout the search. 5 Conclusion This paper presents DSev olve, a frame work that bridges quality-div erse heuristic ev olution with on- line dynamic scheduling for FJSP . By combining multi-persona seeding, topology-a ware e volution- ary operators, and probe-based retriev al, DSev olve produces a behaviorally di verse rule archi ve that can be deployed under second-le vel response con- straints demanded by real production lines. Ex- periments on over 500 dynamic FJSP instances deri ved from industrial data demonstrate consistent improv ements ov er state-of-the-art AHD frame- works, classical HDRs, GP , and DRL. The probe- based retrie val mechanism is not tied to our e v olu- tion pipeline and yields substantial gains when inte- grated with other AHD frameworks, confirming its v alue as a reusable scheduling module. From an in- dustrial perspecti ve, DSe volv e requires no domain- specific neural training and produces interpretable HDRs, making it readily integrable into e xisting manufacturing e xecution systems. 6 Limitations Se veral aspects of DSe volv e warrant further in ves- tigation. First, the current behavioral feature space is defined by three manually selected dimensions (load ske wness, waiting ratio, div ersity). While ef fectiv e in our e xperiments, these dimensions may not capture all rele vant beha vioral distinctions for other scheduling domains. Automatically learn- ing the feature space from scheduling traces is a promising direction. Second, the offline knowledge base construction requires ev aluating all archiv e rules on each calibration instance, which scales lin- early with both the archiv e size and the number of instances. For v ery large archi ves or instance sets, more efficient indexing structures (e.g., locality- sensiti ve hashing) could reduce this cost. Third, our ev aluation focuses on makespan as the sole objecti ve. Industrial scheduling often in volv es mul- tiple competing objecti ves such as tardiness, ener gy consumption, and equipment utilization. Extending DSe volv e to multi-objecti ve settings and v alidating it on physical production lines remain important directions for future work. References Jürgen Brank e, Su Nguyen, Christoph W . Pickardt, and Mengjie Zhang. 2015. Automated design of produc- tion scheduling heuristics: A revie w . IEEE T ransac- tions on Evolutionary Computation , 20(1):110–124. Pham V u T uan Dat, Long Doan, and Huynh Thi Thanh Binh. 2025. Hsev o: Elev ating automatic heuristic design with di versity-dri ven harmon y search and ge- netic algorithm using llms. In Pr oceedings of the AAAI Confer ence on Artificial Intelligence . Junwen Ding, Mingzhou Chen, T ao W ang, Jianguo Zhou, Xianbing Fu, and Kai Li. 2023. A survey of AI- enabled dynamic manufacturing scheduling: From directed heuristics to autonomous learning. ACM Computing Surve ys , 55(12):1–36. Andy J Figueroa, Raul Poler, and Beatriz Andres. 2024. Adaptiv e production rescheduling system for managing unforeseen disruptions. Mathematics , 12(22):3478. Shuhan Guo, Nan Y in, James Kwok, and Quanming Y ao. 2025. Nested-refinement metamorphosis: Reflectiv e ev olution for efficient optimization of networking problems. In F indings of the Association for Compu- tational Linguistics: ACL 2025 , pages 17398–17429. Jin Huang, Xinyu Li, Qihao Liu, and Liang Gao. 2026a. Efficient scheduling for fix ed-type multi-robot col- laborati ve problem in fle xible job shop. Robotics and Computer-Inte grated Manufacturing , 98:103157. Jin Huang, Qihao Liu, Liang Gao, and 1 others. 2026b. Automatic programming via large language models with population self-ev olution for dynamic fuzzy job shop scheduling problem. IEEE T ransactions on Fuzzy Systems . Jin Huang, Y ue T eng, Qihao Liu, Liang Gao, Xin yu Li, Chunjiang Zhang, and Guoqing Xu. 2025. Lev erag- ing large language models for efficient scheduling in human–robot collaborativ e flexible manufacturing systems. npj Advanced Manufacturing , 2(1):47. JL Hunsucker and JR Shah. 1992. Performance of pri- ority rules in a due date flo w shop. Omega , 20(1):73– 89. Rui Li, Ling W ang, Hongyan Sang, Lizhong Y ao, and Lijun Pan. 2025a. Llm-assisted automatic memetic algorithm for lot-streaming hybrid job shop schedul- ing with variable sublots. IEEE T ransactions on Evolutionary Computation . Y uxin Li, Xinyu Li, Liang Gao, and Zhibing Lu. 2025b. Multi-agent deep reinforcement learning for dynamic reconfigurable shop scheduling considering batch processing and worker cooperation. Robotics and Computer-Inte grated Manufacturing , 91:102834. Y uxin Li, Qihao Liu, Chunjiang Zhang, Xinyu Li, and Liang Gao. 2025c. Graph-based dual-agent deep re- inforcement learning for dynamic human–machine hybrid reconfiguration manuf acturing scheduling. IEEE T ransactions on Systems, Man, and Cybernet- ics: Systems . Fei Liu, Xialiang T ong, Mingxuan Y uan, Xi Lin, Fu Luo, Zhenkun W ang, Zhichao Lu, and Qingfu Zhang. 2024. Ev olution of heuristics: to wards ef ficient auto- matic algorithm design using lar ge language model. In Pr oceedings of the 41st International Confer ence on Machine Learning , pages 32201–32223. Su Nguyen, Mengjie Zhang, Mark Johnston, and Kay Chen T an. 2017. Genetic programming for pro- duction scheduling: A survey with a unified frame- work. Complex & Intelligent Systems , 3(1):41–66. Mingming Peng, Zhendong Chen, Jie Y ang, Jin Huang, Zhengqi Shi, Qihao Liu, Xinyu Li, and Liang Gao. 2025. Automatic MILP model construction for multi- robot task allocation and scheduling based on large language models. In 2025 IEEE/RSJ International Confer ence on Intellig ent Robots and Systems (IR OS) , pages 20291–20296. Michael L. Pinedo. 2016. Scheduling: Theory , Algo- rithms, and Systems , 5th edition. Springer . Junhao Qiu, Haoyang Zhuang, Qingfu Zhang, and 1 others. 2026. Llm-assisted automatic dispatching rule design for dynamic flexible assembly flo w shop scheduling. arXiv preprint . Bernardino Romera-Paredes, Mohammadamin Barekatain, Alexander Novikov , Matej Balog, M Paw an Kumar , Emilien Dupont, Francisco JR Ruiz, Jordan S Ellenberg, Pengming W ang, Omar Fa wzi, and 1 others. 2024. Mathematical discoveries from program search with large language models. Natur e , 625(7995):468–475. W en Song, Xinyang Chen, Qiqiang Li, and Zhiguang Cao. 2022. Flexible job-shop scheduling via graph neural network and deep reinforcement learn- ing. IEEE T ransactions on Industrial Informatics , 19(2):1600–1610. Haoran Y e, Jiarui W ang, Zhiguang Cao, Federico Berto, Chuanbo Hua, Haeyeon Kim, Jinkyoo Park, and Guojie Song. 2024. Reev o: Large language mod- els as hyper -heuristics with reflecti ve ev olution. In Advances in neural information pr ocessing systems , volume 37, pages 43571–43608. Fei Y u, Liang Gao, Xinyu Li, Chao Lu, and Qihao Liu. 2026. Automated scheduling heuristic genera- tion and e valuation via lar ge language model. IEEE T ransactions on Evolutionary Computation . Qi Zhang and Bin Zhang. 2024. Research on dynamic flexible job shop scheduling problem based on dis- crete particle swarm optimization with readjustment strategy . In T enth International Conference on Me- chanical Engineering, Materials, and Automation T echnology (MMEA T 2024) , volume 13261, pages 1093–1097. SPIE. Fuqing Zhao, Bin W u, Ling W ang, and Hongyan Sang. 2026. A lar ge language model-assisted reinforce- ment learning framew ork with ev olutionary algo- rithm for hybrid flo w-shop scheduling. IEEE T rans- actions on Evolutionary Computation . Fuqing Zhao, Cheng Zhao, Ling W ang, and Hongyan Sang. 2025a. A deep reinforcement learning frame- work assisted by genetic programming for dynamic flexible job shop scheduling. IEEE T ransactions on Evolutionary Computation , pages 1–1. Fuqing Zhao, Junang Zhou, Ling W ang, and Hongyan Sang. 2025b. A hierarchical optimization algorithm with dual-cache synced tuning mechanism for dis- tributed fle xible job shop scheduling problem. IEEE T ransactions on Cybernetics . A Beha vioral Featur e Space: F ormal Definitions The three-dimensional behavioral descriptor v c = [ f ske w , f wait , f div ] ⊤ is computed by ex ecuting rule c on a set of calibration FJSP instances and analyzing the resulting schedule. All features are normalized to [0 , 1] using corpus-le vel statistics maintained by the archi ve database. W e describe the computation of each dimension belo w . A.1 Load Skewness ( f skew ) After ex ecuting rule c on a calibration instance, let M denote the number of machines and L m the total workload (cumulati ve processing time) assigned to machine m . Let ¯ L = 1 M P M m =1 L m be the av erage workload. The load ske wness measures the se verity of w orkload imbalance: f ske w = max M m =1 L m ¯ L (9) A value of f ske w ≈ 1 indicates a well-balanced schedule where all machines share similar workloads, while f ske w ≫ 1 signals that one or more machines are se verely overloaded, creating bottlenecks that dominate the makespan. Rules that emphasize load-balancing strategies tend to achiev e low f ske w v alues, while greedy time-based rules may produce higher ske wness. A.2 W aiting Ratio ( f wait ) Let J denote the set of all jobs. F or each job j ∈ J , let W j = P o ∈ j w j,o be the total waiting time (time spent in queues before processing) and P j = P o ∈ j p j,o be the total processing time. The waiting ratio captures the ov erall congestion lev el: f wait = P j ∈ J W j P j ∈ J P j (10) Higher f wait v alues indicate greater system congestion, where jobs spend a disproportionate amount of time waiting rather than being processed. Rules that ef fectiv ely manage queue lengths and prioritize congestion reduction tend to achiev e lo w f wait , while rules that focus solely on processing speed without considering system-le vel coordination may generate high waiting ratios. A.3 Diversity ( f div ) Unlike the two schedule-based features abo ve, di versity is a population-relati ve metric that quantifies the behavioral no velty of rule c with respect to the existing archi ve population. Let A = { c 1 , c 2 , . . . , c |A| } denote the current set of rules stored in the archi ve. The di versity score is computed based on the minimum normalized distance between the code of c and all existing archi ve members: f div = min c ′ ∈A sim ( c, c ′ ) (11) where sim ( · , · ) is a similarity function based on code text edit distance or semantic embedding similarity . This metric ensures that the archi ve penalizes redundant solutions: rules that are beha viorally similar to existing archi ve members recei ve lo w div ersity scores, while genuinely novel strategies are re warded with higher scores. A.4 Summary The full behavioral descriptor is: v c = Φ( c ) = f ske w f wait f div ∈ [0 , 1] 3 (12) The first two dimensions capture complementary aspects of scheduling quality (resource balance vs. flo w efficienc y), while the third ensures structural nov elty . T ogether, the three-dimensional feature space F = [0 , 1] 3 provides a compact yet informati ve landscape for MAP-Elites archi ve inde xing. Cells in this space correspond to distinct scheduling “behavioral niches, ” and the quality-diverse archi ve A retains only the best-performing rule per cell. B Probe Finger print: F ormal Definitions The probe fingerprint f ∈ R 6 characterizes a scheduling state through a combination of instantaneous system measurements and features extracted from a lightweight Shortest Processing T ime (SPT) simulation. W e describe the computation of each dimension below . B.1 Instantaneous State F eatures ( f state ∈ R 2 ) These features are computed directly from the liv e en vironment befor e the probe simulation, capturing the immediate scheduling pressure at the moment of a dynamic e vent. Load Density ( f den ). Let | pool | be the number of operations currently a waiting dispatch and M av ail = |{ m : m is av ailable }| the number of non-faulty machines. The load density is: f den = | pool | M av ail (13) When M av ail = 0 (all machines down), we set M av ail = 1 to av oid division by zero. Higher density indicates greater scheduling pressure, as more operations compete for fe wer machines. A verage Flexibility ( f aflex ). F or each pending operation o ∈ pool , let | cand ( o ) | denote the number of machines capable of processing it. The av erage flexibility is: f aflex = 1 | pool | X o ∈ pool | cand ( o ) | (14) Higher flexibility grants the scheduler more room for optimization. When the pool is empty , we set f aflex = 1 . B.2 Probe-Deri ved F eatures ( f probe ∈ R 4 ) After computing the state features, a snapshot S t of the en vironment is created. The SPT rule is then applied to simulate all remaining operations from S t to completion. Let M be the total number of machines, L m the total workload assigned to machine m by the probe simulation, and J the set of all jobs. Load Skewness ( f p skew ). Measures the se verity of bottleneck resources in the probe schedule: f p ske w = max M m =1 L m ¯ L , where ¯ L = 1 M M X m =1 L m (15) A value of f p ske w ≈ 1 indicates a well-balanced workload under SPT , while f p ske w ≫ 1 signals that a single machine dominates the schedule, creating a bottleneck that a specialized rule might alle viate. Critical Path Dominance ( f cpd ). Measures the compactness of the probe schedule relative to a theoreti- cal lo wer bound: f cpd = C probe C LB (16) where C probe is the makespan achie ved by the SPT probe, and the theoretical lo wer bound is: C LB = max M max m =1 L m , max j ∈ J X o ∈ j p min o (17) Here, p min o = min m ∈ cand ( o ) p o,m is the minimum achie vable processing time for operation o . V alues of f cpd close to 1 indicate that the probe already produces a near -optimal schedule, while lar ger v alues suggest room for improv ement through smarter dispatching. Flexibility Saturation ( f flex ). Quantifies ho w much routing flexibility the probe exploits: f flex = P j ∈ J P o ∈ j p actual o P j ∈ J P o ∈ j p min o (18) where p actual o is the processing time on the machine actually selected by SPT , and p min o is the minimum across all candidates. A ratio close to 1 means the probe already selects near-optimal machines, lea ving limited room for flexibility-based improvement. Higher ratios indicate that alternativ e dispatching strategies could e xploit routing options more effecti vely . W aiting Ratio ( f p wait ). Captures the congestion le vel of the probe schedule: f p wait = P j ∈ J W j P j ∈ J P j (19) where W j is the total waiting time and P j the total processing time of job j . Higher f p wait signals greater congestion, suggesting that rules prioritizing queue management may be beneficial. B.3 Full Fingerprint and Normalization The six features are concatenated into the full fingerprint: f = f p ske w f cpd f flex f p wait f den f aflex ∈ R 6 (20) For the of fline kno wledge base KB = { ( f i , R ∗ i ) } N i =1 , we compute the corpus-le vel statistics: µ = 1 N N X i =1 f i (21) σ = v u u t 1 N N X i =1 ( f i − µ ) 2 (22) All fingerprints are Z-score normalized element-wise: ˆ f i = f i − µ σ + ϵ , ϵ = 10 − 10 (23) The v ariance-based feature weights used for retriev al are: w j = V ar ( f j ) P 6 l =1 V ar ( f l ) + ϵ , j = 1 , . . . , 6 (24) where V ar ( f j ) = 1 N P N i =1 ( f i,j − µ j ) 2 . Features with higher variance across the kno wledge base recei ve larger weights, as the y are more discriminati ve for case matching. C Alternati ve Pr obe Rules T o further examine whether the advantage of probe-based retrie val is consistent across dif ferent ev olu- tionary framew orks, T able 5 reports the per-frame work results for each probe rule under EoH, HsEvo, and ReEv o separately . Se veral observ ations can be drawn. First, across all three frame works and all three scenario difficulties, e very probe-based strate gy consistently outperforms both the T op and Random baselines, confirming that the benefit of fingerprint-guided retrie val is frame work-agnostic rather than an artifact of a particular e volution algorithm. Second, the Random baseline e xhibits the most pronounced degradation under HsEv o, where the gap relative to the best probe rule reaches up to 6.9% in S1 and 9.3% in S3, suggesting that framew orks with weaker di versity maintenance are especially reliant on intelligent retrie val to a v oid poor rule selections. Third, within each frame work, the v ariance among the fi ve probe rules remains small: the relativ e dif ference between the best and worst probe nev er exceeds 0.8% for an y frame work–scenario pair . Notably , the identity of the best-performing probe rule varies across frame works (SRM dominates under EoH, while LPT is fav ored under ReEv o), yet no single probe rule is consistently inferior , reinforcing that the 6D fingerprint representation captures sufficient instance structure to be rob ust to the probe policy . These results provide strong e vidence that the probe mechanism is both effecti ve and stable, and that its contribution is orthogonal to the choice of underlying e volutionary frame work. T able 5: Per -framew ork breakdown of probe rule sensitivity . Bold entries indicate the best probe rule within each framew ork/scenario combination. All probe-based strategies substantially outperform the non-probe baselines (T op, Random) regardless of the e v olutionary framew ork, while the inter-probe v ariance remains belo w 0.8%. Probe S1 S2 S3 EoH HsEvo ReEvo EoH HsEvo ReEvo EoH HsEvo ReEvo T op 2604.5 2634.1 2615.2 2963.5 2983.9 2971.5 3216.2 3229.4 3215.8 Random 2625.4 2769.9 2635.4 2981.6 3173.0 2991.3 3232.9 3481.5 3244.4 SPT 2565.8 2594.9 2580.4 2920.0 2942.2 2932.6 3172.6 3192.2 3187.4 LPT 2570.6 2592.9 2580.8 2912.3 2943.7 2926.7 3158.4 3184.0 3180.8 SSO 2567.0 2590.4 2580.7 2910.9 2940.2 2928.5 3149.3 3182.7 3181.8 LSO 2571.4 2592.7 2578.3 2913.2 2942.4 2927.9 3152.5 3185.1 3181.2 SRM 2561.1 2590.5 2581.9 2907.9 2937.9 2931.9 3155.1 3182.5 3180.8 D GP and DRL Implementation Details D.1 GP W e adopt the GP-based hyper-heuristic frame work for e volving composite dispatching rules. The GP sys- tem uses a tree-based representation where terminal nodes correspond to scheduling attributes (processing time, remaining work, machine load, queue length, etc.) and function nodes include arithmetic operators ( + , − , × , ÷ protected ) and comparison operators ( max , min ). T able 6 present parameter configurations for GP algorithms implemented using the DEAP frame work. T able 6: Parameter Settings for GP . Category Parameter V alue T ree Structure Number of Main T rees 2 (Job & Machine) Initial T ree Depth Range min=2, max=6 Max Mutation Depth 8 T ree T ypes Job selection, Machine selection Operators Function Set add, sub, mul, di v , min, max T erminal Set (Job) PT , WKR, RM, SO T erminal Set (Machine) PTM, UR Evolution Population Size 20 Number of Generations 50 Crossov er Probability 0.8 Mutation Probability 0.15 Elitism Size 1 The algorithm design is based on the open-source framework av ailable at https://github .com/DEAP/deap. Data and source code can be provided upon request. D.2 Deep Reinf orcement Learning T able 7 presents the complete parameter configuration for the proximal policy optimization (PPO) used in the deep reinforcement learning baseline. T able 7: Parameter Settings for PPO-Based Deep Reinforcement Learning. Category Parameter V alue Network Architecture Actor Hidden Layer 1 128 Actor Hidden Layer 2 128 Actor Hidden Layer 3 64 Critic Hidden Layer 1 128 Critic Hidden Layer 2 128 Critic Hidden Layer 3 64 Layer Initialization Orthogonal (std=0.5) Bias Initialization Constant (1e-3) Acti vation Function ReLU T raining Learning Rate (Actor) 0.0002 Learning Rate (Critic) 0.0006 Optimizer Adam Beta 1 0.9 Beta 2 0.999 Discount Factor ( γ ) 1.0 PPO Epochs ( K ) 3 Clip Parameter ( ϵ ) 0.2 V alue Function Coefficient 0.5 Entropy Coef ficient 0.01 Max Gradient Norm 0.5 Memory & Batch Memory Buf fer Size 5 Batch Size 32 Minibatch Size 512 Priority Replay α 0.6 Capacity 100,000 T raining Schedule Max Iterations 1,000 Update T imestep 1 Sav e T imestep 10 Parallel Iterations 20 En vironment T raining T ime Limit 5 hours Con ver gence Episodes 2,000 E Extended Analysis of AHD Framework Comparison E.1 Per -LLM Breakdown T o provide a more granular vie w of the results in T able 1 , we report the per -LLM performance of each frame work on the Hard scenario (S3) in T able 8 . This breakdown re veals that DSev olve achie ves the best performance under ev ery individual LLM configuration, indicating that its advantages are not dri ven by a single fa vorable LLM pairing b ut reflect a systematic improv ement in the e volution-retrie v al pipeline. T able 8: Per-LLM performance on S3 (Hard). A verage makespan reported. Method Qwen-Plus DeepSeek-V3 GPT -4o-mini EoH 3168.2 3175.4 3174.1 HSEvo 3195.3 3188.7 3192.7 ReEvo 3190.1 3183.5 3188.6 DSevolv e 3135.8 3140.2 3142.3 E.2 Archi ve Div ersity Analysis W e quantify the behavioral di versity of the rule archi ves produced by each frame work by measuring the number of occupied cells in the MAP-Elites grid (with uniform 10 × 10 × 10 binning in the 3D feature space). Under identical e valuation b udgets (400 v alid rules), DSe volve occupies significantly more cells than the baselines, confirming that the topology-aw are operators effecti vely expand co verage of the behavioral feature space: T able 9: Archi ve di versity measured by occupied MAP-Elites cells (out of 1000 possible cells). A veraged across three LLM configurations. Method Occupied Cells EoH 47.3 HSEvo 31.7 ReEvo 38.2 DSevolv e 68.5 This higher coverage directly translates to better online scheduling performance, as the retrie val mechanism can match a wider v ariety of instance characteristics to specialized rules in the archiv e.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment