STRIDE: When to Speak Meets Sequence Denoising for Streaming Video Understanding

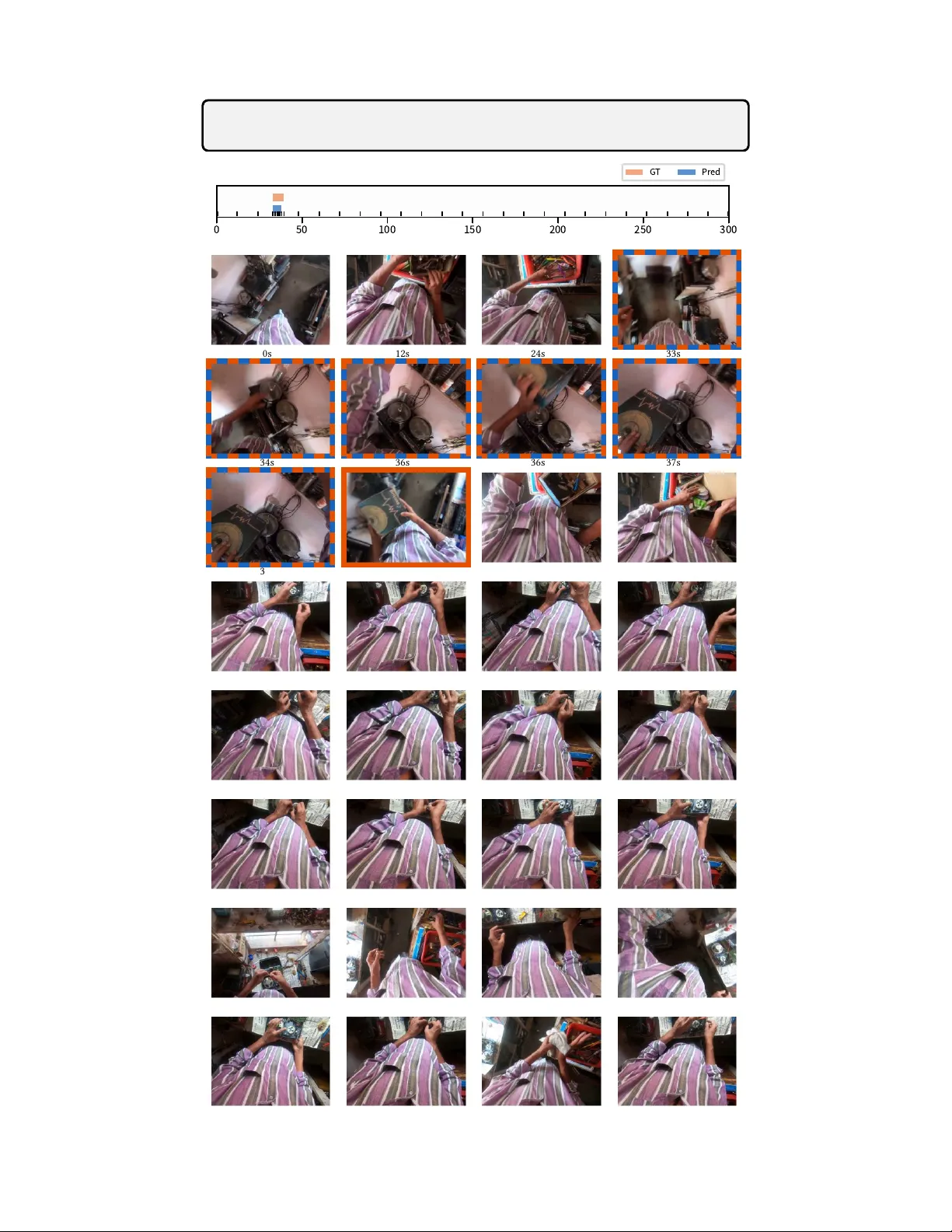

Recent progress in video large language models (Video-LLMs) has enabled strong offline reasoning over long and complex videos. However, real-world deployments increasingly require streaming perception and proactive interaction, where video frames arr…

Authors: Junho Kim, Hosu Lee, James M. Rehg