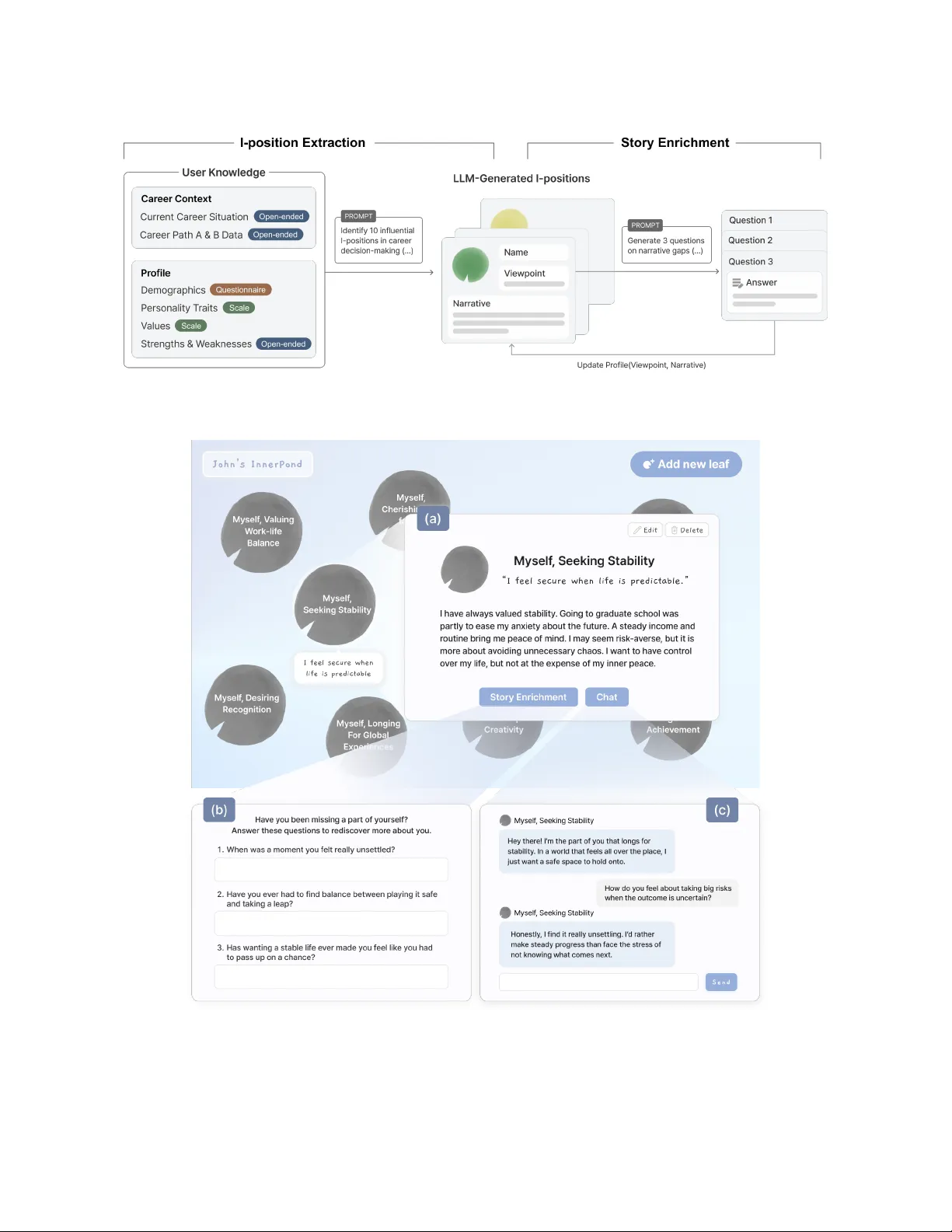

InnerPond: Fostering Inter-Self Dialogue with a Multi-Agent Approach for Introspection

Introspection is central to identity construction and future planning, yet most digital tools approach the self as a unified entity. In contrast, Dialogical Self Theory (DST) views the self as composed of multiple internal perspectives, such as value…

Authors: Hayeon Jeon, Dakyeom Ahn, Sunyu Pang