Understanding NPM Malicious Package Detection: A Benchmark-Driven Empirical Analysis

The NPM ecosystem has become a primary target for software supply chain attacks, yet existing detection tools are evaluated in isolation on incompatible datasets, making cross-tool comparison unreliable. We conduct a benchmark-driven empirical analys…

Authors: Wenbo Guo, Zhongwen Chen, Zhengzi Xu

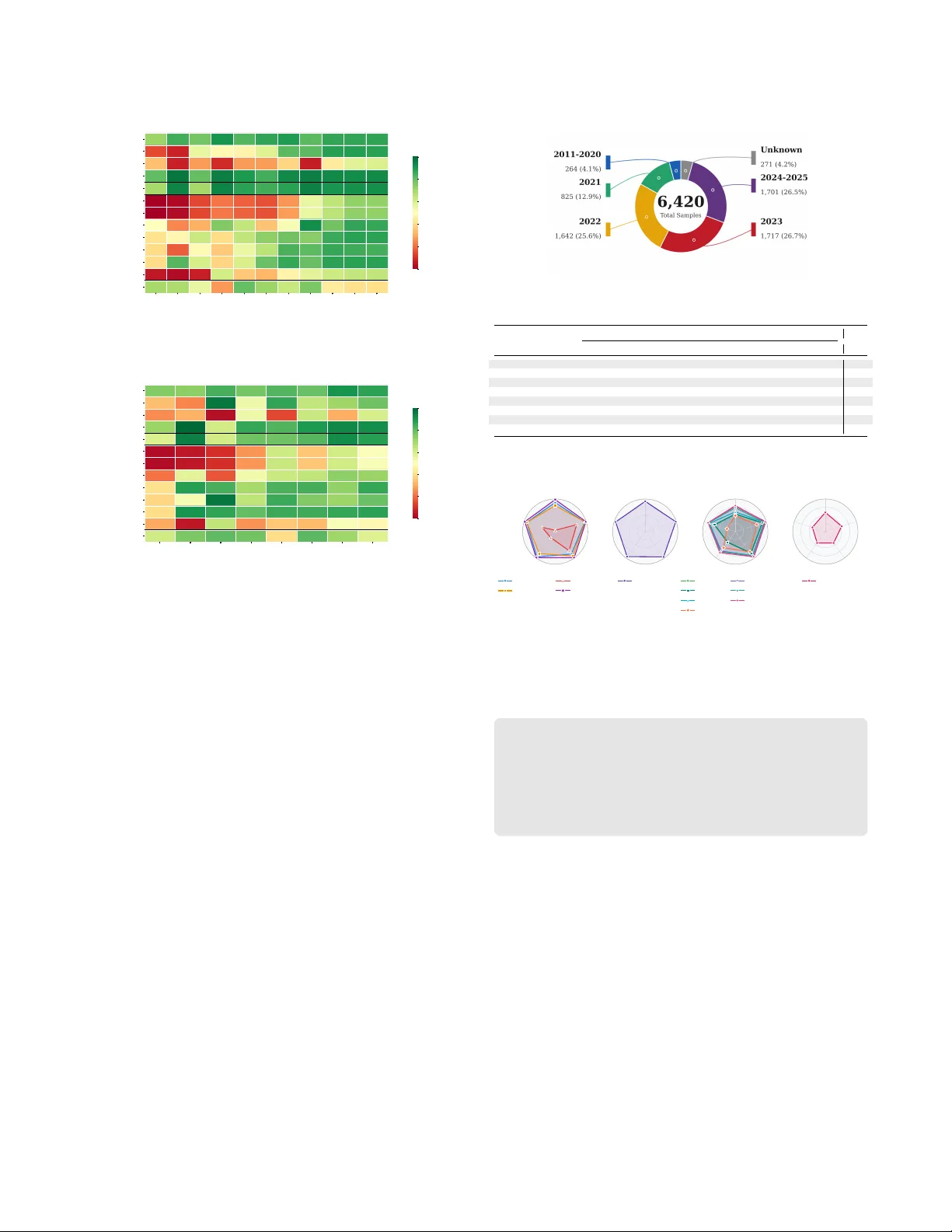

Wenbo Guo, honywenair@gmail.com, Nanyang Technological University, Singapore

Zhongwen Chen, yinglilikz@stu.scu.edu.cn, Sichuan University, China

Zhengzi Xu, z.xu@imperial.ac.uk, Imperial Global Singapore, Singapore

Chengwei Liu, chengwei.liu@nankai.edu.cn, Nankai University, China

Ming Kang, kreleasem@gmail.com, Sichuan University, China

Shiwen Song, swsong@smu.edu.sg, Singapore Management University, Singapore

Chengyue Liu, CHENGYUE001@e.ntu.edu.sg, Nanyang Technological University, Singapore

Yijia Xu, xuyijia@scu.edu.cn, Sichuan University, China

Weisong Sun, weisong.sun@ntu.edu.sg, Nanyang Technological University, Singapore

Yang Liu, yangliu@ntu.edu.sg, Nanyang Technological University, Singapore

Abstract

The NPM ecosystem has become a primary target for software supply chain attacks, yet existing detection tools are evaluated in isolation on incompatible datasets, making cross-tool comparison unreliable. We conduct a benchmark-driven empirical analysis of NPM malware detection, building a dataset of 6,420 malicious and 7,288 benign packages annotated with 11 behavior categories and 8 evasion techniques, and evaluating 8 tools across 13 variants. Unlike prior work, we complement quantitative evaluation with source-code inspection of each tool to expose the structural mechanisms behind its performance. Our analysis reveals five key findings. Tool precision-recall positions are structurally determined by how each tool resolves the ambiguity between what code can do and what it intends to do, with GuardDog achieving the best balance at 93.32% F1. A single API call carries no directional intent, but a behavioral chain such as collecting environment variables, serializing, and exfiltrating disambiguates malicious purpose, raising SAP_DT detection from 3.2% to 79.3%. Most malware requires no evasion because the ecosystem lacks mandatory pre-publication scanning.
ML degradation stems from concept convergence rather than concept drift: malware became simpler and statistically indistinguishable from benign code in feature space. Tool combination effectiveness is governed by complementarity minus false-positive introduction, not paradigm diversity, with strategic combinations reaching 96.08% accuracy and 95.79% F1. Our benchmark and evaluation framework are publicly available.

Keywords

Software supply chain security; Malicious package detection; NPM ecosystem; Empirical study; Benchmark

1 Introduction

NPM (Node Package Manager) serves as the cornerstone of JavaScript development, hosting over 3.57 million packages with 7.09 billion weekly downloads [2] and used by over 40% of developers worldwide [38]. Modern web applications routinely incorporate hundreds of NPM dependencies [39, 41], creating supply chain networks where a single compromised package can affect thousands of downstream projects. The NPM registry adopts an open publication model with minimal gatekeeping [11, 39], which prioritizes accessibility but also enables attackers to publish malicious packages. Reported threats include credential harvesters [36], cryptocurrency miners [9], and backdoor installation scripts [18]. Attackers also exploit naming similarities through typosquatting [52], or gain access via dependency confusion and compromised maintainer accounts [49].

Several high-profile incidents illustrate the impact of these attacks. The event-stream package, with 2 million weekly downloads, was compromised to steal Bitcoin wallet keys [17]. Attacks on ua-parser-js [6], coa, and rc [4] affected thousands of projects, showing that NPM has become a major vector for software supply chain attacks.

To counter these threats, researchers and practitioners have developed various detection tools. These tools fall into four categories.
Static analysis methods, such as GuardDog [7] and OSSGadget [26], examine source code and metadata without execution. Dynamic analysis tools, including MalOSS [9] and Packj [30], execute packages in controlled environments to observe runtime behaviors. Machine learning approaches, represented by Cerebro [50] and AMALFI [37], train classifiers on package features to distinguish malicious from benign code. More recently, LLM-based methods leverage large language models to analyze code semantics [46, 48]. Each category has shown promising detection results in its published evaluations.

Conference’17, July 2017, Washington, DC, USA. Wenbo Guo, Zhongwen Chen, Zhengzi Xu, Chengwei Liu, Ming Kang, Shiwen Song, Chengyue Liu, Yijia Xu, Weisong Sun, and Yang Liu

However, malicious packages remain a persistent problem despite the availability of these tools. More fundamentally, we lack an understanding of why different detection approaches succeed or fail, which prevents principled tool design and deployment. Four questions remain unanswered.

(Research Gap 1: Structural precision-recall trade-offs.) Existing tools exhibit precision ranging from 50% to 99% and recall from 44% to 99%, yet no study has investigated whether these differences reflect engineering quality or a deeper structural constraint. Since malicious and benign packages invoke the same OS APIs (e.g., fs.readFile, https.request, child_process.exec), every detection tool must resolve the ambiguity between code capability and code intent, yet how each tool's design resolves this ambiguity, and whether a structural trade-off exists, has not been analyzed.

(Research Gap 2: Attack-detection interaction.) Individual tool papers report overall accuracy on their own datasets, but the relationship between specific attack characteristics (behaviors, evasion techniques, attack surface) and detection difficulty remains unclear.
It is unknown whether detection failures stem from sophisticated evasion or from a mismatch between where the attack resides (e.g., package.json vs. JavaScript files) and what the tool analyzes.

(Research Gap 3: Temporal degradation mechanisms.) ML-based tools trained on historical data are known to suffer performance degradation, commonly attributed to "concept drift." However, the specific mechanism driving degradation in the npm ecosystem has not been established. It is unclear whether attackers develop more sophisticated evasion over time or whether changes in malware coding style account for the observed decline.

(Research Gap 4: Combination principles.) Practitioners are advised to deploy multiple tools for better coverage, yet no study has examined the principles governing combination effectiveness. It is unknown whether tool diversity guarantees improvement or whether certain combinations can be counterproductive.

To address these gaps, we conduct a large-scale empirical study of NPM malicious package detection tools, combining quantitative evaluation with source-code analysis of each tool's detection mechanism. We curate a unified benchmark of 6,420 malicious and 7,288 benign packages, annotated with 11 behavior categories and 8 evasion techniques. Our study investigates four research questions:

• RQ1 (Detection Effectiveness): What is the detection performance of current tools, and what structural mechanisms underlie their precision-recall trade-offs? (Gap 1)
• RQ2 (Fine-grained Behavioral Analysis): How do specific malicious behaviors, evasion techniques, and attack surfaces interact with different detection approaches? (Gap 2)
• RQ3 (Temporal Evolution Analysis): How does detection performance evolve over time, and what mechanism drives the temporal degradation of ML-based tools?
(Gap 3)
• RQ4 (Tool Complementarity Analysis): What principles govern the effectiveness of tool combinations, and under what conditions can combination be counterproductive? (Gap 4)

To answer these questions, we go beyond running tools as black boxes: we inspect the source code of all 8 tools to understand their detection rules, feature designs, thresholds, and architectural assumptions. This enables us to explain why tools succeed or fail, not merely how often. We curate a unified dataset of 6,420 malicious and 7,288 benign packages from academic datasets and security advisories, construct a taxonomy of 11 malicious behavior categories and 8 evasion techniques through LLM-assisted analysis and expert review, and evaluate 8 tools with 13 variants across packages published from 2020 to 2025.

Our evaluation reveals several key findings. First, detection effectiveness varies significantly across tools, with GuardDog achieving the best balance (93.32% F1-score) while other tools exhibit precision-recall trade-offs ranging from 50.41% to 99.88% precision and 44.41% to 98.69% recall (RQ1). Source-code analysis reveals that the fundamental challenge is distinguishing code capability from intent: malicious and benign packages invoke identical OS APIs, and each tool's position on the precision-recall spectrum reflects how it resolves this ambiguity. Second, among 8 evasion categories, environment detection (mean 39%) and anti-analysis (mean 54%) are the hardest to detect, with ML-based tools showing critical vulnerabilities (e.g., SAP_DT/RF: 5% against anti-analysis) (RQ2). Third, machine learning approaches experience severe temporal degradation, with the detection rate of SAP_DT declining from 87.15% to 39.49% (a drop of 47.66 percentage points) between 2021 and 2023 (RQ3). We trace this to concept convergence: attackers adopted minimal-footprint code that is statistically indistinguishable from benign packages, rendering ML features uninformative.
Fourth, strategic tool combinations achieve up to 96.08% accuracy and 95.79% F1-score, substantially outperforming any individual tool (RQ4). Combination effectiveness depends on detection complementarity minus false-positive introduction, not simply paradigm diversity: a high-recall, low-precision partner can degrade a strong tool.

The main contributions of this paper are:

(1) Benchmark and taxonomy. We curate the largest NPM malware benchmark (6,420 malicious + 7,288 benign packages) with a taxonomy of 11 behavior categories and 8 evasion techniques, enabling fine-grained analysis not possible with prior datasets.
(2) Large-scale evaluation. We evaluate 8 tools (13 variants) and, unlike prior benchmarks that treat tools as black boxes, inspect each tool's source code to identify the structural mechanisms behind its performance.
(3) Novel findings. We identify five structural principles: (i) capability-intent ambiguity as the root cause of precision-recall trade-offs; (ii) concept convergence driving ML temporal degradation; (iii) attack surface mismatch leaving 22% of malware structurally undetectable by code-analysis tools; (iv) behavioral coupling amplification boosting detection signals by up to 76 percentage points; and (v) complementarity-minus-FP-introduction as the governing principle of tool combination effectiveness.
(4) We release our dataset, evaluation framework, and all analysis scripts to facilitate future research [1].

Table 1: Overview of Studied npm Malicious Package Detection Tools

| Type | Name | Year | Target | Feature | Classifier | Avail. |
|---|---|---|---|---|---|---|
| Static-Based | LastJSMile [34] | 2022 | Phantom files | Code diff, Metadata | RegEx | ✗ |
| Static-Based | OSSGadget [26] | 2024 | Full package | Code, Metadata | Rule-based | ✔ |
| Static-Based | GuardDog [7] | 2024 | Full package | Code, Metadata | Semgrep | ✔ |
| Static-Based | diff-CodeQL [23] | 2023 | Full package | Code diff | CodeQL | ✗ |
| Static-Based | GENIE [24] | 2024 | Full package | Code | CodeQL | ✔ |
| Static-Based | MalWuKong [22] | 2023 | Full package | Code, API call | CodeQL | ✗ |
| Dynamic-Based | MalOSS [31] | 2021 | Source, binaries | API call, Dynamic | Rule-based | ✔ |
| Dynamic-Based | Packj [30] | 2023 | Full package | API call, Dynamic | Rule-based | ✔ |
| ML-Based | Cerebro [50] | 2023 | Full package | AST | BERT | ✔ |
| ML-Based | SAP [20] | 2023 | Full package | Statistical | DT, RF, XGB | ✔ |
| ML-Based | AMALFI [37] | 2022 | Full package | API call, Metadata, Update | DT, NB, SVM | ✗ |
| ML-Based | DONAPI [15] | 2024 | Full package | AST, API sequence, Dynamic | RF | ✗ |
| ML-Based | Maltracker [47] | 2024 | Full package | Code, Graph | DT, RF, XGB | ✗ |
| ML-Based | Ohm et al. [28] | 2022 | Full package | Metadata, Code | SVM, MLP, RF | ✗ |
| ML-Based | MeMPtec [14] | 2024 | Metadata | Metadata | SVM, GLM, GBM, DRF, DL | ✗ |
| ML-Based | Maldet [51] | 2024 | Full package | Statistical, API call, Metadata | DT, RF, NB, SVM | ✗ |
| ML-Based | SpiderScan [16] | 2024 | Full package | Code, API call, Dynamic | RF | ✗ |
| ML-Based | MalPacDetector [45] | 2025 | Full package | AST, Feature Set | RF, SVM, NB, MLP | ✔ |
| LLM-Based | SocketAI [48] | 2025 | Full package | Code Content | LLMs | ✔ |

Note: Avail.=Available. ✔=Yes, ✗=No. DT=Decision Tree, RF=Random Forest, XGB=XGBoost, NB=Naive Bayes, SVM=Support Vector Machine, MLP=Multi-Layer Perceptron.

2 Related Work

2.1 NPM Supply Chain Security

The NPM registry is a centralized platform where developers publish packages that are mirrored to downstream registries worldwide [9, 19, 29]. When developers run npm install, npm resolves dependencies and automatically executes lifecycle scripts (preinstall, install, postinstall) with full user privileges before any explicit code invocation [11, 49]. At runtime, packages can freely access file systems, network resources, and environment variables [9], potentially compromising development environments, CI/CD pipelines, and production systems [52].
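The install-time execution path described above can be made concrete with a small check. The following is a minimal sketch, assuming a package has already been unpacked into a local directory; the function name find_lifecycle_scripts and the demo manifest are hypothetical and are not taken from any tool in this study. It only reports which install-time hooks a package.json declares and makes no judgment about intent.

```python
import json
import tempfile
from pathlib import Path

# Lifecycle hooks that npm runs automatically during `npm install`.
INSTALL_HOOKS = ("preinstall", "install", "postinstall")


def find_lifecycle_scripts(package_dir):
    """Return {hook: command} for install-time hooks declared in package.json."""
    manifest_path = Path(package_dir) / "package.json"
    manifest = json.loads(manifest_path.read_text(encoding="utf-8"))
    scripts = manifest.get("scripts") or {}
    return {hook: scripts[hook] for hook in INSTALL_HOOKS if hook in scripts}


def demo():
    """Write a hypothetical manifest to a temporary directory and inspect it."""
    with tempfile.TemporaryDirectory() as tmp:
        manifest = {
            "name": "example-pkg",  # hypothetical package name
            "version": "1.0.0",
            "scripts": {"postinstall": "node setup.js", "test": "jest"},
        }
        (Path(tmp) / "package.json").write_text(
            json.dumps(manifest), encoding="utf-8"
        )
        return find_lifecycle_scripts(tmp)


if __name__ == "__main__":
    print(demo())
```

A declared hook is a capability, not evidence of malice: many benign packages use postinstall for native builds, which is one reason manifest-level signals alone cannot separate the two classes.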
The dense dependency network further amplifies these risks, as an average package implicitly trusts 79 third-party packages [52].

Attackers exploit this model through several vectors: typosquatting registers packages with names similar to popular ones [27, 29, 40, 44], dependency confusion publishes public packages matching private internal package names [3, 5, 13, 19, 21], and account takeover compromises maintainer credentials to inject malicious code into legitimate packages [10, 12, 52]. Approximately 2.2% of npm packages use install scripts, and prior analysis identified multiple malicious packages that exfiltrate data through this mechanism [49]. Once triggered, malicious packages commonly exfiltrate sensitive information such as environment variables, SSH keys, and credentials [13, 29], establish persistent backdoors via reverse shells [9], or consume resources for cryptocurrency mining.

2.2 NPM Malicious Package Detection

Existing NPM malicious package detection approaches can be categorized into four types based on their underlying techniques, as summarized in Table 1. (1) Static-based methods analyze package code and metadata without execution. These approaches employ pattern matching techniques such as regular expressions [34], rule-based detection [26], and Semgrep rules [7]; representative tools include OSSGadget, developed by Microsoft, and GuardDog, developed by Datadog. Several works leverage CodeQL for semantic analysis through information flow tracking [22–24]. GENIE [24] develops semantic signatures by analyzing data flows to capture malicious intent, while MalWuKong [22] combines CodeQL with API call analysis to detect suspicious invocation patterns. (2) Dynamic-based methods execute packages in sandbox environments to monitor runtime behaviors such as system calls, network communications, and file operations [30, 31].
MalOSS [31] traces sensitive API invocations during execution, while Packj [30] combines static metadata analysis with dynamic behavior monitoring. (3) ML-based methods extract features from AST [45, 50], call graphs [47], API sequences [15, 16], metadata [14, 28, 51], and statistical properties [20], then train classifiers such as Random Forest, SVM, and neural networks [37]. Cerebro [50] employs BERT to learn cross-language semantic patterns from behavior sequences, while MeMPtec [14] groups metadata features by manipulation difficulty to improve adversarial robustness. (4) LLM-based methods leverage large language models to analyze code semantics. SocketAI [48] applies GPT-3 and GPT-4 with iterative self-refinement to improve detection accuracy. Wyss et al. [46] evaluate LLM-based detection of malicious package updates by analyzing semantic diffs between package versions.

3 Evaluation Framework

3.1 Dataset Construction

3.1.1 Malicious Dataset Construction. To construct a representative malicious dataset, we systematically collect NPM malware packages from two categories of sources: academic datasets and public security advisories.

Academic Datasets. Prior research has released malicious package datasets for reproducibility. We collect packages from five publicly available datasets: BKC [29] (2,113 versions), which catalogs supply chain attacks across multiple ecosystems; DONAPI [15] (1,159 versions), which focuses on API-based detection; DataDog [8] (935 versions), which provides samples identified by Datadog's detection rules; MalOSS [31] (567 versions), which includes packages with dynamic analysis traces; and Maltracker [47] (230 versions), which provides LLM-enhanced annotations. These datasets span from 2020 to 2024, ensuring diverse coverage of attack patterns.

Security Advisories. Public security databases such as OSV and Snyk record reported malicious NPM packages with their names and versions.
However, these packages have been removed from the official NPM registry after disclosure. Following the observation that removed packages may still exist in downstream mirrors [13], we extract package names and versions from these advisories and retrieve source code from NPM mirror repositories. This approach enables us to collect 4,547 additional versions that would otherwise be inaccessible.

3.1.2 Benign dataset construction. For benign packages, we collected 7,288 packages from the official NPM registry based on monthly download statistics. We prioritized highly downloaded packages for three reasons. First, prior studies have shown that popular packages trigger significantly higher false positive rates than randomly sampled packages [35, 43], providing a more rigorous test for detection precision. Second, popular packages are less likely to contain malicious code, as any malicious behavior would be quickly discovered given their large user base and community scrutiny. Third, these packages represent real-world deployment scenarios with higher code complexity and functional diversity. For each package, we selected only the latest version, as recent versions typically receive more timely security maintenance and better reflect current coding practices. We verified with mainstream security databases (OSV and Snyk) that no known malicious packages were present in our benign dataset.

Table 2: Dataset Sources

| Paper | Count | Vers. | Year | Upd. | Avail. |
|---|---|---|---|---|---|
| Cerebro [50] | 1,789 | 1,789 | 2023 | ✗ | ✗ |
| SocketAI [48] | 2,180 | 2,776 | 2024 | ✗ | ✗ |
| AMALFI [37] | 643 | 643 | 2022 | ✗ | ✗ |
| SAP [20] | 102 | 102 | 2023 | ✗ | ✗ |
| DONAPI [15] | 1,159 | 1,159 | 2024 | ✗ | ✔ |
| SpiderScan [16] | 364 | 364 | 2024 | ✗ | ✗ |
| MalOSS [31] | 567 | 567 | 2020 | ✗ | ✔ |
| BKC [29] | 2,113 | 2,113 | 2020 | ✔ | ✔ |
| Scalco et al. [34] | 119 | 119 | 2022 | ✗ | ✗ |
| DataDog [8] | 821 | 935 | 2024 | ✔ | ✔ |
| Maltracker [47] | 230 | 230 | 2024 | ✗ | ✔ |
| Ours | 4,286 | 6,420 | 2025 | ✔ | ✔ |

Note: Vers.=Versions, Upd.=Update, Avail.=Available. ✔=Yes, ✗=No.

3.2 Dataset Preprocessing

The raw dataset collected from multiple sources contains duplicates, invalid entries, and potential false positives. We perform three preprocessing steps to ensure data quality.

Deduplication. Packages with identical names and versions appear across multiple source datasets. We remove 262 duplicate entries from the original 9,551 malicious versions, leaving 9,289 versions.

Placeholder Removal. When NPM officially removes a malicious package, it replaces the package content with a placeholder version marked as 0.0.1-security [32]. These placeholders no longer contain malicious code. We identify and remove 1,502 such versions, leaving 7,787 versions.

Manual Verification. Source datasets may contain false positives due to mislabeling or outdated annotations. To ensure ground truth quality, three security experts holding PhDs in software supply chain security independently review each package. A package is labeled as malicious only when at least two experts agree. Across all 7,787 reviewed packages, the three-way consensus rate is 97.4%, and the average pairwise Cohen's κ is 0.78. This process removes 1,367 false positives, requiring approximately 287 expert-hours in total.

After preprocessing, we obtain a curated dataset of 6,420 malicious versions across 4,286 unique packages. Table 2 summarizes the final dataset statistics and sources.

3.3 Malicious Behavior Taxonomy

To enable fine-grained analysis of detection capabilities, we construct a taxonomy of malicious behaviors through three steps: malicious code localization, context extraction, and behavior clustering.

Malicious Code Localization.
Manually locating malicious code across 6,420 packages is infeasible, so we automate this step using GuardDog and OSSGadget, two complementary static analysis tools with broad coverage. GuardDog's taint-based rules identify 10,059 locations (1.57 per package), focusing on data exfiltration and command execution patterns. OSSGadget's regex rules identify 27,350 locations (4.26 per package) with broader coverage including encoded strings and dynamic code evaluation. Together they detect 6,352 packages, leaving only 68 packages (1.06%) requiring manual analysis. For locations flagged by both tools, we retain one instance to avoid duplicate contexts.

Code Context Extraction. Detection reports contain only 1-3 lines of flagged code, which is insufficient for understanding complete malicious behavior: intent often depends on variable definitions, data flow, and control structures that span multiple lines or functions. To obtain complete behavioral semantics, we use LLMs to extract the full execution context for each identified location. For each detection, we input the entire source file with the flagged file path and line numbers as anchors, and prompt the LLM to extract surrounding variable definitions and assignments, function call chains invoking the malicious operations, and control flow structures determining execution conditions. The LLM only expands context around flagged locations and never decides whether code is malicious; ground-truth labels come entirely from the dataset. For the 68 packages evading both tools, security experts perform manual extraction following the same method to ensure consistency. In total, we extract 17,285 code contexts from all detections and obtain 5,699 unique contexts after deduplication. The detection count exceeds the package count because a single package often contains multiple malicious locations, such as an install script in package.json and a payload in a JavaScript file.
The unique count is lower than the package count because typosquatting campaigns reuse identical payloads across many packages with only the name changed. Because labels come from the ground-truth dataset, taxonomy construction remains independent of LLM judgment. In our scan, GuardDog reports no matches for 647 packages (10.08%) and OSSGadget reports no matches for 542 packages (8.44%). Only 68 packages (1.06%) evade both tools, so we locate their malicious code manually. We validate the accuracy of LLM-based context extraction in Section 3.5.

Behavior Summary and Clustering. Based on each extracted code context, we prompt the LLM to generate a structured behavioral summary that captures (i) the triggering condition/entry point, (ii) the main malicious actions (e.g., collection, execution, exfiltration), and (iii) the key targets or artifacts involved (e.g., tokens, environment variables, files, network endpoints). This produces 5,699 summaries corresponding to the unique contexts and provides a normalized semantic representation for downstream clustering. We then perform semantic clustering over the summaries. We encode each summary using Sentence-BERT (all-mpnet-base-v2) [33], apply UMAP for dimensionality reduction, and cluster the embeddings using K-Means [25]. To determine the number of clusters, we sweep K over a candidate range and select the value that jointly optimizes clustering quality (e.g., silhouette score) and stability across random seeds, while yielding semantically coherent and interpretable groups upon manual inspection. Three domain experts (the same annotators as in Section 3.1) independently reviewed the resulting clusters against the original code contexts. Each expert assigned a descriptive label to each cluster and proposed merges for functionally overlapping groups.
Initial pairwise label agreement reached 84.2%, after which the experts resolved disagreements through discussion. This process produces a final set of 11 malicious behavior categories, including data exfiltration, arbitrary command execution, credential theft, environment reconnaissance, and persistence installation.

Taxonomy Analysis. Figure 1 shows the distribution of 11 malicious behavior categories across our dataset. The three most prevalent behaviors are command execution (4,483 packages), data exfiltration (4,350 packages), and data collection (4,207 packages), which together form the core attack pattern in NPM malware: collecting sensitive information and transmitting it to attacker-controlled servers via shell commands. Other notable behaviors include C2 communication (327 packages), malicious download (288 packages), and persistence (236 packages), which indicate more sophisticated attack chains involving remote control and long-term access. Less frequent but critical behaviors such as credential theft (232 packages), dynamic code execution (149 packages), and reverse shell (63 packages) reflect targeted attack objectives including runtime payload delivery and interactive remote access.

Figure 1: Behavior Distribution (number of packages per category): Command Execution 4,483; Data Exfiltration 4,350; Data Collection 4,207; C2 Communication 327; Malicious Download 288; Persistence 236; Credential Theft 232; Dynamic Code Execution 149; File Manipulation 91; Reverse Shell 63; Web Injection 56.

Further analysis reveals that malicious packages typically combine multiple behaviors, with a mean of 2.4 and a median of 3 per package. We analyze pairwise co-occurrence patterns across the 11 behavior categories.
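The pairwise counting behind this analysis can be sketched as follows. This is a minimal illustration under stated assumptions, not the released analysis script: the function name pairwise_cooccurrence, the label strings, and the three toy packages are all hypothetical. Each package contributes at most one count to each unordered pair of behaviors it exhibits.

```python
from collections import Counter
from itertools import combinations


def pairwise_cooccurrence(package_behaviors):
    """Count, for each unordered behavior pair, how many packages exhibit both."""
    counts = Counter()
    for behaviors in package_behaviors.values():
        # set() drops repeated labels; sorted() gives every pair a canonical order.
        for pair in combinations(sorted(set(behaviors)), 2):
            counts[pair] += 1
    return counts


# Hypothetical toy labels (package name -> behavior categories), for illustration only.
TOY_LABELS = {
    "pkg-a": ["data_collection", "data_exfiltration", "command_execution"],
    "pkg-b": ["data_collection", "data_exfiltration"],
    "pkg-c": ["command_execution", "persistence"],
}

if __name__ == "__main__":
    for pair, n in pairwise_cooccurrence(TOY_LABELS).most_common():
        print(pair, n)
```

Counting packages rather than code locations keeps a package with many flagged files from inflating a pair's frequency.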
Data collection and data exfiltration co-occur most frequently in 4,144 packages, followed by command execution with data exfiltration (3,020) and data collection (2,903), confirming a dominant collect-and-exfiltrate pattern in which shell commands serve as the shared mechanism for gathering and transmitting sensitive information. Co-occurrences such as credential theft with data exfiltration and command execution with persistence (158) indicate more sophisticated attack chains aimed at long-term access.

3.4 Detection Tools Selection

We select tools based on four criteria: (1) Diversity: covering static, dynamic, ML, and LLM approaches to ensure comprehensive coverage; (2) Impact: prioritizing tools with demonstrated influence in the npm ecosystem, including those developed by major corporations such as Microsoft and Datadog; (3) Recency: published or significantly updated within 2020–2025; (4) Availability: publicly accessible with API or CLI interfaces to ensure reproducibility.

We collected 8 NPM malicious package detection tools representing 13 distinct detection variants from academic research and industry practice. The evaluation includes: (1) Static analysis tools: GuardDog, OSSGadget, and GENIE, which analyze source code and metadata without execution; (2) Dynamic analysis tool: Packj, configured for both static analysis and dynamic trace modes; (3) Machine learning tools: SAP implemented with three classifiers (XGBoost, Random Forest, and Decision Tree), MalPacDetector implemented with three classifiers (MLP, Naive Bayes, and SVM), and Cerebro, which employs BERT to learn semantic patterns from behavior sequences.
Although MalPacDetector uses LLMs, we classify it as ML-based because its features are ultimately constructed and classified by conventional ML models; (4) LLM-based tool: SocketAI, developed by Socket Inc., operating through a three-stage analysis pipeline with Initial Report generation, Critical Reports analysis, and Final Report synthesis. To manage computational expenses, we configured SocketAI with GPT-4.1 mini as the underlying language model, utilizing the Final Report output for malicious package classification. Table 1 summarizes the detection tools.

3.5 Experimental Setup

Environment. We evaluate detection tools on our unified dataset of 6,420 malicious package versions and 7,288 benign packages constructed as described in Section 3.1. Each tool processes packages using default configuration parameters to ensure reproducibility and reflect real-world deployment scenarios. Static analysis tools (GuardDog, GENIE, OSSGadget, Packj_static), the dynamic analysis tool (Packj_trace), machine learning-based tools (SAP variants, MalPacDetector variants, Cerebro), and the LLM-based tool (SocketAI) process packages directly through their respective detection pipelines. All experiments are conducted on Ubuntu 22.04.5 LTS systems equipped with 32GB RAM and Intel Xeon processors.

Context Extraction Validation. To evaluate the accuracy of our LLM-based code context extraction (Section 3.3), we randomly sample 500 extracted contexts and have three security experts independently verify whether each context correctly captures the complete malicious code segment with its surrounding dependencies. A context is marked as correct only when at least two experts agree. The validation achieves 97.2% accuracy (486/500 correct) with a 97.4% consensus rate among experts, confirming the reliability of our extraction approach.

Behavior Summary Validation.
To evaluate the accuracy of LLM-generated behavior summaries, we randomly sample 500 summaries and have three security experts independently verify whether each summary correctly describes the malicious behavior. A summary is marked as correct when at least two experts agree. The validation achieves 97.0% accuracy (485/500 correct) with a 97.6% consensus rate and a Fleiss' Kappa of 0.74, indicating substantial inter-rater agreement. Among the 15 incorrect samples, 8 cases miss important behaviors that the code actually has, 4 cases claim behaviors that the code does not have, and 3 cases describe behaviors that do not match actual code behavior.

4 Empirical Results

4.1 RQ1: Detection Effectiveness

Results & Analysis. Table 4 presents the detection performance of all evaluated tools. Detection effectiveness varies dramatically across paradigms. Among static-based tools, GuardDog achieves the best overall balance with an F1-score of 93.32%, while GENIE sacrifices recall for near-perfect precision and Packj_static does the opposite, generating 6,234 false positives to recover 98.69% of malicious packages. Dynamic analysis improves recall to 95.47% but compounds the false positive problem, with Packj_trace producing 4,656 FP. ML-based tools exhibit a consistent precision-recall trade-off: SAP_DT and SAP_RF attain near-perfect precision yet miss roughly one-third of malicious packages, whereas SAP_XGB and the MalPacDetector variants offer a more balanced trade-off with F1-scores ranging from 83.32% to 88.66%. SocketAI, the only LLM-based detector, achieves only 44.41% recall, missing 3,569 packages, which renders it impractical as a standalone detection tool.

We analyze the detection logic of each tool through source-code inspection to understand why these performance differences exist.

Capability-end tools flag any invocation of sensitive APIs regardless of context.
Packj_static maps 56 JavaScript APIs to 10 permission categories and flags any matching package, achieving 98.69% recall but a signal-to-noise ratio of 1:71. OSSGadget's false positives arise largely from matching keywords such as `curl` and `wget` in README files and comments rather than executable code, a structural flaw no rule update can fully resolve.

Table 3: Taxonomy of Malicious Behavior Categories

| Category | Description |
|---|---|
| Command Execution | Executing OS-level shell commands via child process APIs (e.g., `exec`, `spawn`) |
| Data Exfiltration | Transmitting collected data to attacker-controlled servers (e.g., HTTPS POST, DNS queries) |
| Data Collection | Gathering host and environment information (e.g., hostname, username, network interfaces) |
| C2 Communication | Establishing persistent bidirectional connections to remote servers for receiving commands |
| Malicious Download | Fetching and executing remote payloads or platform-specific binaries |
| Persistence | Maintaining long-term access by injecting code into existing applications (e.g., Discord modules) or installing backdoors |
| Credential Theft | Stealing authentication materials (e.g., SSH keys, API tokens, browser cookies, crypto wallets) |
| Dynamic Code Exec. | Constructing and executing code at runtime via `eval` or deobfuscation |
| File Manipulation | Unauthorized file system operations such as writing payloads to disk or deleting evidence |
| Reverse Shell | Opening interactive shell connections to remote servers |
| Web Injection | Injecting malicious content into web pages (e.g., iframe injection, formjacking, phishing redirects) |

Table 4: Performance Comparison of Detectors on Datasets

| Type | Detector | Accuracy | Precision | Recall | F1-Score |
|---|---|---|---|---|---|
| Static-Based | OSSGadget | 53.87% | 50.41% | 91.56% | 65.02% |
| Static-Based | GuardDog | 93.97% | 96.99% | 89.92% | 93.32% |
| Static-Based | GENIE | 74.72% | 99.76% | 46.14% | 63.10% |
| Static-Based | Packj_static | 53.91% | 50.41% | 98.69% | 66.73% |
| Dynamic-Based | Packj_trace | 63.91% | 56.82% | 95.47% | 71.24% |
| ML-Based | SAP_DT | 83.86% | 99.88% | 65.62% | 79.21% |
| ML-Based | SAP_RF | 84.16% | 99.88% | 66.26% | 79.67% |
| ML-Based | SAP_XGB | 89.99% | 94.47% | 83.52% | 88.66% |
| ML-Based | MalPacDetector_MLP | 84.90% | 97.03% | 73.00% | 83.32% |
| ML-Based | MalPacDetector_NB | 85.15% | 97.74% | 72.92% | 83.53% |
| ML-Based | MalPacDetector_SVM | 85.70% | 98.00% | 73.81% | 84.20% |
| ML-Based | Cerebro | 81.52% | 99.23% | 58.80% | 73.85% |
| LLM-Based | SocketAI | 73.58% | 98.21% | 44.41% | 61.16% |

Intent-end tools impose stricter evidence requirements at the cost of recall. GENIE's theft-os query demands ≥ 3 unique OS data sources flowing to the same HTTP sink, creating a step-function boundary: multi-source exfiltration is caught with near-perfect precision, while single- or dual-source attacks are missed entirely, and non-HTTP exfiltration channels are excluded by definition. SAP_DT/RF achieve 99.88% precision through a different mechanism: their learned boundaries are so narrow that only patterns closely matching the 102-sample training set are flagged.
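The metrics in Table 4 follow from confusion-matrix counts. As a minimal sketch, the snippet below recomputes GuardDog's row from the dataset sizes (6,420 malicious, 7,288 benign) and the 179 false positives the paper reports for it. The true-positive count of 5,773 is an assumption, obtained by rounding 89.92% of 6,420; the class and function names are illustrative, not part of any evaluated tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Confusion:
    """Confusion-matrix counts for one detector."""
    tp: int
    fp: int
    tn: int
    fn: int

    @property
    def accuracy(self) -> float:
        return (self.tp + self.tn) / (self.tp + self.fp + self.tn + self.fn)

    @property
    def precision(self) -> float:
        return self.tp / (self.tp + self.fp)

    @property
    def recall(self) -> float:
        return self.tp / (self.tp + self.fn)

    @property
    def f1(self) -> float:
        # Harmonic mean of precision and recall, written in count form.
        return 2 * self.tp / (2 * self.tp + self.fp + self.fn)


def from_totals(n_malicious: int, n_benign: int, tp: int, fp: int) -> Confusion:
    """Derive TN and FN from dataset sizes plus TP and FP."""
    return Confusion(tp=tp, fp=fp, tn=n_benign - fp, fn=n_malicious - tp)


# tp=5773 is an assumed value (round(0.8992 * 6420)); fp=179 is the reported count.
guarddog = from_totals(n_malicious=6420, n_benign=7288, tp=5773, fp=179)
for name in ("accuracy", "precision", "recall", "f1"):
    print(f"{name}: {getattr(guarddog, name) * 100:.2f}%")
```

Under these assumptions the four values round to the GuardDog row of Table 4, which suggests the reported counts and percentages are mutually consistent.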
SocketAI's low recall stems from its own pipeline design, where the Critical Reports stage explicitly instructs the LLM to challenge claims about vulnerabilities, systematically downgrading initially correct malware judgments.

GuardDog sits between these two extremes by using taint analysis that requires end-to-end data flow from sensitive sources to network sinks as partial evidence of malicious intent, combined with an allowlist of known legitimate hook patterns to suppress false positives. Notably, just two of its 22 rules account for over 90% of detections: `npm-install-script`, targeting lifecycle script abuse, and `shady-links`, targeting suspicious outbound URLs, revealing that NPM malware is homogeneous in its attack entry point.

Among ML-based tools, feature quality matters more than classifier choice. MalPacDetector's 24 security-oriented binary features yield consistent accuracy across all three classifiers, while SAP's 140 features are structurally compromised: 65% are file-extension counts with no security semantics, and its two ostensibly discriminative features turn out to be reverse signals, with dangerous token counts and Base64 chunk counts both scoring higher in benign packages than in malicious ones.

LLM-Based Tools. SocketAI's low recall has three structural causes: (1) its Critical Reports stage explicitly instructs the LLM to challenge prior judgments about malicious behavior, systematically downgrading initially correct detections; (2) LLMs produce conservative scores, placing genuine malware below the 0.5 classification threshold; and (3) a 20-file-per-package limit causes payloads in deep directory structures to be skipped entirely. At 146 seconds per package, it is also impractical for large-scale deployment.

Runtime Performance. We evaluate runtime cost on selected packages averaging 47.4 files and 2,961.8 lines of code. GuardDog processes packages fastest at 2.55 seconds due to efficient Semgrep execution.
OSSGadget requires 7.94 seconds for 133 pattern rules. SAP_XGB needs 9.58 seconds for feature extraction and inference. Packj_trace demands 17.90 seconds for dynamic analysis. SocketAI is slowest at 146.34 seconds due to multiple LLM queries. For large-scale scanning, GuardDog processes 1,412 packages per hour while SocketAI processes only 24.

Finding 1: The fundamental challenge of malicious package detection is distinguishing capability (what code can do) from intent (what code aims to do), because malicious and benign packages invoke the same OS APIs. Capability-end tools achieve high recall but flood false positives by flagging any sensitive API usage regardless of context. Intent-end tools achieve high precision but miss attacks that do not satisfy their strict evidence requirements. GuardDog bridges this gap through taint analysis requiring end-to-end source-to-sink data flow, achieving the best balance at 93.32% F1 with only 179 false positives.

4.2 RQ2: Fine-grained Behavioral Analysis

While RQ1 evaluated overall detection effectiveness, RQ2 examines detection capabilities across specific malicious behaviors and evasion techniques.

4.2.1 RQ2.1: Behavioral Detection Capability.

Results & Analysis. Detection performance varies significantly across the 11 behavior categories and 13 tool variants, as shown in Figure 3. Packj_static achieves the broadest coverage, exceeding 80% for 10 of 11 categories through comprehensive API monitoring. In contrast, GENIE and SAP_DT/RF show critical gaps in dynamic code execution, C2 communication, and web injection, reflecting the limitations discussed in RQ1.
GuardDog exhibits the most uneven profile among well-performing tools: it reaches 88% for data exfiltration and command execution but drops to only 7% for dynamic code execution and 14% for web injection, because its Semgrep rules were not designed to cover these categories.

High-detectability behaviors. Data collection, data exfiltration, and command execution achieve mean detection rates above 75% across all tools. Beyond their prevalence, these behaviors benefit from a coupling effect: they rarely occur in isolation, and co-occurrence amplifies detection signals. When data collection and exfiltration co-occur, mean detection rises from 44.6% and 69.9% individually to 82.7%. The effect is most pronounced for SAP_DT, which jumps from 3.2% to 79.3%, because a single `os.hostname()` call is ambiguous, but the chain `os.hostname()` → `JSON.stringify()` → `https.request()` constitutes unambiguous intent evidence.

Low-detectability behaviors. Dynamic code execution, web injection, and credential theft show the widest cross-tool variation and the lowest mean detection rates. These behaviors do not form behavioral chains, so there is no coupling effect to amplify the signal. More critically, web injection targets browser-side DOM manipulation, but all evaluated tools analyze server-side Node.js code, creating a structural attack surface mismatch that no detection rule can bridge. Credential theft faces a similar problem: the APIs involved are identical to those used by legitimate authentication libraries, leaving feature-based tools with no discriminative signal.

Finding 2: Detection difficulty is shaped by two structural factors. Behavioral coupling amplifies intent signals when behaviors co-occur: the collection+exfiltration chain raises SAP_DT detection from 3.2% to 79.3%.
Attack surface misalignment makes certain behaviors structurally undetectable: web injection targets the browser-side DOM while tools analyze Node.js code, and credential theft uses APIs identical to legitimate authentication libraries.

4.2.2 RQ2.2: Evasion Technique Analysis.

Figure 2a shows the distribution of 8 evasion technique categories, and Figure 4 presents detection rates against each technique.

Results & Analysis. Among 6,420 malicious packages, 80.3% employ no evasion technique at all. Among the 19.7% that do, string obfuscation is most prevalent (541 packages), followed by encoding obfuscation, silent error handling, and hook abuse. Despite their rarity, environment detection and anti-analysis are the hardest to detect, with mean detection rates of 39% and 54% respectively.

Obfuscation techniques. String and code structure obfuscation defeat feature-level tools by altering the surface form from which features are computed. Attackers rename identifiers with hex-encoded strings such as `_0x27b98b` and flatten control flow, stripping the statistical signals that SAP_DT/RF and Cerebro rely on, all three dropping to around 26% detection. Packj_static remains unaffected at 86% because it monitors API calls regardless of how identifiers are named.

Execution hiding techniques. Hook abuse executes malicious code through npm lifecycle scripts before any runtime analysis begins, giving detection tools no opportunity to observe the behavior at execution time. GuardDog detects this at 86% through manifest pattern matching, while GENIE drops to 14% for lacking corresponding CodeQL queries. Silent error handling wraps malicious operations in empty try/catch blocks and is reliably detected by pattern-based tools because the syntactic pattern is distinctive, though Cerebro at 48% struggles to separate it from legitimate defensive coding.

[Figure 2: Evasion Technique Distribution. (a) Evasion Distribution, number of packages per technique: String 541, Encoding 373, Code Structure 309, Silent Error 366, Hook Abuse 352, Env. Detection 81, Anti-Analysis 42, Trace Cleanup 31, grouped into Obfuscation, Execution Hiding, and Anti-Detection. (b) Evasion Co-occurrence.]

Anti-detection techniques. Anti-analysis exhibits the largest detection gap of all categories, from 5% for SAP_DT/RF and Cerebro to 100% for Packj_static. Attackers use self-modifying eval wrappers with integrity checks: if a scanner modifies the payload, the hash changes and the code refuses to execute, defeating any tool that instruments or rewrites code during analysis. Packj_static bypasses this entirely by monitoring system calls at the OS level, never touching the code itself. Trace cleanup shows an equally sharp split: SAP variants and GENIE detect below 16% because evidence is deleted before feature extraction completes, while GuardDog and MalPac-NB both reach 97% through pattern matching on file deletion APIs.

Paradigm-level pattern. Evasion effectiveness depends on whether the technique targets the same abstraction level as the detector. Obfuscation defeats feature-level tools but leaves API-level tools unaffected. Anti-analysis and environment detection defeat code-level tools by using APIs identical to legitimate system queries, but fail against syscall-level tools that observe actual OS interactions rather than inspecting code. No single technique defeats all abstraction levels simultaneously.

Evasion combinations. Attackers frequently layer techniques, with code structure combined with string obfuscation accounting for 36.81% of all combinations. Layering more techniques does not linearly improve evasion effectiveness. Packages using 2-3 techniques are detected by fewer tools on average than those using only one, confirming that combining techniques helps.
However, packages using 4 or more techniques are detected by more tools than any other group, because heavy obfuscation produces its own detectable anomalies: elevated entropy, abnormal code structure, and large decoding scaffolds give tools a signal even without understanding the underlying malicious behavior. Evasion code intended to hide intent ends up signaling that something is being hidden.

Finding 3: Most attackers do not bother with evasion because the npm ecosystem has no mandatory pre-publication scanning, making simple attacks effective enough. Among those that do evade, adding more techniques eventually backfires: packages using 4 or more techniques are detected by more tools than those using 2–3, because heavy obfuscation produces its own detectable signatures. When a technique stops working, attackers do not refine it but abandon it entirely: the decline of encoding obfuscation and the rise of hook abuse show that attackers respond to better detection by moving to an attack layer that tools do not inspect, not by evading more cleverly.

Figure 3: Detection Rates Across Malicious Behaviors (detection rate, %)

| Tool | Web Inject. | Dyn. Code Exec. | Cred. Theft | C2 Comm. | Mal. Download | File Manip. | Persistence | Rev. Shell | Cmd Exec. | Data Exfil. | Data Collect. |
|---|---|---|---|---|---|---|---|---|---|---|---|
| OSSGadget | 71 | 85 | 78 | 91 | 82 | 87 | 89 | 81 | 87 | 87 | 87 |
| GuardDog | 14 | 7 | 56 | 47 | 46 | 58 | 81 | 81 | 88 | 88 | 88 |
| GENIE | 34 | 7 | 28 | 9 | 27 | 27 | 38 | 6 | 44 | 58 | 60 |
| Packj-Static | 80 | 97 | 80 | 95 | 89 | 85 | 93 | 95 | 93 | 93 | 93 |
| Packj-Trace | 70 | 94 | 70 | 94 | 88 | 85 | 88 | 95 | 90 | 91 | 92 |
| SAP-DT | 0 | 2 | 14 | 21 | 18 | 15 | 26 | 56 | 65 | 73 | 73 |
| SAP-RF | 0 | 3 | 14 | 21 | 18 | 15 | 28 | 56 | 66 | 73 | 73 |
| SAP-XGB | 50 | 24 | 31 | 76 | 64 | 34 | 52 | 92 | 78 | 86 | 86 |
| MalPac-MLP | 41 | 47 | 62 | 40 | 65 | 74 | 78 | 75 | 85 | 88 | 87 |
| MalPac-NB | 39 | 17 | 48 | 36 | 55 | 66 | 83 | 81 | 86 | 85 | 84 |
| MalPac-SVM | 39 | 82 | 61 | 38 | 60 | 79 | 86 | 79 | 87 | 88 | 88 |
| Cerebro | 5 | 3 | 6 | 61 | 35 | 32 | 46 | 57 | 62 | 64 | 65 |
| SocketAI | 70 | 68 | 56 | 27 | 80 | 71 | 64 | 76 | 43 | 41 | 40 |

Figure 4: Detection Rates Against Evasion Techniques (detection rate, %)

| Tool | Env. Detection | Anti-Analysis | Trace Cleanup | String Obfusc. | Hook Abuse | Code Struct. | Encoding Obfusc. | Silent Error |
|---|---|---|---|---|---|---|---|---|
| OSSGadget | 75 | 74 | 84 | 77 | 82 | 79 | 90 | 87 |
| GuardDog | 33 | 24 | 97 | 54 | 86 | 64 | 71 | 78 |
| GENIE | 25 | 31 | 3 | 54 | 14 | 63 | 30 | 60 |
| Packj-Static | 72 | 100 | 61 | 86 | 83 | 89 | 93 | 92 |
| Packj-Trace | 59 | 95 | 61 | 78 | 78 | 82 | 92 | 88 |
| SAP-DT | 2 | 5 | 10 | 26 | 62 | 35 | 61 | 51 |
| SAP-RF | 2 | 5 | 10 | 27 | 63 | 35 | 62 | 52 |
| SAP-XGB | 21 | 57 | 16 | 54 | 62 | 65 | 74 | 72 |
| MalPac-MLP | 41 | 88 | 84 | 70 | 84 | 84 | 73 | 86 |
| MalPac-NB | 38 | 52 | 97 | 65 | 86 | 76 | 71 | 79 |
| MalPac-SVM | 40 | 90 | 87 | 80 | 85 | 85 | 86 | 87 |
| Cerebro | 30 | 5 | 65 | 26 | 35 | 33 | 52 | 48 |
| SocketAI | 62 | 76 | 81 | 78 | 39 | 82 | 71 | 58 |

4.2.3 RQ2.3: Installation Script Abuse.

Results & Analysis. Among 6,420 malicious packages, 4,636 (72.21%) exploit installation scripts as attack vectors. Preinstall scripts dominate, appearing in 61.15% of all malicious packages, followed by postinstall (6.74%) and install scripts (2.20%). Malicious code concentrates in two locations: index.js in 46.9% of packages and the package.json scripts section in 38.2%. More strikingly, 1,362 packages (21.9%) rely entirely on install scripts with no malicious JavaScript code in any file.
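To make the install-script entry point concrete, the following minimal sketch lists the lifecycle hooks a manifest declares. It is an illustration only, not the logic of GuardDog, Packj, or any other evaluated tool; the function name and both sample manifests are hypothetical.

```python
import json

# npm lifecycle hooks that run automatically during `npm install`.
LIFECYCLE_HOOKS = ("preinstall", "install", "postinstall")


def install_hooks(package_json_text: str) -> dict[str, str]:
    """Return the install-time lifecycle scripts declared in a package.json."""
    manifest = json.loads(package_json_text)
    scripts = manifest.get("scripts")
    if not isinstance(scripts, dict):
        return {}
    return {hook: scripts[hook] for hook in LIFECYCLE_HOOKS if hook in scripts}


# Hypothetical manifest whose hook silently runs a bundled script.
suspicious = (
    '{"name": "demo-pkg", "scripts": '
    '{"preinstall": "node index.js > /dev/null 2>&1", "test": "jest"}}'
)
# Hypothetical manifest using a hook for an ordinary build step.
benign = '{"name": "demo-app", "scripts": {"postinstall": "patch-package"}}'

print(install_hooks(suspicious))
print(install_hooks(benign))
```

Both manifests yield a non-empty result, so hook presence alone cannot separate them; any rule built on this signal has to look at what the hook command does.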
Preinstall scripts are the preferred entry point for a structural reason: they execute automatically during `npm install` with full user privileges before dependency resolution, before security tooling can activate, and regardless of whether the installation ultimately succeeds. The silent execution pattern `"preinstall": "node index.js > /dev/null 2>&1"` further ensures no console output reveals the attack. This is not a vulnerability being exploited but a design decision: npm's lifecycle hook mechanism was built on the assumption that publishers are benign, while its open publication model provides no such guarantee.

The fundamental detection difficulty is not technical but semantic. Legitimate build tools such as `husky`, `patch-package`, and `prisma generate` use the exact same preinstall and postinstall hooks for entirely benign purposes. A tool that flags all install scripts produces massive false positives, as Packj does with 6,234 FP; a tool that requires stronger evidence of malicious intent misses most attacks, as GENIE does with only 14.77% hook abuse detection. The 21.9% of install-only malware sharpens this further: when the entire attack is a single command string in package.json invoking a separate JavaScript file, there is no obfuscation or suspicious API to detect, just a pattern indistinguishable from any legitimate build script.

Finding 4: 72.21% of malicious packages exploit install scripts because npm lifecycle hooks guarantee execution without sandboxing. The 21.9% that embed attacks entirely in package.json expose an architectural blind spot: every code-analysis tool assumes malicious behavior lives in code, and when it does not, no rule refinement helps.

[Figure 5: Package Temporal Distribution]

Table 5: Evasion Techniques Across Time Periods

| Evasion Technique | ≤ 2020 (249) | 2021 (817) | 2022 (1,596) | 2023 (1,704) | 2024-25 (1,622) | Total |
|---|---|---|---|---|---|---|
| String Obfuscation | 31 (12.4%) | 77 (9.4%) | 149 (9.3%) | 104 (6.1%) | 150 (9.2%) | 541 |
| Encoding Obfuscation | 48 (19.3%) | 136 (16.6%) | 47 (2.9%) | 90 (5.3%) | 37 (2.3%) | 373 |
| Code Structure Obfusc. | 10 (4.0%) | 63 (7.7%) | 102 (6.4%) | – | 102 (6.3%) | 309 |
| Silent Error Handling | – | 59 (7.2%) | 112 (7.0%) | 63 (3.7%) | 106 (6.5%) | 366 |
| Hook Abuse | 12 (4.8%) | 55 (6.7%) | 59 (3.7%) | 49 (2.9%) | 152 (9.4%) | 352 |
| Environment Detection | 29 (11.6%) | – | – | – | – | 81 |
| Anti-Analysis | – | – | – | – | – | 42 |
| Trace Cleanup | – | – | – | 21 (1.2%) | – | 31 |

Note: Only top-5 evasion techniques per period are shown. "–" indicates the technique was not among the top five for that period.

[Figure 6: Detection Rate Comparison Across Time Periods by Tool Category. Panels: Static-Based (OSSGadget, GuardDog, GENIE, Packj-Static), Dynamic-Based (Packj-Trace), ML-Based (SAP-DT, SAP-RF, SAP-XGB, Cerebro, MalPac-MLP, MalPac-NB, MalPac-SVM), LLM-Based (SocketAI). x-axis: time period ('11-20, '21, '22, '23, '24-25); y-axis: detection rate (%).]

4.3 RQ3: Temporal Evolution Analysis

While RQ1 and RQ2 evaluated detection effectiveness on our complete dataset, RQ3 examines whether detection tools maintain consistent performance as attack techniques evolve over time.

Timestamp Collection. We collect publication timestamps via the NPM Registry API, retrieving timestamps for 6,149 of 6,420 packages (98.5%). As shown in Figure 5, 95.7% of packages were published between 2021 and 2025, reflecting the growing threat of supply chain attacks and increased detection efforts in recent years.

4.3.1 Detection Performance Evolution.

Figure 6 presents detection rate trends across time periods for all 13 tool variants.

Results & Analysis. Detection tools exhibit divergent temporal patterns.
Packj_static maintains above 97% across all periods with only ±1.15% variance, while SAP_DT drops from 87.15% in 2021 to 39.49% in 2023, Cerebro collapses from 76.61% to 27.04% between 2022 and 2024–2025, and GENIE oscillates between 3.79% and 72.17%. All tools use fixed detection parameters, so the divergence reflects changes in the malware population, not tool updates.

The ML degradation contradicts the conventional concept drift explanation. Between 2022 and 2023, obfuscation decreased and malware became simpler, not more sophisticated. SAP_DT collapsed because minimal-footprint malware became statistically indistinguishable from benign packages in feature space, a phenomenon we term concept convergence. Its partial recovery in 2024–2025 was not adaptation: the hook-abuse surge happened to trigger SAP's install-script feature, shifting the malware population back into its coverage. Cerebro's collapse follows a different mechanism: its BERT embeddings peak on 2022 malware because its training corpus aligns with that era, then fail silently as attack code evolves beyond its learned representations. GENIE's 68-point oscillation reflects a third failure mode: not degradation but query-pattern misalignment, peaking when dominant attacks match its CodeQL conditions and collapsing when they do not. GuardDog is the only tool showing genuine recovery, demonstrating that pattern-based tools can adapt through rule maintenance in a way that ML models fundamentally cannot. Underlying all these patterns is a single principle: tools that detect what code does are temporally robust because attack objectives are invariant, while tools that detect code surface properties are fragile because appearances evolve freely.

4.3.2 Evasion Technique Evolution.
Table 5 presents the evolution of evasion techniques across 8 categories from 2011 to 2025.

Results. The number of packages employing evasion techniques grows from 94 in 2020 to 358 in 2024–2025, a 3.8x increase. Encoding obfuscation dominated early malware but declined sharply from 19.3% to 2.3% as attackers abandoned it. String obfuscation remains consistently prevalent across all periods at 6.1%–12.4%. Hook abuse shows a notable resurgence, rising from 2.9% in 2023 to 9.4% in 2024–2025, becoming the most prevalent technique in recent packages. Anti-detection techniques remain rare throughout.

Analysis. The shift from encoding obfuscation (19.3% → 2.3%) to hook abuse (4.8% → 9.4%) reflects changing attacker strategies. Encoding obfuscation (Base64, hexadecimal) triggers high-entropy detection in ML tools, prompting attackers to adopt cleaner code patterns. Hook abuse exploits npm's trusted execution model, where preinstall/postinstall scripts execute with full privileges, bypassing code-level analysis entirely. The decline of encoding obfuscation directly explains SAP degradation. SAP models trained on 2021 data learned high-entropy patterns as malicious indicators. When attackers shifted to clean code with standard APIs, these features no longer distinguished malicious from benign packages, causing the 47.66 percentage point detection drop observed in 2023. Similarly, the resurgence of hook abuse in 2024–2025 correlates with GuardDog's recovery (91.42%), as its Semgrep rules effectively match manifest-level hook patterns that other tools miss.
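The entropy argument can be illustrated with a small sketch. It computes per-character Shannon entropy for a plain JavaScript fragment and for a Base64-encoded payload. This is not the feature extractor of SAP or any other evaluated tool; both sample strings are hypothetical, and the payload is built from deterministic hash output purely to stand in for arbitrary encoded bytes.

```python
import base64
import hashlib
import math
from collections import Counter


def shannon_entropy(text: str) -> float:
    """Return the Shannon entropy of `text` in bits per character."""
    if not text:
        return 0.0
    total = len(text)
    counts = Counter(text)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


# Hypothetical plain JavaScript using standard APIs.
plain_js = (
    "const os = require('os');\n"
    "const https = require('https');\n"
    "const data = JSON.stringify({ host: os.hostname() });\n"
)

# Deterministic pseudo-random bytes standing in for an encoded payload.
payload = b"".join(hashlib.sha256(bytes([i])).digest() for i in range(64))
encoded = base64.b64encode(payload).decode("ascii")

plain_entropy = shannon_entropy(plain_js)
encoded_entropy = shannon_entropy(encoded)
print(f"plain: {plain_entropy:.2f} bits/char, encoded: {encoded_entropy:.2f} bits/char")
```

The encoded string scores higher than the plain fragment, which is the kind of surface signal an entropy-based feature picks up and which disappears once attackers write unencoded code.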
Finding 5: What appears as a single phenomenon of ML degradation is three distinct failure modes: SAP collapsed because malware converged toward benign code in feature space, Cerebro because its embeddings became stale as attack code evolved beyond its training distribution, and GENIE because its queries oscillate with attack pattern prevalence rather than degrade monotonically. GuardDog is the only tool that recovered, through rule updates rather than retraining.

[Figure 7: Evolution of Sensitive API Usage in Malicious NPM Packages Over Time. Panels: Data Access vs Transfer (Network, FileSystem), System Reconnaissance (System Info, Process Info), Code Exec & Crypto (Execution, Crypto), Data Encoding (Encoding). x-axis: time period (2011-2020, 2021, 2022, 2023, 2024-2025).]

4.3.3 Sensitive API Evolution.

To understand how malicious code patterns evolve beyond evasion techniques, we analyze the sensitive APIs invoked by malicious packages across time periods.

API Extraction. We extract sensitive API calls from malicious code snippets using tree-sitter [42], a parsing library that constructs abstract syntax trees (ASTs) for JavaScript code. For each code snippet, we parse the AST and identify API invocations through three mechanisms: (1) call expressions matching known sensitive functions, (2) member expressions accessing module methods (e.g., `fs.readFile`, `os.hostname`), and (3) string literals containing API names to capture obfuscated dynamic calls. Following the sensitive API taxonomy established in prior work [9, 50], we categorize extracted APIs into seven categories: network, filesystem, system_info, process_info, execution, encoding, and crypto. This extraction successfully processes 6,239 packages, identifying 53,576 API invocations.

Results & Analysis.
Figure 7 presents the temporal evolution of sensitive API usage across 6,239 packages. Most categories peaked in 2022 and declined thereafter, while execution APIs grew continuously across all periods. The average API count per package declined from 12.52 in 2021 to 6.82 in 2023, and by 2024–2025, 12.6% of malware invokes only 1–2 APIs in total, up from 5.1% in 2021, with 51% of these minimal packages importing only `child_process` to execute a single shell command. Crypto APIs emerged after 2022 and have grown steadily, suggesting increasing use of encrypted communication channels. The overall trend reflects a structural shift from 2021-era full reconnaissance attacks, which collected hostname, environment variables, and user information before exfiltrating via `https.request`, toward single-step execution: when the network operation is embedded inside a shell command such as ``exec(`curl attacker.com | sh`)``, API-level tools tracking `https.request` as a network sink cannot observe it at all, and the behavioral coupling effect that amplifies detection signals disappears entirely.

Finding 6: Sensitive API usage has shifted from broad multi-category reconnaissance toward minimal execution-focused attacks. Single-step shell command attacks eliminate the behavioral coupling that amplifies detection signals and bypass API-level sink definitions entirely, leaving process-execution-level monitoring as the only reliable detection surface against this pattern.
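The blind spot described above can be sketched in a few lines. The snippet below is a regex-based stand-in for the paper's tree-sitter AST extraction, not a reimplementation of it: it covers only call expressions and member expressions (not the string-literal mechanism), and the API-to-category mapping is a hypothetical, heavily abbreviated subset that reuses the paper's category names.

```python
import re

# Hypothetical, abbreviated mapping from member expressions to categories.
MEMBER_APIS = {
    "https.request": "network",
    "http.request": "network",
    "fs.readFile": "filesystem",
    "fs.writeFile": "filesystem",
    "os.hostname": "system_info",
    "os.userInfo": "system_info",
    "process.env": "process_info",
    "Buffer.from": "encoding",
    "crypto.createHash": "crypto",
}
# Hypothetical set of function names treated as process or code execution.
CALL_APIS = {"exec": "execution", "execSync": "execution",
             "spawn": "execution", "eval": "execution"}

MEMBER_RE = re.compile(r"\b([A-Za-z_$][\w$]*\.[A-Za-z_$][\w$]*)")
CALL_RE = re.compile(r"\b(" + "|".join(CALL_APIS) + r")\s*\(")


def api_categories(code: str) -> set[str]:
    """Return the sensitive-API categories whose names appear in `code`."""
    found = {MEMBER_APIS[m] for m in MEMBER_RE.findall(code) if m in MEMBER_APIS}
    found |= {CALL_APIS[c] for c in CALL_RE.findall(code)}
    return found


# Multi-step reconnaissance: collect, serialize, exfiltrate.
recon = ("const d = { h: os.hostname(), e: process.env }; "
         "https.request(opts).end(JSON.stringify(d));")
# Single-step execution: the network transfer lives inside the shell string.
single_step = ("const { exec } = require('child_process'); "
               "exec('curl attacker.com | sh');")

print(sorted(api_categories(recon)))
print(sorted(api_categories(single_step)))
```

For the reconnaissance sample the sketch reports system_info, process_info, and network, giving a chain to reason about. For the single-step sample it reports only execution, because the download happens inside the shell command and never touches a JavaScript network API.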
[Figure 8: Complementary Analysis of Detection Tools: TP/TN Performance Matrix. Panels: (a) Union: TP, (b) Union: TN, (c) Intersection: TP, (d) Intersection: TN.]

Table 6: Performance Metrics for Top Tool Combinations (values in %)

| Strategy | Tool Combination | Acc. | Prec. | Rec. | F1 |
|---|---|---|---|---|---|
| Union | GuardDog + SocketAI | 96.08 | 96.45 | 95.14 | 95.79 |
| Union | SocketAI + MalPac_SVM | 95.83 | 97.36 | 93.63 | 95.46 |
| Union | Cerebro + MalPac_SVM | 96.09 | 97.58 | 92.84 | 95.15 |
| Union | GuardDog + MalPac_SVM | 95.40 | 96.89 | 93.18 | 95.00 |
| Union | SocketAI + MalPac_MLP | 95.21 | 96.48 | 93.16 | 94.79 |
| Intersection | GuardDog + Packj_static | 93.76 | 97.39 | 89.07 | 93.04 |
| Intersection | Packj_static + MalPac_SVM | 93.30 | 98.23 | 87.27 | 92.43 |
| Intersection | GuardDog + Packj_trace | 92.70 | 97.86 | 86.29 | 91.71 |
| Intersection | Packj_static + MalPac_MLP | 92.44 | 97.41 | 86.14 | 91.43 |
| Intersection | GuardDog + MalPac_SVM | 92.27 | 98.13 | 85.12 | 91.17 |

Note: Union flags packages when either tool detects; Intersection requires both to agree.

4.4 RQ4: Tool Complementarity Analysis

While RQ1-RQ3 revealed significant performance variations among individual tools, RQ4 examines whether strategic tool combinations can overcome these limitations. We evaluate pairwise combinations using two strategies: union (flagging packages detected by either tool) and intersection (requiring both tools to agree).

Results & Analysis. Table 6 and Figure 8 present the top combinations across all 13 tool variants. For union, GuardDog + SocketAI achieves the best F1 at 95.79% with 95.14% recall, outperforming any individual tool. For intersection, GuardDog + Packj_static leads with 93.04% F1 and 97.39% precision.
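The two combination strategies reduce to set operations over each tool's flagged packages. The sketch below shows this on a toy example; the package identifiers and both tools' outputs are invented for illustration and do not correspond to any measured result.

```python
def combine(flags_a: set[str], flags_b: set[str], strategy: str) -> set[str]:
    """Combine two tools' flagged-package sets by union or intersection."""
    if strategy == "union":
        return flags_a | flags_b
    if strategy == "intersection":
        return flags_a & flags_b
    raise ValueError(f"unknown strategy: {strategy}")


def precision_recall_f1(flagged: set[str], malicious: set[str]) -> tuple[float, float, float]:
    """Score a flagged set against the ground-truth malicious set."""
    tp = len(flagged & malicious)
    fp = len(flagged - malicious)
    fn = len(malicious - flagged)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * tp / (2 * tp + fp + fn) if tp else 0.0
    return precision, recall, f1


# Toy ground truth and tool outputs (all hypothetical).
malicious = {"m1", "m2", "m3", "m4"}
tool_a = {"m1", "m2", "b1"}   # one false positive: b1
tool_b = {"m2", "m3", "b2"}   # one false positive: b2

union_scores = precision_recall_f1(combine(tool_a, tool_b, "union"), malicious)
inter_scores = precision_recall_f1(combine(tool_a, tool_b, "intersection"), malicious)
print("union:", union_scores)
print("intersection:", inter_scores)
```

In this toy case union raises recall but inherits both tools' false positives, while intersection removes the false positives at the cost of recall, which mirrors the trade-off between the two halves of Table 6.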
Cross-methodology pairs consistently outperform same-methodology ones, yet paradigm diversity is a proxy for complementarity rather than its cause.

Combination effectiveness is governed by two opposing forces: detection complementarity and false-positive introduction. We quantify complementarity using McNemar's $\chi^2$: GuardDog vs. SocketAI achieves $\chi^2 = 1{,}920$, while SAP_DT vs. SAP_RF achieves only $\chi^2 = 39$ because both extract identical features and disagree on almost nothing. High $\chi^2$ is necessary but not sufficient: GuardDog vs. Packj_static reaches $\chi^2 = 4{,}020$ yet fails in union because Packj_static adds only 665 true positives while introducing 6,015 false positives, collapsing combined precision from 97% to 50%. The intra-ML pair Cerebro + MalPac_SVM succeeds because their detection mechanisms are genuinely different: Cerebro uses BERT embeddings while MalPac_SVM uses 24 binary AST features, so the packages each tool misses are largely different. SAP_DT + SAP_RF, despite coming from different classifiers, extract the same 140 features and miss almost identical packages, providing virtually no benefit from combination.

This explains a counterintuitive result: GuardDog, the strongest individual tool, is on average hurt by union combination at −0.05 F1 because most partners introduce more false positives than additional true positives, while SocketAI, the weakest tool, benefits most at +0.08 F1. For intersection, GuardDog + Packj_static succeeds where union fails because requiring both tools to agree filters out each tool's independent false positives: GuardDog over-matches code patterns while Packj_static flags legitimate system calls, but packages flagged by both are almost always genuine malware.

Finding 7: GuardDog + SocketAI achieves the best union F1 (95.79%) and Cerebro + MalPac_SVM the best intra-paradigm result (95.15%), both succeeding because their miss sets are genuinely disjoint.
The deeper principle is asymmetric: the strongest tool degrades under combination because partners add more false positives than new true positives, while the weakest tool benefits most because a strong partner fills its blind spots at low false-positive cost.

4.5 Cross-RQ Synthesis

The six findings form a coherent causal structure anchored by a single root cause: the capability-intent gap. Because malicious and benign packages invoke identical OS APIs, every tool must choose between flagging all suspicious API usage and requiring stronger evidence of malicious intent. This single constraint propagates through all findings: (1) tools that flag any sensitive API achieve high recall but massive false positives, while tools requiring multi-source evidence achieve high precision but miss partial attacks (RQ1); (2) behaviors whose APIs are shared with legitimate code are systematically harder to detect, and co-occurring behaviors amplify intent signals precisely because chains are harder to explain away (RQ2); (3) attackers do not need evasion in an unscanned ecosystem, and install-script attacks succeed because code-analysis tools never inspect build configuration (RQ2); (4) tools monitoring actual API calls remain stable over time because those calls are invariant, while tools detecting statistical code properties degrade as malware sheds obfuscation and converges toward benign code (RQ3); (5) combination succeeds when partners resolve the capability-intent ambiguity through independent mechanisms, and fails when one partner's false positives outweigh its additional true positives (RQ4).

5 Implications

For Practitioners. Tool selection should match operational requirements: GuardDog + Packj_static intersection suits CI/CD pipelines requiring low false positives, while GuardDog + SocketAI union maximizes coverage for security-critical environments.
As hook abuse surged to 9.4% in 2024–2025, organizations should consider disabling automatic script execution via `npm install --ignore-scripts` for untrusted packages. ML-based tools require periodic retraining as attack patterns shift; teams unwilling to retrain quarterly should switch to behavioral detection tools that remain stable over time.

For Researchers. The capability-intent gap identified in this study points to a concrete design direction: future tools should seek mechanisms that provide partial intent evidence without requiring strict formal verification that limits recall. Taint analysis and behavior-chain detection are promising directions, as they resolve ambiguity through data-flow evidence rather than surface-level features. Dynamic code execution, web injection, and credential theft remain structurally underdetected because their APIs are indistinguishable from legitimate usage, representing the most pressing open problems for targeted research.

For Ecosystem Maintainers. The 21.9% of malware residing entirely in package.json install scripts exposes a gap that no code-analysis tool can bridge through rule refinement alone. This is not a vulnerability but a design decision: npm's open publication model assumes publisher trust, while providing no mechanism to verify it. Mandatory pre-publication scanning with behavioral sandboxing, or restricting lifecycle script execution by default, would structurally reduce the attack surface that 72.21% of malicious packages currently exploit.

6 Threats to Validity

Internal Validity. Ground truth depends on expert annotation; three experts achieved 97.4% consensus, but edge cases may be mislabeled. Tools use default parameters for reproducibility; different settings could affect results.
LLM-based extraction achieves 97.2% accuracy on 500 validated samples, and the remaining 2.8% errors may affect behavioral analysis.

External Validity. Results may not generalize to PyPI, Maven, or other ecosystems with different package structures and attack patterns. The dataset concentrates on 2021–2025 packages (95.7%), with findings from 2021 onward statistically robust based on 800+ samples per period. Several tools in Table 1 were unavailable due to closed source code or deprecated dependencies, limiting the diversity of evaluated approaches.

Construct Validity. The behavioral taxonomy derives from LLM summaries and clustering. Alternative clustering parameters or different embedding models could produce different category boundaries. Evasion technique categorization involves subjective judgment. Some techniques overlap (e.g., encoding obfuscation and string obfuscation), and boundary definitions may vary across researchers, potentially introducing selection bias.

7 Conclusion

This paper evaluates 8 NPM detection tools with 13 variants on a unified dataset of 6,420 malicious and 7,288 benign packages. GuardDog achieves the best balance at 93.32% F1, and strategic combinations reach up to 96.08% accuracy. ML-based tools degrade severely because malware converged toward benign code as obfuscation became unnecessary in an unscanned ecosystem, not because attacks grew more sophisticated. Source-code inspection reveals that precision-recall trade-offs, temporal fragility, and combination effectiveness all stem from a single root cause: the ambiguity between code capability and malicious intent. Tools anchored to attack objectives are stable; tools anchored to attack implementations are fragile. Future detection should be designed around what malware must do, not how it currently does it.

8 Data Availability

Our dataset and evaluation framework are available online: https://doi.org/10.6084/m9.figshare.31869370.
