Q-BIOLAT: Binary Latent Protein Fitness Landscapes for QUBO-Based Optimization

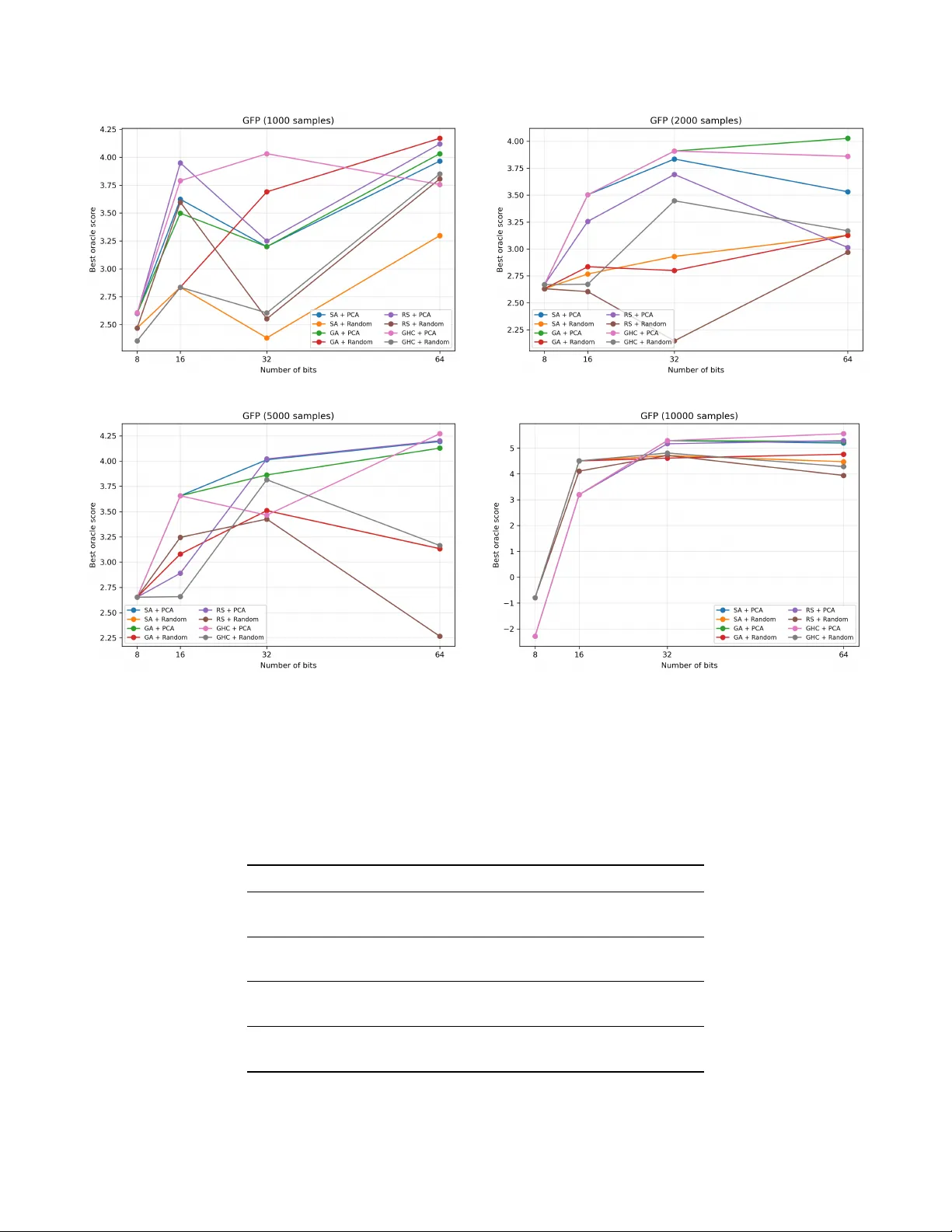

Protein fitness optimization is inherently a discrete combinatorial problem, yet most learning-based approaches rely on continuous representations and are primarily evaluated through predictive accuracy. We introduce Q-BIOLAT, a framework for modelin…

Authors: Truong-Son Hy