KV Cache Quantization for Self-Forcing Video Generation: A 33-Method Empirical Study

Self-forcing video generation extends a short-horizon video model to longer rollouts by repeatedly feeding generated content back in as context. This scaling path immediately exposes a systems bottleneck: the key-value (KV) cache grows with rollout l…

Authors: Suraj Ranganath, Vaishak Menon, Anish Patnaik

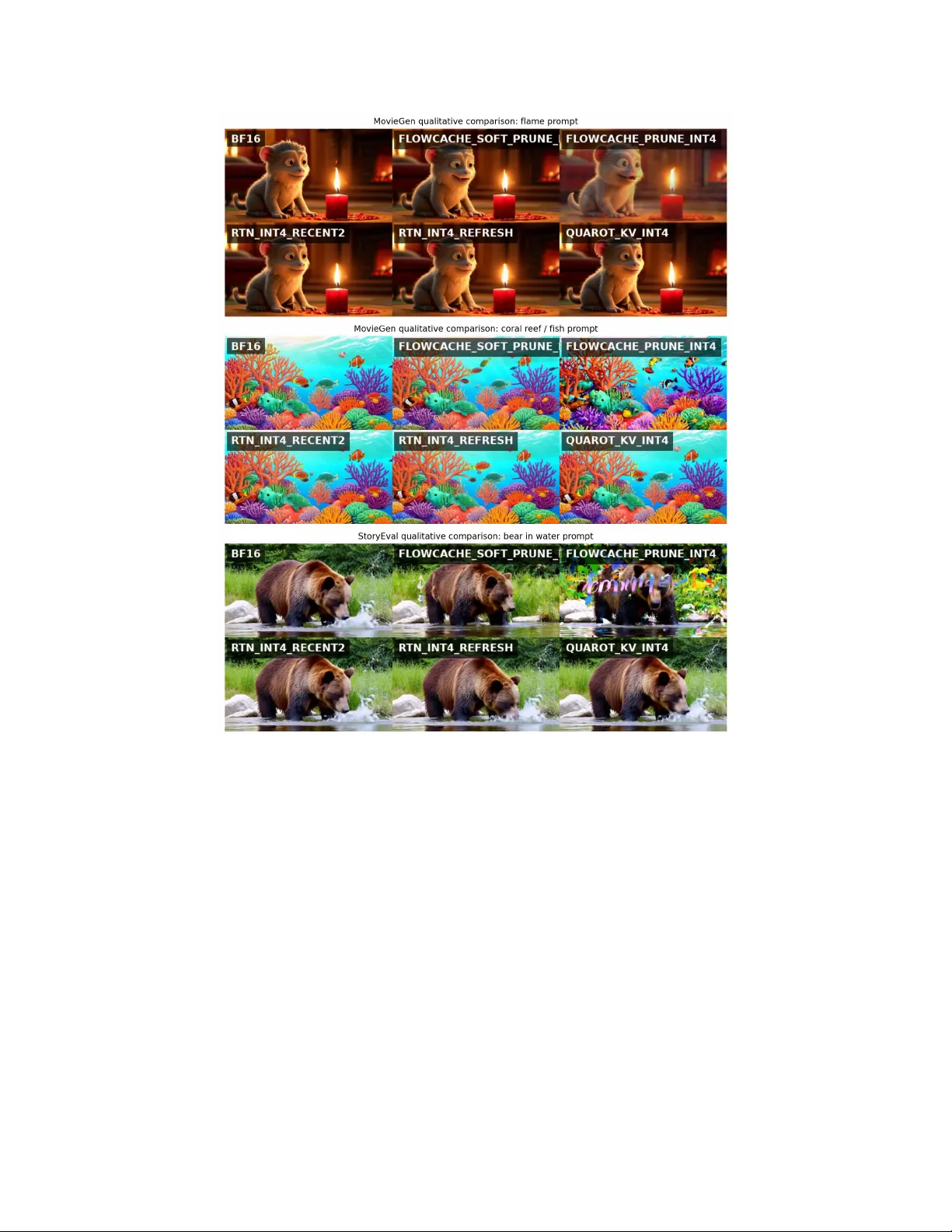

KV Cache Quantization f or Self-F or cing V ideo Generation: A 33-Method Empirical Study Suraj Ranganath ∗ Univ ersity of California, San Diego V aishak Menon Univ ersity of California, San Diego Anish Patnaik Univ ersity of California, San Diego Abstract Self-forcing video generation extends a short-horizon video model to longer roll- outs by repeatedly feeding generated content back in as context. This scaling path immediately exposes a systems bottleneck: the ke y-value (KV) cache gro ws with rollout length, so longer videos require not only better generation quality but also substantially better memory behavior . W e present a comprehensi ve em- pirical study of KV -cache compression for self-forcing video generation on a Wan2.1 -based Self-F orcing stack. Our study covers 33 quantization and cache- policy variants, 610 prompt-level observations, and 63 benchmark-lev el sum- maries across two ev aluation settings: M OV I E G E N for single-shot 10-second generation and S T O RY E V A L for longer narrativ e-style stability . W e jointly e val- uate peak VRAM, runtime, realized compression ratio, VBench imaging quality , BF16-referenced fidelity (SSIM, LPIPS, PSNR), and terminal drift. Three find- ings are robust. First, the strongest practical operating region is a FlowCache- inspired soft-prune INT4 adaptation, which reaches 5 . 42 – 5 . 49 × compression while reducing peak VRAM from 19.28 GB to about 11.7 GB with only modest runtime ov erhead. Second, the highest-fidelity compressed methods, especially PRQ_INT4 and QUAROT_KV_INT4 , are not the best deployment choices because they preserve quality at sev ere runtime or memory cost. Third, nominal com- pression alone is not sufficient: several methods shrink KV storage but still ex- ceed BF16 peak VRAM because the current integration reconstructs or retains large BF16 buf fers during attention and refresh stages. The result is a bench- mark harness, analysis workflo w , and empirical map of which KV -cache ideas are practical today and which are promising research directions for better memory integration. Code, data products, and the presentation dashboard are a vailable at https://github.com/suraj- ranganath/kv- quant- longhorizon/ . 1 Introduction Autoregressi ve video diffusion has become a compelling route to long-horizon video generation because it can roll forward indefinitely by treating previously generated content as context for future frames. Self-Forcing makes this idea especially practical by training with self-generated conte xt and KV -cache rollouts rather than relying only on teacher -forced conditioning [Huang et al., 2025]. The same mechanism that makes long rollout possible also creates a central scaling bottleneck: as context grows, the KV cache gro ws, and that cache must be stored, read, written, and sometimes transformed ∗ suranganath@ucsd.edu . Preprint. repeatedly during generation. In other words, longer rollout is not limited only by model quality . It is also limited by memory capacity , memory bandwidth, and the implementation details of how cached state is represented. This observation motiv ates a specific empirical question. If we compress the KV cache, which methods actually improv e end-to-end long-video generation, and which ones merely look ef ficient in isolation? A method can report an attractive nominal compression ratio while still failing in deployment because it introduces lar ge dequantization buf fers, refresh ov erhead, or quality collapse under rollout. For video generation, these systems and quality axes cannot be separated cleanly . A useful method must reduce memory pressure enough to matter , remain computationally viable, preserve perceptual realism, and a void structural drift relati ve to the uncompressed reference. W e study this question on a Wan2.1 -based Self-Forcing stack [T eam W an et al., 2025, Huang et al., 2025]. W e benchmark 33 methods spanning naiv e quantization, asymmetric quantization, rotation-based quantization, progressiv e residual coding, temporal heuristics, spatial mixed precision, and FlowCac he-inspired retention/pruning policies. W e e v aluate both single-shot video quality and narrati ve-style rollout stability , and we aggregate each method through six axes: peak VRAM, runtime, KV compression ratio, VBench imaging quality [Huang et al., 2023], BF16-relativ e fidelity , and drift- last imaging quality . Our dataset comprises 610 prompt-level observ ations and 63 benchmark-le vel method summaries. The main empirical result is not that one uni versal method dominates e very objecti ve. Instead, the study re veals a structured design space. Flo wCache-inspired soft pruning is the strongest practical operating point for real memory relief. PRQ_INT4 and QUAROT_KV_INT4 define a high-fidelity region that is academically useful but operationally expensiv e. Recency- and refresh-based R TN variants re veal polic y ideas that help quality , yet their current inte gration still allows transient BF16 reconstruction to dominate peak VRAM. Spatially mix ed methods demonstrate a clear neg ative result: a plausible spatial-importance heuristic can fail catastrophically for autoregressi ve video generation. 2 Related W ork Our work sits at the intersection of modern video generation, autoregressiv e long-horizon rollout, and KV -cache systems optimization. Large-scale video foundation models such as Movie Gen and W an demonstrate that transformer-based video generation can achiev e strong perceptual quality when giv en enough scale, context length, and inference engineering [Polyak et al., 2024, T eam W an et al., 2025]. Self-Forcing extends this trajectory by explicitly addressing the train-test mismatch in autoregressi ve video dif fusion and by making KV -cached rollout part of training and inference rather than a purely downstream systems optimization [Huang et al., 2025]. In parallel, the systems community has shown that KV -cache management is a first-order bottleneck in lar ge-sequence inference. PagedAttention made this point e xplicit for language models by sho wing that request-level throughput can be constrained as much by KV memory management as by raw arithmetic throughput [Kwon et al., 2023]. This insight transfers naturally to long-horizon video generation, where cache growth is tied directly to time. FlowCache e xtends the idea from generic memory management to autoregressi ve video generation by proposing chunkwise caching policies and KV compression mechanisms tailored to chunked video rollout [Ma et al., 2026]. Our study is closely related in spirit, but the of ficial FlowCache implementation does not support the Self-Forcing Wan2.1 stack used here, so the FlowCache-labeled rows in our experiments should be read as an in-house adaptation of FlowCache-inspired ideas rather than an upstream reproduction. The quantization literature gives several concrete tools for compressing KV tensors. KIVI uses asymmetric statistics-aware quantization for keys and values and is a strong low-bit reference for cache compression [Liu et al., 2024]. QuaRot attacks the outlier problem directly by rotating activ ations into a more quantization-friendly basis before lo w-bit coding [Ashkboos et al., 2024]. More recently , Quant V ideoGen (QV G) proposes a training-free KV -cache quantization frame work for autoregressi ve video generation and reports strong memory-quality P areto improvements, including a higher-quality operating mode denoted QVG-Pro [Xi et al., 2026]. Our benchmark includes these methods together with project-specific extensions: PRQ, QA Q, Age-T ier , TPTQ, FlowCache- inspired variants, and spatial mixed-precision policies. The goal is not to introduce a single new quantizer in isolation, but to compare a wide family of plausible design ideas under one end-to-end video-generation harness. 2 Recent long-video systems also provide important complementary conte xt. CausV id distills a slo w bidirectional teacher into a fast causal student for on-the-fly generation [Y in et al., 2025], while HiAR proposes hierarchical denoising to reduce error accumulation in long autoregressi ve rollouts [Zou et al., 2026]. These systems target long-horizon generation from different angles than direct KV compression, which is exactly why the y matter for future benchmarking: if our conclusions transfer across these causal generators, then the lessons are lik ely about cache policy itself rather than only about one implementation of Self-Forcing. Finally , ev aluation itself is a nontrivial challenge for video generation. VBench provides a multi- dimensional realism benchmark and has become a practical tool for separating perceptual quality from other axes of model beha vior [Huang et al., 2023]. In this project, we complement VBench with BF16-referenced SSIM, LPIPS, and PSNR, and with prefix-based drift curv es. This dual-axis view is important because some methods remain visually plausible while structurally di ver ging from the BF16 baseline. 3 Method Families and Benchmark Harness 3.1 Problem Setting W e study KV -cache compression for a Self-Forcing video generator b uilt on top of Wan2.1 -style causal video generation. Each rollout generates 165 frames at 480 × 832 and 16 fps, corresponding to approximately 10.3 seconds of video. At this horizon, the cache is already large enough that memory-management choices materially affect feasibility in single-GPU settings. W e therefore treat KV compression as a multi-objecti ve optimization problem o ver memory , runtime, perceptual realism, structural fidelity , and temporal stability . 3.2 Benchmarks W e ev aluate two complementary settings. M O V I E G E N is a single-shot prompt benchmark deri ved from a MovieGen-style prompt suite and is designed to re veal systems beha vior and prompt-level output quality in isolated 10-second samples. S T O RY E V A L uses 10 narrative-style prompts and ev aluates whether a method remains stable ov er a longer causal rollout, summarized through a drift curve o ver prefix duration and a drift-last imaging-quality score. T ogether , these benchmarks separate immediate per-prompt quality from long-horizon stability under self-conditioning. 3.3 Method Families Across the full study we ev aluate 33 methods. T able 1 enumerates the full in ventory and groups the variants by design idea. BF16 is the reference operating point. R TN and KIVI are standard low-bit baselines. QuaRot, PRQ, and QA Q aim to preserve fidelity more carefully . Age-Tier and TPTQ introduce recency-a ware temporal heuristics. The FlowCache-inspired methods mov e beyond plain quantization and ask which cache chunks should be retained, compressed, summarized, or reused. Spatial mixed-precision v ariants test whether foreground/background partitioning is a useful inductiv e bias for autoregressi ve rollout. Appendix A provides code-lev el implementation notes for ev ery ev aluated method. 3.4 Evaluation Axes Every method is ev aluated along six axes. Peak VRAM is the maximum allocated GPU memory observed in generation logs. Runtime is the end-to-end wall-clock generation time per prompt. Compression ratio is the BF16-equiv alent KV footprint di vided by the compressed KV footprint. Perceptual realism is primarily measured through VBench imaging quality [Huang et al., 2023]. Structural fidelity is measured against BF16 references using SSIM, LPIPS, and PSNR from e xact video comparisons. T emporal stability is summarized by drift-last imaging quality , the final point on the prefix-quality curve. This metric design is deliberate and follows a dual-axis vie w of quality . VBench answers whether a video still looks plausible as a video. SSIM, LPIPS, and PSNR answer whether it stayed f aithful to the BF16 reference without hallucinating away scene structure. Prefix drift answers whether the method 3 Family Exact methods in this study What changes across variants Provenance / role BF16 BF16 Uncompressed reference cache stored in native BF16 precision; all BF16-relati ve fidelity deltas are measured against this baseline. Native model baseline. R TN RTN_INT2 , RTN_INT4 , RTN_K2_V4 , RTN_INT4_RECENT2 , RTN_INT4_REFRESH INT2 and INT4 set uniform 2- or 4-bit block quantization; K2_V4 uses 2-bit keys and 4-bit values; Recent2 preserv es a recent BF16 context window; Refresh periodically re-quantizes the cache to limit drift. Standard low-bit baseline; custom video-stack integration. KIVI KIVI_INT2 , KIVI_INT4 , KIVI_K2_V4 , KIVI_INT4_REFRESH Uses asymmetric channel-wise key coding and token-wise v alue coding; the variants mirror R TN bit-widths, asymmetric K/V precision, and refresh cadence. KIVI baseline [Liu et al., 2024]. QuaRot QUAROT_KV_INT2 , QUAROT_KV_INT4 , QUAROT_KV_INT4_ RECENT2 , QUAROT_KV_INT4_ REFRESH Adds Hadamard rotation before R TN-style coding to suppress outliers; the recent-window and refresh variants test the same heuristics after rotation. QuaRot baseline [Ashkboos et al., 2024]. Project high-fidelity quantizers PRQ_INT2 , PRQ_INT4 , QAQ_INT2 , QAQ_INT4 PRQ uses two-stage progressive residual coding; QA Q stores large outliers separately from the clipped low-bit bulk; INT2 and INT4 set the base storage budget. Novel methods in this project. T emporal heuristics AGE_TIER_INT2 , AGE_TIER_INT4 , TPTQ_INT2 Age-Tier keeps recent tokens at higher precision while compressing older history more aggressively; TPTQ adds a PRQ-coded older zone plus e xplicit outlier preservation. Novel methods in this project. FlowCache-inspired adaptations FLOWCACHE_ HYBRID_INT2 , FLOWCACHE_ ADAPTIVE_INT2 , FLOWCACHE_ PRUNE_INT2 , FLOWCACHE_ PRUNE_INT4 , FLOWCACHE_SOFT_ PRUNE_INT2 , FLOWCACHE_SOFT_ PRUNE_INT4 , FLOWCACHE_NATIVE , FLOWCACHE_ NATIVE_SOFT_ PRUNE_INT4 Hybrid uses chunk-age and layer-aware b udgets; Adaptive adds drift-aware allocation; Prune hard-evicts lo w-importance chunks; Soft-Prune replaces evicted chunks with summaries; Nativ e reuses internal features; Native-Soft-Prune combines reuse with soft-pruned INT4 caching. In-house FlowCache-style adaptation [Ma et al., 2026]; not a direct upstream reproduction on Wan2.1 . Spatial mixed precision SPATIAL_MIXED_FG_ RTN_INT4_BG_ RTN_INT2 , SPATIAL_MIXED_FG_ RTN_INT4_BG_ RTN_INT4 , SPATIAL_MIXED_FG_ KIVI_INT4_BG_ KIVI_INT2 , SPATIAL_MIXED_FG_ QUAROT_KV_INT4_BG_ RTN_INT2 Uses a motion-derived spatial mask so fore ground tokens receive a higher-fidelity encoder and background tokens a cheaper one; the four variants test R TN-only , KIVI-only, and QuaRot-foreground mixes. Novel methods in this project. T able 1: Complete method inv entory for the 33-method study . The table lists every e valuated v ariant and clarifies what changes within each family . Appendix A provides code-le vel implementation notes for each method. remains stable as self-generated context accumulates. In other words, the benchmark is a multi-axis gauntlet ov er memory , runtime, compression, realism, fidelity , and drift rather than a single-score leaderboard. W e report PSNR for completeness, but because BF16 is compared against itself, BF16 PSNR is infinite by definition and some compressed methods also inherit non-finite aggre gates if a subset of frames match e xactly . For that reason, our decision analysis primarily emphasizes SSIM and LPIPS rather than PSNR alone. 4 Experimental Setup All methods are run through a common harness. V ideo generation is launched from a single generation entrypoint that attaches an optional quantizer at the causal KV -cache boundary , logs runtime and peak VRAM, writes out prompt-level videos, and records per-prompt traces. Fidelity is then computed against BF16 reference videos by exact frame alignment with PSNR, SSIM, and LPIPS. VBench ev aluation is run separately . For S T O RY E V A L , a dedicated script truncates generated videos to a sequence of prefixes and re-runs VBench imaging quality o ver each prefix to obtain a drift curve. The final combined comparison dataset contains 610 prompt-le vel rows and aggregates to 63 benchmark-lev el method summaries. Each summary contains method metadata, systems metrics, VBench realism scores, BF16-referenced fidelity , and drift statistics. All paper tables and figures are generated directly from that dataset or from the original trace logs. This is the same data model used by the accompanying Streamlit dashboard, so the manuscript, plots, and interacti ve presentation view 4 Benchmark Method Comp. Peak VRAM Runtime Img. SSIM Drift Moviegen BF16 1.00 19.28 58.6 0.739 1.000 0.739 Moviegen FlowCache Soft-Prune INT4 5.49 11.71 75.0 0.739 0.544 0.738 Moviegen FlowCache Prune INT4 5.50 11.71 72.2 0.727 0.457 0.726 Moviegen PRQ INT4 1.60 20.69 160.0 0.739 0.824 0.739 Moviegen QuaRot KV INT4 3.20 19.98 236.6 0.738 0.724 0.738 Moviegen R TN INT4 Recent2 2.43 21.37 68.9 0.736 0.732 0.735 Moviegen R TN INT4 Refresh 3.20 22.64 65.0 0.736 0.693 0.735 Moviegen Spatial Mixed (QuaRot fg, R TN bg) 3.46 14.38 224.8 0.399 0.433 0.394 Storyev al BF16 1.00 19.28 56.8 0.693 1.000 0.695 Storyev al FlowCache Soft-Prune INT4 5.42 11.76 75.2 0.680 0.518 0.679 Storyev al FlowCache Prune INT4 5.43 11.75 72.4 0.682 0.465 0.680 Storyev al PRQ INT4 1.60 20.69 158.0 0.699 0.724 0.699 Storyev al QuaRot KV INT4 3.20 19.98 239.6 0.687 0.685 0.689 Storyev al R TN INT4 Recent2 2.43 21.37 68.6 0.680 0.721 0.684 Storyev al R TN INT4 Refresh 3.20 22.64 64.6 0.678 0.654 0.679 Storyev al Spatial Mixed (QuaRot fg, R TN bg) 3.46 14.38 224.1 0.400 0.444 0.398 T able 2: Representativ e operating points across both benchmarks. FLOWCACHE_SOFT_PRUNE_INT4 is the strongest practical deployment choice, whereas PRQ_INT4 and QUAROT_KV_INT4 define a high-fidelity but systems-e xpensiv e region. all deriv e from a single source of truth. Appendix B collects the additional repository-aligned figures, and Appendix C includes full benchmark tables for both benchmarks. 5 Results 5.1 Global T rade-offs Across 33 Methods T able 2 summarizes the main archetypal methods. Figures 1 and 2 show the full trade-of f landscape for all methods on both benchmarks. Sev eral patterns appear immediately . First, naiv e compression is not enough. RTN_INT4 reduces nominal KV size by 3 . 20 × on both benchmarks, yet peak VRAM remains essentially unchanged relative to BF16: 19.98 GB versus 19.28 GB on both M OV I E G E N and S T O RY E V A L . It also degrades structural fidelity substantially , reaching SSIM 0.688 on M OV I E G E N and 0.661 on S T O RY E V A L . This is the clearest evidence that nominal low-bit coding alone does not solve the deployment problem for self-forcing video generation. Second, the strongest high-fidelity methods are not automatically the best systems choices. PRQ_INT4 is one of the closest compressed methods to BF16 on structural fidelity: on M OV I E G E N it reaches SSIM 0.824 and LPIPS 0.082, and on S T O RY E V A L it reaches SSIM 0.724 with the highest drift-last quality among non-BF16 methods. But it is systems-negati ve, taking about 160 s per prompt and peaking at 20.69 GB. QUAROT_KV_INT4 occupies a similar region: better quality than plain R TN, b ut 236–240 s runtime and peak VRAM still near 20 GB. Third, the strongest practical memory-quality operating point is a FlowCache-inspired soft-prune INT4 adaptation. On M OV I E G E N , FLOWCACHE_SOFT_PRUNE_INT4 reaches 5 . 49 × compression, 11.71 GB peak VRAM, 75.0 s runtime, and imaging quality 0.739, ef fectiv ely matching BF16 on perceptual realism while preserving drift-last quality at 0.738. On S T O RY E V A L , it reaches 5 . 42 × compression, 11.76 GB peak VRAM, and drift-last quality 0.679. This is the best practical single-GPU point in the current stack. The most important cav eat is that this practical winner is not the strongest BF16-faithfulness winner . Its VBench realism remains near BF16, but its SSIM and LPIPS are noticeably worse than PRQ or QuaRot. This “FlowCache paradox” is scientifically useful rather than embarrassing: it shows that perceptual plausibility and e xact structural fidelity can separate sharply in long-horizon video generation. 5.2 Par eto and Frontier Analysis A single scalar ranking obscures too much structure, so we also analyze four frontiers: balanced practical, quality-preserving compression, systems efficienc y , and quality-first. Figures 3 and 4 sho w these frontiers for all methods on both benchmarks. The frontier picture is stable across benchmarks. 5 Figure 1: Global systems-quality landscape on M O V I E G E N . FlowCache-inspired prune/soft-prune methods dominate the practical lo w-VRAM region, PRQ and QuaRot occupy the high-fidelity region, and spatially mixed methods collapse despite plausible moti vation. Compression-oriented frontiers consistently include the INT4 Flo wCache-inspired prune and soft- prune v ariants, while quality-oriented frontiers include BF16, PRQ, QuaRot, and recency-aware R TN variants. Systems-ef ficiency frontiers are much smaller , reflecting the fact that only a few methods truly improv e memory without paying catastrophic runtime or quality cost. The cross-benchmark agreement is unusually strong. When we correlate benchmark-le vel summaries across common methods, compression ratio correlates at r = 0 . 9999 , runtime at r = 0 . 9996 , peak VRAM at r = 0 . 99999 , imaging quality at r = 0 . 9318 , and drift-last quality at r = 0 . 9374 . This matters because it suggests that the high-level conclusions are not an artifact of one prompt suite. In particular , the practical recommendation does not flip between M OV I E G E N and S T O RY E V A L : FlowCache-inspired soft pruning remains the best operational compromise, while PRQ and QuaRot remain quality-first reference points. 6 Figure 2: Global systems-quality landscape on S T O RY E V A L . The qualitativ e structure mirrors M OV I E G E N : the practical winner remains in the Flo wCache-inspired soft-prune region, while the highest-fidelity compressed methods sit at much higher runtime or peak-memory cost. Figure 3: Pareto/frontier analysis on M OV I E G E N . Methods that surviv e the quality-preserving compression frontier are not the same methods that surviv e the systems-efficienc y frontier, which is why deployment winners and research-quality winners di ver ge. 7 Figure 4: Pareto/frontier analysis on S T O RY E V A L . The same separation persists under narrative-style rollout stability , reinforcing that the design-space conclusions are not artif acts of one benchmark. 8 Figure 5: Curated six-method qualitati ve comparisons for two M OV I E G E N examples and one S T O RY E V A L example. 5.3 Qualitative Behavior Figure 5 shows the three prompt f amilies we used most in the li ve presentation: a candle/flame prompt and a coral reef / fish prompt from M OV I E G E N , and a bear-in-water prompt from S T O RY E V A L . These panels help explain why realism and fidelity must be separated. The FlowCache-inspired soft-prune INT4 method usually remains visually plausible and often aesthetically strong, but its videos can de viate structurally from BF16. QuaRot and R TN_RECENT2 often preserv e object layout and temporal identity better , albeit without improving realized peak VRAM. The spatially mixed failure case sho ws the opposite extreme: a method can be explicitly designed to preserve “important” regions and still break scene coherence under autore gressiv e rollout. 5.4 Why Does Peak VRAM Sometimes Increase Despite Compression? One of the most surprising results in the study is that some quantized methods compress the KV cache substantially and still exceed BF16 peak VRAM. The three most important examples are QUAROT_KV_INT4 , RTN_INT4_RECENT2 , and RTN_INT4_REFRESH . Their benchmark-le vel summary numbers already sho w the anomaly . On M OV I E G E N , BF16 peaks at 19.28 GB. Y et QUAROT_KV_INT4 peaks at 19.98 GB, RTN_INT4_RECENT2 at 21.37 GB, and RTN_INT4_REFRESH at 22.64 GB. 9 Figure 6: Representative per-prompt traces from a vailable 10-second runs. Left: allocated VRAM ov er normalized generation time for BF16 and selected quantized methods. Right: BF16-equiv alent versus compressed KV footprint for the R TN policy variants. These traces show that some methods genuinely compress the cache but still incur higher measured peaks because the current integration reconstructs or updates large BF16 b uffers transiently . (a) Peak VRAM versus compression. (b) T emporal drift comparison. Figure 7: Complementary summary views of systems and long-horizon behavior across methods. Left: peak VRAM versus realized compression highlights which methods con vert KV sa vings into actual memory relief. Right: temporal drift comparison highlights which methods preserve rollout stability as context accumulates. Our explanation combines implementation inspection with trace e vidence. The current integration stores compressed state, but se veral methods still reconstruct dense BF16 tensors during attention reads or maintain BF16 regions intentionally . QuaRot adds another layer of overhead because rotated lo w-bit v alues must be mapped back through in v erse Hadamard-style transforms before attention. The recency-protected R TN variant keeps a recent BF16 tail liv e while reconstructing the older quantized prefix. The refresh variant is e ven more e xpensiv e because it periodically holds and re writes large BF16 states during refresh. These are transient systems effects, not proof that compression failed mathematically; Appendix A summarizes the implementation choices behind these behaviors. The traces in Figure 6 make this concrete. In a representativ e M OV I E G E N prompt, RTN_INT4_REFRESH reaches an allocated peak of 21.41 GB ev en though the active KV cache at that moment corresponds to 11.25 GB in BF16-equiv alent form and only 3.51 GB in compressed form. RTN_INT4_RECENT2 shows the same pattern: 20.47 GB allocated at peak with 11.25 GB BF16-equiv alent KV and 4.62 GB compressed KV . The compression is real, but the observ ed maxi- mum is dominated by reconstruction and update phases rather than by the steady-state compressed footprint. This is precisely why these methods still matter scientifically ev en when they are not the best deployment choice. Figure 7 provides a compact cross-method summary of the two most deployment-rele vant global views: memory relief as a function of realized compression, and terminal temporal drift across methods. 10 6 Discussion The study suggests a clear way to interpret the 33-method landscape. There are deployment winners and research winners, and they are not always the same methods. The deployment winner is the Flo wCache-inspired soft-prune INT4 re gion because it is the only re gion that simultaneously achie ves large realized compression, materially lower peak VRAM, and acceptable runtime. The research winners are methods such as PRQ_INT4 , QUAROT_KV_INT4 , and RTN_INT4_RECENT2 , which identify algorithmic ideas that preserve quality better but hav e not yet been turned into the most efficient memory integration. The negati ve results are equally important. Spatial mixed precision looked attractive because it encoded a plausible vision prior: preserve foreground objects more carefully than background content. Y et in autoregressiv e rollout that prior appears misaligned with the actual causal dependencies of the model, and the method collapses badly on both realism and drift. This is a useful warning against ov er-trusting intuition about what the model should care about. More broadly , the paper reinforces a methodological point. F or long-horizon video generation, reporting only one metric is misleading. Compression ratio without peak VRAM can hide transient- memory failures. VBench realism without BF16-reference fidelity can hide structural hallucination. Fidelity without runtime can recommend impractical methods. A useful empirical study therefore has to treat memory , speed, realism, fidelity , and drift as a joint analysis problem rather than a single-score leaderboard. 7 Future W ork The first next step is systems-oriented and follows directly from the peak-memory anomalies in Section 4. The current stack often stores quantized KV state but still reconstructs or preserves dense BF16 buf fers during attention, refresh, or recency-protected reads. The cleanest immediate follo w-up is therefore to redesign the attention path so compressed KV tensors can be consumed with f ar less transient BF16 materialization. This is the systems experiment most likely to tell us whether the quality-preserving ideas identified here, especially recency protection and outlier-a ware quantization, can become practical deployment winners rather than only research winners. The second next step is to reproduce ne wer KV -aware long-video systems on the same benchmark harness. The most relev ant current target is Quant V ideoGen, which introduces both QVG and the stronger QVG-Pro operating mode for autore gressive video generation [Xi et al., 2026]. As of March 17, 2026, we did not identify an of ficial public code release linked from the arXiv record, so reproduction on our stack remains an open engineering task rather than a simple re-run. That is precisely why it is v aluable: it would let us test whether the reported quality-memory gains survi ve on the same Self-Forcing Wan2.1 path, hardware profile, and measurement pipeline used throughout this paper . The third next step is longer -horizon ev aluation on stronger compute. Our current clips are already long enough to expose memory pressure, b ut they are still short-horizon proxies for the more se vere consistency failures that appear ov er 20 seconds or more. Pushing the same study to substantially longer rollouts would let us measure temporal drift, identity breakdo wn, and scene inconsistency directly rather than infer them from 10-second behavior and prefix-quality curv es. The fourth next step is benchmarking breadth beyond one causal generator . CausV id and HiAR illus- trate that long-horizon video generation can be improved by better causal distillation or hierarchical denoising rather than only by cache compression [Y in et al., 2025, Zou et al., 2026]. Evaluating the same 33-method design space, or a distilled subset of it, on those alternati ve stacks would help determine which conclusions are fundamental to KV -cache policy and which ones are specific to Self-Forcing Wan2.1 . The fifth next step is to mo ve be yond pure text-to-video benchmarks and into first-frame-grounded and embodied settings. These settings put much sharper pressure on identity retention, layout persistence, and action consistency ov er long horizons. If a method drifts there, the failure is much easier to observe and much more consequential, making them a stronger do wnstream testbed for the cache-compression ideas studied here. 11 8 Conclusion W e presented a comprehensiv e empirical study of KV -cache quantization for self-forcing video generation. The study spans 33 methods, two benchmarks, 610 prompt-lev el observations, and a unified analysis stack that produces both static figures and an interactiv e dashboard. The headline conclusion is deliberately nuanced. FlowCache-inspired soft pruning is the strongest practical operating point in the current stack because it deliv ers real peak-VRAM relief and strong perceptual quality . PRQ, QuaRot, and recency-aw are R TN variants remain highly v aluable because they identify quality-preserving directions that a better systems integration could make practical. And some plausible ideas, especially spatial mixed precision, fail clearly enough to narrow the design space. For long-horizon video generation, that full map is more useful than a single winner . 12 References Saleh Ashkboos, Amirkei van Mohtashami, Maximilian L. Croci, Bo Li, Pashmina Cameron, Martin Jaggi, Dan Alistarh, T orsten Hoefler , and James Hensman. Quarot: Outlier-free 4-bit inference in rotated llms. arXiv preprint , 2024. URL 00456 . Xun Huang, Zhengqi Li, Guande He, Mingyuan Zhou, and Eli Shechtman. Self forcing: Bridging the train-test gap in autoregressi ve video dif fusion. arXiv pr eprint arXiv:2506.08009 , 2025. URL https://arxiv.org/abs/2506.08009 . Ziqi Huang, Y inan He, Jiashuo Y u, F an Zhang, Chenyang Si, Y uming Jiang, Y uanhan Zhang, Tianxing W u, Qingyang Jin, Nattapol Chanpaisit, Y aohui W ang, Xinyuan Chen, Limin W ang, Dahua Lin, Y u Qiao, and Ziwei Liu. Vbench: Comprehensiv e benchmark suite for video generati ve models. arXiv pr eprint arXiv:2311.17982 , 2023. URL . W oosuk Kwon, Zhuohan Li, Siyuan Zhuang, Y ing Sheng, Lianmin Zheng, Cody Hao Y u, Joseph E. Gonzalez, Hao Zhang, and Ion Stoica. Efficient memory management for lar ge language model serving with pagedattention. arXiv pr eprint arXiv:2309.06180 , 2023. URL https://arxiv. org/abs/2309.06180 . Zirui Liu, Jiayi Y uan, Hongye Jin, Shaochen Zhong, Zhaozhuo Xu, Vladimir Braverman, Beidi Chen, and Xia Hu. Kivi: A tuning-free asymmetric 2bit quantization for kv cache. arXiv preprint arXiv:2402.02750 , 2024. URL . Y uexiao Ma, Xuzhe Zheng, Jing Xu, Xiwei Xu, Feng Ling, Xiawu Zheng, Huafeng Kuang, Huixia Li, Xing W ang, Xuefeng Xiao, Fei Chao, and Rongrong Ji. Flow caching for autoregressi ve video generation. arXiv preprint , 2026. URL 2602.10825 . Adam Polyak, Amit Zohar , Andre w Brown, Andros Tjandra, Animesh Sinha, Ann Lee, et al. Movie gen: A cast of media foundation models. arXiv pr eprint arXiv:2410.13720 , 2024. URL https://arxiv.org/abs/2410.13720 . T eam W an, Ang W ang, Baole Ai, Bin W en, Chaojie Mao, Chen-W ei Xie, et al. W an: Open and advanced large-scale video generative models. arXiv pr eprint arXiv:2503.20314 , 2025. URL https://arxiv.org/abs/2503.20314 . Haocheng Xi, Shuo Y ang, Y ilong Zhao, Muyang Li, Han Cai, Xingyang Li, Y ujun Lin, Zhuoyang Zhang, Jintao Zhang, Xiuyu Li, Zhiying Xu, Jun W u, Chenfeng Xu, Ion Stoica, Song Han, and K urt Keutzer . Quant videogen: Auto-regressi ve long video generation via 2-bit kv-cache quantization. arXiv pr eprint arXiv:2602.02958 , 2026. URL . T ianwei Y in, Richard Zhang, Qiang Zhang, William T . Freeman, Frédo Durand, Eli Shechtman, and Xun Huang. From slow bidirectional to fast autore gressiv e video diffusion models. arXiv pr eprint arXiv:2412.07772 , 2025. URL . Kai Zou, Dian Zheng, Hongbo Liu, Tiankai Hang, Bin Liu, and Nenghai Y u. Hiar: Ef ficient autoregressi ve long video generation via hierarchical denoising. arXiv pr eprint arXiv:2603.08703 , 2026. URL . 13 A Method-by-Method Implementation Notes T able 3 summarizes the code-le vel implementation choices behind e very e valuated method. These notes are deriv ed from the quantizer classes, the generation entrypoint, and the cache-update path in the Self-Forcing inte gration. They are intended to make the experimental design auditable from the paper itself. The full codebase, benchmark harness, analysis scripts, and dashboard are available at https: //github.com/suraj- ranganath/kv- quant- longhorizon/ . T able 3: Code-lev el implementation notes for all 33 e valuated methods. Shared defaults across the harness include blockwise KV quantization at the causal cache boundary with block size 16 when a quantizer is activ e. Method Family Implementation detail Runtime / code path BF16 BF16 No quantizer object; the causal KV cache is stored and read back in native BF16 throughout generation. No quantized cache-policy hook; acts as the uncompressed reference path. Code: 01_generate.py; causal_model.py R TN_INT2 R TN R TNQuantizer with symmetric blockwise quantization of both K and V , block_size=16, 2-bit packed storage plus per-block scales. Quantize-on-write every step; dequantize the active cache back to dense BF16 before attention. Code: rtn.p y; 01_generate.py; causal_model.py R TN_INT4 R TN R TNQuantizer with symmetric blockwise quantization of both K and V , block_size=16, 4-bit packed storage plus per-block scales. Quantize-on-write every step; dequantize the active cache back to dense BF16 before attention. Code: rtn.p y; 01_generate.py; causal_model.py R TN_K2_V4 R TN R TNQuantizer mixed-precision variant with 2-bit ke ys and 4-bit values on the same symmetric blockwise path. Same per-step quantize/dequantize path as standard R TN. Code: rtn.py; 01_generate.py R TN_INT4 RECENT2 R TN R TN INT4 quantizer for the old prefix; the newest 2 frame-blocks are carved out and stored separately as BF16 recent_k/recent_v state. Per-step quantize-on-write with recent_blocks=2; on read, the quantized prefix is dequantized and the BF16 recent tail is copied back in. Code: rtn.p y; 01_generate.py; causal_model.py R TN_INT4 REFRESH R TN R TN INT4 quantizer, b ut used only during the clean-context refresh pass rather than every denoising step. quantize_cadence=refresh_only; denoising keeps liv e BF16 cache state, then the timestep-zero context rerun writes quantized cache state. Code: rtn.p y; 01_generate.py; causal_inference.py KIVI_INT2 KIVI KIVIQuantizer with asymmetric quantization: keys use per-channel scales/zero-points over block tokens, v alues use per-token scales/zero-points, both at 2 bits. Per-step quantize-on-write with dense BF16 reconstruction before attention. Code: kivi.py; 01_generate.p y; causal_model.py KIVI_INT4 KIVI KIVIQuantizer with asymmetric quantization: keys use per-channel scales/zero-points over block tokens, v alues use per-token scales/zero-points, both at 4 bits. Per-step quantize-on-write with dense BF16 reconstruction before attention. Code: kivi.py; 01_generate.p y; causal_model.py KIVI_K2_V4 KIVI KIVIQuantizer mixed-precision variant with 2-bit k ey quantization and 4-bit value quantization on the same asymmetric path. Per-step quantize-on-write with asymmetric dequantization before attention. Code: kivi.py; 01_generate.p y KIVI_INT4 REFRESH KIVI KIVI INT4 quantizer invok ed only during refresh, keeping the asymmetric K/V quantizer but changing the cache cadence. refresh_only cadence during denoising, followed by clean-context re-quantization. Code: kivi.py; 01_generate.py; causal_inference.py QU ARO T_KV_INT2 QU ARO T QuaRotKVQuantizer applies a Hadamard transform on the channel axis, uses R TN-style symmetric block quantization in rotated space, then inv erse-rotates on read at 2 bits. Per-step quantize-on-write with dense BF16 reconstruction plus inv erse Hadamard rotation before attention. Code: quarot_kv .py; 01_generate.py; causal_model.py QU ARO T_KV_INT4 QU ARO T QuaRotKVQuantizer applies a Hadamard transform on the channel axis, uses R TN-style symmetric block quantization in rotated space, then inv erse-rotates on read at 4 bits. Per-step quantize-on-write with dense BF16 reconstruction plus inv erse Hadamard rotation before attention. Code: quarot_kv .py; 01_generate.py; causal_model.py QU ARO T_KV_INT4 RECENT2 QU ARO T QuaRot INT4 on the old prefix plus a BF16 recent tail that skips rotation/quantization for the newest 2 frame-blocks. Per-step quantize-on-write with recent_blocks=2; rotated old context is dequantized, inv erse-rotated, and then concatenated with the BF16 tail. Code: quarot_kv .py; 01_generate.py; causal_model.py QU ARO T_KV_INT4 REFRESH QU ARO T QuaRot INT4 quantizer combined with refresh-only cache cadence rather than per-step writes. BF16 cache persists during denoising; a clean-context refresh pass performs rotated quantization and cache write-back. Code: quarot_kv .py; 01_generate.py; causal_inference.py PRQ_INT2 PRQ PRQQuantizer uses two-stage symmetric residual coding: stage 1 quantizes the original tensor, stage 2 quantizes the reconstruction residual in 64-block chunks, with base storage at 2 bits. Per-step quantize-on-write; read path reconstructs both stages and sums them before attention. Code: prq.p y; 01_generate.py PRQ_INT4 PRQ PRQQuantizer uses two-stage symmetric residual coding: stage 1 quantizes the original tensor, stage 2 quantizes the reconstruction residual in 64-block chunks, with base storage at 4 bits. Per-step quantize-on-write; read path reconstructs both stages and sums them before attention. Code: prq.p y; 01_generate.py 14 Method Family Implementation detail Runtime / code path QA Q_INT2 QAQ QA QQuantizer clips each block to an outlier threshold, quantizes the clipped bulk asymmetrically at 2 bits, and stores explicit indices/values for outliers in higher precision. Per-step quantize-on-write; dequantization restores the bulk and then writes preserved outliers back into the tensor . Code: qaq.py; 01_generate.py QA Q_INT4 QAQ QA QQuantizer clips each block to an outlier threshold, quantizes the clipped bulk asymmetrically at 4 bits, and stores explicit indices/values for outliers in higher precision. Per-step quantize-on-write; dequantization restores the bulk and then writes preserved outliers back into the tensor . Code: qaq.py; 01_generate.py AGE_TIER_INT2 AGE_TIER AgeTierQuantizer splits the sequence by a trailing recent_ratio mask, applies a higher-bit recent quantizer to the recent slice, and applies an 2-bit old quantizer to the older slice. Per-step quantize-on-write; both slices are dequantized separately and stitched back together before attention. Code: age_tier .py; 01_generate.py AGE_TIER_INT4 AGE_TIER AgeTierQuantizer splits the sequence by a trailing recent_ratio mask, applies a higher-bit recent quantizer to the recent slice, and applies an 4-bit old quantizer to the older slice. Per-step quantize-on-write; both slices are dequantized separately and stitched back together before attention. Code: age_tier .py; 01_generate.py TPTQ_INT2 TPTQ TPTQQuantizer keeps a recent slice with a higher-precision recent quantizer, compresses older tok ens with PRQQuantizer, and separately preserves the strongest old-key outliers up to outlier_max_ratio. Per-step quantize-on-write; the old PRQ reconstruction and stored outliers are merged on read. Code: tptq.p y; prq.py; 01_generate.py FLOWCA CHE_HYBRID INT2 FLOWCA CHE FlowCacheHybridQuantizer partitions the acti ve cache into frame-aligned chunks, keeps recent chunks at higher precision, compresses older chunks at low precision, and modulates the recent budget by layer role. Per-step quantize-on-write using chunk boundaries from frame_seq_length and layer budget scaling. Code: flo wcache_hybrid.py; 01_generate.py FLOWCA CHE_AD APTIVE INT2 FLOWCA CHE FlowCacheAdapti veQuantizer extends Hybrid by computing chunk summaries, scoring old chunks by relative-L1 delta and recency , and upgrading a small important-old subset to higher precision. Per-step quantize-on-write with adapti ve importance scoring at each layer . Code: flowcache_adapti ve.py; flowcache_hybrid.py; 01_generate.p y FLOWCA CHE_PR UNE INT2 FLOWCA CHE FlowCachePruneQuantizer keeps recent chunks and important old chunks, retains only a limited low-bit old subset at 2 bits, and treats the rest as pruned chunks reconstructed as zeros. Chunk plans are cached and refreshed only when frame-aligned chunk count changes; dequantization leaves pruned spans zero-filled. Code: flo wcache_prune.py; flowcache_adapti ve.py; 01_generate.py FLOWCA CHE_PR UNE INT4 FLOWCA CHE FlowCachePruneQuantizer keeps recent chunks and important old chunks, retains only a limited low-bit old subset at 4 bits, and treats the rest as pruned chunks reconstructed as zeros. Chunk plans are cached and refreshed only when frame-aligned chunk count changes; dequantization leaves pruned spans zero-filled. Code: flo wcache_prune.py; flowcache_adapti ve.py; 01_generate.py FLOWCA CHE_SOFT PRUNE_INT2 FLOWCA CHE FlowCacheSoftPruneQuantizer follo ws the prune plan but stores one pooled BF16 summary per pruned chunk and repeats that summary token across the evicted span during reconstruction, with old retained chunks at 2 bits. Same cached chunk-plan logic as hard prune; pruned spans are reconstructed from summary tokens instead of zeros. Code: flowcache_soft_prune.py; flowcache_prune.py; 01_generate.p y FLOWCA CHE_SOFT PRUNE_INT4 FLOWCA CHE FlowCacheSoftPruneQuantizer follo ws the prune plan but stores one pooled BF16 summary per pruned chunk and repeats that summary token across the evicted span during reconstruction, with old retained chunks at 4 bits. Same cached chunk-plan logic as hard prune; pruned spans are reconstructed from summary tokens instead of zeros. Code: flowcache_soft_prune.py; flowcache_prune.py; 01_generate.p y FLOWCA CHE_NA TIVE FLOWCA CHE No KV quantizer; instead, a FlowCacheReuseManager is attached to the pipeline and decides when internal features can be reused based on a relative-L1 drift threshold and optional w armup. Latency-oriented runtime reuse path rather than memory compression; representative runs use flowcache_nati ve_rel_l1_thresh metadata in the registry . Code: 01_generate.py::attach_flowcache_nati ve FLOWCA CHE_NA TIVE SOFT_PRUNE_INT4 FLOWCA CHE Combines FlowCacheReuseManager with a Flo wCacheSoftPrune INT4 quantizer so generation can skip recomputation on low-drift steps while still compressing retained cache chunks. Reuse-manager gating plus the soft-prune chunk planner; this is the only method that composes latency reuse with cache compression in one path. Code: 01_generate.py::attach_flowcache_nati ve; flowcache_soft_prune.py SP A TIAL_MIXED FG_R TN_INT4 BG_R TN_INT2 SP A TIAL_MIXED SpatialMixedQuantizer builds a fore ground/background token mask from temporal variance and routes foreground tok ens to RTN_INT4 while routing background tokens to R TN_INT2. Per-step quantize-on-write; mask_policy and foreground-ratio bounds decide whether the mask is threshold-based, top-k, or hybrid. Code: spatial_mixed.py; 01_generate.py SP A TIAL_MIXED FG_R TN_INT4 BG_R TN_INT4 SP A TIAL_MIXED SpatialMixedQuantizer builds a fore ground/background token mask from temporal variance and routes foreground tok ens to RTN_INT4 while routing background tokens to R TN_INT4. Per-step quantize-on-write; mask_policy and foreground-ratio bounds decide whether the mask is threshold-based, top-k, or hybrid. Code: spatial_mixed.py; 01_generate.py SP A TIAL_MIXED FG_KIVI_INT4 BG_KIVI_INT2 SP A TIAL_MIXED SpatialMixedQuantizer builds a fore ground/background token mask from temporal variance and routes foreground tokens to KIVI_INT4 while routing background tokens to KIVI_INT2. Per-step quantize-on-write; mask_policy and foreground-ratio bounds decide whether the mask is threshold-based, top-k, or hybrid. Code: spatial_mixed.py; 01_generate.py 15 Method Family Implementation detail Runtime / code path SP A TIAL_MIXED FG_QU ARO T_KV_INT4 BG_R TN_INT2 SP A TIAL_MIXED SpatialMixedQuantizer builds a fore ground/background token mask from temporal variance and routes foreground tok ens to QU ARO T_KV_INT4 while routing background tokens to R TN_INT2. Per-step quantize-on-write; mask_policy and foreground-ratio bounds decide whether the mask is threshold-based, top-k, or hybrid. Code: spatial_mixed.py; 01_generate.py 16 B Additional Repository Figures Figure 8: MovieGen generation-quality summary from the repository assets. This plot complements the main-paper realism/fidelity discussion by showing the same benchmark slice in a compact static view . 17 Figure 9: MovieGen memory-footprint summary from the repository assets. This view complements the main-paper peak-VRAM and compression analysis with the dashboard-style static summary used in presentation material. 18 Figure 10: MovieGen performance summary from the repository assets. W e include it separately so runtime and deployment-facing beha vior remain legible in print. 19 C Full Benchmark T ables Appendix tables use compact human-readable method labels for readability . The exact raw method identifiers are preserved in the repository datasets and dashboard exports. T able 4: Moviegen benchmark summary for all methods. BF16 is the reference baseline ro w . Img. denotes imaging quality , and Drift denotes drift-last imaging quality . Method Comp. Peak VRAM Runtime Img. SSIM LPIPS PSNR Drift FlowCache Prune INT2 7.78 11.11 69.9 0.637 0.467 0.483 15.26 0.633 FlowCache Soft-Prune INT2 6.82 11.71 76.1 0.662 0.482 0.440 15.84 0.658 FlowCache Prune INT4 5.50 11.71 72.2 0.727 0.457 0.412 15.30 0.726 FlowCache Soft-Prune INT4 5.49 11.71 75.0 0.739 0.544 0.297 17.67 0.738 FlowCache Nati ve Soft-Prune INT4 5.49 11.74 63.6 0.726 0.411 0.475 13.26 0.724 R TN INT2 5.33 19.98 87.1 0.567 0.451 0.475 15.04 0.562 QuaRot KV INT2 5.33 19.98 242.0 0.601 0.440 0.467 14.73 0.597 KIVI INT2 5.31 19.99 95.5 0.621 0.241 0.671 11.42 0.618 QA Q INT2 5.18 14.42 109.8 0.620 0.365 0.530 13.34 0.618 FlowCache Hybrid INT2 4.61 14.38 82.6 0.616 0.471 0.454 15.62 0.612 Age-Tier INT2 4.41 14.38 105.3 0.578 0.457 0.470 15.18 0.573 FlowCache Adapti ve INT2 4.27 14.38 92.6 0.616 0.448 0.464 15.19 0.611 SpatialMixed fg R TN INT 4 / bg R TN INT 2 3.68 14.38 106.6 0.411 0.421 0.558 13.93 0.407 Spatial Mixed (QuaRot fg, R TN bg) 3.46 14.38 224.8 0.399 0.433 0.570 14.06 0.394 SpatialMixed fg KIVI INT 4 / bg KIVI INT 2 3.45 14.38 110.4 0.529 0.427 0.642 13.72 0.521 QuaRot KV INT4 3.20 19.98 236.6 0.738 0.724 0.148 22.64 0.738 R TN INT4 Refresh 3.20 22.64 65.0 0.736 0.693 0.178 21.45 0.735 R TN INT4 3.20 19.98 86.3 0.735 0.688 0.180 21.32 0.734 KIVI INT4 Refresh 3.19 22.63 68.1 0.714 0.420 0.509 13.73 0.712 KIVI INT4 3.19 19.99 92.7 0.681 0.405 0.571 13.07 0.678 Age-Tier INT4 3.18 14.38 103.9 0.735 0.688 0.180 21.32 0.734 SpatialMixed fg R TN INT 4 / bg R TN INT 4 3.18 14.38 105.5 0.693 0.577 0.310 18.89 0.690 QA Q INT4 3.14 14.42 110.0 0.589 0.262 0.647 11.97 0.586 TPTQ INT2 2.72 19.85 167.2 0.724 0.627 0.240 19.91 0.722 R TN INT4 Recent2 2.43 21.37 68.9 0.736 0.732 0.148 23.69 0.735 QuaRot KV INT4 Recent2 2.43 21.69 111.3 0.730 0.706 0.183 inf 0.729 PRQ INT2 2.00 20.69 156.6 0.739 0.800 0.094 25.13 0.740 PRQ INT4 1.60 20.69 160.0 0.739 0.824 0.082 26.54 0.739 BF16 1.00 19.28 58.6 0.739 1.000 0.000 inf 0.739 FlowCache Nati ve 1.00 19.31 48.3 0.738 0.412 0.451 13.25 0.737 QuaRot KV INT4 Refresh – 22.82 97.5 0.722 0.613 0.214 19.64 0.719 R TN K2/V4 – 22.68 75.3 0.531 0.434 0.495 14.74 0.524 KIVI K2/V4 – 22.67 76.3 0.623 0.374 0.578 13.03 0.619 T able 5: Storye val benchmark summary for all methods. BF16 is the reference baseline row . Img. denotes imaging quality , and Drift denotes drift-last imaging quality . Method Comp. Peak VRAM Runtime Img. SSIM LPIPS PSNR Drift FlowCache Prune INT2 7.68 11.14 70.2 0.516 0.492 0.506 14.26 0.516 FlowCache Soft-Prune INT2 6.72 11.76 74.4 0.532 0.497 0.495 14.18 0.536 FlowCache Prune INT4 5.43 11.75 72.4 0.682 0.465 0.490 inf 0.680 FlowCache Soft-Prune INT4 5.42 11.76 75.2 0.680 0.518 0.416 inf 0.679 FlowCache Nati ve Soft-Prune INT4 5.42 11.78 64.2 0.657 0.436 0.523 11.94 0.657 R TN INT2 5.33 19.98 86.1 0.464 0.459 0.526 13.57 0.471 QuaRot KV INT2 5.33 19.98 239.0 0.477 0.443 0.532 13.01 0.480 KIVI INT2 5.31 19.99 94.7 0.531 0.243 0.735 inf 0.527 QA Q INT2 5.19 14.42 109.9 0.579 0.324 0.635 11.79 0.585 FlowCache Hybrid INT2 4.59 14.38 82.2 0.492 0.479 0.512 13.95 0.494 Age-Tier INT2 4.41 14.38 101.9 0.469 0.463 0.523 13.67 0.473 FlowCache Adapti ve INT2 4.26 14.38 91.3 0.498 0.451 0.528 inf 0.496 SpatialMixed fg R TN INT 4 / bg R TN INT 2 3.69 14.38 106.6 0.421 0.430 0.587 12.64 0.419 Spatial Mixed (QuaRot fg, R TN bg) 3.46 14.38 224.1 0.400 0.444 0.599 12.97 0.398 SpatialMixed fg KIVI INT 4 / bg KIVI INT 2 3.45 14.38 110.2 0.454 0.453 0.654 13.20 0.451 QuaRot KV INT4 3.20 19.98 239.6 0.687 0.685 0.217 19.25 0.689 R TN INT4 3.20 19.98 88.8 0.674 0.661 0.245 18.66 0.675 R TN INT4 Refresh 3.20 22.64 64.6 0.678 0.654 0.252 18.55 0.679 KIVI INT4 3.19 19.99 93.0 0.635 0.424 0.575 12.41 0.635 KIVI INT4 Refresh 3.19 22.63 66.7 0.645 0.414 0.569 12.49 0.641 Age-Tier INT4 3.18 14.38 102.4 0.674 0.661 0.245 18.66 0.676 SpatialMixed fg R TN INT 4 / bg R TN INT 4 3.18 14.38 106.6 0.606 0.587 0.349 inf 0.607 QA Q INT4 3.15 14.42 109.9 0.567 0.238 0.719 11.03 0.571 TPTQ INT2 2.72 19.77 166.6 0.654 0.615 0.301 17.03 0.658 R TN INT4 Recent2 2.43 21.37 68.6 0.680 0.721 0.187 inf 0.684 QuaRot KV INT4 Recent2 2.43 21.69 112.9 0.666 0.707 0.222 inf 0.670 PRQ INT2 2.00 20.69 155.6 0.698 0.733 0.179 20.66 0.698 PRQ INT4 1.60 20.69 158.0 0.699 0.724 0.188 inf 0.699 20 Method Comp. Peak VRAM Runtime Img. SSIM LPIPS PSNR Drift BF16 1.00 19.28 56.8 0.693 1.000 0.000 inf 0.695 FlowCache Nati ve 1.00 19.31 49.0 0.681 0.451 0.508 11.95 0.682 21

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment