Revisiting the Replication Study Design Used in Computing Education Research

Replication studies play an important role in Computing Education Research (CER) by supporting the development of consistent and reliable scientific knowledge. However, prior research indicates that the CER community tends to prioritise novel contrib…

Authors: Rita Garcia, Ellie Lovellette, Xi Wu, Angela Zavaleta Bernuy

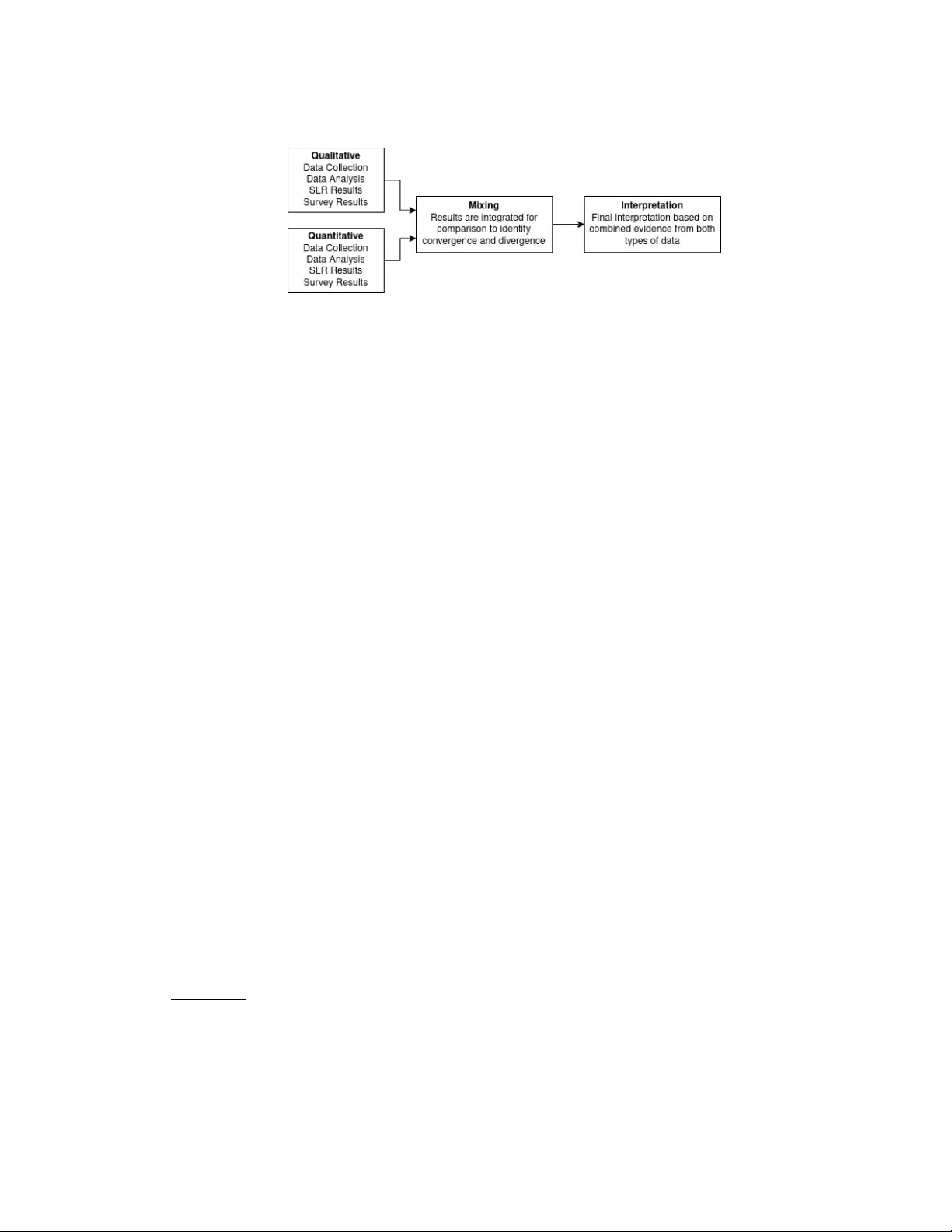

RITA GARCIA, Victoria University of Wellington, Wellington, New Zealand
ELLIE LOVELLETTE, College of Charleston, USA
XI WU, University of Sydney, Australia
ANGELA ZAVALETA BERNUY, McMaster University, Hamilton, ON, Canada

Background and Context: Replication studies play an important role in Computing Education Research (CER) by supporting the development of consistent and reliable scientific knowledge. However, prior research indicates that the CER community tends to prioritise novel contributions over replication. A 2019 Systematic Literature Review (SLR) identified only 54 (2.38%) replication studies among 2,269 papers published between 2009 and 2018 across five major CER venues. In response, the Computer Science Education journal released a special issue dedicated to replication studies to encourage greater adoption of this research design.

Objectives: This study aims to examine how the landscape of replication research in CER has evolved since 2019. Specifically, we investigate whether the prevalence of replication studies has increased and explore current perceptions and experiences of CER researchers regarding replication.

Method: We replicated two prior studies. First, we conducted an updated SLR to identify replication studies published between 2019 and 2025 in the same five CER venues. Second, we replicated a survey of Computing Education researchers to better understand their perceptions, experiences, and challenges related to conducting and publishing replication studies.

Findings: Our SLR identified 63 (2.50%) replication studies among 2,516 published papers. While the proportion of replication studies has increased slightly, overall growth remains limited. We observed a shift toward more published replication studies in journals and an increase in authors replicating their own prior work.
Survey results indicate that although many researchers engage in replication within their teaching and research practice, they encounter significant challenges when attempting to publish replication studies.

Implications: Despite increased discourse around open science and research rigour, the adoption of replication studies in CER has not substantially grown. Our findings offer opportunities for future research to promote replication in CER and to explore how the CER community can encourage researchers to publish replication studies.

CCS Concepts: • Social and professional topics → Computing education; • Applied computing → Education; • General and reference → Surveys and overviews.

Additional Key Words and Phrases: Replication, Computing Education Research, Educational Policy

ACM Reference Format: Rita Garcia, Ellie Lovellette, Xi Wu, and Angela Zavaleta Bernuy. 2026. Revisiting the Replication Study Design Used in Computing Education Research. In Proceedings of International Computing Education Research (ICER ’26). ACM, New York, NY, USA, 19 pages. https://doi.org/XXXXXXX.XXXXXXX

Authors’ Contact Information: Rita Garcia, rita.garcia@vuw.ac.nz, Victoria University of Wellington, Wellington, New Zealand; Ellie Lovellette, College of Charleston, South Carolina, USA, lovelletteeb@cofc.edu; Xi Wu, University of Sydney, Australia, xi.wu@sydney.edu.au; Angela Zavaleta Bernuy, zavaleta@mcmaster.ca, McMaster University, Hamilton, ON, Canada.
© 2026 Copyright held by the owner/author(s). Publication rights licensed to ACM. Manuscript submitted to ACM.

1 Introduction

A replication study is a type of research with goals and methods similar to those of another study, designed to confirm consistent conclusions and verify the reliability and validity of the original study [17]. The Computing Education Research (CER) community encourages researchers to conduct more replication studies because they help ensure that the original result is not an isolated phenomenon and confirm prior findings in different contexts [3, 13]. Unfortunately, replication studies are not commonly applied in CER [14] because educational institutions [27] and the community [3, 13] place a higher value on novel research.

A 2019 Systematic Literature Review (SLR) [14] investigated the application of replication in CER, identifying 54 papers (2.38%) published in five computing venues between 2009 and 2018. Since the publication of the 2019 SLR, however, the Computer Science Education Journal (CSEJ) published a 2022 special issue (footnote 1) focusing on replication studies, which motivated us to re-examine the adoption of replication studies in CER over the last seven years. For this study, we address the following research questions (RQs):

(1) RQ1: How has the adoption of replication study design changed over the last seven years?
(2) RQ2: What perceptions do Computing Education researchers have of using replication in their work?
(3) RQ3: How can the CER community support researchers in adopting the replication study design?
To answer these RQs, we bring together two studies focusing on replication studies in the context of today’s research landscape, where AI is available to assist students in solving activities and educators teaching post-COVID are facing challenges, such as encouraging student participation and engagement in distance learning environments [23]. These factors merit revisiting research within these contexts. We replicated a 2019 SLR [14] to identify replication studies published between 2019 and 2025. As in the original study, we used the same approach to identify replication studies in five prominent Computing Education venues, enabling us to compare and contrast results with the original study. We also replicated a study [3] that surveyed Computing Education researchers to collect their perspectives on the study design. We used a mixed-methods approach to analyse the collected data.

Our SLR identifies 63 (2.50%) papers among the 2516 published in the five venues during the time period of interest, with a shift toward publishing in journals and authors replicating their own work. We found that replication has not grown as much as the current discourse around open science and rigour suggests [18]. However, when speaking with Computing Education researchers, we found that many are actively engaging in replication in their own teaching and research, but are experiencing various challenges with this research design. From these findings, we identify opportunities to advance replication in CER and explore how the CER community can encourage researchers to publish replication studies successfully.

2 Background

Replication is a critical mechanism for enhancing scientific knowledge by assessing the reliability and validity of prior findings. At its core, a replication study uses goals and design methods similar to those of another study to evaluate whether earlier conclusions remain valid [17].
Replication studies could also help determine whether results hold under new conditions, different populations, or alternative methodological choices. Prior research [4, 7, 11, 15, 20] presented the following replication types:

• Exact replication studies attempt to reproduce the original study’s design and procedures under the same conditions.
• Conceptual replication studies investigate the same underlying hypothesis using different operational methods, measurements, or instructional contexts.
• Methodological replication studies vary measurement tools or techniques to test the robustness of findings [4, 11].
• Operational (direct) replication studies preserve the conditions with identical sampling and empirical procedures to reproduce findings outside of the original context.

Footnote 1: https://www.tandfonline.com/toc/ncse20/32/3

Despite their importance, replication studies remain uncommon in the CER community. One barrier is that many publications do not provide sufficient methodological details for researchers to repeat the work reliably [16]. One potential reason is the space limitations imposed by the publication venue. Previously, Brown et al. [7] addressed publication bias in CER for replication research by launching registered reports that were peer-reviewed before data collection, ensuring methodological rigour. The initiative demonstrated the feasibility of registered report replications and highlighted how editorial intervention can aid diverse research methodologies. This effort led to the aforementioned CSEJ special issue (footnote 1) on replication studies, which only accepted registered reports replicating prior studies.
In summary, replication is fundamental to enhancing the credibility and generalisability of scientific findings, yet its uptake within the CER community remains limited, partly due to inadequate methodological transparency and constraints imposed by publication venues. Recent initiatives have demonstrated that systematic replication, such as registered reports, can help address publication bias and promote methodological rigour. These efforts highlight the continuing need to refine editorial processes and prioritise replicability, ultimately strengthening the robustness and reliability of research in CER.

3 Related Work

Our work replicates two studies [3, 14] focusing on replication studies in Computing Education Research (CER). We mentioned in Section 1 the 2019 Hao et al. [14] Systematic Literature Review (SLR) on replications, where the researchers used the query “replicat[a-z]*” to identify papers. Of the 2,269 papers published between 2009 and 2018 across the five venues, that SLR identified 54 (2.38%) replication studies and examined:

• Whether the papers performed a direct, conceptual, or other type of replication study design,
• Whether the findings are consistent with the replication target,
• Whether the same authors conducted the replication studies, judged by at least one overlapping author,
• What methodologies the authors adopted, such as a quantitative, qualitative, or mixed-methods approach, and
• The applied themes, based on the 18 themes proposed in prior computing education research [24, 28, 29].

When presenting the results, the researchers separated the journal and conference papers, stating, “the two journals (TOCE and CSEJ) published 330 studies between 2009 and 2018, of which 7 studies were identified as replication studies. In comparison, the three conferences (SIGCSE, ICER, and ITiCSE) published 1,939 studies between 2009 and 2018, of which 47 studies were identified as replication studies” [14, p. 5].
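As a rough illustration of how the query “replicat[a-z]*” behaves, the following minimal sketch applies it to a few text snippets. The snippets and function names are hypothetical, and case-insensitive matching is an assumption made here, since the text does not say how the digital libraries treat case. A match only flags a candidate paper: the second snippet merely encourages replication, which is why a manual screening step is still needed.

```python
import re

# Query pattern quoted in the text. Case-insensitive matching is an
# assumption made for this sketch; the source does not state it.
QUERY = re.compile(r"replicat[a-z]*", re.IGNORECASE)


def matched_terms(text: str) -> list[str]:
    """Return the distinct, lowercased terms in `text` that match the query."""
    return sorted({match.group(0).lower() for match in QUERY.finditer(text)})


def is_candidate(text: str) -> bool:
    """True if the query matches anywhere in `text` (a candidate, not a verdict)."""
    return QUERY.search(text) is not None


# Hypothetical snippets, invented for illustration only.
SNIPPETS = {
    "paper_a": "We replicate a prior study. Replication matters, and we replicated it twice.",
    "paper_b": "We encourage others to replicate our analysis.",
    "paper_c": "We introduce a novel tool for novice programmers.",
}

# Names of the snippets that the query would flag for manual screening.
candidates = [name for name, text in SNIPPETS.items() if is_candidate(text)]
```

Here `paper_a` and `paper_b` are flagged while `paper_c` is not, which mirrors the gap between papers returned by a query and papers that actually report a replication.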
The results showed that the majority of papers used the conceptual replication type, which tests the original hypothesis and theoretical values, but with different measures for data collection and analysis [15]. The majority (N=34, 63%) of the papers confirmed the findings of their original studies, predominantly in quantitative replication studies (N=40, 74%). Overall, the replication studies identified by Hao et al. [14] predominantly focused on learning and teaching strategies, assessment, learning behaviour and theory, and performance prediction, with the majority (N=29, 54%) focusing on undergraduate CS students. The authors argue for the need for replication in CER and acknowledge challenges and stigmas we also encountered in our conversations with CER researchers. The study suggested solutions to encourage more replication in CER, including revisions to policies to support replication and guidelines for reviewing replication studies. The authors concluded by promoting replication on merit, noting that “a healthy environment of replication studies needs to be cultivated and maintained” [14, p. 11].

Fig. 1. Diagram of Study Method using Triangulation Mixed-Methods Design (Adapted from Creswell and Clark [10])

In the 2016 work by Ahadi et al. [3], the researchers examined the CER community’s views on replication studies. The authors surveyed 73 Computing Education researchers to collect their perceptions. Their findings showed that participants perceived that novel work had a greater impact and offered more opportunities for researchers to secure grants and citations. Replication work was acknowledged to be difficult, with a large portion of researchers attempting and failing to replicate their own results (48%) or the results of another researcher (40%). When participants were asked if they had published a replication study, 18% of respondents answered in the affirmative, while 19% had tried but were unsuccessful.
The maximum number of published replications was 2, by two respondents.

These two studies provide insights into the landscape of replication in CER, highlighting both its limited presence and the challenges it poses. The SLR study [14] revealed that replication studies remain a small proportion of published work, with a majority confirming original findings. The survey study [3] further underscored the difficulties researchers face in replicating studies, alongside participants’ perceptions that the CER community valued novel research more in terms of impact and career progression. Both studies advocate cultivating a supportive environment for replication, suggesting policy changes and clearer guidelines to encourage and recognise its importance in CER, a goal we also seek to advance.

4 Study Design

We first conducted a methodological replication study [11] of the 2019 Systematic Literature Review [14] we examined in Section 3. We then performed a conceptual replication study [15] of the Ahadi et al. [3] survey study described in the same section. We used a mixed-methods approach [9], illustrated in Figure 1, employing a triangulation design [10] to analyse the collected data. We collected and analysed the data from the SLR and the survey separately, then integrated them to compare and identify areas of convergence, complementarity, or divergence. Figure 1 shows how we bring together the findings from the SLR and survey, with the survey results offering additional perspectives on the SLR findings. We made our instruments, data collected from the SLR, and SLR references available on FigShare (footnote 2). We obtained ethics (IRB) approval from the ethics committee at «university» to conduct this study.

Footnote 2: https://doi.org/10.6084/m9.figshare.28862405

4.1 Participants

We recruited survey participants through multiple channels. We distributed the survey to the SIGCSE members’ mailing list and posted announcements in community forums and social networks. In addition, we used snowball sampling [1], asking colleagues and contacts to take the survey and/or to distribute it to their own networks to increase response rates.

4.2 Systematic Literature Review

For our replication of the 2019 SLR study [14], we utilised the same methods as the original SLR, including the same venues, but for the subsequent years: 2019-2025. We applied the search query “replicat[a-z]*” to the digital libraries for the five venues: SIGCSE, ICER, ITiCSE, Transactions of Computing Education (TOCE), and Computer Science Education Journal (CSEJ). We searched for the string in the entire paper, including the title, keywords, and abstract. Our search query identified 645 papers across the five venues with no duplicates.

Two raters conducted the first pass of the selection process to identify papers replicating other studies. To understand the original study’s selection process, the raters reviewed the papers [12, 21, 22] that Hao et al. [14] used in the original study to construct the selection criteria. We examined 15 of their selected papers to better understand how the original authors met the selection criteria. In addition, we spoke with a co-author of the original study for further insight. The raters used a spreadsheet for the selection process. If there was a discrepancy between their selections, a third rater examined and discussed the selections to reach a decision. The third rater intervened on four (0.16%) papers during the selection process. For two papers, all three raters were unsure how to rate, so we contacted the papers’ primary authors for confirmation. During the selection process, one rater recorded papers that encouraged replication of their work by explicitly stating it, making their research materials available for future research, or both.
For example, one paper [5] stated, “to encourage replication and extension of our analysis, we make these scripts publicly available through a GitHub repository” [5, p. 176]. Of the 645 papers collected from our search query, we identified 63 actual replication studies according to the definition (2.50% of the 2516 published papers). We used Cohen’s Kappa (κ) to measure interrater reliability [8] in the selection process, showing that the raters’ coding achieved a kappa of 0.952, which is considered almost perfect agreement [19].

We analysed the 63 papers that met our selection criteria, using Google Forms to collect the data and store it in a spreadsheet, mirroring the approach used in the original study. We recorded the replication study types, the methodology, authors, and themes used in the replication papers. For the replication types, the form listed Direct and Conceptual based on the work by Hao et al. [14], but included an open-text field for other replication types. We used comparative analysis [25] to assess our results against the original SLR, focusing on context, methodologies, topics, and authorship. We used the quantified evidence presented in the original SLR to compare with our findings.

To help situate our SLR results, we identified the number of accepted papers in the venues. For the conferences, we used the conference chairs’ welcome, which presents the number of accepted papers (footnote 3). For the journals, we used their online digital libraries to identify the total number of published papers for each year. Upon completing the data analysis, we extracted the frequency matrix from the spreadsheet to present our findings.

4.3 Survey

We conducted a conceptual replication study of the research by Ahadi et al. [3]. The survey informs the SLR’s findings and provides a more in-depth look at the CER community’s application of replication study design in their work.
To adhere to appropriate ethics (IRB) requirements, we hosted the survey on the «university»’s Qualtrics platform, where the research group gained Institutional Review Board approval.

Footnote 3: https://dl.acm.org/doi/proceedings/10.1145/3702652

The survey contained 12 questions: four closed-ended and eight open-ended. Depending on how the participant answered the first survey question asking them if they had done a replication study before, they were presented with different sets of questions. Participants who had previously performed replication studies received six additional questions focusing on their prior experience with those studies, for example, “How many CER replication studies have you conducted?” and “How many CER replication studies have you successfully published?”. We also asked those participants to provide any replication studies they had previously published, provided they did not mind potential deanonymisation by the research team. If the respondent provided their published replication studies, two of the authors downloaded and reviewed them to determine the publication year, venue, replication type, and whether the publication appeared in the original or our SLR. Analysing the provided papers allowed us to determine whether the CER community published replication studies outside the five venues and to examine whether our selection criteria would have selected them for review, thereby informing how we examine and identify replication studies in CER in the future.

All participants, regardless of the answer they gave to the first question, were asked to provide their opinions on replication studies with the open-text question “What are your opinions on the value of replication studies in CER?”.
We asked if they were planning on conducting a replication study in the future, and gave them the chance to write an open-ended response to the question “Why do you think the CER community does not do more replication studies?”.

Two authors independently reviewed and coded the open-ended responses, each applying their own preliminary coding scheme. After completing this initial coding round, they met to compare their code assignments and collaboratively develop a unified codebook, drawing on their initial tags and interpretations. Using the agreed-upon codebook, both authors re-coded the responses to ensure consistency with the standardised codes. One week later, they met again to review their coding, discuss any discrepancies, and reconcile differences. They repeated this process until they reached consensus on all codes, thereby enhancing the reliability and validity of the coding procedure for the dataset.

4.4 Positionality Statement

Our research team consists of four women researchers, one identifying as white and three identifying as members of different minoritised racial groups. We adopt a mixed-methods perspective and acknowledge that our backgrounds and experiences shape our interpretations of replication practices in CER [6]. To mitigate individual bias, we employed collaborative and reflexive analytic practices throughout the study. Two researchers independently conducted paper selection for the Systematic Literature Review, with disagreements resolved through discussion and third-rater adjudication, informed by the procedures of the original study. For the survey’s open-ended responses, two authors independently coded the data, developed a shared codebook, and iteratively reconciled differences until they reached consensus. These practices were intended to strengthen analytic rigour and balance individual perspectives when interpreting replication types and community attitudes toward replication in CER.
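The interrater reliability statistic reported for paper selection in Section 4.2, Cohen’s kappa, corrects the raw agreement between two raters for the agreement expected by chance. The sketch below shows the standard calculation on a small set of include/exclude decisions. The decisions are hypothetical and invented for illustration; the raters’ actual per-paper decisions are not given in the text, so this does not reproduce the reported kappa of 0.952.

```python
from collections import Counter


def cohens_kappa(rater_a: list[str], rater_b: list[str]) -> float:
    """Cohen's kappa for two raters labelling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must label the same non-empty set of items.")
    n = len(rater_a)
    # Observed agreement: fraction of items given the same label by both raters.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal label frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    labels = counts_a.keys() | counts_b.keys()
    expected = sum(counts_a[label] * counts_b[label] for label in labels) / (n * n)
    if expected == 1.0:
        # Both raters used a single identical label; agreement is trivially perfect.
        return 1.0
    return (observed - expected) / (1.0 - expected)


# Hypothetical decisions for ten papers (not data from the study).
RATER_1 = ["include"] * 3 + ["exclude"] * 7
RATER_2 = ["include"] * 2 + ["exclude"] * 8

kappa = cohens_kappa(RATER_1, RATER_2)
```

In this made-up example the raters agree on 9 of 10 papers (observed agreement 0.90), chance agreement is 0.62, and kappa is therefore 0.28 / 0.38, roughly 0.74, which shows how kappa can sit well below raw agreement when one label dominates.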
5 Results

5.1 Systematic Literature Review Results

Of the 2516 papers published in SIGCSE, ITiCSE, ICER, TOCE and CSEJ between 2019 and 2025, 63 (2.50%) applied replication. Of these 2516 papers, 147 (6%) encouraged replication of their work. Table 1 presents our SLR results, showing the total number of publications per venue and the replication studies identified at each venue. The table groups the results by conference and journal, with years presented in ascending order. It also shows the total number of papers and replication studies for each venue within the selected time frame.

Table 1. SLR Results Across CER Venues, Including Total (Tot) and Replication Studies (Rep) Found in Each Venue and Year. CSEJ and TOCE are journals; ICER, ITiCSE, and SIGCSE are conferences.

| Year | CSEJ Tot | CSEJ Rep | TOCE Tot | TOCE Rep | ICER Tot | ICER Rep | ITiCSE Tot | ITiCSE Rep | SIGCSE Tot | SIGCSE Rep | Total Tot | Total Rep |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 2019 | 15 | 1 (7%) | 40 | 1 (3%) | 28 | 1 (4%) | 66 | 0 (0%) ‡ | 179 | 5 (3%) | 328 | 8 (2%) |
| 2020 | 18 | 0 (0%) ‡ | 27 | 0 (0%) ‡ | 27 | 1 (4%) | 72 | 3 (4%) | 171 | 3 (2%) | 315 | 7 (2%) |
| 2021 | 18 | 0 (0%) ‡ | 46 | 0 (0%) ‡ | 30 | 2 (7%) | 84 | 3 (4%) | 170 | 2 (1%) | 348 | 7 (2%) |
| 2022 | 19 | 4 (21%) † | 43 | 3 (7%) | 25 | 0 (0%) ‡ | 79 | 1 (1%) | 144 | 2 (1%) | 310 | 10 (3%) |
| 2023 | 24 | 0 (0%) ‡ | 30 | 1 (3%) | 35 | 1 (3%) | 80 | 5 (6%) | 165 | 3 (2%) | 334 | 10 (3%) |
| 2024 | 32 | 1 (3%) | 57 | 1 (2%) | 36 | 5 (14%) | 107 | 2 (2%) | 216 | 1 (1%) | 448 | 10 (2%) |
| 2025 | 21 | 0 (0%) ‡ | 90 | 6 (7%) | 31 | 1 (3%) | 99 | 0 (0%) ‡ | 192 | 4 (2%) | 433 | 11 (3%) |
| Total | 147 | 6 (4%) | 333 | 12 (4%) | 212 | 11 (5%) | 587 | 14 (2%) | 1237 | 20 (2%) | 2516 | 63 (3%) |

Journal: Tot=480, Rep=18 (4%). Conference: Tot=2036, Rep=45 (2%).
† Includes special issue on replication studies
‡ Year for venue with no reported replication studies

When examining results by venue and year, CSEJ 2022 has the highest percentage of replication studies (N=4, 21%), mainly because of the CSEJ special issue focusing on replication studies published that year, denoted with † in Table 1.
TOCE 2025 has the next-highest number of replication studies (N=6, 7%). We also found nine journal volumes or conference proceedings containing no replication studies at all, denoted with ‡ in Table 1. These include CSEJ 2020, 2021, 2023, and 2025; TOCE 2020 and 2021; ICER 2022; and ITiCSE 2019 and 2025. When examining the number of replication studies published as a percentage of all publications for the time period at each venue, we found that ICER published the most (5%, N=11), followed by CSEJ (4%, N=6), while SIGCSE (2%, N=20) had the fewest.

Fig. 2. Comparing Original and Replication Studies’ Results by Conference and Journal Publications. Axis: Number of Papers; legend: Non-Replication Papers, Replication Papers. (a) Conference Results: Original Study, 47 replication and 1892 non-replication papers; Replication Study, 45 and 1991. (b) Journal Results: Original Study, 7 replication and 323 non-replication papers; Replication Study, 18 and 462.

Fig. 3. Comparing the Context Results Between Original and Replication Studies. Axis: Number of Papers; legend: Undergraduate, CS1, K-12, Graduate, Other. Bar labels as extracted: 1, 1, 6, 17, 29, 9, 31, 24.

Naturally, we also compared our SLR results with the original SLR study [14] we were replicating. While the original SLR examined replication studies from 2009 to 2018, its findings covered only publications from 2011 to 2018, a period of eight years (since no replication studies were found for the first two years). Their results bring the time frames for the two SLRs closer together, potentially minimising anomalies in the discussion of the results between the two studies. When comparing the overall replication studies identified in the two SLRs, the original SLR found 54 (2.38%) replication studies, while ours found 63 (2.50%), a comparable number in both.
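The per-venue and overall prevalence figures quoted above follow directly from the counts in the Total row of Table 1 and from the original SLR’s reported totals. The following minimal sketch recomputes them; the variable and function names are illustrative choices, and the only inputs are counts stated in the text.

```python
# (total papers, replication studies) per venue, from the Total row of Table 1.
TABLE1_TOTALS = {
    "CSEJ": (147, 6),
    "TOCE": (333, 12),
    "ICER": (212, 11),
    "ITiCSE": (587, 14),
    "SIGCSE": (1237, 20),
}

# Totals reported for the original 2019 SLR: 54 replications among 2,269 papers.
ORIGINAL_PAPERS, ORIGINAL_REPLICATIONS = 2269, 54


def rate(total: int, replications: int) -> float:
    """Replication studies as a percentage of all published papers."""
    return 100.0 * replications / total


venue_rates = {venue: rate(*counts) for venue, counts in TABLE1_TOTALS.items()}
# Venues ordered from the highest to the lowest replication rate.
ranked_venues = sorted(venue_rates, key=venue_rates.get, reverse=True)

all_papers = sum(total for total, _ in TABLE1_TOTALS.values())
all_replications = sum(reps for _, reps in TABLE1_TOTALS.values())
current_rate = rate(all_papers, all_replications)
original_rate = rate(ORIGINAL_PAPERS, ORIGINAL_REPLICATIONS)

for venue in ranked_venues:
    print(f"{venue}: {venue_rates[venue]:.2f}%")
print(f"Original SLR: {original_rate:.2f}%  This SLR: {current_rate:.2f}%")
```

Working from unrounded rates keeps the ranking unambiguous where the rounded percentages in Table 1 tie, for example CSEJ and TOCE at 4%.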
Next, we compared results by conference/journal venue type, an approach used in the original SLR, previously presented in Section 3. Figure 2 shows this comparison, with Figure 2a presenting the conference results, while Figure 2b shows the journal results. Figure 2a shows 47 (87%) of the original SLR’s replication papers published at conferences, while our SLR identified 45 (71.43%). When comparing journal publications, the original SLR saw seven (13%) replication studies, whereas our SLR identified 18 (29%), demonstrating an increase in replication studies in journals.

Then, we compared the Computing Education topics. The original SLR did not provide numeric information when quantifying results for topics. Instead, the authors presented the first five topics in the following order: Learning & Teaching Strategies, Assessment, Learning Behaviour, Learning Theory, and Performance Prediction. We also found that Learning & Teaching Strategies (N=17, 27%) and Assessment (N=14, 22%) continue to be the most common topics in replication studies. However, our replication study found a higher level of interest in evaluating Tools (N=13, 20%).

Next, we compared the contexts, as shown in Figure 3. The predominant context found in the original SLR was Undergraduate (N=29, 54%), followed by CS1 (N=17, 31%), while the Graduate and Other contexts had the fewest papers (N=1, 2% each). Our SLR found that CS1 (N=31, 49%) was the predominant context, followed by Undergraduate (N=24, 38%), whereas no replication studies used the Graduate or Other contexts.

Table 2 presents other comparisons between the two SLRs, examining methodologies, replication types, and authorship. For methodologies, Quantitative remains the most common for both SLRs, with the original SLR study identifying 74% (N=40) of their papers applying quantitative approaches, while we saw 60% (N=38) of our papers utilising them.
The least common approach across both SLRs was Qualitative, with the original SLR identifying four papers (7%) and our SLR identifying six (10%). When comparing the replication types, our SLR identified authors applying different replication study designs, including Methodological (N=8, 13%) and Empirical (N=4, 6%) approaches. In contrast, the original SLR did not report on these replication types. For authorship, the original SLR stated that “the same authors, the replication success rate was 72% and the failure rate was 22%. In contrast, the success rate dropped to 58% and failure rate increased to 28% when all authors of a study were different” [14, p. 42]. Our results show that the same authors had a high success rate (82%), with 18% of the studies failing; by contrast, with different authors, the success rate dropped to 60%, with the failure rate increasing to 25%. We found that more of the same authors (N=35, 56%) replicated their work than the original SLR found (N=18, 33%).

Overall, our findings indicate a modest increase in the already limited prevalence of replication studies in CER compared to the original SLR, with a slight shift toward journal publications and a broader range of topics. The continued dominance of quantitative methodologies and the predominance of same-author replications suggest that, while the field is progressing, challenges remain in encouraging independent replications and diversifying methodological approaches. Notably, the context of replication studies has shifted, with CS1 now surpassing undergraduate contexts as the most common setting. These results highlight both areas of growth and persistent gaps, underscoring the need for ongoing efforts to promote replication across varied contexts, authorship models, and research designs within CER.
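The venue-type and authorship shares compared in this subsection can be recomputed from the counts reported for the two SLRs (54 replication studies in the original, 63 in this one). The sketch below is illustrative arithmetic only; the dictionary layout and names are assumptions introduced here, and percentages are rounded to whole numbers as in most of the text.

```python
ORIGINAL_TOTAL = 54      # replication studies in the original SLR
REPLICATION_TOTAL = 63   # replication studies in this SLR

# Category -> (count in original SLR, count in this SLR), as reported in the text.
COUNTS = {
    "conference": (47, 45),
    "journal": (7, 18),
    "same authors": (18, 35),
    "different authors": (36, 28),
}


def percent(part: int, whole: int) -> int:
    """Share of `part` in `whole`, rounded to the nearest whole percent."""
    return round(100 * part / whole)


# Category -> (share in original SLR, share in this SLR).
shares = {
    label: (percent(orig, ORIGINAL_TOTAL), percent(rep, REPLICATION_TOTAL))
    for label, (orig, rep) in COUNTS.items()
}

for label, (orig_share, rep_share) in shares.items():
    print(f"{label}: {orig_share}% -> {rep_share}%")
```

Each pair of categories partitions the same set of studies, so the conference and journal counts, and likewise the same-author and different-author counts, sum to 54 and 63 respectively.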
5.2 Survey Results

In the eight weeks during which the survey remained open, we recorded 116 responses. We asked all the participants “Have you implemented a published Computing Education Research (CER) intervention or work in your classroom (i.e. done a replication study)?” (Q1). We received “Yes” from 53 (46%) participants, while 63 (54%) responded “No”. We asked the 53 participants who answered “Yes” additional questions, including how many replication studies they had conducted (Q2), how many of those they had submitted for publication (Q3), and how many they had successfully published (Q4). Table 3 outlines their responses to these questions. For questions Q2-Q4, the table shows the number of responses, along with the mean, median, minimum, and maximum values provided by the participants. For Q4, two participants responded “not applicable” because they answered “0” to Q3. The answers showed that educators conducting replication studies are likely to submit most, if not all, of them for publication. Only five (17%) of the 29 participants who reported performing several replications (Q2) answered that they submitted none of them for publication (Q3).

Table 2. Original (Org) and Replication (Rep) SLR Study Results by Methodology, Replication (Rep) Type, and Authorship (A dash (-) represents category not reported)

| Methodology | Total (Org) | Total (Rep) | Success (Org) | Success (Rep) | Mixed (Org) | Mixed (Rep) | Failure (Org) | Failure (Rep) | Unknown (Org) | Unknown (Rep) |
|---|---|---|---|---|---|---|---|---|---|---|
| Quantitative | 40 (74%) | 38 (60%) | 27 (68%) | 29 (76%) | 9 (22%) | 1 (3%) | 4 (10%) | 8 (21%) | - | 0 (0%) |
| Mixed | 10 (19%) | 19 (30%) | 5 (50%) | 12 (63%) | 5 (50%) | 3 (15%) | 0 (0%) | 2 (11%) | - | 2 (11%) |
| Qualitative | 4 (7%) | 6 (10%) | 2 (50%) | 4 (66%) | 2 (50%) | 0 (0%) | 0 (0%) | 1 (17%) | - | 1 (17%) |

| Rep Type | Total (Org) | Total (Rep) | Success (Org) | Success (Rep) | Mixed (Org) | Mixed (Rep) | Failure (Org) | Failure (Rep) | Unknown (Org) | Unknown (Rep) |
|---|---|---|---|---|---|---|---|---|---|---|
| Conceptual | 41 (76%) | 41 (65%) | 27 (66%) | 28 (68%) | 3 (7%) | 3 (7%) | 11 (27%) | 7 (18%) | - | 3 (7%) |
| Direct | 13 (24%) | 10 (16%) | 7 (54%) | 8 (80%) | 3 (23%) | 0 (0%) | 3 (23%) | 2 (20%) | - | 0 (0%) |
| Methodological | - | 8 (13%) | - | 6 (75%) | - | 0 (0%) | - | 1 (12.5%) | - | 1 (12.5%) |
| Empirical | - | 4 (6%) | - | 4 (100%) | - | 0 (0%) | - | 0 (0%) | - | 0 (0%) |

| Authorship | Total (Org) | Total (Rep) | Success (Org) | Success (Rep) | Mixed (Org) | Mixed (Rep) | Failure (Org) | Failure (Rep) | Unknown (Org) | Unknown (Rep) |
|---|---|---|---|---|---|---|---|---|---|---|
| Same | 18 (33%) | 35 (56%) | 13 (72%) | 29 (82%) | 1 (6%) | 0 (0%) | 4 (22%) | 3 (18%) | - | 3 (18%) |
| Different | 36 (67%) | 28 (44%) | 21 (58%) | 17 (60%) | 5 (14%) | 3 (11%) | 10 (28%) | 7 (25%) | - | 1 (4%) |

Table 3. Publication Details by Survey Respondents Previously Conducting Replication Studies

| Question | N | Mean | Median | Min | Max |
|---|---|---|---|---|---|
| Q2. How many replication studies performed? | 29 | 2.66 | 2 | 1 | 9 |
| Q3. How many replication studies submitted for publication? | 29 | 1.97 | 1 | 0 | 9 |
| Q4. How many replication studies successfully published? * | 27 | 1.74 | 1 | 0 | 8 |

* Two non-applicable responses

We asked participants whether they would voluntarily provide their previously published replication studies, which yielded 19 papers from 13 (11%) participants. We found that 14 (74%) came from the five venues we reviewed for the SLR. We received direct mention of six (32%) papers from SIGCSE, four (21%) from ITiCSE, two (11%) from CSEJ, and two (11%) from CSEJ. We found the remaining five (26%) published at other venues: Koli Calling (N=2, 11%), The Western Canadian Conference on Computing Education (WCCCE) (N=1, 5%), Frontiers in Education (FIE) (N=1, 5%), and The International Conference on the Future of Education, Teaching Systems and Information Technology (IFETS) (N=1, 5%).

When evaluating the participants’ 19 papers to determine whether they would meet our selection criteria, we found that one (5%) paper appeared in the original SLR [14], while nine (47%) appeared in our SLR. We observed that four (21%) would not have met our search criteria because the term “replicat[a-z]*” did not appear in the publications.
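As a rough illustration of the search-term criterion mentioned above, the following Python sketch checks whether a text contains the term “replicat[a-z]*”. Only the pattern itself comes from the study; case-insensitive matching, the helper names `mentions_replication` and `matched_terms`, and the two sample sentences are assumptions made for illustration and do not reproduce the authors' actual search procedure.

```python
import re

# Pattern quoted in the text; case-insensitive matching is an assumption here.
REPLICATION_TERM = re.compile(r"replicat[a-z]*", re.IGNORECASE)


def mentions_replication(text: str) -> bool:
    """Return True if the term 'replicat' plus any trailing letters occurs in the text."""
    return REPLICATION_TERM.search(text) is not None


def matched_terms(text: str) -> list[str]:
    """List every matched term, lower-cased, in order of appearance."""
    return [m.group(0).lower() for m in REPLICATION_TERM.finditer(text)]


# Hypothetical snippets, not taken from any surveyed paper.
samples = {
    "explicit": "We replicated a prior study; this replication uses a new cohort.",
    "implicit": "We re-ran an earlier intervention with a different cohort.",
}

for label, text in samples.items():
    print(label, mentions_replication(text), matched_terms(text))
```

Under these assumptions, a paper that describes re-running an earlier study without ever using a word beginning with “replicat” would be missed by such a filter.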
Two (11%) papers would also have been excluded under our selection criteria because they did not explicitly state that the studies performed replication, while the remaining three (16%) were not yet published, so we could not evaluate them. Of the 29 participants with experience in replication, seven (24%) reported that venues rejected their replication papers during the peer-review process, identifying SIGCSE, ITiCSE, ICER, Koli Calling, and “ACM and Taylor & Francis” as the submission venues. When we asked participants whether they would consider conducting replication studies in the future, 56% were unsure, 34% said “Yes”, and 30% said “No”.

We asked all participants two open-ended questions: “What are your opinions on the value of replication studies in CER?” (Q8) and “Why do you think the CER community does not do more replication studies?” (Q10). The responses frame seven overarching value dimensions, with corresponding themes presented next and outlined in Table 4 and Table 5.

5.2.1 High Epistemic Value and Generalisability.
In their open-text responses, our survey participants consistently framed replication as central to the development of trustworthy knowledge in CER. Rather than viewing replication as redundant, they emphasised its role in determining whether findings extend beyond a single study or a specific instructional context. As one participant noted, “High value: it’s good to know whether an intervention done in one context is more broadly effective” (R003). Others echoed this concern with transferability and context, writing, “Valuable - especially in sufficiently different contexts that they increase our belief in the general validity of the idea” (R005) and “They are valuable. Context is a significant issue in CER studies, which only replication can finally address” (R015).

5.2.2 Confirmation of Knowledge, Exposing Knowledge Gaps.
Participants repeatedly described replication as a mechanism for strengthening confidence in existing results. One participant wrote, “Would be good to do more. Would let us confirm the validity of findings” (R070), while another added, “I think they are useful as they can bring more credibility to previous findings” (R076). In this sense, these responses position replication as part of building a cumulative evidence base rather than producing isolated findings, as reflected in the comment, “They’re critical for establishing computing education as science/social science. We have to be able to test and build on prior work” (R010).

Importantly, participants not only framed replication as confirmatory but also emphasised its value in exposing limitations and gaps in the literature. One noted, “[Replication studies] are crucial to improving our collective understanding” (R024), and another observed, “I would encourage more of them! I think a tendency toward novelty above all else—except in circumstances where we are looking into new things and exploration is perhaps more valuable than certainty—can leave gaps in our knowledge. Particularly since generalisation is difficult in contexts as population dependent as education, significant amounts of replication can lead to a deeper understanding of how these findings vary across populations” (R080).

Table 4. Qualitative Coding Scheme for Perceived Value of Replication (Q8) and Explanation of Limited Replication in CER (Q10). (Percentages of unique respondents discussing the theme do not sum up to 100% because responses could contain multiple themes. Total number of open-text responses N = 147)

| Value / Barrier Dimension | Associated Thematic Tags | Resp (%) | Theme Summary |
|---|---|---|---|
| Building Trustworthy Knowledge | Generalisability | 37% | (value, barrier) Replication tests if findings hold across different contexts, populations, or institutions |
| | Confirmation of Knowledge | 37% | (value) Replication validates, confirms, or strengthens confidence in existing results |
| | Knowledge Gaps | 24% | (value) Replication is a way to identify limitations, missing evidence, or areas where current knowledge is insufficient; (barrier) Replication is necessary but hindered because existing studies lack sufficient methodological detail or accessible materials to enable replication |
| | Contradictory Results | 12% | (value, barrier) Replication can reveal inconsistencies or challenge prior findings |
| Supporting the Field | Valuable | 74% | (value) Replication is important, worthwhile, or necessary to CER |
| | Beneficial to the Field | 16% | (value) Replication contributes to the health, credibility, or development of the CER community or discipline, (barrier) even though it is structurally unsupported, highlighting the disconnect between epistemic importance and institutional reward |
| | Underutilised | 14% | (barrier) Replication is insufficiently used, rare, or neglected within CER |
| Incentives, Recognition, Reward Structures and Publication Culture | Novelty | 58% | (barrier) The dominance of novelty, originality, or “newness” is a cultural or review expectation that disadvantages replication |
| | Research Recognition | 24% | (barrier) Replication receives limited recognition within the research community (e.g., in publication prestige, citations, or scholarly status) |
| | Professional Recognition | 20% | (barrier) Replication is undervalued in career-related contexts (e.g., hiring, tenure, promotion, performance evaluation) |
| | Undervalued | 15% | (barrier) Replication is culturally perceived as less important or prestigious |
| | Reviewer Bias | 15% | (barrier) Peer-review practices or reviewer expectations disadvantage non-novel work |

Other survey participants were more explicit about the role of replication in surfacing contradictions. For example, R034 stated, “They are incredibly valuable, but the nature of replication sits slightly at odds with the innate variation of participants found across cultural and socio-temporal boundaries - there is a question as to exactly how much we can replicate. [...] Replication studies should be carried out on all CER research as a matter of course, given how many studies we are already aware of that cannot be replicated” (R034).

Table 5. Qualitative Coding Scheme for Perceived Value of Replication (Q8) and Explanation of Limited Replication in CER (Q10). (Percentages of unique respondents discussing the theme do not sum up to 100% because responses could contain multiple themes. Total number of open-text responses N = 147)

| Value / Barrier Dimension | Associated Thematic Tags | Resp (%) | Theme Summary |
|---|---|---|---|
| Practical Challenges | Hard to Do | 30% | (barrier) Replication is methodologically, logistically, or practically difficult to carry out |
| | Hard to Publish | 18% | (barrier) Replication is difficult to place in appropriate venues or to have accepted for publication |
| | Hard to Write | 3% | (barrier) Replication is difficult to frame, report, or argue for in a way that fits publication norms |
| Context and Ethical Constraints | Context | 45% | (barrier) Replication influenced by contextual factors (e.g., institutional, cultural, curricular differences) that complicate replication or comparability |
| | Ethics | 4% | (barrier) Ethical constraints (e.g., consent, student risk, intervention withholding) limit replication design or feasibility |
| Definitional Ambiguity | Replication Adjacent | 8% | (barrier) Not a “pure” replication but blends confirmation with extension, adaptation, or contextual variation |
| Lack of Interest | Not Interested | 8% | (barrier) Lack of personal or community interest in conducting replication studies |

5.2.3 Beneficial to the Field but Underutilised and Undervalued.
Beyond its epistemic role, participants widely described replication as work that supports the CER field as a whole. Participants expressed this concisely and forcefully, stating “Tremendous value” (R022), “Must be done” (R021), and “Urgently needed and hugely underappreciated” (R074). These statements reflect a perception of replication as foundational to the credibility and health of CER, even when it does not directly benefit individual researchers. Participants highly valued replication in CER, making its value the most-discussed topic, with nearly three-quarters commenting on it. One succinctly described the underutilisation of replication, stating, “There are so few replication studies that I wonder whether we can actually call ourselves a “science”” (R074).

5.2.4 The Expectation of Novelty, Definitional Ambiguity and Reviewer Bias.
Some participants pointed to ambiguity around what should count as replication, particularly from a reviewer and publication standpoint. For example, R068 stated, “I also received feedback on a rejected paper from a reviewer that gave the impression they saw the replication study needing to be exact replication, which my wasn’t. Perhaps rejected replication papers due to misunderstandings on the types of replication studies available may be why the community doesn’t do more of these studies” (R068). Participants mentioned that partial replication and replication with extensions are also hard to publish.

Participants repeatedly described replication as underutilised and insufficiently rewarded. One participant summarised this by describing it as “Valuable, but not rewarded as easily as original research” (R075). Another participant stated, “I think they’re super important, but also hard to publish because reviewers tend to say “what’s the novelty in this?”” (R004).
Survey participants noted a significant mismatch between the scientific importance of replication and the ways CER evaluates and rewards it. Participants explicitly linked this to novelty-driven review cultures, with one noting, “There’s too much of a premium on “novelty” in CS research in general. Replication studies almost always get viewed as less interesting because of the perception of lack of novelty” (R003). Another wrote, “Reviewers negatively comment on the ‘value’ and ‘novelty’ of the work” (R029), and a third explained, “They’re hard to publish. I think they’re looked down on as not being novel or rigorous” (R004). In fact, novelty bias was the second most frequently addressed topic in participants’ written responses, with more than half discussing it.

Responses also mentioned reviewer bias as a source of frustration in connection with the study setting: “frustrations over the review process; For example, some reviewers might complain that the student populations in each study are “too different” when in fact, that the entire point of doing the replication study” (R098). Participants raised issues with CER venues, for example, “SIGCSE/ICER/ITICSE are the only real outlets for CER, and they don’t put much value on replication studies” (R086).

Participants not only mentioned novelty in relation to reviewer bias, but also noted that it is a reason the CER community does not conduct more replication studies. One train of thought was that such work will not be valuable for job seekers: “Since much of research is driven by graduate students who will have to peacock in front of search committees to get jobs, being able to demonstrate your own intelligence is easier to do when you’re doing “novel” work rather than replicating” (R080). Others pointed to the CER community culture as the culprit.
For example, participant R002 stated, “An oft-cited reason is that they are not valued. But I think CER does value them, and people are usually happy to see them. I think it’s more that people are unexcited by the idea of taking someone else’s research design and re-running it; they want the fun and invention and novelty of doing their own design” (R002). Another participant stated, “Likely more interested in chasing the next shiny object and not having to argue about why it is needed when it has been done before” (R104), while another participant wrote, “I believe that scientists find replication studies as less-than. They believe that this is not an original study, therefore not as important as an original idea/intervention. Unfortunately, this hurts science!” (R037).

5.2.5 Lack of Incentives and Recognition.
The responses tied the emphasis on novelty to broader incentive structures, in particular research and professional recognition, or the lack thereof. As one participant stated, “No motivation, equal work with low probability of publication, and low citation count if it is published” (R036). Participant R072 noted that replication studies receive less recognition than the original, novel study, stating, “There is a sense that less “credit” is given to replications. If the replication study confirms the original study, then in some sense the credit goes to the original study designer” (R072). Another participant summarised the structural misalignment as “Lack of incentive structure, grant funding not aligned” (R033), with participant R025 explaining, “for those who need tenure, replication studies may not provide the value that tenure and promotion committees are looking for” (R025).
In this context, survey participants described replication as valuable and legitimate work while also emphasising the lower professional payoff, such as one participant stating, “It is just a lot of work and very unlikely to pay off for a researcher” (R098).

5.2.6 Practical Challenges and Ethical Considerations.
Participants raised the practical difficulty of conducting replication. One participant wrote, “[Replication studies] would be very useful, but very challenging due to local constraints and educational setups” (R007). Others addressed difficulties in reporting replication, such as one participant stating, “It also seems harder to write a replication study paper” (R033) and another stating, “Certainly worthwhile, but I have never written or reviewed one. I would be nervous about submitting one and am unsure how I would structure such a paper” (R023). Participants also raised concerns with replication studies requiring IRB approval, acknowledging it as a constraint on replication. For example, one participant stated, “I don’t know about other researchers, but a major barrier for me is getting the necessary ethics approval” (R021). Another participant mentioned the overhead of obtaining IRB approval, stating, “getting IRB approval and setting up the whole thing correctly takes a lot of time and coordination” (R099).

5.2.7 Context.
Context was commented on extensively by 40% of our survey participants. They emphasised that the educational context fundamentally shapes what replication means in CER, and that differences across institutions, students, and instructional settings complicate both the design of replication studies and the interpretation of their findings, with generalisability as the central issue. For example, one participant stated, “It can be an opportunity to make sure the approach is still relevant and/or extend findings to a new context” (R104).
Participants posit that context makes replication necessary, and that this is a core challenge in conducting replication, not a nuisance variable that researchers can control away. Additionally, the lack of information and context provided by original studies also compounds the complexity of conducting replication, with participant R007 stating, “Publications seldom include enough information about the context, making difficult to evaluate possible replication of a study” (R007). Despite these practical challenges, one participant perceives replication occurring in CER, but remaining largely invisible, stating “I think [the CER community does replication studies], they just don’t publish them” (R027).

5.2.8 The Risk of Contradictory Results.
Finally, a recurrent concern among our participants related to the consequences of producing contradictory results, especially in seminal studies. For example, one participant stated, “concerns of a null result being at odds with accepted fact” (R034). Participants noted that replication is often implicitly expected to confirm prior work, and that studies which fail to reproduce original findings can be particularly difficult to position and publish. Participants described a perceived asymmetry in how confirmatory versus contradictory replications are received. For example, one participant stated, “People may worry what happens if they get contradictory results; is this viewed as an insult to the original authors?” (R002). Another noted, “If people publish replication studies and the results are negative. Often, the community attacks the researchers. I have seen this in other communities outside of CER” (R095). Another participant stated, “I hear from folks it can be awkward because a person might discover faults in the original study” (R091), and participant R072 explained, “If the replication study does not confirm the original study, then there is a bit of an implied hostility that could be tricky to manage” (R072).
These potential hostilities worry some participants, with one noting that “if the results do not replicate (lack verification or validation) someone could fear making an enemy in the community when publishing” (R104). Participant R104’s response reflects perceived cultural risks associated with challenging established findings and helps explain why some replications may never be submitted or published.

5.2.9 What Should We Do?
The final survey question asked participants whether they had anything to add before completing the survey. Some of the parting thoughts suggested that incentives, such as funding, awards, and smaller dedicated events, could encourage more replication in CER, along with introducing special workshops and venues that designate special issues and special tracks for replication. For example, one participant stated, “If the dearth of replication studies is, as I believe, a system issue related to incentives, then it needs a systemic, cultural approach to solving it. Create dedicated tracks within top conferences, hold workshops and discussion sessions, publish a whitepaper with broad community authorship and support about the importance and value of replication studies, etc.” (R006). Participants also addressed the need to strengthen the permissibility of contradictory results, such as one participant stating, “ACM should have specific tracks for replications (and null result experiments) with lower barrier to entry, this could make it easy for newcomers, or graduate students to get some “starter publications”” (R036).

We also received responses emphasising the need for reviewer training. For example, “I think we need to do a lot more of them! And reviewers need to better understand the value of doing them” (R029).
One participant summarised, “It would be interesting to consider a separate review track for it; There is usually a question on novelty of the work, and it seems like some reviewers get hung up on that. Perhaps a separate track or separate guidance on replication studies would help reviewers better navigate; I really hope we see more of them!” (R098).

We observed training arguments interleaved with the need for sufficient context sharing. For example, “We should be writing up our research so everything can be replicated, sharing our data sets, but building a review and replication analysis community that supports positive and constructive reviews to develop research skills and improve our knowledge. There is a very big difference between constructive and honest feedback and ad hominen / ex authoritas arguments to crush failed replications of prominent studies” (R034). Participants emphasised the need to request studies to share details to enable replication, such as “Maybe if the journals and conferences somehow require open-source code and datasets availability, we are going to see more and more studies on the replication studies in CER” (R094), and “Having explicit paper tracks for replication would be helpful, also, making explicit when reviewing papers, that enough detail should be provided so someone else can replicate their study” (R113).

Participants also wrote that the culture of the CER community needs to change for replication to become more prevalent. For example, one participant stated, “Our community just needs to mature in attitudes. But, most of us are PhDs and we believe (trained to be?) inventors and experimenters. We are not trained to adopt others’ work” (R082).
Overall, our survey responses show that the challenge for CER is not whether the community values replication, but whether current research cultures and incentive structures make it feasible, visible, and professionally sustainable.

6 Discussion

6.1 RQ1: How has the adoption of replication study design changed over the last seven years?

The most striking aspect of our findings is that while replication persists, its growth has not matched the expectations set by recent discussions on open science and research rigour [18]. We found that the overall proportion of published replication studies remains small and remarkably stable, with more researchers replicating their work and publishing in journals. Though the number of published replications remains small, our survey found that many researchers actively engage in replication in their own teaching and research, even if that work never reaches publication. Taken together, this suggests that adoption has increased in practice more than in print.

We found that replication occurs in CER, but it is not consistently visible. A potential reason may be researchers’ apprehension about publishing findings that do not align with the original findings. Our survey participants expressed particular concern about the consequences of producing contradictory results, including increased scrutiny, defensive reviewing, and professional risk when challenging well-established study findings. Replications that fail to confirm earlier work are less visible in peer-reviewed publications, resulting in literature biased towards positive confirmations. Future work could build on our findings and explore how researchers’ apprehensions influence their willingness to publish or pursue replication studies that could yield contradictory results.

We also found the CER community supports replication as a legitimate design, yet the structures that determine what gets published have not changed over time.
This support may have influenced the growth in replication in CER publications, and the CER community has recently promoted replication. For example, Brown et al. [7] suggested a future journal initiative that includes an additional review cycle with an initial stage of registered replication reports. These efforts could encourage more replication, and the CER community could introduce such initiatives to conferences.

6.2 RQ2: What perceptions do Computing Education researchers have of using replication in their work?

We found little disagreement about the value of replication across our survey responses, in which participants consistently described it as important for verifying knowledge, strengthening the field, and understanding the limits of existing results. Our participants framed replication as part of responsible research practice. We found 147 (6%) papers during our SLR that encouraged replication of their work, and observed authors providing instruments, treatments, interventions, and datasets, where possible, for replication. From prior efforts [14], researchers, such as Poulsen et al. [26], were inspired to conduct a replication study.

At the same time, our survey participants’ positive views of replication were paired with clear reservations. They consistently described replication works as high-effort, high-risk, and low-reward. Participants strongly endorsed the value of replication, but also reported misalignment with prevailing incentive structures, particularly those that prioritise novelty and rapid publication. In 2016, Guzdial [13] observed a lack of support for replication that may persist today during the peer-review process. This suggests future work to determine whether CER venues and evaluation systems are set up in ways that actually make replication feasible and worthwhile.
Survey participants perceived replication as demanding, time-consuming, and risky, especially when results did not align with the original study. However, nearly half of the survey respondents reported having implemented at least one published CER intervention in their own teaching or research, suggesting substantial replication beyond peer-reviewed publications. This could explain why the proportion of published replications has remained stable despite the increasing community attention and support on the topic. We found participants perceived that replication is harder to write, harder to publish, and less likely to be rewarded than novel work. In practice, this creates a chasm between what researchers believe is important to do and what they feel is professionally sustainable and advantageous.

Overall, we found that the CER community respects the importance of replication, but the effort and risks are not easy to justify. In this sense, the SLR’s stable replication rate does not reflect a lack of interest or engagement, but a filtering effect: only a small subset of replication studies are perceived as sufficiently publishable under current norms. However, as more researchers replicate their own work, some may not view it as replication; future work could clarify the criteria and distinctions for replication studies [2] to strengthen the CER community’s understanding and practice of this research design. Our survey responses show that very few participants view their work as a “pure” replication, potentially demonstrating the community’s perception that replication is “exact”, where studies attempt to reproduce the original study’s design and procedures under the same conditions [15]. Instead, most participants describe replication as some form of adaptation or extension, shaped by local context and practical constraints.

6.3 RQ3: How can the CER community support researchers in adopting the replication study design?
Overall, both the SLR and the survey support structural changes in our venues and evaluation systems to increase the number of publications that apply replication in CER. Fortunately, we have noticed positive changes over the last seven years.

Firstly, we observed that venues matter for publishing replication studies. Journal initiatives and special issues for replication have clearly made a difference.

Secondly, our results show that review practices need to change. Participants’ concerns about novelty bias and the treatment of contradictory results suggest that replication must be evaluated on rigour and transparency, not on whether it confirms or extends prior work. In addition, our survey results highlight why more replication studies are appearing in journals rather than conferences. Participants emphasised the need for space to justify context, document methodological variation, and provide supporting materials. Our findings strengthen previous work by Ihantola et al. [16], who found that page-length restrictions in conference proceedings often limit the details available for replication in CER. From this perspective, publishing more in journals is not simply a change in venue preference, but a response to the reporting demands that credible replication in CER entails.

Thirdly, better support for sharing materials and methods would lower the barrier to entry. Many respondents emphasised the difficulty of accessing sufficient detail to replicate studies well. Normalising artefact sharing and reuse would directly support higher-quality replication. Fortunately, services such as figshare and Center for Open Science (COS) provide platforms for sharing research materials and promoting transparency in instruments, research materials, and data and can be used by both journal and conference publications.⁴ While some data, such as those requiring ethics (IRB) board approval, may continue to pose challenges for replication, this presents a valuable opportunity for future research to explore ways of providing data that meet IRB requirements.

Finally, for our survey participants, recognition matters. If replication continues to be undervalued in hiring, promotion, and evaluation, it will remain a secondary activity. Treating replication as a legitimate scholarly contribution rather than lesser work is essential for sustained adoption. When the CER community explicitly welcomes replication, and the review criteria reflect this, researchers are more willing to invest the necessary effort.

7 Limitations

Our study has threats to validity and limitations. Firstly, we acknowledge volunteer bias in our survey, as participants may be interested in replication studies. A limitation for our SLR may be one raised by the original study [14], where we may have missed papers presenting a replication study because the paper did not specify replication as the study design. Another limitation is the number of years used to compare the two SLRs. The original evaluated publications over ten years, whereas our SLR used seven years, so the time periods did not align. However, as previously mentioned in Subsection 5.1, the original SLR did not find replication studies in the first two years, 2009-2010. Hence, the results came from eight years, bringing the time span closer.

Survey participation was voluntary and distributed through professional networks and CER venues. Hence, participants were likely more engaged and interested in research practice than the broader CER community. This could explain the relatively high proportion of participants who reported having conducted replication studies. In addition, despite the survey defining “replication”, participants interpreted it differently, often including replication-adjacent or extension work.
This, combined with the survey’s reliance on participants’ self-reported research histories, is likely to make the results imprecise. Participants may have underreported unsuccessful submissions, misremembered counts, or emphasised successful outcomes, particularly in relation to publication and rejection experiences.

Finally, although we applied systematic thematic analysis to the qualitative survey responses, coding necessarily involves interpretive judgment. While the themes capture recurrent patterns, alternative categorisations are possible, so these themes reflect trends in the data rather than hard boundaries or exact totals.

8 Conclusions and Future Work

Replication plays a crucial role in establishing consistent and reliable findings in Computing Education Research (CER). Our study offers an updated perspective on the pervasiveness and perception of replication studies within CER. By replicating a 2019 Systematic Literature Review and a survey of researchers, we found that although the CER community has historically favoured novel research over replication, there has been a modest but notable increase in the number of replication studies published between 2019 and 2025, particularly in journal venues. Conference proceedings, in contrast, showed a slight decrease in replication papers. This suggests that targeted initiatives, such as the CSEJ 2022 special journal issue on replication, may be effective in encouraging such work. Our survey responses indicate that, while awareness and appreciation of replication studies are improving, challenges related to recognition, publication opportunities, and perceived value remain.

Overall, our results indicate progress in the acceptance and implementation of replication studies, but highlight the need for continued advocacy and support to ensure replication becomes a standard practice across all CER publication venues. Continued efforts are required to further normalise replication as a valuable research practice, especially in conferences, to help shape review practices and assist reviewers, and to support researchers undertaking this important work. Our findings underscore the importance of ongoing community dialogue and institutional support to foster a culture where replication is both valued and rewarded in CER.

⁴ figshare: https://figshare.com/; COS: https://www.cos.io/products/osf

References

[1] Abu S. Abdul-Quader, Douglas D. Heckathorn, Keith Sabin, and Tobi Saidel. 2006. Implementation and analysis of respondent driven sampling: Lessons learned from the field. Journal of urban health: bulletin of the New York Academy of Medicine 83, 6 (2006), i1–i5.
[2] ACM SIGSOFT Empirical Standards Project. 2023. Empirical Standards: Replication. Accessed: 2025-12-04.
[3] Alireza Ahadi, Arto Hellas, Petri Ihantola, Ari Korhonen, and Andrew Petersen. 2016. Replication in computing education research: researcher attitudes and experiences. In Proceedings of the 16th Koli Calling International Conference on Computing Education Research (Koli, Finland) (Koli Calling ’16). Association for Computing Machinery, New York, NY, USA, 2–11. doi:10.1145/2999541.2999554
[4] Benjamin M. Ampel and Steven Ullman. 2023. Why Following Friends Can Hurt You: A Replication Study. AIS Transactions on Replication Research 9 (2023), 1–16. Issue 1. doi:10.17705/1atrr.00001
[5] Austin Cory Bart and Clifford A. Shaffer. 2019. What Have We Talked About?. In Proceedings of the 50th ACM Technical Symposium on Computer Science Education (Minneapolis, MN, USA) (SIGCSE ’19). Association for Computing Machinery, New York, NY, USA, 175–180. doi:10.1145/3287324.3287441
[6] Virginia Braun and Victoria Clarke. 2023. Toward good practice in thematic analysis: Avoiding common problems and be(com)ing a knowing researcher. International journal of transgender health 24, 1 (2023), 1–6.
[7] Neil C. C. Brown, Eva Marinus, and Aleata Hubbard Cheuoua. 2022. Launching Registered Report Replications in Computer Science Education Research. In Proceedings of the 2022 ACM Conference on International Computing Education Research - Volume 1 (Lugano and Virtual Event, Switzerland) (ICER ’22). 309–322.
[8] Jacob Cohen. 1968. Weighted Kappa: Nominal Scale Agreement Provision for Scaled Disagreement or Partial Credit. 70 (1968), 213–220. Issue 4.
[9] John W. Creswell. 2012. Educational research: Planning, conducting, and evaluating quantitative and qualitative research (4th ed.). Pearson, Boston, MA.
[10] John W. Creswell and Vicki L. Plano Clark. 2006. Choosing a mixed methods design. In Designing and Conducting Mixed Methods Research. Thousand Oaks, CA: Sage Publications, Chapter 4, 125–143.
[11] Alan R. Dennis and Joseph S. Valacich. 2014. A Replication Manifesto. AIS Transactions on Replication Research 1 (08 2014), 1–4.
[12] Michael C. Frank and Rebecca Saxe. 2012. Teaching Replication. Perspectives on Psychological Science 7, 6 (2012), 600–604. doi:10.1177/1745691612460686
[13] Mark Guzdial. 2016. SIGCSE 2016 Preview: Miranda Parker replicated the FCS1. Retrieved January 30, 2026 from https://computinged.wordpress.com/2016/03/02/sigcse-2016-preview-miranda-parker-replicated-the-fcs1
[14] Qiang Hao, David H. Smith IV, Naitra Iriumi, Michail Tsikerdekis, and Amy J. Ko. 2019. A Systematic Investigation of Replications in Computing Education Research. ACM Trans. Comput. Educ. 19, 4, Article 42 (Aug. 2019), 18 pages. doi:10.1145/3345328
[15] Joachim Hüffmeier, Jens Mazei, and Thomas Schultze. 2016. Reconceptualizing replication as a sequence of different studies: A replication typology. Journal of Experimental Social Psychology 66 (2016), 81–92. Rigorous and Replicable Methods in Social Psychology.
[16] Petri Ihantola, Arto Vihavainen, Alireza Ahadi, Matthew Butler, Jürgen Börstler, Stephen H. Edwards, Essi Isohanni, Ari Korhonen, Andrew Petersen, Kelly Rivers, Miguel Ángel Rubio, Judy Sheard, Bronius Skupas, Jaime Spacco, Claudia Szabo, and Daniel Toll. 2015. Educational Data Mining and Learning Analytics in Programming: Literature Review and Case Studies. In Proceedings of the 2015 ITiCSE on Working Group Reports (Vilnius, Lithuania) (ITICSE-WGR ’15). 41–63.
[17] Harold Jeffreys. 1974. Scientific Inference (3rd ed.). Cambridge University Press.
[18] M. Korbmacher, F. Azevedo, C. R. Pennington, H. Hartmann, M. Pownall, K. Schmidt, M. Elsherif, N. Breznau, O. Robertson, T. Kalandadze, S. Yu, B. J. Baker, A. O’Mahony, J. Ø. Olsnes, J. J. Shaw, B. Gjoneska, Y. Yamada, J. P. Röer, J. Murphy, S. Alzahawi, and T. Evans. 2023. The replication crisis has led to positive structural, procedural, and community changes. Communications Psychology 1, 1 (2023), 3. doi:10.1038/s44271-023-00003-2
[19] J. Richard Landis and Gary G. Koch. 1977. The Measurement of Observer Agreement for Categorical Data. Biometrics 33 (1977), 159–174.
[20] David T. Lykken. 1968. Statistical significance in psychological research. In Psychological Bulletin. 151–159. Issue 70.
[21] Matthew C. Makel and Jonathan A. Plucker. 2014. Facts Are More Important Than Novelty: Replication in the Education Sciences. Educational Researcher 43, 6 (2014), 304–316. doi:10.3102/0013189X14545513
[22] Matthew C. Makel, Jonathan A. Plucker, and Boyd Hegarty. 2012. Replications in Psychology Research: How Often Do They Really Occur? Perspectives on Psychological Science 7, 6 (2012), 537–542. doi:10.1177/1745691612460688
[23] Lorenz S. Neuwirth, Svetlana Jović, and B Runi Mukherji. 2021. Reimagining higher education during and post-COVID-19: Challenges and opportunities. Journal of Adult and Continuing Education 27, 2 (2021), 141–156. doi:10.1177/1477971420947738
[24] Arnold Pears, Stephen Seidman, Crystal Eney, Päivi Kinnunen, and Lauri Malmi. 2005. Constructing a core literature for computing education research. SIGCSE Bull. 37, 4 (Dec. 2005), 152–161. doi:10.1145/1113847.1113893
[25] Christopher G. Pickvance. 2001. Four varieties of comparative analysis. Journal of Housing and the Built Environment 16 (2001), 7–28.
[26] Seth Poulsen, Liia Butler, Abdussalam Alawini, and Geoffrey L. Herman. 2020. Insights from Student Solutions to SQL Homework Problems. In Proceedings of the 2020 ACM Conference on Innovation and Technology in Computer Science Education (Trondheim, Norway) (ITiCSE ’20). Association for Computing Machinery, New York, NY, USA, 404–410. doi:10.1145/3341525.3387391
[27] Felipe Romero. 2019. Philosophy of science and the replicability crisis. Philosophy Compass 14, 11 (2019), e12633.
[28] Judy Sheard, S. Simon, Margaret Hamilton, and Jan Lönnberg. 2009. Analysis of research into the teaching and learning of programming. In Proceedings of the Fifth International Workshop on Computing Education Research Workshop (Berkeley, CA, USA) (ICER ’09). Association for Computing Machinery, New York, NY, USA, 93–104. doi:10.1145/1584322.1584334
[29] David W. Valentine. 2004. CS educational research: a meta-analysis of SIGCSE technical symposium proceedings. SIGCSE Bull. 36, 1 (March 2004), 255–259. doi:10.1145/1028174.971391
