GIFT: Bootstrapping Image-to-CAD Program Synthesis via Geometric Feedback

Generating executable CAD programs from images requires alignment between visual geometry and symbolic program representations, a capability that current methods fail to learn reliably as design complexity increases. Existing fine-tuning approaches r…

Authors: Giorgio Giannone, Anna Clare Doris, Amin Heyrani Nobari

GIFT : Bootstrapping Image-to-CAD Program Synthesis via Geometric F eedback Giorgio Giannone 1 2 Anna Clare Doris 2 Amin Heyrani Nobari 2 Kai Xu 1 Akash Srivasta v a 3 4 Faez Ahmed 2 Abstract Generating executable CAD programs from im- ages requires alignment between visual geometry and symbolic program representations, a capa- bility that current methods fail to learn reliably as design complexity increases. Existing fine- tuning approaches rely on either limited super- vised datasets or expensi v e post-training pipelines, resulting in brittle systems that restrict progress in generativ e CAD design. W e argue that the pri- mary bottleneck lies not in model or algorithmic capacity , but in the scarcity of div erse training ex- amples that align visual geometry with program syntax. This limitation is especially acute be- cause the collection of div erse and verified en- gineering datasets is both expensi v e and dif fi- cult to scale, constraining the development of robust generative CAD models. W e introduce Geometric Infer ence F eedback T uning (GIFT), a data augmentation framework that lev erages ge- ometric feedback to turn test-time compute into a bootstrapped set of high-quality training sam- ples. GIFT combines two mechanisms: Soft- Rejection Sampling (GIFT -REJECT), which re- tains di verse high-fidelity programs beyond e x- act ground-truth matches, and F ailure-Driven Augmentation (GIFT -F AIL), which con verts near- miss predictions into synthetic training examples that improv e robustness on challenging geome- tries. By amortizing inference-time search into the model parameters, GIFT captures the benefits of test-time scaling while reducing inference com- pute by 80%. It improv es mean IoU by 12% ov er a strong supervised baseline and remains com- petitiv e with more comple x multimodal systems, without requiring additional human annotation or specialized architectures. 1 AI Innov ation T eam, Red Hat 2 DeCoDE Lab, MIT 3 Core AI, IBM 4 MIT -IBM W atson AI Lab. Correspondence to: < ggiorgio@mit.edu >. Pr eprint. 1. Introduction From its theoretical origins ( Ross &Rodriguez , 1963 ; Coons , 1963 ; Mäntylä , 1987 ), Computer-Aided Design (CAD) has ev olved into the cornerstone of modern engineering, enabled by the sophisticated modeling capabilities of geometric ker - nels ( Piegl &T iller , 2012 ). 1 2 3 4 5 6 7 8 9 10 K 0.70 0.72 0.74 0.76 0.78 0.80 0.82 IoU GIF T GIF T -REJECT SF T F igur e 1. Efficienc y vs. Performance. W e compare Pass@k (test set IoU) across compute budgets. GIFT (green) matches the peak performance of the CAD-Coder -SFT baseline (orange) while using 80% less compute (requiring far fe wer samples). GIFT outper- forms both SFT and GIFT -REJECT at e very compute level, demon- strating that self-training with geometric feedback significantly enhances image-conditional CAD generation. Results reflect mean IoU on the GenCAD test subset, including failure cases. An ex- tended scaling analysis is provided in Appendix C (Figure 12 ). Deep generati ve modeling is transforming engineering de- sign, of fering ne w solutions to comple x, high-dimensional problems ( Ahmed et al. , 2025 ; Regenwetter et al. , 2022 ; Song et al. , 2023 ). Recent empirical successes highlight the versatility of these methods in tasks such as topology opti- mization ( Nie et al. , 2021 ; Mazé &Ahmed , 2023 ; Giannone et al. , 2023 ; Nobari et al. , 2025 ), mechanism linkage de- sign ( Nobari et al. , 2024 ), vehicle dynamics ( Elrefaie et al. , 2024 ), and CAD generation ( Alam &Ahmed , 2024 ). While researchers hav e de veloped direct forw ard models to synthesize 3D shapes from div erse inputs like point clouds, images, and text ( Alam &Ahmed , 2024 ; Y u et al. , 2025 ; Tsuji et al. , 2025 ), these approaches face a critical bottle- neck: the output format. Existing generati ve models ( Lam- bourne et al. , 2021 ; Li et al. , 2025b ) typically yield tessel- 1 Geometric Inference F eedback T uning Method IoU Median Mean 0.846 0.695 0.905 0.742 0.948 0.781 SF T GIF T -REJECT GIF T (a) Accuracy . SF T GIF T -REJECT GIF T 200 300 400 500 600 700 800 900 1000 Number of T ok ens (Gr ound T ruth) (b) Complexity - T okens. T ask Comple xity 0.5 0.6 0.7 0.8 0.9 IOU SF T GIF T -REJECT GIF T (c) Performance vs T ask Com- plexity . 0.0 0.1 0.2 0.3 0.4 0.5 0.6 P r oblems Solved SF T GIF T -REJECT GIF T +31.4% +53.8% (d) Ratio Problems Solved. F igur e 2. Robustness Analysis. ( a ) GIFT achiev es superior accuracy (Mean/Median IoU). ( b, c ) While all models degrade with increased task complexity (token length), GIFT maintains higher resilience than SFT . ( d ) GIFT solv es 53% more problems compared to the baseline, highlighting the benefit of div erse training data. lated meshes (STL) or Boundary Representations (B-Rep, STEP). These formats are inherently verbose and topologi- cally complex, making them dif ficult for engineers to param- eterize or edit. Moreover , the need for specialized 3D de- coders or large language models to generate such structures imposes significant computational and structural ov erhead. Modality-to-Program T o circumvent these limitations, a gro wing paradigm lev erages high-le vel symbolic pro- grams ( Bhuyan et al. , 2024 ; Hewitt et al. , 2020 ; Jones et al. , 2022 ) as an intermediate representation. Unlike rigid geo- metric formats, code offers a compact and editable struc- ture ( Ganeshan et al. , 2023 ) that is naturally amenable to autoregressi v e language modeling - a property that has al- ready proven ef fecti ve in domains such as mathematical reasoning ( Guo et al. , 2025 ) and visual-language ground- ing ( Surís et al. , 2023 ). This approach effecti vely abstracts geometry into parametric operations, though it necessitates an execution environment (e.g., a geometric kernel like OpenCASCADE 1 ) to render the generated code into v alid CAD formats. Despite this dependenc y , the modality-to- program strategy has gained significant traction in recent literature ( Doris et al. , 2025 ; Kolodiazhn yi et al. , 2025 ; Li et al. , 2025c ; Rukhovich et al. , 2025 ; W ang et al. , 2025b ), with a particular emphasis on CadQuery 2 , a Python-based scripting language for parametric CAD design. Post-T raining The dominant paradigms for post- training ( Ouyang et al. , 2022 ) are Supervised Fine-T uning (SFT) and Reinforcement Learning (RL). SFT provides a foundation for CAD generation but often suffers from weak alignment between visual features and program syntax. Recent works attempt to mitigate this by con- ditioning on auxiliary modalities such as point clouds and depth maps, though reliance on ad-hoc architectures limits model generality ( W ang et al. , 2025b ; Chen et al. , 1 https://github.com/Open-Cascade-SAS/OCCT 2 https://github.com/CadQuery/cadquery 2025 ). RL can impro ve alignment and performance but is resource-intensi ve ( Schulman et al. , 2017 ; Guo et al. , 2025 ), incurring high memory and communication costs due to the need to execute CPU-bound CAD kernels during online training ( Li et al. , 2025c ; K olodiazhnyi et al. , 2025 ). W e ar gue, ho we ver , that the primary bottleneck in SFT and RL post-training for engineering design is not model qual- ity or algorithmic sophistication, b ut the fine-tuning data itself. Our approach is moti vated by a simple observ ation: advances in LLMs and VLMs hav e been dri ven largely by high-quality , div erse datasets, yet assembling such data for data-driv en engineering design remains expensiv e. Data augmentation of fers a natural remedy , b ut the central chal- lenge is determining ho w to use av ailable compute most effecti vely to address model strengths and weaknesses while exploiting the structural properties of the underlying engi- neering problem. T o address these limitations, we introduce Geometric Infer- ence F eedbac k T uning (GIFT), a framew ork for verifier- guided data augmentation in Image-to-CAD generation. GIFT uses offline geometric v erification to mine div erse valid programs and structured near-miss failures, con v ert- ing them into a high-quality augmented training set. By distilling the benefits of test-time search into the training data, our approach improv es generation quality while reduc- ing inference cost and av oiding the complexity of online reinforcement learning. GIFT separates exploration from learning: candidate pro- grams are generated and verified offline during inference- time sampling, then used for standard supervised updates. This design preserves the benefits of reinforced geomet- ric feedback without introducing the instability of online training. Contributions Our key contrib utions are: • W e introduce Geometric Infer ence F eedback T uning 2 Geometric Inference F eedback T uning (GIFT), a scalable data augmentation framew ork for Image-to-CAD program synthesis that uses offline geo- metric feedback to con vert inference-time samples into augmented supervised training data. • W e propose a dual augmentation strategy: Soft- Rejection Sampling (GIFT -REJECT), which adds di- verse geometrically v alid alternati v e programs as new output tar gets, and Failure-Driv en Augmentation (GIFT -F AIL), which renders near-miss predictions into synthetic inputs paired with the original ground-truth code. • W e sho w that this augmentation pipeline expands the effecti ve training set substantially , improves single- shot accuracy over a strong supervised baseline, and reduces the reliance on expensi v e test-time sampling. • W e demonstrate through e xtensi ve analysis that GIFT improv es robustness on complex geometries, narrows the amortization gap between pass@1 and pass@k, and remains competitive with more complex multimodal systems without requiring additional human annotation or specialized architectures. 2. Background V ision-Language Models V ision-Language Models (VLMs ( Liu et al. , 2024 ; Bai et al. , 2025 )) are po werful foundation models that integrate Large Language Models (LLMs) with vision encoders to process visual information (Fig. 4 ). These systems are highly versatile, supporting div erse tasks such as visual captioning, QA, multitasking, referring expressions, and code generation. Although aligning visual and textual components remains an open challenge, recent VLMs based on Qwen hav e established strong baselines. Base Prompt Image VLM Code STEP FILE Generation Geometric Alignment STEP FILE GT IoU F igur e 3. The CAD-Coder pipeline processes multimodal inputs (text prompts and images) to generate ex ecutable CAD code. This code is conv erted into STEP files via geometric alignment and validated ag ainst the ground truth using IoU metrics. Image-Conditional CAD Program Synthesis Image-to- CAD ( Alam &Ahmed , 2024 ; Li et al. , 2025c ; Y ou et al. , 2025 ) remains a central challenge in generativ e design. Re- cently , CAD-Coder ( Doris et al. , 2025 ) introduced an end- to-end pipeline to bridge the gap between 2D images and parametric code (Fig. 3 ). Its three-stage workflo w utilizes a visual encoder (e.g., LLaV A ( Liu et al. , 2024 ) or Qwen- VL ( Bai et al. , 2025 )) to project geometric features into language embeddings, employs an autoregressiv e LLM to generate executable CadQuery scripts, and executes the code via a Python interpreter to reconstruct B-Rep models. The system is trained via Supervised Fine-T uning (SFT) on the 160k image-code pairs of the GenCAD-Code dataset ( Alam &Ahmed , 2024 ; Doris et al. , 2025 ). The training optimizes a standard next-token prediction objecti ve: F S F T ( θ ) = E ( x , c ) ∼D S F T [log p θ ( x | c )] = X c T X t =1 log p θ ( x t | x 25 T ask Comple xity - Operations 0.5 0.6 0.7 0.8 0.9 IOU SF T GIF T -REJECT GIF T (c) Performance vs Complexity (Operations). <300 300-400 >500 T ask Comple xity - T ok ens 0.5 0.6 0.7 0.8 0.9 IOU SF T GIF T -REJECT GIF T (d) Performance vs Complexity (T okens). F igur e 16. Performance metrics under different data-augmentation strategies. W e ev aluate T op-K ( K = 10 ) generations filtered by geometric validity ( I oU ≥ 0 . 9 against the ground truth) and compare accurac y , solved-problem rate, and performance as a function of task complexity . GIFT improves both geometric fidelity and rob ustness, with the largest gains appearing on more comple x problems. 20 Geometric Inference F eedback T uning SF T GIF T -REJECT GIF T 10 15 20 25 30 35 40 Number of Operations (Gr ound T ruth) (a) Operations (Ground T ruth). SF T GIF T -REJECT GIF T 200 300 400 500 600 700 800 900 1000 Number of T ok ens (Gr ound T ruth) (b) T okens (Ground T ruth). SF T GIF T -REJECT GIF T 10 15 20 25 30 35 40 Number of Operations (Generated) (c) Operations (Generated). SF T GIF T -REJECT GIF T 200 300 400 500 600 700 800 900 Number of T ok ens (Generated) (d) T okens (Generated). 0 10 20 30 40 50 60 Operations (Generated) 0.00 0.02 0.04 0.06 0.08 Density SF T GIF T -REJECT GIF T (e) Mean Operations (Generated). 0 250 500 750 1000 1250 T ok ens (Generated) 0.000 0.001 0.002 0.003 0.004 Density SF T GIF T -REJECT GIF T (f) Mean T okens (Generated). F igur e 17. Complexity statistics for ground-truth and generated programs with high-fidelity . W e compare the distributions of operation counts and token lengths across SFT , GIFT -REJECT , and GIFT . The results show that GIFT improves alignment with valid target structures. 21 Geometric Inference F eedback T uning E. Can Frontier Models Zer o-Shot Image-to-CAD? T able 9 compares GIFT against a frontier general-purpose multimodal model in a zero-shot Image-to-CAD setting. The results show that foundation models e xhibit nontri vial geometric competence, especially with higher-resolution inputs, b ut still trail a specialized domain-adapted system by a lar ge mar gin. This reinforces the v alue of targeted geometric supervision and domain-specific amortization ev en in the era of v ery capable generalist VLMs. T able 9. Mean and median IoU on a subset of the GenCAD test set for zero-shot Gemini 3.0 Pro and the specialized CAD-Coder -GIFT model. Results are shown for different input resolutions to assess the sensiti vity of frontier multimodal models to visual detail. Model Mode Input Res IoU (mean) IoU (median) Gemini-3-PR O Zero-Shot Low 0.200 0.141 Gemini-3-PR O Zero-Shot High 0.540 0.560 CAD-Coder GIFT - 0.782 0.948 T o assess state-of-the-art multimodal capabilities in engineering design, we ev aluated Gemini 3.0 Pro ( T eam et al. , 2023 ) on zero-shot Image-to-CAD generation. As shown in T able 9 , performance is heavily dependent on input resolution, with high-resolution inputs more than doubling mean IoU. While this indicates a remarkable emergent geometric understanding in general-purpose foundation models, they still lag behind specialized systems. Our domain-specific CAD-Coder with GIFT significantly outperforms the frontier model, demonstrating that smaller, specialized architectures remain superior for high-fidelity CAD program synthesis. 22 Geometric Inference F eedback T uning F . Dataset Generation 0.90 0.92 0.94 0.96 0.98 IoU 0 5 10 15 20 Density F igur e 18. Distribution of T op-1 samples with I oU > 0 . 9 . Sampling Strategy Our objecti ve is to curate a di verse dataset of CadQuery programs for single images, effec- tiv ely mimicking the multiple valid reasoning paths exhibited by lar ge language models. T o achie ve this, we employ QwenVL-2.5-7B-CADCoder as our primary sampler . W e strictly utilize the existing training set as source material, lev eraging ground truth STEP files as the e xclusi ve source of geometric feedback.The dataset is constructed via a multi-stage pipeline that scales candidate generation across fiv e distinct computational b udgets: N ∈ { 8 , 16 , 32 , 64 , 128 } . Starting with a base pool of 80,000 training images, we sample subsets of 10,000 (increasing to 40,000 for the N = 32 budget). T o maximize semantic di versity , we implement an in verse scaling strate gy across 29 hyperparameter configurations. As detailed in T able 10 , lo wer compute b udgets utilize broader temperature ranges (e.g., T ∈ { 0 . 2 , 0 . 4 , 0 . 6 } ) to encourage exploration, whereas higher b udgets prioritize precision through strict, lo w-temperature sampling ( T = 0 . 2 ). T able 10. Dataset budget mix and sampling hyperparameter configurations used during data generation. The Samples column reports the selected subset size retained for each compute budget after top-10% filtering. Budget Inputs Samples ( T , p ) N = 8 10,000 10,000 T = 0 . 2 : p ∈ { 0 . 7 , 0 . 8 , 0 . 9 , 1 . 0 } T = 0 . 4 : p ∈ { 0 . 7 , 0 . 8 , 0 . 9 , 1 . 0 } T = 0 . 6 : p ∈ { 0 . 8 , 0 . 9 , 1 . 0 } N = 16 10,000 20,000 T = 0 . 2 : p ∈ { 0 . 7 , 0 . 8 , 0 . 9 , 1 . 0 } T = 0 . 4 : p ∈ { 0 . 8 , 0 . 9 , 1 . 0 } N = 32 40,000 120,000 T = 0 . 2 : p ∈ { 0 . 7 , 0 . 8 , 0 . 9 , 1 . 0 } T = 0 . 4 : p ∈ { 0 . 9 , 1 . 0 } N = 64 10,000 60,000 T = 0 . 2 : p ∈ { 0 . 8 , 0 . 9 , 1 . 0 } N = 128 10,000 120,000 T = 0 . 2 : p ∈ { 0 . 9 , 1 . 0 } 23 Geometric Inference F eedback T uning Selection Strategy From approximately 1 million generated raw samples, we compute the Intersection over Union (IoU) against ground truth scripts to partition the data into three distinct splits. The FULL split retains the top 10% of successful generations per input (where I oU > 0 . 5 ). The FAIL split isolates failure modes by selecting the highest-scoring candidate for inputs where satisfactory reconstruction failed ( I oU < 0 . 9 ). Finally , we construct the Soft Rejection Sampling ( SRS ) set for high-quality fine-tuning. This split combines the original ground truth data with the single best generated candidate (T op-1) falling within the range 0 . 9 < I oU < 0 . 99 (excluding exact duplicates). Oversampling While selecting the best of K generations is generally ef fecti v e, it relies on the assumption that a valid candidate exists within the sample pool. Our analysis indicates that for a significant portion of the training set, simply increasing sampling frequency fails to yield high-IoU results. T o mitigate this, we le verage geometric feedback to identify these persistent failures and selecti vely augment the dataset with these hard samples. 0.0 0.2 0.4 0.6 0.8 1.0 IoU 0 5000 10000 15000 20000 25000 30000 35000 40000 F r equency (a) Before balancing. 0.0 0.2 0.4 0.6 0.8 1.0 IoU 0 2500 5000 7500 10000 12500 15000 17500 F r equency (b) After balancing. F igur e 19. IoU distribution of sampled programs before and after balancing. Balancing reduces over -representation of the densest score ranges and produces a more ev en distribution of training examples across quality le v els, improving the usefulness of both SRS and FD A data. 24 Geometric Inference F eedback T uning G. Amortizing Inference-T ime A ugmentation: Gradients In this section, we analyze the gradients of the GIFT objective function and establish the theoretical connection between our rejection-sampling approach and standard policy gradient methods. The Reinfor cement Learning Perspectiv e Ideally , we aim to maximize the expected geometric validity (IoU) of the generated programs. Let J ( θ ) be the expected rew ard under the policy p θ : J ( θ ) = E c ∼ q D , z ∼ p θ ( ·| c ) [ r ( z , z g t )] , (6) where the re ward r ( z , z g t ) = f ( z ) is the Intersection ov er Union (IoU) defined in the Method. Maximizing this objecti ve via gradient ascent yields the standard REINFORCE policy gradient: ∇ θ J ( θ ) = E c , z [ f ( z ) ∇ θ log p θ ( z | c )] . (7) In standard online RL (e.g., PPO), this expectation is approximated by sampling batches from the current policy π θ during training. Howe ver , this is computationally prohibiti v e for CAD synthesis because computing f ( z ) requires ex ecuting an expensi v e geometric kernel for e v ery step. G.1. The GIFT Appr oximation GIFT bypasses online sampling by approximating the expectation using a static, high-quality dataset collected via Inference- T ime Scaling (ITS). W e treat the sample selection process as an importance sampling step where the weights are binary (hard rejection). SRS Gradient (Diversity) The SRS component ef f ecti v ely performs Rew ard-W eighted Regression with a hard threshold. Instead of weighting e very sample by its scalar reward f ( z ) , we define a binary weight w srs ( z ) = 1 [ τ v alid ≤ f ( z ) < τ match ] . The gradient for the SRS objectiv e pro vided in Eq. 4 is: ∇ θ F S RS = E ( c , z ) ∼D S RS [ ∇ θ log p θ ( z | c )] . (8) Substituting the definition of D S RS , this is equiv alent to a Monte-Carlo approximation of the policy gradient where samples with low re ward are zeroed out: ∇ θ F S RS ≈ N X n =1 K X k =1 w srs ( z ( k ) ) ∇ θ log p θ ( z ( k ) | c ( n ) ) . (9) This formulation stabilizes training by treating the generated programs z ( k ) as fixed "pseudo-ground-truths," con verting the unstable RL problem into a standard supervised maximum likelihood estimation (MLE) problem. FD A Gradient (Robustness) The FD A component introduces a distinct gradient signal. Unlike SRS, which optimizes the likelihood of the generated sample z , FD A optimizes the likelihood of the gr ound truth z g t conditioned on a noisy input ˜ c . The objectiv e is a supervised denoising loss: F F DA = E ( ˜ c , z gt ) ∼D F D A [log p θ ( z g t | ˜ c )] . (10) The gradient is straightforward: ∇ θ F F DA = N X n =1 K X k =1 w f da ( z ( k ) ) ∇ θ log p θ ( z ( n ) g t | ϕ ( d ( sg [ z ( k ) ]))) . (11) Here, the gradient signal does not encourage the production of the failed sample z ( k ) . Instead, it updates the visual encoder and language decoder to be inv ariant to the geometric perturbations present in ˜ c = ϕ ( d ( z ( k ) )) , pulling the distribution back tow ards the mode z g t . 25 Geometric Inference F eedback T uning A ugmented Gradient Update Combining the Base (SFT), Diversity (SRS), and Robustness (FDA) terms, the final parameter update at each step is: ∆ θ ∝ X n ∇ θ log p ( z ( n ) g t | c ( n ) ) | {z } SFT : Grounding + X n,k w ( k ) srs ∇ θ log p ( z ( k ) | c ( n ) ) | {z } SRS: Div ersity + X n,k w ( k ) f da ∇ θ log p ( z ( n ) g t | ˜ c ( k ) ) | {z } FD A: Robustness (12) This formulation allows GIFT to amortize the cost of search into the model weights offline, avoiding the v ariance and computational cost of online policy gradient estimation. 26 Geometric Inference F eedback T uning H. V isualizations (a) (b) (c) (d) (e) (f) (g) (h) (i) F igur e 20. Rendered examples of generated CAD programs with intermediate IoU quality ( I oU > 0 . 5 and I oU < 0 . 9 ). These visualizations sho w ho w geometrically imperfect b ut ex ecutable programs can be projected back into the image domain and reused as synthetic inputs for Failure-Dri ven Augmentation. 27 Geometric Inference F eedback T uning Gr ound T ruth CAD Input Image Generated CAD 0 200 400 0 100 200 300 400 Augmented Image Gr ound T ruth CAD Input Image Generated CAD 0 200 400 0 100 200 300 400 Augmented Image Gr ound T ruth CAD Input Image Generated CAD 0 200 400 0 100 200 300 400 Augmented Image Gr ound T ruth CAD Input Image Generated CAD 0 200 400 0 100 200 300 400 Augmented Image Gr ound T ruth CAD Input Image Generated CAD 0 200 400 0 100 200 300 400 Augmented Image Gr ound T ruth CAD Input Image Generated CAD 0 200 400 0 100 200 300 400 Augmented Image Gr ound T ruth CAD Input Image Generated CAD 0 200 400 0 100 200 300 400 Augmented Image F igur e 21. Examples of low-IoU but ex ecutable generations used for Failure-Driv en Augmentation. The rendered failures provide structured hard negati v es that train the model to recov er the original ground-truth program from imperfect visual geometry . 28 Geometric Inference F eedback T uning Generate cadquery code for this image Image-to-CAD SFT IoU = 1 (a) SFT workflo w description Image-to-CAD GIFT (b) GIFT workflo w description F igur e 22. W orkflow comparison between standard SFT and GIFT . In the SFT pipeline, each input image is paired with a single ground-truth program. In GIFT , multiple sampled programs are geometrically verified, and both high-quality alternati ves and structured f ailures are con verted into additional supervised training signals. 29 Geometric Inference F eedback T uning Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD F igur e 23. Example of high IoU CAD generations used for SRS augmentation. 30 Geometric Inference F eedback T uning Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD F igur e 24. Example of high IoU CAD generations used for SRS augmentation. 31 Geometric Inference F eedback T uning Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD Gr ound T ruth CAD Generated CAD F igur e 25. Example of high IoU CAD generations used for SRS augmentation. 32 Geometric Inference F eedback T uning I. Details T able 11. Experimental setup and implementation details for training, inference-time scaling, and GIFT -specific augmentation. W e summarize model backbones, dataset sizes, sampling budgets, filtering thresholds, optimization settings, and hardw are. Hyperparameter V alue Model Ar c hitectur e Base Model Qwen-VL-2.5-7B-Instruct/Qwen-VL-2.5-3B-Instruct Parameter Count 7B/3B Datasets Source Dataset GenCAD-Code / DeepCAD T raining Size (Original SFT) 163k Augmented Dataset Size 370k IN T est Set Size 7355 OOD T est Set Size 400 Infer ence-T ime Scaling (Data Generation) Sampling Budgets ( N ) { 8 , 16 , 32 , 64 , 128 } Sampling T emperatures ( T ) { 0 . 2 , 0 . 4 , 0 . 6 } T op- p values { 0 . 7 , 0 . 8 , 0 . 9 , 1 . 0 } Sampler Model QwenVL-2.5-7B-CADCoder GIFT Configuration SRS V alidity Range ( τ v alid ) 0 . 9 ≤ IoU < 0 . 99 FD A Range ( τ noise ) 0 . 5 ≤ IoU < 0 . 9 Geometric Kernel OpenCASCADE / CadQuery T raining Optimization Optimizer AdamW Learning Rate 2 e − 5 LR Scheduler Cosine Decay Batch Size / GPU 4 T raining Epochs 10 Precision BF16 Hardware 8x A100 80GB 33

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

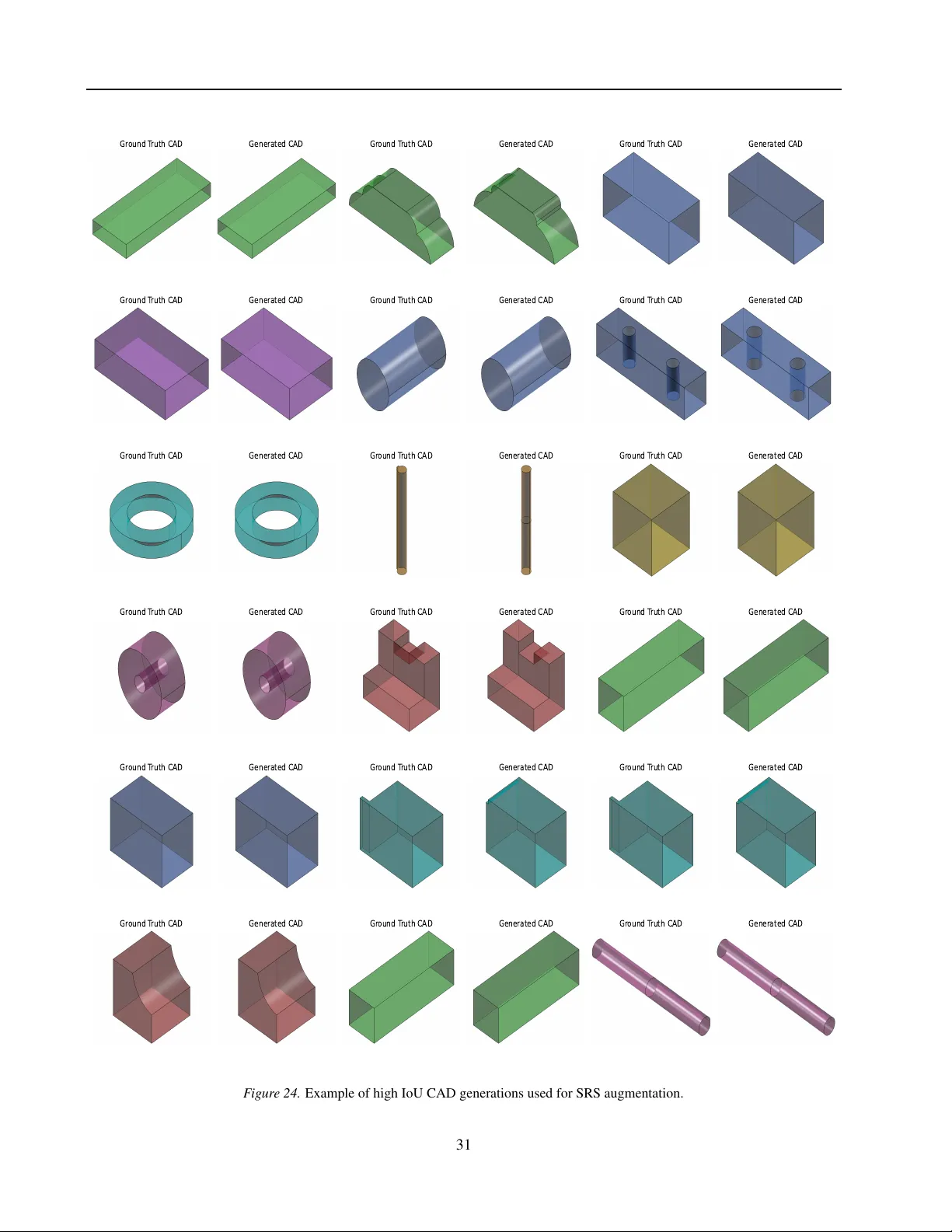

Leave a Comment